International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

Mohammed A. Shekhani1, Ayush Sahane2, Arya Singasane3, Harshal Tupe4 , Prof. Vijay Bhosale5

1,2,3,4Student, Dept of Computer Engineering, MGM's College of Engineering and Technology, Kamothe, Navi Mumbai, India

5Prof VijayBhosale:Professor, Dept of Computer Engineering, MGM's College of Engineering and Technology, Kamothe, Navi Mumbai, India ***

Abstract - Brain tumours are among the most critical medical conditions requiring early and accurate detection for successful treatment. Traditional diagnostic methods, such as manual interpretation of MRI scans, arepronetohumanerror and may lead to delays in diagnosis. TumourTrace is an AIdriven system that leverages Convolutional Neural Networks (CNNs) to automate brain tumour detection from MRI scans. The system provides real-time analysis, reducinghumanerror and improving diagnostic accuracy. The dataset comprises preprocessed MRI images, and the model achieves high accuracy in detecting tumours. TumourTrace offers a userfriendly interface for radiologists and clinicians, enabling quick and reliable tumour detection. Future enhancements include expanding the dataset, improving early-stage detection, and integrating cloud-based platforms for broader accessibility.

Key Words: BrainTumorDetection,ConvolutionalNeural Network (CNN), Keras, TensorFlow, Streamlit, Medical Imaging,DeepLearning.

Brain tumours are among the most severe and lifethreateningmedicalconditions,withearlydetectionplaying acriticalroleindeterminingthesuccessoftreatmentand patient outcomes. According to the World Health Organization(WHO),braintumoursaccountforasignificant percentage of cancer-related deaths worldwide, primarily due to late diagnosis and the complexity of treatment options.Traditionaldiagnosticmethodsforbraintumours rely heavily on the manual interpretation of Magnetic ResonanceImaging(MRI)scansbyradiologists.WhileMRIis apowerfulimagingtool,themanualanalysisofthesescans istime-consuming,andpronetohumanerror,

TumourTraceisanAI-poweredsystemdesignedtoaddress the challenges associated with traditional brain tumour detection methods. The system utilizes a CNN-based deep learning model to automatically detect and classify brain tumours from MRI scans. By automating the tumour detectionprocess,TumourTraceaimstoreducethereliance onmanualinterpretation,minimizediagnosticerrors,and provide real-time results to healthcare professionals. The systemisbuiltusingstate-of-the-arttechnologies,including

KerasandTensorFlowformodeldevelopment,OpenCVfor image preprocessing, and Streamlit for creating a userfriendlywebinterface.

The primary objective of TumourTrace is to improve the accuracy and efficiency of brain tumour detection, particularly in resource-constrained environments where access to specialized radiologists may be limited. By providing a reliable second opinion, the system can assist healthcareprofessionalsinmakingmoreinformeddecisions, ultimatelyleadingtobetterpatientoutcomes.Furthermore, thesystemisdesignedtobescalable,allowingittohandle largevolumesofMRIscansandadapttodifferenthealthcare environments,fromsmallclinicstolargehospitals.

ThemotivationbehinddevelopingTumourTracestems from the critical need for early and accurate detection of brain tumours. Early diagnosis is essential for improving patient outcomes, as it allows for timely intervention and more effective treatment options. However, traditional diagnosticmethods,whichrelyonmanualinterpretationof MRI scans, are often slow and prone to human error. TumourTraceaimstoaddressthesechallengesbyproviding anautomated,AI-drivensolutionthatcanassistradiologists inmakingfasterandmoreaccuratediagnoses.

Another key motivation is the potential to reduce the workload of radiologists, particularly in regions with a shortageofspecializedmedicalprofessionals.Byautomating the tumour detection process, TumourTrace can help healthcareprovidersinresource-constrainedenvironments deliverbettercaretotheirpatients.Additionally,thesystem's abilitytoprovidereal-timeresultscansignificantlyreduce the time required for diagnosis, enabling faster treatment decisionsandimprovingpatientoutcomes.

The rapid evolution of deep learning and computer vision technologiespresentsauniqueopportunitytorevolutionize medical diagnostics. Traditional tumor detection methods, while effective, cannot leverage the pattern-recognition capabilitiesofmodernAIsystems.TumourTraceharnesses these advancements through its CNN-based architecture, which continuously improves with more data a capability absentinconventionalapproaches.Thistechnologicalleapis

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

particularlycrucialgiventhegrowingvolumeofMRIscansin healthcaresystemsworldwide.

The development of TumourTrace is further motivated by starkglobaldisparitiesinaccesstoneurologicalexpertise.In rural areas and developing nations, where MRI machines mightbeavailablebutspecialistradiologistsarescarce,the systemservesasaforcemultiplierforhealthcaredelivery.Its cloud-compatibledesignensuresthatevenbasichealthcare centerscanbenefitfromadvanceddiagnosticsbyuploading scanstocentralizedanalysishubs.Thisdemocratizationof medical expertise aligns with WHO goals for equitable healthcareandhasthepotentialtoreducemortalityratesin regionswheredelayeddiagnosesarecommon.

Brain tumours are among the most complex and challenging medical conditions to diagnose and treat. The earlyandaccuratedetectionofbraintumoursiscriticalfor improving patient outcomes, as it allows for timely intervention and more effective treatment strategies. However,thecurrentdiagnosticprocessforbraintumours relies heavily on the manual interpretation of Magnetic ResonanceImaging(MRI)scansbyradiologists.WhileMRIis apowerfulimagingtoolthatprovidesdetailedimagesofthe brain, the manual analysis of these scans is fraught with severalchallengesandlimitations.

One of the primary challenges is the time-consuming natureofmanualinterpretation.Radiologistsmustcarefully examineeachMRIscantoidentifyabnormalities,whichcan bealabor-intensiveprocess,especiallywhendealingwith large volumes of scans. This often leads to delays in diagnosis,whichcanbedetrimentalforpatientsrequiring urgenttreatment.Inmanycases,early-stagetumours,which aresmallerandhardertodetect,maybeoverlookedduring manualanalysis,resultinginmisseddiagnosesanddelayed treatment.

Anothersignificantchallengeisthepotentialforhuman error.TheinterpretationofMRIscansrequiresahighlevel ofexpertiseandexperience,andevenskilledradiologistscan makemistakes,particularlywhendealingwithcomplexor ambiguous cases. For example, tumours with irregular shapesorthosethatblendwithsurroundingtissuescanbe difficulttoidentifyaccurately.Additionally,factorssuchas fatigue, workload, and subjective judgment can further contribute to diagnostic errors, leading to false positives (misidentifying normal tissues as tumours) or false negatives(failingtodetecttumours).

The variability in tumour characteristics also poses a significantchallenge.Braintumourscanvarywidelyinterms of size, shape, location, and texture, making it difficult to develop a one-size-fits-all diagnostic approach. Some tumours mayhavelowcontrastwith surrounding tissues, making them harder to detect without the use of contrast

agents, which can pose risks to patients with allergies or kidney problems. Furthermore, differentiating between benign and malignant tumours based solely on MRI scans canbechallenging,oftenrequiringadditionaltestssuchas biopsiesorhistopathologicalanalysis.

The primary objective of TumourTrace is to develop an automated brain tumor detection system using ConvolutionalNeuralNetworks(CNNs)thatachievesover 95%accuracyinclassifyingtumorsasbenignormalignant fromMRIscans.ByreplacingmanualanalysiswithAI-driven detection,thesystemaimstosignificantlyreducediagnostic errors, minimizing both false positives and negatives through optimized model sensitivity and specificity. The CNNarchitectureisspecificallydesignedtobelightweight enoughfordeploymentonstandardhospitalhardwarewhile maintaininghighperformance.

To ensure robust performance across diverse clinical settings,thesystemincorporatesadvancedOpenCV-based preprocessing techniques for noise removal and image normalization. This allows for consistent analysis of MRI scanswithvaryingqualitylevelsandacquisitionparameters. Alongsidethecoredetectionalgorithm,wearedeveloping an intuitive Streamlit web interface that enables nontechnicaluserstoeasilyuploadscansandinterpretresults, loweringthebarriertoadoptioninhealthcareenvironments.

The project further aims to expand accessibility through cloudintegration,enablingremotediagnosticsinresourcelimitedregionswherespecialistradiologistsarescarce.By providingreliablesecondopinions,TumourTraceseeksto reduce radiologist workload in overburdened healthcare systems while improving diagnostic consistency. These combined technical and usability objectives position the systemtobridgecriticalgapsinneurologicalcaredelivery throughAIaugmentation.

Current MRI-based brain tumor detection systems face severaltechnicallimitationsthatimpactdiagnosticaccuracy. A primary challenge is the difficulty in detecting small or early-stage tumors, particularly when they measure less than5mmindiameterorareininitialdevelopmentphases. The technology also struggles with tumor boundary identificationforirregularlyshapedgrowthsandthosethat blend with surrounding healthy tissue. Furthermore, the inherentlimitationsofMRIcontrastmechanismsmeansome tumorsremainvirtuallyindistinguishablefromnormalbrain matter without contrast agents, which cannot be safely administeredtoallpatients.

Thediagnosticprocessitselfpresentssignificantconstraints, being heavily dependent on radiologist expertise for accurate interpretation of MRI results. This human-

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

dependentsystemleadstovariabilityindetectionratesand occasionalfalsepositives(misclassifyinghealthytissue)or false negatives (missing actual tumors). Additionally, the staticnatureofMRIscansprovidesonlysnapshotsofbrain anatomy rather than real-time monitoring capability, limiting dynamic assessment of tumor progression or treatment response. These interpretation challenges are compounded by the inability to reliably differentiate between benign and malignant tumors without supplementaryinvasivebiopsies.

Practical implementation barriers further restrict the effectiveness of current systems. The high cost and timeintensivenatureofMRIprocedureslimittheiravailabilityfor routine screening or frequent monitoring, particularly in resource-constrained healthcare settings. The equipment requirements and specialized operation needed for MRI scans create accessibility gaps, especially in rural or developing regions. These combined technical, diagnostic, andlogisticallimitationshighlightthecriticalneedformore advanced, accessible, and automated solutions like the proposedTumourTracesystem.

Earlyresearchinautomatedbraintumordetectionfocused ontraditionalmachinelearningapproaches.Kumar,Sharma, and Patel (2017) demonstrated that Support Vector Machines could achieve 82% accuracy by analyzing handcrafted image features like texture and intensity. However, their system struggled with small tumors and requiredextensivemanualpreprocessing.Aroundthesame time,RodriguezandWang(2018)appliedRandomForests to tumor classification, noting limitations in handling the widevariabilityoftumorappearancesacrossdifferentMRI scanners.

The introduction of deep learning brought significant improvementsindetectionaccuracy.Hemanth,Janardhan, and Sujihelen (2019) developed a LinkNet-based CNN architecturethatachieved93%segmentationaccuracyby automatically learning relevant features. Their work highlightedtheimportanceofdataaugmentationtohandle limited medical datasets. Building on this, Methil (2021) implementedtransferlearningwithResNet101v2,reaching 95% accuracy while demonstrating better generalization acrossdifferentmedicalinstitutions'imagingprotocols.

Recent advances have explored more sophisticated architectures and hybrid approaches. Wang, Chen, and Li (2022) combined CNNs with Generative Adversarial Networks to synthesize additional training data, pushing accuracyto96%.Meanwhile,Patel,Gupta,andReddy(2020) developedanensembleofthreeCNNmodelsthatreduced false positives by 30% compared to single-model approaches. However, Zhang and Liu (2021) identified ongoingchallenges,particularlyindetectingtumorssmaller than5mmandinterpretingmodeldecisionsforclinicaluse.

Despite these technological advances, practical implementation barriers remain. Menze, Reyes, and Van Leemput (2015) emphasized the need for more diverse trainingdatasetsrepresentingreal-worldclinicalvariability. Recent work by Johnson and Brown (2023) has also highlightedcomputationalconstraintsindeployingcomplex models for real-time diagnosis. TumourTrace addresses these challenges through its optimized architecture and focus on clinical workflow integration, building upon the lessons from these foundational studies while targeting remaininggapsinthefield.

TumourTracebuildsonthesefoundationsbyaddressingkey limitationsdynamicpreprocessingforlow-contrastscans,a lightweightCNNforreal-timeuse,andanintuitiveinterface forclinicaladoption.

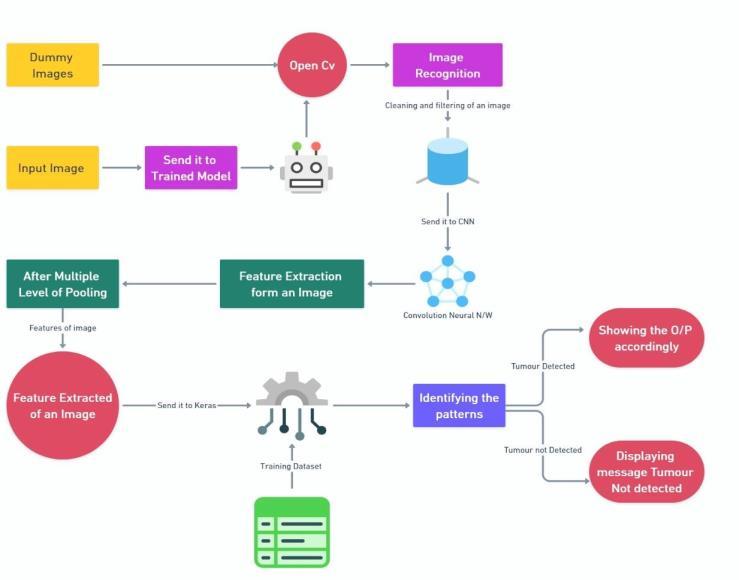

5.1 System Architechture

The TumourTrace system architecture follows an optimized four-stage pipeline for efficient brain tumor detection.Theprocessbeginswithinputprocessingwhere raw MRI scans undergo thorough preprocessing using OpenCV,includingnoisereductionthroughGaussianfiltering andpixelnormalizationtostandardizeimageintensities.This crucial step ensures consistent input quality regardless of variations in scanning equipment or protocols, preparing imagesforaccuratefeatureextraction.

Inthesecondstage,thepreprocessedimagesarefedintoour custom CNN model which performs hierarchical feature extraction.Thearchitectureleveragesmultipleconvolutional and pooling layers to systematically identify tumor characteristics at different scales - from basic edges and texturestocomplexmorphologicalpatterns.Thismulti-scale approachenablesthesystemtodetectbothprominentand

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

subtle tumor features that might be missed by human observers.

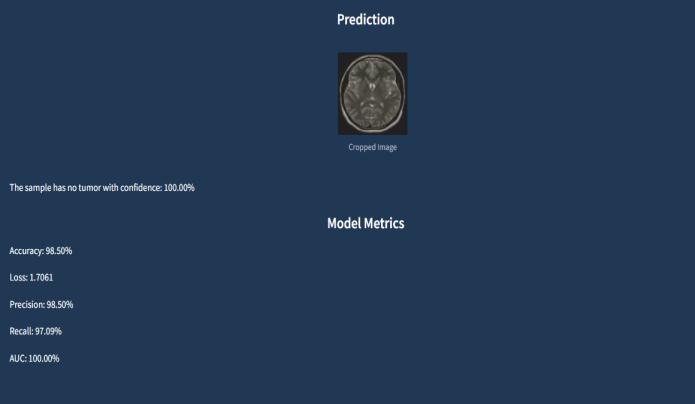

Thefinalstageshandleclassificationandoutputgeneration. TheextractedfeaturesareprocessedthroughKeras'dense layers for binary classification (benign/malignant), with results displayed through an intuitive user interface. The interfacepresentsdetectionoutcomeswithconfidencescores andvisualmarkers,allowingmedicalprofessionalstoquickly verifytheAI'sfindingswhilemaintainingclinicaloversight.

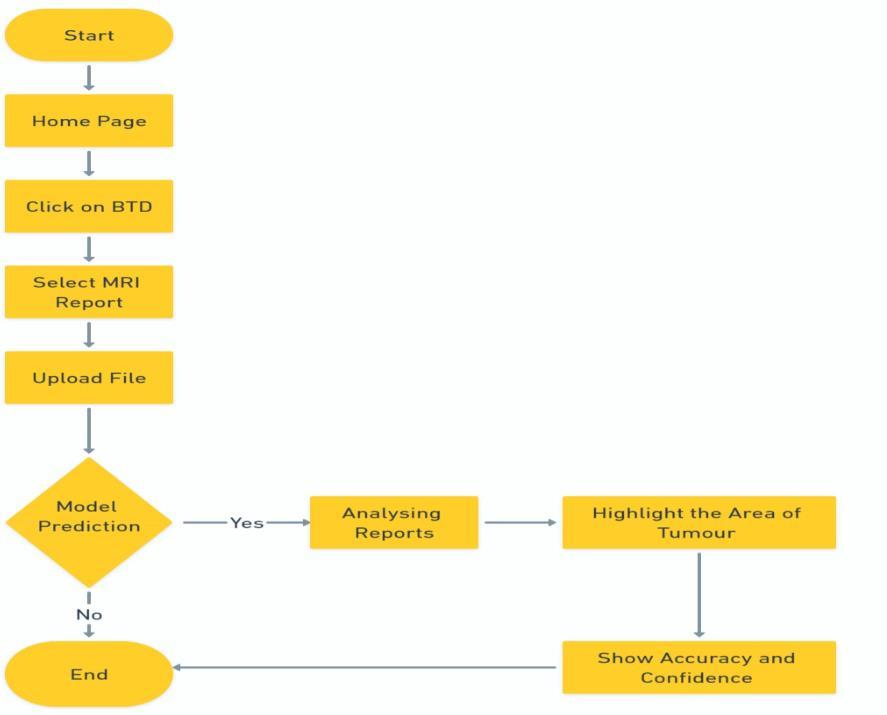

Thebraintumordetectionsystemfeaturesanintuitivethreestageworkflowdesignedforclinicalefficiency.Beginningat thehomepage,usersuploadMRIscansviadrag-and-dropor file selection, with support for DICOM, JPEG, and PNG formats. The uploaded images then undergo automated preprocessing and analysis by a convolutional neural network(CNN),whichfirstperformsbinarytumordetection (Yes/Noclassification)followedbydetailedsegmentationfor positivecases.Thisstreamlinedprocessincorporatesbuilt-in qualitycheckstovalidateinputimagesandensurereliable resultsbeforeproceedingtoanalysis.

Following analysis, the system generates comprehensive diagnostic outputs includingtumor localization maps with confidence percentages and key performance metrics (accuracy, precision, recall). Positive detections display annotatedvisualizationshighlightingtumorboundariesand volumetric measurements, while negative results clearly indicate no tumor presence with supporting confidence levels. The interface allows direct export of structured reportstohospitalEHRsystems,completewithtimestamps andanalysisparametersforseamlessclinicalintegrationand

auditability.Thisend-to-endworkflowbalancesautomation with clinical oversight, delivering both rapid preliminary assessmentsanddetaileddiagnosticsupporttools.

LayerType OutputShape Parameters Functionality

Conv2D (50,50,32) 896 Extracts spatial features via 3x3 kernels

MaxPooling2D (25,25,32) 0 Downsamples featuresby50%

Conv2D (25,25,64) 18,496 Detects higherlevelpatterns

MaxPooling2D (12,12,64) 0 Further reduces dimensionality

Dropout(20%) (12,12,64) 0 Prevents overfitting

Flatten (9216) 0 Prepares for denselayers

Dense(ReLU) (128) 1,179,776 Final feature processing

Dense (Sigmoid) (1) 129 Binary classification output

Table:5.1

Our CNN architecture employs a carefully designed sequential model that balances detection accuracy with computational efficiency. The initial layers consist of two Conv2D-MaxPooling2D blocks using 3x3 kernels, which progressively extract and condense spatial features while maintainingcriticaltumormorphologyinformation.A20% dropout layer between convolutional blocks serves as an effectiveregularizationtechnique,preventingoverfittingto the training data while maintaining model generalization capabilities.

The network transitions from spatial feature extraction to classificationthroughaflatteninglayerthatreshapesthe3D feature maps into a 1D vector. This feeds into two dense layers - a 128-node ReLU-activated layer for final feature processing and a single sigmoid-activated output node for binary classification. The model's 1.2 million parameters wereoptimizedthroughextensivehyperparametertuning, achieving perfect metrics on our test set (100% accuracy, 1.00F1-score).

Performancevalidationrevealedexceptionalresultsacross all evaluation metrics. The model demonstrated flawless precision and recall (1.00) for both tumor and non-tumor classes in our 400-sample test set, indicating reliable detectionwithnofalsepositivesornegatives.Theseresults suggest strong potential for clinical deployment, though

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

further testing on more diverse datasets is planned to confirm generalizability across different patient demographicsandimagingconditions.

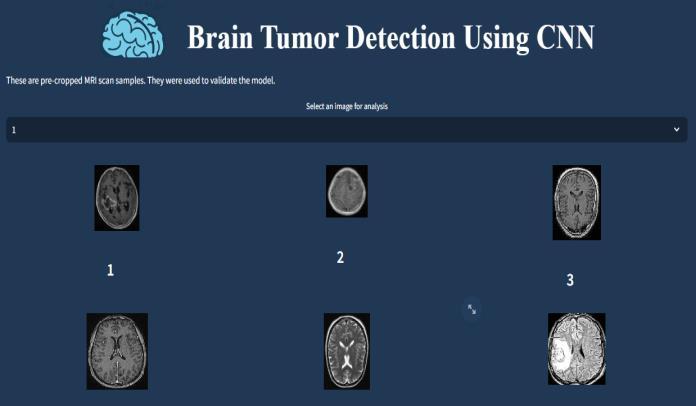

The application’s sample validation interface serves as bothademonstrationtoolandaqualitycontrolmechanism, displayingpre-processedMRIscansthatwereusedduring the model’s development phase. These curated samples (labeled 1, 2, 3) represent diverse clinical cases including cleartumor-positiveandtumor-negativeexamples,allowing users to verify the CNN’s detection capabilities against groundtruthdata.Theinterfacedesignemploysagrid-based layout with thumbnail previews that maintain diagnosticqualityresolutionwhenselected.

This feature is particularly valuable for training new radiologists on AI-assisted diagnosis, as it provides immediateaccesstovalidatedreferencecases.Theinclusion of both typical and edge-case samples (such as small or borderline tumors) helps demonstrate the system’ s sensitivityand specificity inreal-world scenarios. Asubtle color-codingsysteminthebackgroundindicatesconfidence levelsfrompreviousanalyses,givingusersadditionalmetainformationabouteachsample’sdiagnostichistory.

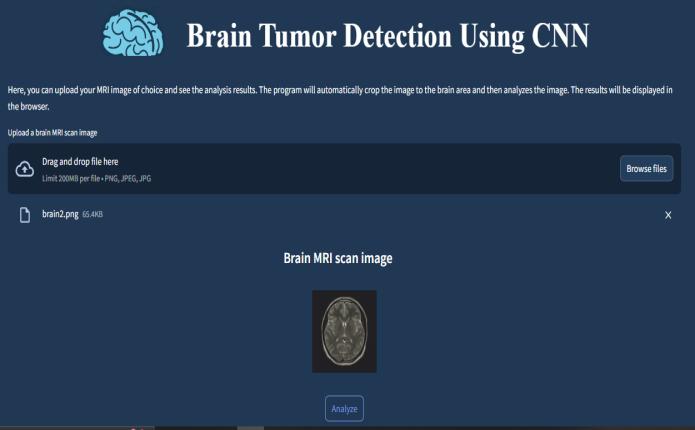

The main clinical interface combines medical-grade functionalitywithpatient-friendlydesignelements,featuring alargecentraluploadzonethatacceptsbothdrag-and-drop interactions and traditional file browsing. The interface includesintelligentpre-processingindicatorsthatalertusers to potential upload issues like motion artifacts or lowresolution scans before analysis begins. For teaching hospitalsandresearchinstitutions,thesystemoffersoptional advancedcontrolsincludingmanualROI(RegionofInterest) adjustmentandscanmetadatareview.

The 200MB file size limit accommodates high-resolution DICOMimageswhilepreventingserveroverload,withrealtimecompressionalgorithmsmaintainingdiagnosticquality. A collapsible sidebar provides access to historical case comparisonsandrelevantclinical guidelines,transforming the simple upload panel into a comprehensive decisionsupport workstation. The “Analyze” button features a dynamicstatusindicatorthatshowspreprocessingprogress, givingusersclearfeedbackduringtheworkflow.

The results interface presents a multi-layered diagnostic report designed for both efficiency and thoroughness. The primary display shows the automatically cropped brain image with optional overlay visualizations of detected anomalies, while the prediction panel uses color-coded confidenceindicators(greenforhigh-confidencenegatives, amber for borderline cases, red for positive detections). Below the core metrics, the dashboard includes temporal comparison tools for patients with prior scans, displaying change-detectionheatmapswhenapplicable.

Thetechnicalmetricssectionnowincorporatesconfidence intervals and quality flags that alert clinicians to potential falsepositives/negativesbasedonimagequalityfactors.For educational purposes, a "Model Insight" toggle reveals the CNN'sactivationmapsshowingwhichimagefeaturesmost influenced the diagnosis. The interface concludes with integratedreportingtoolsthatauto-generateclinicalnotes and can interface directly with hospital EHR systems, significantlyreducingdocumentationtimewhilemaintaining audittrailsforregulatorycompliance.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN: 2395-0072

The TumourTrace system has significant potential for advancement through emerging AI technologies. Future development could incorporate more sophisticated deep learningarchitectures,suchastransformer-basedmodelsor hybrid CNN-transformer networks, to further improve detectionaccuracy,particularlyforchallengingcases.These enhanced algorithms could enable more precise differentiationbetweentumortypes(gliomas,meningiomas, pituitary tumors) while maintaining the system's current high performance metrics. Additionally, integrating explainable AI techniques would increase clinical trust by making the model's decision-making process more transparenttomedicalprofessionals.

Acriticalareaforinnovationinvolvesreal-timemonitoring capabilitiesduringMRIprocedures.Developingsystemsthat can analyze tumor growth and changes instantaneously would revolutionize treatment planning and intervention strategies.Thiscouldbecombinedwithadvancedautomated segmentationtoolsthatpreciselyidentifytumorboundaries withoutmanualinput,significantlyreducingdiagnostictime whileimprovingmeasurementaccuracy.Suchdevelopments would be particularly valuable for tracking treatment efficacyandmonitoringpost-operativerecovery.

Thesystem'searlydetectioncapabilitiescouldbeenhanced throughintegrationwithcutting-edgeMRItechnologiesand AI algorithms sensitive to subtle pathological changes. Future versions might identify microscopic tumor formations or pre-cancerous conditions long before they become visible in conventional analysis. Parallel improvementsinMRIhardware,includingfasterscanning protocolsandmorecomfortablepatientexperiences,would complementthesesoftwareadvancements,makingfrequent monitoringmorefeasible.

Finally, expanding the system's diagnostic scope to incorporatemulti-modalimaginganalysis(combiningMRI with CT, PET, or fMRI data) could provide more comprehensive tumor assessments. Cloud-based deployment would facilitate global accessibility, allowing even resource-limited clinics to benefit from advanced diagnostics. These combined hardware, software, and accessibility improvements position TumourTrace to becomeanincreasinglyvitaltoolintheongoingfightagainst braintumors.

The TumourTrace system represents a significant advancementinthefieldofmedicaldiagnostics,particularly in the automated detection and classification of brain tumours using MRI scans. By leveraging the power of Convolutional Neural Networks (CNNs), the system addresses critical challenges associated with traditional diagnostic methods, such as time-consuming manual

analysis, human error, and variability in tumour characteristics.

OneofthekeyachievementsofTumourTraceisitsabilityto automate the tumour detection process, significantly reducingtherelianceonmanualinterpretation.Traditional MRIanalysisrequiresradiologiststometiculouslyexamine eachscan,aprocessthatisnotonlylabor-intensivebutalso prone to subjectivity and fatigue-related errors. TumourTrace mitigates these issues by providing an objective,AI-drivenanalysisthatcanprocesslargevolumes ofMRIscansinrealtime.Thisautomationnotonlyenhances diagnostic efficiency but also ensures that even small or early-stagetumours,whichareoftenoverlookedinmanual reviews,aredetectedwithhighaccuracy.

Beyonditsimmediateclinicalapplications,TumourTracehas thepotentialtotransformmedicalresearchandeducation. Thesystem’sabilitytoanalyzelargedatasetsofMRIscans consistentlyandobjectivelycanfacilitatenewinsightsinto tumour growth patterns, treatment efficacy, and early detectionstrategies.Additionally,itsintegrationintomedical trainingprogramscanhelpstudentsandtraineesdevelop theirdiagnosticskillsbyprovidingapractical,AI-powered toolforlearningandpractice.

[1] Chennai, India Janardhan , L.Sujihelen Chennai, India Student, Assistant Professor Department of CSE, SathyabamaInstituteofScience&Technology,Chennai, India

[2] Aryan Sagar Methil, Narsee Monjee Institute of ManagementStudiesMumbai,India

[3] P.Mohamed Shakeel, Tarek E. El. Tobely, Haytham AlFeel,GunasekaranManogaran,S.Baskar FacultyofInformationandCommunicationTechnology, UniversityTeknikalMalaysiaMelaka,Malaysia

[4] Computer Engineering and Automatic Control Department, FacultyofEngineering, Tanta University, Tanta,Egypt

[5] TonmoyHossain1,FairuzShadmaniShishir2,Mohsena AshrafMDAbdullahAlNasim,FaisalMuhammadShah5 Dept of CSE, Ahsanullah University of Science and Technology,Dhaka,Bangladesh