Welsh National Opera trusts wireless solution from Riedel Bolero

Needing a new in-house communication system, Welsh National Opera (WNO) recently invested in a full Riedel Bolero digital wireless intercom system, specified by Autograph Sound. The prestigious company is one of the most prolific operatic ensembles in the UK, with a national and international touring schedule that averages 120 performances per year.

“We spend around 5-7 weeks, twice a year, at our home in the Millennium Centre, producing our operas. From there, we head off on tour for around 7 weeks at a time,” explains Benjamin Naylor, Head of Lighting and Sound at WNO. “We’re on the move a lot and require a communication system that works for us, not against us.”

Based at Cardiff’s landmark Wales Millennium Centre, WNO’s team had been using an outdated digital wireless system before the upgrade. It had served them well for around seven years but had run its course, as upgrades and maintenance began to incur considerable costs. “The old system was liable to a lot of interference on the line, and from a health and safety point of view we needed a system that provided clear communication,” explains Naylor. “Range is also important for us – our team covers a lot of ground.”

Autograph recommended a wireless system from Riedel, a clear choice that fitted WNO’s critical needs.

“Welsh National Opera stressed the fact that they wanted a system that was quick and efficient to set up when they go on tour,” explains Nacho Lee, UK Sales Manager at Riedel.

“Clarity of communication was the second most important requirement. Riedel Bolero ticks several boxes for systems that move around a lot: clear communication, ease of use and incredible range, no matter the venue. So, we recommended Bolero.”

The system was commissioned in August 2022, with both Riedel and Autograph in attendance to ensure WNO were confident in setting up and using the Bolero system. “In total, the system consisted of three wireless antennas with PSUs, fifteen Bolero belt packs, three five-bay chargers with rack-mount kits, an NSA-002A interface box with rack-mount kit and the standalone licence for the system. A network switch, for communication and for supplying PoE to the antennas and the NSA unit, was also part of the package,” explains Will Cottrell, Technical Sales Engineer at Autograph.

Once installed, the system was put to the test both at home and on tour. “We implemented a soft introduction of the system to ensure everyone was confident using Bolero,” explains Thomas Roberts, Lighting Supervisor at WNO. “During the first season, we configured the Bolero system like our old system before rolling out more features throughout the company.”

“We use 2-4 channels at a time, with roles defined per department plus separate chat channels alongside the main channel, to avoid overloading any one channel,” continues Roberts. “Not only does this system provide the best audio quality I’ve ever experienced, but it’s also much better at dealing with distances between team members than our previous system. It’s like having a normal conversation with someone – you can hear the inflection in people’s voices so it’s easy to determine who is speaking.”

The intuitive user interface of the Bolero system has also improved WNO’s communications. “The user experience couldn’t be simpler. The screen is easy to understand, and the buttons are much nicer than competitors’ offerings,” confirms Roberts. Stage Manager Amy Batty echoes these sentiments and adds, “The ergonomics are good and the buttons are intuitive and easily accessible. You forget you’re wearing it and it becomes part of the day, part of the routine – there’s no thinking, we just use it.”

One of the most crucial requirements of the system was its portability. “With the new Riedel system, we just pack the system in a flight case and take it with us,” explains Roberts, who is responsible for maintaining and managing the communications systems. “Once we arrive at a venue, we unpack the system, install two antennas and we’re ready to go.”

The project has been a success for all parties involved, and WNO can now rely on the system to support them throughout their touring season. “It was great to work with a company as well-known and historic as Welsh National Opera,” reflects Cottrell of Autograph. “The end result of this project is a very reliable, clear and portable system which allows the same excellent levels of communication whether at home in Cardiff or on tour.”


Telemetrics’ robotic camera control integrated at the King Hussein Mosque

Jordan’s largest and most prominent mosque regularly broadcasts its religious services to worshippers at home; this new integration of robotic camera control aims to capture not only religious services but also visitor flows and other events

The live TV studio project was funded by the Royal Hashemite Court (RHC), which also managed the tendering process and supervised the commissioning of the project. The robotic systems officially went live on March 20, 2023.

The King Hussein Mosque, the largest and most prominent mosque in Amman, Jordan’s capital city, has a fully functioning TV studio that provides live broadcasts of important events such as Friday prayers, the holy Eids and Ramadan. That studio and the building’s main prayer room now include robotic camera systems from Telemetrics, an innovator in robotic camera control.

The new equipment consists of five PT-HP-S5 robotic pan/tilt heads installed throughout the mosque and an RCCP-2A control panel to operate and adjust the cameras as needed. The project also included installation of a Telemetrics PT-WP-S5 weatherproof camera system on the building’s roof for unique POV shots.

“The five cameras are distributed inside the main hall of the mosque, recording live video footage from different angles without distracting worshippers from performing their religious duties,” said Omar Hikmat, Founding Partner and CEO of local systems integrator HEAT. “This is very important in Islam, to show respect and dedication to God.”

Hikmat added that the mosque staff have found the equipment easy to use and like the results it is providing.

“The client is very happy, and all of the equipment is working very reliably.”


For those looking to improve operational efficiency while reducing costs, the PT-HP-S5 Robotic Pan/Tilt Head, with its Advanced Servo technology, is the ideal choice: it offers smooth motion, whisper-quiet operation and improved production values for venues like the King Hussein Mosque. The rugged construction of the PT-HP-S5 is well suited to daily use in applications that require support for mid-to-heavy payloads of up to 40 pounds.

In addition to a variety of features for programming and launching creative and consistent camera moves, the RCCP-2A Robotic Camera Control Panel offers Telemetrics’ exclusive reFrame® Talent and Object Tracking software, which uses artificial intelligence algorithms to keep the talent in frame automatically at all times.


New appointment at Vizrt Group to reinforce Channel Program

Vizrt Group appoints Jenny Isaksson as new Director of Channel Development; she is a proven channel strategy expert and her mission will be to best support the organization’s growing global channel and, ultimately, customers

Vizrt Group, the company specializing in software for live video production, has announced the hiring of Jenny Isaksson as Director of Channel Development. The move comes as the company focuses on additional investment in, and support for, its channel partners and customers.

Isaksson brings several years of experience and a depth of knowledge to Vizrt Group. She previously held roles at Nordic Capital as an Operations Manager where she was focused on channel strategy, commercial excellence, value creation planning, and portfolio management, and at Bain and Company as a manager across growth strategy, financial planning, customer satisfaction, change management, commercial due diligence, and more.

Isaksson will work alongside the recently appointed Channel Sales leadership team, which includes Paul Dobbs (APAC), Thomas Thal (EMEA) and Ed Holland (AMECS), who are focused on customer relations and on growing and developing their respective regions. Together with the leadership team, Isaksson will create a comprehensive channel strategy across the territories to ensure our channel partners are equipped with the best experience alongside the best technology to support Vizrt Group’s global end users and customers.

“Half of Vizrt Group’s business is conducted in collaboration with our valued partners. Our appointment of a strong channel leadership, alongside the addition of Jenny, demonstrates our focus on expanding and growing our joint channel business. Her expertise allows us to take our existing strategy to new heights, leveraging untapped opportunities and establishing even stronger alliances with the channel and our customers,” states Daniel Nergård, Chief Revenue Officer, Vizrt Group.


NEWS - BUSINESS & PEOPLE

UEFA CHAMPIONS LEAGUE

In live broadcasting, football is one of the most demanding sports, and TVN Live Production is regarded as a trendsetter. Bastian Berlin, Head of Sales at TVN Live Production, talks about how the company prepares for the final of one of the most important sporting events of the year.

TVN LIVE PRODUCTION

You covered several UEFA games and you will be producing / filming / broadcasting the final match; how does TVN get ready for a major event like this? Which vehicles and with which equipment are you planning to take with you?

In the course of the current season, TVN LIVE PRODUCTION realised more than 10 UEFA Champions League host broadcasts plus more than 15 UEFA Europa League and Conference League host broadcasts. Concerning UEFA club unilateral production abroad, we additionally realised more than ten matches this season. Thus, our staff, engineers and production planners have extensive experience in the realisation of UEFA productions.


The production of a final is always very special. For a final, the UEFA regulations (parking plan, electricity certificates, accreditation, etc.) are even stricter because numerous OB vans from all over Europe have to be coordinated at the TVC.

Two customers from the German-speaking region have commissioned us with

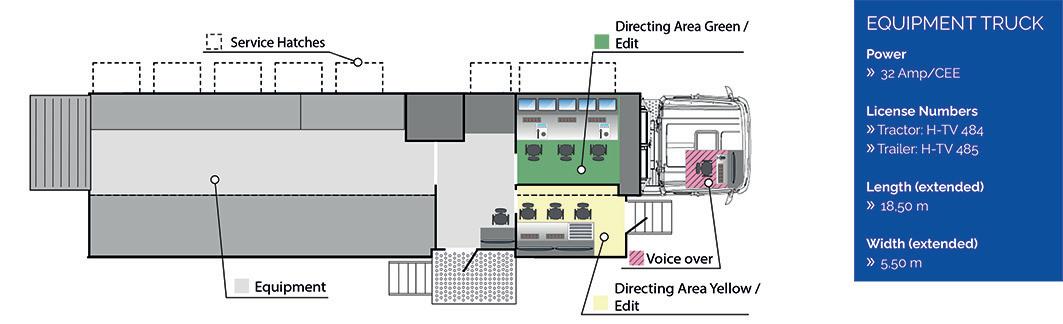

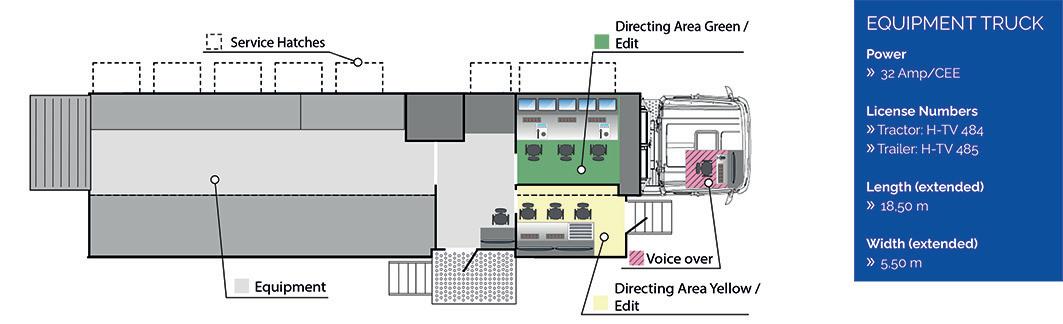

their broadcasts at the UCL Final. Our TVN-Ü6 is the perfect OB van to realise two productions in parallel because it has two control rooms of the same size.

Our plan is to travel to Istanbul with one OB van and one set-up truck. For both clients, we will have a total of about 10 cameras in use.

How and with which technology are you planning to broadcast the signal?

Both broadcasters transmit in 1080i50. The signals we generate are delivered both via fibre optics and via satellite uplink to the customers’ broadcasting centres, and from there to the end devices.


Which equipment will your team deploy at the venue?

The TVN-Ü6 with mixers, etc.

How large is the team you will deploy in an event like this?

We will be on location with a TVN crew of more than 30. In addition, there are the contact persons of our clients and the on-air staff.

Can you describe for us how your workflow works? What kind of tech, tools and protocols does it include?

On board the TVN-Ü6: SONY video mixer and cameras / LAWO Audio Pulse.

To transmit the signals from the set to the OB van, we use stage boxes. As individual cabling is not allowed for the production of finals like this, we use UEFA’s fibre-optic pre-cabling.

Are you planning to use 5G for broadcasting or in any other way? If so, how?

We are not using 5G for the Istanbul final.

In the coming months, we are planning to use 5G technology for productions such as music festivals.


We are seeing more and more immersive experiences in sports broadcasting, primarily in football. Are you planning any tests in this area in the future?

For the Bundesliga, we regularly implement AR elements in the video signals of our TVN Live Copter, in collaboration with the company Netventure. Only recently, we used this solution during the production on match day 34 in Dortmund.

Have you produced other events this year? Which ones? And are you planning to produce any in the coming months? Which ones?

Apart from TVN’s regular show productions and music festivals, our operational schedule is dominated by the finals at the end of the football season.

Matches in recent weeks and for the rest of the current season:

1. DFB Cup Final Women | 18.05. in Cologne / RheinEnergieStadion – TVN-Ü1

2. Bundesliga, 34th match day / championship decision | 27.05. in Cologne / RheinEnergieStadion – TVN-Ü6; in Dortmund / SIGNAL IDUNA PARK – TVN-Ü5

3. UEFA Europa League Final | 31.05. in Budapest / Puskás Aréna – TVN-Ü4

4. DFB Cup Final | 03.06. in Berlin / Olympiastadion – TVN-Ü5, TVN-Ü4 & TVN-Ü3

5. Bundesliga Relegation second legs | 05.06. / 06.06. – TVN-Ü1 & TVN-Ü3

6. UEFA Europa Conference League Final | 07.06. in Prague / Eden Aréna – TVN-Ü4 & SNG-A

7. UEFA Champions League Final | 10.06. in Istanbul / Atatürk Olimpiyat – TVN-Ü6

8. UEFA Nations League Final | 18.06. in Rotterdam / Stadion Feijenoord – TVN-Ü5


VIRTUAL PRODUCTION

How virtual production will change broadcasting

To talk about virtual production is to talk about Unreal

Virtual production is at an implementation stage. Studios, integrators and broadcasters are experimenting with solutions built on green-screen techniques, video game graphics engines and large-format LED screens with a fine pixel pitch. In this early stage of expensive, inaccessible solutions, a clearing in the woods is appearing, because virtual production is beginning to mean Unreal. Epic Games’ proprietary graphics engine, which originated in the video game arena, has arrived to revolutionize our industry. The big brands in the broadcast market, such as Vizrt, Aximmetry, Brainstorm, Zero Density and Pixotope, are kneeling at its feet, and so are the professionals. How does this solution fit into broadcasting? How will virtual production techniques grow? Why is creating multimedia content with virtual techniques so important?

Rafael Alarcón and Asier Anitua answer these questions. Rafael Alarcón is the Broadcast Design Coordinator at Movistar Plus, where he is also in charge of generating and supervising the network’s virtual sets. He is currently one of the few experts in Unreal Engine for broadcast. Asier Anitua is a Business Development Manager and a great connoisseur of the virtual production market.


UNREAL

What technical infrastructure are you working on at Movistar Plus?

Rafael: At Movistar Plus we work with Vizrt. It is part of our graphics ecosystem, used both for developing virtual sets and for all the graphics work that has to run in real time, such as sports broadcasts, programs and broadcast branding.

Currently, we have a virtual set with three cameras, two of them robotized with Vinten mechanical tracking, while the third is a six-axis robotic arm that works as a crane. In addition, we have another studio with physical sets that is equipped with a robotized, sensorized telescopic crane with Mo-Sys technology for displaying augmented reality elements.

How are virtual production infrastructure, content design, and implementation teams made up?

Rafael: There is a scenography team that is responsible for designing the sets. Then there is my team, which is in charge of the implementation in Vizrt Artist, version 4, and of preparing the features the virtual set needs: screens, AR elements and scene controls, all so that the set can be operated easily from Vizrt ARC. Post-processing, mask adjustments and depth-of-field adjustments are also made so that the set responds correctly to the robotic cameras.

Once all the adjustments are finished, it is time to operate the virtual set, which requires only four operators: camera and lighting control, vision mixer, sound technician and a Vizrt ARC operator. Beyond that, imagination can run wild when it comes to “tweaking” the virtual set.

Nowadays, many manufacturers are strongly committed to developing virtual production software solutions, each with its own features and characteristics. For which projects is each of these solutions most suitable?

Rafael: This is something I’ve been studying for a while now. All virtual production software has the same purpose: to show scenarios in real time with the best possible quality and to achieve an integration between real and virtual worlds that comes as close as possible to the perception of something real by viewers.

Now there is going to be a big change in virtual production. Unreal is developing a tool for real-time motion graphics. It is known as Project Avalanche and is set to become a substitute for After Effects.

We live in an age of immediacy, so rendering times have already become obsolete. North American network ESPN has already integrated it into a production, working with Epic Games to broadcast the 2023 edition of the Rose Parade. In this broadcast for ABC, ESPN used Project Avalanche to develop a graphics solution that covers all graphics production tasks, from design to final broadcast.

The key has been to take Unreal’s ability to create video game interface graphics, commonly referred to as the HUD (heads-up display), and move that system, in adapted form, into virtual production tools: motion graphics for headers, curtains, labeling and so on. This makes it possible to create, deploy and modify graphics in real time, with no rendering time at all.

What is the origin of Unreal’s expansion in broadcast?

Rafael: The basis of this ability lies in the way a video game works. In that format, once the data has been loaded, it is ready to run in real time.

Epic Games put its graphics engine, a system that had reached high levels of photorealism, at the service of other industries. That was when interest from broadcasters and postproduction companies started to grow.

Today, Unreal’s photorealism has been brought into these broadcast tools, thanks also to the fact that those industry players have fostered its growth by helping Epic Games develop it.

Given the competition Unreal faces, why has it triumphed so clearly when there are other engines on the market?

Rafael: For me, the reason behind Unreal’s success lies in the optimization it offers when creating environments and your own designs within the software. In addition, Unreal Engine 5 has two great features. Lumen enables dynamic lighting that does not need to be ‘cooked’ (baked), that is, it requires no precomputation time. Then there is Nanite, the modeling part, which makes it possible to deploy a virtually unlimited number of polygons, up to the point where the GPU’s processing capacity is exceeded, of course. But Unreal also handles all these polygons differently. The key is how they are calculated and then interpreted: it works at the level of pixels rather than whole geometry, so it only calculates the part that can be seen. Until now, in all real-time technologies, whenever there was a chair, the whole chair, not just the portion visible on screen, would be calculated. Unreal still does this, but it only optimizes the look of the visible portion.

So, if we think about broadcast production environments, the key that Unreal provides here is the ability to optimize workflows and save time, right?

Rafael: Definitely. Time is of the essence. But it is also true that Unreal offers you a lot in a very short time, and the initial learning curve is not a steep one. Of course, you then have to keep working to make the result better. That is where the process becomes harder, and that is precisely where much of the proprietary engine software on the market falters. Unreal, therefore, gives you the tools to become more creative, which is ultimately everyone’s goal: for imagination to prevail beyond the features of the underlying technological infrastructure.

As you have already pointed out, most proprietary graphic systems are adding integration capabilities with Unreal. Is this the way to adapt to the trend?


Rafael: That’s right. Because they have to recognize the potential that Unreal has. They really don’t have a choice.

Asier: Certainly, but there is another factor that is also making all manufacturers position themselves in support of this graphics engine: there is already a huge library of templates and assets available. In fact, it is getting bigger every day, because proprietary developments can also be added to that library and, in addition, they can be monetized. Let’s say that for 20 dollars you can have a Formula 1 car, whereas developing it from scratch would cost many hours of work. Imagine that I am a client who wants to develop a virtual set.

As we said, all manufacturers have to get on this bandwagon, but on the other hand these manufacturers will guarantee integration into broadcast environments.

Epic Games is not going to get into integrating Unreal with specific technologies; it is the traditional providers who will be responsible for offering you the best tool for each production.

From a business point of view, it also greatly widens the fields of specialization. For example, an artist who works on a broadcast graphics engine is going to be specialized only in that area. As Unreal specialists, however, they can easily adapt to many other sectors, from architecture to video game design.

That is one of the biggest challenges at present. Is there already a supply of professionals specialized in Unreal?

Rafael: It’s still hard to find people who specialize in Unreal. The most obvious reason is that it is a very new tool and there is still little broadcast-specific training for it. TV channels are only now starting to trust Unreal.

Asier: There is a lack of broadcast-specific training now, but this will change. In fact, universities are already incorporating these tools into their training programs. TSA has developed the virtual production studio of Universidad Rey Juan Carlos at the Vicálvaro campus. We are also pushing in this direction in corporate training programs, such as the Telefónica Booth space with Brainstorm’s Edison virtual production solution. In this way we want to generate a pool of talent.

We have followed your latest integrations and we dare to say that you are betting strongly on the Aximmetry system. What is the reason for this preference?

Rafael: Actually, because of its accessibility. There was a time when all the other systems were closed. Aximmetry immediately opened up its system, at no greater cost than a watermark. That was in late 2019.

It was precisely then that I wanted to try tools for working with Unreal, but the truth is that there was nothing available. When I discovered Aximmetry at that same time, a whole world opened up for me. I had a playout where I could check whether everything I did in Unreal worked without having to program it into the system itself, which you can also do, but then you get into a much bigger mess. This was an easier way to do it.

On the other hand, there is the price. Aximmetry is the cheapest of them all. It features a very stable and accessible pricing policy.

Regarding support, I also found it very accessible. In my case, I contacted them to resolve some questions and had an answer in less than 24 hours.

Asier: They are really accessible, both in terms of cost and support, and the quality they offer is the same as the others. Their way of standing out from the rest is to be more affordable. They are moving towards a business model that gives everyone the opportunity to test their solutions; it is a long-term business model that seeks loyalty.

However, if we look at other ranges, we find highly professional solutions that offer guaranteed results, such as Brainstorm or Vizrt.

Rafael: Of course, each of these applications is designed for a specific purpose. If you’re going to work at a TV channel with a workflow that involves a lot of people, you will need a hub that everyone can work on without interfering with the person next to them. In solutions such as Vizrt, this is guaranteed. The same is true of Zero Density.

At Movistar Plus, for example, we are interested in Vizrt because we use it as the basis for our everyday work. On top of that layer of routine work, we also want to create a layer of innovation on Unreal. If there are 15 of us in the graphics area, I do not want all of them to learn Unreal, because the cost of learning it is quite hefty. However, the fact that these people can continue working in the same way but with Unreal in the background turns out to be a very good arrangement. It is a hybrid approach that is being implemented in tools like Edison, and also in Vizrt. But many others, like Zero Density, Aximmetry or Pixotope, work natively with Unreal. That means that at a new TV channel with a team of 15 graphic designers, if you integrate a native Unreal solution, all 15 of them must learn it. So it ends up depending on what your specific workflows are.

LED displays or green screens?

Rafael: One thing I see with XR screens is that they spill their lighting onto the filmed subjects, and that is fine. But, at present, the screens still generate a moiré effect that has to be solved with a blur, a technique that often produces an unrealistic result.

Asier: That will eventually be solved, because it is a matter of syncing image capture with the screen refresh. A technical solution is available.

The big difference between an XR screen and a green screen is that the actor or presenter feels truly embedded in the scene. The bad news about the green screen is that you have to look at the return monitor to see where you are and what you are doing.

Rafael: Another thing you can’t do on LED screens, and this feature is really powerful, is use a virtual camera, unless it is through direct clipping. They are trying to introduce an iPhone technology that can perform the clipping based on brightness or contrast parameters. Nowadays, virtual cameras on a green screen can do the clipping, insert a character and also let you perform a camera flyover. You can’t do that on an XR screen.

Asier: Of course, but that is only for the time being. The need to save computing power by loading only the parts of a virtual scenario that are going to appear on camera exists only at present. Once future GPUs overcome that challenge, it will no longer be necessary to limit this so much. Camera movements will therefore be freer and will not be constrained by blank spaces on XR screens. That is why I say that LED displays will end up becoming the main technology for virtual production. Most TV channels will have their LED-screen set equipped with Unreal technology, because the screens will keep getting cheaper and Unreal assets will also gradually become more affordable. Building a physical set, on the other hand, will not make sense, because raw materials will keep getting more expensive.

Rafael: Over time, tracking systems will also disappear. Viz Arena was used in LaLiga during the pandemic. A whole virtual audience in stadiums was created. This is a very expensive thing, but the technology is heading in that direction. The camera pans throughout the whole scene and calculates where the audience has to be placed without a need for broadcast reference systems.


Asier: Indeed. But some solutions already come with that. Take Edison, from Brainstorm: with this program you can actually do the production with an iPhone. With that smartphone, which has a built-in LiDAR (Light Detection and Ranging) sensor, you can move through a virtual environment as if it were a Steadicam, for the 1,500 euros the phone costs. Everything is going to change over time as technology evolves and becomes democratized.

In addition to the democratization you mentioned, where will virtual production technology evolve to?

Rafael: The broadcast world is geared towards virtual production. The future lies in cloud hosting and everything will be made available as software as a service.

Asier: At Telefónica we are already working on offering different cloud hyperscalers that support GPU workloads. That processing power has not yet been developed, and these virtual production environments will always need a lot of GPU processing. In addition, the cloud will eventually become much more powerful than it is today. In the Telefónica testing lab we have a rig of 4K cameras that record a full 360 degrees of everything in between and then generate a real-time render of a person. We haven’t taken these capabilities into Unreal yet, but that will be the next step. With automation, actors can be scanned in real time and then live-streamed into any Unreal environment. That is the future ahead of us.

Rafael: Another future for virtual production will be the metaverse. In 2017, The Future Group did a show called “Lost In Time.” The idea behind it was that viewers would go beyond their usual role and become participants in the story. It was a video game that came very close to what our notion of the metaverse might be today. The idea is to bring real-life experiences into homes while also offering interaction. This could attract new audiences to content.

And is there really demand from viewers to enjoy content that is already accessible and good for consumption through technologies that run parallel to the narrative of the content they are watching?

Asier: Think about 80 years ago, when you were a regular radio listener and then television came along. It is the same situation: in the end, content creators can serve content to their different audiences in different formats. Those who want to hear content, through radio or podcasts; those who want to see it, through television or cinema; and those who want to experience it must turn to the world of video games or the metaverse.

Rafael: A great example of this need for closeness to content is the success of Twitch. People are on that platform because viewers interact with the presenter.

Asier: Of course, but that is what TV becomes on an OTT platform. That is, it carries over linear broadcast media and adds presenter-viewer interaction, but within a digital platform. The thing is that this is the only context in which you can really do something like that; on traditional television it is highly complex, technically speaking.

In fact, there is an audience that only wants to consume content passively. But there is another type of audience, growing in numbers, that prefers to enjoy another type of content and is already used to consuming it.

Do you think that both types of audiences will always coexist or will the scales tilt in favor of one or the other?

Asier: Just as radio didn’t disappear, TV won’t either, but the revenue pie will become much smaller and much more fragmented.

Rafael: It actually happened with radio. All the advertising was there, and when television came, it took advertising revenue away from radio.

Asier: Indeed, history is repeating itself. On average, content consumption is 6 hours per day per person. Of that time, traditional broadcast gets only one hour. So traditional television, which really has the potential to exploit those 6 hours of viewing time, is in many cases losing it because it cannot innovate at the speed at which everything else is evolving. As a result, broadcasters are losing their share of the pie. To overcome this, they have to focus on content. But it is no longer worth making content for 20 million people. You’re going to have to make 300 pieces of content for 100,000 people.

There are three options: either you cut costs and democratize all the technical solutions behind that segmentation of content; or you produce much more at a lower cost; or else you go out of business.

Rafael: This is where virtual production comes in. People who build physical sets have to worry about handling them, maintaining them and renovating them; all of this can involve occupational risks. Those risks do not exist in virtual environments.

Asier: However, we must be honest. The truth is that physical sets still beat virtual sets when it comes to realism. But we’ll get to a point where there won’t be much difference anymore. At that point, we’ll say, what’s the point of building a set? I can just deploy some XR screens and be ready to go. This is how 90% of the world’s sets will end up.

UNREAL

TECHNOLOGY

Revolutionizing the media industry: Unleashing the power of virtualized environments in the cloud

By Jonas Michaelis, CEO Techtriq GmbH

In the fast-paced and ever-evolving landscape of the media industry, traditional approaches often struggle to keep up with the demands of real-time content creation and distribution. Challenges such as dynamic production requirements, scalability and flexibility needs, and the complexities of collaboration in remote work settings have become more prevalent. Virtualized environments offer a solution to these challenges. By running workloads in virtual machines, which emulate physical hardware, or in containers, which isolate applications on a shared operating system, they are transforming the media industry and enabling efficient use of computing resources.

VIRTUALIZED ENVIRONMENTS

These environments hold immense potential for media organizations seeking scalable, flexible, and collaborative solutions in an era defined by rapid change and digital innovation.

Virtualized environments offer a range of benefits for the media industry. First and foremost, they optimize resource utilization, allowing media organizations to make the most of their computing capabilities while reducing costs. Scalability and elasticity are also key advantages, enabling media companies to scale their operations with demand and absorb surges without disruption. Moreover, virtualized environments facilitate collaboration and remote work, overcoming geographical barriers and enabling real-time cooperation among dispersed teams.

Integrating advanced technologies into virtualized environments opens up even more possibilities for media professionals. Generative AI tools, such as OpenAI’s ChatGPT, have emerged as a game-changer in the industry. Combined with a media integration platform such as qibb, they offer numerous options for automating and optimizing media processes.

Challenges in the media industry

The media industry operates within a dynamic and fast-paced environment, where the demand for real-time content creation and distribution is ever-present. Media organizations face a range of challenges as they navigate this landscape, adapting to the evolving needs and expectations of their audiences. The industry requires the ability to respond quickly to emerging trends, breaking news, and changing audience preferences. Content creators and distributors must be able to generate and deliver content in real time, keeping pace with the constantly shifting environment.

Workloads can fluctuate dramatically, with sudden spikes in demand for specific events or breaking news stories. Media companies need infrastructure that can seamlessly scale up or down, allowing them to efficiently allocate resources based on demand. This agility ensures that they can handle increased workloads without sacrificing performance or incurring unnecessary costs during slower periods.
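Demand-driven scaling of this kind is usually expressed as a small control rule. The Python sketch below borrows the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × observed load ÷ target load). The function name, the 10% tolerance band and the 1 to 20 replica bounds are illustrative assumptions, not settings of any product named in this article.

```python
import math


def desired_replicas(current_replicas: int,
                     current_load_per_replica: float,
                     target_load_per_replica: float,
                     min_replicas: int = 1,
                     max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Proportional scale-out/scale-in rule in the style of the Kubernetes
    Horizontal Pod Autoscaler: desired = ceil(current * observed / target).
    All parameter defaults here are illustrative assumptions."""
    if current_replicas < 1 or target_load_per_replica <= 0:
        raise ValueError("need at least one replica and a positive target")
    ratio = current_load_per_replica / target_load_per_replica
    # Ignore small deviations so the system does not flap between sizes.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    # Multiply before dividing to limit floating-point rounding error.
    desired = math.ceil(current_replicas * current_load_per_replica
                        / target_load_per_replica)
    # Clamp to the configured bounds to cap cost and keep a minimum footprint.
    return max(min_replicas, min(max_replicas, desired))


# Breaking-news spike: 4 transcoder instances at 90% average load, target 60%.
print(desired_replicas(4, 90.0, 60.0))   # 6
# Quiet overnight period: 6 instances at 20% average load.
print(desired_replicas(6, 20.0, 60.0))   # 2
```

The upper bound is what keeps a sudden spike from turning into an unbounded cloud bill, while the lower bound keeps a minimal service running during slow periods.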

In addition, the need for remote work and for time- and location-independent collaboration became apparent during the global pandemic, if not before. Effective collaboration is vital to ensure seamless coordination among team members, regardless of their physical location. However, collaboration in such distributed environments can be challenging, with barriers in communication, file sharing, and project management.

Streamlining collaboration processes is essential to enable efficient remote work and effective team coordination. Media organizations need tools and systems that facilitate real-time collaboration, enabling seamless communication, file sharing, and version control. Overcoming these hurdles allows teams to work together seamlessly, maximizing productivity and efficiency.

By understanding and addressing the dynamic nature of media production, scalability and flexibility requirements, and the challenges of collaboration and remote work, media organizations can position themselves for success in this rapidly evolving industry.

Addressing the challenges: virtualized environments

To overcome the challenges faced by the media industry, virtualized environments have emerged as a transformative solution. These environments use virtual machines, which emulate physical hardware, or containers, which isolate applications on a shared operating system, offering scalability, flexibility, and efficient resource utilization. By decoupling software from the underlying hardware, virtualized environments give media companies the freedom to choose best-of-breed tools and services without being locked into a single vendor ecosystem.

This vendor-agnosticism is a critical aspect of virtualized environments that directly addresses the challenge of diverse tools and services from different vendors. This approach allows for seamless integration between different tools and services, streamlining workflows, reducing complexity, and enhancing interoperability.
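In software terms, vendor-agnostic integration is essentially the adapter pattern: workflow code talks to a neutral interface, and each vendor sits behind its own adapter. The Python sketch below illustrates this under stated assumptions; `StorageConnector`, `VendorAStorage`, `VendorBStorage` and `archive_clip` are hypothetical names backed by in-memory dictionaries, not the API of qibb or of any real storage vendor.

```python
from abc import ABC, abstractmethod


class StorageConnector(ABC):
    """Vendor-neutral interface that workflow code depends on (hypothetical)."""

    @abstractmethod
    def put(self, key: str, data: bytes) -> str:
        """Store data and return a URI for it."""

    @abstractmethod
    def get(self, key: str) -> bytes:
        """Fetch previously stored data."""


class VendorAStorage(StorageConnector):
    """Hypothetical adapter wrapping one vendor's storage; in-memory here."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> str:
        self._objects[key] = data
        return f"vendor-a://{key}"

    def get(self, key: str) -> bytes:
        return self._objects[key]


class VendorBStorage(StorageConnector):
    """Hypothetical adapter for a second vendor that namespaces keys by bucket."""

    def __init__(self, bucket: str) -> None:
        self._bucket = bucket
        self._blobs: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> str:
        self._blobs[f"{self._bucket}/{key}"] = data
        return f"vendor-b://{self._bucket}/{key}"

    def get(self, key: str) -> bytes:
        return self._blobs[f"{self._bucket}/{key}"]


def archive_clip(storage: StorageConnector, clip_id: str, data: bytes) -> str:
    """Workflow step that only knows the interface, never the vendor."""
    key = f"clips/{clip_id}"
    uri = storage.put(key, data)
    # Verify the round trip before reporting success.
    if storage.get(key) != data:
        raise RuntimeError(f"round-trip check failed for {uri}")
    return uri


for backend in (VendorAStorage(), VendorBStorage("newsroom")):
    print(archive_clip(backend, "0815", b"\x00\x01"))
```

Switching vendors means passing a different adapter; `archive_clip` itself does not change, which is the property the paragraph above attributes to vendor-agnostic environments.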

Virtualized system integration further reinforces the benefits of virtualized environments by consolidating disparate systems and services into a unified ecosystem. This integration eliminates the siloed approach that hampers scalability, resource optimization, and collaboration. By leveraging virtualized system integration, media organizations can achieve seamless interoperability, enabling professionals to access and utilize resources across systems, share and collaborate on projects in real time, and optimize resource allocation based on demand.

By integrating virtualized environments into their workflows, and by relying on vendor-agnosticism and virtualized system integration, media organizations can overcome these challenges. These transformative solutions empower media professionals with scalable, flexible, and collaborative environments, enabling them to meet the demands of the rapidly evolving media industry.

Media integration platforms: a future-proof workflow solution

One leading example in this field is qibb. As a cloud-based media integration platform, qibb helps media organizations streamline their workflows, collaborate seamlessly, and unlock new possibilities in content creation and delivery. By consolidating numerous tools and services into a unified environment, qibb eliminates the complexity and inefficiencies associated with working across multiple platforms, allowing media professionals to focus on their creative work and increase productivity. With qibb, teams can integrate their preferred tools and reduce the complexity of vendor-specific workflows.

With a growing catalog of connectors and prebuilt templates, qibb offers various features for working with virtualized environments. This allows media organizations to build and maintain integrations faster, more cost-effectively, and without vendor dependencies. The low-code approach of platforms like qibb simplifies automating and integrating media workflows, making it easier than ever to create efficient and scalable production processes.
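A low-code integration platform typically separates what a workflow does (a template stored as data) from how each step is carried out (connectors). The Python sketch below shows that split in miniature. The connector names, the template format and `run_workflow` are illustrative assumptions; they do not reproduce qibb's actual connectors or template format, and the connectors only manipulate strings instead of calling real services.

```python
from typing import Any, Callable, Dict, List, Optional

Context = Dict[str, Any]
Connector = Callable[[Context, Dict[str, Any]], Context]

# Registry of connectors by name; a template refers to steps only by these names.
CONNECTORS: Dict[str, Connector] = {}


def connector(name: str) -> Callable[[Connector], Connector]:
    """Decorator that registers a function as a named connector."""
    def register(fn: Connector) -> Connector:
        CONNECTORS[name] = fn
        return fn
    return register


@connector("ingest")
def ingest(ctx: Context, params: Dict[str, Any]) -> Context:
    return {**ctx, "asset": params["path"]}


@connector("transcode")
def transcode(ctx: Context, params: Dict[str, Any]) -> Context:
    # Placeholder: a real connector would call a transcoding service here.
    return {**ctx, "rendition": f"{ctx['asset']}@{params['profile']}"}


@connector("notify")
def notify(ctx: Context, params: Dict[str, Any]) -> Context:
    messages = list(ctx.get("messages", []))
    messages.append(f"{params['channel']}: {ctx['rendition']} ready")
    return {**ctx, "messages": messages}


# The workflow itself is plain data, e.g. loaded from JSON or built in a visual editor.
TEMPLATE: List[Dict[str, Any]] = [
    {"connector": "ingest", "params": {"path": "clips/interview.mxf"}},
    {"connector": "transcode", "params": {"profile": "h264-1080p"}},
    {"connector": "notify", "params": {"channel": "#newsroom"}},
]


def run_workflow(template: List[Dict[str, Any]],
                 ctx: Optional[Context] = None) -> Context:
    """Execute each step in order, threading the context through the connectors."""
    ctx = dict(ctx or {})
    for step in template:
        fn = CONNECTORS.get(step["connector"])
        if fn is None:
            raise KeyError(f"unknown connector: {step['connector']}")
        ctx = fn(ctx, step.get("params", {}))
    return ctx


print(run_workflow(TEMPLATE)["messages"])
# ['#newsroom: clips/interview.mxf@h264-1080p ready']
```

Changing the workflow (adding a step, swapping a transcoding profile) only means editing the template data, which is the part a low-code interface exposes to non-programmers.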

Transforming workflows with artificial intelligence

The integration of artificial intelligence (AI) tools like ChatGPT into a cloud-based workflow opens up a variety of new possibilities. By incorporating generative AI into the content creation process, the media integration platform can help media professionals automate and enhance their workflows, saving time and effort without compromising the quality of the output.

In this example workflow, qibb works together with ChatGPT to enhance the content creation process. When a video is uploaded, an automated speech-to-text transcription process is initiated. This eliminates the need for manual transcription, saving time and effort. With the transcript available, qibb passes it to ChatGPT, which uses a large language model to analyze the transcript and generate a concise, coherent summary of the video’s key points. This summary gives media professionals a quick overview of the video’s content, facilitating efficient content review and decision-making. As a final step, drawing on its contextual understanding of the transcript, ChatGPT automatically generates tailored text snippets for social media platforms such as LinkedIn or Twitter. This automation eliminates the need to write content manually for each platform, ensuring consistent messaging and saving time for media professionals.
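The three steps of this workflow (transcription, summary, per-platform posts) can be sketched as a small Python pipeline. This is not qibb's or OpenAI's API: the speech-to-text call is replaced by a hypothetical `fake_transcribe` function, a naive first-sentences summarizer stands in for ChatGPT, and the per-platform step only trims the summary to assumed character limits (280 for Twitter, 3,000 for LinkedIn) rather than rewriting it for each audience.

```python
import re
from typing import Callable, Dict

# Assumed per-post character limits; check each platform's current rules.
PLATFORM_LIMITS: Dict[str, int] = {"twitter": 280, "linkedin": 3000}


def first_sentences(text: str, n: int = 2) -> str:
    """Naive extractive summary: keep the first n sentences.
    Stand-in for the ChatGPT summarization step."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return " ".join(sentences[:n])


def fit_to_limit(text: str, limit: int) -> str:
    """Trim text to at most `limit` characters, cutting at a word boundary."""
    if len(text) <= limit:
        return text
    cut = text[: limit - 1]
    if text[limit - 1] != " " and " " in cut:
        cut = cut.rsplit(" ", 1)[0]  # drop the partial last word
    return cut.rstrip() + "…"


def run_pipeline(video_path: str,
                 transcribe: Callable[[str], str],
                 summarize: Callable[[str], str] = first_sentences) -> Dict[str, object]:
    transcript = transcribe(video_path)   # step 1: speech-to-text
    summary = summarize(transcript)       # step 2: summary of key points
    # step 3: one post per platform (here simply the trimmed summary)
    posts = {name: fit_to_limit(summary, limit)
             for name, limit in PLATFORM_LIMITS.items()}
    return {"transcript": transcript, "summary": summary, "posts": posts}


def fake_transcribe(path: str) -> str:
    # Hypothetical stand-in for a speech-to-text service.
    return ("Welcome to the evening bulletin. Tonight we look at cloud production. "
            "Stay tuned for the weather.")


result = run_pipeline("uploads/bulletin.mp4", fake_transcribe)
print(result["summary"])
# Welcome to the evening bulletin. Tonight we look at cloud production.
```

In a real deployment, `transcribe` and `summarize` would wrap calls to the chosen speech-to-text service and language model; passing them in as functions keeps the pipeline itself independent of any single vendor.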

Embracing a dynamic future

The future of media workflows holds immense potential as virtualized environments continue to evolve and shape the industry. With advancements in technology, we can expect even more innovative solutions that enhance productivity, creativity, and collaboration.

Virtualized environments will increasingly integrate advanced technologies like artificial intelligence, revolutionizing content management, creation, and distribution. These technologies will enable media professionals to unlock new levels of efficiency and effectiveness, delivering personalized and immersive experiences to audiences across various platforms and devices.

Collaboration will continue to be a key focus as virtualized environments foster seamless teamwork and knowledge sharing across global networks. Media professionals will collaborate in real time, transcending geographical constraints and time zones. This global collaboration will result in diverse perspectives, enhanced creativity, and accelerated innovation.

By embracing virtualized environments, media organizations can navigate the ever-changing landscape, drive innovation, and deliver compelling content that captivates audiences in the digital age and beyond. As the industry continues to evolve, the opportunities for growth and creativity are boundless, and those who embrace the dynamic future of media workflows will thrive.
