EN


www.medecinsdumonde.org

DVD INCLUS / DVD INCLUDED / INCLUYE DVD

15 €

This guide may be used or reproduced for non-commercial use only and the original source must be mentioned.

Ce guide est également disponible en français. Esta guía también está disponible en español.

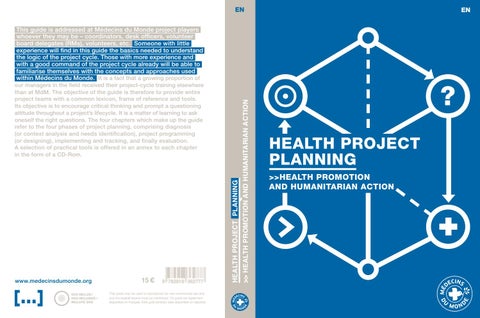

HEALTH PROJECT PLANNING

>> HEALTH PROMOTION AND HUMANITARIAN ACTION

This guide is addressed to Médecins du Monde project players, whoever they may be: coordinators, desk officers, volunteer board delegates (RMs), volunteers, etc. Those with little experience will find in it the basics needed to understand the logic of the project cycle. Those with more experience, who already have a good command of the project cycle, will be able to familiarise themselves with the concepts and approaches used within Médecins du Monde. A growing proportion of our managers in the field received their project-cycle training elsewhere than at MdM. The guide therefore aims to provide entire project teams with a common lexicon, frame of reference and tools. It also aims to encourage critical thinking and prompt a questioning attitude throughout a project’s lifecycle: it is a matter of learning to ask oneself the right questions. The four chapters that make up the guide correspond to the four phases of project planning: diagnosis (context analysis and needs identification), programming (project design), implementation and monitoring, and finally evaluation. A selection of practical tools is offered in an annex to each chapter in the form of a CD-Rom.
