Real-time object detection and video monitoring in Drone System

Ahmad Bilal Zaidi1 , Sadaf Zahera2

Ahmad Bilal Zaidi1 , Sadaf Zahera2

1Student, Deptt. Of Computer Engineering, Zakir Husain College of Engineering and Technology, Aligarh Muslim University

2Student, Deptt. Of Computer Engineering, Zakir Husain College of Engineering and Technology, Aligarh Muslim University

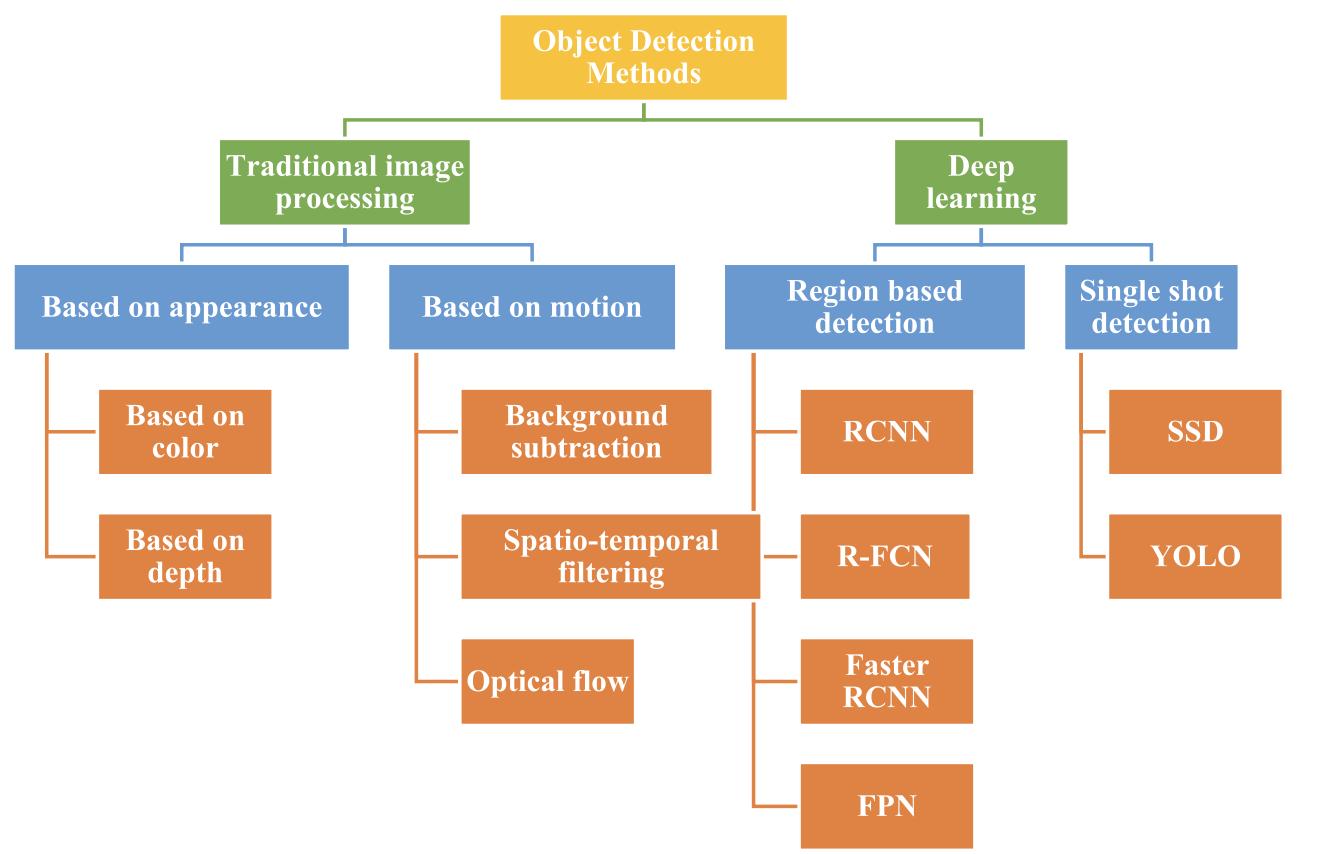

Abstract - This research paper investigates real-time object detection and video monitoring in drone systems, with a focus on traditional computervisionalgorithmsand deep learning algorithms. Traditional computer vision algorithms such as Haar cascades, HOG, template matching, edge detection, and optical flow are explored in the first section, while the second section focuses on deep learning algorithms, specifically region-based detection and YOLO.

However, using deep learning algorithms on drones poses challenges due to their limited computational capabilities. To address this, the paper proposes a cloud computation approach that enables real-timeobject detectionandvideo monitoring. The results show that traditional computer vision algorithms are not fast enough for real-time monitoring, and deep learning algorithms are a more suitable alternative.

The proposed cloud computation approach provides a feasible solution to overcome the computational limitations of drone systems. This research paper makes a significant contribution to the field of drone systems and real-time object detection by proposing a new approach that can be used in various applications, including vigilance, redeem and save, and agricultural monitoring. The proposed approach can also be extended to other applications that require real-time object detection in limited resource environments

Key Words: Object-Detection, UAVs, Cloud Tracking,Drone, Region based detection, YOLO, SSD, Traditional computer vision algorithms, Deep learning.

1.INTRODUCTION

Computer vision has improved significantly in recent years as a result of the advancement of deep learning algorithms[11],advancesinhardwarecapabilities, and more data availability. Detecting items in a specific categorysuchaspeople, cars,oranimalswithinanimage and reporting the location and extent of each object instanceisoneofthemostcommonlystudiedaspectsof computer vision.Object detection, including object

***

finding, scene assessment, crowd monitoring, segmentation,imagecaptioningandactivityrecognition arekeyelementsofawiderangeofextremelycomplex computer vision tasks. Despite significant progress in developing broad object detection systems that can distinguishawiderangeofitems, thereisstillaneedfor precise and efficient object detection in the context of droneapplications[14].

Dronesarebecomingmoreandmorepopularinavast range of timely applications such as surveillance [26], delivery services [27], traffic tracking [28], agriculture [29], disastermanagement[30],andmaritimesecurity [31]. Amazon, for example, has been given federal authorisation to deploy drones as part of its delivery service and there are reports that drones may be an acceptablemeansoftransportingmedicinalproductsin ruralareas.Intheareaofprecisionfarming,dronesare alsoexpectedto haveasignificantimpactsincetheycan assistfarmersintaskssuchascropmonitoring,analyses, and management, including selection of effective pesticides and optimisation of water supply. DJI, the world'sleadingdronemaker,isdevelopingdronesthat areequippedwithsensorsspecifictoprotectagricultural cropsfrominsectsandweeds.

The history of drones dates back many years and it is possibletoclassifythemonthebasisoftheirflightspeed, ability to stabilise position, hovering or loitering capability, environmental conditions as well as other characteristics. VarioustypesofUnmannedAirVehicles, eachhavingitsown.

strengthsandweaknesses,includingbutnotlimitedto single UAVs [11], multiUAVs, fixed wing aircraft or hybridUAVs.Autonomousvehiclesareanotherareaof research,withsomedronesabletoexecuteflightplans without human interference, relying on Global Positioning System onboard and an ordered list of 3D pointscalledwaypoints[16].

Detectingobjectsfromdronesposesdistinctchallenges as opposed to conventional object detection. For instance,inthecaseoftrafficmonitoring,dronescapture trafficactivityfromanaerialperspective,whichprovides morecontextualinformationbutmakesobjectdetection morechallengingduetochangesinviewpoint,scale,and aspectratio.Bird's-eye-viewobjectdetectionisfurther complicatedbyabruptcameramotion,motionblur,high object density, severe perspective distortion, and complex backgrounds. Additionally, aerial object detection studies often face the problem of biased datasets,asdatasets needtobeannotated. Thismeans thatobjectdetectionmodelstrainedonstandardimages may not be appropriate for detecting objects in aerial images.

Despite these challenges, researchers are making significantprogressindevelopingaccurateandefficient objectdetectionalgorithmsfordroneapplications.One approachinvolvesusingdeeplearningmodelsthatcan handlethecomplex,real-worldconditionsencountered in drone imagery. These models typically use

convolutional neural networks (CNNs) to draw-out features from the images, followed by object proposal generation and classification of the proposed regions. Other approaches include adapting traditional object detection algorithms to the aerial domain, such as the FasterR-CNN[14]andYOLOalgorithms[18].

Inconclusion,object-detectionindroneapplicationsisa critical area of research with numerous real-world applications,fromsurveillanceanddeliveryservicesto precisionagriculture[29]anddisastermanagement[30]. The use of specialized algorithms that can take into accountthedistinctcharacteristicsofdrones'imagesis required to solve the problem of detecting objects in bird'seyeview.

Further developments in the field of aerial object detection, which will lead to even more accurate and efficient detection systems in the future [11], will be expectedasdronetechnologycontinuestoadvance

2. LITERATURE REVIEW

Objectdetectionisacomputervisiontaskthatinvolves many complex mathematical calculations and computation for identifying and localizing objects of interest within an image or video. This technique of detecting objects by a machine in a live video has got tremendous advancement in the field of robotics,

autonomousvehicles,surveillancesystem,construction Industryandanymore.

Detecting objects in videos or images can be accomplishedusingavarietyofmethodsandtechniques, suchasdeeplearning-basedapproacheslikeSSD(Single ShotDetector)[15],YOLO(YouOnlyLookOnce)[15]and Faster R-CNN (Region-based Convolutional Neural Network)Orconventionalcomputervisionmethodslike Haar cascades and HOG (Histogram of Oriented Gradients)[1].

Deeplearning-basedobjectdetectionmethodshavebecome increasinglypopularinrecentyearsduetotheirability to achievehighaccuracyinreal-timeapplications.Butwewill firstdiscusstraditionalcomputervisionalgorithmforobject detection.Traditionalcomputervisiontechniquesforobject detection and video monitoring involve a wide range of methods and algorithms, many of which have been developedoverseveraldecadesofresearch.Herearesome ofthemostcommonlyusedtraditionaltechniques

2.1.Traditional Computer vision Techniques

2.1.1. Haar cascades : Onewaytoclassifyobjectsin imagesisbycolor.Thismethodisoftenusedinrobotic soccer, where teams of robots compete against each other [1]. However, relying solely on color can be problematic.

The results of the international RoboCup contest have revealedthatthelightingenvironmentplaysasignificant role in determining the competition's outcome. Even minor variations in the surrounding illumination can significantly impact a team's ability to succeed in the event. Participants must recalibrate their systems multipletimesduetominorchangesinambientlightthat occurthroughouttheday[3].Todetectobjectsinimages, usingonlycolorisnotveryreliable.

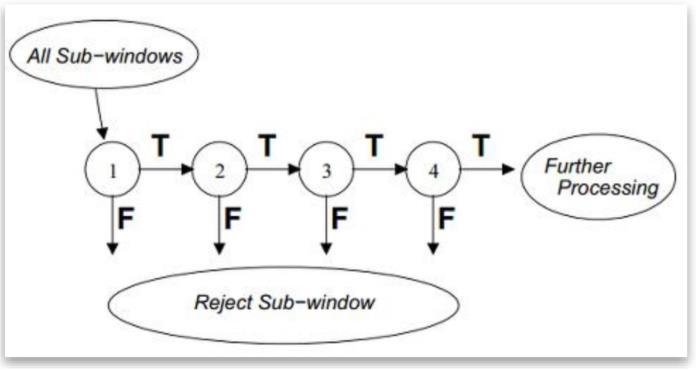

A more advanced technique for identifying objects in images involves analyzing specific attributes or structuresoftheobject.ViolaandJonescreatedHaar-like features, which help overcome the challenge of performing computationally intensive feature calculations.Acascadeclassifierinvolvesmultiplestages, eachcontainingweaklearners,andscansanimagewitha slidingwindow.Theclassifiercategorizesaspecificarea ineachstageaseitherpositiveornegative.Tofunction effectively,theclassifierrequiresalowfalsenegativerate ineachstage,whileitcantoleratearelativelyhighfalse positiverate.

Forthecascadetofunctionproperly,it'snecessarythat eachstagehasalowfalsenegativerate.Thisisbecauseifan object is incorrectly classified as a non-object, then the classificationforthatbranchstops,andunfortunatelythere's no other opportunity to correct the mistake later on. It is

considered acceptable for individual stages in the object detection process to have a relatively high rate of falsely identifying non-objects as objects. If this occurs at a particular stage, the mistake can still be rectified in subsequentstagesoftheclassifier,startingfromthe(n+1)th stageonwards[4].

2.1.2. HOG(Histogram of Oriented Gradients): To detectobjects,HistogramofOrientedGradients(HOG)is a technique used in computer vision that extracts features. The HOG approach involves calculating the gradientofpixelintensitiesinanimage,whichyieldsa set of gradient vectors. These vectors are then represented as histograms, and these histograms are utilizedasfeaturesforobjectdetection.[5].

The HOG feature extraction process involves several steps:

• Pre-processing: The input image is always preprocessedtoenhancecontrastingremovenoise.This involvessmoothingtheimageandapplyingahigh-pass filtertoextractedges.

• Gradient calculation: The gradient of the pixel intensitiesiscalculatedusingaderivativefilter,suchas the Sobel operator. This produces two gradient components, oneinthex-directionand onein theydirection.

• Orientationbinning:Thegradientarebinnedintoaset of orientation bins. The orientation bins divide the gradientanglevariesintoasetofdiscretebins,suchas 0-20degrees,20-40degrees,andsoon.Themagnitude of each gradient vector is accumulated into the correspondingorientationbin.

• Block normalization: The histogram of gradient orientations is normalized over a local region of the imagecalledablock.Theblockistypicallyrectangular and overlaps with neighbouring blocks. The normalizationisperformedtoimprovetherobustness

oftheHOGfeatures tovariations inilluminationand contrast.

• Descriptorgeneration:TheHOGfeaturesaregenerated byconcatenatingthenormalizedhistogramsfromall theblocksintheimage.

HOG has been used flourishingly in several computer visionapplications,includingface-detection,pedestriandetectionandobjecttracking.Oneoftheadvantagesof HOGisthatitiscomputationallyefficientandcanbeused forreal-timeapplications.However,HOGmaynotbeas accurate as deep learning-based methods for object detection.

2.1.3. Template matching: It is a computer vision methodthatisoftenutilizedtolocateasub-imageinthe targeted image that get matched a given template of image.Itisapopulartechniquethatfindsapplicationsin diverse fields such as robotics, medical imaging, manufacturing, and surveillance. Based on the method used for feature extraction, template matching approachescanfurtherbecategorizedintotwodifferent groups: level histogrammethod andfeatureextraction method[6].

However, Performing template matching can be computationally intensive since it involves taking the templateimageandplacingitineverypossibleposition within a larger target image. This process requires the calculation of a numerical metric for each position.to determinethelevelofsimilarity.Toaddressthis,swarm intelligence algorithms have been considered as a solution in recent works. Swarm intelligence is a problem-solvingapproachinspiredbythebehaviourof socialanimalssuchasants,birds,andfish.Theseanimals display collective behaviour without a central control unitoranyindividualmemberknowingtheoverallgoal ofthegroup.Instead,theyfollowsimplelocalrulesthat leadtoemergentbehaviouratthegrouplevel[7].

Swarm intelligence algorithms aim to replicate this behaviour in computational systems. They typically involveapopulationofagents(e.g.,"ants"or"particles") thatinteractwitheachotherandwiththeirenvironment tocollectivelysolveaproblem.Eachagentfollowsasetof simplerules thatgovernits behaviour, such as moving towards or away from certain stimuli or other agents, andupdating its behaviour based onfeedback fromits environment.

The algorithms have been applied to a wide range of problems in optimisation, routing, classification, and otherareas,andhavebeenshowntobeeffectiveinmany caseswheretraditionaloptimisationmethodsfaildueto thecomplexityoftheproblemorthehighdimensionality ofthesearchspace.

2.1.4. Edge Detection: Edgedetectionisatechnique used to locate and identify sudden changes in the intensityofpixelswithinanimage.Theseabruptchanges inintensityareknownasdiscontinuities,andtheyoften indicate the boundaries of objects within a scene.[8]. Classicaledgedetectionmethodsusea2-Dfilter,whichis designed to highlight areas of the image with a large change in intensity. There are many different types of filters,eachdesignedtoworkwellforacertaintypeof edge. Noise in the image can make edge detection difficult, and attempt to reduce noise can result in a blurredordistortededge.Someedgesarenotasudden changeinintensity,butinsteadagradualchange,which requires a different type of filter. There are two main types of edgedetection: gradient-basedandLaplacianbased, which use different mathematical techniques to find edges. The goal is to compare different edge detection methods to find the one that works best for differentsituations.

Edge detection can be performed using a variety of techniques,butthesetechniquescangenerallybeoftwo categories.

Gradient based Edge Detection:

The gradient-based method for detecting edges in an imageinvolvesidentifyingthehighestandlowestpoints intheimage'sfirstderivative.Thistechniqueisusedto locatetheedgesinanimage.

Laplacian based Edge Detection:

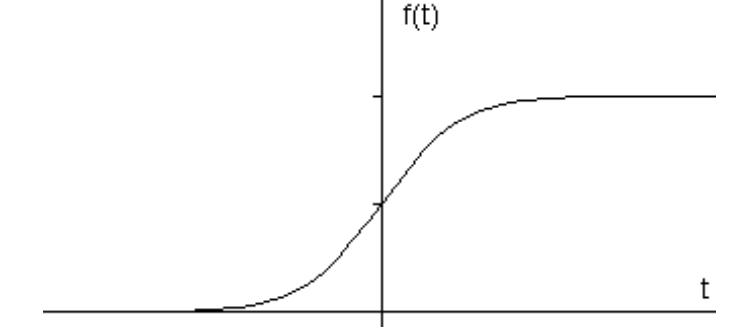

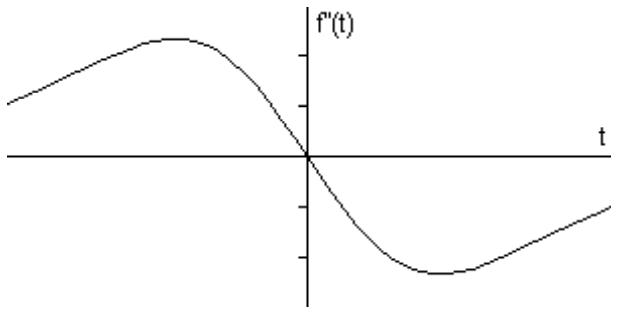

The Laplacian approach detects edges in images by identifying the edges in an image can be identified by detectingzerocrossingsinthesecondderivativeofthe image.Theseedgestakeonaramp-likeshapeandcanbe detected by calculating the derivative of the image. If thereisasuddenchangeinintensity withintheimage, thederivativecanbeusedtopinpointtheedge'slocation [8].

When we compute the gradient of this signal, which involves taking the initial derivative w.r.t time in one dimension,theresultingsignalisasfollows.[8]:

Thederivativedisplaysapeakatthecenterofthecorner in the original signal, which is a feature of "gradient filter"edgedetectionfilterssuchasSobelmethod.Ifthe gradientincreasesaspecificthreshold-value,thepixel’s location is identified as an edge's location. Edges are characterizedbyhigherpixelintensityvaluesthantheir neighbouringpixels.Therefore,comparingthegradient valuetothethresholdvalueallowsforthedetectionofan edge whenever the gradient surpasses the threshold. Additionally,whenthefirstderivativereachesitspeak, thesecondderivativebecomeszero.Hence,theLaplacian methodcanbeusedtodetectedgesbyidentifyingzeros in the second derivative of the signal. The second derivativeofthesignalisillustratedbelow[8].

2.1.5. Optical Flow: Optical flow is a method in computervisionthatisutilizedtomonitorthemovement ofobjectsinanimageorvideo.Thistechniqueinvolves examining the alterations in pixel intensities between successive frames of a video to calculate the apparent motionofobjectspresentinthescene.

Optical flow can be used to solve various computer vision problems, such as object tracking, activity recognition,andvideostabilization Theresultingoptical flowfieldisadensemapofvectors,whereeachvector represents the motion of a pixel in the scene between consecutiveframes.Thedirectionandmagnitudeofthe vectorindicatethedirectionandspeedofthemotion.

There are various techniques used to estimate optical flow,suchastheLucas-Kanadetechnique,HornSchunck method, and the Farneback method. These techniques differintheirassumptionsaboutthemotionfieldandthe costfunctionsusedtoestimatetheflowvectors[9].

Optical flow has numerous applications in different fields, such as robotics, autonomous navigation, and sportsanalysis.Forexample,opticalflowcanbeusedin self-drivingcarstoestimatethemotionofsurrounding vehicles and pedestrians, which is crucial for safe navigation. Additionally, optical flow can be used to monitorandavoidallobstaclesinrealtime,enablingthe drone to fly safely and autonomously in complex environments.Overall,opticalflowisapowerfultoolfor dronenavigationandhasthepotentialtorevolutionize thewaydronesareusedinavastvarietyofapplications, from search and rescue operations to agriculture and deliveryservices[10]

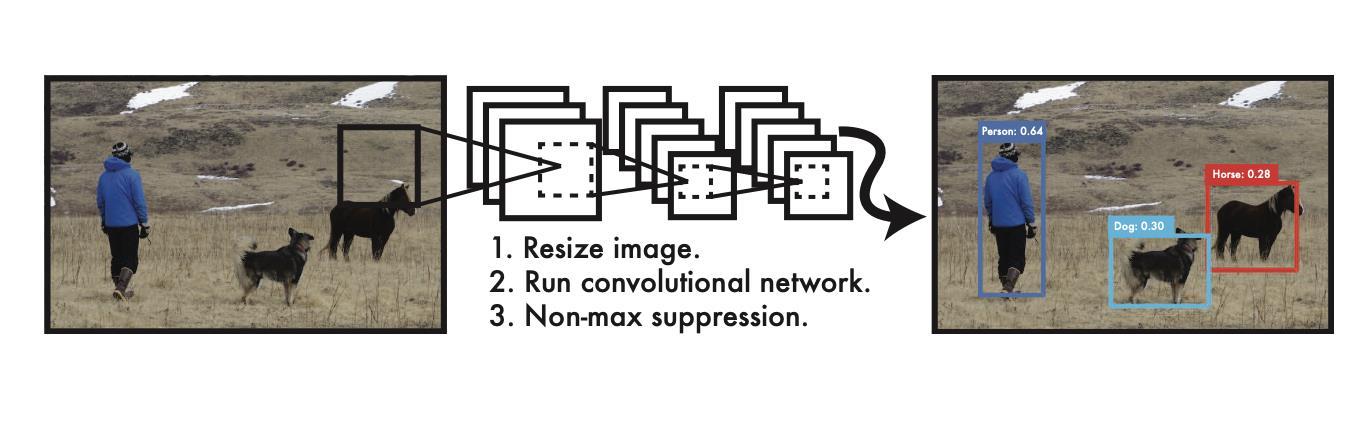

2.2.1. Region based object detection: It is a technique in deep learning based object detection that usesatwo-stageapproachtoidentifytargetedobjectsin images. In the first stage, a set of region proposals are generated that has a very high possibility to contain objects. These region proposals are generated using a regionproposalnetwork(RPN)thatscanstheimageat multiplescalesandidentifiespotentialobjectlocations [11]. The RPN outputs a set of bounding boxes with correspondingobjectnessscoresindicatingthelikelihood ofeachboxcontaininganobject.

Inthesecondstage,theregionproposalsarerefinedand classified by a Region-Based-Convolutional-NeuralNetwork(RCNN).TheRCNNtakeseachproposedregion as input and outputs a label and a more accurate boundingrectanglesfortheobjectwithintheseregion.

TheRegion-basedConvolutionalNeuralNetwork(RCNN) usually consists of a layer known as the Region of Interest (ROI) pooling layer. Its purpose is to extract a feature vector of a predetermined length from each regionproposal.followedbyoneormorefullyconnected layers that perform classification and bounding box regression[14].

Methods for detecting objects based on regions, like faster R-CNN and cascade R-CNN, mask R-CNN have attained excellent performance on object detection benchmarks like COCO and PASCAL VOC. These techniquesareextensivelyusedindiverseapplications, including object tracking, autonomous driving, and surveillance.

2.2. Deep learning-based object detection

Deep learning-based object detection and video monitoringisaCV(computervision)methodthataimsto detectandlocateobjectswithinanimageorvideoframe usingDNN(deep-neural-networks).Theapproachisable toperformobjectdetectiontaskswithagreataccuracy, andhasbecomeincreasinglypopularinrecentyearsdue to the growing availability of large datasets and the computationalpowerrequiredfortrainingDNN.

ThemainideabehindDNNbasedobjectdetectionisto trainaneuralnetworktoidentifypatternsandfeatures that are indicative of objects within an image or video frame. There are several popular techniques for performingobjectdetectionwithdeeplearning,including region-based detection including convolutional neural networks(R-CNNs),andsingle-shotdetectionmethods (SSDs)likeYouOnlyLookOnce(YOLO).

TheFasterRCNNisintroducedasadeeplearningbased objectdetectionmethod.Itconsistsofapre-trainedCNN for feature extraction, followed by two more subnetworks that are trained. The Region- ProposalNetwork (RPN) generates object proposals, while the secondsubnetworkpredictstheobject’sclass.Themain differencebetweenfasterR-CNNandotherregion-based detectorsisthatRPNisaddedatthelastconvolutional layer,allowingforreal-timeframerateswithouttheneed for selective search[13]. Additionally, Faster R-CNN outperforms other region-based detectors in terms of mAP and allows for single-stage training of both classificationandregression.FeaturePyramidNetwork (FPN) is another method for generating multi-scale featurerepresentationsathighresolutionlevels,which canimproveobjectdetectioninmultiplescales.TheDeep DroneframeworkproposedbyHan,Shen,&Liu(2016) [12] uses a CNN for object detection and achieves fast andaccurateresults.

2.2.2. Single Shot Object detection:

Although region-based detection methods deliver impressive accuracy, their computational speed is not optimal. In contrast, single-shot detection methods offer faster processingtimesandrequirelessmemorythanregionbased methods. These methods use the concept of "multibox" to identify multiple objects in a single shot. They achieve higher efficiency and accuracy by eliminatingtheneedforboundingboxproposals,which is a requirement for RCNN. Instead, they use a convolutionfiltertograduallypredicttheclassesofthe objectsanditslocationoffsets[15].

Theresearcherssuggestedadeeplearning-basedmodel forobjectdetectionindroneimagesofaparticularclass. TheimageswerecapturedusingParrotARDrone2,and thedatawasprocessedonaconnectedPCviaWiFi.To overcome the computational burden of region-based algorithms, they used SSD (Single Shot Detector) for object detection.Theresulting output was fedtoa PID (Proportional Integral Derivative) controller, which trackedtheobjectsina3Dplanecomprisingx,y,andz axes. This approach outperformed other methods in terms of computational time also accuracy, making it idealforrealtimeapplications[15].

technique where the classifier is applied at regular intervalsthroughouttheimage[16].

NewermethodssuchasR-CNNstartbycreatingpossible bounding boxes in an image using region proposal techniques. Then, a classifier is applied to these boxes andpost-processingisdonetoenhancetheaccuracyof theboxesbyremovingredundantdetectionsandscoring them based on other objects in the scene [17]. These methods involve many components that need to be trained separately, making them difficult and time consumingtooptimise.

2.2.3 You Only Look Once

: Ross Girshick, Joseph Redmon, Santosh Divvala, and Ali Farhadi developed YOLOin2016asanobjectdetectionsystem.YOLOisa deep learning algorithm that can be used to detect objects in real time videos and images quickly and accurately.Insteadoftakingaslidingwindowapproach, YOLOcomputesobjectdetectionasaregressionproblem and estimates bounding rectangles and class probabilities directly fromfull imageina singleneural network.

Atpresent,detectionsystemsutilizeclassifiersinorder toconductobjectdetection.Theprocessinvolvesusinga classifierinquestionandtestingitatdifferentlocations and the scales within an images.The Deformable parts models(DPM)andsimilarsystemsusesaslidingwindow

The creators of YOLO have redefined the problem of object detection as a single regression-problem that estimatetheclassprobabilitiesandboundingrectangles coordinatesofmultipleobjectsinanimagedirectlyfrom thepixels. YOLOonly needs one pass overanimageto predict the location of all objects in the image. This makes YOLO simpler, faster, and more accurate than traditionalmethodsofobjectdetection.YOLOcanrunon anewimageattesttime,withouttheneedforacomplex pipelineorindividualcomponenttraining,whichmakes itveryfast.Thebasenetworkcanrunat45fpsandthe fastversioncanrunatmorethan150fps.Accordingto research, YOLO has the capability to process video streams in real-time with a latency of under 25 milliseconds.Ithasbeenshowntooutperformotherrealtime systems with more than twice the mean average precision,asdocumentedin[18].

2.3. Real-time tracking

Currently,therearemanyresearchinitiativesaimedat creatingdependablecloud-basedroboticapplicationsfor the future. These initiatives can be classified into two main categories: Cloud Robotics Systems and Dronebased Systems. The author’s introduced some of the algorithms and demonstrated the implementation of a cloud-basedsystemcalledRobotCloud.Thepurposewas to take advantage of the flexibility, re-usability, and extensibilityofferedbycloudbasedrobotsystems.They builtaprototypeofRobotCloudusingServiceOriented Architecture (SOA) and deployed it on Google App

Engine(GAE)[19].TheresearcherscreatedanopenEASE systemthatenablesrobotsandresearcherstoremotely solvecomplexmentalparallelproblemsusingthecloud's vast storage and computational resources. They incorporated learning algorithms into the system and providedtherobotwithsuggestedsolutionsfordealing withsituations[20].Thetext[21]explainsthataCloud RoboticsMiddlewarehasbeenintroducedthatpermits thetransferof storageandcomputationsfromrobotsto the cloud. This is considered to be an initial implementationofcloudroboticssystems,similartothe worksmentionedpreviously.

Theproblemofinsufficientcomputingpoweronrobots for modelling Simultaneous Localisation and Mapping (SLAM)tasks[22],whichinvolvescreatingamapofthe robot'ssurroundings.Toaddressthisissue,theysuggest a software framework called Cloudroid, which is designed to deploy robotic packages to the cloud for cloudasservices.Theyalsoconductteststoevaluatethe framework's performance in dynamic and resourcelimitedenvironments,withafocusonrequestresponse time. New system was introduce then which is called, Context Aware Cloud Robotics (CACR) [23] which includes decision-making capabilities for industrial robots like automated guided vehicles. The system's design incorporates cloud based application for simultaneous localisation and mapping, and the researchershighlightenergyproficiencyandcostsavings as the major advantage of using the cloud-based approach.

The primary target of this study is to integrate robots with the cloud and offer task-oriented services while ensuringahighqualityofservice.RoboCloudpresentsa cloudservicewithaspecifiedmissionandcontrollable resources that are determined based on predictable behaviour.Theeffectivenessoftheproposedapproachis assessed by analyzing the quality of service variables, such as latency, of a cloud service that offers cloud focusedobjectmonitoring.

2.3.1 Drone Based System: Severalinitiativeshave aimedtocombinedroneswithcloudcomputingandthe Internet of Things (IoT). One such initiative is the Internet-of-DronesmodelproposedbyGharibietal.[24]. Their model comprises three primary networks - air trafficcontrolnetwork,cellularnetwork,andtheinternet - which provide generic service for various UAV applications such as delivery, surveillance, search and rescue,amongothers.However,thearticleonlypresents atheoreticalframeworkfortheIoDwithoutanyconcrete implementation or realization of the proposed architecture. In contrast, our research presents a validatedarchitecturefortheIoDalongwithareal-world implementationandexperimentation.

The research paper [25] introduces a cloud robotics platform,FLY4SmartCity,thatusesROSasthebase.The platformarchitectureconsistsofessentialfeaturesthat allowthecreationofdroneinstancesasnodes,whichare managedbytheplatformmanagerformanagementevent and planning. During events, the service manager provides services, while the rule manager handle the actions.Ermacoraetal.[22]presentedanotherpaperon a cloud robotics platform designed for monitoring and basedonROS.Theplatformutilizescloudcomputingto offload data and computational capabilities. The architecture is layered and includes services that use APIsprovidedbyapplicationsutilizingdronecapabilities andadaptation.Drones generallyserveasthephysical layerofthearchitecture.

DronemapPlannerisacloud-basedsystemthatutilizes theInternet-of-Drones(IoD)concepttoenableusersto control and manage multiple autonomous drones. A mission,suchasvisitingasetofwaypoints,isinitiatedby theuserthroughthecloud.VirtualUAVsarethencreated and mapped to physical UAVs using a service-oriented approachbasedonRESTorSOAPWebservices.Oncea missionrequestisreceived,theselectedUAVscarryout themissionandsendreal-timedatatothecloudservice, which then stores, processes, and forwards the synthesizedresultsbacktotheuser.Anoverviewofthe DronemapPlanner'sarchitectureisprovidedbelow.[25].

• The UAV layer : This layer provides users with servicesbymakingsystemresourcesavailabletothem. TheUAVlayerfacilitatescommunicationwithhardware throughtheuseoftheMicroAirVehicleLink(MAVLink) andtheRobotOperatingSystem(ROS)communication protocol.ROSiswidelyusedmiddlewarethatisusefulin developing robotics applications, and the Micro-AirVehicle-Link(MAVLink)communicationprotocolhelpsin messageexchangebetweendronesandgroundstations usingdifferenttransportprotocolssuchasTCP,UDP,and USB ,Telemetry. When ROS and MAVLink are used

together, developers can have an interface that allows them to control and monitor drones at a high-level withouthavingtoprogramorinteractwiththehardware directly.

• Cloud services layer :Thecloudserviceslayerisin charge of deploying cloud services utilizing three component sets: remote computation, communication interactions,andcloud-basedstorage.Inthecloud,data from UAVs is stored, including data on environmental variables, mission data, localization parameters, and time-stamped transmitts data streams, such as images andsensordata.Thedataisstoredinadistributedfile system, like HBase or HDFS (Hadoop Distributed File System),depending on the specific application requirements. The usage of distributed file system storage allows for extensive batch processing through tools such as HadoopMap/Reduce. This systemoffers both realtime and batch processing of the data. In the case of real-time data streams, the cloud operates on incoming data to detect crucial events or threats that demand prompt action or to execute dynamic computationinadistributedenvironment.Ontheother hand, for batch processing the incoming data which is retainedinHDFSandisanalyzedatalaterstage.

In addition, the system offers cloud-based remote computation, encompassing resource-intensive algorithms for data analysis and image processing. Furthermore,itsupportsMap/Reducejobsthatrunon Hadoop, enhancing processing speed and boosting systemefficacy.Additionally,dataanalyticsalgorithmsis executedonvoluminoussetsofstoreddata.

ThethirdcomponentofCloudServiceslayercomprises communication interfaces. The system enables interactionsvianetworkinterfacesandwebservices.The networkinterfacesrelyonserver-sidenetworksockets that receive JSON serialized messages sent from UAVs. Meanwhile,webservicesenableclientstomanagedrone

missions and parameters. Both SOAP and REST web services are utilized to provide end-users and client applications with varied means of controlling and monitoring drones. Network interfaces are mainly utilized to manage continuous streams, while web servicesareemployedtoissuecommandstodronesand retrievecloud-baseddata.

• Client-Layer : The layer described here provides interfaces for drone application developers and endusersalike.End-userscanaccessthecloudserviceslayer andUAVlayerviadronemapclientsidewebapplications runningontheclientlayer.Thisallowsuserstoregister multiple UAVs, modify mission parameters based on cloudresults,remotelymonitorandcontroltheUAVsand theirmissions.Thefront-endinterfaceincludesfeatures to connect/disconnect, utilise physical UAVs and their services,configure,controlamissionandkeeptrackof UAV parameters. For developers, the client layer providesmanyApiinvariousprogramminglanguagesto facilitatethedevelopmentofdroneapplications.

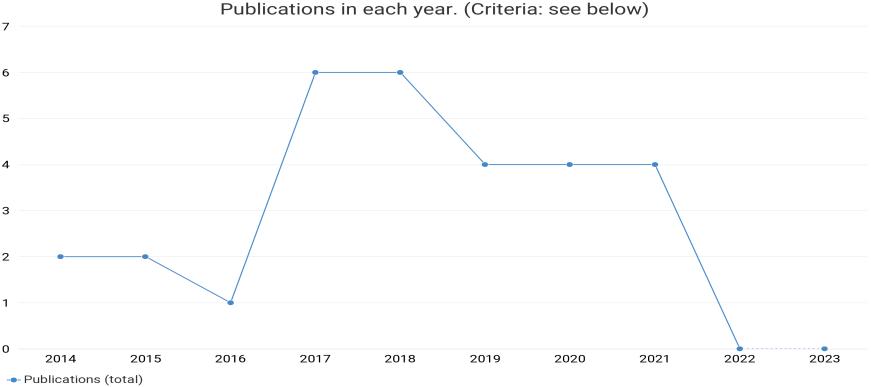

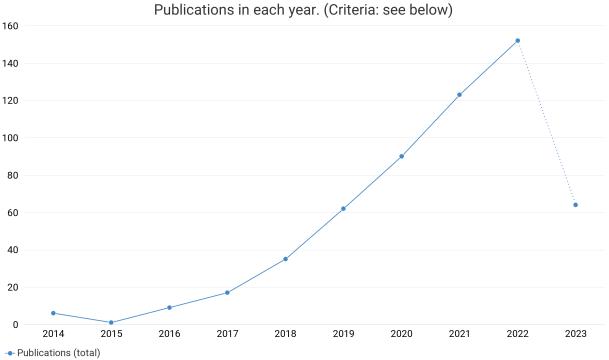

3. BIBLIOMETRIC ANALYSIS

Toidentifytheresearchpapersandinthefieldofrealtime object detection and video monitoring in drone system,weusedtheDimensions.aidatabasetoretrieve all publications relatedto real-timedetectioninDrone systemusingthefollowingsearchquery:

Fig8. Yearwisedistributionofqueryresult

ExclusionCriteria: Researchpaperolderthan2014 areexcludedand31papersare included.

InclusionCriteria:

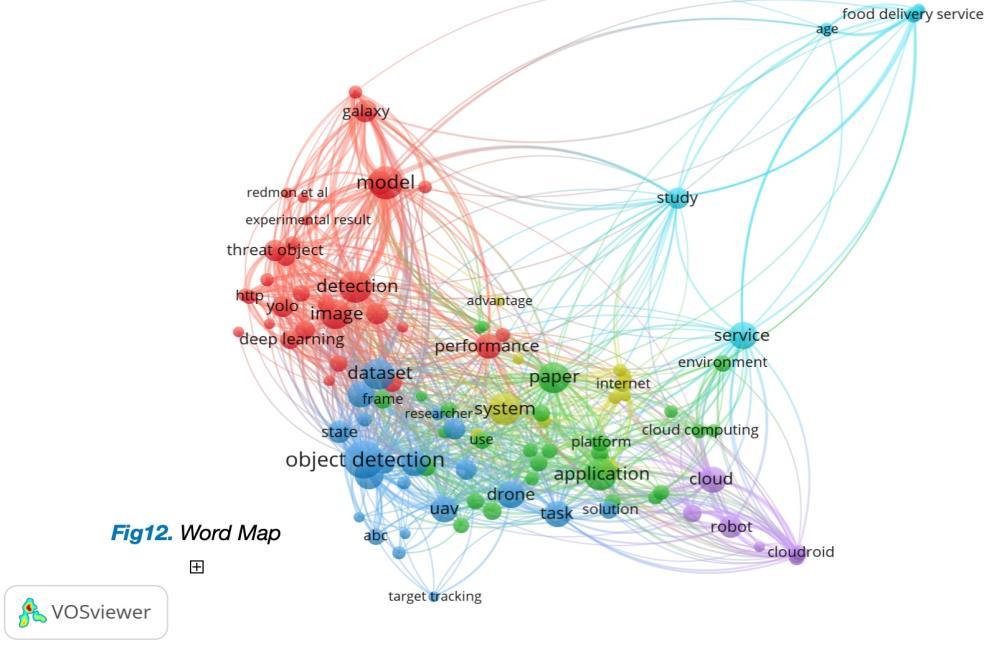

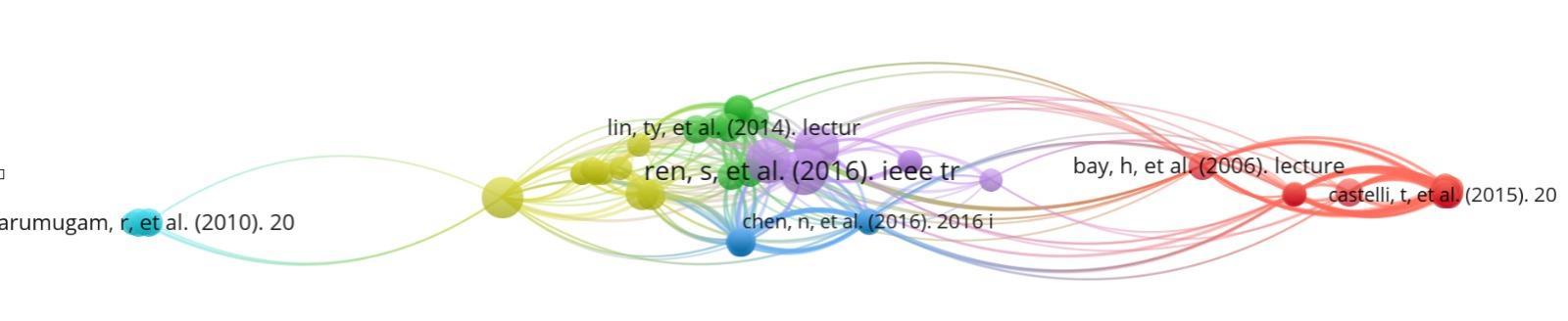

Co-Citationanalysisusingcitedsourcesastheunitofanalysis.Theconditionisforasourcetohaveatleastbeencited2 times.Thisfilteredout69sourcesfrom566,asdisplayedinFigure10

Co-AuthorshipanalysisusingAuthorsasthe unitofanalysis.Theconditionisforthe authortohaveatleast2documents.Outof 101authors,22metthisthreshold.Outof22, 5formedthelargestconnectedset(cluster).

OccurrencesofwordswascountedfromTitleandAbstractFields,usingtheFullCountingmethod.Allcommonwordswith aminimumoccurrenceof3werechosen,givingatotalof105words.

4. CONCLUSION

In conclusion, real-time object detection and video monitoringarecriticalfunctionalitiesinmoderndrone systems that enable them to perform a wide range of tasks, from surveillance and monitoring to search and rescueoperations.Thesecapabilitiesaremadepossible byusingadvancedcomputervisionalgorithmsanddeep learning models thatcanprocess data inreal-timeand detectobjectswithhighaccuracy.

Traditional computer vision algorithms are good approach for detecting objects in a static image but it becomes a bit slower for 40fps to 60fps videos and it becomesquitedifficulttodetectobjectsinalivestream, whereasdeeplearningalgorithmslikeSSD,YOLO,R-CNN andFasterR-CNNarequiteefficientfordetectingobjects inahighframeratevideosbutthereisabigproblemjust because of size of drones, since these technologies are quite complex and requires a lot of computation it is difficult to provide such a high end system on a drone hencethecloudconnecting droneisintroducedandall thesecomplexcomputationsarecomputedondedicated servermachineandthentheresultissentback.

Moreover, cloud connection of drones is becoming increasingly important as it enables data storage, computation, and communication capabilities that are crucialformanydroneapplications.Storingdatainthe cloud enables drones to engage in extensive batch processing using tools like Hadoop Map/Reduce. Meanwhile,real-timeprocessingiscapableofdetecting pressingeventsandthreatsthatdemandpromptaction.

The cloud also provides remote computation and communication interfaces for both end-users and developers, enabling them to control and monitor the dronesremotelyanddevelopapplicationsthatleverage thedrones'capabilities.Overall,theintegrationofrealtime object detection, video monitoring, and cloud connectivityisapowerfulcombinationthatcanenable dronestoperformcomplextaskswithhighefficiencyand accuracy.

5. REFRENCES

[1] Soo, Sander. "Object detection using Haar-cascade Classifier." Institute of Computer Science, University of Tartu 2.3(2014):1-12.

[2]Nagabhushana,S."IntroductioninComputerVision and Image Processing." New Age International (P) Ltd. Publishers, New Delhi (2005):3.

[3] Lovell, Nathan, and Vladimir Estivill-Castro. "Color classification and object recognition for robot soccer

undervariableillumination." RoboticSoccer.IntechOpen, 2007.

[4]T.M.Inc.,“TrainaCascadeObjectDetector,”[Online]. Available: http://www.mathworks.se/help/ vision/ug/trainacascadeobjectdetector.html#btugex8. 2014

[5]Felzenszwalb,PedroF.,etal."Objectdetectionwith discriminatively trained part-based models." IEEE transactions on pattern analysis and machine intelligence 32.9(2009):1627-1645.

[6] Banharnsakun, Anan, and Supannee Tanathong. "Objectdetection based on templatematchingthrough use of best-so-far ABC." Computational intelligence and neuroscience 2014(2014):7-7.

[7]Brunelli,Roberto.Templatematchingtechniquesin computervision:theoryandpractice.JohnWiley&Sons, 2009.

[8]Maini,Raman,andHimanshuAggarwal."Studyand comparison of various image edge detection techniques." International journal of image processing (IJIP) 3.1(2009):1-11.

[9]Lezki,Hazal,etal."Jointexploitationoffeaturesand optical flow for real-time moving object detection on drones." Proceedings of the European Conference on Computer Vision (ECCV) Workshops 2018.

[10]BerkerLogoglu,K.,Lezki,H.,KerimYucel,M.,Ozturk, A.,Kucukkomurler,A.,Karagoz,B.,Erdem,E.,Erdem,A.: Feature-basedefficientmovingobjectdetectionforlowaltitude aerial platforms. In: The IEEE International ConferenceonComputerVision(ICCV)Workshops.(Oct 2017)

[11]Anitha Ramachandrana,Arun Kumar Sangaiah, “A review on object detection in unmanned aerial vehicle surveillance” International Journal of Cognitive ComputinginEngineering2(2021)215–228

[12] Han, Song, William Shen, and Zuozhen Liu. "Deep drone:Objectdetectionandtrackingforsmartdroneson embedded system." URL https://web. stanford. edu/class/cs231a/prev_projects_2016/deepdroneobject__2_. pdf (2016).

[13] Wang, Xiaoliang, et al. "Fast and accurate, convolutionalneuralnetworkbasedapproachforobject detectionfromUAV." IECON2018-44thAnnualConference of the IEEE Industrial Electronics Society.IEEE,2018.

[14]Subash,K.V.V.,Srinu,M.V.,Siddhartha,M.,Harsha, N. S., & Akkala, P. (2020). Object detection using Ryze Tellodronewithhelpofmask-RCNN.InProceedingsof the 2020 2nd international conference on innovative mechanisms for industry applications (ICIMIA)

[15] Rohan, Ali, Mohammed Rabah, and Sung-Ho Kim. "Convolutional neural network-based real-time object detection and tracking for parrot AR drone 2." IEEE access 7(2019):69575-69584.

[16]Felzenszwalb,PedroF.,etal."Objectdetectionwith discriminatively trained part-based models." IEEE transactions on pattern analysis and machine intelligence 32.9 (2009): 1627-1645.

[17] Girshick, Ross, et al. "Rich feature hierarchies for accurate object detection and semantic segmentation." Proceedings of the IEEE conference on computer vision and pattern recognition 2014.

[18]Redmon,Joseph,etal."Youonlylookonce:Unified, real-time object detection." Proceedings of the IEEE conference on computer vision and pattern recognition 2016.

[19]Du,Zhihui,etal."Robotcloud:Bridgingthepowerof robotics and cloud computing." Future Generation Computer Systems 74(2017):337-348.

[20] A.K.BozcuogˇluandM.Beetz, ‘‘Acloudservicefor robotic mental simulations, ’’in Proc. IEEE Int. Conf. Robot. Autom. (ICRA),May2017,pp.2653–2658.

[21] C.Huang, L.Zhang, T.Liu,and H.Y.Zhang,’‘Acontrol middlewareforcloudrobotics, ’’in Proc. IEEE Int. Conf. Inf. Autom. (ICIA),Aug.2016,pp.1907–1912.

[22] B. Hu, H. Wang, P. Zhang, B. Ding, and H. Che, ‘‘Cloudroid:AcloudframeworkfortransparentandQoSawareroboticcomputationoutsourcing, ’’in Proc. IEEE 10th Int. Conf. Cloud Comput. (CLOUD), Jun. 2017, pp. 114–121.

[23] Wan,Jiafu,etal."Context-awarecloudroboticsfor material handling in cognitive industrial Internet of Things." IEEE InternetofThingsJournal 5.4(2017):22722281.

[24]Gharibi,Mirmojtaba,RaoufBoutaba,andStevenL. Waslander. "Internet of drones." IEEE Access 4 (2016): 1148-1162.

[25]Bona,Basilio."‘Advancesinhumanrobotinteraction for cloud robotics applications." Polytech. Univ. Turin, Turin, Italy, Tech. Rep (2016).

[26]Sien,JonathanPhangThen,KingHannLim,andPekIng Au. "Deep learning in gait recognition for drone surveillance system." IOP Conference Series: Materials Science and Engineering.Vol.495.No.1.IOPPublishing, 2019.

[27]Hwang,Jinsoo,andHyunjoonKim."Consequencesof a green image of drone food delivery services: The moderating role of gender and age." Business Strategy and the Environment 28.5(2019):872-884.

[28] Kyrkou, Christos, et al. "DroNet: Efficient convolutionalneuralnetworkdetectorforreal-timeUAV applications." 2018 Design, Automation & Test in Europe Conference & Exhibition (DATE).IEEE,2018.

[29]Nuijten,RikJG,LammertKooistra,andGerlindeB. De Deyn. "Using unmanned aerial systems (UAS) and object-basedimageanalysis(OBIA)formeasuringplantsoil feedback effects on crop productivity." Drones 3.3 (2019):54.

[30] Kyrkou, Christos, and Theocharis Theocharides. "EmergencyNet:Efficientaerialimageclassificationfor drone-based emergency monitoring using atrous convolutional feature fusion." IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing 13(2020):1687-1699.

[31] Kim, Hangeun, et al. "Development of a UAV-type jellyfishmonitoringsystemusingdeeplearning." 2015 12th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI).IEEE,2015.