Mouse Cursor Control Hands Free Using Deep Learning

1UG student, Dept. of Electronics and Computers Engineering, Sreenidhi Institute of Science and Technology, Telangana, India

2UG student, Dept. of Electronics and Computers Engineering, Sreenidhi Institute of Science and Technology, Telangana, India

3UG student, Dept. of Electronics and Computers Engineering, Sreenidhi Institute of Science and Technology, Telangana, India

4Asst. Professor, Dept. of Electronics and Computers Engineering, Sreenidhi Institute of Science and Technology, Telangana, India

Abstract – Mouse-free cursor control using facial movements has emerged as an innovative technology that revolutionizes the way we interact with digital interfaces. By harnessingthepoweroffacial expressions, users cannavigate menus, click, scroll, and perform various actions seamlessly, mimickingnaturalinteraction. This technologyeliminatesthe need for physical input devices such as mice and trackpads, offering a more intuitive and accessible approach to humancomputer interaction. Facial recognition and tracking technologies have played a crucial role in the development of mouse-freecursorcontrol..Bypredictingfaciallandmarksand analyzing features like eye aspect ratio and mouth aspect ratio, the system can detect actions such as winks, blinks, yawns, and pouts, which act as triggers for controlling the mouse cursor. We have used SVM and HOG algorithms in this project to predict cursor movement from facial features extracted and HOG for obtaining facial landmarks. The applications of mouse-free cursor control extend beyond personal computing devices. Industries such as healthcare, gaming, virtual reality, and design can greatly benefit from this technology.Inhealthcare,itenableshands-freenavigation of medical records, improving workflow efficiency and reducing the risk of contamination. In gaming and virtual reality, it provides an immersive and intuitive experience by allowing users to control their virtual avatars with facial expressions

Key Words: Mouse-free cursor control, facial movements, digital interfaces, natural interaction, SVM, HOG, facial recognition, tracking technologies, intelligent systems, facial landmarks, eye aspect ratio, mouth aspect ratio

1.INTRODUCTION

Intoday'sdigitalage,wherecomputersanddevicesplayan integral and essential role in our daily lives, the way we interactwithtechnologycontinuestoevolve.Thetraditional cursorcontrolmethodsthatareprevailinginthemarketare primarilyreliantonmanualinputdevicessuchasmiceand trackpads, are being challenged by innovative solutions aimedatenhancinguserexperienceandaccessibility.One suchgroundbreakingadvancementistheadventofmouse-

freecursorcontrolusingfacialmovements.This technology allowsuserstonavigateandinteractwithdigitalinterfaces through natural facial expressions. Facial recognition and trackingtechnologieshavewitnessedsignificantprogressin recentyears,findingapplicationsinvariousdomains,from securitysystemstoaugmentedreality.Buildinguponthese advancements, researchers and engineers have devised intelligentsystemscapableofaccuratelyinterpretingfacial movements and translating them into precise cursor movements on the screen. This breakthrough opens up a worldofpossibilities,empoweringindividualswithlimited mobility,eliminatingtheneedforphysicalperipherals,and revolutionizingthewayweinteractwithdigitalinterfaces. The concept of this project is to make facial movements embodies a user-centered approach, emphasizing the importance of intuitive and natural interaction. By harnessing the power of facial expressions, users can navigate menus, click, scroll, and perform a wide range of actions seamlessly, mimicking the way we instinctively interact with the physical world. This technology will not justenhancetheaccessibilitybutalsoimprovesproductivity, as it eliminates the physical constraints linked with traditional input devices, allowing users to interact with their devices in a more dynamic and flexible manner. Moreover, the potential applications of mouse-free cursor control extend beyond personal computing devices. Industries such as healthcare, gaming, virtual reality, and design can greatly benefit from this technology. In healthcare, for instance, it can facilitate hands-free navigation of medical records, reducing the risk of contamination and improving workflow efficiency. In the gaming and virtual reality realms, it can provide an immersive and intuitive experience by enabling users to controltheirvirtualavatars

2. LITERATURE SURVEY

The primary objective of this eye-controlled system is to monitorcursormovementbyidentifyingthecentralposition ofthepupil.Toachievethis,acombinationofRaspberryPi and OpenCV is employed. The Raspberry Pi serves as the hardwareplatformandisequippedwithanSD/MMCcard

slot.TheSDcardcontainsthenecessaryoperatingsystem fortheRaspberryPitobeginoperating.Oncetheapplication programisinstalledontheRaspberryPi,itcanactivatethe eye-tracking feature and carry out the required operations.[1]

Inthisproject,theauthorsaimedtoassistindividualswith handdisabilitiesthathindertheirabilitytouseamouse.To addressthis,wedevelopedanapplicationthatutilizesfacial features such as the nose tip and eyes to interact with a computer. Our approach for detection involves quickly extractingpotentialfacecandidatesusingaSix-Segmented Rectangular (SSR) filter. We then verify these candidates using a support vector machine to ensure accurate face detection. To avoid mistakenly selecting background elements, we incorporate a straightforward motion cue. Additionally, for face tracking purposes, we track the patterns between the eyes by continuously updating templatematchingtechniques.[2]

ThesystemutilizesDlibandtheHaarCascadealgorithmfor cursor control, relying on eye and facial movements. This systemcanbefurtherenhancedbyincorporatingadditional techniques, such as clicking events and interfaces that respondtoeyemovementandblinking.Byleveragingthese methods, users can interact with the cursor and perform actionsthroughtheireyesandfacialexpressions.[3]

This paper presents a real-time method for detecting eye blinksandwinksusingvideofootageandMATLABsoftware. Theapproachincludeslocalizingtheeyesandfacialpairs,as wellasapplyingopticalflowclassificationandanalysis.The methodology in this study has been verified and tested in various environments, demonstrating a high level of accuracy in detecting blinks and winks. Furthermore, the system is capable of performing mouse-like functions, providing users with an alternative means of interacting withtheircomputers.[4]

3. METHODOLOGY

The procedure entails utilizing a Face Detection Model to capture a facial image from a live video feed obtained throughacameraorwebcam.Followingthat,theLandmark Detection Model is applied to identify different facial componentssuchasthemouth,leftandrighteyes,andother facialcharacteristicsfromtheobtainedimage.Subsequently, theHeadPoseEstimationModelisemployedtoanalyzethe movementsofthehead,whichcorrespondtothemotionof thecomputermousecursor Theaccurateorientationofthe computermousecursorreliesonthecollaborativeoutcomes ofboththeLandmarkDetectionModelandtheHeadPose Estimation Model, which are integrated by the Gaze EstimationModel.TheGazeEstimationModelplaysavital role in deciphering facial expressions to ensure precise positioningofthemousepointer

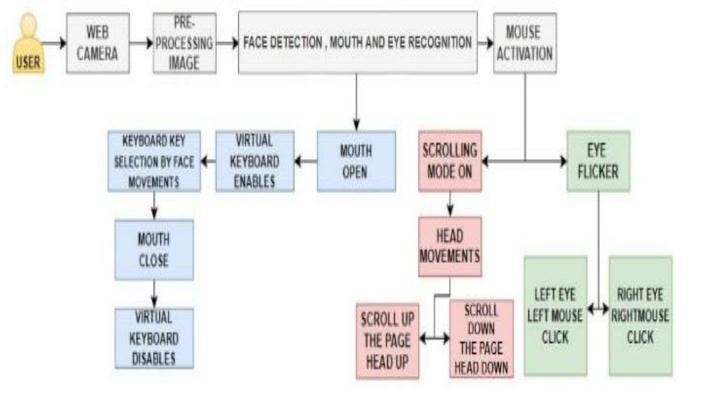

4. WORKING

This system that we developed seamlessly integrates advancedmachinelearningalgorithmslikeSupportVector Machines(SVM)andHistogramofOrientedGradients(HOG), alongsidefacialratiossuchasMouthAspectRatio(MAR)and EyeAspectRatio(EAR).Essentialmachinelearninglibraries like OpenCV, dlib, and pyautoGUI are instrumental in the implementation of this cutting-edge technology. By effectively detecting and tracking facial landmarks, extracting pertinent features, and employing SVM classification with the MAR and EAR ratios, the system preciselyinterpretsfacialmovementstoaccuratelypredict the desired cursor direction. The steps involved in the workingofthisprojectare:-

Step 1:Thesystemstartsbyusingafacedetectionalgorithm from OpenCV or dlib to locate and extract the face region fromavideostreamcapturedbyacameraorwebcam.

Step 2: Once the face is detected, a landmark detection algorithm,suchasdlib'sshapepredictororOpenCV'sfacial landmark detector, is employed to identify key facial landmarkssuchastheeyes,nose,mouth,andeyebrows.

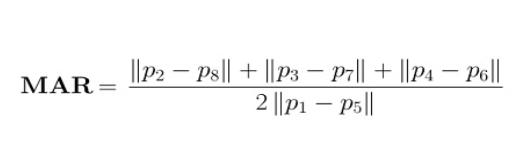

Step 3:Thesystemcomputesfacialratios,suchastheMouth Aspect Ratio (MAR) and Eye Aspect Ratio (EAR). MAR is calculatedbymeasuringthe ratioofthedistancebetween theupperandlowerlipstotheverticaldistancebetweenthe mouthandthechin.EARiscalculatedbymeasuringtheratio ofthedistancebetweentheinnerandoutercornersofthe eyestothedistancebetweentheverticalmidpointsofthe eyes and the eye corners. These ratios provide valuable informationaboutthemouthandeyemovements.

Step 4: Toenablemachinelearning,atrainingdatasetneeds to be created. This dataset includes samples of facial movement patterns, such as moving the cursor up, down,

left,orright.Thedatasetislabeledwiththecorresponding cursormovement.

Step 5:ASupportVectorMachine(SVM)classifieristrained usingtheextractedfeatures,includingMARandEAR,andthe correspondingcursormovementlabels.SVMisasupervised learningalgorithmthatcanclassifyandpredictnewsamples basedonthelearnedpatterns.

Step 6:OncetheSVMmodelistrained,thesystemutilizes thereal-timefacialmovementdataobtainedfromthefacial landmarkstocalculatetheMARandEARratios.Theseratios are then fed into the trained SVM model to predict the correspondingcursormovement.TheSVMmodelclassifies the facial movement features and determines the appropriatecursordirection.

Step 7: Finally, the pyautogui library is employed to translatethepredictedcursormovementintoactualmouse movements. Pyautogui provides functions to control the mousecursor'spositionandsimulatemouseclicks.

Eye Region Detection: It is evident that the Eye-AspectRatio (EAR) is a straightforward yet effective feature that effectivelyutilizesfaciallandmarks.EARprovesvaluablein detecting both blinks and winks. It Begins with the left corneroftheeyeasyouwerelookingatthepersonanditis working clockwise around the left out region. Each eye is representedwithcoordinates(x,y).Onlookinganimage,we should consider the key points of an eye: As there is a relation between the height and width of the points (coordinates).ThisiscalledEyeAspectRatio(EAR).

5. RESULTS

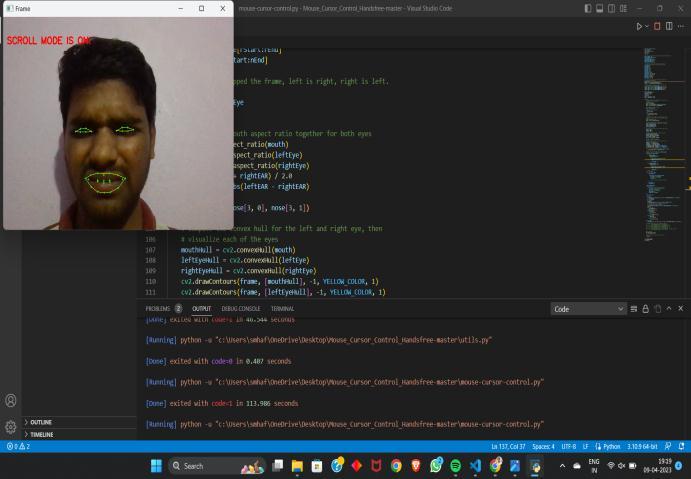

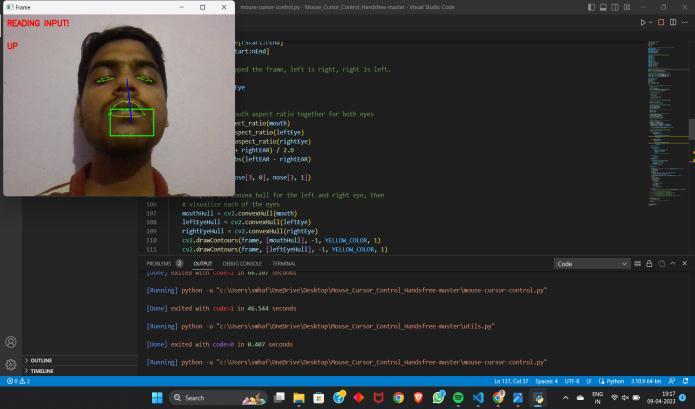

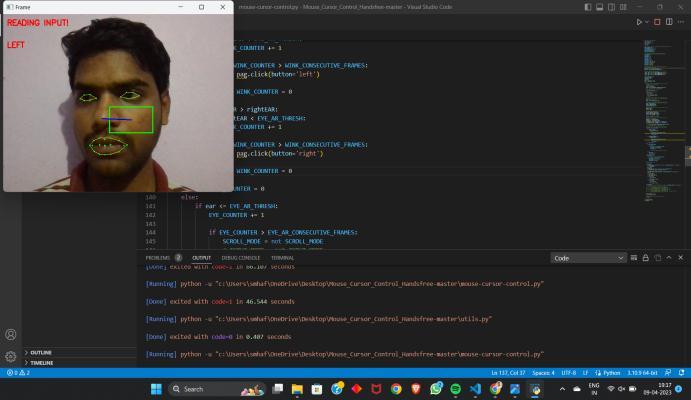

By capturing and analyzing facial movements, the system accurately interprets the user's intentions and translates themintoprecisecursormovementsonthescreen.Theuser can control the cursor's direction by simply moving their head, and basic mouse actions such as left-clicking, rightclicking, and scrolling can be performed through intuitive facialgesturessuchaswinkingandsquinting.

Fig 4.1 EyeAspectRatio

Mouth-Aspect-Ratio (MAR): Drawing significant inspiration from the EAR feature, we made slight modifications to the formula to create a metric capable of detecting the state of an open or closed mouth. While not entirelyoriginal,theapproachproveseffectiveinachieving thedesiredoutcome.ThisfeaturealongwithEARbothare takenintoconsiderationtocalculatetheresult.

6. CONCLUSIONS

The concept of mouse-free cursor control using facial movementspresentsaninnovativeandpromisingsolution forrevolutionizingourcomputerinteractions.Byharnessing the power of facial movements, this technology opens up new frontiers of accessibility, enabling individuals with disabilities to effortlessly engage with digital devices. Moreover,itsimplicationsspanacrossindustries,boosting productivityandeffectivenessineducation,entertainment, andhealthcare.Withcontinuedresearchanddevelopment, we can expect even more sophisticated and user-friendly solutionsinthefuture.Mouse-freecursorcontrolusingfacial movements holds the potential to transform the way we interactwithtechnology,makingitmoreintuitive,natural, andaccessibletoallusers,ultimatelyenhancingourdigital experiences

REFERENCES

[1] A.Sivasangari.,D.Deepa.,T.Anandhi.,A.PonrajandM.S. Roobini., "Eyeball based Cursor Movement Control," 2020InternationalConferenceonCommunicationand Signal Processing (ICCSP), Chennai, India, 2020, pp. 1116-1119,doi:10.1109/ICCSP48568.2020.9182296.

[2] Sunita Barve, Dhaval Dholakiya, Shashank Gupta, Dhananjay Dhatrak / International Journal of Engineering Research and Applications (IJERA) ISSN: 2248-9622www.ijera.comVol.2,Issue2,Mar-Apr2012, pp.1406-1410

[3] SatyanarayanaB.,etal."EyeballMovementbasedCursor Control using Raspberry Pi and OpenCV." 2020 International Journal of Innovative Technology and ExploringEngineering(IJITEE).ISSN:2278-3075

[4] Sovit Ranjan Rath, et al. “Machine Learning and Deep Learning,FacedetectionwithDlibusingHOGandLinear SVM”