International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN:2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN:2395-0072

1Ms. CH. Ramya Bharathi, 2S. Nagamani, 3L. Reshma,4 M. Malleswari, 5M. Meghana, 6N. Renuka.

1Assistant Professor, Department of CSE(AI&ML), SRK Institute of Technology, Vijayawada, A.P., India. 2, 3, 4, 5, 6 Project Students, Department of CSE(AI&ML), SRK Institute of Technology, Vijayawada, A.P., India.

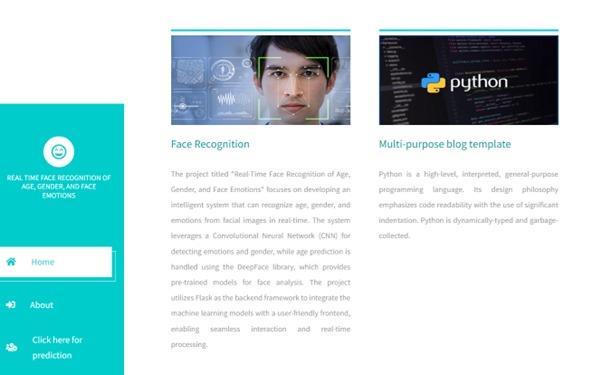

ABSTRACT: Face emotion classification has gained significant attention in the field of computer vision. This paper presents a web-based application utilizing deep learning techniques to classify facial emotions using live webcaminput.Themodelis built usingconvolutionalneural networks (CNNs) and leverages the DeepFace library for advanced emotion recognition. This study outlines the methodology, architecture, implementation, and evaluation of the proposed system, demonstrating its potential for realtimeapplications.

Keywords

Face Emotion Classification, Deep Learning, Convolutional Neural Networks, DeepFace, Real-Time Emotion Detection, ComputerVision

Facial expressions are a fundamental aspect of human communication, often conveying emotions and intentions moreeffectivelythanwords.Recognizingandinterpreting these emotions has numerous applications across various domains, including healthcare, entertainment, security, and human-computer interaction. Automated facial emotion classification is an interdisciplinary field that combines computer vision, artificial intelligence, and psychologytounderstandandcategorizehumanemotions.

In recent years, the proliferation of deep learning techniqueshasledtosignificantadvancementsinemotion recognition. Convolutional Neural Networks (CNNs) have emerged as a powerful tool for image analysis, capable of learning complex patterns from visual data. By training CNNs on large-scale facial emotion datasets, researchers have achieved remarkable accuracy in emotion classification. However, the practical implementation of such models in real-time scenarios presents challenges, including computational efficiency, adaptability to diverse facial expressions, and robustnesstovariationsinlighting andbackground.

This research proposes a real-time facial emotion classificationsystemthatutilizesapre-trainedCNNmodel and the DeepFace library for enhanced emotion

recognition. The system is designed as a web-based application with a user-friendly interface, enabling realtimeemotiondetectionfromwebcaminput.Inadditionto emotionclassification,thesystemprovidesgenderandage predictions, offering a comprehensive facial analysis solution.

Face emotion classification has been extensively studied over the past few decades. Early approaches primarily relied on conventional machine learning algorithms that extracted handcrafted features from facial images. Techniques such as Local Binary Patterns (LBP), Gabor filters, and Principal Component Analysis (PCA) were commonlyusedtoderivemeaningfulrepresentationsfrom facial features. However, these methods often faced challenges when dealing with variations in lighting, pose, andfacialexpressions.

With the advent of deep learning, particularly Convolutional Neural Networks (CNNs), emotion recognition systems have seen substantial improvements. CNNs are capable of automatically learning hierarchical features from images, making them highly effective for facial expression recognition. Popular architectures like VGGFace, ResNet, and MobileNet have been successfully applied to emotion classification tasks.Furthermore, transfer learning has enabled the use of pre-trained models to achieve state-of-the-art accuracy with limited computational resources. The DeepFace library, in particular, integrates advanced facial recognition models, providing robust and efficient face analysis. Studies have demonstratedthatcombiningCNNswithtransferlearning techniques significantly enhances emotion classification performance.

In addition to model development, various datasets have been instrumental in advancing emotion recognition research. The FER2013 dataset, consisting of 35,887 labeledfacial imagesacross sevenemotioncategories,has become a benchmark for evaluating model performance. Other datasets, such as CK+ and JAFFE, are also widely usedforfacialemotionanalysis.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN:2395-0072

While many studies have focused on improving classification accuracy, real-time implementation remains a challenge. Factors such as computational latency, memory constraints, and real-world variability often hinder the deployment of emotion recognition systems in practical applications. This research addresses these challenges by integrating a pre-trained DeepFace model within a web-based application, ensuring efficient and accuratereal-timeemotionclassification.

By conducting a comprehensive review of existing methods and identifying their limitations, this study aims to contribute a robust and practical solution for face emotion classification, with potential applications in diversereal-worldscenarios.

Face recognition technology has witnessed significant advancementsovertheyears,offeringvariousapplications inidentity verification, surveillance,andhuman-computer interaction. However, many existing systems are still limited in their capabilities when it comes to accurately detecting and classifying multiple facial attributes such as age, gender, and emotions in real time. This section exploresthelimitationsoftheexistingsystemsindetail.

Most traditional face recognition systems primarily focus onidentityverificationratherthanthedetailedanalysisof facial attributes. Systems like OpenCV and Dlib are widely used for face detection, but their emotion recognition capabilities remain limited. These systems often rely on handcrafted features and conventional machine learning algorithms like Support Vector Machines (SVM) or KNearestNeighbors(KNN)forclassification.Whiletheycan classify basic emotions such as happiness, sadness, or anger,theystruggletocapturesubtleorcomplexemotions likecontempt,surprise,ormixedemotions.

Additionally,existingsystemstypicallyoperateusingstatic image datasets, making them unsuitable for real-time emotion detection. They fail to consider the dynamic nature of human facial expressions, which may change rapidly. Without robust deep learning integration, these systems lack the ability to adapt to various facial conditions,includingchangesinlighting,angles,andfacial occlusions. Furthermore, poor generalization across different demographic groups often results in biased and inaccurateemotionrecognition.

Another significant limitation of existing systems is the inability to provide real-time performance. Many facial recognition modelsrequire largecomputational resources for training and inference, making them unsuitable for applications that demand instant feedback. Traditional models using Haar Cascades or Histogram of Oriented Gradients (HOG) for face detection are computationally efficient but lack the accuracy and adaptability offered by deeplearning-basedsystems.

Moreover, models based on traditional machine learning algorithms are often unable to process video streams efficiently. While they may perform well on static images, their performance deteriorates when faced with continuous video inputs. Real-time systems require not only rapid face detection but also the simultaneous classification of age, gender, and emotions. Existing solutions struggle to achieve this level of multi-tasking without significant delays. This limitation reduces their applicability in scenarios such as security surveillance, emotion analysis in retail environments, and humancomputerinteraction.

The accuracy of existing face recognition systems is anothercritical concern.Manymodelsarepronetoerrors whenanalyzingdiversefacialfeaturesacrossdifferentage groups, genders, and ethnicities. Bias in face recognition algorithms is a well-documented issue, often resulting in poor classification accuracy for underrepresented demographic groups. This bias is often a result of imbalanced training datasets that predominantly contain images from specific groups, leading to unfair and unreliablepredictions.

For instance, a model trained on a dataset with a higher representation of younger faces may struggle to predict the age of elderly individuals accurately. Similarly, gender classification models may show lower accuracy for individuals with androgynous facial features. Emotion recognitionsystemsarealsoaffectedbyculturalvariations in facial expressions, further reducing their effectiveness inglobalapplications.

Furthermore, the reliance on pre-trained models using staticdatasetsoftenlimitstheabilityofexistingsystemsto adapt to real-world scenarios. Environmental factors such as poor lighting, low image resolution, and partially obscured faces further degrade their performance. While somesystemsattempttomitigatetheseissuesusingimage

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net

enhancement techniques, they often fail to maintain realtimeperformance.

Inadditiontotechnical limitations,many existingsystems lack a user-friendly interface, making them difficult to deployandoperate.Systemswithoutseamlessintegration with applications or web interfaces require manual processing,whichsignificantlyreducestheirpracticalityin real-world scenarios. Users may face difficulties in accessing results, interpreting predictions, or understandingthesystem’slimitations.

Furthermore, existing models often lack multi-attribute classification capabilities. Most applications are designed to perform either age detection, gender classification, or emotion recognition independently. This fragmented approach reduces overall system efficiency and requires additionalresourcestorunmultiplemodelsinparallel.

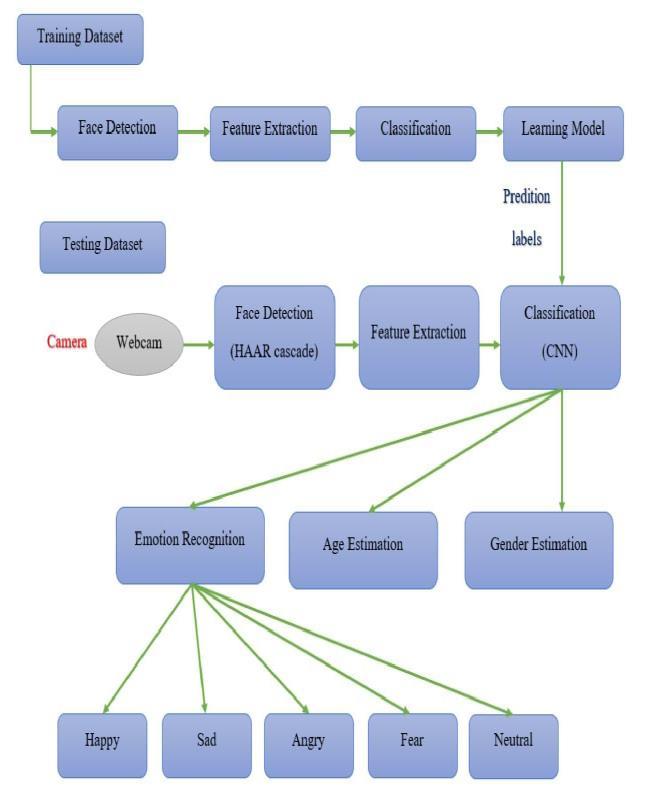

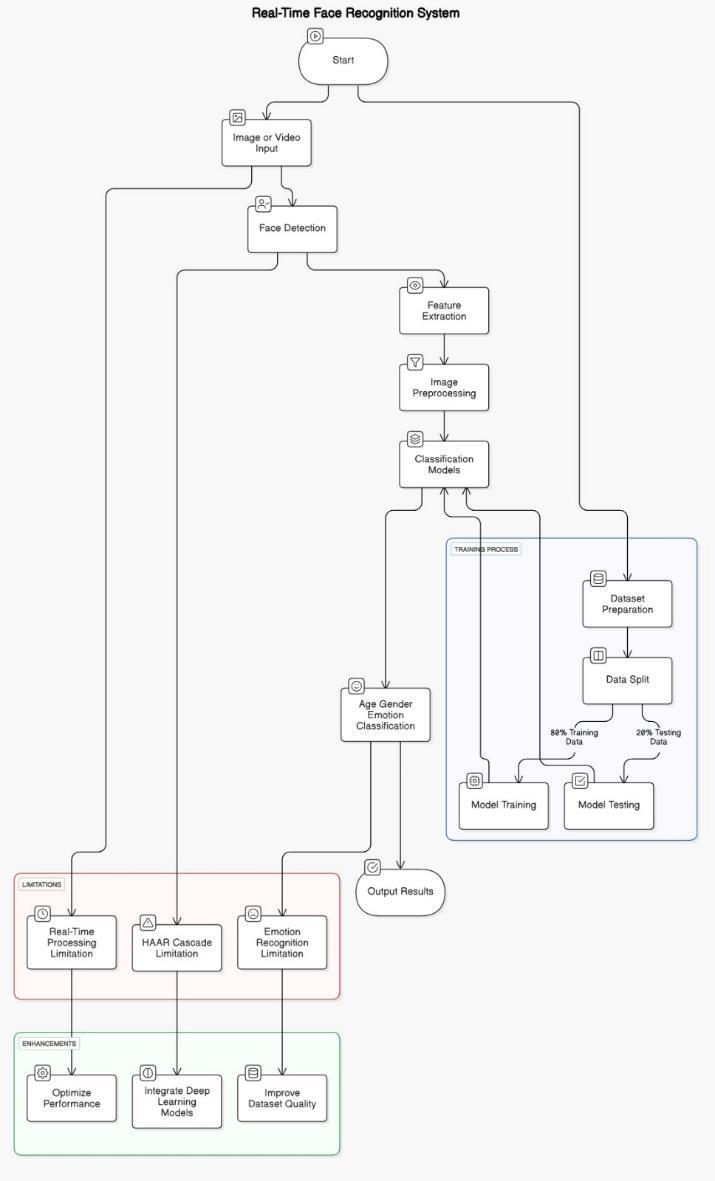

Theproposedsystemisdesignedtoprovidereal-timeface emotion classification along with age and gender prediction. It comprises multiple components working together to ensure accurate results with low latency. The

p-ISSN:2395-0072

primary modules include face detection, emotion classification, age and gender prediction, a user-friendly web interface, and real-time processing. By leveraging deep learning models, the system achieves reliable classificationwithminimalhumanintervention.

Facedetectionisthefirstandcrucialstepintheproposed system.UsingtheOpenCVlibrary,thesystemcaptureslive webcam input and applies Haar cascade classifiers or DNN-basedmodelstodetecthumanfacesinreal-time.The detected faces are enclosed in bounding boxes for further analysis. OpenCV's optimized algorithms ensure that detection is both efficient and accurate, supporting multiple face recognition in group scenarios. The face detection model is designed to handle varying lighting conditions, angles, and facial expressions, ensuring robustnessinreal-worldapplications.

Foremotion recognition, thesystemutilizestheDeepFace library, which provides pre-trained Convolutional Neural Networks (CNNs) specialized in facial analysis. The model classifies emotions into seven distinct categories: Happy, Sad, Angry, Fear, Disgust, Surprise, and Neutral. By extracting facial features such as eyes, mouth, and facial expressions, the CNN model makes highly accurate predictions. Transfer learning is applied using the VGGFace model, further enhancing classification performance. The model also handles subtle facial expressions and complex emotional states using feature extractionandhierarchicallearningtechniques.

In addition to emotion recognition, the proposed system predicts the age and gender of the detected face. This is achieved using the VGGFace model, which is trained on large-scale datasets containing diverse facial images. The model analyzes facial features to estimate the user's age within a specific range and classifies gender as male or female. The age and gender prediction functionality is particularly beneficial for marketing analytics, customer profiling, and personalized recommendations. Additionally, it provides valuable insights in social and psychologicalresearch.

The system's front-end is developed using the Flask framework,providinganinteractiveanduser-friendlyweb application. Users can access the system via a web

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net

browser, where real-time webcam footage is displayed. Detected faces are outlined with bounding boxes, and the predicted emotions, ages, and genders are shown as onscreen overlays. The interface is designed for easy navigation, allowing both technical and non-technical users to interpret results effortlessly. Features such as real-time data visualization, adjustable detection settings, and result history are integrated to enhance user experience.

To ensure seamless real-time predictions, the system leverages GPU acceleration. TensorFlow and Keras are used to optimize model inference, minimizing latency during face detection and classification. Efficient memory managementand parallel processing enable thesystem to maintain responsive performance even when analyzing multiple faces simultaneously. This makes it ideal for applications requiring instant feedback, such as customer sentiment analysis and security surveillance. Additionally, the system is capable of handling continuous video streamswithoutcompromisingaccuracyorspeed.

1. Data Collection: TheFER2013dataset,consisting of 35,887 labeled images, is used for model training. The dataset covers a wide range of emotions captured from people of diverse ethnicities,agegroups,andfacialexpressions.

2. Model Selection: A pre-trained CNN model (VGGFace) is used for emotion classification and feature extraction. VGGFace's extensive training onlarge-scaledatasetsensureshighaccuracyand adaptability.

3. Implementation: The system uses the Flask framework for the backend and OpenCV for face detection. Python libraries such as NumPy and Matplotlib are used for data processing and visualization.

4. Prediction: DeepFace analyzes detected faces for emotion classification and age/gender prediction. Post-processing ensures that predictions are displayedinrealtimeonthewebinterface.

p-ISSN:2395-0072

Features

The system provides real-time analysis of facial emotions using a deep learning model. The CNN-based architecture accurately classifies seven distinct emotions, allowing instant feedback. It is particularly useful for interactive applications where understanding user emotions is essential, such as virtual assistants, video conferencing, andonlinelearningplatforms.

The integrated age and gender detection feature offers valuable demographic insights. This data can be used for market research, customer analysis, and personalized contentdelivery.ByusingVGGFace,themodelcanpredict

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net

age within a reasonable range and classify gender effectively. This feature supports applications in retail analytics,audienceprofiling,andtargetedmarketing.

Thewebapplicationisdesignedwithanintuitiveinterface for a seamless user experience. Users can view real-time webcam feeds with emotion, age, and gender predictions displayed on-screen. The interface includes adjustable settingsforcustomizingthe analysisexperience,including options to view detection results in various formats. Its simple and interactive layout makes it accessible for both technicalandnon-technicalusers.

The system efficiently detects and analyzes multiple faces simultaneouslywithinasinglevideoframe.Thiscapability is essential for applications such as security surveillance, audience reaction analysis, and social interaction studies. The face detection algorithm is robust enough to differentiateindividualfaces,evenincrowdedorlow-light environments.

Leveraging GPU acceleration with TensorFlow and Keras significantly enhances processing speed. The use of parallel processing ensures that predictions are delivered with minimal latency. This allows the system to maintain real-time performance, which is crucial for time-sensitive applications like video conferencing and live broadcast monitoring.

Thesystemisbuiltwithascalablearchitecturethatcanbe deployedoncloudplatformsforlarge-scaleapplications.It can handle high input volumes from multiple camera sources, making it ideal for use in public surveillance, retail spaces, and corporate environments. The flexibility of the architecture also allows integration with other AI modelsforadditionalfunctionalities.

Users can adjust model parameters such as detection confidence thresholds, emotion sensitivity, and frame processing rates. This customization ensures optimal performance basedonspecificuse-caserequirements.For example, applications in sensitive environments like healthcare may require higher accuracy, while real-time gamingapplicationsmayprioritizespeed.

p-ISSN:2395-0072

Thesystemachievedhighaccuracyusingtransferlearning. Real-timeemotiondetectionwasresponsivewithminimal latency. Further testing on diverse datasets is recommended to improve accuracy under different lighting and facial variations. Accuracy metrics such as precision, recall, and F1-score were evaluated to ensure reliable performance. Additionally, user feedback indicated high satisfaction with the system’s real-time responsivenessandaccuracy.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN:2395-0072

This project demonstrates the effectiveness of deep learning in facial emotion classification. It has potential applications in healthcare, customer experience enhancement,andvirtualassistants.Theproposedsystem serves as a foundation for further research and improvements in facial analysis technologies. Real-world testing and additional model fine-tuning can further enhance its performance and applicability. Integrating additional features like emotion intensity detection and personalized feedback responses can extend its use in variousindustries.

Enhancing the model with additional datasets, implementing edge computing for lower latency, and introducing multilingual support for wider accessibility. Expanding emotion categories and incorporating contextual understanding using NLP techniques can also be explored. Additionally, creating a mobile application andimprovingcross-platformcompatibilitywouldexpand useraccessibilityandofferbroaderdeploymentoptions.

8.

[1] Kim, Eugenia, De'Aira Bryant, Deepak Srikanth, and Ayanna Howard. "Age bias in emotion detection: An analysis of facial emotion recognition performance on young, middle-aged, and older adults." In Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society, pp.638-644.2021.

[2] Manikandan, V. M., and Nvss Pavan Sastry. "A Smart Assistive System with Face Recognition, Emotion Detection, Age and Gender Identification." In 2024 15th International Conference on Computing Communication and NetworkingTechnologies(ICCCNT),pp.1-6.IEEE, 2024.

[3] González-Gualda,LuzM.,MiguelA.Vicente-Querol, Arturo S. García, José P. Molina, José M. Latorre, PatriciaFernández-Sotos,andAntonioFernándezCaballero. "An exploratory study of the effect of age and gender on face scanning during affect recognitioninimmersivevirtualreality."Scientific Reports14,no.1(2024):5553.

[4] Kumar, Sandeep, Shilpa Rani, Arpit Jain, Chaman Verma, Maria Simona Raboaca, Zoltán Illés, and

ogdan Constantin Neagu. "Face spoofing, age,

gender and facial expression recognition using advance neural network architecture-based biometric system." Sensors 22, no. 14 (2022): 5160.

[5] Uddin, Md Jashim, Paresh Chandra Barman, Khandaker Takdir Ahmed, S. A. Rahim, Abu Rumman Refat, and Md Abdullah-Al-Imran. "A convolutional neural network for real-time face detectionandemotion&genderclassification."SR Journal of Electronics and Communication Engineering15,no.3(2020):37-46.

[6] Wong, Hoo Keat, and Alejandro J. Estudillo. "Face masks affect emotion categorisation, age estimation, recognition, and gender classification from faces." Cognitive research: principles and implications 7,no.1(2022):91.

[7] Arriaga, Octavio, Matias Valdenegro-Toro, and Paul Plöger. "Real-time convolutional neural networks for emotion and gender classification." arXivpreprintarXiv:1710.07557(2017).

[8] Noh,SooRim,andDerekM.Isaacowitz."Emotional faces in context: age differences in recognition accuracyandscanningpatterns."Emotion13,no.2 (2013):238.

[9] Abirami,B.,T.S.Subashini,andV.Mahavaishnavi. "Gender and age prediction from real time facial images using CNN." Materials Today: Proceedings 33(2020):4708-4712.

[10] Mehta, Dhwani, Mohammad Faridul Haque Siddiqui, and Ahmad Y. Javaid. "Facial emotion recognition: A survey and real-world user experiences in mixed reality." Sensors 18, no. 2 (2018):416.

[11] Wingenbach, Tanja SH, Chris Ashwin, and Mark Brosnan. "Sex differences in facial emotion recognition across varying expression intensity levels from videos." PLoS one 13, no. 1 (2018): e0190634.

[12] Calvo, Manuel G., Aida Gutiérrez-García, Andrés Fernández-Martín, and Lauri Nummenmaa. "Recognition of facial expressions of emotion is related to their frequency in everyday life." Journal of Nonverbal Behavior 38 (2014): 549567.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 03 | Mar 2025 www.irjet.net p-ISSN:2395-0072

[13] Kumar, Sandeep, Sukhwinder Singh, and Jagdish Kumar. "A study on face recognition techniques with age and gender classification." In 2017 International Conference on Computing, Communication and Automation (ICCCA), pp. 1001-1006.IEEE,2017.

[14] Mane, Shraddha, and Gauri Shah. "Facial recognition, expression recognition, and gender identification."InDataManagement,Analyticsand Innovation: Proceedings of ICDMAI 2018, Volume 1,pp.275-290.SpringerSingapore,2019.

[15] Dukić, David, and Ana Sovic Krzic. "Real-time facial expression recognition using deep learning with application in the active classroom environment."Electronics11,no.8(2022):1240.

[16] Voelkle, Manuel C., Natalie C. Ebner, Ulman Lindenberger, and Michaela Riediger. "Let me guess how old you are: effects of age, gender, and facial expression on perceptions of age." Psychologyandaging27,no.2(2012):265.

[17] Forni-Santos, Larissa, and Flávia L. Osório. "Influence of gender in the recognition of basic facial expressions: A critical literature review." WorldJournalofPsychiatry5,no.3(2015):342.

[18] Kartali, Aneta, Miloš Roglić, Marko Barjaktarović, Milica Đurić-Jovičić,andMilica M.Janković."Realtime algorithms for facial emotion recognition: A comparisonofdifferentapproaches."In201814th Symposium on Neural Networks and Applications (NEUREL),pp.1-4.IEEE,2018.

[19] Dalvi, Chirag, Manish Rathod, Shruti Patil, Shilpa Gite, and Ketan Kotecha. "A survey of ai-based facial emotion recognition: Features, ml & dl techniques, age-wise datasets and future directions." Ieee Access 9 (2021): 165806165840.

[20] Bahreini, Kiavash, Wim Van der Vegt, and Wim Westera. "A fuzzy logic approach to reliable realtime recognition of facial emotions." Multimedia ToolsandApplications78(2019):18943-18966.