Welcome to the February 2023 issue of


www.tvtech.com | February 2023

Virtual Production: Bringing new creative techniques to reality

WHAT’S NEW IN DISPLAY TECH? • 32-BIT AUDIO COMES OF AGE • POWER CONTROL FOR LIGHTING • SMPTE ST 2110 UPDATE p.16

equipment guide: signal conversion

contents

February 2023, volume 41, issue 02

10 The Reality of Virtual Production
Making the unreal look real while delivering cost and time savings
By Michael Silbergleid

13 Display Tech—What’s Behind the Glass
A primer on the different display technologies spotted at this year’s CES
By Pete Putman

16 SMPTE ST 2110: A Vibrant Six-Year-Old
How has this critical standard impacted broadcasters?
By Wes Simpson

18 Discovering the Magic of 32-Bit Float Audio Recording
Companies such as Zoom, Tascam and Sound Devices are leading the charge
By Frank Beacham

20 Preparing for Your Next Lighting Package—Power Control
Existing lighting infrastructure will need modification to work with new lighting
By Bruce Aleksander

22 Why Does Football Sound Different Across TV Networks?
A number of variables and guidelines create the differences in NFL audio
By Dennis Baxter

6 in the news
24 eye on tech
34 people

equipment guide
user reports: signal conversion • Lawo • ENCO • Blackmagic Design • Multidyne • Grass Valley

TVs at CES? Meh

By all accounts, the 2023 International CES returned to its pre-pandemic form last month with attendance exceeding 115,000, making it the largest audited global tech event since the 2020 CES, according to the organization. Attendees were wowed by the latest advancements in health and auto tech as well as “smart” everything from strollers to bird feeders.

As for TVs? Yeah, they were there—you couldn’t miss them in their traditional home in the Central Hall. Samsung, LG, Hisense, Panasonic and others were naturally enthusiastic about the latest technology behind their advanced displays that feature an ever-expanding alphabet soup of LED+ technologies.

But perhaps the current state of television at CES was best exemplified by the company that once stood alone among the crowd of consumer TVs: Sony—which announced prior to the show that it would not be showing any new TV-related products.

TVs ceased to be the darling of CES long ago. After the rush of flat-screen HDTV sets two decades ago that (sort of) coincided with broadcasters’ move to digital, and the disastrous detour of 3DTV in the early 2010s, the next big thing was “4K.” For several years that was the buzzword of CES, with the “promise” of 8K not far behind.

Displace’s new TVs, which do away with all the wires (including the power cord), were among the few products that elicited any measure of enthusiasm at the 2023 CES.

But now, when the average consumer can purchase a 4K 55-inch flat screen for $150, the enthusiasm has waned. Flat screens have gotten larger and more lightweight, but they’re probably not even the target of thieves anymore, who are more interested in stealing your iPhone.

It’s important to remember that there are “TVs” and then there are “displays,” which are not one and the same. As for display technology, there were some incremental advances at the show, with LG showing off a transparent OLED (albeit not really new) and “wireless” displays from the Korean manufacturer as well as startup Displace, which promised to do away with even the power cord by utilizing battery packs. Samsung continues to push the envelope with its MicroLED and QLED displays, as reported by display expert Pete Putman in this issue.

But these technologies will really have more of an impact on digital signage, an area that has greatly benefited from such advances.

The key word here is “incremental.” With advances in upscaling powered by AI, 4K is practically an afterthought now, with 1080p/60 being “good enough” for average viewers. Only a small cross-section of viewers subscribes to the “4K tiers” that are offered by YouTube and cable and satellite companies during special events like the Olympics and the Super Bowl. Even Fox Sports’ announcement that it would “broadcast” the NFL divisional playoffs in 4K last month (for the umpteenth time) elicited yawns from consumers who have heard it all before.

As for audio? Yes, immersive audio has been on the horizon for a while now, but hardly any programming is available in the format, and until home audio products become more affordable and consumers see a need for it, it will remain a niche area.

At TV Tech, our main focus is on the technology that brings the content to the home (and now mobile) viewers. We cover advances in TVs and displays because we know you want to know not only how the content is being produced and delivered, but what consumers are viewing it on. And this is a dynamic time for media production, with advances in remote and virtual production offering new ways for broadcasters and media companies to lower costs, while increasing options and quality.

As for the average consumer and the TV set? This year’s CES proved more than ever that it has now reached its “meh” phase.

Tom Butts Content Director tom.butts@futurenet.com

FOLLOW US www.tvtech.com twitter.com/tvtech

CONTENT

Content Director

Tom Butts, tom.butts@futurenet.com Content Manager

Terry Scutt, terry.scutt@futurenet.com

Senior Content Producer

George Winslow, george.winslow@futurenet.com

Contributors Gary Arlen, Susan Ashworth, James Careless, Kevin Hilton, John Maxwell Hobbs, Craig Johnston, Bob Kovacs and Mark R. Smith

Production Managers Heather Tatrow, Nicole Schilling

Managing Design Director Nicole Cobban

Senior Design Director Cliff Newman

ADVERTISING SALES

Vice President, Sales, B2B Tech Group Adam Goldstein, adam.goldstein@futurenet.com

SUBSCRIBER CUSTOMER SERVICE

To subscribe, change your address, or check on your current account status, go to www.tvtechnology.com and click on About Us, email futureplc@computerfulfillment.com, call 888-266-5828, or write P.O. Box 8692, Lowell, MA 01853.

LICENSING/REPRINTS/PERMISSIONS

TV Tech is available for licensing. Contact the Licensing team to discuss partnership opportunities. Head of Print Licensing Rachel Shaw licensing@futurenet.com

MANAGEMENT

Chief of Staff Sarah Rees

Chief Revenue Officer, B2B Walt Phillips VP, B2B Tech Group, Carmel King Head of Production US & UK Mark Constance Head of Design Rodney Dive

FUTURE US, INC.

130 West 42nd Street, 7th Floor, New York, NY 10036

All contents © 2023 Future US, Inc. or published under licence. All rights reserved. No part of this magazine may be used, stored, transmitted or reproduced in any way without the prior written permission of the publisher. Future Publishing Limited (company number 2008885) is registered in England and Wales. Registered office: Quay House, The Ambury, Bath BA1 1UA. All information contained in this publication is for information only and is, as far as we are aware, correct at the time of going to press. Future cannot accept any responsibility for errors or inaccuracies in such information. You are advised to contact manufacturers and retailers directly with regard to the price of products/services referred to in this publication. Apps and websites mentioned in this publication are not under our control. We are not responsible for their contents or any other changes or updates to them. This magazine is fully independent and not affiliated in any way with the companies mentioned herein.

If you submit material to us, you warrant that you own the material and/or have the necessary rights/permissions to supply the material and you automatically grant Future and its licensees a licence to publish your submission in whole or in part in any/all issues and/or editions of publications, in any format published worldwide and on associated websites, social media channels and associated products. Any material you submit is sent at your own risk and, although every care is taken, neither Future nor its employees, agents, subcontractors or licensees shall be liable for loss or damage. We assume all unsolicited material is for publication unless otherwise stated, and reserve the right to edit, amend, adapt all submissions.

Please Recycle. We are committed to only using magazine paper which is derived from responsibly managed, certified forestry and chlorine-free manufacture. The paper in this magazine was sourced and produced from sustainable managed forests, conforming to strict environmental and socioeconomic standards.

TV Technology (ISSN: 0887-1701) is published monthly by Future US, Inc., 130 West 42nd Street, 7th Floor, New York, NY 10036-8002. Phone: 978-667-0352.

Periodicals postage paid at New York, NY and additional mailing offices.

POSTMASTER: Send address changes to TV Tech, P.O. Box 848, Lowell, MA 01853.


Vol. 41 No. 2 | February 2023

Future plc is a public company quoted on the London Stock Exchange (symbol: FUTR)
www.futureplc.com

Chief Executive Zillah Byng-Thorne
Non-Executive Chairman Richard Huntingford
Chief Financial and Strategy Officer Penny Ladkin-Brand
Tel +44 (0)1225 442 244

Media Companies Form Group to Develop New TV Measurement Standards

Some of the world’s largest media companies have formed a consortium to design a new method of measuring media consumption in an attempt to streamline the process of measuring premium video content as well as provide an alternative to Nielsen.

Fox, NBCUniversal, Paramount, TelevisaUnivision, Warner Bros. Discovery and the VAB are working with advanced advertising company OpenAP to form a new Joint Industry Committee on Premium Video Currency (JIC) “to enable multiple currencies with the primary focus of creating a measurement certification process to establish the suitability of emerging cross-platform measurement solutions in advance of the 2024 upfront.”

The JIC said the process to develop measurement certification standards is currently underway and will be formalized and officially announced March 1. It will reveal its preliminary findings April 25. It is also reaching out to other qualified premium video programmers to join the committee and is seeking active participation from advertising agencies and qualified trade bodies to advance the multi-currency proposal.

Tom Butts

NABLF Opens Entries for 2023 Celebration of Service to America Awards

The NAB Leadership Foundation is accepting entries for the 2023 Celebration of Service to America Awards, spotlighting excellence in community service by local U.S. TV and radio stations. Stations and broadcast groups can enter their best community service campaign from the past year. Award categories are based on market size, and NAB members and non-members are eligible to enter. The entry window closes Monday, March 13.

"We are proud to honor and acknowledge the significant role broadcasters play within their communities," said NAB

Leadership Foundation president Michelle Duke. "Local television and radio stations have always been actively engaged with the neighborhoods they serve, and this is a way to show how they make a real difference in the lives of their viewers and listeners."

Finalists from each category will be announced in early April, and winners will be named at the Celebration of Service to America Awards in Washington, D.C., on June 6. Attendees and invited guests include industry executives, broadcasting and media professionals, policy makers and past honorees.

George Winslow


February

In celebration of our 40th anniversary in 2023, TV Tech takes a look back at the past four decades of technology advances:

1983: Ampex announced its latest “C” format one-inch VTR, the VPR-3. Building on technology developed for the AVR-1 quad machine, the VPR-3 incorporated a vacuum capstan, which eliminated the pinch roller, and gas-film tape guidance to reduce friction and allow extremely rapid tape acceleration and deceleration. The new model also featured microprocessor-based control, built-in SCH meter and four-channel audio. (The price tag was $60k; about $179k in today’s money.)

Ampex’s VPR-3 videotape recorder with its companion TBC-3 digital time base corrector was considered by many in 1983 to be the ultimate one-inch type “C” machine due to its superior tape handling capabilities.

1993: In an about-face, Japan’s NHK admitted that its analog-based Narrow-Muse HD terrestrial broadcasting system did not perform as well as its digital rivals and decided to withdraw the technology from consideration in the ongoing U.S. trials to determine the best HDTV standard for implementation.

2003: Reflecting the internet’s ascent, a University of California study revealed that U.S. online users now consider it “far more important” than TV as a news source, and at least as important as printed media. The study also showed that an individual’s time online increased to 11 hours per week, up an hour from 2002.

2013: Although 4K TVs are now rolling off production lines and onto dealers’ sales floors, UHD signal sources remain problematic as cable and OTA channels can’t convey the necessary volume of data. However, if you purchased a top-of-the-line ($25k) 84-inch set, Sony threw in a loaner media player preloaded with 4K content for viewing.

James O’Neal


Credit: Jay Ballard, Museum of Broadcast Technology

Media Tech Sustainability Summit Slated for June

Former SMPTE Executive Director Barbara Lange of Kibo21 and Lisa Collins of Dovetail Creative have announced the launch of the Media Tech Sustainability Summit, an online event scheduled for June 20-21, focusing on sustainability issues within the broadcast, media & entertainment industry.

“Every person and every industry needs to take action now to mitigate the impacts of climate change; it is not someone else’s problem—it is all of ours, and there are plenty of areas where the media tech sector can step up to do its part,” Lange said. “Not only is it an imperative to meet the global sustainability challenges but being sustainable also makes real business sense as organizations understand going green can create market opportunities. We think it is time for the media tech sector to understand the topic as we start on our sustainability journey.”

“Some great work is taking place within our industry to move the needle on environmental issues but what is not evident are the two other key spheres of sustainability that relate to people and company purpose,” Collins added. “With sustainability agendas now under question in key RFPs I believe it is important to share best practice and education around the subject to ensure that our industry thrives.”

Starting with an introduction of sustainability in general, MTSS will then move into what the broadcast, M&E industry is doing today, and what more can be done. The event will provide a baseline understanding of this complex topic while discussing best practices and suggesting ideas on how to tackle the key issues and solutions for a sustainable future.

For more information, visit www.mediatechsustainabilitysummit.com

George Winslow

Of Slats Grobnik, Jason Whitlock and ChatGPT

There was a time some 40 years ago when my college roommate and I would compete to get to the Columbia Missourian first, specifically the op-ed page, to see if Mike Royko’s syndicated column had appeared.

Maybe we liked Royko’s take on Chicago, which seemed so far away and different from Columbia. Maybe it was the chuckle we’d get, especially on the days his alter-ego Slats Grobnik made an appearance. Whatever the reason, Royko spoke to us in a way we looked forward to, enjoyed and appreciated.

A decade later when he arrived at The Kansas City Star, Jason Whitlock began building a 16-year rapport with readers not unlike our relationship with Royko. I know many, many people here who couldn’t wait to read his perspective on a game, a player or coach and who were sorely disappointed when he left.

This story undoubtedly has been repeated millions of times over the years as successive generations of readers and columnists engage with one another in newsprint and now online.

Enter OpenAI’s ChatGPT, an artificial intelligence-driven tool that generates well-written copy. How good is it? Better than the majority of writing the average teacher or professor sees, in the opinion of Daniel Herman, a high-school teacher in Berkeley, Calif., and author of “The End of High-School English,” appearing in The Atlantic online.

Somehow, I doubt, no matter how humanlike ChatGPT’s prose may be, that my roommate and I would have looked at its musings as anything more than a curiosity and then would have begun scouring the page looking for Royko.

Ditto Kansas Citians looking for Whitlock’s observations and insights when he was here. For others, it might be Maureen Dowd, George Will or Thomas Friedman. I’m sure that the same was true of readers and William F. Buckley Jr. and even H.L. Mencken in the distant past.

The point is simple. People like people. Perhaps more accurately, people enjoy the unique perspectives—informed by life experience—of other thoughtful people.

Word to the wise for television reporters toiling away on their next story and deadline: Draw on your life experience made fresh daily by your interactions with sources and viewers alike to bring humanity and perspective to your stories.

Otherwise, you risk someday having a Sophia-like AI creature reciting words from a ChatGPT-type program that will be your undoing.

Sure, people like the real thing. Just consider the millions who tune in on Sundays to watch an NFL game. Then again, there’s eSports, too.


OPINION


Phil Kurz

Credit: Getty Images


The Reality of Virtual Production

Making the unreal look real while delivering cost and time savings

By Michael Silbergleid

FORT MYERS, FLA.—Virtual production has been around for years: rear/front projection, matte painting, blue/green screen, chromakey, motion capture. It took “The Mandalorian” to bring its latest iteration to the forefront: in-camera VFX virtual production (aka “Mando Style”), which uses LED panels to display a background that not only reflects on actors and foreground scenery, but that actors can also see and interact with. It’s a seamless transition between the real and unreal.

Research firm Research and Markets reported the virtual production global marketplace was valued at $2.4 billion in 2021, growing to $3.1 billion by 2026. That’s a compound annual growth rate (CAGR) of 14.3%. But that was in February 2021. By December 2021, the projected CAGR had increased to 17.6%. By October 2022, with estimates extended to 2027, the CAGR was 18.7%.
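For readers less familiar with the metric, CAGR is the constant yearly rate that compounds a starting value into an ending value over a given number of years. The sketch below shows that definition in Python; the function names and the sample figures are illustrative assumptions and are not taken from the Research and Markets reports.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Constant yearly growth rate that takes start_value to end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value reached after compounding start_value at `rate` for `years`."""
    return start_value * (1.0 + rate) ** years


if __name__ == "__main__":
    # Illustrative figures only (a market that doubles in five years).
    rate = cagr(100.0, 200.0, 5)
    print(f"CAGR: {rate:.1%}")
    # Compounding the start value at that rate should return the end value.
    print(f"Round trip: {project(100.0, rate, 5):.1f}")
```

Because the rate compounds, small changes in CAGR produce large differences in the projected market size once the forecast window stretches to five or six years.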

NON-VIRTUAL SAVINGS

A term to know is “volume,” the space where virtual production happens. Volumes, combined with the rest of what virtual production offers, are a cost- and time-saving juggernaut.

According to A.J. Wedding, co-founder & director of virtual production at Orbital Studios, while the big LED wall is what amazes most people, with virtual reality headsets you can bring all department heads together to scout locations all in one place, saving on travel and time.

From a production cost standpoint, examine the FX series “Snowfall,” where Wedding served as virtual production supervisor for seasons five and six. The series realized a savings of $1 million per season.

While a 62x14-foot LED wall is used for a penthouse set, Wedding explained the savings didn’t end there. “The car process used to mean one car, one scene and one location. But for ‘Snowfall,’ it meant multiple scenes with multiple cars. That’s saving money and it looks so good. We also used a 20x12-foot LED wall on casters and moved it from set to set to show Paris or Detroit—the production didn’t have to pay for two LED walls, just the single portable.”


A virtual production car setup from a corporate project at Orbital Studios, providing the camera with the ability to shoot around the car and the talent to be illuminated from the front, side, rear and above.

With virtual production, the set is typically ready before the actors, with turnaround sometimes 30-50% faster, according to Erik Weaver, head of virtual and adaptive production at the Entertainment Technology Center (ETC) at USC—a think-tank working on standards for the industry and the standardization of education and curriculum within the field.

Until recently, virtual production was limited to complex, outlandish and science fiction productions. “There’s been a radical change in who uses virtual production that will continue,” said Weaver. “The shift is from large ‘Mando’ volumes to places like Stargate Studios that purchased LEDs from Costco to use as side panels outside of train windows.”

According to Weaver, tighter pixel pitch (the distance between pixels in millimeters) will mean cameras can get closer to the LED wall. “‘Mando’ used a 2.84 pitch so the camera was 16 feet away, while a pitch of 1.5 means you can get the camera 3 feet from the wall; that’s what Orbital does. This makes virtual production economically feasible for mid to smaller-sized stages.”

For the new History Channel original series “History’s Greatest Heists with Pierce Brosnan,” virtual production significantly compressed Brosnan’s time on set. “This is a really cool crime reenactment show and places Brosnan as though he was in the locations,” said Wedding. “They created the virtual environments based on all the re-creations and shot Brosnan for the entire season in three days, with 11 setups a day and working no more than six hours a day.”

FAMILIAR NAMES

Last December, Amazon Studios opened Stage 15, its new volume, and announced the formation of the new Amazon Studios Virtual Production (ASVP) department. The stage houses a near-wraparound LED wall 80 feet in diameter, with two additional floating walls 26 feet tall, in a 34,000-square-foot space with 46-foot ceilings.

Stage 15 is fully connected into the AWS cloud, and is an integrated part of the production-in-the-cloud ecosystem. The facility provides a camera-to-cloud workflow with direct connection from Stage 15 to AWS S3 storage for cameras, post-production, remote global collaboration and compute power.

Ken Nakada, head of Virtual Production Operations for Amazon Studios, says what’s learned during a project stays with ASVP. “With normal productions, the experience, crew and key learnings wrap at the end of each given project. ASVP aims to retain and build on these learnings, thoroughly document them, and share them across projects to elevate the industry’s use of this new technology.”

In August 2021, NEP Group launched NEP Virtual Studios through the acquisition of Prysm Collective, Lux Machina and Halon Entertainment. Stage 22 at Trilith Studios outside Atlanta was designed and commissioned by Lux Machina for Prysm and is powered by Lux Machina’s real-time 3D engines. It’s a fully enclosed 80-by-90-by-29.5-foot volume in an 18,000-square-foot purpose-built stage, built to accommodate large set pieces wrapped 360 degrees with LED panels, including an LED ceiling.

A typical Mo-Sys virtual production setup with a real foreground and virtual background. Note the StarTracker system

“Stage 22 incorporates new LED, volumetric capture and structural technologies with amazing image processing capabilities to allow the utmost flexibility for today’s filmmakers,” said Wyatt Bartel, vice president of Production for Lux Machina. “We’ve seen virtual production grow significantly in recent years. This is partially due to the increasing capabilities and affordability of virtual production technology, broader understanding from filmmakers of its benefits and capabilities, and the number of virtual production companies worldwide.”

Last October, Sony Pictures Entertainment (SPE) announced its first volume located at Sony Innovation Studios. It uses Sony’s high brightness and wide color gamut Crystal LED “B-Series” display, a fine-pitch LED system co-developed by Sony Electronics and SPE for use in virtual production.

It should be noted that others work with LED manufacturers to customize their walls. Orbital Studios works closely with Planar, and ETC works with a variety of manufacturers.

LIVE BROADCAST VIRTUAL PRODUCTION

“Mo-Sys has been involved in the evolution of in-camera visual effects [ICVFX] from the very beginning,” said Mike Grieve, Mo-Sys Commercial Director. “We’ve been selling solutions for ICVFX almost as long as we’ve been selling broadcast virtual set and augmented reality solutions.”

Mo-Sys sees companies using its ICVFX solutions because the real-world alternative is either too costly, too dangerous or technically impossible. “ICVFX is normally associated with LED virtual production, but it’s also used with blue/green screen,” said Grieve. “LED ICVFX is popular for commercials and drama, blue screen ICVFX for cinematic drama and green screen ICVFX for general entertainment.”

Then there’s multicamera switching. “When the director switches cameras, it takes five frames for the wall to update with the new camera’s background with the correct perspective based on the camera/lens tracking data,” said Grieve. “This delay has to be compensated for, and that’s what Mo-Sys’ Multi-Cam Switching does.”
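The idea of compensating for that delay can be sketched in a few lines of Python. This is an illustration only and not Mo-Sys’ implementation: the `DelayedCut` class, its method names and the five-frame constant (taken from Grieve’s description) are assumptions. The director’s camera request is queued, the wall gets five frames to redraw for the new camera, and only then does the program output actually cut.

```python
from collections import deque

# Wall update time in frames, per Grieve's description; real systems will vary.
WALL_UPDATE_FRAMES = 5


def frames_to_ms(frames: int, fps: float) -> float:
    """Convert a frame count to milliseconds at a given frame rate."""
    return frames * 1000.0 / fps


class DelayedCut:
    """Hold each camera request for a fixed number of frames before it airs."""

    def __init__(self, delay_frames: int, initial_camera: str):
        # Pre-fill the delay line so the initial camera stays on air
        # until the first queued request has waited `delay_frames` frames.
        self._line = deque([initial_camera] * delay_frames)

    def tick(self, requested_camera: str) -> str:
        """Call once per frame; returns the camera that is on air this frame."""
        self._line.append(requested_camera)
        return self._line.popleft()


if __name__ == "__main__":
    cut = DelayedCut(WALL_UPDATE_FRAMES, "cam1")
    # The director asks for cam2 on every frame starting at frame 0.
    for frame in range(7):
        print(frame, cut.tick("cam2"))
    print(f"{frames_to_ms(WALL_UPDATE_FRAMES, 25):.0f} ms at 25 fps")
```

In this sketch the request made at frame 0 reaches air at frame 5, by which time the wall has had its five frames to render the new camera’s perspective.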

For Vizrt/NewTek’s Martin Klampferer, R&D manager and product owner of Viz Engine, virtual production is all about live. “It’s virtual studios, blue/green screen and Viz Engine delivering the graphics,” he said. “And increasingly more about live talent in front of video walls. Viz Engine combines everything, even if using multiple cameras. Viz Engine gets the camera tracking data in 3D space, the key of the talent, the graphics and does the complete compositing, including placing elements in front of and behind the talent.”

NON-VIRTUAL HURDLES

It’s all about education and misinformation.

“This is not an LED rental, it’s a workflow,” said Wedding. “Producers say ‘let’s rent the LED wall, let me get the individual companies needed,’ but there’s no synergy. No department head on top of it. Something always falls through the cracks and I’ve seen it multiple times. We want to be the one you fire, that way we can control it all—tell people what’s possible.”

“Mo-Sys opened a facility in Los Angeles where people could learn, train and experiment,” said Grieve. “We also started the Mo-Sys Academy in London where on-set virtual production could be taught to students, as well as cross-training experienced production people.”

Perhaps Weaver put it best: “We’re at virtual production 1.0. It’s the difference between getting a nice pasta dinner from a restaurant to you getting the ingredients and learning how to make it from scratch.”


LED virtual studio for live news broadcast using Mo-Sys virtual production technology

Display Tech—What’s Behind the Glass

A primer on the different display technologies spotted at this year’s CES

By Pete Putman


LAS VEGAS—This year’s edition of CES was vastly different from a quarter-century ago. Back then, televisions were just breaking free from low-resolution cathode-ray tubes (CRTs) as high-definition television was taking its baby steps. A 50-inch high-resolution display in 1998 contained just 1280 x 768 pixels (Wide XGA) and cost as much as a car. Rear-projection televisions were just switching to solid-state light modulators. And liquid-crystal display (LCD) televisions weren’t even available; the largest flat-screen TVs all used expensive plasma display panels (PDPs).

Today, all of those technologies (and some of their manufacturers) are distant, fading memories. We’ve had 4K TVs for over a decade, while 8K sets appeared five years ago. LCD technology rules the roost nowadays, and the median TV screen size is about 55 diagonal inches and slowly increasing. Organic light-emitting diode (OLED) displays have supplanted plasma for image quality, but with much lower power consumption.

But there are other contenders: Mini and microLEDs, quantum dots and QD-OLEDs are finding their way into televisions and computer monitors. What are the differences between them? Will they replace or obsolete any currently available displays? Read on…

LIQUID-CRYSTAL DISPLAYS AND ENHANCEMENTS

It didn’t take long for large LCD panels to become the preferred solution for large televisions after their introduction 20 years ago. Those original models have evolved from heavy, bulky designs, in which fluorescent lamp backlights shone through arrays of tiny light-shuttering pixels, to sleek housings stuffed with light-emitting diode (LED) backlights. Over time, pixel counts have continued to grow as retail prices drop with increased manufacturing efficiency.

The debut of high dynamic range (HDR) video forced more design changes. Given the gross inefficiency of LCD imaging panels (only 5% of the backlight’s output actually makes it to the front of the screen), other solutions were needed to boost brightness levels. One approach was to add a thin layer of quantum dots, tiny semiconductor particles that absorb blue light from LEDs and re-emit it as pure, saturated red and green light (hence, the quantum energy conversion effect).

Televisions with a “Q” in their model names (like Samsung’s QLED TVs) use quantum dots (QDs) to produce high dynamic range images. These displays are still hamstrung by the light transmission inefficiency of LCDs, but they are now able to pump out luminance levels in the range of 1200 to 1500 candelas per square meter (cd/m²). TCL also manufactures LCD TVs with quantum dot enhancement layers. LCD TVs equipped with quantum dots are priced at a premium over conventional LCD models.

Samsung launched its 76-inch Micro LED CX at CES 2023.

There’s another way to achieve HDR imaging: pack more “mini” LEDs into a smaller area and change their light levels in step with luminance levels in the video content, a process known as local area dimming. Sony and Hisense (ULEDs) use this approach instead of QDs. The challenge is to keep LED light from bleeding into adjacent pixels, which creates what looks like a halo effect around bright text and objects.

Fixing that problem requires some additional structural changes to each pixel as well as specialized light modulation techniques. But there’s another problem looming—TVs using large matrices of miniLEDs for local area dimming consume lots of power, and pending European Union regulations on energy conservation may keep these models from ever coming to market.

ORGANIC LIGHT-EMITTING DIODE DISPLAYS

OLEDs have been in development for decades, yet it seemed like they could never get over the finish line. Tricky to manufacture, they were susceptible to moisture and differential aging of colors. And you couldn’t drive them too hard, as they’d burn out quickly.

OLEDs emit different colors of light when a low voltage is applied across a junction of organic compounds. Those colors are saturated and bright, and OLED displays exhibit high contrast, deep blacks, and wide viewing angles. Unlike LCD panels, OLEDs are very thin and can bend and warp. These latter properties have made it possible to offer foldable smartphones and tablets, not to mention digital signs that can be wrapped around poles, buildings, cars, and other objects.

There are two types of OLED displays in wide use today. For televisions, white OLED panels with color filters (WOLEDs) dominate the market. (The white color in RGBW displays is generated by a compound of blue and yellow organic chemicals.) LG Display is the source of all WOLED panels used in OLED TVs, no matter whose brand you see on the bezel. The underlying technology uses an RGBW pixel stripe to produce high levels of luminance (up to 1000 cd/m² with a 10% full white window). WOLED televisions are available in sizes from 42 inches to 97 inches.

The second type of OLED display, used in smaller products such as smartphones, has discrete red, green, and blue emitters (RGB stripe). Several companies manufacture RGB OLEDs, among them Japan OLED, Samsung Display, and Chinese manufacturers AUO and BOE. While RGB OLEDs can achieve peak luminance levels similar to those of WOLEDs, the largest RGB OLED display currently available is a 32-inch desktop monitor.

The challenge for both RGBW and RGB OLEDs is the time to half-brightness of the blue organic materials. (A similar problem affected blue phosphors in color TV picture tubes and plasma displays.) Some clever solutions have been devised to overcome this problem, such as using multiple blue emitters with each running at reduced brightness. One way to get more luminance out of an OLED display is to employ microlens arrays on each pixel, collimating the light and directing more of it to the screen. This technique is currently implemented by LG on its latest series of Evo OLED televisions.

QD-OLED HYBRIDS

A new, clever hybrid display technology combines a stack of blue OLED emitters with red and green quantum dots. This QD-OLED hybrid was launched by Samsung Display last year at CES in 55-inch and 65-inch screen sizes and was joined by a 77-inch television at this year’s show (Samsung and Sony both sell QD-OLED models). QD-OLED TVs command higher prices than conventional LCD TVs and are priced on par with quantum dot-equipped sets and WOLED TVs.

The big advantage of the QD-OLED is the simplicity of imaging layers—four in all—in the display panel. As an emissive display, it too exhibits excellent contrast performance, deep black levels, high color saturation, and a wide viewing angle. The blue OLED emitter is actually a stack of smaller blue OLEDs, each running at reduced power to extend their useful life. The rest of the horsepower comes from the quantum dots, with Samsung Display claiming a maximum luminance of 2,000 cd/m² for 2023 models. Think of the QD-OLED as a turbocharged WOLED or RGB OLED!

LG showcased its EVO series of WOLED TVs at CES 2023.

MICROLED DISPLAYS

Display manufacturers are now prototyping televisions made up solely of tiny red, green, and blue LED emitters. These “micro” LED displays can also be used across a wide range of display products from smart watches and phones to tablets, computer monitors, and in transportation applications. To make this happen requires high manufacturing yields of microLED chips at a reasonable cost, which has so far proven to be a difficult task.

The advantages of microLED displays are in simplicity and image quality. Instead of the multiple light-absorbing layers of polarizers, backlights, and color filters in an LCD display, there’s just an array of LED emitters and transistors to switch them on and off. Since microLEDs are emissive displays, there are no issues with black levels and contrast flattening when viewed at wide angles. And they’re plenty bright at 1500 cd/m², although they can easily hit peak levels exceeding 2000 cd/m².

Although multiple companies are researching and developing microLED displays, only Samsung is currently offering models for consumers. At CES, they unveiled a 76-inch Ultra HD model to complement earlier 89-inch, 101-inch, and 110-inch offerings. The main selling point of the 76-inch Micro LED CX is that it can be installed by the end user. However, given the steep price point of the previously introduced 89-inch model (about $80,000), it’s going to be a high-end, ultra-premium television for now.

Even so, many display analysts predict microLED displays will likely replace all other display technologies by the end of this decade—if manufacturing costs can be lowered and high yields achieved. And the odds are that it will happen. Recall that the first plasma televisions came with five-figure price tags, but by 2010 cost well under $1K. And the first 4K monitors (not TVs) sold in North America in 2012 were priced over $20,000! Today, you can buy a 65-inch “smart” Ultra HDTV for as little as $400 on sale.

LOOKING AHEAD

Given the popularity of WOLED TVs, you’ll see more companies like Toshiba and Sharp offering them in 2023. LCD TVs will continue to be the cheapest TV offering, while QD-equipped models are slowly coming down in price to keep pace with OLEDs.

As for microLED TVs—well, if you have eighty grand just lying around…

Pete Putman, CTS, KT2B, is president of ROAM Consulting L.L.C.


SMPTE ST 2110: A Vibrant Six-Year-Old

How has this critical standard impacted broadcasters?

By Wes Simpson

ORANGE, Conn.—Since the first document release in 2017, the SMPTE ST 2110 suite of standards for video transport over IP networks has made major inroads in the market for professional video and audio production gear. By removing the highly compressed, unreliable stigma created by early IP streaming technologies (looking at you, Flash), ST 2110 enabled bit-perfect production systems to be built using widely available Ethernet networking gear.

As IP networking infrastructure continues to grow in capacity—while simultaneously lowering the cost per bit—IP systems have become ever-more capable and affordable. Like any major technology refresh, the transition to IP-centric media systems has experienced a few bumps along the way, but overall progress has been steady and new products are filling in the few remaining gaps needed to support every conceivable broadcast application.

When first released in 2017, ST 2110 provided a standard way to transport video over general purpose IP networks. Since it was targeted as a direct replacement for SDI, the focus was on uncompressed video inside a live studio production environment.

One important difference from SDI is that each signal type is transported in a separate stream of packets, thereby eliminating the need to “embed” audio signals within their associated video signals. Synchronization is provided by distributing a Precision Time Protocol (PTP) clock to every media device on the network, thereby allowing each device to align its outputs to a common timing reference point.
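To make the idea of a common timing reference concrete, here is a minimal Python sketch of how a device might turn a shared PTP time reading into a 32-bit RTP timestamp. It assumes the commonly used 90 kHz media clock for video and 48 kHz for audio; the function name and the sample PTP reading are hypothetical and are not taken from the standard's text.

```python
RTP_WRAP = 2 ** 32  # RTP timestamps are 32-bit counters that wrap around


def rtp_timestamp(ptp_ns: int, clock_rate_hz: int) -> int:
    """Map PTP time (nanoseconds since the epoch) to an RTP timestamp.

    Integer arithmetic avoids floating-point rounding on large epoch values.
    """
    ticks = ptp_ns * clock_rate_hz // 1_000_000_000
    return ticks % RTP_WRAP


# Two devices that read the same PTP time compute the same timestamp,
# which is what lets a receiver line up separate video and audio streams.
now_ns = 1_675_000_000_123_456_789  # illustrative PTP reading, not real data
video_ts = rtp_timestamp(now_ns, 90_000)  # assumed video media clock
audio_ts = rtp_timestamp(now_ns, 48_000)  # assumed audio media clock
print(video_ts, audio_ts)
```

Because every sender derives its timestamps from the same clock, no stream has to be embedded in another to stay aligned.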

MARKET IMPACT

Over the past five years, IP media transport generally and ST 2110 specifically have made major inroads within the professional broadcast market. According to John Mailhot, CTO, Networking and Infrastructure for Imagine Communications, the industry has already reached the point where “ST 2110 is a better choice for greenfield studio construction and for applications that require more than 512 video router crosspoints.”

Alan Wollenstein, director, Engineering Systems for the National Football League, spoke about how critical ST 2110 technology was for the implementation of the NFL Network’s new Los Angeles Facility in Inglewood, Calif. “We have 19 physical and 75 virtual edit bays in our new facility, which stretches over 200 yards from one end to the other. It simply would not have been possible to build this brand-new installation without using ST 2110.”

IPMX

One major way that ST 2110 technology is being expanded to support new applications is in the development of IPMX (Internet Protocol Media eXperience). The goal of this development is to help reduce the cost of ST 2110 technology for applications that may not require its full range of capabilities.

The NFL Network’s Inglewood, Calif. facility was built for SMPTE ST 2110.

Another goal is to produce the first truly open, license-free IP video specification for the ProAV market (in contrast with NDI, SDVoE and HDBaseT). IPMX supports HDCP content protection, multi-monitor synchronization, FEC (Forward Error Correction), EDID (Extended Display Identification Data) and a variety of other features that are crucial to supporting this market.

IPMX specifications are currently being developed by a group within the Video Services Forum (the same source as many of the key concepts behind ST 2110). More info, including downloadable copies of all the released specifications, can be found at www.vsf.tv.

WHAT THE FUTURE HOLDS

Not everything is perfect in the world of ST 2110. The capabilities of system and broadcast controllers are lagging behind those of video and audio endpoints, at least based on the results of the JT-NM Tested event in Wuppertal, Germany last August. Development of these systems proceeds apace, with particular emphasis on improving IP network security for broadcast devices.

Most of the key ST 2110 standards have stabilized, and are not expected to change much (if at all) in the coming years. This is good news for developers and implementers, allowing them to focus on fine-tuning and cost-reducing existing designs, rather than having to implement new features. It is likely that system cost reductions will also continue, as video systems are now more closely aligned with present trends in the much larger IT and datacom industry (including Moore’s Law and other factors). John Mailhot noted that “The cost premium for ST 2110 in media endpoints is going away, and the cost of 100 gigabit optics is dropping dramatically.” Advances in Ethernet switch capabilities will also help significantly; Alan Wollenstein indicated that “The choice of spine and leaf IP network architecture for our facility was key for our application.”

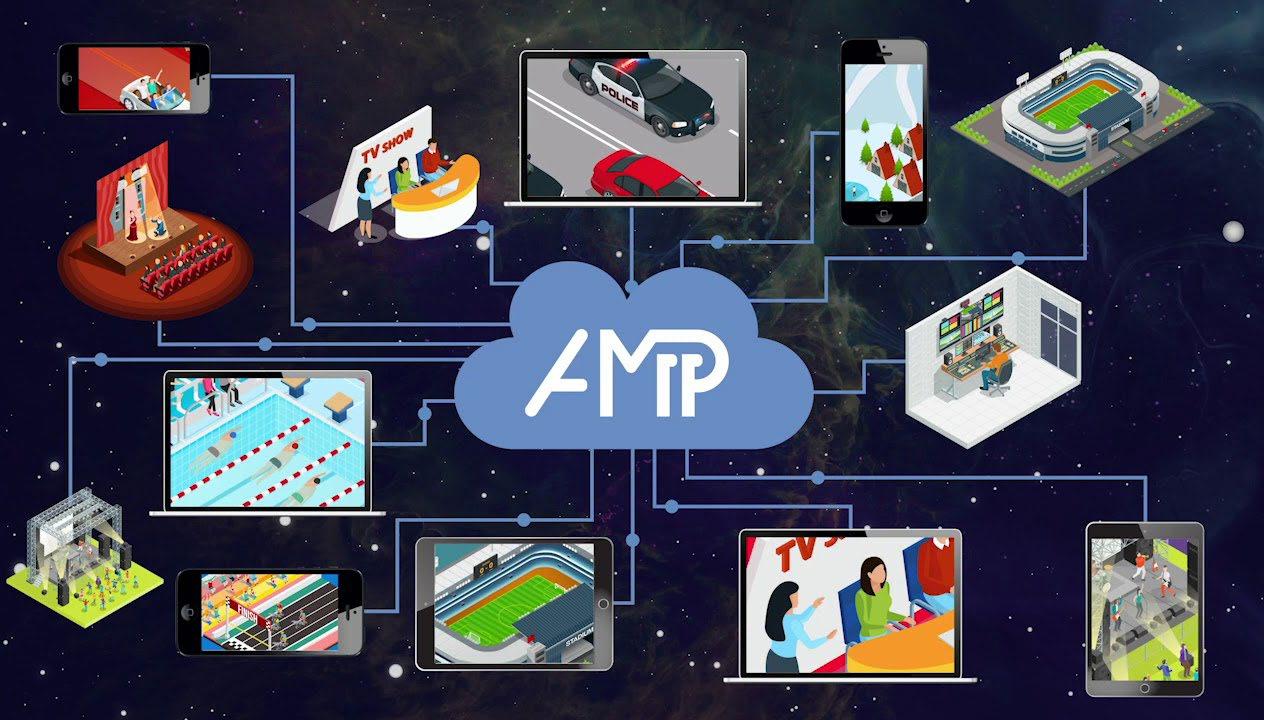

Cloud-based production is much easier to implement with an IP-native technology like ST 2110 as compared to SDI-based systems. As more ST 2110 systems migrate from using uncompressed video to deploying JPEG XS or other compressed formats, the costs of transporting video to and from the cloud will become more attractive, making other benefits of the cloud (including rapid scalability, AI-based functions, pay-as-you go, and more) accessible to a wider market.

Overall, today’s market and technology trends will continue to make ST 2110-based systems more affordable and flexible throughout the broadcast industry. Pretty impressive for a six-year-old!

New Updates in 2022

ST 2110 is made up of a number of standards, each of which covers one aspect of IP media transport, so each of these documents can be updated independently. Many of the core set of ST 2110 standards were updated in 2022, as shown in Fig. 1. The good news about these updates is that they were done very carefully, so as to avoid breaking equipment and software that were built using the 2017 edition of the standards. Here are a few highlights on the new standards:

ST 2110-10 System Definition: This document focuses on providing better information for control systems. Two new (recommended) SDP parameters have been added: TSDELAY and TSMODE. The first of these, TSDELAY, allows a device to indicate the amount of time (in microseconds) that elapses between the sampling or other time indicated by the RTP timestamp for a packet and the time that the first packet containing that timestamp is emitted by that device. The second of these, TSMODE, allows a device to indicate whether the RTP timestamps present on packets coming into the device are preserved or modified in the output of the device. Used together, these two new parameters allow a broadcast controller to more accurately assess the delays incurred within each step of a workflow, allowing tighter control of end-to-end delays and simplifying overall media synchronization.

ST 2110-20 Uncompressed Video: This adds support for two new video formats. One addition supports a new Transfer Characteristic (the “TCS” parameter in SDP, which indicates how binary pixel values relate to pixel brightness) to support “Camera Log S3” as defined in SMPTE ST 2115 (and used in a wide variety of high-end video and digital cinema cameras). The other addition is a new colorimetry type of “ALPHA,” which is specifically designated for key signals; the revision also clarifies that key signals must not declare a TCS value.

ST 2110-21 Traffic Shaping: This new version clarifies that the virtual receiver buffer (VRX) constraints do not apply for constant bitrate compressed video signals, and provides a more flexible way of calculating the timing for interlaced video in order to support standard definition video formats (which are still used in many applications around the globe).

ST 2110-22 Compressed Video: This revision clarified that the Virtual Receiver Buffer constraints in the packet timing model do not apply, and cleared up some confusion about how the bitrate of a compressed signal is defined in SDP.

ST 2110-30 Uncompressed Audio: This document is currently undergoing very minor revisions to clarify some wording and to provide clearer descriptions of the audio receiver conformance levels.

ST 2110-40 Ancillary Data: This new revision creates two packet transmission models for ancillary data. LLTM, the Low Latency Transmission Model, requires senders to transmit ancillary data packets within 8 video lines of their specified location. CTM, the Compatible Transmission Model, allows a 1 msec window for transmission. These are signaled with the SDP parameter “TM.” One other minor change was to require senders to transmit packets in increasing order by original line number.
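To get a feel for how the two ancillary data transmission models compare, the LLTM limit of 8 video lines can be converted into time. The sketch below is only an illustration: it assumes a 1080p59.94 signal with 1125 total lines per frame, and the function name is hypothetical.

```python
from fractions import Fraction


def line_time_us(total_lines: int, frame_rate: Fraction) -> float:
    """Duration of one video line in microseconds (progressive scan)."""
    return float(Fraction(1_000_000) / (frame_rate * total_lines))


# Assumed format: 1080p59.94, which has 1125 total lines per frame.
FRAME_RATE = Fraction(60000, 1001)
TOTAL_LINES = 1125

lltm_window_us = 8 * line_time_us(TOTAL_LINES, FRAME_RATE)  # LLTM: 8 lines
ctm_window_us = 1000.0                                       # CTM: 1 msec

print(f"LLTM window: {lltm_window_us:.1f} us")
print(f"CTM window:  {ctm_window_us:.1f} us")
print(f"CTM is about {ctm_window_us / lltm_window_us:.1f}x wider")
```

Under these assumptions the LLTM window works out to roughly 119 microseconds, several times tighter than the 1 msec allowed by CTM. Other formats will give different figures.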


Figure 1: Timeline showing initial release dates (R1) and subsequent revision dates (R2) of SMPTE ST 2110 standards, 2017 to 2022. Standards covered: ST 2110-10 System Timing and Definitions; -20 Uncompressed Active Video; -21 Traffic Shaping and Delivery Timing for Video; -22 Constant Bit-Rate Compressed Video; -23 Single Video Essence Transport over Multiple ST 2110-20 Streams; -30 PCM Digital Audio; -31 AES3 Transparent Transport; -40 SMPTE ST 291-1 Ancillary Data; -43 Timed Text Markup Language for Captions and Subtitles. (©2023 LearnIPvideo.com, all rights reserved.)

Wes Simpson

Discovering the Magic of 32-Bit Float Audio Recording

We have long been in the era of “one man band” media production. Outside of major digital cinema or documentary productions, most video and sound today are recorded with a minimal crew and budget. That often means only one person.

This used to be a nearly impossible task—fraught with simple mistakes that could endanger the entire production. Now, recording high-quality audio in the field is more foolproof than ever thanks to a new technology first introduced in 2019.

GROUNDBREAKING TECH

Over the past year, this groundbreaking technology has quickly caught on and now appears in a range of very low-cost, professional audio recorders. Called “32-bit float audio recording,” it allows the recording of sound without the user having to set gain levels.

Do not confuse 32-bit float recording with the old “auto gain” setting found on most traditional audio recorders. The artifacts produced by auto gain were never acceptable for professional-quality sound.

Today, the company with the most 32-bit float recorder models is Zoom, followed by Sound Devices and Tascam. Most video editing software and digital audio workstations can now play back 32-bit float audio. The cost and quality of the equipment has hit new levels in the past few months.

What’s so special about 32-bit float recording? Clipped recordings over zero dBFS can be fully recovered without distortion. With conventional 16- and 24-bit audio recording, this was not possible. With 16- and 24-bit recording, if the audio level peaked above zero dBFS, it was permanently distorted. That’s because these digital formats lack the ability to record any data over this threshold.

With 32-bit float recording, the system can record audio data +770 dB above zero dBFS and –758 dB below. This is a dynamic range of an astounding 1,528 dB.

It is hard to grasp this range since the loudness difference between the quietest anechoic chamber and the loudest sound possible is only 185 dB. With 32-bit float, clipping is now impossible.
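The headline figures can be reproduced from the number format itself. This short Python sketch assumes the usual convention that a sample value of 1.0 represents zero dBFS, and it measures the range between the largest finite and the smallest normal 32-bit float values.

```python
import math
import struct


def db(ratio: float) -> float:
    """Express an amplitude ratio in decibels."""
    return 20.0 * math.log10(ratio)


# Largest finite and smallest normal float32 values, decoded from their
# IEEE 754 bit patterns so no third-party library is needed.
FLOAT32_MAX = struct.unpack(">f", bytes.fromhex("7f7fffff"))[0]
FLOAT32_MIN_NORMAL = struct.unpack(">f", bytes.fromhex("00800000"))[0]

headroom_db = db(FLOAT32_MAX)      # range above full scale (1.0 = 0 dBFS)
floor_db = db(FLOAT32_MIN_NORMAL)  # range below full scale
print(f"above 0 dBFS: {headroom_db:+.1f} dB")
print(f"below 0 dBFS: {floor_db:+.1f} dB")
print(f"total:        {headroom_db - floor_db:.1f} dB")

# For comparison, 24-bit fixed point spans 2**24 levels and stops at 0 dBFS.
print(f"24-bit range: {db(2 ** 24):.1f} dB")
```

The results land just above +770 dB and just below –758 dB, in line with the quoted figures, while 24-bit fixed point covers roughly 144 dB with nothing above full scale.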

THE GOOD WITH THE BAD

Of course, as with all new technology, there are some negatives to using and depending on 32-bit float audio. First of all, the recorded files are about a third larger than standard 24-bit files. The user will need larger size flash cards for audio storage.
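The storage penalty is easy to estimate. The sketch below compares the raw audio data for one hour of recording at 24 and 32 bits; the 48 kHz sample rate and two channels are assumptions chosen for illustration, and the small file header is ignored.

```python
def wav_payload_bytes(seconds, sample_rate_hz, channels, bits_per_sample):
    """Size of the raw PCM audio data, ignoring the small file header."""
    return seconds * sample_rate_hz * channels * bits_per_sample // 8


ONE_HOUR = 3600
# Assumed settings for illustration: 48 kHz, two channels.
size_24 = wav_payload_bytes(ONE_HOUR, 48_000, 2, 24)
size_32 = wav_payload_bytes(ONE_HOUR, 48_000, 2, 32)

print(f"24-bit: {size_24 / 1e9:.2f} GB per hour")
print(f"32-bit: {size_32 / 1e9:.2f} GB per hour")
print(f"increase: {100 * (size_32 - size_24) / size_24:.0f}%")
```

The ratio is simply 32/24, so the one-third increase holds at any sample rate or channel count.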

If distortion creeps in before the recording begins, 32-bit float won’t help save the session. Common problems are an overloaded mic capsule, power line hum or overload from wind.

Even when using 32-bit float, sound operators still need to do correct mic placement, use the right mic mounting gear and employ good wind-protection practices when working in the field. It is always important to check that the signal being recorded is problem-free before hitting record.

The most important change with 32-bit float is that operators don’t have to set or worry about sound levels during recording. This is an advance that removes a key task for overworked one-person sound recordists. It frees the mind to concentrate on other things during the production.


Companies such as Zoom, Tascam and Sound Devices are leading the charge

Tascam Portacapture X8

32-Bit float graphic

Frank Beacham

EXPERTISE

Even with 32-bit float, production workflows including editing, mixing and distribution continue to use a 24-bit workflow. This means some data will be lost at some point in the chain. An audio engineer will need to make adjustments to ensure that the audio signal doesn’t get clipped when it is converted down to 24-bit.

At this point, there is a choice: Either set levels properly on set and record directly in 24-bit, or record in 32-bit float and add the extra step later. It’s part of the process that is essential. But it’s always easier to fix level problems in post than having them on location.
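The extra step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any particular editor's algorithm: samples are floats where 1.0 is zero dBFS, the function names are hypothetical, and the sample values are made up.

```python
INT24_MAX = 2 ** 23 - 1  # largest positive 24-bit sample value


def to_int24(samples, gain=1.0):
    """Quantize float samples (1.0 = 0 dBFS) to 24-bit integers.

    Values beyond full scale are hard-clipped, as a 24-bit file would force.
    """
    out = []
    for s in samples:
        scaled = max(-1.0, min(1.0, s * gain))
        out.append(round(scaled * INT24_MAX))
    return out


def safe_gain(samples, target_peak=0.9):
    """Gain that brings the loudest sample down to target_peak.

    Never boosts: quiet takes are left as they are.
    """
    peak = max(abs(s) for s in samples)
    return min(1.0, target_peak / peak) if peak > 0 else 1.0


# Hypothetical 32-bit float take that peaked at twice full scale.
take = [0.0, 0.5, 2.0, -1.5, 0.25]

clipped = to_int24(take)                        # straight conversion
rescued = to_int24(take, gain=safe_gain(take))  # attenuate first

print(clipped)
print(rescued)
```

In the straight conversion the two loudest samples are flattened to the same magnitude, which is the distortion the engineer wants to avoid. Attenuating first keeps their relative levels intact.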

PRODUCT INTRODUCTIONS

Just before Christmas, 2022, Zoom introduced a series of 32-bit float recorders that sets a new standard for quality, features and price. Called the M-Series and starting at $199, these new recorders come in handheld and shotgun styles.

Most interesting is the new Zoom M4 MicTrak ($399), a new handheld microphone-shaped recorder. It features 32-bit float recording; 192 kHz sample rate; simultaneous recording of up to four discrete channels; two ultra-low noise pro-quality XLR preamps; and an internal timecode generator.

Journalists can easily use this new recorder for handheld interviews. Just turn it on and hit record. No need to set levels. The color on-screen display shows a waveform to confirm recording. The reporter can concentrate on the content of the interview, rather than on the equipment.

When four mic channels are needed, two pro mics can be plugged into the XLR jacks on the side of the M4. It also takes 3.5mm input mics and has an internal timecode generator with an in/out jack. The timecode oscillator offers accurate code with a discrepancy of less than 0.5 frames per 24 hours.
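To put the timecode figure in perspective, the drift can be converted into milliseconds per day and parts per million. The source does not say which frame rate the specification refers to, so the rates below are assumptions, and the function name is hypothetical.

```python
def drift_ppm(frames_off: float, frame_rate: float, hours: float) -> float:
    """Clock error in parts per million implied by a timecode drift spec."""
    seconds_off = frames_off / frame_rate
    elapsed = hours * 3600.0
    return seconds_off / elapsed * 1_000_000


# The quoted spec is 0.5 frames per 24 hours; the frame rates are assumptions.
for fps in (24.0, 25.0, 30.0):
    ms_off = 0.5 / fps * 1000.0
    ppm = drift_ppm(0.5, fps, 24)
    print(f"{fps:g} fps: {ms_off:.1f} ms per day, {ppm:.2f} ppm")
```

At any of these rates the implied error is a fraction of one part per million.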

Another major advance is the new recorder’s super-quiet preamps, which are taken from Zoom’s high-end F-series recorders. The preamps have a self-noise rating of –127 dBu. This is a level of recording technology unheard of even a few years ago.

Also recently introduced were Tascam’s Portacapture X8 recorder ($389.99) and Zoom’s F2 Portable Field Recorder ($229.99), both with 32-bit float recording. These join Zoom’s existing F-series models and Sound Devices MixPre II series, beginning at $895.

Though 32-bit float recording does not solve all recording problems, it’s a major step forward for recordists working solo in the field. Just as with lighting, good sound recording demands a complex set of choices that used to be a dedicated job in itself.

Poor sound has traditionally been the biggest killer on low-budget, independent video productions. Until recently, audio could not be recorded so easily and reliably by solo operators. That has now changed, thanks to 32-bit float audio.

Frank Beacham is a New York City-based writer and media producer.


Sound Devices MixPre 3 II

Zoom M4 MicTrak Recorder

Unless your studio was built in the last decade, it was almost certainly not designed to support today’s LED fixtures. During the age of incandescent, racks of dimmers supplied power to stage-pin and twist-lock outlets hanging from the grid.

Intensity-level commands were sent from the lighting console to racks of humming dimmers, tucked away in some room where their buzzing modules and cooling fans couldn’t be picked up by studio microphones. These were the

BRUCE ALEKSANDER

trademarks of every lighting system of the last generation.

This type of dimming infrastructure risks becoming obsolete with the arrival of today’s LED lighting fixtures. This column will help you understand what changes must be implemented before the arrival of your next lighting package.

TRANSITIONING FROM DIMMING TO NON-DIM POWER

You might be tempted to just change the plugs and park your dimmers at 100%. Don’t.

Your existing lighting infrastructure will need some modification to work with the new lights. In evaluating what must be changed, we need to first note the different requirements of incandescent vs. LED fixtures.

Legacy dimming systems were designed to control incandescent lights using a single modulated power feed. Heating up a tungsten filament to the desired degree of brightness is a fundamentally simple way to make light. But things get more complicated when you need to dim a lamp.

Controlling incandescent brightness was originally done with resistance dimmers—a relatively simple, if wasteful, solution. This

20 February 2023 | www.tvtech.com | twitter.com/tvtechnology

lighting technology

EXPERTISE Preparing for Your

Lighting Package—Power Control Existing lighting infrastructure will need modification to work with new lighting Credit: Getty images

Next

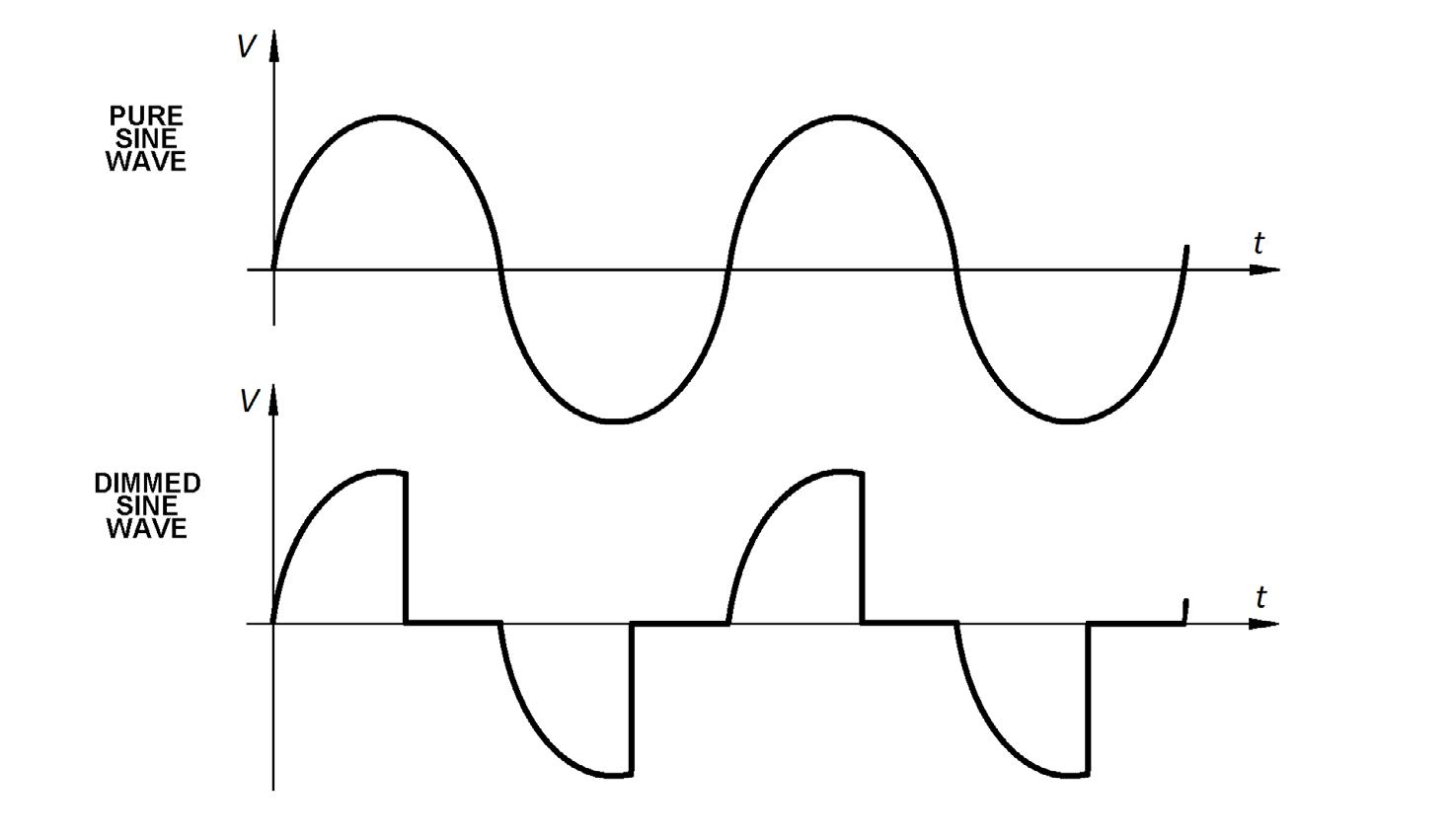

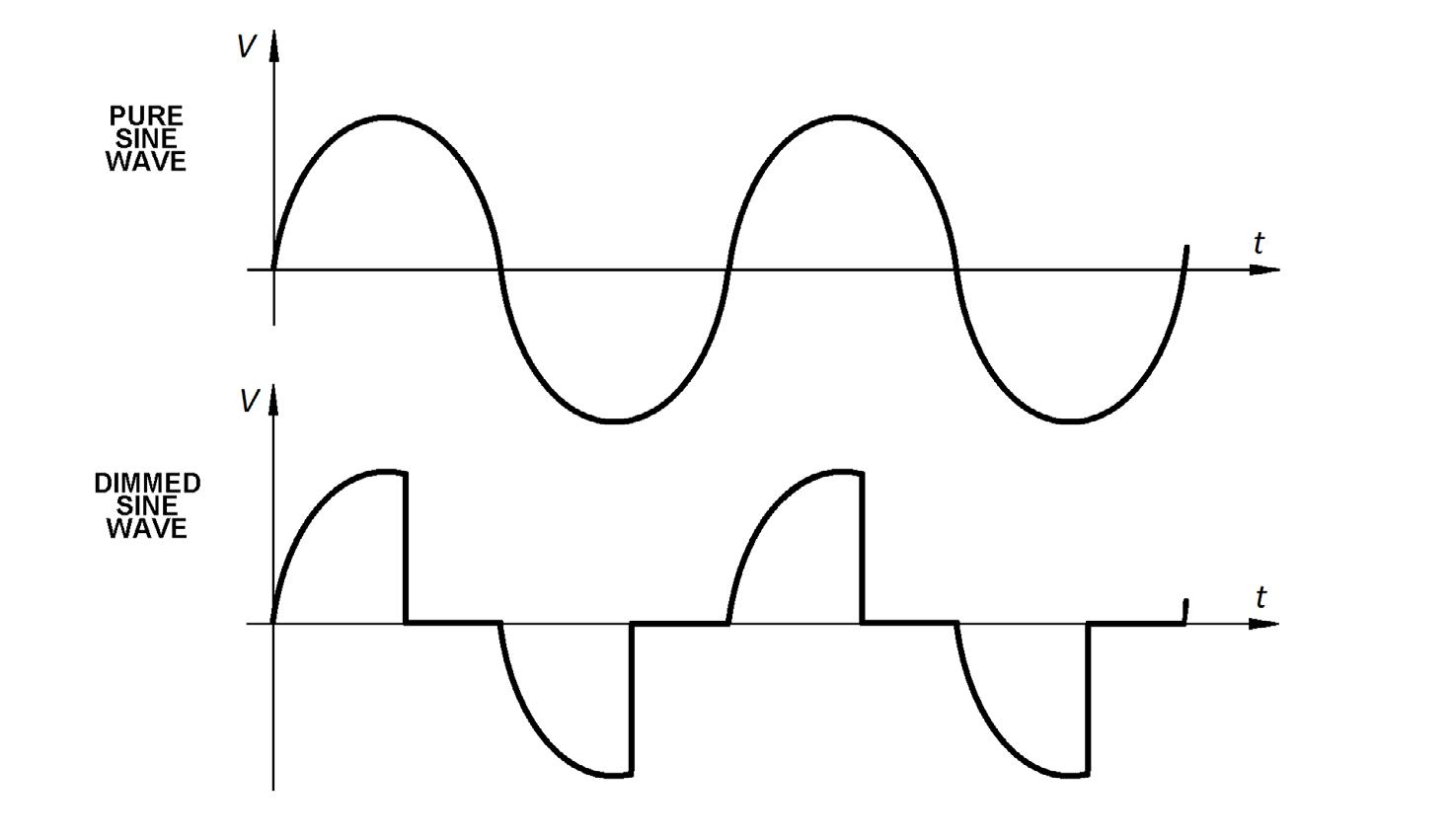

method gave way to more efficient modern dimming, which works by “chopping” the power to control intensity. Tungsten filaments were fine with this, thanks to their thermal inertia as a resistive load, but the chopping of the sine wave causes problems for solid-state electronics, such as contemporary LED fixtures.

Solid-state lights require a constant “non-regulated” power source. Dimming is done with on-board dimmers, rather than by dimming the power source they’re plugged into.

A modern dimmer at “full” is not the same thing as a non-dimmed/unregulated circuit. What comes out the back end of a dimming module looks nothing like a pure sine wave.
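For readers who want to see what “chopping” does to the power feed, here is a simplified Python sketch of forward phase-cut dimming. It assumes an ideal triac, a purely resistive load and 120 V mains, and it deliberately ignores the chokes and voltage regulation found in real dimmer modules, so treat the numbers as illustrative only.

```python
import math


def phase_cut_rms(v_rms_line: float, firing_angle_deg: float) -> float:
    """RMS voltage of a sine wave after forward phase-cut (triac) dimming.

    The dimmer blocks each half cycle until the firing angle, then conducts
    for the remainder. Assumes an ideal triac and a resistive load.
    """
    a = math.radians(firing_angle_deg)
    fraction = 1.0 - a / math.pi + math.sin(2.0 * a) / (2.0 * math.pi)
    return v_rms_line * math.sqrt(max(0.0, fraction))


def sampled_waveform(v_rms_line, firing_angle_deg, points=12):
    """A coarse half-cycle table showing the abrupt switch-on edge."""
    v_peak = v_rms_line * math.sqrt(2.0)
    rows = []
    for i in range(points + 1):
        deg = 180.0 * i / points
        conducting = deg >= firing_angle_deg
        v = v_peak * math.sin(math.radians(deg)) if conducting else 0.0
        rows.append((deg, v))
    return rows


LINE_VOLTS = 120.0  # assumed North American mains voltage
for angle in (0, 45, 90, 135):
    volts = phase_cut_rms(LINE_VOLTS, angle)
    print(f"firing at {angle:3d} deg -> {volts:6.1f} V RMS")

for deg, v in sampled_waveform(LINE_VOLTS, 90):
    print(f"{deg:5.0f} deg  {v:7.1f} V")
```

The waveform table shows the voltage jumping from zero to its peak in a single step at the firing angle. A filament averages that out thermally; the power supply in a solid-state fixture sees it as a sharp edge every half cycle.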

There’s further distortion of the sine wave, along with wasted energy, from the “chokes,” which helped limit the vibrations of incandescent filaments when they were dimmed (Fig. 1).

Furthermore, some dimming systems used techniques to “goose” or reduce the voltage when the power mains weren’t operating up to standard. All of these modifications to the power create challenges for devices that were designed to be plugged directly into a standard power source.

In short, your legacy dimming equipment may not play well with your next generation of lighting equipment without some modification.

To quote the advice from lighting company ETC, “No matter what type of dimmer you are using, you must set the dimmer to not regulate the sine wave on the circuit that is providing power to the fixture.”

And while your dimmer system may provide an option for something labeled “Non-dim” mode, that’s not always the same thing as “switched.” In fact, “Switched Mode” on some ETC modules still runs through chokes. Naming conventions aren’t standardized or dependable, so verify the actual output with a True-RMS meter.

GETTING FROM THERE TO HERE

Your existing electrical distribution infrastructure can almost certainly be modified to work with the new lights, which is always a more efficient choice than running new circuits from scratch. Modifying the electrical infrastructure you already have has the inherent advantage of saving time, materials, and labor—none of which come cheap. Some dimmer manufacturers offer non-dim/switched-output modules specifically designed for the purpose of passing through the “unregulated” power, as required by the new LED fixtures. Swapping out dimmers for switching modules can be your “plug & play” solution to powering solid-state lights. That only leaves changing the connectors. “Household” (NEMA 5-15) is the typical connector for powering lights today.

Because LED lights are so efficient, multiple fixtures are often run on a single power drop. To help accommodate this, most studio fixtures have pass-through “convenience” outlets. Unlike incandescent fixtures, ganging several lights on the same outlet doesn’t limit individual fixture control because each LED fixture has its own unique DMX address. This feature also reduces the total number of power drops you’ll need to modify.

Even if your dimming system’s manufacturer is no longer in business, it may still be possible to update your system. Any changes should only be undertaken by qualified professionals who understand the system and how you’ll be using it. Any missteps in wiring will almost certainly void your fixture’s warranty. Be careful.

Even with the churn in the dimming industry that’s occurred over the years, you still have some options if your manufacturer is no longer supporting their equipment. One aftermarket service company that specializes in converting dimming systems to non-dim applications is Johnson Systems ( www.johnsonsystems.com ). They have decades of experience extending the useful life of dimming systems with plug-and-play solutions.

POWER OFF

There’s an additional advantage in retaining the ability to switch off the power to your lights when they’re not actively being used. As with many electronic devices, they’re never completely “off.”

All new light fixtures continue to use some power in their “standby” state. This not only wastes energy, but causes some additional wear to the fixture’s electronic components.

From the perspective of energy use and reducing unproductive running hours on your lights, being able to turn them completely off is a major advantage.

Transitioning to any new technology can be a bumpy ride, but preparing your lighting power infrastructure to match the needs of solid-state fixtures will help smooth the way.

Fig. 1: Sine wave with and without triac dimming

Bruce Aleksander invites comments and topic suggestions from those interested in lighting at TVLightingguy@hotmail.com.


Credit: Cooper Neill/Getty images

Why Does Football Sound Different Across TV Networks?

A number of variables and guidelines create the differences in NFL audio

After months of listening to games of American football, I once again wonder why audio sounds different across the networks—CBS, Fox, NBC and ESPN/ABC—for basically the same event. There seem to be several noteworthy variables that significantly shape the sound of a telecast, including the venue, the broadcaster/network and finally a sound mixer’s tone, processing and mixing style.

Dennis Baxter

STADIUM SOUND

When football first went on the air there were no indoor stadiums and they played in the rain, snow and occasionally in sunny conditions on grass. Audio coverage was mono and basic with various parabolic receptor designs, but the sound was adequate for the moment. My sound impression memory is that there is a sound to a snow-covered icy stadium with hard surfaces, a crispness that carries the sound. But broadcast sound changed with the addition of roofs, artificial surfaces and PA craziness.

A little physics tells us that an “open stadium” lets the sound/noise escape from a confined space. Sound energy builds and the cumulative effect is destructive to a clean capture for television purposes.

Jonathan Freed, mixer for ESPN/ABC, stated the obvious: “Open air stadiums sound different because the PA and crowd don’t echo off the roof and walls when there aren’t any.”

Additionally, Freed has presented his theories about “air inversions” for years. “In a really cold outdoor stadium the warm crowd and heaters by the team benches cause a layer of warmer air to rise and when it gets to a certain height it collides with the colder air forming an atmospheric inversion layer over the stadium. Sound from the field of play will actually reflect off of this layer back towards the sidelines and in some cases create a noticeably fuller sound for the parabs to pick up.”

A CBS Sports employee holds a parabolic microphone dish during an NFL game between the New England Patriots and the Miami Dolphins.

The venue acoustics clearly are a factor, but Fred Aldous of Fox Sports told me that “the PA systems have gotten bigger and louder, and, significantly, the PA usage has crept into play action.” Aldous said, “Music is being played over kickoffs and at the end of play, which interferes with usable field of play sound.” To make things worse, several years ago Atlanta was fined for pumping crowd sound back through the PA.

Acoustics impact the tone of the sound that the microphones capture, but the mixers control the balance of the sound elements—announcer, effects, crowd and music. With most major sporting events there is a significant effort to capture the sound from the field of play. Microphones and their placement are not a secret and there is fair consistency across the different mixers and networks particularly today, but clearly this did not happen by accident.

My sound observation is that Fox Sports mixes in more field presence. David Hill was chairman of Fox Sports for almost two decades and was instrumental in advancing the sound of football by persuading the NFL to allow wireless microphones, first on officials and later on players.

SOUND VARIABLES

Even though football has had a powerful advocate with Hill, the NFL still controls where and when microphones can be placed and opened.

For example, the NFL does not permit microphones near the benches, regulates the use of the microphone on the sky cam and even controls opening the player microphones before the networks receive a feed. The sound obstacles and variables are well entrenched in the Sunday sound and there are guidelines, but there are differences in NFL crews. I have been told that some NFL sound personnel are better than others.

With so many games telecast on a Sunday afternoon, it seems that a network would want consistency across the multiple games it broadcasts. Phil Adler, who did audio mixing for CBS Sports for more than two decades, told me that the network’s only mandate is that the announcers’ volume level be between 4 and 6 dB louder than the field sound; everything else is left to the mixer’s subjective judgment.
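For readers who want to put numbers on that 4 to 6 dB window, here is a minimal Python sketch. It assumes the announcer and field levels are available as simple RMS amplitudes, which is a simplification; the function names and sample values are hypothetical and do not describe any network's metering.

```python
import math

def db_to_amplitude_ratio(db: float) -> float:
    """Convert a level difference in dB to a linear amplitude ratio."""
    return 10 ** (db / 20)

def announcer_margin_db(announcer_rms: float, field_rms: float) -> float:
    """How far the announcer sits above the field sound, in dB."""
    return 20 * math.log10(announcer_rms / field_rms)

def within_mandate(margin_db: float, low: float = 4.0, high: float = 6.0) -> bool:
    """True if the margin falls inside the 4 to 6 dB window Adler describes."""
    return low <= margin_db <= high

# Hypothetical RMS readings (arbitrary linear units, not real measurements).
margin = announcer_margin_db(announcer_rms=1.8, field_rms=1.0)
print(f"Announcer margin: {margin:.1f} dB, within window: {within_mandate(margin)}")
```

In amplitude terms the window is narrow: 4 dB is roughly a 1.6x ratio and 6 dB is roughly 2x, which leaves the mixer a lot of subjective room everywhere else in the balance.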

Aldous believes that Fox’s consistency endures because of weekly phone meetings, adding that while the meetings are not mandated, they do offer a forum to share ideas, philosophies and give “ownership” to the mixers.

Given that the sound variables, which include microphones, venue characteristics and technical facilities, are somewhat equal, the real difference is the artistic and subjective interpretation of a sound mixer. You should not underestimate the range of subjective interpretation of a single sport from a group of audio practitioners that bring the consumer 16 consecutive Sundays of NFL football.

EXPERIENCE COUNTS

As with everything in life, experience is the difference. It is hard to understand how unpredictable live sports can be, and how much of a challenge it is for the mixer to control, manage and tame the sound.

An inexperienced mixer tends to use too much compression to control the wide swings in the sound. Under-compression or under-limiting can lead to the sound distorting through the signal chain, while over-compression can be equally unpleasant, with the audio pumping up and down.
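The trade-off can be illustrated with a rough Python sketch of an idealized hard-knee compressor. This is an assumption-laden toy model: it ignores attack and release times (which govern the audible pumping), and the threshold, ratio and level values are hypothetical rather than settings from any broadcast.

```python
def compressed_level_db(input_db: float, threshold_db: float, ratio: float) -> float:
    """Output level of an idealized hard-knee compressor with no attack/release."""
    if input_db <= threshold_db:
        return input_db  # below threshold the signal passes unchanged
    # Above threshold, each `ratio` dB of input yields only 1 dB of output.
    return threshold_db + (input_db - threshold_db) / ratio

def dynamic_range_db(levels_db, threshold_db: float, ratio: float) -> float:
    """Spread between the loudest and quietest levels after compression."""
    out = [compressed_level_db(level, threshold_db, ratio) for level in levels_db]
    return max(out) - min(out)

# Hypothetical crowd swing: quiet huddle at -30 dBFS, touchdown roar at -2 dBFS.
swing = [-30.0, -2.0]
gentle = dynamic_range_db(swing, threshold_db=-12.0, ratio=2.0)
heavy = dynamic_range_db(swing, threshold_db=-30.0, ratio=10.0)
print(f"Gentle compression leaves {gentle:.1f} dB of swing; heavy leaves {heavy:.1f} dB")
```

With the gentle setting the 28 dB swing is trimmed but still sounds like a crowd reacting; with the heavy setting nearly all of the swing is flattened, which is the kind of treatment that tends to be heard as pumping once real attack and release behavior is added.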

An inexperienced mixer may not understand why the transmission signal flow was problematic when the networks went from mono to stereo and then to surround. What about immersive? Even though there is little talk of football in immersive sound, it is going to happen, and the learning curve will impact the sound of the show.

Finally, sound reproduction to the consumer is a moving target as well. Stereo, surround, immersive, soundbars, headphones, TV speakers—my head hurts. Sound has been equal parts evolution and innovation and maybe a little sound voodoo. What draws viewers’ attention to a game, even if they are half asleep? The sound! What can carry an entire show without pictures? The sound!

The audience can finally hear a difference and sound is a differentiating factor with broadcast brands. Some of my interviewees commented that some of the broadcast sound today is difficult to listen to.

Dennis Baxter has contributed to hundreds of live events including sound design for nine Olympic Games. He has earned multiple Emmy awards and is the author of “A Practical Guide to Television Sound Engineering” and “Immersive Sound Production — A Practical Guide” on Focal Press. He can be reached at dbaxter@dennisbaxtersound.com or at www.dennisbaxtersound.com.

Credit: Getty images


Blackmagic Design DaVinci Resolve for iPad

Blackmagic Design now offers its DaVinci Resolve for the Apple iPad as a free download from the Apple App Store. The iPad version offers the same color correction and editing tools used by Hollywood. It also supports Blackmagic Cloud multi-user collaboration as well as powerful AI tools, such as magic mask, voice isolation, dialogue leveler and smart reframe for social media video content distribution.

The iPad version includes the DaVinci Resolve for iPad color page, an advanced color correction tool used in Hollywood to color and finish high-end feature films and TV shows. It also features AI processing powered by the DaVinci Neural Engine, which drives tools like “magic mask,” which can locate and track people with a single stroke, invert a person mask and stylize the background.

For more information, visit www.blackmagicdesign.com

DJI RS 3 Mini Stabilizer

DJI’s new RS 3 Mini is a lightweight, handheld travel stabilizer developed to support mainstream brands of mirrorless cameras and lenses. The new miniature gimbal offers the stabilization available from other members of the RS 3 series, making it possible to capture professional content while traveling around landscapes and in urban locations. Weighing less than 1.8 pounds, the gimbal can carry up to 4.4 pounds and features Bluetooth shutter control, a third-generation stabilization algorithm, native horizontal and vertical switching and a 1.4-inch color touchscreen. With an all-in-one design, the RS 3 Mini supports many mainstream mirrorless cameras and lens combinations, including the Sony A7S3 + 24-70mm F2.8 GM lens, Canon EOS R5 + RF24-70mm F2.8 STM lens or Fuji X-H2S + XF 18-55 mm F2.8-4 lens.

For more information visit www.dji.com.

Avid

MediaCentral | Acquire

MediaCentral | Acquire is an IP-based ingest scheduler offering support for remote collaboration and improved hybrid working. The update for the MediaCentral workflow platform enables media companies to accelerate production on premises and in the cloud. MediaCentral | Acquire adds ingest management to MediaCentral | Cloud UX with support for teams to collaborate from anywhere, thereby benefiting workflows like news production.

The MediaCentral | Acquire ingest scheduler app in MediaCentral | Cloud UX automates ingest scheduling for SDI and IP sources by controlling FastServe | Ingest, FastServe | I/O and MediaCentral | Stream. The new capabilities support production teams from story creation to delivery, whether the final product is part of a rundown-based on-air show or is posted to a content platform or social media site.

For more information visit www.avid.com.

Lumens Digital Optics

VC-A71P-HN UltraHD PTZ Camera

The VC-A71P-HN UltraHD PTZ camera can transmit 4K 60fps over SDI and HDMI. It supports a range of streaming protocols, including the Secure Reliable Transport (SRT) protocol, high bandwidth NDI and NDI|HX3. The new camera also supports the FreeD camera positioning data protocol for VR, AR and MR production, ITU-R BT.2020 and Rec. 709 color spaces and genlock, making the VC-A71P-HN a fully featured broadcast camera ready for years of service.

Among the first NDI|HX3 products to become available, the VC-A71P-HN delivers low-latency video at reduced bandwidth while maintaining visually lossless 4K image quality. The camera is capable of simultaneous output of full-bandwidth NDI for master recording and display on in-venue screens.

For more information visit www.mylumens.com/en

Tiffen

Lowel Tota LED XL

Lowel Tota LED XL is a new daylight-balanced panel floodlight with foldable design and a 3x increase in brightness compared to previous models. The new floodlight can be used to light a subject or raise the ambient set lighting for both video and photography. The Tota LED XL emits 11,200 lux of flicker-free, continuous light from 216 LEDs. The 8-inch-by-8-inch panel produces a 60-degree beam at 5600 degrees K +/-200 degrees K. Offering accurate color reproduction with a Television Lighting Consistency Index (TLCI) rating of 98 and a Color Rendering Index (CRI) of 96, the new floodlight can render vibrant colors as well as warm, nuanced skin tones. When a hard light source is needed, the included rigid diffusers can be quickly removed.

For more information, visit www.tiffen.com/pages/lowel-lighting-system.

Sony LBN-H1 Battery Station

The LBN-H1 provides convenient storage and transportation of up to 10 Airpeak S1 battery packs (LBP-HS1), while providing intelligent and fast charging for eight battery packs. It is designed for professional drone operators flying Sony Electronics’ Airpeak S1 drone, from film sets to industrial applications.

The LBN-H1 includes built-in charging cables for two remote controllers (RCR-VH1) and is equipped with three standard auxiliary power outlet accessory sockets that can support a variety of accessories, including 12V outlets that can charge mobile devices, cameras and other USB accessories. The accessory socket cap can also be used as a stopper to prevent the lid from accidentally shutting due to wind or other factors while using this product.

For more information visit www.pro.sony

eye on tech | product and services

Peru Is on a Lawo-Fueled Mission

By Victor Solis Talaverano, Sound Engineer, Latina

LIMA, Peru—My first experience with a Lawo desk was about nine years ago, at RPP Group, a popular radio station. It was among the first in Peru to implement visual radio and it then refined the concept by adding television programming to its multiplatform broadcast offering, which today encompasses radio, TV and web streaming.

The setup involved a Lawo sapphire radio console and an mc²56 Audio Production Console that needed to exchange data in a dependable fashion. More than 70 channels were configured on RPP’s mc²56 console for lightning-fast access and convenient mixing of a variety of program material.

I currently work at Latina, a TV station dedicated to the production of news programs, entertainment, sports, magazines, primetime shows, and more. My audio engineering colleagues and I enjoy working with the Lawo technology there, which was introduced a few years ago to facilitate the leap from analog to digital.

CONNECTED STUDIOS

Latina has seven studios, each equipped with a Compact I/O unit connected over fiber-optic links to make all audio channels accessible from any mc²36 console in any audio control room. Routing the studio I/Os to the required console is straightforward, and this is a great help for us.

The station owns a plug-in-based WAVES SoundGrid DSP server, which can be connected to the desired console via a Digigrid MGO optical interface. All audio consoles are conveniently linked to a network switch, so that the DSP server is available to the engineer who needs it. The WAVES plug-ins and settings can be tweaked directly on the console screen.

Latina TV was the Peruvian rightsholder for the 2022 FIFA World Cup in Qatar, and I was fortunate enough to be in charge of the live matches. We decoded the Enhanced TV Binary Interchange Format (EBIF) signals received from FIFA in such a way as to isolate the ambience microphone and backup audio channels.

We then added the audio signals from our commentators and correspondents in Qatar to this sound bed, which we de-embedded using a V__pro8. This approach allowed me to create an audio mix for viewers in Peru.

READY FOR THE FUTURE

One might argue that some of the devices mentioned above have been discontinued. But they are still relevant: the power and flexibility of these components meet the requirements of the leading media in our country. I am the first to admit, though, that I cannot wait to take Lawo’s next-generation technology for a spin, because I know it will make my work easier still.