Here we go, at last. The event of the year, the annual meeting that broadcasters and AV professionals have been awaiting for twelve months, is here. For our industry, September brings not only the start of the school year or the return to work after the holidays (if you were lucky enough to have a summer break), but also the excitement of preparing to travel to Amsterdam and joining the buzz of an industry whose engines are roaring because the journey is about to begin: IBC 2023. What a thrilling time!

These are indeed thrilling times. If we stop for a moment and look around, we can see how the broadcast industry has been blossoming, fueled by technological developments on every front: from live video delivery and virtual production workflows to new video compression methods, greater cloud storage capacity, and AI that can track players on the field and even generate characters. The hunger for improvement has reached every layer of the broadcast industry.

Because times are changing and our sector's borders are expanding, TM Broadcast offers a brief preview of the International Broadcasting Convention (just a glimpse, given IBC's size) in the hope that it will serve as a navigation chart for the event. Our staff will also be on site, ready for whatever may come, to find the most remarkable highlights and bring them to our next issue. We always believe in face-to-face interaction, and IBC's more than 37,000 attendees make it the ideal environment for sharing knowledge, strengthening professional networks and forging alliances that will surely drive new developments.

It could hardly be otherwise: Europe's most anticipated broadcast convention energizes the entire industry with the IBC Accelerator Hub and thirteen innovation halls, in addition to the show floor, exhibitors from 170 countries and the renowned IBC Awards. But let's say no more: come and see. The show is about to start…

This past year has been thrilling: a year full of progress and new solutions that make workflows easier and more efficient, higher quality in video and streaming delivery, and automation that saves a great deal of time and money. With all these recent achievements in mind, this year's IBC promises to be exciting as well as a boost for the AV industry.

IBC 2023 is not merely a gathering of industry players; it is a crucible of technical progress. In this special report, we delve into the many technical advances that will be unveiled at the event. Whether it is the latest in 4K and 8K broadcasting, immersive audio, cloud-based production workflows or AI-driven content analysis, we will examine each innovation in detail. As a visitor or professional, you will have the opportunity to speak with the engineers, designers and thought leaders behind these breakthroughs, gaining an informed perspective on the implications and potential disruptions these advances may bring to the broadcast and audiovisual production landscape.

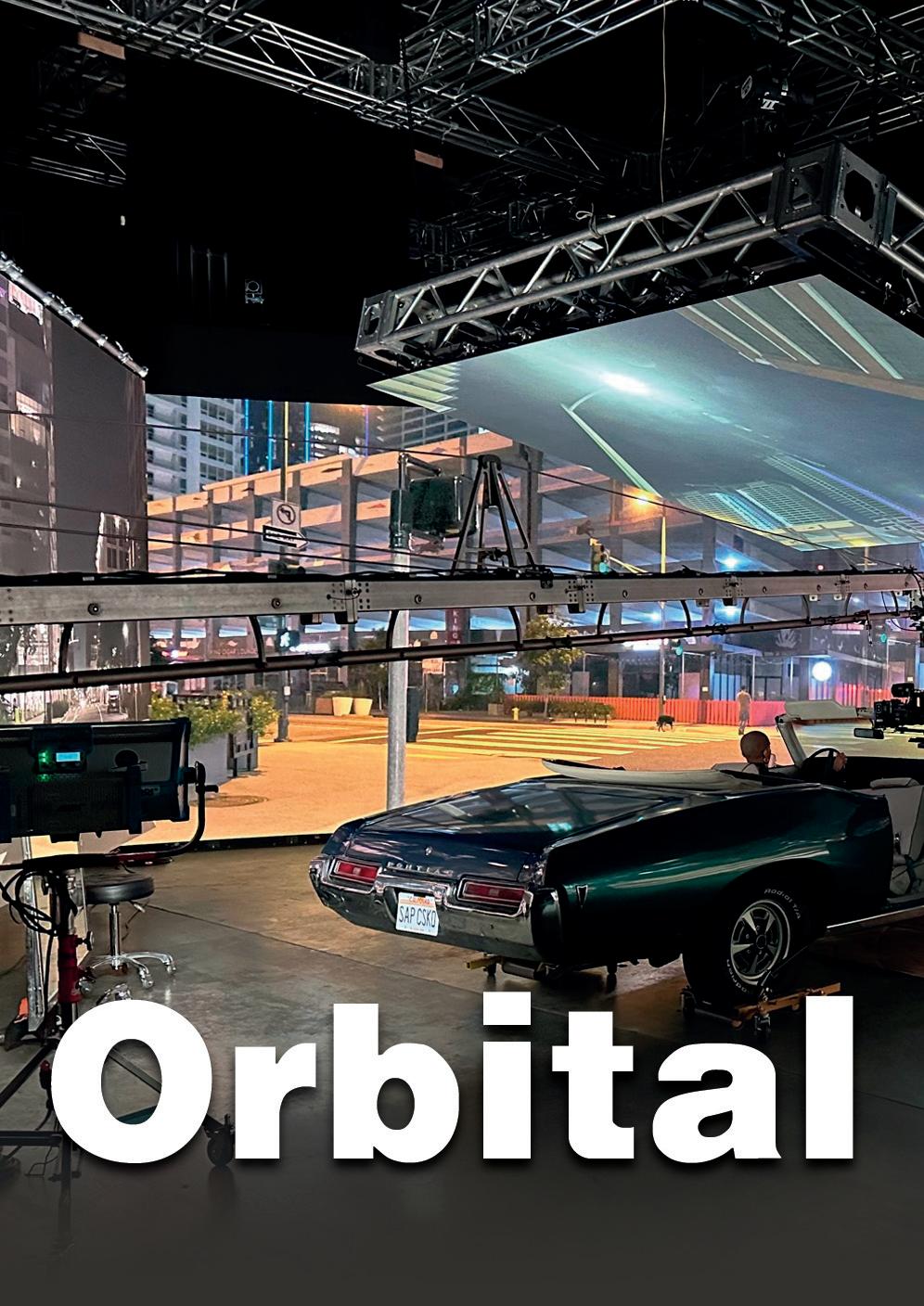

TM Broadcast talks with A.J. Wedding, Orbital Studio's CEO, about how to achieve success in so little time, the advantages of virtual production, the video industry, and the main challenges facing audiovisual production today.

Zero Density has recently released a solution that makes high-precision tracking in virtual production easier than ever: its Traxis Tracking Platform. Traxis allows creatives to produce immersive stories with photoreal virtual graphics while eliminating traditional barriers such as long calibration times, distracting markers and costly latency errors. It also features solutions for camera tracking, talent tracking and tracking control.

The platform's Traxis Camera Tracking system offers exceptional precision, with accuracy of up to 0.2 mm and an ultra-low latency of just six milliseconds. In virtual production, this ensures continuous calibration and seamless real-time results, so that every camera move on set is reflected in the virtual world without delay. Virtual graphics can then be broadcast live without breaking the audience's immersion.
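To put the six-millisecond latency in context, the arithmetic below compares it with the duration of a single video frame. The 50 and 60 frames-per-second rates are our assumption of typical broadcast rates; the source does not specify one.

$$
t_{\text{frame}} = \frac{1}{f}:\qquad t_{\text{frame}}\big|_{50\ \text{fps}} = 20\ \text{ms},\qquad t_{\text{frame}}\big|_{60\ \text{fps}} \approx 16.7\ \text{ms}
$$

$$
\frac{6\ \text{ms}}{20\ \text{ms}} = 0.30,\qquad \frac{6\ \text{ms}}{16.7\ \text{ms}} \approx 0.36
$$

In other words, the tracking data arrives within roughly a third of a frame at either rate. End-to-end delay through rendering and compositing is a separate figure that is not given here.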

For talent tracking, the Traxis platform includes an AI-powered system that can identify multiple actors within a 3D environment at once. Talent Tracking uses AI to extract each actor's 3D location from the image in real time, then sends the data to Reality Engine to create accurate reflections, refractions and virtual shadows of the actor inside the 3D space. The technology is completely markerless, which streamlines setup and maintenance and means actors no longer have to be distracted by wearables or beacons that make it harder to perform.

At the heart of the Traxis platform is the Traxis Hub, a comprehensive tool that manages both tracking and lens calibration data. Compatible with all industry-standard tracking protocols, the hub brings together all available tracking and lens data into a centralized interface, significantly reducing setup time. The hub has a library of the most commonly used broadcast lenses, enabling quick and easy calibration.

As the video industry grows, broadcasters, telcos and CSPs face major challenges in delivering content efficiently and effectively. Varnish Software, a company specializing in caching, streaming and content delivery software, answers this demand with the latest enhancements to Varnish Enterprise 6, aimed at helping organizations gain greater control over their content delivery.

The shift from traditional broadcasting to streaming, coupled with the need to leverage existing infrastructure, is driving the need for more flexible and scalable Content Delivery Networks (CDNs). These challenges are further exacerbated by growing demand for live events such as sports, where low-latency streaming and efficient management of unpredictable traffic surges are crucial.

However, adopting and managing CDNs brings its own set of challenges, ranging from limited expertise to orchestrating changes without disruption, integrating with existing systems and more.

Recent updates to Varnish Enterprise 6, including the new Massive Storage Engine (MSE 4) and an updated Traffic Router, aim to address these emerging challenges. Major telcos and broadcasters worldwide have swiftly adopted these enhancements to optimize and augment their content delivery, from origin to edge.

“Skyrocketing demand for more content and digital experiences has placed an immense burden on broadcasters and telcos, who require efficient distribution without extensive new hardware investments,” said Adrian Herrera, CMO, Varnish Software. “Optimizing through software is the answer. Varnish Enterprise 6 is constantly evolving to enable more efficient distribution, reduced complexity and futureproof operations with greater control over content.”

Many leading broadcasters and telcos rely on Varnish Software to support their migration from linear to streaming distribution. RTÉ, for example, uses Varnish Enterprise 6 to power flexible live and on-demand video streaming, while future-proofing its HD and potential UHD delivery capabilities.

Since deploying Varnish, RTÉ has been able to minimize backend storage reads and maximize cache efficiency, allowing it to support greater traffic volumes at higher bitrates without additional hardware. RTÉ has also found many creative use cases for Varnish Enterprise 6 that have helped it rapidly implement new features and capabilities. For example, RTÉ uses Varnish Configuration Language (VCL) to integrate dynamic ad insertion, customizing responses based on client requests.
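Varnish's storage engines are proprietary, so the following is only a generic sketch of why an edge cache cuts backend reads: a least-recently-used (LRU) cache in Python. The class name, the simulated origin fetch and the segment names are all hypothetical. When many viewers request the same live segments, only the first request for each segment reaches the origin.

```python
from collections import OrderedDict


class LRUCache:
    """Generic least-recently-used cache in front of a simulated origin.

    Illustrative only; this is not how Varnish or MSE is implemented.
    """

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.store: "OrderedDict[str, bytes]" = OrderedDict()
        self.backend_reads = 0  # requests that had to go to the origin

    def _fetch_from_origin(self, key: str) -> bytes:
        # Hypothetical origin fetch; a real CDN would make an HTTP request here.
        self.backend_reads += 1
        return f"content of {key}".encode()

    def get(self, key: str) -> bytes:
        if key in self.store:
            self.store.move_to_end(key)  # cache hit: mark as most recently used
            return self.store[key]
        value = self._fetch_from_origin(key)  # cache miss: go to the origin
        self.store[key] = value
        if len(self.store) > self.capacity:
            self.store.popitem(last=False)  # evict the least recently used entry
        return value


# 1,000 viewer requests spread over three live segments.
cache = LRUCache(capacity=10)
for i in range(1000):
    cache.get(f"segment_{i % 3}.ts")
print(cache.backend_reads)  # 3: only the first request per segment hits the origin
```

The hit ratio here is 997/1000. With a capacity smaller than the set of segments being requested, evictions would push more requests back to the origin, which is why storage size and eviction policy matter at scale.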

Ideal Systems, one of Asia's major systems integrators for broadcast, cloud and professional AV, recently announced that it has designed, built and delivered brand-new 4K, NDI®-based corporate production studios with live-streaming capabilities for PropNex Limited, a leading real estate agency in Singapore.

PropNex has worked to ensure its clients have access to information and research content that can help them on their property investment journey. Tapping into the potential of the fast-growing digital media space, the company sees the high-quality video content created in its new PropNex Studio as a natural extension of its customer-driven business, as it continually seeks to better serve, educate and engage its customers.

At over 1,700 sq ft, the studios, located at PropNex's head office in Singapore's HDB Hub, contain a fully featured production control room and a large chroma key green screen set for virtual productions. All the studio cameras are native 4K NDI, and all the networking and production systems from BirdDog, Kiloview and Vizrt run on the latest NDI 5 standard.

The new PropNex studio is built in what was formerly a large office space. It will give PropNex unprecedented communication capabilities to produce and live-stream high-quality 4K professional video content and to provide a content library to its customers and more than 12,000 sales professionals.

Because online video platforms reach such wide audiences, many corporations are choosing to build their own professional TV-grade studios to create content and communicate directly with their customers via social media and streaming apps. For the PropNex Studio, Ideal Systems based the whole video production architecture on next-generation NDI® infrastructure, with no legacy SDI equipment or cabling anywhere in the facility. This next-generation TV production system supports end-to-end 4K over IP, from camera through production, with secure live streaming at up to 4K to the viewer.

By using NDI® together with the live-streaming capability of the TriCaster® Mini 4K, Ideal Systems' design reduces architectural complexity for technically demanding productions featuring multiple video callers from platforms such as Zoom® and Microsoft® Teams, appearing in live interviews on the video wall or in the virtual space of the chroma set.

Nowadays, the manufacturing industry faces a huge challenge in reducing its environmental impact, in a world that increasingly strives for sustainability. The UK company CP Cases, renowned for designing and manufacturing protective equipment cases and containers, has partnered with Matrix Polymer, experts in rotomoulding materials, and Queen’s University, a renowned research institution, to launch a study project funded by Innovate UK to introduce a field-tested recyclable bio polymer.

The project focuses on shaking up rotational moulding by integrating Matrix Polymer’s state-of-the-art materials, with sustainability as its banner.

The collaboration between CP Cases, Matrix Polymer, and Queen’s University is a testament to their shared commitment to sustainable innovation. These industry leaders have come together under the umbrella of Innovate UK’s initiative, aiming to introduce the power of recyclable bio polymers into the rotational moulding process, thus paving the way for a more eco-friendly manufacturing approach.

Matrix Polymer brings its expertise in raw materials to the table as a leading supplier to the rotational moulding industry. With a focus on reducing carbon emissions and plastic waste, its materials align seamlessly with the project’s core objective of sustainability. Matrix Polymer’s key objective is to bring fresh ideas and exciting new materials to the market, and its strong technical roots are a cornerstone of this collaboration.

The research ability of Queen’s University plays an integral role in this collaborative endeavour. With a deep understanding of material science and manufacturing processes, the university is providing critical insights and technical expertise to validate the feasibility of creating recyclable bio polymers to introduce into rotational moulding. Their involvement ensures that the project’s outcomes are grounded in scientific rigour.

CP Cases specialises in crafting high-quality protective solutions for a wide array of industries. Leveraging decades of experience, it designs and manufactures custom cases, containers and enclosures that safeguard valuable equipment and delicate instruments from even the harshest conditions. Its commitment to innovation and precision ensures that its solutions not only meet but exceed the stringent demands of various sectors, including defence, aerospace, technology and more. With an unwavering focus on quality and functionality, CP Cases aims to be a trusted partner in safeguarding assets wherever the journey leads. Its dedication to sustainability and its commitment to pushing the boundaries of rotational moulding make it an invaluable contributor to the project.

The project’s success hinges on rigorous testing and validation, a process guided by the combined expertise of CP Cases, Matrix Polymer, and Queen’s University. Through meticulous examination of the materials’ performance within rotational moulding processes, the collaboration aims to ensure a seamless integration that meets industry standards and requirements.

Gravity Media and JAM TV recently confirmed the renewal of their long-standing partnership to broadcast the 2023 AFLW season. This will be the seventh AFLW season delivered by Gravity Media’s broadcast facilities in collaboration with JAM TV, which produces AFLW television coverage for Fox Footy and Kayo.

Gravity Media’s latest outside broadcast units, satellite and uplink facilities and crews will work closely with JAM TV’s production team as they criss-cross the country over the coming three months. The Australian company will be involved in the coverage of 47 games this AFLW season. In addition to providing broadcast technology for JAM TV, Gravity Media will also produce the big-screen coverage at the stadiums.

Cos Cardone – CEO, JAM TV:

“Gravity Media has been our AFLW technical provider since the very first game in the very first year of AFLW. It’s been an outstanding collaboration which has delivered a seamless and successful coverage to our broadcast partner Fox Sports every year. We look forward to the launch of another AFLW season in September which together with JAM TV’s finals coverage of the VFL, SANFL and WAFL competitions makes for a big and exciting month ahead for our talented football production team at JAM TV.”

Marcus Doherty, Account Executive – Media Services and Facilities, Gravity Media:

“We are thrilled to again be working with JAM TV on AFLW. We have a long partnership with JAM TV working on major events and look forward to again being a part of such an exciting competition and delivering JAM TV’s Fox Sports and Kayo coverage of the AFLW. We are also pleased to continue our partnership with JAM TV on its production of VFL coverage.”

Kahleah Webb, Head of Operations, Gravity Media:

“Gravity Media will use nine outside broadcast trucks, including an SNG fleet, and a total team of 800 crew across the country to deliver a total of 130 hours of AFLW coverage from 17 venues around Australia over the coming 10 weeks. It is a significant technological and logistical exercise that builds on our successful AFLW broadcast facilities provision to JAM TV over the past seven years.”

The alliance between Cellcom, the Israeli cellular provider, and Novelsat, a company specializing in content connectivity, aims to leverage 5G technology to deliver dazzling multi-camera perspectives to fans at live events. The collaboration will introduce an immersive, real-time multi-camera viewing experience for in-stadium fans by integrating Novelsat’s edge-based media delivery solution within a stadium equipped with Cellcom’s 5G network.

Video feeds from cameras positioned throughout the stadium will be processed by Novelsat’s solution and promptly distributed to fans’ smartphones over Cellcom’s public 5G network. Using state-of-the-art edge-computing capabilities, Novelsat’s solution will stream high-quality, low-latency video to a dedicated application installed on users’ devices. The pilot will demonstrate the advantages of edge-based video delivery, offering more efficient video traffic and a higher end-user quality of experience (QoE), while showcasing the potential of 5G to deliver customized, engaging experiences that draw fans even closer to the heart of the action.

“5G is a game-changer for sports and stadium business, stimulating fan experiences. The smartphone in the hand of each fan is generating the opportunity for an interactive and unforgettable fan experience,” said Gary Drutin, CEO of Novelsat. “Novelsat’s edge-based media distribution solution leverages next-generation connectivity to enable new values for connected stadiums. Our pioneering solution ideally addresses the on-site demand for high bandwidth with low latency, offering market-leading video delivery economics for large public venues. Creating a platform that supports the future of interactive stadiums, we empower the vision of the connected fan experience with future-proofed solutions that stay ahead of the game.”

“The 5G technology enables the advancement of highly innovative products and services across various domains. At Cellcom, we are diligently working to enhance Israel’s most powerful and highest quality 5G network, alongside fostering and developing greater and better experiences for our customers,” said Avi Grinman, Cellcom’s CTO and VP of Engineering.

“Stimulating innovation in sports fan experiences aligns perfectly with our mission, and by integrating Novelsat’s expertise in content delivery with our advanced 5G infrastructure, we aim to explore a new dimension of in-stadium entertainment.”

Sencore has announced the acquisition of Adtec Digital’s Afiniti platform. Afiniti has made significant strides in contribution, news gathering and REMI applications, and its integration into Sencore’s product portfolio marks a strategic move to expand the company’s offerings to its customers.

Existing Adtec Digital customers can rest assured that Sencore remains committed to further developing and supporting the Afiniti platform, backed by its world-renowned ProCare support services.

Among the most important features of the Afiniti platform are the highest-quality, lowest-latency encode and decode functions available on the market today. It supports resolutions up to 4K UHD at 4:2:2 10-bit, with full compatibility with the HEVC, H.264 and MPEG-2 video codecs. Afiniti’s modular design enables seamless customization, with options for contribution-level encoding and decoding, ASI, IP and SRT I/O, as well as multiplexing, all in one platform.
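For a sense of the data volumes behind a 4K UHD 4:2:2 10-bit contribution feed, the uncompressed active-video bitrate can be worked out as follows. We assume 50 frames per second (the source does not state a frame rate). In 4:2:2 sampling each pixel carries one luma sample and, on average, one chroma sample (Cb and Cr each at half horizontal resolution), so two samples per pixel:

$$
R = 3840 \times 2160 \times 2 \times 10\ \text{bit} \times 50\ \text{s}^{-1} = 165{,}888{,}000\ \tfrac{\text{bit}}{\text{frame}} \times 50\ \text{s}^{-1} \approx 8.29\ \text{Gb/s}
$$

At 60 frames per second the same calculation gives about 9.95 Gb/s. These figures exclude blanking and ancillary data; an HEVC or H.264 contribution encoder compresses them to a small fraction of this rate for transport over ASI, IP or SRT.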

In addition to Afiniti’s processing capabilities, Sencore has ambitious plans to extend the platform’s functionality by introducing Centra Gateway. This software platform will give users full control over Afiniti, simplifying the management and control of contribution encode and decode workflows like never before.

“We are thrilled about this acquisition and firmly believe that it will deliver immense value to our customers, empowering them with cutting-edge technology and enhanced support to achieve their broadcasting goals,” says Seth VerMulm, Director of Product Management at Sencore.

Zixi, a company specializing in cost-efficient, highly scalable, live broadcast-quality video over any IP network or protocol, has announced that it has achieved the Amazon Web Services (AWS) Service Delivery designation for AWS Graviton. The designation recognizes that Zixi has deep technical knowledge, experience and proven success delivering its Software-Defined Video Platform (SDVP) on AWS Graviton-based Amazon Elastic Compute Cloud (Amazon EC2) instances, and has deployed support for AWS Graviton2 and AWS Graviton3.

The achievement also validates that Zixi’s capabilities in cloud architecture, engineering and cloud-native application development on AWS are helping customers accelerate and scale their adoption of AWS Graviton, realizing its price-performance benefits sooner across more workloads.

AWS Graviton processors are custom Arm-based processors built by AWS to deliver high performance for cloud workloads running in Amazon EC2. Graviton-based instances are available in different sizes and configurations to suit various workload requirements. AWS Graviton2 and AWS Graviton3 processors offer significant performance improvements, with increased core counts, faster clock speeds and improved memory subsystems. One advantage of AWS Graviton instances is cost savings: they typically offer a lower price per unit of compute than comparable x86-based instances, allowing users to optimize costs for their workloads.


The fifteen exhibition halls, themed around creation, management and delivery, are complemented by a host of feature areas specially designed to enhance the experience of visitors and professionals. The IBC Conference and its guest speakers offer deeper insight, while events such as the well-established IBC Awards facilitate networking.

At the heart of IBC’s allure are the industry giants and trailblazers who converge to unveil their latest creations. This preview will shine a spotlight on these prominent companies, from longstanding powerhouses to up-and-coming disruptors, each bringing its unique vision and innovations to the forefront, vying for attention and recognition. The preview is meant to help every professional navigate the labyrinthine exhibition halls, while we conduct interviews and explore their offerings in depth.

This guide is designed to be your navigation map to help you accomplish all your goals. Let’s begin.

Argosy will launch its new ULTRA range of 12G solutions at IBC. The ULTRA suite makes UHD/4K accessible to those working with traditional SDI workflows.

Argosy’s new suite provides an end-to-end solution comprising a range of 12G products, including the Image 360 ULTRA, Image 1000 ULTRA and Image 1500 ULTRA coax cables. Primarily used for in-rack wiring and OB trucks, these UHD SDI digital video cables are exclusive to Argosy and designed to deliver high performance for UHD/4K SDI video applications.

The suite also features KORUS ULTRA HD 12G coax BNC connectors, tested to meet the requirements of SMPTE ST 2081-1 and ST 2082-1 for 6G and 12G systems, for use with the Argosy Image ULTRA coax cable range.

The Argosy-branded ULTRA range enables straightforward installation for end-to-end UHD/4K and eliminates the need for expensive fibre infrastructure upgrades.

Ateme will explore the latest industry trends and help visitors and customers find the most agile solutions, whether on-prem, cloud-based or a combination of the two.

The company will be showing demos of its latest state-of-the-art solutions, enabling:

- Transformation: transform operations with efficient solutions, including Ateme’s true cloud-native Cloud DVR and the latest developments of its SaaS offering, Ateme+.

- Monetization: increase revenue through dynamic ad insertion, FAST solutions and shoppable TV capabilities.

- Viewer Engagement: engage current viewers and attract new ones with high OTT QoE, fan-retention solutions and enhanced stadium experiences.

- Going Green: curb costs and your business’s carbon footprint while improving the viewing experience with its winning solutions, including end-to-end Audience-aware Streaming.

During the IBC tradeshow, BCE will showcase its remote production and gamification modules, featuring two cutting-edge solutions: Holovox and Fan engagement.

Holovox is a remote voice-over solution that enables users to add single- or multi-language voice-over streams to live shows from anywhere with an internet connection. Its cloud-based platform allows users to manage teams, control live audio and video streams, and seamlessly incorporate live translations and sign language insertions.

Fan engagement enables real-time gamification and monetization of audiences. Integrated with Holovox, it allows sportscasters to entertain viewers by creating interactive games within the live broadcast, leading to a unique fan experience. It also provides opportunities for direct audience monetization through transactions and sponsorships, generates innovative ad inventory, encourages competition among fans for prizes, and fosters closer engagement with the action.

By leveraging these two solutions, BCE streamlines voice-over production, enabling sportscasters to seamlessly transition between different events while ensuring viewer engagement through live gamification, enhancing the overall viewing experience.

Visit BCE at stand 8.B57 during the IBC tradeshow to see live demonstrations of the Holovox and Fan engagement solutions, as well as other solutions for content management, distribution and exchange.

Black Box will feature IP-based KVM and AV solutions that offer intuitive user experiences and high system connectivity for production control rooms and for remote and hybrid productions, including live events.

Black Box will show how its latest solutions ensure flexible, scalable and secure workflows with industry-leading low IP bandwidth usage. Visitors to the Black Box stand will discover new additions to the Emerald® KVM-over-IP system that unite KVM and AV video wall processing under a single management system. They will also experience the company’s fresh approach: integrating automation into Emerald, enabling greater system reliability through predictive maintenance, and simplifying workflows through synchronization of user workstations.

Black Box will also demonstrate the Emerald DESKVUE solution and Emerald AV WALL.

Clear-Com will highlight IP-based and remote production intercom solutions and announce new features for the Arcadia Central Station.

Clear-Com will showcase the latest advances in IP-based intercom for a wide variety of applications. It will highlight innovative features of its flagship Eclipse® HX Digital Matrix Intercom System, including Dynam-EC™ real-time production software, industry-leading role-based workflows and the new 2X10 Touch™ desktop touchscreen panel. The award-winning IP-based Arcadia® Central Station will be available for demonstration, including the HXII-DPL Powerline device, an IP interface that delivers power and digital audio from Arcadia to HelixNet® Digital Network systems, as well as highly anticipated new features to be revealed at the show.

Dynam-EC is an intuitive software tool that allows operators to quickly switch all audio inputs and outputs, audio mapping, IFBs and partylines at the touch of a button.

New features introduced in EHX 13.1, including role-based workflows and support for NMOS IS-04 and IS-05, address many of the needs of broadcast applications for large-scale events such as the World Cup and other global sporting events.
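Assuming the NMOS support mentioned here refers to AMWA's IS-04 (discovery and registration) and IS-05 (connection management) specifications, the sketch below shows the kind of request an IS-05 controller sends to switch a receiver onto a sender: a PATCH to the receiver's `/staged` endpoint with immediate activation. The node address and IDs are hypothetical, the API version (v1.1) is an assumption, and the request is only built, not sent. A real connection would usually also carry the sender's SDP transport file. This is not Clear-Com's implementation.

```python
import json
import urllib.request


def build_is05_patch(node_base: str, receiver_id: str, sender_id: str) -> urllib.request.Request:
    """Build (but do not send) an IS-05 request connecting a receiver to a sender."""
    url = f"{node_base}/x-nmos/connection/v1.1/single/receivers/{receiver_id}/staged"
    body = {
        "sender_id": sender_id,                        # sender the receiver should follow
        "master_enable": True,                         # enable the receiver once activated
        "activation": {"mode": "activate_immediate"},  # apply the staged change now
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PATCH",
    )


# Hypothetical node address (TEST-NET range) and placeholder IDs.
req = build_is05_patch("http://192.0.2.10:8080", "a-receiver-uuid", "a-sender-uuid")
print(req.get_method(), req.full_url)
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen`; the staged-then-activate model is what lets a controller reroute audio or video endpoints without touching each device's own interface.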

A popular choice for flypacks and OB vans, Arcadia Central Station brings together HelixNet®, FreeSpeak®, Clear-Com Encore®, other 2W/4W endpoints and third-party Dante devices in a single, integrated system. Arcadia offers license-based scalability that allows it to meet numerous production needs, with support for over 100 beltpacks and up to 128 IP ports, with additional upgrades available in the future. The new HXII-DPL Powerline Device connects to any existing Arcadia system via its own network port to provide Powerline connectivity via 3-pin XLR anywhere on the network, opening a world of digital audio connectivity to HelixNet users.

CueScript will be showcasing a range of tools that help broadcasters produce content in the field more easily. These include its recently released cloud-based service, CueTALK Cloud; SayiT, its first voice-activated solution for its CueiT prompting software; and OnTime, a WiFi-enabled clock device that can be easily configured to the local time zone.

In addition, CueScript will demonstrate its various PTZ teleprompter solutions, along with a new custom solution designed specifically for the Sony Electronics’ FR7 PTZ camera.

CueTALK Cloud is designed to allow CueScript’s CueiT- and CueTALK-enabled devices, which feature the latest in IP connectivity, to communicate over WiFi. It makes controllers and prompting devices accessible via the cloud over a standard public internet connection.

CueScript will also highlight SayiT, its first voice-activated solution for its CueiT prompting software. The SayiT application takes the talent’s microphone input and lets CueiT users have the script scroll automatically in step with what the talent is saying, keeping the teleprompter output matched to the speech and eliminating the need to scroll the script manually.
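CueScript has not published how SayiT follows speech, so the following is only a hypothetical illustration of the general idea: keep a cursor into the script and advance it whenever a recognized word matches one of the next few script words. The `ScriptFollower` class, the look-ahead window of eight words and the sample script are our own assumptions, and speech recognition itself is assumed to happen elsewhere.

```python
import re


def tokenize(text: str) -> list[str]:
    # Lower-case words only, so punctuation and capitals do not block matches.
    return re.findall(r"[a-z0-9']+", text.lower())


class ScriptFollower:
    """Hypothetical voice-follow helper: tracks how far the talent has read."""

    def __init__(self, script: str, window: int = 8) -> None:
        self.words = tokenize(script)
        self.position = 0     # index of the next script word expected
        self.window = window  # how many words ahead to search for a match

    def hear(self, recognized: str) -> int:
        """Advance past each recognized word found within the look-ahead window."""
        for word in tokenize(recognized):
            end = min(self.position + self.window, len(self.words))
            for i in range(self.position, end):
                if self.words[i] == word:
                    self.position = i + 1  # also skips words the talent left out
                    break
        return self.position


follower = ScriptFollower("Good evening. Tonight's top story: floods across the region.")
print(follower.hear("good evening tonight's"))  # 3
print(follower.hear("um top story"))            # 5 ("um" is ignored)
```

A prompter would then scroll so that the line containing the word at `position` sits at the reading line. The bounded window keeps ad-libs and filler words from making the cursor jump to a distant repeat of a common word.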

OnTime, a WiFi-enabled clock device that can be easily configured to the local time zone, will also be a highlight at IBC. Although it is a small accessory, it is a great tool for broadcast teams that require an accurate timing device on the road, especially those traveling to multiple locations.

At IBC 2023, Dalet will showcase its new Dalet InStream hyper-scalable multichannel IP ingest solution, a significant expansion of Dalet Brio’s capture capabilities, and the recently launched Dalet Cut web-based media editor. These powerful cloud-native SaaS solutions help customers achieve the operational efficiencies and cost savings they need. This is the first time that end-to-end cloud workflows can run seamlessly across news operations powered by Dalet Pyramid and the wide range of Dalet Flex media workflows.

IBC2023 attendees can book a demo to see the benefits and cost savings that Dalet’s full range of solutions brings to newsrooms and media workflows. This includes its new Dalet InStream and Dalet Cut innovations, the next-generation Dalet Flex platform and Dalet Pyramid modules, Dalet AmberFin high-quality media transcoding and processing, and the Dalet Brio high-density ingest and playout application.

Dalet Cut enables live web-based editing from anywhere with native access to all assets, including clips, sequences, projects and graphics, even with limited bandwidth.

Dalet will also showcase Dalet Cut’s news production capabilities within Dalet Pyramid:

- Scoop news and create multimedia stories from anywhere using cloud-native proxy editing along with intuitive story creation tools.

- Assemble and distribute compelling news for any platform quickly and easily in a single browser tab.

- Quickly generate multiple versions of each news story for social networks at any point in the workflow, from ingest to delivery.

Dejero will introduce its latest mobile video transmitter, the EnGo 3s, at IBC 2023. Visitors to its stand will learn that the new EnGo 3s not only carries the same powerful features as the EnGo 3, including native 5G modems and built-in GateWay Mode for wireless broadband internet connectivity, but also offers 12G-SDI and HDMI connectors.

The Dejero staff at the stand will explain to attendees that 12G-SDI delivers eight times the bandwidth of HD-SDI, allowing users to handle high-frame-rate and live 4K/UHD signals over a single cable.
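The “eight times” figure follows directly from the nominal serial data rates set by SMPTE: 1.485 Gbit/s for HD-SDI (ST 292-1) and 11.88 Gbit/s for 12G-SDI (ST 2082-1). The short Python sketch below simply works through that ratio; the constant and function names are illustrative and not part of any Dejero tool.

```python
# Nominal SDI serial rates in Mbit/s (the 1/1.001 "fractional" variants are ignored).
HD_SDI_MBPS = 1_485     # HD-SDI, SMPTE ST 292-1
SDI_12G_MBPS = 11_880   # 12G-SDI, SMPTE ST 2082-1


def bandwidth_ratio(faster_mbps: int, slower_mbps: int) -> float:
    """Return how many times more bandwidth the faster link carries."""
    return faster_mbps / slower_mbps


ratio = bandwidth_ratio(SDI_12G_MBPS, HD_SDI_MBPS)
print(f"12G-SDI carries {ratio:g}x the bandwidth of HD-SDI")
```

That headroom is what lets a single 12G-SDI cable carry a 2160p60 signal that would otherwise need four 3G-SDI links.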

Like the existing EnGo 3 and 3x mobile transmitters, the EnGo 3s features GateWay Mode, which provides wireless broadband internet connectivity in the field so that mobile teams can reliably, securely and quickly transfer large files, access MAM and newsroom systems, and publish content to social media. GateWay Mode also provides general internet access for field research and cloud-based services, and serves as a high-bandwidth access point for devices.

As IBC 2023 visitors will be able to see, the EnGo 3s also streamlines communication and workflow between the field and the station or post-production facility. This is essential for mobile news teams working to tight deadlines, as well as for film crews working from remote sets.

Dejero’s Smart Blending Technology powers the EnGo 3 mobile transmitter range by simultaneously blending together multiple wired (broadband/fiber) and wireless (3G/4G/5G, Wi-Fi, satellite) connectivity from multiple providers to provide a ‘network of networks’.

Densitron, a company specializing in displays, touch-based interfaces and ODM+ outsourced product design and development for manufacturers, will showcase its latest HMI and control system innovations for broadcast manufacturers and systems integrators at IBC 2023. The company will also introduce a new technology, Jogdial, a vital new component in its ongoing efforts to enrich the tactile experience of future sets.

Densitron will also present its complete suite of ready-to-use control panels and IDS Control System, powered by ARM or x86 processors, to IBC Show attendees.

The company will shine a particular spotlight on its tactile and haptics innovations, including the new 2RU Haptics product, which delivers a ‘building block’ HMI that can be incorporated into vendors’ own designs, together with the Tactile HMI Evaluation Kit.

At IBC 2023, Densitron will introduce the new Jogdial encoder for use with its 2RU rack-mounted range, using the IBC Show as its official launch platform.

Densitron will also highlight its IDS Control System, which includes a growing product ecosystem (with 100 new pieces of broadcast equipment recently becoming compliant) and cloud deployment option.

At this year’s IBC, Edgio will demonstrate how media companies can create a complete streaming solution built on Uplynk’s components that deliver great viewing quality and reliability while optimizing monetization through advanced advertising.

Visitors will be able to learn about Edgio’s tried-and-tested solution, which offers a quick time-to-market and improves ROI.

In addition, Edgio will also share details of its latest partnerships and integrations that see Uplynk’s offering expand to solve all the complexities of video streaming today, from ingestion to delivery and analytics.

At this year’s event, EVS is set to showcase its comprehensive and integrated suite of solutions, offering a creative and cohesive working environment from production to monetization.

EVS will highlight the benefits of its VIA platform, which provides common and flexible technology catering to the specific needs of various stakeholders including broadcasters, production teams, content owners, and digital marketers.

For production purposes, the LiveCeption solution leverages the power of the platform to enable creators to deliver captivating replays whether they are on-site or working remotely. The MediaCeption solution utilizes the platform to provide web-based browsing and editing tools that enhance collaboration among production teams. On the distribution side, EVS will be showing its MediaHub solution, which allows content owners to monetize their assets through cloud-based operations and facilitates social media engagement.

Furthermore, EVS will unveil the latest version of its Xeebra multi-camera review system, designed to facilitate remote workflows even in low-bandwidth environments. This enhanced version caters to the demands of centralized video operation rooms (VOR), ensuring swift and reliable operations that reinforce collaboration regardless of geographical constraints.

Last but not least, the full line of EVS’s Neuron products will be presented, including the latest addition, Neuron View, a live production multiviewer offering high-quality scaling and low latency. Specifically designed to support the needs of live production teams, one View card can support two UHD outputs or up to eight full HD outputs in a fully customizable layout.
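The two output options quoted for a single View card line up neatly in pixel terms: a UHD frame (3840 × 2160) holds exactly four full HD frames (1920 × 1080), so two UHD outputs and eight full HD outputs add up to the same number of pixels. The short Python check below only confirms that arithmetic. It makes no assumption about how EVS actually allocates processing on the card, and the names in it are illustrative.

```python
UHD = (3840, 2160)      # UHD-1 frame size
FULL_HD = (1920, 1080)  # full HD frame size


def pixels(resolution: tuple[int, int]) -> int:
    """Number of pixels in one frame of the given (width, height)."""
    width, height = resolution
    return width * height


uhd_budget = 2 * pixels(UHD)      # two UHD outputs
hd_budget = 8 * pixels(FULL_HD)   # eight full HD outputs
print(uhd_budget, hd_budget, uhd_budget == hd_budget)
```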

At IBC 2023, farmerswife —software provider for resource scheduling, project management, and team collaboration in the media industry— will be showcasing for the first time version 7.0 of the farmerswife tool, and the new features of the Cirkus project management solution.

To make workflow management and collaboration easier, version 7.0 of the farmerswife scheduling, management and reporting tool incorporates several new features, including:

Dark mode: For those working late at night or in a low-light grading suite, or who simply want to reduce eye strain, farmerswife v7.0 offers its highly anticipated new dark theme.

QR Codes: The new “QR Code” element adds an extra layer of information to reports, making it easy for clients or colleagues to quickly access additional details or resources, such as a project overview, a budget breakdown, or a promotional video.

Sync Mode: The new “Sync Mode” option allows users to control the direction in which data is synced: from farmerswife to Cirkus, from Cirkus to farmerswife, or both ways. Visitors will see how the tool can be configured independently for Projects, Bookings, Time Reports and/or Personnel Bookings.

Advanced Project Search: The latest release makes it easier for users to find what they need. The dropdown “Filter From” allows users to filter by Container, Project, Binders and Projects & Binders. Users can also “Edit View” or activate the “Load View” option to load a saved view from another user, which is useful for collaboration with remote team members.

As IBC 2023 attendees will be able to see, farmerswife and Cirkus can work together or as standalone products to address the specific needs of media organisations, with farmerswife providing in-house scheduling, resource management and financial reporting, and Cirkus sharing information with distributed teams internally and externally.

GatesAir, a subsidiary of Thomson Broadcast dedicated to wireless content delivery, will showcase new low-power FM and DAB radio solutions from its two flagship transmission product brands at IBC2023, aligned with global radio broadcast trends. Highlights include an expansion of the Flexiva GX transmitter series to serve more low-to-medium FM power levels, and the European debut of an ultra-compact, second-generation Maxiva family of DAB/DAB+ radio transmission solutions that supports a higher density of digital radio services in a single chassis.

At its stand, GatesAir will show options for Flexiva GX that include GPS receivers for SFN support and GatesAir’s Intraplex IP Link 100e module. The latter integrates within Flexiva GX transmitters, enabling direct receipt of contributed FM content without an external codec. This further reduces rack space requirements inside RF facilities with limited open real estate.

Grass Valley will showcase at its stand an ecosystem that unifies the traditional hardware broadcasters have long relied upon with next-gen software-driven solutions, such as its cloud-native AMPP (Agile Media Processing Platform) production and distribution ecosystem.

As a fully cloud-native solution, AMPP is a highly secure, reliable open ecosystem architected with standard REST APIs throughout, integrating applications from 80 third-party partner companies as well as over 100 cloud-native applications of its own. Standard REST API integration points for every part of the solution allow tight integration with both customer and third-party control and deployment solutions, whilst comprehensive signal format support means that AMPP can be inserted into the customer’s signal path wherever it is required, from simple graphics overlays through to full workflow chains.

Grass Valley will be demonstrating its video production switchers which are widely installed in all sorts of Tier-1 production facilities, including broadcast networks, mobile OB units and remote sites, as well as studios run by corporations, universities, and houses of worship.

Another Grass Valley highlight at IBC 2023 will be the LDX 100 series broadcast camera platform, which exemplifies the flexibility and upgradeability of key products in its portfolio. This camera system lets customers choose their preferred camera/XCU configuration, including IP and SDI functionality.

The Mexican company Igson, which specializes in automation and broadcast IP and TV solutions, will exhibit its innovations at IBC 2023 with the aim of bringing its products and solutions to a European and global audience.

Igson products that will be on display:

• Igson MULTIPLAY with SCTE 35/104 insertion.

• Igson REPLAY MASTER: production of sporting events.

• Igson SIGNAL LOGGER: Igson’s exclusive development for the detection of SCTE 35/104 triggers or DTMF tones, closed captions, etc.

• Igson PROOFREC with baseband and IP signal recording.

• The new version of Igson GRAPHICS with unique and outstanding functionalities for live production.

In addition to the new products, the company’s extensive range of solutions will also be exhibited: hybrid playout systems (SDI/IP), Master Control automation, baseband and IP signal recorders, DPI event reception and insertion systems (SCTE-35/104) for content blocking in cable and satellite distribution systems, or in digital platforms and FAST Channels, signal detection and recording systems, plotters and titlers, among other solutions.
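SCTE 35 cue messages travel as splice_info_section tables that begin with table_id 0xFC, which is the kind of marker a signal logger can key on. As a minimal sketch (not Igson’s implementation; the function and dictionary names are hypothetical), the Python below reads a few header fields from such a section following the field layout of the ANSI/SCTE 35 specification. It covers SCTE 35 only, not the SCTE 104 automation protocol, and it neither checks the CRC nor parses the splice command payload.

```python
from typing import Optional

# splice_command_type values defined by SCTE 35
SPLICE_COMMAND_TYPES = {
    0x00: "splice_null",
    0x04: "splice_schedule",
    0x05: "splice_insert",
    0x06: "time_signal",
    0x07: "bandwidth_reservation",
    0xFF: "private_command",
}


def parse_scte35_header(section: bytes) -> Optional[dict]:
    """Read basic header fields from an SCTE 35 splice_info_section.

    Returns None if the data is too short or does not start with table_id 0xFC.
    """
    if len(section) < 14 or section[0] != 0xFC:
        return None
    # section_length: low 4 bits of byte 1 followed by all 8 bits of byte 2
    section_length = ((section[1] & 0x0F) << 8) | section[2]
    # encrypted_packet flag: most significant bit of byte 4
    encrypted = bool(section[4] & 0x80)
    # splice_command_type sits at byte 13; when encrypted_packet is set it lies
    # inside the encrypted portion, so it is only decoded for clear sections
    command = None
    if not encrypted:
        code = section[13]
        command = SPLICE_COMMAND_TYPES.get(code, f"reserved (0x{code:02X})")
    return {"section_length": section_length, "encrypted": encrypted, "splice_command": command}


# Hypothetical header bytes for an unencrypted time_signal() cue.
sample = bytes([0xFC, 0x30, 0x11, 0x00, 0x00, 0x00, 0x00, 0x00,
                0x00, 0x00, 0xFF, 0xF0, 0x05, 0x06])
print(parse_scte35_header(sample))
```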

INFiLED, a manufacturer of LED displays, will launch a brand-new LED solution at IBC: its Infinite Colors technology.

Whereas traditional LED video displays use only three emitters (red, green and blue), INFiLED is introducing a fourth emitter in a custom LED package that widens the colour spectrum captured by professional cameras when used in an LED video ceiling. IBC visitors will learn how Infinite Colors improves a variety of LED applications by allowing full variations in tone, saturation and colour appearance in white light and custom colours, all in one display.

The INFiLED Studio AR Series, the first application of Infinite Colors technology, will be presented during IBC 2023. Attendees will be able to experience how creators can seamlessly integrate attractive, dynamic lighting effects that enhance the overall visual quality of virtual productions.

INFiLED will also showcase its flagship WP 1.2 as well as one of its latest innovations, M2 technology, which has been seamlessly integrated into the STUDIO DB and STUDIO Xmk2 series. One of the standout features of M2 is its ability to enhance background performance through high color accuracy.

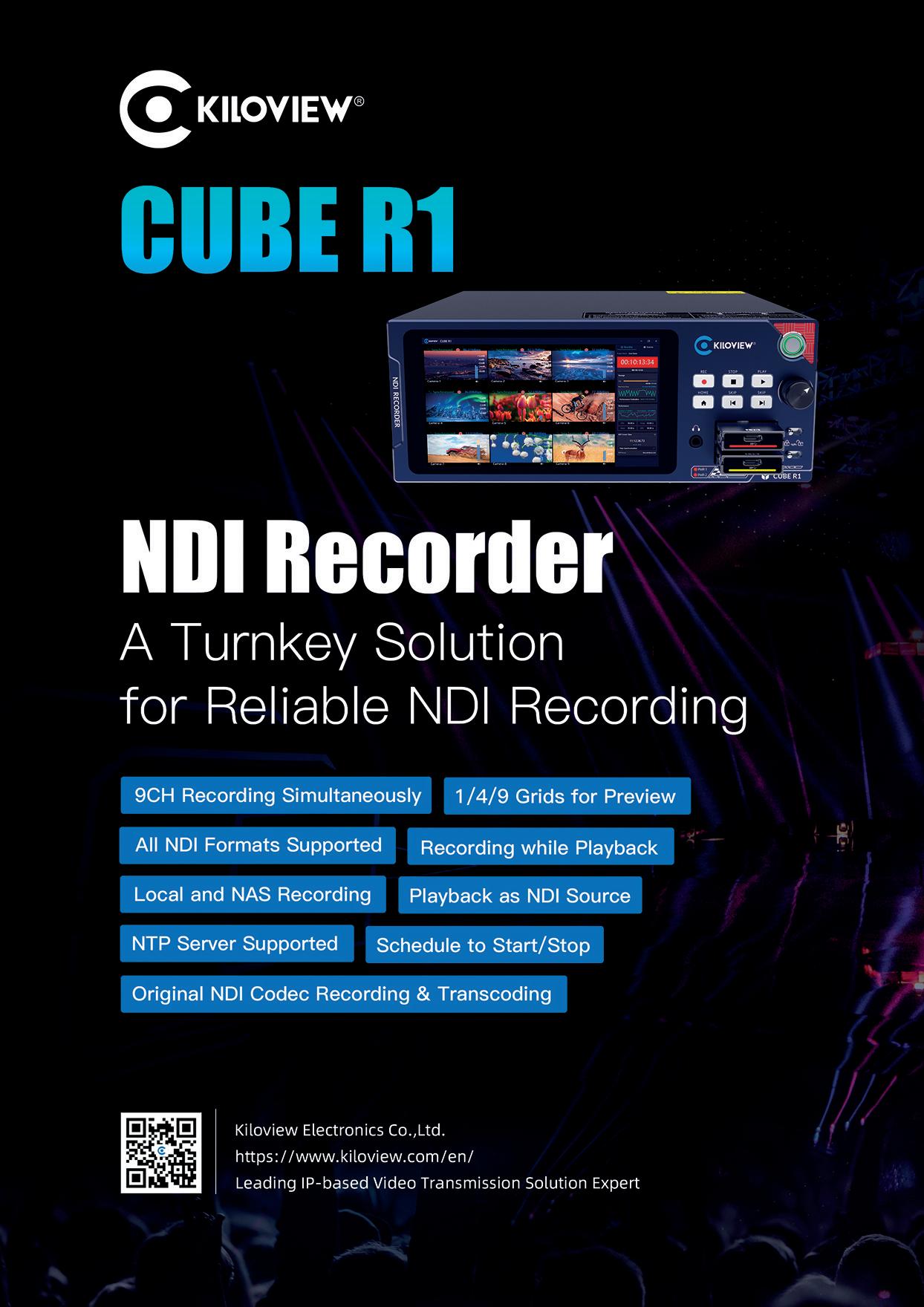

At IBC 2023, Kiloview will demonstrate its Kiloview ecosystem for broadcasting and media, showcasing its latest solutions for video contribution, production, transmission, and distribution, all based on IP.

The flexibility and interoperability of the IP-based ecosystem will be emphasized by showcasing SRT solutions, such as the newly added SRT coverage of N50/N60, rack-mountable encoder RE-3, 5G bonding encoder P3, dual-channel encoder E3, and NDI solutions, including the CUBE series and LinkDeck.

With constant upgrades to its IP-based hardware and software solutions, the Kiloview Ecosystem aims to make professionals more versatile across workflows with less need for hardware and staffing. Attendees will also be able to see Kiloview’s latest update, which expands the IP coverage of N50/N60 to SRT, RTMP, RTSP, UDP and more protocols, in addition to the existing NDI High Bandwidth and NDI|HX2/3 support. Other notable updates for visitors to review are NDI High Bandwidth support on the MG300V2, as well as the following:

Kiloview P3 is a 5G bonding HEVC video encoder that integrates up to four 5G connections, Wi-Fi and Ethernet, leveraging Kiloview’s Kilolink bonding server to optimise signals across different internet connections.

Kiloview E3

4K HDMI & 3G-SDI Video Encoder

The versatile video encoder, designed to provide high-quality video streaming for a wide range of applications, will be available for interested professionals to see at Kiloview’s IBC booth. It supports various input sources, including HDMI and SDI, ensuring compatibility with different cameras and devices. Broadcasters may be interested in its encoding capabilities, which let users work with the sources from both inputs in multiple ways, either encoding them simultaneously or mixing them as PIP or PBP. The encoder compresses video streams with H.265/HEVC, enabling low-latency transmission without compromising video quality, and supports multiple streaming protocols such as RTMP, RTSP, SRT and NDI|HX3.

Kiloview CUBE R1 is an NDI recorder system designed to capture and store high-quality video content from multiple NDI video sources.

CUBE X1 - A solution for NDI multiplexed distribution

Another solution on show at IBC 2023 will be Kiloview CUBE X1, designed for scheduling, routing and switching, distribution and management of NDI signals. It accommodates up to 16 NDI input channels and 32 NDI output channels.

Lawo is set to unveil its latest innovations at IBC 2023, and visitors to its booth will see them first-hand. Among this year’s anticipated developments are the following:

Lawo’s HOME Apps will take center stage at IBC 2023. These applications, including HOME Multiviewer, HOME UDX Converter, HOME Stream Transcoder and HOME Graphic Inserter, will demonstrate the power of a flexible microservice architecture. They deliver high processing capabilities with reduced compute power and energy consumption, allowing users to adapt swiftly to changing requirements and budget conditions. Lawo’s HOME Apps support SMPTE ST 2110, SRT, JPEG XS and NDI for increasingly mixed technology environments, and can easily adapt to new format requirements as these become relevant.

Enhanced .edge HyperDensity SDI/IP conversion and routing platform

Attendees at Lawo’s booth at IBC 2023 will learn about the .edge HyperDensity SDI/IP Conversion and Routing Platform. Through licensable options such as proxy generation and JPEG XS compression, this solution addresses bandwidth constraints, streamlining IP pipelines and optimizing workflows.

To empower live productions, Lawo will showcase the V10.8 software for the mc²/A__UHD Core/Power Core platform. With features such as flexible bus routing, an expanded AUX count (up to 256 busses), QSC Q-Sys proxy integration in HOME, Remote Show Control via OSC and more, visitors at IBC will see how Lawo’s platform sets a new benchmark for live performance capabilities. Furthermore, NMOS support for the mc² Gateserver bolsters device compatibility, offering seamless integration into Lawo’s ecosystem.

With its integration as a proxy in HOME 1.8, support for MADI front ports and dynamic recognition of DANTE and/or MADI SRC cards, the Power Core ensures a seamless audio experience, which Lawo will demonstrate to customers and visitors at IBC 2023.

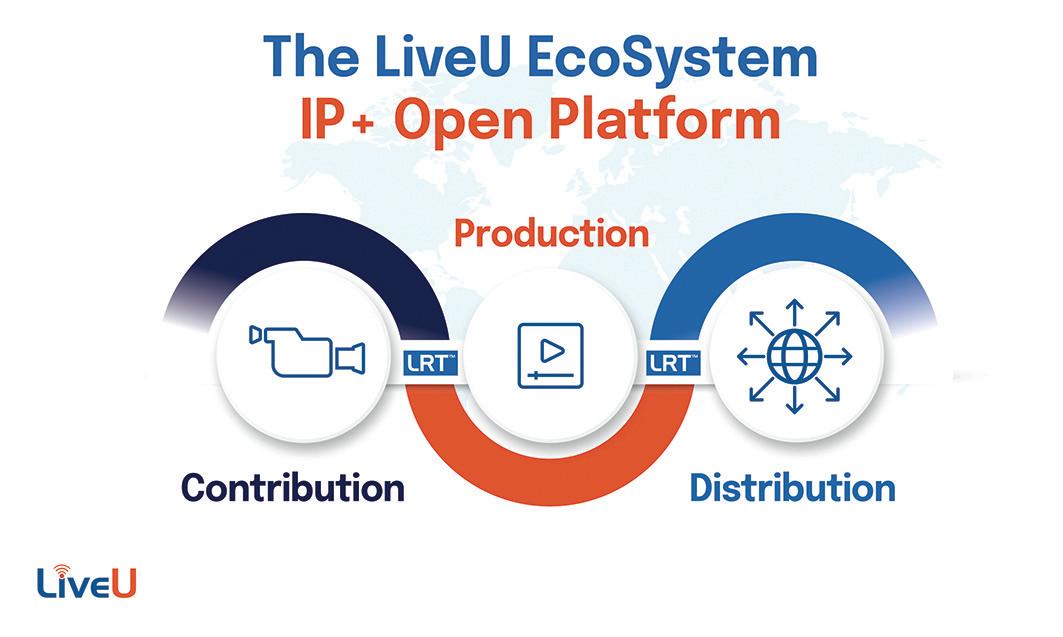

At IBC2023, LiveU will showcase its latest live video workflows and collaborations in contribution, production and distribution across sports, news and other live productions, bringing greater efficiency across workflows while shortening the time to air. These include its flexible remote and on-site production solutions, which use IP bonding and cloud workflows to replace traditional production hurdles with easy-to-use, high-quality and low-cost solutions.

A core element of the EcoSystem, its openness and interoperability, will be highlighted on the stand. LiveU’s latest integrations with other leading technologies encompassing 5G, cloud and AI will be demonstrated, and real-time use cases will be presented across the entire video production chain.

Show highlights include:

LiveU Studio: first Cloud IP Live video production service to natively support LRT™

The fully cloud-native IP live video production service, LiveU Studio, is the first to natively support LRT™.

LiveU Ingest: ingest Cloud solution for automatic recording and story metadata tagging of Live video

It’s an automatic recording and story metadata tagging solution for cloud and hybrid production workflows: LiveU’s automated workflow solution accelerates the time-to-air and conversion of assets into digital media, increasing production efficiency.

LiveU Matrix: next-gen IP cloud video distribution service

LiveU will show how plugging into the LiveU Matrix, its large global private video distribution system, can boost content monetization and give instant access to a world of creative possibilities.

At this year’s IBC, Magewell will show its new space- and power-efficient Eco Capture HDMI 4K Plus M.2 and Eco Capture 12G SDI 4K Plus M.2 cards, which capture 4K video at 60 frames per second. But the main course for Magewell will be Control Hub, featured in IP workflow and streaming demonstrations at its IBC 2023 stand, where visitors will see how Control Hub provides centralized configuration and control of multiple Magewell streaming and IP conversion solutions.

Administrators, IT staff and systems integrators can easily manage encoders and decoders across multiple locations through an intuitive, browser-based interface. An HTTP-based API is also available for third-party integration.

As Magewell’s team will explain to visitors and interested professionals, the Control Hub software can be deployed on-premises or in the cloud and supports Magewell hardware products including Ultra Stream and Ultra Encode live media encoders; Pro Convert NDI encoders and decoders; the Pro Convert Audio DX IP audio converter; and the USB Fusion capture and mixing device.

Attendees will be able to learn first-hand how users can remotely configure device parameters, monitor device status, trigger operational functions (such as starting or stopping encoding) and perform batch firmware upgrades across multiple units of the same model.

Marshall Electronics zooms in on its POV offerings at IBC 2023, where visitors will be able to learn all about them and discover four new NDI|HX3 models as well as recent updates to the line.

Marshall’s team will be on hand to answer attendees’ questions, and new Marshall POV cameras will be on display at the stand: the CV570/CV574 Miniature Cameras and CV370/CV374 Compact Cameras, which all feature low-latency NDI|HX3 streaming as well as standard IP (HEVC) encoding with SRT. The cameras support H.264/265 and other common streaming codecs, along with a simultaneous HDMI output for traditional workflows. All will be available for AV professionals and broadcasters to see.

Interested visitors will learn that the CV570 miniature and CV370 compact NDI|HX3 cameras both contain a new Sony sensor with larger pixels and a square pixel array, which Marshall is implementing in all its next-generation POV cameras. These cameras offer resolutions of up to 1920x1080p (progressive), as well as 1920x1080i (interlaced) and 1280x720p. All four cameras provide NDI|HX3, NDI|HX2 and standard IP with SRT, plus streaming codecs such as H.264/265. A simultaneous HDMI output is available for local monitoring or low-latency transmission.

The CV570 (HD) and CV574 (UHD) use a miniature M12 lens mount and are built into an ultra-durable, lightweight body measuring roughly 2 x 2 x 3.5 inches. The CV370 (HD) and CV374 (UHD) use a slightly larger aluminum alloy body with a CS/C lens mount, allowing a wider range of lens options.

Last but not least, Marshall will present at IBC 2023 its newest NDI|HX3 camera models as well as its recently upgraded POVs, all optimized with the latest sensor technology and high-end processors. In addition, Marshall will bring its new CV420Ne, an NDI|HX3 version of its ultra-wide 100-degree angle-of-view streaming POV camera, and will feature its lineup of professional broadcast monitors throughout the show, including the now-shipping V-702W-12G professional broadcast monitor.

At IBC 2023, Matrox will feature the critical technology powering broadcast workflows for live, tier-1 programming, broadcast television and specialty content production.

Broadcasters, integrators, and developers that visit Matrox’s stand will discover a range of production solutions:

Introducing Matrox ORIGIN, a framework that redefines live production workflows for broadcast and media developers.

Visitors will learn how Matrox ORIGIN offers a native, IT-based approach to television production, providing scalable, low-latency and frame-accurate broadcast operations for tier-1 live television production.

Visitors interested in signal management, including two-way, high-density ST 2110-to-HDMI/SDI monitoring and conversion, are invited to the ConvertIP pod. There they will see how Matrox ConvertIP can reduce the cost of ownership, ease switching requirements, and add baseband monitoring flexibility and redundancy.

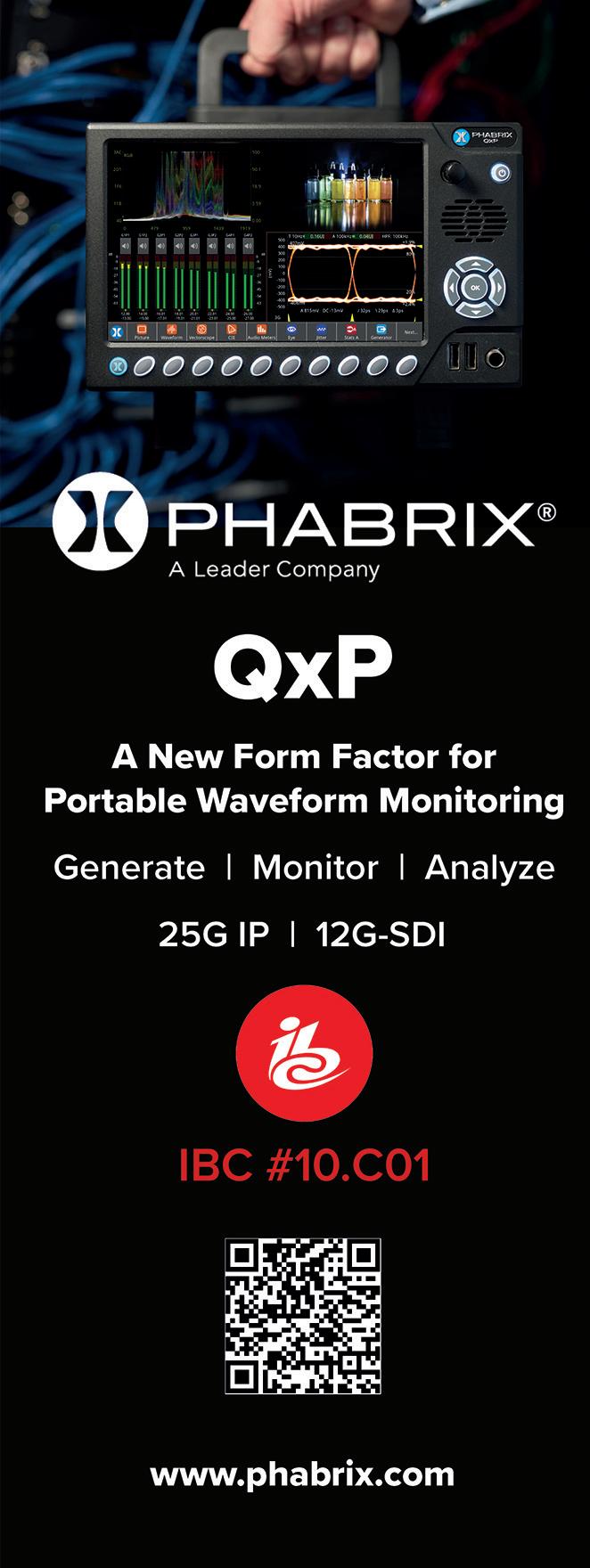

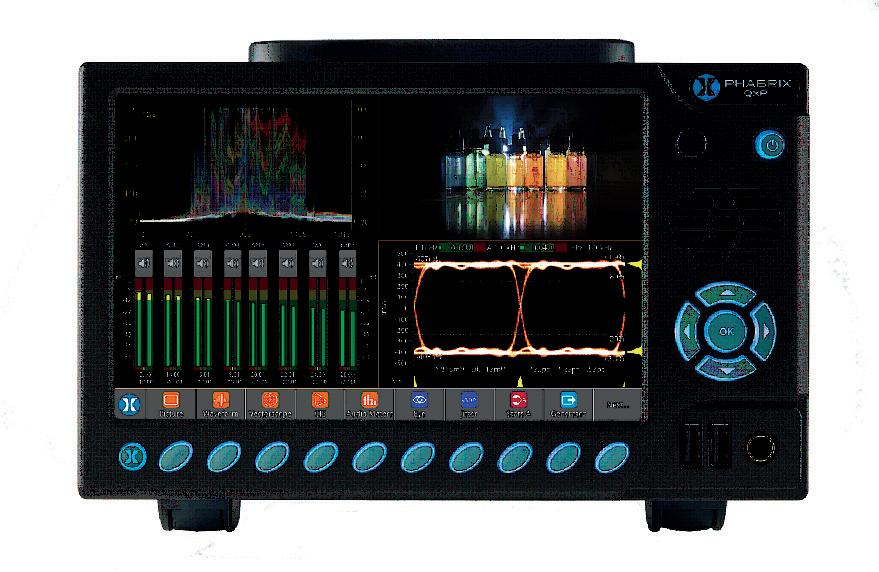

As visitors will be able to learn, central to the Qx Series demonstrations at this year’s IBC is the new QxP portable waveform monitor. Inheriting the flexible architecture of the QxL rasterizer, the QxP, distinguished by its support for 12G-SDI and 25GbE UHD IP workflows, offers an integrated 3U multi-touch 1920 x 1200 7” LCD screen and built-in V-Mount or Gold-mount battery plates for portability.

Phabrix will also introduce new features for the Qx Series at IBC 2023. It now supports Full Range generation and analysis, allowing for comprehensive testing and evaluation, and offers enhanced waveform analysis capabilities, which Phabrix’s team will demonstrate at its booth. This waveform instrumentation provides the precision needed for tasks such as camera shading and image grading, while retaining real-time operational flexibility and supporting a wide range of SDR/HDR SDI and IP video formats.

Visitors to the stand will also see the latest update to the Rx Series of 2K/3G/HD/SD rasterizers, including a new 6-bar gamut toolset that highlights out-of-gamut areas in the picture window. The instrument offers gamut monitoring meter bars for the YCbCr and RGB color spaces, enabling pixel-level monitoring of YCbCr and RGB levels simultaneously.
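Gamut meter bars of this kind flag pixels whose YCbCr values would produce RGB components outside their legal range once converted. As a hedged illustration of that principle (not Phabrix’s algorithm; the function names are hypothetical), the Python sketch below converts normalized BT.709 Y′CbCr values to R′G′B′ using the standard BT.709 luma coefficients and reports whether any component leaves the 0 to 1 range. It assumes normalized values (Y′ from 0 to 1, Cb and Cr from -0.5 to 0.5) rather than 10-bit code values, and by default it applies no tolerance for small excursions.

```python
# BT.709 luma coefficients
KR, KB = 0.2126, 0.0722
KG = 1.0 - KR - KB


def ycbcr_to_rgb(y: float, cb: float, cr: float) -> tuple[float, float, float]:
    """Convert normalized BT.709 Y'CbCr (Y' 0..1, Cb/Cr -0.5..0.5) to R'G'B'."""
    r = y + 2 * (1 - KR) * cr
    b = y + 2 * (1 - KB) * cb
    # Solve Y' = KR*R' + KG*G' + KB*B' for G'
    g = (y - KR * r - KB * b) / KG
    return r, g, b


def out_of_gamut(y: float, cb: float, cr: float, tolerance: float = 0.0) -> bool:
    """True if any R'G'B' component falls outside 0..1 (widened by tolerance)."""
    return any(c < -tolerance or c > 1 + tolerance for c in ycbcr_to_rgb(y, cb, cr))


print(out_of_gamut(0.5, 0.0, 0.0))  # mid-grey stays in gamut
print(out_of_gamut(0.5, 0.0, 0.5))  # maximum Cr at mid luma pushes R' above 1
```

A real instrument would run this test per pixel and feed the results to the meter bars and the picture-window highlight; the sketch only shows the per-pixel decision.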

Phabrix’s Sx Series of handheld instruments will also be on show, including the Hybrid IP/SDI, Sx TAG, and SxE for advanced SDI physical layer analysis, key products designed for all broadcast engineers and especially for live event set-up.

IBC attendees will be able to see Pixotope’s full range of solutions, with a focus on its software platform for end-to-end real-time virtual production. Whether used by teams or individual users, Pixotope’s platform offers an intuitive UI/UX that requires no technical expertise to operate.

Other Pixotope solutions that will be on display at IBC 2023 are:

Pixotope Tracking - Fly Edition, a first-of-its-kind markerless through-the-lens (TTL) camera tracking solution that eliminates the complex setup and creative constraints imposed by tracking markers, enabling productions and live events to engage audiences with dynamic real-time aerial graphics.

Pixotope Graphics - XR Edition reduces the technical complexities and associated resource costs of extended reality workflows and environments that use LED volumes, with a range of purpose-built tools that simplify setup and operation, accelerating time to market.

Pixotope Pocket, a new mobile application that enables students to use their smart devices to produce immersive content with virtual production, will be available for IBC2023 attendees to try for the first time.

Qvest will present a comprehensively expanded service portfolio with new practices and products for future-proof customer solutions in the media and entertainment sector. Additionally, the company will provide updates and insights into the international project business of the global Qvest group.

Under this year’s IBC motto, “Expect more”, the focus will be on the expanded range of practices, as well as new digital products with which Qvest addresses the requirements of international customers in media, broadcasting and beyond.

From September 15 to 18, members of the global Qvest team will be on site in Hall 10 at booth C31 to inform visitors about all the latest news. In dialog with Qvest product experts at demo pods, visitors can experience, among other things, the digital products makalu Cloud Playout and ClipBox Studio Automation, which provide greater simplicity, productivity and scalability for broadcasters and publishers.

As part of its presence at the show this year, Qvest will offer a dedicated Product Experience Zone. Visitors can get insights about Qvest products during compact live presentations that will be offered several times a day. The integration platform for media workflows qibb, a strategic spin-off of Qvest, will also be presented. Their focus will be on automated workflows with generative artificial intelligence for media applications, integrating popular AI tools such as ChatGPT4.

In addition, visitors can get comprehensive information about the Qvest fields of expertise, which are now clustered into practices. The Qvest practice specialists will demonstrate how the company’s knowledge in areas such as Foresight & Innovation, Digital Media Supply Chain, OTT, Data & Analytics, Digital Product Development or Systems Integration enables the implementation of futureproof technology projects. By using state-of-the-art technologies and individual consulting processes, the global Qvest team can offer specially tailored solutions for every need.

Trade show visitors and media representatives will also have the opportunity to arrange exclusive meetings with Qvest. Members of the management of the worldwide Qvest company group, as well as practice and product experts, will be on site on all days of the IBC.

With the integration of Simplylive, Riedel will showcase its live video production tools, including the All-In-One Production Suite, which offers ViBox for multicamera productions with Slomo and RefBox. Also on show will be RiMotion replay, the RiCapture UHD recorder, the Venue Gateway and the Web Multiviewer, enabling simple, scalable production and replay for anyone, anywhere. Plus, the company promises to unveil new additions to the Simplylive portfolio.

Riedel will also demonstrate its MediorNet IP solutions for video and audio processing and distribution with innovative MuoN SFP technology, which offers a wide range of processing functions: encoding/decoding, ST 2110 gateways, multiviewers and newly added HDR conversion between any SDR/HDR formats, including S-Log3, PQ and HLG. Visitors will also discover the MicroN UHD media distribution and processing platform, which adds bandwidth, I/O, higher resolutions and processing power to the MediorNet TDM platform. Plus, Riedel will unveil a new addition to its software-defined hardware portfolio on September 15th.

On the comms side, Riedel will present the Bolero wireless intercom in both the DECT 1.9 GHz and the new 2.4 GHz versions, including capabilities in combined networks. The Artist-1024 will also be on display, as will the SmartPanel 1200 and 2300 Series multifunctional interfaces.

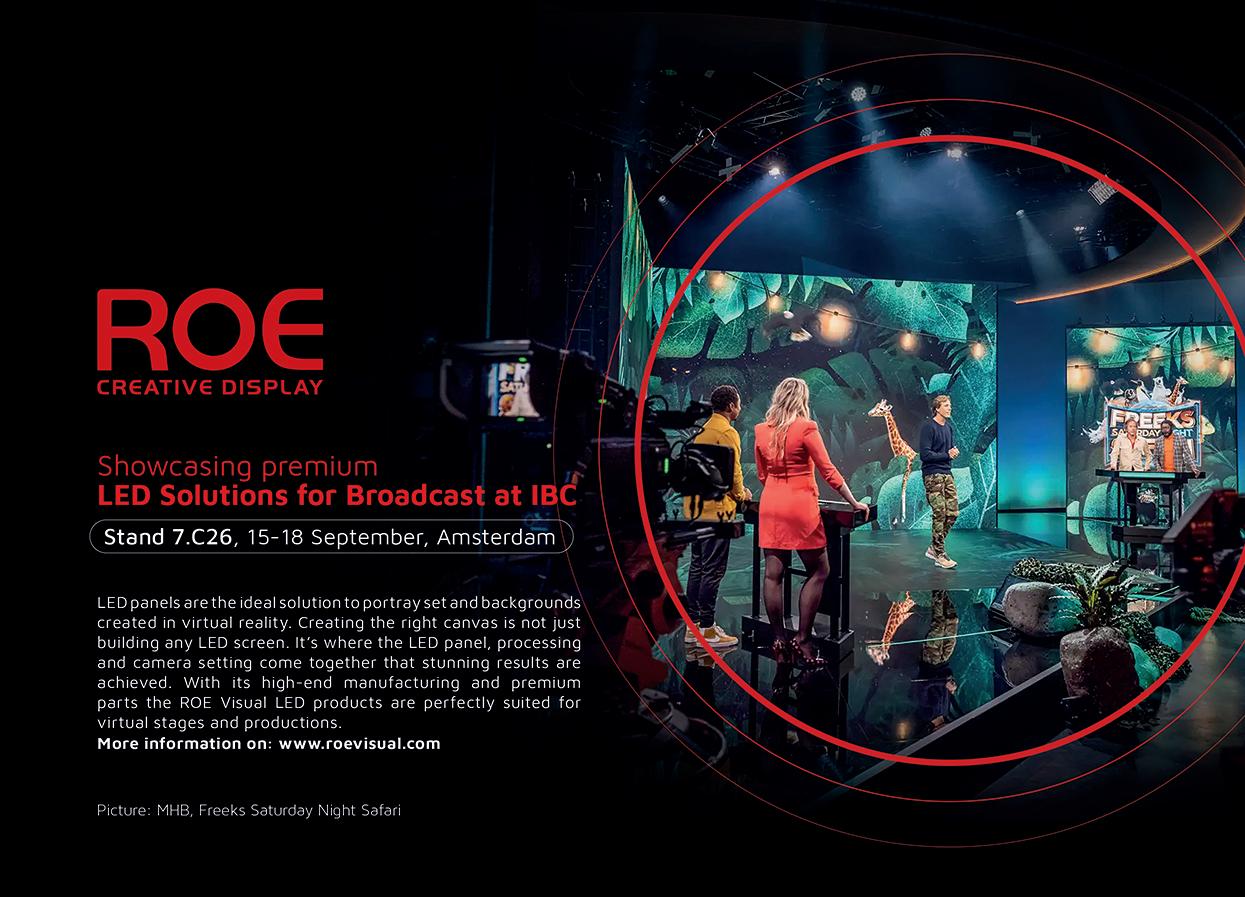

ROE Visual will showcase its latest products and technologies, highlighting its significant presence in the broadcast and film market.

With its dedication to pushing boundaries and delivering high-quality LED displays, ROE Visual aims to make an impact at IBC Show 2023. For visitors ranging from broadcast professionals to filmmakers, the showcase will offer a glimpse into the future of visual storytelling and media production.

As visitors at ROE’s stand will see, the Ruby LED series will take center stage, featuring the Ruby RB1.2, RB1.5, and RB1.9BV2-C panels alongside the Black Marble LED floor, the BM2.

Making its debut at IBC, the Ruby RB1.2 is a fine-pitch, broadcast-grade HD-LED panel delivering high-performance visuals. The Ruby RB1.9BV2-C, a curved LED panel fully compatible with the regular RB1.9BV2, promises seamless integration for broadcast and virtual production applications.

Designed with cutting-edge LED technology and high-speed components, the RB1.2 and RB1.9BV2-C panels offer true-to-content color representation and unrivaled in-camera performance with high frame rates, high refresh rates and minimal scan lines, which IBC 2023 attendees will be able to see for themselves.

In addition, ROE Visual will bring a new prototype to the exhibition: Coral, a fine-pitch COB LED panel creating unparalleled visuals.

With its high visual performance, Coral’s Chip on Board technology delivers high contrast, a wide color gamut and great color accuracy, providing a high-definition viewing experience. Its energy-efficient common cathode design saves costs and extends the panel’s service life, making it a sustainable choice. Visitors can ask for an exclusive sneak peek at the stand.

Ross will have a new, solutions-centric booth (Hall 9, Stands A04 and A05) demonstrating end-to-end workflows for a wide variety of video production environments.

Visitors to the Ross stand will be able to experience full solution demonstrations showing the latest advances in:

- Extended Reality (XR)

- News Workflows

- LED Production

- Production Graphics

- Camera Motion Systems

- Automated Production

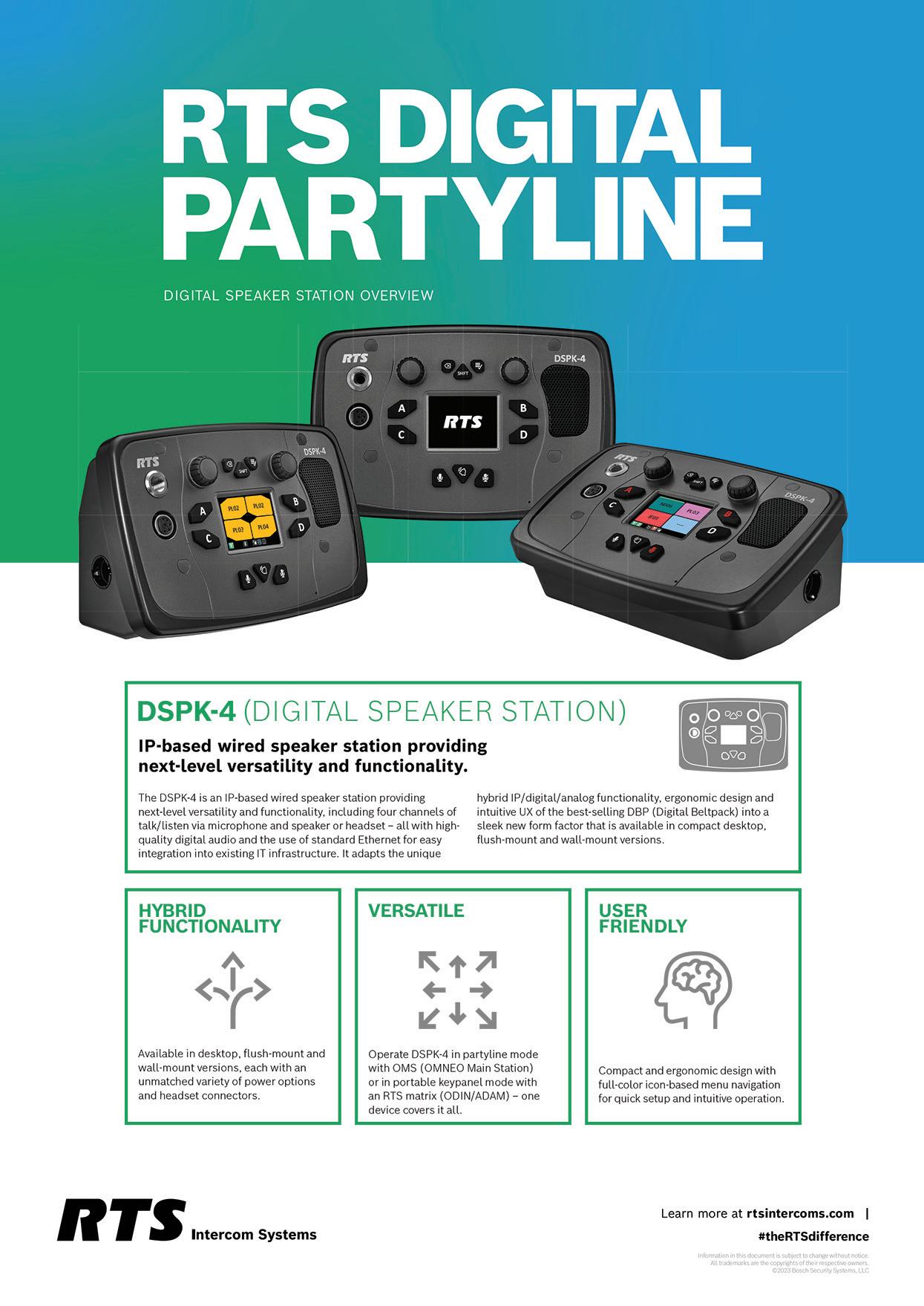

RTS Bosch will be showcasing and demonstrating its products and solutions at IBC 2023, and these are some highlights about what visitors will be able to see at their stand:

Attendees will be able to see a demo of RNP implementing NMOS protocols. As RTS Bosch’s team will explain, RNP acts as a proxy between RTS OMNEO-based devices and non-RTS NMOS third-party products, such as NMOS explorers, NMOS nodes and NMOS controllers.

At RTS Bosch’s stand, visitors will be able to check out the following RNP functions:

- IS-04 Discovery and Registration -- Broadcast controllers identify and manage new devices through automated workflows.

- IS-05 Device Connection Management -- Broadcast controllers integrate IS-05 devices through a common method without requiring any drivers for stream connection management.

- IS-08 Audio Channel Mapping -- This capability allows users to manage audio channels through a control system in a uniform manner without the need to develop custom drivers for every different device.

In addition, RTS intercoms will run a demo of ODIN’s redundancy and glitch-free switchover capabilities: in case of a device failure, the system switches over to a backup device or a second network.

The latest firmware release for the ODIN matrix and the OMNEO Main Station (OMS) features call light support. RTS Bosch will offer a demo of this functionality for different verticals (theater, broadcast, live entertainment & industry).

RTS intercoms will also be showcasing the new PH+ headset, which combines improved audio quality for enhanced speech intelligibility with comfort for extended wear and robustness for tough environments.

RTS will also have on display the new DSPK4 (Digital Speaker Station), an IP-based speaker station with several key enhancements, such as high-quality digital audio and the use of standard Ethernet, making it a flexible fit for any work environment.

Sennheiser’s stand will display the group’s latest advancements: new immersive innovations, content creation and broadcast audio solutions, plus an invite-only preview of the future of wireless audio.

Audio at its best: at IBC, visitors will see Sennheiser, Neumann, Dear Reality and Merging Technologies demonstrate state-of-the-art immersive production workflows as well as exciting solutions for audio capture, monitoring and processing. Creators and audio professionals of all levels will be welcome.

Four spaces invite IBC visitors to explore Sennheiser group’s state-of-the-art solutions for today’s content production: (1) the immersive presentation zone, (2) active and passive product islands, (3) a Neumann area and (4) a separate Wireless Multichannel Audio Systems (WMAS) zone.

The immersive zone

In the immersive presentation zone, powered by a 5.1.4 Neumann monitor setup with nine KH 150 monitor loudspeakers and two KH 750 DSP subwoofers, guests will be able to get hands-on with Dolby Atmos/immersive broadcast workflows facilitated by products from Merging Technologies, the latest member to join the Sennheiser Group. The company will show its Anubis, Hapi, Pyramix and Ovation solutions integrated into typical broadcast workflows. In addition to its new Dolby Atmos certified monitoring package, Merging Technologies will show a brand-new interpretation solution.

In the same space, visitors will be invited to experience Dear Reality’s dearVR SPATIAL CONNECT for Wwise. The software, previewed at IBC for the first time, enables game designers to develop audio directly in the VR or AR gaming environment instead of having to switch between systems. The in-game mixing workflow provides new in-headset control of Wwise audio middleware sessions and aims to improve the workflow for next-generation XR productions.

Other presentations in this area will include AMBEO 2-Channel Spatial Audio for live production, using an Anubis to create an enhanced immersive stereo feed from an immersive audio production source, and information sessions on WMAS, Sennheiser’s broadband wireless technology.

At IBC 2023, Sony will showcase solutions for the media industry under the theme of Creativity Connected. The theme rests on Sony’s long-term commitment to customers, built around core technologies that allow them to be ever more creative and efficient.

Visitors will be able to discover Sony’s complete ecosystem of solutions, products and services, which reflect four strategic areas of focus shaping the media industry:

• Networked Live, a platform enabling the optimization of people, locations, and processing for each and every live production environment, whether on the ground or in the cloud.

• Creators’ Cloud, Sony’s cloud platform dedicated to efficient media production, sharing and distribution.

• Imaging, with color science, flexibility and ease of operation at the heart of Sony’s cameras and lenses, in particular its Cinema Line range, including CineAlta cameras and reference monitors, and the added benefit of SDK-based remote control.

• Virtual Production, for film production that combines the Virtual Production Toolset software with Crystal LED displays for high brightness and contrast 3D set background images and rich color reproduction, as well as the VENICE camera series, for soft and delicate depictions, smooth skin tones, and beautiful color reproduction.

Visitors to Telestream’s stand will learn how media organizations are enhancing workflows using scalable and cost-effective solutions for Media Production, Media Supply Chain Management and Media Distribution. Telestream’s team will also demonstrate solutions and platform-as-a-service offerings that bridge on-prem ecosystems with the benefits of working in the cloud.

• Monitor and test video/audio outputs in SDI, IP and hybrid production environments

• Ensure premium quality content in remote productions

• Easily synchronize resources and generate a reference clock

Live Capture, Ingest and Delivery

• Capture live feeds via SDI, NDI, and IP

• Support SMPTE 2110, SRT, RTMP and Transport Streams

• Create final deliverables for PAM systems, social media and more

• Easy color space conversion for live HDR production

• Rapid broadcast preparation for on-demand with DAI

• Support for format conversion from proxy through SD, HD, and UHD

• Efficient captioning from video files as well as auto subtitling with live workflows

Cloud Media Processing and Workflow Orchestration

• Cloud-native execution of Vantage workflow orchestration

• Support for hybrid cloud/on-prem configuration of Vantage workflows

• Support for the customer’s chosen virtual private cloud (AWS, GCP, Azure)

• Fully cloud-native file-based media processing for broadcasters and streaming services

• File-based QC and automated captioning

At Telos’s stand, visitors to IBC 2023 will be able to enjoy a tour of the latest hardware and software solutions for radio and TV that enable creators and companies to broadcast without limits.

At Telos’s booth, attendees will find expert broadcast specialists on hand: whether visitors have product questions, a specific workflow they’re seeking to implement, or just want to see what’s new, the Telos team will be there to help.

Telos Alliance is bringing a full slate of radio and TV products to Amsterdam for IBC 2023, all designed to make life easier and more productive for broadcast content creators. Visitors will find a wide range of solutions for managing workflows and media delivery.

Telos Alliance products on display at IBC 2023 include:

• Axia Quasar AoIP Mixing Consoles

• Telos Infinity VIP: The Infinity Intercom family of products now includes the new Telos Infinity VIP App

• AudioTools Server WorkflowCreator

• Jünger Audio AIXpressor

• Omnia Forza: A brand-new, software-based approach to multiband audio processing, purpose-built for HD, DAB, DRM and streaming audio applications.

• Axia Altus: This new software-based audio mixing console, delivered as a Docker container, brings the power and features of a traditional hardware console to desktop and laptop computers, tablets and smartphones running any modern web browser.

At this year’s IBC, Vislink will unveil its latest innovations, offering attendees a sneak peek into the future of live content production and distribution. The Vislink team will be on stand 1.C51 with a full complement of product displays, presentations and video demonstrations.

One of the highlights of Vislink’s showcase will be its Hybrid IP/COFDM applications. This technology, embodied in key products like the Cliq ultra-compact mobile transmitter, blends the strengths of Internet Protocol (IP) and Coded Orthogonal Frequency Division Multiplexing (COFDM) to optimize transmission. As visitors will learn, it is designed to give broadcasters a more robust and flexible transmission solution, especially in challenging environments where signal stability is crucial.

Vislink will also be demonstrating its private 5G solutions for event productions, leveraging the high-speed and low-latency benefits of 5G technology.

Another offering from Vislink available to all attendees will be its solutions for remote production in the cloud. Led by the Terra Link series of portable, rackmount and compact encoders, these flexible, cost-effective and scalable cloud-based production solutions are tailor-made for modern broadcasting needs, allowing organizations to streamline their production workflows and maximize their resources. Vislink’s cloud-based solutions give production teams the ability to collaborate remotely and deliver high-quality content to audiences worldwide.

Vislink’s showcase will also feature AI-automated live sports and in-studio systems, harnessing the power of artificial intelligence for enhanced production automation and viewer engagement.

In addition to the two big shows at the Vizrt booth (The Vizrt Experience live show and The TriCaster Anywhere live show), attendees won’t want to miss a few of its other highlights. Every visitor to Vizrt’s booth will experience live demos of its cutting-edge solutions, and the team will be eager to show what they have to offer and answer any questions professionals may have.

Solutions that will be on display at Vizrt’s stand are:

Viz Engine 5

Vizrt will be showing its powerful render and compositing engine together with its tight integration with Unreal Engine 5 for photorealistic AR, VR and virtual sets.

Adaptive Graphics for every screen

This single-workflow, multiplatform content delivery, which automatically adjusts resolution and format to suit specific display devices, will be on show for visitors and the interested public. It’s an exclusive technology that enables broadcasters, creators and graphic artists to create once and publish multiple times for fluid, editable and consistent visual storytelling.

The solution is cloud-native, so professionals and artists can create and control live graphics from a single interface via any browser. It also offers a wide range of audience engagement and monetization features.

Vizrt Sports Content Factory provides a full sports content workflow in the cloud, from live ingest, archive and metadata annotation through to branded content distribution on multiple platforms. It has been designed to address the high-performance demands of sports teams, leagues and rights holders.

The Viz Flowics interface, accessible via any browser, gives creators and professionals simple control over the creation, integration and playout of HTML5 graphics suited to fast-paced productions and digital or multi-screen extensions.

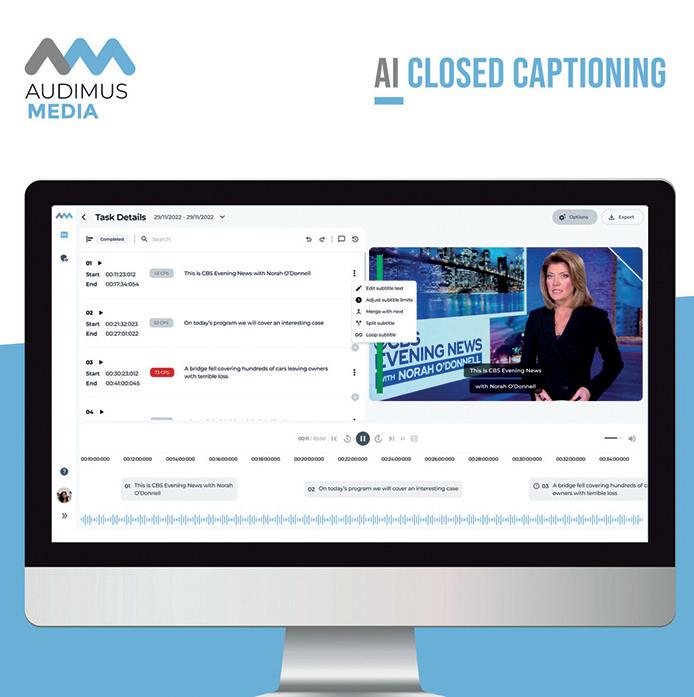

VoiceInteraction returns to IBC to showcase its leading AI speech recognition solutions, with demos of Live Closed Captioning, Transcription Automation and a Broadcast Compliance tool that provides additional features such as automatic news clipping and analytics.

VoiceInteraction’s flagship automatic subtitling solution is available in 40 languages. With reliable speech recognition and market-specific adaptability, it delivers high accuracy and reusable captions for VOD. It enhances accessibility cost-effectively, with support for any SDI or IP workflow. On-prem translation widens audiences, while the intuitive web dashboard provides customized control, event scheduling and seamless caption integration.

Audimus.Server

Audimus.Server is VoiceInteraction’s transcription automation platform for offline speech-to-text or post-recording subtitling workflows. With an in-app editor and automatic timecoding, it creates .srt files or burns the subtitles directly into videos, among other export formats. Its metadata extraction is designed for indexing and enriching large MAMs, and Audimus.Server empowers broadcasters to repurpose content for on-demand programming and other platforms.

It is a tool for broadcasters to reduce costs, create additional monetization opportunities and enhance viewer loyalty.

The Traxis platform will be on display at Zero Density’s IBC stand, where the ZD team will answer visitors’ questions and explain its features.

With an accuracy of up to 0.2 mm and ultra-low latency of just six milliseconds, the Traxis Camera Tracking system offers high precision. In virtual production, this ensures continuous calibration and seamless real-time results, so that every camera move on set is reflected in the virtual world without delay. Virtual graphics can then be broadcast live without breaking the audience’s immersion.

For talent tracking, the Traxis platform includes an AI-powered system that can identify multiple actors within a 3D environment at once. The Talent Tracking system uses AI to extract each actor’s 3D location from the image in real time, then sends the data to Reality Engine to create accurate reflections, refractions and virtual shadows of the actor in 3D space. The technology is completely markerless, which streamlines setup and maintenance and means actors no longer need to be distracted by wearables or beacons that make it harder to perform.

At the heart of the Traxis platform is the Traxis Hub, a comprehensive tool that manages both tracking and lens calibration data. Compatible with all industry-standard tracking protocols, the hub brings together all available tracking and lens data in a centralized interface, significantly reducing setup time. The hub includes a library of the most commonly used broadcast lenses, enabling quick and easy calibration.