The great European broadcast trade fair is over. IBC was held in Amsterdam from 9 to 12 September. The entire industry, from manufacturers to end users, including integrators and distributors, met once again in the aisles of the RAI. We, as a specialized magazine, attended the show to say hello again to old friends we had not seen for years. Beyond this minor but equally important reason, we travelled to the Dutch city to identify trends in our industry. In this issue you will find the details of our research.

IBC 2022 has meant a return to the in-person events of the past. However, that splendor has lost some of its luster. A constrained market where the big players take the best slices of the cake, the convenience of remote modes of communication, the immediacy of globalization on the one hand, and the rise of local and national fairs that bring together more controllable markets on the other, have all contributed to IBC losing some of its importance.

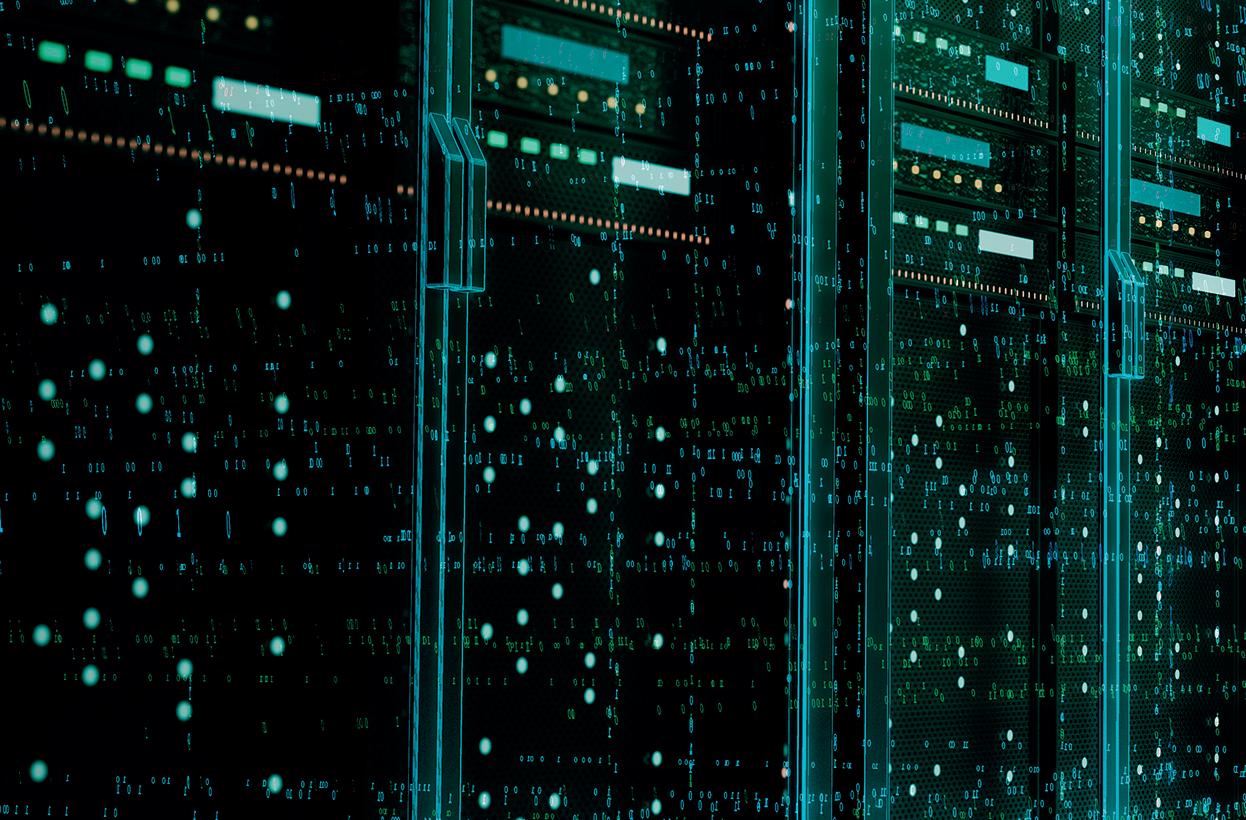

Another major protagonist of the last edition of IBC was the cloud. Now that remote production workflows have been accepted and confidence in virtualization has been gained, this technology only needs to grow and be tested to reach its full potential. We have talked to two end users, Olympic Broadcasting Services and Telefónica Broadcast Services, and to a provider of these services, Amazon Web Services, to check the state of this technology and to find out how far it is from reaching its full capabilities.

The Rugby League World Cup is one of the oldest rugby competitions. It was held for the first time in 1954. For this edition, postponed due to the effects of the pandemic, the organization is responsible for holding the competition in the United Kingdom and bringing together the most representative nations of the sport in three categories: men, women and people with disabilities. All categories will receive equal broadcast coverage.

The FIFA World Cup in Qatar is just around the corner. An event of this magnitude moves billions and attracts millions of fans around the world. Gravity Media has been in charge of setting up the stadiums to broadcast the matches, as well as deploying the TV broadcast infrastructure to cover them.

Another trend that is starting to take hold in the broadcast and film industry worldwide is sustainability. How can we make even better content with less environmental impact? Some companies are already developing such methods, using remote production techniques or, cheaper still, staying faithful to the basic rule: reduce, reuse and recycle. In this issue you will find the story of New Yonder, a production company with its own OTT service that applies this way of working.


EDITORIAL

Editor in chief: Javier de Martín, editor@tmbroadcast.com
Key account manager: Susana Sampedro, ssa@tmbroadcast.com
Editorial staff: press@tmbroadcast.com
Creative Direction: Mercedes González, mercedes.gonzalez@tmbroadcast.com
Administration: Laura de Diego, administration@tmbroadcast.com

Published in Spain. ISSN: 2659-5966

TM Broadcast International #110, October 2022

TM Broadcast International is a magazine published by Daró Media Group SL
Centro Empresarial Tartessos, Calle Pollensa 2, oficina 14, 28290 Las Rozas (Madrid), Spain
Phone +34 91 640 46 43

SUMMARY

News

Cloud & Live: OBS, TBS, Amazon Web Services

We know that the cloud is the future of technology. We know that it is the present for the management of computing resources. We also know that broadcasters are using it, and that they will increasingly implement workflows in it. However, what is needed to produce and distribute content such as the Olympic Games or a Champions League final in the cloud?

Qatar 2022 with Gravity Media

We had a chat with Ed Tischler and his team at Gravity Media, who are responsible for organizing the broadcast infrastructure and setting up the Qatari stadiums, to ensure that every fan, no matter how far away they are from Qatar, can see their team win and feel the excitement of a World Cup.

RLWC21: Rugby League World Cup 2021

IBC 2022: Democratization and virtualization of solutions

Interview with Ross Video

Newyonder: Reduce, reuse and recycle making movies

Vizrt introduces adaptive graphics capabilities and Unreal Engine support with the release of Viz Engine 5

Vizrt has recently announced Viz Engine 5 and its immediate availability for purchase or upgrade.

Among its features, Viz Engine 5 introduces Adaptive Graphics, a way of deploying graphics to multiple output formats simultaneously. Another enhancement is the integration of this engine, owned by the Vizrt Group, with Unreal Engine 5. The combination of the two gives users a workflow well suited to live production.

Adaptive Graphics is a template workflow that intelligently adjusts resolution and format to support specific display devices without multiplying resource usage, compromising quality, or risking loss of readability. This capability is designed for TV, hand-held device aspect ratios, studio video walls, virtual sets, and digital signage. All the outputs can be delivered as NDI.

With the Unreal integration, Viz Engine can now produce photoreal, detailed and data-driven graphics for virtual environments. Through the control capability that this union brings, users can change assets, transformations, or animations within Unreal or Viz.

“Adaptive Graphics is a smarter way to do multiplatform graphics. For the broadcaster this will mean merging production lines, more control over the quality of the product, and more efficient use of designers’ time,” says Gerhard Lang, CTO at Vizrt. “And our newly enhanced integration with Unreal Engine introduces a workflow and output that is as straightforward as it is innovative. It allows broadcasters to deliver high-impact graphics from both rendering pipelines within a single workflow.”

Apart from the features mentioned, the group has also introduced into its graphics engine NDI with tracking data fully embedded in the stream. This enhancement allows users to create augmented reality setups with PTZ cameras and cloud-based workflows.

NEWS - PRODUCTS 6

Lawo HOME platform adds NMOS and JPEG-XS support

Lawo’s management platform for IP-based media infrastructures has recently passed the JT-NM test, obtaining NMOS compatibility.

Through the API-based “lives@HOME” program, any third-party device can make all desired parameters accessible for control on the network. This is one addition to HOME. Others are Signal Rights Management, for audio parameters that are accessible from several control surfaces but can be configured to be adjustable from only one control surface; and System Health, i.e. status information about a system’s components with centrally logged information, warning and error entries for convenient monitoring during normal operation.

HOME functionality furthermore includes multi-essence stream grouping, which allows operators to bundle all relevant ST2110 video, audio and metadata flows. This functionality is also available for other products.

Lawo has also announced a module app for its constantly evolving V__matrix platform. It provides comprehensive ST2110 compatibility for interoperability, and it offers higher video compression ratios. vm_jpegXS supports compression ratios between 5:1 and 36:1 and offers 4x encoding + 4x decoding from, and to, JPEG XS (ST2110-22). Uncompressed signals can be interfaced with SMPTE ST2110, ST 2022-6 or SDI. vm_jpegXS is a versatile audio and video tool with ample processing and glue functionality for V__matrix applications.

SMPTE ST2022-7 seamless protection switching, ST2110-30/31 support for IP audio interfacing, and ST2110-40 compatibility for ancillary data, as well as IP stream format conversion and frame-accurate video switching using destination-timed clean and quiet switching (MBB and BBM) with audio V-fades, are provided as standard.


DAZN creates new way to watch NPB league with Stats Perform, AWS technology and machine learning

Nippon Professional Baseball (NPB), the Japanese baseball league, is streamed by DAZN. Its service helps fans stay connected to their favorite NPB players and teams with live and on-demand coverage of local matchups every day. In connection with this coverage, the DAZN Sports Data team has launched a new highlights feature for subscribers. The team has leaned on technology from Amazon Web Services (AWS) and other brands. Specifically, the feature uses real-time match data from Stats Perform, Amazon Rekognition Custom Labels machine learning (ML), and video analysis technology. It allows DAZN subscribers to take control of their own highlights experience: they can pull up and select a recorded or in-progress NPB game, load it, and use the scrub bar to navigate to critical highlight markers or to watch important plays.

DAZN worked with its colleagues in Japan to build an application to support this functionality. It first determined which plays the application would define as highlights, using available Stats Perform data. The team then had to sync this data with its live game streams and found machine learning the best way to do so.

Whereas football plays can be mapped to a clock, baseball isn’t timed the same way. The development team needed a way to automatically detect the start of a game. They chose to use the first appearance of the score graphic (including the number of balls, strikes and outs), which coincides with the start of the first pitch, as the starting marker. With no off-the-shelf tool available to identify this graphic, they set out to build their own model using Amazon Rekognition Custom Labels. Then, they trained the application to detect the initial appearance of this graphic. From there, the team provided the system with a list of all the key moments and the times they occur, and it synchronized them with the video based on the time of the game.
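The synchronization step described above can be illustrated with a minimal sketch. It is not DAZN's implementation: the detection list, the confidence threshold, the `KeyMoment` type and the idea that key moments arrive as seconds elapsed since the first pitch are all assumptions made for illustration, and no Amazon Rekognition call is shown.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class KeyMoment:
    label: str
    game_seconds: float  # hypothetical: seconds elapsed since the first pitch


def first_graphic_time(
    detections: Iterable[Tuple[float, float]], min_confidence: float = 0.8
) -> Optional[float]:
    """Return the earliest video time (in seconds) at which the score graphic
    was detected with enough confidence, or None if it never was.

    Each detection is a (video_seconds, confidence) pair, assumed to come
    from whatever model classifies the video frames.
    """
    hits = [t for t, confidence in detections if confidence >= min_confidence]
    return min(hits) if hits else None


def to_video_markers(
    moments: Iterable[KeyMoment], game_start_seconds: float
) -> List[Tuple[str, float]]:
    """Shift game-relative key moments onto the video timeline."""
    return [(m.label, game_start_seconds + m.game_seconds) for m in moments]


# Made-up sample values, for illustration only.
detections = [(12.0, 0.10), (754.0, 0.93), (755.0, 0.97)]
moments = [KeyMoment("first hit", 310.0), KeyMoment("home run", 2845.5)]

start = first_graphic_time(detections)
markers = to_video_markers(moments, start) if start is not None else []
print(markers)  # [('first hit', 1064.0), ('home run', 3599.5)]
```

The only design point the sketch makes is that a single detected anchor, the first appearance of the score graphic, is enough to place every later key moment on the scrub bar.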

“NPB fans have responded with extreme enthusiasm. Matches run quite long, so unless you’re an avid fan, tuning into an entire game isn’t a reality, so now, more casual fans can even check in to see highlights, which wasn’t previously possible,” shared Fred Clarke, Head of Product, Sports Data, DAZN.

NEWS - SUCCESS STORIES 10

QTV and Neutral Wireless Ltd deployed a 5G network tested by IBC’s Media Innovation Accelerator Program for Operation Unicorn

QTV is a Scottish outside broadcast specialist. The company deployed private 5G network technology to connect cameras used in the international broadcast coverage of Her Late Majesty Queen Elizabeth II’s final departure from Scotland via Edinburgh Airport on September 13.

The 5G network was designed and deployed by Neutral Wireless Ltd, a spinout company of the University of Strathclyde. This capability was developed through a series of proof-of-concept trials in 2022 as part of IBC’s Accelerator Media Innovation Programme. The network has been trialled and proven viable for broadcast use cases as part of the programme, which involved an international consortium of broadcasters and media technology vendors over the course of 2021-2022.

Operation Unicorn, the codename of the plan for handling Her Late Majesty’s death should she pass away in Scotland, saw the Queen’s coffin transported from Edinburgh Airport to RAF Northolt by air. This plan created the need for a high-definition wireless solution that avoided the use of cables across the airport runway, whilst mitigating interference and guaranteeing quality of service. The Neutral Wireless pop-up 5G SA network was deployed for QTV within 24 hours of the spectrum licence in the n77 radio frequency band (3.8 GHz – 4.2 GHz) being granted by Ofcom.

The outside broadcast at Edinburgh Airport was also supported by Open Broadcast Systems and Zixi, with the former providing encoders and decoders, and Zixi providing licences at short notice to use its Software-Defined Video Platform, ZEN Master control plane and protocol over 5G.

Professor Bob Stewart, from University of Strathclyde and head of the University’s Software Defined Radio team, said: “The use of a dedicated 5G private network operating in shared spectrum licensed by Ofcom is believed to be a first for live TV news. A spectrum licence was granted in the n77 frequency band at Edinburgh Airport and the network was rapidly deployed on the tarmac beside the runway to provide connectivity for a wireless camera position. The network operated live and with no technical issues for nine hours.”


Red Bee Media, Nowtilus and Equativ develop a FAST channel for High View

Red Bee Media, Nowtilus and Equativ have launched a solution for Free Ad-Supported Streaming TV (FAST) offerings. The product combines technology and expertise from the three companies to create a B2B solution for implementing and managing FAST channels. High View, an independent media company based in Germany and Austria, is the debut customer, distributing its content on 2016 and later LG Smart TVs through LG Channels, which is LG’s own FAST platform.

“By utilizing the well-integrated feature set of Red Bee Media, Nowtilus and Equativ in distribution and monetization of FAST channels to a wide range of platforms and devices, we ensure that our valuable content is flawlessly showcased on LG Channels,” said High View Managing Director Alexander Trauttmansdorff.

The six recently launched channels for Germany, Austria and Switzerland all have different characteristics and serve a variety of viewer preferences: a cooking channel, a channel for documentaries about foreign countries and cultures, a channel dedicated to the lives and daily routines of extraordinary people around the world, a travel channel, a channel for hunting and fishing programs in German-speaking countries, and another for nature documentaries.

“Having localized content on our LG Channels platform is crucial for success in regions like Germany, Austria and Switzerland, which have been spoiled with high-value content for decades. Therefore, High View’s Channel portfolio brings the perfect addition to our FAST Platform,” noted Chris Jo, Senior Vice President of Home Entertainment Platform business division at LG.

“As the pace of media convergence increases, content owners and aggregators need partners that can reliably deliver content to worldwide audiences,” said Steve Nylund, CEO, Red Bee Media.


Mediaproxy implements LogServer software for SMPTE 2110 in NEP Group’s Norwegian operation

Mediaproxy has expanded its relationship with NEP Group by supplying its LogServer software for SMPTE ST 2110 recording at its Norwegian operation.

NEP Norway first began using Mediaproxy software in 2017, when it chose LogServer for its compliance recording on the SDI channels distributed from its playout center. This replaced a custom-built system, and when the company needed a logging system for new ST 2110 services, the decision was taken to expand the installation, which was facilitated by easy software-based integration for uncompressed IP video sources.

Mediaproxy LogServer is a software-based IP logging and analysis system that supports SMPTE ST 2110, NMOS and SCTE ad-insertion. Delivering compliance monitoring and recording of program output to meet broadcast standards and regulations, it can integrate with cloud, virtual or on-premises workflows. The platform also supports video, audio and real-time data from sources including 4K, HDR, 10-bit, HEVC, TSoIP, SMPTE 2110, Zixi and SRT.

NEP Norway technical manager Rune Olsen sees the key benefits of LogServer as being its ease of use and stability, plus excellent support from Mediaproxy. “The software has worked without a hitch from the get-go and Mediaproxy is very fast in responding to any questions we may have,” he says. “The interface is also very easy and efficient to use. It just works and does the work it is supposed to do.”


Ericsson and O2 Telefónica develop 5G networks with 10-kilometer coverage through microwave connectivity

Ericsson and O2 Telefónica have recently proven a 5G wireless distribution network for rural and suburban coverage. The capability came to light in their latest joint project. This technology milestone has shown that the companies can deliver speeds of up to 10 Gbps over a distance of more than 10 km, demonstrating fiber-like microwave connectivity. It shows that microwave backhaul in traditional bands can support the continued build-out of high-performance 5G networks and enhanced mobile broadband services from urban to suburban and rural areas. This has been, in fact, one of the biggest challenges associated with the spread of 5G networks.

Traditionally, such areas have been difficult to serve, as high capacities require broad bandwidths that have usually been available only in millimeter wave frequency bands (E-band). The E-band is more affected by rain than the lower frequency bands, which makes it more difficult to deliver consistent service over long distances during adverse weather conditions. The ability to deliver such high data rates over distances of more than 10 km brings great advantages in providing reliable, low-latency broadband in hard-to-reach areas.

“Together with our partner Ericsson, we are pioneering new powerful microwave solutions using Carrier Aggregation and MIMO technology to backhaul 5G traffic over long distances in rural areas, when fiber is not an option. This type of technology enables us to deliver fiber-like connectivity via microwave and further accelerate our 5G deployment,” affirmed Aysenur Senyer, Director of Transport Networks at O2 Telefónica.

The key innovation is the ability to use MIMO with high modulation in the 112MHz channels (commercial MIMO solutions support up to 56 MHz channels), which were combined with Carrier Aggregation to enable similar capacities to E-band in the lower frequency bands.

The backhaul link utilized the 18 GHz frequency band, dual antennas in a MIMO configuration, and commercial MINI-LINK radios together with a pre-commercial baseband algorithm that allowed the use of MIMO in 2x 112 MHz channels. MIMO ensures the efficient use of limited spectrum resources. The same capacity without MIMO would demand a 448 MHz bandwidth in a cross-polar setup.
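The 448 MHz figure can be checked with a short calculation. The sketch below assumes that the dual-antenna MIMO configuration carries two spatial streams per channel, so each 112 MHz channel does the work of two; the function name and the spectral-efficiency estimate are illustrative and not taken from Ericsson.

```python
def equivalent_bandwidth_mhz(channels: int, channel_width_mhz: float,
                             mimo_streams: int) -> float:
    """Bandwidth a non-MIMO link would need to match a MIMO link, assuming
    each spatial stream carries as much as one full channel."""
    return channels * channel_width_mhz * mimo_streams


# 2 x 112 MHz channels, 2 spatial streams assumed for the dual-antenna setup.
needed = equivalent_bandwidth_mhz(channels=2, channel_width_mhz=112,
                                  mimo_streams=2)
print(needed)  # 448

# Rough implied efficiency for the quoted 10 Gbps, assuming a cross-polar
# setup uses both polarizations across the equivalent 448 MHz.
polarizations = 2
bits_per_second_per_hz = 10e9 / (needed * 1e6 * polarizations)
print(round(bits_per_second_per_hz, 1))
```

Under these assumptions the result is in line with the article's claim: two 112 MHz channels with MIMO stand in for four without it.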


Why can’t the cloud, as we know it today, cover live events that require quality and reliability?

We know that the cloud is the future of technology. We know that it is the present for the management of computing resources. We also know that broadcasters are using it, and that they will increasingly implement workflows in it. However, what is needed to produce and distribute content such as the Olympic Games or a Champions League final in the cloud?

Mario Reis is Director of Telecommunications and OTT at Olympic Broadcasting Services. His specialty is the transport of video signals, with special emphasis on distribution through technological channels such as satellite, the cloud or a blend of both through edge computing. We have had the opportunity to hear his opinions about the potential of the cloud for broadcasting top-notch sporting events.

CLOUD & LIVE report by Javier Tena

Interview with Mario Reis, Director of Telecommunications and OTT at OBS, by Daniel Gonzalez, Sales Director at Mediakind, during the Direct2Consumer Summit organized by MoMe, Microsoft, Mediakind and Overon. The event took place on June 7, 2022, in Madrid.

What is the role of OBS in generating the content of the Olympics?

The main role of Olympic Broadcasting Services is to connect athletes with their fans. Our goal is to bring the excitement and passion of an athlete to your home. We do two events every two years: the Summer and Winter Olympics; and the Summer and Winter Youth Olympics. We are in charge of the production of all these events for radio and television.

For the Tokyo Olympics we deployed more than a thousand cameras, more than four thousand microphones, and fifty-two mobile units. We used all these resources to cover forty-five venues, from which the content of forty-five different sports was broadcast. It takes us five years to prepare the infrastructure for a 20-day Olympics. In Tokyo, for the first time ever, we did UHD production and HD and UHD distribution.

On the other hand, we also help TV networks bring Tokyo’s content to their countries. For this we set up telecommunication networks using fiber and satellites, and distribution services around what we call the International Broadcasting Center, our production headquarters during the Games. At the past Tokyo Games, we assembled a fiber network with a capacity of 2.7 Tb per second, with three routes to each point of presence, for a total of seven; we leased five satellites, over which we distributed six satellite feeds; and from our OTT platform we provided global streaming distribution.

What I mean by this is that it’s not just about producing the content, but also about providing OBS customers with solutions to get this content to anywhere in the world.

What is OVP?

The acronym stands for Olympic Video Player and is basically our OTT platform for the Olympic Games. It is a platform, which means that it has a series of functionalities that we can integrate or remove depending on each customer we serve. For example, the platform has a live player to which any customer, such as RTVE, can add their customization. You can change the interface, change colors, but receive all the VOD content, live to go, ceremonies, etc.


However, I would like to emphasize that it is much more than this. It is a set of widgets and iframes, and it has an API layer to facilitate integration with the backends of different clients. Our team redesigned OVP for Tokyo, which is why I said it is much more than a mere platform; after the change of plans caused by the pandemic, we were able to launch our fully finished and functional platform in 2021. I think we did well, if you will forgive my lack of modesty; we used many resources to articulate the logic of each sport (42 in total) in order to provide an interesting user experience.

It was complex to articulate all this data and reach a limit so that the information received by end users made sense. We developed this with the aim, obviously, of fostering engagement and offering an experience to users beyond video. Data, for us, is critical to enriching this video content. One of the next developments will address that.

What is the audience of the Olympics on opening day, for example?

The audience of the opening week of the last Olympic Games in Tokyo was between 4.5 and 5 billion users. The pressure is interesting. But we always plan well. Of course, there is always something at the last minute. In Beijing, for example, the director of the Opening Ceremony decided two days before that he wanted a camera to show the Olympic rings that would be drawn in the sky by the fireworks. As you can imagine, getting that camera outside the Olympic stadium is not the easiest thing to do. It was important to show this content to the world and we succeeded thanks to 5G and network slicing. What I mean is that we must have solutions to these kinds of contingencies, because they always appear. However, although there is always room for improvisation, we have 95% of everything prepared for the opening day.

We know that you have used the cloud in the last Games, what motivated you to do it?

OBS started using the cloud in 2016, at the Games held in Rio de Janeiro. Ever since, we have been increasing the number of workflows we place in this infrastructure. We have created a hybrid cloud, that is, both private and public. On the public side, we work with the four suppliers. The raison d’être of this architecture has to do with what I was saying at the beginning. Our mission is to bring the content to the fans, and we must ask ourselves: What is the best solution for each service? Is the cloud the solution for all services? The answer is ‘no’. But, for example, to offer broadcasting through streaming, we can’t even conceive of not having public and private clouds, because we need to be close to the end users.

To use the cloud, you must take into account some of its features depending on the service, such as latency. For some services, the most important thing is availability and not so much latency. For services where the most important thing is the ability to react quickly, that is, the time I need to integrate a customer into our network, we do use cloud architectures. A rights-holding broadcaster can access the IBC (International Broadcasting Center) four months before the Games begin. If we develop a cloud infrastructure to access these services, we can integrate a broadcaster up to a year in advance. In this way, we gain some room for manoeuvre and only need to fine-tune the final settings once we are settled in the host city.

Actually, we don’t have overly complex workflows in the cloud. What is brought to the cloud is the task of managing the amount of content we generate. In this case, we have seen that the cloud is a fundamental platform to serve our customers.

Of your ecosystem, how much is in the cloud?

I think we should distinguish between live and archive. Obviously, the Olympic Games are important when live, as people want to watch them as they are taking place; this is therefore our main focus.

So, regarding archive, currently 70% of our asset management systems are in the cloud. The whole archive is there, and much of the post-production editing is already done in the cloud. The pandemic also pushed us towards these workflows and, in addition, the one-year delay of the Games worked in our favor: we were able to further develop our OTT. Honestly, if the Games had been held when they were supposed to, this service would not have been ready.


Live broadcasting, as I have mentioned, is a completely different issue. I think it’s a challenge for all companies involved in cloud development to achieve a high-quality, high-fidelity live stream in order to ensure that such an important event reaches the viewer. TV networks that pay millions of euros for broadcasting rights must have content guaranteed. At this stage, the cloud cannot guarantee this. In my opinion, there are several reasons.

The first is that we have forty-five UHD feeds to ingest in the cloud and, really, there is no cloud that supports something like that. The cloud is not yet designed to handle that many workflows in 2110 UHD and as many again in HD. And this is just the ingest, because then there is the problem of distribution. In addition, the last mile of live delivery to the end client is still a problem. OBS, for example, set up a network in Beijing with a bandwidth of 80 Gb/s at the IBC. But 40% of the world’s population does not have access to broadband Internet. In Europe and the United States we are a bit spoiled in this regard, and we may lose sight of reality. But OBS has links all over the world: Africa, Asia, Oceania, Central Europe, America; we have all the countries. And the Internet is not a resource that is shared by everyone. In fact, there are very few TV networks that can afford a 1 Gb/s connection. So, in the hypothetical case of the cloud broadcasting live content, we wouldn’t be able to reach every possible corner.
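To give a sense of scale for the ingest problem mentioned above, here is a rough back-of-the-envelope sketch. The frame rate (50 fps) and the pixel format (10-bit 4:2:2, i.e. 20 bits per pixel) are assumptions, since the interview does not state them, and the figure counts active video only, without audio, ancillary data or packet overhead.

```python
def uncompressed_gbps(width: int, height: int, fps: float,
                      bits_per_pixel: int) -> float:
    """Active-video bit rate of one uncompressed feed, in Gb/s."""
    return width * height * fps * bits_per_pixel / 1e9


# Assumed format: 3840x2160 at 50 fps, 10-bit 4:2:2 (20 bits per pixel).
per_feed = uncompressed_gbps(3840, 2160, 50, 20)
total = 45 * per_feed  # the forty-five UHD feeds cited in the interview

print(f"{per_feed:.2f} Gb/s per feed, {total:.1f} Gb/s for 45 feeds")
```

Under these assumptions a single uncompressed UHD feed already needs more than 8 Gb/s, so the forty-five feeds together run into the hundreds of gigabits per second before the HD versions are counted.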

What the market tells us is that there is a gap to be bridged. How? In my opinion we should solve it with edge cloud.

Distribution of this amount of audiovisual content requires compression and decompression. To generate this much-needed ecosystem, all the players that make up this market must develop high-availability live workflows for the edge, because if we are not located there, we will always have this problem.

The world of sport is starting to become an important hub for edge computing. We must do so to bridge that gap. In fact, some of our partners are already doing so. Last year we ran some tests with Azure, Microsoft’s cloud service, which were very interesting regarding the integration of edge computing with satellites because, as I said, 40% of the world does not have access to broadband Internet. That is why satellite distribution is very important to us. We had 4,000 satellite reception points for the last Olympic Games. Microsoft is integrating edge computing and satellite distribution with Orbital Ground Station. It is important that this development be encouraged, with markets still to be exploited.

How do you think rights holders can increase their revenue and audience in these last few years?

To increase audience, which means both number of users and minutes consumed, what you must do is look towards the platforms. You will find your customers there. You have to offer content on all platforms and adapt it to each customer and each platform; but most importantly, we have to offer end users tools that enable them to find the content they want to watch. This is a problem that we have in the Olympic Games, but it can be extrapolated to everything: the content exists; however, it is difficult for end users to find it. On which platform? How and where? When does it start? Is it live, is it VOD? So how do we solve this? With data. This is where we must focus our efforts.


TBS’s Experience with Cloud Sports Production

With the aim of providing a clear-cut answer to the big question of this extensive report —Is the cloud ready to produce tier one sports events live?— we have approached the end users. To the list, in which we already find OBS, we have added one of the groups dedicated to production with an unparalleled track record in Spain. Who better to shed light on this issue than one who is accustomed to juggling in order to deliver the best content in the best possible way?

Raúl Izquierdo is the director of TBS (Telefónica Broadcast Services). He is responsible for the deployment of technical equipment and human teams to cover the sports with the largest audiences, such as football or basketball. His experience with the cloud ranges from the most common uses, such as storage and asset management or the distribution of content in digital environments, to experimentation in the production layer. What is the actual, hands-on experience and, more importantly, how should it evolve to be functional?


What sports content does TBS specialize in?

We work for different clients. Sometimes the final product is directly for Movistar, other times it is for an organization. Specifically [at the time of this interview] we produce all the ACB (Spanish Professional Basketball) matches, the Euroleague and the Eurocup, as well as the World Padel Tour internationally and also the TURF horse racing circuit.

We also make customizations for Mediaset and DAZN (all the Spanish National Cup matches); for Real Madrid Televisión, for which we produce the RMTV channel together with Supersport, Mediaset Group’s production company; and for Barça TV, in addition to content. And, of course, we produce all Movistar sports programs.

Apart from all this, which is our usual work, there are also events like the Handball World Championship that we did last December, or the Davis Cup, which we have been doing for two years. We have also participated in several golf competitions or, recently, the match of the Spanish National Rugby Squad that was played in Oviedo. And I cannot forget that we have also made Formula One customizations on some occasions.


What technical means do you usually deploy?

Every sport is different. And budget constraints also play their part. We produce both with mobile units and thirty cameras and with just three or four cameras. We also work with remote production. For example, in the ACB basketball league, between four and five games are produced remotely. We do this with a technology that provides us with minimal latency. We also produce padel remotely, but with a completely different technology.

To what extent do you use the cloud in these events?

We currently work in the cloud in some areas, but at the production level we have only used it experimentally.

Storage is perhaps the most widespread use right now; we use it on a daily basis and in different projects.

There is also a distribution side, where we use some cloud technology to make certain contributions and distributions of content through SRT.

Concerning production, we have also been testing cloud systems in which the hardware is “eliminated” and virtualized in the cloud. In this area we have carried out different tests: with edge computing to eliminate latency, and in FTTH environments without edge computing. We have tested different solutions from different manufacturers, such as NewTek, Grass Valley or Amazon. All of these tests were very different from each other.

With what sports have these tests been carried out and how has the experience been?

We tried to extrapolate the different experiments to specific use cases such as customization or use at sports events. In these tests we wanted to check the latencies and identify the handicaps associated with cloud production.

From my point of view, a lot of progress has been made, but we have reached a dead end on the audio and data processing side of things. We have tested with NDI systems, with H.264 encoding, and so on. The truth is that the overall result has been quite good.

For example, the test we did with Grass Valley and Amazon Web Services (AWS), which was for a Real Madrid Champions League match, was carried out to evaluate different production options and formulas. It included a remote production portion that was run from Tres Cantos, and the result was very stable. But of course, you also have to rely on the assurance provided by brands such as Grass Valley or Amazon. The handicap we saw was precisely the one I mentioned before: audio integration, intercom management and so on. I think this is the weak point of all these systems in general.

Why have you encountered these problems? Are the reasons related to what happens with integration of these kinds of devices and functionalities in IP environments?

In the audio area in particular, equipment is very used to operating in network environments. But of course, we are talking about work environments in which the required latencies have to be absolutely minimal. The moment you get in the cloud, you don’t control those latencies that much anymore. Building systems with Dante, which works with latencies of up to five milliseconds in IP environments, is somewhat more complicated in the cloud, because latencies normally tend to increase.

This doesn’t mean you can’t produce. You can, and in fact you may be embedding audio, but then you lose all the potential you get when you’re working on site with a mobile unit, with a control room, or even remotely with certain systems, for example over point-to-point fiber. This is the handicap. Depending on the kind of production, it becomes a problem or not.

There is another handicap: data management. Control equipment for cameras, tallies and certain other devices doesn’t integrate to the same extent and, apart from latencies, some devices require you to set up VPNs and IP communication systems in a less controlled environment. It also depends on how you are working. If your system is in an FTTH environment, which may not be as controlled, network data management will be a challenge.

The work you did with Amazon and Grass Valley was a customization, wasn’t it?

We did a parallel program, about four hours in total, with the customizations that are usually done at this type of event. More cameras could have been brought in (there were five in total, in four different positions), and we added the international pool signal to enrich the content. The final result was good; it is true that things have to be fine-tuned, but program quality was quite good.

What systems were virtualized?

The audio and video mixers, the multiviewer and the routing matrix were virtualized, although we had to reinforce this with local audio management, and we also integrated an intercom. It is true that a video or audio mixer in the cloud is not the same as one in hardware. Each has its own peculiarities. In the audio area, it is more than enough for a customization, but a physical audio mixer does give you more capabilities and functionality.

What image format did you work with in that customization?

We made it in HD. Resolution was 1080. Whether progressive or interlaced I can’t recall, because we had certain problems with the integration of equipment and we had to change the resolution we usually work with.

Would you like to comment on any extra details about the projects in which you take part?

We have also carried out tests with edge computing technology from Telefónica as part of cloud production. As we said before, one of the great challenges remains latency. I think that the moment edge computing becomes more widespread, latency will decrease a lot. In addition, 5G can also reach these latency levels. We will be broadcasting with backpacks practically without latency and, simultaneously, producing in the cloud, and in this way we would greatly lighten the production systems.

Is the cloud ready to produce an event the size of the Olympics?

Not yet. It is still evolving and needs to mature a lot. First it has to settle in, gain robustness and offer more integration. Then it has to gather experience in sports that are less demanding than top-tier competitions such as a Champions League match or the Olympic Games.

The customization I have mentioned above worked well, with its flaws and so on, but the experience was a good one. But if I had to prepare a live stream of something important, I would not go through the trouble of planning it in the cloud.

But of course, this has an added problem: price. These types of systems require a huge investment, and to justify it you need recurrence. For this to become a usual system, it first has to go through smaller productions, with a decrease in price, of course. If this does not happen, the evolution of the cloud will be slower.

If you have a lot of recurrence, you might get the numbers right. However, before broadcasting a Champions League game you need to have done many other productions that test the resilience of the system in the cloud.

In addition, there is also a part that will always be hardware. We’ll always need an interface we can control. Therefore, beyond investing in the cloud system, there is also a cost associated with these devices that will always be there.

Apart from live production, how does TBS currently use the cloud with regard to sports?

For storage, it is being used in sports newsrooms, to keep material in deep or hot storage. We’re working with AWS.

On the distribution side, we are working on a remote production system with the Net Insight Nimbra system. We distribute in the cloud to certain customers who need to stream, either for virtualization or for ingest.

We often hear that the cloud is an unstoppable bandwagon and we all have to get on it. What are the advantages of jumping on this bandwagon?

I understand that in the future (because this is not the case at present) the advantages should be primarily economic, in investment as well as in production and maintenance. Companies should have advantages when investing, thanks to the promises of scalability models.

In the production area, we must add the advantages of remote workflows, which make it easier and cheaper to mobilize technical equipment and human teams.

Equipment maintenance and useful life must also offer a strong argument for decision-making when assessing investments. All this is in theory; it remains to be seen whether it delivers in reality.

Regarding security, it is interesting to know what the internal security and cybersecurity protocols in cloud environments are and how end users feel about their utilization. What is your impression about this?

In my opinion, everything having to do with security goes against the day-to-day of the production layer. In the end, however, it will somehow have to be standardized. Whenever you access IP environments you need to be more secure, because there are more weaknesses involved. The easy thing would be not to have it and to work in isolation, but the truth is that no system can be completely isolated.

As I was saying, with regard to responsiveness, live production works at a very fast pace. You can’t afford to leave a piece of content unbroadcast. True, authentications are necessary for security reasons, but they are a handicap when it comes to operating. These measures, although necessary, go against agility.

With regard to integrating security into systems, if manufacturers offer security as part of a complete solution, it may all become simpler. Let’s say that security and cybersecurity are also embedded in that package of services we discussed. If, on the other hand, you have to integrate equipment and systems as a closed service within IP environments in which other services are also running, all this will be more difficult. It is already hard to implement in the IT systems into which broadcast has shifted, where we have to take into account security in ports, firewalls, etc.

Depending on the volume of the system, what happens is that you actually have to work with different layers. This is another issue to bear in mind now and for the future.

What is the future of cloud solutions?

In the production part, evolution will be tied to the final use of these systems. We are still at an embryonic phase in which we must make them easier to use.

First, the virtualized core must be made more robust. Secondly, we must make the costs bearable. Anyone committing to use these systems on a regular basis, on something other than a pilot, must have assurances that they will work. Even if they do not have all the solutions, providers of these cloud services must ensure that the missing ones can be integrated. That possibility must exist for cloud production to prevail and, in addition, providers must encourage such integration to be developed in a hybrid way.

Moreover, the natural way of evolving must be gradual. You have to start with small events, progress to medium-sized ones and, finally, to the big ones. That is why the cost must be under control.

As use increases, system guarantees will be gradually fine-tuned. This is the way it should evolve. Meanwhile, in this process, integration of third-party solutions and systems within virtualized environments should be encouraged.

This is going to involve even more experimentation, right?

Of course, there can be situations in which these types of experiments are developed within a much larger production, one with more idiosyncrasies in the final broadcast. I can think of Formula 1, for example. Imagine they have a streaming platform for the staunchest fans. There you can make a parallel production associated with the main one but much less demanding. There are many implications at the sporting level that we are not contemplating, such as rights, but you can make a production in the cloud that is still a production system, in which a series of signals generated at the circuit are mixed in the cloud. You are already in a top-tier sport, and you are already developing the two production models. The growth of the cloud production model can be tied to broadcast production models. Such examples would provide good opportunities for the evolution of cloud production.

In the end it will tend to hybridization. I am constantly hearing that thanks to the cloud there will be no more mobile units. Yeah... I’d like to see that for myself. It will end up being another production model that will be better adapted to a specific type of customer and one that will be able to generate more production through different models. It’s not like before, when we had linear channels and little else. Now there’s room for everything.

In this special issue on the ability of the cloud to produce live sports, we could not miss the views of one of today’s major providers of cloud services: Amazon Web Services (AWS). From the answers given to us by leading representatives of the end users in this sector, such as Mario Reis of Olympic Broadcasting Services or Raúl Izquierdo of Telefónica Broadcast Services, we have concluded that the cloud is not yet mature enough to carry out the production of large live events. Thanks to a conversation with Manuel González Sevilla, Systems Architect - Broadcast at AWS, and David Sabine, Broadcasting Senior Consultant at AWS, we now know that the purpose of developing these services is precisely this, among many others that are equally important.

What cloud services does Amazon Web Services (AWS) offer to the mass media and entertainment industry?

AWS offers its customers more than 200 services, including services specialized for the media and entertainment sector such as the full AWS Elemental Media Services family and Amazon Nimble Studio. In addition, AWS provides eleven turnkey, ready-to-integrate solutions. These solutions help customers build or improve their own media workflows.

AWS has more than 400 industry partners, including technology manufacturers and consultants such as Adobe, Autodesk, Deluxe, Epic Games, Evertz, Grass Valley, Imagine Communications, Slalom, Sony Media Cloud Services, Trackit, Teradici, and Wizeline. Together with our partners, AWS helps transform the industry in five key areas: content production, media supply chain and archiving, broadcasting, direct-to-consumer (D2C) and streaming, and data and analytics.

Last but equally important, AWS brings fifteen years of experience in supporting the transformation of clients such as Comcast, Discovery Inc., Disney, Formula 1, FOX, HBO Max, Hulu, Method Studios, MGM, NFL, Netflix, Nikkei, Peacock, Sky, TF1, Untold Studios, ViacomCBS and Weta Digital.

The view of broadcasters is that the cloud offers great advantages in cataloging and storing media. What are the benefits provided by these services according to AWS and what are their features?

Millions of customers use AWS services to transform their businesses, increase agility, reduce costs and accelerate innovation. In the media and entertainment industry, services such as Amazon Simple Storage Service (S3) are used on a daily basis to support our customers’ workflows. These include archiving and content recovery, with eight different storage classes based on how the data is used. For example, S3 Standard is suitable for content that is used daily, while S3 Glacier Deep Archive is more suitable for content that is rarely used.
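As an illustration of choosing a storage class by how often content is used, the following is a minimal sketch, not an AWS recommendation. The function name and the 30-day and 180-day thresholds are hypothetical; only the class identifiers (`STANDARD`, `STANDARD_IA`, `DEEP_ARCHIVE`) are names used by S3.

```python
from datetime import date

# Hypothetical thresholds in days since last access (assumptions for
# illustration only; real policies depend on cost and retrieval needs).
HOT_DAYS = 30
WARM_DAYS = 180


def pick_storage_class(last_access: date, today: date) -> str:
    """Return an S3 storage class identifier for a media asset."""
    idle_days = (today - last_access).days
    if idle_days <= HOT_DAYS:
        return "STANDARD"      # content still in daily or frequent use
    if idle_days <= WARM_DAYS:
        return "STANDARD_IA"   # infrequently accessed content
    return "DEEP_ARCHIVE"      # rarely used archive material
```

A newsroom clip last used a week ago would stay in `STANDARD`, while a match recorded several seasons ago would fall into `DEEP_ARCHIVE` under these assumed thresholds.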

In cataloging workflows, we leverage intelligent media solutions built on AWS’s analytics and machine learning capabilities. For example, services such as Amazon Rekognition can be used to automate the analysis of images and videos and can be combined with other machine learning services. Services such as Amazon Transcribe or Amazon SageMaker can also be used for these automatic content recognition needs. Our customers frequently use AWS AI/ML services in this way to create cataloging workflows.

In addition to the aforementioned services, the sports content production chain also includes other stages, such as editing. This task is particularly suitable for a permanent handover to the cloud. What options does AWS offer at this stage and why does this result in an improved process?

Editing was, in fact, one of the workloads that saw early adoption by AWS customers. A full range of storage services suitable for editing has been developed in-house: from Amazon Elastic Block Store (EBS) for high-performance block storage, to shared file storage such as Amazon Elastic File System or Amazon FSx. These storage services provide all the performance and flexibility our customers need for editing workflows. The range of compute and graphics processing options available from AWS, including the latest NVIDIA GPU-based Amazon G4 and G5 instances, supports effects and rendering workloads of all scales.

The best way to illustrate how AWS improves the editing process is with an example: Netflix is equipping its artists with remote workstations and achieving millisecond latency when using AWS Local Zones.

With regards to the distribution of sports content, in a world that is getting closer to the Internet and IPTV/OTT models, what role does AWS play?

AWS has a global presence, with 27 regions composed of 87 availability zones in operation and over 410 points of presence with Edge Locations and Regional Edge Caches. By deploying applications and workloads in the cloud, our customers have the flexibility to select the technology infrastructure that is closest to their primary users. Specifically for OTT workflows, AWS is the world’s leading D2C and streaming services platform, with more purpose-built capabilities than any other cloud. Amazon CloudFront, AWS’s Content Delivery Network (CDN), has been optimized for the workloads associated with the broadcast industry.

Our project with Peacock, a US-based streaming service that belongs to the Comcast Group, is a good example that illustrates how to deploy an OTT platform on AWS.

We must distinguish between two modes of content production: live and delayed. Specifically, sports take place live. So, in this interview we will focus on live production. In your opinion, is the cloud ready for live production?

Our customers have been successfully using live remote production solutions for many years. The AWS proposal for remote live production extends these proven workflows by providing the benefits of virtualized production equipment running in Amazon Virtual Private Cloud (VPC). Our customers are leveraging these virtualized live production solutions to replicate and expand their existing workflows, which are now deployed on AWS. Virtualized live production solutions have been developed by AWS’s partners, including the most important and trusted names in broadcasting. Our partners have optimized their solutions by leveraging the services offered by AWS and deploying cloud-native solutions. In response to customer demand, AWS hosts frequent live remote production events. That’s why we can confidently say that the answer is ‘yes’: AWS is ready for live remote production workflows.

We have spoken with a number of broadcasters, and they all agree that cloud services related to storage and publishing meet their expectations. However, services concerning early stages (production, contribution, and distribution) are more challenging. How does AWS address these challenges for its customers?

We talk every day with our customers about their needs around contribution and production workloads, supporting them with proof of concept (PoC) to test the viability of these workloads. AWS does this in collaboration with our outreach partners and independent software vendors (ISVs).

One of the recurring themes in these discussions is latency. In a recent proof of concept, we obtained latencies of a few frames in a fully redundant contribution path (SMPTE 2022-7), using the JPEG XS codec. The test used an AWS Direct Connect service that was provided by one of our partners.
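To put “a few frames” in context, latency expressed in frames can be converted to milliseconds once a frame rate is fixed. The sketch below is illustrative only: the helper name is hypothetical, and the 50 fps frame rate and three-frame figure in the usage note are assumptions, not values given in the interview.

```python
def frames_to_ms(frames: float, fps: float) -> float:
    """Convert a latency measured in video frames to milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    # One frame lasts 1000 / fps milliseconds.
    return frames * 1000.0 / fps
```

Under these assumptions, `frames_to_ms(3, 50)` gives 60 milliseconds, which is one way to compare a contribution path measured in frames with the millisecond figures quoted elsewhere for audio and uncompressed video transport.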

We encourage TV networks to reach out to their AWS representatives and start conversations about their key metrics for remote live production; we have a large number of media solution architects and other experts within AWS organizations, including a dedicated media and entertainment professional services team.

Broadcasting a Champions League Final or the Olympic Games requires a certain infrastructure. In addition, dedicated networks (either SDI or fiber) are needed to move huge volumes of data. Finally, the infrastructure must be secure and 100% reliable. How should the cloud evolve to meet these needs?

AWS infrastructure is built on security and resilience. It currently supports millions of active customers around the world. As data volumes needed to make a production increase, AWS continues to work with our partners in order to ensure that customers have optimal contribution and distribution solutions. AWS regularly publishes content that is relevant to this sector on the AWS Media Blog. As an example, a quick blog search for the term JPEG XS will return articles describing the work done by AWS to demonstrate how the use of JPEG XS provides low-latency, lossless contribution.

For the processing of multimedia workloads within AWS, let’s use the example of uncompressed video over IP. AWS Cloud Digital Interface (AWS CDI) is a network technology that allows customers to transfer high-quality uncompressed video within the AWS cloud, with high reliability and a network latency of as little as 8 milliseconds. AWS CDI allows you to create similar workloads on AWS cloud computing services and instances by providing a reliable, high-performance, and interoperable way to transport uncompressed video.

Our interviewees have said that, for this evolution to occur, there must be experimentation and collaboration between industries and companies. What R&D programs does AWS take part in for the media and entertainment industry?

AWS is present in many industry forums and associations such as SMPTE, VSF or IABM and actively promotes industry standards through channels such as the AWS Media Blog.

AWS actively supports interoperability events. One example is the AWS CDI interoperability workshop, the second in the series, which promotes the highest-quality live production with the participation of thirteen technology providers.

Another irrefutable fact is that there are different sports categories depending on the audience that the sport brings. Important broadcasters in the European scene have assured that the ideal would be to test and evolve this technology in ‘less important’ sports. Is AWS developing or planning to develop this possibility?

On-site, our customers choose the technology providers that best suit their production requirements. Similarly, our customers deploy their production environments in AWS differently, depending on the type of event. An event that requires a single vendor may need less integration, deployment, and configuration time than an event with multiple vendors. Events with fewer cameras, fewer replays and graphics are easier to implement in AWS, as in traditional productions.

Sports federations, production companies and broadcasters, large and small, have reached out to AWS to help them implement cloud-based live production. The productions in which AWS is asked to help range from small events of 4 to 8 cameras to large coverage using more than 16 cameras, with multiple replay operators, data-driven graphics, complex audio mixes, intercom and more. At AWS, we are working with our in-house media and entertainment teams and partners to ensure we remain ready to help customers deploy all levels of live production.

The strategy adopted by AWS is to continue working with the different technology providers and consulting partners to have the largest portfolio of solutions available in order to meet the needs of customers, regardless of the type of event they want to produce.

From a cost point of view, when is it more efficient to rely on the cloud and when is it necessary to rely on physical technology? Which option will prevail in the future?

As of April 7, 2022, AWS has lowered its prices 115 times since its launch in 2006. The term cloud computing refers to the on-demand delivery of computing resources at a pay-per-use price. Our customers only pay for what they use and can scale up or down as needed. Performance can be optimized, as needed, and automation can be applied to processes. At the same time, monitoring and observability of metrics across all infrastructures can be ensured. By using AWS managed services, customers reduce the time spent in managing infrastructure and can focus on the results of their business, thus enabling rapid innovation.

And it’s not just about cost: many of our customers are looking to reduce their carbon footprint when they produce TV, and live production is a workflow where this can be easily achieved by migrating to the cloud. Fewer items of equipment and fewer staff at the venue, infrastructure that works more efficiently in the AWS cloud than on-premises, and deployment of only the exact infrastructure needed for the scale of the event all help to be more efficient in environmental terms.

What are the needs concerning cloud security?

AWS is designed to be the most flexible and secure cloud computing environment available today. Our core infrastructure is built to meet the security requirements of the military, global banks, and other highly sensitive organizations. This is supported by a deep suite of cloud security tools, with 230 security, compliance and governance services and features. AWS supports 90 security standards and compliance certifications, and the 117 AWS services that store customer data offer the ability to encrypt that data.

Security is a shared responsibility between AWS and our customers. The shared responsibility model describes it as security of the cloud and security in the cloud: AWS is responsible for security of the cloud, and the customer is responsible for security in the cloud.

Take the example of an AWS-specific service such as AWS MediaConnect: this service supports enhanced security in many different ways, including protection through in-transit encryption and integration with identity and access management (IAM) for administration purposes. It also integrates with Amazon CloudWatch for observability and with AWS CloudTrail to record actions, along with other aspects described in the service documentation.

QATAR 2022

FIFA World Cup Qatar 2022

Gravity Media

Showing every goal to the world

Khalifa International Stadium

A World Cup is, probably, one of the most important events in today’s society. It mobilizes millions of fans and billions of euros in investments and profits. Ensuring that every play is seen in high quality in every corner of the planet is no easy task. Nor, in fact, is organizing a World Cup in a place where it reaches 50 degrees Celsius. Our editors had a chat with Ed Tischler and his team at Gravity Media, who are responsible for organizing the broadcast infrastructure and setting up the Qatari stadiums, to ensure that every fan, no matter how far away they are from Qatar, can see their team win and feel the excitement of a World Cup. Here are all the details of Gravity Media’s work leading up to the World Cup in Qatar.

What work will Gravity Media do for the World Cup in Qatar?

The Gravity Media team from across the UK and Qatar will be supporting both the LOC and unilateral broadcasters with a variety of broadcast equipment and crewing services across multiple locations in Doha, Qatar.

What are the challenges of covering a World Cup?

The World Cup tournament has a very compressed schedule at the start of the event, during the Group Stages, which extends the working day and production plans for all. This reduces the rigging period available to our Gravity Media team in each stadium.

There are very specific challenges to working in this region and that is one of the reasons our clients have come to us. Our Qatar Business has been established for over 15 years which means we are best placed to anticipate any challenges of meeting local permits and regulations.

Our years of experience at major football events mean we have a comprehensive understanding of how best these workflows integrate together and allow us to leverage our technical expertise to drive the best value for our clients.

We have read that you have worked on the development of different stadiums in Doha. What did you develop them for?

The Gravity Media team has been busy equipping the host stadia with permanent broadcast and communications infrastructure fit for such a large-scale high-profile event and for many years to come.

Gravity Media was commissioned to support the redevelopment of the Khalifa International Stadium in Doha, as well as Al Bayt Stadium, Ahmed Bin Ali Stadium and Al-Thumama Stadium. We designed, installed, and commissioned the permanent broadcast infrastructure systems to enable all camera wall-box positions.

Ed Tischler, Managing Director at Gravity Media

Our experience in sports broadcast and stadium integration meant we were able to pinpoint the best angles for the best coverage in each venue.

In the development of this infrastructure, what challenges have you encountered and how have you overcome them?

Qatar’s climate is extreme, with temperatures rising above 50 degrees Celsius during the summer months. This means the design had to meet the demanding needs of our client and our choice of installation material had to be thoroughly researched.

What technological innovations have you introduced in the stadiums?

Engaging fans inside the stadium is a key part of creating anticipation and excitement around match days, and Gravity Media was at the heart of the action, installing the Infotainment Systems in all the World Cup stadiums in Qatar.

Whether that is following the announcers and entertainers or providing the replays and clip playouts to the big screens, we innovated the system and made the fan experience much better.

In addition, Gravity Media has taken delivery of a newly commissioned DSNG mobile unit, Suhail.

Suhail can take up to eight fixed cameras, including a combination of line and RF channels. It comfortably seats a crew of seven and was successfully deployed for the World Cup Draw in April, supporting a high-profile rights holder.

In terms of signal production, what infrastructure did you deploy to cover the championship? Which manufacturers did you rely on?

Gravity Media worked closely with Belden, Draka, Simply Live, and Riedel to install the broadcast cable infrastructure in half the World Cup stadiums, and the infotainment system in all of the World Cup stadiums.

How are you going to transmit broadcast signals in the stadium? Are you going to use IP structures, or are you going to rely on remote production resources? What are the particularities of this ecosystem?

Continuous coverage of this World Cup is obviously paramount to our clients, and every attention to detail has been paid to ensure that not a moment of action is missed. A mixture of IP, satellite and mobile data will be used to achieve maximum resilience. This gives all of our outgoing circuits the very best in redundancy and disaster recovery.

52 SPORTS

The Rugby League World Cup is held every four years and its organization and infrastructure is created ad hoc for each occasion. On this special occasion, delayed by the effects of the pandemic, the organization has directly taken over the direction of the decisions for the broadcasting of the content. Its strategy is based on the integration of categories and a digital approach that, beyond relying on broadcasters such as BBC or FOX, has focused on the creation of digital broadcast channels for social networks and its own streaming service.

In this interview you will also see that one of the organization’s main lines of action is the integration of categories. According to Russell Scott, Broadcast Lead of the RLWC21, “women, men and wheelchair athletes will receive the same coverage during the competition”. The organization will rely on the production services of BBC and Whisper to cover the 61 matches that make up the competition in the 21 venues where it will be held.

RUGBY LEAGUE WORLD CUP

What kind of event is the Rugby League World Cup?

The Rugby League World Cup happens from the 15th of October through to the 19th of November this year. We are running three tournaments at the same time. We have the Men’s World Cup, the Women’s World Cup, and a Wheelchair World Cup. It’s Men’s Rugby League, Women’s Rugby League, and Wheelchair Rugby League. I think it is the most inclusive event I’ve ever worked on.

We start with the men’s tournament, and then the women’s and wheelchair start and all three run simultaneously. We end up with three World Cup finals in Manchester within 24 hours of each other on November 18 and 19. Never before have three World Cups been held in the same place at the same time.

Turning to broadcast, for the last two cycles of the World Cup, 2013 and 2017, the International Federation licensed the World Cup to IMG. IMG is a fantastic organization, great at commercial, production, rights sales, distribution, etc. I don’t think there are many things they don’t do. We have taken the decision to do things slightly differently this time round. We have now transferred that responsibility to the organizing committee for 2021.

And you are in charge this time, right?

Yes. We are now responsible for production, distribution and rights sales. I think a lot of the logic behind that is to be able to take some control and, as a sport, deliver value in the longer term. There are two clear examples of this. One is that our coverage of men’s, women’s and wheelchair Rugby League requires an investment to grow awareness of Women’s and Wheelchair Rugby League.

They’re both really strong sports, and they’ve both got strong participation. Nevertheless, they still do not command large fees. It is up to us to invest and make sure that we show those sports in a way that increases the audience.

How are you doing this?

Much of this comes down to our coverage. For example, there will be no difference in the coverage of the three categories. The men’s, women’s and wheelchair competitions will receive the same treatment in our television production. However, to be perfectly honest, we must accept that not all championship matches will have the same coverage. Obviously, there are more cameras at the final than there are at some of the earlier rounds.

If you accept that, everything else we are doing is designed to create equality in all three events.

As a tournament, for the first time, we are paying participation fees to all athletes. It is not a technical aspect of broadcasting, but it starts with a level playing field. The objective is to start with professionalization of the sport and to give the athletes the opportunity to perform at the very highest level. This is a big difference from what happened in the last cycle. On the previous occasion when the wheelchair championship was held, the athletes had to pay for their own accommodation and transportation. This time, the tournament is taking care of all expenses.

As I was saying, all three competitions will be as close to the same as possible. They will all have the same graphics, the same titles, the same presenting talent, etc.

Just to go into details, as part of the same storytelling of the match, we’ve put cameras in the locker room, so that all of us in the audience share that real excitement in the last five minutes before the team takes the field. Also, we will find out what happens at halftime, when they come in for their team talks and prepare for the second half. We’ll do that in men’s, women’s and wheelchair. It is the same coverage plan, the same narrative and the same broadcast for the audience in all three categories.

What tools will you use to help you tell the story?

We are working with Stats Perform, formerly known as Opta. Stats Perform will do all our live athlete data tracking, data capture, and editorialization of that data. We have the same specification for that on men’s, women’s and, for the first time ever, the wheelchair category.

When the opening match of the wheelchair category between Spain and Ireland arrives, we’ll deliver athlete performance data out of that match for the first time ever. That doesn’t tell the story of disability, but tells the story of athleticism. The viewer can see exactly the same markers that they see for the main game. So, for example, tackles completed, distance traveled and all those stats will be available for wheelchair as well.

My aspiration is that when we get to the third and fourth week of the tournament, when we have a lot of games, we will be able to do a good job. At the end of a few days, we will have had multiple men’s, women’s and wheelchair games, and we will be able to give a summary of the best performances that is completely neutral across the individual tournaments. Whether that person has participated in a wheelchair, or is female or male, it doesn’t matter. Our top five performances of the day will be a mix of all the tournaments. I think it’s a very powerful way to tell not only the story of inclusion, but also the story of athleticism.

What is the biggest technical challenge of the event?

When I talk about taking back control of the broadcast, it means that we can make some pretty challenging decisions for the good of the sport, and we deal with the consequences of those decisions. One of those decisions is that throughout the tournament we have 61 different matches. We play them in 21 different venues. That’s a lot in five and a half weeks. From a broadcast perspective, it would be lovely to set up in one place and have everyone come to us.

We’re not playing a weekend tournament. We’ve got matches the whole week. We are going to get a really dynamic pace to the tournament, where people committed to the tournament will be able to watch games on a daily basis, rather than with breaks in play.

The combination of that intensity of schedule and 21 venues creates a big logistical challenge for us. We need to get into the venue, roll, and exit to the next location quickly. We start at St James’ Park in Newcastle and finish at Old Trafford in Manchester. Both are Premier League grounds. In between those two, we visit a lot of different places. Some of them are more traditional Super League venues. Some of them are indoor venues for the wheelchair tournament. Therefore, a wide range of different installations is involved. Our wheelchair final is at Manchester Central, an iconic venue in the center of Manchester.

Water sports competitions are regularly held there. Therefore, we will be staging a rugby final at a venue that is empty.

What infrastructure have you deployed in these venues you mentioned?

Rugby League World Cup takes direct responsibility for the broadcast, but we have contracted BBC for 16 of the 61 matches. That’s part of a distribution agreement in the British domestic market. BBC will deliver coverage for our opening match, for our Old Trafford finals, and for a number of the bigger games across the tournament, mostly with home nations competing. Their production is a more traditional on-site outside broadcast. There are some areas of innovation around the use of data, but it really corresponds to what we would all recognize as outside broadcast.

For the other 45 matches, we’ve engaged Whisper to act as host broadcaster. Whisper, which covers many of the women’s and wheelchair games, brings a wealth of experience from the Paralympic Games, where it has actually worked. In addition, Whisper is responsible for this summer’s Women’s European Championship. As mentioned, we look for equality in our coverage, and they have an understanding of women’s sports and disability storytelling. It really allows them to elevate the quality of the content.

Whisper’s 45 matches will be a remote production, not an on-site one. It’s a model that, although I think it is relatively new, is well proven. We are using a production hub in Ealing (a London neighbourhood) for those Whisper matches. From a technical point of view, I don’t think there is anything that is particularly difficult. The only small difference we will have between Whisper and BBC coverage is that, for the Whisper matches, the video refereeing protocol runs remotely from the Ealing hub, while for BBC matches the video referee will be on site with the outside broadcast infrastructure.

To summarize, Whisper will be responsible for all of the wheelchair matches, but the men’s and women’s are split between BBC and Whisper.

What other solutions will you apply to improve the storytelling?

As part of the work to be performed by Whisper, they will produce a match clipping service and highlights output. A series of highlights of varying lengths will be made available within 45 minutes of the end of the match. Access and distribution will be via Tellyo. From our perspective, we have had to make a big investment to provide that service. It was necessary though, because there is an enormous demand from content creators across multiple platforms.

We have invested in the production of the match assets so that they are produced once, centrally. The participating federations know that they can count on all this material only 45 minutes after the match their teams have played. With this investment, these federations and the rights holders, too, can concentrate all their efforts on storytelling and not so much on producing the media to tell the story they want to tell. The centralized delivery of this material streamlines the process of obtaining content.

What other distribution windows are you considering for this edition of the Rugby League World Cup championship?

We are working with broadcasters in core markets. A key part of that is access to the widest audience possible. There are also, obviously, financial imperatives: by taking responsibility for production, we have costs that we must cover. The core markets are the domestic market in the UK, Australia, and New Zealand. We’ve got exceptionally strong broadcast relationships in these countries.

In the British market we are working with BBC as the rights holder. In Australia, we’re in partnership with Fox, and in New Zealand, we’re in partnership with Spark Sport. In all three of those markets, these organizations will distribute all 61 matches live. There’s no prioritization of different tournaments or different matches. If you are in those territories, you can see every single game and every minute of those games live.

Are they going to broadcast them in a linear way?

Yes. On BBC there’s a split between the network channels, BBC 1 and BBC 2, and the digital platform iPlayer. In Australia, there’s a split between Fox Sports and Kayo, their streaming platform. And in the case of New Zealand, Spark Sport is a streaming platform itself, so everything goes across there.

On the other hand, we have a significant number of broadcast partners in other territories, but at a slightly lower audience level for Rugby League.

Then on top of that, we have our own direct-to-consumer proposition. We took the decision to work with the RFL, the Rugby Football League in the UK. They have a platform built by InCrowd with streaming delivered by StreamAMG. We deliver the tournament app with them.

This app has all of our content, and all of our information about the tournament. The RFL app is called Our League. In effect, we’re taking that over for the period of the tournament. That gives us a number of really very specific benefits.

The first is that it’s a proven platform. We know it scales, we know it’s got good resilience, and we know the payment platform is stable and reliable. It also means we inherit an existing audience of Rugby League fans from the RFL, so we don’t start the tournament with no users.

We start the tournament with a significant existing audience. When the tournament is over, the application will revert back to the RFL application. Any new audience we get will be inherited by the RFL. We are using that app and that platform to stream all our matches live to every territory where we don’t have an exclusive broadcast agreement. And we are using a pay-per-view model.

We have created this model to go further. In countries like Australia, New Zealand or the UK there is already a strong fan base. In other countries, there is a fan base that generates some interest because there are teams competing in our championship, but not enough to get broadcasters involved in broadcasting this content. There’s a couple of areas where we’re talking to broadcasters about how we might work together to give more exposure to the tournament in competing nation territories.

What are your future plans?