Has AI Surpassed Humans in Creative Idea Generation? A Meta-Analysis

Has AI Surpassed Human Creativity?

Alwin de Rooij

Centre of Applied Research for Art, Design and Technology, Avans University of Applied Sciences, and the Department of Communication and Cognition, Tilburg University, alwinderooij@tilburguniversity.edu

Michael Mose Biskjaer

Center for Digital Creativity, Aarhus University, mmb@cc.au.dk

Has AI surpassed humans in creative idea generation? This question has gained traction as generative AI (GenAI) becomes widely used for supporting creativity. To evaluate this, we examined the first wave of experimental studies comparing human and GenAI-prompted creative idea generation by conducting a meta-analysis of 17 studies comprising 115 effect sizes. The results showed a small but nonsignificant pooled effect favoring GenAI. Initial analyses suggested greater originality in GenAI ideas, but sensitivity analysis showed this was driven by a few studies with very large effects. No significant differences were found between human ideas and those generated by prompting specific GenAI models (GPT-3, GPT-3.5, GPT-4). Funnel plots and asymmetry tests indicated no evidence of publication bias, supporting the findings’ validity. This meta-analysis finds no empirical support for the claim that GenAI has surpassed humans in creative idea generation. We emphasize sociotechnical and sociocultural approaches as crucial for shaping human and artificial creativity.

CCS CONCEPTS • Human-centered computing ~ Human computer interaction (HCI) ~ HCI theory, concepts and models • Computing methodologies ~ Artificial intelligence • General and reference ~ Document types ~ Surveys and overviews

Additional Keywords and Phrases: Generative Artificial Intelligence, Creativity, Idea Generation, Meta-analysis


1 INTRODUCTION

On November 30, 2022, the American company OpenAI released the chatbot ‘ChatGPT.’ This followed several years of developing the underlying large language model (LLM), first introduced, indirectly, when OpenAI published its initial research in June 2016. Following its introduction, ChatGPT, powered by GPT-3.5, reached one million users in just five days. Two months later, the chatbot was reported to have 100 million active users, an uptake that dwarfs previous digital innovations such as TikTok and Instagram, which reached the same milestone in about nine months and 2.5 years, respectively [31]. Generative artificial intelligence (hereafter ‘GenAI’), including transformer models (text), diffusion models (image), and recurrent neural networks (sound), is now widely used for creative purposes, from everyday problem solving to integration into the workflows of creative professionals. Many GenAI implementations enable users to design input queries and instructions in natural language, so-called prompting, to guide and shape GenAI output. The rapid adoption of such GenAI coincided with the proposition, bolstered by high-profile studies such as [32, 36], that GenAI has surpassed (most) humans in creative idea generation. The contention here is that when ideas generated by humans are compared to those generated by prompting GenAI, the GenAI ideas are more likely to be judged as creative.

This proposition is intriguing and warrants further scrutiny, as creative idea generation has historically been seen as a proxy for human creativity per se. Exploring it requires commensurability to make the comparison meaningful, which means distinguishing between the types of interaction that involve GenAI. Here, the distinction by Karimi et al. [35] is useful, separating a) creativity support tools, i.e., tools built by HCI researchers to support human creativity in everyday and professional contexts; b) computational creativity, i.e., AI researchers building algorithms that generate creative output with minimal human intervention; and c) computational co-creativity, i.e., when HCI and AI researchers build tools to support more dialogical forms of human-AI co-creation, including GenAI. Our research interest here is the second category, b) computational creativity, and specifically studies in which GenAI is singularly prompted to generate creative ideas for a given problem or challenge. At the time of writing, two-and-a-half years after the launch of ChatGPT as the first publicly successful, general-purpose GenAI tool, we investigate the first wave of research studies that compare GenAI creativity and human creativity with respect to idea generation. Our aim is to take stock of where we stand as an interdisciplinary research field to better understand future trajectories in this rapidly evolving area.

Through a meta-analysis of the current corpus of this first wave of research papers and their findings, we pose the following exploratory main research question (RQ): When examining and reflecting on the first wave of studies, can we conclude that GenAI has surpassed humans in creative idea generation? In the following sections, we delineate the concept of creativity and elaborate on idea generation as a core human component of creativity. This is then contrasted with the emerging concept of artificial creativity, after which we further unpack the rationale for the present study. The method and results of a three-level meta-analysis of 15 manuscripts (17 studies, 115 effect sizes) made available between January 2022 and January 2025 are then presented. We conclude by discussing and reflecting on the implications of the meta-analytic results for understanding current and future work comparing human and GenAI creative idea generation.

2 BACKGROUND

2.1 Delineating the complex concept of creativity

Since J.P. Guilford’s [25] inaugural APA presidential address in 1950, which ushered in modern-day creativity research [52], creativity has become recognized as a hallmark of human thought and behavior. The standard definition of creativity is bipartite, requiring both originality and usefulness [56]. Given its conceptual complexity, various semantic models have been proposed to capture more nuance. Among the first is Rhodes’ [49] 4 P model of Person, Process, Press, and Product, stressing that creativity emerges through the interaction between these four components. Sternberg and Karami [63] expanded this model with Place, Problem, Persuasion, and Potential. Other models stress the sociocultural aspect of creativity, e.g., Glăveanu’s [19] 5 A framework of Actor, Action, Artifact, Audience, and Affordances, and Lubart’s [39] 7 C model of Creators, Creating, Collaboration, Contexts, Creations, Consumption, and Curricula. Both illustrate how creative work is shared, evaluated, taught, and embedded within various types of communities.

A common thread in these taxonomies is the recognition that creativity is fundamentally human. Creativity is shaped by individuals’ experiences, values, relationships, and environments, and, not least, by the tools and technologies we adopt to support and enhance creativity. Given the rapid rise of GenAI, this sociotechnical understanding of creativity is becoming increasingly relevant to discuss and develop [4], because GenAI challenges established perspectives on how creativity through technological means affords meaning-making, problem-solving, and the imagining of new possibilities.

2.1.1 Idea generation as a core human component of creativity

Implicit in the above models is idea generation as integral to human creativity. Idea generation can be seen as the broader process of generating ideas that are deemed creative (i.e., original and useful), while the closely related concept of divergent thinking is a key cognitive mechanism within it that involves producing multiple, varied, and original responses to open-ended problems [25, 55]. As creativity research has evolved, divergent thinking tasks, e.g., the Alternate Uses Task (AUT), in which people list creative uses of common objects [26], have become standard tools for assessing creativity.

Most studies so far have explored cognitive and affective factors influencing idea generation, including associative thinking, working memory, mood, and motivation [3], but this focus seems to be changing due to GenAI. Although divergent thinking fuels idea generation, especially in its early stages, generating ideas also includes component processes such as refinement, evaluation, and combination of ideas. This makes it even harder to pin down the process of idea generation and its creative outcome. For instance, a recent study [22] found that increasing the sheer number of ideas can lead to lower originality for each idea despite the well-known serial order effect, i.e., that ideas generated later generally tend to be more original. In practice, human idea generation is shaped by lived experience (see, e.g., Merleau-Ponty’s influential concept of the ‘lived body’, corps vécu [44]), emotion, ethical context, and interpersonal meaning-making. Unlike purely algorithmic output, human idea generation often draws on autobiographical memory, cultural knowledge, and affective resonance [41]. The sociocultural context that the above models point to, e.g., group dynamics and conversational cues, also influences how and when ideas emerge [57]. These aspects suggest a human quality of idea generation: it is not just a matter of perceived original and useful outcomes, but a situated, intentional, and meaning-laden process. With this as a historical point of departure, the question is how GenAI challenges and compares to human creativity and idea generation.

2.2 Artificial creativity – a new paradigm?

The adjective ‘artificial’ in GenAI connotes simulation and imitation, and so the rapid adoption of GenAI for creative purposes has brought renewed attention to the idea of artificial creativity: Can GenAI be said to create in a sense that is comparable to human practice, or does it merely mimic creativity by recombining existing patterns? From an HCI and creativity research perspective, the question is whether artificial (GenAI) creativity represents a new paradigm or rather an advanced extension of human creative practice. Moruzzi [45] has shown how this discussion has a long history, evolving in response to technological innovations, and that GenAI may signal a shift in how creativity is conceptualized. A major challenge to the current understanding is that GenAI can now produce outputs that users frequently perceive as original and useful. Natale and Henrickson [59] have described this as the “Lovelace effect”: moments when computational behavior is perceived as creative, even if the underlying mechanisms are not. This contrasts with the better-known ‘Lovelace objection,’ which states that computers, by definition, cannot create but only carry out programmed instructions. Such criticism asserts that GenAI mainly performs high-dimensional interpolation by recombining patterns statistically derived from vast human-generated datasets. This has prompted leading creativity scholars such as Runco [53] to argue that “it makes no sense to refer to ‘creative AI,’” and that “the output of AI is a kind of pseudo-creativity” (n. p.). Instead, on this view, the standard definition of creativity should be updated to stress Surprise, Value, Authenticity, and Intentionality, where the latter two are particularly useful for distinguishing between human and artificial (GenAI) creativity [54]. A similar stance has been proposed by Gilhooly [18], arguing that “GPT-3 and similar systems, despite generating novel and useful responses, do not display creativity as they lack agency and are purely algorithmic” (n. p.). While human creative idea generation represents a situated, intentional, and meaning-laden process, the question is whether prompting GenAI to generate creative ideas may simply yield ‘the most likely creative idea’, i.e., ideas humans are most likely to describe as creative given past training data. This challenges the idea of genuine originality and insight as fundamental to creativity, e.g., [34, 62]. Still, the huge scale of a GenAI tool’s training data, and the fact that these data originate from humans, might invite the contention that AI’s ability to simulate creativity convincingly will suffice for many real-world applications, perhaps even to such an extent that some would propose it has surpassed human creative idea generation. Considering this, it is relevant to investigate the first wave of studies that compare general-purpose GenAI tools with humans on one of human creativity’s key (and historically unique) traits, idea generation. This motivated the following study.

2.3 The present meta-analysis

The present meta-analysis aims to examine the first wave of experimental research comparing the perceived creativity of human and GenAI-prompted idea generation. Among the first such studies was Stevenson et al. [64], which arguably became exemplary for the methods employed by others in comparing human with GenAI creative idea generation. Their experimental approach consisted of a human comparison group instructed to generate creative uses for a book, a fork, and a tin can within a given time limit, thereby employing a psychometric task originally designed and validated to test divergent thinking and creative idea generation in humans [25, 55]. Subsequently, they repeatedly prompted GPT-3 using similar instructions but with varying parameter settings. Ideas generated by humans and by GPT-3 were rated on originality and utility by trained human judges blind to the experimental conditions. Semantic distance between each idea and its corresponding object, a well-established proxy for originality, was also computed. The findings showed that human-generated ideas were significantly more original and had a greater semantic distance than the GPT-3 output. In contrast, the GenAI-generated ideas were judged as having significantly more utility.
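Semantic distance is commonly operationalized as one minus the cosine similarity between vector embeddings of the idea and of the object. The sketch below illustrates that computation under stated assumptions: the three-dimensional vectors are hypothetical toy values standing in for real embeddings, and the embedding model used by Stevenson et al. [64] is not reproduced here.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_distance(idea_vec: list[float], object_vec: list[float]) -> float:
    """1 - cosine similarity: larger values mean the idea is more remote from the object."""
    return 1.0 - cosine_similarity(idea_vec, object_vec)


# Hypothetical toy embeddings, NOT produced by any real embedding model.
fork = [1.0, 0.0, 0.0]
idea_eat_pasta = [0.9, 0.1, 0.0]   # close to the object's typical use
idea_hair_comb = [0.2, 0.7, 0.6]   # a more remote use

for label, vec in [("eat pasta", idea_eat_pasta), ("comb hair", idea_hair_comb)]:
    print(label, round(semantic_distance(vec, fork), 3))
```

In practice the vectors would come from a word or sentence embedding model, and scores are usually averaged over several ideas per participant or per prompt.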

Since then, more studies have emerged exploring the efficacy of GenAI relative to humans across a breadth of creative idea generation tasks. These include conceptual replications using the alternate uses task, but with inconsistent results across studies. Koivisto and Grassini [36], for example, instructed human participants to generate original and creative uses for common objects and compared these with ideas obtained by similarly prompting Copy.AI, GPT-3.5, and GPT-4. Their findings showed significantly greater semantic distance and subjective creativity ratings favoring GenAI. Further analyses informed their conclusion that only “the best humans still outperform artificial intelligence in a creative divergent thinking task” (p. 1). Similar results were obtained elsewhere [32, 40, 48], whereas other studies showed results favoring idea originality in humans instead [2, 27]. Eisenreich et al. [15] compared ideas generated during expert-based idea generation workshops with ideas for sustainable home appliances obtained by prompting GPT-4. Prompting GPT-4 yielded more original ideas, but indices of usefulness faltered considerably. For contextually richer idea generation tasks, findings also tend to vary across studies [6, 8, 43, 48, 58, 65]. These inconsistencies call into question the proposition that GenAI has surpassed humans in creative idea generation. This leads to the first sub-question: Does GenAI generate more creative ideas than humans? (RQ1)

Observing these varying results, one could argue that GenAI tools released at the beginning of this first wave were less advanced than later tools. Certainly, after the release of early versions of ChatGPT and DALL-E, these tools quickly evolved toward larger and, later, also more specialized models with seemingly higher-quality output. It could be the case that this development also translated into a greater capacity for generating creative ideas. Studies relying on earlier GenAI models have reported lower originality compared to humans [27, 64], whereas some studies employing more recent models reported higher originality compared to humans [32, 36]. Considerable variation between GenAI models is also observed within studies. For example, Sun et al. [65] demonstrate that, when prompted for creative ideas for the bicycle of the future, GPT-4 generated more creative ideas than their human comparison group, whereas GPT-3.5 performed similarly to humans. However, when prompted to generate original ways to reuse or repurpose a bicycle pedal, GPT-3.5 and GPT-4 both generated significantly less creative ideas than the human comparison group, whereas the LLM Claude performed similarly to it. Given the varying performance of specific GenAI models on creative idea generation tasks, it is possible that specific GenAI models have surpassed humans in creative idea generation while other models have not. This leads to the second sub-question: Do specific GenAI models generate more creative ideas than humans? (RQ2)

3 METHOD

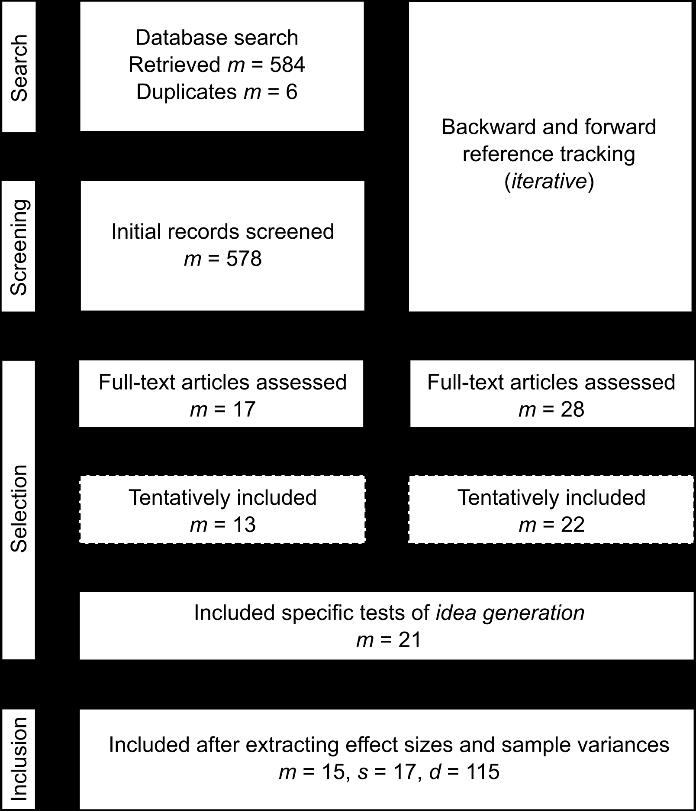

To answer the research questions, a meta-analysis was conducted [67]. The complete systematic search strategy, data, and meta-analysis scripts are available online.¹ Figure 1 provides an overview of the systematic search.

3.1 Systematic search strategy

The systematic search started with querying Web of Science, ACM Digital Library, DRS Digital Library, and Google Scholar to record potentially relevant publications from a broad range of journals and conference proceedings. Titles and abstracts were searched using a Boolean query, except for Google Scholar, for which only titles could be searched. The Boolean query combined terms related to GenAI (e.g., machine learning, LLM), creativity (e.g., creative performance, divergent thinking), and human performance (e.g., human creativity, human-generated). The tentatively included articles resulting from this initial search provided the basis for backward citation tracking (bibliography screening) and forward citation tracking (Google Scholar’s “Cited by” feature). This was done iteratively for each newly tentatively included article until no new potentially relevant articles were identified. The Google Scholar search, combined with backward and forward citation tracking, also facilitated finding unpublished articles. The search was restricted to the period from January 2022 to January 2025, covering the first wave of relevant articles. Duplicates were removed prior to screening. Where both unpublished and published versions existed, the unpublished version was excluded.

¹ The systematic search strategy, data, and meta-analysis scripts are available online at the Open Science Framework (OSF) project page: https://osf.io/2xdba/
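The iterative citation tracking described above amounts to a breadth-first traversal of the citation graph that stops once no new relevant articles appear. A minimal sketch follows; the `references` and `cited_by` mappings and the `is_relevant` screening function are hypothetical stand-ins for bibliography screening, Google Scholar’s “Cited by” lists, and the human screening decision.

```python
from collections import deque


def snowball(seeds, references, cited_by, is_relevant):
    """Iterative backward and forward citation tracking (illustrative sketch).

    seeds:       articles tentatively included after the database search
    references:  dict mapping article -> articles it cites (backward tracking)
    cited_by:    dict mapping article -> articles citing it (forward tracking)
    is_relevant: callable standing in for the human screening decision
    """
    included = set(seeds)
    screened = set(seeds)          # avoid screening the same record twice
    queue = deque(seeds)
    while queue:                   # stops once no new relevant articles turn up
        article = queue.popleft()
        for candidate in references.get(article, []) + cited_by.get(article, []):
            if candidate in screened:
                continue
            screened.add(candidate)
            if is_relevant(candidate):
                included.add(candidate)
                queue.append(candidate)   # track its citations in turn
    return included


# Hypothetical toy citation graph.
references = {"A": ["B", "X"], "B": ["C"]}
cited_by = {"A": ["D"], "C": ["E"]}
relevant = {"B", "C", "D"}
print(sorted(snowball(["A"], references, cited_by, relevant.__contains__)))
```

Using a queue means every newly included article is itself tracked, which mirrors the stopping rule “until no new potentially relevant articles were identified.”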

Table 1: Overview of the included studies.

| Included study | Comparison(s) | Idea generation task(s) | Measurement(s) |
|---|---|---|---|
| [2] | Student dyads vs. 10 GenAI models (incl. Bard, Perplexity) | Alternate uses task | Originality |
| [6] | Crowdworkers vs. GPT-4 | Business ideas for the circular economy | Originality, multiple usefulness |
| [8] Study 1 | Experts vs. GPT-4 | Ideas for apps | Creativity, originality, usefulness |
| [8] Study 2 | Experts vs. GPT-4 | Ideas for product invention | Creativity, originality, usefulness |
| [10] Session 1 | Students vs. GPT-3.5 | Ideas for an invention | Creativity |
| [10] Session 2 | Students vs. GPT-4 | Ideas for an invention | Creativity |
| [15] | Experts vs. GPT-4 | Ideas for sustainable products | Originality, multiple usefulness |
| [27] | Crowdworkers vs. 5 AI models (incl. Copy.ai, Jurassic-1) | Alternate uses task | Multiple originality |
| [32] | Crowdworkers vs. GPT-4 | Alternate uses task | Originality |
| [36] | Crowdworkers vs. 3 GenAI models (incl. GPT-3.5, GPT-4) | Alternate uses task | Creativity, originality |
| [37] Study 2b | Crowdworkers vs. GPT-3.5 | Children’s toy ideas using given objects | Multiple creativity |
| [40] | Students vs. GPT-4; Students vs. GPT-4 and DALL·E 2 | (1) Alternate uses task; (2) Sketch ideas for alien characters | Originality, usefulness |
| [43] | Students vs. GPT-4 | Physical product ideas for students | Originality, usefulness |
| [48] Study 3 | Crowdworkers vs. GPT-4 | (1) Alternate uses task; (2) Ideas for solving everyday problems | Originality, usefulness |
| [58] | Experts vs. Claude 3.5 | Research ideas in the field of NLP | Originality, indices of usefulness |
| [64] | Students vs. GPT-3 | Alternate uses task | Multiple originality, usefulness |
| [65] | (Prospective) students vs. 5 GenAI models (incl. GPT-3.5, GPT-4) | Survival: (1) Staying warm, (2) finding fruit; Bicycles: (3) Future bicycle, (4) facial recognition, (5) pedal reuse; Apps: (6) H2O-saving, (7) long-term use | Creativity, originality, usefulness |

Note. When a study includes multiple idea generation tasks, each task is indicated by a number in parentheses. Some studies also contribute multiple indices of creativity, originality, or usefulness.

3.2 Inclusion and exclusion criteria

Articles were considered for inclusion if they reported studies that experimentally compared ideas generated by humans to those generated by prompting GenAI models with similar instructions to generate creative ideas. One article can contribute one or more studies, i.e., experiments, suitable for meta-analysis; ultimately, the included studies contribute the data for this meta-analysis (Table 1). Articles were excluded if none of their reported studies directly and exclusively targeted idea generation, for example, studies testing mere association [13, 23] or evaluating fully realized creative products [21, 42] without a discrete idea generation step. Human participants and GenAI models had to receive similar instructions or prompts to generate creative ideas without further interaction. This excludes comparisons of GenAI to users engaging with GenAI dialogically via prompting, curating datasets, or adjusting model parameters [1, 9, 11, 14, 30, 51, 66, 68], as such interaction constitutes computational co-creativity [35]. Eligible articles also needed to report measurements assessing (indices of) overall creativity, originality, or usefulness, obtained with evaluators blind to the origin of the ideas. This excludes any studies testing or confounded by bias against GenAI [50]. Finally, articles had to be in English, be accessible, and report statistics or data that enable meta-analysis.

3.3 Screening and selection

The titles and abstracts of the retrieved records were screened against the criteria, initially omitting the criterion of focusing on idea generation tasks. This enabled the identification of a broader set of articles, supporting more comprehensive backward and forward reference tracking. The retrieved records (m = 584) were imported into Rayyan [47]. Duplicates (m = 6) were detected and removed. Rayyan's natural language processing features supported screening by ranking articles by their likelihood of inclusion, based on the ongoing selection and rejection of records. Following this, 17 articles were selected for full-text assessment, after which 13 were tentatively included. These 13 articles also formed the basis for the iterative backward and forward reference tracking, which led to 28 further articles selected for full-text assessment (totaling m = 45), with 22 meeting the criteria applied at this stage (totaling m = 35). Applying the criterion of focusing on idea generation led to the tentative inclusion of 21 articles. The final selection was made by first tentatively including the studies from the articles that met all eligibility criteria and then checking whether effect sizes and sampling variances could be extracted, converted, or otherwise calculated from these studies.

3.4 Extraction of effect sizes

The effect size Cohen’s d and its sampling variance were extracted based on the statistics reported in the included studies [69]. For one study, Cohen’s d and sampling variance were calculated from the original dataset [64]. When Cohen’s d and sampling variance could not be obtained for any of the studies reported in an article, the article was excluded. All effect sizes were (re)coded so that negative values indicated greater creativity in human-generated ideas and positive values indicated greater creativity in AI-generated ideas. The diverse conversion methods necessarily used to calculate Cohen’s d may have introduced minor discrepancies with the originally reported values. In total, 15 articles contributing 17 studies and 115 effect sizes were included in the meta-analysis. Table 1 presents further details on the included studies.
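For studies reporting group means and standard deviations, Cohen’s d and its sampling variance can be computed with the standard formulas sketched below, coded so that positive values favor GenAI as described above. This covers only one of the possible conversion routes [69]; studies reporting other statistics (e.g., t or F values) require different formulas, and the example inputs are hypothetical.

```python
import math


def cohens_d(mean_ai, sd_ai, n_ai, mean_human, sd_human, n_human):
    """Standardized mean difference, coded so that positive values favor GenAI ideas."""
    pooled_sd = math.sqrt(
        ((n_ai - 1) * sd_ai ** 2 + (n_human - 1) * sd_human ** 2)
        / (n_ai + n_human - 2)
    )
    d = (mean_ai - mean_human) / pooled_sd
    # Common large-sample approximation to the sampling variance of d.
    var_d = (n_ai + n_human) / (n_ai * n_human) + d ** 2 / (2 * (n_ai + n_human))
    return d, var_d


# Hypothetical ratings: GenAI ideas M = 3.4, human ideas M = 3.0 (both SD = 1.0, n = 50).
d, var_d = cohens_d(3.4, 1.0, 50, 3.0, 1.0, 50)
print(f"d = {d:.3f}, variance = {var_d:.4f}")
```

Swapping the groups flips the sign of d, which is why a consistent coding direction matters before pooling.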

3.5 Data analysis

Meta-analyses were conducted to calculate the pooled effect sizes, indicating the overall magnitude and significance of the difference in measured creativity between ideas generated by humans and by GenAI, based on the effect sizes extracted from the included studies. To this end, R 4.4.1 [46] and metafor 4.6-0 [67] were used. As the included studies vary in the number of creativity-related measurements and idea generation tasks administered to the same sample, three-level meta-analyses were specified [67]. This enables modeling the sampling variance of effect sizes (level 1), the variance of effect sizes within studies (level 2), and the variance between studies (level 3). Tests of heterogeneity were conducted to assess the variability of the data. Overall, this approach supports the accuracy and generalizability of the pooled effect sizes. To explore the research questions in greater depth, two three-level meta-analytic moderator analyses were conducted, testing differences between humans and GenAI for 1) measurements of usefulness, originality, and creativity measured as a singular construct, and 2) specific GenAI models. Effect sizes were coded as assessing creativity as a single construct or one of its bipartite elements, originality or usefulness, and the specific GenAI models were coded, provided that sufficient data were available. The first author coded these moderators. Reliability checks were deemed unnecessary due to the minimal subjective interpretation needed to code the moderators. Tests of residual heterogeneity indicated the extent to which the included moderators explained variation in the data.
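In the generic notation commonly used for three-level meta-analysis, the model can be written as follows, where d_ij is effect size i from study j, μ the pooled effect, v_ij the known sampling variance (level 1), and τ²(2) and τ²(3) the within-study (level 2) and between-study (level 3) variances. This illustrates the model class, of the kind fitted with metafor’s rma.mv() using nested random effects for studies and effect sizes; it is not a transcription of the authors’ scripts, and the Q statistic shown assumes independent sampling errors.

```latex
\begin{aligned}
d_{ij} &= \mu + u_{(3)j} + u_{(2)ij} + e_{ij},\\
u_{(3)j} &\sim \mathcal{N}\bigl(0,\tau^2_{(3)}\bigr),\qquad
u_{(2)ij} \sim \mathcal{N}\bigl(0,\tau^2_{(2)}\bigr),\qquad
e_{ij} \sim \mathcal{N}\bigl(0,v_{ij}\bigr),\\
Q &= \sum_{j}\sum_{i}\frac{\bigl(d_{ij}-\hat{\mu}_{\mathrm{FE}}\bigr)^2}{v_{ij}},
\qquad
\hat{\mu}_{\mathrm{FE}} = \frac{\sum_{j}\sum_{i} d_{ij}/v_{ij}}{\sum_{j}\sum_{i} 1/v_{ij}}.
\end{aligned}
```

Under homogeneity, Q follows a chi-square distribution with k − 1 degrees of freedom for k effect sizes (e.g., df = 114 for the 115 effect sizes analyzed here). In the moderator analyses, μ is replaced by β0 + β1·x1ij + β2·x2ij with dummy-coded moderators; Qm then tests β1 = β2 = 0 (df = 2), and Qe tests the remaining (residual) heterogeneity.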

Sensitivity analyses were conducted by repeating the three-level meta-analyses after removing influential values and comparing the results. Influential values were detected by comparing Mahalanobis distances to a chi-square distribution (threshold for influential values: beyond the 95th percentile) [28]. Similar pooled effect sizes in this comparison indicate a robust pooled effect size. Discrepancies, however, suggest that the magnitude of the observed pooled effects may be driven by extreme effect sizes or high variances, indicating substantial uncertainty about the precision and generalizability of the included effect sizes. Publication bias, where studies with larger or significant effects are more likely to be published, was assessed in the published peer-reviewed studies and, for comparison, in the unpublished studies [60]. Funnel plots were generated by plotting Cohen’s d against the inverse of the sampling variance [67]. A lack of small effect sizes among high-variance studies may suggest selective publication of larger (significant) effects. Complementarily, asymmetry was tested by repeating the three-level meta-analyses with the sampling variance as a moderator [70], providing further insight into the validity of the findings.
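The influence screening can be sketched as follows, under an explicit assumption: the text does not state which variables enter the Mahalanobis distance, so this sketch treats each effect size and its sampling variance jointly, which is consistent with influential values reflecting either very large effects or high variances. With two variables, the squared distance is compared with the 95th percentile of a chi-square distribution with 2 degrees of freedom, which has the closed form −2 ln(0.05) ≈ 5.99. The data are hypothetical.

```python
import math
from statistics import mean


def influential_flags(d, v, level=0.95):
    """Flag (d, v) pairs whose squared Mahalanobis distance exceeds a chi-square cutoff.

    ASSUMPTION: the distance is computed jointly over effect size d and its
    sampling variance v, so the reference chi-square distribution has df = 2,
    whose quantile has the closed form -2 * ln(1 - level).
    """
    n = len(d)
    md, mv = mean(d), mean(v)
    # Sample covariance matrix of (d, v).
    s_dd = sum((x - md) ** 2 for x in d) / (n - 1)
    s_vv = sum((y - mv) ** 2 for y in v) / (n - 1)
    s_dv = sum((x - md) * (y - mv) for x, y in zip(d, v)) / (n - 1)
    det = s_dd * s_vv - s_dv ** 2
    # Elements of the inverse covariance matrix.
    inv_dd, inv_vv, inv_dv = s_vv / det, s_dd / det, -s_dv / det
    cutoff = -2.0 * math.log(1.0 - level)   # about 5.99 for level = 0.95
    flags = []
    for x, y in zip(d, v):
        a, b = x - md, y - mv
        dist_sq = a * a * inv_dd + 2 * a * b * inv_dv + b * b * inv_vv
        flags.append(dist_sq > cutoff)
    return flags


# Hypothetical data: eleven typical effect sizes and one extreme one.
d = [0.1, 0.2, 0.3, 0.2, 0.1, 0.3, 0.2, 0.25, 0.15, 0.2, 0.2, 3.0]
v = [0.05, 0.04, 0.05, 0.06, 0.05, 0.04, 0.05, 0.05, 0.06, 0.04, 0.05, 0.05]
print(influential_flags(d, v))
```

Refitting the model without the flagged values then gives the sensitivity comparison described above.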

4 RESULTS

4.1 Does GenAI generate more creative ideas than humans? (RQ1)

The complete dataset comprised 15 articles contributing 17 independent studies with 115 effect sizes. The results of the three-level meta-analysis showed a pooled effect size of d = .360, 95% CI [-.071, .790]. This indicates a small difference favoring creativity in ideas generated by prompting GenAI compared to humans. However, the confidence interval included zero, indicating a statistically non-significant effect. The test for heterogeneity was significant (Q(df = 114) = 2516.39, p < .001), suggesting substantial unexplained variation in the data. Fourteen influential values were detected. The sensitivity analysis conducted by repeating the three-level meta-analysis without these influential values showed a slightly lower pooled effect size, d = .236, 95% CI [-.073, .544]. The test for heterogeneity remained significant (Q(df = 100) = 1661.00, p < .001). These findings provide no evidence that GenAI overall generates more creative ideas than humans.
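Because a normal-based 95% confidence interval is symmetric around the estimate, the standard error, z statistic, and p-value can be roughly back-calculated from the reported values. The sketch below does this assuming Wald-type (normal-based) intervals; the results are approximate because of rounding, and these derived values are not reported by the authors.

```python
from statistics import NormalDist


def ci_to_z(estimate, lower, upper, level=0.95):
    """Back-calculate SE, z, and two-sided p from a symmetric normal-based CI."""
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)   # about 1.96 for 95%
    se = (upper - lower) / (2 * z_crit)
    z = estimate / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return se, z, p


# Values reported in the text for the complete dataset (RQ1).
se, z, p = ci_to_z(0.360, -0.071, 0.790)
print(f"SE = {se:.3f}, z = {z:.2f}, p = {p:.3f}")
```

For the complete dataset this gives z ≈ 1.64 and p ≈ .10, consistent with the interval including zero.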

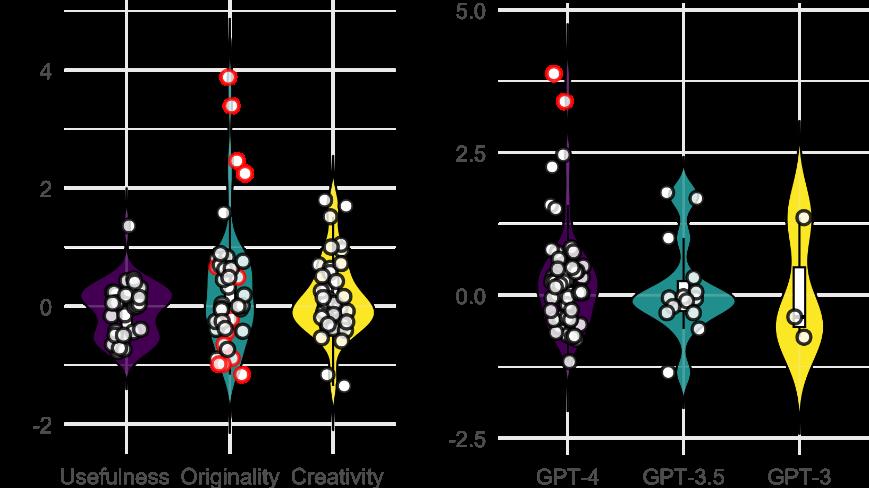

Figure 2: Violin plot of effect sizes by usefulness, originality, and creativity as a singular construct (left), and by ChatGPT model version (right). Positive effect sizes indicate that ideas generated by GenAI received higher scores than ideas generated by humans. Red dots mark influential values. Note that some influential values represent very large effect sizes, whereas others are less extreme but have high variances.

The effect sizes captured either creativity as a singular construct (41 effect sizes from 7 studies), usefulness (32 effect sizes from 10 studies), or originality (42 effect sizes from 14 studies). The test of moderation comparing usefulness to originality and creativity was marginally significant (Qm(df = 2) = 5.03, p = .081). Upon further inspection, the difference favoring GenAI was larger for originality than for usefulness, d = .323, 95% CI [.040, .606]. This indicated a small but significant difference, as the confidence interval did not include zero. However, sensitivity analysis repeating the analysis without the 14 influential values yielded no significant moderation effect (Qm(df = 2) = 1.78, p = .410). Without the influential values, the difference between usefulness and originality was non-significant, d = .173, 95% CI [-.081, .352]. The initial difference is thus likely due to a few studies contributing very large effect sizes (Figure 2, left, red dots). All tests of residual heterogeneity remained significant, suggesting that a considerable amount of variation in the data could not be explained by differences between usefulness, originality, and creativity.

See Figure 2 (left) and Table 2 for details.

Table 2: Results of the moderation analyses comparing GenAI models and humans in generating creative and original, relative to useful ideas, for the full dataset and after removing influential values.

| | Complete dataset | Without influential values |
|---|---|---|
| Test of moderation | Qm(df = 2) = 5.03, p = .081 | Qm(df = 2) = 1.78, p = .410 |
| Test for residual heterogeneity | Qe(df = 112) = 2467.69, p < .001 | Qe(df = 98) = 1645.55, p < .001 |

Note. ᵃThe human vs. GenAI comparison of usefulness is the reference category against which originality and creativity are tested. Data are the pooled effect size (d), the lower (L) and upper (U) bounds of the 95% confidence interval (CI), and moderation (Qm) and residual heterogeneity (Qe) tests. The results are presented for the complete dataset and without influential values (n = 14; sensitivity analysis).

4.2 Do specific GenAI models generate more creative ideas than humans? (RQ2)

The included studies compared ideas generated by prompting a variety of GenAI models to those generated by humans. These included 14 distinct (large) language models (excluding model versions), used across all included studies, and one diffusion model, DALL·E 2, used in a single study (Table 1) [40]. Most studies offered direct comparisons with specific GenAI models, while others compared aggregated responses from multiple GenAI models. The most common were direct comparisons of idea creativity between humans and different versions of ChatGPT (65 effect sizes from 13 studies). Here, sufficient data allowed further meta-analysis. The results of a three-level meta-analysis showed a pooled effect size of d = .478, 95% CI [-.040, .996]. This indicates a near-moderate difference favoring ChatGPT. However, the confidence interval included zero, again showing a statistically non-significant effect. Here too, the test for heterogeneity was significant (Q(df = 64) = 1825.73, p < .001), suggesting substantial unexplained variation in the data. Two influential values were detected. Sensitivity analysis conducted by repeating the above analysis without these influential values slightly lowered the pooled effect size, d = .374, 95% CI [-.047, .975], which remained non-significant. The test for heterogeneity also remained significant (Q(df = 62) = 1385.87, p < .001). This result provides no evidence that ChatGPT specifically generates more creative ideas than humans.

The included effect sizes represented direct comparisons of ideas generated by humans with ideas generated by prompting different versions of ChatGPT: GPT-4 (48 effect sizes from 10 studies), GPT-3.5 (14 effect sizes from 3 studies), and GPT-3 (3 effect sizes from 1 study). A moderation test was then conducted to examine which, if any, of these versions of ChatGPT generated more creative ideas than humans. The test of moderation was not significant, Qm(df = 2) = .90, p = .637. Sensitivity analysis, repeating the analysis without the two influential values, also yielded a non-significant test of moderation, Qm(df = 2) = .92, p = .631. In both cases, the tests of residual heterogeneity remained significant, suggesting that a considerable portion of the variation in the data could not be accounted for by the ChatGPT version. See Figure 2 (right) and Table 3 for further details. These findings provide no evidence that the difference in idea creativity between ChatGPT and humans depends on whether GPT-4, GPT-3.5, or GPT-3 was used.
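The omnibus moderator test compares Qm against a chi-square distribution whose degrees of freedom equal the number of tested coefficients (here, the GPT-3.5 and GPT-3 contrasts against the GPT-4 reference). As a check on the reported statistics, and not a reproduction of the analysis code, the sketch below computes the upper-tail chi-square probability using the closed forms available for df = 1 and df = 2, which need only the Python standard library. The function name `chi2_sf` is our own illustrative choice.

```python
import math


def chi2_sf(q: float, df: int) -> float:
    """Upper-tail probability P(X >= q) for a chi-square variable.
    Only df = 1 and df = 2 are handled, via their closed forms."""
    if q < 0:
        raise ValueError("q must be non-negative")
    if df == 1:
        # A chi-square(1) variable is a squared standard normal.
        return math.erfc(math.sqrt(q / 2))
    if df == 2:
        # A chi-square(2) variable is exponential with mean 2.
        return math.exp(-q / 2)
    raise ValueError("this sketch only covers df = 1 and df = 2")


# Moderation tests for ChatGPT version reported above (df = 2)
for label, qm in [("all comparisons", 0.90), ("without influential values", 0.92)]:
    print(f"{label}: Qm(df = 2) = {qm:.2f}, p = {chi2_sf(qm, 2):.3f}")
```

The results (≈ .64 and ≈ .63) match the reported p = .637 and p = .631 up to the rounding of Qm to two decimals. For more than two degrees of freedom, a general regularized incomplete gamma function would be needed instead.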

Table 3: Results of the moderation analyses comparing ChatGPT models and humans in generating creative ideas, for the dataset comparing humans with all ChatGPT models, and after removing influential values.

| | All comparisons, humans vs. ChatGPT | Without influential values |
| --- | --- | --- |
| Test of moderation | Qm(df = 2) = .90, p = .637 | Qm(df = 2) = .92, p = .631 |
| Test for residual heterogeneity | Qe(df = 62) = 1792.75, p < .001 | Qe(df = 60) = 1366.22, p < .001 |

Note. ᵃ The human vs. GPT-4 comparison is the reference category against which GPT-3.5 and GPT-3 are tested. Data are the pooled effect size (d), the lower (L) and upper (U) bounds of the 95% confidence interval (CI), and moderation (Qm) and residual heterogeneity (Qe) tests. Results are shown for all comparisons with ChatGPT and without influential values (n = 2; sensitivity analysis).

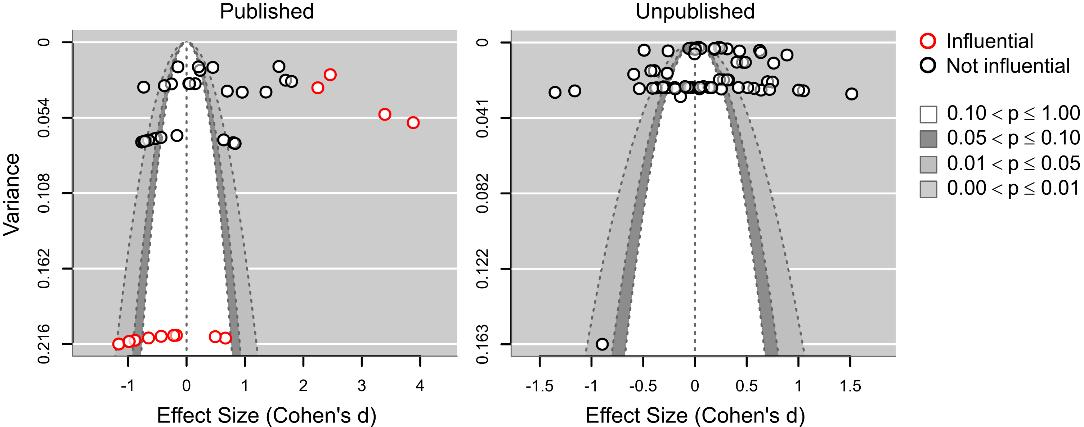

4.3 No evidence to suggest publication bias

No evidence of publication bias was found among the included studies published in peer-reviewed journals or conference proceedings (42 effect sizes from 9 studies). Visual inspection of the funnel plots indicated some degree of asymmetry in effect sizes relative to their variances (Figure 3, left), including in comparison with studies not (yet) published as of January 2025 (Figure 3, right). Notably, all influential values were observed in the published studies. The visually apparent asymmetry appears to stem from the few studies reporting very large effect sizes and from some reporting effect sizes with high variances (Figure 3, left, red dots). The statistical test of asymmetry, however, was not significant (Qm(df = 1) = .15, p = .697). A test comparing the distribution of effect sizes relative to their sampling variances between published and unpublished studies was also non-significant (Qm(df = 1) = 1.62, p = .203), indicating that the two sets were sufficiently similar. These findings offer no evidence of publication bias in the included studies.

Figure 3: Funnel plots of the effect sizes from the included studies published in peer-reviewed journals or conference proceedings (left) and, for comparison, the effect sizes from the included studies not (yet) published as of January 2025 (right). Red dots mark influential values. In the legend (bottom), p denotes the p-value ranges indicating the (non-)significance of the effect sizes shown in the funnel plots.
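An asymmetry test reported as Qm(df = 1) is consistent with an Egger-type meta-regression in which each effect size's standard error enters as a moderator; a slope that differs from zero signals small-study effects. The exact model specification is not given in this section, so this is an assumption. The sketch below implements a simplified fixed-effect version by weighted least squares with inverse-variance weights, using hypothetical data. The analysis above used a multilevel model, so this illustrates the logic rather than reproducing it, and the function name `egger_type_test` is hypothetical.

```python
import math


def egger_type_test(d, se):
    """Fixed-effect Egger-type test: regress effect sizes on their standard
    errors with inverse-variance weights and Wald-test the slope."""
    if len(d) != len(se) or len(d) < 3:
        raise ValueError("need at least three paired effect sizes and SEs")
    w = [1.0 / s**2 for s in se]  # inverse-variance weights
    # Elements of X'WX for design columns [1, se_i]
    s0 = sum(w)
    s1 = sum(wi * si for wi, si in zip(w, se))
    s2 = sum(wi * si * si for wi, si in zip(w, se))
    # Elements of X'Wy
    t0 = sum(wi * di for wi, di in zip(w, d))
    t1 = sum(wi * si * di for wi, si, di in zip(w, se, d))
    det = s0 * s2 - s1 * s1
    if det <= 0:
        raise ValueError("standard errors must not all be equal")
    # (X'WX)^-1 X'Wy, written out for the 2x2 case
    intercept = (s2 * t0 - s1 * t1) / det
    slope = (s0 * t1 - s1 * t0) / det
    # With known sampling variances, Var(slope) is the [1,1] element of (X'WX)^-1
    se_slope = math.sqrt(s0 / det)
    qm = (slope / se_slope) ** 2  # Wald chi-square with df = 1
    p = math.erfc(math.sqrt(qm / 2))
    return intercept, slope, qm, p


# Hypothetical effect sizes (d) and standard errors, not data from this review
d = [0.10, 0.35, 0.20, 0.60, 0.05, 0.45]
se = [0.10, 0.25, 0.15, 0.30, 0.12, 0.20]
intercept, slope, qm, p = egger_type_test(d, se)
print(f"slope = {slope:.2f}, Qm(df = 1) = {qm:.2f}, p = {p:.3f}")
```

A random-effects or multilevel version would add the estimated variance components to each sampling variance before forming the weights, which generally widens the slope's standard error when heterogeneity is large.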

5 DISCUSSION

The rapid uptake of general-purpose GenAI tools coincided with the proposition that AI has surpassed (most) humans in creative idea generation. To critically assess this, we conducted a meta-analysis of the first wave of experimental research on human vs. GenAI creative idea generation (January 2022 – January 2025).

5.1 No evidence to suggest GenAI has surpassed humans in creative idea generation

The results showed no evidence that GenAI overall generates more creative ideas than humans (RQ1). The pooled effect size was small and favored ideas generated by prompting GenAI, but it was non-significant. Sensitivity analysis, i.e., repeating the meta-analysis without influential values, yielded a slightly lower and likewise non-significant outcome, indicating that this finding is robust to the exclusion of those values. Further tests of potential differences in the usefulness, originality, and general creativity of ideas generated by humans versus those generated by prompting GenAI initially suggested that GenAI ideas may be significantly more original than human ideas, relative to their difference in usefulness. Sensitivity analysis without the influential values, however, yielded a non-significant difference. Further inspection revealed that any difference in originality between humans and GenAI is likely due to a few studies reporting very large effect sizes. As such, we argue against interpreting this finding as indicating that prompting GenAI yields more original ideas than humans.

Similarly, the results showed no evidence that specific GenAI models generate more creative ideas than humans (RQ2). Sufficient data for meta-analysis were only available for ChatGPT. The results initially suggested a near-moderate, but non-significant, pooled effect size favoring ideas generated by prompting ChatGPT. Sensitivity analysis indicated that a smaller, still non-significant, pooled effect size likely offers a more accurate representation. It might be that more recent GPT models, e.g., GPT-4, have surpassed (most) humans in generating creative ideas [32, 36]. Still, the results showed no significant difference in the creativity of ideas generated with GPT-3 or GPT-3.5, compared to GPT-4, relative to humans. The sensitivity analysis gave a similar non-significant outcome, underlining the robustness of this result. So, we find no evidence that prompting ChatGPT, regardless of version, yields more creative ideas than humans.

Across the analyses, the tests of (residual) heterogeneity were significant, indicating substantial variability even when accounting for the included moderators. Therefore, unexamined factors likely contribute to the observed heterogeneity, raising important questions about which underlying variables may be driving these differences. Table 1 provides a useful starting point for further exploration. Considerable variation may arise from the nature of the idea generation tasks, which varied substantially between studies, ranging from inventing alternative uses for everyday objects to suggesting business ideas for the circular economy. The human comparison group was another potentially critical source of variation, as participants ranged from students and crowdsourced individuals to experts. Further meta-analytic scrutiny of these factors is beyond this paper's scope; with the currently available data, any such analysis would likely suffer from insufficient statistical power.
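To make the heterogeneity statistic concrete: Cochran's Q sums the squared, inverse-variance-weighted deviations of each effect size from the fixed-effect pooled estimate and is compared against a chi-square distribution with k - 1 degrees of freedom. The sketch below computes Q, the DerSimonian-Laird between-study variance τ², and I² for hypothetical data. It is a simpler two-level random-effects model than the three-level model used in this meta-analysis, which additionally separates within-study from between-study variance; it is included only to illustrate what a large Q implies, and the function name is our own.

```python
import math


def random_effects_dl(d, v):
    """DerSimonian-Laird random-effects pooling with Cochran's Q and I^2.
    d: effect sizes; v: their sampling variances."""
    k = len(d)
    if k < 2 or len(v) != k:
        raise ValueError("need at least two effect sizes with matching variances")
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    d_fixed = sum(wi * di for wi, di in zip(w, d)) / sw
    # Cochran's Q: weighted squared deviations from the fixed-effect estimate
    q = sum(wi * (di - d_fixed) ** 2 for wi, di in zip(w, d))
    df = k - 1
    # Method-of-moments estimate of between-study variance, truncated at zero
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)
    # I^2: share of total variability attributed to heterogeneity
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    # Random-effects weights add tau^2 to each sampling variance
    w_re = [1.0 / (vi + tau2) for vi in v]
    d_re = sum(wi * di for wi, di in zip(w_re, d)) / sum(w_re)
    se_re = math.sqrt(1.0 / sum(w_re))
    return {"Q": q, "df": df, "tau2": tau2, "I2": i2,
            "d": d_re, "ci": (d_re - 1.96 * se_re, d_re + 1.96 * se_re)}


# Hypothetical effect sizes and sampling variances, not data from this review
res = random_effects_dl([0.05, 0.90, 0.20, 1.60, -0.10], [0.02, 0.03, 0.02, 0.05, 0.04])
print(f"Q({res['df']}) = {res['Q']:.2f}, tau^2 = {res['tau2']:.3f}, I^2 = {res['I2']:.2f}")
```

When Q far exceeds its degrees of freedom, as in the analyses reported here, τ² and I² are large, and the pooled estimate summarizes a wide spread of true effects rather than a single common effect.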

The absence of evidence for publication bias among the included studies further substantiates the validity of the findings. For studies published in peer-reviewed journals and conference proceedings, visual inspection of the funnel plots indicated some degree of asymmetry in the effect sizes relative to their variances. Still, the statistical test of asymmetry was non-significant, and the distribution of effect sizes did not differ significantly from that of the unpublished studies. As such, there was no evidence of publication bias. This supports the reported findings, indicating that in the first wave of studies comparing humans and GenAI, there is no evidence that GenAI has surpassed humans in creative idea generation.

5.2 GenAI idea generation as a database query for ‘the most likely creative idea’?

Another insight concerns the mechanism by which GenAI produces creative output. When prompted to generate creative ideas, GenAI appears to reproduce, with some variation, ideas that align with what is statistically marked as 'creative' in a domain. In effect, prompting an LLM retrieves or generates 'the most likely creative idea' within a specified context. This matters because GenAI is trained on large datasets containing vast amounts of human-produced content, likely including data from established creativity tasks that are widely available online, such as the Alternate Uses Task [18, 64]. What GenAI retrieves could thus be seen as approximating ideas that it has 'learned' humans tend to evaluate as creative, because it has been trained on many such examples, rather than as novel or contextually emergent idea generation. This raises epistemological and methodological concerns about whether it is meaningful to assert that GenAI surpasses humans in creative idea generation, as some studies suggest, e.g., [8, 32, 36]. Rather, it can be argued that its output reflects a refined interpolation of well-represented human ideas contained within the training data, particularly within familiar task framings. This dynamic may help explain the non-significant differences found in this meta-analysis. Moreover, for studies suggesting that GenAI outperformed humans in creative idea generation, it is necessary to interrogate whether the human comparison group simply underperformed relative to GenAI's optimized retrieval capabilities, rather than concluding that GenAI showed true creative superiority. This further raises concerns about the prevalent reliance on prompting as an evaluative framework for GenAI. As Rozental et al. [51] have recently argued, alternative interaction paradigms, such as co-creative workflows or embodied engagements, may be better suited to interrogating the unique, and undeniable, affordances of GenAI in creative contexts.

5.3 A sociocultural and sociotechnical perspective on GenAI idea generation

Although this study revealed no significant difference between humans and GenAI in creative idea generation, viewing this result through a sociocultural and sociotechnical lens makes it relevant to (re)consider what creativity is and how it is valued. As stressed by current sociocultural creativity frameworks, notably [19, 39], and, not least, a recent research manifesto [20], creativity is fundamentally situated, being embedded in lived experience, ethical context, and interpersonal meaning-making. In contrast, GenAI output is based on statistical inference from prior data (or, more bluntly, advanced guesswork). This affords quick, easy, and sometimes impressive results through novel juxtapositions, e.g., visually merging highly dissimilar animals into an apparently new species as an instance of combinational creativity, e.g., [12, 29]. However, GenAI lacks the very grounding in human relevance, tacit knowledge, and shifting value systems that defines creativity. For instance, the recognition of malevolent creativity as a valid type of creativity worthy of academic study, e.g., [17, 33], is a relatively recent conceptual and axiological shift in creativity research, and is therefore unlikely to be adequately reflected in the historical training data on which LLMs rely.

This limited sociocultural embeddedness means that even when GenAI appears to match or surpass humans on creative idea generation tasks, it does so within the constraints of the value system of past datasets, not the evolving sociocultural dynamics that shape contemporary human creative expression. Moreover, to the best of our knowledge, the creativity tasks used in existing studies have not been truly ill-defined, complex, and novel. Rather, they tend to play to GenAI's strengths by featuring well-bounded, linguistically constrained problems. Creative idea generation is mediated by human experience, e.g., social relationships and embodied knowledge; such dimensions of human creativity, central to the sociocultural frameworks above, are not only absent in GenAI but also difficult (if not impossible) to simulate meaningfully. This leads us to speculate that the burning question is not whether GenAI has surpassed human creative idea generation (as this paper's title suggests), but how GenAI can be incorporated into the broader sociocultural processes that make human creativity significant, consequential, and continuously re-evaluated in real-world settings (in the wild). To this end, raising awareness of the sociotechnical aspect of creativity is essential [4], because the tools and technologies we deploy affect human creative practice.

5.4 Limitations

A range of limitations apply to the present meta-analysis; here, we point out the most important ones. Although they met most inclusion criteria, six studies were excluded because we were unable to extract their effect sizes and sampling variances. While sampling bias cannot be ruled out, it is likely minimal, as inspection of the full texts suggested a high degree of similarity to the included studies in terms of methods and results. Most included studies tested LLMs, which introduces uncertainty about whether the results generalize to other types of GenAI. As discussed, the meta-analysis does not account for several crucial factors that likely contribute to the substantial heterogeneity found, including characteristics of the administered idea generation tasks and of the human comparison group. Also, the search query did not specify all generative AI models, possibly leading to an underrepresentation of certain model types in the included studies. Furthermore, the moderator analyses may be confounded. For example, tasks that ask participants to think up alternative uses for common objects typically involve no experts in the human comparison group and are commonly used to assess originality, which could artificially exaggerate differences in originality with GenAI. Finally, given the fast evolution of GenAI for creative idea generation, the findings only represent a snapshot of a first wave of studies conducted between January 2022 and January 2025, a period characterized by the rapid and widespread uptake of GenAI. Future studies may yield different insights as the field and technologies progress.

5.5 Conclusion and implications

This paper has examined a timely and, arguably, controversial question: Has GenAI surpassed humans in creative idea generation? To evaluate this, we examined the first wave of experimental studies comparing human and GenAI-prompted creative idea generation by conducting a meta-analysis of 15 articles comprising 17 studies and 115 effect sizes. The results showed a small but non-significant pooled effect favoring GenAI. Initial analyses suggested greater originality in GenAI ideas, but this was likely due to a few studies contributing very large effects. No significant differences were found between specific GenAI models (GPT-3, GPT-3.5, GPT-4), relative to human creative idea generation. Our findings also raise theoretical questions. GenAI can produce perceivably original ideas within constrained semantic prompts, but this output differs from the situated, ethically sensitized, value-driven creativity represented in prevailing sociocultural creativity frameworks. Scholars broadly agree that human creativity is shaped by context, culture, and agency, but such factors are limited in GenAI [20]. While notions of creativity evolve, these continuously shifting evaluations are not fully captured in GenAI models trained on past data.

Speculating about how GenAI will evolve is notoriously difficult given the current pace of technological development. Still, we support Brem and Hörauf's idea [7] of a tripartite perspective addressing societal implications at the micro (individual), meso (organizational), and macro (societal) levels. Looking ahead, we encourage studying GenAI-powered creativity in more ecologically valid settings where creative idea generation involves uncertainty and cultural sensitivity, especially in co-creation processes between humans and GenAI. As recent studies have noted, it is important to be mindful of the particularities of a professional domain [16] and of the potential impact of sociodemographic factors, such as country, age, and gender, on envisioned futures of AI as a threat or a benefit to humanity [24]. Indeed, some scholars have identified a human aversion to GenAI, particularly in art [38, 50]. Discerning the difference between human and GenAI creativity, including idea generation, and how the two can be integrated in creative practice, including education [61], through the development of different cognitive strategies for GenAI-human co-creation [5, 51], must also involve studying the richness of sociotechnical and sociocultural manifestations of creativity in complex, real-world contexts.

REFERENCES

[1] Barrett R. Anderson, Jash Hemant Shah, and Max Kreminski. 2024. Homogenization effects of large language models on human creative ideation. In Proceedings of the 16th ACM Conference on Creativity & Cognition. 413–425.

[2] Mia Magdalena Bangerl, Katharina Stefan, and Viktoria Pammer-Schindler. 2024. Explorations in human vs. generative AI creative performances: A study on human-AI creative potential. In TREW Workshop for Trust and Reliance in Evolving Human-AI Workflows.

[3] Mathias Benedek, Roger E. Beaty, Daniel L. Schacter, and Yoed N. Kenett. 2023. The role of memory in creative ideation. Nature Reviews Psychology 2, 4 (2023), 246–257.

[4] Michael Mose Biskjaer, Peter Dalsgaard, and Kim Halskov. 2023. Digital. In Creativity: A New Vocabulary (2nd ed.), Vlad Petre Glăveanu, Lene Tanggaard, and Charlotte Wegener (eds.). Palgrave Studies in Creativity and Culture. Palgrave Macmillan, Springer International Publishing, Cham, Switzerland, 71–86. https://doi.org/10.1007/978-3-031-41907-2_7

[5] Jelle Boers, Terra Etty, Martine Baars, and Kim van Boekhoven. 2025. Exploring cognitive strategies in human-AI interaction: ChatGPT’s role in creative tasks. Journal of Creativity 35, 1 (2025), 100095. https://doi.org/10.1016/j.yjoc.2025.100095

[6] Léonard Boussioux, Jacqueline N. Lane, Miaomiao Zhang, Vladimir Jacimovic, and Karim R. Lakhani. 2024. The crowdless future? Generative AI and creative problem-solving. Organization Science 35, 5 (2024), 1589–1607.

[7] Alexander Brem and Dominik Hörauf. 2025. ‘Artificial Creativity?’ AI’s Short- and Long-Term Impact on Creativity. Research-Technology Management 68, 2 (2025), 54–58. https://doi.org/10.1080/08956308.2025.2450756

[8] Noah Castelo, Zsolt Katona, Peiyao Li, and Miklos Sarvary. 2024. How AI Outperforms Humans at Creative Idea Generation. (2024). https://doi.org/10.2139/ssrn.4751779

[9] Tilanka Chandrasekera, Zahrasadat Hosseini, and Ubhaya Perera. 2024. Can artificial intelligence support creativity in early design processes? International Journal of Architectural Computing (2024), 14780771241254636.

[10] Gary Charness and Daniela Grieco. 2024. Creativity and AI. Available at SSRN 4686415 (2024).

[11] Zenan Chen and Jason Chan. 2024. Large language model in creative work: The role of collaboration modality and user expertise. Management Science 70, 12 (2024), 9101–9117.

[12] Fintan J. Costello and Mark T. Keane. 2000. Efficient creativity: Constraint-guided conceptual combination. Cognitive Science 24, 2 (2000), 299–349.

[13] David Cropley. 2023. Is AI more creative than humans? ChatGPT and the divergent association task. Learning Letters 2, (2023), 13–13.

[14] Anil R. Doshi and Oliver P. Hauser. 2024. Generative AI enhances individual creativity but reduces the collective diversity of novel content. Science Advances 10, 28 (2024), eadn5290.

[15] Anja Eisenreich, Julian Just, Daniela Giménez Jiménez, and Johann Füller. 2024. Revolution or inflated expectations? Exploring the impact of generative AI on ideation in a practical sustainability context. Technovation 138, (2024), 103123.

[16] Tamari Gamkrelidze, Moustafa Zouinar, and Flore Barcellini. 2021. Working with Machine Learning/Artificial Intelligence systems: workers' viewpoints and experiences. In Proceedings of the 32nd European Conference on Cognitive Ergonomics. Association for Computing Machinery. https://doi.org/10.1145/3452853.3452876

[17] Z. Gao, L. Cheng, J. Li, Q. Chen, and N. Hao. 2022. The dark side of creativity: Neural correlates of malevolent creative idea generation. Neuropsychologia 167, (January 2022), 108164. https://doi.org/10.1016/j.neuropsychologia.2022.108164

[18] Ken Gilhooly. 2024. AI vs humans in the AUT: Simulations to LLMs. Journal of Creativity 34, 1 (2024), 71. https://doi.org/10.1016/j.yjoc.2023.100071

[19] Vlad Petre Glăveanu. 2012. Rewriting the Language of Creativity: The Five A’s Framework. Review of General Psychology 17, 1 (2012), 69–81. https://doi.org/10.1037/a0029528

[20] Vlad Petre Glaveanu, Michael Hanchett Hanson, John Baer, Baptiste Barbot, Edward P. Clapp, Giovanni Emanuele Corazza, Beth Hennessey, James C. Kaufman, Izabela Lebuda, Todd Lubart, Alfonso Montuori, Ingunn J. Ness, Jonathan Plucker, Roni Reiter‐Palmon, Zayda Sierra, Dean Keith Simonton, Monica Souza Neves‐Pereira, and Robert J. Sternberg. 2019. Advancing Creativity Theory and Research: A Socio‐cultural Manifesto. The Journal of Creative Behavior 54, 3 (2019), 741–745. https://doi.org/10.1002/jocb.395

[21] Carlos Gómez-Rodríguez and Paul Williams. 2023. A confederacy of models: A comprehensive evaluation of LLMs on creative writing. arXiv preprint:2310.08433 (2023).

[22] Corentin Gonthier and Maud Besançon. 2022. It is not always better to have more ideas: Serial order and the trade-off between fluency and elaboration in divergent thinking tasks. Psychology of Aesthetics, Creativity, and the Arts (2022). https://doi.org/10.1037/aca0000485

[23] Simone Grassini and Mika Koivisto. 2025. Artificial Creativity? Evaluating AI Against Human Performance in Creative Interpretation of Visual Stimuli. International Journal of Human–Computer Interaction 41, 7 (2025), 4037–4048. https://doi.org/10.1080/10447318.2024.2345430

[24] Simone Grassini and Alexander Sævild Ree. 2023. Hope or Doom AI-ttitude? Examining the Impact of Gender, Age, and Cultural Differences on the Envisioned Future Impact of Artificial Intelligence on Humankind. In Proceedings of the ACM European Conference on Cognitive Ergonomics (ECCE 2023). https://doi.org/10.1145/3605655.3605669

[25] Joy P. Guilford. 1950. Creativity. American Psychologist 5, 9 (1950), 444–454. https://doi.org/10.1037/h0063487

[26] Joy P. Guilford. 1957. Creative abilities in the arts. Psychological Review 64, 2 (1957), 110–118.

[27] Jennifer Haase and Paul H.P. Hanel. 2023. Artificial muses: Generative artificial intelligence chatbots have risen to human-level creativity. Journal of Creativity 33, 3 (2023), 100066.

[28] Hamid Ghorbani. 2019. Mahalanobis Distance and Its Application for Detecting Multivariate Outliers. Facta Universitatis, Series: Mathematics and Informatics (2019), 583–595. https://doi.org/10.22190/FUMI1903583G

[29] Ji Han, Min Hua, Feng Shi, and Peter R. N. Childs. 2019. A Further Exploration of the Three Driven Approaches to Combinational Creativity. Proceedings of the Design Society: International Conference on Engineering Design 1, (2019), 2735–2744. https://doi.org/10.1017/dsi.2019.280

[30] Jennifer L. Heyman, Steven R. Rick, Gianni Giacomelli, Haoran Wen, Robert Laubacher, Nancy Taubenslag, Max Knicker, Younes Jeddi, Pranav Ragupathy, Jared Curhan, and others. 2024. Supermind Ideator: How scaffolding Human-AI collaboration can increase creativity. In Proceedings of the ACM Collective Intelligence Conference. 18–28.

[31] Krystal Hu. 2023. ChatGPT sets record for fastest-growing user base - analyst note. Reuters.com, Technology. Retrieved from https://www.reuters.com/technology/chatgpt-sets-record-fastest-growing-user-base-analyst-note-2023-02-01/

[32] Kent F. Hubert, Kim N. Awa, and Darya L. Zabelina. 2024. The current state of artificial intelligence generative language models is more creative than humans on divergent thinking tasks. Scientific Reports 14, 1 (2024), 3440.

[33] Samuel T. Hunter, Kayla Walters, Tin Nguyen, Caroline Manning, and Scarlett Miller. 2021. Malevolent Creativity and Malevolent Innovation: A Critical but Tenuous Linkage. Creativity Research Journal (2021), 1–22. https://doi.org/10.1080/10400419.2021.1987735

[34] Craig A. Kaplan and Herbert A. Simon. 1990. In search of insight. Cognitive Psychology 22, 3 (1990), 374–419.

[35] Pegah Karimi, Kazjon Grace, Mary Lou Maher, and Nicholas Davis. 2018. Evaluating Creativity in Computational Co-Creative Systems. arXiv (July 2018), 1807.09886v1. Retrieved from http://arxiv.org/abs/1807.09886v1

[36] Mika Koivisto and Simone Grassini. 2023. Best humans still outperform artificial intelligence in a creative divergent thinking task. Scientific Reports 13, 1 (2023), 13601.

[37] Byung Cheol Lee and Jaeyeon Chung. 2024. An empirical investigation of the impact of ChatGPT on creativity. Nature Human Behaviour 8, 10 (2024), 1906–1914.

[38] Peng Liu, Yueying Chu, Yandong Zhao, and Siming Zhai. 2025. Machine creativity: Aversion, appreciation, or indifference? Psychology of Aesthetics, Creativity, and the Arts (2025), 0. https://doi.org/10.1037/aca0000739

[39] Todd Lubart. 2017. The 7 C’s of Creativity. The Journal of Creative Behavior 51, 4 (2017), 293–296. https://doi.org/10.1002/jocb.190

[40] Anne-Gaëlle Maltese, Pierre Pelletier, and Rémy Guichardaz. 2024. Can AI Enhance its Creativity to Beat Humans? arXiv preprint:2409.18776 (2024).

[41] Arthur B. Markman and Dedre Gentner. 2001. Thinking. Annual Review of Psychology 52, 1 (2001), 223–247.

[42] Jack McGuire, David De Cremer, and Tim Van de Cruys. 2024. Establishing the importance of co-creation and self-efficacy in creative collaboration with artificial intelligence. Scientific Reports 14, 1 (2024), 18525.

[43] Lennart Meincke, Karan Girotra, Gideon Nave, Christian Terwiesch, and Karl T. Ulrich. 2024. Using large language models for idea generation in innovation. The Wharton School Research Paper Forthcoming 9, (2024).

[44] Maurice Merleau-Ponty. 2002. Phenomenology of Perception. Trans. Colin Smith (English translation first published 1962). Routledge, London.

[45] Caterina Moruzzi. 2025. Artificial Intelligence and Creativity. Philosophy Compass 20, 3 (2025), e70030.

[46] R Core Team. 2021. R: A Language and Environment for Statistical Computing. Retrieved from https://www.R-project.org/

[47] Rayyan.ai. 2024. Faster Systematic Literature Reviews. (2024). Retrieved from www.rayyan.ai/

[48] Tuval Raz, Roni Reiter-Palmon, and Yoed N. Kenett. 2024. Open and closed-ended problem solving in humans and AI: the influence of question asking complexity. Thinking Skills and Creativity 53, (2024), 101598.

[49] Mel Rhodes. 1961. An analysis of creativity. The Phi Delta Kappan 42, 7 (April 1961), 305–310.

[50] Alwin de Rooij. 2025. Bias Against Artificial Intelligence in Visual Art: A Meta-Analysis. (2024). https://doi.org/10.31234/osf.io/n4jdy

[51] Sonja Rozental, Michael van Dartel, and Alwin de Rooij. 2025. How Artists Use AI as a Responsive Material for Art Creation. Proceedings of the International Symposium on Electronic Art (ISEA) 2025 2-25. Retrieved from https://osf.io/preprints/psyarxiv/gjdnw

[52] Mark A. Runco. 2014. Creativity - Theories and Themes: Research, Development, and Practice. Elsevier Science, London, UK.

[53] Mark A. Runco. 2023. AI can only produce artificial creativity. Journal of Creativity 33, 3 (2023), 100063. https://doi.org/10.1016/j.yjoc.2023.100063

[54] Mark A. Runco. 2025. Updating the Standard Definition of Creativity to Account for the Artificial Creativity of AI. Creativity Research Journal 37, 1 (2025), 1–5. https://doi.org/10.1080/10400419.2023.2257977

[55] Mark A. Runco and Selcuk Acar. 2012. Divergent Thinking as an Indicator of Creative Potential. Creativity Research Journal 24, 1 (January 2012), 66–75. https://doi.org/10.1080/10400419.2012.652929

[56] Mark A. Runco and Garrett J. Jaeger. 2012. The Standard Definition of Creativity. Creativity Research Journal 24, 1 (January 2012), 92–96. https://doi.org/10.1080/10400419.2012.650092

[57] Keith Sawyer. 2017. Group genius: The creative power of collaboration. Basic Books.

[58] Chenglei Si, Diyi Yang, and Tatsunori Hashimoto. 2024. Can LLMs generate novel research ideas? A large-scale human study with 100+ NLP researchers. arXiv preprint arXiv:2409.04109 (2024).

[59] Simone Natale and Leah Henrickson. 2024. The Lovelace effect: Perceptions of creativity in machines. New Media & Society 26, 4 (2024), 1909–1926.

[60] Uri Simonsohn, Leif D. Nelson, and Joseph P. Simmons. 2014. P-curve: A key to the file-drawer. Journal of Experimental Psychology: General 143, 2 (2014), 534–547.

[61] Ulrik Söderström, Måns Hellgren, and Thomas Mejtoft. 2019. Evaluating Electronic Ink Display Technology for Use in Drawing and Note Taking. In Proceedings of the ACM European Conference on Cognitive Ergonomics (ECCE 2019). 108–113. https://doi.org/10.1145/3335082.3335105

[62] Robert J. Sternberg and Janet E. Davidson. 1995. The Nature of Insight. The MIT Press, Cambridge; New York.

[63] Robert J. Sternberg and Sareh Karami. 2022. An 8P Theoretical Framework for Understanding Creativity and Theories of Creativity. The Journal of Creative Behavior 56, 1 (2022), 55–78. https://doi.org/10.1002/jocb.516

[64] Claire Stevenson, Iris Smal, Matthijs Baas, Raoul Grasman, and Han van der Maas. 2022. Putting GPT-3’s Creativity to the (Alternative Uses) Test. Proceedings of the 2022 International Conference on Computational Creativity (2022). Retrieved from https://arxiv.org/abs/2206.08932

[65] Luning Sun, Yuzhuo Yuan, Yuan Yao, Yanyan Li, Hao Zhang, Xing Xie, Xiting Wang, Fang Luo, and David Stillwell. 2024. Large Language Models show both individual and collective creativity comparable to humans. arXiv preprint arXiv:2412.03151 (2024).

[66] Marek Urban, Filip Děchtěrenko, Jiří Lukavský, Veronika Hrabalová, Filip Svacha, Cyril Brom, and Kamila Urban. 2024. ChatGPT improves creative problem-solving performance in university students: An experimental study. Computers & Education 215, (2024), 105031.

[67] Wolfgang Viechtbauer. 2010. Conducting Meta-Analyses in R with the metafor Package. Journal of Statistical Software 36, 3 (2010), 1–48. https://doi.org/10.18637/jss.v036.i03

[68] Samangi Wadinambiarachchi, Ryan M. Kelly, Saumya Pareek, Qiushi Zhou, and Eduardo Velloso. 2024. The effects of generative AI on design fixation and divergent thinking. In Proceedings of the 2024 ACM Conference on Human Factors in Computing Systems. 1–18.

[69] D. B. Wilson. 2023. Practical meta-analysis effect size calculator (Version 2023.11.27). Campbell Collaboration. Available online: https://www.campbellcollaboration.org/calculator/ (accessed on 8 January 2025).

[70] Wolfgang. 2015. Answer to “Metafor package: Bias and sensitivity diagnostics.” (2015). Retrieved from https://stats.stackexchange.com/a/155875