A very warm welcome to the relaunch issue of Data Centre & Network News (DCNN)! After a short hiatus we are now back and better than ever. This is the first of our quarterly magazines for 2023, and it’s packed full of the latest news and comment on your industry.

In this issue’s interview, I speak to The Data Centre Alliance’s CEO, Steve Hone, about his career so far and life at the DCA.

In our UPS & Power Distribution feature we have insight from Eaton on everything there is to know about UPS technology; Centiel says don’t put all your eggs in one basket; Panduit looks at critical factors for UPS success; and Riello writes on unlocking the power of data centre UPS.

In Cooling, Subzero looks at hot aisle containment; Iceotope embraces new cooling solutions; and i3 Solutions asks if data centre cooling failures will become more common.

EDITOR: CARLY WILLS

T: 01634 673163

E: carly@allthingsmedialtd.com

EDITORIAL ASSISTANT: BEATRICE LEE

T: 01634 673163

E: beatrice@allthingsmedialtd.com

GROUP ADVERTISEMENT MANAGER: KELLY BYNE

T: 01634 673163

E: kelly@allthingsmedialtd.com

SALES DIRECTOR: IAN KITCHENER

T: 01634 673163

E: ian@allthingsmedialtd.com

In our final feature – Security – G4S Fire and Security Systems discusses the ‘six degrees of separation’ needed to protect data centres; Axway looks at guaranteeing robust API security; and R&M offers a holistic look at UPS and power distribution, cooling and security.

If you would like to contribute to a future issue of DCNN, please drop me an email at carly@allthingsmedialtd.com. Please also get in touch with any feedback on this issue and don’t forget to subscribe to the DCNN newsletter to get the latest industry news delivered straight to your inbox – head over to the DCNN website to subscribe.

I hope you enjoy the issue – see you in the summer!

STUDIO: MARK WELLER

T: 01634 673163

E: mark@allthingsmedialtd.com

MANAGING DIRECTOR: DAVID KITCHENER

T: 01634 673163

E: david@allthingsmedialtd.com

ACCOUNTS

T: 01634 673163

E: susan@allthingsmedialtd.com

UPS technology: everything there is to know and future considerations from Eaton

Centiel says, don’t put all your eggs in one basket

Critical factors for UPS success from Panduit

Unlocking the power of data centre UPS with Riello

Centralised IT rack monitoring is essential for cost-efficient operation, says E-T-A

Operators will be given the tools to enhance the security measures within their data centres as a result of the new Data Centre Work Group.

The Data Centre Work Group at Trusted Computing Group (TCG) has been formed to establish trust within systems and components within a data centre, focusing primarily on developing protective measures against active interposers. The Work Group will examine the existing attack enumerations against data centres, and devise ways to mitigate them. These attacks include the feeding of compromised boot code to the CPU, impersonation of the CPU to the TPM, the suppression and injection of false measurements to a legitimate TPM, and the redirection of legitimate measurements to an attacker-controlled TPM.

As a result, operators will be able to trust that the components and hardware found within the system are operating as intended, without the fear that they may be weaponised.
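To illustrate why suppressed or injected measurements matter, here is a minimal Python sketch of a hash-chained measurement register, the general idea behind a TPM extending a register with each boot measurement. It is not TCG code and does not use any TPM API; the function names and component labels are assumptions made purely for illustration.

```python
import hashlib


def extend(register: bytes, measurement: bytes) -> bytes:
    """Fold one measurement into the register: new = SHA-256(old || SHA-256(measurement))."""
    digest = hashlib.sha256(measurement).digest()
    return hashlib.sha256(register + digest).digest()


def measure_boot(components: list[bytes]) -> bytes:
    """Replay a boot sequence into a register that starts at all zeros."""
    register = bytes(32)
    for component in components:
        register = extend(register, component)
    return register


# Hypothetical boot chains: a clean one, one with a swapped stage, one with a stage hidden.
expected = measure_boot([b"firmware", b"bootloader", b"kernel"])
tampered = measure_boot([b"firmware", b"evil-bootloader", b"kernel"])
suppressed = measure_boot([b"firmware", b"kernel"])

print(expected != tampered)    # a changed stage yields a different final value
print(expected != suppressed)  # so does a missing stage
```

The chain only helps if the measurements that reach the register are genuine. An interposer that can drop, forge or redirect them on the way to the TPM undermines the result, which is the class of attack the Work Group describes.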

Trusted Computing Group, trustedcomputinggroup.org

The IT sector has the highest number of data breaches of any industry, with over 300,000 cyber security breaches in the past year alone. And, with photo sharing apps on the rise, the potential for data breaches when these are used at work is creating panic across the industry.

Experts at Scams.info used data from the 2022 Cyber Security Breaches Survey to identify the UK industries with the most breaches. According to the data, IT employees are over three times more likely to suffer a security breach than those in the finance industry. IT employees are therefore potentially suffering attacks at least once per week each.

Cyber security expert Ledi Sallilari offered the following tips on how businesses can protect themselves:

• For tech-based industries, cyber security training is invaluable, playing a vital part in keeping data safe.

• Avoid easily guessable passwords that use identifying information, and opt for the longest passwords possible.

• Be aware of phishing attacks, and do not open any emails you do not recognise.

Reboot, rebootonline.com

Castrol has joined the RISE partner program for the data centre unit ICE (Infrastructure and Cloud Research and Test Environment). The collaboration is aimed at enhancing immersion cooling, which involves the cooling of servers through immersion in dielectric liquid.

The collaboration with Castrol aims to improve understanding of the benefits that come with the introduction of coolants for immersion technology. Immersion cooling is one of several solutions for cooling servers in data centres that will need to be developed. Liquid cooling will be required when the heat generation density (heat per cm²) of processors goes up.

“We want to excel in data centre technology by working closely with the industry. A partner program helps with dialogue and direct bilateral cooperation. This way, we can continue to develop our thought leadership together with our partners,” says Tor Björn Minde, Director ICE Datacenter, at RISE.

Castrol, castrol.com

The EUDCA has announced the publication of a new white paper entitled ‘Whole Life Carbon Assessments for Data Centres’.

The publication says that efficiency measures are reaching their limits, and the next challenge will be achieving net zero embodied carbon. The benefits of whole life carbon assessments (WLCAs) within the data centre industry are clear, and there is growing understanding of their importance.

A key element of WLCAs is defining the boundaries of the assessment. Given the current maturity of the industry in conducting assessments, there is still a grey area, with many assessments excluding MEP systems and externals from their scope.

The white paper explains the relevance of WLCAs, what they consist of and some of the methodologies and reporting frameworks that exist. It provides an example of a typical data centre and how WLCAs can offer value to data centre operators, and highlights some of the challenges the industry faces and recommendations on how to tackle these.

EUDCA, eudca.org

The London Fund has agreed to co-invest alongside Macquarie Asset Management in its acquisition of a minority stake in VIRTUS Data Centres.

VIRTUS is a pure-play data centre owner-operator, providing critical digital infrastructure which gives vital connectivity and access to online services for residents and businesses in London. The business provides intelligent solutions to improve the storage, retrieval, management and security of data across global networks.

VIRTUS currently comprises 11 sites in London, with a combined capacity of more than 100MW. It aims to build more sites in the area, in line with increasing data needs, while expanding into Europe.

The VIRTUS investment also delivers on its Positive Social Outcomes promise by supporting London’s digital ecosystem through a data centre platform which matches its electricity consumption to 100% renewable energy procurement, alongside its wider commitment to net zero by 2030 and efficient water and energy usage.

VIRTUS Data Centres, virtusdatacentres.com

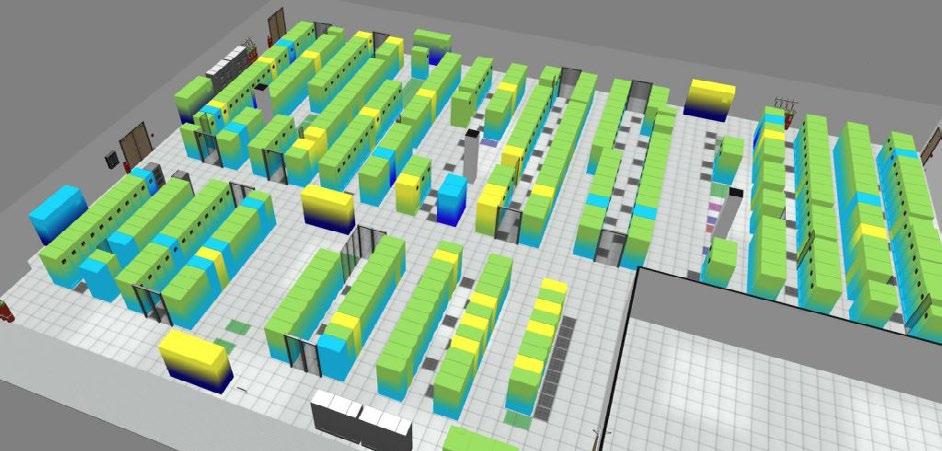

Three UK has deployed EkkoSense’s EkkoSoft Critical data centre optimisation software to secure a 12.5% cooling energy reduction in just 10 weeks across its legacy sites.

Using the AI-enabled SaaS visualisation and analytics software ensures the rapid thermal optimisation of Three’s UK facilities. EkkoSoft Critical’s real-time operational visibility helps Three’s data centre operations team to identify where specific cooling optimisation actions are needed. This has already resulted in energy savings of 200kW against a 196kW projection, while helping the Three data centre team to meet a demand for a 5% energy saving across its sites.

EkkoSense’s technology works by analysing thousands of temperature and cooling points across Three’s legacy data centre sites to identify where levels of cooling can be tweaked. The software also increases the level of thermal insight available to operations teams, helping to uncover areas of thermal risk that were not being picked up by the BMS.

EkkoSense, ekkosense.com

Stellium Data Centres owns and operates one of the largest purpose-built data centre campuses in the UK. Stellium positions itself as the new Data Meridian, being the UK’s only landing point for the new North Sea Connect cable and the UK’s newest Internet Exchange Point. The company provides a wide choice of fast and direct terrestrial networks, including Newcastle’s only open metro network.

An important client to Stellium is Aqua Comms, a provider of international undersea fibre connectivity. The America Europe Connect 2 (AEC-2) project is a dual diverse trans-Atlantic fibre connection comprising two legs: one going directly from the US to Denmark; the other passing through Ireland, the Isle of Man and England, where it terminates at the Stellium Campus before continuing to Denmark.

According to Paul Mellon, Operations Director of Stellium, the Newcastle campus hosts Aqua Comms’ cable landing station for the section passing through Britain and Ireland. The station comprises Power Feeder Equipment (PFE), which supplies the necessary power to transmit data via the cable to Denmark, and Submarine Line Terminal Equipment (SLTE), in which the fibres get split for individual use.

The overriding requirement for Aqua Comms’ cable landing station is reliability with guaranteed zero downtime. Several factors made the Stellium Campus a good fit, according to Paul. There is ample utility power in the area, thanks to the presence of the UK Super Grid, which can provide dual 11kV feeds into the site.

To provide such a guaranteed service requires a combination of high quality products and infrastructure with corresponding planning and operations management expertise. In the case of products and infrastructure, Stellium could provide Aqua Comms with ample power from the grid as well as backup power facilities that offer defence in depth.

Stellium also excels in the management of its campus, having earned certification to such standards as ISO 27001 for information security, ISO 14001 for environmental protection, and ISO 9001 for quality management.

An example of a live upgrade was a project to reinforce the UPS resource necessary to guarantee uptime in the case of a power disruption. The requirement was to install dedicated UPS systems to support the Aqua Comms infrastructure, rather than have it share UPS systems with other colocation clients.

Paul says, “It was always part of the plan to keep the power needed for colocation IT separate from that needed for the fibre. But given the complexity of the Europe America Connect project, which is really 14 related projects with a combined investment of between $400m and $500m, the cable landing station was completed in 2019, but the cable itself didn’t go live until 2020.”

Because of the time between the completion of the cable station and the go-live date for the connection, the project to upgrade the UPS capability did not take place until 2020. Unfortunately, this meant that it encountered the additional challenge of personnel restrictions caused by the onset of the COVID-19 pandemic and associated lockdowns.

The dedicated cable station UPS systems are 200kVA Galaxy VM models from Schneider Electric, chosen because of their quality, the experience Stellium has had of dealing with Schneider in the past, and because the company’s product strategy fits well with Stellium’s approach to life cycle management.

Designed to provide efficient data centre power continuity, Galaxy VM is a highly compact, modular three-phase UPS that incorporates servicing features which are highly desirable in mission-critical applications. For example, all inputs, outputs and local manageability are accessible from the front of the unit to simplify maintenance, and additional or replacement batteries can be quickly installed without the need to go to maintenance bypass, thereby maintaining availability and continuous protection of the load.

In addition, Galaxy VM features Schneider Electric’s ECOnversion operating mode, delivering ultra-high efficiency while charging the batteries, conditioning the load power factor and ensuring Class 1 output voltage regulation. The unit’s high efficiency rates remain stable even at lower operating power levels. Advanced electrical features include power conditioning, very low harmonic distortion through an IGBT rectifier, plus input power factor correction that lowers installation costs by enabling the use of smaller generators and cabling.

“We’ve had great support from Schneider,” says Paul. “They’re very good technical designers, and the products are extremely reliable. In the past, we’ve found that we could rely on a 12-year-old UPS, for example, that was still operational even after it suffered what had seemed to be a catastrophic failure. That’s a monumental level of reliability and support that we recognise.”


Schneider Electric, se.com

CW: Tell us about yourself and how you got into the sector.

SH: I originally trained as a mechanical engineer. However, like most of my peers, working in the data centre sector happened by chance and not design. Over 15 years ago my business partner, Simon Campbell-Whyte, and I identified a need to share knowledge related to the data centre sector. We were frequently working on bids which required hosting services as part of the RFI. The same questions kept coming up with no one knowing the answer, so we set out to research and site survey every colocation and hosting provider we could find. This resulted in the collation of so much market intelligence that we eventually gave up our day jobs to launch a data centre search and selection consultancy called Colofinder.

After we had supported the UK Government with numerous projects, it asked us if we would be prepared to set up a data centre trade association – the aim was to enable the government, consumers and public to understand more about the growth in the digital sector. Work started on setting up The DCA in 2009, following consultation between industry leaders, the DTI, RDA and EU Commission, and it finally launched in 2010.

The trade association is - and always will be - completely inclusive, independent and vendor neutral, representing the interests of the entire data centre community. This includes private data centre/server room owners/operators, hosting and colo providers, consumers of data centre third party services, and suppliers providing products and services to the data centre sector.

CW: For those who may not know, can you give us an overview of the work that the Data Centre Alliance does?

SH: The remit of The DCA is pretty expansive - we address many important issues and areas of focus for the sector. We are active in routine areas of the sector, such as standards, certification, regulation and lobbying for positive change – The DCA is present on a number of APPGs, representing the industry and ensuring our partners’ views and opinions are listened to.

We facilitate a number of special interest groups, including sustainability, energy efficiency, anti-contamination and security groups, to name but a few. These groups are made up of industry experts and result in recommendations and best practice guides for the sector to consult.

The DCA supports many industry events throughout the year, sitting on the organising committees for a number of data centre events – for example, we have just hosted panels, provided speakers and Chairs at Data Centre World London. We also support and judge the DCW, DCS and DCR Awards. The DCA runs its own educational events – Data Centre Transformation (at The IET, Birmingham – in May each year) and our highly successful series of 10 x 10 Data Centre Briefing Update and networking events, which are hosted quarterly.

The DCA also helps to run initiatives such as The Rising Star Programme – which encourages new talent into our sector. On National Data Centre Day this year the programme will host 60 students visiting two data centres in west London and meeting fellow young professionals who are already gainfully employed in the sector; this will help to spread the word and highlight the data centre sector as a career of choice rather than an accidental career like it was for me and so many others.

CW: Tell us about your current role - what are you responsible for and what does the normal working day consist of?

SH: As CEO, I am responsible for the day-to-day management of the association. I am lucky enough to work with a great team who support me every step of the way, and an Advisory Board who bring a multitude of experience and expertise, which is invaluable. A typical day starts with a ‘things to do’ list, which never seems to get any shorter and is normally twice as long by the time I eventually switch the office lights out, so I’m always busy, which secretly is the way I like it.

CW: What are the best things about your role? What are the most challenging?

SH: That’s an easy question to answer: it’s the people I have the pleasure to work with and support every day. The members we support all tend to share one deep-rooted thing in common and that is a desire to help the data centre sector grow and prosper. What drives me is not accolades or medals but the knowledge that The DCA is making a real difference for the organisations which make up The DCA community.

The biggest challenge is definitely keeping pace with the speed at which our sector is both growing and evolving. It’s impossible to know all the answers, but through The DCA I can guarantee that we know someone who does, and that is where The DCA’s real superpower truly lies.

CW: How has COVID-19 affected the industry? What are the positives to come from the pandemic?

SH: COVID-19 saw a huge rise in the use of digital online platforms during what was, in essence, a global lockdown on our freedom to travel. As a result, two things happened simultaneously - overnight, almost every aspect of our existence went online and at the same time demand on the digital network doubled. This demand was unrelenting, yet throughout the entire pandemic the data centre sector, which was responsible for keeping the country and population connected, never missed a beat. Remote working, video calls, streaming services and online deliveries of pretty much everything became the norm. With many of these new habits here to stay, reliance on, and business in, the data centre sector has never been stronger.

It is difficult to see past the human tragedy of the pandemic for positives, but in a world where we are all being asked to look at the carbon footprint we are leaving in the sand, it’s not a bad thing to question if the planet would be better off if I jumped on a Zoom call rather than a plane. The way we have utilised digital services over the past two years is nothing short of transformative; it has changed both the way we conduct business and how we live our lives, and I can only reflect on the positive opportunities that still wait to be unlocked now the genie is out of the bottle.

CW: Aside from COVID-19, what have been the biggest changes across the industry in recent times? What will be the biggest changes in the future?

SH: As mentioned, one thing that the pandemic created was a sudden wake-up as to just how vital the digital sector is to the health and wealth of both countries and their populations. However, as the spotlight glows ever brighter on the data centre sector, so does the spectre of enforceable regulation. To date, the sector has very much self-regulated, but this is about to change with three key areas of regulation currently being considered: the EU Taxonomy related to sustainable investments, the Corporate Sustainability Reporting Directive (CSRD) and the Energy Efficiency Directive. These will have far-reaching implications for the sector; this is no longer a case of if but when, and when will be soon.

CW: How do you see the data centre industry developing over the next few years?

SH: I refer you back to my previous answer. Irrespective of how efficiently data centres are run or how effectively the IT services they host are utilised, demand for data storage and processing is only set to increase exponentially, and that will inevitably mean more data centres will need to be built to meet demand. Where and how needs to be managed in a responsible and accountable manner using the latest DC design best practices, and The DCA has an important role to play in this process.

CW: What makes a great data centre?

SH: So, you first need to ask yourself ‘what exactly is the definition of a data centre?’ Ask a dozen people and you will get a dozen different answers. In my view, these can be anything from a Tier 1 comms room right through to a Tier 4 hyperscale facility.

Rather than asking ‘What is a great data centre?’, what you should be asking is ‘Is my facility fit for purpose?’ If the answer comes back yes, then even if it’s a broom cupboard you have every right to refer to it as a great data centre and be proud of it, irrespective of its size or age.

The DCA’s special interest groups provide a number of best practice guides, white papers and reports, all available on our website and all designed to help data centre owners and operators ensure their own facilities remain fit for purpose.

CW: What’s next for you and for the Data Centre Alliance?

SH: More of the same! The DCA continues to expand and grow, with new members and partners joining all the time. We will look to strengthen the DCA Advisory Board over the next 12 months and continue to support our members wherever they trade around the world. The events we support and host enable members to both source and share knowledge. These regular educational and networking events help to influence decision making and enable new connections to be made. With the event calendar already planned out right up to the end of 2024, there is lots to look forward to.

CW: Do you have any careers advice for anyone starting out in the industry?

SH: Join The Rising Star Programme organised by HireHigher and The DCA. It’s a great starting point that will allow new joiners to get to know others in the same situation and develop a well-aligned peer group to network and collaborate with.

I would always advise anyone thinking of starting out in the industry to join The DCA as a registered user. It’s free and will enable us to keep you informed of planned events you can attend and offer guidance on your options.

CW: What are you most proud of in your career so far?

SH: I have thought long and hard about this, and setting up The DCA must be one of the most challenging and rewarding projects I have ever worked on. At times it’s truly exhausting but I never fail to feel excited by working in this fast-moving sector.

CW: What are your interests away from work?

SH: ‘Once an engineer, always an engineer’ – I am never short of a crazy project! And I’m never happier than when I am busy in my workshop inventing, making or repairing something for someone. It helps me to keep my brain working on something other than work, and also keeps A&E busy stitching me back up when I cut something off by mistake.

Data Centre Alliance, dca-global.org

Ciarán Forde, Segment Leader, Data Centre and IT - Eaton, covers the bases of everything there is to know about UPS technology, and considerations for the future that professionals should be aware of within the power sector.

Post-COVID-19, the biggest challenge facing most industries today is the rising cost and uncertain security of energy. Such is the concern that it has shifted the focus of the need for a renewable generation strategy from sustainability to protecting supplies.

To this end, the European Union has singled out large energy users - and the data centre industry in particular - in proposed modifications to its Energy Efficiency Directive, stipulating the need for sustainability reporting and plans from data centre operators.

Data centre operators have an opportunity to address this. By utilising UPS assets in their power infrastructure they can not only improve their resilience and reduce their environmental impact, but also optimise the performance of the grid.

Used in industrial, commercial and other settings as an alternative source of power, such as a generator, in the event of a blackout, UPS systems have typically worked in the background, largely unnoticed. Now, their capability to utilise stored energy for secondary functions makes them significantly more important. As well as ensuring business continuity in the absence of power, UPS systems can also be used as a distributed energy resource to help electricity grids manage the high variability experienced today.

UPS systems with FFR capabilities, for example, can enable a data centre’s backup power system to provide auxiliary energy services back to the grid when required, without diminishing the data centre’s integrity or performance. One such system, Eaton’s EnergyAware UPS, puts data centres in full control of their energy, allowing them to choose how much capacity to offer, when and at what price, thereby contributing to renewable energy while generating revenue.

In fact, these latest bidirectional UPS models are already in use in data centres across Ireland and the Nordics, where regulators are calling for demand-side flexibility to manage grid frequency. Their grid-interactive functionality means UPS systems can release power back to the grid, and thereby balance the frequency.
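The grid-interactive behaviour described here can be pictured with a minimal Python sketch: when grid frequency sags below a trigger level, the UPS releases the capacity the operator has chosen to offer. This is an illustration only, not Eaton’s control logic; the function name and the 49.7Hz trigger are hypothetical placeholders, and real trigger levels are set by each grid operator’s service rules.

```python
def ffr_discharge_kw(grid_hz: float, offered_kw: float, trigger_hz: float = 49.7) -> float:
    """Return the power (kW) to release to the grid for a given frequency reading.

    offered_kw is the capacity the operator has chosen to make available;
    trigger_hz is an assumed placeholder threshold, not a published value.
    """
    if offered_kw < 0:
        raise ValueError("offered capacity cannot be negative")
    if grid_hz < trigger_hz:
        return offered_kw  # frequency has sagged: support the grid
    return 0.0             # frequency is healthy: hold stored energy in reserve


print(ffr_discharge_kw(49.5, 200.0))  # below the trigger, so the offered 200 kW is released
print(ffr_discharge_kw(50.0, 200.0))  # at nominal frequency, nothing is released
```

Because the operator decides `offered_kw`, the energy held back for the critical load is never part of what the grid can call on.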

Far from being a simple backup solution, UPS technology is becoming increasingly sophisticated, with recent developments proving essential, particularly given the rising cost of energy.

Advanced energy-saving systems are now capable of improving efficiency levels to 99%, and Variable Module Management Systems (VMMS) allow for high efficiency even when UPS load levels are low, such as in redundant UPS systems. By suspending extra UPS capacity, VMMS can optimise the load levels of power modules in a single UPS or in parallel UPS systems. Not only does this mean greater efficiency at lower load levels, but it also results in optimum efficiency at all load levels.
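As a rough illustration of the module-suspension idea, the Python sketch below works out how many power modules need to stay active for a given load while keeping a spare, and how that changes the load each active module carries. It is a simplified model, not Eaton’s VMMS algorithm; the function names, the one-spare redundancy rule and the module ratings are all assumptions.

```python
import math


def active_modules(load_kw: float, module_kw: float, installed: int, redundant: int = 1) -> int:
    """Smallest number of modules to keep active: enough for the load plus `redundant` spares."""
    needed = math.ceil(load_kw / module_kw) + redundant
    if needed > installed:
        raise ValueError("load exceeds installed capacity at this redundancy level")
    return max(needed, 1)


def load_fraction(load_kw: float, module_kw: float, modules: int) -> float:
    """Share of rated capacity carried by each active module."""
    return load_kw / (module_kw * modules)


# Hypothetical system: six 50 kW modules supporting a 60 kW load.
keep = active_modules(load_kw=60, module_kw=50, installed=6)
print(keep)                         # modules left active
print(load_fraction(60, 50, 6))     # per-module load with every module running
print(load_fraction(60, 50, keep))  # per-module load with spare capacity suspended
```

With these example figures three modules stay active and the load per module rises from 20% to 40% of rating, which is the mechanism by which efficiency at low overall load improves.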

In addition, the application of specialised software can increase operational efficiency and decrease complexity. This would allow IT teams to reliably aggregate data centre and IT asset information, and monitor and manage end-to-end IT and power infrastructure with accurate data and actionable insights to ensure business continuity, and optimisation of data centre performance.

Such efficiency savings and technologies must be considered, therefore, when comparing UPS systems. And, given their growing importance to supporting the grid, the reliability and maintainability of a UPS are crucial factors to consider, too. All of this, coupled with essential vendor services and support, will help to ensure business continuity.

Fundamental to a data centre, a cyber-secure system is also a feature that must not be overlooked. The threat of a cyber attack is persistent, and one that network administrators must remain on top of. A security breach can result in operational downtime and data loss, impacting safety, lifecycle costs, and a data centre’s reputation. So, it is vital that any UPS network management system is capable of improving business continuity by alerting administrators of any pending issues, and helping to perform the orderly shutdown of servers and storage in the event of an attack.

All of these factors contribute to the broader Total Cost of Ownership (TCO) of a UPS, an area where energy will now play a more significant role. TCO is a financial metric that evaluates the total investment a data centre will make throughout the lifetime of an asset, and it demonstrates that even a single percentage point improvement in efficiency can reduce the TCO and save more than the UPS’s purchase price over its lifetime.
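The effect of one percentage point of efficiency on lifetime energy cost can be sketched in a few lines of Python. Every figure below (a 500kW load, a 0.30 per kWh tariff, a 10-year life, 96% versus 97% efficiency) is a hypothetical placeholder rather than data from Eaton, and the model counts only the electricity lost in the UPS itself, ignoring the cooling needed to remove that heat.

```python
HOURS_PER_YEAR = 8760


def lifetime_loss_cost(load_kw: float, efficiency: float,
                       price_per_kwh: float, years: int) -> float:
    """Cost of energy lost in the UPS while it carries a constant load."""
    input_kw = load_kw / efficiency  # power drawn from the supply
    loss_kw = input_kw - load_kw     # power dissipated in the UPS
    return loss_kw * HOURS_PER_YEAR * years * price_per_kwh


# Assumed inputs, for illustration only.
baseline = lifetime_loss_cost(load_kw=500, efficiency=0.96, price_per_kwh=0.30, years=10)
improved = lifetime_loss_cost(load_kw=500, efficiency=0.97, price_per_kwh=0.30, years=10)
saving = baseline - improved
print(round(saving))
```

With these placeholder inputs the saving comes to roughly 141,000 in whatever currency the tariff is expressed in. Whether that exceeds the purchase price depends on the actual unit, load profile and tariff.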

The ongoing energy crisis is impacting industries everywhere - especially heavy energy users such as data centres. But recent developments in UPS technology mean these data centres can convert their existing assets into a revenue stream, selling stored power to utilities to add flexibility to the grid.

While energy security may be a priority, it will also have the benefit of enabling data centres to support the decarbonisation of energy at the grid level, and maintain ongoing sustainability efforts. There’s no escaping the fact that energy is expensive right now, so optimising the efficiency and sustainability of any data centre is essential - the UPS can play a pivotal role in enabling this.

For most data centres, a UPS is still seen as a means of protecting critical loads in the event of a power outage or enforced shutdown. However, many are now beginning to realise how they can drive the energy transition, contribute to grid stability, and open up new revenue streams, all by using existing infrastructure. We don’t know how the energy crisis will play out, but we can be sure that UPS systems can help data centres see it through.

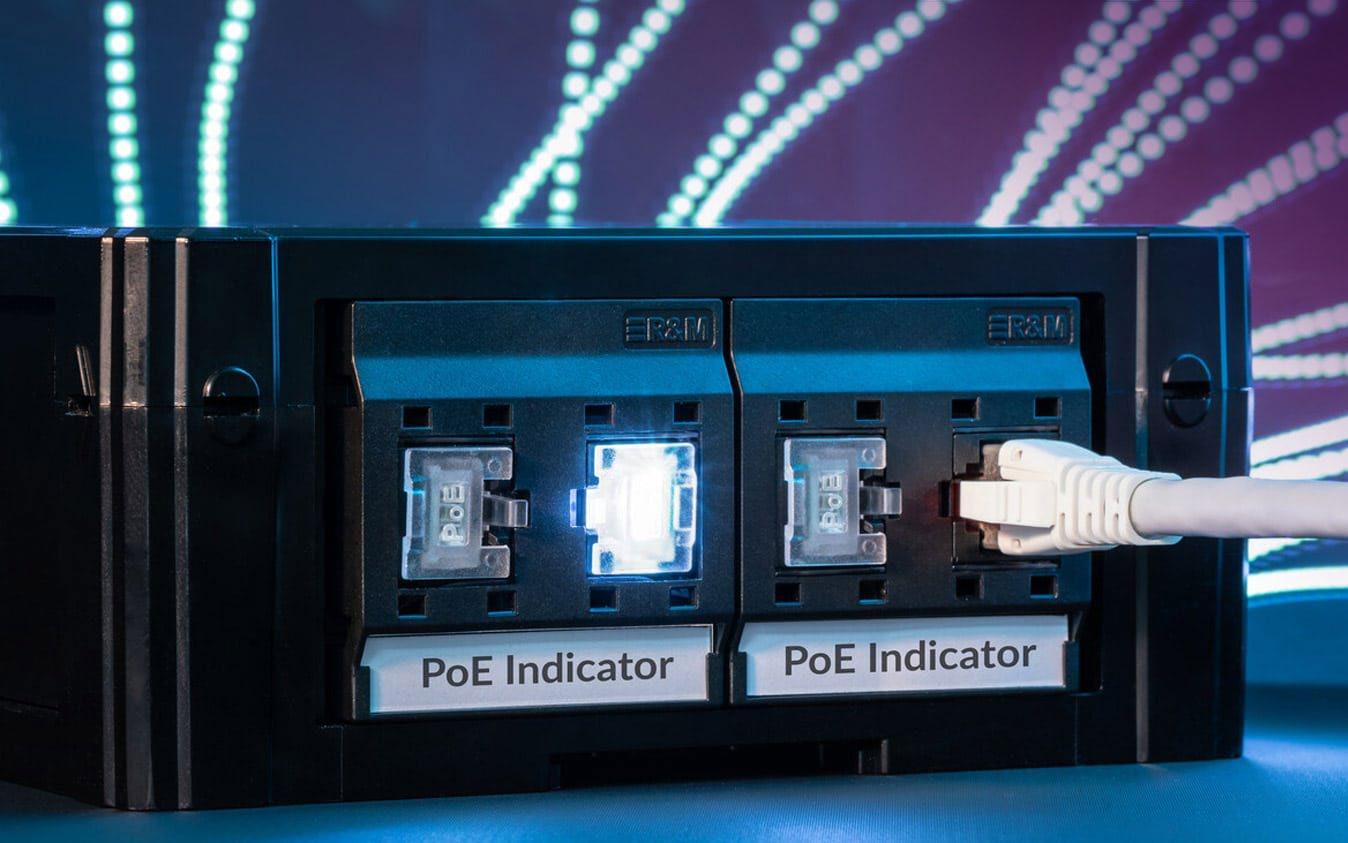

Eaton, eaton.com

In a Tier III data centre, the UPS systems must be concurrently maintainable without any disruption to the critical load. To achieve this, a separate UPS-backed A and B stream is necessary. If, for whatever reason, either A or B stream becomes unavailable, the unaffected power path will have the capacity and infrastructure to support the total load. It’s a UPS configuration designed to maximise availability and uptime to the critical load. Aaron Oddy, Sales Engineer at Centiel UK, explains.

As a Swiss manufacturer, Centiel is regularly involved in supplying UPS systems to suit this configuration within data centres. However, for the client, it may not always be as simple as supplying a ‘like for like’ replacement or replicating a design from a previous facility. There are lots of variables that can dictate the choice of product or manufacturer, so discussing what options are available will help in making the right informed choice.

Suppose, for example, that you have a Tier III data centre with an A and B stream. You may be running some legacy end-of-life UPS equipment on stream A, and relatively new equipment on stream B. So, do you replace the equipment on both A and B streams to keep the equipment and manufacturer consistent, or do you just replace the legacy equipment? Which route offers more cost savings and alternative options?

There are pros and cons to both. You may choose to stick with a manufacturer, based on previous experience, to replace all your equipment. While this may provide some considerable comfort, you may question whether this is the best value in both CapEx and OpEx for the business. You may also be considering the accessibility of this equipment or support should it no longer be available in the future. Where does that leave you as an operating facility? Using a second manufacturer on the same site could solve the issue of putting all your eggs in one basket.

Using two different UPS manufacturers in this way could be seen as an additional layer of resilience to the facility by mitigating risk in the supply chain and services. Replacing just the legacy equipment will help to lower the Total Cost of Ownership (TCO) by reducing the cost of new hardware and reducing the running cost with higher efficiencies. In this scenario, you could say that you are making good use of your existing equipment, working alongside the new equipment with a robust supply and support structure.

There are no issues with running completely different UPS systems on true alternative A and B streams. They don’t need to be compatible as they operate on independent power paths. Centiel is starting to see some of the most forward-thinking data centres that require the highest levels of availability adopting this strategy because it increases the resilience, not only to the equipment that supports them but the companies they work with too.

A further advantage of adopting this method is the ability to compare two alternative UPS systems in a live scenario. This could be a beneficial exercise to evaluate the performance of two different UPS systems from two different manufacturers, particularly when it comes to their energy efficiency, performance, ease of installation, technical support and maintenance, even down to small details like the amount of noise they make or how they physically look. It can provide valuable insight and help with informed decision-making for future UPS system lifecycle replacements.

Working with alternative manufacturers may only be the right choice for some data centres, primarily when reviewing the replacement of legacy equipment. For new data centre builds, the decision to stay with a single manufacturer may be more advantageous: the benefits include commonality of equipment for users, the flexibility to redeploy equipment, the warranty period, and possibly a more cost-effective maintenance plan with the same provider. However, for certain scenarios with existing infrastructure, it may be a possible solution! Centiel’s role as a trusted advisor is always to give the best recommendations to its clients.

At Centiel, the company’s experienced team is always available to discuss and help evaluate the best approach to UPS design, installation and management to suit any facility’s critical power protection needs. Centiel’s leading-edge technology, backed up by comprehensive maintenance contracts carried out by experienced engineering teams, ensures its clients’ power has the very best protection at all times over the long term.

Centiel, centiel.co.uk

The UPS is one of the key components in any environment where continuous electrical power to IT equipment is mission critical. Unprecedented demand, coupled with failures to invest in electrical transmission grid infrastructure, has increased pressure on the network to support the growth of data centres and other power-hungry applications, writes Michael Akinla, Manager North Europe, Panduit.

As we have witnessed from various data centre failures, when the power goes off unexpectedly, and the backup processes also fail, the results can be catastrophic in business terms. Understanding the key variables and goals in individual situations will provide a clear decision-making process.

Onsite backup electrical generation and storage systems, such as diesel generators and battery UPS’, are the bridge to a successful rollover of power, and therefore operational continuation. The UPS provides short-term power to systems to ensure processes can continue while the generator sets power up to offer a longer-term energy supply to the facility.

The period between utility power failure and the IT load transitioning to the UPS is critical. Any power interruption longer than 20 milliseconds will probably result in an IT system crash. Extend that power delay to up to 60 seconds, and the outage will result in an extended ITE restart process that will seriously affect data centre operations and customer applications. A lengthy outage may also incur financial penalties from customers and reputational damage. In a fairly conservative and cost-conscious industry, it is better to be safe than sorry.

Improvements in UPS technology and its components are evolving the capabilities that UPS’ offer. Rapid development of higher speed processors and storage at the server is also changing backup energy requirements. The type of IT applications being supported and customers’ risk tolerance, together with the application resilience, will dictate UPS capabilities. Therefore, the data centre or customer must clearly recognise their needs before deciding on the UPS to support them.

Hyperscale data centre applications are designed so that only two minutes of battery runtime is needed, and colocation sites typically require five minutes of runtime. In the financial market, however - where data is mission critical and where even a small number of dropped trades could cost hundreds of thousands of pounds - it is typical to see 10-15 minutes of guaranteed UPS runtime. As one would expect, the longer the runtime required, the larger the investment in UPS, in both initial cost and operational expense. In enterprise or edge computing environments where generators are unavailable, more time to safely and securely shut down servers and other equipment may be a critical requirement and will affect the decision. However, over-provision of UPS with extended runtimes could be an unnecessary capital cost.
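To put the runtime figures above in perspective, the short sketch below estimates the energy a battery set would have to deliver for each runtime target. It is a rough illustration only: the 100kW load is an assumed example, the function name is hypothetical, and the calculation ignores inverter losses, battery ageing and discharge-rate effects that a real sizing exercise would include.

```python
# Rough energy estimate: energy (kWh) = load (kW) x runtime (hours).
# Assumptions (not from the article): a 100 kW example load, no losses.

def usable_energy_kwh(load_kw: float, runtime_min: float) -> float:
    """Energy the battery must deliver to carry load_kw for runtime_min minutes."""
    if load_kw < 0 or runtime_min < 0:
        raise ValueError("load and runtime must be non-negative")
    return load_kw * runtime_min / 60.0


# Runtime targets quoted in the article (upper end of 10-15 min for finance).
RUNTIME_TARGETS_MIN = {"hyperscale": 2, "colocation": 5, "financial": 15}

if __name__ == "__main__":
    for site, minutes in RUNTIME_TARGETS_MIN.items():
        print(f"{site}: {usable_energy_kwh(100.0, minutes):.1f} kWh")
```

Even this simplified view shows why longer runtimes drive cost: for the same load, the 15-minute target needs several times the stored energy of the two-minute one.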

It is essential to select UPS’ that are suitable for the IT load they are supporting. IT running critical loads is a certainty and, with higher speed processors generating more heat at the server, the cooling systems serving those servers are quickly becoming a critical load that also needs UPS support.

With that in mind, another key factor in the process is the UPS power factor. The latest UPS’ have a unity power factor (1.0). With today’s modular capabilities, a customer’s 100kW IT load could be supported by five 20kVA modules or a single 100kVA UPS, dependent on preferred configuration. However, earlier model UPS’ may have a power factor below 1.0, and this will probably increase the UPS kVA required for the critical load supported. Modular UPS components are a solution in this situation when combining legacy UPS products in an environment that is upgrading to higher power rated ITE racks. The modular capability of these systems allows for the additional UPS kVA to match the upgraded rack kW, reducing costs and improving energy efficiency. Maintaining battery life is essential, with Panduit utilising the three-stage charging method - matching the optimum power curve, together with temperature compensation and advanced algorithms - to prolong battery life.
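The relationship between kW, kVA and power factor described above can be sketched in a few lines. This is a simplified illustration, assuming real power (kW) equals apparent power (kVA) multiplied by power factor; the function name is hypothetical, and the sketch makes no allowance for redundancy (N+1) or growth headroom.

```python
import math


def modules_needed(load_kw: float, module_kva: float, power_factor: float = 1.0) -> int:
    """Number of UPS modules required to carry load_kw.

    Each module delivers module_kva * power_factor kW of real power.
    """
    if not 0 < power_factor <= 1:
        raise ValueError("power factor must be in the range (0, 1]")
    if module_kva <= 0 or load_kw < 0:
        raise ValueError("module rating must be positive and load non-negative")
    usable_kw_per_module = module_kva * power_factor
    return math.ceil(load_kw / usable_kw_per_module)


if __name__ == "__main__":
    # Unity power factor: five 20 kVA modules carry a 100 kW load.
    print(modules_needed(100, 20, power_factor=1.0))
    # An assumed legacy power factor of 0.8: each module only delivers 16 kW.
    print(modules_needed(100, 20, power_factor=0.8))
```

With a unity power factor the article’s example of five 20kVA modules for 100kW holds; at an assumed power factor of 0.8, the same load would need seven.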

Lithium-ion batteries are now established in the market and will deliver more capabilities to users as the technology becomes embedded within the key applications. Compared to lead-acid, Li-ion batteries offer longer lifecycles, reduced weight, compact footprint, and lower cooling requirements, which is highlighting their potential in small data centres and edge environments. Their capacity for higher amounts of energy in smaller footprints, at a factor of between two and three, with twice the life, and cooler running (reducing the requirement for specific cooling systems) offers the possibility of eliminating the need for separate battery rooms.

As the technology advances, numerous management and safety features are being implemented to ensure far more granular data is available on battery health and connectivity, along with the capability to provide that data across the power and data centre network. Integration will make it possible to build the Li-ion UPS into preconfigured data centres so that customers can order a single SKU with a complete critical system, have it delivered to any location, and simply install it and connect it to power, becoming operational in minutes. iPDUs (intelligent power distribution units) in the racks, such as the SmartZone G5 iPDU, provide the backbone to these extended capabilities, with data management and connectivity.

However, for the time being, the latest UPS’, such as SmartZone UPS, deliver increased efficiency, reliable power protection and backup power for computer IT and other critical equipment. Modular systems allow for hot swapping, providing the platform for faster maintenance, and removal of old or faulty individual units.

To continually meet the growing power demands of data centres, enterprise, and edge IT equipment, the latest UPS’ provide excellent electrical performance, intelligent battery management, intelligent monitoring, secure networking functions and a long lifespan for lithium units. Integration with cloud-based DCIM solutions, such as Panduit’s SmartZone, provides support for management, monitoring, control and alerting across the wider environment, including power chain, environmental, cooling, security, IT asset (physical and logical) and connectivity infrastructure. Avoiding downtime in critical assets is the top priority. Improving technology invariably creates challenges to reduce outages, and UPS’ remain a critical element in data centre best practice.

Panduit, panduit.com

Data centres deploy uninterruptible power supplies (UPS) as the ultimate insurance against the damage of downtime. But compared to many other countries, the UK’s power grid is stable, with major power cuts being relatively rare events.

So, while UPS systems are undoubtedly needed as a last line of defence against the worst-case scenario, you could perhaps see them as something of an under-utilised and expensive asset. Thanks to improvements in battery technology, and advances in communications software and protocols, many modern data centre UPS’ are now smart grid compatible.

They are able to communicate with local power networks and, depending on the real-time status, either draw electricity from the grid or push stored power back into it to help with the tricky task of balancing supply with demand.

This potentially turns a UPS from an essential, yet reactive, piece of equipment into a dynamic ‘virtual power plant’ that works hand in hand with the ongoing transition towards a smarter, zero carbon electricity network. And it can do all this without compromising on the UPS’ fundamental premise of reliability.

The first smart grid UPS application is called peak shaving, a concept where the batteries basically limit how much power a data centre draws from the mains electricity. If the load on the UPS goes above a set level, the unit draws a proportion of the power from the mains, with the rest coming from the battery set.

Peak shaving can either be static - where the UPS has a fixed setting and peak shaves to that limit - or user-controlled, where the operator can reduce the input mains by sending commands via Modbus or volt-free contacts. However, the most common type is dynamic, which, as the name suggests, works according to the real-time conditions.

Say your data centre has a contractual limit of 1MW mains supply. The typical load is between 500kW and 900kW, with a further 300kW critical load. So, at peak time your maximum load could be 1.2MW - in breach of your contract. When this happens, the UPS automatically pushes stored energy from the battery set to reduce what’s needed from the mains. And when the loads are lower, the UPS simply recharges the batteries.
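The peak shaving example above can be expressed as a simple split between mains and battery. The sketch below is illustrative only: the function name is hypothetical, and it assumes the battery can always supply the shortfall, ignoring state of charge and converter limits that a real UPS controller would enforce.

```python
def peak_shave(load_kw: float, mains_limit_kw: float) -> tuple[float, float]:
    """Split a load between mains and battery.

    Returns (mains_kw, battery_kw): mains is capped at the contractual limit
    and the battery covers whatever is left over.
    """
    if load_kw < 0 or mains_limit_kw < 0:
        raise ValueError("load and limit must be non-negative")
    mains_kw = min(load_kw, mains_limit_kw)
    battery_kw = max(0.0, load_kw - mains_limit_kw)
    return mains_kw, battery_kw


if __name__ == "__main__":
    # Figures from the article: a 1 MW limit and a 1.2 MW peak.
    print(peak_shave(1200.0, 1000.0))
    # Below the limit, nothing is drawn from the battery.
    print(peak_shave(700.0, 1000.0))
```

At the 1.2MW peak, the battery set would need to contribute 200kW to keep the mains draw at the 1MW limit; below the limit, the spare mains capacity is available for recharging.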

There’s another application similar to peak shaving - impact load buffering. In effect, impact load buffering uses the energy stored in the batteries to slow the rate at which sudden load changes are passed on to the incoming mains. It is an option for sites with a weak power source or reliance on a generator set.

Smart grid-ready UPS can also help maintain a stable grid frequency via demand side response. The Master+ solution that Riello developed with RWE Supply & Trading specifically for data centres is a great example. It combines a high efficiency UPS and premium cycle-proof batteries (either lead or lithium-ion), plus a secure, integrated battery monitoring and management system. The final aspect is a route-to-market contract that enables the data centre to participate in the energy market without taking on the risks.

How does it work? Well, thanks to a compact battery arrangement, the solution provides up to four times the useable battery capacity in just a 20% increase in footprint. The batteries are split in two. There’s a backup segment consisting of around 30% of total capacity, which is controlled by the UPS and only used to support the critical load if there’s a disruption.

The remaining 70% is a ‘commercial’ segment RWE deploys to support grid balancing schemes, for example, Firm Frequency Response, which National Grid uses to maintain a safe frequency within 1Hz of 50Hz. So, when the frequency goes above 50Hz, the UPS draws power from the grid into its batteries to pull the frequency back down. On the flip side, if the frequency drops, the UPS pushes power from the batteries back into the grid to stabilise the network.
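The charge and discharge behaviour described above can be sketched as a simple decision rule. This is a minimal illustration, not the logic of any particular product: the function name and the small deadband around 50Hz are assumptions introduced here to avoid reacting to tiny fluctuations.

```python
NOMINAL_HZ = 50.0


def grid_response(frequency_hz: float, deadband_hz: float = 0.015) -> str:
    """Decide what the commercial battery segment should do.

    deadband_hz is a hypothetical tolerance, not a figure from the article.
    """
    deviation = frequency_hz - NOMINAL_HZ
    if deviation > deadband_hz:
        return "charge"      # frequency high: draw power from the grid
    if deviation < -deadband_hz:
        return "discharge"   # frequency low: push power back into the grid
    return "idle"


if __name__ == "__main__":
    for hz in (49.9, 50.0, 50.1):
        print(hz, grid_response(hz))
```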

Typically, the system’s state of charge ranges from 60-70%. So, if you do suffer a mains failure, you can call upon the power in the exclusively ‘backup’ segment, with whatever’s left in the ‘commercial’ segment topping up your overall battery runtime.
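To see how the two segments combine during a mains failure, the sketch below estimates runtime from the backup segment plus whatever remains in the commercial segment. It is a simplified illustration: the function name, the 100kWh battery and the 200kW load are assumed examples, the backup segment is assumed to be fully charged, and losses are ignored.

```python
def runtime_minutes(
    total_kwh: float,
    load_kw: float,
    commercial_soc: float,
    backup_share: float = 0.30,
) -> float:
    """Estimated runtime when both segments support the load.

    backup_share: fraction of capacity reserved as backup (about 30% in the article).
    commercial_soc: state of charge of the commercial segment, from 0 to 1.
    """
    if load_kw <= 0:
        raise ValueError("load must be positive")
    if not 0 <= commercial_soc <= 1 or not 0 <= backup_share <= 1:
        raise ValueError("shares must be between 0 and 1")
    backup_kwh = total_kwh * backup_share
    commercial_kwh = total_kwh * (1 - backup_share) * commercial_soc
    return (backup_kwh + commercial_kwh) / load_kw * 60


if __name__ == "__main__":
    # Assumed example: 100 kWh battery, 200 kW load, commercial segment half full.
    print(round(runtime_minutes(100.0, 200.0, commercial_soc=0.5), 1))
```

The point of the split is visible in the numbers: even with the commercial segment fully discharged, the backup segment alone still provides a guaranteed minimum runtime.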

In return for granting RWE usage rights for the commercial element of the batteries, data centre operators gain from a significantly discounted premium battery system, extended autonomy, 24/7 monitoring (which lowers maintenance costs), reduced grid tariffs, and the chance to explore other revenue-generating opportunities.

Any data centre operator unsure about the potential of smart grid-ready UPS need only look at tech giant Microsoft. Its entire data centre portfolio in Ireland uses lithium-ion batteries to push stored power back into the grid. This reduces the reliance on gas and coal-fired plants to maintain vital spinning reserves, whilst significantly cutting carbon emissions from the Irish energy sector.

As UPS and battery technology continues to evolve, other operators should follow suit and harness their standby power systems for a wider good. It makes sense financially, and will also help secure energy supplies in increasingly uncertain and unpredictable times.

Riello UPS, riello-ups.co.uk


YOUR BENEFITS

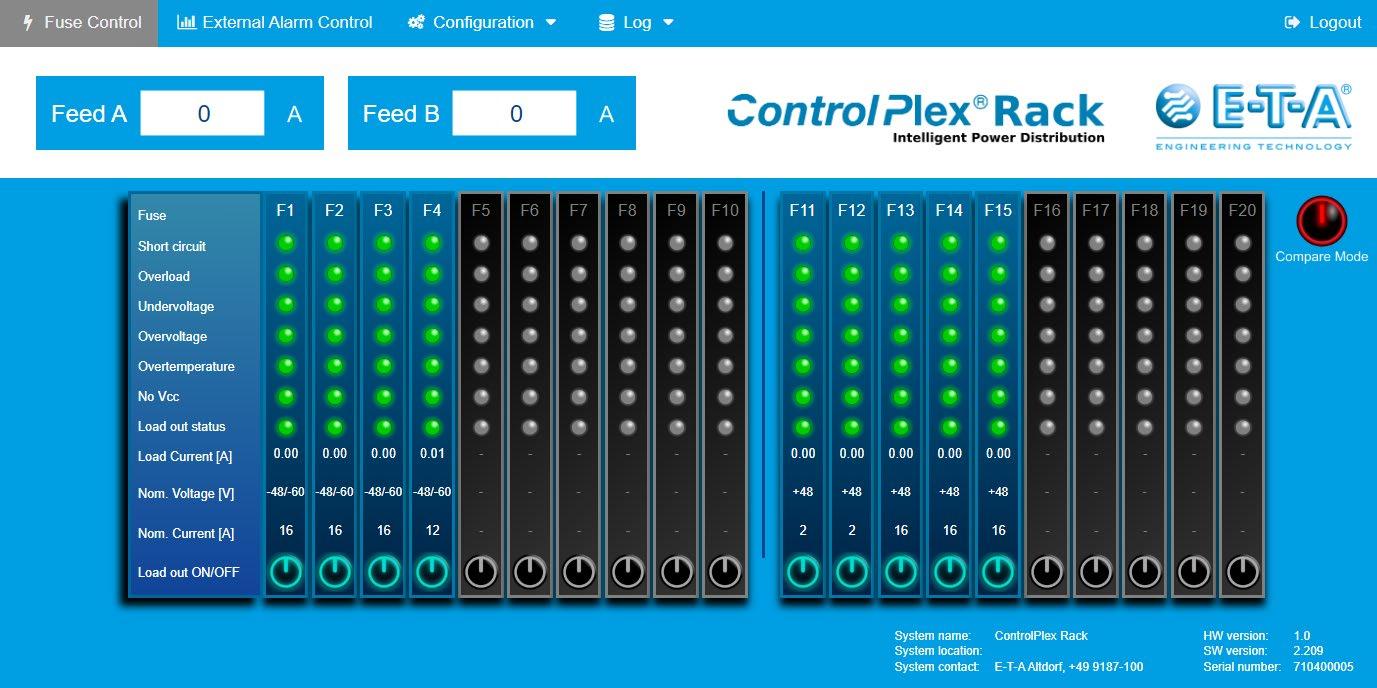

• Condition monitoring through diagnostic capabilities (current, voltage, temperature)

• Reduced maintenance time through remote control

• Reduced install time due to plug & play solution

• Easy system extension due to modular design

3-YEAR CARE PLAN

The award-winning new Enhanced TestPro CV100 Multifunction Cable Certifier is purpose-built for today’s modern Smart Building network infrastructure. It offers the most feature rich test platform available today, and the modular based platform that allows for customisation of the exact test suite needed.

You can get £2500 off the list price of the TestPro K51E or K61E test kits when you trade in any Cat 5e certification tester (it doesn’t even need to work!) on purchases made from Mayflex between 1st March and 31st May 2023.

WHAT AN AWARD WINNING AEM TESTPRO CV100 TESTS

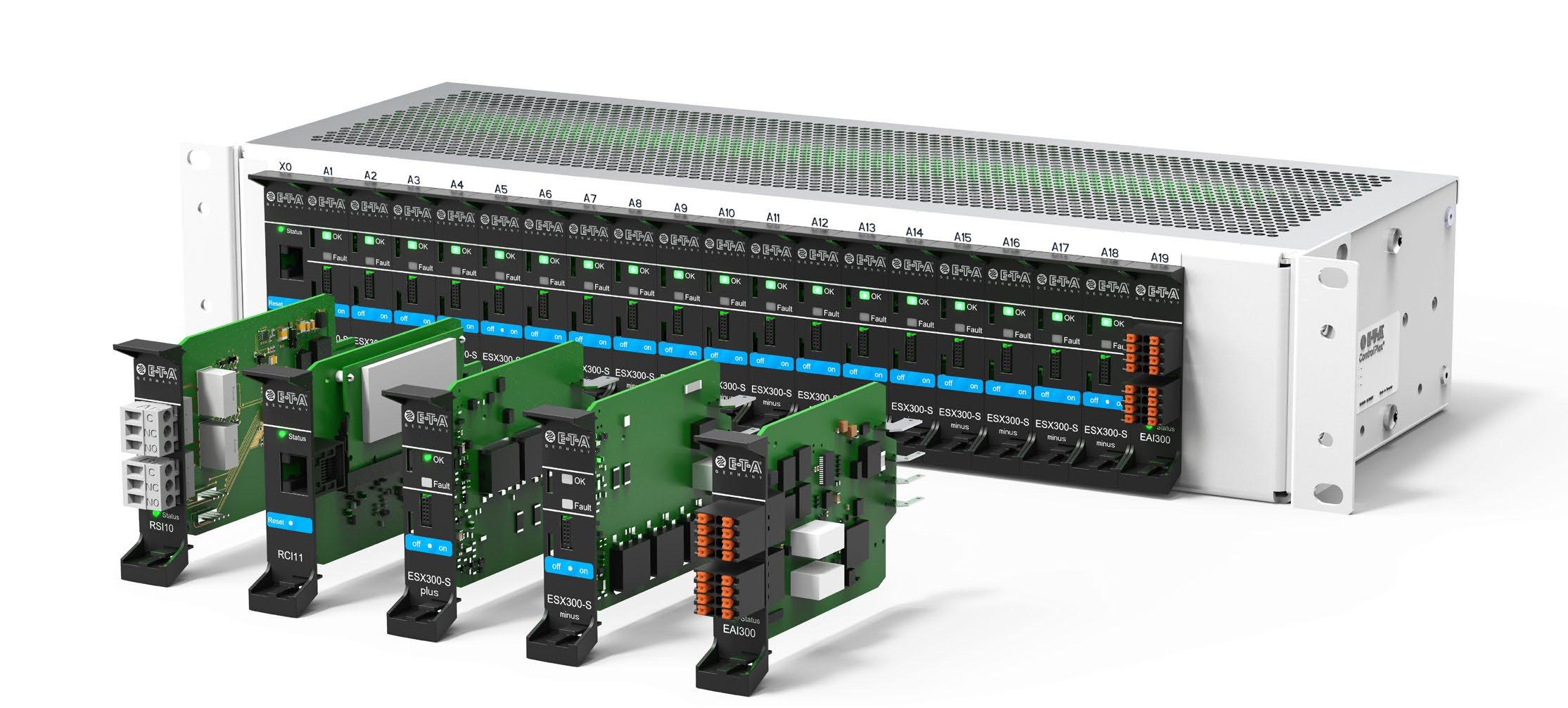

Measuring and processing the large amounts of data provided by external signalling devices such as sensors is becoming increasingly important. Decisions based on status messages should be made as automatically and autonomously as possible by the system to prevent failures of the active technology. E-T-A’s modular ControlPlex Rack system provides power distribution and overcurrent protection, as well as the option to integrate sensors and process their environmental data in a useful way.

Whether planning a new data centre infrastructure, expanding existing system cabinets or changing equipment within a rack, the power supply and distribution is one of the main requirements in data centres or telecommunication systems. While servers in data centres are mainly supplied with AC current, the DC current supply with a typical voltage range of DC -48V or DC -60V is still state-of-the-art in conventional telecommunication technology. Reliable power distribution and protection of the components to be supplied are often handled in the system cabinet by conventional power distribution systems with hydraulic-magnetic circuit breakers. However, this kind of protection and distribution reaches its limits in modern infrastructures, as it does not offer features such as data logging, transparent monitoring or control via remote access. Integrating sensors and processing their data with conventional power distribution systems is not possible. Intelligent DC power distribution systems with electronic circuit protectors and I/O interfaces, such as E-T-A’s ControlPlex Rack, are a modern alternative for these demanding applications.

Centralised IT rack monitoring is essential for cost-efficient operation in data centres and telecommunication systems, writes Michael Bindner, Product Manager Communication Systems, E-T-A Elektrotechnische Apparate GmbH.

Sensors are an integral part of everyday life in a digitised world. We often associate them with automatic processes, e.g. motion detectors. The term ‘sensor’ comes from the Latin word ‘sentire’, which means ‘to feel’ or ‘to sense’. This meaning is a very accurate definition of a sensor’s main task, which is to measure physical or chemical variables, such as temperature, brightness or humidity, and then convert them into electrical signals. The counterpart to the sensor is the so-called actuator. It can correctly interpret the electrical signals transmitted by the sensor, generate a physical variable and then perform the correct action. This creates logical process chains that manage everything from collecting information and converting it into electrical signals, to carrying out actions completely automatically. The combination of sensors and actuators is often required in control cabinets or IT racks. A simple example of such a process is the temperature sensor transmitting a signal that the ambient temperature is too high. This will actuate an electronic circuit breaker and a fan starts to rotate.

Collecting the signals provided by the sensors is the first and the most important step when setting up an automated process chain. In most cases, this task is taken over by separate input/output modules. Modular complete systems, such as the ControlPlex Rack, integrate this functionality easily and compactly by plugging a module, e.g. the External Alarm Interface (EAI300), into the 19in power distribution system. The great benefit here is that no valuable rack units are occupied, so they remain available for active technology such as routers, servers or switches. In addition to its eight digital inputs, the External Alarm Interface (EAI300) also has an analogue input and two digital relay outputs, enabling easy connection of the sensors, e.g. for temperature and humidity, proximity or even vibration and contact terminals. The EAI300 is connected to all electronic circuit protectors and the ‘Remote Control Interface (RCI10)’ communication module via an integral bus system. The RCI10 is the heart of the system: it takes over the internal communication and also connects the ControlPlex Rack system with the superordinate control unit or control room. With this system, sensor data, status information and error messages can be requested, temporarily stored and forwarded to or indicated in the management system. The integral Ethernet interface connects the entire power distribution system to the company network and allows data to be transmitted to administrators worldwide. The ‘TCP/IP’, ‘SNMP (v1, v2, v3)’ and ‘http/https’ protocols can be used for data transmission.

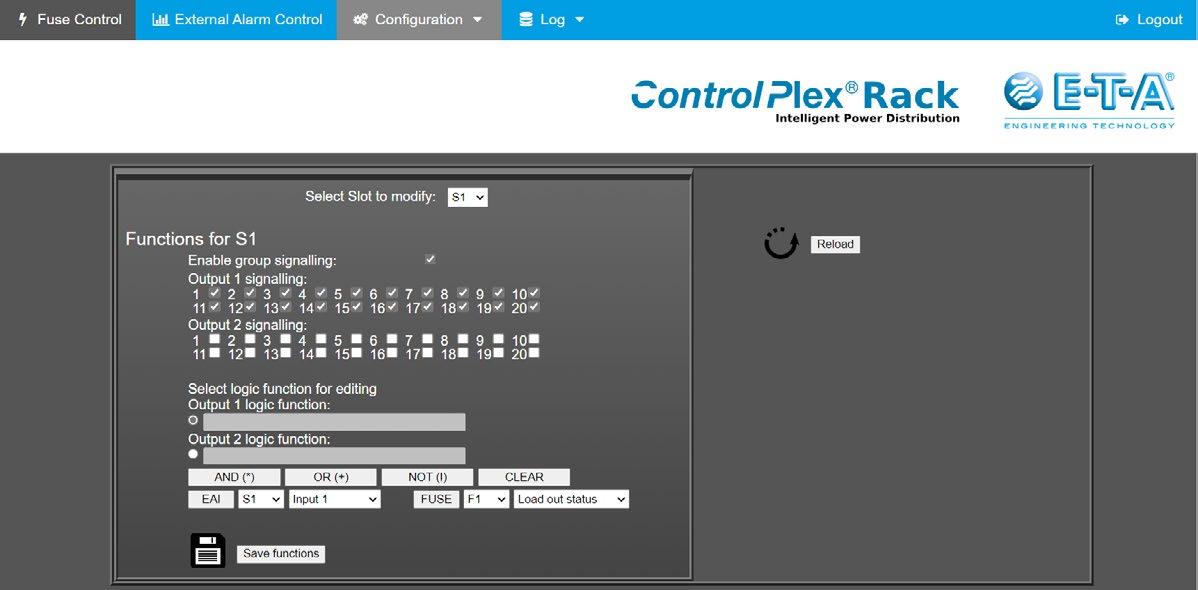

Fig. 2: External Alarm Interface (EAI300) as signal collector for sensors in data centres

However, if we talk about power distribution systems in the context of Industry 4.0, the sensor integration does not yet include the option for automated actions. This requires additional features, especially in terms of software, as found in modern programmable logic controllers (PLCs). The combination of RCI10 and EAI300 allows the two digital relay outputs to be switched as required. This allows the user to configure a logic function with the common ‘AND, OR, NOT’ commands and link them to the electronic circuit protector’s operating conditions. The user can programme fully automated scenarios, e.g. switching on an additional fan when a certain temperature value in the IT rack is exceeded. When the temperature falls below the limit, the fan is switched off again, so the system optimises itself. The logic function also allows physical warning signals to be switched on, e.g. a warning lamp or an acoustic warning. This is useful when the door contact of the IT cabinet signals that the cabinet has been opened - although the active technology is still live.
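The two scenarios described above, a fan switched by temperature and a warning triggered when the door is opened while the equipment is live, can be sketched as simple Boolean rules. This is a generic illustration only and does not represent the ControlPlex Rack configuration interface: the class, function, field names and the 35°C threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RackState:
    """Hypothetical snapshot of the sensor inputs for one IT rack."""
    temperature_c: float
    door_open: bool
    load_live: bool


def evaluate_outputs(state: RackState, fan_on_above_c: float = 35.0) -> dict[str, bool]:
    """Map sensor inputs to the two relay outputs using AND/NOT-style logic."""
    # Relay 1: run the additional fan while the temperature exceeds the limit.
    fan_relay = state.temperature_c > fan_on_above_c
    # Relay 2: warn if the door is open AND the active technology is still live.
    warning_relay = state.door_open and state.load_live
    return {"fan_relay": fan_relay, "warning_relay": warning_relay}


if __name__ == "__main__":
    print(evaluate_outputs(RackState(temperature_c=38.0, door_open=False, load_live=True)))
    print(evaluate_outputs(RackState(temperature_c=24.0, door_open=True, load_live=True)))
```

A practical rule set would usually add a small hysteresis band around the temperature limit so the fan does not switch on and off repeatedly near the threshold.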

For convenient scenario configuration, most systems today offer web interfaces. The graphical user interfaces can be conveniently accessed platform-independently via a web browser without the need to install additional software. In addition to the web interface, the ControlPlex Rack provides the option of configuring and controlling the system via Secure Shell (SSH). It is possible to programme logic links with both interfaces and integrate many other functionalities, allowing the system supervisor to keep an eye on all system conditions. For example, if an electronic circuit protector has tripped due to a short circuit or if there is an overvoltage, the control room can directly see it and initiate appropriate countermeasures. Moreover, the system can be restarted via Power On/Off. This significantly reduces service costs, as no service staff are needed on site.

Increasing digitisation also leads to higher demands on power distribution systems in the control or IT cabinet. The modular ControlPlex Rack allows the user to combine all the functions needed for their application-specific requirements. The default configuration includes the CP Power-D-Box DC power distribution module suitable for 19in or ETSI cabinets. The user can equip the 19 available slots with ESX300-S electronic circuit protectors or other optional modules. The Remote Control Interface (RCI10) allows convenient remote access and the Remote Signalling Interface (RSI10) ensures reliable potential-free signalling. The External Alarm Interface (EAI300) can be used for a transparent integration of sensors or logic links.

E-T-A Engineering UK Ltd, e-t-a.co.uk


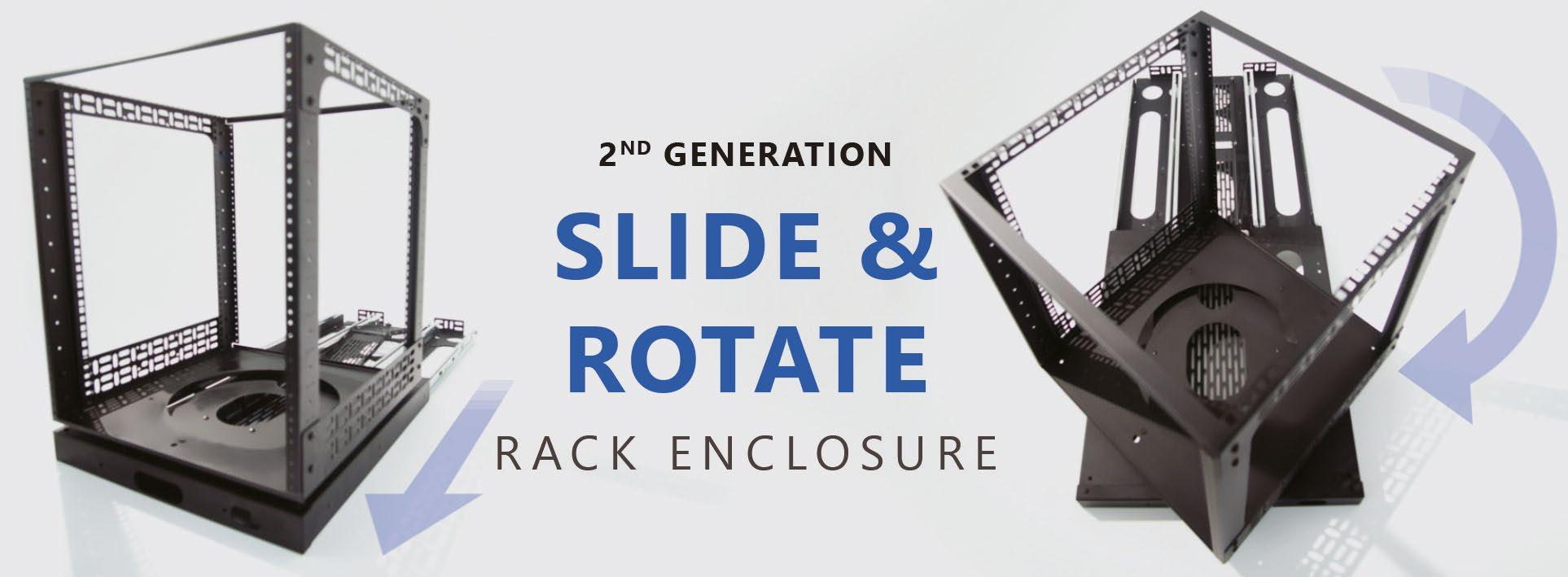

Would your life be easier if you could just slide your rack out or spin an enclosure to work on the sides or back anytime?

This is the newly-updated R8010 Slide & Rotate Rack system - an enclosure with more freedom than ever before. It makes it simple to slide a fully-loaded rack safely out and spin it in either direction offering full 360° access.

● Slides out to 550mm / 22” and rotates 360° in either direction

● Secure in position with Slide Catch and Spin Catch

● Folding cable management tray

● Security faceplate protects catches from use

● Full hole double rack rails with square holes for clip nuts

● Quick assembly: under 30 minutes

Are you fed up with using testers that don’t meet your expectations?

Have you ever been on site and needed to carry multiple testers to find a fault? Look no further than AEM - a company that not only values customer feedback but also strives to offer the best products available.

The award-winning CV-100 platform, and the Network Service Assistant, have been improved with the New Enhanced Range of testers, which has now become the standard range. AEM has made several enhancements based on customer feedback. The most notable change is the new capacitive touch screen that is easier to use and navigate, with a much-improved screen resolution.

It’s not just the screens that have improved. All CV-100 platforms, including the K50E, now come with a new set of Permanent Link adapters. These adapters now feature a ruggedised cable that is built to last, and they have been upgraded to meet Cat8.1 standards. The shroud to plug has also been improved, making the permanent link adapters even more rugged and durable, which means more time on site and less downtime.

In addition to the above, for installers that need to do fibre testing, the K51E and K61E kits now come with more standard equipment. Both kits now come with an inspection probe. This probe allows you to inspect fibre ends for dust or damage, ensuring your fibre connectors are always clean and ready for testing.

The testers now come with SC connectors and launch leads included, making both the K51E and K61E LC- and SC-ready right out of the box!

Companies that specialise in smart/intelligent buildings should look at the K60E and K61E testers that are the perfect solution for those requiring even more advanced features. Both testers get all the additional kit mentioned above. But, in addition, they now come with a Cat6A patch cord adaptor, allowing you to carry out MPTL tests - essential for any installer installing Wi-Fi access points or IP CCTV cameras. This ensures that all IoT devices can be installed to the correct standard, ensuring your work is always up to par.

AEM testers have been tried and tested by real customers who have given glowing testimonials about their effectiveness and value for money.

AEM CV-100 testers have always had advanced smart/intelligent building capabilities and are designed for more than just straight cable certification. They offer features like PoE under load to determine if IoT devices have enough power, network tests that produce MAC and IP addresses, and traceroute to identify faults outside the local network. Plus, now they’re even better!

AEM understands that investing in equipment can be a significant expense. That’s why the company offers a three-year care plan, including calibration and accidental damage. Additionally, all adapters can be swapped out once a year when worn, ensuring that your equipment is always ready when needed. Your tester is even protected against accidental damage - if the company cannot repair it, it will replace it. AEM is available exclusively from Mayflex in the UK.

If you don’t already deal with Mayflex, you can open an account online. For more information about AEM from Mayflex, call the sales team on 0800 757565.

Flooding the data centre with cold supply air, in combination with an appropriate hot aisle containment solution, makes for a cost-effective, energy-efficient and environmentally-friendly facility, as Gordon Johnson, Senior CFD Engineer at Subzero Engineering, explains.

Data centre designs are continuing to evolve and, recently, more facilities are being designed with slab floors and overhead cabling, often in line with Open Compute Project (OCP) recommendations. Rather than install expensive and non-flexible ducting to supply cooling from overhead diffuser vents, engineers are seeing high efficiency and sustainability gains by flooding the room with cold supply air from either perimeter cooling units, CRAC/CRAH galleries, or other cooling methods (rooftop cooling units, fan walls, etc.).

Hot aisle containment (HAC) then separates the cold supply air from the hot exhaust air and a plenum ceiling returns the exhaust air back to the cooling units. As such, this design is also gaining popularity due to its simplicity and flexibility.

An optimised containment system is designed to provide a complete cooling solution with a sleek supporting structure that serves as the infrastructure carrier for the busway, cable tray and fibre. Such a system should be completely ground-supported and, for that, a simple flat slab floor is all that is needed.

The goal of any containment system is to improve the intake air temperatures and deliver cooling efficiently to the IT equipment, thereby creating an environment where changes can be made that will lower operating costs and increase cooling capacity. Ideally, the containment system should easily accomplish this while allowing both existing and new facilities, including large hyperscale data centres, to build and scale their infrastructure quickly and efficiently.

Traditional methods for supporting data centre infrastructures, such as containment, power distribution and cable routing, can be costly and time-consuming. They require multiple trades working on top of each other to accomplish their work. An optimised containment structure provides a simple platform for rapid deployment of infrastructure support and aisle containment. For example, all cable pathways and the busways can be installed at the same time as the containment, allowing the electricians to energise the busway when needed, such as when the IT equipment gets installed, or as the IT footprint expands.

The containment system should also give the end user the ability to deploy small, standardised and replicable pods. This helps to limit the amount of upfront capital spent compared with building out entire data halls by providing all the infrastructure necessary, while allowing for almost limitless scaling, should the situation require it.

When selecting a containment solution, the seal or leakage performance (typically a percentage) of the system is essential; it’s often stated that leakage is the nemesis of all containment systems. Users should reasonably expect a containment solution to have no more than approximately 2% leakage. This reduces and practically eliminates both bypass air and hot recirculation air that raises server inlet temperatures on IT equipment - the result being superior efficiency of the cooling system.

There’s another important element to this design - the plenum ceiling return. The ceiling and grid system chosen should have minimum leakage to reduce and even eliminate bypass air where cold supply air enters the plenum ceiling return instead of contributing to the cooling of the IT equipment.

This article has mentioned the importance of maximising energy efficiency and sustainability. Flooding the data centre with cold supply air for the IT equipment and containing the hot aisles so that hot exhaust air returns to the cooling units (or is rejected by some other method) is a simple, easy and flexible design. All new data centres should consider this for future deployments.

Another benefit of this (and most HAC designs) is that it’s easier to achieve airflow and cooling optimisation. In a perfect world, we would simply match our total cooling capacity (supply airflow) to our IT load (demand airflow) and increase cooling unit set points as high as possible. However, there’s inherent leakage in any design, including within the IT racks. The goal is to minimise the leakage as much as possible: the lower the overall leakage, the less cold supply air is needed. Therefore, to maximise energy efficiency, we want to use as little cold supply air as possible, while still maintaining positive pressure from the cold aisle(s) to the hot aisle(s). When this is achieved, there will be consistent supply temperatures across the server inlets on all racks throughout the data centre.

Because HAC is used, the data centre is essentially one large cold aisle, so the total sum of cold supply airflow should only be slightly higher than the total sum of demand airflow (10-15% should be the goal). This percentage is easily attainable if leakage is kept to a minimum by using a quality containment and ceiling solution, along with good airflow management practices such as installing blanking panels and sealing the rack rails.
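The 10-15% target above can be turned into a quick sizing check. The following is a minimal sketch only: the function names, the airflow figures and the choice of units are illustrative assumptions, not values from any particular site or vendor tool.

```python
def supply_airflow_range(demand_airflow, low_margin=0.10, high_margin=0.15):
    """Return the (low, high) target supply airflow for a given demand airflow.

    The 10-15% margins come from the article; the units are whatever the
    demand figure uses (for example CFM or m3/h).
    """
    if demand_airflow < 0:
        raise ValueError("demand airflow must be non-negative")
    return demand_airflow * (1 + low_margin), demand_airflow * (1 + high_margin)


def oversupply_percent(supply_airflow, demand_airflow):
    """Return how far supply airflow exceeds demand airflow, as a percentage."""
    if demand_airflow <= 0:
        raise ValueError("demand airflow must be positive")
    return (supply_airflow / demand_airflow - 1) * 100


if __name__ == "__main__":
    demand = 100_000  # hypothetical total IT demand airflow
    low, high = supply_airflow_range(demand)
    print(f"Target supply airflow: {low:,.0f} to {high:,.0f}")

    measured_supply = 125_000  # hypothetical measured supply airflow
    print(f"Current oversupply: {oversupply_percent(measured_supply, demand):.1f}%")
```

An oversupply figure well above 15% would suggest that leakage or bypass air is worth investigating before adding cooling capacity.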

To drive further efficiencies, operators can raise the cooling set points while maintaining server inlet temperatures at or below ASHRAE (American Society of Heating, Refrigerating and Air-Conditioning Engineers) recommended specifications for cooling IT equipment (80.6°F/27°C). This also results in higher equipment reliability and higher MTBF (Mean Time Between Failures).
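When raising set points, it helps to confirm that no rack drifts above the recommended inlet limit. The sketch below is illustrative only: the rack names and readings are hypothetical, and the 27°C limit is the figure quoted in the article.

```python
ASHRAE_RECOMMENDED_MAX_C = 27.0  # upper inlet limit quoted in the article (80.6°F)


def fahrenheit_to_celsius(temp_f):
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32) * 5 / 9


def racks_over_limit(inlet_temps_c, limit_c=ASHRAE_RECOMMENDED_MAX_C):
    """Return the sorted names of racks whose inlet temperature exceeds the limit."""
    return sorted(rack for rack, temp in inlet_temps_c.items() if temp > limit_c)


if __name__ == "__main__":
    # Hypothetical server inlet readings in °C, keyed by rack name.
    readings = {"A1": 24.5, "A2": 27.0, "B1": 28.2}
    print("Racks above the recommended limit:", racks_over_limit(readings))
```

A rack reading exactly at the limit is treated as acceptable here, matching the article's "at or below" wording.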

The data centre industry is constantly evolving, and so should our designs. Energy efficiency should continue to be a top concern for data centre operators, both now and in the future. Data centre designers and owners should carefully evaluate all options rather than just relying on or selecting from old projects.

Further, flooding the data centre with cold supply air and utilising a containment system, regardless of the cooling system, results in a simple, flexible design that’s both extremely energy efficient and sustainable. This will make both new and legacy data centres greener and more environmentally sustainable, and an environmentally friendly data centre is always a cost-effective data centre.

Whether it is extreme climate events or new technologies transforming data centre design, one thing is certain - the limits of air-cooling technology are quickly being reached. Data centre operators need to be prepared, says Pascal Holt, Director of Marketing, Iceotope.

Liquid cooling solutions offer significant benefits as they are highly effective in reducing energy consumption, carbon footprint, and solution costs, as well as saving floor space and offering downstream heat recycling capability. Some have moved the game on, whilst others are merely a stop-gap measure. Direct to chip and immersive cooling make up the two primary family groups. Direct to chip delivers coolant directly to the hotter components using a cold plate on the chip. These solutions can maximise performance at the CPU and GPU level, and are ideal for supercomputer clusters, for example, where every bit of cooling performance matters.

Immersive cooling has two major types of deployments. Full bath immersion completely submerges the ITE in fluid, and usually servers are stacked vertically rather than horizontally in a normal rack. Servicing these units is complex as it involves removing the equipment and draining liquid from the tub.

Precision liquid cooling, on the other hand, cools, protects and monitors the whole IT stack in any location - from the extreme edge to the hyperscale cloud. Small volumes of a dielectric compound circulate across the surface of the server to the hottest components first, removing nearly 100% of the heat generated by the electronic components. In addition, the plug-and-play nature of the design enables consistent services and support, regardless of deployment.

Data centres are at the centre of an unprecedented data explosion. Increasing rack density and compute-intensive workloads are placing strenuous demands on data centre cooling. Artificial intelligence (AI) workloads across finance, security, healthcare, manufacturing and construction continue to drive up the processing requirements and power consumption of servers.

Data centre operators have made large investments in their cooling infrastructure, so many are exploring hybrid approaches to provide a path forward for new and legacy sites. Targeting the application to the cooling solution according to the power requirements for specific loads would allow data centres to provide customers with the energy efficiency required for HPC environments, while ensuring lower energy load to remain competitive and increase overall site energy effectiveness.

The need to handle, manipulate, communicate, store and retrieve data efficiently is moving processing capacity closer to the user than ever before. IT computing loads at the edge are usually required to operate reliably in locations not built specifically for IT equipment. Whether it is indoors around people, or in harsh external environments, the equipment needs to be purpose-built for edge computing.

Precision liquid cooling is enabling new opportunities. The sealed chassis form factor provides the same kind of protected environmentally-controlled conditions found in a data centre facility. It is designed to withstand all types of IT environments with minimal impact on its local surroundings. Organisations can also more easily achieve their net zero targets, as precision liquid cooling captures greater than 95% of server heat inside the chassis, significantly reducing energy costs and emissions associated with server cooling. There is also negligible water consumption, as cooling water is supplied to servers at up to 40°C, meaning additional adiabatic cooling on the heat rejection is rarely required.

As with any technology evolution, it is important for data centre operators to understand its impact on their facilities. Liquid cooling is rapidly becoming the solution of choice to efficiently and cost-effectively accommodate increasing data centre workloads. In order to move towards greater sustainability, the time to embrace new technologies is now.

Iceotope, iceotope.com

Heatwaves are changing the risk appetite for data centre operators when thinking about a safe operating temperature, writes Luke Neville, Managing Director, i3 Solutions Group.

More extreme weather patterns resulting in higher temperature peaks, such as the record 40°C experienced in parts of the UK in the summer of 2022, will cause more data centre failures. However, while it is inevitable that data centre failures will become more common, establishing a direct cause and effect is difficult as factors to consider include the growing number of sites and an aging data centre stock that will statistically increase the number of outages.

What the increasing peak summer temperatures are doing is shifting the needle and changing conversations both about how data centre cooling should be designed and what constitutes ‘safe’ design and operating temperatures. Since the beginning of the modern data centre industry over two decades ago, the design of data centre cooling system capacity has always been a compromise of installation cost vs. risk.

Designers sought to achieve a balance whereby a peak ambient temperature and level of plant redundancy is selected so that should that temperature be reached, the system has the capacity to continue to support operations. The higher the peak ambient design temperature selected, the greater the size/cost of the plant, with greater resilience meaning further cost for redundant plant. It came down to the appetite for risk versus the cost for the owner and operator. It is a fact that whenever the chosen ambient design temperature is exceeded, the risk of a failure will always be present and increases with the temperature.

ASHRAE publishes temperatures for numerous weather station locations based on expected peaks over 5-, 10-, 20- and 50-year periods. Typically, the data for the 20-year period is used for data centre ambient design.

However, this is a guideline only and each owner/operator chooses their own limit based on what they feel will reduce risks to acceptable levels without increasing costs too much. Hotter summers have seen design conditions trending upwards over the last 20 years.

Legacy data centres were traditionally aligned with much lower design temperatures of, say, 28-30°C, rising latterly to accepted standard design conditions of 35-38°C. Systems were often selected to operate past these points, even up to 45°C (based on the UK – all other regions will have temperatures selected to suit the local climate).

The new record temperatures of +40°C in the UK will sound the warning bell to some data centre operators who may find themselves in a situation where dated design conditions, aging plant and high installed capacity will result in servers running at the limits of their design envelope.

All systems have a reduced capacity to reject heat as the ambient temperature increases and also have a fixed limit, irrespective of load, at which they will be unable to reject heat. Should these conditions be reached, failure is guaranteed.
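The relationship between the ambient design temperature, the plant's fixed limit and the resulting risk can be expressed as a simple three-way check. This is a sketch under stated assumptions: the function name, the category labels and the example values (a 38°C design point and a 45°C plant limit, taken from the UK ranges quoted above) are illustrative, and a real assessment would also account for load and plant condition.

```python
def classify_ambient(ambient_c, design_c, plant_limit_c):
    """Classify an ambient temperature against a site's cooling design.

    design_c is the chosen peak ambient design temperature; plant_limit_c is
    the fixed point at which the plant can no longer reject heat.
    """
    if plant_limit_c <= design_c:
        raise ValueError("plant limit must be above the design temperature")
    if ambient_c <= design_c:
        return "within design conditions"
    if ambient_c < plant_limit_c:
        return "above design conditions: risk of failure increases"
    return "at or beyond plant limit: heat rejection fails"


if __name__ == "__main__":
    # Hypothetical UK site: 38°C design point, plant selected to run to 45°C.
    for ambient in (35.0, 40.0, 45.0):
        print(f"{ambient:.0f}°C -> {classify_ambient(ambient, 38.0, 45.0)}")
```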

More commonly, low levels of actual load demand versus the system’s design capability mean, typically, data centres never experience conditions which stress the systems. However, that requires confidence that IT workloads are either constant, 100% predictable, or both.

For now, the failure of data centre cooling is most likely to be the result of plant condition impacting heat rejection capacity rather than design parameter limitations. This was cited as the root cause of one failure during the UK’s summer heatwave when it was stated that cooling infrastructure within a London data centre had experienced an issue.

Coupled with an increase in data centre utilisation, should temperatures outside the data centre continue to rise, this will change.

Inside the data centre, the increasing power requirements of modern chip and server designs also mean heat could become more of an issue. Whatever server manufacturers say about acceptable ranges, it has traditionally been the case that data centres and IT departments remain nervous about running their rooms at the top end of the temperature envelope. Typically, data centre managers like their facilities to feel cool.

To reduce the burden on power consumption and the oversizing of plant, it is common to integrate evaporative cooling systems within the heat rejection. However, there has been much focus recently on the quantity of water used by data centres and its impact on the sustainability of such systems. Whilst modern designs can allow for vast water storage systems and rainwater collection/use, should summers continue to get longer and drier, more mains water use will be required to compensate, and the risk of supply issues impacting the operation of the facility will increase.

In every sense, a paradigm shift away from cooling the technical space towards a focus on cooling the computing equipment itself may present the answer.

The adoption of liquid cooling systems, for example, can eliminate the need for both mechanical refrigeration and evaporative cooling solutions. In addition to some environmental benefits and a reduction in the number of fans at both room and server level, liquid cooling will help increase reliability and reduce failures generally, as well as at times of extremely high temperatures.

Liquid cooling seems to be gaining traction. For example, at this year’s Open Compute Summit, Meta (formerly Facebook) outlined its roadmap for a shift to direct-to-chip liquid-cooled infrastructure in its data centres to support the much-heralded metaverse. However, one of the limitations of liquid cooling designs is that they leave little room for manoeuvre during a failure, as resilience can be more challenging to incorporate into these systems.

But for now, without retrofitting new cooling systems, many existing data centres will have to find ways to use air and water to keep equipment cool. And as the temperatures continue to rise inside and outside the facility, so too will the risks of failure.

Tim Goodwill, Sales Director at G4S Fire and Security Systems, discusses why making sure that physical security arrangements are the best that they can be should be something that most of us look for.

From front gates that deter burglars, to locking doors and windows, to testing smoke alarms, we ensure that our properties and contents are safe. Doing the same for data centres shouldn’t be any different.

Tim says that when he is asked to provide a risk assessment for data centres, one of the first things he talks about is ‘The Six Degrees of Separation’ - combining technology-enabled security and life safety system capabilities, alongside guarding, monitoring and response services. Couple this with effective cyber security to manage risks.

The perimeter is usually the first line of defence against an external attack, so the primary goal is to achieve the three Ds of security: deter, detect and delay.

A good security system should offer high deterrence and increase the time and effort required to breach it. Linking perimeter security systems with video monitoring, intruder detection or access control means alarms can be immediately validated. In addition, unusual motion or activity can be identified before a breach to ensure a proactive approach.

The second layer is the clear space between the perimeter and the building entrance. It enhances the opportunity to delay access should a breach occur at the perimeter. At this stage, a data centre would use a Visitor Management System (VMS) to protect its facilities, people and assets.

Located after the reception/visitor’s area, but before the plant and computer rooms, are the common and circulatory areas. This layer aims to further qualify access through multiple forms of verification and monitoring.

The Security Operations Centre (SOC) is the heart of the data centre for physical security, the command and control of all security technologies deployed at the facility. The SOC may include cyber security teams who monitor, detect, analyse and respond to cyber security incidents. They aim to thwart cyber threats as quickly as possible, and plan so that similar incidents do not recur.

This layer gives access to the network critical infrastructure - often called the grey space - housing the plant, equipment rooms, generators and UPS that support the critical power, cooling and network equipment.