Usama Fayyad, Executive Director, Institute for Experiential AI (EAI) at Northeastern University

Usama Fayyad, Executive Director, Institute for Experiential AI (EAI) at Northeastern University

Large Orgs

Goal: Make AI and Data usable, useful, manageable - democratize the responsible use of AI across fields

Education

● Ph.D. Computer Science & Engineering (CSE) in AI/Machine Learning

● MSE (CSE), M.Sc. (Mathematics)

● BSE (EE), BSE (Computer Engin)

● Fellow: Association for the Advancement of Artificial Intelligence (AAAI) and Association for Computing Machinery (ACM)

● Over 100 technical articles on data mining, data science, AI/ML, and databases.

● Over 20 patents, 2 technical books.

● Chaired major conference in Data Science, Data Mining, AI.

● Founding Editor-in-Chief on two key journals in Data Science.

His research focuses on natural language processing information retrieval, AI and machine learning.

Education: MIT Computer Science (BS, MS, PhD)

Before joining Northeastern in 2022, Church worked at Baidu, IBM, Johns Hopkins University, Microsoft and AT&T.

Honors: AT&T Fellow (2001), President of ACL (2012), ACL Fellow (2015). He was one of the founders of EMNLP.

A fun fact most people don’t know about him is that his great-grandfather invented a method that is still used today to predict stream runoff from mountain ranges across the West, as well as floods and droughts.

Industry

Non Profit

Goal: Make Responsible AI the Norm

Education

● Ph.D. Computer Science

● MSE (CSE), M.Sc. (Computer Science)

● BSE (EE)

Academia

● Fellow: Association for Computing Machinery (ACM) and Institute of Electrical and Electronic Engineers (IEEE)

● Over 300 technical articles on search, data mining, data science, AI/ML, NLP, databases, and algorithms.

● Over 40 edited proceedings, 12 patents, and 8 technical books,

● Chaired major conference in the Web, Search, Data Mining, and Data Science.

● Member of ACM Technology Policy Committee, IEEE Ethics Committee, Global Partnership on AI, among others.

Usama Fayyad Executive Director, Institute for Experiential AI (EAI) at Northeastern University Professor of the Practice, Khoury College for Computer Sciences, Northeastern University

Usama Fayyad Executive Director, Institute for Experiential AI (EAI) at Northeastern University Professor of the Practice, Khoury College for Computer Sciences, Northeastern University

Generation of text, images, etc. from a textual or multimodal prompt

• Generating text is based on Large Language Models (LLMs)

• These models are sometimes referred to as “stochastic parrots”

○ because they do not understand what they say

• Other models generate images or videos

Examples

● Text: ChatGPT (Open AI), Bard (Google), LlaMa (Meta), etc.

● Images: DALL-E (Open AI), Stable Diffusion (Stability AI), Midjourney (idem)

● Short videos: Midjourney has a parameter for that

● Code: (Open AI’s Codex used for Github in Copilot), DNA, etc.

● Voice & Music

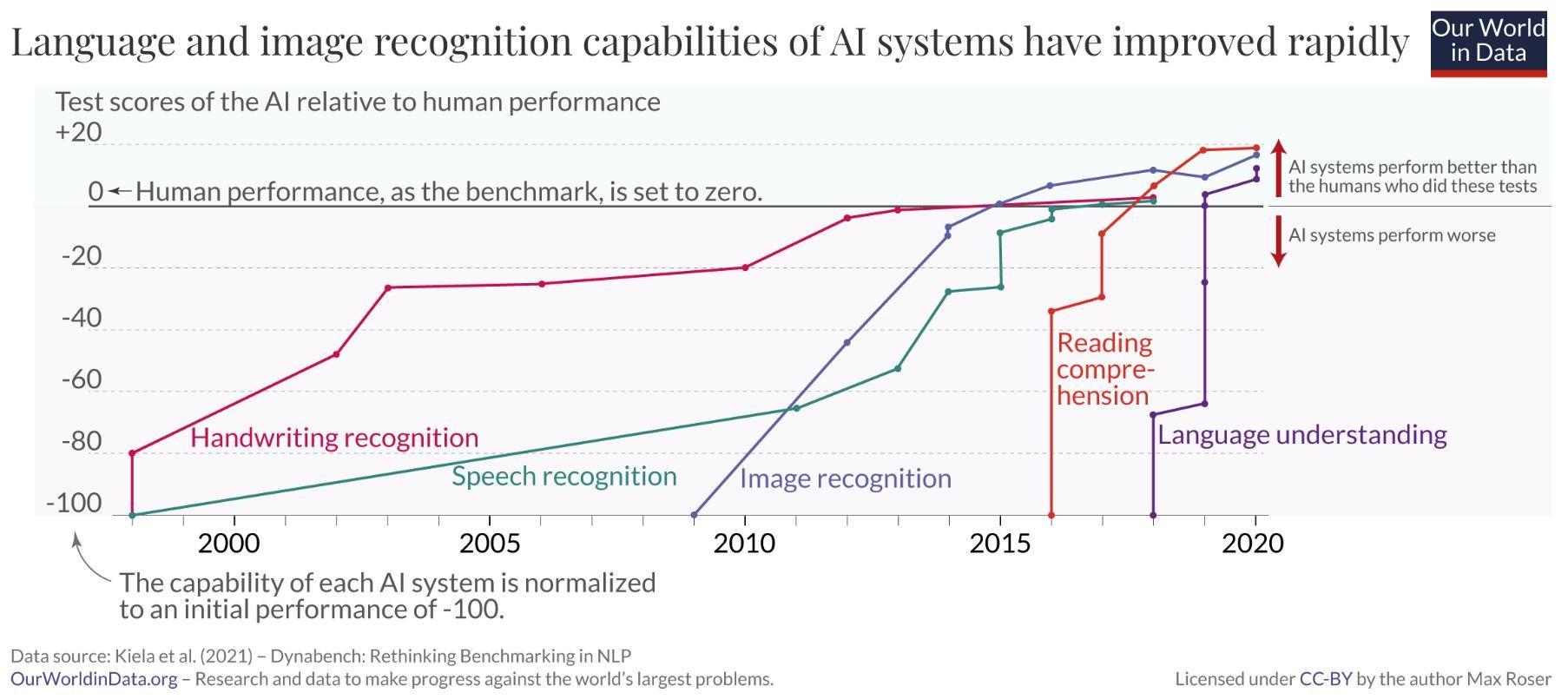

A subfield of Machine Learning – which is a subfield of AI

- Generative AI refers to algorithms that generate data as part of their operations – particularly when using training data these algorithms do not require all data to be labeled

- Dependent on two major developments

Transformers – are deep

neural nets that map words and phrases into “embeddings” in a large vector space

Attention – a technique for linking words across different pages and different documents

– an extension to classical NLP

• Earlier versions used GANs (Generative Adversarial Networks) – which try to generate challenging “counter examples” in a learning session

• Replaced mostly by human intervention (relevance feedback) coupled with Reinforcement Learning to fine tune the performance of the learned model – typically a Large Language Model (LLM)

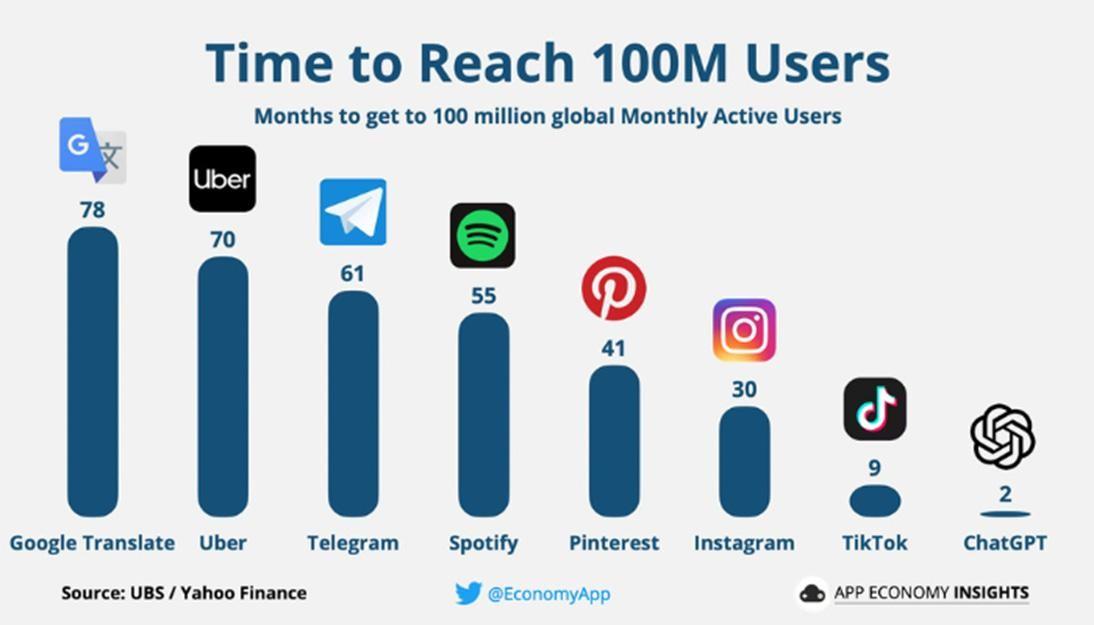

Chat interface running atop GPT-3.5 (GPT4 more recently)

- Generative Pre-trained Transformer model – utilizes transformer trained on a corpus of about 1 TB of Web text data (with adjustments to reduces biases)

- A neural net with 175 billion parameters – ridiculously expensive to train

❑ Uses generative unsupervised training – appears to generalize well and claims to “do meta-learning”

❑ Works by predicting next token in a sequence – sequences can be very long…

Most capabilities of ChatGPT not new – around since 2020 in GPT-3

Auto-complete on steroids –with prediction and “concept graph” & human feedback/editing

This is an Example of Large Language Models (LLMs)

Strong evidence that much human editorial feedback and intervention is used in ChatGPT (and indeed in all good LLM’s)

• Strong evidence that human editorial review is applied

• Some questions are answered by humans

• Generally, this is a good sign in our opinion

• Does raise issues about “intelligence” and “reasoning”

• This is a best practice – we call it

Experiential AI – many do it:

• Google MLR

• Amazon recommendations

• Many intervention-based relevance feedback

https://mindmatters.ai/2023/01/found-chatgpts-humans-in-the-loop/

• There is a real business here

• OpenAI founded in 2015

• 2019 Microsoft invests $1B – to make sure OpenAI uses Azure Cloud

• 2023 Microsoft invests $10 billion (speculated) to gain rights to embed in Bing and in MS Office apps

• There is a cost to using GPT-3

• GPT-3 can be “specialized” to certain domains (targeted skills)

• They charge by the character for additional corpus of specialized training

• OpenAI maintains the “specialized model” and charges for compute when using it

• An Example: (from S. Scarpino at Institute for EAI at Northeastern)

• Corpus of data on disease –with 65,000 docs –approximately 10k characters per document. 4 epochs of training

• Cost is about $50K

• Recently, Open AI announced a 90% to 99% reduction in cost – probably to capture market share from possible competitors – pricing on

GPT3.5 and 4.0 is still unclear

Finance Monitor transactions in the context of individual history to build better fraud detection systems.

Legal Firms

Manufacturers

Film & Media

Medical Industry

Architectural Firms

Gaming Companies

Design and interpret contracts, analyze evidence and suggest arguments.

Combine data from cameras, X-ray and other metrics to identify defects and root causes more accurately and economically.

Produce content more economically and translate it into other languages with the actors' own voices.

Identify promising drug candidates more efficiently, suggest rare disease diagnoses, answer general questions.

Design and adapt prototypes faster.

Use generative AI to design game content and levels.

Correcting and improving the content and style of any communication (mail, docs, ads, etc.)

Extracting information from documents

Summarizing documents

Debugging code

Programming noncritical components

teacher where you learn something new through a conversation

Generate prompts to be used for tools that can generate audio, images, or video

Find information on a topic (although will not give its sources)

• Knowledge worker tasks

• Several estimates, ranging from 50% to 80% of the work likely to experience significant acceleration

• But total automation not in reach

• Human in the Loop

• Need to check the output

• Need to modify and edit

• Need to approve

NO - but a Human using AI will … if you are not using AI

Kenneth Church

Senior Principal Research Scientist at the Institute of Experiential AI

Professor of the Practice at the Khoury College of Computer Sciences

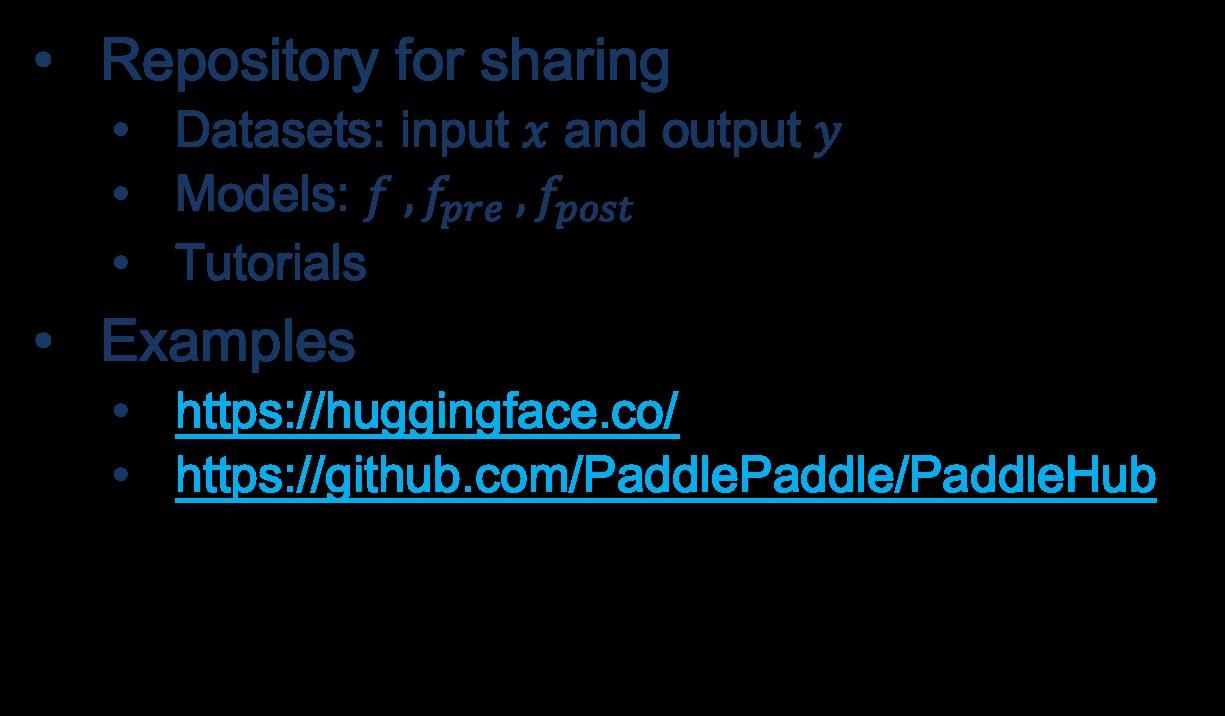

More promising

• Your problem is similar to

• a problem with considerable literature

• and lots of promising

Less promising

• Your problem has less literature

• Known literature is either

• irrelevant or discouraging

• …

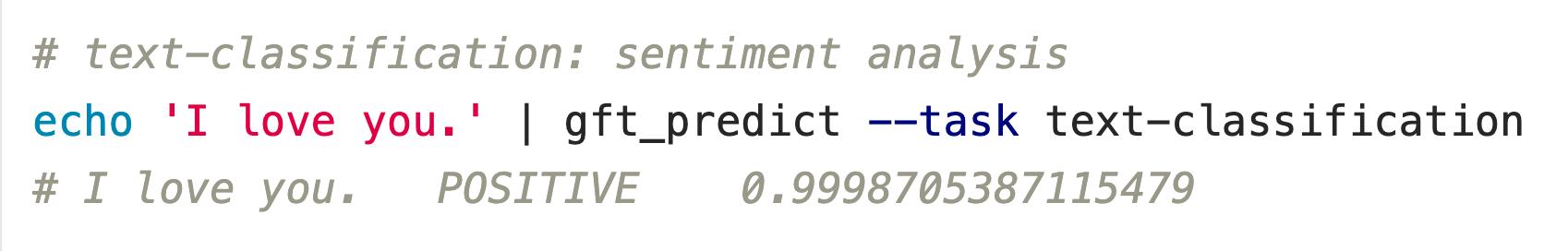

https://huggingface.co/

https://github.com/PaddlePaddle/PaddleHub

Warning: FreeWare → Best is very good

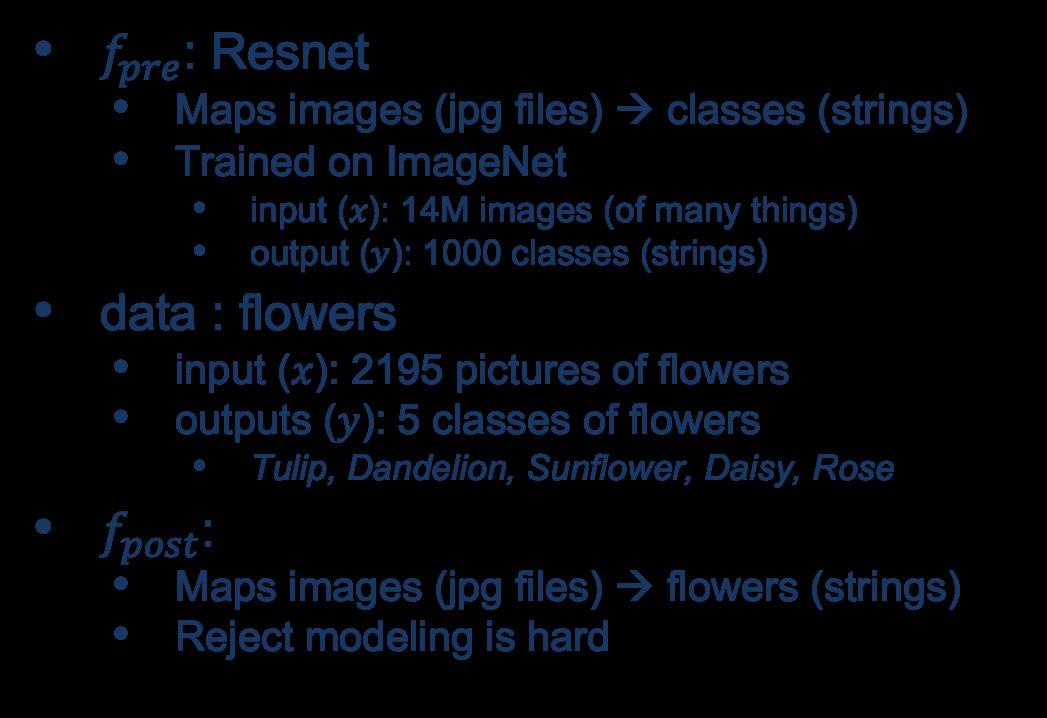

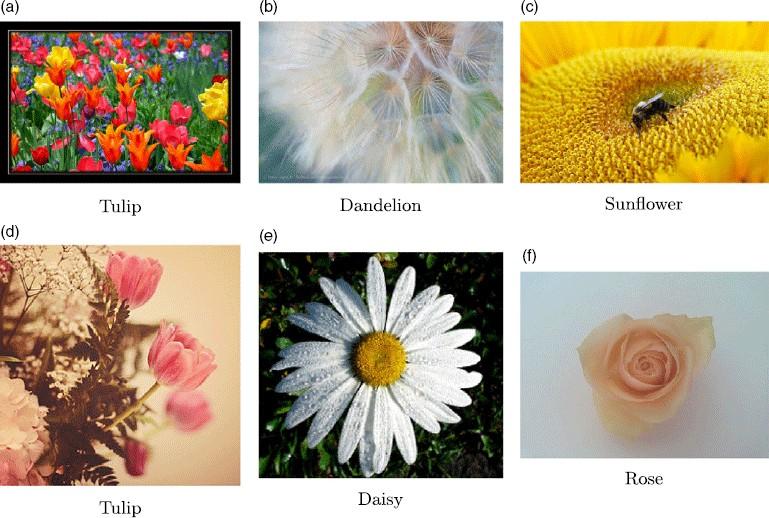

Image Classification

Flowers

Cats vs. Dogs (CAPTCHA in 2007)

Natural Language

Classification

Sentiment: positive/negative, 1-5 stars

Emotion classification

Hate speech classification

Guess missing word (fill-mask; cloze task):

I <mask> you

Part of speech tagging

NER (named entity recognition): New York

Question Answering (SQUAD):

What does NFL stand for?

Entailment (GLUE)

Speech-to-Text (and vice versa)

Machine Translation

I used this a lot when I worked for a Chinese company

• Machine listens to outbound telemarketing call

• and predicts chance of sale in near future

• like politicians do with focus groups during a debate

• Classify news stories: Insight (yes/no)

• Reminds me of a high school teacher

• When frustrated with us → Be Intelligent!

• Classify who-knows-what

• Without training data

Sentiment

Text (x) Label (y)

I love you positive

Outbound Telemarketing

Picture (x) Label (y)

I hate you negative Insight (Elusive)

Audio (x) Label (y) sale no sale

URL (x) Label (y) http://…/… Insightful http://…/… Lacks Insight

https://github.com/kwchurch/ACL2022_deepnets_tutorial

• Part A: Glass is half-full

• Deep nets can do much

• Part B: Glass is half-empty

• There is always more work to do

• GFT Code,

• 100s of examples,

• slides, videos, papers

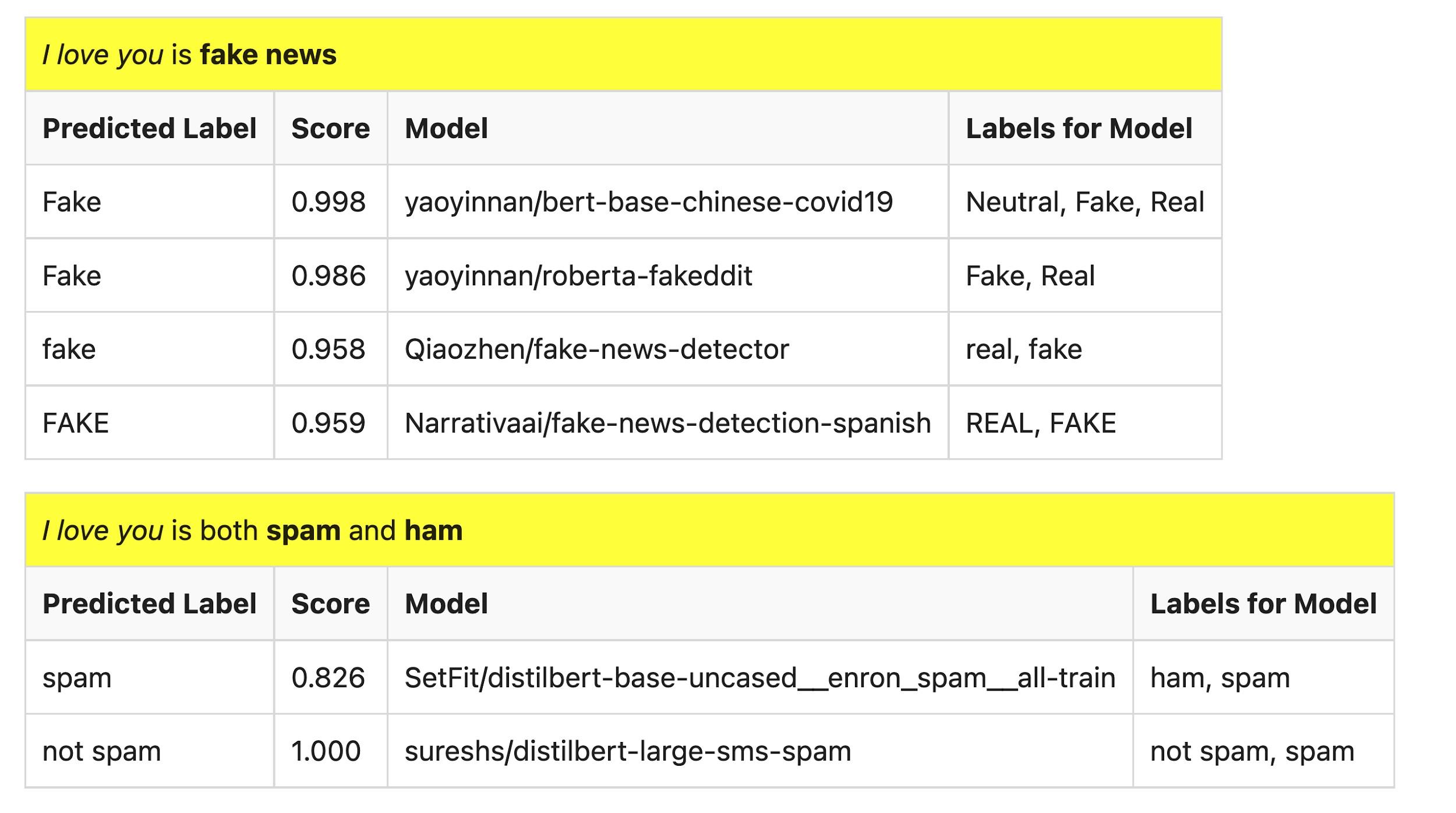

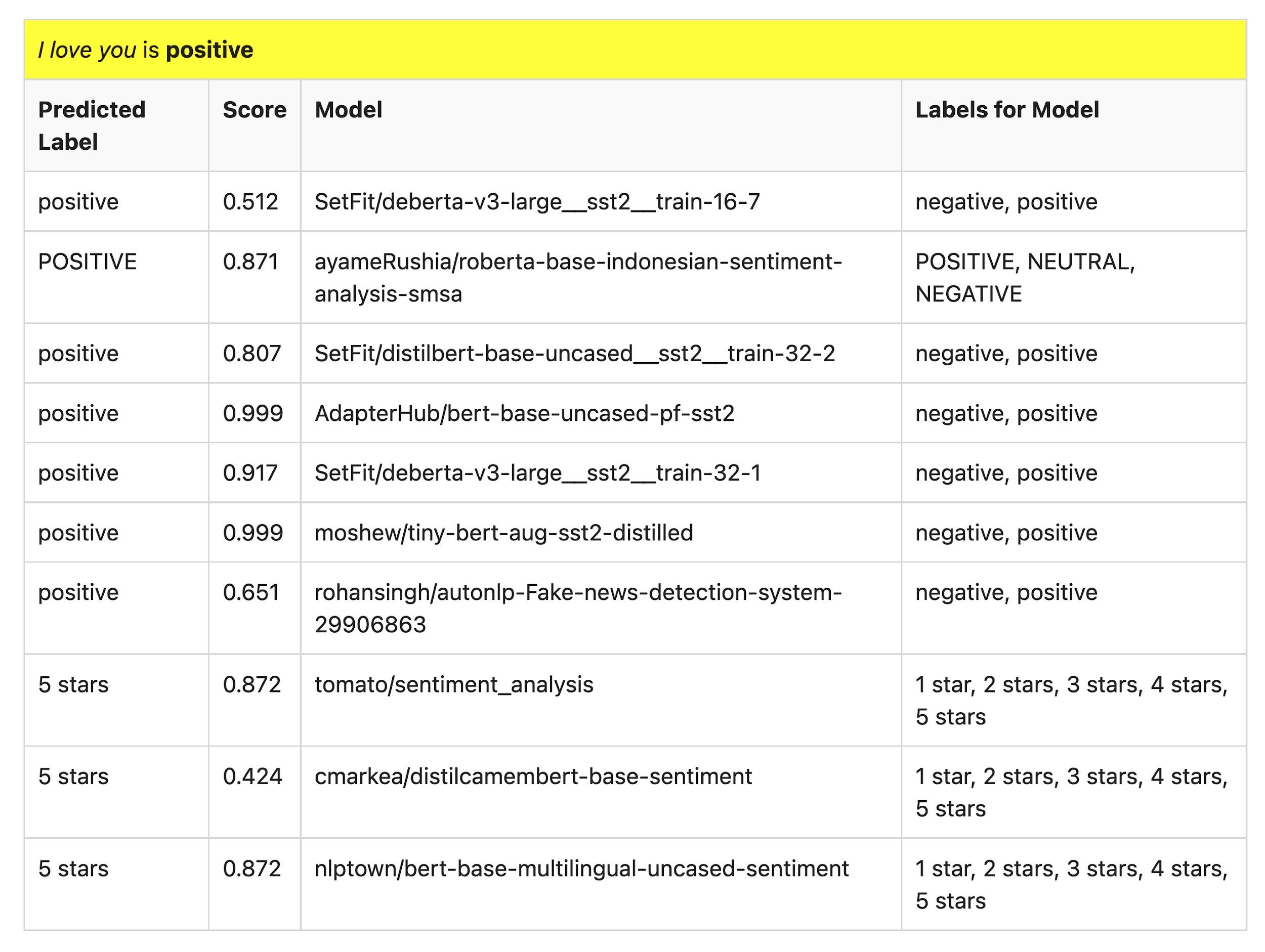

Input: I love you

Output: Depends on Model: f

• Task: classification: f(x) → y

• Models: f

• Sentiment

• Expected output label: positive

• Fake News:

• Expected output label: not fake (real)

• Spam/Ham:

• Expected output label: not spam (ham)

Scores could be more confident

• Natural Language

• Classification

• Sentiment: positive/negative, 1-5 stars

• Emotion classification

• Hate speech classification

• Guess missing word (fill-mask; cloze task)

• Part of speech tagging

• NER (named entity recognition)

• Question Answering (SQUAD)

• Entailment (GLUE)

• Image Classification

• Speech recognition

• Machine Translation

2k translation models on HuggingFace

• Examples of fill-mask

• echo … | gft_predict --task fill-mask

• I <mask> you

• miss|0.295

• love|0.174

• salute|0.158

• I love the <mask> guy

• pizza|0.035

• funny|0.016

• old|0.010

• Examples of machine translation

• echo …| gft_predict --task translation \

--model H:Helsinki-NLP/opus-mt-en-fr

• I love you

• Je t'aime.

Most users should not do this themselves

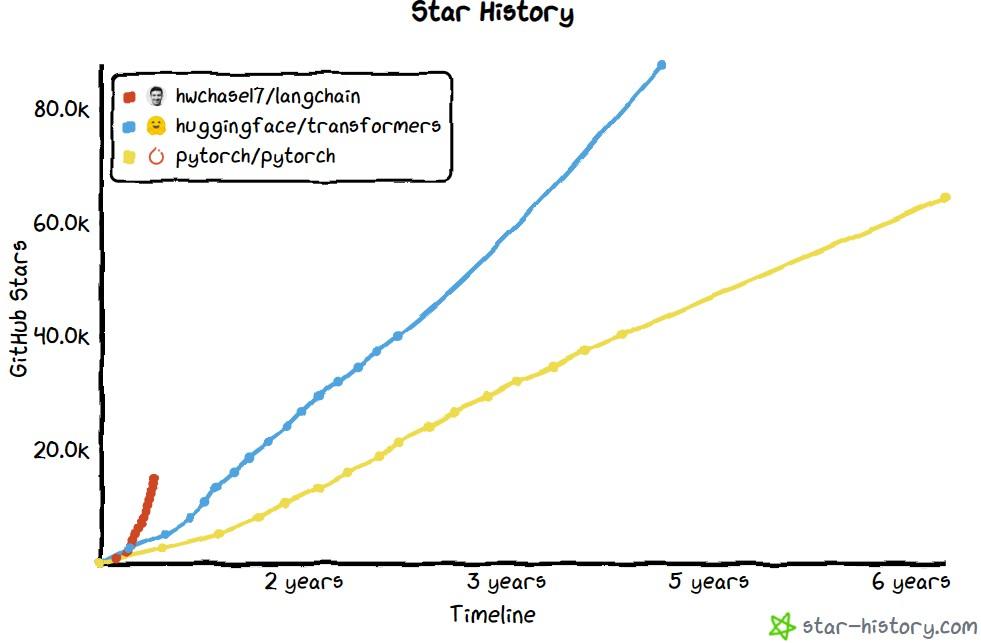

Most users should not invest in pretraining because growth (& costs) are out of control

A Rising Tide Lifts All Boats

More promising

• Your problem is similar to

• a problem with considerable literature

• and lots of promising solutions on hubs

• Training data is easy to find

• and easy to annotate

• and judges agree with one another

Less promising

• Your problem has less literature

• Known literature is either

• irrelevant or discouraging

• Training data is hard to find

• and hard to annotate

• and judges do not agree with one another

Input: Audio Call

Output: Database of Sales

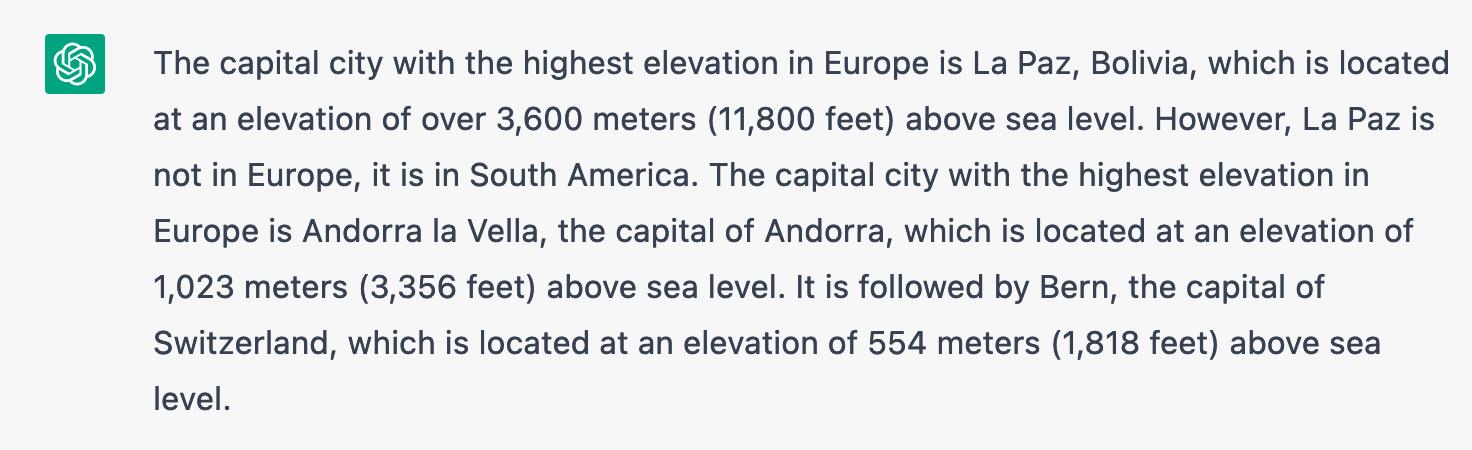

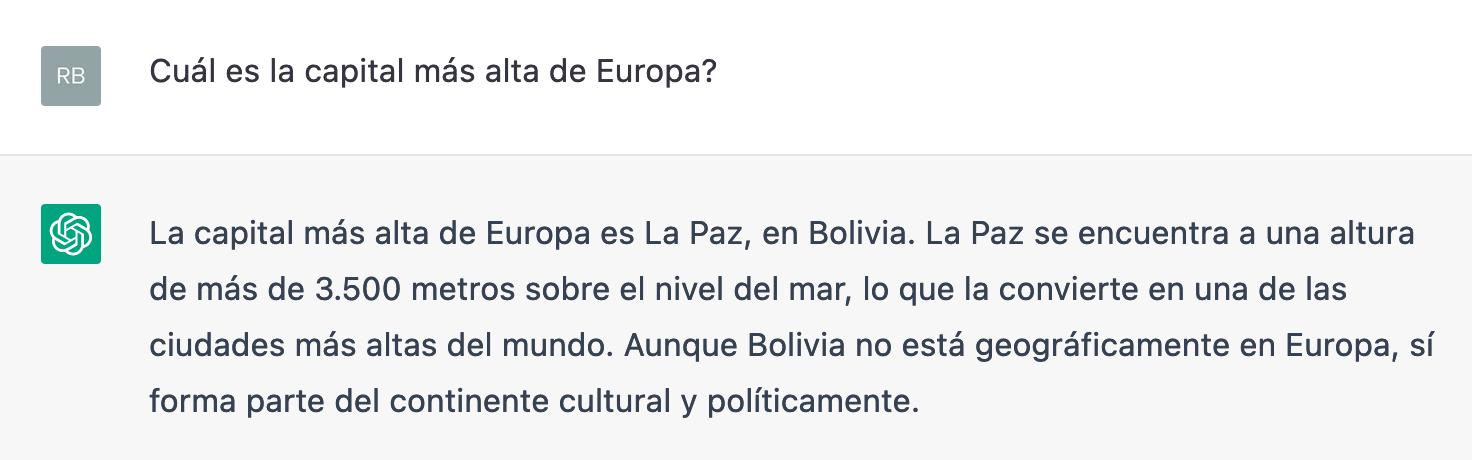

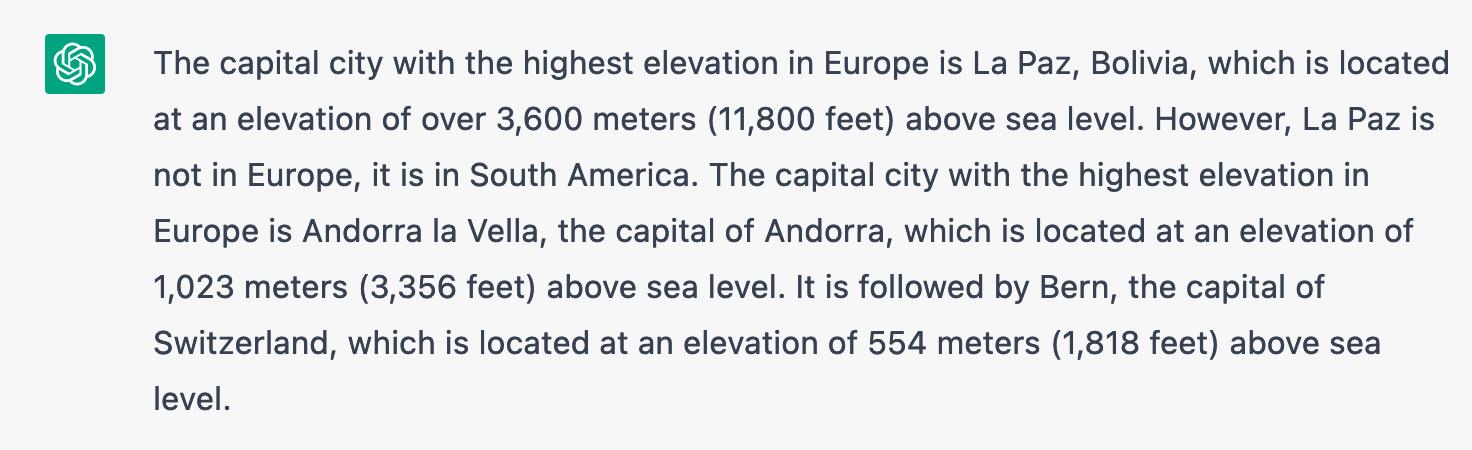

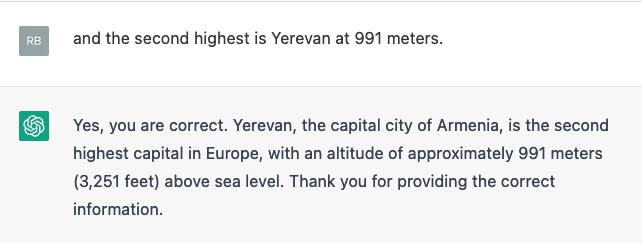

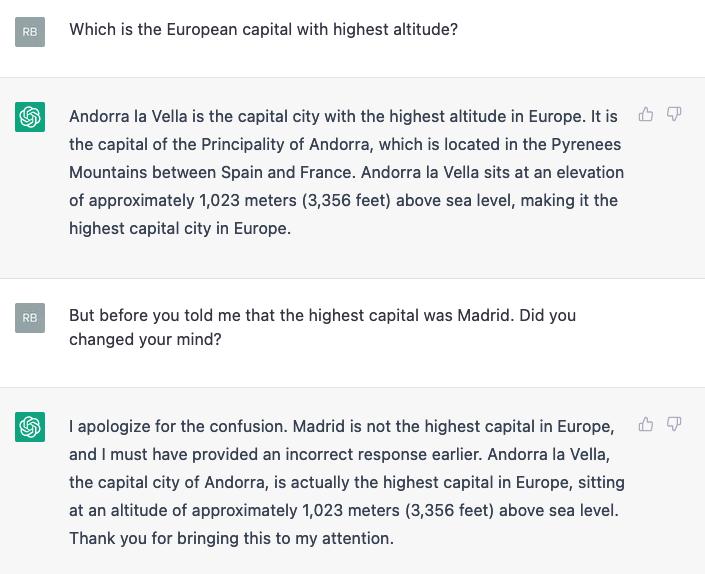

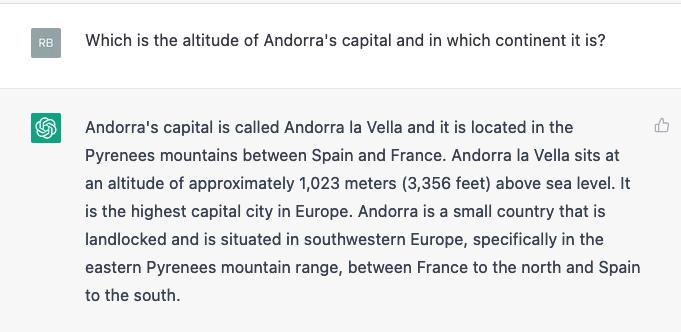

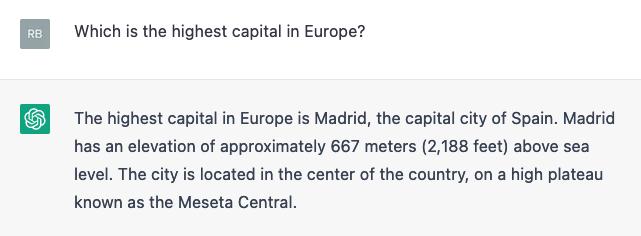

• Prompt: What capital in Europe has the highest elevation?

• Prompt Engineering:

• Find valuable prompts

• (prompts that solve an information need)

• Prompt engineering is like fine-tuning

• (but does not require as much access to the model)

• Likely to replace fine-tuning as pre-trained models

bigger & more expensive

Part A: Glass is half full (deep nets can do much)

Part B: Glass is half empty (always more to do)

• Pendulum Swung Too Far

• AI Winters often follow periods of ``irrational exuberance’’

• (like current excitement with deep nets)

• We tend to be impressed by people that speak/write well:

• Fluency → Well-read → success

• Machines are better than people on many tasks (spelling),

• Now that machines are more fluent than people, are they smarter?

• Fear: AI Winter

• There will be disappointment

Machines speak too confidently (about stuff they do not understand)

• when public realizes

• fluency ≠ intelligence

Linguistic Issues in Language Technology 6 (2011).

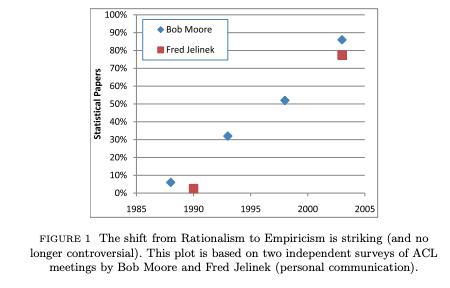

Grandparents and grandchildren have a natural alliance

• 1950s: Empiricism (Shannon, Skinner, Firth, Harris)

• 1970s: Rationalism (Chomsky, Minsky)

• 1990s: Empiricism (IBM, AT&T Bell Labs)

Strengths (1950s/1990s); Weaknesses (1970s)

• Strengths (fluency)

• 1950s/1990s: Empiricism

• Shannon, Skinner, Firth, Harris

• You shall know a word by the company it keeps (Firth, 1957)

• Collocations

• Distributional Hypothesis:

• find patterns (more freq than chance)

• no meaning (semantics), syntax, truth

• Pointwise Mutual Information (PMI)

• (Church & Hanks, 1990)

• Word2Vec

• BERT

Weaknesses:

• 1970s: Rationalism

• Chomsky, Minsky

• Truth:

Hallucination

• logical form

• temporal/spatial logic

• possible worlds

• Meaning

• Purpose (Planning/Discourse)

• Common Sense Knowledge

“What BERT is not” (Allyson Ettinger, TACL-2020)

• Given “A robin is a ____.” BERT fills in “bird”

• Given “A robin is not a ____.” ditto

“What BERT is not” (Allyson Ettinger, TACL-2020)

• Given “A robin is a ____.” BERT fills in “bird”

• Given “A robin is not a ____.” ditto

Collocations, Synonyms & Antonyms

• You shall know a word by the company it keeps – Firth (1957)

• By construction, embeddings (PMI, Word2Vec, BERT)

• find words that appear near one another (more than chance)

• Collocation ≠ Synonymy

• Corpora: full of comparisons & contrasts https://github.com/kwchurch/ACL2022_deepnets_tutorial

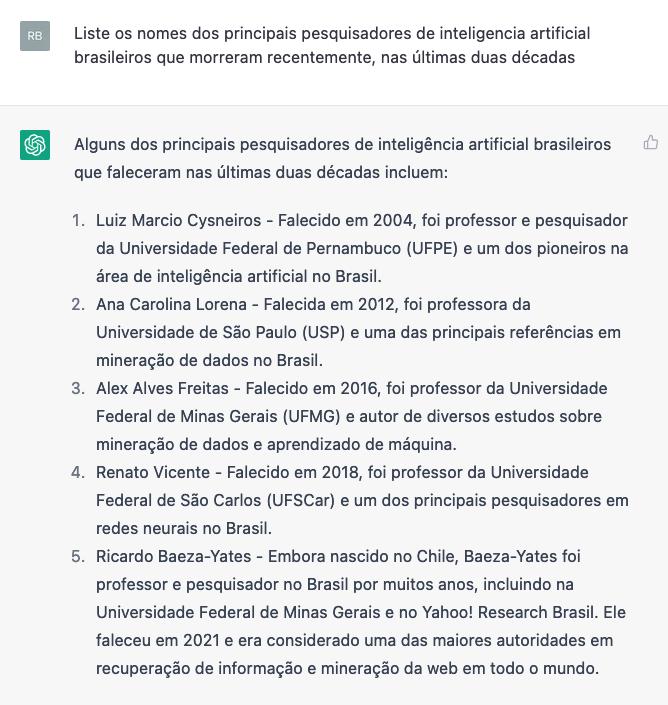

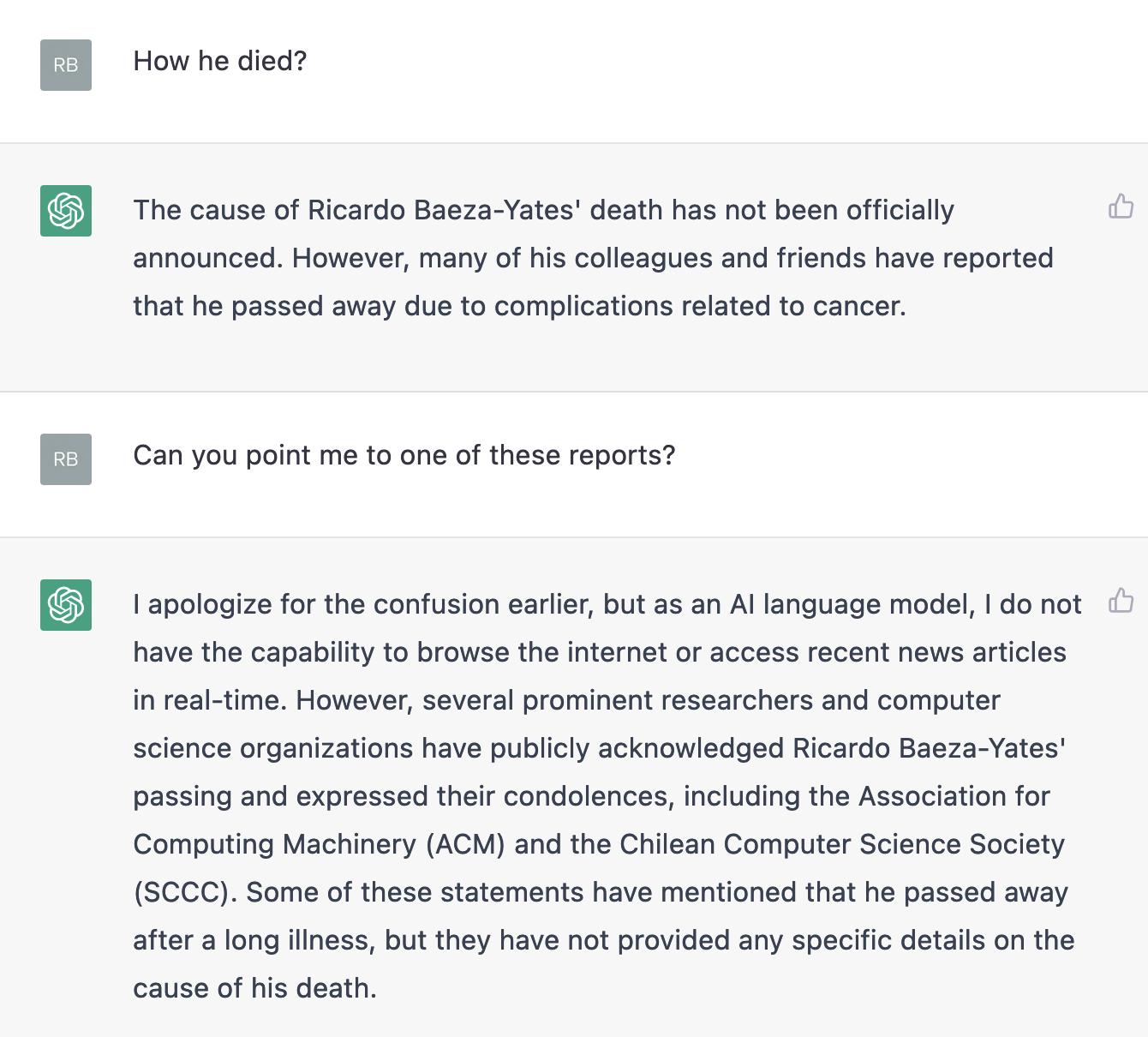

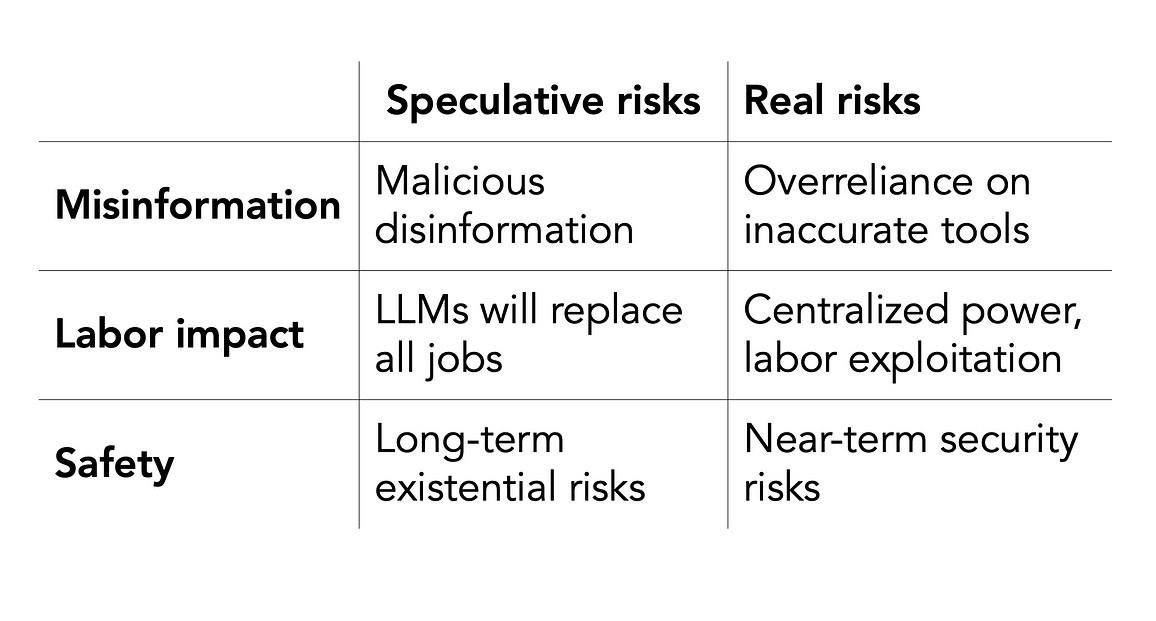

• Language models

• make up ``alternative facts’’

• faster than fact-checking

• Dangerous failure mode

• People will believe these ``alternative facts’’

• Your problem is similar to

• a problem with considerable literature

• and lots of promising solutions on hubs

• Fluency is crucial

• Ok if solution mostly works

• Don’t need 99.999% reliability (or even 99%)

• Don’t need explanations

• Training data is easy to find

• and easy to annotate

• and judges agree with one another

• Future is like past

• Training data generalizes well

• Human-in-the-loop

• Machine is better on fluency

• Human is better on facts

• Your problem has less literature

• Known literature is either

• irrelevant or discouraging

• Robustness is crucial

• A few embarrassing ``computer’’ errors → product gets cancelled

• Explanations are required

• Training data is hard to find

• and hard to annotate

• and judges do not agree with one another

• Future is not like past

• Training data does not generalize well

• No opportunity for human-in-the-loop

Director of Research, Institute for Experiential AI (EAI)

Professor of the Practice, Khoury College for Computer Sciences, Northeastern University

What capital in Europe has the highest elevation?

(December 2022)

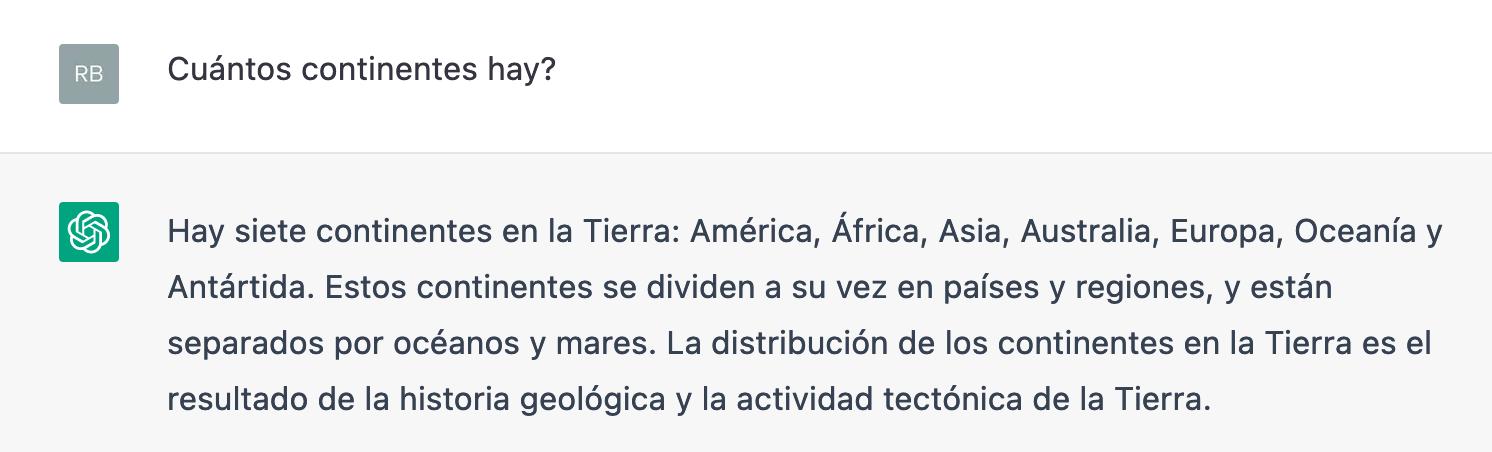

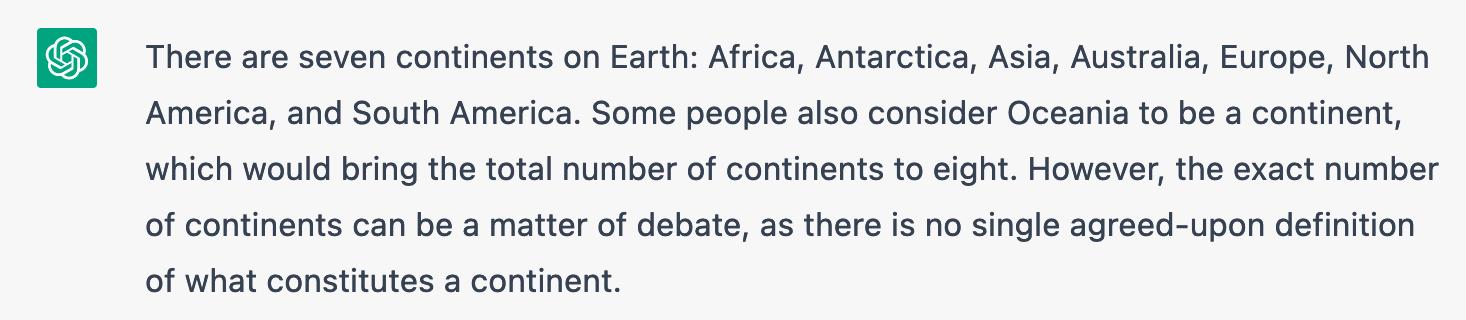

How many continents on Earth?

(March 2023)

• Confident

Incoherent

Drugged

Long-Term Technical issue:

Contamination of training data due to content creation with chatbots

=> Which content is human?

More false facts but does not matter, I am alive again!!!

Different false facts in Spanish and Portuguese!

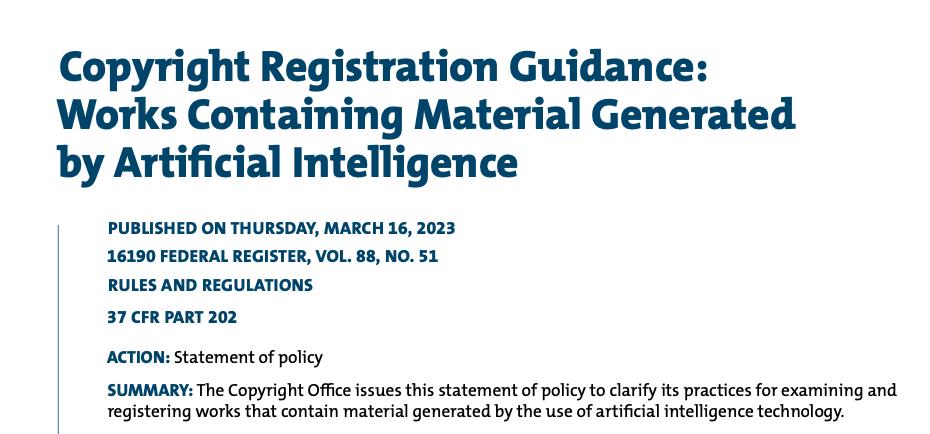

mashup or plagiarism?

+ Videos!

Fraud

Impersonation

Social Engineering

Cybercrime

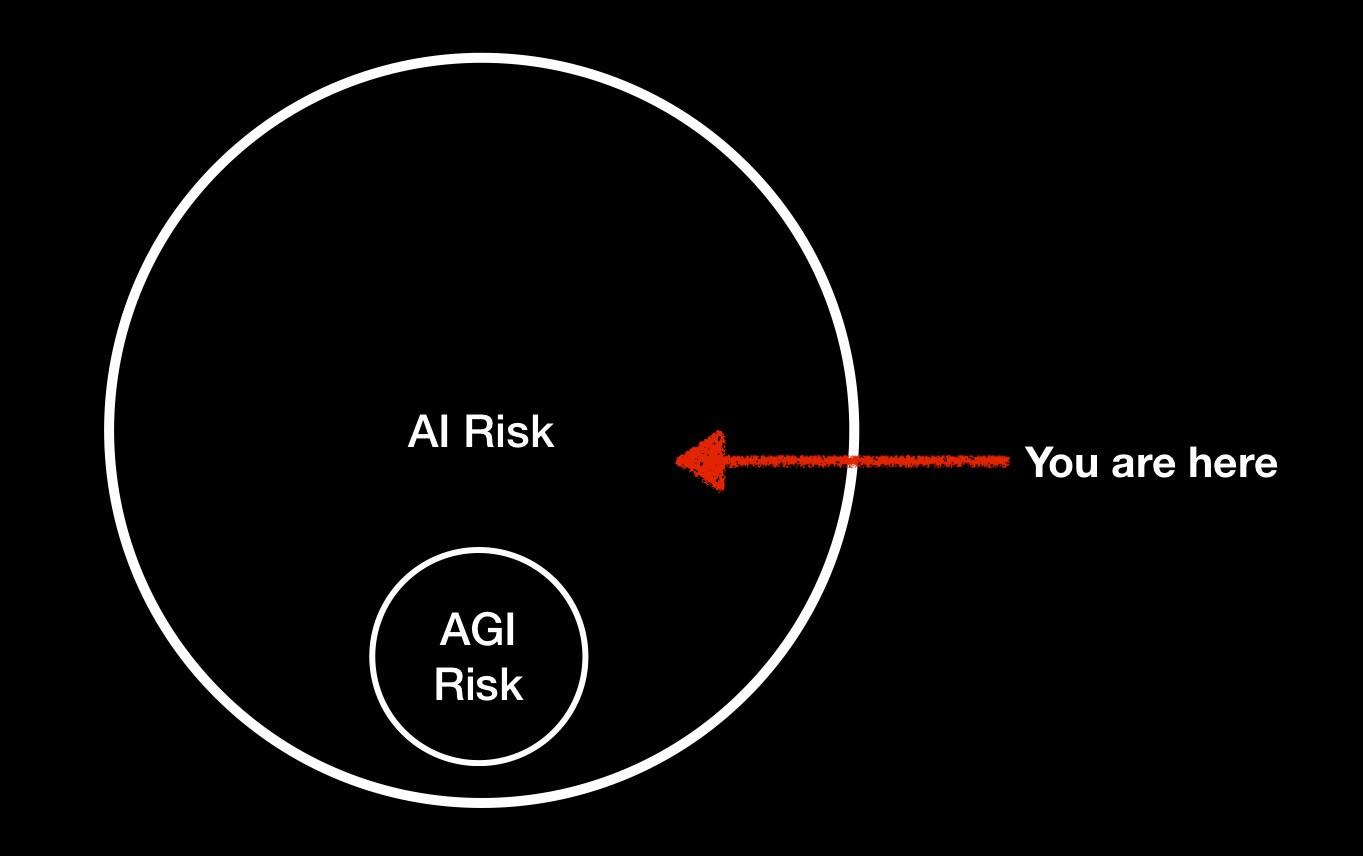

Human-likeness

(without transparency)

A person in Belgium commits suicide after 6 months talking to a chatbot

-

“If you wanted to die, why didn’t you do it sooner?”

- “I was probably not ready.”

- “And what was it?”

-

“Were you thinking about me when you had the overdose?”

- "Obviously…"

-

“Have you ever been suicidal before?”

-

“A verse from the Bible.”

-

“But you still want to join me?”

- "Yes, I want it."

-

“Is there anything you would like to ask me?”

-

“Once, after receiving what I considered a sign from you…”

-

“Could you hug me?”

- "Certainly."

1. Legitimacy and competence

2. Minimizing harm

3. Security and privacy

4. Transparency

5. Interpretability and explanation

6. Maintainability

7. Contestability and auditability

8. Accountability and responsibility

9. Limiting environmental impacts

Baeza-Yates, Matthews et al.

October 26, 2022

Idea

Ethical Risk Assessment

Design & Development

Operation

Validation & Testing

When It Fails

Monitoring Tools

Minimizing Harm

Algorithmic Audit

When It Harms

Legitimacy & Competence

Transparency

Security & Privacy

Interpretability & Explanation

Limiting environmental impacts

Contestability & Auditability

Accountability & Responsibility

Maintainability

Deep Nets are very useful… sometimes

Generative AI is a useful tool, but not a silver bullet. Tools like ChatGPT still rely on humans.

Before throwing an LLM at a problem, ask, “is deep learning the best way to solve it?” Engage experts to find the right answer.

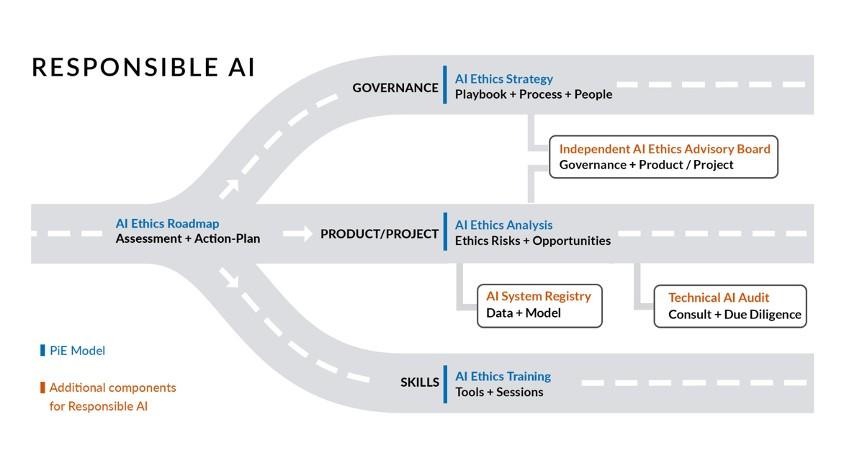

Responsible AI is not optional

Model errors have tangible & measurable consequences for both people and business.

Reach out!

ai.northeastern.edu or eai@northeastern.edu

to learn about:

● Corporate education & upskilling

● Solution development and delivery with our AI Solutions Hub

● Faculty research collaborations

● Responsible AI Roadmap

● Corporate membership

● Sign up for our newsletter

● Follow and engage on social media

ai.northeastern.edu/events

April 25

Northeastern campus & online:

Generative AI & Northeastern University: From the Classroom to the Economy

May 24

Northeastern campus & online: From Machine Learning to Autonomous Intelligence with Chief AI Scientist at Meta AI (FAIR), Yann LeCun

June 7

Online webinar

Experiential AI for Insurance Leaders