LIFELIKE HOPPERS

Welcome to the Spring 2026 issue of VFX Voice!

This edition spotlights the recent 24th Annual VES Awards Show. Congratulations to all our nominees, winners and honorees – and thank you to the worldwide VFX community whose talent, passion and support continue to inspire the VES and VFX Voice! Our cover story leaps into Hoppers, Pixar’s new animated adventure that began life in 2D before evolving into a richly layered 3D experience. We also explore the expanded effects in Season 2 of Netflix’s live-action, sea-voyaging One Piece, from its stretch-powered hero to a fully CG character, a pair of towering giants and a massive sperm whale. In gaming, we introduce you to the evocative environmental art – and the compelling creative philosophy behind it – that elevates Ghost of Yōtei.

Elsewhere in this issue, we examine the intersection of science and art in creature animation, track the realities of time zone-crossing VFX pipelines on international co-productions, and convene renowned supervisors to discuss how three of the greatest VFX films of all time pushed the art and craft to new heights. Our Spring special focus turns to VFX in India, and in honor of ILM’s 50th anniversary, we salute the Dykstraflex – the ingenious homemade motion control camera system that made the VFX in Star Wars possible. We also profile Framestore Lead Modeler Crystal Bretz and Intel Principal Engineer Attila T. Áfra.

Be sure to visit the VES Awards Show Photo Gallery, capturing the 2026 award winners, the celebration, and presentation of the Lifetime Achievement Award to Producer Jerry Bruckheimer and the VES Visionary Award to Wētā Workshop Co-Founder and Chief Creative Officer Sir Richard Taylor.

Dive in and meet the innovators and risk-takers who push the boundaries of what’s possible to advance the field of visual effects.

Cheers!

Kim Davidson, Chair, VES Board of Directors

Nancy Ward, VES Executive Director

P.S. You can continue to catch exclusive stories between issues only available at VFXVoice.com. You can also get VFX Voice and VES updates by following us on X at @VFXSociety and VFX_Society on Instagram.

FEATURES

VFX TRENDS: CREATURE ANIMATION

Science and art: What it takes to believe in a creature.

COVER: HOPPERS

Pushing the idea that animals know more than they show.

INDUSTRY: INTERNATIONAL CO-PRODUCTIONS

The pros and cons of taking a more global view of VFX.

PROFILE: CRYSTAL BRETZ

Framestore Montreal’s Lead Modeler accepts the challenge.

TELEVISION/STREAMING: ONE PIECE

An entirely CG character joins the Straw Hats for Season 2.

SPECIAL FOCUS: VFX IN INDIA

Capacity for growth continues India’s rise as a global power.

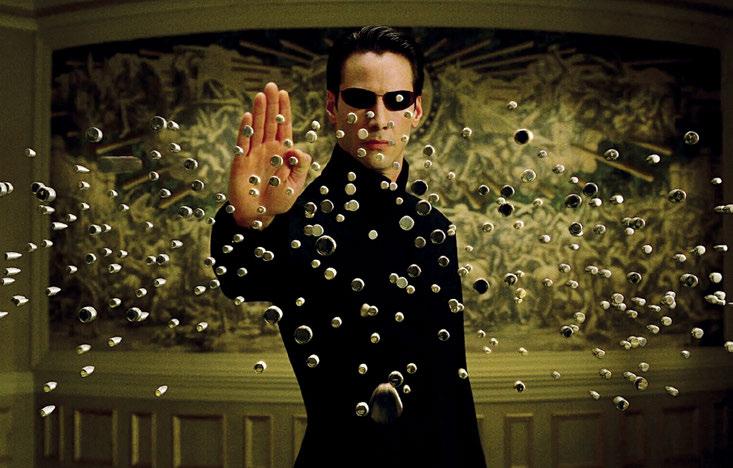

VFX VAULT: DIGITAL INNOVATORS

How Terminator 2, Inception and Life of Pi changed VFX.

THE 24TH ANNUAL VES AWARDS

Celebrating excellence, innovation and artistry.

VES AWARD WINNERS

Photo gallery of the winners in Film, Animation and TV.

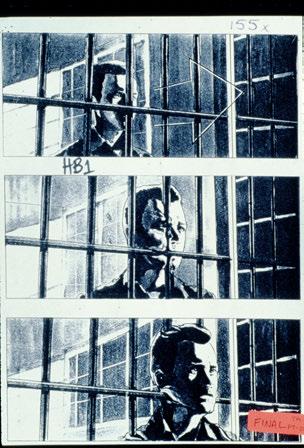

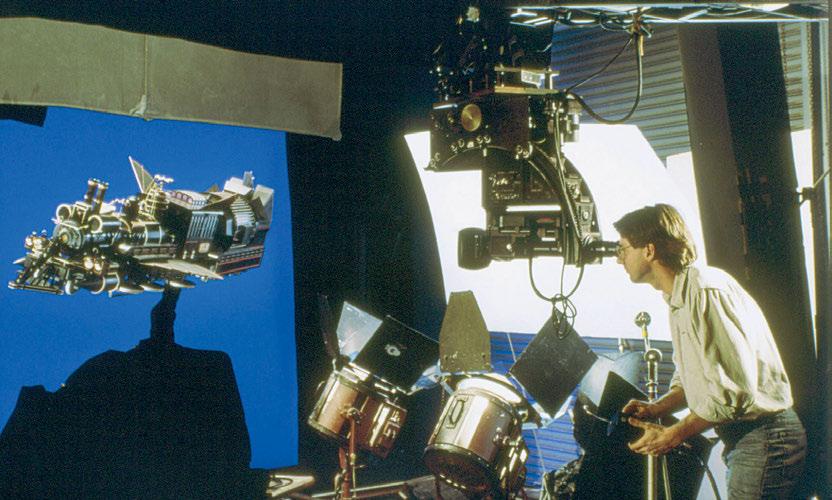

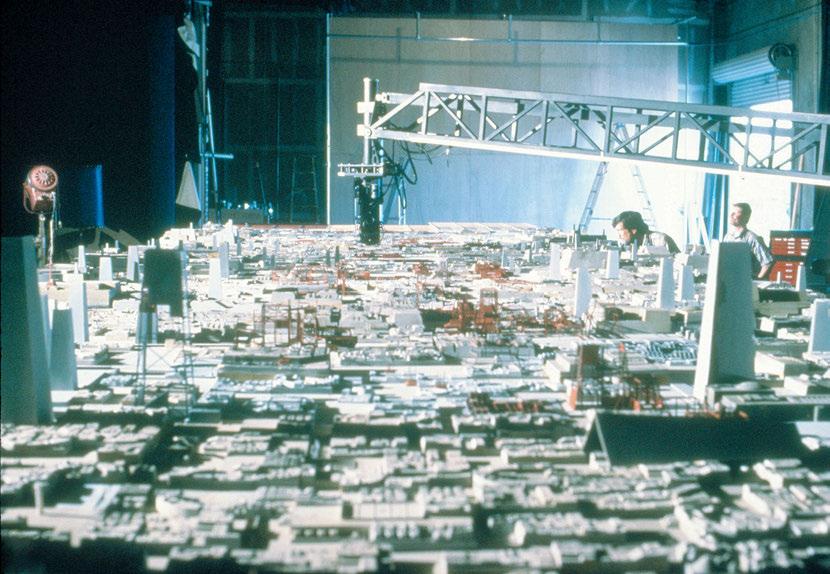

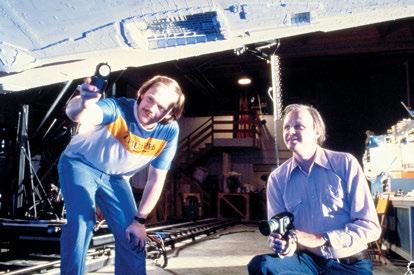

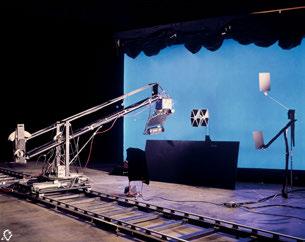

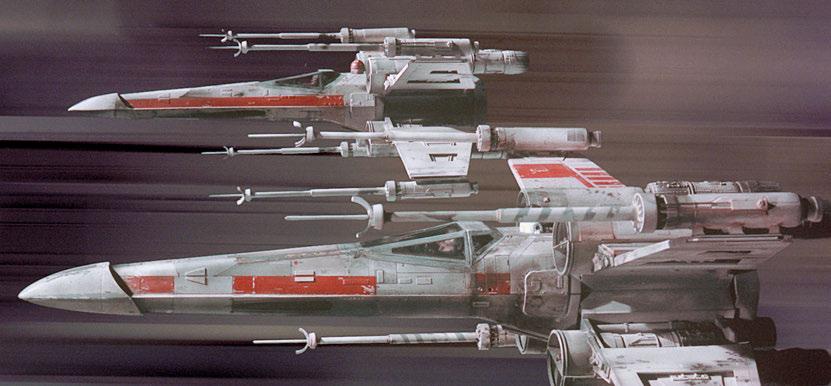

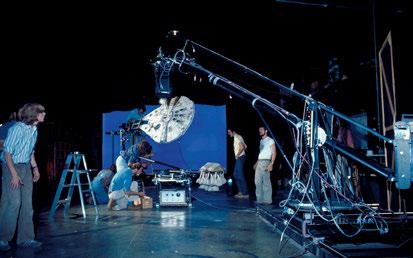

VFX HISTORY: THE DYKSTRAFLEX

The motion control camera system home-grown for Star Wars.

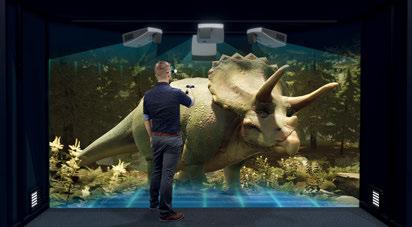

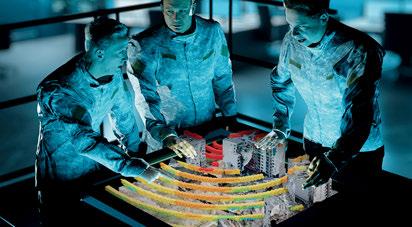

TECH & TOOLS: ADVANCING HOLOGRAMS

Technology on the bridge to future visual media innovation.

PROFILE: ATTILA T. ÁFRA

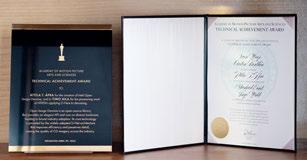

Academy-honored Intel Engineer is busy thinking about AI.

VIDEO GAMES: GHOST OF YOTEI

The power of nature telling the story in color, light and weather.

VR/AR/MR TRENDS: STAR WARS: BEYOND VICTORY

Lucasfilm game introduces a new twist via MR integration.

DEPARTMENTS

EXECUTIVE NOTE

THE VES HANDBOOK

VES SECTION SPOTLIGHT – TORONTO

VES NEWS

FINAL FRAME – VFX VISIONARIES

ON THE COVER: Mabel Beaver is influenced by her human tendencies in Hoppers (Image courtesy of Disney/Pixar)

SPRING

VFXVOICE

Visit us online at vfxvoice.com

PUBLISHER

Jim McCullaugh publisher@vfxvoice.com

EDITOR

Ed Ochs editor@vfxvoice.com

CREATIVE

Alpanian Design Group alan@alpanian.com

ADVERTISING

Arlene Hansen arlene.hansen@vfxvoice.com

SUPERVISOR

Ross Auerbach

EDITORIAL ASSISTANT

Desiree Bowie

CONTRIBUTING WRITERS

Trevor Hogg

Katie Kasperson

Chris McGowan

Barbara Robertson

ADVISORY COMMITTEE

David Bloom

Andrew Bly

Rob Bredow

Mike Chambers, VES

Lisa Cooke, VES

Neil Corbould, VES

Irena Cronin

Kim Davidson

Paul Debevec, VES

Debbie Denise

Karen Dufilho

Paul Franklin

Barbara Ford Grant

David Johnson, VES

Jim Morris, VES

Dennis Muren, ASC, VES

Sam Nicholson, ASC

Lori H. Schwartz

Eric Roth

Tom Atkin, Founder

Allen Battino, VES Logo Design

VISUAL EFFECTS SOCIETY

Nancy Ward, Executive Director

VES BOARD OF DIRECTORS

OFFICERS

Kim Davidson, Chair

David Tanaka, VES, 1st Vice Chair

Brooke Lyndon-Stanford, 2nd Vice Chair

Frederick Lissau, Secretary

Jeffrey A. Okun, VES, Treasurer

DIRECTORS

Fatima Anes, Laura Barbera, Alan Boucek

Kathryn Brillhart, Colin Campbell, VES, Mary Carr

Christina Caspers-Römer, Emma Clifton Perry

Rachel Copp, Dayne Cowan, Laurence Cymet

Dave Gouge, Kay Hoddy, Dennis Hoffman

Thomas Knop, VES, Karen Murphy, Maggie Oh

Joel Pennington, Robin Prybil, Christine Resch

Lopsie Schwartz, Agon Ushaku, Sean Varney

Sam Winkler, Philipp Wolf

ALTERNATES

Klaudija Cermak, Fred Chapman, Aladino Debert

Jess Loren, William Mesa

Visual Effects Society

5000 Van Nuys Blvd. Suite 310

Sherman Oaks, CA 91403 Phone: (818) 981-7861 vesglobal.org

VES STAFF

Elvia Gonzalez, Associate Director

Jim Sullivan, Director of Operations

Ben Schneider, Director of Membership Services

Wayne Watkins, Donor Relations Manager

Charles Mesa, Media & Content Manager

Eric Bass, MarCom Manager

Ross Auerbach, Program Manager

Colleen Kelly, Office Manager

Shannon Cassidy, Global Manager

Adrienne Morse, Operations Coordinator

P.J. Schumacher, Controller

THE SCIENCE OF CREATURE ANIMATION: ACCURACY VS. ARTISTRY

By KATIE KASPERSON

Talking animals, aliens, fantastical beasts – when we see a creature onscreen, what does it take for us to believe in it? In VFX and animation, this crucial question is a guiding light that keeps creators toeing the line between biomechanical accuracy and artistic license. Achieving this delicate balance can mean the difference between a story falling flat or hitting its intended emotional beats.

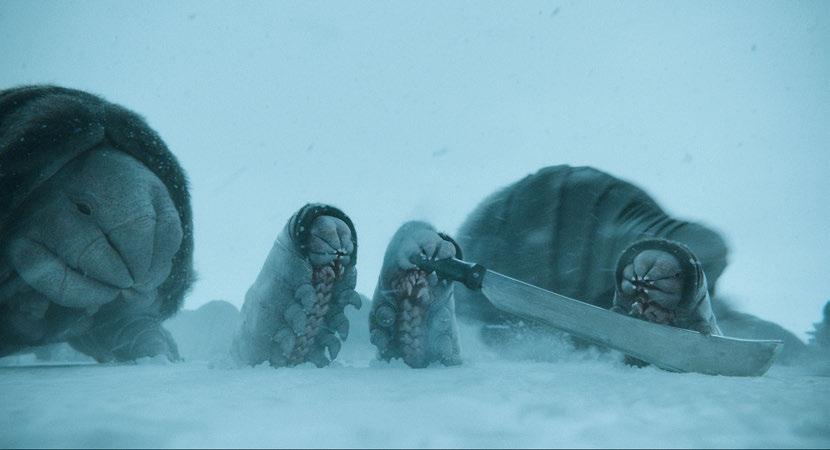

INVENTING AN ALIEN

Developing a creature from scratch begins with addressing every detail. “We start with the story, the tone and what the director wants,” says DNEG’s Robyn Luckham, Animation Director on Mickey 17. Tasked with animating the Creepers, alien creatures that inhabit the film’s icy planet of Niflheim, Luckham spoke with director Bong Joon Ho and the film’s lead designer to nail down the Creepers’ every characteristic. “It starts with where they live and how the creatures are formed, based on their environment. Then, we get into character – what characters do they play in the story, and what’s the tone of the story? Is it a serious film or more playful? Are they more playful?”

TOP: With a background in zoology, Framestore Animation Director Pablo Grillo brings an in-depth understanding of animal anatomy, psychology and behavior to his VFX work. (Image courtesy of Framestore and StudioCanal Films Ltd.)

OPPOSITE TOP: Animation Director Pablo Grillo believes what made the Hippogriff in Harry Potter and the Prisoner of Azkaban so compelling was that it was naturalistic in its performance and paid homage to the real world. (Image courtesy of Framestore and Warner Bros. Entertainment)

The Creepers have their own character arc. According to Luckham, at first, they’re ‘repulsive,’ but eventually they ‘win you over.’ To inject them with a cute factor, Luckham drew inspiration from bear cubs and the Cat Bus in My Neighbor Totoro, as well as walruses, millipedes, horses and dogs. “What creatures are out there in our world that represent something similar,” he asks, “and how can I make a Frankenstein’s monster?”

Luckham also considered their habitat: how they eat, breathe and move, and how they protect themselves. “You have to make sure there’s an alignment between the creature’s design and the environment. There are a lot of assumptions you make because you’re on Earth, and when you’re creating a fantastical creature, you have to reassess. You’re taking a leap when doing an alien,” he continues. “You can make it as fantastic as you want, but you have to justify it because everyone will know if you haven’t. Sometimes, it has to look real, and sometimes it has to be more cinematic.”

After addressing the unknowns, Luckham prepares for the first animation test. The whole process takes over a year. “The Creepers are an evolution – they started as a [2D] picture, but in the film, they’re standing up, they’re rolling, they’re doing all sorts of things. It takes time to get that right.”

While animators and VFX supervisors often turn to scientists – zoologists, paleontologists, biologists and the like – for guidance, Luckham was afforded no such luxury on Mickey 17. “I would love to talk to scientists on every project, but it depends on the show and the budget.” On a sci-fi film like Mickey 17, the creatures in question are entirely fictional. “It’s a fantasy creature, so having a scientist there – they don’t really have a frame of reference,” Luckham remarks. “I could make the judgments myself.”

Instead of consulting with external academics, the crew employed its own team “of phenomenally trained Creeper supervisors” who were “experts in anatomy and muscle activation,” according to Luckham. These experts ultimately advised on movement – the jiggle of fat or the rippling of skin. “You have to build layer by layer,” he argues.

After rounds and rounds of animation tests and tweaks, the Creepers finally came into their own. Each creature has “a thick outer layer to protect it from all the elements,” Luckham states, “and it has a small mouth with hard ends that it uses to crunch rocks.”

It’s one thing for a creature to exist; it’s another for it to interact. Crucial to the story is the Creepers’ ability to communicate, not only with one another but also with the titular Mickey. “There’s that one little line of dialogue, which is, ‘How are you, Mickey?’ I had to work backwards,” Luckham admits. “All our speech comes from breath: breathe in, speak, breathe out. That goes through our larynx. That’s our logic.”

Occasionally, Luckham would ‘bend the rules’ at Bong Joon Ho’s instruction. “You have to make compromises,” he says, but achieving a sense of reality was his primary goal. “I want to get it as real as possible because every creature we see, we put our emotions into, and we can feel that emotion back. It’s our interpretation. It’s quite unique and quite a privilege to create a brand-new creature,” he adds. “We’re creating a language, creating a physiology from scratch. It was a real challenge, but that’s the work I enjoy.”

ACCESSING THE SOUL

Like many of today’s movies, Mickey 17 is a page-to-screen adaptation of the sci-fi novel Mickey7. While the written word knows no bounds in what imagery it can inspire, films are limited by their reliance on visual perception. Russell Dodgson, VFX Supervisor at Framestore, faced this fact while working on His Dark Materials, HBO’s live-action interpretation of Philip Pullman’s fantasy trilogy. “We had to create a character called a Mulefa, and the way it’s written in the books is an absolute design disaster,” he states. “It’s got a diamond-shaped body, four legs and no spine, and it can roll around on seed pods. [Pullman has] written something fantastical, but he hasn’t necessarily worried about how it translates to the screen. That’s not his concern. He tells you that it’s elegant, but when you see it, it doesn’t look as elegant as described.”

Like Luckham, Dodgson began with the big questions. “What’s pleasing to look at? Which rules do you stick by? Do you give it a more human-like ability to express, or do you honor the true mechanics?” he asks, noting the importance of intention when it comes to creature design. Dodgson contacted a zoologist to advise on the non-human characters in His Dark Materials. “We went through all the analogous creatures that exist, that we could draw from, to build this complete fantasy creature. There’s a very fine line between what rules you can break and what you can’t, relative to an audience’s perception or what they understand about something. You slowly build this picture that works through trial and error.”

TOP: To inject the Creepers with the ‘cute factor’ for Mickey 17, Visual Effects Supervisor Robyn Luckham pulled inspiration from bear cubs and the cat bus in My Neighbor Totoro as well as from walruses, millipedes, horses and dogs. (Image courtesy of DNEG and Warner Bros. Pictures)

BOTTOM: Visual Effects Supervisor Robyn Luckham had to make sure there was an alignment between the creature’s design and the environment in Mickey 17. The Creepers are fantasy creatures, so science played no role. (Image courtesy of DNEG and Warner Bros. Pictures)

For every human in His Dark Materials, there is an animal companion called a daemon – a living manifestation of the person’s soul. “The whole point is that they have a human consciousness,” explains Dodgson, who called upon a team of puppeteers during production. “As long as you have puppeteers who are sensitive to performance, who understand the rhythm of the real creature and the body language that is going to work with an actor, then what you’ve got on set is something that is physically the right shape, moving in a similar rhythm and tempo [as the animation], but has this human intent.”

Since the daemons act more civilized than wild beasts, “we had to strip out animal behavior and replace it with human focus and intent,” Dodgson notes. “We had to strike a delicate balance. There’s so much bidirectional language between animals and us. That’s why they’re such a beautiful vessel for connecting with an audience.”

BIRDS, BEARS AND FANTASTIC BEASTS

Pablo Grillo, seasoned Animation Director at Framestore, is credited with animating the Paddington, Fantastic Beasts and Harry Potter franchises. Grillo believes that all animals, humans included, share the same essence. “We’re all vertebrates and, going even beyond that, are all made of the same recipe,” he says. “Once you understand that, you can find the throughlines. A lot of differences are arbitrary.”

With a background in zoology, Grillo brings an in-depth understanding of animal anatomy, psychology and behavior to his VFX work. “Visual effects animation demands that the material looks completely authentic when it’s put in a real space and against live action. We have to do a huge level of study and be very analytical. That’s what binds the artistic and scientific.” Whatever the project, Grillo stresses the significance of realism and universality as it relates to creature animation. “Finding something familiar to lean on is essential,” he shares. “I always tell other animators to look at animal ‘fail’ videos. I think there’s a lot to be learned there. You experience feelings of optimism, failure and shame. What you’re trying to do is access a truth.” Harry Potter and the Prisoner of Azkaban, for instance, introduces a character called a Hippogriff, a legendary creature that’s both bird and mammal. “I was lucky enough to work on the Hippogriff,” Grillo recalls. “What made it so tangible that it was utterly realistic in its performance was that it looked real. It was naturalistic. It wasn’t trying to do anything outlandish. I always thought that was a successful creature because of its homage to the real world. It felt absolutely compelling.”

TOP: Once Animation Director Pablo Grillo established the basics of the Niffler for Fantastic Beasts and Where to Find Them, he endowed it with a personality, beginning with the aggression of a honey badger mixed with other animal traits. Nifflers are known for their insatiable attraction to shiny objects, precious metals and jewelry, which they store in a belly pouch. (Image courtesy of Warner Bros. Pictures)

BOTTOM: Visual Effects Supervisor Russell Dodgson contacted a zoologist to advise on the non-human characters in His Dark Materials. (Image courtesy of Framestore and HBO)

TOP TWO: Among the visually striking creatures in Fantastic Beasts: The Crimes of Grindelwald is the Zouwu. The team referenced lizards for body movement in contrast to the cat-like facial performance. They also took reference for her head and tail movements from Chinese dragon parade performances. (Image courtesy of Warner Bros. Pictures)

BOTTOM TWO: Puppeteers who worked on His Dark Materials understood the rhythm of the creature and the body language that works with an actor, moving in a similar rhythm and tempo as the animation, but with human intent. (Image courtesy of Framestore and HBO)

Years later, Grillo joined the Paddington movies, animating the beloved titular bear himself. “Paddington is a young bear, but more importantly, he’s a person that you can believe and invest in. There was a choice in terms of defining his anatomy, to have him keep up with the other actors and walk normally, and for that not to be a distraction,” Grillo says. In this case, he split away from biomechanical accuracy and instead embraced a narrative-driven design. “We had to devise an anatomy where the legs poke out from under the belly; we built a strange hybrid pelvis. It was a creative decision.”

Grillo returned to the Harry Potter cinematic universe for Fantastic Beasts and Where to Find Them, a film that introduces audiences to extraordinary fictional beings. “One of the most enjoyable creations for me was the Niffler because it’s a creature that, on the page, was almost impossible in that it was very small but had to be very fast and voracious,” Grillo describes. “It had to be a humorous creature – a comedy actor as much as an animal – but it had to look real. It had to look sweet. I built a lot of mood boards for the director and offered up a variety of creatures,” he continues. “We honed in on the monotremes, platypuses and echidnas. There’s a primitivism to them that’s already entertaining by itself. If you juxtapose that with a mental state that is incredibly headstrong, you could have something really interesting.”

After playing around with early animations, Grillo eventually settled on a design that was ‘just right.’ He says, “It’s about finding a balance where you don’t take the design too far, but it looks otherworldly and alien. It’s an interesting thing when you’re creating something that doesn’t exist. You go, ‘Well, that looks like an animal I’ve never seen before, but I believe it.’” Once he established the Niffler’s basis, Grillo added the ‘trimmings’ and endowed it with personality. He began with the aggression of a honey badger and mixed and matched other animal traits from there. In the end, he argues, “There are no real rules. You either buy it or you don’t.”

ON COMMON GROUND

Grillo believes digital technology has allowed animators to go much further, approaching the design process more “holistically.” He explains, “You build anatomy; you look at color pictures, and look at the acting and the movement. You don’t commit to anything until you’ve explored these different angles of what a creature can be. By doing so, you also discover what they’re capable of, and through that you get more story. The plasticity of the process allows you to keep moving gently towards that finished product.”

Creature animation is both science and art, both objective and subjective. “A lot of the art is being able to look at things that happen in the natural world and see them as a universal truth,” Dodgson remarks. “It’s through finding a tangible solution that you create a sense of realism that the audience can then believe in,” Grillo states. “That way, they can invest in more important things in the story. It’s an incredible privilege to get invested in these creatures. They open your eyes to the beauty and intricacy of nature. What we do is beautiful, and it’s full of passionate people. I think we’re all scientists at heart.”

VIEWING THE NATURAL WORLD THROUGH HOPPERS

By TREVOR HOGG

After a grizzly, panda and polar bear attempt to infiltrate human society in his We Bare Bears, director Daniel Chong reverses the narrative for his Pixar directorial debut Hoppers, where scientists are able to ‘hop’ human consciousness into lifelike robotic animals that can talk to other creatures, big or small, and understand what they are saying.

“There are these creatures who live with us and share this planet, but we can’t talk to them,” Chong states. “You can look at the Internet and see that everyone is trying to make sense of them. It’s amusing to even try to give voice to them.” The biggest inspiration was not James Cameron or David Attenborough, but videos where awkward robots with cameras in their eyes try to fit in with animals. “There are even these funny ideas or things that we were seeing in real life with panda enclosures where in order not to habituate the pandas to humans, people will dress up in panda costumes and go feed them. There are a bunch of stories like that, and it makes you think, ‘Humans are so interested in animals and are trying to infiltrate in these wonky and clearly hilarious ways. How can we take that and push that idea?’”

Images courtesy of Disney/Pixar.

TOP: The comedy of the movie comes from defying the expectations of audience members.

OPPOSITE TOP: Lighting was important in ensuring the animals stayed on model and always looked their best.

Transitioning from 2D to 3D animation required some adjustments. “The original boards for the movie were so charming and worked so well as 2D cartoons,” remarks Nicole Paradis Grindle, Producer. “I remember saying to Daniel that I thought the biggest challenge we would have was translating this into 3D because they worked so well in 2D. It was a big leap to translate and also hold onto the humor. There were jokes that didn’t play in 3D in the same way they played in 2D, so we had to come up with a different play on certain gags.” The story constantly shifts between the human and animal perspectives. “Another big thing was dot eyes versus cartoon eyes. That was not something that was a slam dunk. We had to work for a long time to get it so that the same model could have those dot eyes that made it seem more like an animal and then have the cartoon eyes. The whole language around the human perspective without the earpiece versus the perspective in that animal world; that was a long journey,” Grindle says.

Adopting the disguise of a robotic beaver is Mabel, who attempts to solve the mysteries of the animal world. “For human Mabel and Mabel Beaver, we had a few things outside of her general personality and performance that we made sure tracks through both forms,” explains Alon Winterstein, Animation Supervisor. “When Mabel is a kid, her hair spikes up or goes fuzzy when she gets angry, and we incorporated that into the beaver. What made Mabel unique was her human instinct inside of the beaver. Where a beaver’s instinct would be to walk on fours, Mabel would feel more comfortable walking on twos. There is a scene when George is trying to encourage her to come with them to the Super Lodge and swim. Mabel is not sure if she is going to do it. Mabel did what she needed to and now is going back home. But Mabel gets convinced. However, her choice to jump into the water is different than any other beaver. A beaver would jump head on and swim in an organic way. Mabel ‘cannonballs’ into the water. It’s a much more human instinct.”

Maintaining the appeal of the characters was not easy. “We definitely had innovations on the characters themselves, as they were built in more of the rigging and groom parts to allow for the animators to achieve what they need out of the performance and the graphic shapes, appeal, silhouette and performance,” Winterstein notes. “There was a lot of effort there to create models that could support all of that range, but also unique solutions for things such as those short arms and legs that sound simple in concept. But a character that is so round, and everything is so close to each other, it tends to collapse on itself. To manage that was challenging in addition to a tail. Once they go between two legs to four legs, for that transition, the proportion actually needs to change to accommodate a silhouette that is more appealing on fours. You need to find ways to cheat that in the transition but also make sure that this model looks good in both ways and doesn’t start looking unappealing.”

New tools were created. “We developed a new system for generating feathers on characters and for rigging the wings of birds,” states Laura Beth Albright, Visual Effects Supervisor. “When birds fold their wings, the way that the feathers have to follow the wing, fold up and unfold, is actually complex. There are layers of different feathers; some of them articulate like fingers and some follow the others. Instancing the feathers on the body was another project. We hadn’t updated the way we make feathers in quite some time. Since we had some birds that were going to be prominent, close to camera, right up with our main characters, we wanted the feathers to be compelling and nice.” A prominent plant had to be accommodated. “We worked early on with the tools department to create a new internal tool for modeling and instancing procedural trees because we were going to have a ton of them,” Albright notes.

Having the ability to manage the visual complexity of nature led to the Brushstroke Project. “It’s a system of projecting points in space onto the environment, applying a texture card on each one of those points, bringing that in and rendering it, pulling the color from the existing beauty pass render and then applying the texture cards with those colors in the final composite of the image in whatever way the compositor chooses to dial it in,” Albright remarks. “What that did was to provide a way to visually stylize the extremely complex environment. Natural environments have so many little pieces, it can be really busy and visually hard to look at. You have your characters in front with all of these little things going on. We needed some way to quiet all of that visual noise and frame the characters. It adds to the tactile style that we wanted for the movie as well.” Effects simulations like water, fire and smoke did not vary that much between human and animal perspectives. Albright adds, “It was more in the design and shaping. You can also see in our human world that things are more orderly and organized. Even the vegetation is more structurally controlled. Then, in the natural world, things are wilder.”
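The steps Albright describes (project points onto the frame, sample the beauty pass at each point, composite a tinted texture card there) can be illustrated with a toy sketch. This is not Pixar's implementation: the pinhole camera, the square alpha cards and the names `project` and `stamp_cards` are all assumptions made for illustration, with `strength` standing in for the compositor's dial.

```python
from typing import List, Optional, Sequence, Tuple

Color = Tuple[float, float, float]
Image = List[List[Color]]


def project(point: Sequence[float], focal: float,
            width: int, height: int) -> Optional[Tuple[int, int]]:
    """Hypothetical pinhole projection of a camera-space point to a pixel.

    Returns None when the point is behind the camera or off-frame.
    """
    x, y, z = point
    if z <= 0:
        return None
    px = int(round(focal * x / z + (width - 1) / 2))
    py = int(round(focal * y / z + (height - 1) / 2))
    if 0 <= px < width and 0 <= py < height:
        return px, py
    return None


def stamp_cards(beauty: Image, points: Sequence[Sequence[float]],
                card: List[List[float]], focal: float = 4.0,
                strength: float = 1.0) -> Image:
    """Composite one tinted card per projected point over the beauty pass.

    `card` is a small square grid of alpha values (the "texture card");
    `strength` scales that alpha, like a dial in the final composite.
    """
    height, width = len(beauty), len(beauty[0])
    out = [row[:] for row in beauty]  # leave the beauty pass untouched
    half = len(card) // 2
    for p in points:
        hit = project(p, focal, width, height)
        if hit is None:
            continue
        cx, cy = hit
        tint = beauty[cy][cx]  # pull the color from the existing render
        for j, card_row in enumerate(card):
            for i, alpha in enumerate(card_row):
                x, y = cx + i - half, cy + j - half
                if 0 <= x < width and 0 <= y < height:
                    a = alpha * strength
                    out[y][x] = tuple(
                        a * c + (1 - a) * o for c, o in zip(tint, out[y][x])
                    )
    return out


if __name__ == "__main__":
    black = (0.0, 0.0, 0.0)
    frame = [[black] * 5 for _ in range(5)]
    frame[2][2] = (1.0, 0.0, 0.0)  # one red detail in a dark frame
    solid_card = [[1.0] * 3 for _ in range(3)]
    styled = stamp_cards(frame, [(0.0, 0.0, 1.0)], solid_card)
    print(styled[1][1])  # the red detail is spread across the card footprint
```

In this toy version, a single red pixel becomes a three-by-three red patch, which is the "quieting" effect in miniature: many small details are replaced by fewer, larger strokes that keep the colors of the original render.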

Comedy is a fragile concept. “I feel like we’ve learned a lot about how you can step on the comedy by making something overdramatic or not putting clarity in the right place,” states Ian Megibben, DP, Lighting. “Much like the timing is important, the clarity of what you see is important. Seeing it at the right time. A lot of this is worked out already between the blocking they do in layout and the work they do with the edit, but when you have an eye fixed on a cut, making sure that we’re clearly highlighting that in a subtle way. That was probably the biggest lesson. I’m probably proudest of putting a lens flare in the movie that got the largest laugh that I’ve ever seen a lens flare get in the right way. It was rewarding for me to contribute to a movie that is hilarious in a way that elevates the humor. I put so much thought into lens aberrations and effects in a way that feels organic. It doesn’t draw the eye. There is one time where I was like, ‘We can pour a lot of sauce on this shot!’”

TOP: For Daniel Chong, animation is a medium that lends itself to the suspension of disbelief, allowing for a more fantastical world without the need to explain everything.

BOTTOM: Daniel Chong has a specific aesthetic for his humor and storytelling where the imagery had to feel real without feeling necessarily realistic.

Given the nature of the humor and storytelling, the visual aesthetic had to feel real without having to be realistic. “There is such an odd character design, and odd in the best way, and a cartoony feel to things in the natural world,” remarks Jeremy Lasky, DP, Layout. “The human world gave us permission to come up with our own style for it without having to feel like we were doing Finding Dory. We could get away from making it feel like we were just observing these characters doing what they are doing. Ian and I wanted to establish this tone of pushing the visuals just enough in the beginning that we came up with this stylized feel that could be then really pushed to extremes when we needed to because the story gets really weird. It gave this baseline that the audience was comfortable with, so they weren’t saying, ‘What do you mean you have a shark being pulled by a bunch of seagulls that lift it out of the water? I don’t understand how that works physically.’ It doesn’t matter because you’re hooked in the story and the characters that you roll with it.”

TOP TO BOTTOM: A key landmark is a rock where Mabel would spend time with her grandmother.

The robotic beaver was inspired by people recording videos of robots awkwardly interacting with animals.

Director Daniel Chong, Production Designer Bryn Imagire and Story Supervisor John Kim during a Hoppers art review.

THE GLOBAL REACH OF VISUAL EFFECTS TODAY

By TREVOR HOGG

TOP: Predator: Badlands. Tax incentives have spurred global growth. (Image courtesy of 20th Century Studios)

OPPOSITE TOP: Foundation. Circumstances are constantly changing and must be accounted for in advance. (Image courtesy of Apple TV)

Financing movies and television programs remains expensive, prompting studios and production companies to take a more global view, with both positive and negative effects on the visual effects industry. No longer does one have to be based in California to find work, but reliance on tax incentives has also led to a more nomadic lifestyle for digital artists. “Despite the entertainment industry being consumed by everybody, it’s a very niche business in itself, and yet, the visual effects industry and post-production exist as a niche within a niche,” notes Will Cohen, VFX Producer, Executive Producer and VFX Consultant, who co-founded Milk VFX in 2013. “It’s a creative business, but also technology-driven, so it’s a lovely fusion of art, science, technology and craft to help create images. The movie business as we know it really starts in Hollywood with the studio system that grew out of it and with the global success of people going to the cinema as a premium form of entertainment. Within this studio system, the special effects departments build on Georges Méliès’s work. Then, in San Francisco, George Lucas and ILM revolutionized the special effects industry with Star Wars, John Dykstra, motion control, and a whole new wave of physical and optical special effects. At some point between the mid-1980s and mid-1990s, digital visual effects emerged. All of this is coming out of Los Angeles, and the first rival to the West Coast of America is London; that is born not out of any particular tax incentive, but from the creative industry, particularly music videos and commercials, and what was possible digitally. Clairol commercials with morphing people for hair products and Ridley Scott doing advertisements based on 1984 for Apple were huge events.”

The opportunity to expand the scope of storytelling was attractive. “The big expansion and taking on of Los Angeles on the West Coast came out of the artistic desire to do it,” Cohen states. “There were loads of brilliant artists in Paris at the time doing some great work. BUF was doing excellent work with David Fincher. This is because they want to participate in their artistry digitally within the premium entertainment format. Harry Potter put a billion dollars’ worth of visual effects work through the U.K. over a decade, creating regular work for companies to keep crews together and develop their capabilities. By 2008, Framestore was established. Mill Film is its own story. Cinesite is still there. Moving Picture Company has made inroads through Digital Film Company. DNEG is beginning. It was an exciting time.” The influx of visual effects companies, along with the increasing quality of digital augmentation, has led to a shift in attitude. Cohen observes, “Let’s say from Jurassic Park in the mid-1990s to 2010 is an era where people would come to see us from Hollywood, and they would say, ‘I’ve got this movie and a particular effects sequence. Are you actually able to do it?’ That was the question before it was, ‘How much?’ Between 2010 and 2020 came an era of people being in the game and the globalization of the industry. Money becomes this huge factor, and people stop asking, ‘Can you do it?’ They assume, if they’re talking to you, that you can do it.”

Tax incentives have spurred global growth. “In the early days, tax incentives helped build and grow some of these new territories and companies,” reflects Todd Isroelit, Senior Vice President, Visual Effects Production at 20th Century Studios. “For example, when I was first doing visual effects work in Australia, the tax incentives weren’t structured in a way that made sense to grow the visual effects business. There was a high minimum spend needed to trigger the PDV rebate with companies. You couldn’t see yourself spending that kind of money because they didn’t have the level of experience or resource capacity. Just through conversations with Ausfilm and the government and making the case for bringing these thresholds down, such as having the requirement to film there, allowed these companies to start growing and expanding their reach globally. That’s what happened with Rising Sun Pictures, Animal Logic and some of the smaller companies. It was a ‘build a better rebate and they will come’ approach. This also started to bring in established visual effects companies from outside of Australia, and that in turn creates a stronger talent pool.”

One factor remains an important part of any tax incentive decision. Isroelit says, “The bottom line is it’s got to be the right creative team at a vendor. Sometimes a vendor will put up a team in Canada and Australia. We have to vet and decide which is the better leadership team for this scope of work. Once we have established that, we can figure out how to split the work across the same vendor to maximize both incentives and resources. Perhaps it’s a Framestore in Melbourne that’s the creative lead, but then they split some of the work with Framestore Montreal to help with resources and pricing, including the value of the native currency. Ideally, our team is just going to be dealing with the supervisor and creative leads in that one main award location.”

Because work is performed across different time zones, the visual effects industry has become a 24/7 enterprise. “Initially, it used to be considered a handicap, but now people have embraced it,” Isroelit remarks. “If you can structure it in the right way for the production team’s and the studios’ schedules, you can actually get more productivity. Production site teams may need to schedule their days and tasks accordingly, particularly when they require direct engagement from the filmmakers. At least from California you can manage the Australian and Asian vendors later in your day. Conversely, you can wake up and start with Europe, and then hit the East Coast. You can look at the clock across the globe and set up your schedules in a beneficial way.” The number of vendors influences the size of the visual effects team. “Definitely on bigger shows, like Predator: Badlands, you have multiple coordinators. One coordinator is in charge of vendors A and B, while another coordinator might be responsible for vendors C and D. In that context, you’re not overwhelming one coordination effort. Basically, you’re creating multiple pipelines within your own production. It becomes a delicate balance in managing the visual effects supervisor’s time across five or six vendors in different time zones. I’ve had those conversations with my production teams about making sure we don’t burn out. We have to find time for them to sleep, eat, and do their notes. To protect some quality of life for the long run of the schedule, it’s trying to figure out the right plan, then how to manage the vendors with little subsets of teams within the team.”

Circumstances are constantly changing and must be accounted for in advance. “When I first break down a script and turn in my budget, I’ll do a blended 25% tax incentive so that I can work anywhere in the world,” explains Kathy Chasen-Hay, Head of VFX,

TOP: A Knight of the Seven Kingdoms. Visual effects companies that specialize in the creative needs of a production are sought out. (Photo: Steffan Hill. Image courtesy of HBO)

BOTTOM: Murderbot. An effort is made to have visual effects companies work on their own sequences to ensure continuity. (Image courtesy of Apple TV)

Paramount Features & TV at Paramount Skydance. “Because then, if you are a larger film or TV, you can say, ‘I’m going to spread the work around.’ It’s usually safer not to keep it all in one territory. We might do a little bit in Canada, Australia and the U.K. I realize that Ireland is offering this great tax incentive, but I want to go first and foremost where the talent is, and I want to go to a company that has done right by me, delivered good-looking shots on time, and treats its artists fairly. There are so many ways to pick a company or a team to do your work. A lot of it is based on relationships. You may have a team that doesn’t have the best rebate. An example might be Digital Domain, which among visual effects companies, still maintains a strong presence in Los Angeles. So, we might get a 22% tax incentive from them because they’re doing work in Vancouver and also work in Los Angeles and Montreal.” Even within the same visual effects company, studios must track how work is distributed across facilities to avoid tax incentive conflicts. Chasen-Hay remarks, “I remember when we first started doing the rebates about 15 years ago, there was a lot of confusion. But now, when you’re in the contract phase, you would say 90% of the work

TOP: The Last of Us. To meet the budget, the cost of using vendors doing specialized work in non-incentivized countries can be offset by using other vendors in incentivized countries or regions. (Image courtesy of HBO)

BOTTOM: The Gorge. Having multiple visual effects companies working on the same shot is not preferable. (Image courtesy of Skydance and Apple TV)

BOTTOM: The Last of Us S2. The end result suffers when filmmakers do not give visual effects companies the flexibility to place the work where the best creative talent is available. (Image courtesy of HBO)

will be done in Toronto, and 10% will be non-rebate because all the vendors need flexibility to ship out roto, paint and matchmove to India or a different part of the world.” Chasen-Hay adds, “Our job is 50% accountant and 50% being up on all of the territories and what the incentive programs are.”

Having multiple visual effects companies working on the same shot is not preferable. “We don’t like to do that because you’ve got to share assets,” Chasen-Hay states. “Some of the vendors, like DNEG, might share an asset between their territories because theoretically they are sharing their software live. What we try to do is have vendors work on their own sequences because then, if there’s anything that looks different, it makes more sense as there’s continuity and you’re telling a story within that sequence. But often, as the visual effects sequence grows, we have less time to deliver; that’s when we bring in vendors six or seven to incorporate into the pipeline, and that’s when you have to share the assets. I really noticed it in Australia and Vancouver, where you might have two companies, they’re talking to each other as if they’re working for the same vendor. Artists are constantly switching visual effects facilities, and many people are friends with one another, so it’s a collaborative field. If your neighbor’s shot doesn’t look as good as your shot, who knows whose shot it was, and you could get ridiculed for someone else’s work. It’s in everybody’s best interest for the work to look good. You try not to share an asset for the same shot.” Machine learning and AI will have global ramifications. Chasen-Hay notes, “Right now, you send all of your non-creative work, like roto, paint or matchmove, to cheaper countries to do

TOP: The creatures in It: Welcome to Derry tended to be standalone, so various types of visual effects were able to be assigned to different vendors. (Image courtesy of HBO)

that type of work. But if you’re a visual effects facility using some software that is going to do that for you, you don’t necessarily have to send that work out. It will affect people in other countries and the lower-level jobs.”

Sharing work with multiple facilities and visual effects companies worldwide is challenging. “Filmmaking is such a collaborative art form, requiring teams across different disciplines to be in creative sync,” observes David Conley, Executive Producer at DNEG. “Nothing will ever come close to surpassing the experience of being in a screening room with a team of artists working on a sequence. With increased pressure to deliver on global tax incentives, companies must build teams spanning time zones through video conferencing, data networking, and an international company infrastructure that supports the management of a diversified creative portfolio across multiple sites. Global visual effects production stress tests a company’s ability to seamlessly recreate the experience of being together in a screening room.” Dividing and conquering is a critical management issue for any global visual effects company. “Dividing the work among the facilities is primarily dictated by the rebates that inform the net target the filmmakers are looking to achieve. As a company, you work diligently to ensure you can support achieving the filmmakers’ financial parameters by developing a talent base at both the creative and production management levels that consistently achieves the high standards you set across all sites. This isn’t always possible due to capacity constraints in a particular territory. The consequence is that the creative output suffers when filmmakers can’t give a company the flexibility to place the work where the best creative talent is available.”

Each project has different creative needs. “Some projects have specialties like fire or water or effects simulations or creatures and types of creatures; we will look for vendors who specialize in that,” states Janet Muswell Hamilton, Senior Vice President of VFX at HBO. “We will know what is needed to hit our budget, but if we have a facility, for example, ILP in Sweden, there is no incentive there, but we used them a lot. We offset the fact that we’re not getting the incentive for a big chunk of work by using other incentivized countries or regions for work that isn’t as specialized as they’re doing.” Generally, there is a routine regarding how visual effects are distributed over the course of a season. “Normally, the first and last episodes are huge, and normally, episode seven or five or both are big. What we have to look at first is assets. Are these assets across the entire season, or are they different assets that we can assign to various vendors? A good example is It: Welcome to Derry. Because the creatures in the episodes were standalone, for the most part, we were able to assign those various types of visual effects to different vendors. We did that because the schedule is tight, so we have to ensure that the visual effects company handling episode eight isn’t backlogged by episode seven. Even if they can offer a big crew, you still need to balance that work because just getting it through the facility is difficult.”

Keeping track of the facilities being used within the same visual effects company is important. “A good example would be House of the Dragon,” Hamilton notes. “Pixomondo has been our dragon

TOP TO BOTTOM: Predator: Badlands. Because work is done across different time zones, the visual effects industry has become a 24/7 enterprise.

(Image courtesy of 20th Century Studios)

Springsteen: Deliver Me From Nowhere. Managing the visual effects supervisor’s time across five or six vendors in different time zones becomes a delicate balance.

(Image courtesy of 20th Century Studios)

The Last of Us. When the schedule is tight, it’s more difficult to get back-to-back episodes of work through a single facility. (Image courtesy of HBO)

facility since way back on Game of Thrones, and we used three of their facilities in three different regions. We review which work is assigned to which facility and assess the incentives in those regions, as well as the extent of outsourcing. If they’re outsourcing 10%, that’s 10% for which you will not receive an incentive. All of that has to be worked out. It becomes a complicated grid and a lot of discussion.” Templates have been implemented to make looking after the different shows more manageable and feasible. Hamilton says, “We use Flow [formerly ShotGrid] to track where we are with things. We have a whole system that scrapes the data up into an Airtable, so I don’t have to go and look at everybody’s Flow. I’ve got an overview that’s constantly being updated. What I’m mainly looking at is whether a show is getting into trouble. When I have 15 or 20 shows to look at, I can’t look at them differently. We try to make it as simple as possible. Everyone is satisfied with our financial tracking. If a show asks for a change and it’s a good idea, we will accommodate it and then roll it out through all our other shows. We track all the places where we’re applying for incentives because there are rules governing that. Every incentive has a cost associated with it, so we have to make sure that is accounted for in the budget.”

Each country has a unique culture that, in part, influences how visual effects companies operate there. “Understanding cultural differences is foundational to the success of any company,” observes DNEG’s David Conley. “Language and culture shape communication, leadership, teamwork and decision-making in multicultural, multigenerational environments within a global company. That said, watching Ted Lasso offers up some really great management tips on how to handle multicultural teams. Or Aliens, depending on how you view your clients!” Talent is not in short supply. “There is incredible talent worldwide that can be accessed through remote work or by encouraging relocation to different countries. Any perception of labor shortages can be attributed to the oversaturation of work being pushed into heavily subsidized regions and, unfortunately, to the loss of experienced artists moving into other industries. We really need to figure out how to protect our global talent base from the effects of downward financial pressures that are undermining the stability of our industry.”

Cloud computing, real-time rendering and AI/machine learning are leading the next wave of technological innovation. “All these technological developments are fantastic for creating a global multi-site facility that can access artists worldwide,” Conley remarks. “These developments will be foundational in building a suite of tools to improve compute speed, designed to deliver images to a higher standard, and unite artists in sites around the world. But we need to be careful not to let discussions about technology minimize the artists’ contributions, which are more needed than ever.”

Murderbot. Dividing and conquering is a critical management issue for any global visual effects company. (Image courtesy of Apple TV)

It: Welcome to Derry. CG assets needed to be assessed as to whether they will be used across the series. (Image courtesy of HBO)

The visual effects industry continues to evolve. Conley concludes, “What we’re seeing is that the economics of content creation are being challenged by the various distribution models that audiences around the world use to consume their content. As an industry, we need to adapt to those evolving models. But not at the expense of undermining our position in the filmmaking process. It’s essential that we continue to deliver visually groundbreaking work.”

TOP TO BOTTOM: Fountain of Youth. Even within the same visual effects company, studios must keep track of how the work is distributed among the various facilities to avoid tax incentive conflicts. (Image courtesy of Skydance and Apple TV)

PUSHING CREATIVE AND STORYTELLING BOUNDARIES WITH CRYSTAL BRETZ

By TREVOR HOGG

While attending the Victoria School of the Arts, Crystal Bretz played guitar, sang in a choral choir, performed ballet and contemporary dance, and experimented with 3D animation, graphic arts and photography. “I still play guitar and sing but don’t dance much anymore,” reflects Bretz, who is based in Montreal and is a Lead Modeler at Framestore. “In general, anything art related definitely helps. Just keeping rhythm in dance and guitar can assist you in understanding the rhythm and flow of shots and sequences.” Life began in Richmond, B.C., then the family moved to Edmonton, Alberta. “Where I grew up was a music-oriented place because there were not a whole lot of other things to do. To keep busy, you did artistic things, which were dancing, music, arts and things like that. I went to an arts high school in Edmonton, which wasn’t a common place to go. I was always telling my parents I’m not interested in sports, so we ended up finding the Victoria School of the Arts. Instead of doing gym classes, you can take alternative classes like ballet and guitar. I also took my first animation class, so I got exposed to a lot of different forms of arts early on, which was nice.”

While the arts were not a big part of the Bretz household, with her mother being a mail carrier for Canada Post and her father selling parts for big oil rig machinery, the offspring have been driven towards more artistic endeavors. “Part of what I do has inspired my family to become more artistic,” Bretz reflects. “Now, my brother is a graphic designer; he decided to go back to school to study that recently. My dad recently got into bird carving, and it is cool to see this artistic side coming out of him now.” Next stop was the Vancouver Film School, which saw the post-secondary student specialize in 3D modeling, surfacing and lighting and earn a diploma with honors in 3D animation and visual effects. “I wanted to go into their game design course, but they told me there might be more career opportunities in visual effects because if you learn one, you could maybe go both ways. I went into that program with the goal to be an animator, but then I found a lot of love and interest in modeling. Starting from nothing and building something out of it was really satisfying for me. I ended up going down that route, and at some point in their curriculum you can split off from the other fields and specialize. I ended up specializing in character modeling but didn’t realize how difficult it would be to get into that professionally.”

Images courtesy of Crystal Bretz, except where noted.

TOP: Crystal Bretz, Lead Modeler, Framestore

OPPOSITE TOP: Translating the characteristics of James Gunn’s dog Ozu onto Krypto was difficult because the two canines were of different proportions and sizes. (Image courtesy of Warner Bros. Pictures)

OPPOSITE BOTTOM: Bretz proudly wears Superman’s iconic crest beside the theatrical poster.

Upon graduating there were two job offers. “I couldn’t figure out which one I wanted to take because I wasn’t entirely sure at the beginning of my career what would be the right path,” Bretz recalls. “I had an offer from MPC to join their lighting academy for young artists entering the industry. There was another offer on the table as a generalist at a small TV visual effects company. I ended up taking that job because it was recommended to me because I might get the opportunity to work on a lot of different types of things as a generalist, including characters. At the beginning of my career, I started working at Artifex Studios, and they taught me so much. I did simulations and rigging, everything 3D related. That was a good kickoff to my career because it made me a lot more knowledgeable and fast about every part of the pipeline, rather than being stuck into one category.” Subsequent

“There’s so much involved with creating a character. I pick the hardest thing there is to do. If it’s not pushing the boundaries to the next level, I don’t want it. The most challenging things don’t frustrate me; they get me excited because I get to solve something.”

—Crystal Bretz, Lead Modeler, Framestore

jobs include Senior Organic Modeler at Method Studios, Senior Modeler at DNEG and Digital Domain, and being granted an Unreal Virtual Production Fellowship. “I was so surprised that I got into the Unreal Fellowship because I know they only select a certain number of people, and I learned a lot. It was three or four weeks of going through the full Unreal Engine and learning how to do everything. I don’t technically use the skills I have developed now, but I know that if I was to go into a virtual production or even a game career in the future, I could definitely jump right in. Also, nowadays, if an Unreal Engine file comes in, I’m the person that they send it to!”

“There’s a lot to think about when you’re translating stuff from 2D to 3D, especially when you don’t have that concept model beforehand that’s already figured those things out for you in a rough manner,” Bretz observes. “For example, with Krypto in Superman, we only had a 2D concept to work from, and had to match James Gunn’s dog, Ozu, but his dog was very small, and the dog they wanted was a lot bigger. We needed to figure out how to make small-dog proportions work on a big dog, but also how do we get the exact same look of Ozu in this character? It was a lot of problem-solving, for sure. We would go back and forth on changing structure and design and eventually landed in this nice place where things just worked. I don’t know if there’s ever a cut-and-dry answer of how to translate something from 2D into 3D.”

Motion is kept in mind when modeling. “A lot of the time, you

Bretz attends an event held by the Montreal Section of the Visual Effects Society.

Bretz has some fun with IF memorabilia while at the movie theater.


have to build things exactly how it would need to be moved practically,” Bretz states. “You’re always thinking about the anatomy inside the bodies and where the bones are connecting. It gets really intricate. Some creatures or characters are fantastical, so they don’t exist. You have to think about things a bit more like, ‘Is this a jumping type of creature? What kind of bone structure would they have? How would they move?’ It impacts how you build something, which is the fun part about this job.” Realistic and stylized characters share some common principles. “No matter how stylized it is, someone will notice that it doesn’t feel right if it doesn’t actually function like a person would expect or something you’ve actually seen before. If you don’t know basic anatomy and don’t understand how things should work, then you don’t know how to stylize and tweak it to make it a little bit different but still feel anatomically right. It’s a really fine balance.”

Mentors have been prevalent throughout Bretz’s career. “Some of them I’ve never even met, but I’ve seen their art and been really inspired,” Bretz notes. “Then there are some people I’ve met who have taught me a lot. Justin Holt is an amazing texture artist, and he ended up being one of my supervisors and taught me a lot about texturing and the requirements for that. Eugene Fokin is a really good modeler who I got the opportunity to work with, and he taught me a lot as well. Overall, mentorship is important in this industry because I find when you go to college, you’re only learning a quarter of the information you need. The rest of the information you get in the industry and from those people who have the experience. This industry is constantly growing, so it’s hard to keep up sometimes without a little bit of help.” Bretz is a Character Art Mentor at the Vertex School and has conducted Gnomon workshops on “Creating

TOP TO BOTTOM: There was only 2D concept art to work from for Krypto, which then had to be translated into 3D. (Image courtesy of Warner Bros. Pictures)

Framestore modeling team attending a 2025 Christmas party. Left to right: Stephanie Qin, Marjorie Veillette, Kanisha Lopez, Vik Sorensangbam, Klaudio Ladavac, Natasza Nalewajek, Christopher Helin, Pascal Clement, Wilfried Vougny, Crystal Bretz, Pierre-Edouard Merien, Lyrian Corneil, Maxime Moreira, Patrick Comtois, Duy Tran, Simon Martineau and Johannes Boivin.

a Stylized Female Character” and “Creating a Male Groom.” “Being a mentor has been one of the best things I’ve done. The surprising part is that you always know a little bit more than the person you’re mentoring and always have something useful to share, and you always know something different and come with a different perspective. The other part is helping people grow; it’s so rewarding when they get their first job – for them and for me.”

The attitude towards character modeling has remained consistent while at the same time techniques and technology are constantly changing. “I’m definitely not doing the same things I did back when I started, and I, as well as the programs and workflows, have grown a lot since then,” Bretz reflects. “I am looking forward to the sort of machine-learning style that aids processes that we didn’t like doing before, like UVs. But anything beyond that, I’m happy keeping the art as organic and human as possible because there are still flaws. There is an automated feeling behind some of the machine-learning things that come up, especially with concept art. Some tools are being developed with AI to aid everyday processes that take enormous computing power, and I support the uses here, for sure. The other thing that could be personally useful as well is just creating scripts on the fly for quick problem-solving, but leaving the complex scripting to the pros.” For Bretz, this is an enjoyable part of the job. “I like creating something from nothing. I love the challenge of it because there are muscles, bones and facial structures as well as face shapes. There’s so much involved with creating a character. I pick the hardest thing there is to do. If it’s not pushing the boundaries to the next level, I don’t want it. The most challenging things don’t frustrate me; they get me excited because I get to solve something. I’m always learning more things about this field. It’s a constant learning curve, but that’s why I love it so much.”

TOP AND MIDDLE: Bretz modeled Unicorn for John Krasinski’s IF. The film was her first project as model lead at Framestore and has a special place in her heart. (Image courtesy of Paramount Pictures)

BOTTOM: Ale Barbosa, Crystal Bretz, Bell de Deus and Stephanie Hayot at Cafe VFX in Montreal giving a Q&A panel with ArtStation.

NAVIGATING THE HIGH SEAS FOR ONE PIECE: INTO THE GRAND LINE

By TREVOR HOGG

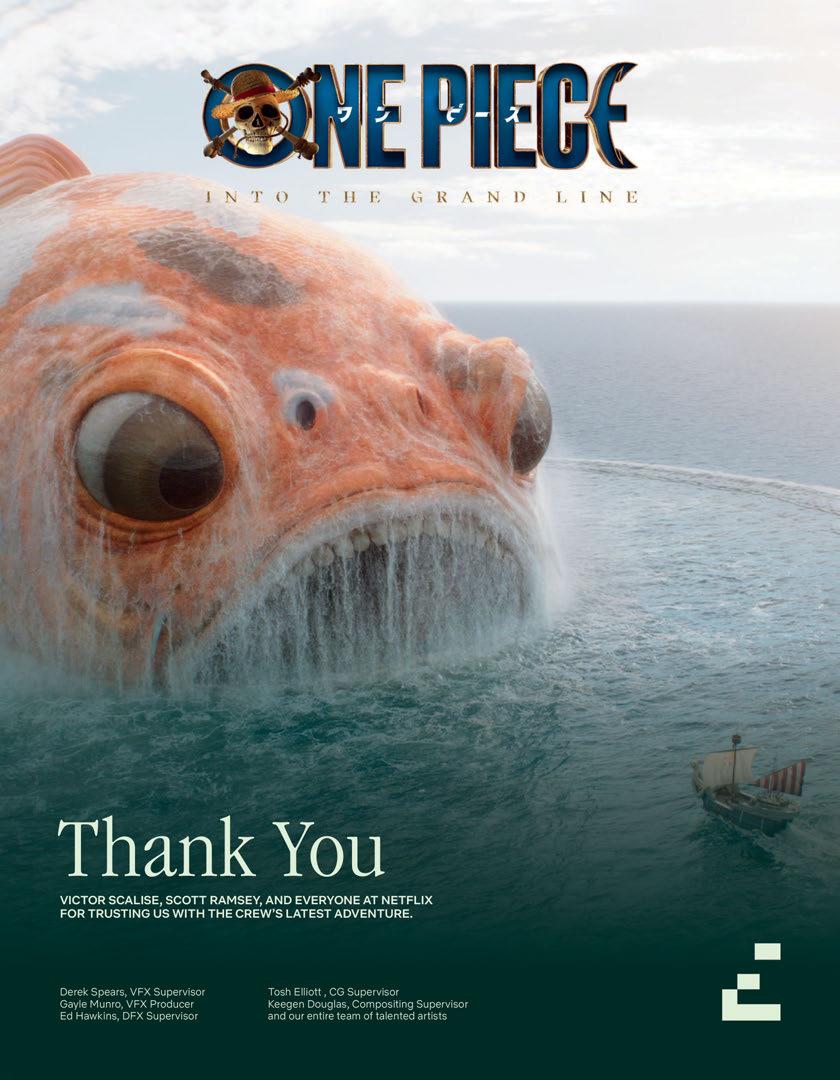

Setting sail on the treacherous ocean current that flows around the entire Blue Planet in an effort to find a legendary treasure and be crowned the Pirate King, One Piece: Into the Grand Line marks the return of Monkey D. Luffy and his Straw Hat crew for eight episodes that travel to Loguetown, Reverse Mountain, Whisky Peak, Little Garden and Drum Island, along with introducing an entirely CG character, a massive sperm whale, a pair of giants, and antagonists with the ability to manipulate wax and smoke.

“This show wouldn’t be possible without visual effects, and we have an incredible visual effects team,” states Joe Tracz, Co-Showrunner. “There were definitely superpowers to figure out for the first season. Luffy is made of rubber, so him stretching was the big challenge. But each season gets bigger and bigger. In Season 2, we knew that one of our big challenges was we were introducing a new crew member to the Straw Hats, and he is a little reindeer boy known as Tony Tony Chopper, who is an entirely visual effects character.” The methodology was borrowed from Guardians of the Galaxy. “We have an incredible local actress named N’kone Mametja. She was on set every day in a body suit, giving someone that our actors could do a real performance with. You’d shoot with her, shoot the clean plate for visual effects, and then in post-production, Chopper’s face and voice were provided by another actress, Mikaela Hoover, who also was in the Guardians movies.”

TOP: Laboon is an entirely CG creature, which was tough to frame properly in shots.

OPPOSITE TOP: There were too many shots with two CG giants, so the characters of Dorry and Brogy were achieved practically.

Things get even weirder in the second season. “In the writers’ room, we constantly have questions of, ‘Can we do dinosaurs and giants?’” Tracz recalls. “The answer was, ‘It’s One Piece. You have to do dinosaurs and giants. If you don’t do dinosaurs and giants, Little Garden, the island where they discover those things, just isn’t the same Little Garden.’ There are expectations that fans of the manga and the anime have that we want to make sure we’re delivering on because that’s the promise you make when you’re adapting something as specific and beloved as One Piece. If you shy away from those things in favor of realism, you lose what makes this world so special.”

Images courtesy of Netflix.

Given the scope of the world-building, the task was divided between two production designers with each island treated as a unique environment. “[The approach towards visual effects was] definitely different for various parts of the show,” remarks Tom Hannam, Production Designer. “Whisky Peak was one of the islands where everything was in-camera and I didn’t have to consider the visual effects side of it so much. But Little Garden is an island populated by two giants that were 60 to 70 feet tall, and they got bigger in post as these things always do. That had a lot of Straw Hats, human-sized crew, dealing with these two enormous giants. It took a lot of collaboration with visual effects right from the beginning. I worked it out with Victor Scalise [Visual Effects Supervisor] and Scott Ramsey [Visual Effects Producer] literally shot-by-shot, but at the same time there was still the desire to do as much in-camera as possible.”

Everything was like putting together a jigsaw puzzle consisting of practical and digital pieces. “For each setup we would have continuous meetings,” states Max Gottlieb, Production Designer. “You would have full set extension and bits you could and couldn’t see through. For instance, with Laboon we built the lighthouse up to the edge, and we had to make a mark where there was this huge drop and cliff, and then there was the sea and Laboon. Beforehand, we have to proportion the whole thing into a series of drawings that are exactly to scale and then design where the eye of Laboon would be. Luffy is standing on what would be the clifftop, and we have to pinpoint where his eye would be and where he’s looking at different parts of the action. There is the financial aspect, as well, of how many wide and close-up shots you can have. In the end, the whole thing becomes this jigsaw puzzle broken down shot-by-shot.”

“One of the most important parts of a cinematographer’s job is to try and facilitate the best outcome for all the imagery, whether live-action or visual effects,” remarks Michael Swan, Cinematographer, who was responsible for the final three episodes. “I try and collaborate with the visual effects team as much as possible to make their life as easy as it can be. This is a visual effects-heavy show, and nothing is undemanding.” Previs and storyboarding are important. “All the big visual effects sequences are prevised and often storyboarded by the director. In addition, the fight scenes are carefully rehearsed and choreographed well ahead of time.” There are few practical locations with the emphasis on elaborate sets and shooting against screens. “The look was established in the first season, and although we used less extreme wide-angle lenses for close-ups in Season 2, the look remained the same. Netflix has full control over the final color grade,” Swan notes.

Ensuring that there is enough time to produce the required visual effects is a quick and efficient editorial turnover. “While we are still offline, we have this absolutely fantastic team of visual effects editors and also our own previs artists so we can do internal turnovers constantly and get temp visual effects moving,” remarks Tessa Verfuss, Editor. “Even by the time we’re presenting to Netflix, we have something there. It’s different when we’re talking about a full CG character, but things like Luffy’s punches, we’re getting previs for that before we even send cuts to Netflix. That just becomes an ongoing process that we keep moving. Personally, I do have an advantage of a time zone difference because I’m in Cape Town, so most of that team are only starting when I finish my day, so we’re not literally trying to jump into each other’s bins and timelines at the same time.”

Imagination is required when assembling sequences. “When you do a show like this, so much is on bluescreen or greenscreen,” states Eric Litman, Editor. “You have a character that is not present because it’s CGI. And from an editor’s perspective, it requires, at least from me, so much imagination. As an editor you are imagining the timing and pacing. Is this enough time to do x, y and z or deliver a line? The boat is doing this right now, but we don’t have the effect of what’s causing that. We’re constantly figuring out cause and effect and how that translates into visual effects. It also requires a tremendous amount of conversation and homework with the people you are working with, the director and visual effects department, in understanding the storyboards. Sometimes you have to go to the manga to understand the concept of these shots.”

TOP: After sailing over Reverse Mountain where a gravity-defying river runs upwards, the Going Merry encounters a massive whale known as Laboon.

BOTTOM: A major challenge was getting the character’s eye level with Laboon, given the huge scale difference between them.

“What is good about One Piece is that the show accelerates its insanity from a storytelling perspective. Everything gets bigger, and I’m sure Season 3 will be no different,” observes Tim Kinzy, Editor. “It was different with the full CG character for the first month with this flashback scene, which had nothing to do with the Straw Hats. It was basically Mark Harelik and N’kone Mametja doing the Chopper/Dr. Hiriluk scenes. I felt like I was working on a spin-off. It was refreshing because this is nothing like I saw in Season 1 or have even dealt with before. It was a unique opportunity, a different kind of pacing, especially with a fully CG Chopper that is expensive per shot. A lot of shots get pared down or taken out to save money and for economy of storytelling. That was quite a challenge. You can’t just cut away to Chopper whenever you’re stuck as an editor because it’s going to cost a lot of money, so sometimes the pacing had to play out. It’s still One Piece, but it feels like something unique.”

Pushing the gimbal for the Going Merry was the sequence where the pirate vessel goes up Reverse Mountain. “We had the Going Merry at 20-odd degrees going up Reverse Mountain,” states Mickey Kirsten, Special Effects Supervisor. “That was incredibly tricky. Everything needed to be elevated, like our water cannons. We were shooting water onto the ship and people were sliding around. Resetting was quite challenging. People literally had to use ropes to pull themselves up and re-set everything that was falling down during the take.” Mr. 3 generates a specific substance with his fingers. “The wax was insane. It was such a journey trying to find a product that we could have on the artist, and get it to do what we wanted it to do. We literally went from food stuffs like marzipan to baking products. We finally settled on a medical plastic that you can mold and it breaks really cool and nicely,” Kirsten says.

TOP: Tony Tony Chopper is the first entirely CG principal cast member.

BOTTOM: Getting an upgrade is the ability of Monkey D. Luffy to stretch his limbs like rubber.

TOP TO BOTTOM: Miss All Sunday makes a dramatic entrance with swirling cherry blossoms.

Principal photography for One Piece centers around Cape Town.

Mr. 13 and Miss Friday, an otter and vulture, respectively, are members of the criminal organization Baroque Works.

The hard part of Cactus Island was coming up with techniques to give texture to something that is really grass and fields but looks like a cactus from the distance.

Creating the interior of the whale, Laboon, was a labor of love. “There was a lot of stomach acid inside of the whale,” Kirsten remarks. “Skeletons would fall into these little pools of water and would smoke and bubble away. We had to keep the walls of Laboon moist all the time. If you think about it, it’s 40-odd meters by 25 meters, and you square that. It’s a lot of rain rigs working there. We had kilometers of plumbed air underneath Laboon for the bubbles, steam and smoke, and also plumbed water underneath it, causing little eruptions. It was probably three, four months of prep on that one.”

While Season 1 had 2,300 visual effects shots, Season 2 has 3,800 created by Framestore, Rising Sun Pictures, ILP, Folks VFX, Barnstorm VFX, Ingenuity VFX, Mr. Wolf and Refuge VFX. “We broke down the approach coming up to Reverse Mountain as one section so you wouldn’t have to match into water,” states Scott Ramsey. “We gave the basic idea that the water needs to be sucking the boat toward Reverse Mountain, and as it does that it gets closer. When the Going Merry gets sucked into it, then we turn it over to Rising Sun Pictures. As it keeps going up to the top, at the top we know that ILP is very good at specializing in work like that. Once we get down to the bottom, that’s Laboon. We broke it down in those areas, hitting the vendors’ strengths.”

“The biggest problem we had was fighting the scale of the water [for Reverse Mountain] because a lot of the fluid simulators are meant to build oceans,” notes Victor Scalise, Visual Effects Supervisor. “You’re using water simulations that are defying physics and also need to be moving upward, colliding with the landscape, as well as being like white water rafting.” The giants were achieved practically. “We ended up getting two actors, and we worked with Tom Hannam to build big and small sets. Christoph Schrewe [director] came in, and we blocked everything out. We had little characters that were the size they were, so on the giant-size set, we could have Straw Hats, stand-ins that were the real size. We did a lot of math and planning to where it all snapped together beautifully in post.” Digital effects were unavoidable for Smoker. Scalise states, “The punches are true to the manga, and with the tendrils, we wanted to give them more shape, so a tornado-ish swirling was added. One of the tricky parts was, how do you make tendrils, when they grab Luffy, actually feel like it’s not emitting smoke from Luffy? We came up with the idea of these spinning cuffs that gave more depth. We also had a lot of fun developing the Gum Gum Gatling effects as Luffy is punching Smoker. We went through a lot of cool simulations to answer the questions: How do things go through Smoker? How does it react? How does it interact? Smoker was a lot of fun, and as viewers watch it, I hope they’ll enjoy what we came up with for it. The sequence is intense.”


THE INDIAN VFX INDUSTRY – A MAJOR GLOBAL FORCE

By TREVOR HOGG

TOP: An indication that the Indian domestic film industry has become more accepting of visual effects is the blockbuster RRR. (Image courtesy of DVV Entertainment)

OPPOSITE TOP: Foundation. BOT VFX has invested seriously in compositing as well as asset and CG development. (Image courtesy of Apple TV)

Creating waves back in 2014 when Prime Focus World merged with DNEG and carrying into 2025 when Phantom Digital Effects consolidated Milk VFX, Tippett Studio, PhantomFX, Lola Post and Spectre Post into the Phantom Media Group, India remains a significant player in the visual effects industry, with the South Asian country getting its own chapter in the Visual Effects & Animation World Atlas 2025. Joseph Bell states in the comprehensive research report that Mumbai is the largest visual effects hub worldwide by headcount. Six of the 10 fastest-growing visual effects and animation hubs in the world are in India. The vast majority of the country’s visual effects and animation revenue comes from international clients, with domestic clients accounting for only 10% to 15%. Meanwhile, 2,000 visual effects and animation workers lost their jobs with the collapse of Technicolor. Unlike other markets where tax incentives have been a driving force in attracting international productions, it has been cheap labor that has turned India into a global outsourcing powerhouse; however, that might start to change as the Indian government becomes more actively involved.

India has a history of more than 100 years with films and filmmaking. “Given India’s long history with films, visual effects were not an unknown element here, though they weren’t really used to their full potential,” states Akhauri P. Sinha, Managing Director, India for Framestore. “I, along with many other people from the industry in India, have always maintained across various forums that visual effects is something filmmakers should start thinking about at the beginning, instead of as an afterthought or a corrective tool, as it usually was in the early days in India. As filmmakers started understanding the ability of visual effects to help realize their cinematic vision, and their films did well commercially, it made others aware of the possibilities. The other part of the puzzle that also fell in place was the availability of talent, as over the years, working on global projects had grown the talent pool as well as honed the skills of the artists here.” Machine learning/AI are tools that aid artists and enhance output. “It’s also reasonable to assume that as technology evolves, certain repetitive tasks could also be automated. Digital infrastructure like cloud computing and storage has been a game-changer for smaller Indian companies as it allows them to scale without expensive capital outlays.”

“The Indian government has made significant progress in supporting the visual effects industry,” remarks Sudhir Reddy, President, Global VFX Business for Digital Domain. “The Ministry of Information and Broadcasting now offers incentives for international film and visual effects projects, allowing production service companies to claim up to 40% of qualifying expenses incurred in India, with a maximum payout of approximately $3.6 million USD, substantially higher than previous limits. The government has also established the National AVGC [Animation, Visual Effects, Gaming and Comics] task force and proposed policy frameworks to develop the sector further. However, comprehensive nationwide implementation is still underway. At the state level, support remains uneven, with only some states [such as Telangana and Karnataka] implementing dedicated visual effects policies. Skill development programs are in place, but there is still a need for more focused approaches to advanced specialization and training. Overall, while necessary steps have been taken, large-scale and consistent national support is still evolving.”
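The arithmetic behind the incentive Reddy describes can be sketched in a few lines. This is only an illustration built from the two figures quoted above, the 40% rate and the roughly $3.6 million ceiling; the function names are hypothetical, and the actual scheme's eligibility rules, currency conversion and bonus tiers are not covered in this article and are not modeled here.

```python
# Illustrative sketch only: the rate and cap are the approximate figures
# quoted in the article; the function names are hypothetical.
REBATE_RATE = 0.40            # "up to 40% of qualifying expenses"
MAX_PAYOUT_USD = 3_600_000    # "approximately $3.6 million USD"


def estimated_rebate(qualifying_expenses_usd: float) -> float:
    """Upper-bound rebate for qualifying spend incurred in India."""
    if qualifying_expenses_usd < 0:
        raise ValueError("qualifying expenses cannot be negative")
    # The payout is the smaller of 40% of spend and the fixed ceiling.
    return min(REBATE_RATE * qualifying_expenses_usd, MAX_PAYOUT_USD)


def spend_at_which_cap_applies() -> float:
    """Qualifying spend above which the ceiling, not the rate, limits the payout."""
    return MAX_PAYOUT_USD / REBATE_RATE


if __name__ == "__main__":
    for spend in (5_000_000, 9_000_000, 20_000_000):
        print(f"${spend:,} spend -> ${estimated_rebate(spend):,.0f} rebate")
```

Under these assumed figures, the ceiling takes over at about $9 million of qualifying spend, so a larger project sees its effective rebate percentage fall below 40%.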

Since 2005, the Indian visual effects industry has experienced rapid growth. “Initially centered on basic corrective work such as clean-up and motion graphics, the sector has transformed dramatically due to exposure to international markets and the entry of global visual effects leaders, like Rhythm & Hues, DreamWorks, Technicolor, DNEG, Digital Domain, Framestore and ILM,” Reddy explains. “These companies have integrated Indian talent into the global production pipeline, elevating technical standards and expertise. Domestically, Indian filmmakers are now making visual effects a core part of their creative process, leading to more ambitious storytelling and higher production values in film, television and streaming content. Internationally, India has become a vital hub for major Hollywood productions, delivering complex visual effects at scale. Strategic partnerships, government incentives, and ongoing investments in talent and technology continue to strengthen India’s reputation as a major force in the global visual effects industry.”

When Digital Domain established a facility in Hyderabad in 2017, Reddy was appointed the Head of Digital Studio. “Digital Domain expanded to India to support global growth, improve margins and leverage the country’s growing talent and technology pool,” Reddy notes. “The supportive ecosystem and government encouragement for the AVGC sector were also key factors. The primary advantages have been access to skilled professionals and cost efficiencies. Challenges include heavy reliance on North American projects, limited high-revenue domestic work and time zone differences. Indian teams have, however, demonstrated strong adaptability and flexibility.” Real-time rendering, cloud computing and AI/ML are reshaping the visual effects landscape. “Artists are actively upskilling to maintain global competitiveness. Indian tech firms are also investing in cloud render farms and data centers, with support from government initiatives. The rise of AI tools is expected to significantly affect outsourcing studios in India, particularly those focused on labor-intensive tasks like roto, paint and camera tracking,” Reddy says.