The SPS STEM Journal


Vector

Fifth Edition

Alexander Apkarian Editor in Chief

Deputy Editors

Noah Whale

Zak Farazi

Vikram Bhamre Astronomy

Biology

Biotechnology

Book Reviews

Chemistry

Computer Science

Engineering

Kai Philipose Ryo Kusakari

Thomas Hallé Ynon Weiss

Yuxi Liu

Alexis Andronikos Luca Viviano Dom Yap Harishan Ganeshan

Charles Calzia Rahul Marchand

Environmental Science Frederick Dehmel

Gabriel Treneman Shashwat Sangwan Medicine

Maths

Leo Wen Oliver Milroy Goulding

News

Physics Psychology

Marco Cina Rabin

Adrien Durantel Teddy Onslow

Shivan Arora Varun Vashisht


Editorial

Welcome, readers, to the fifth edition of Vector, the St Paul's STEM journal. It has been a privilege to lead a large team of talented peers in collaboration with our dedicated teachers. I would like to sincerely thank all of those involved.

This year's edition was conceived and edited in a somewhat unconventional fashion: I began the work in London and completed it in California during a ten-week research stint. Although this complicated the logistics, it strengthened the publication. Working in a laboratory enabled me to bounce ideas off several highly esteemed researchers, who were able to provide invaluable feedback.

The title, Vector, has always fascinated me. Derived from the Latin 'vehere', meaning 'to carry', its scientific usage varies by discipline. In mathematics, physics, and engineering, a vector conveys magnitude and direction; in biology, it denotes a vehicle used to carry foreign material into a cell.

In an increasingly complex and interconnected world, the most profound scientific advancements will come from interdisciplinaryapproaches.

We see evidence of the significance of multidisciplinary perspectives all around us. The discovery of the DNA double helix was only fully harnessed thanks to parallel developments in computational power, information theory, and powerful algorithms. Likewise, the record-breaking speed of vaccine development during Covid required breakthroughs in both biology and computer science: AI programs were used to design mRNA molecules.

Whilst some would confine this multifaceted approach to the STEM disciplines, the scientific world would greatly benefit from greater integration with literature and philosophy. Effective communication in science is of paramount importance if the public are ever to appreciate the extent of groundbreaking discoveries. Moreover, it is critical that the fidelity of scientific work is held to the highest possible standard, both to advance science and to maintain the public's trust. Famous missteps include the false claims about cold fusion in the 1980s, Andrew Wakefield's infamous paper on the MMR vaccine, and the alleged cancer-causing potential of power lines in the 1990s.

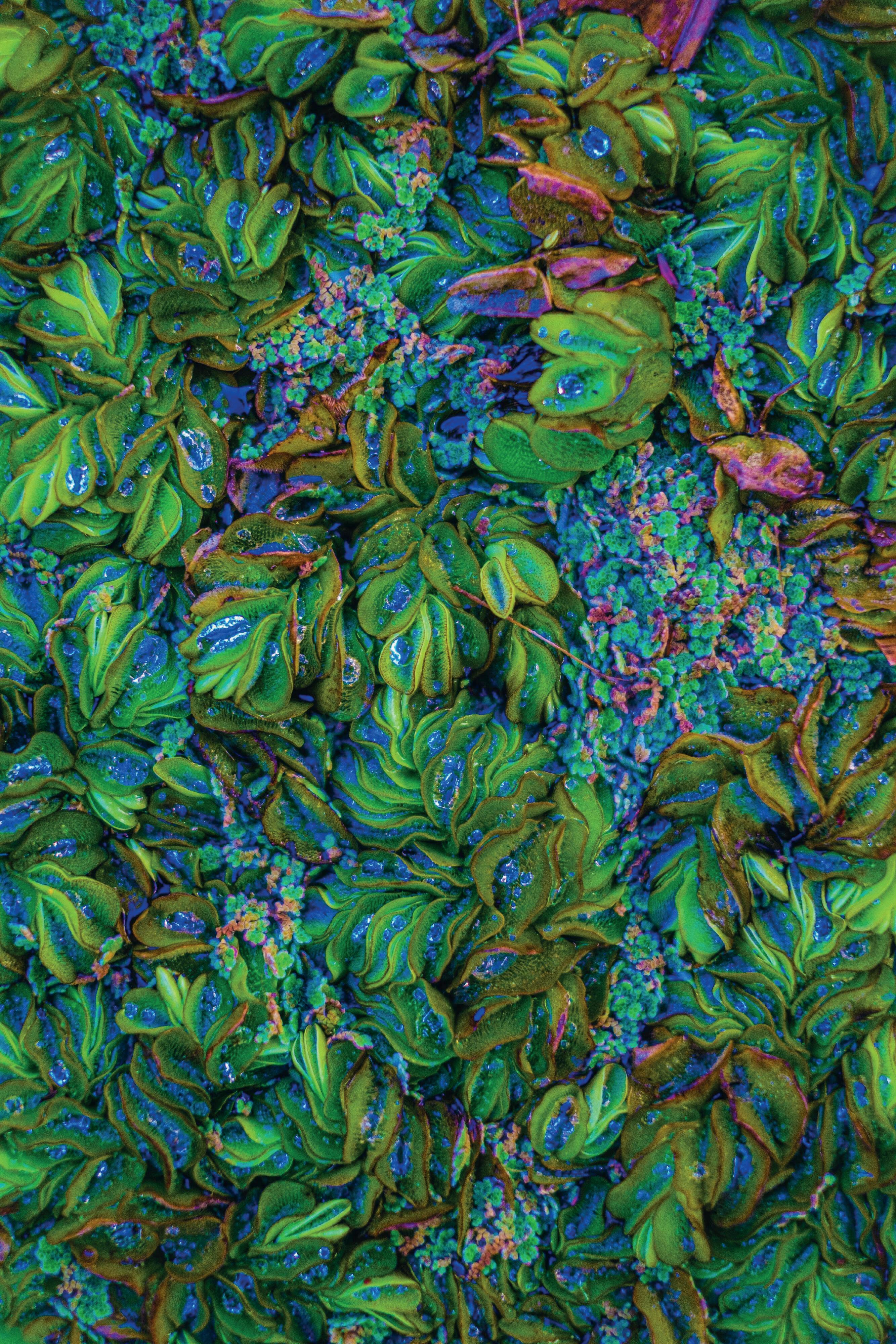

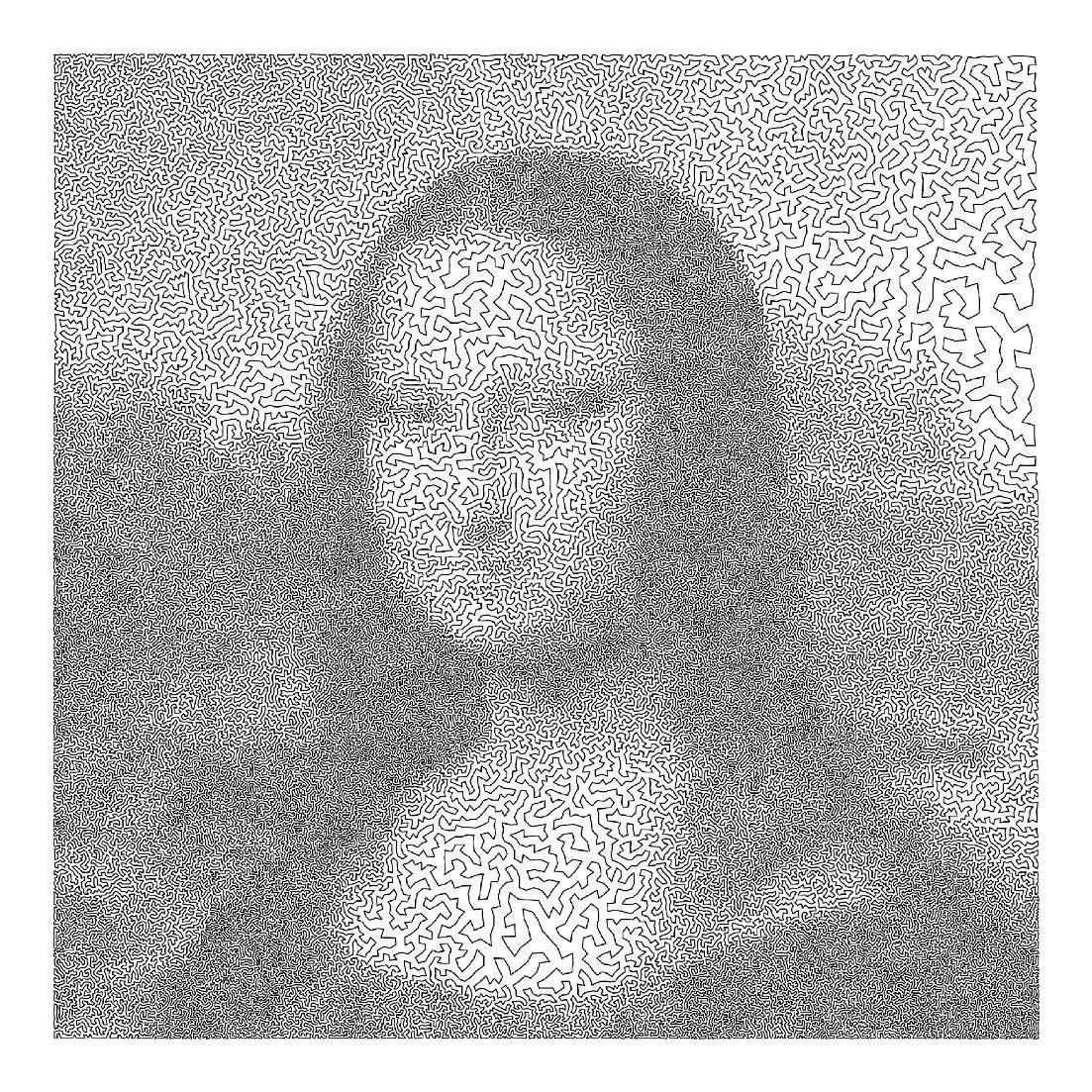

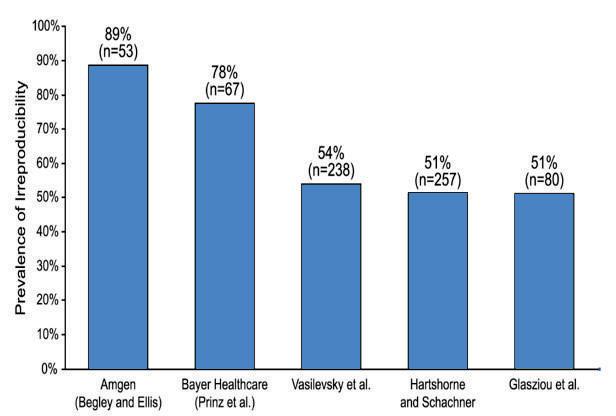

A lack of rigour and skilled communication within the scientific field has, in great part, led to the 'irreproducibility crisis'. Reproducibility is key, as it allows peers to review, confirm, and build upon work. The universal drive to publish papers soon after a discovery (fuelled by a 'publish or perish' environment) should be balanced against the quality of the research and the clarity of the papers. Below is the result of a meta-study concerned with irreproducibility in science.

In order to effectively combat this 'crisis', we must foster interdisciplinary and rigorous environments. The separation between STEM and the humanities should act not as a closed but as a permeable barrier, allowing ideas and themes to be shared and ensuring effective scientific communication.


Alexander Apkarian

Astronomy

Astronomy Society: Observing The Sun page5

Biology

Myostatin and Its Role in Doping page7

The Key to Eternal Youth?The Technique that Rewound the Age of Skin Cells by 30 Years page9

Clash of the Titans: Spinosaurus vs Tyrannosaurus page11

Mendel's Discoveries in the Understanding of Complex Diseases page13

Biotechnology

Mycoremediation: How Fungi Can be Used to Clean the World page15

Lipid Nanoparticles: A New Era of Vaccines page16

Book Reviews

The Emperor of All Maladies By Siddhartha Mukherjee page19

A Brief History of Time By Stephen Hawking page20

Chemistry

The Catalytic Condenser Chameleon Device: Appreciating the Value of Metal Catalysts page22

Contents

The Chemistry Behind Acne page24

The Biochemistry of Coffee page26

Computer Science

The Illusion of the Third Dimension and the Tropes of Modern First Person Shooters page28

How does a Bayesian Neural Network Model Differ from a Traditional Point Estimate Neural Network? page29

The Travelling Salesman Problem page31

Engineering

"EJECT! EJECT!" page33

The Future of the Factory page34

Environmental Science

Constructing a 'Simple' Climate Model page36

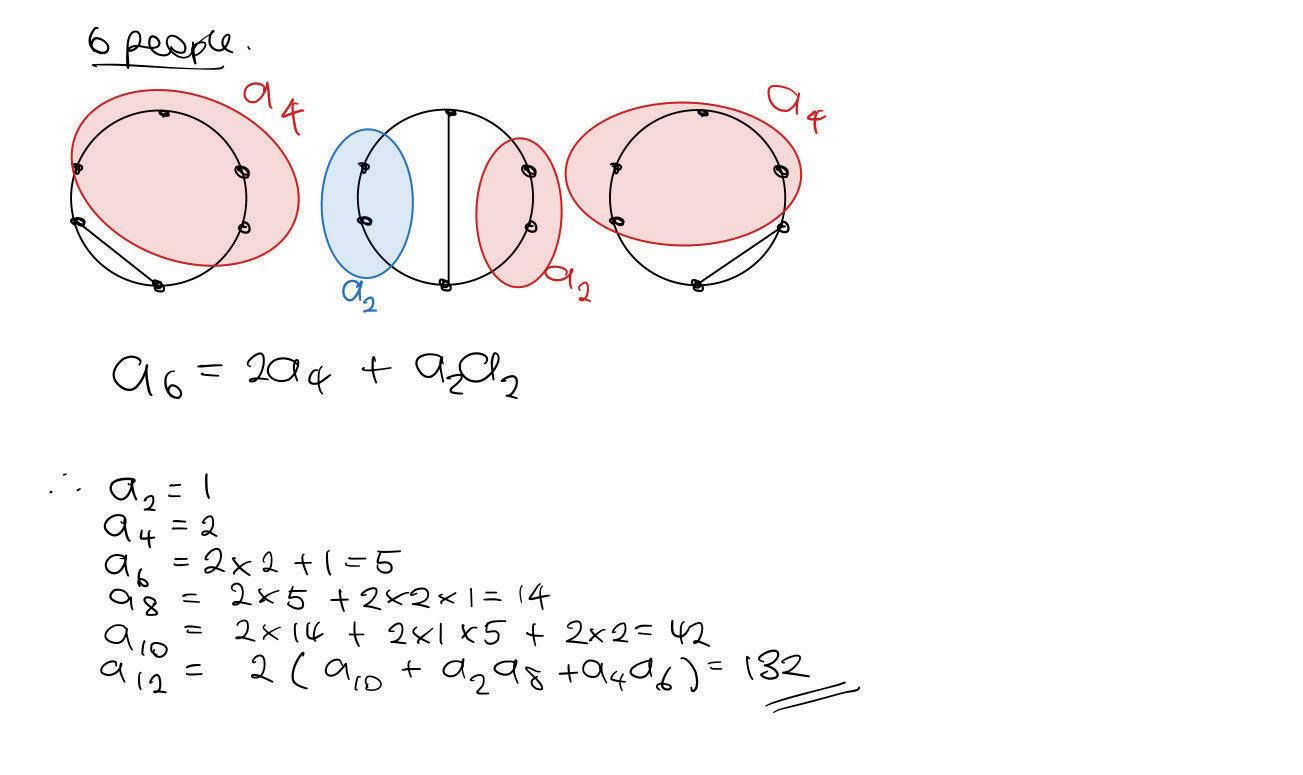

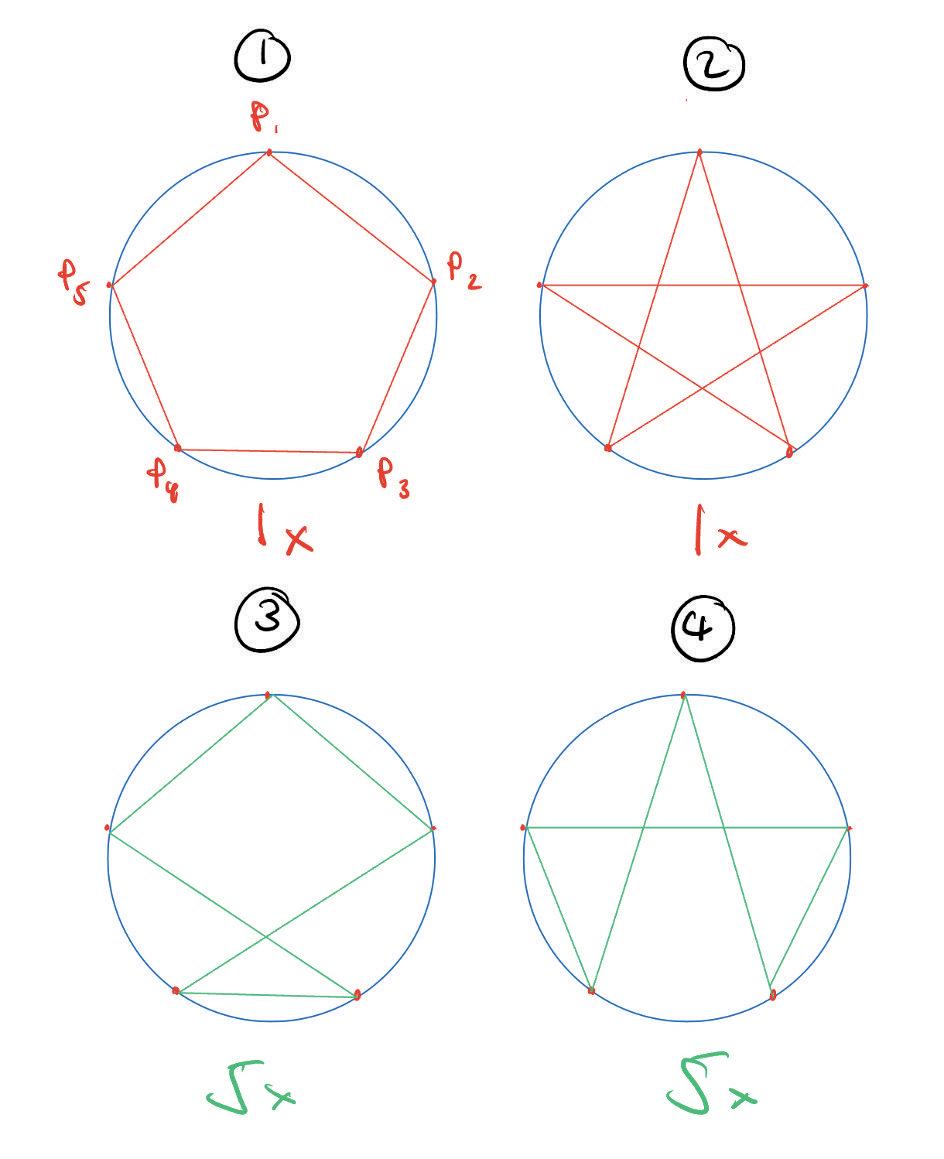

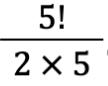

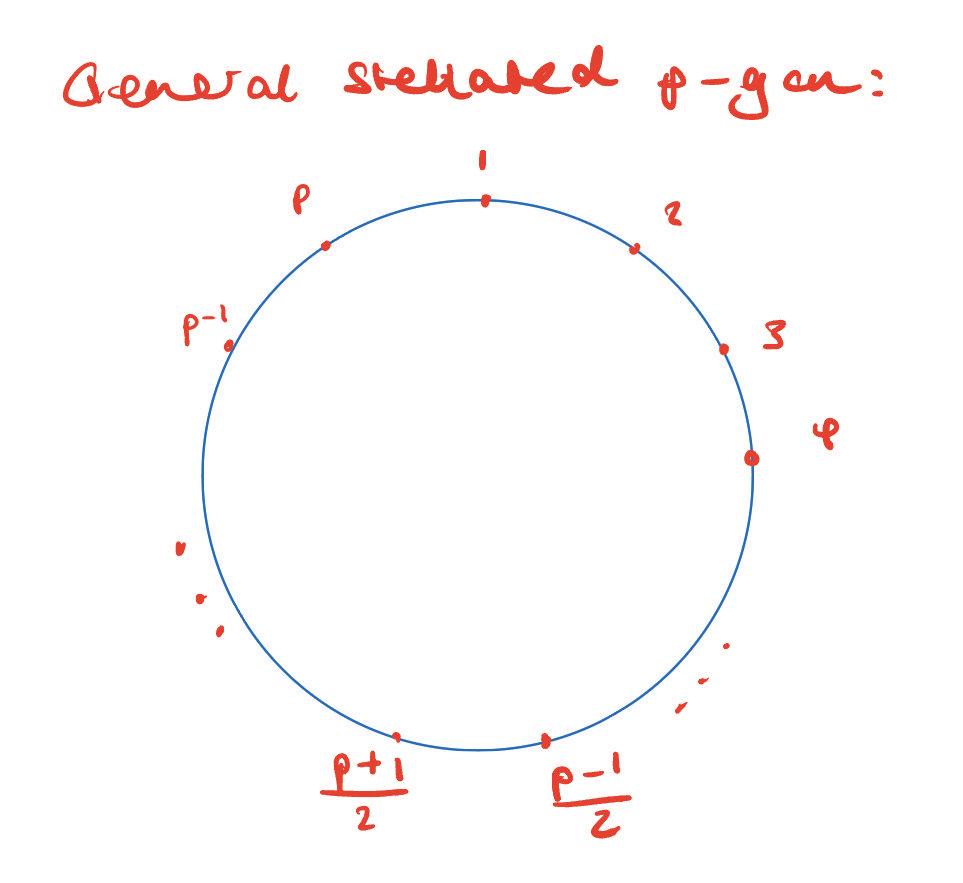

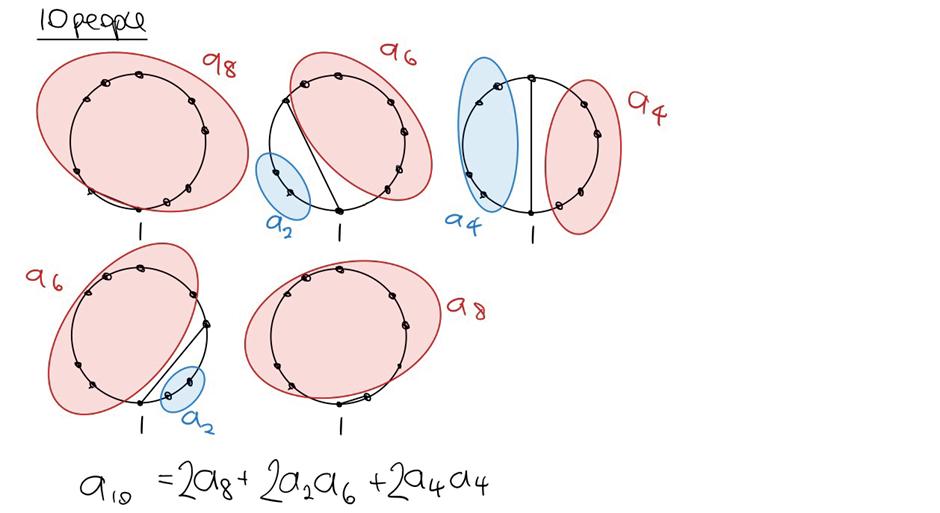

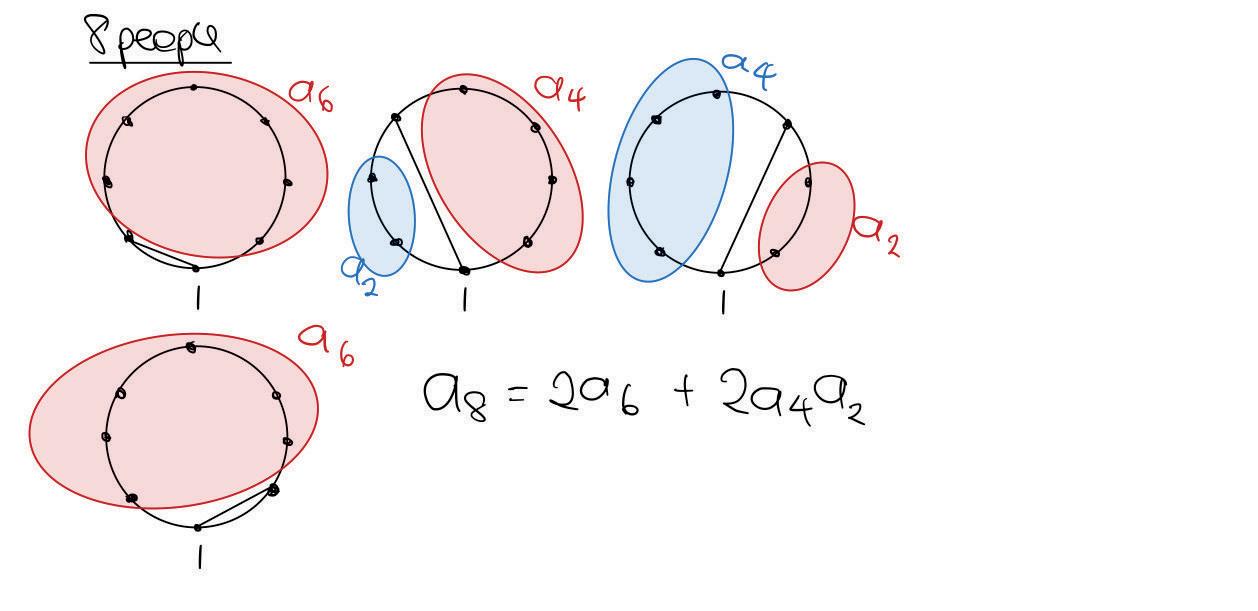

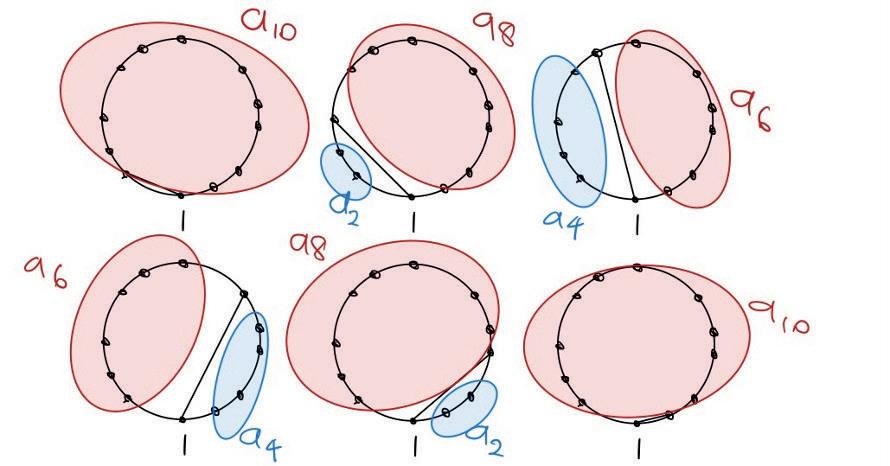

Mathematics Catalan Numbers page38

A Combinatorial Proof of Wilson?s Theorem page39

Medicine

The Future of Artificial Intelligence in Medicine page42

A Life for a Life: Xenotransplantation page 43

Human vs Disease page 45

News

Can Mistletoe Berries be Used as a Superglue? page 46

How do Hypergiants Die? page 46

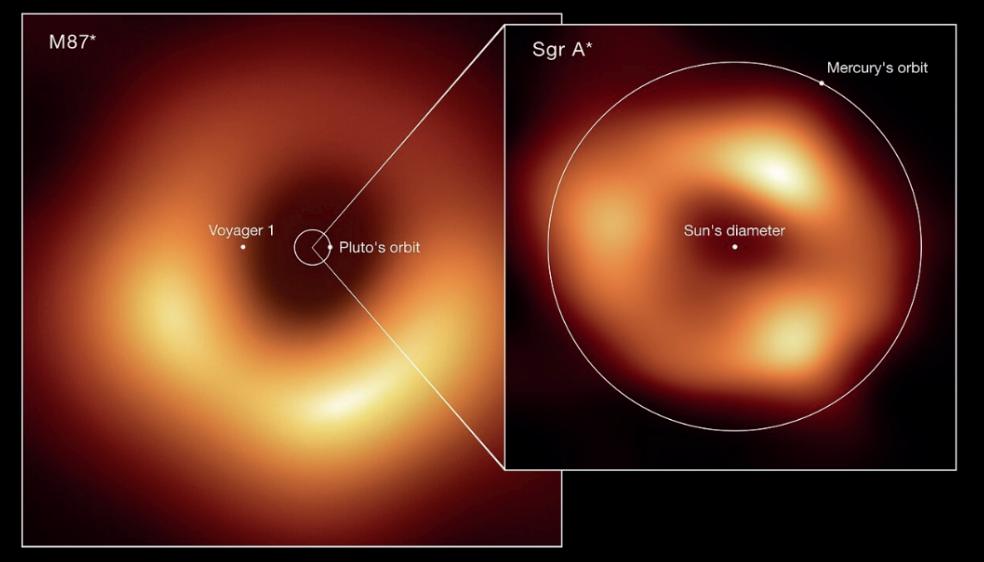

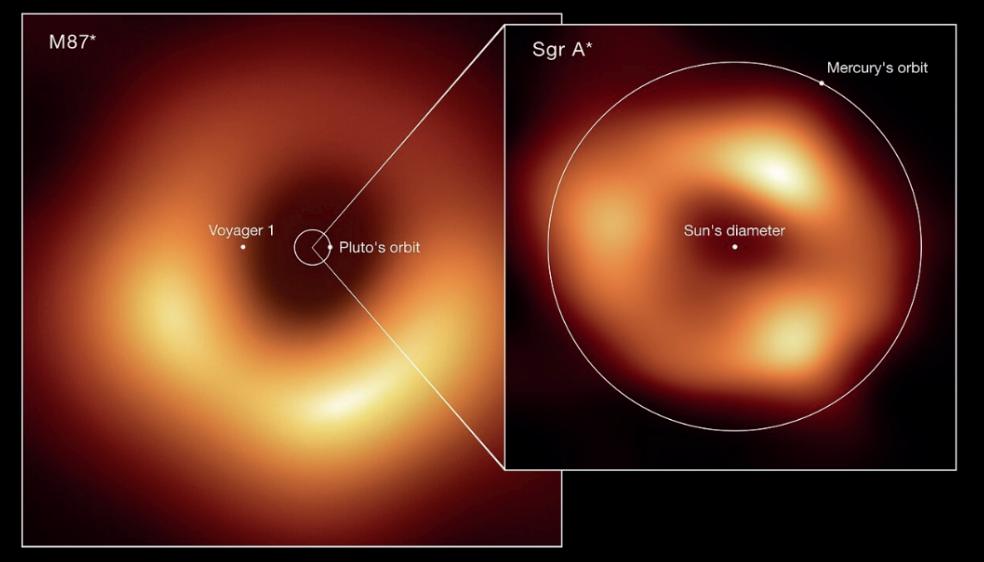

A New Black Hole? page 47

Do Mummies Still Contain Ancient Strains of Bacteria? page 47

Physics

The Doppler Effect page 48

Quantum Computing: Separating Fact from Myth page 49

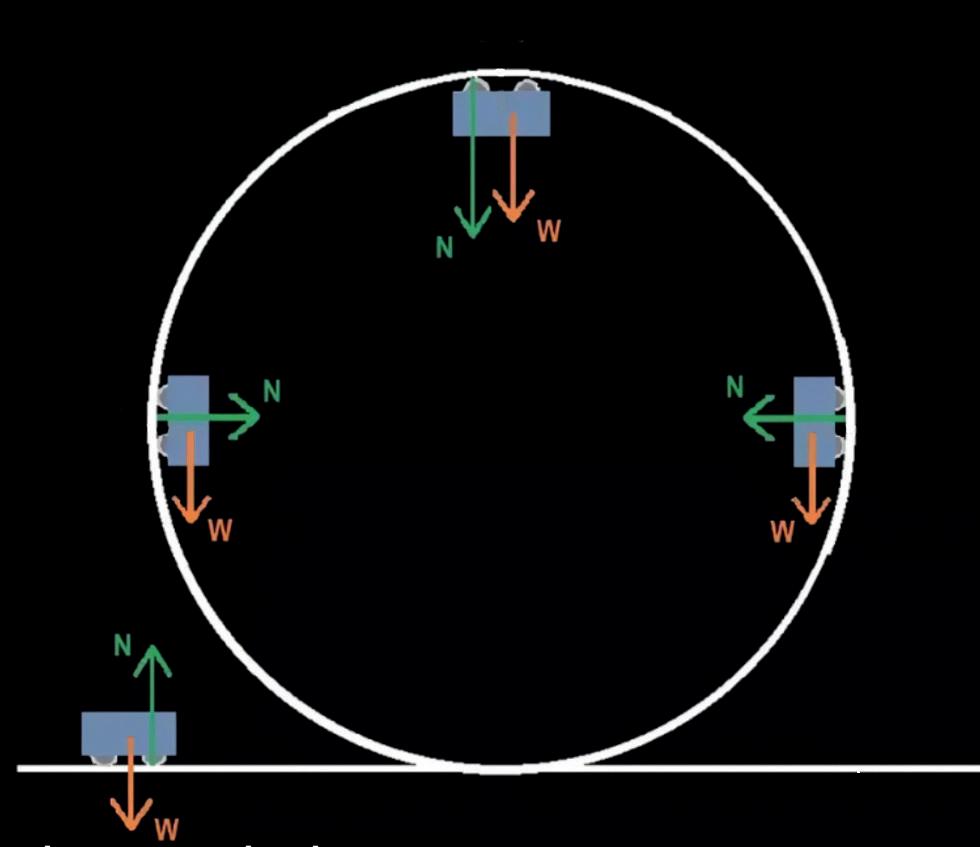

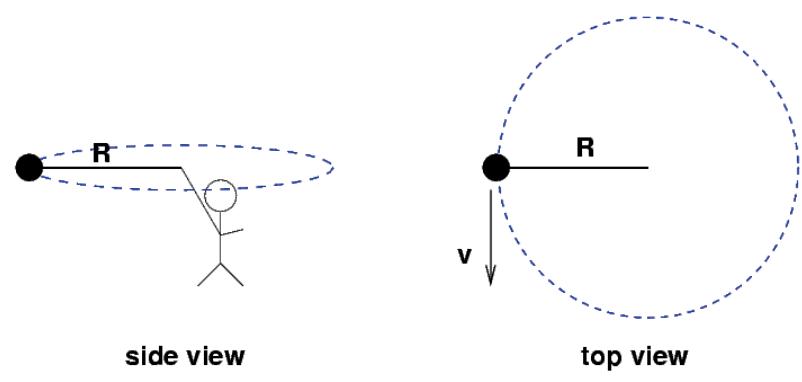

Centripetal Force page 51

Psychology

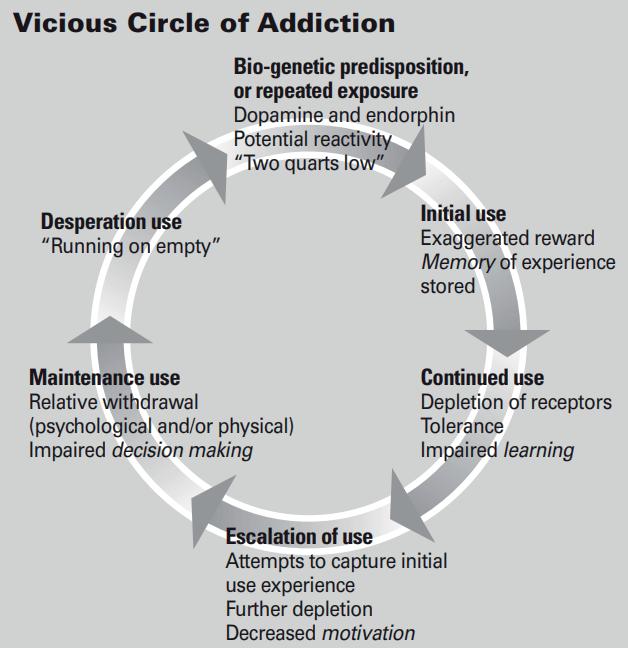

What is Addiction? page 53

Does Language Affect the Way in Which We Think? page 54

4

Astronomy

Astronomy Society: Observing the Sun

Danny Cui

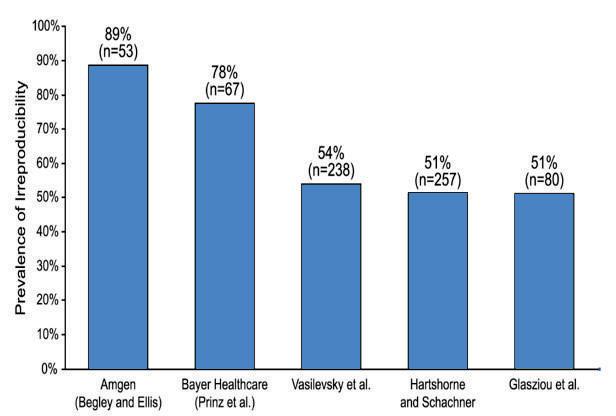

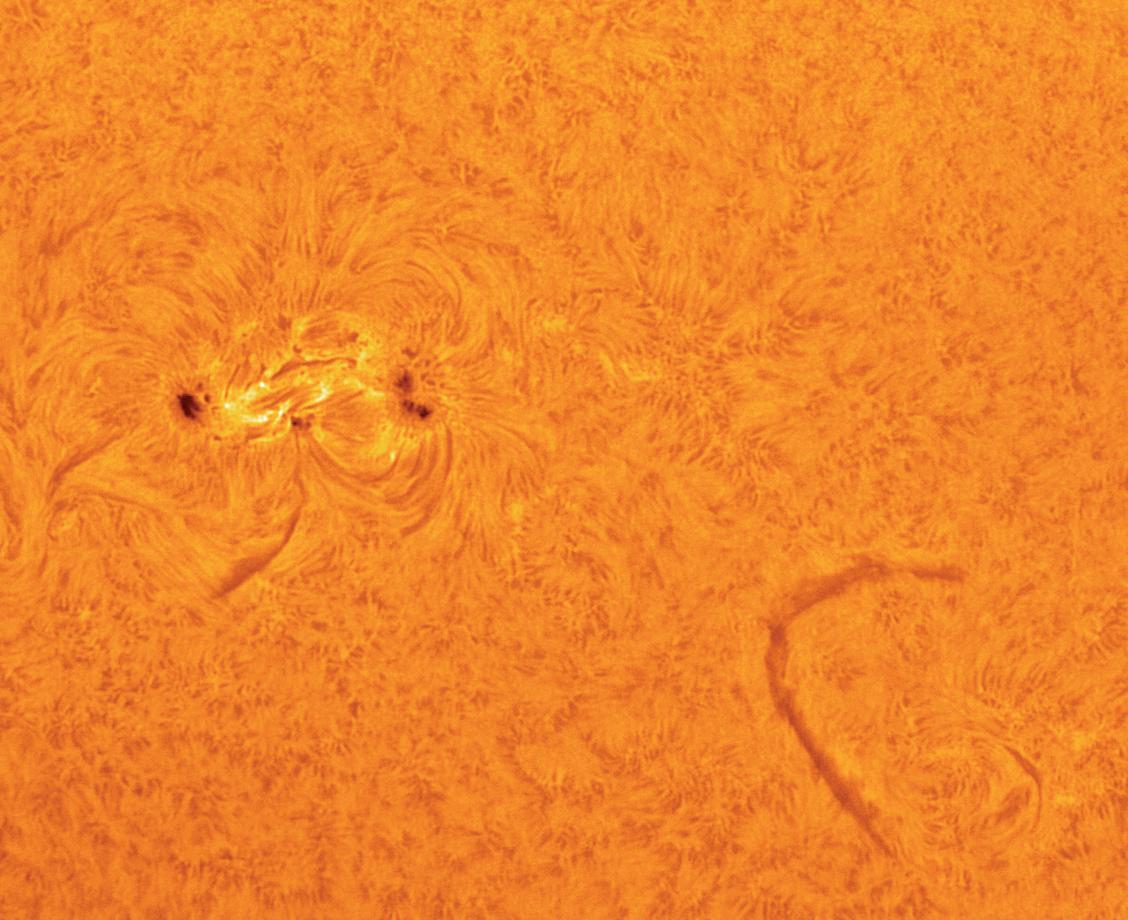

In June 2022, right at the end of the summer term, the Astronomy Society completed its first ever observation of the Sun. The viewing was successful, and we were even able to see some of the details on the Sun's surface, most notably sunspots and solar filaments.

But what are sunspots and solar filaments? How do solar activities like these affect us? How does the equipment used to observe the Sun work?

Solar filaments are structures of plasma and magnetic fieldswhich extend outwardsfrom the photosphere (the deepest observable region of the Sun?ssurface) They often form in the shape of a loop owing to their underlying magnetic field and can extend from 50,000 to 70,000 km into the corona (the outermost part of the Sun?s atmosphere) Giventhat their plasmaismuch cooler than that of the corona, solar filaments usually appear as dark line segmentsontheSun?ssurface Sunspots, as the name suggests, are dark spots on the surface of the Sun. They are minima of temperature on the Sun?s surface, caused by concentrations of magnetic flux, which prevent convection currents in the Sun from transferring heat to their surroundings. Sunspots have two main structures? theumbra(acentral region)and the penumbra (a surrounding region) The umbra is the darkest area of the sunspots, and the coolest area ? roughly around 2,700°C to 4,200°C This is because the magnetic field is at its strongest. The

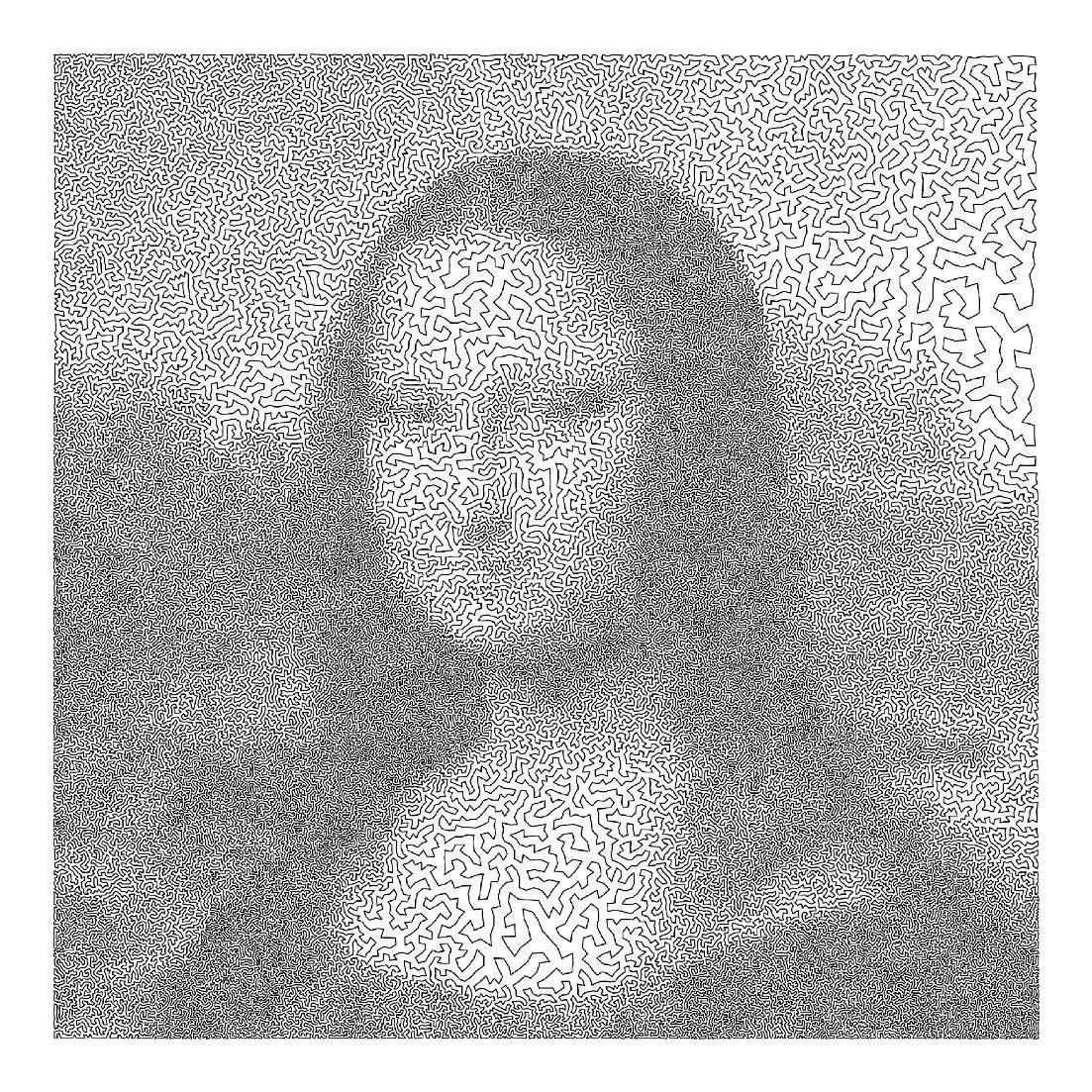

surrounding penumbra is brighter and has a temperature of around 5,500°C Sunspots often come in pairs of opposite magnetic polarity Below is a photo that was taken during our observation It illustrates a solar filament in the bottom right corner, apair of sunspots, and the curling of the sunspots' surroundings with the pattern of their magneticfields.

Sunspots form where strong magnetic fields emerge from the Sun's interior. These strong magnetic fields catalyse other solar activity, which accompanies periods when large numbers of sunspots appear. This activity includes solar flares, in which intense, localised bursts of electromagnetic radiation erupt from the Sun's atmosphere as charged particles are accelerated by magnetic energy stored there; coronal mass ejections, in which intense magnetic activity releases large amounts of plasma into the solar wind; and other solar phenomena. These periods of intense solar activity, referred to as 'solar maxima', recur roughly every 11 years. This is called the solar cycle, over the course of which the magnetic polarity of the Sun flips.

Some coronal mass ejections (CMEs) send large amounts of plasma travelling towards the Earth, carrying a strong magnetic field with them. The shock wave of the travelling plasma compresses the Earth's magnetic field, and the plasma's own magnetic field interacts with the Earth's, storing energy in the Earth's magnetosphere. This is called a geomagnetic storm. Energy in the magnetosphere increases plasma movement, increasing the electric currents there. This energy can also induce electric currents in our power grids and other grounded conductors, which can destabilise power transmission networks and burn out transformers. Given our dependence on electricity, this is highly problematic. However, solar activity can also manifest itself in stunning ways. For CMEs which send smaller amounts of plasma towards the Earth, the plasma, being made of charged particles, is deflected by the Earth's magnetic field towards the north and south poles. These charged particles then collide with oxygen and nitrogen atoms and excite them; as the atoms relax, they release energy in the form of colourful light, creating an aurora.

We observed these details through a telescope equipped with a hydrogen-alpha filter, which lets through only a narrow range of wavelengths. This is critical, as it drastically limits the light reaching the eyepiece, making the observed image safe for the eye. We also captured images in calcium-K or visible light to highlight different features of the Sun. We first captured thousands of frames of the Sun through a monochrome camera with the shortest possible exposure time; keeping each exposure as short as possible prevents blurring caused by the atmosphere. Subsequently, we used stacking software, which uses a point of reference in the image to align and combine the frames. Usually, only the best 50% of frames are used, as the blurred frames only degrade the final product. The image is then sharpened in image processing software. To do this, a histogram of the data is manually adjusted to enhance the detail on the Sun, after which a noise reduction algorithm is applied. Finally, the individual red, green and blue channels of the image are tweaked to give it artificial colour. This is up to the preference of the user: some people like darker, redder versions of the Sun, while others prefer a lighter edit.
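The frame-selection step described above can be sketched in a few lines of Python. This is a minimal illustration, not the stacking software we used: it assumes the frames have already been aligned, represents each frame as a small grid of brightness values, and scores sharpness with a simple hypothetical measure (the sum of squared differences between neighbouring pixels), since atmospheric blurring softens edges. The names `sharpness` and `stack_best` are illustrative only.

```python
# Minimal "lucky imaging" sketch: rank frames by sharpness, keep the best half, average them.
# Assumption: frames are already aligned and are small greyscale grids (lists of rows).

Frame = list[list[float]]


def sharpness(frame: Frame) -> float:
    """Hypothetical sharpness score: sum of squared differences between neighbouring pixels.

    Blurred frames have softer edges, so their neighbouring pixels differ less
    and the score is lower.
    """
    rows, cols = len(frame), len(frame[0])
    score = 0.0
    for r in range(rows):
        for c in range(cols):
            if c + 1 < cols:  # horizontal neighbour
                score += (frame[r][c + 1] - frame[r][c]) ** 2
            if r + 1 < rows:  # vertical neighbour
                score += (frame[r + 1][c] - frame[r][c]) ** 2
    return score


def stack_best(frames: list[Frame], keep_fraction: float = 0.5) -> Frame:
    """Keep the sharpest fraction of the frames and average them pixel by pixel."""
    ranked = sorted(frames, key=sharpness, reverse=True)
    keep = max(1, int(len(ranked) * keep_fraction))
    best = ranked[:keep]
    rows, cols = len(best[0]), len(best[0][0])
    return [[sum(f[r][c] for f in best) / keep for c in range(cols)] for r in range(rows)]


if __name__ == "__main__":
    sharp_a = [[0, 10], [10, 0]]
    sharp_b = [[0, 12], [8, 0]]
    blurred_a = [[5, 5], [5, 5]]
    blurred_b = [[4, 6], [6, 4]]
    print(stack_best([sharp_a, blurred_a, sharp_b, blurred_b]))  # [[0.0, 11.0], [9.0, 0.0]]
```

Real stacking programs use more sophisticated alignment and quality measures, but the idea is the same: discard the blurriest frames and average the rest, which also smooths out random noise.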

If you are interested in extraordinary astronomy activities like this one, be sure to email Dr Gane, or come to P4 on Tuesday lunchtimes at 1:00 pm. Lastly, the Astronomy Society would like to thank our financial supporters, who have given us the money to buy the equipment.


Biology

Myostatin and Its Role in Doping

Hugo Lefranc

Doping is now a global problem at major sporting events. Sports federations, led by the International Olympic Committee, have for the past half century unsuccessfully attempted to halt its spread. It was expected that educational programs, testing, and supportive medical treatment would curb this behaviour. Unfortunately, this has not been the case. In fact, new, more powerful, and undetectable doping techniques are now being abused by athletes, while ever more sophisticated distribution networks have developed.

Myostatin is a protein discovered in 1997 by a team working on the transforming growth factor beta (TGF-β) family of growth factors, using a technique called degenerate PCR. It signals through the activin receptors and acts as a negative regulator of skeletal muscle growth.

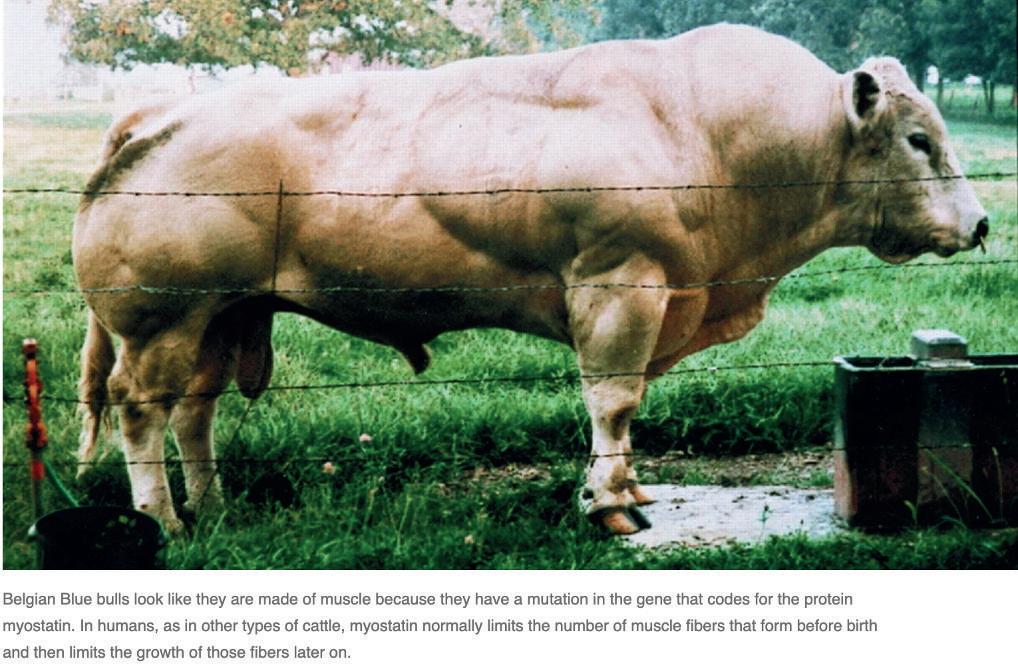

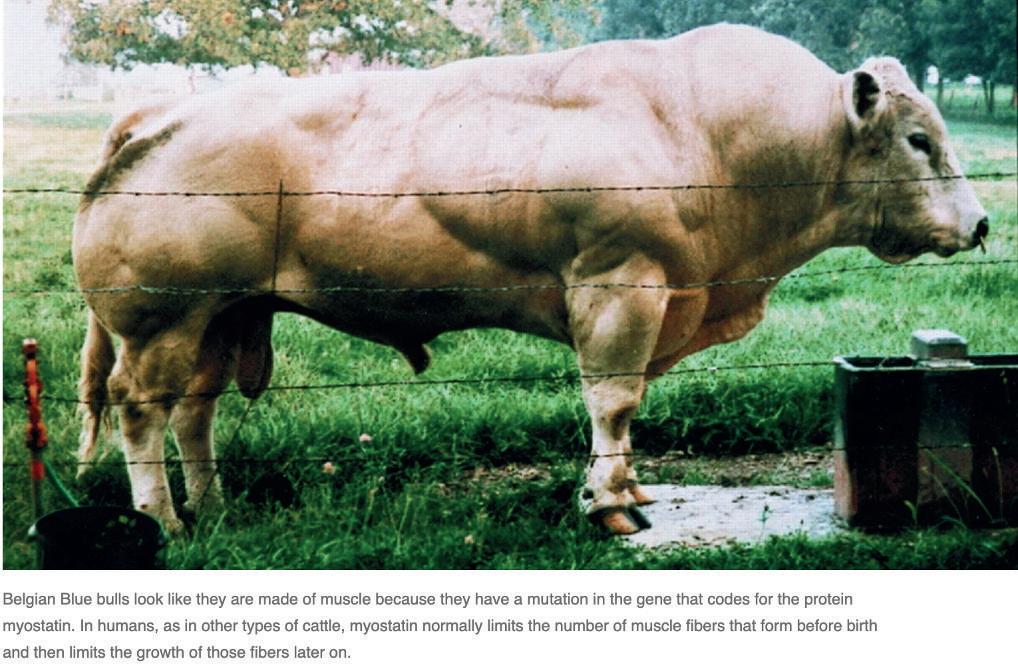

The team ran numerous functional studies using mouse models. Knockout mice without functional myostatin survived, but with an altered biology that clearly pointed to the role of myostatin in the body: they had 200% more muscle mass than the control mice, owing to an increase both in the size of muscle fibres and in the number of muscle cells. A similar phenotype is seen in some breeds of cattle known to be genetically 'double-muscled', for example the Belgian Blue. It was subsequently noticed that a five-year-old boy with a dramatic increase in muscle mass had a defect in his myostatin gene. Following this, interest in myostatin increased significantly. Athletes and bodybuilders in search of performance-enhancing drugs were particularly fascinated by it. Many questions were being asked, but most importantly: could the role of myostatin be useful in the world of doping?

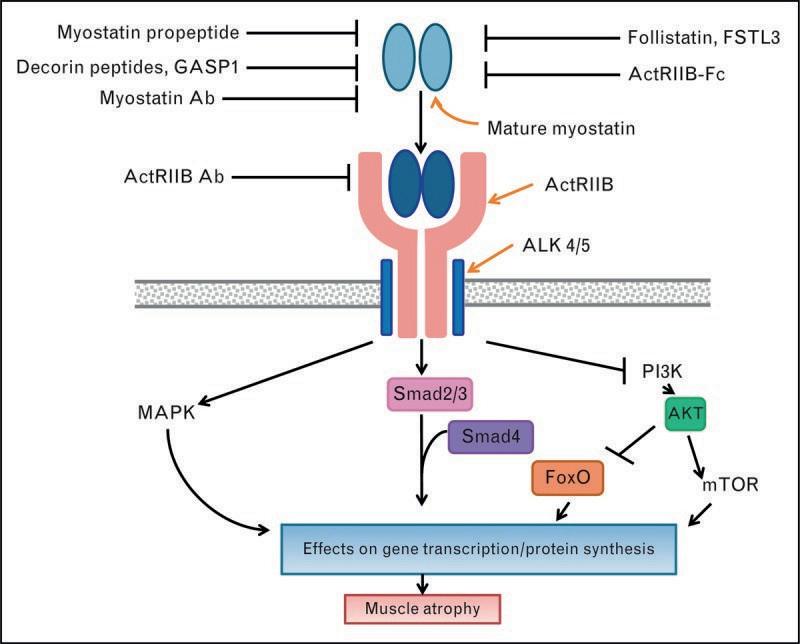

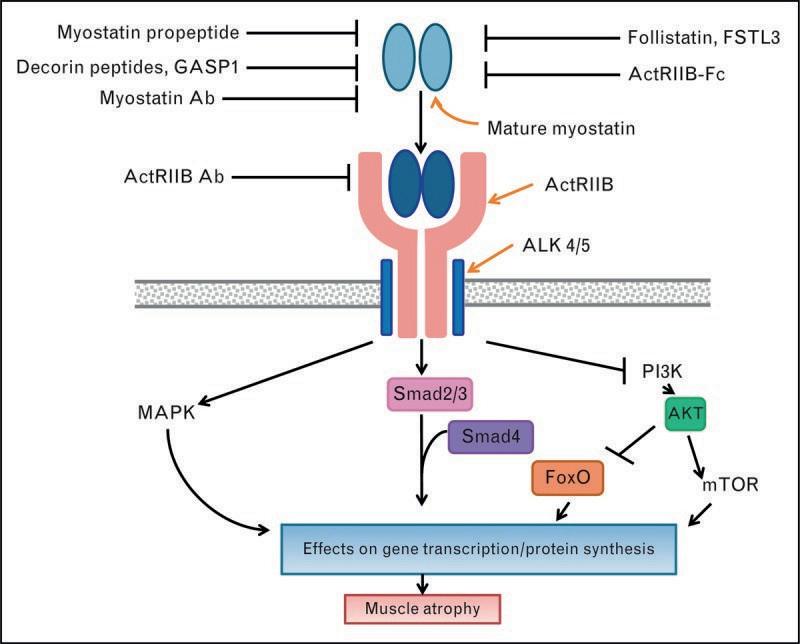

The first thing to note is that, unlike mTOR (the 'start button' for muscle growth), myostatin signalling is specific to muscle. This is a good start for any prospective dopers, as it means that a molecule that targets myostatin will only affect the desired part of the body. Also, the myostatin pathway encompasses a range of possible doping targets other than myostatin itself.

To become active in muscle cells, myostatin needs to be modified by other proteins. These proteins, called proteases, cleave the inactive myostatin precursor, trimming it to the optimum size for activity. Once active, myostatin binds to activin receptors on the muscle cell surface, which then bind to other receptors of the TGF-β family. Myostatin binding activates these receptors, allowing them to phosphorylate proteins in the cell called SMAD2 and SMAD3, which then combine with a partner protein, SMAD4. This complex moves into the nucleus and binds to specific parts of the DNA, where, by an as yet unknown mechanism, it switches off muscle protein synthesis. This illustrates the complex process associated with the suppression of protein synthesis in muscles. It also demonstrates that there are multiple targets for myostatin inhibition.

Since its discovery, pharmaceutical companies have produced myostatin-inhibiting drugs to treat diseases such as muscular dystrophy. However, this means that such drugs are available to athletes and bodybuilders who would take them to improve their muscle mass, a key attribute in many sports. One such drug is stamulumab, an immunoglobulin G1 (IgG1) antibody which binds to myostatin and prevents it from binding to its target site, thus inhibiting the growth-limiting action of myostatin in muscle tissue. In 2019 it was added to the World Anti-Doping Agency's list of prohibited substances, as it was feared it gave competitors too much of an advantage. However, as with most prohibited drugs, this doesn't remove the threat of usage, and as a result it is still being used by athletes throughout the world.

But what does myostatin inhibition actually do? As we saw earlier, its main effect is that it reduces muscle loss during inactivity and can also help build muscle. However, scientists also believe that myostatin inhibition may prevent muscle loss from diseases such as muscular dystrophy. In addition, over 20% of cancer deaths in human patients are caused by cachexia, a symptom of cancer that causes the loss of muscle and fat despite adequate nutrition. Some studies in mouse models suggest that myostatin inhibition may prevent cancer-related muscle loss in both lung and skin cancer (melanoma). This suggests that myostatin inhibition should be researched further for the prevention of cachexia-related deaths in humans.

However, myostatin inhibition does not come without side effects. Firstly, increased muscle growth will lead to an increased risk of injury owing to stress on the muscle fibres. This may be especially true for individuals using myostatin inhibitors as workout supplements rather than as a medical treatment for muscular dystrophy or other disorders. Other possible side effects include an increased chance of tendon rupture, heart failure due to inflamed cardiac muscle, and rhabdomyolysis (a breakdown of muscle fibres that often leads to kidney failure). It is clear that myostatin inhibition can play a critical role in doping. The plethora of targets for suppressing myostatin signalling, along with its various benefits, makes myostatin inhibition very attractive to potential dopers, despite its side effects. Indeed, a survey conducted by Dr Robert Goldman claims that over 50% of athletes would take a drug that guaranteed them unlimited, undetectable sporting victories for five years, even if it were followed by instant death. Thus, myostatin could be a very attractive target for doping in the future.


The Key to Eternal Youth? The Technique that Rewound the Age of Skin Cells by 30 Years

Kai Philipose

Cracking the code of why we age and how to reverse it has long been the Holy Grail for scientists and, in recent years, for billionaires seeking to literally buy more time to spend their ever-increasing mountains of wealth. Luckily for them, as well as for us, a team of researchers from the Babraham Institute in Cambridge seems to have brought us one step closer to this goal.

What is 'ageing'? Ageing can be defined as the gradual decline in organismal fitness that occurs as a consequence of the molecular 'hallmarks' of ageing: telomere attrition, genomic instability, epigenetic and transcriptional alterations, and an accumulation of misfolded proteins. Many of the symptoms we associate with ageing are a direct consequence of these molecular hallmarks. For example, loose, wrinkly skin is caused by the dysfunction of skin fibroblasts in the dermis. With age, they produce less collagen, elastin, and growth-promoting factors, which create the foundation of a healthy, plump epidermis (the visible layer of the skin).

So how might we reverse ageing?

Perhaps the idea of turning back time on our cells seems preposterous. However, the resetting of the ageing clock is a frequent, deeply ingrained process that is crucial to the nature of life: it occurs with every fertilisation event. For humans, this involves the fusion of two cells, a sperm and an egg, each of whose chronological age is measured in decades, to give rise to a zygote which somehow has no trace of the parental cells' age. One might argue that the mechanism by which these germ cells fuse and then rejuvenate is specific to them and so cannot be replicated in other cells of the body; however, this was disproven by Dr John Gurdon's pioneering experiment in 1962.

Dr Gurdon showed that differentiated nuclei from tadpole intestinal or muscle cells could be transferred into enucleated Xenopus eggs and give rise to mature, fertile male and female frogs that showed no signs of premature ageing. Just like the fused nucleus of the sperm and egg during fertilisation, the somatic nucleus was reprogrammed and any hallmarks of ageing were reset. Therefore, in principle, there must be a mechanism which we could utilise to reverse the molecular attributes of ageing and phenotypically rejuvenate somatic cells.

Enter Yamanaka Factors

Discovered by Shinya Yamanaka in 2006, these four transcription factors (Oct3/4, Sox2, Klf4 and c-Myc) can turn differentiated adult cells into pluripotent stem cells in a process called induced pluripotent stem cell (iPSC) reprogramming. iPSC reprogramming has been shown to reverse many age-associated changes, including telomere attrition and oxidative stress. Unfortunately, however, it also results in the loss of the original cell identity, and so of cell function. To avoid this, researchers from the Babraham Institute decided to take another approach, in which the Yamanaka factors are only expressed for short periods of time. Using this strategy, called transient reprogramming, they achieved a 'robust and very substantial rejuvenation' of around 30 years in fibroblasts without loss of cell identity.

iPSC reprogramming consists of three phases: the initiation phase (IP), in which somatic gene expression is repressed; the maturation phase (MP), in which certain pluripotency genes become expressed; and the stabilisation phase (SP), in which the complete pluripotency program is activated. Previous methods of transient reprogramming had only reprogrammed within the IP, but the researchers were able to show that extending reprogramming into the MP may achieve greater rejuvenation.

In their method, the Babraham researchers took fibroblasts from three middle-aged donors (aged 38, 53, and 53) and reprogrammed them for different lengths of time (10, 13, 15 and 17 days), using a 'reprogramming cassette' that encoded Oct4, Sox2, Klf4 and c-Myc to ensure all four Yamanaka factors were expressed.

The efficacy of the reprogramming was determined by measuring changes in the different molecular hallmarks of ageing, through methods such as examining a variety of ageing 'clocks', as well as by looking at the restoration of function in individual cells. Ageing 'clocks' distinguish biological age from chronological age and are important for identifying genetic and environmental factors that influence the ageing process. In this experiment, a BiT age clock was used to investigate rejuvenation in the transcriptome, whilst an epigenetic clock was used to find evidence of epigenetic rejuvenation. The results pointed to a quantitative rejuvenation of around 30 years in both.
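To illustrate what an epigenetic 'clock' does, here is a deliberately toy sketch in Python. Published epigenetic clocks are, broadly, regression models that combine DNA methylation levels at many chosen CpG sites into an age estimate. The three sites, their weights and the intercept below are invented for illustration; they do not come from the Babraham study or from any real clock, and real clocks may also transform age non-linearly.

```python
# Toy epigenetic clock: a linear model over DNA methylation levels.
# HYPOTHETICAL: the site names, weights and intercept are invented for illustration only.

CLOCK_WEIGHTS = {"site_A": 40.0, "site_B": -30.0, "site_C": 25.0}
INTERCEPT = 30.0


def predicted_age(methylation: dict[str, float]) -> float:
    """Estimate biological age from methylation levels (0 = unmethylated, 1 = fully methylated)."""
    return INTERCEPT + sum(weight * methylation[site] for site, weight in CLOCK_WEIGHTS.items())


def rejuvenation(before: dict[str, float], after: dict[str, float]) -> float:
    """Years of predicted biological age removed between two measurements of the same cells."""
    return predicted_age(before) - predicted_age(after)


if __name__ == "__main__":
    before = {"site_A": 0.8, "site_B": 0.3, "site_C": 0.6}
    after = {"site_A": 0.5, "site_B": 0.4, "site_C": 0.5}
    print(f"before: {predicted_age(before):.1f} years")
    print(f"after:  {predicted_age(after):.1f} years")
    print(f"rejuvenation: {rejuvenation(before, after):.1f} years")
```

Comparing the predicted age of the same cells before and after treatment is, in outline, how a figure such as 'around 30 years' of rejuvenation is expressed; real clocks use many more sites with weights fitted to large reference datasets.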

The function of these reprogrammed cells was also considered, as they should both look and behave like young cells. For example, production of collagen is a major function of fibroblasts, so the expression of all collagen genes was examined before and after reprogramming. The researchers found that collagen I and IV (proteins downregulated with age) were restored to youthful levels after transient reprogramming. Fibroblasts are also involved in wound healing, moving into areas that need repairing (we heal more slowly with age because ageing fibroblasts migrate more slowly). Transient reprogramming improved the median migration speed, although some cells' speeds were unaffected. Overall, the results showed that maturation phase transient reprogramming (MPTR) rejuvenates multiple molecular hallmarks of ageing, including the transcriptome, epigenome, functional protein expression, and cell migration speed. Intriguingly, it was also found that 13 days of reprogramming was the 'sweet spot', and longer time periods diminished the extent of transcriptional and epigenetic rejuvenation.

The mechanism behind their successful transient reprogramming is still not fully understood, and there remain a great many challenges when it comes to therapeutic applications. Only around 25% of the cells were successfully reprogrammed, and other hallmarks of ageing, such as telomere attrition, were unaffected. Therefore, it is perhaps too early to say that we have found the silver bullet to end ageing, but we may have taken one small step closer to finding the mythical Fountain of Youth.


The Fountain of Youth by Lucas Cranach

Clash of the Titans: Spinosaurus vs Tyrannosaurus

Aiken Lau

A one-minute fight in Jurassic Park III immortalised the clash between Spinosaurus aegyptiacus and Tyrannosaurus rex. It is one of the few moments in popular culture in which the King of the Dinosaurs was promptly dethroned. However, this fight, in which the Spinosaurus snaps the T. rex's neck with its forelimbs, is nowhere near the truth. With that aside, let's dive into the facts behind this Cretaceous (NOT JURASSIC) clash.

Our first contender, the Spinosaurus, lived around 90 million years ago and was a semi-aquatic predator. Its most impressive prey included the eight-metre sawfish Onchopristis. Spinosaurus could grow up to 17 to 18 metres long and exceed 7 tons in weight; it is the largest carnivorous dinosaur ever discovered. The giant sail on its back was most likely used for intimidating rivals and for thermoregulation. It also had long, crocodilian jaws with conical teeth. Small pores at the end of the snout would have been used for sensing vibrations in the water, a great tool for seizing fish. The viciously large claws on its muscular forelimbs were also adept at killing fish and land animals alike. Despite the movie dinosaur having a conical tail, the Spinosaurus was recently discovered to have had a broad, paddle-shaped tail packed with muscle, similar to a crocodile's; this served both as a strong weapon to sweep potential targets off their feet and as an aid for aquatic locomotion (perfect for its home on the marshy coasts of Cretaceous Egypt).

This habitat was one of the most dangerous places to live in Earth's history. The Spinosaurus coexisted with another large carnivore, Carcharodontosaurus (which was also bigger than the T. rex). It seldom fought Carcharodontosaurus, as the latter mainly tackled larger prey (like other dinosaurs) on land, but this does not mean they never clashed. They would encounter each other regularly in the dry season, as the Spinosaurus' aquatic hunting grounds dried up in the heat, forcing it to hunt land animals. This suggests that the Spinosaurus, despite being specialised to eat fish, was more than capable of holding its ground against large dinosaurs. These confrontations often turned in the Spinosaurus' favour thanks to its large sail, which intimidated opponents and could win a skirmish without a single blow.

The Tyrannosaurus rex could not have been more different. This prehistoric tyrant lived in the Late Cretaceous period, 67 to 65 million years ago, preferring the comfort of dry land. This 12-metre North American juggernaut was shorter than the Spinosaurus, but was the heaviest of all known carnivorous dinosaurs at around ten tons. A robustly built predator, the T. rex had a broad head and neck, and serrated teeth as long as bananas.

Its jaws packed a huge bite force of up to 6.5 tons, the strongest bite estimated for any land animal and enough to crush a car. This enabled it to use 'puncture-pull feeding', in which the predator bit deep into its prey, puncturing its insides. Strong neck muscles would then pull back the head and rip out a huge mouthful of flesh, bone, and organs to inflict critical damage, killing the unfortunate victim through shock and blood loss. This unique method of feeding was exclusive to the Tyrannosaurus and its family, as it requires teeth rigidly attached to the jaw (otherwise the teeth would be unceremoniously pulled out when the animal attempted it) and robust neck muscles to pull back the huge head while its teeth were embedded deep in the flesh. It developed this way of feeding to pierce the armour of large herbivores, like its equally iconic contemporaries Triceratops and Ankylosaurus. Notably, recent research has suggested that this predator hunted in family groups: males, females and juveniles alike. For them, hunting was a family excursion!

So what would happen if these two prehistoric predators met?

Short answer: they wouldn't even fight.

In the first place, they would never have come into contact, as they lived on different continents more than 20 million years apart. Even if they had met, there would have been nothing to fight over, as they occupied different ecological niches and hunted different prey. At most, they would have displayed threats to each other (the Spinosaurus would probably have won this contest with its gargantuan size and intimidating sail) instead of going into a fully fledged fight, which could have crippled, if not outright killed, both creatures. Remember, these creatures aren't movie monsters; they are animals that aim to conserve energy. As such, aimless fighting would certainly not be beneficial for either party.

If coaxed to fight, the outcome would have depended heavily on the setting. On dry land, whilst the Spinosaurus had more experience in dealing with large carnivores like Carcharodontosaurus, it would find it harder to launch a surprise attack owing to its vast size and sail. The T. rex would most likely reign victorious in this situation, taking advantage of its powerful jaws evolved to grip and tear large prey, while the Spinosaurus' jaws and claws were more suited to fish. However, in any remotely aquatic habitat, it would be a completely different story. The Spinosaurus was the undisputed king in the water, easily propelling itself through it and detecting its rival's location with the pores on its snout.

The T. rex would certainly be outmanoeuvred by its semi-aquatic rival. Funnily enough, the T. rex would have been preoccupied with trying not to drown, rather than focusing on the fight.

To conclude, between these two reptilian behemoths there is no clear winner. Each had its own strengths and ecological niche, making the outcome of a potential fight heavily dependent on the location. However, it should be noted that research into these apex predators is still ongoing, and new discoveries may dramatically shift our understanding of either dinosaur. Who knows? Perhaps newfound knowledge will shed light on the conflict between these two prehistoric beasts.


Mendel's Discoveries in the Understanding of Complex Diseases

Yuxi Liu

With roughly 80% of all rare diseases having a genetic cause, some 400 million people worldwide suffer from around 7,000 distinct Mendelian disorders. However, the genetic basis of over half of these remains elusive. Complex disease phenotypes are only partially represented by our genomic sequences; under the influence of external factors, such disorders arise not only from heredity, but also from environment, mutation, lifestyle, and evolution. It is the pathological confluence of Darwin and Mendel.

Mendelian patterns of inheritance can be used to study how single-gene disorders are inherited across a family pedigree. One such example is Huntington's disease (HD): individuals with a single mutant copy of the autosomal dominant huntingtin gene (HTT) are likely to develop HD in their late 30s, resulting in cognitive decline and involuntary movements caused by a 'triplet repeat' mutation. Extra repetitions of the codon 'CAG' are added to the polynucleotide strand, and the exact number of repeats determines the gene's penetrance; in the most severe cases, the repeat count exceeds 41 codons. Roughly 50% of an affected parent's offspring will develop HD, because each child has an equal chance of inheriting either the dominant mutant allele or the normal one, as described by Mendel's law of segregation.
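Both ideas in this paragraph, the 50% inheritance risk and the counting of CAG repeats, can be made concrete with a short Python sketch. The genotype labels ('H' for the dominant mutant allele, 'h' for the normal one) and the function names are assumptions for illustration; the repeat counter simply finds the longest uninterrupted run of CAG codons and is not a clinical genotyping method.

```python
from fractions import Fraction
from itertools import product


def offspring_risk(parent1: str, parent2: str, disease_allele: str = "H") -> Fraction:
    """Probability that a child inherits at least one copy of a dominant disease allele.

    Each parent is written as two alleles (e.g. "Hh"). By Mendel's law of segregation a
    parent passes on either allele with equal chance, so every cell of the Punnett
    square is equally likely.
    """
    children = [a + b for a, b in product(parent1, parent2)]
    affected = sum(disease_allele in child for child in children)
    return Fraction(affected, len(children))


def longest_cag_run(sequence: str) -> int:
    """Length (in codons) of the longest uninterrupted run of CAG repeats in a DNA sequence."""
    best = 0
    for frame in range(3):  # the repeat may start in any of the three reading frames
        run = 0
        for i in range(frame, len(sequence) - 2, 3):
            if sequence[i:i + 3] == "CAG":
                run += 1
                best = max(best, run)
            else:
                run = 0  # any other codon interrupts the run
    return best


if __name__ == "__main__":
    print(offspring_risk("Hh", "hh"))  # 1/2
    print(longest_cag_run("TT" + "CAG" * 45 + "CCG"))  # 45
```

For an affected parent (Hh) and an unaffected parent (hh), the Punnett square gives two affected combinations out of four, i.e. a 1 in 2 risk for each child.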

Although HD may be purely due to gene mutations, many disorders can be alleviated or exacerbated by lifestyle changes and environmental alterations. In the condition commonly known as 'Asian flush', the accumulation of acetaldehyde causes dilation of the facial blood vessels, resulting in redness; it is present in approximately 40% of Asians. Studies uncovered a mutation within the ADH1C gene, which codes for the gamma subunit of alcohol dehydrogenase (ADH), an enzyme which catalyses the rate-determining step of ethanol metabolism. Caucasians, however, are less likely to inherit this deficiency, owing to genomic differences shaped by environmental factors. In the 18th century, in most European countries, drinks containing 2 to 3% alcohol were the main source of fluid intake because of the lack of sanitary water. Over time, genetic adaptations have thus led to greater ADH production in these populations.

Nevertheless, discoveries regarding the genetic nature of illnesses offer minimal information about the genes themselves; phenotypic variability in disease severity occurs even amongst monozygotic twins with an identical primary mutant gene As shown by Gaucher Disease (GD), its clinical classification can be categorized into type I

13

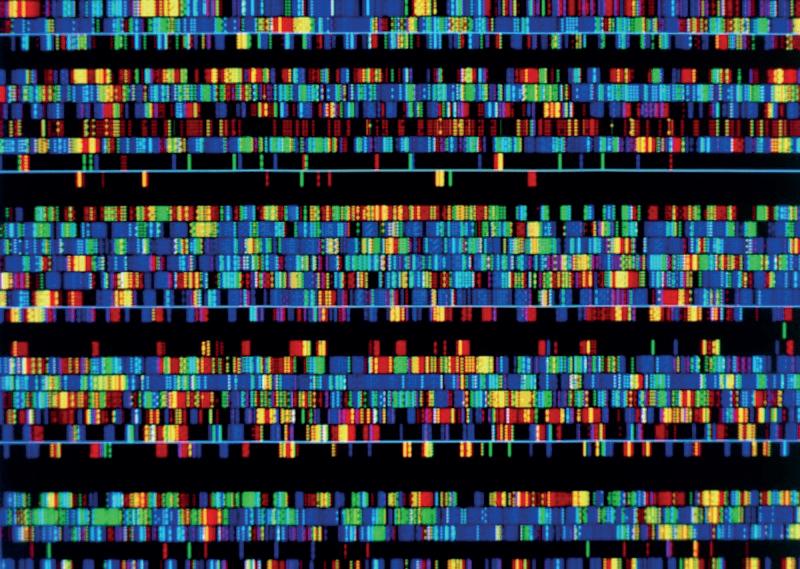

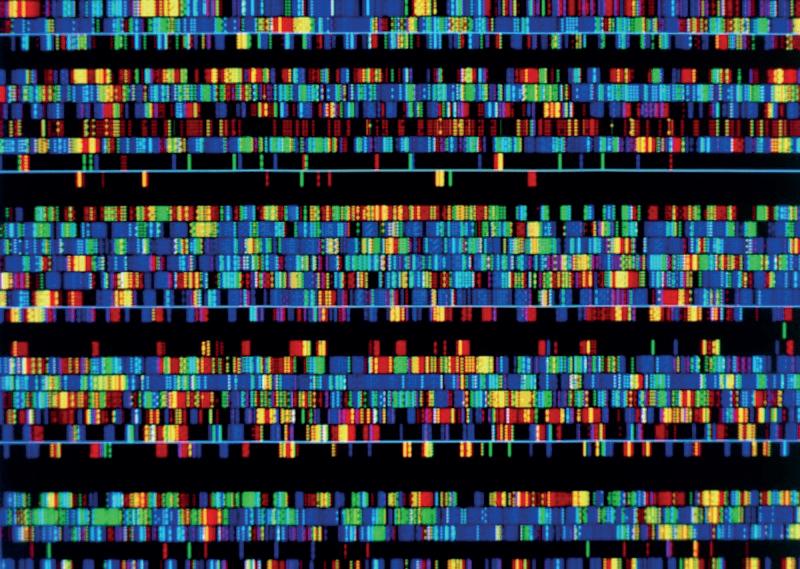

(non-neuronopathic), type II (acute), and type III (sub acute); the first of which, was found to be predisposed to Parkinson?s Disease (PD), whereas types II and III were characterized by neurological impairment. Although the clear cause of PD is still unknown,it?s certain that the mutant GBA1 gene in GD isa prevalent genetic risk factor in the exemplification of PD Detailed investigation of interactions between genes would certainly provide aspiration towards the complete understanding of complex polygenic diseases. Although projects such as the Encyclopedia of DNA Elements (ENCODE) and several genome wide association studies (GWAS) have mapped out the entire human genome in an orderly fashion, the functions of non-coding introns withinthedoublehelixremainamystery.

To fully understand the variability of phenotypic expression in Mendelian disorders, it is necessary to analyse the entire genomic information of affected individuals. The completion of the Human Genome Project in 2003 revolutionised scientists' approach to complex diseases by providing new ways of locating disease-causing mutations. One example is single nucleotide polymorphisms (SNPs): single base-pair variations used as genetic markers for assessing disease risk and finding associated mutations. When an SNP lies in a functional region of a protein-coding gene, or occurs at a higher frequency in affected than in unaffected individuals, this may indicate that it is physically close to the disease-causing mutation. SNPs may be seen as genetic signposts, or an internal GPS system for the genome.
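The idea that an SNP found more often in affected than unaffected people may point towards a nearby disease-causing mutation can be made concrete with a small case-control comparison. The Python sketch below is illustrative only: the allele counts are invented, and `allele_odds_ratio` and `allele_frequency` are hypothetical helper names, not part of any genetics library.

```python
def allele_frequency(risk: int, other: int) -> float:
    """Fraction of sampled alleles that are the (hypothetical) risk allele."""
    return risk / (risk + other)


def allele_odds_ratio(case_risk: int, case_other: int,
                      control_risk: int, control_other: int) -> float:
    """Odds of carrying the risk allele in cases divided by the odds in controls.

    Each argument is an allele count (two alleles per person at one SNP).
    An odds ratio above 1 means the allele is more common among affected people.
    """
    case_odds = case_risk / case_other
    control_odds = control_risk / control_other
    return case_odds / control_odds


# Hypothetical counts: 500 cases and 500 controls, i.e. 1,000 alleles per group.
cases = (300, 700)
controls = (200, 800)

print(f"risk-allele frequency in cases:    {allele_frequency(*cases):.2f}")
print(f"risk-allele frequency in controls: {allele_frequency(*controls):.2f}")
print(f"odds ratio: {allele_odds_ratio(*cases, *controls):.2f}")
```

An odds ratio above 1 marks the SNP as a candidate signpost; real association studies also test whether the difference is statistically significant, which this sketch leaves out.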

Out of the several million biological molecules present in the human body, only 0.025% can be altered by our modern pharmacopoeia. The availability and feasibility of gene-sequencing technology has opened a long-running war of personalised medicine against complex diseases. The recent success of clinical trials of chimeric antigen receptor T-cell therapy (CAR-T) in the treatment of acute lymphoblastic leukaemia should act as a stepping stone towards our ultimate victory in this battle. Regardless of the rarity of such diseases, we must increase funding and further our research into the mechanisms behind gene expression if we are to stand a chance of curing life-threatening illnesses such as cancer.

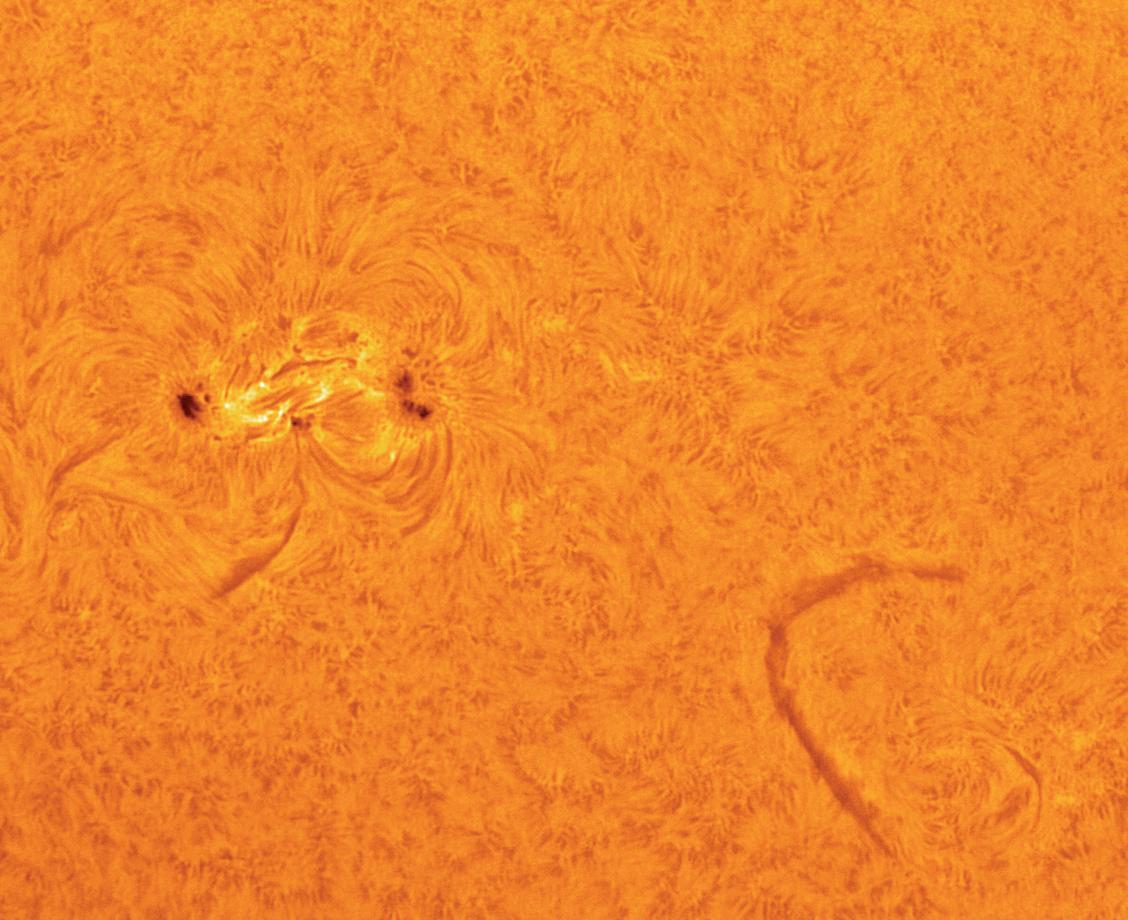

Biotechnology

Mycoremediation: How Fungi Can be Used to Clean the World

Ynon Weiss

As the world continues to industrialise and develop, environmental and health concerns over chemical waste are escalating. Traditional physicochemical methods for waste treatment are often effective, but typically onerous to scale up, unsustainable, and frankly expensive.

Bioremediation is the process by which organisms are used to break down environmental pollutants, often involving the use of bacteria, microalgae and plants. Fungi, however, provide an interesting route for the detoxification of wastewater and soil, removing heavy metals, organic pollutants, pesticides, and many other toxic chemicals.

Several species of fungi, known as white rot fungi, can decay wood because they secrete extracellular enzymes that break down lignin, known as ligninolytic enzymes. Wood itself is, of course, not the pollutant that needs to be removed, but lignin, a class of organic polymers, has a structure similar to that of many organic pollutants. Laccases, a type of ligninolytic enzyme, are therefore also able to oxidise and break down a variety of other aromatic compounds in waste, such as azo dyes, bisphenol A, and several pharmaceuticals.

The low specificity of many catabolic fungal enzymes is an attractive feature of mycoremediation, allowing many ligninolytic enzymes to act on other substrates such as polycyclic aromatic hydrocarbons (PAHs), a byproduct of fossil fuel combustion, waste incineration, and steel production. PAHs are known carcinogens and have also been linked to cardiovascular disease. Whilst bacterial degradation of PAHs is also possible, fungal enzymes are extracellular, so they can diffuse through soil towards the pollutant molecules and begin the initial attack on a PAH molecule outside the cell, an advantage not seen in bacteria. Additionally, bacterial enzymatic activity is usually limited to low-molecular-weight PAHs, with higher-molecular-weight molecules being recalcitrant (resistant to decomposition); yet studies have shown that fungal enzymes are capable of overcoming this.

Unlike fungi, bacteria often use the pollutants for their own growth. This means that the efficiency of their degradation depends on the benefit the bacteria reap from the products: at low pollutant concentrations, or if the molecules have a low energy content, bacterial breakdown is less efficient, a problem that mycoremediation can solve. Degradation pathways in bacteria are also generally much more specific than fungal pathways, which makes it difficult for bacteria to decompose large, complex molecules with many functional groups.


So, should all bacterial cleaning methods be replaced with mycoremediation?

Not exactly. In fact, studies have shown that fungal mycelium allows bacteria to move more freely through soil containing physical barriers such as air-filled pores. Fungi could therefore be used not only to degrade pollutants themselves, but also to stimulate bacterial breakdown.

Organic pollutants are merely one type of chemical waste, with metals, particularly heavy metals, posing a significant threat to the environment. Metals such as cadmium, lead, nickel, and mercury are often released through the mining of metal ores and through electroplating, and are again associated with organ damage and carcinogenic effects.

Although complex organic compounds can be broken down into smaller molecules, metals, being elements, cannot be degraded further. Instead, they must either be transformed into less harmful species or separated from any susceptible part of the organism, for example by intracellular immobilisation. Some members of the Aspergillus genus, for instance, are able to reduce Cr6+ to Cr3+, a much less toxic form. Fungi are able to take up heavy metals and remove them from the ground by biosorption to their cell surfaces, after which the metals enter the mycelium passively. Fungi also have applications beyond environmental clean-up: a study from the VTT Technical Research Centre of Finland reported an 80% recovery of gold from electronic waste using mycoremediation methods.

Mycorrhiza is a mutually beneficial symbiotic relationship between fungi and plants, in particular between fungi and plant roots. This often enhances the decontamination activities of the plants, aiding the uptake of both nutrients and heavy metals. Mycorrhizal fungi reduce the harmful effects of pollutant molecules on plants, allowing them to continue to grow, while also providing them with water and minerals. In return, the plants supply the fungi with sugars produced by photosynthesis.

Mycoremediation seems to be a sustainable and cheap method to clean up waste, with the fungi not only directly decontaminating the environment, but also increasing the capacity of both bacteria and plants to do the same, resulting in a healthier ecosystem.

Lipid Nanoparticles: A New Era of Vaccines

Thomas Hallé

The Covid 19 pandemic boosted interest in the biotechnology behind the various strategies used by scientists to halt the spread of the virus One key reason we were able to return to our daily lives was the nationwide vaccination programme, and whilst there were a variety of different vaccine platforms used by pharmaceutical companies, Pfizer and Moderna were the first companies to bring a vaccine using a

nucleic acid platform to market These rapid responses represent key progress in our ability to swiftly design and manufacture viral vaccines Although this technology has been available for over four decades, in the past it was received with scepticism by scientists who were unsure of its ability to manifest into a viable vaccine The reasons for this doube were twofold: mRNA has an incredibly delicate structure,and itsstrands

16

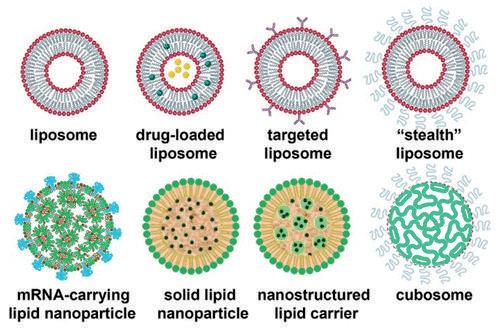

possess a negative charge, making it difficult for them to pass through the largely hydrophobic phospholipid membranes that surround cells.Scientists,however,wereable to turn to a delivery technology,with origins older than theideaof mRNA therapy itself,in order to revolutionise the concept into a working vaccine delivery mechanism ? that technology waslipidnanoparticles

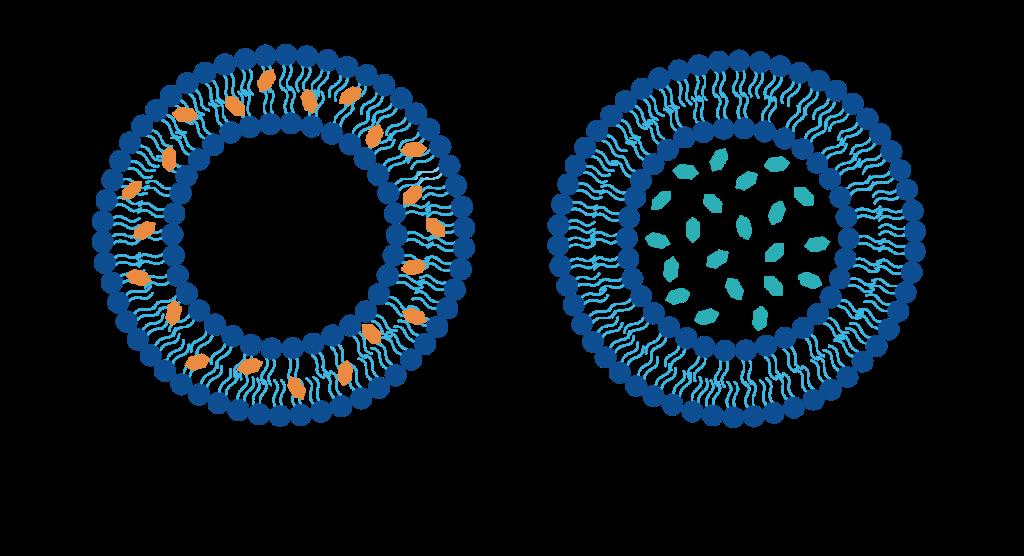

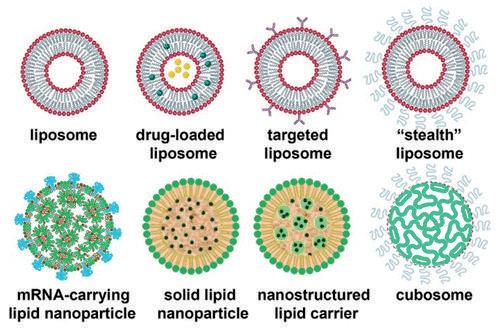

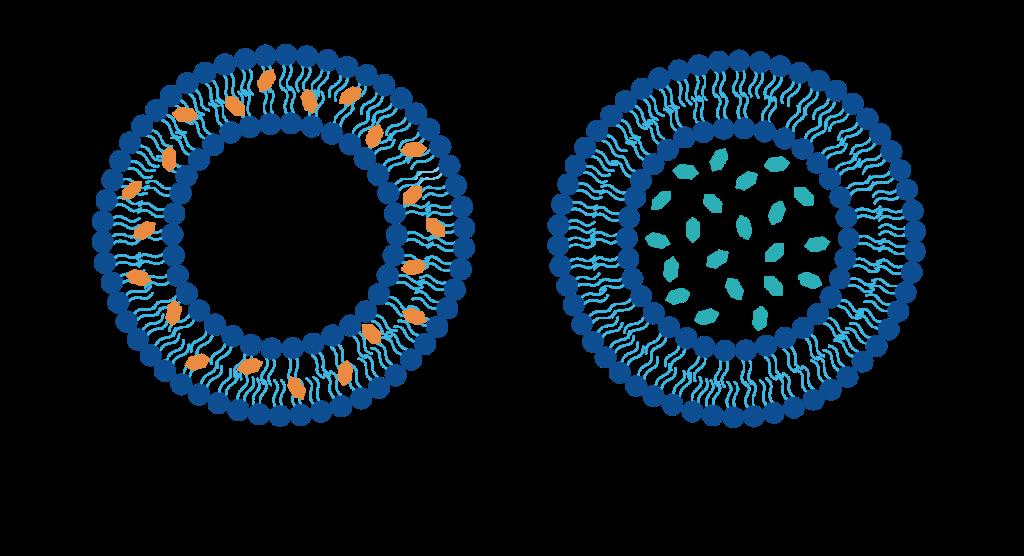

The lipid nanoparticles currently used as vehicles for delivering mRNA and other therapeutic agents have undergone years of development. They have been engineered to have more complex internal architectures and greater physical stability, both of which ultimately increase specificity. The earliest generation of lipid nanoparticles, liposomes, was discovered when scientists observed closed lipid bilayer vesicles forming spontaneously in water. These liposomes consist of one or several bilayers and range in size from 20 nm to 100 nm, with smaller liposomes being preferable because they are less likely to be removed by phagocytosis. Their structural properties meant they were immediately highlighted as potential drug delivery vehicles, because their hydrophilic aqueous interior and the hydrophobic hydrocarbon chain region of the bilayer can carry hydrophilic and hydrophobic drugs respectively. On a simple level, to deliver the drug molecules to a site of action, the liposome is taken up by the target cell, either by fusing with its cell surface membrane or by endocytosis.

Liposomes have been engineered to avoid recognition (which prevents an immune response and so premature destruction) and to have increased specificity. Targeted liposomes are designed with surface-attached ligands that recognise, and consequently bind to, specific receptors on the surface of cells. Because the liposome's membrane is very fluid, the targeting ligands can move and rearrange within it, allowing optimal binding to cell surface receptors. These targeted liposomes are generally prepared by conjugating small-molecule ligands, peptides, or monoclonal antibodies to their surface. Furthermore, liposomes have also been heavily engineered to prevent their removal from the bloodstream by phagocytes. To avoid detection, liposomes have been coated with biocompatible, inert polymers, typically polyethylene glycol, which attaches to the heads of lipids in the bilayer. This has given rise to a class known as "stealth liposomes", as they are almost "invisible" to cells of the immune system.

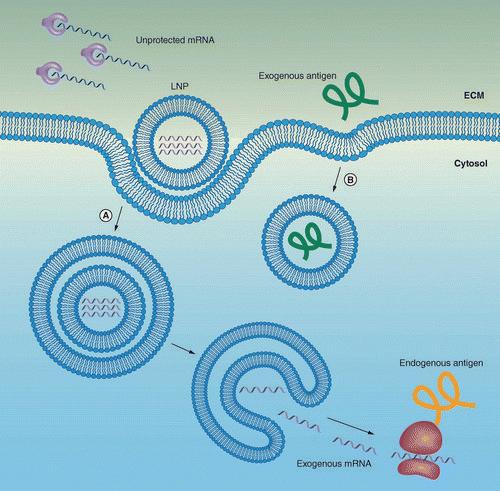

The latest successful use of lipid nanoparticles is in delivering mRNA in Covid vaccines. These vaccines deliver mRNA encoding the SARS-CoV-2 spike protein into the cytoplasm of host cells, where ribosomes translate it into the spike protein, which then acts as an antigen to stimulate an immune response to the virus. Each nanoparticle used in the vaccines is approximately 80 nm to 100 nm in diameter and contains approximately one hundred mRNA molecules.

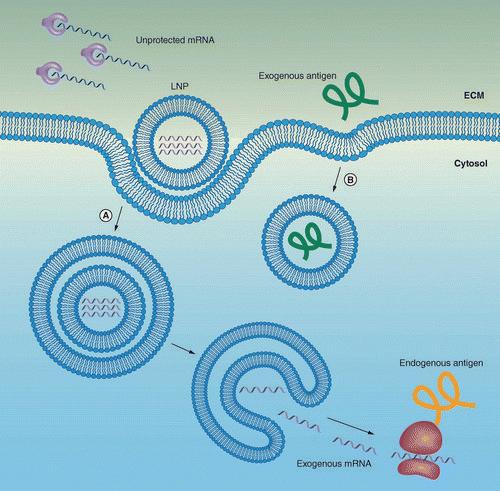

The lipid nanoparticles (LNPs) used in both the Pfizer and the Moderna vaccines contain an ionisable lipid. This lipid is positively charged at low pH, allowing it to complex with the negatively charged RNA, and neutral at physiological pH, which reduces potential toxic effects and facilitates payload release. The LNPs also contain a PEGylated lipid to reduce opsonisation by serum proteins and clearance by phagocytes (as mentioned before, this prevents the delivery vehicle from being destroyed by phagocytes before it reaches the target cell). The phospholipid distearoylphosphatidylcholine (DSPC) and cholesterol help to pack the mRNA into the lipid nanoparticles. Furthermore, the hydrocarbon chains of the LNP's lipids are connected through biodegradable ester groups, allowing them to be cleared safely after the mRNA reaches the cytoplasm of the target cell.

Once the lipid nanoparticles reach the target cells, they can be internalised by macropinocytosis or receptor-mediated endocytosis. Following internalisation, LNPs are trapped in endosomes (essentially membrane-bound bubbles), and the endosome's acidic interior protonates the heads of the ionisable lipids, making them positively charged. This positive charge triggers a structural change in the LNP, which helps it break free from the endosome and ultimately release the mRNA into the cell cytoplasm.
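The pH switch described above follows from the Henderson-Hasselbalch relationship for an ionisable amine. The Python sketch below estimates what fraction of the ionisable lipid carries a positive charge at different pH values. The apparent pKa of 6.5 is an assumed illustrative value (ionisable lipids in approved LNPs are commonly reported to have apparent pKa values of roughly 6 to 7), and the endosomal pH values are approximate.

```python
def fraction_protonated(ph: float, pka: float) -> float:
    """Fraction of an ionisable amine lipid carrying a positive charge.

    Henderson-Hasselbalch for a base: [BH+] / ([BH+] + [B]) = 1 / (1 + 10**(pH - pKa)).
    """
    return 1.0 / (1.0 + 10 ** (ph - pka))


# Assumed, illustrative apparent pKa of the ionisable lipid.
PKA = 6.5

# Approximate pH values for each environment (illustrative).
for label, ph in [("blood / physiological", 7.4),
                  ("early endosome", 6.0),
                  ("late endosome", 5.0)]:
    print(f"{label:>22}: pH {ph:.1f} -> {fraction_protonated(ph, PKA):.0%} charged")
```

With these assumptions, only about a tenth of the lipid is charged in the bloodstream, while nearly all of it is charged in a late endosome: the contrast that lets the particle stay close to neutral in circulation but become cationic once internalised.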

In essence, the LNPs used in the Covid-19 vaccines contain just four ingredients: ionisable lipids, whose positive charges bind to the negatively charged backbone of mRNA; PEGylated lipids, which help stabilise the particle; and phospholipids and cholesterol, which contribute to the particle's structure. Thousands of molecules of these four components house the mRNA, shielding it from enzymes and cells of the immune system and carrying it into the cytoplasm of target cells, where it is finally unloaded and translated into the spike protein. Lipid nanoparticle technology has allowed mRNA vaccines, prized for their rapid development and manufacture, to reach the market and help contain Covid-19. This technology holds great promise for future responses to viral pandemics.


Book Reviews

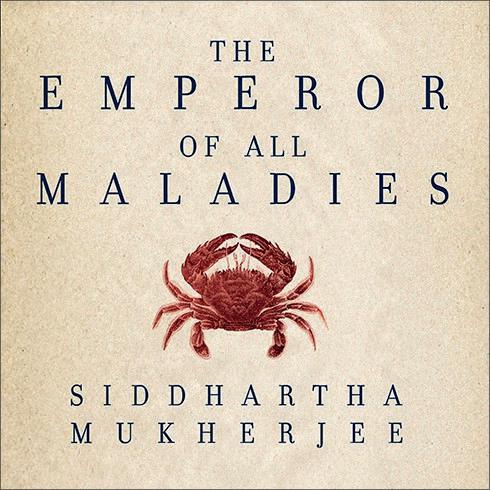

The Emperor of All Maladies By Siddhartha Mukherjee

Yuxi Liu

From injecting patients with folic acid to modern immunotherapy, Mukherjee describes in depth the medical journey of humanity's 4,000-year war against cancer. In particular, he pays the utmost respect to generations of doctors and researchers who have dedicated their entire lives to fighting this war.

The journey begins with the story of the pathologist Sidney Farber and his perseverance in searching for a cure for acute lymphoblastic leukaemia. Working at a children's hospital, he witnessed the unrelenting pain and endless suffering of affected children, and the hopelessness of doctors (at the time there were no treatment options). Driven to end their agony, he became a public advocate for cancer research and, together with the activist Mary Lasker, pioneered the first nationwide cancer fundraising campaign. Unlike the surgeons of the time, who relied on radical surgery such as the radical mastectomy to "slice" out tumours, Farber turned to chemicals and discovered a novel cancer treatment, now known as chemotherapy.

Mukherjee also highlights the vital role of statistical data analysis in medical research. An early example is the surgeon Percivall Pott, who in the 1770s noticed an unusual number of cases of scrotal cancer among young chimney sweeps, which he attributed to soot lodging in the skin of the scrotum. This led to the term "carcinogen" appearing in our dictionaries for the first time. Later, the British researchers Richard Doll and Austin Bradford Hill were among the first to demonstrate the strong link between smoking and lung cancer, a landmark in epidemiology (the study of populations to learn about disease).

In addition to chemical carcinogens, many viruses were also linked to cancer. The most notable of these is human papillomavirus (HPV), the culprit in about 5% of all cancers diagnosed worldwide and in virtually all cases of cervical cancer. "Prevention is the best cure", as Mukherjee states.

So what treatments are available? Unfortunately, as Mukherjee mentions in "The Gene": out of the several million biological molecules present in the human body, only 0.025% can be altered by our modern pharmacopoeia. We are only beginning to comprehend the entirety of human biology, and as such our treatments are limited. The great challenge of cancer treatment has always been finding a suitable target site, one that suppresses the growth of cancer cells alone. Nevertheless, this also means that if a specific gene mutation can be identified and linked to a type of cancer, targeted treatments can be developed.

The availability and feasibility of gene-sequencing technology may prove to be the turning point in this war. The success of the drug Herceptin in treating HER2-positive breast cancer patients should act as a stepping stone towards our ultimate victory. Regardless of prevalence, we must increase funding and further our research into the mechanisms behind tumour growth if we hope to stand a chance of curing such life-threatening illnesses.

A Brief History of Time By Stephen Hawking

Haruki Matsunaga

The book, published in 1988 by one of the most brilliant theoretical physicists in history, had within the first ten years of its release already become a monumental landmark for science. Ranging from profound discussions about the origins of the universe to detailed insights into elementary particles, Hawking shares, along with his offbeat humour, a brief history of time. In this review, I will go into further detail on the chapter I found most fascinating: chapter four, The Uncertainty Principle.

Hawking begins by describing the views of the French astronomer and polymath Pierre-Simon Laplace on the purely deterministic nature of the universe. In a book he wrote in 1819, Laplace asks the reader to imagine an all-knowing demon who knows everything about the universe at one instant: the exact positions and momenta of all its particles. Laplace proposed that this demon would be able to predict anything in the future from this complete knowledge. This was determinism in a nutshell: if we know the past, surely we can accurately predict the future.

Hawking then follows this by discussing another monumental scientific development: the formulation of the Rayleigh-Jeans law at the turn of the twentieth century. The law was intended to calculate the intensity of radiation emitted by black bodies at each frequency. The formula states:

$$B_\nu(T) = \frac{2\nu^2 k_B T}{c^2}$$

where $B_\nu$ is the spectral radiance, $\nu$ is the frequency, $k_B$ is the Boltzmann constant, $T$ is the absolute temperature in Kelvin, and $c$ is the speed of light.

Hawking then points out the absurd conclusion this classical picture leads to. He explains that, according to the laws believed at the time, "a hot body should radiate the same amount of energy in waves" of different frequencies; since there is no upper limit to frequency, the total energy radiated would be infinite, which we know is absurd.
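The divergence Hawking describes can be seen by adding up the classical radiance over all frequencies up to some cut-off $\nu_{\max}$ (a worked step added here, not taken from the book):

$$\int_0^{\nu_{\max}} \frac{2\nu^2 k_B T}{c^2}\,d\nu = \frac{2 k_B T\,\nu_{\max}^3}{3c^2} \;\longrightarrow\; \infty \quad \text{as } \nu_{\max} \to \infty$$

Doubling the cut-off multiplies the total by eight, so the sum never settles on a finite value; this is the problem known as the "ultraviolet catastrophe".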

The author then introduces Max Planck's invention of quantum theory through his solution to the black-body radiation problem. Planck proposed that electromagnetic radiation can only be emitted in discrete packets, or quanta, of energy rather than continuously, with each quantum carrying more energy the higher its frequency. This means that at sufficiently high frequencies, the energy required to emit a single quantum may be more than is available, so the rate at which a hot body loses energy would be finite rather than limitless.
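To see numerically why quantisation removes the infinity, the Python sketch below compares the Rayleigh-Jeans law with Planck's law (the formula that follows from Planck's proposal, which the book describes only in words) for a body at an illustrative 5000 K. It adds up each law over frequency up to a cut-off and compares Planck's total with its known closed form. The integration helper `total_radiance` is a hypothetical name and uses a simple trapezoid rule.

```python
import math

H = 6.62607015e-34    # Planck constant, J s
K_B = 1.380649e-23    # Boltzmann constant, J/K
C = 2.99792458e8      # speed of light, m/s


def rayleigh_jeans(nu: float, t: float) -> float:
    """Classical spectral radiance, B = 2 nu^2 k_B T / c^2."""
    return 2 * nu**2 * K_B * t / C**2


def planck(nu: float, t: float) -> float:
    """Planck's law: emission in quanta of energy h*nu suppresses high frequencies."""
    x = H * nu / (K_B * t)
    return (2 * H * nu**3 / C**2) / math.expm1(x)


def total_radiance(law, t: float, nu_max: float, steps: int = 20_000) -> float:
    """Trapezoid-rule integral of a spectral law from 0 to nu_max.

    Both laws tend to 0 as nu -> 0, so the nu = 0 endpoint adds nothing
    (it is skipped because Planck's formula is 0/0 there).
    """
    d = nu_max / steps
    interior = sum(law(i * d, t) for i in range(1, steps))
    return d * (interior + 0.5 * law(nu_max, t))


T = 5000.0                    # temperature of the hot body, K (illustrative)
nu_cut = 50 * K_B * T / H     # cut-off frequency far beyond Planck's peak

for factor in (1, 2, 4):
    rj = total_radiance(rayleigh_jeans, T, factor * nu_cut)
    pl = total_radiance(planck, T, factor * nu_cut)
    print(f"cut-off x{factor}: Rayleigh-Jeans {rj:.3e}, Planck {pl:.3e}")

# Planck's total has a closed form: 2 pi^4 k_B^4 T^4 / (15 c^2 h^3)
print(f"closed form: {2 * math.pi**4 * K_B**4 * T**4 / (15 * C**2 * H**3):.3e}")
```

Doubling the cut-off multiplies the Rayleigh-Jeans total by eight, without limit, whereas the Planck total stays put, because high-frequency quanta are almost never emitted.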

Hawking then discusses the most significant topic in the chapter: the uncertainty principle, formulated by Werner Heisenberg in 1927. Heisenberg argued that it is impossible to know both the exact position and the exact momentum of a particle. To predict a particle's future position and momentum, one must first measure their current values, and to do this one shines light, quite literally, onto the particle. To locate the particle as precisely as possible, the wavelength of the light must be as short as possible. However, by Planck's quantum theory, we cannot use an arbitrarily small amount of light: we must use at least one quantum, and the shorter the wavelength, the more energy that quantum carries. This single quantum disturbs the particle's momentum unpredictably, so our measurement of its velocity becomes unreliable. To disturb the velocity less, we would need light of a lower frequency (longer wavelength), but this would make the measurement of position less accurate. Therefore, we cannot accurately determine both the position and the momentum of an object at the same time. This famous dilemma is represented by the formula:

$$\Delta x \, \Delta p \geq \frac{\hbar}{2}$$

where $\Delta x$ is the uncertainty in the particle's position, $\Delta p$ is the uncertainty in its momentum, and $\hbar$ is the reduced Planck constant ($h/2\pi$).
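To get a feel for the size of the effect, the Python sketch below rearranges the inequality (with momentum p = mv) to give the smallest possible uncertainty in velocity for a given uncertainty in position. The two example objects are illustrative choices, not examples from the book.

```python
HBAR = 1.054571817e-34            # reduced Planck constant, J s
ELECTRON_MASS = 9.1093837015e-31  # kg


def min_velocity_uncertainty(mass_kg: float, delta_x_m: float) -> float:
    """Smallest velocity spread allowed by delta_x * delta_p >= hbar / 2, with p = m v."""
    return HBAR / (2 * mass_kg * delta_x_m)


# An electron pinned down to about the size of an atom (1e-10 m)
print(f"electron: {min_velocity_uncertainty(ELECTRON_MASS, 1e-10):.2e} m/s")

# A 1 g ball located to within a micrometre
print(f"1 g ball: {min_velocity_uncertainty(1e-3, 1e-6):.2e} m/s")
```

The electron's velocity is uncertain by hundreds of kilometres per second, while the ball's is uncertain by only about 5 × 10^-26 m/s, which is why the principle goes unnoticed in everyday life.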

Quantum mechanics is then introduced. Hawking explains how Heisenberg, Erwin Schrödinger, and Paul Dirac reformulated quantum theory into what we now know as quantum mechanics. In this theory, the position and momentum of a particle are combined into a single "quantum state". Quantum mechanics does not predict a single definite result for an observation, but rather the likelihood of a number of different possible results.

Stephen Hawking then concludes the chapter by explaining how quantum mechanics may continue to greatly influence the scientific world and may even overturn some of the cornerstones of physics. Hawking even hints that quantum mechanics may bring about the "downfall" of Einstein's general theory of relativity.

Hawking's ability to explain frighteningly difficult topics in a simple manner is what stood out to me. I was also attracted to the fact that, in each analysis of a theorem or a principle, he always left some pieces of knowledge out. I enjoyed working out the answers to these questions, and I believe that in this book Hawking entices us to do so in a very skilful manner. Who would have thought that a man without the ability to talk could write such an eloquent book?


Chemistry

The Catalytic Condenser, a Chameleon Device: Appreciating the Value of Catalysts

Teddy Onslow

As the world moves away from fossil fuels in the modern pursuit of a green economy, we have become increasingly dependent on cleaner, renewable forms of energy, such as solar and wind power. Green ammonia can deliver clean power to more markets by capturing, storing, and shipping hydrogen for use in emission-free fuel cells and turbines. Having identified this great opportunity, chemical companies are developing a route to green ammonia in which hydrogen is produced by water electrolysis, a method that can be powered by renewable energy, rather than from fossil hydrocarbons. This would make ammonia production virtually carbon-dioxide-free. However, by some estimates, green ammonia costs between two and four times as much to produce as conventional ammonia, with the cost of the catalysts critical to its production accounting for a significant proportion of this.

Catalysts increase the rate of a reaction by providing an alternative reaction pathway with a lower activation energy, whilst themselves remaining chemically unchanged at the end of the reaction. Metals such as platinum, palladium, and rhodium are used as catalysts to manufacture important materials and chemicals in many industries. Rhodium and platinum cost more than $529,000/kg and $31,000/kg respectively, and their rarity drives up manufacturing costs.
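The link between a lower activation energy and a faster reaction is captured by the Arrhenius equation, k = A exp(-Ea/RT). The Python sketch below, assuming the pre-exponential factor A is unaffected by the catalyst, computes how much faster a reaction runs when its activation energy is lowered; the 10 kJ/mol reduction is a hypothetical value, not one from the article.

```python
import math

R = 8.314  # molar gas constant, J mol^-1 K^-1


def rate_enhancement(delta_ea_j_per_mol: float, temperature_k: float) -> float:
    """Factor by which k = A * exp(-Ea / (R T)) rises when Ea drops by delta_ea.

    Assumes the pre-exponential factor A is the same with and without the catalyst.
    """
    return math.exp(delta_ea_j_per_mol / (R * temperature_k))


# Hypothetical: a catalyst that lowers Ea by 10 kJ/mol
for t in (298.0, 373.0, 673.0):
    print(f"T = {t:.0f} K: rate x{rate_enhancement(10_000, t):.1f}")
```

At 298 K this reduction speeds the reaction up roughly 57-fold, and the same reduction matters less at higher temperatures.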

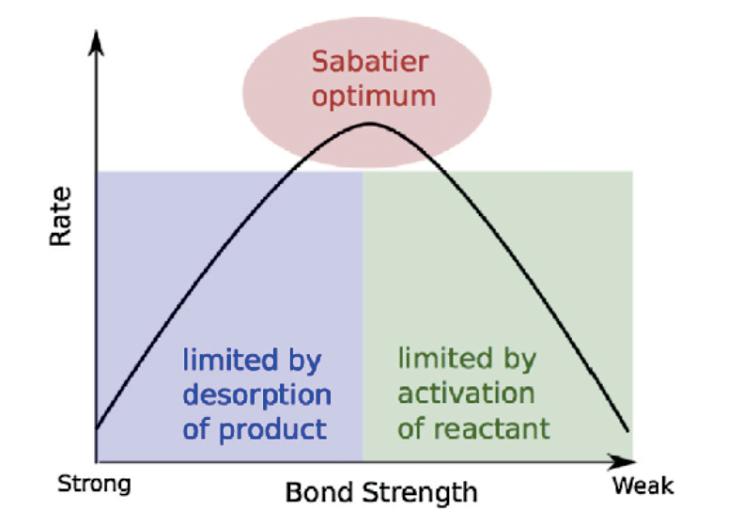

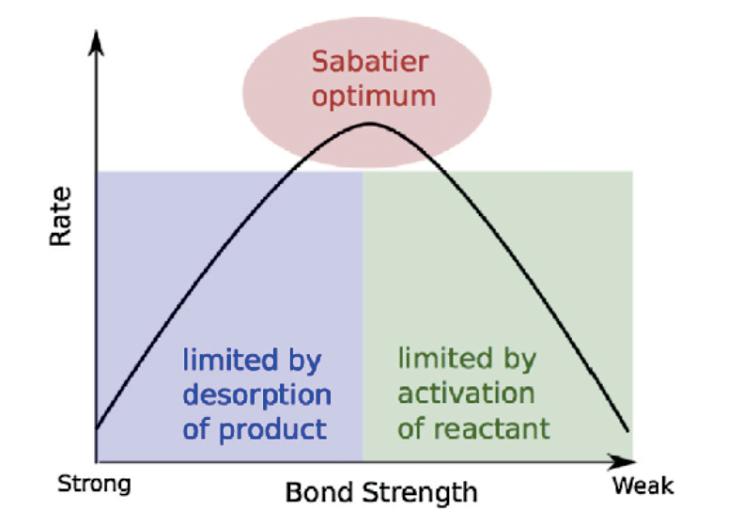

A team from the University of Minnesota has designed and built a new instrument, the catalytic condenser, which can fine-tune cheaper and more abundant metals to behave like these hugely expensive metal catalysts simply by varying an applied voltage. This breakthrough in the chemical treatment of ordinary metals has the power to revolutionise the future of sustainability. These changeable metals are often referred to as chameleon metals for their ability to transform their surface chemistry into highly selective structures for the best performance. The catalytic condenser uses ultra-thin film strips of alumina and graphene, which both cost less than $225/kg, so this device allows these materials to act as catalysts far above their pay grade. Reactions that use wind and solar power to convert water (together with nitrogen) into ammonia are Sabatier-limited. The Sabatier principle states that the interaction between the catalyst and the substrate should be "just right": neither too strong nor too weak. If the interaction is too weak, the molecule will fail to bind to the catalyst and no reaction will take place; if it is too strong, the product fails to dissociate. This means that when we plot the rate of reaction for different catalytic materials against the strength of this interaction, we see an optimum in performance. We already have materials that achieve this theoretical "speed limit" for chemical reactions; however, these reactions are still far too slow to be viable for the technologies required to address problems such as climate change.
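The "just right" optimum can be illustrated with a toy model in which the overall rate is set by the slower of two steps: binding and reacting the substrate (faster when binding is stronger) and releasing the product (slower when binding is stronger). The Python sketch below is a hypothetical, simplified illustration of the resulting "volcano" shape; the function `toy_sabatier_rate` and all parameter values are invented, not taken from the Minnesota work.

```python
import math

KT = 0.0257  # thermal energy at about 298 K, eV


def toy_sabatier_rate(binding_ev: float, optimum_ev: float = 0.5, slope: float = 0.3) -> float:
    """Hypothetical volcano: the slower of two steps limits the overall rate.

    - Binding/reacting the substrate speeds up as binding strengthens.
    - Releasing the product slows down as binding strengthens.
    Both are Arrhenius-like exponentials; all parameter values are illustrative.
    """
    binding_and_reaction = math.exp(slope * (binding_ev - optimum_ev) / KT)
    desorption = math.exp(-slope * (binding_ev - optimum_ev) / KT)
    return min(binding_and_reaction, desorption)


grid = [i / 20 for i in range(21)]            # binding strengths 0.00 to 1.00 eV
rates = [toy_sabatier_rate(b) for b in grid]
best = grid[rates.index(max(rates))]

for b, r in zip(grid, rates):
    print(f"binding {b:.2f} eV: relative rate {r:.2e}")
print("peak at", best, "eV")
```

Rates fall away exponentially on either side of the peak; that peak is the static speed limit which the catalytic condenser aims to exceed.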

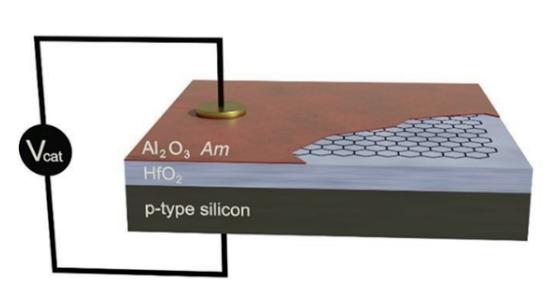

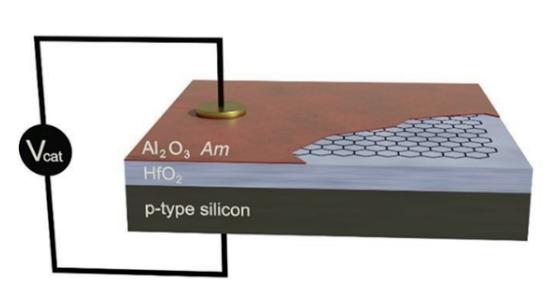

Thedeviceiscomposed of alayer of alumina (the solid acid site) on a layer of conductive graphene,which restson top of an insulating layer of hafnium oxide, supported by a conductive silicon layer. When applying a voltage, electrons or holes (the removal of electrons) are added to the catalytic layer and perturb the performance of the material This begs the question of how much charge should be added to the plate in order to reach optimal rate of reaction? When a voltage was applied to the device and the charge uptake measured, it was experimentally found that there isalmost an order of magnitude more charge uptake with alumina and graphene together, versus only graphene, allowing us to infer that charge can be imagined to seep into the alumina About 10% of an electron, on average, is emitted per active site on the alumina. A reaction involving the dehydration of

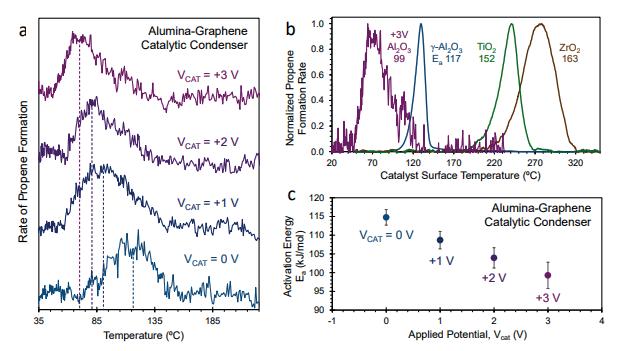

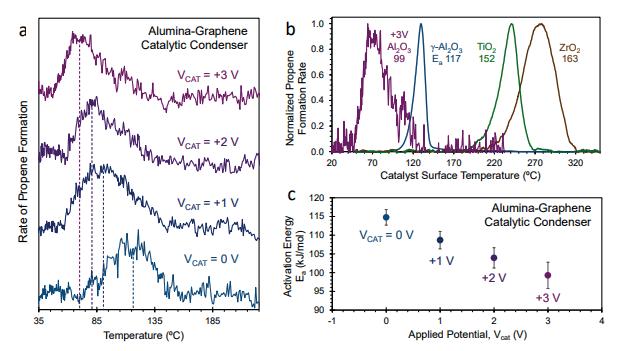

isopropanol to propene was used to investigate how altering the voltage affects the rate of reaction In figure a (leftmost in bottom figure), the purple line shows an alumina material where positive bias (when holes are added/electrons are removed) of 3 V hasbeen applied and themetal transforms. This clearly indicates that the rate of the dehydration increaseswhen apositive biasis applied, and also that the temperature corresponding to the peak rate of propene formation decreases. Therefore, as seen in figure c, the activation energy decreases when a positive bias is applied When we further examine the surface chemistry at the active site (alumina), under positive bias, the bond length between aluminium and oxygen atoms decreases, and so they strengthen. Thus, the binding energy increases as isopropanol binds more strongly to the aluminasurface,resulting in lower activation energy for thereaction
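Activation energies such as those compared in figure c are typically extracted from how the rate changes with temperature. The Python sketch below uses the two-point form of the Arrhenius equation, ln(k2/k1) = (Ea/R)(1/T1 - 1/T2), on synthetic rates generated from known activation energies, and recovers them. The 120 and 100 kJ/mol values, the temperatures, and the pre-exponential factor are hypothetical, not the study's data, and real analyses usually fit many temperatures rather than two.

```python
import math

R = 8.314  # molar gas constant, J mol^-1 K^-1


def activation_energy(k1: float, t1: float, k2: float, t2: float) -> float:
    """Two-point Arrhenius estimate, in J/mol: Ea = R ln(k2/k1) / (1/t1 - 1/t2)."""
    return R * math.log(k2 / k1) / (1 / t1 - 1 / t2)


def arrhenius(a: float, ea: float, t: float) -> float:
    """Rate constant k = A exp(-Ea / (R T))."""
    return a * math.exp(-ea / (R * t))


# Hypothetical rates generated from known Ea values, then recovered.
for label, ea in [("neutral", 120_000.0), ("+ bias", 100_000.0)]:
    k_low, k_high = arrhenius(1e13, ea, 450.0), arrhenius(1e13, ea, 500.0)
    estimate = activation_energy(k_low, 450.0, k_high, 500.0)
    print(f"{label}: Ea ~ {estimate / 1000:.0f} kJ/mol")
```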

Considering figure b, we see the rate of propene formation for +3 V Al2O3 and neutral Al2O3. These chameleon metals can switch between these two states 1,000 times per second, which gives us the unique ability to program them, sending different electronic signals to tune the material to the desired characteristics at different stages of the reaction. Consider a reaction in which reactant A forms product B. Once A adsorbs, a strong positive bias makes it bind very strongly and react to form B; electrons are then immediately put back in so that B can desorb. With the ability to change the surface chemistry of a catalyst over time, we can lower the energy of the transition state by applying a voltage, then flip to another state that gives very fast desorption from the catalyst. In fact, the instrument builds upon previous research by the same University of Minnesota team on catalytic resonance theory, which argues that applying an external wave at the catalyst surface that resonates with the oscillations of binding energy and transition-state energy can drastically accelerate a reaction, provided the amplitude and frequency of the wave are carefully tuned. By matching the frequency of the changes in applied voltage to the frequency of these oscillations, we can reach a resonant frequency that allows reaction rates much higher than the theoretical maximum for a static catalyst (the Sabatier optimum). The amplitude also has a logarithmic impact on the catalytic rate enhancement.

The prospect of cheaper and tunable catalysts could be revolutionary both for storing renewable energy and for a variety of other projects, such as cheaply and effectively forming key components of bioplastics. This new development has a myriad of exciting possibilities and will hopefully play an integral role in protecting our planet.

The Chemistry Behind Acne

Alexis Andronikos

Acne is a common skin condition which disproportionately affects those going through puberty. The main symptoms are facial "spots" and hot, oily skin which may be painful to touch. Significant capital and time have been invested in improving treatments, given that it is such a vast and profitable market. However, whilst treatments are available, we are yet to develop a cure.

This skin condition is, for the most part, caused by a substance called sebum. Sebum is composed of triglycerides, wax esters, squalene, and small amounts of cholesterol. It is produced by the sebaceous glands of the skin, and its main purpose is to keep skin soft and hair shiny; however, especially during the teenage years, high levels of testosterone result in excess sebum production. This excess can clog pores, allowing bacteria that feed off dead skin cells to thrive and produce toxins that damage the lining of the pore. A blocked pore initially turns red as blood carrying white blood cells rushes to the site of infection. Whiteheads form as a result of the accumulation of dead skin cells, pus, and dead white blood cells.

Acne products are quite divisive; however, the most effective ones tend to contain at least one of benzoyl peroxide, salicylic acid, alpha hydroxy acids, or sulfur. Alpha hydroxy acids are a class of chemical compounds consisting of a carboxylic acid substituted with a hydroxyl group on the adjacent carbon. These are believed to combat acne by removing the top layer of dead skin cells (which bacteria feed off) and reducing sebum production. Sulfur is believed to be effective because it similarly removes dead skin cells and absorbs excess sebum.


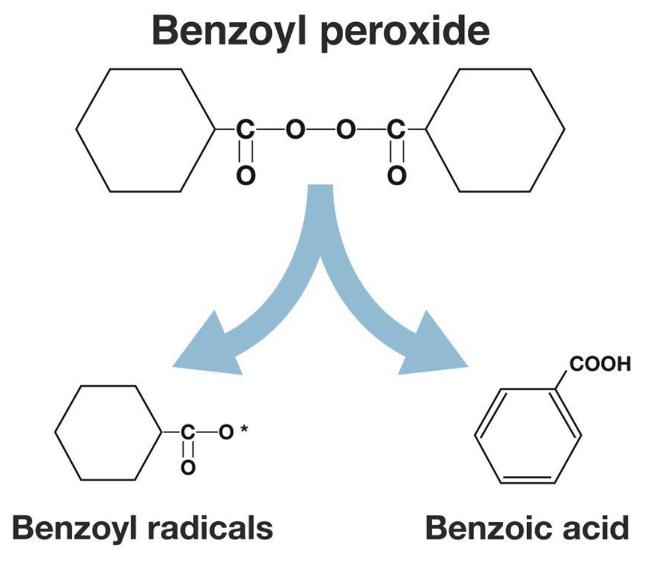

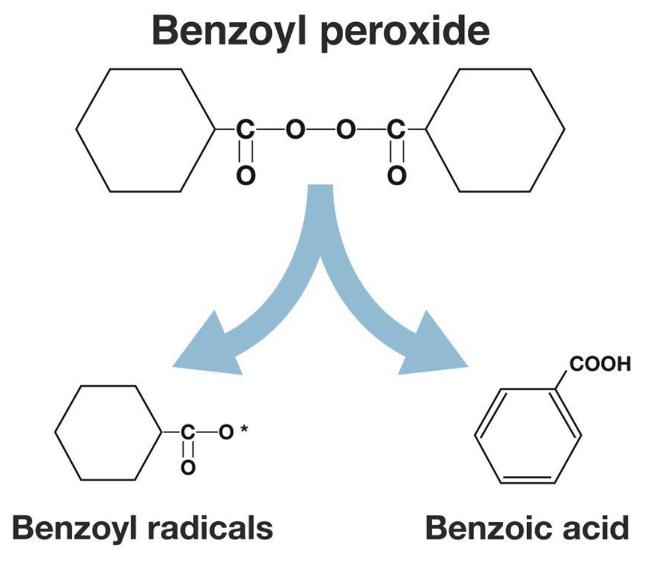

Benzoyl

peroxide hasastructural

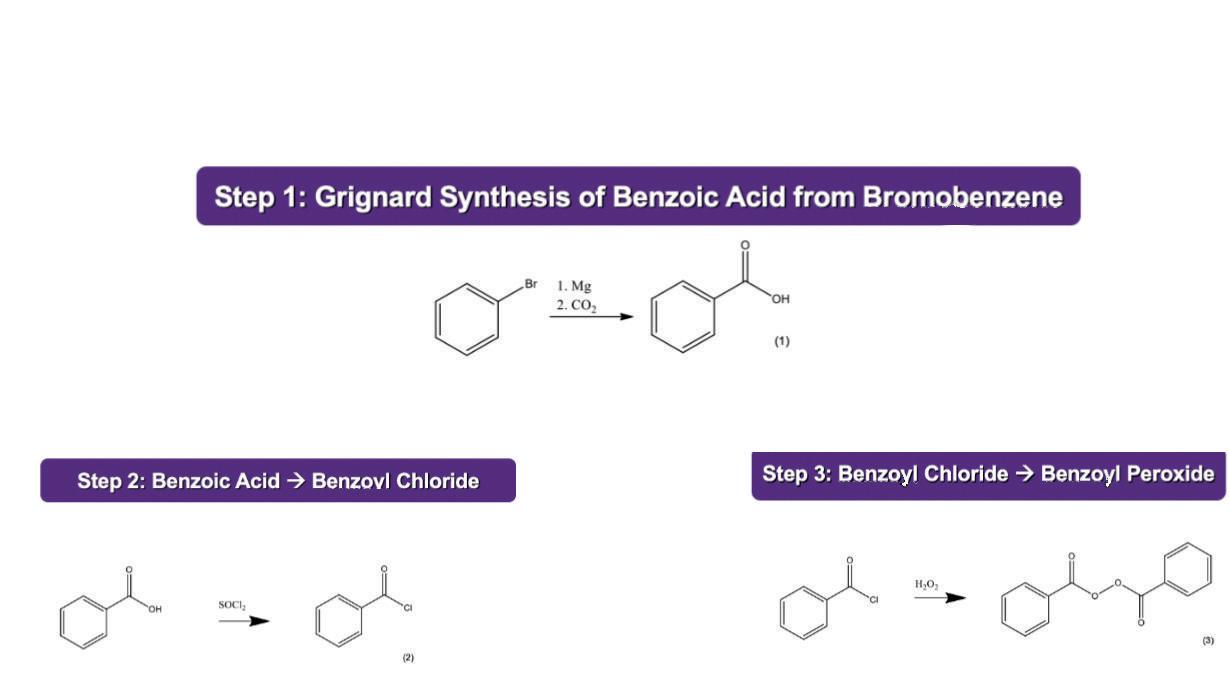

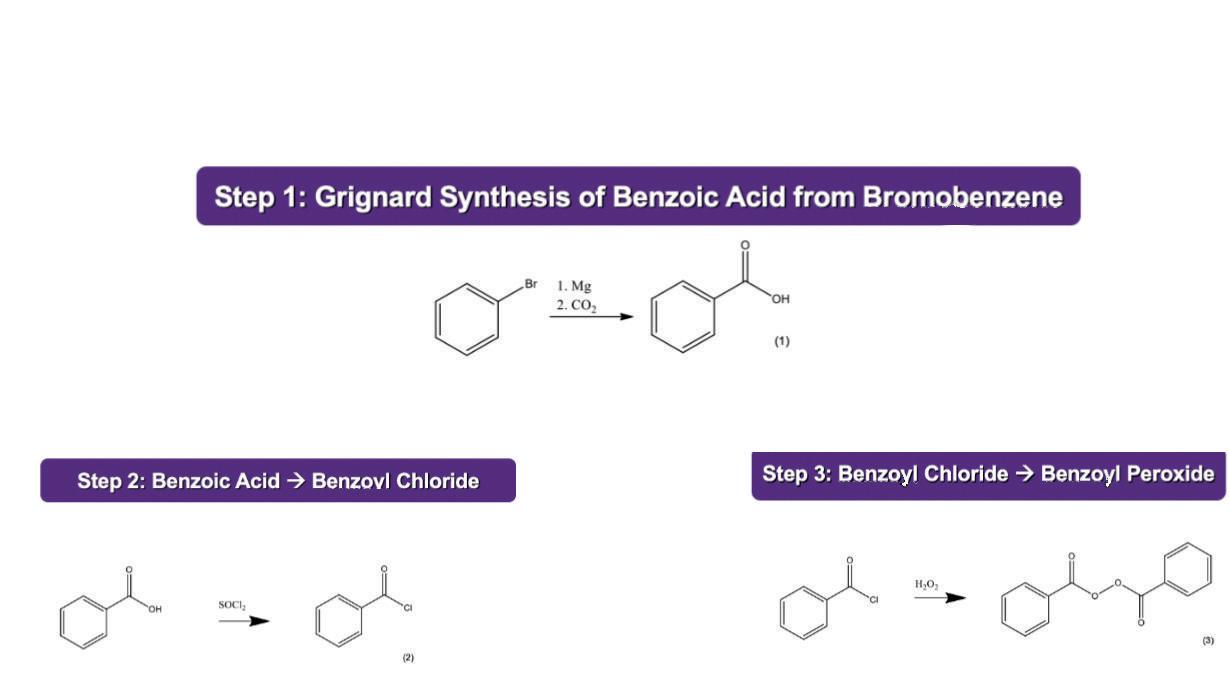

formulaof (C6H5?C(=O)O?)2 Upon application, it decomposes to form benzyl radicals and benzoic acid. Benzyl radicals kill bacteria, and benzoic acid unclogs pores which also contributes to the reduction of bacterial accumulation. Benzoyl peroxide is often synthesised in three steps The first step is the Grignard synthesis of benzoic acid from bromobenzene. A Grignard reagent is a substance with a very polar carbon magnesium bond in which the carbon hasapartial negative charge and the magnesium has a partial positive charge This step is followed by the reaction of benzoic acid to form benzoyl chloride. Finally, benzoyl chloride is reacted with hydrogen peroxideto form benzoyl peroxide as a final product. Alternatively, it can be produced by reacting benzoic anhydride with an alkali metal perborate in aqueous solution

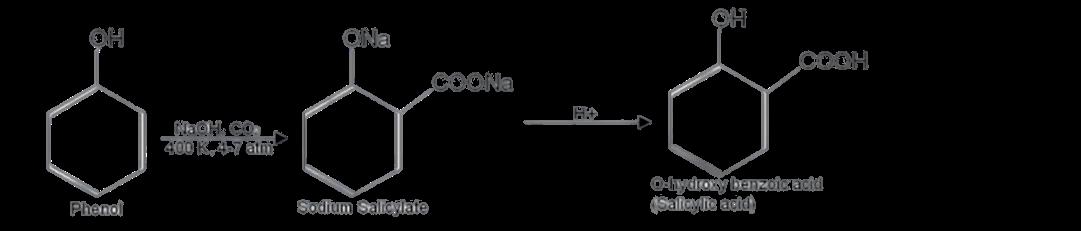

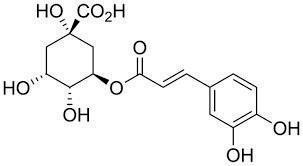

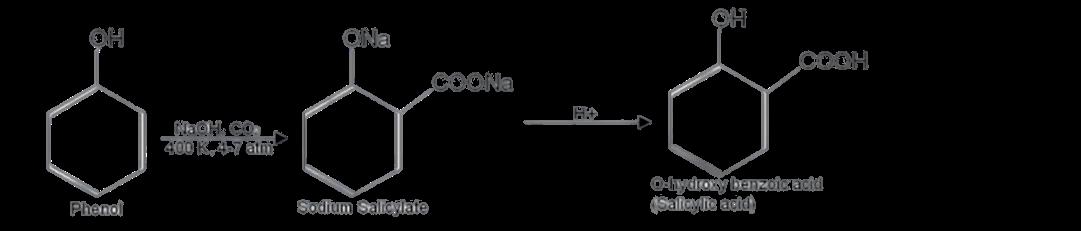
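Because each benzoyl peroxide molecule contains two benzoyl units, the three-step route needs two molecules of bromobenzene per molecule of product. The Python sketch below turns that stoichiometry into a theoretical yield; the 10 g starting mass and the per-step yields are hypothetical values for illustration, and the molar masses are rounded.

```python
M_BROMOBENZENE = 157.01      # g/mol, C6H5Br
M_BENZOYL_PEROXIDE = 242.23  # g/mol, C14H10O4


def benzoyl_peroxide_yield_g(bromobenzene_g: float, step_yields=(1.0, 1.0, 1.0)) -> float:
    """Mass of benzoyl peroxide obtainable from a given mass of bromobenzene.

    Each bromobenzene supplies one benzoyl (C6H5CO-) unit and each peroxide
    molecule contains two, so moles of product = moles of bromobenzene / 2.
    step_yields are hypothetical fractional yields for
    (Grignard/benzoic acid, benzoyl chloride, benzoyl peroxide).
    """
    moles = bromobenzene_g / M_BROMOBENZENE / 2
    for fraction in step_yields:
        moles *= fraction
    return moles * M_BENZOYL_PEROXIDE


print(f"theoretical: {benzoyl_peroxide_yield_g(10.0):.2f} g")
print(f"with 80%, 90%, 70% steps: {benzoyl_peroxide_yield_g(10.0, (0.8, 0.9, 0.7)):.2f} g")
```

With these assumed numbers, 10 g of bromobenzene gives at most about 7.7 g of benzoyl peroxide, falling to under 4 g once losses at each step are included.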

A beta hydroxy acid is an organic compound that contains a carboxylic acid functional group and a hydroxyl group separated by one carbon atom. Salicylic acid is a beta hydroxy acid often found in skin-care products that combat acne. It is lipophilic, meaning it is able to dissolve in oils and fats, which allows it to penetrate skin layers and break down sebum. It also has anti-inflammatory effects. However, although research shows that salicylic acid is more effective than a placebo, it has not been proven to be highly effective, and it can also cause irritation and dry skin (as can benzoyl peroxide). Salicylic acid occurs naturally and can be obtained from the bark of the willow tree. It can also be produced biosynthetically from the amino acid phenylalanine, or synthetically from phenol, most often using the Kolbe-Schmitt process shown below. Salicylic acid and its derivatives are also found naturally in fruits, particularly berries, and in vegetables. Interestingly, it is also widely used in the synthesis of aspirin.


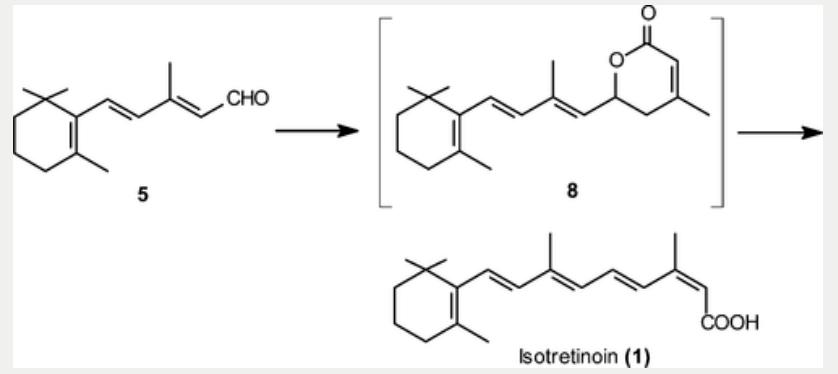

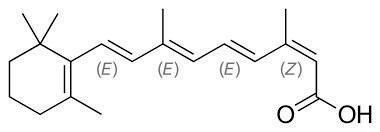

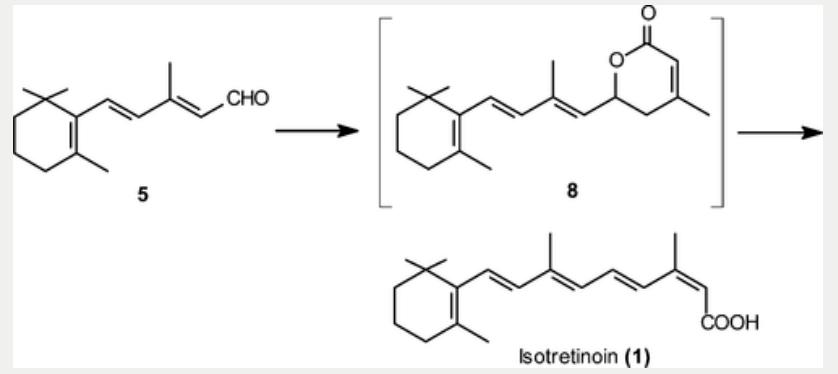

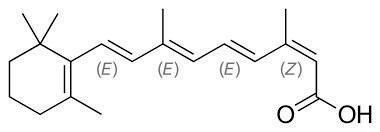

Accutane (otherwise known as isotretinoin) is renowned as the most effective treatment for acne. However, its side effects are more severe and much more common. Common side effects include dry skin, eyes, nose, and lips; irritation of the skin; a sore mouth or throat; headaches; and muscle pains. More serious side effects include unexplained bruising, dizziness, stomach pain, nausea, bloody diarrhoea, worsening mental health problems, and prolonged headaches. Nonetheless, Accutane is still deemed very effective. Isotretinoin's chemical structure is shown below. This structure can produce stereoisomers owing to the alkene groups on one of its side chains (with each carbon across the double bond bonded to two different groups). Its mechanism of action involves alterations in the cell cycle and apoptosis (programmed cell death). These actions reduce sebum production, preventing the blockage of pores and the growth of acne-producing bacteria. Shown below is an efficient process for the preparation of isotretinoin (the 13-cis isomer of vitamin A acid). The reaction conditions are well suited to industrial-scale production and ensure a high-quality product; levels of related isomeric impurities such as tretinoin (all-trans retinoic acid) and 9,13-di-cis-retinoic acid are extremely low.

In conclusion, due to extensive research in this field, there has been significant development of acne treatments. However, many are still sceptical of the effectiveness of these treatments, and many doctors believe that a healthy lifestyle and diet can be just as effective. Furthermore, many of these treatments have fairly unpleasant side effects, as mentioned, particularly Accutane, although it is currently deemed the best long-term solution to acne. Cost also presents a significant issue: a 30-day supply costs over $200. In the future, with continued research, it is likely that more established treatments will be developed and become more accessible.

The Biochemistry of Coffee

Seb Elliot

Coffee contains 2–3% caffeine, which is responsible for most of coffee's known characteristics.

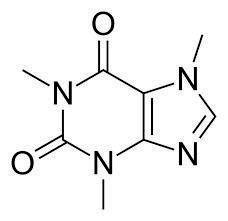

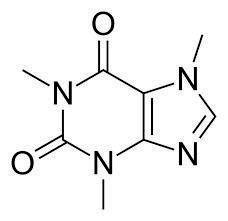

It has the chemical formula C₈H₁₀N₄O₂ and is a methylxanthine, also known as 1,3,7-trimethylxanthine. The caffeine molecule, shown adjacent, is made of heterocyclic rings: rings that contain atoms of at least two different elements.


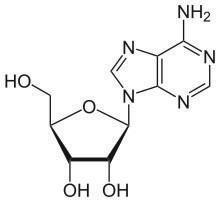

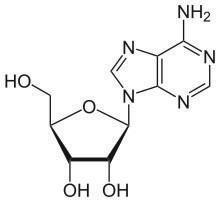

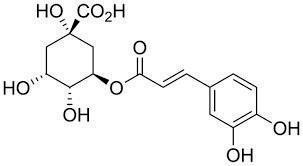

Caffeine is able to bind to adenosine receptors owing to the similarity in shape between caffeine and the adenine component of adenosine (a nucleoside made up of adenine joined to a ribose sugar). This structural similarity with adenosine, shown below, allows caffeine to act as a competitive inhibitor.

To extract caffeine from coffee and tea plants, decaffeination needs to occur. In this process, a carbon dioxide solvent is pumped into sealed vessels containing the coffee beans; the caffeine dissolves into this solvent and is in turn extracted.

The caffeine molecule is found naturally in the seeds of coffee plants and the leaves of tea plants. Within these plants, caffeine increases the rate of many biological processes, including photosynthesis and the absorption of water and nutrients.

Upon consumption, caffeine binds to adenosine receptors in the central nervous system (CNS), which inhibits adenosine binding. Adenosine has multiple functions, most notably the promotion and maintenance of sleep. The underlying mechanism centres on the release of GABA (an inhibitory neurotransmitter), which inhibits neurons involved in wakefulness.

Adenosine also increases the oxygen supply to the heart through coronary vasodilation, and decreases oxygen consumption by lowering the heart rate. Caffeine's inhibition of adenosine therefore results in its known effects of increasing heart rate and 'concentration', through the lack of sleep promotion.

Caffeine is not only known to decrease fatigue, but also has anti-inflammatory effects. These are due to the nonselective competitive inhibition of phosphodiesterases (PDEs). Inhibition of PDEs raises the intracellular concentration of cyclic AMP (cAMP), activates protein kinase A and inhibits leukotriene synthesis, which leads to reduced inflammation and innate immunity.


Computer Science

The Illusion of the Third Dimension and the Tropes of Modern First-Person Shooters

George Niedringhaus

Doom (1993) was released by id Software for MS-DOS, and came to home consoles over the next few years. With a file size of only 2.39MB, fitting a 3D game into so little space was difficult. In contrast, Doom Eternal, the latest instalment, takes up nearly 38 thousand times as much space, at an obscene 89GB. The original game used what is colloquially known as '2.5D graphics': even though the levels themselves are drawn in 3D, the demons, projectiles, weapons and pickups were all two-dimensional sprites. The enemies in particular had eight versions of every stage of their animations, one for each major angle they could be seen from. This technique is used in some more modern games, such as the mainline Danganronpa games; in their case, however, it was an artistic choice rather than a compromise to ensure that the game could run properly.

The engine used in Doom, called 'id Tech 1', is quite simple, as would be expected for the time. It treats the level two-dimensionally at first, with heights used only during rendering. A level has vertices (internally referred to as 'vertexes'), which are joined to form lines ('linedefs'), each of which can have one or two 'sidedefs'. Sidedefs are grouped together to form polygons ('sectors'), which represent subsections of the overall level. Sectors have a floor height, ceiling height, light level, and floor and ceiling textures.
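The relationships between these map elements can be pictured as a few linked records. The sketch below is a minimal Python model; the class and field names are illustrative assumptions, not the exact layout of Doom's map data, and the texture names are sample strings.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Vertex:
    # A point on the top-down 2D map; there is no z coordinate
    x: int
    y: int


@dataclass
class Sector:
    # Heights, lighting and flat textures shared by one region of the level
    floor_height: int
    ceiling_height: int
    light_level: int
    floor_texture: str
    ceiling_texture: str


@dataclass
class Sidedef:
    # One face of a linedef, pointing at the sector it faces
    sector: Sector
    upper_texture: str = "-"   # "-" is used here as a placeholder for "no texture"
    middle_texture: str = "-"
    lower_texture: str = "-"


@dataclass
class Linedef:
    # A line between two vertices: one sidedef for a solid wall, two for an opening
    start: Vertex
    end: Vertex
    front: Sidedef
    back: Optional[Sidedef] = None

    @property
    def two_sided(self) -> bool:
        return self.back is not None


# Hypothetical example: one square room whose four walls are all solid
room = Sector(0, 128, 160, "FLOOR4_8", "CEIL3_5")
corners = [Vertex(0, 0), Vertex(256, 0), Vertex(256, 256), Vertex(0, 256)]
walls = [
    Linedef(corners[i], corners[(i + 1) % 4], Sidedef(room, middle_texture="STARTAN3"))
    for i in range(4)
]
print(sum(w.two_sided for w in walls))  # 0
```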

Sidedefs have three textures: middle, upper and lower. One-sided linedefs (solid walls) use only the middle texture, but two-sided linedefs (which are entrances between sectors) use the upper and lower textures to fill the gaps between sectors with different floor or ceiling heights (e.g. stairs). A two-sided linedef could also be used to make something appear to float.
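Which parts of a two-sided linedef need a texture depends only on the heights of the sectors on either side. A minimal sketch, assuming heights in map units and the usual convention that the lower texture fills a step up to a higher floor behind the line while the upper texture fills a drop down to a lower ceiling; the function name is hypothetical.

```python
from typing import Optional, Tuple

# A wall piece as (bottom, top) in map units, or None when nothing needs drawing
Span = Optional[Tuple[int, int]]


def two_sided_wall_spans(front_floor: int, front_ceiling: int,
                         back_floor: int, back_ceiling: int) -> Tuple[Span, Span]:
    """Return the (lower, upper) wall pieces seen from the front sector."""
    # Lower texture: the step up to a higher floor on the far side
    lower = (front_floor, back_floor) if back_floor > front_floor else None
    # Upper texture: the drop down to a lower ceiling on the far side
    upper = (back_ceiling, front_ceiling) if back_ceiling < front_ceiling else None
    return lower, upper


# A stair step: the next sector's floor is 24 units higher, ceilings are equal
print(two_sided_wall_spans(0, 128, 24, 128))  # ((0, 24), None)
```

Seen from the other side of the same step, no lower piece is needed; that sidedef handles its own view.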

Doom utilises a system called binary space partitioning (BSP). The data for each level is generated beforehand. The level is split into a binary tree, with the root node representing the entire level and each subsequent node representing a specific area. Each branch of the tree splits its area into two smaller areas. The line segments produced by splitting linedefs are called 'segs'. The lowest nodes are always convex polygons that do not need to be split further, called subsectors ('SSECTORS'), each with a list of associated segs. The levels are then rendered recursively, starting at the root node and each time visiting the subnode closest to the camera first, drawing subsectors when the algorithm reaches them.
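The recursive, closest-first walk through the BSP tree can be sketched in a few lines. This is a simplified illustration, not id Software's code: each hypothetical `Node` stores its partition line as a point and a direction, leaves are plain subsector names, and a 2D cross product decides which side of the line the camera is on. A real renderer would also skip subtrees that lie outside the field of view.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class Node:
    # Partition line through (x, y) pointing in direction (dx, dy)
    x: float
    y: float
    dx: float
    dy: float
    right: "Tree"  # subtree for points on the right of the line
    left: "Tree"   # subtree for points on the left of the line


# In this sketch a leaf (subsector) is represented by its name
Tree = Union[Node, str]


def on_left(node: Node, px: float, py: float) -> bool:
    # The sign of the 2D cross product says which side of the line (px, py) is on
    return node.dx * (py - node.y) - node.dy * (px - node.x) > 0


def front_to_back(tree: Tree, px: float, py: float, out: List[str]) -> List[str]:
    """Append subsectors to `out` in closest-first order for a camera at (px, py)."""
    if isinstance(tree, str):
        out.append(tree)  # reached a convex subsector: draw it
        return out
    if on_left(tree, px, py):
        near, far = tree.left, tree.right
    else:
        near, far = tree.right, tree.left
    front_to_back(near, px, py, out)  # the half containing the camera first
    front_to_back(far, px, py, out)   # then the half behind it
    return out


# Hypothetical level: split at x = 0, then the west half split at y = 0
level = Node(0, 0, 0, 1, right="east",
             left=Node(0, 0, 1, 0, right="south-west", left="north-west"))
print(front_to_back(level, -10, -5, []))  # ['south-west', 'north-west', 'east']
```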

All of the walls in the game are rendered as vertical columns, which is why vertical camera movement is typically not possible. A stopgap solution called 'y-shearing' does exist, but causes a great deal of distortion at severe angles. The floors and ceilings (flats), however, are handled significantly less elegantly. They are drawn using an algorithm similar to flood fill, so if the BSP data is built poorly, there can be 'holes' in which a flat bleeds into the edges of the screen.

Flats are rendered as 'visplanes': horizontal regions with a set height, light level and texture. Each x position (screen column) within a visplane can hold only one vertical span of that flat. This limit can make it necessary to split visplanes, such as where there are overlapping objects on a flat. This was an important limitation, as Doom had a limit of 127 visplanes, which could be reached with a 12x12 chessboard pattern. Visplanes are rendered alongside segs, extending towards the applicable vertical edge. As a result, the order in which rendering occurs is important, so that closer visplanes cannot be 'cut off' by other visplanes meant to be behind them.
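The one-span-per-column rule is what forces visplanes to split. The sketch below is a simplified model rather than Doom's actual renderer: the class and function names are hypothetical, and the limit constant simply reuses the figure quoted in the text.

```python
from typing import Dict, List, Tuple

# Limit taken from the figure quoted in the text; treat the exact value as an assumption
MAX_VISPLANES = 127


class Visplane:
    """A flat region sharing one height, light level and texture.

    Each screen column x may hold at most one vertical span (top, bottom).
    """

    def __init__(self, height: int, light: int, texture: str) -> None:
        self.key = (height, light, texture)
        self.columns: Dict[int, Tuple[int, int]] = {}

    def try_add(self, x: int, top: int, bottom: int) -> bool:
        if x in self.columns:
            return False  # this column is already used, so the span cannot go here
        self.columns[x] = (top, bottom)
        return True


def add_span(planes: List[Visplane], height: int, light: int, texture: str,
             x: int, top: int, bottom: int) -> None:
    # Reuse a matching visplane that still has room in column x...
    for plane in planes:
        if plane.key == (height, light, texture) and plane.try_add(x, top, bottom):
            return
    # ...otherwise split off a new visplane, if the fixed limit allows it
    if len(planes) >= MAX_VISPLANES:
        raise RuntimeError("no more visplanes")
    plane = Visplane(height, light, texture)
    plane.try_add(x, top, bottom)
    planes.append(plane)


# Two separate patches of the same floor visible in screen column 5 force a split
planes: List[Visplane] = []
add_span(planes, 0, 160, "FLOOR4_8", 5, 100, 120)
add_span(planes, 0, 160, "FLOOR4_8", 5, 150, 170)
print(len(planes))  # 2
```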

Finally, there are things, or sprites. Each sector stores a linked list of the sprites within it. These are then drawn if they are within the player's field of view. The edges of sprites are clipped if they are behind previously rendered walls, which is aided by the fact that sprites are stored in the same column-based format as the walls. However, there is not the same guarantee that sprites will be rendered in the correct order. The sprites for enemies are selected based on what action the enemy is performing (attacking, moving, etc.), as well as what direction they are viewed from. Each individual enemy has eight versions of every stage of every animation. Doom and Doom II both used the same engine, but Doom 64, released on the Nintendo 64, used an improved version. Doom 64 had 3D maps and, to a very limited extent, platforming. The 'platforming' mostly involved using the sprint to make it across small gaps, but it was an important improvement that the first two games could not have handled.
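Choosing which of the eight versions to draw comes down to the angle between the way an enemy faces and the direction of the viewer. A minimal sketch in degrees, assuming rotation 1 is the front view and the numbers increase in 45-degree steps as the viewer moves counterclockwise around the enemy; the function name and signature are hypothetical.

```python
import math


def sprite_rotation(viewer_x: float, viewer_y: float,
                    thing_x: float, thing_y: float, facing_deg: float) -> int:
    """Return a rotation number from 1 (seen from the front) to 8.

    Angles are in degrees, measured counterclockwise from the +x axis.
    """
    # Direction pointing from the enemy towards the viewer
    to_viewer = math.degrees(math.atan2(viewer_y - thing_y, viewer_x - thing_x))
    # Where the viewer stands relative to the way the enemy is facing
    relative = (to_viewer - facing_deg) % 360
    # Each rotation covers a 45-degree slice centred on its own angle
    return int(((relative + 22.5) % 360) // 45) + 1


# An enemy at the origin facing east (0 degrees)
print(sprite_rotation(10, 0, 0, 0, 0))   # 1: viewer directly in front
print(sprite_rotation(-10, 0, 0, 0, 0))  # 5: viewer directly behind
```

The chosen rotation is then combined with the current animation frame to pick one sprite image.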

To conclude, while id Tech 1 was an interesting game engine, it did not have much longevity. Similarly to Comic Sans, it worked well given the restrictions of the time, but it cannot take advantage of modern computing power as well as true 3D graphics can.

How does a Bayesian Neural Network Model Differ from a Traditional Point-Estimate Neural Network?

Victor Shao and Roman Lipko

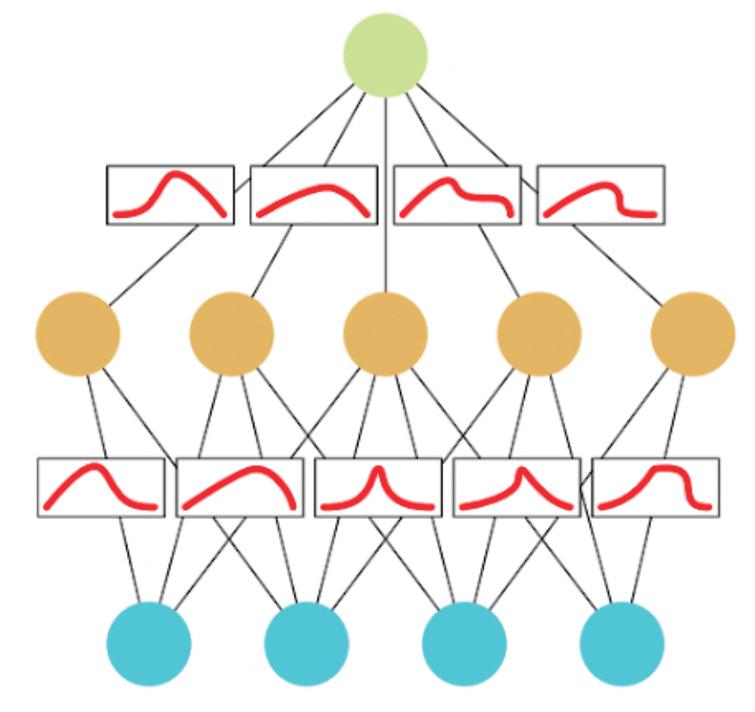

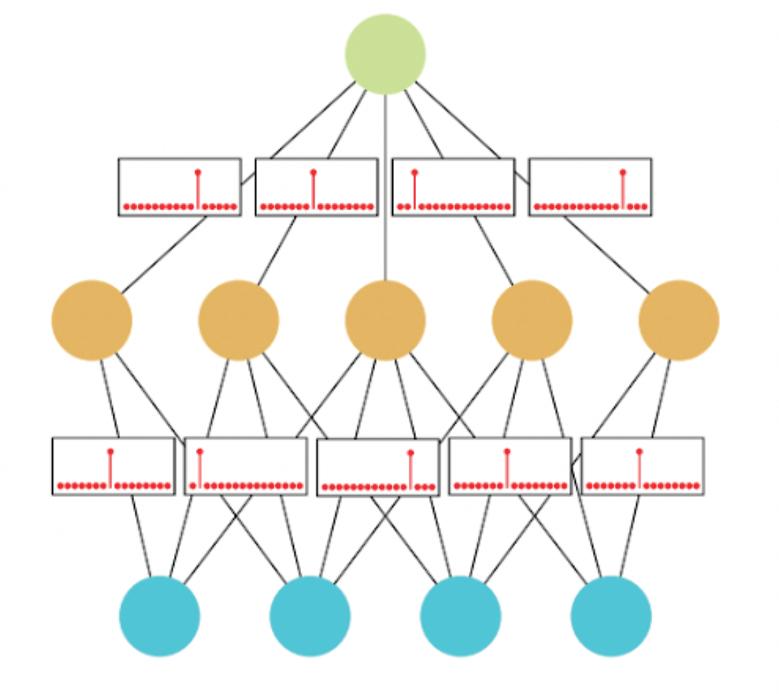

A neural network is a computer system designed to mimic the workings of the human brain. It consists of an input layer, one or more hidden layers and an output layer, all of which contain interconnected processing nodes, or neurons, that can learn to recognize patterns in input data. Each node can be thought of as a function defined by a set of weights, which amplify or reduce each of its inputs. The node multiplies each input by its weight and sums the results, usually adding a node-specific bias value. This sum is then transformed by an activation function, for example a sigmoid function, which squashes it into an output between 0 and 1 that is passed on to the next layer.
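A single node, and a layer of them, can be written directly from this description. A minimal sketch, assuming a sigmoid activation for every node; the weights, biases and inputs are made-up numbers for illustration.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    # Squashes any real number into the range (0, 1)
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: List[float], weights: List[float], bias: float) -> float:
    # Weighted sum of the inputs, plus the node's own bias, through the activation
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


def layer(inputs: List[float], weight_rows: List[List[float]],
          biases: List[float]) -> List[float]:
    # Every node in a layer sees the same inputs but has its own weights and bias
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Made-up numbers: two inputs -> a hidden layer of two nodes -> one output node
hidden = layer([1.0, 0.5], [[0.4, -0.6], [0.9, 0.2]], [0.0, -0.5])
output = neuron(hidden, [1.5, -1.0], 0.1)
print(round(output, 3))
```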


The way the neural network learns is by adjusting the weights and biases of each individual node so that the final output matches the desired result. This is done by representing the desired output as 'Vector A', grouping the actual output layer's results as 'Vector B', and then computing a cost function based on the difference between 'Vector A' and 'Vector B'. The weights and biases are adjusted cycle by cycle to reduce this cost until it reaches convergence, which means the cost stops decreasing and, ideally, the error between the predicted output and the desired output is close to 0 (although training could in principle continue indefinitely).
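The learning loop can be illustrated with a single sigmoid node learning the logical OR function. This sketch assumes a mean-squared-error cost between 'Vector A' (desired outputs) and 'Vector B' (actual outputs) and plain gradient descent; the text does not specify either, and the learning rate and number of cycles are arbitrary choices.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(x: List[float], w: List[float], b: float) -> float:
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def cost(vector_a: List[float], vector_b: List[float]) -> float:
    # Mean squared difference between desired outputs (A) and actual outputs (B)
    return sum((a - b) ** 2 for a, b in zip(vector_a, vector_b)) / len(vector_a)


def train(data: List[List[float]], targets: List[float],
          lr: float = 2.0, cycles: int = 5000) -> Tuple[List[float], float]:
    w, b = [0.0] * len(data[0]), 0.0
    for _ in range(cycles):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for x, target in zip(data, targets):
            out = predict(x, w, b)
            # Chain rule: derivative of this sample's squared error with respect to
            # the node's weighted sum, through the sigmoid (averaged over the data)
            delta = 2 * (out - target) * out * (1 - out) / len(data)
            for j, xj in enumerate(x):
                grad_w[j] += delta * xj
            grad_b += delta
        # Move every parameter a small step against its gradient to lower the cost
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


inputs = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
vector_a = [0.0, 1.0, 1.0, 1.0]  # desired outputs (logical OR)
before = cost(vector_a, [predict(x, [0.0, 0.0], 0.0) for x in inputs])
w, b = train(inputs, vector_a)
vector_b = [predict(x, w, b) for x in inputs]
print(before)  # 0.25 (every output starts at 0.5)
print([round(p, 2) for p in vector_b])
```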

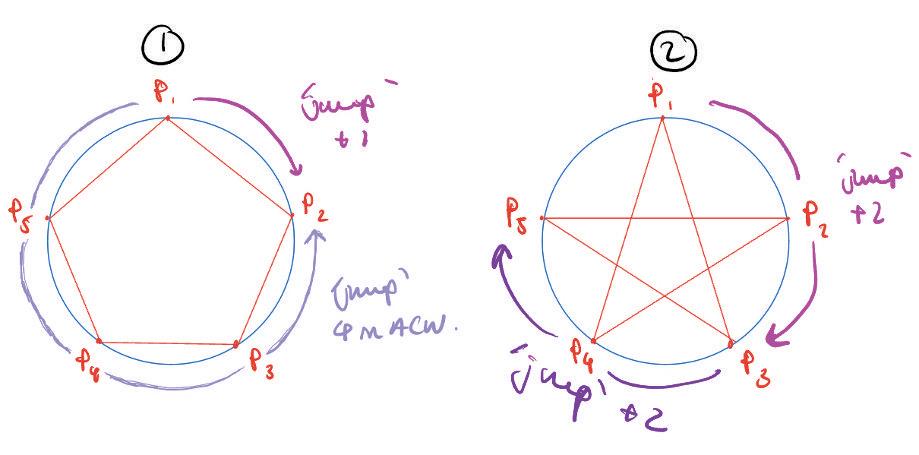

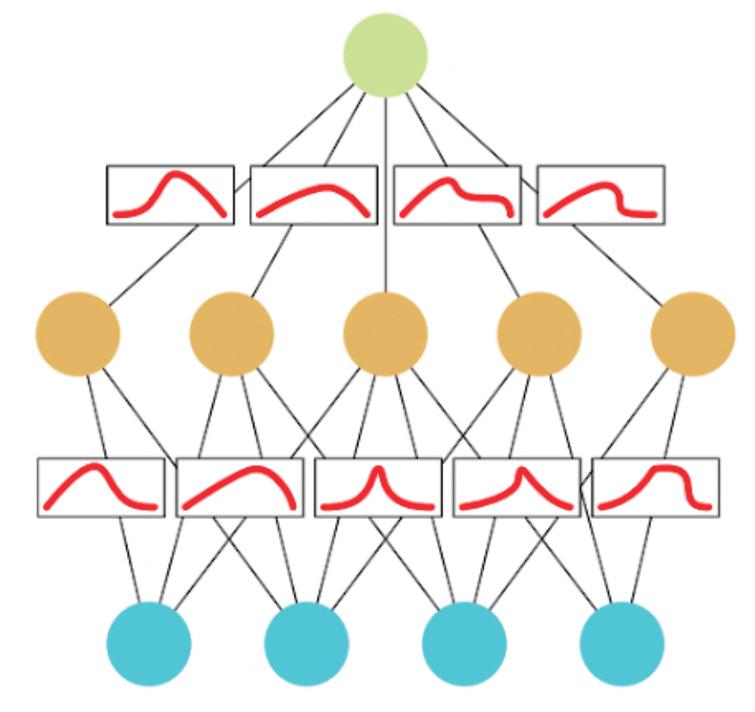

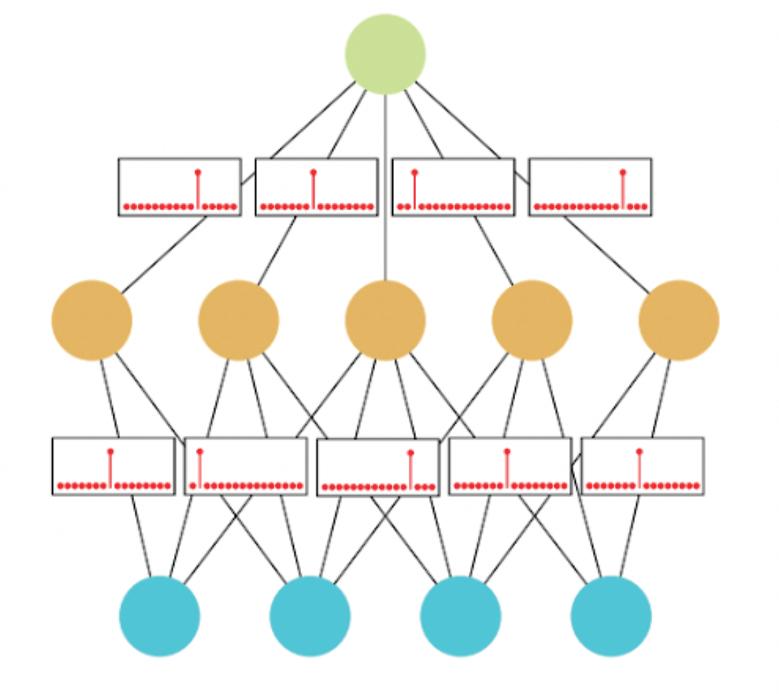

Differences between a Bayesian Neural Network (BNN) and a Point Estimate Neural Network (PENN)

The main difference between a PENN and a BNN is how each node's parameters are represented: in a PENN, each weight is described as a single value, whereas in a BNN it is expressed as a probability distribution.

A BNN is used to estimate the probability distribution of the output for a given input, rather than a single value. For example, with reference to the below figure, instead of being a single value, the weights of each node are described by a normal distribution.

BNN (top), PENN (bottom)

Furthermore, different BNNs work in different ways. Some produce the final output as a probability distribution, whilst others use distributions only for the node parameters (e.g. weights and biases). This is in contrast to traditional point-estimate neural networks, which simply output the most likely estimate.
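The difference between a point estimate and a distribution shows up even for a model with one weight and one bias. In this hedged sketch the normal distributions are simply assumed rather than learned (a real BNN would fit them to data), and all the numbers are hypothetical: the BNN prediction is formed by sampling many weight and bias values and summarising the resulting outputs.

```python
import random
import statistics
from typing import Tuple

# Hypothetical parameters for a one-input model: output = weight * x + bias
POINT_WEIGHT, POINT_BIAS = 2.0, 0.5   # PENN: one value per parameter
WEIGHT_MEAN, WEIGHT_STD = 2.0, 0.3    # BNN: a normal distribution per parameter
BIAS_MEAN, BIAS_STD = 0.5, 0.1


def penn_predict(x: float) -> float:
    # A point-estimate network gives a single answer with no measure of confidence
    return POINT_WEIGHT * x + POINT_BIAS


def bnn_predict(x: float, samples: int = 10_000, seed: int = 0) -> Tuple[float, float]:
    # Sample many plausible (weight, bias) pairs and summarise the spread of outputs
    rng = random.Random(seed)
    outputs = [rng.gauss(WEIGHT_MEAN, WEIGHT_STD) * x + rng.gauss(BIAS_MEAN, BIAS_STD)
               for _ in range(samples)]
    return statistics.mean(outputs), statistics.stdev(outputs)


print(penn_predict(3.0))  # 6.5
print(bnn_predict(3.0))   # (mean, standard deviation) of the sampled outputs
```

Here the BNN's spread grows with the size of the input, because the uncertainty in the weight is multiplied by x; a single point estimate cannot express this.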

Some of the main advantages of BNNs are as follows. Firstly, they are more robust to overfitting (the problem of a network being trained so excessively that it only responds well to a narrow range of inputs), because representing the weights as probability distributions acts as a built-in form of regularisation. In addition, since BNNs account for uncertainty in the data, they are useful for making predictions where it matters how confident the network is. However, a Bayesian approach is more computationally expensive: training must update a whole distribution for each parameter given the data, and each prediction must average over many samples of those parameters. This makes BNNs hard to scale to larger problems, so they are relegated to more specific areas such as molecular biology and medical diagnosis.


The Travelling Salesman Problem

Victor Shao and Roman Lipko

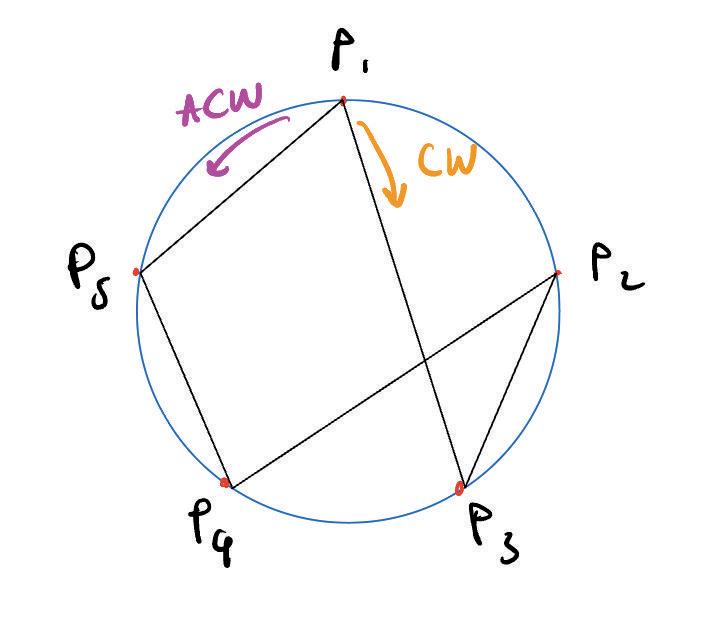

For years, computer scientists have created optimization algorithms for perplexing and versatile problems, and the Travelling Salesman Problem (TSP) has served as a benchmark for many optimization methods. The problem goes as follows: "Given a list of cities and the distances between each pair of cities, what is the shortest possible route that visits each city exactly once and returns to the origin city?"
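A brute-force solver has to check every possible tour. Fixing the starting city and treating a route and its reverse as the same tour leaves (n - 1)!/2 distinct tours for n cities. The sketch below counts them and, for small inputs, finds the shortest tour by trying every ordering; the function names are hypothetical and the distance matrix is assumed to be symmetric.

```python
import itertools
import math
from typing import List, Sequence, Tuple


def distinct_tours(n: int) -> int:
    # Fix the start city and count each route and its reverse only once
    return math.factorial(n - 1) // 2


def tour_length(order: Sequence[int], dist: List[List[float]]) -> float:
    # Sum of the legs, including the return to the origin city
    return sum(dist[order[i]][order[(i + 1) % len(order)]] for i in range(len(order)))


def brute_force(dist: List[List[float]]) -> Tuple[float, Tuple[int, ...]]:
    # Naive approach: try every ordering of the other cities after city 0
    # (this checks each tour twice, once in each direction)
    best = None
    for rest in itertools.permutations(range(1, len(dist))):
        order = (0,) + rest
        length = tour_length(order, dist)
        if best is None or length < best[0]:
            best = (length, order)
    return best


# Prints "5 12", then "10 181440", then "20 60822550204416000"
for n in (5, 10, 20):
    print(n, distinct_tours(n))

# Four cities on the corners of a unit square, using Manhattan distances
square = [[0, 1, 2, 1], [1, 0, 1, 2], [2, 1, 0, 1], [1, 2, 1, 0]]
print(brute_force(square))  # (4, (0, 1, 2, 3))
```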

essentially techniques or algorithms used to produce solutionswhich may not be optimal, but areefficient in thegiven time A heuristic function creates a cost (a value generated from the function) to evaluate the accuracy of the current solution Its purpose is to decrease this cost, to increase the accuracy of thesolutiontotheoptimal one.

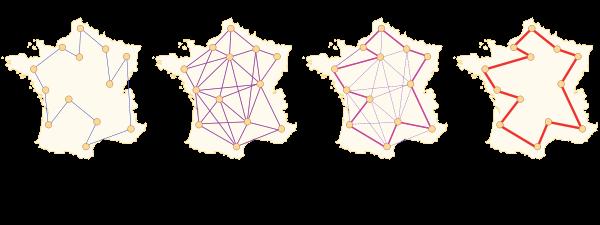

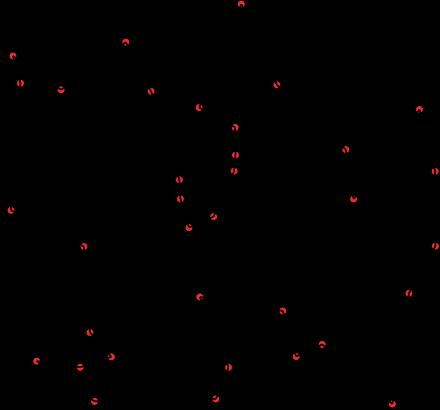

The image above gives an example of a solution to a problem. Here, the dots represent the cities and the line connecting themistheoptimal solution.

It is considered to be a ?non deterministic polynomial (NP) time hard? problem ? in simple terms, it is both hard to solve and verify, and more technically it can be transformed into another NP problem To give a more intuitive understanding, the maximumnumber of pathspossiblein anaive solution (a brute force approach of trying all combinations) for 5 citiesis12 paths.For 10, it is181,440 and for 20 cities, it increases to 6.082 ×1016!

However, due to intensive studying since 1930, there are a range of approximate and exact algorithms to solve this problem exactly to 10s of 1,000s of cities and approximate to millions The majority of these algorithms use heuristics, which are

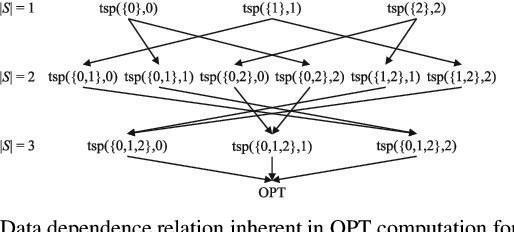

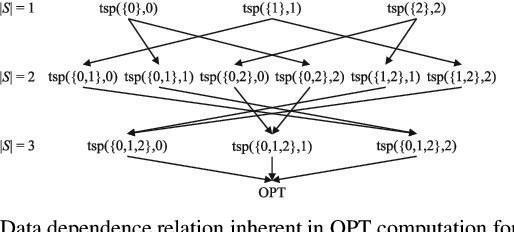

Exact algorithms often take a very long time to execute. There are relatively fast techniques for computing an exact solution, such as the Held-Karp algorithm, which is an application of dynamic programming. It defines a set S of the cities which the route has to pass through before reaching its destination, d. It starts with |S| = 0 and gradually increases |S|, computing the smallest distance for each combination of size |S|. The smallest distance through S to d is the minimum, over every city 'c' in S, of the smallest distance to 'c' through the rest of the cities in S plus the distance from 'c' to d. The smallest distance to 'c' through the other cities has already been computed for the sets of size |S| - 1, so the new possible distances are easy to compute. Although this is faster than a naive approach, it still has to take into account a considerable number of possibilities, resulting in a time complexity of O(n² · 2ⁿ).
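The recurrence described above translates into a short dynamic-programming routine. A minimal sketch, assuming cities are numbered from 0 with city 0 as the origin and `dist[i][j]` giving the distance from city i to city j; the dictionary `best[(S, d)]` stores the shortest distance from the origin through every city in S to the destination d, matching the notation in the text.

```python
import itertools
from typing import Dict, FrozenSet, List, Tuple


def held_karp(dist: List[List[float]]) -> float:
    """Length of the shortest tour that starts and ends at city 0."""
    n = len(dist)
    if n == 1:
        return 0.0
    best: Dict[Tuple[FrozenSet[int], int], float] = {}
    # |S| = 0: travel straight from the origin to d
    for d in range(1, n):
        best[(frozenset(), d)] = dist[0][d]
    # Grow |S| one city at a time, reusing the answers for |S| - 1
    for size in range(1, n - 1):
        for subset in itertools.combinations(range(1, n), size):
            s = frozenset(subset)
            for d in range(1, n):
                if d in s:
                    continue
                # Try each city c in S as the last stop before d
                best[(s, d)] = min(best[(s - {c}, c)] + dist[c][d] for c in s)
    # Close the tour: visit every other city, finish at some d, then return home
    everyone = frozenset(range(1, n))
    return min(best[(everyone - {d}, d)] + dist[d][0] for d in range(1, n))


# Asymmetric example: costs[i][j] is the cost of travelling from city i to city j
costs = [[0, 2, 9, 10], [1, 0, 6, 4], [15, 7, 0, 8], [6, 3, 12, 0]]
print(held_karp(costs))  # 21
```

Each entry of `best` is computed once from entries for smaller sets, which is where the O(n² · 2ⁿ) running time comes from.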


As shown, exact algorithms become very computationally expensive as the number of cities increases, which is why approximate algorithms were devised.

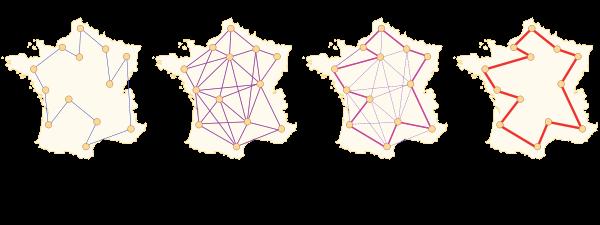

One simple but often inaccurate heuristic algorithm is the nearest neighbour algorithm, which is a type of greedy algorithm. In this algorithm, the salesman always chooses the nearest unvisited city as his next move. It constructs a tour very quickly, but the result relies heavily on the positions of the cities and may sometimes be the worst possible tour. Thus, improvement techniques such as pairwise exchange exist. Pairwise exchange removes two edges and replaces them with two different edges that reconnect the fragments into a new, shorter tour; more general versions remove n edges (where n is a pre-specified integer) and replace them with n different edges.
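Both ideas are short to write down. This sketch pairs a nearest-neighbour construction with a pairwise-exchange (2-opt) improvement step that removes two edges at a time and keeps any exchange that shortens the tour; it assumes a symmetric distance matrix, and the function names are hypothetical.

```python
import math
from typing import List


def tour_length(tour: List[int], dist: List[List[float]]) -> float:
    # Sum of the legs, including the return to the starting city
    return sum(dist[tour[i]][tour[(i + 1) % len(tour)]] for i in range(len(tour)))


def nearest_neighbour(dist: List[List[float]], start: int = 0) -> List[int]:
    # Greedy: always move to the closest city that has not been visited yet
    tour = [start]
    unvisited = set(range(len(dist))) - {start}
    while unvisited:
        here = tour[-1]
        nxt = min(unvisited, key=lambda city: dist[here][city])
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour


def two_opt(tour: List[int], dist: List[List[float]]) -> List[int]:
    """Pairwise exchange, repeated until no exchange shortens the tour."""
    tour = tour[:]
    n = len(tour)
    improved = True
    while improved:
        improved = False
        for i in range(n - 1):
            for j in range(i + 2, n):
                a, b = tour[i], tour[i + 1]
                c, d = tour[j], tour[(j + 1) % n]
                if a == d:
                    continue  # the two edges share a city, so there is nothing to swap
                # Replace edges (a, b) and (c, d) with (a, c) and (b, d)
                delta = dist[a][c] + dist[b][d] - dist[a][b] - dist[c][d]
                if delta < -1e-12:
                    # Reversing the segment between the edges reconnects the fragments
                    tour[i + 1:j + 1] = reversed(tour[i + 1:j + 1])
                    improved = True
    return tour


# Four cities on a unit square, visited in an order whose route crosses itself
points = [(0, 0), (1, 1), (1, 0), (0, 1)]
dist = [[math.dist(p, q) for q in points] for p in points]
crossed = [0, 1, 2, 3]
print(tour_length(crossed, dist), tour_length(two_opt(crossed, dist), dist))
print(nearest_neighbour(dist))
```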

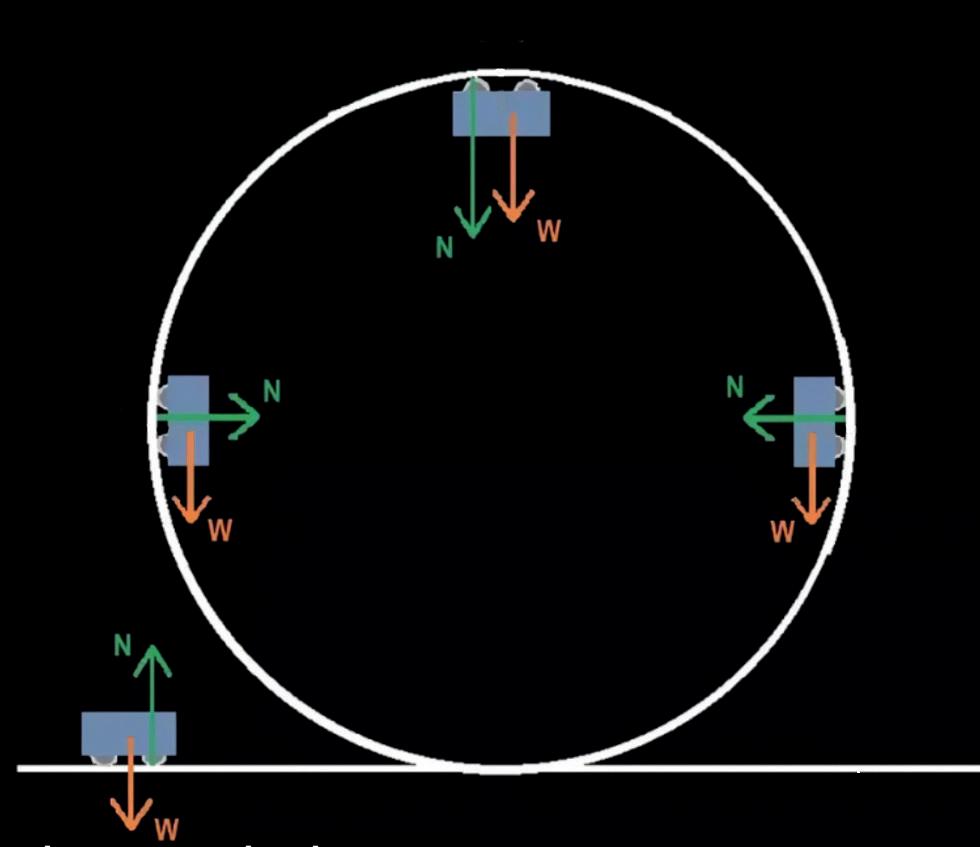

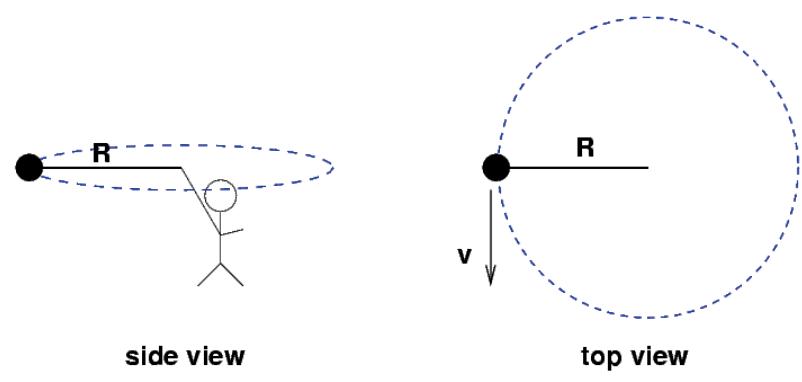

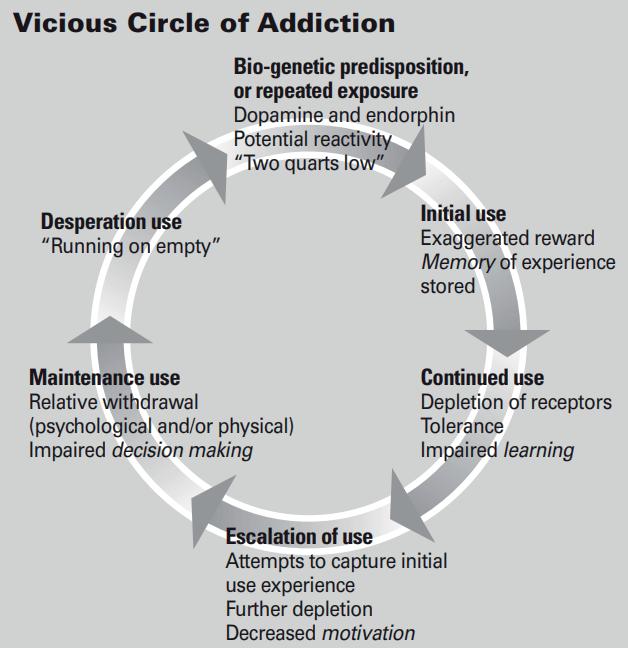

The third and final algorithm explained here is Ant Colony Optimization, which uses a simulation of the Ant Colony System (ACS), a type of swarm intelligence method. It imitates real ants, which lay down pheromones to direct each other to resources. This algorithm sends out a large number of so-called 'ant agents' to explore many possible routes on the map. They travel through the graph from the starting point, guided by heuristics and by 'pheromones'. These pheromones are values added to the edges of the graph, increased by ants moving along an edge, which raise the likelihood of later ants travelling along that route. Once an ant reaches the end of its tour, it deposits more pheromones along the completed tour it has taken. As a result, later iterations of the simulation locate better solutions on average, and at the end, the route with the most deposited pheromones is a very close approximation to the shortest path.
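A compact simulation shows how pheromone and distance combine. This is a sketch of the simpler 'Ant System' style of update, assumed here for brevity: ants deposit pheromone only once their tours are complete, rather than also updating edges step by step as in the ACS, and the sketch records the best tour seen rather than reading the answer off the pheromone trail. The parameter names and values (`alpha`, `beta`, `evaporation`, `deposit`, the number of ants and iterations) are illustrative, untuned choices.

```python
import random
from typing import List, Tuple


def tour_length(tour: List[int], dist: List[List[float]]) -> float:
    # Sum of the legs, including the return to the starting city
    return sum(dist[tour[i]][tour[(i + 1) % len(tour)]] for i in range(len(tour)))


def ant_colony(dist: List[List[float]], ants: int = 20, iterations: int = 100,
               alpha: float = 1.0, beta: float = 3.0, evaporation: float = 0.5,
               deposit: float = 1.0, seed: int = 0) -> Tuple[List[int], float]:
    """Ant-colony sketch for a symmetric TSP with at least two cities and positive distances."""
    rng = random.Random(seed)
    n = len(dist)
    pheromone = [[1.0] * n for _ in range(n)]
    best_tour, best_len = list(range(n)), float("inf")
    for _ in range(iterations):
        tours = []
        for _ in range(ants):
            tour, unvisited = [0], list(range(1, n))
            while unvisited:
                here = tour[-1]
                # Attractiveness mixes pheromone (learned) with 1/distance (the heuristic)
                weights = [pheromone[here][c] ** alpha * (1.0 / dist[here][c]) ** beta
                           for c in unvisited]
                nxt = rng.choices(unvisited, weights=weights)[0]
                tour.append(nxt)
                unvisited.remove(nxt)
            tours.append((tour, tour_length(tour, dist)))
        # Existing trails evaporate, so routes that stop being used fade away
        for row in pheromone:
            for j in range(n):
                row[j] *= 1.0 - evaporation
        # Each ant lays pheromone along its finished tour; shorter tours lay more per edge
        for tour, length in tours:
            for k in range(n):
                a, b = tour[k], tour[(k + 1) % n]
                pheromone[a][b] += deposit / length
                pheromone[b][a] += deposit / length
            if length < best_len:
                best_tour, best_len = tour, length
    return best_tour, best_len


# Hypothetical instance: six cities on a 3 x 2 grid, listed in a scrambled order.
# The shortest tour walks the perimeter of the rectangle, with length 6.
cities = [(0, 0), (2, 1), (1, 0), (0, 1), (2, 0), (1, 1)]
dist = [[((x1 - x2) ** 2 + (y1 - y2) ** 2) ** 0.5 for (x2, y2) in cities]
        for (x1, y1) in cities]
tour, length = ant_colony(dist)
print(tour, round(length, 3))
```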