Innovative Writers

Verity Powell

Emily Feeke

Nina Bucekova

Sarah Mackel

Sarah Kurbanov

Adam Brittain

Violet Melcher

Zara Ward

Shiksha Guru

Milan Wood

Diya Rajesh

Revathi Ramachandran

Mia Cammarota

Ulyssa Fung

Thomas Burton

Ideja Bajra

Josie Sequeira-Shuker

Editors

Laila Deen

Tom Burton

Ideja Bajra

Toby Lawson

Sushmhitah Sandanatavan

Committee

Co-founder Thomas Burton

Co-founder Ideja Bajra

Natural Science Josie Sequeira-Shuker

Technology Milan Wood

Engineering Zara Ward

Medicine Sarah Mackel

Editing & Design Laila Deen

Logistics Melissa Ieger Gaeski

Head Melissa Ieger Gaeski

Treasurer Côme Naegelen

Events Kimberly Pederson

Outreach Molly Keane

Social Media Ruby Sloan

shortly evolved into the creation of STATIC (St. Technological and Innovation Club) and our Scientific Innovation Review.

Our Review outlines recent and significant innovative technologies across the fields of Natural Science, Technology, Engineering and Medicine. Our innovative writers have researched their report titles in great depth, using various sources, scientific journals and databases. The STATIC committee have worked consistently throughout the semester to guide our teams in researching their titles and formulating their reports.

STATIC is a growing initiative and will continue to host inspiring and influential collaboration and inter-club events. We aspire to continue establishing a networking system between like-minded, accomplished and motivated individuals. STATIC’s ethos can be summarised in a quotation from a TED talk that our head of Natural Sciences recently gave on the ‘synergy between successful women’.

“The interactions between different people with different strengths combine to create new ideas. These new ideas combine to create unique and life changing solutions to climate change, the energy crisis and future pandemics” ~ Josie Sequeira-Shuker.

This review is a product of the synergy across the committee and our entire team of innovative writers, collaborating to outline the innovations that have contributed towards the betterment of society.

Thomas Burton and Ideja Bajra.


Deep learning and web accessibility: a commentary on how deep learning could improve accessibility online, Milan Wood

The importance of understanding protein folding in relation to computational complexity, Verity Powell

Machine Learning for Causal Inference: Bridging the Gap between Prediction and Causality, Nina Bucekova

Initial Explorations: A computational approach to 3x3 magic squares of squares, Verity Powell

Reviewed and edited by S. Sandanatavan

ABSTRACT: The aim of this report is to provide an insight into the potential of deep learning as a solution to the web accessibility challenge and to illuminate its opportunities and implications for all users. By harnessing the power of deep learning, we could strive towards a digital landscape where everyone, regardless of their abilities, can benefit from the vast resources available on the web. The exploration investigates how deep learning could improve accessibility on webpages for viewers with learning difficulties, disabilities or visual impairments. In particular, I will explore how deep learning could be applied to enhance image, video and text recognition, facilitate natural language understanding and personalise the user experience through predictive modelling. The start of this report clarifies the definitions of key terminology that will be used throughout the exploration, before diving into the potential avenues alluded to above. Finally, we will conclude on the feasibility of using deep learning for web accessibility.

The rapid expansion of the digital age presents a myriad of opportunities for worldwide communication, education and entertainment, but it also introduces significant challenges in terms of ease of access. Deep learning is a branch of machine learning that uses complex neural networks to identify patterns within large, unstructured datasets. Recent advancements in the capabilities of artificial intelligence (AI) have given deep learning the potential to significantly improve inclusivity in web accessibility. The ever-evolving nature of web content demands innovative approaches that go beyond established standards – deep learning could be the key to a seamless online experience (Dean, Jeffrey 2022).

To understand deep learning, we must start at the top of the hierarchy with AI. Broadly speaking, AI is the simulation of human intelligence processes by machines with the ability to learn (i.e. acquire information and rules for using the information), reason (use these rules to reach approximate or definite conclusions), self-correct, and perceive and interpret the surrounding environment (Scharre, Paul, et al., 2018).

Machine learning (ML) is a subset of AI involving the development and application of algorithms and statistical models to enable computer systems to automatically learn and improve from experience without being directly programmed. These algorithms are designed to analyse complex data and make predictions and decisions based on that data. The fundamental concept underlying machine learning is to allow systems to identify relationships between data in a progressive manner, similar to a human gathering knowledge.

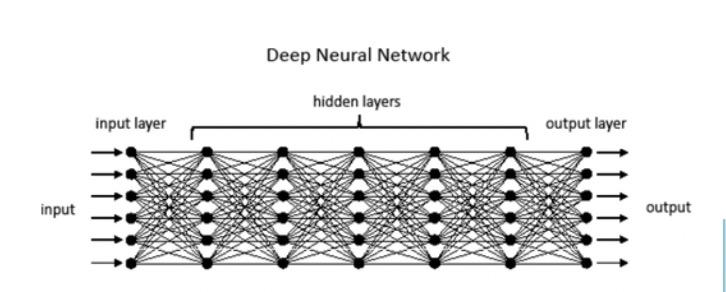

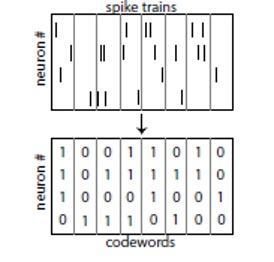

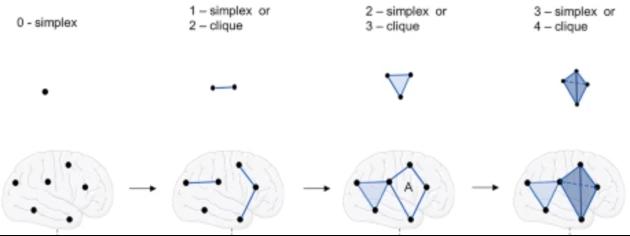

Deep learning is a subset of machine learning with a design inspired by the structure and function of neural networks in the human brain. Deep learning models are composed of multiple layers of artificial neural networks, each providing a different interpretation of the data it feeds on. The layers are hierarchical, with each successive layer using the output from the previous layer as its input.

These models are trained by looking at many pre-designed example datasets to trigger automated learning: the more training the model receives, the better it becomes at identifying patterns in both structured and unstructured data. Signals are passed from neuron to neuron, initially being generated by simple yes-or-no choices, where signals with a positive value continue through the network and signals with a negative value are inhibitive (Hayes, Brian. 2014). This is why deep learning is suitable for fields such as image and speech recognition and natural language processing.
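The layered, yes-or-no signalling described above can be sketched in a few lines of plain Python. This is only an illustration: the function names and the hand-picked weights (which happen to compute XOR) are hypothetical and are not taken from any model in this report.

```python
def step(x):
    """Threshold activation: the neuron fires (1.0) only for a positive signal."""
    return 1.0 if x > 0 else 0.0


def layer_forward(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of its inputs, then thresholds it."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(step(total))
    return outputs


def network_forward(inputs, layers):
    """Feed the output of each layer into the next one, in order."""
    signal = inputs
    for weights, biases in layers:
        signal = layer_forward(signal, weights, biases)
    return signal


# Hypothetical hand-picked weights: the hidden layer computes OR and AND,
# and the output neuron fires for "OR but not AND", i.e. XOR.
XOR_LAYERS = [
    ([[1.0, 1.0], [1.0, 1.0]], [-0.5, -1.5]),
    ([[1.0, -1.0]], [-0.5]),
]

for pair in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(pair, network_forward(pair, XOR_LAYERS))
```

In a trained model the weights would be learned from data rather than chosen by hand, and smooth activations would normally replace the hard threshold so that gradients can be computed.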

The key differences between ML and deep learning are: representation of data, architecture and model complexity, data requirements, training and computational resources and interpretability (Janiesch, C. et al. 2021).

Traditional ML requires data to be handcrafted by domain experts before it can be interpreted, whereas deep learning uses raw data directly to extract information. This gives deep learning models the ability to automatically learn hierarchical representations of data and so capture complex patterns more effectively. Leading on from this, deep neural networks consist of multiple layers of interconnected artificial neurons that can contain millions, or even billions, of parameters, making deep learning more capable than comparatively simple machine learning architectures at understanding highly intricate and abstract representations of data.

The inherently less complex nature of ML results in ML models needing minimal data description and computation resources to work effectively. Limited labelled data and small datasets are sufficient for ML models to operate compared to the data-hungry temperament of deep learning that requires large amounts of descriptive data to achieve optimal performance.

Consequently, ML models can be trained on standard hardware as they are generally less computationally intensive than deep learning models, which are expensive, time-consuming and often require high-performance processing units.

It can be difficult to understand the predictions drawn by deep learning models because the high complexity of deep neural networks makes it hard to trace the decision-making process back to specific steps. ML models are more easily understood and often more interpretable than their deep learning counterparts.

According to Tim Berners-Lee (Berners-Lee, Tim 2023), web accessibility is the degree to which the Internet and its tasks are put at the disposal of all types of users whatever their requirements, locations, languages, physical and mental aptitudes, etc. Web accessibility is the inclusive practice of allowing all users to have equal access to information and functionality on web pages by removing barriers that hinder interaction with, or access to, sites by people with disabilities. There are several standards and guidelines to ensure diverse webpage interaction. These include the Web Content Accessibility Guidelines (WCAG), developed around the four principles of perceivable, operable, understandable and robust, and Accessible Rich Internet Applications (ARIA), a set of special attributes that are optional for HTML, JavaScript and similar technologies (Simeone, Jonathan. 2007). This report will be focusing on client-side applications of deep learning to assist digital environments in image and video recognition, speech synthesis, natural language processing (NLP), predictive text and personalisation.

The discussion portion of this report is divided into four topic sections: Image and Video Recognition, Speech Recognition and Synthesis, Predictive Text and Personalisation. Each section will highlight current issues within its area of web accessibility and show how applying deep learning techniques could provide a solution.

Images and videos are required to have alternative text (alt text) so that audible descriptions can help users who struggle visually. This text is a brief description of the information represented by the image or video, and it is crucial for individuals who have a visual impairment or rely on screen readers to interpret visual elements on a webpage. Alt text is written by hand by the website developer in text fields that add an alt attribute to the HTML tag used to display the webpage image. It can be automatically generated for video descriptions once it has been manually inputted, but it is often wildly inaccurate (Raju Shrestha 2022). Writing alt text is a time-consuming process which is often overlooked by content creators.

Deep learning techniques offer a promising solution for automatically generating accurate and descriptive alt text by training models, on large datasets of labelled images and videos, to recognise objects, scenes and actions depicted in visual content (Waldrop, M. Mitchell. 2019). For example, an initial training dataset of labelled images could contain common objects, such as “dog” or “car”, to introduce familiarity of a generalised ‘body’ to the model. Further details such as colour and size could be added to future datasets once the model is able to skilfully analyse and recognise basic image concepts.

The descriptions produced by these models would reduce the burden on human content creators and provide a real-time narrative of visuals that will change how the viewer interprets the webpage content.
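To make the role of the alt attribute concrete, the following minimal sketch uses Python's standard `html.parser` module to list the images on a page that carry no alt attribute at all. The class name, function name and sample markup are hypothetical; an empty `alt=""` is deliberately not flagged, since that is the accepted way to mark a purely decorative image.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if "alt" not in attributes:
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    """Return the sources of images that a screen reader could not describe."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical page fragment: one described image, one without alt text.
SAMPLE = '<p><img src="dog.png" alt="A brown dog"><img src="car.png"></p>'
print(images_missing_alt(SAMPLE))
```

A deep learning captioning model, as discussed in this section, would then be a candidate for proposing text for exactly the images this check reports.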

Speech recognition allows a user to interact with a web page through voice commands. The transcription and interpretation of spoken language for individuals with physical or speech impairments, or those who simply prefer voice input, makes websites easily navigable without having to use alternative hardware like a keyboard and mouse.

Deep learning models can acquire knowledge on the nuances and patterns of spoken language through iterative training on large speech datasets to enable accurate recognition of speech. These datasets could range in attributes such as accent, age and language for the broadest coverage of natural language. The models would convert spoken word into text or commands to manoeuvre the website, eliminating the need for manual typing for a more natural means of interaction. This benefits all users as they can still access information on the webpage when multi-tasking or when visual attention is limited. It also promotes inclusivity by accommodating users with varying levels of literacy or language proficiency.

Speech synthesis (or Text-To-Speech (TTS)) converts written text into spoken word using deep learning-based techniques. TTS models learn intonations and linguistic nuances of human speech to produce high-quality synthetic voices that closely resemble natural speech. The natural and intelligible speech generated benefits users with visual impairments or learning disabilities.

A focus on refining deep learning-based TTS models could help overcome challenges such as maintaining prosody, inflection and personalisation, tailoring the voice output to the user’s preferences to ensure they receive a high-quality auditory experience. Moreover, deep learning techniques could be used to develop more compact and efficient TTS models, allowing speech synthesis to be integrated directly into websites, easing reliance on external services and reducing latency. These improvements would make webpage information accessible to a wider user base and, overall, more inclusive.

Predictive text offers valuable assistance to users as they type by making suggestions or automating responses, a service that is particularly valuable to individuals with cognitive impairments or motor disabilities who find typing challenging. The system uses algorithms to analyse the context of the input text, including factors such as words already entered and syntax, to generate relevant and likely predictions (Tlamelo Makati. 2022). Probability distributions are then applied to a language model, powered by machine learning algorithms, to assign the likelihood of each potential suggestion. Some text systems can continuously adapt based on user interaction by learning from user selections to generate suggestions that better align with individual preferences.

The key areas in which deep learning could improve predictive text are the accuracy of predictions and personalisation for individual writing styles and preferences. Extensive training of a deep learning-based predictive text language model on diverse datasets would better capture the intricacies of language and result in more accurate predictions. An improved predictive text system would enhance typing efficiency and overall user experience. The integration of multi-modal input (combining text with audio or visual cues) will further improve accuracy and richness of predictive output. Some deep learning language models, such as OpenAI’s GPT-4, are starting to incorporate this feature. The incorporation of user-specific data, such as typing patterns, preferred phrases, or commonly used vocabulary, into a deep learning-based predictive text language model will adapt the suggestions to the individual, and the model’s ability to continuously learn ensures it remains up-to-date and adapts to new linguistic trends. These personalised models will align better with individual writing styles.
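The idea of assigning a likelihood to each candidate suggestion can be illustrated with a very small bigram model, sketched here in Python. It is a simple counting model rather than a deep learning one, and the corpus and function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words have followed it in the training text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def suggest(model, previous_word, k=3):
    """Return up to k (word, probability) suggestions for the next word."""
    followers = model.get(previous_word.lower())
    if not followers:
        return []
    total = sum(followers.values())
    return [(word, count / total) for word, count in followers.most_common(k)]


def learn_from_selection(model, previous_word, chosen_word):
    """Adapt to the user: a chosen suggestion becomes more likely next time."""
    model[previous_word.lower()][chosen_word.lower()] += 1


# Hypothetical training text.
CORPUS = "the cat sat on the mat and the cat slept"
model = train_bigram_model(CORPUS)
print(suggest(model, "the"))
```

A deep learning language model plays the same role as `suggest`, but conditions on much more context than the single preceding word used here.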

The defining factor of web accessibility is how easily a website can adapt and learn from the individual – using deep learning to improve user personalisation in areas such as interfaces, text size and colour schemes may be useful for those with cognitive disabilities who may find standard website layouts challenging.

Creating user profiles is one way deep learning is currently used to tailor the web experience. By understanding individual users’ characteristics, interests and accessibility needs, websites can adapt their content layout and functionality to provide an optimised experience (Abou-Zahra, Shadi et al. 2018). The deep learning algorithms identify patterns, correlations and preferences by training on user data to refine recommendations. Dynamically adjusting an interface based on user feedback and behaviour is an addition that deep learning models could perform. Adaptive interfaces can change aspects like font size or colour contrast based on an individual’s disability or cognitive capabilities. This will ensure all users can access and interact with content more efficiently. Similarly, these models could suggest content recommendations that suit user interests and accessibility requirements by leveraging user preferences, browsing history and contextual information. The more data available to the deep learning models, the better the understanding they can develop to refine web personalisation.

The inclusion of multimodal forms of input (as mentioned in the commentary above) will enable more comprehensive personalisation by considering various accessibility dimensions simultaneously. For example, combining image recognition with user preferences can lead to personalised image descriptions that cater to individual accessibility requirements.

Deep learning-based models can evidently enhance user experience on the web through their capacity to discern patterns that improve personalisation and alternative media or speech recognition. A major drawback of applying deep learning in this way is user privacy (Pellegrino, Massimo, et al. 2019). There are concerns related to data collection, storage, anonymisation, consent, security, and user control. Protecting user privacy through measures such as informed consent, data minimisation, secure storage (i.e. using encryption), and transparency is crucial. Addressing these concerns ensures responsible use of data and fosters user trust in deep learning systems for web accessibility. Overall, continued research and innovation in deep learning techniques within the areas mentioned in this report can responsibly pave the way for more effective accessibility approaches in the future.

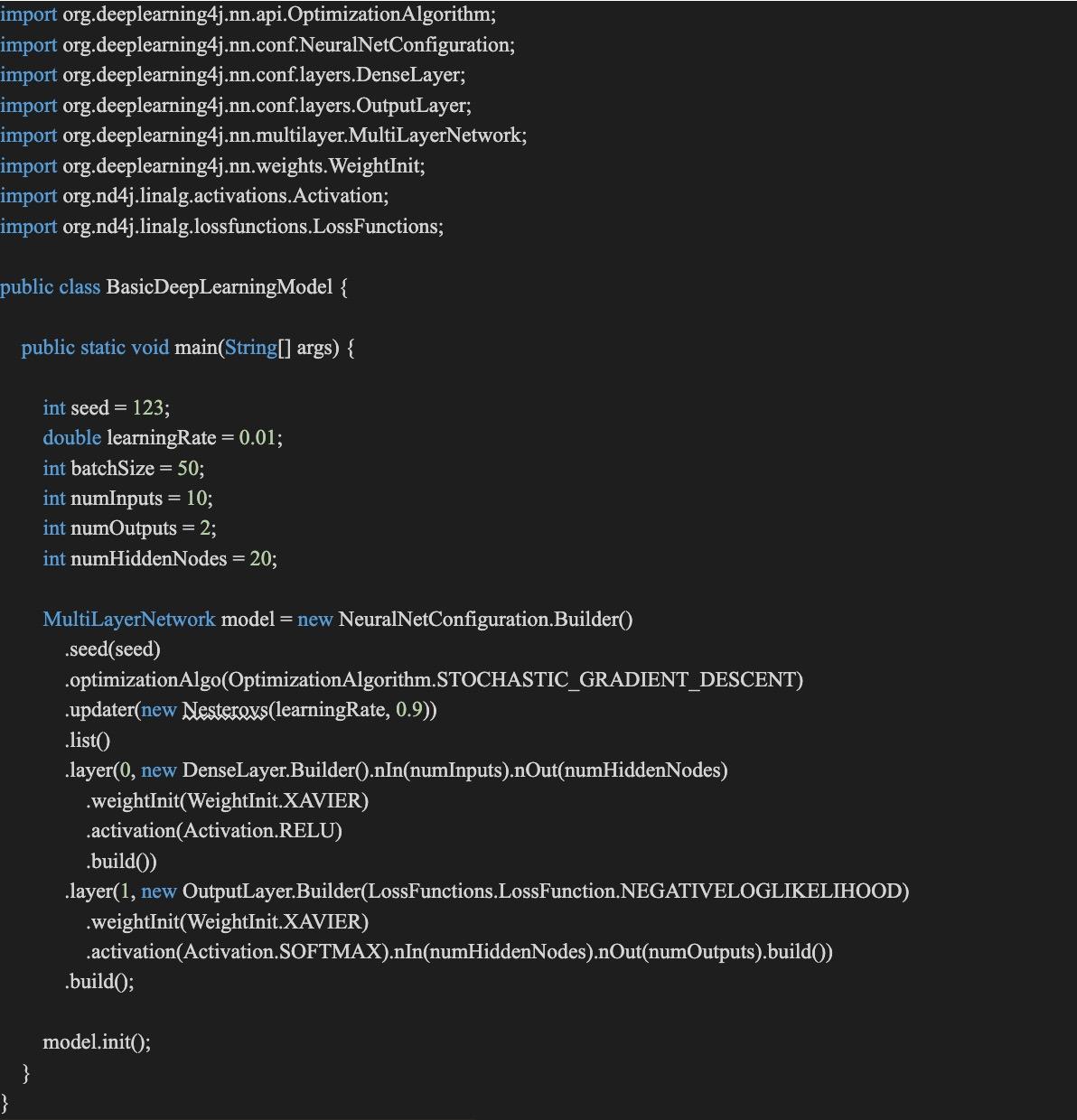

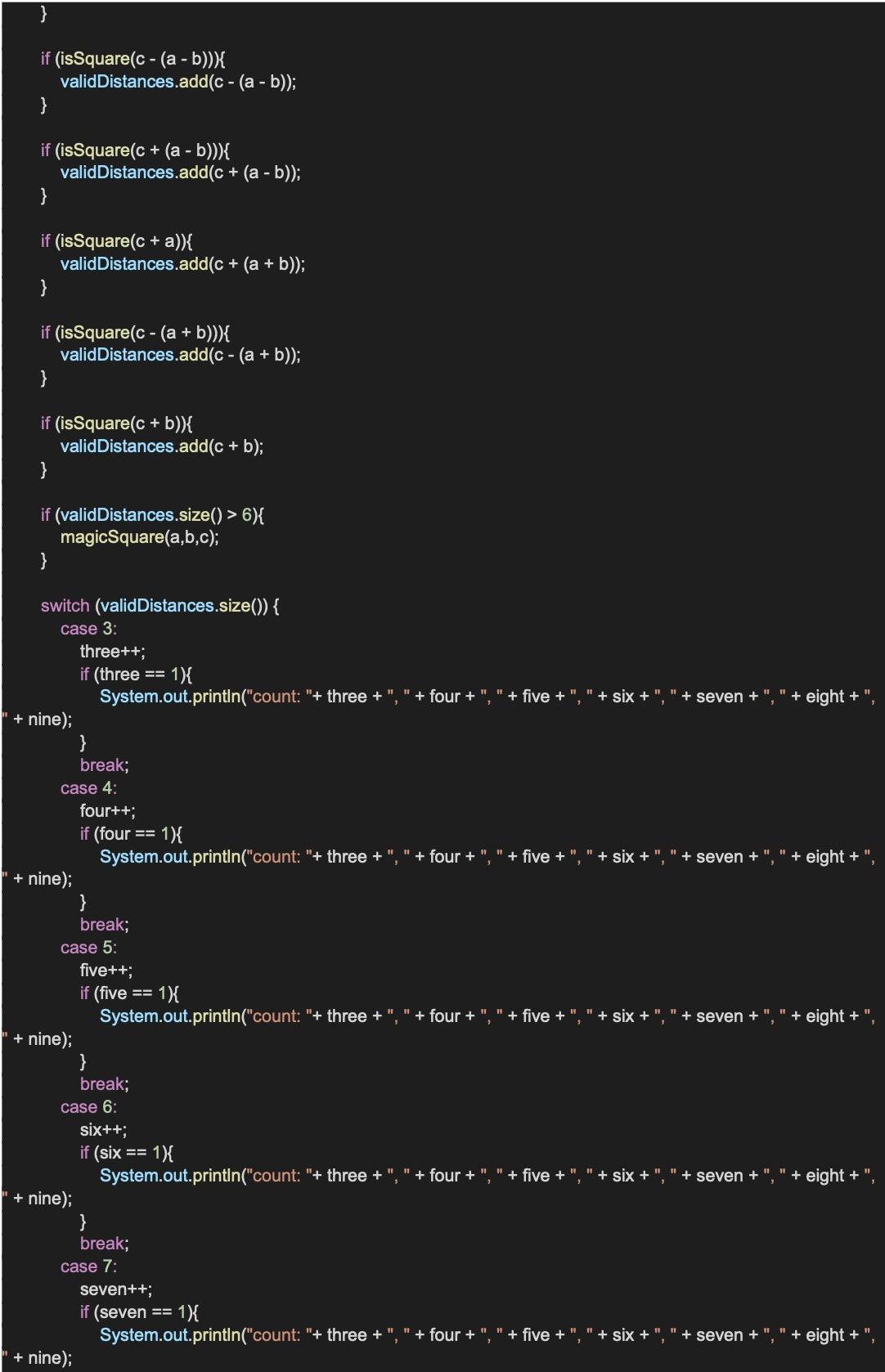

This section contains analysis of a simplified deep learning model written using the Java library Deeplearning4j (DL4J). The model requires a suitable library that can handle complex mathematical operations and allow for scalability, and a considerable amount of customisation is needed to tailor this model for web accessibility. The example is a MultiLayerNetwork model with one hidden layer.

The program begins by setting the initial configuration parameters for the model, including the seed for the random number generator (seed), the learning rate for the optimisation algorithm (learningRate), the batch size (batchSize), the number of inputs to the model (numInputs), the number of outputs from the model (numOutputs), and the number of nodes in the hidden layer (numHiddenNodes).

A NeuralNetConfiguration builder follows the design pattern of method chaining and is used to set up the network configuration. The resulting configuration is used to instantiate a MultiLayerNetwork object.

The configuration starts with the seed value for replicable results and the optimisation algorithm set to Stochastic Gradient Descent (SGD). SGD is a commonly used optimisation algorithm in neural networks which iteratively adjusts the model parameters to minimise the loss function.

Figure 2: DL4J MultiLayerNetwork model
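For readers without a DL4J environment, the same ingredients (a seed, a learning rate, one hidden layer and SGD updates) can be sketched in plain Python. This is not the DL4J code analysed above: the class and function names are hypothetical, the sigmoid activation and squared-error loss are assumptions, the batch size is fixed at one example per update, and the OR truth table is a stand-in dataset.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


class TinyNetwork:
    """One hidden layer with sigmoid activations (an assumed choice)."""

    def __init__(self, seed, num_inputs, num_hidden_nodes, num_outputs):
        rng = random.Random(seed)  # fixed seed gives replicable starting weights
        self.w1 = [[rng.uniform(-1, 1) for _ in range(num_inputs)]
                   for _ in range(num_hidden_nodes)]
        self.b1 = [0.0] * num_hidden_nodes
        self.w2 = [[rng.uniform(-1, 1) for _ in range(num_hidden_nodes)]
                   for _ in range(num_outputs)]
        self.b2 = [0.0] * num_outputs

    def forward(self, x):
        hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
                  for row, b in zip(self.w1, self.b1)]
        output = [sigmoid(sum(w * h for w, h in zip(row, hidden)) + b)
                  for row, b in zip(self.w2, self.b2)]
        return hidden, output

    def sgd_step(self, x, target, learning_rate):
        """One SGD update on a single example, for the loss 0.5 * (output - target)^2."""
        hidden, output = self.forward(x)
        delta_out = [(o - t) * o * (1 - o) for o, t in zip(output, target)]
        # Hidden-layer errors are computed before the output weights are changed.
        delta_hidden = [h * (1 - h) * sum(d * self.w2[k][j] for k, d in enumerate(delta_out))
                        for j, h in enumerate(hidden)]
        for k, d in enumerate(delta_out):
            for j, h in enumerate(hidden):
                self.w2[k][j] -= learning_rate * d * h
            self.b2[k] -= learning_rate * d
        for j, d in enumerate(delta_hidden):
            for i, xi in enumerate(x):
                self.w1[j][i] -= learning_rate * d * xi
            self.b1[j] -= learning_rate * d


def mean_squared_error(network, data):
    total = 0.0
    for x, target in data:
        _, output = network.forward(x)
        total += sum((o - t) ** 2 for o, t in zip(output, target))
    return total / len(data)


def train(network, data, learning_rate, epochs, seed):
    """Visit the examples in a freshly shuffled order every epoch."""
    rng = random.Random(seed)
    for _ in range(epochs):
        examples = list(data)
        rng.shuffle(examples)
        for x, target in examples:
            network.sgd_step(x, target, learning_rate)


# Hypothetical stand-in dataset: the logical OR of two inputs.
OR_DATA = [([0.0, 0.0], [0.0]), ([0.0, 1.0], [1.0]),
           ([1.0, 0.0], [1.0]), ([1.0, 1.0], [1.0])]

network = TinyNetwork(seed=123, num_inputs=2, num_hidden_nodes=4, num_outputs=1)
loss_before = mean_squared_error(network, OR_DATA)
train(network, OR_DATA, learning_rate=0.5, epochs=2000, seed=123)
loss_after = mean_squared_error(network, OR_DATA)
print(loss_before, loss_after)
```

The parameter names mirror those listed in the text (seed, learning rate, number of inputs, outputs and hidden nodes); a library such as DL4J handles the same bookkeeping with optimised matrix operations.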

Dean, Jeffrey. “A Golden Decade of Deep Learning: Computing Systems & Applications.” Daedalus 151, no. 2 (2022): 58–74. https://www.jstor.org/stable/48662026.

Scharre, Paul, Michael C. Horowitz, and Robert O. Work. “What Is Artificial Intelligence?” ARTIFICIAL INTELLIGENCE: What Every Policymaker Needs to Know. Center for a New American Security, 2018. 4–9. http://www.jstor.org/stable/resrep20447.5.

Hayes, Brian. “Computing Science: Delving into Deep Learning.” American Scientist 102, no. 3 (2014): 186–89. http://www.jstor.org/stable/43707183.

Janiesch, C., Zschech, P. & Heinrich, K. Machine learning and deep learning. Electron Markets 31, 685–695 (2021). https://doi.org/10.1007/s12525-021-00475-2

Tim Berners-Lee, 2023. W3.org. https://www.w3.org/mission/accessibility/

Simeone, Jonathan. “Website Accessibility and Persons with Disabilities.” Mental and Physical Disability Law Reporter 31, no. 4 (2007): 507–11. http://www.jstor.org/stable/20787031.

Raju Shrestha. 2022. A Neural Network Model and Framework for an Automatic Evaluation of Image Descriptions based on NCAM Image Accessibility Guidelines. In Proceedings of the 2021 4th Artificial Intelligence and Cloud Computing Conference (AICCC '21). Association for Computing Machinery, New York, NY, USA, 68–73. https://doi.org/10.1145/3508259.3508269

Waldrop, M. Mitchell. “What Are the Limits of Deep Learning?” Proceedings of the National Academy of Sciences of the United States of America 116, no. 4 (2019): 1074–77. https://www.jstor.org/stable/26580207.

Tlamelo Makati. 2022. Machine learning for accessible web navigation. In Proceedings of the 19th International Web for All Conference (W4A '22). Association for Computing Machinery, New York, NY, USA, Article 23, 1–3. https://doi.org/10.1145/3493612.3520463

Abou-Zahra, Shadi, Judy Brewer, and Michael Cooper. “Artificial Intelligence (AI) for Web Accessibility: Is Conformance Evaluation a Way Forward?” Web4All 2018, 23-25, 4.1.2 Personalisation, April, 2018, Lyon, France, ACM, 2018.

Pellegrino, Massimo, and Richard Kelly. “Intelligent Machines and the Growing Importance of Ethics.” Edited by Andrea Gilli. The Brain and the Processor: Unpacking the Challenges of Human-Machine Interaction. NATO Defense College, 2019. http://www.jstor.org/stable/resrep19966.11.

Warner, Brad, and Manavendra Misra. “Understanding Neural Networks as Statistical Tools.” The American Statistician 50, no. 4 (1996): 284–93. https://doi.org/10.2307/2684922.

Reviewed and Edited by S. Sandanatavan


ABSTRACT: Since the turn of the century, advancements in computational biology have allowed us to understand biological processes and relationships through the utilisation of big data, mathematical modelling, theoretical methods, and computational simulation techniques. Therefore, the computational complexity of biological processes can be studied to better understand their limitations and performance. Some biological processes exhibit complexities that relate to the infamous P versus NP problem, as seen in protein folding. This report delves into the significance of comprehending the inherent complexity of protein folding, considering experimental evidence and the persistently unsolved protein folding problem. Levinthal’s paradox and the energy landscape theory are explored to better understand how the physicochemical properties of amino acids constrain potential solutions to the protein folding problem. Potential computational solutions to the protein folding problem are discussed with reference to heuristics and hypercomputation. We conclude that understanding the mechanics of protein folding could hold the key to resolving other important computational problems. Furthermore, we argue that considering the computational complexity of a biological process can help us to better understand how it operates.

biology deals with the detailed residue-by-residue transfer of sequential information. It states that such information cannot be transferred back from protein to either protein or nucleic acid.”.

The dogma gives us a framework for understanding the relationship between DNA and proteins. A gene can be expressed to manufacture its corresponding protein via the processes of transcription and translation (Clancy et al, 2008).

Computational complexity refers to the resources required to solve a computational problem (Cirillo et al, 2018). This report delves into time complexity and more specifically asymptotic time complexity, which details the behaviour of complexity as the input size of an algorithm increases. Computational complexity is not only important in creating faster algorithms but also for understanding the limits of computation. For example, a problem may be solvable in a finite amount of time and yet take so long to complete that the solution is impractical. Such problems are said to be intractable, signifying that any attempt at resolution demands too many resources to be useful (National Science Foundation, 2016).

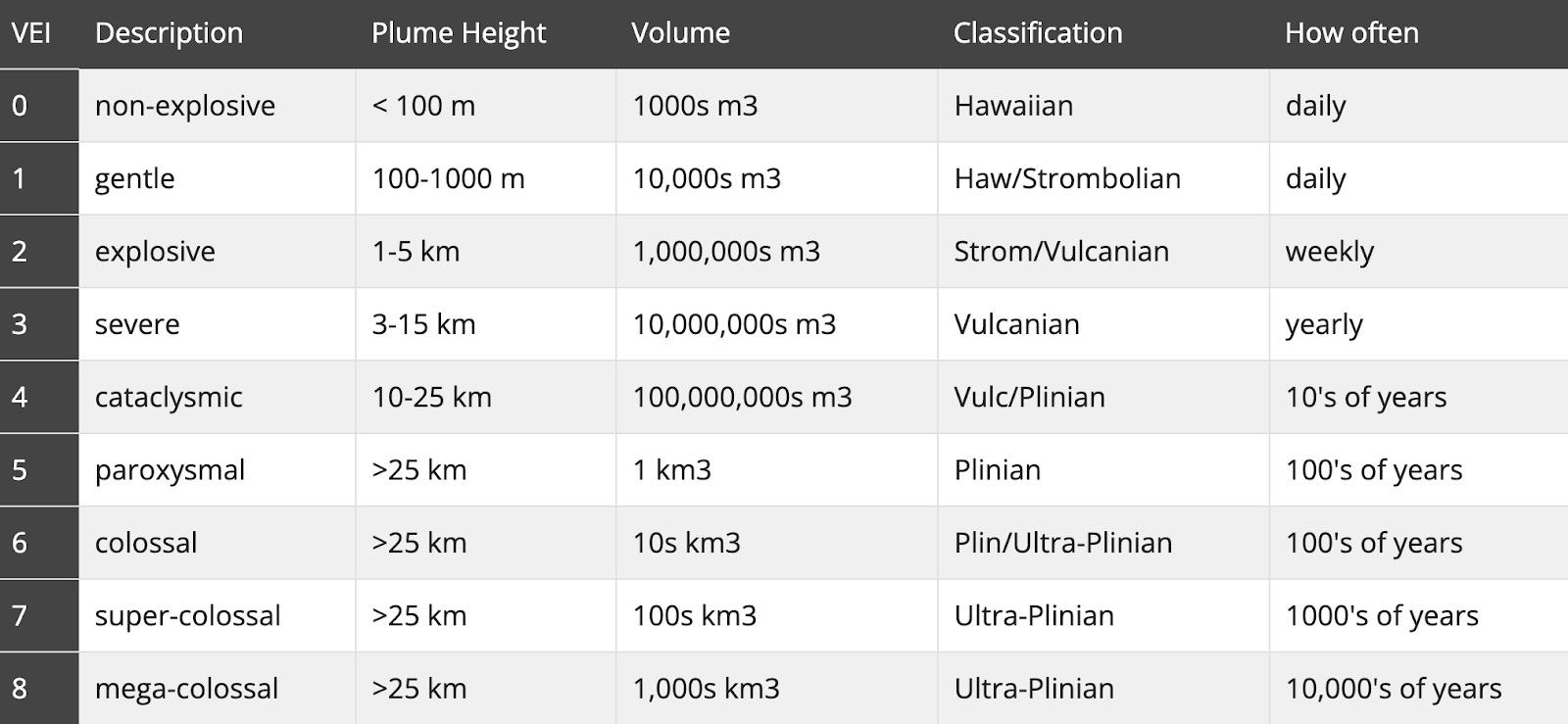

Intractability underscores the absence of an efficient solution, where efficiency is defined as a polynomial-time algorithmic solution. This closely relates to the complexity classes of polynomial (P) and nondeterministic polynomial (NP) time. P problems can be solved in polynomial time. A problem is classed as NP if its solution can be guessed and then verified in polynomial time, where the production of the ‘guess’ is nondeterministic. Whilst these two complexity classes seem distinctly different, the P versus NP problem asks whether every NP problem can be solved in polynomial time (Cook, 2001).
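The gap between checking a guess and finding one can be illustrated with subset sum, a standard NP-complete problem that is not discussed in this report and is used here only as an example. In the Python sketch below (function names hypothetical), `verify` runs in time proportional to the size of the guess, whereas `exhaustive_search` may have to try all 2^n subsets.

```python
from itertools import combinations


def verify(numbers, target, certificate):
    """Polynomial-time check that the guessed positions are valid and sum to target."""
    positions_valid = (len(set(certificate)) == len(certificate)
                       and all(0 <= i < len(numbers) for i in certificate))
    return positions_valid and sum(numbers[i] for i in certificate) == target


def exhaustive_search(numbers, target):
    """Deterministic fallback: try every subset, smallest first (up to 2^n of them)."""
    for size in range(len(numbers) + 1):
        for candidate in combinations(range(len(numbers)), size):
            if verify(numbers, target, candidate):
                return list(candidate)
    return None


numbers = [3, 34, 4, 12, 5, 2]
print(verify(numbers, 9, [2, 4]))      # checking a guess is quick
print(exhaustive_search(numbers, 9))   # finding one may require trying every subset
```

P versus NP asks whether, for every problem whose guesses can be checked this quickly, the search itself can also be done in polynomial time.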

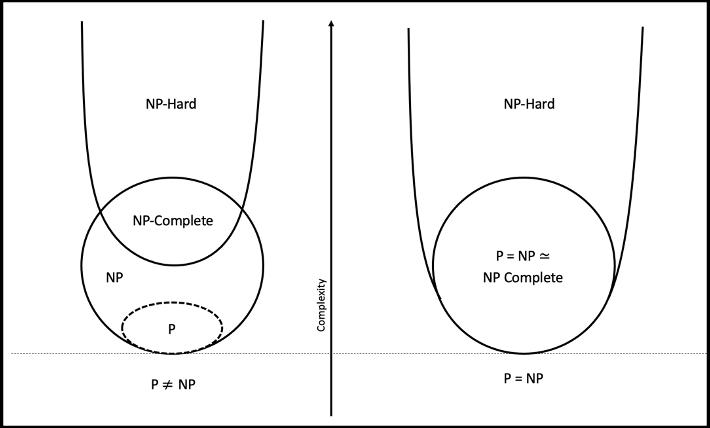

Figure 1 shows the relationship between P and NP and further introduces the complexity classes of NP-hard and NP-complete for the cases P = NP and P ≠ NP. A problem is NP-hard if every problem in NP can be translated into it in polynomial time, so that an algorithm to solve it could be used to solve any other NP problem. A problem is NP-complete if it is not only in NP but also NP-hard. Stewart (2000) explains: “Specifically, an NP problem is said to be NP-complete if the existence of a polynomial time solution for that problem implies that all NP problems have a polynomial time solution.”

Figure 1: Euler diagram for P, NP, NP-Complete and NP-Hard problems shown under the assumptions that P ≠ NP (left) and P = NP (right).


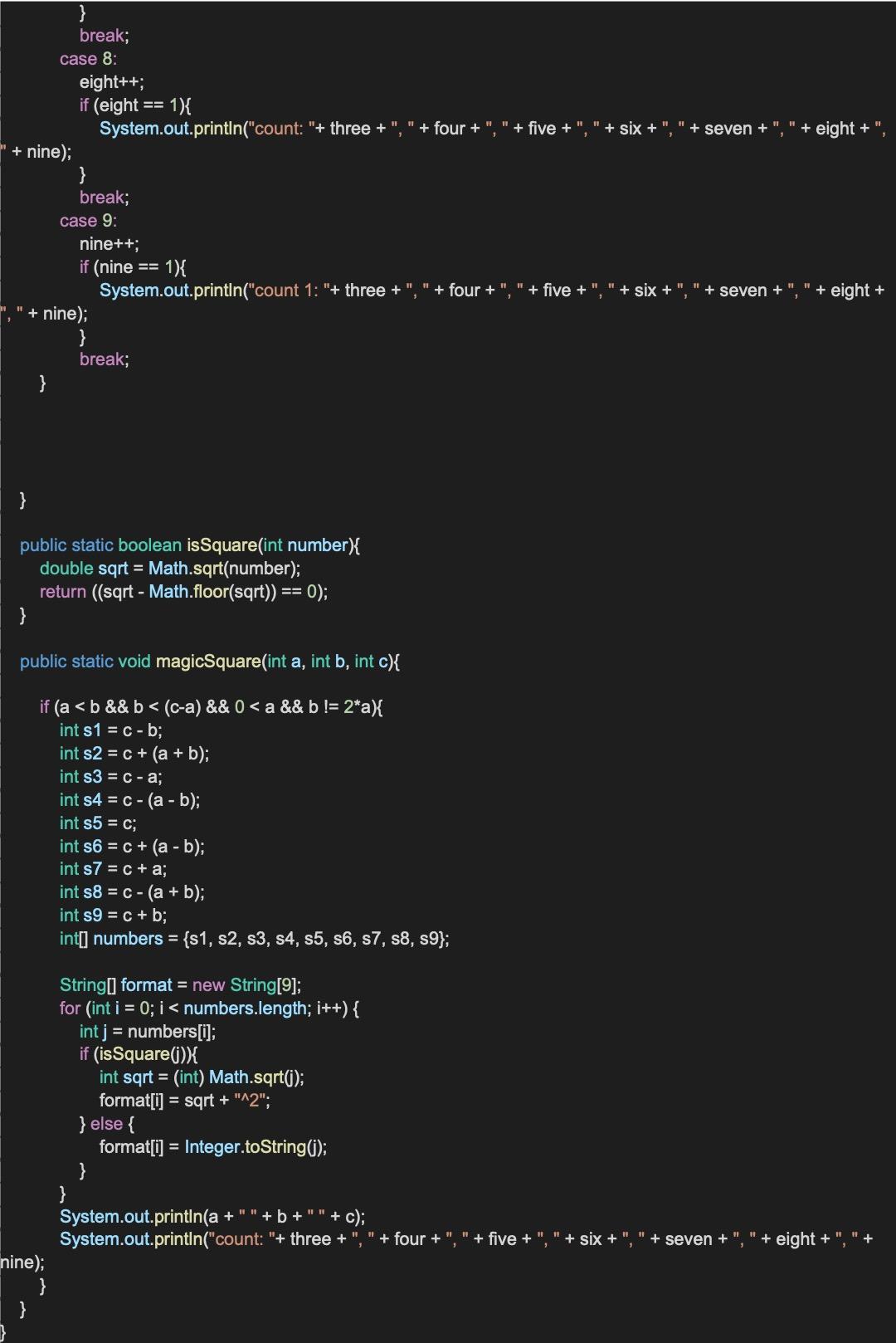

A polypeptide chain becomes a biologically functional protein upon assuming its intricate three-dimensional configuration (Cheriyedath, 2019). The question of “how a protein’s amino acid sequence dictates its three-dimensional atomic structure” (Dill et al, 2008) is known as the Protein Folding Problem (PFP). The PFP lies at the intersection of biology, physics and computer science and its potential solution could have a profound effect on each discipline. This report will explore the PFP from a computational perspective, considering the potential effects of the problem’s computational complexity against the backdrop of the P versus NP problem.

In 2021 DeepMind released AlphaFold, a ‘computational approach capable of predicting protein structures to near experimental accuracy in a majority of cases’ (Jumper et al, 2021). However, it is important to note the distinction between the PFP and protein prediction. Predicting the three-dimensional structure that a protein folds into from its amino acid sequence does not teach us anything about how proteins fold (Moore et al, 2022).

Within the scope of this report, we will only focus on the PFP, with only relevant reference to protein prediction.

In a broader sense, studying complexity allows us to better understand fundamental aspects of science with profound implications for our understanding of the world.

Modern science has embraced reductionism to explain phenomena by reducing complex data into simple terms. However, scientists may be reaching the limits of this approach (Mazzocchi, 2008). Mazzocchi (2008) insists that it is the emergent properties of biological systems, arising from both their components and external factors, that hinder a reductionist approach. For example, when proteins fold, it is not only the order of their polypeptide chain but also factors such as cell acidity and temperature that affect their three-dimensional structure.

Biological systems exhibit nonlinear behaviour (De Haan, 2006) that is consistent with the dynamic responses of biological processes. Furthermore, the introduction of non-linearity within biological systems presents a counterpoint to deterministic explanations. Here non-determinism disrupts a reductionist’s idea of reducing complex phenomena into simple terms. Computational biology can be used to study complex and non-linear biological processes and discover their underlying algorithmic complexity.

P versus NP takes its place amongst the Millennium Prize Problems (Clay Mathematics Institute, 2022) as one of the most well-known and complicated unsolved mathematical problems. Whilst no proof currently establishes whether P = NP, there is an expectation amongst computer scientists and mathematicians that P ≠ NP (Gasarch, 2002).

Whilst the PFP is of immense biological significance, its computational complexity should also garner interest. The complexity of the PFP is known to be NP-hard, meaning it is “conditionally intractable” (Fraenkel, 1993). However, it is the question of whether protein folding is NP-complete that could revolutionise our whole understanding of computation. If NP-complete, the PFP, unlike other NP-complete problems, appears to have an effective solution in nature. If the biological process of protein folding is NP-complete, and can be solved in polynomial time, then P would equal NP.

There is debate about the NP-complete nature of the PFP. Berger and Leighton (1998) provide evidence that the PFP is NP-complete for the hydrophobic-hydrophilic model on the cubic lattice. Conversely, Guyeux et al. (2013) (in their work focusing on models used for protein prediction which model the PFP) state that ‘the SAW requirement considered when proving NP-completeness is different from the SAW requirement used in various prediction programs, and that they are different from the real biological requirement.’ Therefore, we will explore the implications of the PFP being both NP-complete and not NP-complete.

Furthermore, as no proof exists for P versus NP, no assumption about its outcome will be made. Instead, this report aims to detail the importance of definitively understanding the complexity of the PFP and highlight that understanding the PFP would shed light on the mechanisms behind NP problems. Therefore, we will explore the biological process of protein folding and comment on the problem in relation to P versus NP.

One hypothesis for finding the native folded state for a protein is that it could be achieved by a random search along all possible configurations (Zwanzig et al. 1992). This thought experiment is known as Levinthal’s Paradox (Levinthal, 1969) and shows the intractable nature of the PFP (Martínez, 2014).

The notion that exhaustive search contributes to the intricacies of protein folding aligns with an intuitive understanding that P is not equal to NP. This concept suggests that a polynomial solution is improbable for addressing an NP-complete problem. In an example provided by Srinivas and Bagchi (2003), we can understand how an implementation of a non-polynomial solution (in this case exhaustive search) is simply not viable. Consider that for a string of 101 amino acids (where only conformations are considered) there exist $3^{100}$ different possible states (Srinivas & Bagchi, 2003). If we extrapolate from Levinthal's hypothesis that proteins fold via random and exhaustive search, even with a protein's ability to explore $10^{13}$ configurations per second, the process of folding would extend to $10^{27}$ years (Srinivas & Bagchi, 2003). Therefore, in Levinthal’s paradox, finding the native folded state of a protein is bounded by the sheer combinatorial complexity of its components (Tompa & Rose, 2011).
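The arithmetic behind this estimate can be checked directly. The short Python sketch below assumes, consistently with the figures quoted above, that 101 amino acids are joined by 100 bonds with three possible states each; the function name and the 365.25-day year are illustrative choices.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def exhaustive_folding_years(num_amino_acids=101, states_per_bond=3,
                             configs_per_second=1e13):
    """Time needed to visit every configuration once, in years."""
    num_bonds = num_amino_acids - 1               # assumption: one bond between neighbours
    total_states = states_per_bond ** num_bonds   # 3^100 for the default values
    seconds = total_states / configs_per_second
    return total_states, seconds / SECONDS_PER_YEAR


total_states, years = exhaustive_folding_years()
print(f"{float(total_states):.2e} states, about {years:.1e} years")
```

The result is of the order of $10^{27}$ years, in line with the figure quoted from Srinivas and Bagchi (2003), and vastly longer than the age of the universe.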

The paradox raises questions about the likelihood of a rigid algorithmic pathway being used in the protein folding process In response to Levinthal’s paradox small energetic biases towards the native state of the protein can be used to decrease folding rates to a realistic time frame (Martínez, 2014). Whilst this is certainly not the definitive way proteins fold, the existence of such a solution to Levinthal’s paradox shows that the PFP has a solution with better time complexity than exhaustive search. Furthermore, this shows that protein folding is not simply using exhaustive search on ‘supercomputer’ style biological hardware
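The effect of a bias towards the native state can be illustrated with a toy simulation. The Python sketch below is not the model from the cited papers: it assumes a deliberately extreme bias in which any bond that reaches its native state (state 0) stays there, and it uses a hypothetical chain of only six bonds so that the unbiased search finishes quickly.

```python
import random


def unbiased_search_steps(num_bonds, states_per_bond, rng):
    """Draw whole configurations at random until every bond is native (state 0)."""
    steps = 0
    while True:
        steps += 1
        if all(rng.randrange(states_per_bond) == 0 for _ in range(num_bonds)):
            return steps


def biased_search_steps(num_bonds, states_per_bond, rng):
    """Re-draw only the bonds that are still wrong; native bonds are kept (extreme bias)."""
    bonds = [rng.randrange(states_per_bond) for _ in range(num_bonds)]
    steps = 1
    while any(state != 0 for state in bonds):
        bonds = [state if state == 0 else rng.randrange(states_per_bond)
                 for state in bonds]
        steps += 1
    return steps


def mean_steps(search, trials=200, num_bonds=6, states_per_bond=3, seed=0):
    """Average number of steps to reach the native state over repeated runs."""
    rng = random.Random(seed)
    return sum(search(num_bonds, states_per_bond, rng) for _ in range(trials)) / trials


print("unbiased:", mean_steps(unbiased_search_steps))
print("biased:  ", mean_steps(biased_search_steps))
```

The unbiased search needs on the order of $3^6$ attempts on average, while the biased search needs only a handful of steps, which conveys why even a modest preference for native contacts changes the scaling so dramatically.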

Therefore, protein folding could take place using a rigid algorithmic pathway which we could assume would take place in polynomial time. Such a solution would prove that P = NP if the PFP is NP-complete. However, these ideas are presented at a high level, and the experimentally observed function of protein folding must be considered in tandem. As stated in the biological context of this review, a reductionist approach has often not reached the desired solution to complex biological problems such as protein folding.

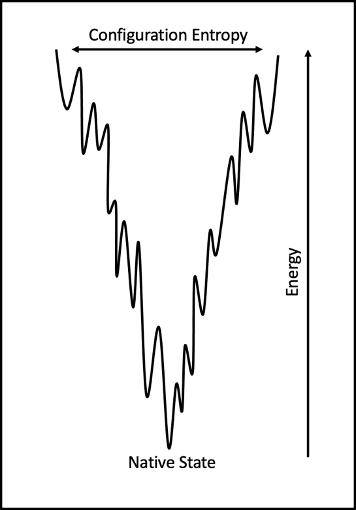

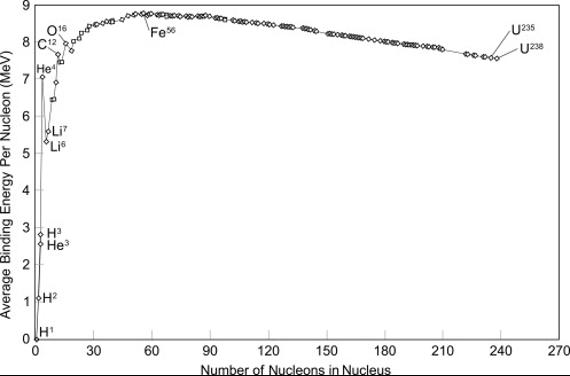

Anfinsen showed that proteins fold into their shape because it is “thermodynamically the most stable in the intracellular environment” (Reynaud, 2010). Furthermore, Reynaud (2010) explains that “the information needed for proteins to fold in their correct minimal energy configuration is coded in the physicochemical properties of their amino acid sequence.” To represent the pathway to the free-energy minimum, a statistical approach can be applied to the energetics of protein conformation, leading to the energy landscape theory (Bryngelson et al., 1995). Figure 2 shows a 2-dimensional view of a protein folding funnel. Unfolded configurations occupy the top of the funnel-like energy landscape. Proteins reach their free-energy minimum by using routes determined by the physicochemical properties of the protein's amino acid sequence (Schug & Onuchic, 2010).

The most fascinating quality of the energy landscape theory is that there is likely no single unique folding pathway; instead, Bryngelson et al. (1995) propose that protein folding is a complex self-organising process that occurs through various routes down a folding funnel. Whilst this theory does not constrain a defined pathway model, it is widely interpreted that proteins fold through independent pathways (Englander & Mayne, 2014). The idea of independent pathways seems to conflict with any idea that, if NP-complete, protein folding takes place via a rigid algorithmic path.

The energy landscape theory further polarises the implications of the potential computational complexities of the PFP. Answering the question of whether these multiple pathways exist within the protein folding process would give a better understanding of its complexity. Firstly, we consider the implications of the energy landscape theory if the PFP is NP-complete. If environmental factors such as temperature determine the pathway, folding could still be described by a rigid algorithm where not only the physicochemical properties of the amino acids dictate the path, but also the environmental factors. Alternatively, proteins might undertake folding through distinct routes even within the same environmental conditions. In this case, such a solution might exist well beyond our current understanding of the limits of computational models as the algorithm would contain multiple correct paths with no apparent benefit.

In contrast, if the modelling of the PFP is not NP-complete then there are other possible explanations for how proteins fold. For example, if protein folding has any tolerance for imprecision, then the process might not be able to be represented by a precise analytical model. Instead, protein folding might have a likeness to the concepts of soft computing (neural networks, fuzzy logic etc.) (Gupta & Kulkarni, 2013).

Figure 2: 2-dimensional view of a protein folding funnel.

In computer science, a heuristic methodology is one used to produce a good enough solution in a shorter time (Datta, 2022). Heuristics are favoured in creating near-optimal solutions to NP-hard optimisation problems and stay in line with the assumption that P ≠ NP (Zerovnik, 2015). Protein folding is NP-hard and therefore the process could be computed via a heuristic style solution which would consider an independent pathway model. This would, however, introduce the possibility that there is an inherent error rate in the way proteins fold.

NP-complete problems such as the Travelling Salesman Problem are also often approximated by heuristic methods (Gao, 2020), and researchers often look for parallels with the natural world to form new insights. For example, researchers have demonstrated that “bumblebees make a trade-off between minimising travel distance and prioritising high-reward sites when developing multi-location routes” (Lihoreau et al., 2011), leading to optimisation approaches that consider the behaviours of bumblebees, such as swarm intelligence (Sahin, 2022). Whilst studies like this (Lihoreau et al., 2011) provide no insight into how these routes might be optimised, it is interesting to note the parallels between the natural world and mathematics. Through this example we see that heuristic methods exist in other aspects of nature. Therefore, it is not inconceivable that through evolution, the protein folding mechanism could be an efficient heuristic solution.
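The trade-off between exhaustive search and a heuristic can be made concrete on a small Travelling Salesman instance. The sketch below is illustrative only: it uses the nearest-neighbour rule as one simple example of a heuristic (not the bee-inspired methods cited above), and the random city coordinates and function names are our own assumptions.

```python
import itertools
import math
import random


def tour_length(points, order):
    """Length of the closed tour visiting points in the given order."""
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % len(order)]])
        for i in range(len(order))
    )


def exhaustive_tour(points):
    """Optimal tour found by checking every ordering: (n-1)! candidates."""
    rest = range(1, len(points))
    return min(
        ((0,) + perm for perm in itertools.permutations(rest)),
        key=lambda order: tour_length(points, order),
    )


def nearest_neighbour_tour(points):
    """Greedy heuristic: always move to the closest unvisited city."""
    unvisited = set(range(1, len(points)))
    order = [0]
    while unvisited:
        here = points[order[-1]]
        nxt = min(unvisited, key=lambda j: math.dist(here, points[j]))
        unvisited.remove(nxt)
        order.append(nxt)
    return tuple(order)


rng = random.Random(1)
cities = [(rng.random(), rng.random()) for _ in range(8)]  # hypothetical cities
best = tour_length(cities, exhaustive_tour(cities))
greedy = tour_length(cities, nearest_neighbour_tour(cities))
print(f"optimal: {best:.3f}, nearest neighbour: {greedy:.3f}")
```

The heuristic needs on the order of n² distance comparisons rather than (n-1)! tours, at the cost of a route that may be longer than the optimum. This is the sense in which a heuristic folding mechanism would imply an inherent error rate.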

Whilst this explanation provides no insight into a solution to P versus NP, the discovery of a potentially heuristic protein folding mechanism would also have substantial consequences. This solution could be applied to other NP problems and might provide near-perfect solutions to problems such as the travelling salesman problem, satisfiability problems and graph covering problems (Hosch, 2022).

The study of complexity theory can be viewed in relation to the study of computability theory. The latter theory concerns the capabilities and limitations of computation and the concept of Effective Procedures (Enderton, 2011). Our definitions of NP and P rely on deterministic and nondeterministic Turing Machines (TMs) and therefore fall within the scope of the Church-Turing thesis, from which the notion of computability is formalised. This thesis can be explained as “the set of functions on the natural numbers that can be defined by algorithms is precisely the set of functions definable in one of a number of equivalent models of computation” (Daintith & Wright, 2008).

We will consider the form of this thesis in which every effective computation can be done by a TM (Copeland, 2000).

NP problems are computable and can be solved in exponential time. However, if P ≠ NP then NP-hard problems can be viewed as intractable as any solution takes too many resources to be useful (as seen earlier in Levinthal’s paradox) (National Science Foundation, 2016). In this case we reach a limit of effective computation and must once again ask how it can be possible that proteins fold if the PFP is NP-complete.

If it is proved that we cannot effectively compute NP problems, then it is possible that these problems could be computed in a way that surpasses the Turing model (Wells, 2004). The concept of computation that exceeds Turing’s Model is known as hypercomputation or Super-Turing computation (Wood, 2019). Therefore, it is possible that protein folding is a process completed via hypercomputation. The conjecture that hypercomputation could be responsible for biological processes is debated by both philosophers and computer scientists (Arkoudas, 2008), often within the context of theories of consciousness (Bringsjord & Arkoudas, 2004).

classification as NP-hard could perhaps become trivial, as the

The unifying quality of both the problem P versus NP and the PFP is the breadth of consequences from their potential solutions. Respectively, these problems divide opinion across mathematics and biology, yet their solutions seem astonishingly intertwined. Any potential solution to how proteins fold has the potential to revolutionise our understanding of P versus NP. Therefore, viewing the PFP in conjunction with computational complexity allows us to better understand the limitations and potential outcomes of the problem. Perhaps, through a lens of computational theory and not just computational assistance, we have the power to find the solutions to our most important mathematical problems in the biological processes that surround us.

1. Cobb, M. (2017). ‘60 years ago, Francis Crick changed the logic of biology’. PLoS biology, vol. 15, no. 9, pp. 1-8. Available at: https://doi.org/10.1371/journal.pbio.2003243 (Accessed: 21 December 2022).

2. Crick, F. (1970). ‘Central dogma of molecular biology’. Nature, vol. 227, no. 5258, pp. 561–3. Available at: https://doi.org/10.1038/227561a0 (Accessed: 21 December 2022).

3. Clancy, S. & Brown, W. (2008). ‘Translation: DNA to mRNA to Protein’. Nature Education, vol. 1, no. 1, pp. 101. Available at: https://www.nature.com/scitable/topicpage/translation-dna-to-mrna-to-protein-393/ (Accessed: 21 December 2022).

4. Cirillo, D., Ponce de Leon, M., & Valencia, A. (2018). ‘Algorithmic complexity in computational biology: basics, challenges and limitations’. [Preprint]. Available at: https://doi.org/10.48550/arXiv.1811.07312 (Accessed: 22 December 2022).

5. National Science Foundation (2016). ‘Tackling intractable computing problems’, National Science Foundation [online], 29 June. Available at: https://beta.nsf.gov/news/tackling-intractable-computing-problems (Accessed: 22 December 2022).

6. Cook, S. (2001). ‘The P Versus NP Problem’, Clay Mathematics Institute. Available at: https://www.claymath.org/sites/default/files/pvsnp.pdf (Accessed: 22 December 2022).

7. Stewart, I. (2000). ‘Million-Dollar Minesweeper’, Scientific American [online], 1 October. Available at: https://www.scientificamerican.com/article/million-dollar-minesweeper/ (Accessed: 22 December 2022).

8. Cheriyedath, S. (2019). ‘Protein Folding’, News-Medical [online], 26 February. Available at: https://www.news-medical.net/life-sciences/Protein-Folding.aspx (Accessed: 21 December 2022).

9. Dill, K. A., Ozkan, S. B., Shell, M. S., & Weikl, T. R. (2008). ‘The protein folding problem’. Annual review of biophysics, vol. 37, pp. 289–316. Available at: https://doi.org/10.1146/annurev.biophys.37.092707 (Accessed: 21 December 2022).

10. Jumper, J., Evans, R., Pritzel, A. et al. (2021). ‘Highly accurate protein structure prediction with AlphaFold’. Nature, vol. 596, pp. 583–589. Available at: https://doi.org/10.1038/s41586-021-03819-2 (Accessed: 22 December 2022).

11. Moore, P., Hendrickson, W., Henderson, R., & Brunger, A. (2022). ‘The protein-folding problem: Not yet solved’. Science, vol. 375, no. 6580, pp. 507. doi: 10.1126/science.abn9422 (Accessed: 22 December 2022).

12. Schneider, M., & Somers, M. (2006). ‘Organizations as complex adaptive systems: Implications of Complexity Theory for leadership research’. The Leadership Quarterly, vol. 17, no. 4, pp. 351–365. Available at: https://doi.org/10.1016/j.leaqua.2006.04.006 (Accessed: 22 December 2022).

13. Mazzocchi, F. (2008). ‘Complexity in biology. Exceeding the limits of reductionism and determinism using complexity theory’. EMBO reports, vol. 9, no. 1, pp. 10–14. Available at: https://doi.org/10.1038/sj.embor.7401147 (Accessed: 22 December 2022).

14. Haan, J. (2006). ‘How emergence arises’. Ecol. Compl., vol. 3, pp. 293–301. Available at: https://www.researchgate.net/publication/222935027_How_Emergence_Arises#:~:text=10.1016/j.ecocom.2007.02.003 (Accessed: 22 December 2022).

15. Huerta, M., Downing, G., Haseltine, F., Seto, B., & Liu, Y. (2000). ‘NIH working definition of bioinformatics and computational biology’, Biomedical Information Science and Technology Initiative. Available from: http://www.binf.gmu.edu/jafri/math6390bioinformatics/workingdef.pdf (PDF). (Accessed: 22 December 2022).

16. Clay Mathematics Institute (2022). ‘Millennium Problems’, Clay Mathematics Institute [online], 7 December. Available at: https://www.claymath.org/millennium-problems (Accessed: 22 December 2022).

17. Gasarch, W. (2002). ‘The P=?NP Poll’. SIGACT News, vol. 33, no. 2, pp. 34–47. Available at: https://dl.acm.org/doi/10.1145/564585.564599 (Accessed: 22 December 2022).

18. Fraenkel, S. (1993). ‘Complexity of protein folding’. Bulletin of Mathematical Biology, vol. 55, no. 6, pp. 1199-1210. Available at: https://doi.org/10.1016/S0092-8240(05)80170-3 (Accessed: 22 December 2022).

19. Guyeux, C., Côté, N., Bahi, J.M., & Bienia, W. (2014). ‘Is protein folding problem really a NP-complete one? First investigations’. J Bioinform Comput Biol. Available at: DOI: 10.1142/S0219720013500170 (Accessed: 22 December 2022).

20. Berger, B., & Leighton, T. (1998). ‘Protein Folding in the Hydrophobic-Hydrophilic (HP) Model is NP-Complete’. Journal of Computational Biology. Available at: http://doi.org/10.1089/cmb.1998.5.27 (Accessed: 22 December 2022).

21. Zwanzig, R., Szabo, A., & Bagchi, B. (1992). ‘Levinthal's paradox’. Proceedings of the National Academy of Sciences of the United States of America, vol. 89, no. 1, pp. 20–22. Available at: https://doi.org/10.1073/pnas.89.1.20 (Accessed: 22 December 2022).

22. Levinthal, C. (1969). ‘How to Fold Graciously’, Mossbauer Spectroscopy in Biological Systems Proceedings, vol. 67, no. 41, pp. 22-26. Available at: https://faculty.cc.gatech.edu/~turk/bio_sim/articles/proteins_levinthal_1969.pdf (Accessed: 22 December 2022).

23. Martínez, L. (2014). ‘Introducing the Levinthal’s Protein Folding Paradox and Its Solution’, Journal of Chemical Education, vol. 91, no. 11, pp. 1918-1923. Available at: DOI: 10.1021/ed300302h (Accessed: 22 December 2022).

24. Srinivas, G., & Bagchi, B. (2003). ‘Study of the dynamics of protein folding through minimalistic models’, Theor Chem Acc, vol. 109, pp. 8–21. Available at: https://doi.org/10.1007/s00214-002-0390-6 (Accessed: 22 December 2022).

25. Tompa, P., & Rose, G. (2011). ‘The Levinthal paradox of the interactome’, Protein Sci, no. 12, pp. 2074-9. Available at: DOI: 10.1002/pro.747 (Accessed: 22 December 2022).

26. Reynaud, E. (2010). ‘Protein Misfolding and Degenerative Diseases’. Nature Education, vol. 3, no. 9, pp. 28. Available at: https://www.nature.com/scitable/topicpage/protein-misfolding-and-degenerative-diseases-14434929/ (Accessed: 22 December 2022).

27. Bryngelson, J., Onuchic, J., Socci, N., & Wolynes, P. (1995). ‘Funnels, pathways, and the energy landscape of protein folding: A synthesis’. Proteins, vol. 22, no. 3, pp. 167-195. Available at: https://doi.org/10.1002/prot.340210302 (Accessed: 22 December 2022).

28. Schug, A., & Onuchic, J. (2010). ‘From protein folding to protein function and biomolecular binding by energy landscape theory’, Current Opinion in Pharmacology, vol. 10, no. 6, pp. 709-714. Available at: https://doi.org/10.1016/j.coph.2010.09.012 (Accessed: 22 December 2022).

29. Englander, S., & Mayne, L. (2014). ‘The nature of protein folding pathways’. Proceedings of the National Academy of Sciences, vol. 111, no. 45, pp. 15873-15880. Available at: https://doi.org/10.1073/pnas.1411798111 (Accessed: 22 December 2022).

30. Gupta, P., & Kulkarni, N. (2013). ‘An introduction of soft computing approach over hard computing’. International Journal of Latest Trends in Engineering and Technology, vol. 3, no. 1. Available at: https://www.ijltet.org/pdfviewer.php?id=894&j_id=2505 (Accessed: 22 December 2022).

31. Datta, S. (2022). ‘Greedy Vs Heuristic Algorithm’, Baeldung [online], 8 November. Available at: https://www.baeldung.com/cs/greedy-vs-heuristic-algorithm (Accessed: 21 December 2022).

32. Zerovnik, J. (2015). ‘Heuristics for NP-hard optimization problems - simpler is better!?’, Logistics & Sustainable Transport, vol. 6, pp. 1-10. DOI: 10.1515/jlst-2015-0006 (Accessed: 22 December 2022).

33. Gao, Y. (2020). ‘Heuristic Algorithms for the Traveling Salesman’. Medium [online], 14 February. Available at: https://medium.com/opex-analytics/heuristic-algorithms-for-the-traveling-salesman-problem-6a53d8143584 (Accessed: 22 December 2022).

34. Lihoreau, M., Chittka, L., & Raine, N. (2011). ‘Trade-off between travel distance and prioritization of high-reward sites in traplining bumblebees’, Functional Ecology, vol. 25, no. 6, pp. 1284-1292. Available at: https://doi.org/10.1111/j.1365-2435.2011.01881.x (Accessed: 22 December 2022).

35. Sahin, M. (2022). ‘Solving TSP by using combinatorial Bees algorithm with nearest neighbor method’. Neural Computing & Applications. Available at: https://doi.org/10.1007/s00521-022-07816-y (Accessed: 22 December 2022).

36. Hosch, W. (2022). ‘P versus NP problem’. Britannica [online], 24 November. Available at: https://www.britannica.com/science/P-versus-NP-problem (Accessed: 22 December 2022).

37. Enderton, H. (2011). ‘Computability Theory’, Academic Press, pp. 1-27. Available at: https://doi.org/10.1016/B978-0-12-384958-8.00001-6 (Accessed: 22 December 2022).

38. Daintith, J., & Wright, E. (2008). ‘A Dictionary of Computing (6 ed.)’, Oxford: Oxford University Press.

39. Copeland, J. (2000). ‘The Church-Turing Thesis’. AlanTuring.net [online], June. Available at: http://www.alanturing.net/turing_archive/pages/Reference%20Articles/The%20Turing-Church%20Thesis.html (Accessed: 22 December 2022).

40. Wells, B. (2004). ‘Hypercomputation by definition’. Theoretical Computer Science, vol. 317, no. 1–3, pp. 191-207. Available at: https://doi.org/10.1016/j.tcs.2003.12.011 (Accessed: 22 December 2022).

41. Wood, L. (2019). ‘Super Turing Computation Versus Quantum Computation’, Forbes [online], 25 February. Available at: https://www.forbes.com/sites/cognitiveworld/2019/02/25/super-turing-computation-versus-quantum-computation/?sh=53c81ea049e2 (Accessed: 22 December 2022).

42. Arkoudas, K. (2008). ‘Computation, hypercomputation, and physical science’, Journal of Applied Logic, vol. 6, no. 4, pp. 461-475. Available at: https://doi.org/10.1016/j.jal.2008.09.007 (Accessed: 22 December 2022).

43. Bringsjord, S., & Arkoudas, K. (2004). ‘The modal argument for hypercomputing minds’. Theoretical Computer Science, vol. 317, no. 1-3, pp. 167-190. Available at: DOI: 10.1016/j.tcs.2003.12.010 (Accessed: 22 December 2022).

Reviewed and edited by

T. Lawson

ABSTRACT: This report provides an overview of machine learning (ML) methods for causal inference, with an emphasis on their applications in the social sciences. Much of the machine learning literature centres on using and developing methods to make predictions. However, there is growing recognition that an understanding of causal relationships is of crucial importance in many disciplines. Much of the research in both social and natural sciences revolves around cause-and-effect questions, which have remained far beyond the reach of conventional ML approaches. In this report, we first discuss the fundamental challenges inherent in causal inference and examine how diverse ML approaches can aid in addressing them. We draw on examples primarily from the realm of social sciences, predominantly economics, which is a discipline renowned for its emphasis on causal questions. Finally, we conclude by discussing potential directions for future research and the intricacies of causal discovery.

Over the past few decades, we have witnessed remarkable advancements in machine intelligence, leading to enhanced performance of these systems across an expanding range of tasks. These breakthroughs are often attributed to the paradigm shift in the field of artificial intelligence (AI), moving from procedural approaches to empirical approaches based on statistical learning. As a result, contemporary machine learning algorithms primarily operate in an associational mode (Pearl, 2019). However, many questions are inherently causal in nature, and findings based solely on associations cannot be readily interpreted in terms of cause and effect (Liu, et al. 2021). Despite the considerable progress in the fields of machine learning and artificial intelligence, addressing causal questions remains beyond the purview of conventional machine learning approaches. The absence of suitable tools to address causal inquiries has also contributed to the slow adoption of ML approaches in many fields outside computer science, for example, social sciences (Leist, et al. 2022).

While (supervised) machine learning revolves around the problem of prediction, much of the research in social sciences builds on theories describing causal relationships and seeks to test them empirically. Social scientists strive to obtain unbiased estimates of causal effects based on some theoretical relationship, rather than placing emphasis just on minimising prediction errors, as is central in ML research. ML models observe the associations between features and outcomes to achieve accurate predictions, but discerning whether these predictions reflect spurious relationships remains elusive. Consequently, the question arises as to whether any data mining algorithm can extract genuine causal relationships or, in other words, whether the answers to causal questions lie within the data itself (Pearl, 2018). These questions have garnered interest from Judea Pearl, a computer scientist and Turing Award recipient for his contributions to both AI and causal inference. In this report, we explore how far we have come in answering these questions. Before delving into that discussion, we introduce the fundamental problems of causal inference and present some popular ML approaches.

The purpose of this report is to offer a high-level review of the literature at the intersection between ML and causal inference, primarily with a focus on its relevance to the fields of social sciences. Notably, it was social scientists who spearheaded rigorous exploration of causal questions, and economists who played a pioneering role in both developing and adopting ML tools for causal inference (Kreif, and DiazOrdaz, 2019). To commence our exploration, we leverage the influential work of renowned econometricians Guido Imbens and Susan Athey, which serves as a valuable starting point. Additionally, we draw upon a multitude of extensive studies that delve into the intersection between ML and causal inference, providing comprehensive insights into the major challenges inherent in causal inference and the accompanying ML tools. Our examination encompasses a systematic search and review of relevant academic resources pertaining to these tools. Acknowledging the rapid advancements in causal inference frameworks and tools within the field of computer science and their potential implications for other disciplines, we also investigate the contributions of Judea Pearl, the pioneer of causal reasoning within computer science. Additionally, we briefly discuss the most recent developments in approaches for causal discovery.

The body of this report is structured as follows. First, we introduce the fundamental problem of causal inference and the practical issues related to estimating causal effects. Subsequently, we present an overview of the most commonly employed ML approaches for causal inference tasks. To conclude, we discuss the advantages and drawbacks of ML methods, and outline potential avenues for future research. It is important to note that this report does not aim to provide an exhaustive review of the literature on the utilisation of ML for causal inference; we discuss only selected approaches. For example, we do not cover in depth approaches such as causal reinforcement learning. This is primarily due to both the fragmented nature and substantial growth of this literature, which would necessitate a more extensive review for a comprehensive account. Moreover, our review primarily focuses on applied work in the domain of social sciences, as opposed to theoretical results.

For decades, under the influence of the founders of modern statistics, Francis Galton and Karl Pearson, causal questions had remained outside the realm of scientific investigation (Hernan, et al. 2019; Pearl, 2018). It was social scientists (and geneticists), particularly econometricians and epidemiologists, rather than statisticians, who were the pioneers of causal reasoning in science (Pearl and Mackenzie, 2018). After the series of influential papers by Rubin (1974, 1976, 1978, 1980) – who introduced the first formal mathematical framework for causal inference – econometricians, in particular, have made substantial contributions to the development of tools for causal inference (Kreif, and DiazOrdaz, 2019). Program evaluation and experimental economics have emerged as thriving subfields of economics, with a primary focus on estimating causal effects of interventions using randomised experiments and innovative quasi-experimental designs (Athey and Imbens, 2017). Notably, Joshua Angrist and Guido Imbens were honoured with a Nobel Prize in Economic Sciences for their instrumental role in popularising experimental techniques in economics. The allure of experimental methods lies in their ability to overcome key problems in causal inference, which we illustrate in the following example.

Consider the scenario in which our objective is to estimate the effect of attending university on future earnings. Since we cannot observe both counterfactual outcomes for each individual, we could attempt to estimate the causal effect of attending university by obtaining a sample of individuals and dividing them into two groups: those who attended a university and those who did not. By calculating the average earnings for each group and taking their difference, we might infer the premium associated with attending university, or so it seems. It is plausible to assume that the students who decided to attend university differ in various ways from those who chose not to: perhaps they are smarter, more industrious, or have more favourable socio-economic backgrounds. These attributes also make them more likely to earn higher wages in the future, regardless of whether they attended university. These characteristics are known as confounders, which means they simultaneously affect both the treatment (attending university) and the outcome (earnings). In the absence of further assumptions, the presence of confounders represents a challenge in isolating causal effects (Kreif, and DiazOrdaz, 2019).
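A small simulation makes the resulting bias visible. The sketch below uses entirely made-up numbers: a single unobserved "ability" variable raises both the probability of attending university and earnings, and the true effect of attendance is fixed at 5 units. All variable names and coefficients are illustrative assumptions rather than estimates from any study.

```python
import math
import random


def simulate(n=20_000, true_effect=5.0, seed=0):
    """Return (naive difference in mean earnings, true effect) for simulated data."""
    rng = random.Random(seed)
    treated, untreated = [], []
    for _ in range(n):
        ability = rng.gauss(0, 1)                    # confounder
        p_attend = 1 / (1 + math.exp(-2 * ability))  # abler people attend more often
        attends = rng.random() < p_attend
        earnings = 30 + true_effect * attends + 10 * ability + rng.gauss(0, 5)
        (treated if attends else untreated).append(earnings)
    naive = sum(treated) / len(treated) - sum(untreated) / len(untreated)
    return naive, true_effect


naive, truth = simulate()
print(f"naive difference: {naive:.1f}, true effect: {truth:.1f}")
```

The naive comparison of group means substantially overstates the premium, because part of the earnings gap is due to ability rather than to attendance.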

The example above highlights several notorious problems of causal inference. Firstly, for each individual, both potential outcomes cannot be simultaneously observed, which makes the identification of causal effects difficult based solely on observed data (Hill, et al. 2019; Kreif, and DiazOrdaz, 2019). In observational studies, we could simply compare outcomes between treated and untreated groups. However, as demonstrated earlier, such a simple comparison would yield a biased estimate of the causal effect due to confounding. While randomised experiments are considered the gold standard, they are often unethical or impractical. In the absence of randomisation, identifying causal effects requires strong assumptions to be satisfied, with the unconfoundedness assumption being of utmost importance. This assumption posits that, conditional on the observed covariates, treatment assignment is independent of the potential outcomes (Leist, et al. 2022; Kreif, and DiazOrdaz, 2019).

The plausibility of this assumption cannot be tested using observational data and necessitates careful reasoning and understanding of the relationships between variables based on subject-matter expertise (Balzer and Petersen, 2021). These relationships can be conveniently represented through so-called directed acyclic graphs (DAGs), popularised by Pearl.

ML has proved to be highly valuable in addressing many of the challenges outlined above, including confounding adjustment and counterfactual prediction. In the following section, we provide an overview of some commonly employed ML tools for addressing causal inference tasks.

A large body of work on causal inference using ML relies on tree-based approaches. Tree-based methods, also known as classification and regression trees, can be used for the classification of binary or multicategory outcomes, or with continuous outcomes, respectively (Kreif, and DiazOrdaz, 2019). In its most basic form, a regression tree considers which covariate should be split, and at which level to ensure the sum of squared residuals is minimised (Athey and Imbens, 2017). A major challenge with tree methods is their sensitivity to the initial split leading to high variance, which is also the reason why single trees are rarely used in practice (Kreif, and DiazOrdaz, 2019). Thus, they are usually implemented as an ensemble of trees in various variants, some of which we present here.
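The basic splitting step can be sketched in a few lines. This is a minimal illustration of a single split chosen by minimising the sum of squared residuals, not a full tree implementation; the tiny dataset and the function names are hypothetical.

```python
def sum_squared_residuals(values):
    """Sum of squared deviations from the mean (0.0 for an empty list)."""
    if not values:
        return 0.0
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def best_split(rows, outcomes):
    """Search every covariate and threshold for the split with the lowest total SSR.

    rows is a list of covariate tuples; outcomes is the matching list of y values.
    Returns (covariate index, threshold, total SSR after the split).
    """
    best = None
    for j in range(len(rows[0])):
        # Exclude the largest value so that the right-hand group is never empty.
        for threshold in sorted({row[j] for row in rows})[:-1]:
            left = [y for row, y in zip(rows, outcomes) if row[j] <= threshold]
            right = [y for row, y in zip(rows, outcomes) if row[j] > threshold]
            ssr = sum_squared_residuals(left) + sum_squared_residuals(right)
            if best is None or ssr < best[2]:
                best = (j, threshold, ssr)
    return best


# Hypothetical data: covariates are (years of schooling, age); outcome is earnings.
rows = [(10, 25), (11, 40), (12, 31), (16, 29), (17, 45), (18, 33)]
earnings = [20.0, 22.0, 21.0, 35.0, 37.0, 36.0]
print(best_split(rows, earnings))
```

On this toy data the search selects schooling (covariate 0) with a threshold of 12, since that split separates the low and high earners almost perfectly. A full tree repeats the same search recursively within each resulting group.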

Researchers and policy makers are often interested in how treatment effects vary across different population subgroups. Building on our previous example, in addition to determining the average effect of attending a university on future earnings, we may want to examine which specific types of students benefit the most from university attendance. To address such a question, Wager and Athey (2018) introduced a modified version of regression trees known as causal trees, specifically designed for causal settings. Unlike traditional regression trees optimised for prediction, in the case of causal trees, the splitting rule optimises for finding splits associated with treatment effect heterogeneity (Athey and Imbens, 2019). This method generates treatment effect estimates and a confidence interval for each subgroup. However, a disadvantage of causal trees is that the tree structure itself is somewhat arbitrary, potentially resulting in different estimated partitions when using different subsamples of data. A further extension of this approach is the causal forest developed by Wager and Athey (2015), which provides smooth estimates of treatment effects. Causal forests involve generating a large number of causal trees and averaging the treatment effects across them, resulting in a smooth function of treatment effects, given some covariate.

An interesting tree-based approach is Bayesian Additive Regression Trees (BART), which can be distinguished from other tree-based methods by its underlying probability model. BART was introduced by Chipman et al. (2010) and has since gained popularity due to several known advantages: it is simple to use, yields excellent performance, provides uncertainty measures, and handles many predictors and missing data (Hill, 2011). As a Bayesian method, BART includes a set of priors for the structure and the leaf parameters, which aim to provide regularisation to prevent a single tree from dominating the total fit (Kreif, and DiazOrdaz, 2019). BART fits trees iteratively in such a way that each new tree aims to capture the fit left currently unexplained by holding the other trees constant. BART provides a very versatile approach to causal inference: it produces accurate average treatment effect estimates, naturally identifies heterogeneous treatment effects (Hill, 2011), models complex response surfaces, and controls for confounding.

Several noteworthy ML approaches have been developed to adjust for confounding, with propensity score methods standing out as particularly notable (Leist, et al. 2022). The propensity score (PS) is defined as the probability of the treatment assignment as a function of all relevant observed covariates (Rubin, 2010; Abadie and Imbens, 2016). By comparing individuals with similar PS, one can obtain an unbiased estimate of the treatment effect. In randomised experiments, where the treatment assignment is equally likely for everyone, the PS is one half for all individuals, enabling an unbiased treatment effect estimation. In nonexperimental settings, the PS is not directly known, but can be obtained using observed covariates (Rubin, 2010). If it is reasonable to assume that a specific set of observed covariates eliminates confounding, adjusting solely for the propensity score is equivalent to adjusting for the entire set of covariates (Abadie and Imbens, 2016).

PS methods have been widely used to mitigate confounding in the pre-effect estimation step (Blakely, et al. 2020). These methods have demonstrated excellent performance, especially through two key techniques: propensity score matching, and reweighting using the PSs as inverse weights. The propensity score matching estimator constructs the missing potential outcome by pairing it with the closest outcome from the other group based on its propensity score. The average treatment effect is then calculated as the mean difference between these predicted potential outcomes (Abadie and Imbens, 2016). Conversely, inverse weights balance the weighted distributions of covariates between treated and untreated groups (Li, et al. 2018). Despite their growing popularity, one of the limitations of PS methods is their inability to account for unobserved covariates, thus potentially not eliminating the selection bias completely.
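Inverse weighting can be illustrated on simulated data. The sketch below assumes a single observed binary confounder, so the propensity score can be estimated simply as the treated share within each level of that confounder. In practice, the score would typically be estimated with a regression or ML model. The data-generating numbers and function names are hypothetical, and the true effect is fixed at 5.

```python
import random


def simulate(n=50_000, true_effect=5.0, seed=0):
    """Simulated (x, treated, outcome) rows with one binary confounder x."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        x = rng.random() < 0.5                        # e.g. favourable background
        treated = rng.random() < (0.8 if x else 0.2)  # x raises the chance of treatment
        outcome = 30 + true_effect * treated + 10 * x + rng.gauss(0, 5)
        rows.append((x, treated, outcome))
    return rows


def naive_difference(rows):
    """Unadjusted difference in mean outcomes between treated and untreated."""
    t = [y for _, d, y in rows if d]
    c = [y for _, d, y in rows if not d]
    return sum(t) / len(t) - sum(c) / len(c)


def ipw_estimate(rows):
    """Average treatment effect using inverse propensity score weights."""
    # Estimate the propensity score as the treated share within each level of x.
    ps = {}
    for level in (False, True):
        group = [d for x, d, _ in rows if x == level]
        ps[level] = sum(group) / len(group)
    n = len(rows)
    treated_mean = sum(y / ps[x] for x, d, y in rows if d) / n
    control_mean = sum(y / (1 - ps[x]) for x, d, y in rows if not d) / n
    return treated_mean - control_mean


rows = simulate()
print(f"naive: {naive_difference(rows):.2f}, IPW: {ipw_estimate(rows):.2f}")
```

The weighted estimate lands close to the true effect while the naive difference does not. The correction works here only because the confounder is observed, which mirrors the limitation noted above for unobserved covariates.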

ML tools have the potential to substantially improve empirical analysis of cause-effect problems across a diverse range of fields, offering benefits especially in certain settings. In contrast to most empirical research in various disciplines, ML typically focuses on optimising out-of-sample performance (Mullainathan & Spiess, 2017). The demonstrated capability of ML methods to outperform alternative methods holds considerable practical value, yet is often underappreciated outside computer science. Additionally, ML approaches prove particularly valuable when handling large datasets, especially in high-dimensional settings (Mullainathan & Spiess, 2017). These approaches enable structured exploration of relationships between numerous variables and facilitate the construction of optimal predictor combinations (Balzer and Petersen, 2021).

However, ML is not without dangers and challenges. A noteworthy practical challenge lies in selecting the most suitable algorithm for a given problem and implementing it effectively, a process that still largely necessitates domain expertise.

Most research on ML approaches for causal inference reframes cause-and-effect questions as prediction tasks, emphasising confounding adjustment, potential outcomes prediction, and treatment effect heterogeneity estimation (Blakely, et al. 2020). Exploring novel methodologies for causal discovery, which aims to identify causal relationships from observational data without prior knowledge, emerges as an intriguing area for future investigation. While rarely employed in social science, the predominant framework for causal discovery is so-called causal structure learning (Leist, et al. 2022). By leveraging the graphical representation of variable relationships in the form of a directed acyclic graph (DAG) (Pearl, 2009), structure learning approaches aim to acquire such graphs from data. Causal structure learning extends this framework by inferring the directionality of the graph edges. The major limitations of structure learning approaches include reliance on several underlying assumptions (Heinze-Deml, et al. 2018) and the need for more scalable and efficient algorithms. Other methods for causal discovery are being developed, including causal reinforcement learning (e.g. Zhu, et al. 2019), but in most cases efficiency remains an issue.

Numerous influential thinkers, including Pearl (2009, 2019), Deutsch (1997), and Popper (1934), have emphasised the importance of good explanatory models for the scientific understanding of the world. Cause-effect relationships are a fundamental aspect of such models both in natural and social sciences. In contrast, ML and AI research have traditionally focused on prediction tasks, representing a distinct statistical thinking approach. Only recently has there been a surge in development and adoption of ML approaches to cause-effect problems. However, many of these approaches, such as propensity score methods and tree-based approaches, essentially reframe causal inference tasks as prediction problems. Although these approaches have proven to be highly valuable in many settings, they still rely on underlying domain expertise, much like other statistical methods. The true challenge lies in developing systems that can autonomously learn causal relationships solely from data, without relying on pre-existing knowledge. Further research in this area has the potential to revolutionise research not only in social and natural sciences, but also in the field of artificial intelligence itself.

Abadie, A., Diamond, A. and Hainmueller, J. 2010. Synthetic Control Methods for Comparative Case Studies: Estimating the Effect of California’s Tobacco Control Program. Journal of the American Statistical Association, 105:490, 493-505. DOI: 10.1198/jasa.2009.ap08746

Abadie, A., Diamond, A. and Hainmueller, J. 2015. Comparative politics and the synthetic control method. Am. J. Political Sci. 59: 495–510.

Abadie, A. and Imbens, G. 2016. Notes and Comments: Matching on the Estimated Propensity Scores. Econometrica, Vol. 84, No. 2 (March, 2016), 781–807. DOI: 10.3982/ECTA11293

Andrews, R., et al. 2021. A practical guide to causal discovery with cohort data. arXiv:2108.13395 [stat.AP] (30 August 2021).

Athey, S. and Imbens, G. 2017. The State of Applied Econometrics: Causality and Policy Evaluation. Journal of Economic Perspectives, Volume 31, Number 2, Spring 2017, Pages 3–32.

Athey, S. and Imbens, G. 2019. Machine Learning Methods That Economists Should Know About. Annual Review of Economics, Vol. 11:685-725 (Volume publication date August 2019). https://doi.org/10.1146/annurev-economics-080217-053433

Athey, S. and Wager, S. 2021. Policy Learning with Observational Data. Econometrica, Volume 89, Issue 1, p. 133-161. https://doi.org/10.3982/ECTA15732

Balzer, L. B. and Petersen, M. L. 2021. Invited Commentary: Machine Learning in Causal Inference – How Do I Love Thee? Let Me Count the Ways. American Journal of Epidemiology, Volume 190, Issue 8, August 2021, Pages 1483–1487. https://doi.org/10.1093/aje/kwab048

Blakely, T., et al. 2020. Reflection on modern methods: When worlds collide – Prediction, machine learning and causal inference. Int. J. Epidemiol. 49, 2058–2064.

Chipman, H. A., George, E. I. and McCulloch, R. E. 2010. BART: Bayesian additive regression trees. The Annals of Applied Statistics, 266–298. MR2758172. https://doi.org/10.1214/09-AOAS285

Deutsch, D. 1997. The Fabric of Reality. Penguin Books, London. ISBN 987-1-101-55063-2

Fan, J. and Lv, J. 2010. A Selective Overview of Variable Selection in High-Dimensional Feature Space. Statistica Sinica 20 (2010), 101-148.

Hartford, et al. 2017. Deep IV: A Flexible Approach for Counterfactual Prediction. Proceedings of the 34th International Conference on Machine Learning, Sydney, Australia, PMLR 70, 2017.

Heinze-Deml, C., Maathuis, M. H. and Meinshausen, N. 2018. Causal structure learning. Annu. Rev. Stat. Appl. 5, 371–391 (2018).

Hernan, et al. 2019. A Second Chance to Get Causal Inference Right: A Classification of Data Science Tasks. CHANCE. Volume 32, 2019 - Issue 1

Hill, J. L. 2011. Bayesian Nonparametric Modeling for Causal Inference, Journal of Computational and Graphical Statistics, 20:1, 217-240, DOI: 10.1198/jcgs.2010.08162

Hill, J., et al. 2019. Bayesian Additive Regression Trees: A Review and Look Forward. Annual Review of Statistics and Its Application, Vol. 7:251-278 (Volume publication date March 2020). https://doi.org/10.1146/annurev-statistics-031219-041110

Kreif, N. and DiazOrdaz, K. 2019. Machine learning in policy evaluation: new tools for causal inference. arXiv:1903.00402v1 [stat.ML] 1 Mar 2019

Leist, A. K., et al. 2022. Mapping of machine learning approaches for description, prediction, and causal inference in the social and health sciences. Science Advances, 19 Oct 2022, Vol 8, Issue 42. DOI: 10.1126/sciadv.abk1942

Li, F., et al. 2018. Balancing Covariates via Propensity Score Weighting. Journal of the American Statistical Association, Volume 113, 2018 - Issue 521. https://doi.org/10.1080/01621459.2016.1260466

Liu, T., Ungar, L. and Kording, K. 2021. Quantifying causality in data science with quasi-experiments. Nat. Comput. Sci. 1, 24–32. https://doi.org/10.1038/s43588-020-00005-8

Mullainathan, S. and Spiess, J. 2017. Machine learning: an applied econometric approach. J. Econ. Perspect. 31, 87–106 (2017).

Pearl, J. 2019. The Seven Tools of Causal Inference, with Reflections on Machine Learning. Communications of the ACM, March 2019, Vol. 62, No. 3. https://doi.org/10.1145/3241036

Pearl, J. 2009. Causality: Models, Reasoning, and Inference. Cambridge, UK: Cambridge Univ. Press. 2nd ed.

Pearl, J. and Mackenzie, D. 2018. The Book of Why. New York, Basic Books. ISBN 978-0-465-09760-9

Popper, K. 1934. The Logic of Scientific Discovery (as Logik der Forschung, English translation 1959), ISBN 0415278449

Rubin, D.B. 1974. Estimating causal effects of treatments in randomized and nonrandomized studies. Journal of Educational Psychology 66, 688–701

Rubin, D.B. 1976. Inference and missing data. Biometrika 63, 581–92

Rubin, D.B. 1978. Bayesian inference for causal effects: the role of randomization. Annals of Statistics 6, 34–58.

Rubin, D.B. 1980. Discussion of ‘Randomization analysis of experimental data in the Fisher randomization test’ by Basu. Journal of the American Statistical Association 75, 591–3

Rubin, D.B. 2010. Propensity Scores Methods. Series on Statistics, Volume 149, Issue 1, P7-9, January 2010. https://doi.org/10.1016/j.ajo.2009.08.024

Scanagatta, M., Salmerón, A., Stella, F. 2019. A survey on Bayesian network structure learning from data. Prog. Artif. Intell. 8, 425–439 (2019)

Schölkopf, B. 2019. Causality for machine learning. Preprint at https://arxiv.org/abs/1911.10500 (2019).

Vivalt, E. 2015. Heterogeneous Treatment Effects in Impact Evaluation. American Economic Review: Papers & Proceedings 2015, 105(5): 467–470. http://dx.doi.org/10.1257/aer.p20151015

Wager, S. and Athey, S. 2018. Estimation and Inference of Heterogeneous Treatment Effects using Random Forests. Journal of the American Statistical Association, 113:523, 1228-1242. DOI: 10.1080/01621459.2017.1319839

Wasserman, L. and Roeder, K. 2009. High Dimensional Variable Selection. Ann. Stat. 2009 Jan 1; 37(5A): 2178–2201. doi: 10.1214/08-aos646

Zhu, S, et al. 2019. Causal Discovery with Reinforcement Learning. arXiv:1906.04477 [cs.LG]

Verity Powell, Computer Science

Reviewed and edited by T. Lawson and T. Burton

ABSTRACT: This report explores the construction of a 3x3 magic square of squares using 9 distinct integers, a problem unsolved since Euler. In it, we will discuss the history and properties of magic squares alongside the prizes and efforts put forward towards further research in the field. This report takes a computational approach to the problem, using a general formula to try to generate a complete 3x3 magic square of squares. The results of this computation will be discussed, and the initial observations presented.

The combinatorial problem of placing 9 distinct positive integers into a 3x3 grid, such that each row, column, and diagonal sums to the same integer (the magic constant), dates back over 5000 years to Ancient China (Eves, 2022). The Lo Shu Square is the first recorded magic square of order 3 and shows the only way, up to rotation and reflection, of arranging the numbers 1-9 into a 3x3 magic square (as shown in Figure 1) (Eves, 2022).

Magic squares have some interesting properties which will aid the reader’s understanding of this report. Sallows notes that a magic square of order 3 has 8 trivially distinct forms, its rotations and reflections, which together make up one equivalence class (Sallows, 1997). As a result, these trivial variants are not regarded as unique magic squares. Magic squares can also undergo a linear transformation, such as the addition of a constant to each integer in the square, and remain magic (Kraitchik, 1953).
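Both properties are easy to check by hand or by machine. The following is a small sketch using the Lo Shu Square; the function names are illustrative only and do not come from the cited sources.

```python
LO_SHU = [[4, 9, 2],
          [3, 5, 7],
          [8, 1, 6]]

def is_magic(sq):
    """True if every row, column and both diagonals share one sum."""
    n = len(sq)
    target = sum(sq[0])
    rows = all(sum(r) == target for r in sq)
    cols = all(sum(sq[i][j] for i in range(n)) == target for j in range(n))
    diag = sum(sq[i][i] for i in range(n)) == target
    anti = sum(sq[i][n - 1 - i] for i in range(n)) == target
    return rows and cols and diag and anti

def rotate(sq):
    """Rotate the square 90 degrees clockwise."""
    return [list(row) for row in zip(*sq[::-1])]

def reflect(sq):
    """Mirror the square left to right."""
    return [row[::-1] for row in sq]

def symmetries(sq):
    """Return the 8 rotations and reflections of a square."""
    out, cur = [], sq
    for _ in range(4):
        out.append(cur)
        out.append(reflect(cur))
        cur = rotate(cur)
    return out

def transform(sq, k, m):
    """Apply the linear map x -> k*x + m to every entry."""
    return [[k * x + m for x in row] for row in sq]

variants = symmetries(LO_SHU)
print(len({tuple(map(tuple, v)) for v in variants}))  # number of distinct variants
print(all(is_magic(v) for v in variants))
print(is_magic(transform(LO_SHU, 3, 10)))
```

The eight variants are all distinct and all magic, and the transformed square (here with the arbitrary choice k = 3, m = 10) is magic as well, with magic constant 3 × 15 + 3 × 10 = 75.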

Whilst the order 3 magic square has existed for millennia, the problem of constructing a 3x3 magic square consisting of 9 distinct square integers has remained unsolved since Euler (Boyer, 2020).

Posed by Martin LaBar in 1984 (LaBar, 1984), the question gained notoriety in 1996, when Gardner offered $100 for a solution (Gardner, 1996), and it has captured the attention of recreational mathematicians in the decades since. In 2010, Christian Boyer created multiple “enigmas” to incentivise progress on the smallest possible magic squares (Boyer, 2010). This report will focus on “Main enigma #1”, outlined as follows:

“Main enigma #1 (€1000 and 1 bottle) Construct a 3x3 magic square using seven (or eight, or nine) distinct squared integers different from the only known example and of its rotations, symmetries, and k² multiples. Or prove that it is impossible.” (Boyer, 2010)

Given that no solution has been presented in spite of the large prize, it could be suggested that the required distinct square integers are incredibly large. What is certain, however, is that there is no known proof of its impossibility. Using a computational approach, this report will explore this intriguing problem further.

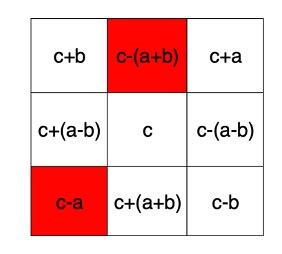

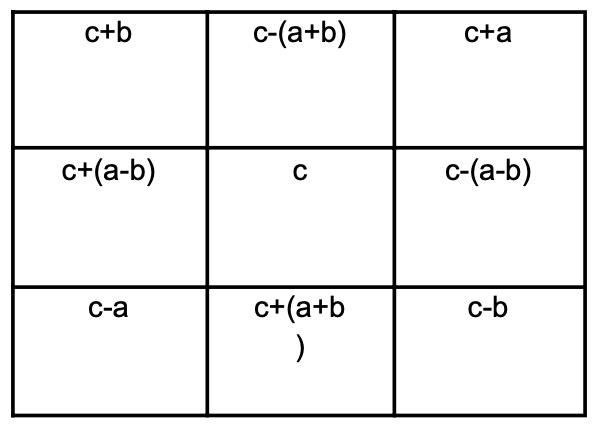

There are thousands of possible constructions of magic squares, each with a different magic constant. Therefore, when approaching the construction of magic squares, it is important to understand not only the definition of a magic square, but also the relationships between the numbers they contain. The 19th century mathematician Édouard Lucas devised a general formula for the construction of a magic square (Stewart, 1997).

Consequently, when aiming to construct a magic square of squares, we are looking for integers ‘a’ and ‘b’ that satisfy (1), (2), (3) and (4), such that they form sequences of equidistant square numbers.

The ‘enigma’ posed by Boyer asks for any magic square that contains more than 6 square numbers and that differs from the known example shown in Figure 3 by more than rotations, symmetries, and k² multiples (Boyer, 2010). As a result, multiple approaches can be taken to generate squares containing certain numbers of square numbers, or square numbers in specific positions within the square. The computational approach used in this report aims to generate a complete magic square of squares with 9 distinct integer square numbers. The only known solution involves a = 41496, b = 138600, and c = 180625; Figure 3 shows the relative positions of the square numbers within the known solution.

Figure 2. The general formula for the construction of a magic square where 0 < a < b < c – a, b ≠ 2a, magic constant = 3c.

This formula not only provides us with a foundation for constructing magic squares, but also allows us to visualise magic squares as a sequence of numbers. A point of interest in the formula is that for a magic square of squares to exist, ‘c’ must be a square number. The formula can also be viewed as four arithmetic sequences of three equidistant square numbers, as shown below.
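As a rough sketch of how the formula can be put to work, the code below builds a square from ‘a’, ‘b’ and ‘c’ and lists the four sequences through the centre. It assumes the commonly quoted layout of Lucas's formula, which may differ from Figure 2 by a rotation or reflection; the function names are illustrative.

```python
from math import isqrt

def lucas_square(a, b, c):
    """3x3 square from Lucas's formula (assumed layout); magic constant 3c."""
    return [
        [c - b,       c + (a + b), c - a],
        [c - (a - b), c,           c + (a - b)],
        [c + a,       c - (a + b), c + b],
    ]

def line_sums(sq):
    """Sums of the 3 rows, 3 columns and 2 diagonals."""
    rows = [sum(r) for r in sq]
    cols = [sum(col) for col in zip(*sq)]
    diags = [sq[0][0] + sq[1][1] + sq[2][2],
             sq[0][2] + sq[1][1] + sq[2][0]]
    return rows + cols + diags

def centre_progressions(a, b, c):
    """The four three-term arithmetic sequences that pass through c."""
    return [(c - d, c, c + d) for d in (a, b, a + b, b - a)]

def is_perfect_square(n):
    return n >= 0 and isqrt(n) ** 2 == n

# Values of the known example quoted in this report.
known = lucas_square(41496, 138600, 180625)
print(set(line_sums(known)))
print(sum(is_perfect_square(x) for row in known for x in row))
for seq in centre_progressions(41496, 138600, 180625):
    print(seq, [is_perfect_square(x) for x in seq])
```

For the known values, every line sums to 541875 = 3c and seven of the nine entries are perfect squares. The two non-squares are c + a and c + (a + b), so only two of the four sequences consist entirely of squares; a complete magic square of squares would need all four to do so.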

Figure 3. The known solution, with a = 41496, b = 138600, and c = 180625.

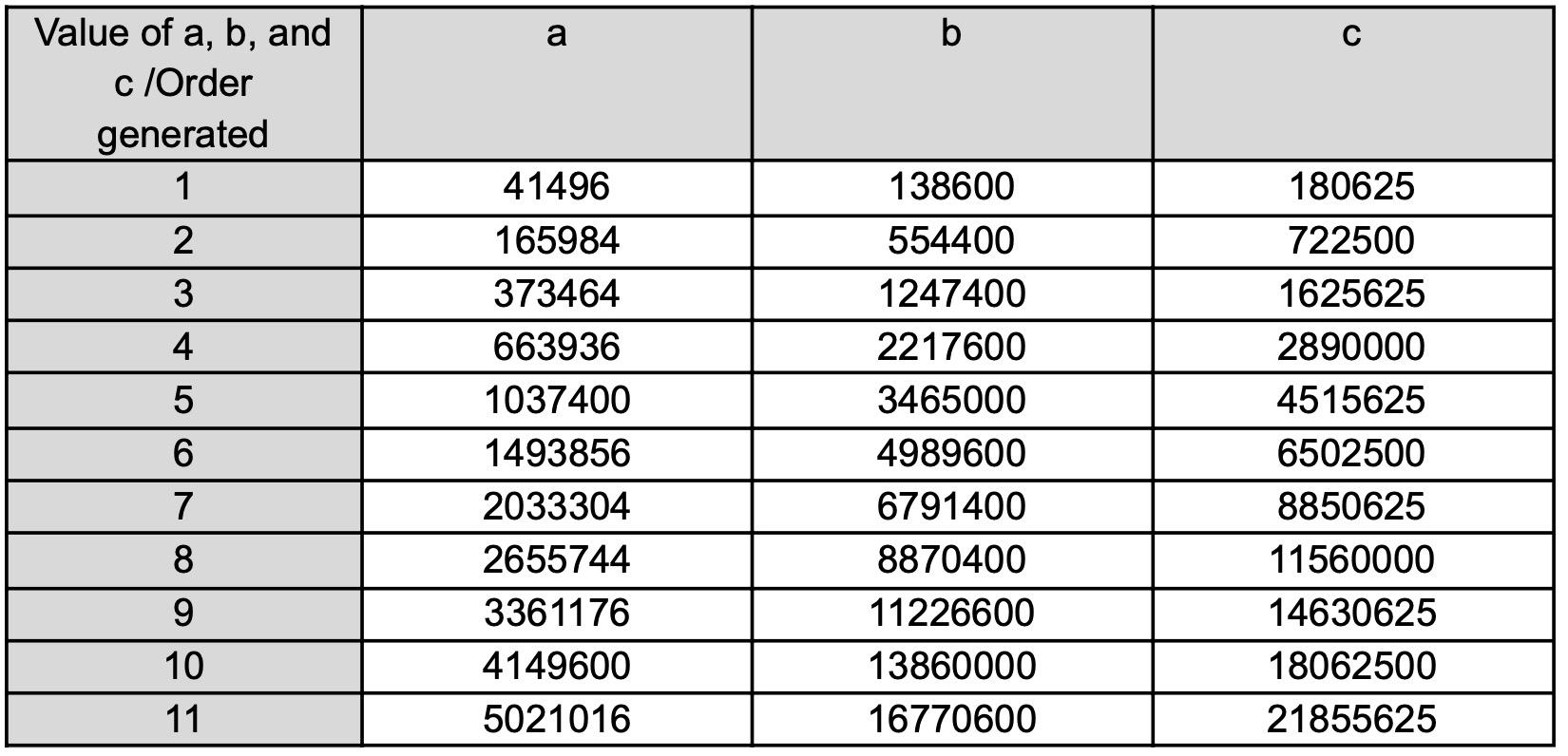

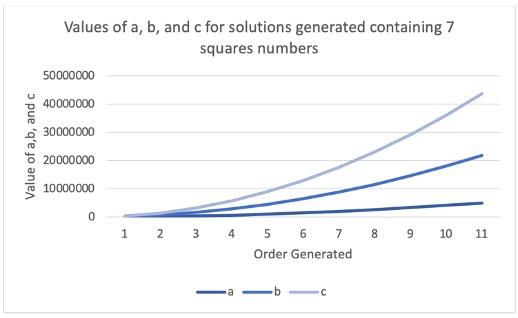

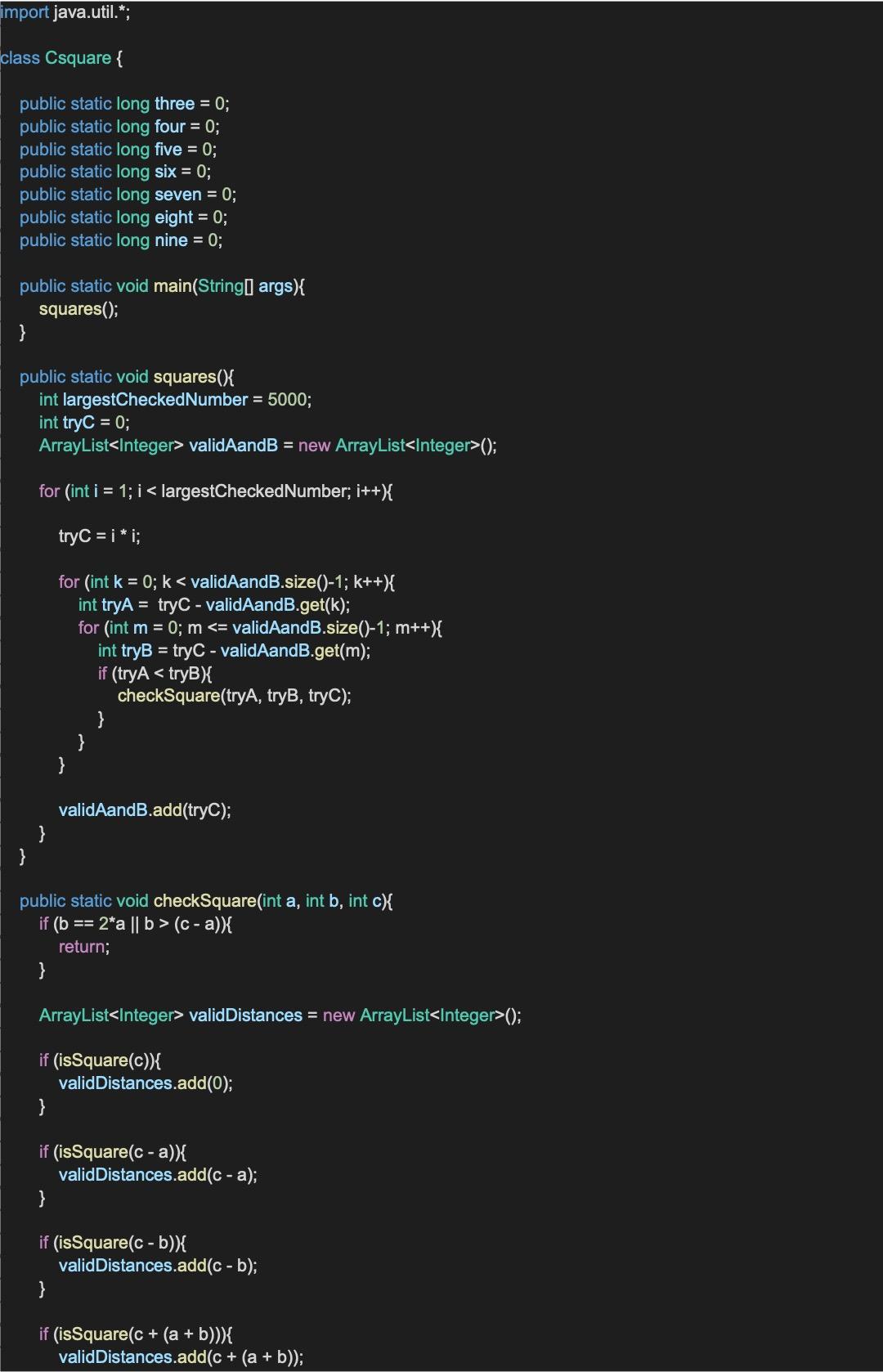

In aiming to generate a magic square with 9 distinct integer square numbers, we will first assume that ‘c’ is itself a square number. We must next find integers ‘a’ and ‘b’ to satisfy the sequences (1), (2), (3) and (4), as explained above. Further, knowing that ‘a’ and ‘b’ must fulfil the inequalities 0 < a < b < c - a and b ≠ 2a will allow us to avoid unnecessary computation.
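One possible way to organise such a search is sketched below. It is an illustration under stated assumptions rather than the exact program used for this report: it takes sequences (1) to (4) to be the four arithmetic sequences through ‘c’ with common differences a, b, a + b and b - a, and the function names are hypothetical. For a centre c = k², it first collects every difference d for which c - d and c + d are both squares, then looks for a pair (a, b) whose sum and difference are also in that collection.

```python
from math import isqrt

def is_perfect_square(n):
    return n >= 0 and isqrt(n) ** 2 == n

def progression_differences(k):
    """All d > 0 such that k*k - d and k*k + d are both positive squares."""
    c = k * k
    diffs = []
    for m in range(1, k):          # c - d = m*m, so d = c - m*m > 0
        d = c - m * m
        if is_perfect_square(c + d):
            diffs.append(d)
    return sorted(diffs)

def search_centre(k):
    """Return every (a, b) giving 9 distinct squares with centre k*k."""
    diffs = progression_differences(k)
    allowed = set(diffs)
    found = []
    for i, a in enumerate(diffs):
        for b in diffs[i + 1:]:    # enforces 0 < a < b
            # a + b in allowed implies a + b < c, i.e. b < c - a
            if b != 2 * a and (a + b) in allowed and (b - a) in allowed:
                found.append((a, b))
    return found

print(progression_differences(5))   # differences around the centre 25
print([k for k in range(1, 201) if search_centre(k)])
```

For k = 5 the only difference is 24, giving the sequence 1, 25, 49. Restricting ‘a’ and ‘b’ to this precomputed list is what keeps the search cheap: each centre costs roughly k square tests plus a comparison of a short list of candidates, instead of a scan over every pair below c. As expected from the unsolved status of the problem, no centre in this small range yields a solution.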