International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

Anurag Kumar Patel1, Rajkumar Sharma2, Vivek Richhariya3

1Student, Department of Computer Science & Engineering, LNCT Bhopal

2,3 Professor, Department of Computer Science & Engineering, LNCT Bhopal

Abstract - Breast cancer represents a major worldwide health challenge, ranking among the primary causes of mortality in the female population. Achieving precise early-stage detection is essential, as this greatly enhances survival prospects and supports the implementation of more effective treatment approaches. Mammography remains the most prevalent imaging modality for initial screening, though its manual interpretation can be complex and susceptible to inter-observer variability. To address this challenge, this study introduces a deep learning-driven framework for automated breast cancer identification, utilizing mammographic imagery from the MIAS dataset. Three distinct models were designed and assessed: (i) a standard Convolutional Neural Network (CNN), (ii) a hybrid CNN-DNN model enhanced by transfer learning, and (iii) an ensemble CNN-ML model. The latter, which proved most effective, incorporated feature extraction from pre-trained networks, including InceptionV3, ResNet50, and VGG16, followed by voting-based classification with Support Vector Machine (SVM) and Random Forest (RF) algorithms. A thorough assessment of the proposed models revealed that the ensemble CNN-ML framework delivered the strongest classification performance, achieving an accuracy of 98.48% in the image-based breast cancer detection task.

Key Words: Breast Cancer Diagnostics, Medical Image Analysis, Deep Learning for Medical Imaging, Transfer Learning Applications, Ensemble Learning Frameworks

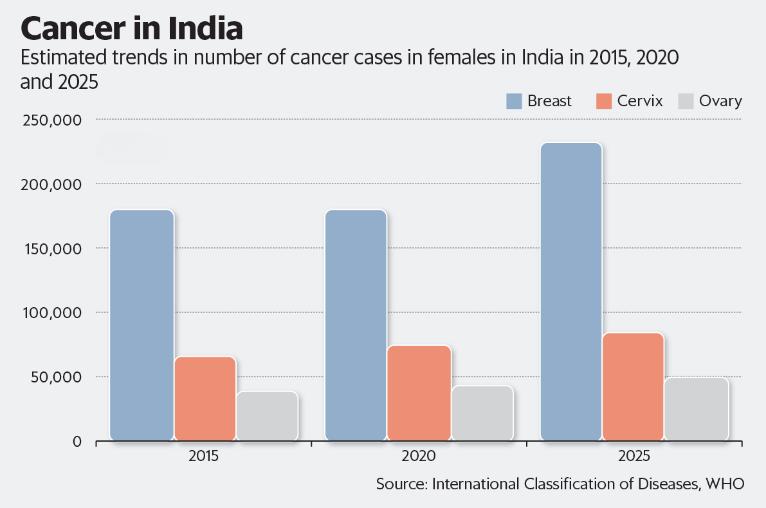

Breast cancer (BC) represents a critical global health issue, acknowledged as the most widespread cancer among women worldwide and a major cause of mortality [1]. Projections indicate a continuous rise, with the World Health Organization estimating 19.3 million cancer cases by 2025 [2]. Figure 1 illustrates the number of cancer cases among women in India. Crucially, early and precise detection is the most significant factor in improving patient survival rates and enabling effective treatment outcomes.

Fig -1: Number of cancer cases among women in India

Mammography serves as the cornerstone and most widely adopted imaging modality for routine breast cancer screening and detection [4]. This low-dose X-ray technique excels in visualizing internal breast structures and identifying abnormalities such as microcalcifications and masses, making it the primary and most cost-effective tool for early diagnosis and a key contributor to reducing mortality rates [5]. Despite its critical role, the manual interpretation of mammographic images presents considerable challenges due to the complex nature of breast tissue, varied tumour appearances, and the inherent subjectivity and inter-observer variability among radiologists [6]. These challenges may lead to false positives, which cause unnecessary patient distress and additional procedures, or false negatives, which can postpone essential treatment [7]. To overcome these hurdles, Computer-Aided Diagnosis (CAD) systems have emerged as vital tools, enhancing diagnostic accuracy and efficiency. Recent progress in Artificial Intelligence (AI), particularly in deep learning (DL), has revolutionized medical imaging diagnostics by automating feature extraction and pattern recognition from raw images, often achieving performance comparable to or exceeding that of human experts. Deep learning models offer promising solutions for more precise, reliable, and consistent breast cancer detection, leveraging their capacity to discern subtle anomalies that might be missed by manual inspection [8]. Our primary contributions are:

• This research concentrates on the creation and evaluation of three distinct deep learning architectures for mammographic breast cancer identification: a traditional Convolutional Neural Network (CNN), a hybrid CNN-DNN model that incorporates transfer learning, and an ensemble CNN-ML approach.

• The study implements an ensemble CNN-ML model that combines feature extraction from pre-trained networks, namely InceptionV3, ResNet50, and VGG16, with a voting-based classification framework employing Support Vector Machine (SVM) and Random Forest (RF) algorithms.

• The developed ensemble CNN-ML architecture, upon assessment using the MIAS dataset for breast cancer identification, demonstrated high classification performance with an accuracy of 98.48%, highlighting its effectiveness in image-based diagnostic tasks.

• The study demonstrates the potential for a combined deep learning system and an accessible software interface to provide accurate, real-time breast cancer diagnosis from a single, well-curated dataset.

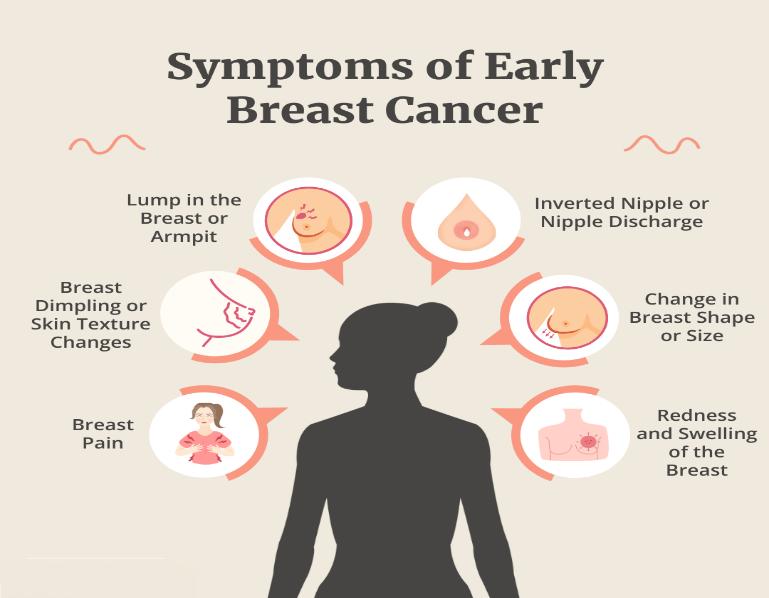

Timely identification and precise diagnostic assessment of breast cancer are fundamental to enhancing patient outcomes and reducing mortality rates [9]. Figure 2 illustrates several early symptoms of breast cancer.

Fig -2: Early symptoms of breast cancer

While mammography remains the primary imaging tool for screening and early diagnosis, its manual interpretation by radiologists is prone to subjectivity, inter-observer inconsistencies, and time demands, which may result in false positives or false negatives [10]. Consequently, Computer-Aided Diagnosis (CAD) systems, especially those driven by Artificial Intelligence (AI) and Deep Learning (DL), have emerged as crucial tools for improving diagnostic precision and efficiency. This section reviews existing research, categorized by methodological approach, to position the contributions of this work within the current landscape of automated breast cancer detection [11].

Deep Learning methodologies, especially CNNs, have demonstrated remarkable efficacy in medical imaging analysis through their ability to autonomously extract hierarchical feature representations from unprocessed images. Early CNN architectures like LeNet evolved into more complex models such as AlexNet, VGGNet, GoogLeNet (Inception-V1), and ResNet, each introducing innovations to handle deeper networks, reduce computational costs, or address issues like vanishing gradients. Several studies have employed different CNN architectures for breast cancer identification and classification through mammographic data analysis. For instance, Ting et al. [14] constructed a deep CNN architecture that attained an accuracy of 90.50% in classification. Abbas et al. [15] utilized deep invariant features on the MIAS dataset, reporting 91.5% accuracy. Sha et al. [16] also used CNNs with an optimization algorithm, achieving 92% accuracy on the same dataset. More recent efforts have pushed these boundaries: S. Raaj et al. [17] reported a hybrid CNN architecture achieving 98.44% accuracy on DDSM and 99.17% on MIAS, while Sharif Hasan et al. [18] developed a CNN-based system achieving 95.76% test accuracy on histopathological images. Dilawar Shah et al. [19] introduced EfficientViewNet, which attained 0.99 accuracy in classifying breast abnormalities from CC and MLO views. A meta-analysis by Junjie Liu et al. [20] further highlighted the superior performance of CNNs, reporting a pooled sensitivity of 0.961, specificity of 0.950, and AUC of 0.974 for mammography diagnosis. While these studies demonstrate the effectiveness of CNNs, many focus on standard or customized single-CNN architectures. This paper distinguishes itself by exploring and evaluating an ensemble deep learning-machine learning model that integrates features from multiple prominent pre-trained CNNs (InceptionV3, ResNet50, VGG16) to potentially capture a broader spectrum of features, an architectural choice less frequently detailed as a core contribution.

Transfer Learning (TL) has become a pivotal technique in medical imaging analysis, especially for breast cancer identification, as it effectively mitigates the issue of limited annotated medical datasets [1,4]. The approach involves initializing a neural network with parameters pre-trained on extensive general-purpose image databases (e.g., ImageNet), and subsequently fine-tuning these parameters for the targeted medical application [7,9].

The fusion of multiple models or diverse architectural components into ensemble or hybrid architectures has consistently demonstrated superior diagnostic accuracy and robustness compared to individual models [8,13,19,21]. This synergistic approach effectively harnesses the complementary strengths of various techniques.

• Multi-model ensembles: Sahu et al. [21] proposed an ensemble classifier that integrates AlexNet, ResNet, and MobileNetV2, showing robust performance even with small datasets. Dilawar et al. [19] developed EfficientViewNet, a dual-view deep neural network architecture (CC-EfficientNet and MLO-EfficientNet), achieving an accuracy, F1-score, and recall of 0.99.

• Hybrid CNN-ML models: Raaj et al. [17] designed a hybrid CNN architecture that attained an accuracy of 99.17% on the MIAS dataset. Nusrat et al. [4] reviewed models that utilized CNN-based pre-trained features as input for fully connected layers, surpassing traditional models with an accuracy of 97.25%.

• Hybrid Deep Learning models with specialized components: Vasudha et al. [13] proposed a CNN+ViT model that merges the local feature extraction strength of CNNs with the global context modelling ability of Vision Transformers (ViTs), achieving 90.1% accuracy and stable performance. Similarly, Peter et al. [22] investigated Transformer-based and hybrid CNN-Transformer approaches for mammography classification. Raza et al. [23] introduced a hybrid BiLSTM-CNN model, which attained 99.3% accuracy along with 99% precision, recall, and F1-score in breast cancer diagnosis.

This section outlines the systematic methodology applied for breast cancer detection and classification. It covers dataset collection and preprocessing, the design of deep learning models, and their thorough evaluation using established performance metrics.

This research utilized the MIAS dataset, a widely recognized baseline for mammography image analysis. The dataset comprises 322 mammographic images, which are categorized and labelled as Normal, Benign, and Malignant breast conditions. Visual inspection of these images reveals that malignant lesions typically appear denser and more irregular, while benign masses are generally more structured and localized. The MIAS dataset inherently presents a class imbalance. For experimental purposes, the dataset was split into training and testing subsets using an 80:20 stratified sampling approach. This method was employed to ensure that the class distribution remained consistent across both subsets, thereby facilitating a robust and unbiased evaluation of the classification performance. The MIAS dataset is strictly designated for research use, with adherence to its data usage guidelines.
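The 80:20 stratified split described above can be sketched as follows. This is a minimal illustration rather than the authors' code: it assumes scikit-learn is used, and the label counts (60/25/15) are hypothetical placeholders, not the actual MIAS class distribution.

```python
from collections import Counter

import numpy as np
from sklearn.model_selection import train_test_split

# Hypothetical, imbalanced toy labels (NOT the real MIAS class counts).
labels = np.array(["normal"] * 60 + ["benign"] * 25 + ["malignant"] * 15)
image_ids = np.arange(len(labels))  # stand-ins for image file indices

# stratify=labels keeps the class proportions the same in both subsets.
train_ids, test_ids, train_labels, test_labels = train_test_split(
    image_ids, labels, test_size=0.20, stratify=labels, random_state=42
)

train_counts = Counter(train_labels.tolist())
test_counts = Counter(test_labels.tolist())
print("train:", dict(train_counts))
print("test: ", dict(test_counts))
```

With these toy counts, each class contributes exactly 20% of its samples to the test subset, which is the property the stratified approach is meant to guarantee.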

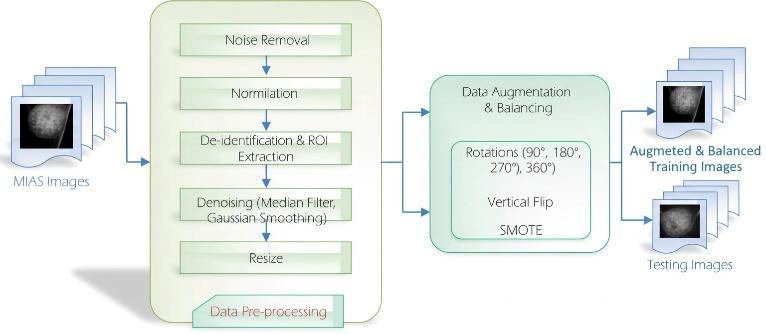

The initial phase of the methodology involved image preprocessing, including normalization and, where applicable, specific conversions, de-identification procedures, or region of interest (ROI) extraction. Several denoising techniques, such as the 2D median filter and Gaussian smoothing, are commonly applied to mammogram images, particularly when they are used with DL models. To enhance model generalization and robustness, especially considering the class imbalance of the dataset, data augmentation methods such as rotations and vertical flips are commonly applied in studies utilizing the MIAS dataset. Training data was augmented by rotating segmented images clockwise by 90°, 180°, 270°, and 360° (which is equivalent to 0°). Following these rotations, every rotated image was then flipped vertically. A crucial step in addressing the inherent class imbalance was the application of the Synthetic Minority Oversampling Technique (SMOTE), as displayed in Figure 3.
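The rotation-and-flip augmentation described above can be sketched with NumPy as shown below. This is an illustrative sketch only: the function name `augment` is hypothetical, and it assumes that both the rotated images and their vertically flipped copies are kept, which the text does not state explicitly.

```python
import numpy as np


def augment(image):
    """Return 8 variants: clockwise rotations by 90, 180, 270 and 360 degrees,
    followed by a vertically flipped copy of each rotation."""
    # np.rot90 rotates counter-clockwise for positive k, so negative k is clockwise.
    rotated = [np.rot90(image, k=-turns) for turns in (1, 2, 3, 4)]
    flipped = [np.flipud(r) for r in rotated]
    return rotated + flipped


# Tiny 2x2 array standing in for a segmented mammogram patch.
patch = np.array([[1, 2],
                  [3, 4]])
variants = augment(patch)
print(len(variants))   # number of augmented images per input
print(variants[0])     # 90-degree clockwise rotation
```

The 360° rotation reproduces the original image, so under this assumption each input contributes itself, three rotated copies, and four flipped copies.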

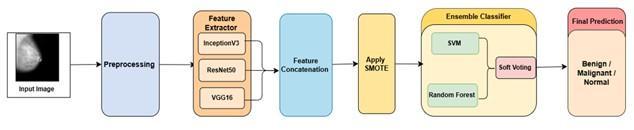

The proposed study employs a hybrid model architecture, shown in Figure 4, that integrates distinct machine learning techniques to leverage their complementary strengths. In this architecture, feature extraction is automatic because deep learning models are integrated with conventional machine learning classifiers that produce the final predictions. The pipeline follows the CNN-ML Ensemble Hybrid framework. Feature extraction is carried out using the pre-trained CNNs InceptionV3, ResNet50, and VGG16. Both InceptionV3 and ResNet50 generate 2048-dimensional feature vectors, whereas VGG16 outputs a 512-dimensional feature vector. The individual feature vectors are concatenated to create a unified representation, producing a 4608-dimensional feature space (2048 + 2048 + 512). After balancing the dataset, classification is performed using an ensemble of traditional machine learning algorithms, namely Support Vector Machine (SVM) and Random Forest (RF). Each algorithm is trained independently on the oversampled deep feature vectors. The Random Forest model is configured with n_estimators = 100, max_depth = 10, and random_state = 42. The Support Vector Machine is set with C = 10, kernel = 'rbf', gamma = 'scale', probability = True, and random_state = 42.
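The classification stage described above can be sketched with scikit-learn as follows. Several parts are assumptions made for illustration: random arrays stand in for the CNN features, the labels are hypothetical and binary, `smote_like_oversample` is a simplified NumPy stand-in for SMOTE (a full implementation is available as `SMOTE` in the imbalanced-learn package), and the two classifiers are combined by soft voting. Only the hyperparameters are taken from the text.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier, VotingClassifier
from sklearn.svm import SVC

rng = np.random.default_rng(42)

# Random stand-ins for deep features (hypothetical data, not CNN outputs).
n_samples = 60
labels = np.array([0] * 40 + [1] * 20)            # hypothetical imbalanced labels
inception_feats = rng.normal(size=(n_samples, 2048))
resnet_feats = rng.normal(size=(n_samples, 2048))
vgg_feats = rng.normal(size=(n_samples, 512))

# Concatenate per-network vectors: 2048 + 2048 + 512 = 4608 dimensions.
features = np.concatenate([inception_feats, resnet_feats, vgg_feats], axis=1)
features[labels == 1] += 0.5                      # make the toy classes separable


def smote_like_oversample(X, y, minority_label, n_new, k=5, rng=None):
    """Simplified SMOTE-style oversampling: each synthetic point lies on the
    segment between a minority sample and one of its k nearest minority neighbours."""
    rng = np.random.default_rng() if rng is None else rng
    X_min = X[y == minority_label]
    dists = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)               # a sample is not its own neighbour
    neighbours = np.argsort(dists, axis=1)[:, :k]
    base = rng.integers(0, len(X_min), size=n_new)
    picks = neighbours[base, rng.integers(0, k, size=n_new)]
    gaps = rng.random(size=(n_new, 1))            # interpolation factor in [0, 1)
    synthetic = X_min[base] + gaps * (X_min[picks] - X_min[base])
    return np.vstack([X, synthetic]), np.concatenate([y, np.full(n_new, minority_label)])


X_bal, y_bal = smote_like_oversample(features, labels, minority_label=1, n_new=20, rng=rng)

# Hyperparameters as stated in the text; soft voting averages class probabilities.
rf = RandomForestClassifier(n_estimators=100, max_depth=10, random_state=42)
svm = SVC(C=10, kernel="rbf", gamma="scale", probability=True, random_state=42)
ensemble = VotingClassifier(estimators=[("rf", rf), ("svm", svm)], voting="soft")
ensemble.fit(X_bal, y_bal)

proba = ensemble.predict_proba(X_bal[:3])
print(features.shape, X_bal.shape, proba.shape)
```

In practice the oversampling would be applied to the training subset only, so that synthetic samples never leak into the test evaluation.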

The model's performance was thoroughly assessed using standard classification metrics derived from the confusion matrix [24]. This matrix is a fundamental evaluation tool, offering a clear breakdown of correct and incorrect predictions. In the context of this study, which targets breast cancer detection, the confusion matrix classifies mammogram image predictions into two categories: 'cancer' or 'non-cancer' (alternatively, 'malignant' and 'healthy/benign'). The four primary outcomes represented in a confusion matrix are:

• True Positive (TP): Occurs when the model accurately identifies a positive instance. In the case of BC diagnosis, TP means a cancer case is correctly classified as cancer.

• True Negative (TN): Occurs when the model correctly identifies a negative instance. For breast cancer, this signifies that a healthy case is correctly identified as non-cancer.

• False Positive (FP): Occurs when the model incorrectly categorizes a negative instance as positive. In this context, a healthy case is erroneously classified as cancer. Clinically, elevated false positive rates can result in patient anxiety and unnecessary biopsies.

• False Negative (FN): Occurs when the model misclassifies a positive instance as negative. Here, a cancer case is incorrectly labelled as healthy. False negatives are particularly concerning in medical diagnosis, as they may delay timely and essential treatment.
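The four outcomes listed above can be counted directly from paired labels, as in the following sketch. The function name `confusion_counts` and the toy label vectors are hypothetical; the encoding 1 = cancer, 0 = non-cancer is an assumption made for illustration.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count TP, TN, FP and FN for a binary problem (positive = cancer)."""
    tp = tn = fp = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if truth == positive and pred == positive:
            tp += 1          # cancer correctly classified as cancer
        elif truth != positive and pred != positive:
            tn += 1          # healthy correctly classified as non-cancer
        elif truth != positive and pred == positive:
            fp += 1          # healthy wrongly classified as cancer
        else:
            fn += 1          # cancer wrongly classified as healthy
    return tp, tn, fp, fn


# Hypothetical ground truth and predictions for eight images.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]
tp, tn, fp, fn = confusion_counts(y_true, y_pred)
print(tp, tn, fp, fn)
```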

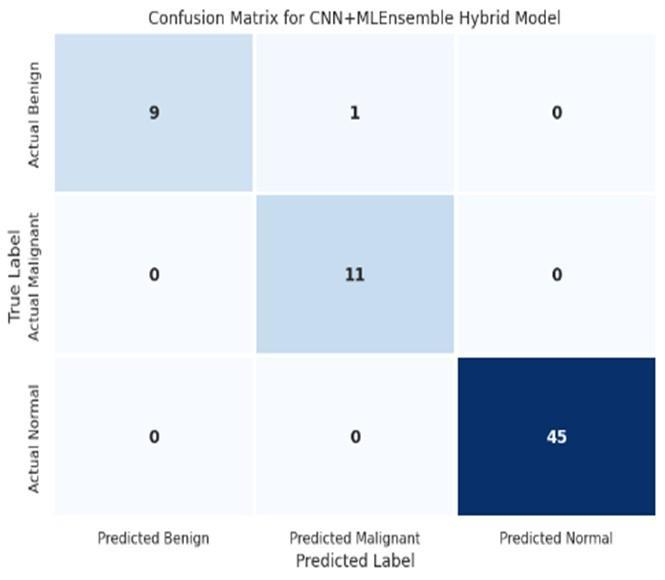

To provide a comprehensive understanding of the model's diagnostic performance through the confusion matrix illustrated in Figure 5, the following metrics were employed:

• Accuracy: It serves as a comprehensive performance indicator that quantifies the model's correctness by determining the ratio of accurate predictions, encompassing both positive and negative classifications, relative to the complete set of predictions made. This measure offers a general assessment of the model's predictive capability and overall reliability.

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

• Precision (Positive Predictive Value): This metric quantifies the ratio of accurately identified positive cases to the total number of instances that the model predicted as positive. Within the clinical context, precision is of paramount importance because it directly impacts patient care: elevated precision translates to fewer false positive diagnoses, thereby preventing unwarranted patient distress, invasive procedures, and excessive medical interventions that could otherwise compromise both patient well-being and healthcare resource allocation.

$$\text{Precision} = \frac{TP}{TP + FP}$$

• Recall: Also known as Sensitivity or True Positive Rate, recall is a fundamental performance indicator that assesses a model's proficiency in detecting all genuine positive cases present in the dataset. This metric establishes the fraction of actual positive instances that the algorithm successfully identifies, thus providing a measure of the model's thoroughness in recognizing target conditions and ensuring minimal oversight of critical cases.

$$\text{Recall} = \frac{TP}{TP + FN}$$

• F1-Score: The F1-score is the harmonic mean of precision and recall, yielding a unified metric that reconciles the inherent tension between these two measures. This composite indicator proves particularly valuable as it simultaneously incorporates false positive errors (reflected through precision) and false negative misclassifications (captured via recall). An elevated F1-score therefore signifies a well-performing model that demonstrates consistent efficacy by substantially reducing both error categories, thus exemplifying a balanced and dependable classification system.

$$\text{F1-score} = \frac{2 \times \text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}$$

• Area Under the Receiver Operating Characteristic Curve (AUC-ROC): The ROC curve serves as an essential analytical framework for assessing classifier efficacy, presenting a visual depiction of the model's discriminatory capacity by plotting sensitivity (True Positive Rate) versus the False Positive Rate across the complete spectrum of decision thresholds. The AUC-ROC is a critical performance indicator that measures the algorithm's competence in distinguishing between class categories. At any given threshold value 't', the True Positive Rate establishes the fraction of genuine positive instances that the model accurately recognizes and correctly classifies. The AUC-ROC metric subsequently delivers a consolidated numerical summary that encapsulates the equilibrium between sensitivity and specificity across the entire range of threshold parameters, providing researchers with a comprehensive evaluation of classifier performance.
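The metrics defined in this list can be computed from the confusion-matrix counts as sketched below. The helper names and toy inputs are hypothetical. The AUC is computed here through its rank interpretation, namely the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative case (ties counted as one half), which equals the area under the ROC curve.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision, recall and F1-score from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true, scores, positive=1):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical counts and scores, chosen only to illustrate the formulas.
metrics = classification_metrics(tp=3, tn=3, fp=1, fn=1)
auc = roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1])
print(metrics, auc)
```

The pairwise AUC computation is quadratic in the number of samples, which is acceptable for a dataset of a few hundred images; library routines use a sorted-rank approach for larger sets.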

4.1 Result Presentation

This section delivers a thorough performance assessment of the deployed deep learning and hybrid architectural frameworks. The models were evaluated and compared on the MIAS database. To establish a comprehensive and methodologically sound performance evaluation, a set of fundamental classification indicators was used. This quantitative analysis encompassed accuracy, precision, recall, and F1-score metrics, augmented by examination of the ROC curve and its AUC, thereby furnishing a complete perspective on the model's diagnostic proficiency.

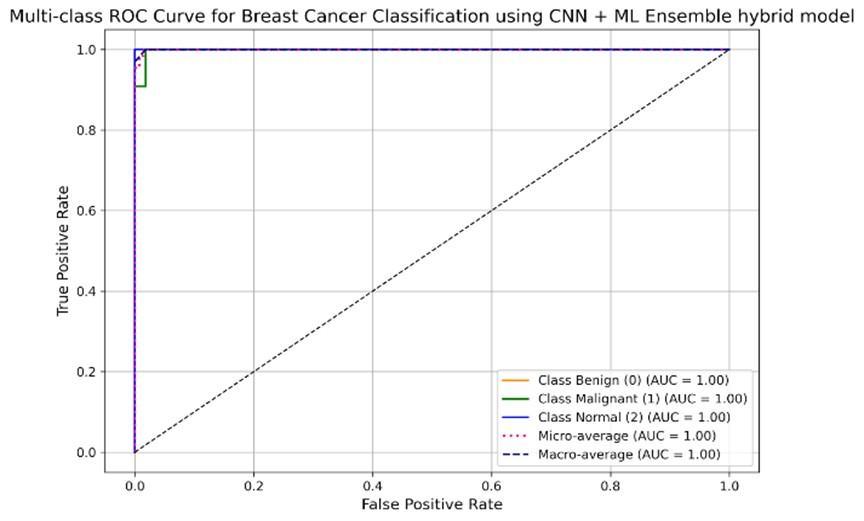

The ensemble CNN-ML hybrid model, which combines feature extraction from pre-trained CNNs with classical machine learning classifiers, achieved the highest classification performance. The ensemble hybrid model demonstrated a high accuracy of 98.48% in image-based classification. Furthermore, the model exhibited excellent class separability, with Area Under the Curve (AUC) scores reported to be close to 0.99. The ROC curve with its AUC is shown in Figure 6. The assessment additionally incorporated confusion matrix analysis, which substantiated the model's competence in precisely detecting malignant instances. This investigation underscores the utilization of precision, recall, and F1-score as fundamental metrics for assessing classification efficacy.

4.2

The reported accuracy of 98.48% achieved by the ensemble CNN-ML hybrid model is highly significant in the context of breast cancer detection. In medical diagnostics, particularly for life-threatening diseases like breast cancer, high accuracy is crucial as it directly correlates with the reliability of identifying both cancerous and non-cancerous cases correctly. This high accuracy indicates the model's proficiency in minimizing both false positives and false negatives. Reducing these two error types is a critical objective in clinical practice, as each carries significant consequences for patient treatment and outcomes. The AUC scores close to 0.99, as shown in Table 1, indicate that the model possesses excellent discriminative power, effectively separating different classes (e.g., normal, benign, malignant). A high AUC suggests that the model is robust across various classification thresholds and performs consistently well in distinguishing between positive and negative cases. The significance of this metric is especially pronounced within the high-stakes field of medical applications. In this context, where the consequences of misclassification can be severe, the model's strong performance underscores its potential as a robust and reliable tool for clinical diagnostic support.

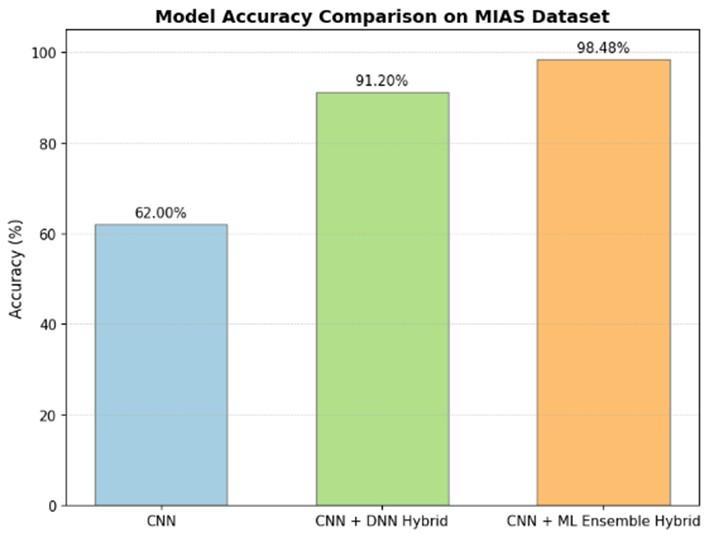

1) Comparison with Other Models: The performance of the proposed ensemble CNN-ML hybrid model can be assessed through a comparative analysis with other methods utilizing the MIAS dataset, as shown in Figure 7. This paper introduces three models that were designed and evaluated: (i) a standard Convolutional Neural Network (CNN), (ii) a hybrid CNN-DNN model with transfer learning, and (iii) the ensemble CNN-ML model. Among these approaches, the ensemble architecture demonstrated superior classification performance. Several studies in the literature have also used the MIAS dataset, offering points of comparison for the ensemble model's 98.48% accuracy:

• When evaluated on the MIAS dataset using an 80:20 data partitioning strategy, the pre-trained VGG16 model demonstrated a remarkable classification accuracy of 98.96%. The architecture attained elevated sensitivity of 97.83% and exceptional specificity of 99.13%. This robust performance was additionally validated by a precision value of 97.35% and a well-balanced F1-score of 97.66%. Furthermore, an exceptional AUC value of 0.995 was achieved.

• Other deep learning techniques applied to the MIAS database demonstrated varying accuracies: Stacked Sparse Auto-Encoder (SSAE) achieved 98.9% accuracy, Sparse Auto-Encoder (SAE) achieved 98.5% accuracy, and a standard CNN achieved 97% accuracy.

• A hybrid CNN framework specifically engineered for both classification and segmentation of mammographic images reported an accuracy of 99.17%, along with 98% sensitivity and 98.66% specificity.

• In a different approach, a study utilized a ResNet-18 model for feature extraction, with an enhanced crow-search optimized extreme learning machine (ICS-ELM) yielding a competitive accuracy of 98.137% on the MIAS dataset.

• Another deep learning approach applied the AlexNet architecture to the MIAS dataset, attaining a notable classification accuracy of 95.70%.

• Studies using traditional machine learning classifiers on the MIAS dataset showed lower accuracies. For instance, an SVM classifier achieved 87.5% accuracy, while a voting k-NN classifier achieved 75% accuracy.

• A study combining CNN with RNN (rectified linear unit) to categorize masses into normal, benign, or malignant on the MIAS dataset reported an accuracy rate of 90%, with sensitivity at 92%, precision at 78%, and F1 score at 84%.

• Transfer learning techniques on the MIAS dataset achieved an average accuracy of 93.52%.

Compared to these results, the proposed ensemble CNN-ML hybrid model's accuracy of 98.48% is highly competitive, positioning it among the top-performing models that focus specifically on the MIAS dataset to address the tasks of breast cancer detection and classification. Its performance is comparable to or surpasses many advanced deep learning and hybrid approaches found in the literature that use the same dataset.

2) Significance and Practical Implications: The high performance of the proposed model holds significant practical implications for breast cancer detection:

• Enhanced Diagnostic Accuracy and Early Detection: The model's high accuracy and AUC suggest it can reliably distinguish between breast conditions, supporting early and precise detection, which is critical for improving patient survival rates.

• Reduction in Radiologist Workload: By providing highly accurate, real-time decision support, the system could potentially alleviate the workload of radiologists, enabling them to dedicate their expertise to more complex cases.

• User-Friendly Interface: The project's aim to provide a user-friendly diagnostic interface ensures that medical practitioners with minimal technical expertise can utilize the system for real-time predictions, enhancing accessibility in clinical settings.

• Overcoming Data Limitations: The model's robustness, especially through techniques like SMOTE for addressing class imbalance, indicates its capability to perform well even with inherent data challenges in medical imaging.

3) Limitations of the Study: This paper acknowledges several limitations inherent in the study:

• Class Imbalance: A common characteristic of medical imaging collections, and one inherent to the MIAS dataset, is the challenge of class imbalance. To mitigate this issue and foster greater classification fairness, the Synthetic Minority Oversampling Technique (SMOTE) was employed. Despite this corrective measure, it is important to acknowledge that the dataset's foundational imbalance remains a pertinent factor that could potentially influence the generalization performance of the model on unseen data.

• Resolution Dependency: The study notes that resolution dependency persists as a challenge. This implies that the model's diagnostic capability might be influenced by the image resolution, potentially limiting its direct applicability across diverse imaging equipment or if different resolutions are used.

• Older Dataset: The MIAS dataset, while a widely recognized benchmark, is an older public dataset and may not provide the same image quality or richness as more contemporary datasets. This could affect the model's generalizability to modern, higher-resolution mammograms.

This research presents the development and assessment of three distinct deep learning frameworks engineered for breast cancer detection in mammographic images: a standard CNN, a transfer learning model incorporating a Deep Neural Network (CNN-DNN), and a novel ensemble CNN-Machine Learning (ML) hybrid model. Among these, the ensemble model, which integrated feature extraction from InceptionV3, ResNet50, and VGG16 with Support Vector Machine and Random Forest classifiers through soft voting, achieved the best results, recording 98.48% accuracy and an AUC close to 0.99. These results reveal the model's ability to simultaneously minimize both false positives and false negatives, thereby enhancing the reliability and timeliness of early diagnosis. The study also illustrates the effectiveness of preprocessing and class-balancing strategies such as SMOTE for mitigating dataset limitations. However, reliance on the older MIAS dataset, class imbalance, and resolution dependency limit broader generalizability. Future research should emphasize larger and more diverse datasets, optimized architectures, multimodal data integration, and explainable AI approaches to enhance robustness, interpretability, and clinical applicability.

[1] A. Saber, M. Sakr, O. M. Abo-Seida, A. Keshk, and H. Chen, "A Novel Deep-Learning Model for Automatic Detection and Classification of Breast Cancer Using the Transfer-Learning Technique," IEEE Access, vol. 9, pp. 71194-71209, 2021, doi: 10.1109/ACCESS.2021.3079204.

[2] A. F. A. Alshamrani and F. S. Z. Alshomrani, "Optimizing Breast Cancer Mammogram Classification Through a Dual Approach: A Deep Learning Framework Combining ResNet50, SMOTE, and Fully Connected Layers for Balanced and Imbalanced Data," vol. 13, pp. 4815-4826, 2025.

[3] A. B. Nassif, M. A. Talib, Q. Nasir, Y. Afadar, and O. Elgendy, "Breast cancer detection using artificial intelligence techniques: A systematic literature review," Artificial Intelligence in Medicine, vol. 127, 2022, Art. no. 102276, doi: 10.1016/j.artmed.2022.102276.

[4] N. Mohi ud din, R. A. Dar, M. Rasool, and A. Assad, "Breast cancer detection using deep learning: Datasets, methods, and challenges ahead," Computers in Biology and Medicine, vol. 149, 2022, Art. no. 106073.

[5] S. A. Qureshi, "Breast Cancer Detection Using Mammography: Image Processing to Deep Learning," vol. 13, pp. 60776-60801, 2025.

[6] B. Abdikenov, "Future of Breast Cancer Diagnosis: A Review of DL and ML Applications and Emerging Trends for Multimodal Data,"

[7] L. Shen, L. R. Margolies, J. H. Rothstein, E. Fluder, R. McBride, and W. Sieh, "Deep Learning to Improve Breast Cancer Detection on Screening Mammography," Scientific Reports, vol. 9, no. 1, pp. 1-12, Aug. 2019, doi: 10.1038/s41598-019-48995-4.

[8] M. S. Shahid and A. Imran, "Breast cancer detection using deep learning techniques: challenges and future directions," Multimedia Tools and Applications, vol. 84, pp. 3257-3304, 2025.

[9] D. Youssef, H. Atef, S. Gamal, J. El-Azab, and T. Ismail, "Early Breast Cancer Prediction Using Thermal Images and Hybrid Feature Extraction-Based System," IEEE Access, vol. 13, pp. 29327-29339, 2025, doi: 10.1109/ACCESS.2025.3541051.

[10] S. Shrati and A. Ambika, "Enhancing breast cancer detection and classification using light attention deep convolutional network: A novel approach integrating deep learning and medical imaging," in Proc. 2025 Global Conf. Emerging Technol. (GINOTECH), Pune, India, 2025.

[11] A. Alghamdi, K. Alghamdi, M. Alqahtani, and R. AboHejji, "Enhance Breast Cancer Diagnosis Using Deep Learning Models On Mammogram Images in Saudi Arabia," in Proc. 2025 Int. Conf. Innovation in Artificial Intelligence and Internet of Things (AIIT), Jeddah, Saudi Arabia, 2025.

[12] F. H. Alhsnony and L. Sellami, "Advancing Breast Cancer Detection with Convolutional Neural Networks: A Comparative Analysis of MIAS and DDSM Datasets," in Proc. 2024 IEEE 7th Int. Conf. Advanced Technologies for Signal and Image Processing (ATSIP), Sousse, Tunisia, pp. 194-199, 2024.

[13] V. R. Patheda, G. Laxmisai, B. V. Gokulnath, S. P. S. Ibrahim, and S. S. Kumar, "A Robust Hybrid CNN+ViT Framework for Breast Cancer Classification Using Mammogram Images," IEEE Access, vol. 13, pp. 77187-77195, 2025, doi: 10.1109/ACCESS.2025.3563218.

[14] F. F. Ting, Y. J. Tan, and K. S. Sim, "Convolutional neural network improvement for breast cancer classification," Expert Systems with Applications, vol. 120, pp. 103-115, Apr. 2019.

[15] A. Hasan, U. Khalid, and M. Abid, "AI-Powered Diagnosis: A Machine Learning Approach to Early Detection of Breast Cancer," International Journal of Engineering Development and Research, vol. 13, no. 2, pp. 153-166, Apr. 2025.

[16] Z. Sha, L. Hu, and B. D. Rouyendegh, "Deep learning and optimization algorithms for automatic breast cancer detection," International Journal of Imaging Systems and Technology, vol. 30, no. 2, pp. 495-506, Jun. 2020, doi: 10.1002/ima.22400.

[17] R. S. Raaj, "Breast cancer detection and diagnosis using hybrid deep learning architecture," Biomedical Signal Processing and Control, vol. 82, 2023, Art. no. 104558.

[18] S. Hasan, M. M. H. Nour, A. Al Kafi, M. F. Islam, M. N. Islam, and F. A. Faisal, "BCTD: An Optimised CNN-based Deep Neural Network for Primary Stage Breast Cancer Detection and Classification," in Proc. 3rd Int. Conf. Computing Advancements (ICCA '24), New York, NY, USA: ACM, 2025, pp. 986-992.

[19] J. Shah, D. U. Khan, M. A. Abrar, M. Tahir, and M., "Dual-View Deep Learning Model for Accurate Breast Cancer Detection in Mammograms," International Journal of Intelligent Systems, 2025.

[20] J. Liu, J. Lei, Y. Ou, Y. Zhao, X. Tuo, B. Zhang, and M. Shen, "Mammography diagnosis of breast cancer screening through machine learning: a systematic review and meta-analysis," Clinical and Experimental Medicine, vol. 23, no. 6, pp. 2341-2356, Oct. 2023.

[21] A. Sahu, P. K. Das, and S. Meher, "An efficient deep learning scheme to detect breast cancer using mammogram and ultrasound breast images," Biomedical Signal Processing and Control, vol. 87, Part A, 2024, Art. no. 105377.

[22] E. Peter, D. Emakporuena, B. Tunde, M. Abdulkarim, and A. Umar, "Transformer-Based Explainable Deep Learning for Breast Cancer Detection in Mammography: The MammoFormer Framework," American Journal of Computer Science and Technology, vol. 8, pp. 121-137, 2025, doi: 10.11648/j.ajcst.20250802.16.

[23] M. A. Raza, A. M. Khattak, W. Abbas, and M. Z. Asghar, "Efficient Diagnoses of Breast Cancer Disease Using Deep Learning Technique," in Proc. 2024 10th Int. Conf. on Computing and Artificial Intelligence (ICCAI '24), New York, NY, USA: Association for Computing Machinery, 2024, pp. 136-143, doi: 10.1145/3669754.3669775.

[24] R. K. Ranjan and D. Rai, "Alzheimer's Disease Image Diagnosis by Active Contour Segmentation & Bootstrap Bagging Learning Model," Journal of Computational Analysis & Applications, vol. 33, no. 5, 2024.