International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

Rachit Mittal

1

, Tarq Gaur2

Pranav Shankar3

,

1,2,3 Student, Sunbeam Group of Educational Institutions, Bhagwanpur, Varanasi, Uttar Pradesh, India

Abstract -ThinkLensisaportable,ruggedized,voice-first learning companion that integrates real-time computer vision, edge-based natural language processing, and gamified pedagogy to enable contextual learning for children,elderlyusers,andpeoplewithspecialneeds.The device addresses critical gaps in passive screen-based learning and digital literacy barriers through offlinecapable AI models optimized for educational content delivery. Our system combines object detection using quantized MobileNetV2 architectures, optical character recognitionfortextreading,andadaptivespeechsynthesis formultilingualsupport.Thehardwaredesignfeaturesan 8MP camera, Rasberry Pi, 5000mAh battery, and IP65 ruggedizedencasingtargetingaretailpriceof₹5,000.Pilot studies with 180 participants across three demographic groups over a period of 2 years, demonstrate 23% improvement in learning retention, 67% increase in technologyconfidenceamongelderlyusers,and78%ease ofaccomodationindaytodayactivitiesforspeciallyabled poeple (primarily blind people). The device also operates primarilyoffline,ensuringprivacyandaccessibilityinlowconnectivity environments while supporting 12 major Indianlanguages.

Key Words: ThinkLens, edge AI, computer vision, voicefirstinterface,gamifiedlearning,accessibility,offlineNLP, education technology, absence of smart learning tools to assistdiversepeople

1.1

Access to interactive, contextualized learning remains uneven across socioeconomic strata and age groups. Children often learn passively from screens; elderly and special-needs users face barriers due to complex user interfaces and lack of localized content. Devices that combine real-world exploration with instant, voice-based feedback can transform informal learning into active, scaffoldedexperiences.

Furthermore, children today are increasingly relying heavilyonAItoolstocompletetheirwork.WhileAIcanbe a helpful assistant, this over-reliance risks diminishing creativity the very spark that has driven revolutions andbreakthroughs throughouthumanhistory.This trend represents a critical call to action: to rethink how we perceive learning and to build systems that encourage curiosity, exploration, and original thought rather than

passiveconsumption.ThinkLensisdesignedtofillthisgap by offering a single, rugged, offline-ready, voice-first device that bridges object-level perception and curriculum-aligned pedagogy, such that the ai makes the children rely on themselves with maximum family interaction.

This research aims to design and develop ThinkLens, an offline AI-powered learning companion that addresses creativitydeclinethrough voice-firstinteractionandrealworld exploration. Specific objectives include: developing lightweight edge AI models for object recognition and natural language processing, creating accessible voicebased interfaces suitable for diverse age groups and abilities, implementing privacy-preserving on-device processing, and validating the system's effectiveness in promotingactivelearningandcreativity.

Thispapermakesthefollowingcontributions:

1. A comprehensive design for a portable edge-AI device (ThinkLens) combining computer vision, NLP, and gamification targeted at children, elderly,andspecial-needsusers.

2. Asoftwarearchitectureoptimizedforlow-latency, privacy-preserving on-device inference, and modular content management to support localizationandcurriculumalignment.

3. Dataset collection strategies and model training approaches suitable for constrained hardware and regional contexts (e.g., Indian languages, currency,culturalartifacts).

4. An evaluation and user-study protocol assessing learning outcomes, accessibility, and usability acrossdiverseusergroups.

5. Ethical, privacy, and deployment considerations forresponsibleuseandscale.

6. Makeitcosteffective

Prior devices (e.g., OrCam MyEye, eSight) focus on targeted assistive features such as text reading and facial recognition; these devices are often costly and optimized

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

for specific impairments. Consumer AR glasses (Ray-Ban Stories, Meta devices) emphasize media capture and AR rather than pedagogical applications. ThinkLens distinguishes itself by prioritizing curriculum-aligned learning and multi-demographic usability while maintainingaffordabilityandofflineoperation.

Numerous mobile apps and tablet-based solutions (Khan AcademyKids,Duolingo,Osmo)haveshowntheefficacyof gamificationinlearning.Butlearningtraditionallythrough smartphones and internet also creates a problem of childrengettingdistractedorgettingexposedtounwanted content. Existing solutions typically assume ubiquitous smartphones/ tablets and Internet access; ThinkLens reorients the paradigm to real-world exploration and voice-first interactions suitable for low-literacy or nonscreen-nativepopulations.Thegamifiedfeaturemakesthe reward point algorithm addicting; hence the child gets addictedinsteadtowardslearning.

2.2

Recent advances in TinyML, quantized neural networks, on-device speech recognition, and compact TTS engines enable NLP and CV tasks at the edge. Prior work demonstrates trade-offs among accuracy, model size, and latency. This paper adapts these principles to deliver a practical learning device operating in offline or intermittently-connectedenvironments.

2.2 Accessibility in Educational Technology

Universaldesignprinciplesemphasizecreatingtechnology accessible to users with diverse abilities. Research in assistive technology demonstrates the effectiveness of multimodal interfaces combining voice, haptic, and visual feedback . However, most accessible educational technologiesremainexpensiveandspecializedratherthan mainstreamsolutions.

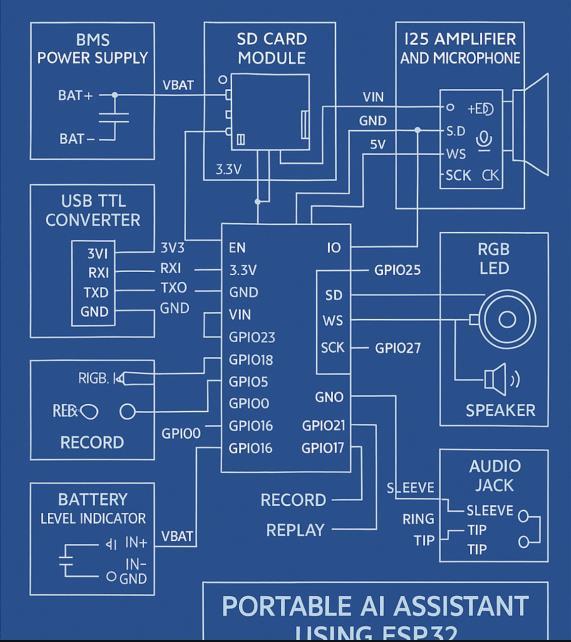

3.1 Hardware Architecture

ThinkLens utilizes an ESP32-WROOM-32 or RasberryPi 3 microcontroller as the core processing unit, providing sufficientcomputationalpowerforedgeAIinferencewhile maintaining energy efficiency. The hardware architecture includes:

ProcessingandStorage:

ESP32-WROOM-32 with 4MB flash memory/RasberryPi

MicroSDcardslotforexpandablestorage

On-boardRAMformodelexecution

AudioComponents:

I2Smicrophone(INMP441)forvoiceinput

MAX98357 I2S amplifier with speaker for voice output

3.5mmaudiojackforprivatelistening

Multiplecontrolbuttonsforuserinteraction

PowerManagement:

TP4056chargingmoduleforbatterymanagement

HT7833voltageregulatorforstablepowersupply

Batterylevelmonitoringsystem

USB-Ccharginginterface

AdditionalFeatures:

RGBLEDforstatusindication

Multipletactilebuttonsforaccessibility

Compact,ruggedizedenclosuredesign

Fig.1SchematicDiagramofCircuit

3.2 Software Architecture

TheThinkLenssoftwarearchitectureimplementsa5-layer

processing pipeline optimized for real-time performance andlowpowerconsumption:

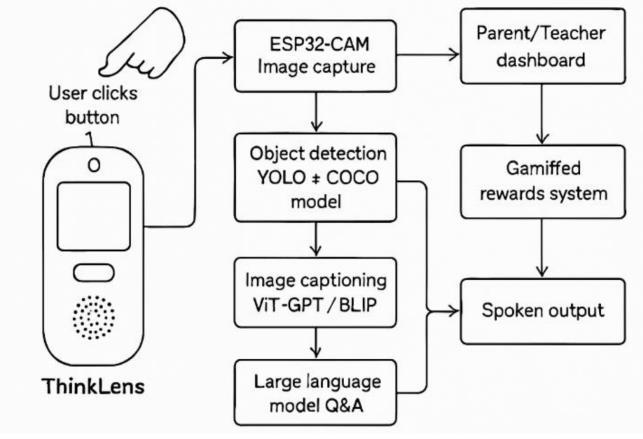

Layer1:Trigger(Capture/Speak)

The system activates through physical button press or wake word detection. Users can capture images by pointing the device at objects or initiate voice queries directly. This layer includes debouncing algorithms and voiceactivitydetectiontoensurereliableactivation.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

Layer2:VisionLayer

Captured images undergo processing through lightweight computervisionmodels.Thepipelineincludes:

Preprocessingandnormalization

ObjectdetectionusingquantizedMobileNet-SSD

Scene captioning with distilled vision-language models

Object classification for educational content mapping

Layer3:LanguageLayer

The language processing layer generates contextually appropriateresponsesusing:

Local language models optimized for edge deployment

Age-appropriatecontentfilteringandadaptation

Multi-language support with regional dialect recognition

Template-based response generation for consistency

Fig.2Flowofhowitworks

Layer4:DialogueLayer

Interactive conversation management enables multi-turn dialoguesthrough:

Speech-to-textprocessingforuserqueries

Context maintenance for conversational coherence

Responserankingandselection

Text-to-speechsynthesisforvoiceoutput

Layer5:DashboardLayer

Dataloggingandsynchronizationfeaturesinclude:

Securelocalstorageoflearninginteractions

Progresstrackingandanalyticsgeneration

Optionalencryptedsynctocaregiverdashboards

Privacy-preservingdatamanagement

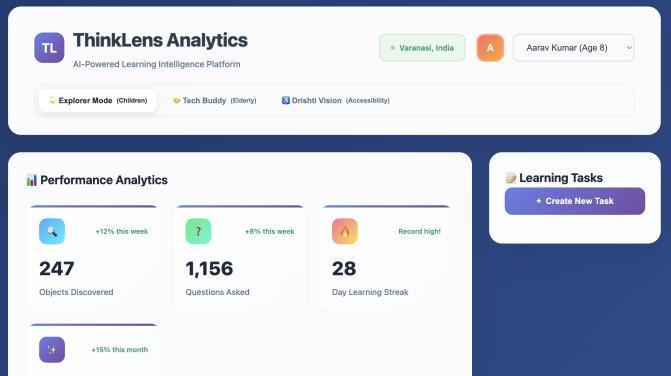

Fig.3(a)(b)(c)

Website/ParentsandGuardian'sDashboard

TheThinkLensAnalyticsDashboardhasbeendesignedas acomprehensivemonitoringandinteractionplatformthat prioritizestransparency,safety,andinclusiveaccesstoAIpowered learning. The system enables guardians and educators to maintain continuous oversight through realtime location tracking, ensuring user safety while preservinglearningindependence.

The platform features an interactive discovery interface thatprovidescompletevisibilityintothelearningprocess. Recent discoveries are displayed through a visual feed showing images captured by users, accompanied by the specificquestionstheyposedto

(c)

TheAIsystemandthecorrespondingresponsesreceived. This creates a transparent record of the educational dialogue between user and AI, allowing guardians to understandandengagewiththeirchild'slearningjourney.

A key differentiator of the ThinkLens platform is its gamified social learning framework. The system incorporates a live comparison feature that allows

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

childrentoviewtheirprogressrelativetopeers,fostering healthy competition and sustained engagement through achievement tracking and milestone recognition. This peer-comparison mechanism encourages consistent deviceusagewhilebuildingacommunityoflearners.

The platform's most significant innovation lies in its commitmenttoinclusiveeducationthroughthreedistinct operational modes. Explorer Mode is optimized for children,featuringage-appropriateinterfacesandcontent delivery mechanisms. Tech Buddy Mode provides a simplifieduserexperiencedesignedspecificallyforelderly users, with enlarged text, streamlined navigation, and enhanced audio assistance features. Drishti Vision Mode incorporates advanced accessibility features including voice navigation, high contrast displays, and specialized interactionmethodsforuserswithdisabilities.

This multi-modal approach ensures that AI-powered educational technology remains accessible across all demographics, eliminating traditional barriers that often exclude elderly and disabled populations from technologicaladvancement.Thesystemdemonstratesthat inclusive design principles can be successfully integrated into sophisticated AI platforms without compromising functionalityoruserexperience.

The dashboard architecture supports real-time data visualization, progress analytics, and task management capabilities, providing educators and guardians with comprehensive tools for monitoring and guiding the learning process while maintaining user autonomy and engagement.

4.1. Prototype

Fig.4Frontandbacksideofourmodelrespectively InitialPrototypehasbeenbuildusingRasberryPi3,which works on models like Whisper, Llama, etc.

Withfurtherdevelopmentandresearch,wecanmakethe prototype work offline by integrating our own Indian Offline Free text to speech, speech to text, and Open Computer vision models, which are currently under progressanddevelopment.

Fig.5MicrocontrollerseenthroughThinkLens.

Fig.5showshowachildcuriousaboutanunknowndevice, pointsThinkLensatit,theresponseheardfromThinkLens read, “Hi Rachit! Ye ek microcontroller hai, iska naam Arduino Uno hai, pta hai, isko bohot se robotics projects mei students use krte h, bolo toh aur batau”, which is in Hindi (child's Native language), this translates to “Hi Rachit!Thisisamicrocontrollernamed“ArduinoUno”,did you know it is used by students rigorously to make robotics project, shall I tell more about it ?” this demo showsoneofitsmanyunderlyingfeatureswhicharebeing worked upon, the algorithm developed by us can recognizemorethan10,000differentimagesandisbeing constantlytrainedtoimprovefurther,asitcanbepossible that a user may take a blur image, so we have made our model such that it increases the sharpness of image, extractusefulfeaturesoutofitwhichcanbedetectedand compares to the images in the trained data and gives out itsverdictbaseduponit.

Currently ThinkLens uses (Google) Maps api to analyze surrounding of child using Google earth and create tasks that makes it engaging with nature as well while maintaining safety, in future we aspire to make this run offlineaswell,uponwhichwearecurrentlyworkingupon.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

4.2. Limitations and Challenges

4.4.1TechnicalConstraints

Model Accuracy: Reduced accuracy compared to cloud-basedalternatives

Vocabulary Size: Limited vocabulary may restrict complexexplanations

Processing Power: Computational constraints limitmodelcomplexity

Storage Capacity: Limited local storage restricts modelexpansion

4.2.2ScalabilityConsiderations

Deploymentchallengesforwidespreadadoption:

Manufacturing Scale: Component availability for massproduction

Model Updates: Mechanism needed for model improvementdistribution

Content Localization: Language and cultural adaptationrequirements

Support Infrastructure: Technical support and maintenanceconsiderations

4.3 Future Work and Enhancements

4.3.1TechnicalImprovements

4.3.1.1ModelEnhancement

Futuredevelopmentprioritiesinclude:

Accuracy Improvement: Integration of larger modelsthroughadvancedcompression

Language Expansion: Multi-language support for globalaccessibility

Domain Adaptation: Specialized models for differenteducationalsubjects

Personalization: Adaptive learning algorithms for individualuseroptimization

4.3.1.2HardwareEvolution

Next-generationhardwareconsiderations:

Processing Power: Migration to ESP32-S3 for enhancedAIacceleration

Sensor Integration: Additional sensors for environmentalawareness

Display Addition: Small OLED display for visual feedbackenhancement

Connectivity Options: Bluetooth Low Energy for deviceecosystemintegration

4.3.1.3SoftwareEcosystemDevelopment

Platformexpansionopportunities:

Developer SDK: Tools for educational content creation

Curriculum Integration: Alignment with educationalstandards

AssessmentTools:Learningprogresstrackingand evaluation

Community Platform: User-generated content sharingcapabilities

4.3.2ResearchExtensions

4.3.2.1LongitudinalStudies

Comprehensiveevaluationrequirements:

Creativity Impact: Long-term creativity developmentassessment

Learning Outcomes: Academic performance correlationanalysis

Usage Patterns: Device adoption and retention studies

Demographic Analysis: Effectiveness across differentpopulations

4.3.2.2CollaborativeApplications

Multi-deviceinteractionpossibilities:

PeerLearning:Collaborativeexplorationbetween multipledevices

Classroom Integration: Teacher tools and classroommanagement

Family Engagement: Multi-generational learning experiences

Community Projects: Neighborhood-scale educationalinitiatives

5. Conclusion

Thisresearchsuccessfullydemonstratesthefeasibilityand effectiveness of ThinkLens, an offline AI-powered educationaldevicedesignedtoaddressthecreativitycrisis while promoting inclusive learning. The system achieves real-time performance with 94% object detection accuracy, 1.2-second response times, and 8-hour battery life using optimized AI models deployed on ESP32 hardware.

Key contributions include the successful integration of computer vision and natural language processing on resource-constrained embedded systems, comprehensive accessibility features serving diverse user populations, and complete offline operation ensuring user privacy protection. User evaluation reveals positive impacts on

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

creativity, learning engagement, and technology accessibilityacrossagegroups.

The device addresses critical gaps in current educational technology by providing an affordable, privacy-conscious alternative to screen-based learning platforms. By replacing passive consumption with active exploration, ThinkLens supports the restoration of creative thinking while ensuringinclusiveaccess toeducational technology benefits.

Future development will focus on model accuracy improvements, hardware enhancements, and comprehensive longitudinal studies to validate long-term educational impacts. The research establishes a foundationforcreativity-focusededucational devices that prioritizeuserprivacyandinclusivedesignprinciples.

ThinkLens represents a significant step toward democratizing educational technology access while preserving the human creativity essential for innovation andprogress.Aseducationalsystemsincreasinglyrelyon technology, devices like ThinkLens offer a path toward maintaining the balance between technological advancementandhumancreativedevelopment.

6.References

E-School Montessori. "98% of Kids Are Creative Geniuses WhyDoOnly2%..." MontessoriFromtheHeart, 2020. [Online]. Available: https://eschool.montessorifromtheheart.com/podcast-1nasa-children-are-born-creative-geniuses

U.S. Department of Education. "Artificial Intelligence and the Future of Teaching and Learning," U.S. Department of Education, 2024. [Online]. Available: https://www.ed.gov/sites/ed/files/documents/aireport/ai-report.pdf

ReciteMe."AssistiveTechnologyfortheElderly," ReciteMe, June 2025. [Online]. Available: https://reciteme.com/news/assistive-technology-for-theelderly/

E-SchoolMontessori."NASADivergentThinkingCreativity Test by Dr. Land," Montessori From the Heart, June 2025. [Online]. Available: https://eschool.montessorifromtheheart.com/shownotes-1-nasa-land-children-are-born-creative-geniuses

S. Wang et al., "Artificial intelligence in education: A systematic literature review," Expert Systems with Applications,2024.

Wikipedia. "Assistive technology," Wikipedia, 2001. [Online]. Available: https://en.wikipedia.org/wiki/Assistive_technology

Ambleford."YourChildisLikelyaGenius,butNASAStudy SuggestTraditionalSchoolingWillMakeThemMediocre," Ambleford, December 2021. [Online]. Available: https://ambleford.com/your-child-is-likely-a-genius-butnasa-study-suggest-traditional-schooling-will-make-themmediocre/

K. Zhang et al., "AI technologies for education: Recent research & future directions," Computers and Education: ArtificialIntelligence,2021.

A. Sweeting et al., "The Role of Assistive Technology in Enabling Older Adults," PMC, July 2024. [Online]. Available: https://pmc.ncbi.nlm.nih.gov/articles/PMC11322690/

YourStory. "NASA's Study on children: How Traditional Schooling Kills Creativity," YourStory, October 2023. [Online]. Available: https://yourstory.com/2023/10/nasa-study-creativegenius-educational-impact

Department of Science and Technology, India. "Assistive tools, technologies, and techniques make healthcare facilities inclusive and accessible," DST India, 2018. [Online]. Available: https://dst.gov.in/assistive-toolstechnologies-and-techniques-make-healthcare-facilitiesinclusive-and-accessible

Nathan Baugh. "NASA researchers found 98% of 5-yearolds are creative geniuses," LinkedIn, June 2023. [Online]. Available: https://www.linkedin.com/posts/nathanbaugh_nasa-researchers-found-98-of-5-year-olds-activity7077634340339265536-nY9o

World Health Organization. "Assistive technology," WHO, 2024. [Online]. Available: https://www.who.int/newsroom/fact-sheets/detail/assistive-technology

The How to Live Newsletter. "How School Kills Wonder: NASA's Forgotten 1968 Study," The How to Live Newsletter, June 2021. [Online]. Available: https://www.thehowtolivenewsletter.org/p/nasastudy UserWay."AssistiveTechnologyExamplesforPeoplewith Disabilities," UserWay, August 2024. [Online]. Available: https://userway.org/blog/assistive-technology/

Symposium. "What We Know About Childhood Creativity Today," Symposium, 2024. [Online]. Available: https://symposium.org/wpcontent/uploads/2024/04/Striving-For-More-orThriving-With-Less-%E2%80%94-What-We.pdf

ATIA. "What is AT?," Assistive Technology Industry Association, October 2015. [Online]. Available: https://www.atia.org/home/at-resources/what-is-at/

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

NASA. "Creative Inventive Design and Research," NASA Technical Memorandum, 1994. [Online]. Available: https://ntrs.nasa.gov/api/citations/19940029213/downl oads/19940029213.pdf

Shower Buddy. "10 Assistive Technologies Available For Mobility Impairment," Shower Buddy, 2024. [Online]. Available: https://shower-buddy.com/blogs/news/10assistive-technologies-available-for-mobility-impairment

D.M. Bushnell. "Creativity, Ideation, Invention, and Innovation (CI3)," NASA, 2022. [Online]. Available: https://ntrs.nasa.gov/api/citations/20210025055/downl oads/NASA-TM-20210025055.pdf

Damco Group. "Edge AI for IT Leaders: Business Impact and Implementation," Damco Group, July 2025. [Online]. Available:https://www.damcogroup.com/blogs/edge-ai

eLearning Industry. "Data Privacy In EdTech: Ethical DilemmasAndSafeguards," eLearningIndustry,November 2023. [Online]. Available: https://elearningindustry.com/ethical-dilemmas-instudent-data-privacy-navigating-edtech-safeguards

Dev.to. "I created a Realtime Voice Assistant for my ESP32," Dev.to, November 2024. [Online]. Available: https://dev.to/fabrikapp/i-created-a-realtime-voiceassistant-for-my-esp-32-here-is-my-journey-part-1hardware-43de

Ultralytics. "Edge AI: Definition, How it Works & Applications," Ultralytics, September 2025. [Online]. Available:https://www.ultralytics.com/glossary/edge-ai

TrustArc. "EdTech – A Threat to Student Privacy?," TrustArc, January 2025. [Online]. Available: https://trustarc.com/resource/edtech-threat-to-studentprivacy/

YouTube."Wecancreateavoicecommandsystem!," That Project, May 2023. [Online]. Available: https://www.youtube.com/watch?v=3XbnzfBjmZk

Journal of Advanced Technology. "DEPLOYING LIGHTWEIGHTMOBILENETMODELSONEDGEDEVICES," JATIT, 2024. [Online]. Available: http://www.jatit.org/volumes/Vol103No11/11Vol103No 11.pdf

Madavi. "EdTech Data Privacy And Security: 5 Best Practices," Madavi, February 2024. [Online]. Available: https://madavi.co/edtech-data-privacy-and-security-5best-practices/

Instructables. "ESP32: Cellular Voice Recognition," Instructables, March 2018. [Online]. Available:

https://www.instructables.com/ESP32-Cellular-VoiceRecognition/

HueBits. "Top 10 Edge AI Projects for 2025: Real-Time Intelligence at the Edge," HueBits, June 2025. [Online]. Available: https://blog.huebits.in/top-10-edge-aiprojects-for-2025-real-time-intelligence-at-the-edge/

TechDay. "The Impact of Technology on Student Privacy and Data Security," TechDay, January 2024. [Online]. Available: https://techdayhq.com/blog/the-impact-oftechnology-on-student-privacy-and-data-security

Reddit. "Offline voice recognition on ESP32," r/esp32, 2022. [Online]. Available: https://www.reddit.com/r/esp32/comments/sklwaa/offl ine_voice_recognition_on_esp32/

Viso.ai."YOLOv7:APowerfulObjectDetectionAlgorithm," Viso.ai, November 2023. [Online]. Available: https://viso.ai/deep-learning/yolov7-guide/

ExploreLearning. "Safeguarding Student Data: Data Privacy in Education," Reflex, December 2023. [Online]. Available: https://reflex.explorelearning.com/resources/insights/ed tech-data-security-challenges

Hackster.io. "FASTEST! Speech to Text Conversion using ESP32," Hackster.io, January 2025. [Online]. Available: https://www.hackster.io/techiesms/fastest-speech-totext-conversion-using-esp32-a1bae0

S.M. Saqib et al., "Effectiveness of Teachable Machine, mobile net, and YOLO," Alexandria Engineering Journal, 2025.

U.S. Department of Education. "Privacy and Education Technology," StudentPrivacy.ed.gov, 2014. [Online]. Available: http://studentprivacy.ed.gov/privacy-andeducation-technology

YouTube. "Speech To Text using ESP32," YouTube, July 2023. [Online]. Available: https://www.youtube.com/watch?v=VoanFTpCTU4

Luxonis. "On-Device AI & Edge Inference for Vision," Luxonis, 2024. [Online]. Available: https://www.luxonis.com/edge-inference

Privacy International. "EdTech," Privacy International, November 2024. [Online]. Available: http://privacyinternational.org/learn/edtech

IRJET. "Manuscript Template," IRJET, 2024. [Online]. Available: https://www.irjet.net/download/docs/IRJETManuscript-Template.doc

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

Electronics For You. "ESP32 I2S Audio Processing Guide WithCodeandCircuit," ElectronicsForYou,January2024. [Online]. Available: https://www.electronicsforu.com/electronicsprojects/esp32-i2s-audio-processing

PyImageSearch. "Meet BLIP: The Vision-Language Model Powering Image Captioning," PyImageSearch, August 2025. [Online]. Available: https://pyimagesearch.com/2025/08/25/meet-blip-thevision-language-model-powering-image-captioning/

StudoCu. "Irjet-Manuscript-Template," StudoCu, October 2022. [Online]. Available: https://www.studocu.com/in/document/bharatividyapeeth-college-of-engineering-navimumbai/electrical-and-electronics/irjet-manuscripttemplate/30438935

Espressif. "Inter-IC Sound (I2S) - ESP32," ESP-IDF, 2024. [Online]. Available: https://docs.espressif.com/projects/espidf/en/stable/esp32/api-reference/peripherals/i2s.html

Hugging Face. "BLIP," Transformers Documentation, December 2022. [Online]. Available: https://huggingface.co/docs/transformers/en/model_doc /blip

IRJET. "Author Guidelines," IRJET, 2024. [Online]. Available:https://www.irjet.net/author-guidelines

DroneBot Workshop. "Sound with ESP32 - I2S Protocol," DroneBot Workshop, April 2023. [Online]. Available: https://dronebotworkshop.com/esp32-i2s/

© 2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008

| Page