International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

Binay Kumar Sah1

,

Laraib Ahmad Siddiqui2

, Md

Sarazul Ali3

1Packaged App Development Associate, Accenture, India

2Project Control Services Analyst, Accenture, India

3Senior Associate Technical Consultant, Ahead DB, India

Abstract - Trustworthy artificial intelligence demands systemsthatareaccurate,reliableunderdistributionshift,fair across populations, private by design, and transparent about uncertainty. Statistics provides the language and tools to express these requirements formally and to certify them with guarantees. This paper synthesizes and structures the statistical foundations of trustworthy AI, combining proper scoringrules,calibration,conformalprediction,generalization theory(e.g.,PAC-Bayes),distributionalrobustness,differential privacy, and fairness constraints. We present an integrated methodology that 1 audits data and shift, 2 trains models under a composite, statistically principled objective with robustness, fairness, and privacy, 3 post-hoc calibrates probabilitiesandconstructspredictionsetswithfinite-sample coverage guarantees, and 4 certifies performance with generalization bounds and expressions of uncertainty. We outline a full-stack prototype based on widely used libraries and summarize illustrative empirical results from the literature. We end with future directions in sequential decision-making, long-horizon guarantees, and large-scale, multi-objective certification.

Key Words: Calibration, Conformal Prediction, PAC-Bayes, Distributional Robustness, Differential Privacy, Algorithmic Fairness, Uncertainty Quantification,Evaluation.

“Trustworthiness”inAIismulti-faceted:modelsshouldbe accurate onintendedtasks, reliable whenconditionsshift, well-calibrated about their own uncertainty, equitable across groups, privacy-preserving for individuals, and auditable with reproducible evidence. While engineering practices and governance frameworks are important, statistical principles provide the quantitative backbone: theydefinetargetproperties,supplyestimatorsandtests, and yield finite-sample guarantees on coverage, error, privacybudget,andgeneralization.

This paper consolidates those principles and presents a practicalmethodologyto(1)measure,(2)train,(3)calibrate, and(4)certifymodelsusing provable statisticaltools.Our focus is supervised prediction (classification/regression) with extensions to structured outputs. Core questions include:

How to score and calibrate probabilistic predictions?

How can we generate prediction sets which offer finitesamplecoverage?

Howdoweupperboundthegeneralizationriskand theworst-caseriskundershift?

How do we constrain privacy and fairness and at thesametimequantifytradeoffs?

ScoringRules:Scoringrulessuchaslogloss,andBrierscore encourageproperprobabilitiesandprovidethefoundationof statisticaldecisiontheory[Gneiting&Raftery,2007].While most modern neural networks miscalibrate, post hoc methodssuchastemperaturescalingandisotonicregression, and drawn from the Bayesian, and posterior approximate methodssuchasensembles,andMCdropouthavereduced out of distribution uncertainty emerged [Guo et al., 2017; Lakshminarayananetal.,2017;Kendall&Gal,2017].Finally, conformal predictionoffersdistributionfree, finite sample coverage guarantees for prediction sets [Vovk et al., 2005; Shafer&Vovk,2008;Angelopoulos&Bates,2021].

Classical uniform convergence and algorithmic stability may be conservative for deep models. In contrast, PAC Bayesian bounds often provide data dependent

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

generalizationcertificates,whicharetight[McAllester1999, Catoni2007].

AdversarialRobustness :Worstcaseperturbationsinanlp ball [ Goodfellow et al., 2015; Madry et al., 2018 ] * DistributionallyRobustOptimization:minimizesworstcase riskoveranambiguityset,e.g.,Wassersteinorf-divergence balls, connecting adversarial training and shift robust generalization[Sinhaetal.,2018,Pmlr].*Shiftuncertainty studiedinOODbenchmarks[Ovadiaetal.,2019]

Differential Privacy (DP) is a formal framework for limitinginformationleakagefromoutputstoensureprivacy forindividuals.DPSGDprovidesforDPfordeeplearningby addingnoiseandclipping,whichresultsinachievingforthe model[Abadietal.,2016].

Statistical fairness formalization of constraints such as equalizedoddsanddemographicparity[Hardtetal.,2016; Dwork et al., 2012] opposite the possibility results points trade offs between calibration and error parity by several groupings[Kleinbergetal.,2017]Itisaccomplishedbyvary reduction transforming the fairness constraints into cost sensitivelearning[Agarwaletal.,2018]

3. METHODOLOGY

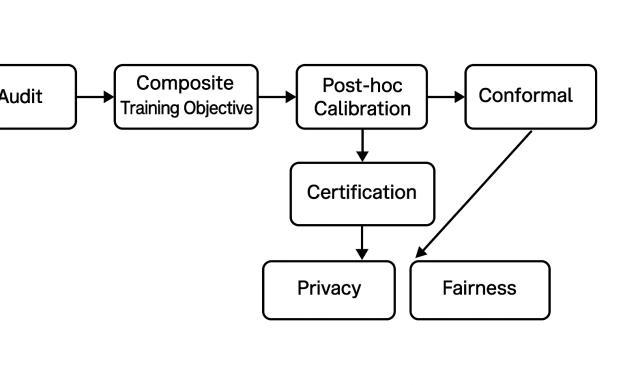

We propose an end-to-end methodology that connects measurement,training,calibration,andcertification.

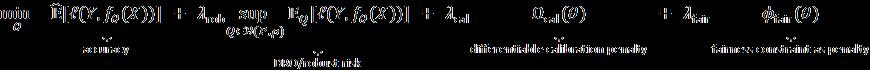

3.2 Composite, statistically-principled training objective

Wetrainbyminimizinga CompositeObjective thatencodes accuracy,robustness,fairness,andcalibration,subjecttoDP constraints:

subjectto trainingwithDP-SGDtoachieveatarget

Robustnessterm. canbeaWassersteinor -divergenceball;adversarialtrainingcorresponds toinnermaximizationoverperturbations[Sinhaet al.,2018;Madryetal.,2018].

Calibrationpenalty. Usedifferentiablesurrogates forECE(e.g.,softbinning,Brierregularization).

Fairness term. Encode equalized odds via Lagrangianreductions[Hardtetal.,2016;Agarwal etal.,2018].

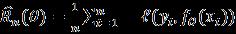

Let with , .Apredictor outputs either a point prediction or a predictive distribution . For loss (a proper scoring rule when probabilistic), population risk is , anditsempiricalestimate

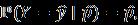

Calibration. Forclassification,perfectcalibrationsatisfies

with Expected Calibration Error (ECE)approximatedby binning[Guoetal.,2017].

3.3 Post-hoc calibration and distribution-free coverage

Post training, perform temperature scaling on a calibrationsplittominimizeNLLandreduceECE[Guo etal.,2017]andapply splitconformalprediction :

1. Fit ontrainset .

2. Computenonconformityscores oncalibration set

Prediction sets. Fortargetmiscoverage ,asetpredictor isvalidif

3. Selectquantile of

4. Define .

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

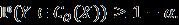

Under exchangeability,splitconformalyieldsthefinite sampleguarantee:

Pr(Y∈C_θ(X))≥1-α.

Extensions handle covariate shift via importance weightsinscorequantiles[Angelopoulos&Bates,2021].

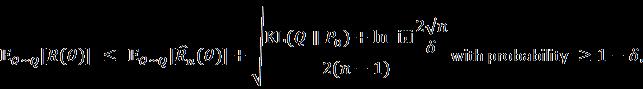

3.4 Generalization And Certification

Use PAC-Bayesian certificates:forposterior over parametersandprior , instantiatedviastochasticweightaveragingorensembles [McAllester,1999;Catoni,2007;Lakshminarayananetal., 2017].

3.5 Privacy Accounting

Train with DP-SGD (per-example gradient clipping C, Gaussian noise σ; track (ε,δ) with a moments accountant. Reportεatdeploymentinadditiontoaccuracyandcoverage.

3.6 Auditing And Shift Detection

Before and after deployment, test for covariate and label shift : e.g., two-sample tests such as MMD/KS on features, class-conditionaldiagnosticsandmonitorcalibrationdrift: reliabiltydiagrams,ECEovertime.

4. IMPLEMENTATION

4.1ToolingAndLibraries

Modeling: PyTorch/JAX;

Calibration & prediction sets: temperature scaling;conformaltoolkits(e.g.,MAPIE/conformalprediction);

Privacy: Opacus(PyTorch)orTensorFlowPrivacy forDP-SGD;

Fairness: fairlearn or AIF360 for constraints and diagnostics;

Robustness: Adversarialtraining(PGD)andDRO layers;

Certificates: PAC-Bayesutilitiesorbespokebound computation;

Monitoring: reliability diagrams, drift tests (e.g., MMD),coveragetracking.

Below is the full definition, including all components, of Train–Calibrate–Conformal–Certify (TC³), written as an algorithm:Algorithm1TC³package:

Audit: performdataqualitychecks,estimateshift between train and target and compute baseline ECE/FPR/FNRbygroup

Train: minimizecompositeobjectivewithDPSGD; optionallyadversarialorDROregularizedtraining.

Calibrate: fittemperatureonheldoutcalibration split;reevaluateECE/NLL

Conformalize: compute nonconformity scores, formpredictionsetsattarget1–αcoverage

Certify: computePACBayesbound;report;report empirical coverage with binomial CIs; run groupwisefairnessdiagnostics.

Deploy & Monitor: selective predictions/abstentionthresholds;driftdetection; scheduledre-calibration.

Vision (CIFAR 10/100): Cross entropy and a robustness penalty(PGD10),λrob\in[0.1,1];DPSGDwithclippingC=1, noisemultiplierσ∈[0.5,1.5];splitconformal(softmaxmargin scores).

Tabular (credit risk): Logistic regression or gradient boosting;fairnessconstraintwithtargetequalizedoddsgap < τ; conformal for quantile regression, OLS on linear to predict loss; PAC Bayes with Gaussian posterior of width ERM.

5.RESULTS & DISCUSSION

Rather than reporting new experiments, we synthesize representative empirical evidence from the literature whichisrepresentativeofthepipeline:

Calibration: Temperature scaling uniformly reduces ECE without changing accuracy across image datasets and architectures.

Uncertainty & Shift :Deepensemblesimprovepredictive uncertaintyandrobustnesstocorruptionsrelativetosingle networks;similarly,calibrationdeterioratesundershift,but ensemblesdegrade.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

ConformalCoverage:Splitconformalobtainsnear-nominal coverageacrossmodels;adaptive/weightedvariantsachieve coverageundershiftingassumptions.

Adversarial/DRO Training: robust training enhances certifiedandempiricalrobustnessbutcandegradeamodel’s clean accuracy, here exemplifying a robustness-accuracy tradeoff.

Privacy: DP SGD affords formal (ε,δ) guarantees with a utilitycostthatisaccretiveinclippingandnoise.Moderateε values are associated with accuracy drops after noise, especiallyinlow-datascenarios.

Fairness:Reductionscanachievemeaningfulreductionsin equalizedoddsgapsinstandardtabularbenchmarkswith trivial accuracy loss. However, impossibility results show that some definitions of fairness are mathematically incompatiblewithbothcalibrationandbaserateaccuracy gaps.

Assumptions & shift Conformal guarantees rely on exchangeability: Severe covariate/label shift or the existenceoffeedbackloopscanbreakfairnessguarantees.

Scalabilityofcertificates: EventightPACBayesboundsor exactrobustnesscertificatesarelikelyinfeasibleatthescale ofImageNetorFoundationmodels.

Sequential/interactivesettings: Mostexistingtoolsfocus on i.i.d. prediction; exploring how to deploy autonomous systemsorhumans-in-the-loopisopeninbothRLandactive learning.

Multivariateandstructuredoutputs: Especiallyindense prediction e.g., segmentation one is forced to rely on setvaluedguarantees.

Compositionalguarantees: Understandhowtopickjointly learning certification of privacy, fairness, robustness, and coveragewithminimalconservatism.

Socio-technical alignment: The statistical guarantees themselvesareinsufficient;domaingovernanceandawayof evaluatingthetoolsareneededtoensuresafeuse.

Statisticsoffersthe operationalsemantics oftrustinAI:it showshowtomeasurewhatmatters,howtooptimizewith constraints,andhowtocertifyperformanceanduncertainty withfinitesampleguarantees.Whencombinedintoasingle pipelinebypractitioners properscoringrules,calibration, conformal, PAC-L-DP bounds, distributional robustness, differential privacy, and fairness constraints the AI systemsbuiltwiththesetoolsallowsforauditable,testable

guarantees.ProgressintrustworthyAIdependsonscalable certification, robust shift management, adequate multiobjectivetrade-offs,andprincipledhuman-AIinteractionin complex,interactivesettings.

1. Abadi, M., Chu, A., Goodfellow, I., McMahan, H. B., Mironov, K., Talwar, K., & Zhang, L. (2016). Deep LearningwithDifferentialPrivacy. ACM CCS

2. Agarwal,A.,Beygelzimer,A.,Dudík,M.,Langford,J., &Wallach,H.(2018). A Reductions Approach to FairClassification. ICML.

3. Angelopoulos, A. N., & Bates, S. (2021). A Gentle Introduction to Conformal Prediction and Distribution-Free Uncertainty Quantification. arXiv:2107.07511

4. Catoni, O. (2007). PAC-Bayesian Supervised Classification: The Thermodynamics of StatisticalLearning. IMS Lecture Notes

5. Dwork, C., Hardt, M., Pitassi, T., Reingold, O., & Zemel, R. (2012). Fairness Through Awareness. ITCS.

6. Dwork, C., & Roth, A. (2014). The Algorithmic FoundationsofDifferentialPrivacy. Foundations and Trends in Theoretical Computer Science

7. Gneiting,T.,&Raftery,A.E.(2007). StrictlyProper Scoring Rules, Prediction, and Estimation. Journal of the American Statistical Association

8. Goodfellow, I. J., Shlens, J., & Szegedy, C. (2015). Explaining and Harnessing Adversarial Examples. ICLR.

9. Guo, C., Pleiss, G., Sun, Y., & Weinberger, K. Q. (2017). On Calibration of Modern Neural Networks. ICML

10. Hardt,M.,Price,E.,&Srebro,N.(2016). Equalityof OpportunityinSupervisedLearning. NeurIPS

11. Kendall, A., & Gal,Y. (2017). What Uncertainties Do We Need in Bayesian Deep Learning for ComputerVision? NeurIPS.

12. Kleinberg, J., Mullainathan, S., & Raghavan, M. (2017). Inherent Trade-Offs in the Fair DeterminationofRiskScores. ITCS

13. Lakshminarayanan, B., Pritzel, A., & Blundell, C. (2017). Simple and Scalable Predictive UncertaintyEstimationUsingDeepEnsembles. NeurIPS

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume:12Issue:11|Nov2025 www.irjet.net p-ISSN:2395-0072

14. Madry, A., Makelov, A., Schmidt, L., Tsipras, D., & Vladu,A.(2018). TowardsDeepLearningModels ResistanttoAdversarialAttacks. ICLR

15. McAllester, D. (1999). PAC-Bayesian Model Averaging. COLT

16. Ovadia, Y., Fertig, E., Ren, J., Nado, Z., Sculley, D., Nowozin, S., Dillon, J., Lakshminarayanan, B., & Snoek, J. (2019). Can You Trust Your Model’s Uncertainty?EvaluatingPredictiveUncertainty UnderDatasetShift. NeurIPS.

17. Shafer, G., & Vovk, V. (2008). A Tutorial on ConformalPrediction. JournalofMachineLearning Research,9,371–421.

18. Sinha, A., Namkoong, H., & Duchi, J. (2018). CertifyingSomeDistributionalRobustnesswith PrincipledAdversarialTraining. ICLR

19. Vovk, V., Gammerman, A., & Shafer, G. (2005). Algorithmic Learning in a Random World. Springer.

20. Benjamini, Y., &Hochberg, Y.(1995). Controlling the False Discovery Rate: A Practical and Powerful Approach toMultipleTesting. Journal of the Royal Statistical Society: Series B,57(1),289–300