International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056


J Supreetha Sri¹, Nishika Timilsina², Kundan Jha³, Rahul Trivedi⁴, Saismaran Revinipati⁵, Sannidhya Ghosh⁶

¹ National Model Senior Secondary School, 14-B, Kalluri Nagar, Peelamedu, Coimbatore - 641016, India

² Kathmandu Model Secondary School, Bagbazar, Kathmandu 44600, Nepal

³ Chirec International, 1-55/12, Botanical Garden Rd, Sri Ram Nagar, Kondapur, Telangana 500084, India

⁴ S. N. Kansagra School, University Rd, opposite Akashwani Quarter, Panchayat Nagar, Rajkot, Gujarat 360005, India

⁵ Chirec International, 1-55/12, Botanical Garden Rd, Sri Ram Nagar, Kondapur, Telangana 500084, India

⁶ Phoenix Greens School of Learning, Kokapet, Hyderabad, Telangana 500075, India

Abstract -

Phishing attacks, which frequently trick users with highly sophisticated messages, continue to be a serious threat to digital communication platforms. This study presents PhishGuard AI, a multilingual, AI-powered phishing detection system that combines a user-friendly Chrome Extension with a transformer-based language model. The system architecture consists of a lightweight frontend for real-time user interaction and a FastAPI backend housing optimised models. Two publicly accessible datasets, the SMS Spam Collection and a Phishing Email dataset, were selected and preprocessed to train XLM-RoBERTa for binary classification, and mT5 was used to generate multilingual explanations. The training pipeline made use of Hugging Face's Trainer API and the AdamW optimiser, together with a preprocessing workflow that included data cleaning, tokenization, and padding. Because the datasets are imbalanced, model performance was evaluated using accuracy, precision, recall, F1-score, and ROC-AUC. Data encryption, local storage protocols, and secure API design were among the ethical measures incorporated. Overall, PhishGuard AI improves user protection and digital literacy by correctly detecting phishing attempts and informing users with concise, relevant explanations.

Keywords - Cybersecurity, Machine Learning, Natural Language Processing (NLP), Neural Networks, Phishing Detection, XLM-RoBERTa

With the integration of digital technologies into our everyday lives, greater convenience and constant connectivity have been enabled.

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072 © 2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008 Certified Journal | Page417

At the same time, new opportunities for cyber threats, especially phishing scams, have been created [1]. In these scams, individuals are tricked into providing personal information, downloading harmful software, or making financial mistakes. In 2024 alone, global financial losses caused by scams were estimated to exceed $1.03 trillion [2]. In addition to the financial impact, risks to identity and personal privacy have increased significantly [3].

Traditionally, protection against such threats has been provided through spam filters and blacklist-based systems. While these tools can provide some level of protection, their effectiveness has steadily declined as phishing techniques have evolved [4]. Messages are now crafted to mimic trusted brands and use convincing language to bypass conventional filters. Because of this, people are frequently left vulnerable to these attacks [5]. To prevent this, more intelligent and advanced systems that can identify subtle differences in language and behaviour are required.

Among the most affected groups are teenagers and seniors [6]. In many cases, teens are exposed to new digital platforms before they are aware enough to detect scams. Seniors, though often cautious, may not be familiar with the latest online communication trends [7]. Hence, the ability to recognize scams is limited for both groups. This highlights the need for tools that not only detect threats but also educate users so that they can develop safer habits [8].

To meet this need, an AI-powered tool was developed to detect phishing and scam messages [9]. To increase its educational value, rather than simply marking a message as phishing, the tool also provides insight into the reasoning behind its classification to help users avoid similar threats in the future [10]. This solution works as a user-friendly browser extension, integrating directly with popular email platforms to offer immediate protection and education [11]. Through this combination of AI models and a focus on user accessibility, we aim to foster a safer and more informed online environment [12].

In short, an AI-driven solution has been proposed in which phishing messages are detected by an AI model and explained clearly [13][14]. In doing so, user awareness is enhanced and future susceptibility to online scams is reduced [15]. The aim of this paper is to detail the development and evaluation of this AI-powered browser extension, demonstrating its effectiveness in detecting phishing attempts and educating users on identifying such threats.

Thissectionelaboratesonthecomprehensivedesignand methodological approach underpinning our AIpowered

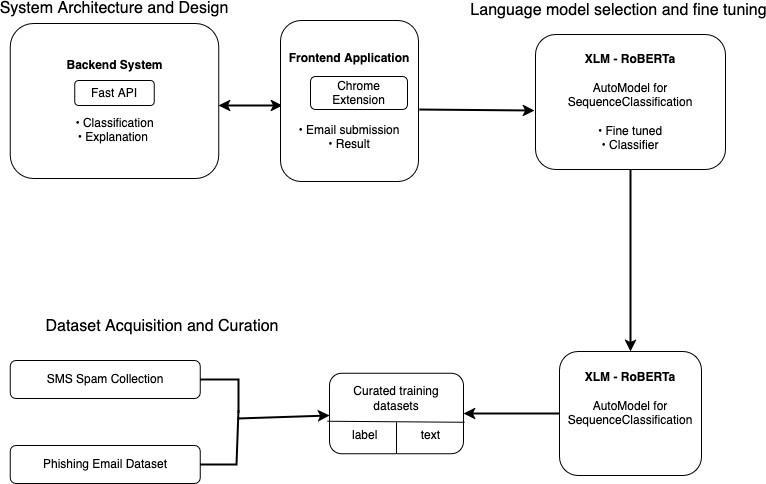

phishing detection system, PhishGuard AI. We detail the dual-component architecture consisting of a robustPython-basedbackendAPIserverand an userfriendly Chrome Extension frontend. The subsequent subsections outline the meticulous process of dataset collectionand curation, ensuring our model is trainedon diverse and representative examples of both legitimate and malicious messages. Wethen delveinto the developmentandimplementationofourchosen transfer learningmodel, XLM-RoBERTa-base,highlighting its finetuning for specializedphishingdetection, complemented byGoogle's mT5-small forexplanation generation. Furthermore, this section details the evaluation and validation metrics employed to assess the system's performance,particularlyinhandlingimbalanceddatasets. Finally, we discuss the practical aspects of system deployment and user interaction, alongside the critical ethical,privacy,andsocialconsiderationsthathaveguided every stage of our system's development, ensuring responsibleandsecuredigitalliteracyenhancement.

Our AI-powered phishing detection system employs a transfer learning approach, i.e. using pre-trained transformer models and fine-tuning them for our specialized needs [16]. This allows us to use the existing linguistic knowledge within these models, while also adapting them to detect the subtle signs of phishing attempts. The overall system is structured into two primary and interconnected components: a Python-based backend API server and a user-friendly Chrome Extension frontend. This dual-component architecture ensures powerful AI capabilities while offering an accessible user experience. This directly contributes to our goal of enhancing digital literacy and safety.

Backend System: The backend system, developed in Python, uses the FastAPI framework to create a high-performance and efficient API server. Its primary role is to host the fine-tuned language models responsible for message classification and explanation generation [17]. This server manages all AI inference requests, efficiently processing incoming text messages to determine whether they are safe or scam. The backend is designed for scalable and secure communication, acting as the central processing unit for the AI logic. It ensures that the complex computations are handled server-side rather than burdening the client-side application. Key functionalities of the backend include endpoints for classifying email content and generating explanations for detected phishing attempts, with incoming requests validated using Pydantic models to ensure data integrity [18][19].
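The request validation described above can be illustrated with a dependency-free sketch. It is not the authors' implementation: Pydantic and FastAPI are replaced here by a standard-library dataclass so that the example runs on its own, and the field name `text`, the `MAX_CHARS` limit, the response keys, and the keyword-based `toy_classifier` are all hypothetical stand-ins.

```python
import json
from dataclasses import dataclass
from typing import Callable

MAX_CHARS = 10_000  # hypothetical upper bound on accepted message length


@dataclass(frozen=True)
class ClassifyRequest:
    """Stand-in for a Pydantic request model (the field name is an assumption)."""
    text: str

    def __post_init__(self) -> None:
        # Reject wrong types, empty messages, and oversized payloads up front.
        if not isinstance(self.text, str):
            raise ValueError("text must be a string")
        if not self.text.strip():
            raise ValueError("text must not be empty")
        if len(self.text) > MAX_CHARS:
            raise ValueError("text is too long")


def handle_classify(raw_body: str, classifier: Callable[[str], float]) -> dict:
    """Validate a JSON body, then return a label and score as a dict."""
    try:
        payload = json.loads(raw_body)
        if not isinstance(payload, dict):
            raise ValueError("body must be a JSON object")
        request = ClassifyRequest(text=payload.get("text"))
    except ValueError as exc:  # json.JSONDecodeError is a ValueError subclass
        return {"status": 422, "error": str(exc)}
    score = classifier(request.text)
    label = "scam" if score >= 0.5 else "safe"
    return {"status": 200, "label": label, "score": score}


def toy_classifier(text: str) -> float:
    """Hypothetical placeholder for the fine-tuned model's phishing probability."""
    return 0.9 if "verify your account" in text.lower() else 0.1


ok = handle_classify('{"text": "Please verify your account now"}', toy_classifier)
bad = handle_classify('{"text": "   "}', toy_classifier)
```

Rejecting malformed input before it reaches the model keeps inference code free of defensive checks, which is the role the text assigns to the Pydantic models.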

Frontend Application: The frontend application is a Chrome Extension designed for seamless integration into the user's web browser, particularly for email services like Gmail [20]. This extension has a clear and minimalist user interface accessed via a browser popup. It enables users to submit email content for analysis and receive immediate classification results (safe or suspicious), along with a clear understanding of the AI's detection rationale. The extension is responsible for intercepting email content from web pages using content scripts, communicating with the backend API via background scripts, and displaying the results within its popup interface. Additionally, upon detecting a phishing attempt, the extension locally records the sender's email address within the user's browser storage, which helps in maintaining a personalized record of suspicious origins.

Initially, we used the SMS Spam Collection dataset, sourced from Kaggle [21]. This dataset mainly consists of SMS messages, which offered a foundational set of labeled examples for basic spam detection. It contains a total of 5,169 messages, with a class distribution of approximately 87% safe ('ham') messages and 13% spam messages. This initial dataset provided a valuable, though smaller, collection of text-based deceptive messages to begin training our model.


This flowchart shows the outline of our phishing detection system. Users access a Chrome extension from which the email content can be submitted to a FastAPI backend for classification and explanation. The backend has a fine-tuned XLM-RoBERTa model optimized for phishing detection. The model is trained using curated datasets (detailed in Section 2.2) from publicly available sources like Kaggle. The datasets include SMS spam and phishing emails.

For the training of our AI-powered phishing detection tool, we utilized two distinct, publicly available datasets: the SMS Spam Collection and the Phishing Email dataset. Acquiring both of these datasets was crucial to ensure that the model was trained on diverse and representative examples of both legitimate and malicious messages. The final curated datasets were structured into a consistent format with two columns: “label”, with '0' indicating legitimate/ham messages and '1' indicating spam/phishing messages, and “text”, containing the message content.

Subsequently, to enhance the model's ability to identify sophisticated phishing attempts, we used a significantly larger Phishing Email dataset, also publicly available on Kaggle [22]. This dataset contains 18,597 entries, focusing mainly on email-based phishing attempts. Its class distribution is 61% safe emails and 39% phishing emails. The inclusion of this larger, email-centric dataset allowed our model to learn from a broader range of phishing tactics, including those commonly found in email. This helped improve its generalization capabilities beyond SMS-specific patterns.

The combination of these two datasets provided a large and varied corpus for training. While the SMS Spam Collection offered a solid baseline for detecting general deceptive messaging, the Phishing Email dataset specifically targeted the more complex phishing emails, enabling the development of a more comprehensive detection system.
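As a rough check on the combined corpus, the class counts can be estimated from the sizes and approximate percentages quoted above. The sketch below is illustrative only: because the percentages are rounded, the resulting counts are estimates, and it assumes the two datasets are simply concatenated, which the text does not state explicitly.

```python
# Dataset sizes and approximate spam/phishing shares as quoted in the text.
DATASETS = {
    "SMS Spam Collection": {"size": 5169, "positive_share": 0.13},
    "Phishing Email": {"size": 18597, "positive_share": 0.39},
}


def combined_balance(datasets: dict) -> tuple[int, int, float]:
    """Return (total messages, estimated positives, positive share)."""
    total = sum(d["size"] for d in datasets.values())
    # Rounded percentages give estimated, not exact, per-dataset counts.
    positives = sum(round(d["size"] * d["positive_share"]) for d in datasets.values())
    return total, positives, positives / total


total, positives, share = combined_balance(DATASETS)
print(f"{total} messages, ~{positives} spam/phishing ({share:.1%})")
```

Under these assumptions, roughly a third of the combined corpus is spam or phishing, so the merged data remains imbalanced toward safe messages.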

The training data is detailed in Table 1 below:

Table 1: Distribution of Training data

After experimenting with several models, we found XLM-RoBERTa-base to be the best-suited foundational language model for our core phishing email classification task [23][24][25]. Specifically, we used the pre-trained checkpoint from Hugging Face's Transformers library [26]. XLM-RoBERTa's strong performance in text classification, together with its contextual understanding and semantic detection capabilities across multiple languages, makes it well suited for identifying subtle and often multilingual phishing attempts [27]. Whereas models like DistilBERT and TinyBERT trade some accuracy for computational efficiency by reducing the number of layers and parameters, and offer limited fine-tuning flexibility, RoBERTa prioritises accuracy and allows considerable fine-tuning flexibility, which makes it more accurate [28].

The AutoModelForSequenceClassification architecture, a fine-tuned version of XLM-RoBERTa, uses the information from our training data to classify emails as phishing or ham. This model adds a classification head directly on top of the pre-trained XLM-RoBERTa encoder [29]. This head applies a linear layer to the final hidden state of the [CLS] token (a special token for classification tasks), categorizing the input as phishing or ham. The model is then fine-tuned on our curated and labeled phishing datasets, which enables the classification head to learn decision boundaries specific to phishing characteristics. This fine-tuning process aligns the model’s general language understanding with the task-specific requirement of detecting malicious intent. To prevent overfitting during this crucial phase, a low learning rate, a small batch size, and early stopping mechanisms are employed [30].
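In simplified form, the classification step described above can be written as follows. The symbols are introduced here for illustration and do not appear in the original text: $h_{\text{[CLS]}} \in \mathbb{R}^{d}$ is the final hidden state of the classification token, $W \in \mathbb{R}^{2 \times d}$ and $b \in \mathbb{R}^{2}$ are the parameters of the linear layer, and $k = 0$ and $k = 1$ denote ham and phishing respectively. The head as implemented in the library may include additional layers such as dropout, so this is a sketch of the idea rather than an exact specification.

```latex
\begin{aligned}
z &= W\,h_{\text{[CLS]}} + b, \\
P(y = k \mid x) &= \frac{\exp(z_k)}{\exp(z_0) + \exp(z_1)}, \qquad k \in \{0, 1\}, \\
\hat{y} &= \arg\max_{k} P(y = k \mid x)
\end{aligned}
```

Fine-tuning then adjusts the encoder together with $W$ and $b$, typically by minimising the cross-entropy between $P(y \mid x)$ and the 0/1 labels.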


Via this method, the XLM-RoBERTa model adapts to detect the subtle patterns in phishing language, which usually include messages of urgency, manipulation, or unclear intent. Such characteristics make the model highly effective for real-time use as an email filtering system that requires accurate classification based on contextual understanding.

In addition to the classification model, our system utilizes Google's mT5-small for generating human-readable explanations of detected phishing attempts. The mT5 (Multilingual Text-to-Text Transfer Transformer) model was chosen for its robust performance in text generation tasks across many languages [31]. This aligns with our system's multilingual classification capabilities and ensures that explanations can be provided in the same language as the detected phishing email. This dual-model approach allows for both precise detection and comprehensive user education, enhancing the overall utility of our PhishGuard AI system.

Before the model training process is detailed, it is important to describe the preparatory steps taken with the raw data. First, the data is cleaned by removing all rows with missing or null values using the ‘dropna()’ method. This ensures the consistency and completeness of the data, preventing possible runtime errors and improving the quality of the training process [32]. After the data is cleaned, the raw email text is converted into a numerical format that can be processed by the RoBERTa model through tokenization using ‘RobertaTokenizer’ from Hugging Face’s Transformers library [33]. Tokenization begins with the raw text being broken down into sub-word units, or tokens. Then special tokens such as CLS and SEP are added. The tokenizer also generates attention masks, which distinguish the tokens carrying actual data from padding, along with token type IDs that help the model separate the different segments of the input where necessary [34].

To ensure that messages longer than RoBERTa’s maximum input length are truncated instead of causing an overflow error, ‘truncation=True’ is configured in the tokenizer. After tokenization is complete, the categorical text labels are converted to numerical labels, 0s and 1s, to differentiate safe messages from malicious ones. Such models require numerical labels to compute loss and perform classification.
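The cleaning and label-encoding steps can be sketched without any external library. This is an illustration rather than the authors' code: the text describes pandas' ‘dropna()’, whereas the example below filters plain tuples, and the label strings in `LABEL_MAP` are assumptions about how the raw files are labeled.

```python
from typing import Optional

# Mapping from textual labels to the 0/1 scheme described in the text.
# The exact label strings in the raw files are an assumption.
LABEL_MAP = {"ham": 0, "safe": 0, "spam": 1, "phishing": 1}


def clean_and_encode(rows: list[tuple[Optional[str], Optional[str]]]) -> list[dict]:
    """Drop rows with missing fields and convert text labels to integers."""
    cleaned = []
    for label, text in rows:
        # Similar in spirit to dropna(): skip rows with a missing value.
        # Blank text is skipped as well, which is an added assumption.
        if label is None or text is None or not text.strip():
            continue
        key = label.strip().lower()
        if key not in LABEL_MAP:
            continue  # unknown label: skip rather than guess
        cleaned.append({"label": LABEL_MAP[key], "text": text.strip()})
    return cleaned


raw = [
    ("ham", "See you at lunch?"),
    ("spam", "You won a prize! Click here."),
    (None, "row with a missing label"),
    ("Phishing", None),
]
print(clean_and_encode(raw))
```

Only the first two rows survive, with labels 0 and 1 respectively, matching the two-column “label”/“text” format described earlier.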

The ‘DataCollatorWithPadding’ utility can also be used to pad each batch only to the length of its longest input, avoiding the inefficiency of fixed-length padding across all samples. Steps like dynamic padding and structured tokenization are essential to achieving high model performance in phishing detection, as they ensure that the input data is uniformly structured and processed by the RoBERTa model [35].
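Truncation and dynamic padding can be illustrated with toy token IDs. The sketch below mimics the behaviour described above in plain Python; it does not call the Hugging Face utilities, and `PAD_ID`, `MAX_LENGTH`, and the token IDs themselves are made-up values.

```python
PAD_ID = 1        # hypothetical padding token ID
MAX_LENGTH = 6    # tiny limit so truncation is visible; real models allow far more


def truncate(ids: list[int], max_length: int = MAX_LENGTH) -> list[int]:
    """Cut a token-ID sequence down to the maximum input length.

    A real tokenizer would also keep the closing special token; this toy
    version simply slices the sequence.
    """
    return ids[:max_length]


def pad_batch(batch: list[list[int]], pad_id: int = PAD_ID) -> dict:
    """Pad every sequence to the longest one in this batch only."""
    longest = max(len(ids) for ids in batch)
    input_ids, attention_mask = [], []
    for ids in batch:
        padding = longest - len(ids)
        input_ids.append(ids + [pad_id] * padding)
        # 1 marks real tokens, 0 marks padding the model should ignore.
        attention_mask.append([1] * len(ids) + [0] * padding)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


# Token IDs below are made up; a real tokenizer would produce them.
sequences = ([0, 11, 12, 2], [0, 21, 22, 23, 24, 25, 26, 2], [0, 31, 2])
encoded = [truncate(ids) for ids in sequences]
batch = pad_batch(encoded)
```

Because the padded length follows the longest sequence in each batch, a batch of short messages stays short instead of being padded to a global maximum.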

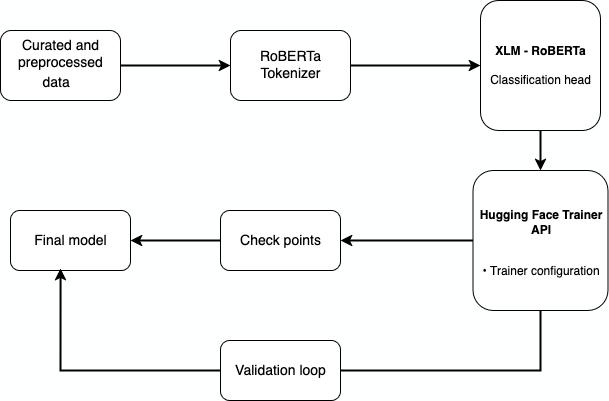

This flowchart shows our model training pipeline. Curated phishing and safe emails are first tokenized and then passed to the XLM-RoBERTa model with a classification head. Hugging Face’s Trainer API handles batching, optimization, and learning rate scheduling, thereby managing the entire training process. After training, the model is saved and evaluated using metrics like F1 score and ROC-AUC.

The training strategy for our transformer-based phishing detection model involved a structured pipeline. The dataset is split into training and testing sets at an 80/20 ratio. This ensures that the model learns from the major portion of the data while the remaining portion is used for its objective evaluation [36]. Such use of balanced and proportional sampling helps in preserving the distribution of phishing and legitimate emails.
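One way to obtain an 80/20 split that preserves the class distribution is a stratified split, sketched below with the standard library only. Whether the authors used exactly this procedure is not stated; the fixed seed and the toy 70/30 data are assumptions made for illustration.

```python
import random
from collections import defaultdict


def stratified_split(rows: list[dict], test_fraction: float = 0.2, seed: int = 42):
    """Split rows into train/test sets while keeping each label's share."""
    by_label = defaultdict(list)
    for row in rows:
        by_label[row["label"]].append(row)

    rng = random.Random(seed)  # fixed seed (an assumption) for repeatability
    train, test = [], []
    for label_rows in by_label.values():
        shuffled = label_rows[:]
        rng.shuffle(shuffled)
        n_test = round(len(shuffled) * test_fraction)
        test.extend(shuffled[:n_test])
        train.extend(shuffled[n_test:])
    return train, test


# Toy data with an illustrative 70/30 safe-to-phishing ratio.
rows = [{"label": 0, "text": f"safe {i}"} for i in range(70)]
rows += [{"label": 1, "text": f"phish {i}"} for i in range(30)]
train, test = stratified_split(rows)
```

Splitting each class separately means both sets contain 30% phishing examples here, so the test metrics are measured on the same class balance the model was trained on.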

Hugging Face's Trainer API was used in fine-tuning our model, making the process easier and more consistent for transformer architectures [37]. With this framework, the boilerplate training code is abstracted away, and evaluation, logging, and checkpoint saving are seamlessly integrated into the training loop. A set of hyperparameters, configured in trainer.py, controls the training. Specifically, the model is trained for 3 epochs with a batch size of 8 for both training and evaluation. Evaluation is performed after each epoch, and model checkpoints are saved every 500 steps, with at most 2 checkpoints retained. We log metrics every 100 steps to monitor training progress and detect overfitting.
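To make these settings concrete, the sketch below collects them in a plain dictionary and derives the resulting step counts. The key names follow fields of Hugging Face's `TrainingArguments`, but the dictionary is only an illustration and is not passed to the library; the training-set size of 19,000 is a hypothetical figure, and a single device without gradient accumulation is assumed.

```python
import math

# Values stated in the text; the key names mirror TrainingArguments fields.
# Per-epoch evaluation is omitted because its argument name varies by version.
TRAINING_CONFIG = {
    "num_train_epochs": 3,
    "per_device_train_batch_size": 8,
    "per_device_eval_batch_size": 8,
    "save_steps": 500,
    "save_total_limit": 2,
    "logging_steps": 100,
}


def training_schedule(num_train_examples: int, config: dict) -> dict:
    """Derive step counts for a single-device run without gradient accumulation."""
    steps_per_epoch = math.ceil(num_train_examples / config["per_device_train_batch_size"])
    total_steps = steps_per_epoch * config["num_train_epochs"]
    periodic_checkpoints = total_steps // config["save_steps"]
    return {
        "steps_per_epoch": steps_per_epoch,
        "total_steps": total_steps,
        "periodic_checkpoints": periodic_checkpoints,
        "checkpoints_kept": min(periodic_checkpoints, config["save_total_limit"]),
        "log_events": total_steps // config["logging_steps"],
    }


# 19,000 training examples is a hypothetical figure, not one reported in the paper.
schedule = training_schedule(19_000, TRAINING_CONFIG)
```

With these inputs the run spans 7,125 optimisation steps, so the limit of 2 retained checkpoints keeps disk usage bounded while metrics are still logged about 71 times.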

The Trainer class employs default settings where they are not explicitly overridden. These defaults include the AdamW optimizer and a linear learning rate scheduler [38]. These configurations provide stable convergence and are widely used in fine-tuning transformers, helping to maintain robustness during deployment on real-world phishing emails [39]. Once the training is complete, the fine-tuned model is saved for deployment.
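A linear learning rate schedule of the kind mentioned above can be sketched as a small function. The base learning rate and step count below are illustrative values only, since the paper does not report them, and warmup is disabled by default.

```python
def linear_schedule(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Learning rate at a given step: optional linear warmup, then linear decay to zero."""
    if warmup_steps and step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# base_lr and total_steps are illustrative; the paper does not report them.
BASE_LR = 5e-5
TOTAL_STEPS = 1000
for step in (0, 250, 500, 1000):
    print(step, linear_schedule(step, TOTAL_STEPS, BASE_LR))
```

The rate starts at its full value and shrinks in proportion to the remaining steps, reaching zero at the end of training, which tends to make the final updates to the pre-trained weights small.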

To effectively evaluate the performance of our classification model, especially in the context of an imbalanced dataset (a common characteristic of phishing email collections), we utilize evaluation metrics beyond simple accuracy. These metrics provide a more nuanced understanding of the model's strengths and weaknesses, particularly in identifying the minority class (phishing emails) [40].

The primary metrics employed are explained below:

● Accuracy: It measures how many predictions were correct overall.

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

Where…

- TP (True Positives): Correctly predicted positive instances.

- TN (True Negatives): Correctly predicted negative instances.

- FP (False Positives): Incorrectly predicted positive instances.

- FN (False Negatives): Incorrectly predicted negative instances.

● Precision: This measures the proportion of correctly predicted positive instances out of all instances predicted as positive.

$$\text{Precision} = \frac{TP}{TP + FP}$$

● Recall (Sensitivity): This measures the model's ability to identify all actual positive instances.

$$\text{Recall} = \frac{TP}{TP + FN}$$

● F1-Score: This is the harmonic mean of precision and recall. It provides a balanced measure of a model's performance that is especially valuable with imbalanced class distributions.

$$F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$$

● ROC-AUC (Receiver Operating Characteristic - Area Under the Curve): This evaluates the model's ability to differentiate between the positive and negative classes across various classification thresholds [41]. The ROC curve plots the True Positive Rate (Recall) against the False Positive Rate (FPR) at various threshold settings. The Area Under the Curve (AUC) then shows the overall differentiating power. This ranges from 0.5 (random guessing) to 1.0 (perfect differentiation).

Where…

- FPR (False Positive Rate): $\text{FPR} = \frac{FP}{FP + TN}$

The PhishGuard AI system is designed for seamless integration into the user's daily online activities, primarily through a user-friendly Chrome Extension. This frontend application ensures an accessible user experience, directly contributing to our goal of enhancing digital literacy and safety.

The Chrome Extension provides a clear and minimalist user interface, accessed via a browser popup. It enables users to easily submit email content for analysis and receive immediate classification results (safe or suspicious). Crucially, the system also provides clear, educational explanations of the AI's detection rationale, helping users understand why a message is flagged as suspicious. This feature, powered by Google's mT5-small model, aligns with our objective of promoting digital literacy by educating users on subtle language patterns and behavioral cues indicative of phishing attempts.

For ongoing user protection and learning, upon detecting a phishing attempt, the extension locally records the sender's email address within the user's browser storage. This personalized record of suspicious origins assists users in building better online habits and recognizing potential threats over time. The backend API server efficiently handles the complex AI inference requests, ensuring that the classification and explanation generation processes are swift and do not burden the user's client-side application, thus maintaining a smooth and responsive user interaction.


The data will be encrypted end to end in order to ensure the privacy of the user, and will not be stored on external databases. All datasets were obtained from open sources, which will be vetted, and the training data to be used will be anonymized [42].

To prevent data leakage, the extension will undergo regular security audits, and any unusual patterns and activity that are logged will be monitored continuously. Because the data will be encrypted, it will remain protected at rest in the event of a data compromise. Data exchanged between the user and the extension will be stored locally in the user's browser environment, and if syncing is turned on across devices, it will run over secure channels that will be maintained to ensure proper functionality [43].

FastAPI offers fast performance and helps secure the API endpoints by validating incoming requests with Pydantic models, which declare the restrictions and requirements a request must meet in order to pass through. Data in transit is also encrypted through the use of HTTPS. All in all, this extension helps build digital literacy for all, as it allows the immediate detection of phishing emails and helps the user distinguish between a legitimate and a phishing email [44].

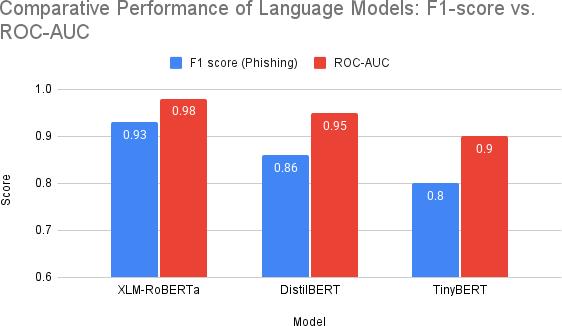

After curating the data, we first created a structured pipeline in which the curated dataset was split into training and testing sets at an 80/20 ratio. We then separated the phishing (30%) and safe (70%) emails. Thereafter, we tested the algorithm and analyzed its performance using various metrics. Among the three transformer-based models that we evaluated (DistilBERT, TinyBERT, and XLM-RoBERTa-base), XLM-RoBERTa proved to be the most reliable for phishing detection tasks. With an accuracy of 97.23%, which gives a general sense of how well the model performs across all classes, XLM-RoBERTa outperformed the other models. It also achieved a precision of 0.94, a recall of 0.93, an F1-score of 0.93, and an ROC-AUC score of 0.98, which indicated strong class separation.
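The relationship between these figures can be checked with a few lines of code. The sketch below computes the confusion-matrix metrics, confirms that the reported precision and recall are consistent with the reported F1-score, and uses made-up counts to show why accuracy alone is not sufficient on a 70/30 split. The counts are illustrative and are not results from the paper.

```python
def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def classification_metrics(tp: int, tn: int, fp: int, fn: int) -> dict:
    """Accuracy, precision, recall and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + tn + fp + fn)
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1_from(precision, recall),
    }


# Consistency check on the reported figures: precision 0.94 and recall 0.93.
reported_f1 = round(f1_from(0.94, 0.93), 2)

# Made-up counts: a classifier that labels every message "safe" on a 70/30 split.
all_safe = classification_metrics(tp=0, tn=70, fp=0, fn=30)
```

A classifier that never flags anything would still reach 70% accuracy on such data while catching no phishing at all, which is why precision, recall, and F1 are reported alongside accuracy.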

In contrast, both DistilBERT and TinyBERT showed lower precision and recall for phishing detection. DistilBERT, while efficient, lacked the ability to capture complex phishing patterns, which led to reduced accuracy. Similarly, TinyBERT’s compact architecture limited its contextual understanding, affecting its detection of subtle phishing cues.


Performance Comparison of Transformer Models

This grouped bar chart visually compares the performance of XLM-RoBERTa-base, DistilBERT and TinyBERT across two key metrics: F1 score for the phishing class and ROC-AUC. The F1 score balances precision and recall of phishing detection, which is important for handling uneven datasets. ROC-AUC shows the model's overall ability to distinguish between safe and phishing emails. As shown, XLM-RoBERTa-base clearly demonstrates superior performance on both metrics.

The detailed comparison is shown in Table 2 below:

Table 2: Performance Comparison of Transformer Models

We compared the fine-tuned XLM-RoBERTa model with DistilBERT and TinyBERT using metrics like Accuracy, Precision, Recall, F1-score, and ROC-AUC. We also look into the mT5-small model’s ability to create clear explanations for phishing classifications to improve user understanding and digital literacy.

The fine-tuned XLM-RoBERTa-base model showed better performance in phishing detection, reaching an F1-score of 0.93 and a ROC-AUC of 0.98. It consistently outperformed DistilBERT and TinyBERT, thanks to its deeper architecture and strong contextual understanding, which is essential for spotting subtle and deceptive phishing patterns. This confirms the choice of XLM-RoBERTa for creating a more accurate and reliable detection system.
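A ROC-AUC value can be read as the probability that a randomly chosen phishing message receives a higher score than a randomly chosen safe one. The sketch below computes it directly from that pairwise definition; the labels and scores are made up for illustration and are not outputs of the model described here.

```python
def roc_auc(labels: list[int], scores: list[float]) -> float:
    """AUC as the share of (phishing, safe) pairs ranked correctly; ties count half."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes are required to compute ROC-AUC")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up scores for six messages (1 = phishing, 0 = safe).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.95, 0.80, 0.40, 0.60, 0.20, 0.10]
auc = roc_auc(labels, scores)
```

In this toy case eight of the nine phishing/safe pairs are ordered correctly, so the AUC is 8/9. The pairwise loop is quadratic in the number of messages, so it is suited to illustration rather than large evaluations.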

Focusing on F1-score, precision (0.94), and recall (0.93) was important due to the class imbalance common in phishing datasets. High precision reduces false positives, which helps maintain user trust, while high recall ensures real threats are identified, lowering the risk of financial and privacy harm.

Using mT5-small for explanation generation helps users understand complex AI decisions by translating them into simple language. Together, detection and explanation improve digital safety and empower vulnerable groups like teens and seniors to better recognize online threats.

The model was trained on public datasets, which may not reflect the changing nature of phishing. Regular updates and retraining are necessary for ongoing performance. The explanation feature, powered by mT5-small, was evaluated only for clarity, not for its real-world effectiveness, which would require user studies.

Real-world use also comes with hardware and connectivity issues. Although the tool is optimized for efficiency, we haven't tested performance across various devices. Furthermore, while XLM-RoBERTa boosts accuracy, its computational needs may restrict accessibility on older or slower devices.

In conclusion, this research examined the implementation of a phishing detection Chrome extension using various transformer models. First, we compared the performance of DistilBERT, TinyBERT, and XLM-RoBERTa to identify the most effective model. After examination using metrics such as F1-score, precision, recall, and ROC-AUC, the RoBERTa-based model outperformed the others and was selected as the final model for our project [45].

The backend of the extension was developed using FastAPI and hosts a fine-tuned XLM-RoBERTa model trained on phishing datasets sourced from Kaggle, consisting mainly of phishing SMS messages and emails. This setup delivers efficient real-time classification with higher accuracy [46].

While the results were promising, further work is needed to improve adaptability to evolving phishing techniques. Moreover, models like XLM-RoBERTa can require substantial processing power, and only after the mT5 model has been used in practice and user feedback has been collected will we be able to assess its functioning and performance [47].

This research was supported by Incognito Blueprints Pvt. Ltd.

Author contributions

N.T. conceptualisation, software, formal analysis. S.R. writing - original draft, conceptualisation, validation. K.J. conceptualisation, software, methodology, visualisation. R.T. writing - original draft, validation, methodology. S.R. writing - original draft, conceptualisation, validation. S.J. visualisation, writing - review and editing, investigation. S.G. writing - review and editing, data curation, investigation.

Competing financial interests

The authors declare no competing financial interests.

[1.] Sarabjit Kaur, “DIGITAL TRANSFORMATION AND CYBER SECURITY CHALLENGES IN THE INFORMATION AGE,” Journal of Informatics Education and Research, vol. 5, no. 1, Feb. 2025, doi: https://doi.org/10.52783/jier.v5i1.2208.

[2.] S. Rogers, “International Scammers Steal Over $1 Trillion in 12 Months in Global State of Scams Report 2024,” GASA, Nov. 07, 2024. https://www.gasa.org/post/global-state-of-scams-report-2024-1-trillion-stolen-in-12-months-gasa-feedzai

[3.] Z. Alkhalil, C. Hewage, L. Nawaf, and I. Khan, “Phishing Attacks: a Recent Comprehensive Study and a New Anatomy,” Frontiers in Computer Science, vol. 3, no. 1, pp. 1–23, Mar. 2021, doi: https://doi.org/10.3389/fcomp.2021.563060.

[4.] Z. Alkhalil, C. Hewage, L. Nawaf, and I. Khan, “Phishing Attacks: a Recent Comprehensive Study and a New Anatomy,” Frontiers in Computer Science, vol. 3, no. 1, pp. 1–23, Mar. 2021, doi: https://doi.org/10.3389/fcomp.2021.563060.

[5.] Tolamise Olasehinde, “THE EVOLUTION OF PHISHING ATTACKS: TACTICS AND COUNTERMEASURES,” Oct. 21, 2024. https://www.researchgate.net/publication/385091892_THE_EVOLUTION_OF_PHISHING_ATTACKS_TACTICS_AND_COUNTERMEASURES

[6.] M. Lim, “Evaluating Risks of Social Media For Teenagers,” doi: https://doi.org/10.36838/v7i1.11.

[7.] T. Lin et al., “Susceptibility to Spear-Phishing Emails: Effects of Internet User Demographics and Email Content,” ACM Transactions on Computer-Human Interaction, vol. 26, no. 5, pp. 1–28, Jul. 2019, doi: https://doi.org/10.1145/3336141.

[8.] T. Lin et al., “Susceptibility to Spear-Phishing Emails: Effects of Internet User Demographics and Email Content,” ACM Transactions on Computer-Human Interaction, vol. 26, no. 5, pp. 1–28, Jul. 2019, doi: https://doi.org/10.1145/3336141.

[9.] S. Kumar B., A. Kiran, V. E., R. D. Hegde, D. V. Fuletra, and K. Ittigi, “Machine Learning-Driven Phishing Detection: A Robust Browser Extension Solution,” International Journal of Innovative Science and Research Technology, pp. 988–991, Mar. 2025, doi: https://doi.org/10.38124/ijisrt/25mar670.

[10.] L. Xue et al., “mT5: A massively multilingual pretrained text-to-text transformer,” arXiv:2010.11934 [cs], Mar. 2021, Available: https://arxiv.org/abs/2010.11934

[11.] S. Kumar B., A. Kiran, V. E., R. D. Hegde, D. V. Fuletra, and K. Ittigi, “Machine Learning-Driven Phishing Detection: A Robust Browser Extension Solution,” International Journal of Innovative Science and Research Technology, pp. 988–991, Mar. 2025, doi: https://doi.org/10.38124/ijisrt/25mar670.

[12.] F. T. Ngo, Rustu Deryol, B. Turnbull, and J. Drobisz, “The Need for a Cybersecurity Education Program for Internet Users with Limited English Proficiency: Results from a Pilot Study,” International journal of cybersecurity intelligence and cybercrime, vol. 7, no. 1, Feb. 2024, doi: https://doi.org/10.52306/2578-3289.1160.

[13.] S. Kumar B., A. Kiran, V. E., R. D. Hegde, D. V. Fuletra, and K. Ittigi, “Machine Learning-Driven Phishing Detection: A Robust Browser Extension Solution,” International Journal of Innovative Science and Research Technology, pp. 988–991, Mar. 2025, doi: https://doi.org/10.38124/ijisrt/25mar670.

[14.] L. Xue et al., “mT5: A massively multilingual pretrained text-to-text transformer,” arXiv:2010.11934 [cs], Mar. 2021, Available: https://arxiv.org/abs/2010.11934

[15.] F. T. Ngo, Rustu Deryol, B. Turnbull, and J. Drobisz, “The Need for a Cybersecurity Education Program for Internet Users with Limited English Proficiency: Results from a Pilot Study,” International journal of cybersecurity intelligence and cybercrime, vol. 7, no. 1, Feb. 2024, doi: https://doi.org/10.52306/2578-3289.1160.

[16.]A.Vaswani etal.,“AttentionIsAllYouNeed,” Jun. 2017.Available:https://arxiv.org/pdf/1706.03762

[17.] Aekachai Phanomtip, Thaiyathorn Sueb-in, and Sirion Vittayakorn, “Cyberbullying detection on Tweets,” May 2021,doi: https://doi.org/10.1109/ecti-con51831.2021.9454848.

[18.] L. Xue et al., “mT5: A massively multilingual pretrained text-to-text transformer,” arXiv:2010.11934 [cs], Mar.2021,Available:https://arxiv.org/abs/2010.11934

[19.] A. Al-Subaiey, M. Al-Thani, N. A. Alam, K. F. Antora, A. Khandakar, and S. A. U. Zaman, “Novel Interpretable and Robust Web-based AI Platform for Phishing Email Detection,” arXiv.org, May 19, 2024. https://arxiv.org/abs/2405.11619

[20.] H. Shi, S. Xia, Q. Qin, T. Yang, and Z. Qiao, “Non-Stationary Platform Inverse Synthetic Aperture Radar Maneuvering Target Imaging Based on Phase Retrieval,” Sensors, vol. 18, no. 10, p. 3333, Oct. 2018, doi: https://doi.org/10.3390/s18103333.

[21.] “SMS Spam Collection Dataset,” www.kaggle.com. https://www.kaggle.com/datasets/uciml/sms-spam-collection-dataset

[22.] S. Chakraborty, “Phishing Email Detection,” www.kaggle.com, 2023. https://www.kaggle.com/datasets/subhajournal/phishingemails

[23.] A. Vaswani et al., “Attention Is All You Need,” Jun. 2017. Available: https://arxiv.org/pdf/1706.03762

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072

[24.] J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova, “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding,” ArXiv, Oct. 11, 2018. https://arxiv.org/abs/1810.04805

[25.] R. Meléndez, M. Ptaszynski, and M. Fumito, “Comparative Investigation of Traditional Machine Learning Models and Transformer Models for Phishing Email Detection,” Preprints.org, Oct. 18, 2024. https://www.preprints.org/manuscript/202410.1467/v1

[26.] T. Wolf et al., “HuggingFace’s Transformers: State-of-the-art Natural Language Processing,” arXiv:1910.03771 [cs], Feb. 2020, Available: https://arxiv.org/abs/1910.03771

[27.] A. Conneau et al., “Unsupervised Cross-lingual Representation Learning at Scale,” arXiv:1911.02116 [cs], Apr. 2020, Available: https://arxiv.org/abs/1911.02116

[28.] Z. Zeng and S. Bhat, “Idiomatic Expression Identification using Semantic Compatibility,” doi: https://doi.org/10.1162/tacl.

[29.] Z. Zeng and S. Bhat, “Idiomatic Expression Identification using Semantic Compatibility,” doi: https://doi.org/10.1162/tacl.

[30.] Z. Zeng and S. Bhat, “Idiomatic Expression Identification using Semantic Compatibility,” doi: https://doi.org/10.1162/tacl.

[31.] L. Xue et al., “mT5: A massively multilingual pretrained text-to-text transformer,” arXiv:2010.11934 [cs], Mar. 2021, Available: https://arxiv.org/abs/2010.11934

[32.] “Data Preprocessing for Supervised Learning,” ResearchGate. https://www.researchgate.net/publication/228084519_Data_Preprocessing_for_Supervised_Learning

[33.] Y. Liu et al., “RoBERTa: A Robustly Optimized BERT Pretraining Approach,” arXiv.org, Jul. 26, 2019. https://arxiv.org/abs/1907.11692

[34.] J. Devlin, M.-W. Chang, K. Lee, and K. Toutanova, “BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding,” ArXiv, Oct. 11, 2018. https://arxiv.org/abs/1810.04805

[35.] T. Wolf et al., “HuggingFace’s Transformers: State-of-the-art Natural Language Processing,” arXiv:1910.03771 [cs], Feb. 2020, Available: https://arxiv.org/abs/1910.03771

[36.] R. Meléndez, M. Ptaszynski, and M. Fumito, “Comparative Investigation of Traditional Machine Learning Models and Transformer Models for Phishing Email Detection,” Preprints.org, Oct. 18, 2024. https://www.preprints.org/manuscript/202410.1467/v1

[37.] T. Wolf et al., “HuggingFace’s Transformers: State-of-the-art Natural Language Processing,” arXiv:1910.03771 [cs], Feb. 2020, Available: https://arxiv.org/abs/1910.03771

[38.] T. Wolf et al., “HuggingFace’s Transformers: State-of-the-art Natural Language Processing,” arXiv:1910.03771 [cs], Feb. 2020, Available: https://arxiv.org/abs/1910.03771

[39.] Z. Zeng and S. Bhat, “Idiomatic Expression Identification using Semantic Compatibility,” doi: https://doi.org/10.1162/tacl.

[40.] M. Sokolova and G. Lapalme, “A Systematic Analysis of Performance Measures for Classification Tasks,” Information Processing & Management, vol. 45, no. 4, pp. 427–437, Jul. 2009, doi: https://doi.org/10.1016/j.ipm.2009.03.002.

[41.] Younis Al-Kharusi, A. Khan, M. Rizwan, and M. M. Bait-Suwailam, “Open-Source Artificial Intelligence Privacy and Security: A Review,” Computers, vol. 13, no. 12, pp. 311–311, Nov. 2024, doi: https://doi.org/10.3390/computers13120311.

[42.] A. Al-Subaiey, M. Al-Thani, N. A. Alam, K. F. Antora, A. Khandakar, and S. A. U. Zaman, “Novel Interpretable and Robust Web-based AI Platform for Phishing Email Detection,” arXiv.org, May 19, 2024. https://arxiv.org/abs/2405.11619

[43.] A. Al-Subaiey, M. Al-Thani, N. A. Alam, K. F. Antora, A. Khandakar, and S. A. U. Zaman, “Novel Interpretable and Robust Web-based AI Platform for Phishing Email Detection,” arXiv.org, May 19, 2024. https://arxiv.org/abs/2405.11619

[44.] Z. Liu, W. Lin, Y. Shi, and J. Zhao, “A Robustly Optimized BERT Pre-training Approach with Post-training.” Available: https://aclanthology.org/2021.ccl-1.108.pdf

[45.] J. Chen, “Model Algorithm Research based on Python FastAPI,” Frontiers in Science and Engineering, vol. 3, no. 9, pp. 7–10, Sep. 2023, doi: https://doi.org/10.54691/fse.v3i9.5591.

[47.] “ROBERTA: A ROBUSTLY OPTIMIZED BERT PRE-TRAINING APPROACH.” Available: https://openreview.net/pdf?id=SyxS0T4tvS