Face Recognition Based on Image Processing in an Advanced Robotic System

Department of Instrumentation, Bhaskaracharya College of Applied Sciences, University of Delhi ***

Abstract - In the present work, face recognition system has been developed using image processing technique. Thesystem developed works in two stages. In the first stage, face recognition algorithm is used to unlock the system by a valid user face. Once the system is unlocked, then the motion of robot is controlled using different navigation images. Face recognization is implemented using SVM (support vector machine), HOG (histogram of oriented gradients)and kNN (knearest neighbors) algorithm inMATLAB. Thewholeprocessis based on the concept called Machine Learning in artificial intelligence. Here we are dealing with supervised machine learning technique where the machine is trained insupervised manner

Key Words: Face recognition, SVM, HOG, KNN, Machine learning

1. INTRODUCTION

Thefieldofdigitalimageprocessinghasgrownrapidlyafter 1960, after the development of hi-speed computer. It has foundimportanceinmainlytwoareas:(i)Improvementof pictorial information for better human interpretation and the second being the processing of a scene data for an autonomousmachineperception.Thefirstfeaturei.e.image enhancementandrestorationareusedtoprocessdegraded or blurred images. As per medical imaging is concerned, mostoftheimagesmaybeusedinthedetectionoftumorsor for screening the patients. Whereas second feature i.e. machineperceptioncanbeemployedinautomaticcharacter recognition,industrialmachinevisionforproductassembly and inspection. The continuing decline in the ratio of computer price to performance and the expansion of networking and communication bandwidth via the world wide web and the Internet have created unprecedented opportunities for continued growth of digital image processing[1].

Digital image processing basically includes the following three steps: Importing the image with optical scanner, analyzingandmanipulatingtheimageandOutputisthelast stageinwhichresultcanbealteredimageorreportthatis basedonimageanalysis.Itcanbeusedtoperformvarious tasks such as: (i) Visualization of the objects that are not visible, (ii) Image sharpening and restoration to create a betterimage,(iii)Imageretrievaltoseekimageofinterest,

(iv) Measurement of pattern in an image and (v) Image Recognition–Distinguishtheobjectsinanimage[2].

Inthepresentwork,wewillbeimplementingthe(v)parti.e. Facerecognition.Asweknowthatfaceplaysamajorrolein oursocialintercourseinconveyingidentityandexpressions. Thehumanabilitytorecognizefacesisremarkable.Wecan recognizethousandsoffaceslearnedthroughoutourlifetime and identify familiar faces at a glance even after years of separation. But developing a computational model of face recognition is quite difficult, because faces are complex, multidimensional, and subject to change over time and integrationoffacerecognitioninroboticsismoredifficult [3-4].

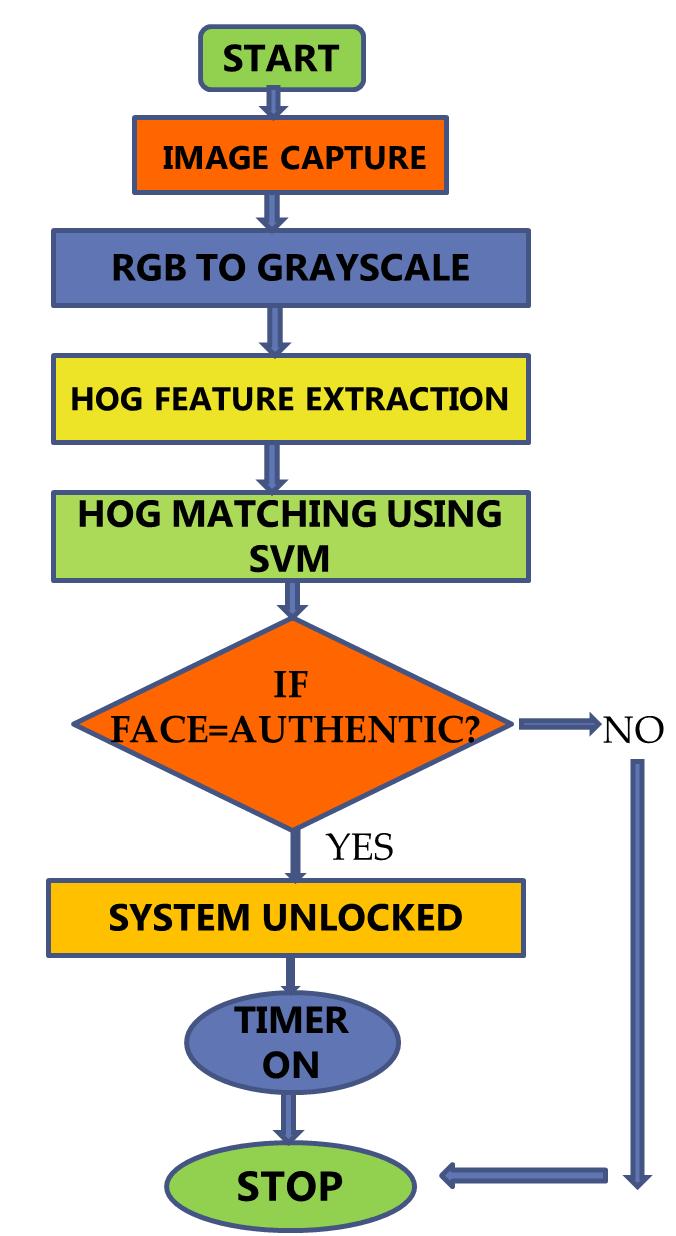

Face recognition has its applications in various fields. Machinesandtechnologyareincreasingrapidly,butwelack asystemwhichcandistinguishbetweendifferentusersand respond to that uniquely. Today most of the systems are blindbecausetheycannotdifferentiatebetweenvalidand invalid users. Keeping security breaching in mind, face recognition based system must be developed in order to improvesecuritylevel.Facerecognitionisapartofadvanced image processing which can be achieved using image processingalgorithmasshowninFigure1[5].

Inthepastfewyears,variousresearchershavedonework onfacerecognitionalgorithm.Mostofthemhaveworkedon Principalcomponentanalysis(PCA)algorithminMATLAB. Thisapproachreducesthedimensionofthedata/imageby means of data compression which might cause loss in the information.Italsoreducesthedimensionsofthedata.Also PCAuses1Darraytostoretheinformationofthetestdata, whichleadstoinsufficiencyofspacefordatastorage.Apart fromthis,someotherworkershaveusedBackPropagation Neural Network (BPNN) algorithm for classification of trainingdataandruntimecapturedtestdata/image,which is a very complex process of neural network of artificial intelligence [7]. Both these methods are complex and producesunsatisfactoryresults.

Inthepresentwork,facerecognizationisimplementedusing SVM(supportvectormachine),HOG(histogramoforiented gradients) and kNN (k-nearest neighbors) algorithm in MATLAB.Further,wehaveusedthismodelincontrollingthe motionoftherobot.

2. Working Model

Working model of a face recognition based system can be majorlydescribedintwostages.Boththestagesusesimage processing.Inthefirststage,Facerecognitionalgorithmis used to unlock the system by a valid user face. Valid face imageswillbepre-storedinthesystem.Oncethesystemis unlocked, then the motion of robot is controlled using differentnavigationimages.Thewholeprocessisbasedon theconceptcalledMachineLearninginartificialintelligence. Here we are dealing with supervised machine learning technique where the machine is trained in supervised manner. We have used various algorithm of MATLAB to acheievethis.Wewillnowdiscussthemonebyone.

HOGisafunctionusedinMATLAB,whichfindsthegradients ofeachpixelofanimagecapturedduringruntime.Gradients obtainedareusedtobuildahistogramofgradientsandthen theyarestoredina2Darray,whichisthenassignedwitha groupnameanditcanbefurtherusedforclassificationofan image. While using HOG, there are no problem of data compression and dimensions reductions, so there is no chance of loss of information. In addition to this, HOG descriptor has advantages over other descriptors, as it is invarianttogeometricandphotometrictransformations.[8]

Imagecaptured is displayedon a window using command ‘imshow(imr)’andHOGfeatureofrealtimecapturedimageis then extractedusingcommand‘extractHOGFeatures’.Finally using command ‘[feat] = hog_feature_vector(imr)’ the extractedHOGfeatureisrepresentedintheformofavector, i.e. vectorisation is done. Here, pause is required for the capturedimagestoappearonwindowclearly.

Fig2-HOGfunctionworking

Beforeproceedingfurther,let’sunderstandtheworkingof HOGfunction.AsshowninFigure2,[9]theimagecapturedis dividedintocellsandthenorientationofgradientsisbeing foundforeachcell.Aftergradientorientationhasbeenfound successfully,it’sbeenconcatenatedtoformahistogramand finallyhistogramisshowninvectorform

SupportVectorMachine(SVM)-Whiledealingwithcomplex data sets such as faces, kNN cannot be used. KNN is a classifierwhichdealswithdatasetsthatareeasytohandle andoperatewhereasSVMclassifierdealswithdatasetsthat arecloselyrelatedtoeachotherinthematterofresemblance. Asweknow,humanfacesaresimilartoeachothertoahigh extent,sofacerecognitionneedstohaveaclassifierwhich canbeabletodifferentiatebetweensuchsimilardatasets.

Itusestheconceptofhyperplaneforclassification.Itcreates alineorahyper-planebetweentwosetsofdatawhichare relatedtoeachotheratalargeextentandthusseparatethem outprecisely.WeareusingSVMhereinawaytoclassifythe real time image captured with the images of human faces already present in the system. SVM takes two data sets at random and separate them out using a hyperplane. Hyperplanecanbetreatedhereasaboundarybetweendata sets.Nowthesetwodatasetsarematchedwiththerealtime capturedimagedatasetusingaclassifierandthusitgivesthe optimumresult. SVMisusedheretomatchthefeatureofan image with the training data set so that system can authenticateauserandunlockit,ifitfindsanauthenticface.

Inmachine learning,SVMs aresupervised learningmodels withassociatedlearningalgorithmsthatanalyzedata used forclassification.[10]

SVM function is used as a classifier for face recognition operation.Ourmaintaskhereistofindtheoptimalmatches of the human face images captured during run time. The command‘SVM_Mdl=fitcecoc(fea,group)’isusedtofindthe match of the feature group and then command ‘c = predict(SVM_Mdl,test_feature)’isusedforpredictionoftest feature group which is closely related to the run time capturedimagesfeatures.

3. EXPERIMENTAL ANALYSIS

Image, i.e. human face, is first captured which is to be processed by MATLAB algorithm. Image captured is then convertedintograyscalebecauseitreducesthechancesof errorandalsoitreducesthetimeconsumedinprocessing. Now the captured image is analyzed by extracting its gradientfeatureusingHOGfunction.Nowatthisstagewe have training data set and run time data set and thus we needtomatchthemfortheirclassification.SVMisusedhere asaclassifiertomatchthetrainingdataandruntimedata. Themomentsystemencountersanauthenticuser,i.e.valid face, a timer will start and it will unlock the system for a fixedtimeperiodinwhichweneedtocontrolmotionofthe robot using different images. In case we fail to control roboticmotioninthatparticulartimeperiod,systemwillget lockedagainandnoonecanaccessthissystem.

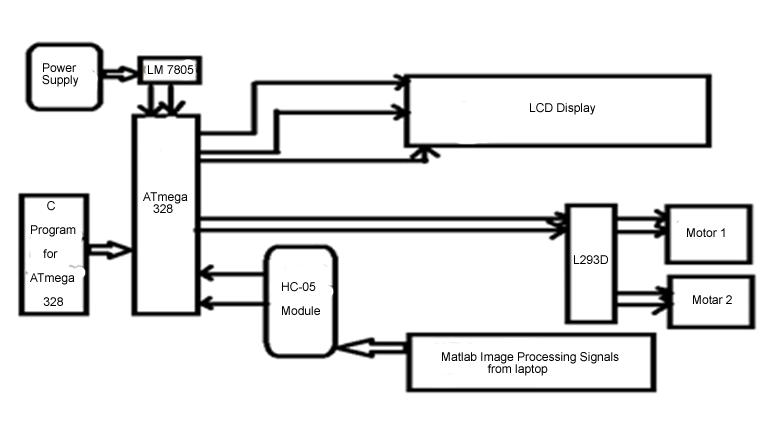

AsshowninFigure3,itcanbeseenthatthecentralunitof the system is microcontroller. MATLAB simulation sends signalbitsgeneratedfromtheimageprocessingtotherobot usingBluetoothcommunication,HC-05module.ATmega328 microcontrollergetsthatbitsanddecodeusingprogramming done in it. After decoding, microcontroller sends corresponding information to the LCD and corresponding signalbitstomotordriverL293DIC.MotordriverICfurther amplifiesthatreceivedsignalandsendsappropriatevoltages toDCmotorstocontroltheirmovementandthuscontrols roboticmotion.

3.1

Now we have to control motion of robot using image processing technique. So for this we need to process the imagesthatweareusingformotioncontrol.Wehaveatimer runninganditshowsthetimeweareleftwithinwhichonly we can motion. So here too the operation starts with the image/videocaptureandthentheimagetakenisconverted into gray scale, which reduces the chances of error. Using HOGfunction,gradientsofanimageareextractedandthenit is stored in a 2D array. We have already stored training

imagesandtheirgradientshavealreadybeenextractedand storedindifferent2Dmatrices.Nowweneedtomatchthe trainingfeatureswiththeruntimeimagefeatures,whichwe aredoingherewithknnfunction.Itmatchesthemandgives thegroupnumberofthatarraywhichiscloselyrelatedto runtimeimagefeature.Aftermatching,wewillhavedigital bytes as output which we need to transmit to the robotic system which further operates on incoming received bits and control robotic motion accordingly. We are using bluetoothcommunicationfortransmittingdataserially.

3.3 Flow chart for microcontroller

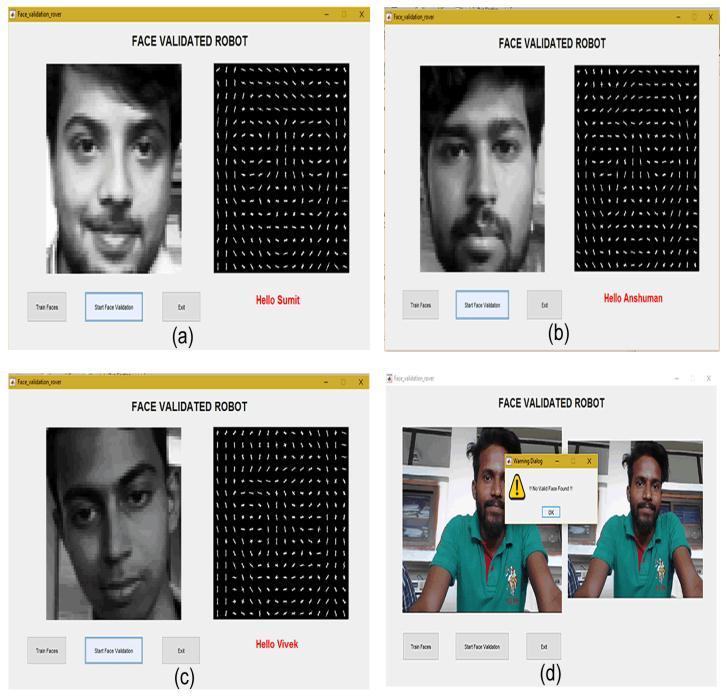

windowontheleftsideandanothersub-windowontheright sideforfeatureextractionasshownbelowwhichareusedto accomplish the task of face validation. This algorithm is workinganditisaccuratelyrecognizinghumanfacesthatare authentic.Herefacerecognitionpartisshownforourteam members.Outoffourmembers,wehaveusedthreefacesas authenticuserandrestallareunauthentic.Wehaveshown thescreenshotsofresultsobtainedafterrunningtheabove code. In the screenshots shown below, it can be observed howfacevalidationisdoneaccuratelyforourteammembers.

Fig6- CProgramFlowchartformicrocontroller

Nowwehaveincomingbytesintheformof‘F’,‘B’,‘L’and‘R’ fromtheMATLABsimulationwhichisnowusedformotion control. Microcontroller checks for the incoming bytes continuouslyandifthereisnodatareceived,itwilldisplay ‘STOP’ on LCD screen. The time microcontroller gets an incomingbytes,itwillworkuponthatandtrytodecodethat byte for further operation. After decoding incoming byte, microcontroller will send signals to the motor driver IC L293DanddriverICafteramplificationsendscorresponding voltagestotherespectiveDCmotorsandthuscontrolsits motion.

4. RESULTS AND DISCUSSION:

Inordertoachievethetaskoffacevalidation,weneedtorun thespecifiedcode.Oncodeexecution,awindowwillappear on the desktop which has a real time image capture sub-

Fig7- ResultsofFaceRecognitionSystem

In all the pictures above, we can see how the gradient orientationoffaceisobtainedandthentheyareusedforface validation.Allthethreemembersarerecognizedaccurately andthusallthethreememberscanunlocksystem.

In order to train this system for more faces, press button ‘TrainFaces’shownontheleftbottom.Forthatweneedto store images of new face in the system and by clicking on trainfacesbutton,requiredfeatureofimagecanbeextracted andstoredinthesystemfortheirclassification.

Nowifanunauthenticornotvalidfaceisbeingexposedin frontofthewebcam,theresultobtainedisshownFigure7 (d).Awarningmessagecomesassoonasaninvalidfaceis tryingtounlockthesystem.

After unlocking the system, the robotic motion can be controlled.Arrowsignboardisusedindifferentorientations tocontrolthemotionoftherobot.InFigure9(a),wecansee when the forward arrow sign is shown to webcam of the laptop,MATLABalgorithmusesimageprocessingtechnique toextracttherequiredfeaturefromarrowimageandthen

theyareusedtomovetherobotinforwarddirection.Wecan seeinthewindowontherightsideofthefigurebelow,arrow imageisbeingprocessedandconvertedintograyscaleand thenitsfeatureisextracted.Thesimilarresultsareobtained for‘BACKWARD’,‘LEFTWARD’,‘RIGHTWARD’directions.

International Journal of Advanced Research in Science,CommunicationandTechnology(IJARSCT) Volume2,Issue2,March2022.

[5] A MATLAB based Face Recognition System using ImageProcessingandNeuralNetworksJawadNagi, SyedKhaleelAhmedFarrukhNagi4thInternational Colloquium on Signal Processing and its Applications, March 7-9, 2008, Kuala Lumpur, Malaysia.

[6] RozaDastresandMohsenSoori,AdvancedImage processing systems, Internationational journal of imagingandRobotics,vol21, 2021.

[7] Signal&ImageProcessing:AnInternationalJournal (SIPIJ) Vol.2, No.3, September 2011 Anissa Bouzalmat,NaouarBelghini,ArsalaneZarghili,and Jamal Kharroubi, Face Detection and Recognition Using Back Propagation Neural Network and FourierGaborFilter

3. CONCLUSIONS

Thefacerecognitionsystemforadvanceduseinroboticshas been successfully developed which performs the desired functionssatisfactorily.Asprojected,thesystemefficiently unlocksforthedefineduserandenableshimtoaccessthe robot. The robot in turn, also performs the desired movementsadequatelyasinstructedbythe user.Multiple userscanbedefinedforgivingtheaccessbyincorporating theirdetailsintheprogram.Userscanbeadded,deletedor changedasperourapplicationbymodifyingtheprogram.In future,weproposetointroduceahighendsecuritysystem calledIRISscannerandidentifier.Itcanbeimplementedin our robotic system to first scan and identify an authentic user’s iris and then use this information for high level roboticsoperation.

REFERENCES

[1] Digital image processing 3rd edition, Rafael C Gonzalez

[2] Yan Sun,1Zhenyun Ren,1and Wenxi Zheng, ResearchonFaceRecognitionAlgorithmBasedon ImageProcessing,ComputationalIntelligenceand NeuroscienceVolume2022

[3] M.A.TurkandA.P.Pentland.“Facerecognitionusing Eigenfaces”.InProc.ofComputerVisionandPattern Recognition,pages586-591.IEEE,June1991b.

[4] Facial Recognition Using Image Processing Dr. Madhusudhan, Chinmaya Dayananda Kamath, Darshan Naik M , Chinmaya Bhatt K, Shreyas,

[8] Navneet Dalal and Bill Triggs, Histograms of Oriented Gradients for Human Detection, Published in: Computer Vision and Pattern Recognition, 2005. CVPR 2005. IEEE Computer SocietyConferenceon, Date ofConference: 20-25 June 2005,Date Added to IEEE Xplore: 25 July 2005, ISBN Information: Print ISSN: 1063-6919, INSPEC Accession Number: 8588935, DOI: 10.1109/CVPR.2005.177

[9] Hyukmin Eum 1, Changyong Yoon 2, Heejin Lee 3 and Mignon Park 1,* Continuous Human Action Recognition Using Depth-MHI-HOG and a Spotter Model, Sensors 2015, 15(3), 5197-5227; doi:10.3390/s150305197

[10] JINHO KIM¹ ,BYUNG-SOO KIM², SILVIO SAVARESE, Comparing Image Classification Methods:K-Nearest-NeighborandSupport-VectorMachines, Applied Mathematics in Electrical and ComputerEngineering,2012,ISBN:978-1-61804064-0133-138