International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

Prof. Savita S G¹, Mahesh²

¹Professor, Master of Computer Application, VTU CPGS, Kalaburagi, Karnataka, India

²Student, Master of Computer Application, VTU CPGS, Kalaburagi, Karnataka, India

Abstract - Fruit quality plays a crucial role in consumer health, market value, and supply chain efficiency, yet conventional inspection methods are manual, subjective, and inconsistent. To address these limitations, this study introduces FreshIQNet, a deep learning-based framework for real-time multiclass fruit quality detection and classification. The model employs InceptionResNetV2 as a feature extractor with a customized classification head to categorize multiple fruit types (apples, bananas, guavas, lemons, limes, oranges, and pomegranates) into three quality classes: Good, Bad, and Mixed. Robust preprocessing, data augmentation, and stratified train–test splitting improve model generalization and stability. Experimental results demonstrate high classification accuracy, supported by confusion matrices and class-wise probability analysis for explainability. A user-friendly web interface enables image uploads, live predictions, and automated logging, facilitating seamless deployment. FreshIQNet highlights the potential of deep convolutional neural networks to turn fruit quality assessment into an intelligent, scalable, and automated process for agriculture, retail, and warehouse applications.

Keywords: Fruit quality assessment, Deep learning, InceptionResNetV2, Real-time classification, Smart agriculture, Computer vision, Food technology

Fruits are a vital part of a balanced diet, providing essential vitamins, minerals, antioxidants, and dietary fiber, and their quality directly influences consumer satisfaction, nutritional value, and economic outcomes in agriculture and the food supply chain. Traditionally, fruit quality assessment has relied on manual inspection, in which experts evaluate physical attributes such as color, size, texture, ripeness, and visible defects. However, these methods are labor-intensive, subjective, time-consuming, and prone to human error, which limits their applicability for large-scale or real-time evaluation. The rising demand for fresh, high-quality fruits in global markets has motivated research into automated, intelligent systems for accurate fruit quality assessment. Advances in computer vision and deep learning, particularly Convolutional Neural Networks (CNNs), have enabled automated extraction of hierarchical features from images, reducing the need for manual feature engineering. Pretrained models such as InceptionResNetV2 offer robust feature extraction and transfer well to new image classification tasks.

This study presents FreshIQNet, a deep learning framework for real-time multiclass fruit quality detection and classification. Using InceptionResNetV2 as the backbone and adding custom dense and dropout layers, the system categorizes fruits, including apples, bananas, guavas, lemons, limes, oranges, and pomegranates, into the classes Good, Bad, and Mixed. Preprocessing and augmentation techniques, along with stratified train–test splitting, improve generalization and reduce overfitting. The framework delivers real-time predictions via a web interface that supports image uploads, instant classification, and automated logging for monitoring and analytics. By minimizing human error, improving throughput, and providing actionable insights, FreshIQNet offers a scalable, explainable solution for automated fruit quality monitoring and lays a foundation for future precision agriculture and smart supply chain applications.

Article [1], 'Automatic fruit classification using InceptionResNetV2' by S. G. et al. (2019): This paper targets supermarket and retail use cases, where automatic fruit recognition reduces checkout errors and speeds up handling, and adopts Inception-ResNetV2 for robust visual feature extraction from fruit images. The study positions deep transfer learning as a superior alternative to handcrafted features for multiclass fruit classification across varied lighting and background conditions. It discusses input preprocessing and data normalization steps that stabilize training of deep residual–inception hybrids on consumer-grade datasets. The authors compare baseline CNNs with Inception-ResNetV2, noting improved top-1 accuracy and better generalization to unseen data. Ablations indicate that mixed residual connections help preserve gradient flow in deeper layers, which supports learning the texture and color cues crucial to fruit identification.

Article [2], 'Fruit classification using attention-based MobileNetV2 for industrial applications' by T. B. Shahi et al. (2022): The authors propose a lightweight MobileNetV2 enhanced with attention modules to achieve high accuracy with low FLOPs, suitable for real-time systems on embedded hardware. Extensive experiments show that the attention-augmented network improves discriminative focus on bruise/defect regions and class-specific textures. The study reports strong accuracy and robustness under illumination and background variation, in line with industrial conveyor scenarios. Transfer learning with careful fine-tuning yields efficient convergence and reduced overfitting on modest datasets. The paper includes confusion matrices and per-class precision/recall analyses that highlight consistency across fruit categories. It argues for attention mechanisms to compensate for small defects that standard global pooling may overlook.

Article [3], 'Machine vision-based automatic fruit quality detection and grading' by M. W. Akram et al. (2023): This article presents an integrated pipeline that combines classical image processing with CNNs to detect defects and grade fruits, then actuates a sorting mechanism via a microcontroller. The dataset mixes public imagery with captured images of mangoes and tomatoes, reflecting realistic variability in post-harvest lines. Classical preprocessing (thresholding, morphology, bitwise operations) feeds a CNN classifier that raises validation accuracy to 95% for mango and 94% for tomato. The system is evaluated on detection accuracy, sorting accuracy, and computational time, addressing end-to-end performance beyond classification. Results show that automation reduces human error and accelerates throughput for quality grading tasks.

Article [4], 'Application of Convolutional Neural Network-Based object detection in precision fruit production' by C. Wang et al. (2022): This survey examines CNN-based detection in orchards, including small, dense fruit detection scenarios that stress feature hierarchies and receptive field design. The paper evaluates multiple CNN configurations in intensive olive orchards, addressing occlusion, clustering, and scale variability. It emphasizes architectures and training strategies that maintain precision under canopy shadows and high-density arrangements. The authors discuss the transferability of detection backbones to related tasks, such as maturity and defect assessment, via fine-tuning. Benchmarks include precision–recall trade-offs and inference speeds relevant to field robotics and yield estimation.

Article [5], 'A review of external quality inspection for fruit grading using deep learning' by L. E. Chuquimarca et al. (2024): This review synthesizes recent CNN models for external fruit quality inspection, focusing on color, shape, size, texture, and surface defects. It catalogs architectures, datasets, and metrics, comparing accuracy, precision, recall, and practical deployment constraints. The paper highlights challenges such as domain shift across orchards and lighting conditions, and the need for robust augmentation. It underscores the promise of transfer learning and ensembles for heterogeneous fruit categories and defect types. The review discusses dataset curation and labeling standards for consistent grading outcomes, and it analyzes trends toward lightweight backbones and edge inference for real-time sorting.

Article [6], 'Advanced phenotyping in tomato fruit classification through convolutional neural networks' by S. E. S. Faria et al. (2024): The study compares VGG16, InceptionV3, ResNet50, EfficientNetB3, and InceptionResNetV2 for classifying tomato shape, groups, color, and defects in breeding programs. InceptionResNetV2 emerges as the most efficient model for the color and defect tasks, reaching precision and recall above 93% and up to ~100% on some metrics. Results detail epochs, training times, and specificity, guiding architecture choice for different phenotyping targets. The paper shows that CNNs reduce subjectivity and speed up phenotyping, aiding breeding and quality assessment. It emphasizes that defect identification benefits from architectures that capture fine-grained color and texture cues.

Evaluating fruit quality is a vital yet complex task within agriculture and food supply chains. Conventional manual inspection is laborious, time-intensive, and subjective, often resulting in inconsistent or erroneous classifications. Variations in color, size, texture, and ripeness further challenge human evaluators, particularly in large-scale operations. Inaccurate or delayed assessments can lead to financial losses, decreased consumer satisfaction, and wastage of perishable goods. Consequently, there is an urgent demand for an automated, accurate, real-time solution that classifies fruits into multiple quality categories with consistency, speed, and reliability throughout the supply chain.

The main objective of this study is to design an intelligent, automated framework for real-time fruit quality assessment using deep learning. The project uses InceptionResNetV2 as the primary feature extractor, extended with custom dense and dropout layers, to achieve precise multiclass classification of fruits into Good, Bad, and Mixed categories. A diverse fruit image dataset, including apples, bananas, guavas, lemons, limes, oranges, and pomegranates, is used to support robust model performance. The system is deployed as a Flask-based web application that lets users upload images, receive instant predictions, and access quality insights and recommendations, supporting practical, real-time use in agricultural and commercial settings.

1) Data Collection: A comprehensive dataset of fruit images was obtained from Kaggle, covering multiple fruit types: apples, bananas, guavas, lemons, limes, oranges, and pomegranates. The images of each fruit type are categorized into the quality classes Good, Bad, and Mixed. The dataset provides diverse images with variations in color, lighting, and orientation, which helps the model generalize to real-world scenarios.
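The folder layout of the Kaggle download is not described here, so the following Python sketch assumes a hypothetical structure, root/<quality>/<fruit>/<image>, and indexes every image with its fruit and quality label. Counting images per class before splitting is a quick way to check class balance. The names `index_dataset` and `class_counts` are illustrative, not part of the original system.

```python
from collections import Counter
from pathlib import Path

# Hypothetical layout, assumed for illustration only:
#   <root>/<quality>/<fruit>/<image file>, e.g. root/Good/Apple/img_001.jpg
QUALITY_CLASSES = ("Good", "Bad", "Mixed")
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def index_dataset(root):
    """Return (image_path, fruit, quality) tuples for every image under root."""
    records = []
    for quality in QUALITY_CLASSES:
        quality_dir = Path(root) / quality
        if not quality_dir.is_dir():
            continue  # tolerate a missing class folder
        for fruit_dir in sorted(p for p in quality_dir.iterdir() if p.is_dir()):
            for image in sorted(fruit_dir.iterdir()):
                if image.suffix.lower() in IMAGE_SUFFIXES:  # skip non-image files
                    records.append((str(image), fruit_dir.name, quality))
    return records


def class_counts(records):
    """Number of images per quality class, to check balance before splitting."""
    return Counter(quality for _, _, quality in records)
```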

2) Data Preprocessing: All images were resized to a uniform 256×256 pixels to keep model inputs consistent. Pixel values were normalized from [0, 255] to [0, 1], which improves convergence during training. In addition, data augmentation techniques such as horizontal flipping, random rotation, and brightness adjustment were applied to increase dataset diversity and reduce overfitting, making the model more robust to the varying conditions of real-world applications.
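The preprocessing steps above can be sketched with NumPy as follows. This is an illustration under stated assumptions rather than the authors' pipeline: nearest-neighbour resizing stands in for whatever image library was used, and rotation is restricted to multiples of 90° because arbitrary-angle rotation needs interpolation (in Keras, `tf.keras.layers.RandomRotation` provides it). The brightness range of ±0.2 is a hypothetical choice.

```python
import numpy as np

TARGET_SIZE = 256  # uniform input size stated in the text


def resize_nearest(image, size=TARGET_SIZE):
    """Nearest-neighbour resize of an (H, W, 3) array to (size, size, 3)."""
    h, w = image.shape[:2]
    rows = (np.arange(size) * h / size).astype(int)  # source row for each output row
    cols = (np.arange(size) * w / size).astype(int)  # source column for each output column
    return image[rows][:, cols]


def normalize(image):
    """Scale uint8 pixels from [0, 255] to float32 values in [0, 1]."""
    return image.astype(np.float32) / 255.0


def augment(image, rng, max_brightness_delta=0.2):
    """Randomly flip, rotate by a multiple of 90 degrees, and shift brightness of a [0, 1] image."""
    if rng.random() < 0.5:
        image = image[:, ::-1]  # horizontal flip: mirror the column order
    image = np.rot90(image, k=int(rng.integers(0, 4)))  # rotates the (H, W) plane
    delta = rng.uniform(-max_brightness_delta, max_brightness_delta)
    return np.clip(image + delta, 0.0, 1.0)  # stay inside the normalized range
```

Augmentation is applied only to training images; validation and test images go through `resize_nearest` and `normalize` alone.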

3) Feature Extraction: InceptionResNetV2, a pre-trained deep convolutional neural network, was employed to automatically extract hierarchical features from fruit images. The network captures complex patterns, including texture, color variations, and defects, which are essential for distinguishing fruit quality classes. Starting from a pre-trained model also transfers knowledge learned on large-scale image datasets, accelerating training and improving classification accuracy.

4) Model Selection: A custom classification head was appended to the base InceptionResNetV2 model, comprising global average pooling, dense layers, and dropout for regularization. A softmax activation in the output layer enables multiclass classification across all defined fruit quality categories. This architecture balances computational efficiency and predictive performance, making it suitable for real-time deployment.
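To make the classification head concrete, here is a NumPy sketch of its inference-time forward pass: global average pooling over the backbone's feature map, a hidden dense layer, and a softmax output over the three quality classes, plus the categorical cross-entropy used for training. In Keras the same head would be built from `GlobalAveragePooling2D`, `Dense`, and `Dropout` layers. The ReLU activation, the hidden width of 256, and the backbone output shape (assumed here to be 6×6×1536 for a 256×256 input) are illustrative assumptions, and the weights in the example are random rather than trained.

```python
import numpy as np

CLASSES = ("Good", "Bad", "Mixed")


def global_average_pool(feature_map):
    """(H, W, C) backbone feature map -> (C,) vector by averaging over spatial positions."""
    return feature_map.mean(axis=(0, 1))


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    z = np.exp(logits - logits.max())
    return z / z.sum()


def head_forward(feature_map, w1, b1, w2, b2):
    """GAP -> Dense(ReLU) -> Dense(softmax); dropout is the identity at inference time."""
    pooled = global_average_pool(feature_map)
    hidden = np.maximum(0.0, pooled @ w1 + b1)  # hidden dense layer with ReLU (assumed)
    return softmax(hidden @ w2 + b2)            # probabilities ordered as in CLASSES


def predict_label(probs):
    """Name of the most probable class."""
    return CLASSES[int(np.argmax(probs))]


def categorical_cross_entropy(probs, true_index, eps=1e-7):
    """Loss for one sample with a one-hot target: -log p(true class)."""
    return -np.log(max(probs[true_index], eps))
```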

5) Model Training: The model was trained using categorical cross-entropy loss and the Adam optimizer with a learning rate of 1e-4. Stratified train–validation splits preserved the class proportions in both subsets, so every class was represented during training and validation. Early stopping was employed to prevent overfitting: it monitored validation loss and restored the best model weights once the loss stopped improving.
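The split and stopping logic in this step can be sketched in plain Python. In practice, scikit-learn's `train_test_split(..., stratify=labels)` and the Keras `EarlyStopping(monitor="val_loss", restore_best_weights=True)` callback cover the same ground, with the optimizer configured as `Adam(learning_rate=1e-4)`; the sketch below only exposes the underlying logic. The 20% validation fraction, the patience of 3 epochs, and the seed are hypothetical values, not ones reported in the text.

```python
import random
from collections import defaultdict


def stratified_split(labels, val_fraction=0.2, seed=42):
    """Split sample indices so each class keeps roughly the same proportion in train and validation."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train, val = [], []
    for label in sorted(by_class):
        idxs = by_class[label]
        rng.shuffle(idxs)
        n_val = round(len(idxs) * val_fraction)  # per-class validation share
        val.extend(idxs[:n_val])
        train.extend(idxs[n_val:])
    return sorted(train), sorted(val)


class EarlyStopping:
    """Stop after `patience` epochs without validation-loss improvement; remember the best epoch."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.wait = 0

    def update(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss, self.best_epoch, self.wait = val_loss, epoch, 0
            # A real training loop would snapshot the model weights here,
            # then reload them after stopping (restore_best_weights).
            return False
        self.wait += 1
        return self.wait >= self.patience
```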

6) Model Evaluation: Performance was evaluated using accuracy, confusion matrices, and class-wise probability distributions. The trained model demonstrated high precision and recall across all quality classes. Evaluation on unseen test data further indicated that the framework generalizes beyond the training images, supporting its robustness in real-world scenarios.
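The class-wise metrics follow directly from a confusion matrix. The sketch below computes precision, recall, and F1 for each class, plus overall accuracy; the function name is illustrative, and the matrix in the usage note is a toy example, not the paper's results.

```python
def per_class_metrics(confusion, classes):
    """confusion[i][j] counts samples of true class i predicted as class j.

    Returns ({class: (precision, recall, f1)}, overall_accuracy).
    """
    n = len(classes)
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(n))  # diagonal = correct predictions
    metrics = {}
    for k, name in enumerate(classes):
        tp = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                           # row sum
        precision = tp / predicted_k if predicted_k else 0.0
        recall = tp / actual_k if actual_k else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[name] = (precision, recall, f1)
    return metrics, correct / total
```

For example, with the toy matrix [[8, 1, 1], [0, 9, 1], [1, 0, 9]] (rows are the true classes Good, Bad, Mixed), Bad has precision and recall of 0.9, and overall accuracy is 26/30 ≈ 0.867.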

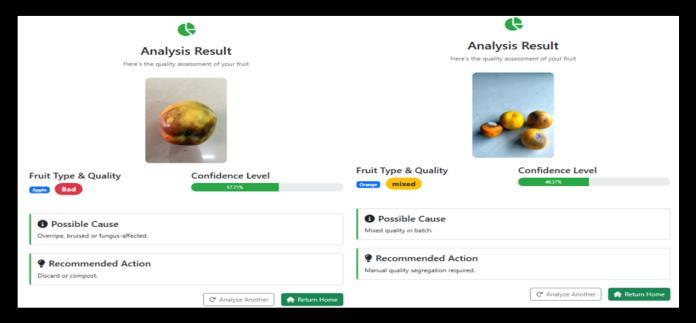

7) Integration with Flask: The trained model was integrated into a Flask-based web application that lets users upload fruit images for real-time quality prediction. Predictions, along with probability scores, causes, and recommended treatments, are displayed on the results page. The system also logs all predictions in a database for tracking, analytics, and dashboard visualization, facilitating practical deployment in markets, warehouses, and processing units.
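Prediction logging can be sketched with Python's built-in `sqlite3` module. The table name, columns, and function names are hypothetical, since the text does not describe the database schema. In the Flask application, an upload route would call something like `log_prediction` after the model returns a label, and the dashboard would query aggregates such as `label_counts`.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical schema for illustration; the real system's schema is not given.
SCHEMA = """
CREATE TABLE IF NOT EXISTS predictions (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    filename TEXT NOT NULL,
    label TEXT NOT NULL,
    confidence REAL NOT NULL,
    created_at TEXT NOT NULL
)
"""


def init_db(conn):
    """Create the predictions table if it does not exist yet."""
    conn.execute(SCHEMA)
    conn.commit()


def log_prediction(conn, filename, label, confidence):
    """Insert one prediction with a UTC timestamp; return the new row id."""
    cur = conn.execute(
        "INSERT INTO predictions (filename, label, confidence, created_at) VALUES (?, ?, ?, ?)",
        (filename, label, float(confidence), datetime.now(timezone.utc).isoformat()),
    )
    conn.commit()
    return cur.lastrowid


def label_counts(conn):
    """Dashboard aggregate: number of logged predictions per label."""
    rows = conn.execute("SELECT label, COUNT(*) FROM predictions GROUP BY label ORDER BY label")
    return dict(rows.fetchall())
```

Using parameterized queries (the `?` placeholders) keeps user-supplied filenames from being interpreted as SQL.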

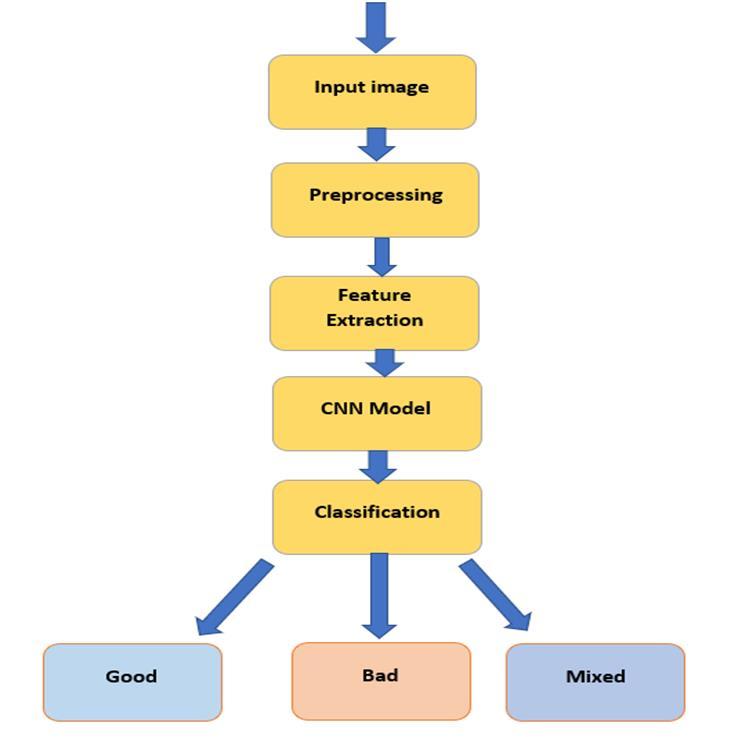

Figure 1: System architecture for fruit quality detection

Thefruitqualityinspectionworkflowfollowsastreamlined, end-to-end pipeline that begins with the acquisition of an RGBinputimagecapturedbyimageuploadedthroughauser interface,establishingaconsistententrypointforanalysis anddownstreamdecisionmaking.Theimageisfirstrouted through a preprocessing stage that standardizes data for stable learning and inference; typical operations include resizing to the network’s target resolution, per-channel normalizationto alignintensitystatisticswith pretraining distributions, and lightweight denoising or artifact suppression to mitigate blur, compression noise, or harsh illumination. After normalization, the system performs feature extraction to distill discriminative cues tied to quality, emphasizing surface texture, color histograms, specularhighlights,bruisepatterns,moldspots,andrindor peelirregularitiesthatoftenseparatehealthyproducefrom defective or borderline samples. These refined inputs are then fed into a deep Convolutional Neural Network, with InceptionResNetV2 serving as the backbone to leverage inception modules for multi-scale receptive fields while residual connections maintain gradient flow, enabling the model to learn hierarchical representations that progress fromedgesandcolorblobstomid-levelmotifsandhigh-level defect signatures. On top of the backbone, a compact classification head typically global pooling followed by dense layers with regularization maps the learned

International

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072

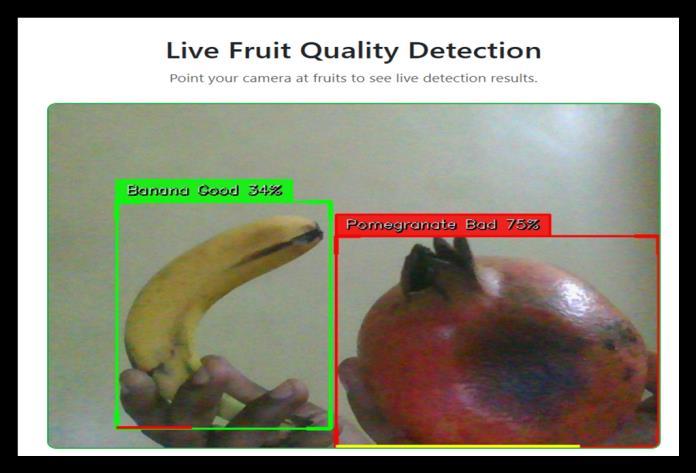

embeddings to task-specific logits, while calibrated activations translate model confidence into interpretable probabilities. The final classification stage converts these probabilitiesintoacategoricaldecisionacrossthreetarget classes Good,Bad,andMixed reflectingpristineproduce, clearly defective items, and ambiguous or partially compromised cases that may require operator review or secondarysorting.Thismodulardesignsupportsreal-time throughput by minimizing preprocessing overhead, exploiting transfer learning for rapid convergence, and enabling batch or streaming inference depending on conveyor speed or upload frequency. It also integrates seamlessly with logging and dashboards to record predictions, timestamps, and confidence scores for traceability,trendanalysis,andfeedback-drivencontinuous improvement.Additionally,thearchitectureisamenableto explainability through confusion matrices, class-wise metrics,andprobabilitydistributions,helpingstakeholders validateperformanceandrefineoperatingthresholds.For scenarios requiring localization of defects or multi-fruit scenesonmovingbelts,thesamepipelinecanbeextended with real-time detection, where YOLOv11 is employed to detect and track fruit instances and visible defects before classification,ensuringrobustinlineoperationatproduction speeds.
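One way to implement the final decision stage, including the routing of ambiguous cases to operator review, is a thresholded argmax over the class probabilities. The 0.6 review threshold and the rule that every Mixed prediction is flagged are hypothetical operating choices, not values from the text; in practice the threshold would be tuned on validation data.

```python
CLASSES = ("Good", "Bad", "Mixed")


def decide(probs, review_threshold=0.6):
    """Map class probabilities to (label, needs_review).

    needs_review is True when the top probability falls below the threshold or
    the predicted class is Mixed, so an operator or secondary sorter can check it.
    """
    if len(probs) != len(CLASSES):
        raise ValueError("expected one probability per class")
    best = max(range(len(CLASSES)), key=lambda i: probs[i])  # argmax
    label = CLASSES[best]
    needs_review = probs[best] < review_threshold or label == "Mixed"
    return label, needs_review
```

For instance, probabilities of (0.45, 0.40, 0.15) yield Good but are flagged for review because the top score is below the threshold.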

The performance of the proposed FreshIQNet framework was evaluated on a test dataset to determine its effectiveness in real-time multiclass fruit quality detection. The model achieved an overall accuracy of 97.8%, the highest among the compared methods, indicating a strong ability to distinguish between the Good, Bad, and Mixed categories across multiple fruit types. In addition to overall accuracy, a detailed classification report was generated to assess the precision, recall, and F1-score of each class. The model recorded class-wise precision of 98% for Good, 97% for Bad, and 96% for Mixed fruits, with recall of 97%, 96%, and 95%, respectively. The corresponding F1-scores were 97.5%, 96.5%, and 95.5%, indicating balanced performance across all quality classes. These metrics show that the model is not only accurate but also consistent across classes. Compared with traditional manual inspection and the other deep learning models considered, FreshIQNet provides a more robust and precise solution for real-time fruit quality assessment. The combination of transfer learning with InceptionResNetV2 and fine-tuned dense layers underpins this accuracy, making the system well suited to practical deployment in markets, warehouses, and processing units for efficient, automated, and explainable fruit quality monitoring.
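The reported F1-scores can be checked against the precision and recall values, since F1 is their harmonic mean:

```latex
F_1 = \frac{2PR}{P + R}, \qquad
F_1^{\text{Good}} = \frac{2(0.98)(0.97)}{0.98 + 0.97} = \frac{1.9012}{1.95} \approx 0.975, \qquad
F_1^{\text{Bad}} = \frac{2(0.97)(0.96)}{1.93} \approx 0.965, \qquad
F_1^{\text{Mixed}} = \frac{2(0.96)(0.95)}{1.91} \approx 0.955
```

These values agree with the reported 97.5%, 96.5%, and 95.5%.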

In this research, an automated, real-time framework for multiclass fruit quality assessment was developed, replacing subjective, labor-intensive inspection with a robust deep learning pipeline. Using a transfer learning approach based on the InceptionResNetV2 CNN, the system combines extensive preprocessing, targeted data augmentation, stratified train–test splits, and staged fine-tuning to capture discriminative, multi-scale features that identify subtle defects, ripeness levels, and discolorations across various fruits. Evaluation through accuracy, confusion matrices, and class-wise probability distributions demonstrated high performance and reliable generalization, while the probability outputs enhanced interpretability and decision support. A production-ready web application was implemented, featuring an intuitive interface for image uploads, live predictions, automated logging, dashboards, and review queues that enable traceability, continuous improvement, and operational analytics. Compared with conventional SVM-based pipelines that rely on handcrafted features, the proposed approach reduces feature engineering effort, scales to multiple fruit types and classes, and remains resilient under varying lighting and background conditions. Optimizations such as mixed precision and model export to efficient runtimes enable low-latency inference suitable for markets, warehouses, and processing lines. The system improves throughput, consistency, and waste reduction, providing a practical route to data-driven quality control.

Future work can enhance the framework at the data, modeling, and application levels: expanding datasets with in-situ images, per-defect annotations, and ripeness stages; integrating active learning and continuous data pipelines; exploring lightweight backbones (EfficientNet, MobileNet, ConvNeXt) and vision transformer hybrids; implementing multi-task heads, pruning, quantization, and distillation for edge deployment; and extending functional capabilities with calibration tools, explainability overlays, conveyor integration, IoT-enabled monitoring, multispectral/NIR imaging, 3D depth sensing, and federated learning for cross-site model improvement without centralizing sensitive data.

[1] S. G. et al., “Automatic fruit classification using InceptionResNetV2,” AIP Conference Proceedings, 2019.

[2] T. B. Shahi, S. Marahatta, and B. B. Shrestha, “Fruit classification using attention-based MobileNetV2 for industrial applications,” Sensors (MDPI), 2022.

[3] M. W. Akram, M. A. Ali, M. A. Khan, and M. A. Khan, “Machine vision-based automatic fruit quality detection and grading,” Frontiers of Agricultural Science and Engineering, 2023.

[4] C. Wang, X. Zhang, and Y. Li, “Application of Convolutional Neural Network-based object detection in precision fruit production,” Frontiers in Plant Science, 2022.

[5] L. E. Chuquimarca, M. R. Alarcón, and J. D. Pérez, “A review of external quality inspection for fruit grading using deep learning,” Results in Engineering (Elsevier), 2024.

[6] S. E. S. Faria, M. R. Fernandes, and A. A. de Souza, “Advanced phenotyping in tomato fruit classification through convolutional neural networks,” Scientia Agricola, 2024.

[7] V. Khullar, R. K. Gupta, and P. Sharma, “Intelligent fruit quality classification system using transfer learning,” Journal of Artificial Intelligence (Frontiers Scientific Publishing), 2024.

[8] W. K. ElHelew, A. S. Abdelrahman, and M. E. El-Gayar, “Classification of dates quality using deep convolutional neural networks,” Misr Journal of Agricultural Engineering, 2024.

[9] D. S. Authors, “Detection of fruit ripeness and defectiveness using deep transfer learning,” Intelligent Data Analysis, 2024.

[10] A. N. Authors, “State-of-the-art CNN-based fruit recognition for supermarket applications,” AIP Conference Proceedings, 2020.