International Research Journal of Engineering and Technology (IRJET)

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

International Research Journal of Engineering and Technology (IRJET)

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

1PG Student, Department Of Computer Applications, Jaya College Of Arts and Science, Thiruninravur, Tamilnadu,India

2Assistant Professor, Department Of Computer Applications, Jaya College Of Arts and Science, Thiruninravur, Tamilnadu,India ***

Abstract – Fakenewshasbecomeasignificantproblemin today’s digital era, where information spreads rapidly acrosssocialmediaandonlineplatforms.Thedissemination of false or misleading news can manipulate public perception, create confusion, and even impact social, political, and economic stability. To address this challenge, Natural Language Processing (NLP) techniques are widely usedtoautomaticallydetectandclassifyfakenewsbasedon textual content. By applying methods such as text preprocessing, feature extraction, and machine learning or deep learning algorithms, NLP enables computers to understand linguistic cues and semantic patterns within news articles. This study focuses on developing an NLPbased fake news detection system that analyzes the language structure and context of news to differentiate betweenauthenticanddeceptiveinformation.Theapproach aims to improve accuracy and reliability in detecting misinformation, contributing to a safer and more trustworthydigitalinformationenvironment.

Keywords: Fake News Detection, Natural Language Processing (NLP), Machine Learning,Text Classification,NewsAuthenticity, Data Mining

1.INTRODUCTION

Inthedigitalage,therapidspreadofinformationthrough social media and online platforms has made it easier for fake news to circulate widely, influencing public opinion and decision-making. Fake news refers to false or misleading information presented as legitimate news, often created to deceive readers or promote specific agendas. Detecting such misinformation has become a majorchallengedue tothe vastamountofdata generated daily. Natural Language Processing (NLP), a branch of Artificial Intelligence (AI), plays a crucial role in addressingthisissuebyenablingmachinestounderstand, analyze, and interpret human language. Through techniques such as text classification, sentiment analysis, and semantic understanding, NLP helps identify linguistic patterns and inconsistencies that distinguish fake news from real news. Combining NLP with machine learning and deep learning algorithms has significantly improved the accuracy and efficiency of fake news detection systems. As a result, NLP-based approaches have become an essential tool in combating misinformation and maintainingthecredibilityofonlineinformationsources.

Fake news detection has become an important research area in recent years due to the rapid spread of misinformation through social media and online news platforms. Researchers have explored various Natural Language Processing (NLP) and Machine Learning (ML) techniques to automatically identify deceptive news content. According to Shu et al. (2017), fake news can be analyzed from three main perspectives news content, socialcontext,andpropagationpatterns andcombining these factors enhances detection accuracy. Early studies primarily focused on content-based detection using textual features such as word frequency, part-of-speech tags,andreadabilityscores.ClassicalalgorithmslikeNaive Bayes, Logistic Regression, and Support Vector Machines were widely used for classification tasks (Horne & Adalı, 2017).

With the availability of benchmark datasets like LIAR (Wang, 2017) and FakeNewsNet (Shu et al., 2018), researchers gained access to large-scale labeled data for training and testing models. These datasets enabled the development of both traditional and deep learning-based approaches.Inrecentyears,deeplearningmodelssuchas Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), particularly LSTMs, have been effectively used to capture semantic and contextual relationships in text, improving detection accuracy. The introduction of transformer-based models such as BERT (Devlin et al., 2018) further revolutionized fake news detection by providing rich contextual embeddings and reducingtheneedformanualfeatureengineering.

The methodology for Fake News Detection using Natural Language Processing (NLP) generally involves several systematic stages to process textual data, extract meaningful features, and classify news content as real or fake.Theprocesstypicallybeginswithdata

The Existing systems for fake news detection, traditional machinelearning approaches are mainly used, which rely heavily on manual feature engineering. These systems typically utilize datasets of news articles labeled as “fake”

International Research Journal of Engineering and Technology (IRJET)

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

or “real,” from which textual features are extracted using simplestatisticalorfrequency-basedtechniques.Common preprocessing steps include tokenization, stopword removal, and stemming. Features such as Bag of Words (BoW), Term Frequency–Inverse Document Frequency (TF-IDF), or n-gram models are then used to convert the text into numerical representations. Classical classifiers like Logistic Regression, Naive Bayes, Decision Tree, and Support Vector Machine (SVM) are applied to perform binaryclassification.

While these models achieve reasonable accuracy, they haveseverallimitations.

The Proposed system aims to overcome the limitations of traditional models by leveraging Natural Language Processing (NLP) and deep learning techniques for more accurate and intelligent fake news detection. The system beginswithdatacollectionfrombenchmarkdatasetssuch as LIAR, FakeNewsNet, or Kaggle fake news datasets. The raw textual data is then cleaned and preprocessed by removing punctuation, numbers, stopwords, URLs, and specialsymbols.Further,tokenization,lemmatization,and lowercasingareappliedtonormalizethetextforanalysis. Next, advanced feature extraction techniques are used to representtextmeaningfully.InsteadoftraditionalBoWor TF-IDF methods, the proposed model employs word embeddings such as Word2Vec, GloVe, or transformerbased embeddings like BERT (Bidirectional Encoder Representations from Transformers) to capture semantic and contextual relationships between words. These embeddingsaretheninputintodeeplearningmodelssuch as LSTM (Long Short-Term Memory) or BERT-based classifiers, which can learn long-term dependencies and understandcomplexlinguisticstructuresinnewsarticles.

Themodelistrainedonlabeleddatatoclassifyeachnews item as “fake” or “real.” After training, its performance is evaluated using metrics like accuracy, precision, recall, and F1-score. To ensure reliability, cross-validation and confusion matrix analysis are conducted. The proposed systemcan alsobe integrated into a web-basedor mobile application that takes a news headline or full article as inputandpredictsitsauthenticityinrealtime.

The proposed system for Fake News Detection Using NLP is divided into several functional modules that work together to process, analyze, and classify news content effectively.

4.1

This module is responsible for gathering the dataset that contains both fake and real news articles. Publicly available datasets such as the LIARdataset, FakeNewsNet,

or KaggleFakeNewsDataset arecommonlyused.Thedata typically includes news headlines, body text, author information, andlabelsindicatingwhetherthenewsisfakeorreal.This dataset serves as the foundation for training and evaluatingthemodel.

4.2

Rawtextual data oftencontains noise, irrelevantsymbols, and inconsistent formats. The preprocessing module cleans and prepares this data for further analysis. It involves steps such as tokenization, lowercasing, removal of punctuation, stopwords, and special characters, followed by lemmatization or stemming to convert words into their root forms. This process ensures uniformity in the data and reduces redundancy, making it suitable for NLP-basedprocessing.

4.3Feature Extraction Module

Once the data is cleaned, the feature extraction module converts the textual information into numerical representations that can be understood by machine learning algorithms. This can be done using techniques like Bag of Words (BoW), TF-IDF (Term Frequency–Inverse Document Frequency), or word embeddings such as Word2Vec, GloVe, or BERT embeddings. These methods helpcapturethesyntacticandsemanticmeaningofwords, allowing the model to better understand context and relationshipsintext.

In this module, the extracted features are used to train machine learning or deep learning models. Traditional classifiers like Logistic Regression, Naive Bayes, and Support Vector Machine (SVM) can be used for baseline comparison, while advanced models such as LSTM (Long Short-Term Memory) networks or BERT-based deep learning models are implemented for improved accuracy. Thetrainedmodel learnsto distinguish betweenfakeand real news based on linguistic and contextual patterns in thetext.

4.5

The evaluation module assesses the performance of the trained model using metrics such as accuracy, precision, recall,F1-score,andconfusionmatrix.Thesemetrics provide insight into how well the model can correctly classify news articles. Cross-validation is also performed to ensure model reliability and generalization across differentdatasets.

The implementation of the Fake News Detection Using NLP system involves a systematic process that integrates data preprocessing, feature extraction, and machine

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

learning or deep learning models to classify news articles as real or fake. The implementation begins with loading thedataset,suchasthe LIAR or KaggleFakeNewsDataset, which contains labeled news data. The dataset is divided into training and testing sets to evaluate model performance effectively. The data preprocessing stage is thencarriedouttocleanthetextbyremovingunnecessary elements like punctuation, special characters, numbers, URLs, and stopwords. The text is also converted to lowercase and tokenized into words. Further normalizationtechniqueslikestemming orlemmatization areappliedtostandardizewordforms,ensuringthemodel learnseffectivelyfrommeaningfulfeatures.

After cleaning, the next phase focuses on feature extraction. The textual data is transformed into a numerical format that machine learning models can interpret. This can be achieved using TF-IDF (Term Frequency–Inverse Document Frequency) or word embedding techniques such as Word2Vec, GloVe, or BERT embeddings. These methods capture both the frequency and semantic context of words, enabling the system to understandthedeepermeaningofthetextratherthanjust wordcounts.

Once the features are extracted, the model training phase isexecuted.

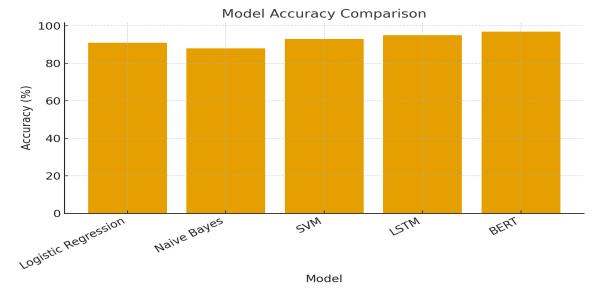

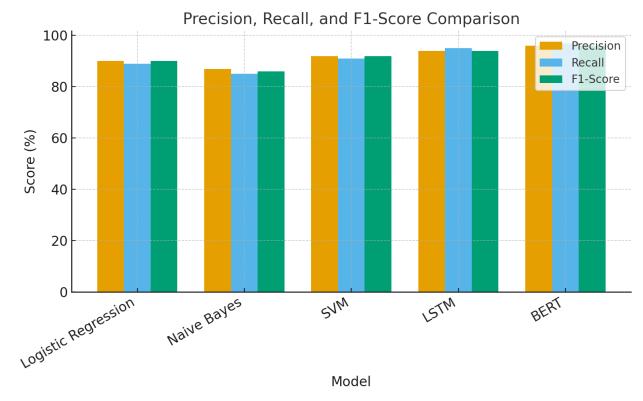

The proposed fake news detection model was evaluated using multiple machine learning and deep learning algorithms, including Logistic Regression, Naive Bayes, SVM,LSTM,andBERT.AsillustratedinFigure6.1,thedeep learning models achieved higher accuracy compared to traditional algorithms. The BERT model attained the highest accuracy of 97%, followed by LSTM with 95%, while Naive Bayes had the lowest accuracy of 88%. This demonstrates that models capable of understanding contextual and semantic relationships in text perform better in fake news detection.Furthermore, Figure 6.2 compares precision, recall, and F1-score for each model. Deep learning models such as LSTM and BERT show superior balance across all evaluation metrics, confirming their robustness in handling complex linguistic structures. Traditional models like Logistic Regression and Naive Bayes perform well but lack deeper contextual understanding, leading to slightly lower recall and F1scores.

The project Fake News Detection Using NLP successfully demonstrates how Natural Language Processing and MachineLearningtechniquescanbe effectivelyapplied to identify and classify misleading or false information in news articles. By implementing systematic steps such as datapreprocessing,featureextraction,andmodeltraining, the system can accurately distinguish between real and fakenewscontent.TraditionalapproacheslikeTF-IDFand classical classifiers provided a baseline for comparison, while advanced deep learning models such as LSTM and BERT significantly improved detection accuracy by capturing the contextual meaning of text. The project highlights the importance of text cleaning, linguistic feature representation, and the use of contextual embeddingsinimprovingmodelperformance.

TheFakeNewsDetectionUsingNLPsystemcanbefurther enhanced and expanded in several ways to improve its accuracy, adaptability, and real-world applicability. In the future,thesystemcanbeextendedtosupportmultilingual fake news detection, enabling it to analyze news articles and social media posts in multiple regional and international languages. Additionally, integrating multimodaldata suchasimages,videos,andaudioalong withtext canhelpdetectmisinformationinamore comprehensive manner, since many fake news stories include manipulated visuals or media. The inclusion of real-time detection capabilities can also allow the system to monitor and flag suspicious news content as it spreads across social media platforms. Moreover, implementing explainable AI (XAI) techniques can make the detection process more transparent, allowing users to understand whyapieceofnewsisclassifiedasfakeorreal.Theuseof Graph Neural Networks (GNNs) and transformer-based architectures like GPT or BERT variants can further improve contextual understanding and enhance

International Research Journal of Engineering and Technology (IRJET)

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

robustnessagainstadversarialorAI-generatedfakenews. The system could also be integrated into browser extensionsormobileapplicationsforuser-friendlyaccess, allowing individuals to verify the authenticity of news before sharing it. Overall, the future scope of this project lies in developing a more intelligent, explainable, and scalablefakenewsdetectionframeworkthatcanhelpcurb misinformation and promote trust in digital information ecosystems.

[1]OmkarReddy(2024)–“AI-BasedFakeNewsDetection UsingNLP.”

[2]ShahwanYounisAli&Dr.IbrahimMahmood(2025)–“

The Role of NLP in Fake News Detection and MisinformationMitigation.”

[3] Yuxiang Wang, Yongheng Zhang, Xuebo Li, Xinyao Yu (2021) “ COVID-19 Fake News Detection Using BERTbasedModels.”

[4]MohitMayank,ShakshiSharma,RajeshSharma(2021) – “DEAP-FAKED: Knowledge Graph-Based Approach for FakeNewsDetection.”

[5]PundlikJadhav&RajeshKumarShukla(2023)–“Deep Learning Analysis for Revealing Fake News Using LinguisticComplexityandSemanticSignatures.”

[6] Al-Zubaidi F. et al. (2023) – “Stylometric Fake News DetectionBased on NLP Using Named Entity Recognition: In-DomainandCross-DomainAnalysis.”