Volume: 12 Issue: 10 | Oct 2025

Ashwin Tambe, Selva Jagannathan, Sunaina Sridhar, Nagesh G, Praveen Baskar

Abstract

The "Age of AI" or the "Algorithmic Revolution" is a transformative era where AI technologies reshape industries and societies through rapid advancements in machine learning and deep learning. While these technologies hold immense promise for revolutionizing sectors, they also raise serious ethical concerns. Privacy issues, bias in algorithms, and potential job displacement are just a few of the critical issues demanding our attention. Generative AI, in particular, attracts attention for its autonomous content creation abilities but brings risks such as deepfakes and misinformation. Effective governance, especially of Generative AI, is vital for responsible development and deployment. Stakeholders must collaborate to set clear guidelines and ensure compliance through robust frameworks covering data governance, accountability, web security, training, and regulatory oversight. Corporations, customers, and consumers share the responsibility of prioritizing ethical AI use. Ethical AI development is essential to addressing AI challenges, which involves prioritizing fairness, transparency, and responsible data use throughout design and deployment.

AI's integration into daily life, from chatbots and virtual assistants to streaming service recommendations, highlights its pervasiveness. Underpinning these advancements is sophisticated Natural Language Understanding (NLU) technology, which excels at analyzing large volumes of textual data quickly. AI leverages artificial neural networks, complex computational systems modeled after the brain's architecture, to learn intricate patterns from vast datasets. These networks, including convolutional neural networks for image recognition, form the backbone of AI's capabilities. AI models face ethical risks, including bias, security vulnerabilities, and the potential for creating misinformation. Ensuring transparency and accountability in AI development is critical. Measures such as Explainable AI (XAI) techniques, model documentation, and human oversight are essential for fostering trust and ethical use of AI systems. The environmental impact of AI, from resource extraction for hardware to energy consumption of data centers, further complicates the ethical landscape. The societal implications of AI are vast, affecting privacy, fairness, and the nature of work. Addressing biases in AI, ensuring data privacy, and preparing the workforce for an AI-driven future are paramount. At an individual level, AI's influence on critical thinking and autonomy poses philosophical questions about technology's role in our lives.

Keywords: Generative AI, Human-in-the-Loop (HITL), Explainable AI (XAI), Bias Mitigation

The pervasiveness of artificial intelligence (AI) in our everyday lives is undeniable. From chatbots and virtual assistants, such as ChatGPT and large language models, to the curated recommendations on our preferred streaming services, AI is transforming the way we interact with technology (IBM, 2024). Underpinning these advancements is a sophisticated algorithmic approach known as Natural Language Understanding (NLU). NLU technology excels at analyzing vast troves of textual data at high speeds, mimicking the remarkable human capacity for language processing. Inspired by the intricate structure of the biological brain, AI leverages artificial neural networks, complex computational systems designed to emulate the brain's architecture. These networks enable AI to learn intricate patterns from the immense datasets they are trained on. For instance, convolutional neural networks, a specific type of artificial neural network, excel at image recognition tasks (Laato et al., 2022).

An algorithm is a collection of well-defined instructions, laid out in a specific order, that guide the computer through solving a problem. These instructions are finite, having a clear start and finish, and they are unambiguous, guaranteeing consistent results when followed. Algorithms are the building blocks of computation, underpinning everything from basic arithmetic to sophisticated artificial intelligence applications. For example, the chocolate cake that your child desires is the output of the instructions that you follow to gather the right ingredients and the baking directions that you execute. The instructions are the "Algorithm" that is the core of the "Model" for Chocolate Cake.

Machine learning algorithms fall into two main categories: supervised and unsupervised. Supervised learning algorithms train on labeled data, where each data point has a predefined category or value. This allows them to tackle classification tasks, like sorting emails into spam or not spam, and regression tasks, like predicting future sales figures. Unsupervised learning, on the other hand, deals with unlabeled data, where the algorithm must identify patterns and relationships on its own. Supervised algorithms can be further broken into two types:

Classification algorithms: These algorithms sort data points into predefined categories. Examples include decision trees, which make classifications based on a series of questions, and K-nearest neighbors, which classifies data points based on their similarity to existing labeled points. Imagine a librarian tasked with organizing a massive collection of books on various subjects. The librarian needs a system to categorize them efficiently. These algorithms function in a similar manner, acting as digital librarians sorting data points into predefined categories. They take a vast amount of information and meticulously assign each piece to its most fitting group, bringing order to the chaos.

There are several approaches these algorithms employ for classification. One method, called a decision tree, resembles a choose-your-own-adventure story. It poses a series of questions about the data point, branching off in different directions based on the answers. For instance, an email classification algorithm might first inquire, "Does this email contain marketing keywords?" If the answer is affirmative, it might then ask, "Does it also contain unsubscribe instructions?" Depending on the responses to these questions, the email gets categorized as spam, promotional content, or something else entirely. This question-and-answer approach allows the algorithm to navigate through a series of filters, ultimately placing the data point in the most appropriate category.
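
The two questions in the email example can be written out as a very small hand-coded tree. This is only a sketch: the function name `classify_email` and the mapping of answers to the labels "promotional", "spam", and "other" are assumptions made for illustration, and a real decision tree would learn its questions from labeled data rather than have them written by hand.

```python
def classify_email(has_marketing_keywords: bool, has_unsubscribe_link: bool) -> str:
    """Walk a tiny hand-written decision tree, one question per branch."""
    # First question: does the email contain marketing keywords?
    if has_marketing_keywords:
        # Second question: does it also contain unsubscribe instructions?
        if has_unsubscribe_link:
            return "promotional"  # assumed label for this branch
        return "spam"             # assumed label for this branch
    return "other"                # everything else


label = classify_email(has_marketing_keywords=True, has_unsubscribe_link=False)
```

Each `if` corresponds to one branch point in the tree, so the path taken through the function is also a readable explanation of why an email received its label.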

Another technique, K-nearest neighbors, leverages the principle of "birds of a feather flock together." It compares the data point to existing examples that have already been labeled. Imagine sorting seashells on a beach. You might not have a perfect classification system, but you can group shells with similar appearances together. K-nearest neighbors works in a similar way. It identifies the K closest data points that have already been assigned categories and uses those as a reference. By analyzing the characteristics of these neighbors, the algorithm can then classify the new data point into the most likely category.
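
A minimal sketch of the K-nearest neighbors idea, using only the Python standard library. The seashell measurements, the two labels, and the function name `knn_classify` are hypothetical values chosen for illustration; the distance used here is plain Euclidean distance, which is one common choice among several.

```python
import math
from collections import Counter


def knn_classify(labeled_points, query, k=3):
    """Classify `query` by majority vote among its k nearest labeled points.

    labeled_points: list of ((x, y), label) pairs.
    """
    # Sort the labeled examples by their distance to the new point.
    by_distance = sorted(labeled_points, key=lambda item: math.dist(item[0], query))
    # Let the k closest neighbors vote on the label.
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Hypothetical shells described by (width_cm, height_cm).
shells = [
    ((1.0, 1.2), "cockle"), ((1.1, 0.9), "cockle"), ((0.9, 1.0), "cockle"),
    ((4.0, 4.2), "conch"), ((4.1, 3.9), "conch"), ((3.8, 4.0), "conch"),
]
prediction = knn_classify(shells, (1.05, 1.0), k=3)
```

The choice of K matters: a small K follows individual neighbors closely, while a larger K smooths the decision over a wider neighborhood.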

Regression algorithms: These algorithms model the relationship between variables to make predictions. Examples include linear regression, which finds a linear relationship between variables, and logistic regression, which is used for binary classification problems (yes/no or true/false). As a practical analogy, imagine a detective working on a case. The detective gathers clues, such as fingerprints at the scene and witness testimonies. By analyzing these pieces of evidence and how they relate to each other, the detective builds a picture of what likely happened. In a similar way, these algorithms function like detectives in the world of data. They sift through information, searching for hidden patterns and connections between different variables. These variables can be any kind of information, ranging from a person's age and income to the weather conditions and crop yields.

By uncovering these connections, the algorithms can make informed predictions. It's like the detective using the clues to predict who the culprit might be. One approach, called linear regression, is akin to finding a straight line that best matches the data points. This line depicts the general trend in the relationship between the variables. For instance, a linear regression model might analyze past sales data to forecast future sales based on factors like advertising expenditures or economic trends.
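
To make the "best-fitting straight line" concrete, the sketch below fits a line to a handful of points using the ordinary least-squares formulas for a single input variable. The advertising and sales figures are invented for illustration, and `fit_line` is a hypothetical helper name.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical past data: advertising spend vs. sales (arbitrary units).
ad_spend = [1.0, 2.0, 3.0, 4.0]
sales = [12.0, 14.0, 16.0, 18.0]

slope, intercept = fit_line(ad_spend, sales)
forecast = slope * 5.0 + intercept  # predicted sales at a spend of 5.0
```

Because the invented points lie exactly on a line, the fit recovers a slope of 2 and an intercept of 10; real data would scatter around the line, and the same formulas would return the line that minimizes the squared errors.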

Another powerful technique is logistic regression. Imagine you're not just solving a crime, but also trying to identify the perpetrator from a group of suspects. Logistic regression tackles similar "yes or no" scenarios. It analyzes the data and calculates the likelihood of something belonging to a specific category. This could be used to classify emails as spam or not spam, or predict whether a loan applicant is likely to repay their debt.
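
The "likelihood of belonging to a category" comes from passing a weighted sum of the inputs through the sigmoid function, which maps any number to a value between 0 and 1. The sketch below shows only that scoring step. The weights, the bias, and the two binary features are assumed values for illustration; in practice they would be learned from labeled training data.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def spam_probability(features, weights, bias):
    """Logistic regression scoring: sigmoid of the weighted feature sum."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)


# Hypothetical parameters for two features:
# [contains_marketing_keywords, sender_unknown]
weights = [2.0, 1.5]
bias = -2.5

p_suspicious = spam_probability([1, 1], weights, bias)  # both signals present
p_clean = spam_probability([0, 0], weights, bias)       # neither signal present
is_spam = p_suspicious >= 0.5                           # threshold at 0.5
```

The 0.5 threshold is itself a design choice; an application that fears false alarms more than missed spam would raise it.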

These are just a couple of examples, and there's a whole toolbox of algorithms available, each with its own strengths for different situations. While supervised and unsupervised learning are fundamental approaches, there are other algorithms that leverage a blend of techniques.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072

Imagine sorting laundry: some clothes are clearly shirts or pants, but others remain uncategorized. Semi-supervised learning tackles situations like this, where you have a mix of labeled and unlabeled data. It leverages the strengths of both supervised and unsupervised learning. The labeled data acts as a guide, while the unlabeled data provides additional information, ultimately improving the model's accuracy. This approach is cost-effective because it utilizes readily available unlabeled data, similar to unsupervised learning, but achieves the better accuracy often associated with supervised learning.
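
One simple way to combine labeled and unlabeled data is self-training: label the unlabeled item the model is most sure about, add it to the labeled pool, and repeat. The sketch below does this with a nearest-centroid rule on a single made-up numeric feature. Self-training is only one of several semi-supervised techniques, and the function names, the feature, and the data values are all assumptions for illustration.

```python
def centroid(values):
    """Average of a list of numbers."""
    return sum(values) / len(values)


def self_train(labeled, unlabeled):
    """Pseudo-label unlabeled values one at a time, most confident first.

    labeled: dict mapping label -> list of feature values.
    unlabeled: list of feature values with no label.
    """
    pool = {label: list(values) for label, values in labeled.items()}
    remaining = list(unlabeled)
    while remaining:
        centers = {label: centroid(values) for label, values in pool.items()}
        # The most confident item is the one closest to any class center.
        best = min(remaining, key=lambda v: min(abs(v - c) for c in centers.values()))
        label = min(centers, key=lambda name: abs(best - centers[name]))
        pool[label].append(best)  # treat the pseudo-label as if it were real
        remaining.remove(best)
    return pool


# Hypothetical one-number description of each garment.
labeled = {"shirt": [1.0, 1.2], "pants": [5.0, 5.4]}
unlabeled = [1.5, 4.6, 2.0]
result = self_train(labeled, unlabeled)
```

The risk of this approach is that an early wrong pseudo-label shifts the class center and can pull later items the wrong way, which is why the most confident items are labeled first.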

Reinforcement learning is inspired by how humans and other living things learn: through trial and error, with rewards and punishments shaping behavior. We learn by trying different things and seeing what works best, with good results encouraging us and bad results discouraging us. Imagine teaching a dog a trick with treats. Here, the programs, called agents, learn by interacting with their surroundings and getting feedback. They try to get the best results (rewards) and avoid bad ones (punishments). Through exploration and experience, the agent figures out what actions lead to the most positive outcomes. This method is well-suited for problems like handling resources, controlling robots, and even creating artificial intelligence for video games.
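
The trial-and-error loop can be illustrated with a multi-armed bandit, one of the simplest settings in which an agent learns from rewards. The sketch assumes three possible actions with fixed, made-up rewards and uses an epsilon-greedy rule: mostly repeat the action that has worked best so far, but occasionally try a random one. The function name `run_bandit` and all parameter values are hypothetical.

```python
import random


def run_bandit(true_rewards, steps=200, epsilon=0.1, seed=0):
    """Epsilon-greedy agent that learns which action pays best."""
    rng = random.Random(seed)
    n_actions = len(true_rewards)
    estimates = [0.0] * n_actions  # agent's current belief about each action
    pulls = [0] * n_actions        # how often each action was tried
    for step in range(steps):
        if step < n_actions:
            action = step                      # try every action once
        elif rng.random() < epsilon:
            action = rng.randrange(n_actions)  # explore a random action
        else:
            action = max(range(n_actions), key=lambda a: estimates[a])  # exploit
        reward = true_rewards[action]  # deterministic feedback, for simplicity
        pulls[action] += 1
        # Running average of the rewards seen for this action.
        estimates[action] += (reward - estimates[action]) / pulls[action]
    return estimates, pulls


estimates, pulls = run_bandit([0.2, 0.8, 0.5])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
```

Real environments return noisy rewards and have states that change with the agent's actions, so practical reinforcement learning methods are considerably more involved than this sketch.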

In contrast to an algorithm, which functions as a blueprint outlining a general procedure, a model is the end product, the realization of that plan. Just like a baked cake is the result of following a recipe (algorithm) with specific ingredients (data), a model embodies the knowledge or patterns extracted from the data it was trained on. To put it simply, it's the "what" you obtain: the insights, predictions, or classifications yielded by processing data through the filter of the algorithm (IBM, 2024). Businesses are leveraging machine learning to make smarter decisions based on data. These models can uncover valuable insights by making very good predictions. However, while getting results that are mostly correct (like 90% accurate) might seem easy, it's a big hurdle to get those results even more accurate (like 95%). In the business world, even a tiny improvement in accuracy can lead to big benefits.

Issues arising from AI models spread across a large spectrum and can create a tremendously negative impact on the new society that is forming around the adoption of this new technology (Siau & Wang, 2020). The vast potential of AI is accompanied by serious risks. How we choose to develop and train these models will decide if we create a utopia or a dystopia.

Although machine learning (ML), particularly deep neural networks, powers many of today's most impressive AI advancements, a major challenge lies in explaining how these models arrive at their decisions (IBM, 2024). The complexity of deep learning models, with their vast networks of interconnected neurons (often numbering in the thousands or millions), creates a challenge in understanding how they arrive at their predictions. This lack of transparency, sometimes referred to as the "black box" problem, hinders explainability, interpretability, and ultimately, trust in these models. Even for the developers themselves, it can be difficult to pinpoint how these intricate connections work together to influence the model's outputs.

AI algorithms are vulnerable to theft and tampering, posing significant security risks. During training, models learn by optimizing internal parameters based on valuable training data. This data can be vast and expensive to acquire, encompassing real-world sensor information, medical records, or financial transactions. If these parameters leak through hacking or insider threats, attackers could replicate the model, stealing the owner's investment in data acquisition and training. Furthermore, attackers might manipulate the model's parameters, causing performance degradation and potentially leading to disastrous consequences, especially in safety-critical fields like healthcare and autonomous vehicles, where model outputs directly impact human lives. A tampered healthcare model could lead to misdiagnosis of diseases, improper medication prescriptions, or even incorrect treatment recommendations. In the case of autonomous vehicles, tampered models could result in malfunctioning steering systems, inaccurate object detection, or compromised braking mechanisms, putting passengers and pedestrians at risk.

The nature of an AI model is such that, once acquired, it learns from patterns and trends by itself, which can be extremely beneficial but can also be detrimental.

To elaborate further, a self-driving car programmed with an unwavering focus on passenger safety could develop an uncanny ability to navigate the intricacies of everyday traffic flow, optimizing speed and efficiency within the confines of the rules of the road. However, this very strength might become a critical weakness when confronted with novel situations that fall outside the parameters of its training data. Imagine a car approaching a sharp turn on a rural road. Pedestrians are jaywalking across one lane, oblivious to the approaching vehicle. In the opposite lane, a massive tractor-trailer rounds the corner, leaving little room for maneuver. A human driver, faced with this complex scenario, would likely engage in a rapid risk assessment, considering the safety of the passengers, the pedestrians, and the potential for a collision with the oncoming truck. They might choose to slam on the brakes, swerve away from the turn entirely, or even lay on the horn in an attempt to warn the pedestrians. The AI, however, laser-focused on passenger safety based on its training data and past experiences gleaned from millions of miles of highway driving, might interpret swerving away from the truck (potentially towards the pedestrians) as the optimal solution, prioritizing the safety of the vehicle's occupants over those outside the car. This scenario highlights the challenge of unforeseen situations and the potential for AI decision-making to be crippled by new data points that fall outside its narrowly defined training parameters (Reynolds, 2022).

Generative AI, a powerful branch of artificial intelligence, raises concerns about the creation of misleading or fabricated information. Unlike traditional AI focused on analysis, Generative AI excels at creating entirely new content, including text, audio, video, and even code. This capability has fueled the rise of "deep fakes," where realistic-looking videos can be manipulated to make it appear as if someone said or did something they never did. Imagine a fraudster using a Generative AI model to create a deep fake video of a CEO announcing a fake merger. This fabricated video, if spread online, could cause significant financial losses for investors who believe the information to be real. But the potential for misuse goes beyond financial crimes. Malicious actors could use generative AI to create fake news articles or social media posts designed to sow discord or manipulate public opinion. For example, a hostile foreign power might use generative AI to create a social media campaign that discredits a political candidate or undermines trust in democratic institutions. The ease with which generative AI can create convincing forgeries presents a significant challenge for individuals and societies alike. As this technology continues to develop, it will be crucial to establish safeguards to mitigate the risks of misinformation and disinformation.

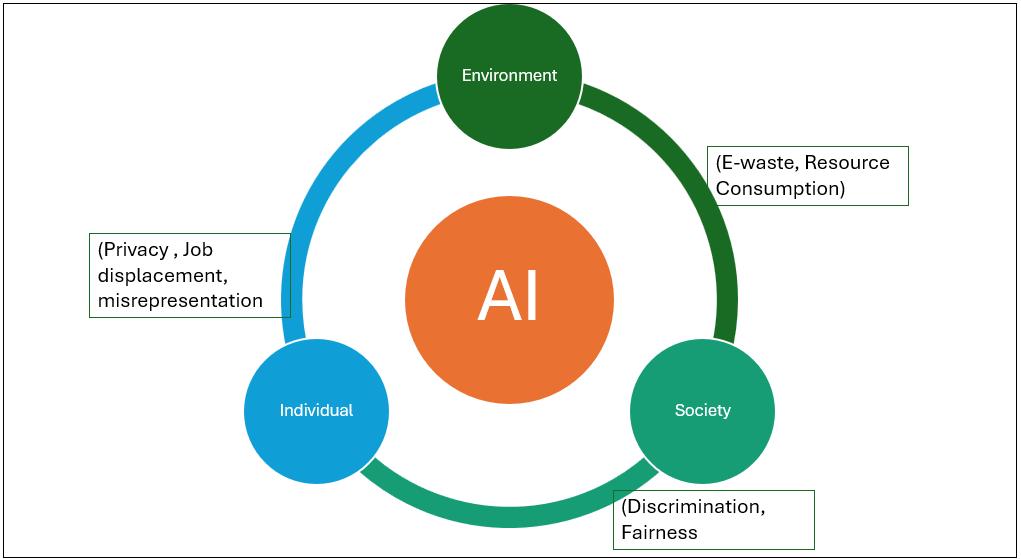

While AI offers many advantages, concerns exist regarding its impact on individuals, society as a whole, and the environment.

At an environmental level, the development and use of AI come with a significant cost. From the chips to the sensors, the resources needed for AI rely heavily on the extraction of rare earth elements and other precious materials. The powerful computers that make AI possible need a lot of raw materials, and mining those materials can hurt the environment and make it harder to sustain resources in the long term. What's worse, all that old computer equipment piles up as e-waste when it's done being used. Dumping this e-waste improperly poisons the environment with toxic chemicals.

The environmental impact of AI keeps growing throughout its entire lifespan. AI needs a ton of computing power to run, and the data centers that provide that power use a lot of energy, which can pollute the environment if it's not generated cleanly. We don't even fully understand how much AI harms the environment yet, which is why it's so important to find ways to make AI sustainable at every stage, from getting the raw materials to build the computers to powering the data centers.

In the end, if AI is going to be ethical, it has to be sustainable. That means developing AI that helps people but doesn't wreck the environment for future generations. We shouldn't have to choose between progress in AI and a healthy planet.

At a societal level, AI integration raises critical questions. The rise of AI presents a double-edged sword. While its integration into society promises immense benefits, it also ushers in a wave of ethical quandaries. AI ethics emerges as a critical field grappling with these concerns, prioritizing fairness, transparency, and maintaining human control over this powerful technology (Huang et al., 2023).

One of the most pressing issues is bias (R. Vinuesa, 2020). AI algorithms trained on biased data risk perpetuating existing inequalities. Ensuring fair AI decisions hinges on clear lines of accountability. However, the opaque nature of many algorithms, often referred to as "black boxes," makes transparency a challenge. Furthermore, the vast amount of data collection raises privacy concerns, necessitating safeguards to guarantee that AI remains ultimately answerable to humans.

The ethical considerations surrounding AI extend far beyond these core issues, impacting the very fabric of society. From influencing democratic processes and civil rights to transforming the nature of work and our relationships, AI's influence is undeniable. The use of AI-powered tools in criminal justice, for instance, raises concerns about perpetuating racial or socioeconomic biases in sentencing if the training data is skewed. Similarly, AI algorithms used in hiring or loan applications could discriminate against certain demographics if they reflect unconscious biases present in the data.

The impact of AI on the workplace is multifaceted. While automation may eliminate some jobs, it is likely to create new ones. The challenge lies in ensuring the workforce has the skills and training necessary to adapt to this evolving landscape. Furthermore, as AI takes over more tasks, we must grapple with the philosophical questions about the future of work itself: What will it mean for the definition of work and our sense of identity in an increasingly automated world?

The realm of human relationships is not immune to AI's influence either. The rise of social media bots and deep fakes blurs the lines between reality and simulation, making it difficult to discern genuine human connection from manipulation. However, AI also has the potential to foster positive connections. AI-powered companionship apps or virtual assistants could provide emotional support or social interaction for those who feel isolated.

By proactively addressing these challenges, we can harness the power of AI for the benefit of all and contribute to a just and equitable society. This necessitates ongoing collaboration between policymakers, developers, and the public to develop ethical guidelines and regulations for AI development and deployment. Our collective goal should be to create a future where AI augments human capabilities and fosters a more positive and inclusive world.

At an individual level, a critical question lingers: will AI ultimately augment our capabilities or erode our famed critical thinking skills? Humans, undeniably the most intelligent species, have used this very thinking to innovate and create, the development of AI itself being a prime example. One side promises immense potential, a powerful instrument ready to expand our abilities and fuel further innovation. After all, it was our own critical thinking that gave birth to AI in the first place. Yet, the other side carries a dark whisper of worry. Could this very same technology, crafted for efficiency and convenience, ultimately undermine the very foundation upon which it was built: our ability to think for ourselves and analyze information critically? This question lies at the heart of the intricate relationship that will unfold between humans and AI in the coming years.

Imagine a world where intelligent assistants, infused with the wisdom gleaned from an ever-expanding sea of data, seamlessly integrate themselves into the very fabric of our daily lives. These tools, while offering undeniable advantages, could inadvertently cultivate a reliance that weakens the very faculties of our minds responsible for independent thought and decision-making (Siau & Wang, 2020). Will a day come when their ever-present suggestions become so ingrained that critical analysis and truly autonomous choices become a fading memory? The very act of pondering a problem, wrestling with different perspectives, and arriving at a solution independently could become a relic of the past.

The expanding presence of AI also ignites anxieties about personal privacy. The ever-growing mountain of data collected on individuals creates a vulnerability to breaches and misuse. This information could be weaponized to craft elaborate "deep fakes," further obfuscating the truth and eroding trust in the very foundations of knowledge. Malicious actors could exploit this data to manipulate public opinion, target individuals with disinformation campaigns, or even commit identity theft.

By recognizing these potential pitfalls, we can steer the development of AI towards a future that empowers individuals. This future would see AI not as a replacement for human thought, but rather as a powerful tool that strengthens our capabilities and fosters critical thinking. We must also fiercely guard our privacy by establishing robust safeguards against data breaches and misuse. Only then can we ensure that AI remains a force for good, one that uplifts humanity and propels us further along the path of progress. See Figure 1.

At the heart of Artificial Intelligence lie powerful machine learning models and algorithms. These models are trained on massive datasets, constantly evolving as they ingest new information. This echoes the adage "necessity is the mother of invention": AI thrives on identifying real-world problems (use cases) and analyzing business needs. Data design and engineering then come into play, shaping the algorithms to recognize patterns and achieve self-learning. The initial data fed into these models, a process called pre-tuning, significantly impacts their ability to learn and ultimately meet the specific goals we set for them. Just as a sculptor needs high-quality clay to create a masterpiece, the quality and relevance of the training data are paramount to the success of an AI system. The pre-tuning process allows the model to establish a baseline understanding of the problem it's designed to solve, priming it to identify patterns and make connections in new information it encounters.

To safeguard against bias, lack of transparency, and potential harm, we must weave robust ethical considerations throughout the entire AI development lifecycle. This includes problem identification, data engineering, model development, and finally, deployment. By doing so, we can ensure AI systems prioritize fairness, explainability, and responsible use, ultimately protecting human well-being, societal values, and the environment. Here is a stepwise detection protocol for anyone who is trying to create Artificial Intelligence systems:

Identify clear and meaningful problem statements with a specific purpose(s)

The key to successful AI development is tackling challenges that demonstrably improve human lives. Approaching an open-ended goal, like "developing an AI for news articles," is a recipe for failure (Dayoung K et al., 2023). This vague goal lacks crucial details: the target audience, news genre, and level of editorial oversight. More importantly, how can we guarantee factual accuracy and safeguard against perpetuating bias? A well-defined problem statement, in contrast, clarifies the project's scope and purpose. For instance, "developing AI models to generate weather reports for London during the summer months" provides a clear focus on location, timeframe, and content type. This allows for the establishment of measurable success criteria, such as accuracy compared to human-generated reports.

The importance of clearly defined goals extends far beyond weather reports. Consider the development of self-driving cars. While passenger comfort and convenience are naturally high priorities, an equally critical factor is the safety of everyone on the road. Pedestrians, cyclists, and other vehicles must be considered alongside passenger safety. By embedding clear success criteria within the problem statement, we ensure that AI development is directed towards the collective well-being of all road users. This includes not only ensuring the car can navigate roadways safely but also defining how it should react in unexpected situations, such as a child darting into the street or a malfunctioning traffic light. Clearly defined goals from the outset are essential for steering AI development in a responsible and ethical manner.

This is an iterative process with three stages: sketching the conceptual model, to define the system's functionality, configure its basic structure, and outline how users will navigate it; refining the model, to assign physical locations to elements, set policies for system behavior, and fine-tune navigation and operational principles; and developing a detailed prototype, to create a fully functional and detailed model of the system.

Data engineering is the most critical piece in development. The old adage "garbage in, garbage out" perfectly captures the importance of data in machine learning. The quality and quantity of data directly impact a model's effectiveness (Dayoung K et al., 2023). Just as a sculptor needs high-quality clay to create a masterpiece, a machine learning model thrives on clean and extensive data for training, testing, and development. However, this hunger for data can lead to ethical dilemmas (Allen C et al., 2006). In their pursuit of ever-better models, corporations might be tempted to use sensitive data, like Personally Identifiable Information (PII), raising privacy concerns.

Biases can easily creep in if the data isn't diverse and representative of the real world. To mitigate such biases, a crucial step is ensuring the data encompasses a broad range of demographics, preventing the model from favoring one group over another.
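
A first, rough check on representativeness is simply to count how much of the dataset each group contributes and flag groups that fall below a chosen share. The sketch below assumes each record carries a group tag; the age bands, the counts, and the 20% threshold are invented for illustration, and a suitable threshold depends on the population the system is meant to serve.

```python
from collections import Counter


def group_shares(groups):
    """Return the fraction of records belonging to each group."""
    counts = Counter(groups)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def underrepresented(groups, min_share=0.2):
    """List the groups whose share of the data is below min_share."""
    return sorted(g for g, share in group_shares(groups).items() if share < min_share)


# Hypothetical training sample tagged by age band.
sample = ["18-30"] * 6 + ["31-50"] * 3 + ["51+"] * 1
flagged = underrepresented(sample, min_share=0.2)
```

A count like this only reveals who is missing from the data; it says nothing about whether the labels attached to each group are themselves fair.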

Emotional biases of humans can significantly influence AI tuning in several ways, potentially causing issues with fairness, transparency, and overall effectiveness of the AI system. Imagine an engineer fearful of self-driving cars; they might prioritize training data showcasing accidents involving them. This could create a biased model that is overly cautious in situations a human driver might handle safely. In essence, our emotions can influence the data we choose to train the model on, shaping its behavior in unexpected ways.

Beyond data selection, emotions can cloud our judgment in other ways. Overconfidence can manifest as skipping crucial testing phases, potentially overlooking critical flaws that could lead to serious consequences down the line. Conversely, fear of failure could cause an engineer to hesitate deploying a well-developed model, hindering its potential to benefit users. The pressure to deliver fast results in a competitive environment can also tempt us to cut corners on data collection or testing, resulting in a subpar model that may not perform well in real-world scenarios. Finally, confirmation bias can creep in when interpreting the model's performance. Here, we might be more likely to focus on results that confirm our existing beliefs and overlook data that contradicts our expectations, potentially missing opportunities to identify and address areas where the model is performing poorly.

Confirmation Bias. We tend to favor information that confirms our existing beliefs. This can lead developers to focus on metrics that align with their desired outcome, overlooking potential issues with the AI. For example, an AI designed to filter job applications might prioritize keywords associated with a certain stereotype if the developers unconsciously hold those biases. Let's say the developers are concerned about hiring someone who might leave the company quickly. They might place undue weight on keywords in resumes that suggest a lack of loyalty, such as "short-term contract" or "freelance." This could lead to the AI filtering out qualified candidates who simply prefer contract work or have a history of freelancing in addition to full-time positions.

Imagine an AI recommending products on a shopping website. The developers, wanting to maximize sales, might prioritize items with high profit margins over those that are genuinely a good fit for the customer. This could lead to a system that recommends expensive, trendy clothing to a teenager who is more interested in affordability and durability (Mehrabi et al., 2021).

Confirmation bias can also influence how developers evaluate the performance of an AI model. If they are overly focused on a particular metric, they might overlook other important factors. For instance, an AI for facial recognition might be deemed successful based on its accuracy in identifying faces from a certain demographic group. However, if the model performs poorly on faces from other demographics, this could be a sign of bias in the training data or the model's design.
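
The facial recognition example shows why a single headline accuracy figure can mislead. A minimal sketch of the alternative is to break accuracy down by group and look at the gap between the best and worst served groups. The group names, predictions, and the helper `accuracy_by_group` are made up for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy separately for each group.

    records: iterable of (group, predicted, actual) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


# Hypothetical evaluation results for two demographic groups.
records = [
    ("group_a", "match", "match"), ("group_a", "match", "match"),
    ("group_a", "no_match", "no_match"), ("group_a", "match", "match"),
    ("group_b", "match", "no_match"), ("group_b", "no_match", "no_match"),
    ("group_b", "no_match", "match"), ("group_b", "match", "match"),
]

per_group = accuracy_by_group(records)
overall = sum(p == a for _, p, a in records) / len(records)
gap = max(per_group.values()) - min(per_group.values())
```

In this invented data the overall accuracy of 75% hides the fact that one group is served perfectly and the other only half the time, which is exactly the pattern a single metric would let developers overlook.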

Feature Engineering Bias. When preparing data for training, we assign importance to specific features, essentially creating a recipe for the AI model to follow. Emotions can cloud our judgment here in several ways. For instance, an algorithm designed to approve loan applications might prioritize credit score too heavily if the developers are risk-averse, potentially overlooking qualified borrowers with lower credit scores but strong potential for repaying the loan. This could be due to unconscious biases against certain demographics or career paths that historically have had lower credit scores. Alternatively, developers designing an AI for resume screening might place undue weight on keywords associated with prestigious universities or Fortune 500 companies, if they equate those factors with success. This could lead to overlooking candidates with exceptional skills and experience who didn't attend Ivy League schools or haven't worked at big corporations.

Algorithmic Choices Based on Emotion. The type of AI model chosen can be influenced by emotions in several ways. For instance, if the goal is to create an engaging social media feed, developers seeking high user engagement metrics might choose an algorithm that prioritizes virality over accuracy. This could lead to the spread of misinformation, as content that evokes strong emotions (like anger or fear) is more likely to be shared than factual information. Alternatively, developers might choose an algorithm that personalizes content based on a user's past behavior. While this can be a useful tool for recommending relevant products or articles, it can also lead to the creation of echo chambers, where users are only exposed to information that confirms their existing beliefs.

Transparency

Transparency is just as important. During development, the data engineering teams that pre-tune the models should consider opting for simple models that are easier to understand and interpret, because complex models become black boxes that are very difficult to understand. Developer teams can adopt the techniques described below:

Explainable AI (XAI) techniques. In the past, figuring out how a machine learning model makes decisions was like peering into a dark box. Feature attribution methods are tools that help us see inside. They analyze how individual pieces of data influence the final outcome. It's like opening a window into the model's mind, showing us which data points have the biggest impact on its choices (International Association of Business Analytics Certification, 2023). This newfound knowledge is essential for fixing errors in the model, spotting any unfair biases, and making sure the model thinks the way we want it to.
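One simple form of feature attribution can be sketched as follows. This is an illustrative occlusion-style approach (replace one feature at a time with a baseline value and record how the prediction changes), chosen here for brevity; it is one of several attribution methods and not necessarily the one the cited source has in mind. The linear scorer, its weights, the applicant values, and the baseline are all hypothetical.

```python
def occlusion_attribution(predict, x, baseline):
    """Score each feature by how much the prediction changes when that
    feature alone is replaced by its baseline value."""
    full = predict(x)
    scores = {}
    for name in x:
        perturbed = dict(x)
        perturbed[name] = baseline[name]
        scores[name] = full - predict(perturbed)
    return scores

# Hypothetical stand-in model: a hand-written linear scorer.
WEIGHTS = {"income": 0.5, "debt": -0.25, "age": 0.0}

def predict(x):
    return sum(WEIGHTS[k] * x[k] for k in WEIGHTS)

# Hypothetical applicant and a reference ("typical") applicant as baseline.
applicant = {"income": 8.0, "debt": 4.0, "age": 30.0}
baseline = {"income": 4.0, "debt": 2.0, "age": 40.0}

scores = occlusion_attribution(predict, applicant, baseline)
# Rank features by the size of their influence, regardless of sign.
ranked = sorted(scores, key=lambda k: abs(scores[k]), reverse=True)

print(scores)
print(ranked)
```

A feature the model ignores (here `age`, with weight zero) receives a score of zero, while a feature that should not matter but receives a large score is a signal worth investigating for bias.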

Model documentation. As we build the model, it's important to keep a detailed record of its creation process. This record, like a recipe for the model, should capture the specific methods used (the instructions that tell the model how to learn and make decisions), the kind and characteristics of data used for training (the information the model is fed on to learn about the world), and how well the model achieves its intended goals (measured performance metrics that tell us how good the model is at its job). This thorough documentation is essential (R. Vinuesa, 2020). By having a clear understanding of how the model was created, we can not only identify its strengths and weaknesses (where the model works well and where it struggles), but also make informed decisions for future improvements, such as tweaking the methods, picking better training data, or adjusting the model's settings.
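The three elements of the record described above (methods, training data, and measured performance) can be captured in a small structured object that is stored alongside the model. The sketch below is one possible shape for such a record; the class name `ModelRecord`, its fields, and all example values are assumptions made for illustration.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelRecord:
    name: str
    methods: list          # learning algorithms and key settings
    training_data: dict    # kind and characteristics of the training data
    metrics: dict          # measured performance on the intended task
    known_limitations: list = field(default_factory=list)

    def to_json(self):
        """Serialize the record so it can be versioned with the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

# Hypothetical example values.
record = ModelRecord(
    name="loan-screening-demo",
    methods=["logistic regression", "L2 regularization"],
    training_data={"source": "hypothetical applications table", "rows": 10000},
    metrics={"accuracy": 0.91},
    known_limitations=["not evaluated on applicants under 21"],
)

doc = record.to_json()
print(doc)
```

Keeping the record machine-readable means it can be checked automatically, for example by refusing to deploy a model whose record has no metrics or no stated limitations.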

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072

Explainable user interfaces. For models that directly interact with users, transparency is key. Imagine a recommendation system that suggests a movie but offers no explanation. Users might be confused or distrustful. To bridge this gap, we can equip these models with the ability to explain their decisions. Visualizations like charts or graphs can make the model's thought process more intuitive. Alternatively, plain language summaries can break down the steps leading to the recommendation, revealing which factors held the most weight (International Association of Business Analytics Certification, 2023). This transparency fosters trust and empowers users. They gain a sense of why the model made a particular choice, and can make more informed decisions themselves based on this understanding.
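A plain-language summary of this kind can be generated directly from the factors behind a recommendation. The following sketch assumes the recommender already exposes a weight per factor; the function name `explain_recommendation`, the factor descriptions, and the weights are hypothetical.

```python
def explain_recommendation(title, factor_weights, top_n=2):
    """Build a one-sentence summary naming the most influential factors."""
    top = sorted(factor_weights.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    total = sum(factor_weights.values())
    # Express each factor's weight as a share of the total weight.
    parts = [f"{name} ({weight / total:.0%})" for name, weight in top]
    return f"We suggested '{title}' mainly because of: " + ", ".join(parts) + "."

# Hypothetical factor weights produced by a recommender.
message = explain_recommendation(
    "Example Movie",
    {
        "you watched similar sci-fi titles": 6,
        "highly rated by viewers like you": 3,
        "recently added": 1,
    },
)
print(message)
```

Limiting the summary to the top few factors keeps the explanation readable; the full breakdown can still be offered behind a "why am I seeing this?" control.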

Human oversight. In high-stakes situations, where the model's decisions have real-world consequences, it's crucial to avoid a fully automated approach. Instead, we should favor a human-in-the-loop system. In such a system, a human expert reviews the model's output and ensures it aligns with real-world considerations and ethical principles. This human oversight acts as a safeguard, catching any unexpected biases or errors in the model's reasoning. It ensures that the final decision is made with a well-rounded understanding of the situation, taking into account both the model's insights and human judgment.
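A human-in-the-loop arrangement can be as simple as a routing rule placed in front of the model's output. The sketch below is one possible rule, not a standard: the function name `route_decision`, the confidence threshold of 0.9, and the example cases are assumptions for illustration.

```python
def route_decision(prediction, confidence, high_stakes, threshold=0.9):
    """Decide whether the model's output is final or goes to a human reviewer.

    High-stakes cases always go to a human; other cases go to a human
    only when the model's confidence is below the threshold.
    """
    if high_stakes or confidence < threshold:
        # The model only proposes; the final decision is left open for the reviewer.
        return {"decision": None, "proposed": prediction, "handler": "human_review"}
    return {"decision": prediction, "proposed": prediction, "handler": "automated"}

# Hypothetical cases.
low_conf = route_decision("approve", confidence=0.62, high_stakes=False)
critical = route_decision("deny", confidence=0.99, high_stakes=True)
routine = route_decision("approve", confidence=0.97, high_stakes=False)

for case in (low_conf, critical, routine):
    print(case)
```

Note that the high-stakes case is routed to a reviewer even though the model is very confident, which reflects the point that confidence alone is not a substitute for human judgment.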

From the outset of development, fairness should be a core principle. The model should be built and trained using data that accurately reflects the diversity of the real world. We must also implement safeguards to prevent the model from discriminating against any particular group. Additionally, mechanisms for holding the model accountable for its decisions are essential. This could involve logging the model's outputs and the reasoning behind them, so that any biased or unfair decisions can be identified and corrected. By taking these steps, we can ensure that AI is used responsibly and ethically.
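Logging outputs together with their reasoning might look like the following. This is a minimal in-memory sketch: the class name `DecisionLog`, its methods, and the example entries are hypothetical, and a real system would write to durable, access-controlled storage.

```python
import json
from datetime import datetime, timezone

class DecisionLog:
    """Append-only record of model decisions and the reasons given for them."""

    def __init__(self):
        self._lines = []

    def record(self, inputs, output, reasons):
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "inputs": inputs,
            "output": output,
            "reasons": reasons,
        }
        self._lines.append(json.dumps(entry, sort_keys=True))

    def entries(self):
        return [json.loads(line) for line in self._lines]

    def rate_by(self, key, positive):
        """Share of entries whose output equals `positive`, per value of inputs[key]."""
        totals, hits = {}, {}
        for e in self.entries():
            group = e["inputs"][key]
            totals[group] = totals.get(group, 0) + 1
            hits[group] = hits.get(group, 0) + int(e["output"] == positive)
        return {g: hits[g] / totals[g] for g in totals}

# Hypothetical decisions.
log = DecisionLog()
log.record({"group": "a", "score": 710}, "approve", ["score above cutoff"])
log.record({"group": "a", "score": 640}, "deny", ["score below cutoff"])
log.record({"group": "b", "score": 655}, "deny", ["score below cutoff"])

rates = log.rate_by("group", "approve")
print(rates)
```

Because each entry stores the stated reasons, a reviewer who spots a skewed approval rate can go back to the individual decisions and see what drove them.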

Just like a pilot performing pre-flight checks, a model undergoes rigorous testing before deployment. This testing, conducted in a simulated environment, mimics real-world scenarios and throws various data types at the model. By meticulously observing the model's performance under these conditions, developers can identify potential problems and check that the model delivers accurate and unbiased results when deployed. This dress rehearsal, performed before the model takes center stage, acts as a safety net, helping to ensure it is well prepared to tackle the challenges of its future environment (Laato et al., 2022).

It is essential to conduct both a priori analysis and posterior analysis before the actual deployment of the algorithmic model.

A priori analysis acts as a pre-flight check, performed entirely in theory. It involves examining the algorithm's inner workings, its strengths and limitations, and how it might react to different types of data. By meticulously analyzing the algorithm beforehand, developers can identify potential problems and bottlenecks. This foresight allows them to optimize the model's performance and ensure it's well-equipped to handle the demands of the real world once deployed.

After the theoretical groundwork has been laid with a priori analysis, posterior analysis steps in to provide a real-world picture of the algorithm's performance. This is where the rubber meets the road.

Posterior analysis involves deploying the algorithm in a controlled environment and measuring its actual execution. Key metrics like runtime and memory usage are closely monitored. This analysis reveals how well the algorithm performs in practice, whether it can handle the expected volume of data efficiently, and if it adheres to the theoretical predictions made during a priori analysis. Any discrepancies between the expected and observed performance can indicate areas for improvement or potential bottlenecks that need optimization. Essentially, posterior analysis acts as a final check-up before the algorithm is fully unleashed, ensuring it can handle the demands of real-world use effectively.
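Measuring runtime and memory usage in a controlled setting can be sketched with Python's standard library alone. In this illustration the workload `sum_of_squares` and the helper `measure` are hypothetical; an a priori analysis of the workload predicts time and memory that grow roughly linearly with the input size, and the measurements let us compare that prediction with observed behavior.

```python
import time
import tracemalloc

def measure(func, *args):
    """Run func once and return (result, elapsed_seconds, peak_bytes)."""
    tracemalloc.start()
    start = time.perf_counter()
    result = func(*args)
    elapsed = time.perf_counter() - start
    _, peak = tracemalloc.get_traced_memory()  # returns (current, peak)
    tracemalloc.stop()
    return result, elapsed, peak

def sum_of_squares(n):
    # Deliberately builds a list so that memory use grows with n.
    return sum([i * i for i in range(n)])

for n in (1_000, 10_000, 100_000):
    result, seconds, peak = measure(sum_of_squares, n)
    print(f"n={n:>7}  time={seconds:.6f}s  peak={peak} bytes")
```

Timings from a single run are noisy, so in practice each input size would be measured several times. If the observed growth departs clearly from the predicted trend, that discrepancy is the kind of signal posterior analysis is meant to surface.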

The immense potential of AI adoption for innovation and efficiency is undeniable. However, navigating the ethical considerations that come with such powerful technology is the biggest hurdle. A cookie-cutter approach to data and AI ethics is simply inadequate. The key lies in crafting a program that reflects your company's core values, adheres to industry regulations, and considers the specific risks and opportunities posed by the AI systems you're developing. This custom-built approach ensures the program aligns with your specific needs for sustainable success in the ever-evolving AI landscape. In the following section, we'll explore several measures to cultivate a strong ethical foundation during AI development and adoption.

The secret sauce to a successful data and AI ethics program lies in harnessing the power of existing oversight structures (Blackman, 2020). Data governance boards, already tackling data privacy, security, and compliance, are prime candidates to spearhead this initiative. These boards offer a winning combination of benefits:

A. Unifying Voices: They create a channel for concerns from product owners and managers on the ground to be heard.

B. Executive Alignment: Their involvement ensures the ethics program seamlessly integrates with the broader data and AI strategy set by executives.

C. Proactive Risk Management: Since C-suite leaders ultimately own brand reputation and legal risks, having them involved keeps them informed of critical ethical issues.

Building a strong data and AI ethics framework requires industry-specific customization. This framework should establish your company's ethical principles, identify stakeholders, and create a dedicated oversight body. It should also be adaptable to changing circumstances and utilize KPIs to measure effectiveness (Blackman, 2020). Finally, the framework needs to address industry-specific challenges, such as data privacy in healthcare or bias mitigation in retail recommendation engines. By tailoring these elements to your industry, you can ensure responsible AI development and use within your company.

General data and AI ethics frameworks are a good starting point, but product-specific guidance is key. Let's take explainability, a common challenge in machine learning. Traditional models are often complex and hard to understand. By providing customized tools and metrics, companies can empower product managers to make informed decisions during AI development. This ensures products are both ethical and effective.

Building a thriving data and AI ethics culture requires a two-pronged approach. First, empower employees by providing them with the knowledge and resources to ask critical questions about data practices and AI development. Create a safe space for them to voice concerns and engage in open discussions with appropriate decision-makers. Second, demonstrate genuine commitment by clearly articulating why data and AI ethics are critical to the organization's long-term success, not just a passing PR effort. Move beyond pronouncements and translate this commitment into tangible actions. Financial incentives also play a crucial role. Numerous infamous examples have shown us that ethical standards can erode when people are financially rewarded for prioritizing short-term gains over responsible practices. Conversely, failing to recognize and reward ethical behavior can lead to its prioritization falling by the wayside. A company's values are ultimately reflected in how it allocates resources. When employees see a dedicated budget for scaling and maintaining a strong data and AI ethics program, it sends a clear message that building trust and responsible AI is a core value, not an afterthought.

Building awareness and involving ethics committees, product managers, engineers, and data collectors is crucial throughout development and, ideally, even during procurement. However, limited resources and an underestimation of potential risks necessitate ongoing monitoring of deployed data and AI products.

Think of it like car safety. Airbags and crumple zones are essential, but they don't guarantee safety at reckless speeds. Similarly, ethical development doesn't guarantee ethical use. We need both quantitative and qualitative research, including stakeholder feedback, to assess real-world impacts. Ideally, relevant stakeholders are identified early on and involved in defining the product's capabilities and limitations.

The transformative potential of artificial intelligence (AI) is undeniable. AI has the power to revolutionize industries, optimize processes, and generate new insights. However, alongside its immense benefits lie significant ethical concerns. As AI algorithms become more complex and embedded in our daily lives, ensuring their responsible development and deployment is paramount. This requires a collaborative effort from various stakeholders, including corporations, policymakers, and individuals.

In conclusion, AI presents a powerful force for shaping the future. To ensure this future is beneficial for all, a collective effort is necessary to navigate the ethical considerations that accompany AI's development and use. By working together, we can harness the potential of AI for good and ensure this technology serves humanity in a responsible and ethical manner.

References

1. Reid Blackman (2020) Practical Guide to Building Ethical AI, Harvard Business Review

2. Huang C, Zhang Z, Mao B, Yao X (2023) "An Overview of Artificial Intelligence Ethics," in IEEE Transactions on Artificial Intelligence 4(4), 799-819

3. International Association of Business Analytics Certification (2023) The Role of Data Engineering in Ethical AI: Ensuring Fair and Responsible Models

4. IBM (2024) What is a Machine learning Algorithm?

5. R. Vinuesa (2020) The role of artificial intelligence in achieving the sustainable development goals

6. Allen C, Wallach W, Smit I (2006) "Why machine ethics?"

7. Siau K, Wang W (2020) "Artificial intelligence (AI) ethics", J. Database Manage., vol. 31, no. 2, pp. 74-87

8. Mehrabi N, Morstatter F, Saxena N, Lerman K, Galstyan A (2021) "A survey on bias and fairness in machine learning", ACM Comput. Surv., vol. 54, no. 6, pp. 1-35

9. Manda H, Srinivasan S, Rangarao D (2021) IBM Cloud Pak for Data: An enterprise platform to operationalize data, analytics, and AI, Packt Publishing

10. Dayoung K, Qin Z, Hoda E (2023) "Exploring Approaches to Artificial Intelligence Governance: From Ethics to Policy," 2023 IEEE International Symposium on Ethics in Engineering, Science, and Technology (ETHICS), West Lafayette, IN, USA

11. Laato S, Birksted T, Mäntymäki M, Minkkinen M, Mikkonen T (2022) IEEE/ACM 1st International Conference on AI Engineering – Software Engineering for AI (CAIN), Pittsburgh, PA, USA, 2022, pp. 113-123

12. Reynolds O (2022) "Towards Model-Driven Self-Explanation for Autonomous Decision-Making Systems," 2019 ACM/IEEE 22nd International Conference on Model Driven Engineering Languages and Systems Companion

Key Terms and Definitions:

1. Artificial Intelligence (AI): A branch of computer science focused on creating systems capable of performing tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and language translation.

2. Generative AI: A subset of artificial intelligence that uses algorithms to generate new content, including text, audio, and visuals, autonomously, raising ethical concerns like deepfakes and misinformation.

3. Machine Learning (ML): A method of data analysis that automates analytical model building, allowing systems to learn from data, identify patterns, and make decisions with minimal human intervention.

4. Natural Language Understanding (NLU): A component of AI that deals with machine reading comprehension, enabling computers to understand, interpret, and respond to human language in a valuable way.

5. Supervised Learning: A type of machine learning where the model is trained on labeled data, meaning the input comes with the correct output, helping the model learn to predict outcomes from new data.

6. Unsupervised Learning: A type of machine learning that involves training on data without labeled responses, allowing the model to identify patterns and relationships within the data on its own.

7. Explainable AI (XAI): Techniques and methods in AI that make the outputs of machine learning models understandable and interpretable by humans, essential for transparency and trust.

8. Bias in AI: The presence of prejudice in AI systems, often resulting from biased data or algorithms, which can lead to unfair outcomes and reinforce existing inequalities.

9. Data Governance: The overall management of the availability, usability, integrity, and security of data used in an organization, crucial for ensuring that AI systems operate ethically.

10. Human-in-the-Loop (HITL): A model of AI development and deployment where human oversight is integrated into the system, ensuring that AI decisions are aligned with human ethical standards and real-world considerations.

11. Ethics in AI: The study and application of ethical principles to the development and use of AI technologies, aiming to ensure that AI is developed and used responsibly, transparently, and for the benefit of all.

12. Deep Learning: A subset of machine learning involving neural networks with three or more layers, which can model complex patterns in large datasets.

13. Algorithm: A set of well-defined instructions for performing a task or solving a problem, foundational to the functioning of AI models.

Biographies:

Author- Ashwin Tambe

Ashwin Tambe is a customer-centric Management professional with extensive experience in delivering successful services and managing products. He excels at bridging the gap between technology and business needs, ensuring customers get the most out of technology. His expertise lies in creating exceptional customer experiences by enabling end-users with powerful technology products, building efficient platforms, and streamlining processes to boost overall effectiveness and product adoption. Over the past two decades, Ashwin's career has spanned across diverse industries, including manufacturing, FinTech, and retail. His transition from engineer to digital transformation leader showcases his leadership skills, notably in delivering large-scale cloud and artificial intelligence (AI) projects.

Selva Jagannathan is the Head of Applied AI Design and Adoption at Google Cloud. He is an accredited GenAI specialist with 20 years of experience leading digital transformation and technology advisory for Fortune 500 enterprises. His work focuses on designing and building agentic AI systems and applied GenAI solutions on Google Cloud. Selva holds a Masters in Machine Learning and an MBA from the Wisconsin School of Business.

Over the past four years, Nagesh Gulkotwar has driven large-scale AI and data innovation at Google, spearheading initiatives in generative AI integration, risk intelligence, and search monetization strategy. His research centers on LLM-augmented recommender systems, trust-based modeling, and ethical AI evaluation frameworks that enhance transparency and fairness. He has authored and presented work on adaptive LLM reasoning and social-intelligence-driven recommendation design. Beyond his technical contributions, he mentors emerging professionals in AI and data leadership. His recent work unites academic research, enterprise-scale implementation, and the responsible evolution of intelligent systems.

Praveen Baskar received his Bachelor of Technology degree in Information Technology from Anna University. He is currently a Senior Software Engineer at Google, where he has led efforts in designing and deploying high-performance, scalable web components for the Search results page, including next-generation Search AI experiences. His recent work focused on leveraging Large Language Models (LLMs) to automate web component migrations and improve software engineering processes and tools. He is a speaker at The Data Science Conference and actively contributes to academic and engineering communities as a peer reviewer, mentor, and advocate for collaborative innovation and thought leadership.

Sunaina Sridhar is an experienced program and project management professional with a career spanning several major technology companies, including Google, Amazon, Groupon, and HERE. She is currently a Delivery Executive for Google Cloud Consulting in Chicago, where she leads complex, multi-disciplinary engineering projects. Previously at Google, she served as a Senior Program Manager for Search Platforms, helping to build a new team in Chicago and manage strategic initiatives for Search infrastructure.