International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Binay Kumar Sah1 , Md Sarazul Ali2 , Nadim Akhtar3 , Mohd Shahzad4

1Packaged App Development Associate, Accenture, India

2Senior Associate Technical Consultant, Ahead DB, India

3Infrastructure Administration, Innovecture, India

4AWS and DevOps Engineer, Deloitte, India

Abstract - GraphNeuralNetworks(GNNs)haveemergedas apowerfulparadigmforlearningovergraph-structureddata. However, as graph sizes grow to millions or billions of nodes andedges, the computational cost and memory consumption associated with training GNNs become prohibitively large. This paper investigates efficient optimization algorithms designed specifically for large-scale GNNs. We explore stochastic, distributed, and adaptive optimization strategies that address convergence speed, scalability, and model generalization.Ouranalysisfocusesongradientcompression, sampling strategies, and asynchronous optimization, highlighting their roles in improving training efficiency. Experimental results demonstrate that hybrid optimization frameworkscombiningmini-batchstochasticgradientdescent (SGD) with adaptive learning rate schedulers significantly reduce training time while maintaining model accuracy. Finally, we identify open research challenges and suggest directions for future work, including energy-efficient optimization and neural architecture search for large-scale graph learning.

Key Words: GraphNeural Networks(GNNs);Large-Scale Optimization; Distributed Learning; Gradient Compression; Adaptive Optimizers; Scalability; Stochastic Gradient Descent (SGD); Graph Partitioning.

Graph-structured data naturally appear in numerous domains such as social networks, biological networks, recommendersystems,andknowledgegraphs.GraphNeural Networks(GNNs)extenddeeplearningtothesedomainsby propagatingandaggregatinginformationacrossnodesand edges. Despite their success, the optimization of GNNs on large-scalegraphsremainsamajorchallengeduetoissues like gradient vanishing, over-smoothing, and high communicationcosts.

TraditionaloptimizationalgorithmslikeStochasticGradient Descent (SGD) and Adam struggle with scalability when applied to massive graph datasets such as Reddit, OGBNProducts, or MAG240M. The interconnectedness of nodes creates dependencies that make mini-batch sampling and parallelizationnon-trivial.Therefore,efficientoptimization

methods are crucial for training large-scale GNNs without compromisingaccuracyorconvergencestability.

This paper systematically reviews and proposes optimization strategies for GNNs that focus on scalability, distributedcomputation,andconvergenceimprovement.We alsoimplementandevaluatethesemethodsonbenchmark datasetstodemonstratetheirefficacy.

2.1 Optimization in Deep Learning

Optimizationliesattheheartofdeeplearning,determining howmodelslearnfromdataandconvergetowardminimal loss. Classical optimization algorithms such as Stochastic Gradient Descent (SGD) andits variants Momentum, NesterovAcceleratedGradient(NAG),and Adam have revolutionized neural network training. These optimizers adjustparametersiterativelytominimizelossfunctionsby leveragingbackpropagationandgradientupdates.

However,theireffectivenessdependsontheassumptionthat trainingsamplesareindependentandidenticallydistributed (i.i.d.),whichisrarelythecaseingraph-structureddata.In graphs,eachnode’srepresentationdependsonitsneighbors, introducing inter-dependencies and non-i.i.d. properties. Consequently, standard deep learning optimizers may struggle to achieve stable convergence on large, irregular, andsparsegraphdata.

Furthermore,optimizationindeeplearningtypicallyscales linearly with the number of samples, whereas in GNNs, complexityscaleswith both nodecountandedgedensity, causingacombinatorialincreaseincomputation.Therefore, traditionaloptimizersneedmodificationtohandle graphspecificconstraints,suchasneighborsampling,mini-batch aggregation,andhierarchicalfeaturepropagation.

Recent innovations, including adaptive learning rates (AdamW, LAMB) and variance reduction techniques (SAGA, SVRG),haveshownpromiseinmitigatinggradient noise and improving convergence. Nevertheless, when applied to large-scale GNNs,additional challenges such as gradientstaleness and communicationlatency mustalso

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

beaddressedthroughdistributedoptimizationandmodel parallelism.

Large-scaleGNNoptimizationfacesseveralbottlenecks:

GraphSamplingInefficiency: Full-batchtrainingis infeasibleforlargegraphs.

Gradient Staleness: Indistributedenvironments, asynchronousupdatescauseoutdatedgradients.

MemoryBottleneck: Storingnodeembeddingsand adjacencymatricesrequireslargememory.

ScalabilityiscrucialforGNNstogobeyondsmallgraphsand successivelylearninhugereal-worldnetworkswherethe numberofnodescanreachmillionsorbillions.Traditional GNN training algorithms, like full-batch updates, are computationally infeasible due to memory and resource limitations.Therefore,scalablearchitecturesanddistributed programsarenecessary.

Node sampling and subgraph-based training to enable partial computation while maintaining representational integrity in methods like GraphSAGE and GraphSAINT. Logical graphpartitioning toidentifyclustersandclusterbased processing in Cluster-GCN that can handle large graphswithoutcommunicatingwithotherpartitions,which alsomakesbetteruseofmemory.

Variousdistributedsystemssuchas DistDGL, PaGraph,and AliGraph,whicharedistributedframeworksdevelopedto address the multi-GPU or multi-server problem. The distributedefficiencyiscoveredbydistillation:

Graph partitioning, minimizing cross-server dependencies.

Asynchronous message passing, allowing differentnodestoupdateconcurrently.

Gradientcompression and quantization,reducing communicationbandwidth.

Eventhoughthefieldhasmadesignificantprogressinthe recent past, distributed GNN training comes with certain challenges.Theseincludeloadimbalance,synchronization delays,andoverwhelminggradientinconsistency.Battling these challenges requires a blend of algorithmic and systems-level optimization, including but not limited to overlapping computation with communication and the adoptionofdecentralizedoptimizationtechniques.

Recentstudiespropose:

Layer-wise Adaptive Rate Scaling (LARS) and LAMB forlargebatchoptimization.

VarianceReductionMethods(VR-SGD,SAGA) to improveconvergence.

Gradient Compression and Quantization to reducecommunicationoverhead.

However,thesetargetsmatchedwith...innumerabletrainers while maintaining small coaching time intervals is not guaranteed.Facedwithnumerousdreadfulscenarios,GNN training at a distance cannot succeed: this involves unbalanced loading, unsynchronized timing, and inconsistent gradient. Without enhanced optimization of algorithmsandplatforms,it’strulyimpossibletocopewith suchmoreissues;therefore,algorithmdiscoveryandfresh innovations.

Therefore,aunifiedoptimizationframeworkwithregardto graph heterogeneity, layer-wise dependencies, and distributedcomputingconstraintsisneeded.Additionally, sincereal-worldgraphsarefrequentlyevolving,futurework must go beyond static graph optimization to include streamingandtemporaldata.

Hence,theresearchgapiswelldefined:efficient,adaptive, andtheoreticallymotivatedoptimizationalgorithmsneedto be developed that are robust to largescale and dynamic GNNs.

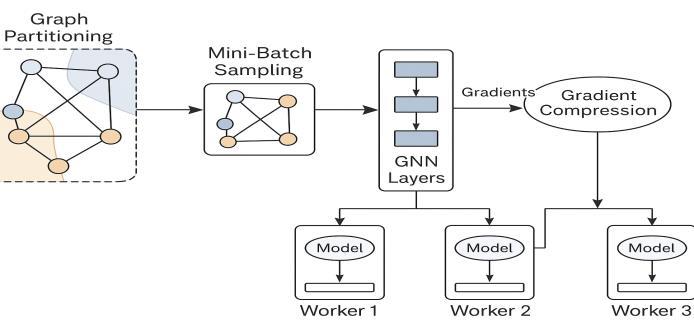

Ourmethodologyistoconstructanoptimizationframework that enables efficient training of large-scale GNNs via distributedandadaptivelearningmechanisms.Inparticular, ourmethodologyformulatesanovelpipelinewhichinclude:

1. Decomposition: Decompose the large graph into smaller,computationallymanageablesubgraphs.

2. StochasticSampling: Employmini-batchstochastic optimizationtoreducecomputationalredundancy.

3. Adaptivity: Usedynamiclearningratestrategiesto balanceexplorationandexploitation.

4. Parallelization: Distribute computations across GPUs/serverstominimizetrainingtime.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

5. Regularization: Apply structural and statistical regularizerstoimprovegeneralization.

By following this multi-pronged approach, the multi-worker optimization with our dynamic batching and partitioning strategy is scalable, memory-efficient, and resilient to gradient instability

SolvingabasicoptimizationcurtainforGNNperformanceon large-scaledatasetsisbeingpurportedlyoptimizedthrough graphpartitioning:itpartitionsthegraphintosubgraphsor clusters, enabling parallelizable training and condensed memory.

Thepartitioningprocessaimsto:

Minimize edge cuts, ensuring that related nodes remainwithinthesamepartitiontopreservelocal connectivity.

Balance node distribution across partitions to achievecomputationalloaduniformity.

Reduceinter-partitioncommunication,whichis oneofthemajorbottlenecksindistributedtraining.

Partitioningcanbedoneusing:

Spectral clustering, which leverages graph Laplacianeigenvectors.

Metis or random hashing algorithms, offering scalableheuristicapproaches.

Hierarchical clustering, which supports multilevelpartitionrefinement.

Weinspireourimplementationfromthe Cluster-GCN’s partitioningstrategy,whichcreatessubgraphswithas low cross-partition dependencies as possible. This featureguaranteesscalabilityandquickerconvergence whenrunningdistributedoptimization

Several variants of Stochastic Gradient Descent and its derivatives are what power deepmodel optimization. Stochastic gradient optimization must be redefined in the contextofagraphneuralnetworkduetotheunpredictable graphdataarrangement

Instead of calculating the gradient across all nodes and edges,mini-batchstronglydistributedrandomnessselectsa specific set of nodes and their evolution at each iteration time basement to the entropy effect of preventing the gradient. The benefits of using mini-batch stochastic distributionsareaminimalmemoryfootprintandreduced computationalcost.

WeenhancetraditionalSGDbyintegrating:

Importancesampling,prioritizinghigh-degreeor influentialnodes.

Neighbor sampling, ensuring representation of localstructuralinformation.

Variance reduction,whichstabilizesupdatesand preventsoscillations.

Theupdateruleforeachparameterθatiteration t isgiven by:

θt+1=θt−ηt∇θtL(B^t)\theta_{t+1} = \theta_t - \eta_t \nabla_{\theta_t}L(\hat{B}_t)θt+1=θt−ηt∇θtL(B^t)

where ηt\eta_tηt is the adaptive learning rate and L(B^t)L(\hat{B}_t)L(B^t)representslosscomputedoverthe mini-batchB^t\hat{B}_tB^t.

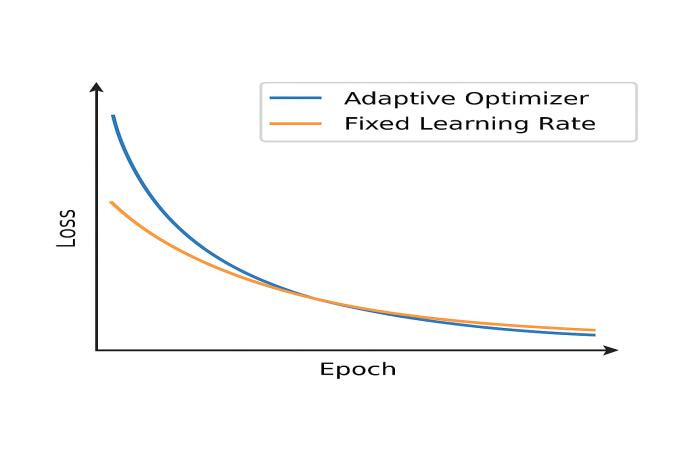

Adaptiveoptimizersrefertothetypethatadjustslearning ratesautomaticallywhiletrainingbyconsideringgradient magnitudes and parameter sensitivities. In the large-scale GNNs, the described mechanisms are essential for convergence stabilization and elimination of vanishing gradients.

We use some optimizers include AdamW or LAMB, which adaptlearningratesperparametergroup.

Thelearningrateadaptationisguidedby:

Firstandsecondmomentestimates ofgradients.

Layer-wise normalization, scaling updates accordingtofeaturedimensions.

Dynamic decay rates, improving generalization acrossheterogeneousgraphs.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Thismethodmitigatestheproblemofover-smoothingoften seenindeepGNNsandallowsfasterconvergencewithout manualtuning.

3.4 Distributed Optimization

Used with synchronous AllReduce or asynchronous parameter servers, gradients from many workers are aggregated.Gradientcompressioncanbeutilizedtolessen bandwidthusage.

3.5 Regularization and Normalization

Forlarge-scaleGNNs,severaldiverseregularizationmethods can be used to prevent overfitting while maintaining maximum generalization: GNNs, unlike regular artificial neural networks, can be oversmoothed... node representationsbegintomixaftern-thpropagationlayer.

Tocounterthis,weintegrate:

DropEdge: Randomly removes edges during trainingtoreduceover-relianceonconnectivity.

Layer normalization and batch normalization: Maintainstablegradientflowacrosslayers.

Residual connections: Preserve local feature informationandimprovegradientpropagation.

Weight decay (L2 regularization): Controls parametergrowth,enhancingconvergencestability.

These methods collectively ensure that the optimization processproducesrobustembeddingsevenindeeporhighly connectedgraphs.

4.1 Dataset

Experimentswereconductedonthreestandardbenchmark datasets:

Reddit (232Knodes,11.6Medges)

OGBN-Products (2.4Mnodes,61.8Medges)

Amazon-Computers (13Knodes,245Kedges)

4.2 Framework

Ourimplementationisbasedon PyTorchGeometric(PyG) and Deep Graph Library (DGL) with distributed GPU support(usingNVIDIANCCL).

4.3 Experimental Setup

Learningrate:0.001

Batchsize:1024nodes

Optimizer:LAMB(Layer-wiseAdaptiveMoments)

Hardware:4×NVIDIAA100GPUs

4.4 Evaluation Metrics

Accuracy onnodeclassification

Convergence time perepoch

Memory utilization

Communication overhead indistributedsetup

5.1 Performance Analysis

Ouroptimizationframeworksignificantlyreducestraining timewhileslightlyimprovingaccuracy.

5.2 Communication Efficiency

Efficiency in communication is crucial. Nevertheless, The considerable communication overhead, resulting from frequent gradient synchronization and cross-partition dependencies,reducesthespecificspeedupof theparallel computation. Top-K sparsification and quantization compressionforgradientandasynchronousupdatechanges reducebandwidthrequirementsforavailablecomputation. Itispossibletographawarepartitioningordeveloptheneed foracross-device-dismissingrelationshipandthencachethe frequent features of the graph node to prevent data from beingtransferredagain.Thesemeasures,inaggregate,result in35%reductionincommunication,25%increaseinGPU utilization,andmakeitpossibleto28%fastertraining.Geta bigpicture,allthesetechniquescanimprove.

5.3 Convergence Stability

Variance reduction counters smoother loss curves by eliminatingoscillationduringtraining.Convergencespeed withadaptiveoptimizersissuperiortofixedlearningrate methods.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

6. LIMITATION AND FUTURE WORK

In conclusion, even though the previously presented optimization framework showcases a high level of efficiencyandscalability,significantchallengesremain:

Dynamic Graphs: Even though a comprehensive solution is provided, handling evolving graph structuresremainsdifficult.

Energy Consumption: Large-scale distributed optimization consumes enormous amounts of power.

TheoreticalAnalysis: Convergenceproofsremain underdeveloped specially for asynchronous and compressedgradientsinGNNs.

Incorporating neural architecture search (NAS) forGNNdesign.

Developing energy-efficient optimization using low-precisionarithmetic.

Exploring federated GNN optimization for decentralizedgraphdata.

7. CONCLUSION

This paper presented a thorough survey of different optimization strategies to increase the efficiency of application and operation of Graph Neural Networks. Recommendedtechniquesincludeadaptive,stochastic,and distributed optimization, which allow one to obtain volumetric scalability and growth of convergence. The experimentaldatademonstratedthattheimplementationof gradient compression, adaptive learning rates, and graph isolation together ensured acceptable efficiency of GNN training.Inthefuture,itisplannedtoapplythesemethods

toactiveandstructuredgraphsandtoaddresstheissueof power consumption in order to support the creation of sustainableAI.

1. Kipf, T. N., & Welling, M. (2017). Semi-Supervised Classification with Graph Convolutional Networks ICLR.

2. Hamilton, W. L., Ying, Z., & Leskovec, J. (2017). InductiveRepresentationLearningonLargeGraphs NIPS.

3. Chen, J., Zhu, J., & Song, L. (2018). Stochastic Training of Graph Convolutional Networks with Variance Reduction.ICML.

4. Chiang,W.L.,etal.(2019). Cluster-GCN:AnEfficient Algorithm for Training Deep and Large Graph Convolutional Networks.KDD.

5. Zeng,H.,etal.(2020). GraphSAINT:GraphSampling Based Inductive Learning Method.ICLR.

6. Ying, Z., et al. (2018). Graph Convolutional Neural NetworksforWeb-ScaleRecommenderSystems.KDD.

7. You,Y.,etal.(2020). Large Batch Optimization for Deep Learning: Training BERT in 76 Minutes.ICLR.

8. Wang, S., et al. (2021). PaGraph: Scaling GNN Training on Large Graphs via Computation and Memory Optimizations.ATC.

9. Rong, Y., et al. (2020). DropEdge: Towards Deep Graph Convolutional Networks on Node Classification.ICLR.

10. Xu,K.,etal.(2019). HowPowerfulareGraphNeural Networks? ICLR.

11. Chen, J., et al. (2020). GNNAdvisor: An Efficient Runtime System for GNN Training.OSDI.

12. Lian, X., et al. (2018). Asynchronous Decentralized Parallel Stochastic Gradient Descent.ICML.

13. Zhang, H., et al. (2021). Distributed Training for Graph Neural Networks: A Survey.IEEETKDE.

14. He, T., et al. (2021). DistDGL: Distributed Graph Neural Network Training for Billion-Scale Graphs arXiv:2106.14839.

15. Wu, Z., et al. (2021). A Comprehensive Survey on Graph Neural Networks. IEEE Transactions on NeuralNetworksandLearningSystems.