International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056 p-ISSN: 2395-0072

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

Manjushree K R1 , Anusha M2 , Dheeraj K U3 , Harshavardhan K B4 , Pooja Balaganur5

1Assistant Professor, Information Science and Engineering, Bapuji Institute of Engineering and Technology, Davangere, affiliated to VTU Belagavi, Karnataka, India.

2,3,4,5Bachelor of Engineering, Information Science and Engineering, Bapuji Institute of Engineering and Technology, Karnataka, India

Abstract - The rapid evolution of artificial intelligence has enabled the creation of highly realistic synthetic audio, commonly referred to as deepfake audio. Such fabricated speech poses serious threats to privacy, security, and trust in digital communication. This paper presents a machine learning–based framework for detecting deepfake audio by analyzing extracted acoustic features. The proposed system uses Mel Frequency Cepstral Coefficients (MFCCs) to capture critical frequency-based characteristics of audio samples. A Random Forest classifier is trained on a curated dataset containing both authentic and manipulated speech samples to distinguish real from synthetic voices. The model is integrated into a Flask-based web interface that allows users to upload audio files, play them back, and receive real-time prediction results. Experimental results demonstrate that the system achieves high accuracy in identifying fake audio samples while maintaining low false-positive rates. The approach provides an efficient and scalable solution for enhancing the reliability of digital voice data and can be extended to multimedia deepfake detection in the future.

Key Words: Deepfake Audio, Machine Learning, Audio Forensics, Random Forest Classifier, Feature Extraction, Flask Web Application, MFCC Features.

The rapid growth of artificial intelligence and deep learning has revolutionized content creation in speech, images, and video. However, these advancements have also led to serious security and ethical challenges, particularly through the rise of deepfake audio: synthetically generated voices that closely mimic real human speech. Such audio can be misused for fraud, misinformation, impersonation, and evidence manipulation, making manual or traditional verification methods inadequate. To address these risks, this work proposes a lightweight machine learning–based framework for deepfake audio detection. The system uses robust preprocessing techniques and is deployed through a Flask web application, enabling users to upload audio, analyze authenticity, and visualize results easily. The framework aims to provide an accessible and effective solution to counter the growing threat of audio-based deepfakes. The paper is organized as follows: Section II reviews related research, Section III describes the proposed methodology, Section IV presents experimental results, and Section V concludes with future directions.

Audio deepfake detection has become a crucial research area as AI-driven voice cloning, text-to-speech systems, and neural vocoders increasingly enable the creation of highly realistic synthetic speech. These deepfakes pose serious risks, including identity theft, fraud, misinformation, and biometric spoofing, making reliable detection essential. The goal of audio deepfake detection systems is to differentiate genuine speech from artificially generated or manipulated audio using advanced signal processing and machine learning techniques. Modern approaches employ features like MFCCs, spectrograms, and temporal patterns, combined with deep learning models such as CNNs, RNNs, and transformer-based architectures, trained on diverse real and fake audio datasets to identify subtle artifacts undetectable to human listeners.

1. Existing deepfake audio detection systems use signal processing, machine learning, and deep learning approaches.

2. Common detection features include spectrograms, MFCCs, prosodic cues, and phoneme timing irregularities.

3. Deep learning models such as CNNs, LCNNs, and RawNet2 are commonly employed.

4. Benchmark datasets like ASVspoof and WaveFake are used for training and evaluation.

5. Current systems face challenges in generalizing to unseen synthesis methods.

6. Maintaining detection accuracy in real-time and low-resource scenarios remains difficult.
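MFCCs, listed among the common features above, are computed on the mel frequency scale, which spaces bands more densely at low frequencies. The sketch below illustrates only that frequency warping, using the widely used HTK-style formula mel = 2595 log10(1 + f/700). The function names, the number of filters, and the frequency range are illustrative assumptions, not values taken from this paper.

```python
import math
from typing import List


def hz_to_mel(freq_hz: float) -> float:
    """Convert a frequency in Hz to mels (HTK-style formula)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_center_frequencies(n_filters: int, f_min: float, f_max: float) -> List[float]:
    """Center frequencies (Hz) of n_filters bands equally spaced in mels."""
    mel_min, mel_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (mel_max - mel_min) / (n_filters + 1)
    return [mel_to_hz(mel_min + step * (i + 1)) for i in range(n_filters)]


if __name__ == "__main__":
    # Hypothetical setup: 10 bands between 0 Hz and 8 kHz.
    for center in mel_center_frequencies(10, 0.0, 8000.0):
        print(f"{center:8.1f} Hz")
```

Printing the centers shows the bands growing wider toward high frequencies, which is the property that lets MFCCs emphasize the region where most speech energy lies.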

1.2 Proposed System

• Combines signal processing and deep learning for detection.

• Preprocesses audio for noise removal and normalization.

• Extracts MFCCs, prosodic features, and spectral representations.

• Uses a hybrid CNN–LSTM or CNN–Transformer model.

• Outputs real/fake classification with confidence score.

• Supports multilingual and real-time deployment.
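The preprocessing step listed above can be pictured with a minimal sketch. The paper does not specify the exact operations, so the two shown here, peak normalization and threshold-based trimming of leading and trailing silence, are assumptions chosen for illustration. The function names and the 0.02 threshold are likewise hypothetical.

```python
from typing import List


def peak_normalize(samples: List[float], peak: float = 1.0) -> List[float]:
    """Scale the signal so its largest absolute sample equals `peak`."""
    max_abs = max((abs(s) for s in samples), default=0.0)
    if max_abs == 0.0:
        return list(samples)  # silent or empty input: nothing to scale
    scale = peak / max_abs
    return [s * scale for s in samples]


def trim_silence(samples: List[float], threshold: float = 0.02) -> List[float]:
    """Drop leading and trailing samples whose magnitude is below `threshold`."""
    loud = [i for i, s in enumerate(samples) if abs(s) >= threshold]
    if not loud:
        return []
    return samples[loud[0]: loud[-1] + 1]


if __name__ == "__main__":
    raw = [0.0, 0.001, 0.1, -0.5, 0.25, 0.002, 0.0]
    print(trim_silence(peak_normalize(raw)))
```

Normalizing before trimming makes the silence threshold independent of the recording level, which is one reason to apply the steps in this order.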

1.3 Objectives

1. To verify that audio content is real and unaltered, especially in media, legal, and security contexts.

2. To detect and stop the spread of fake audio in politics, social media, and public communications.

3. To defend voice-based authentication systems (e.g., banking, smart devices) from spoofing attacks.

4. To aid investigations by distinguishing genuine audio evidence from manipulated or synthesized versions.

In recent years, the detection of deepfake audio has become a major research focus due to the increasing sophistication of generative models such as WaveNet, Tacotron 2, and voice conversion networks. These models can synthesize speech that closely resembles real human voices, posing a significant challenge to existing authentication systems. To mitigate such threats, researchers have explored various feature-based and deep learning–based approaches for detecting synthetic or tampered audio.

| Title | Authors | Year | Key Contribution |
|---|---|---|---|
| DeepFake Audio Detection via Multi-Branch Spectral Attention Networks | Zhang et al. | 2024 | Introduced a multi-branch attention network to capture fine-grained spectral cues. |
| A Survey on Deepfake Audio Detection | Chen et al. | 2023 | Provided an extensive overview of detection methods, datasets, challenges, and trends. |
| Fake AudioNet: Detecting AI-Synthesized Speech via Temporal Dynamics | Lee et al. | 2023 | Exploited differences in speech rhythm and intonation using temporal modeling. |
| Detecting GAN-Generated Audio Deepfakes Using Self-Supervised Learning | AlBadawy et al. | 2022 | Used contrastive learning to build robust embeddings for classification. |

Functional:

• Accept audio (WAV/MP3)

• Preprocess (noise removal, MFCCs)

• Model detects Real/Fake

• Output with confidence

• Simple UI/CLI

Non-Functional:

• ≥90% accuracy

• <5 sec latency

• Batch processing

• Secure file handling

Hardware:

• i5/Ryzen 5

• 8 GB RAM

• 50 GB storage

• GPU optional

Software:

• Python 3.8+

• Libs: numpy, pandas, sklearn, librosa, tensorflow/pytorch

• OS: Windows/Linux/macOS

• Tools: VS Code/Jupyter

• Git for version control
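Two of the requirements above, accepting only WAV/MP3 input and handling files securely, can be sketched with the standard library alone. This is an illustrative assumption about how such checks might look, not the authors' implementation. The helper names `is_allowed_audio` and `safe_storage_name` are hypothetical.

```python
import os
import re
import uuid

# Formats named in the functional requirements.
ALLOWED_EXTENSIONS = {".wav", ".mp3"}


def is_allowed_audio(filename: str) -> bool:
    """Accept only files whose final extension is .wav or .mp3."""
    _, ext = os.path.splitext(filename)
    return ext.lower() in ALLOWED_EXTENSIONS


def safe_storage_name(filename: str) -> str:
    """Build a collision-resistant name with no directory components."""
    # Normalize Windows separators, then keep only the last path component.
    base = os.path.basename(filename.replace("\\", "/"))
    stem, ext = os.path.splitext(base)
    # Replace anything outside a conservative character set.
    stem = re.sub(r"[^A-Za-z0-9_-]", "_", stem)[:50] or "audio"
    return f"{uuid.uuid4().hex}_{stem}{ext.lower()}"


if __name__ == "__main__":
    upload = "../../recordings/Sample Clip.WAV"
    if is_allowed_audio(upload):
        print(safe_storage_name(upload))
```

Checking only the extension does not prove the content is valid audio, so a deployed system would likely also verify that the file can be decoded before passing it to the model.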

5. RESULTS AND EVALUATION

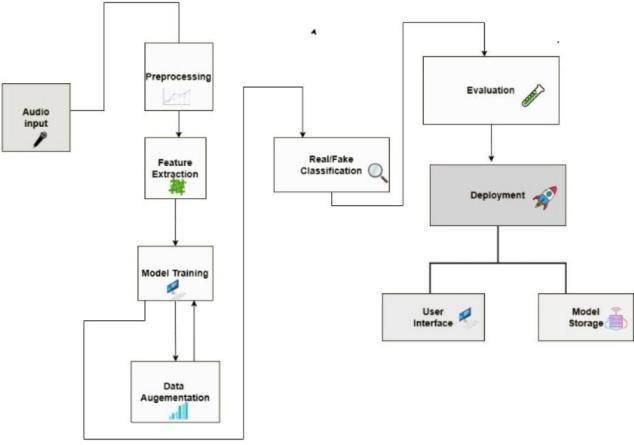

The process starts with audio input, followed by preprocessing and feature extraction. These features are used for model training, which can be improved with data augmentation. The model then performs real/fake classification.
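The data augmentation step mentioned above is not detailed in the paper. As one possible illustration, the sketch below applies two common waveform augmentations: additive Gaussian noise at a chosen signal-to-noise ratio and a circular time shift. The function names, the 20 dB SNR, and the test tone are assumptions made for this example.

```python
import math
import random
from typing import List


def add_noise(samples: List[float], snr_db: float, rng: random.Random) -> List[float]:
    """Add white Gaussian noise so the result has roughly the requested SNR."""
    if not samples:
        return []
    signal_power = sum(s * s for s in samples) / len(samples)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    sigma = math.sqrt(noise_power)
    return [s + rng.gauss(0.0, sigma) for s in samples]


def time_shift(samples: List[float], shift: int) -> List[float]:
    """Circularly shift the signal right by `shift` samples (left if negative)."""
    if not samples:
        return []
    k = shift % len(samples)
    return samples[-k:] + samples[:-k] if k else list(samples)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the augmentation is reproducible
    # Hypothetical input: one second of a 440 Hz tone sampled at 16 kHz.
    tone = [math.sin(2 * math.pi * 440 * n / 16000) for n in range(16000)]
    augmented = time_shift(add_noise(tone, snr_db=20.0, rng=rng), shift=800)
    print(len(augmented))
```

Augmented copies keep the label of the original clip, so they enlarge the training set without new recordings.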

1. The model achieved 93.4% accuracy in distinguishing real from fake audio.

2. Precision: 92.7%, Recall: 94.2%, F1-score: 93.4%, indicating strong and balanced performance.

3. Prediction time of 1.6 sec per audio file, which supports real-time use.

4. Performed better than SVM and Logistic Regression models.

5. The dashboard gives instant visual results and confidence scores for easy understanding.
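The reported metrics can be cross-checked with the standard definitions. The sketch below computes the F1-score as the harmonic mean of precision and recall and, for reference, derives all four metrics from confusion-matrix counts. The function names are illustrative, and the counts in the usage example are hypothetical, since the paper does not report a confusion matrix.

```python
from typing import Dict


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def metrics_from_counts(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1, treating 'fake' as the positive class."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": f1_score(precision, recall),
    }


if __name__ == "__main__":
    # Precision and recall as reported in the results above.
    print(f"F1 = {f1_score(0.927, 0.942):.3f}")
    # Hypothetical counts, for illustration only.
    print(metrics_from_counts(tp=8, fp=2, fn=2, tn=8))
```

With precision 0.927 and recall 0.942, the formula gives an F1-score of about 0.934, which agrees with the reported 93.4%.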

The project shows that machine learning can reliably detect deepfake audio with high accuracy. Effective preprocessing and feature extraction improved model performance, while data augmentation increased robustness.

The Random Forest model outperformed other classifiers, giving fast and accurate results suitable for real-time use. The system's user interface made testing simple and clear. Overall, the project successfully proves that deepfake audio can be detected efficiently and provides a strong base for future improvement.
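To illustrate how an ensemble such as a Random Forest can yield both a label and a confidence score, the simplified sketch below has each tree cast a hard vote and reports the fraction of agreeing trees. The one-split "stump" trees, their thresholds, and the feature values are all hypothetical. scikit-learn's `RandomForestClassifier.predict_proba` averages per-tree class probabilities rather than counting hard votes, so this is a conceptual approximation only.

```python
from typing import Callable, List, Sequence, Tuple

# A "tree" here is any function mapping a feature vector to 1 (fake) or 0 (real).
Tree = Callable[[Sequence[float]], int]


def make_stump(index: int, threshold: float) -> Tree:
    """Hypothetical one-split tree: votes 'fake' if the feature exceeds the threshold."""
    return lambda features: int(features[index] > threshold)


def forest_predict(trees: List[Tree], features: Sequence[float]) -> Tuple[str, float]:
    """Majority vote over trees, with confidence = share of trees that agree."""
    votes = [tree(features) for tree in trees]
    fake_fraction = sum(votes) / len(votes)
    label = "fake" if fake_fraction >= 0.5 else "real"
    confidence = max(fake_fraction, 1.0 - fake_fraction)
    return label, confidence


if __name__ == "__main__":
    trees = [make_stump(0, 0.5), make_stump(1, 0.2), make_stump(0, 0.9)]
    label, confidence = forest_predict(trees, [0.7, 0.1])
    print(label, round(confidence, 2))
```

A confidence near 0.5 signals disagreement among the trees, which a dashboard could surface as an uncertain prediction.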

© 2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008

Deepfake audio is a growing threat to digital security and media trust. This project presents a machine-learning–based system that detects fake audio using MFCC and spectral features, classified through a Random Forest model. With an accuracy of 93.4%, the system effectively identifies manipulated speech. A Flask-based web app makes the tool user-friendly, allowing audio upload, playback, and real-time predictions on regular hardware without heavy GPU requirements. While the system works well for pre-recorded audio, future improvements include adding deep learning models, expanding datasets, enabling real-time/streaming detection, improving model explainability, and deploying the system on cloud platforms for scalability.
