Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056 p-ISSN: 2395-0072

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056 p-ISSN: 2395-0072

v1 , Amsa S2

1 PG student, Department Of Computer Applications, Jaya College of Arts and Science, Thiruninravur, Tamilnadu, India

2 Assistant Professor, Department Of Computer Applications, Jaya College Of Arts and Science , Thiruninravur, Tamilnadu, India

Abstract - Time series analysis has become increasingly critical across domains such as finance, healthcare, meteorology,manufacturing,andsmartcities,whereaccurate forecasting and pattern recognition support data-driven decision-making. Traditional statistical approaches, while effective for linear and stationary data, often struggle to modelthecomplex,nonlinear,andhigh-dimensionalpatterns foundinmoderntime-dependentdatasets.Deeplearninghas emergedasapowerfulsolution,offeringadvancedcapabilities for feature extraction, long-term dependency modeling, anomalydetection,andmultivariateforecasting.Techniques suchasRecurrentNeuralNetworks(RNNs),LongShort-Term Memory (LSTM) networks, Gated Recurrent Units (GRUs), Convolutional Neural Networks (CNNs), and Transformerbased architectures have significantly enhanced predictive accuracyandscalability.Thispaperprovidesacomprehensive overviewofdeeplearningtechniquesfortimeseriesanalysis, highlighting their methodologies, applications, advantages, and challenges. The review emphasizes the growing shift toward hybrid models, attention mechanisms, and representation learning, which continue to push the boundaries of forecasting performance. Finally, the paper outlines future research directions, including improved interpretability,data-efficientlearning,androbustmodelsfor real-worldenvironments

Key Words: Deep Learning, Time Series Analysis, LSTM, RNN,GRU,CNN,TransformerModel,AttentionMechanism, Sequence Modeling, Forecasting, Anomaly Detection, Multivariate Time Series, Feature Extraction, Prediction Models,TemporalData.

Time series analysis plays a crucial role in understanding and forecasting sequential data generated over time from domains such as finance, healthcare, climate science, manufacturing, and energy systems. Traditional statistical methods includingARIMA,Holt–Winters,andexponential smoothing have long been used for modeling temporal patterns,butthesemethodsoftenstruggletocapturenonlinear relationships, long-range dependencies, and highdimensional features present in modern datasets Deep learning has emerged as a powerful alternative, offering advanced capabilities for learning complex temporal patternsdirectlyfromrawdata.ModelssuchasRecurrent

Neural Networks (RNNs), Long Short-Term Memory networks (LSTMs), Gated Recurrent Units (GRUs), ConvolutionalNeuralNetworks(CNNs),andmorerecently, Transformers and attention-based architectures, have significantly improved predictive performance across varioustime-dependenttasks.Thesearchitecturesexcelat automatically extracting hierarchical features, handling irregulartimeintervals,processingmultivariatesequences, and learning long-term dependencies that traditional approachescannoteasilymodel.

Recent advances in deep learning have significantly transformed time series analysis by enabling models to capturecomplextemporalpatterns,nonlinearrelationships, and long-range dependencies more effectively than traditional statistical methods. Early research focused on RecurrentNeuralNetworks(RNNs),withLongShort-Term Memory (LSTM) and Gated Recurrent Unit (GRU) architectures addressing vanishing gradient issues and improving sequential modeling performance across tasks such as forecasting, classification, and anomaly detection. ConvolutionalNeuralNetworks(CNNs)werelateradapted for1Dtemporaldata,demonstratingstrongcapabilitiesin local pattern extraction and computational efficiency. TemporalConvolutionalNetworks(TCNs)furtherenhanced performance through dilated convolutions and residual connections, offering larger receptive fields and stable training. More recent studies highlight the impact of attentionmechanismsand Transformer-basedarchitectures, which model long-range temporal interactions without recurrence and achieve state-of-the-art results in multivariate and long-horizon forecasting. Additionally, representation learning approaches using autoencoders, variationalmodels,andself-supervisedcontrastivelearning have improved feature extraction, anomaly detection, and dataefficiency.HybridmodelscombiningCNNs,RNNs,and attention layers have been widely explored to leverage complementary strengths. Despite these advancements, challenges remain in interpretability, non-statio research intoefficient,interpretable,andgeneralizabledeeplearning frameworksfortimeseriesanalysis.

The methodology for this study follows a structured process involving data preparation, model development, training, and performance evaluation. Initially, the time series dataset undergoes preprocessing steps such as normalization,missingvaluehandling,noisereduction,and segmentation using a sliding window approach to create supervisedlearningsequences.Theprocesseddataisthen dividedintotraining,validation,andtestingsetsusingtimeawaresplittingtopreserve temporal order.Deeplearning models such as LSTM, GRU, CNN, and Transformer architecturesaredesignedtocapturebothshort-termand long-termdependenciesinthedata.

Theexistingsystemfortimeseriesforecastinglargelyrelies ontraditionalstatisticalmethodssuchasARIMA,SARIMA, andexponential smoothing, whichperform well forlinear and stationary data but struggle with complex, nonlinear, andmultivariatetimeseriespatterns.Thesemodelstypically require manual feature engineering, extensive parameter tuning, and domain expertise to achieve reasonable accuracy. Additionally, traditional methods have limited ability to capture long-range dependencies and often fail when faced with irregularities, noise, missing values, or sudden changes in temporal behavior. As datasets grow larger and more complex, existing systems demonstrate reducedscalabilityandinconsistentperformance,especially inreal-worldapplicationssuchasfinance,healthcare,energy demand,andIoTsensoranalysis.

The proposed system introduces deep learning–based architecturestoovercomethelimitationsoftraditionaltime series forecasting methods. It leverages models such as LSTM, GRU, CNN, and Transformer networks to automaticallylearnhierarchicaltemporalfeaturesandlongterm dependencies without the need for manual feature engineering. The system supports multivariate inputs, capturesnonlinearpatterns,andadaptseffectivelytolarge and complex datasets. By incorporating attention mechanisms, regularization techniques, and optimized hyperparameters,theproposedmodel providesimproved accuracy, robustness, and scalability. It enables reliable forecasting even in the presence of noisy, irregular, or missing data. Further, the system integrates efficient training, validation, and deployment workflows to ensure thatthedeeplearningmodel canbeappliedinreal-world environmentsforhigh-performancetimeseriesanalysis

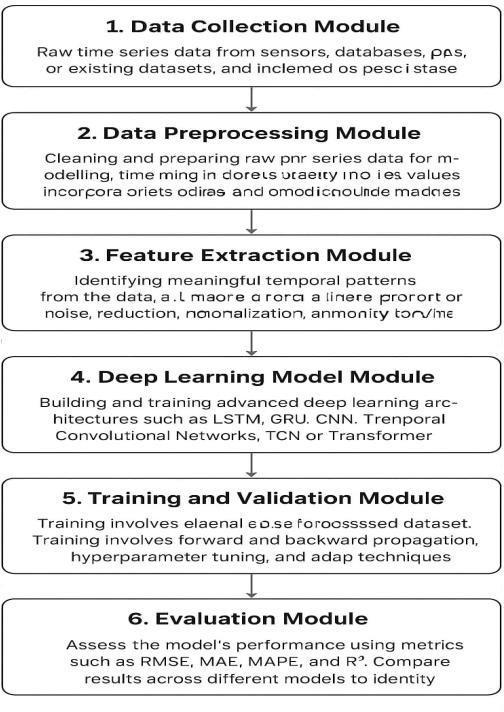

4.1. Data Collection Module

This module is responsible for gathering raw time series datafromvarioussourcessuchassensors,databases,APIs,

2395-0072

or existing datasets. It ensures that the collected data maintainschronologicalorderandincludesrelevantexternal factors such as weather conditions, events, or metadata whenrequiredformultivariateforecasting.

4.2. Data Preprocessing Module

In this module, the raw time series data is cleaned and preparedformodeling.Keystepsincludehandlingmissing values,removingnoise,normalizingvalues,andapplyinga slidingwindowtechniquetoconvertcontinuoussequences intosupervisedlearninginput–outputpairs.Thismodulealso performsdatasplittingintotraining,validation,andtesting sets using time-aware techniques to prevent temporal leakage

4.3.

This module focuses on identifying meaningful temporal patterns from the data. In deep learning–based systems, featureextractionislargelyautomated,butthemodulemay incorporate additional engineered features such as lag variables,time-basedfeatures(hour,day,season),orexternal contextualvariablestoenhancemodelperformance.

4.4. Deep Learning Model Module

This module builds and trains advanced deep learning architectures such as LSTM, GRU, CNN, Temporal ConvolutionalNetworks,orTransformermodels.Itdefines modellayers,activationfunctions,optimizationstrategies, andregularizationmethods.Themodellearnsshort-termand long-termdependenciesandextractshierarchicaltemporal featuresessentialforaccurateforecasting.

4.5. Training and Validation Module

In this module, the selected model is trained using the processeddataset.Traininginvolvesforwardandbackward propagation,hyperparametertuning,andtheapplicationof techniquessuchasdropout,earlystopping,andlearningrate scheduling. Performance is monitored using validation datasetstoavoidoverfittingandensuregeneralization.

4.6.

Thismoduleassessesthemodel'sperformanceusingmetrics suchasRMSE,MAE,MAPE,andR².Itcomparestheresults across different models to identify the best-performing architecture.Visualizationtoolssuchaslosscurves,predicted vs.actualgraphs,anderrordistributionsarealsopartofthis module.

The implementation of the proposed deep learning-based timeseriesanalysissysteminvolvesastructuredworkflow designedtohandlerawsequentialdataandconvertitinto accurateforecasts.Theprocessbeginswithcollectingtimedependent data from relevant sources such as sensors,

International Research

of

Technology (IRJET) e-ISSN: 2395-0056 p-ISSN: 2395-0072 Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

financialrecords,orenvironmentaldatabases.Thecollected dataisthenpreprocessedthroughcleaning,normalization

handling missing values, and splitting into training and testing sets to ensure consistency and quality. After preprocessing, essential temporal features are extracted eithermanuallyorusingautomateddeeplearningfeature extractors.Thecleanedandstructureddataisthenfedinto theselecteddeeplearningarchitecture suchasLSTM,GRU, CNN,orTransformermodels dependingonthecomplexity ofpatternsandsequencelength.Themodelistrainedusing backpropagationandoptimizedthroughtechniquessuchas Adam optimizer, dropout, and early stopping to enhance accuracy while preventing overfitting. Once training is complete,themodelisvalidatedusingperformancemetrics like MAE, RMSE, and accuracy to evaluate its forecasting capability.Finally,thetrainedmodelisdeployedtogenerate real-time or batch forecasts, enabling practical use in applicationssuchastrendprediction,anomalydetection,and decision-making systems. . The model is trained using trainingdatasetswhilevalidationdataisusedto fine-tune parameters,preventoverfitting,andoptimizeperformance. Oncetrainingiscompleted,thesystemgeneratespredictions onunseendata,producingaccurateforecastsandidentifying anomaliesorpatternsdependingontheobjective.Thefinal stage involves evaluating the model using metrics suchas MAE,RMSE,accuracy,orMAPE,followedbydeploymentfor real-timeorbatchforecasting.Thisimplementationensuresa robust,scalable,andefficientworkflowcapableofhandling complex and nonlinear time series data in real-world environments The implementation of the proposed deep learning–basedtimeseriesanalysissystembeginswiththe systematic collection of raw time-dependent data from relevant sources such as sensors, databases, financial platforms,orhealthcaremonitoringsystems.Thecollected dataset undergoes preprocessing steps including noise removal, handling missing values, normalization, and transformation into supervised learning format to ensure cleanandconsistentinputforthemodel.

Figure1.ResultoftheDeepLearningTimeSeriesForecasting Model

Deeplearninghasemergedasapowerfulparadigmfor timeseriesanalysis,offeringsignificantimprovementsover traditionalstatisticalandmachine-learningtechniques.By automatically learning complex temporal dependencies, nonlinear patterns, and hidden structures, deep learning modelssuchasRNNs,LSTMs,GRUs,CNNs,andTransformers havedemonstratedsuperiorperformanceacrossforecasting, classification,anomalydetection,andsequence-to-sequence tasks.Thesemodelseliminatetheneedforextensivemanual featureengineeringandcaneffectivelyhandlelarge,highdimensional, and noisy datasets. Despite its advantages, deep learning also presents challenges, including high computational requirements, large data dependency, difficultyinmodelinterpretability,andtheneedforextensive hyperparametertuning.However,ongoingresearchinhybrid models,attentionmechanisms,explainableAI,andefficient architectures is making deep learning more accessible, interpretable,andadaptable.Overall,deeplearningcontinues to reshape the landscape of time series analysis, enabling more accurate predictions and deeper insights across domainssuchasfinance,healthcare,climatescience,energy, andindustrialmonitoring.Asadvancementscontinue,deep learning-driventimeseriesanalysisisexpectedtobecome evenmorerobust,scalable,andwidelyadoptedinreal-world applications.

International Research

of

and Technology (IRJET) e-ISSN: 2395-0056 p-ISSN: 2395-0072 Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

Thefuturescopeofdeeplearningfortimeseriesanalysisis highly promising, driven by advancements in model architectures,computationalpower,andavailabilityoflargescaletemporaldatasets.Emergingtechniquessuchasselfsupervised learning, foundation models, and hybrid deep learningframeworksareexpectedtosignificantlyimprove forecasting accuracy and generalization across diverse domains. In addition, integrating explainable AI (XAI) will enhancetheinterpretabilityofcomplexmodels,makingthem moresuitableforsensitiveapplicationssuchashealthcare andfinance.Real-timeforecastingsystemspoweredbyedge AI and lightweight neural networks will enable faster, ondevice predictions for IoT and smart city environments. Furthermore,multimodaltimeserieslearning combining numerical data with text, audio, and sensor streams will open new research directions for more comprehensive situationalunderstanding.Finally,futureworkwillfocuson developingmorerobust,noise-tolerant,energy-efficient,and data-efficientmodelscapableofadaptingtodynamic,nonstationary environments encountered in real-world scenarios.

1. Fawaz, H. I., et al. (2019). Deep learning for time series classification: A review. Data Mining and Knowledge Discovery,33(4),917–963.

2.Lim,B.,&Zohren,S.(2021).Time-seriesforecastingwith deeplearning:Asurvey.Philosophical Transactionsofthe RoyalSocietyA,379(2194).

3. Shi, X., et al. (2015). Convolutional LSTM network: A machine learning approach for precipitation nowcasting. Advances in Neural Information Processing Systems (NeurIPS)

4.Hochreiter,S.,&Schmidhuber,J.(1997).Longshort-term memory.NeuralComputation,9(8),1735–1780.

5. Vaswani, A., et al. (2017). Attention is all you need. AdvancesinNeuralInformationProcessingSystems

6.Zhang,G.,EddyPatuwo,B.,&Hu,M.Y.(1998).Forecasting with artificial neural networks: The state of the art. InternationalJournalofForecasting,14(1),35–62

7.Salinas,D.,etal.(2020).DeepAR:Probabilisticforecasting with autoregressive recurrent networks. International JournalofForecasting.

8. Wu, Y., et al. (2021). Autoformer: Decomposition Transformers with auto-correlation for long-term series forecasting.AAAIConference.