International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net p-ISSN: 2395-0072

Durga v1 , Amsa S2

1 PG student, Department Of Computer Applications, Jaya College Of Arts and Science,Thiruninravur, Tamilnadu,India

2 Assistant Professor, Department Of Computer Applications, Jaya College Of Arts and Science , Thiruninravur, Tamilnadu,India

Abstract - Time series analysis has become increasingly critical across domains such as finance, healthcare, meteorology, manufacturing, and smart cities, where accurate forecasting and pattern recognition support data-driven decision-making. Traditional statistical approaches, while effective for linear and stationary data, often struggle to model the complex, nonlinear, and high-dimensional patterns found in modern time-dependent datasets. Deep learning has emerged as a powerful solution, offering advanced capabilities for feature extraction, long-term dependency modeling, anomaly detection, and multivariate forecasting. Techniques such as Recurrent Neural Networks (RNNs), Long Short-Term Memory (LSTM) networks, Gated Recurrent Units (GRUs), Convolutional Neural Networks (CNNs), and Transformer- based architectures have significantly enhanced predictive accuracy and scalability. This paper provides a comprehensive overview of deep learning techniques for time series analysis, highlighting their methodologies, applications, advantages, and challenges. The review emphasizes the growing shift toward hybrid models, attention mechanisms, and representation learning, which continue to push the boundaries of forecasting performance. Finally, the paper outlines future research directions, including improved interpretability, data-efficientlearning,androbustmodelsforreal-world environments

Key Words: Deep Learning, Time Series Analysis, LSTM, RNN, GRU, CNN, Transformer Model, Attention Mechanism, Sequence Modeling, Forecasting, Anomaly Detection, Multivariate Time Series, Feature Extraction, Prediction Models, Temporal Data.

Time series analysis plays a crucial role in understanding and forecasting sequential data generated over time from domainssuchasfinance,healthcare,climatescience,manufacturing,andenergysystems.Traditionalstatisticalmethods including ARIMA, Holt–Winters, and exponential smoothing have long been used for modeling temporal patterns, but these methods often struggle to capture non- linear relationships, long-range dependencies, and high- dimensional featurespresentinmoderndatasetsDeeplearning has emerged as a powerful alternative, offering advanced capabilities for learning complex temporal patterns directly from raw data. Models such as Recurrent Neural Networks (RNNs), Long Short-Term Memory networks (LSTMs), Gated Recurrent Units (GRUs), Convolutional Neural Networks (CNNs), and more recently, Transformers and attention-based architectures, have significantly improved predictive performance across various time-dependent tasks. These architectures excel at automatically extracting hierarchical features, handling irregular time intervals, processing multivariate sequences, and learning long-term dependenciesthattraditionalapproachescannoteasilymodel.

Recent advances in deep learning have significantly transformed time series analysis by enabling models to capture complex temporal patterns, nonlinear relationships, and long-range dependencies more effectively than traditional statisticalmethods.EarlyresearchfocusedonRecurrentNeuralNetworks(RNNs),withLongShort-TermMemory(LSTM) and Gated Recurrent Unit (GRU) architectures addressing vanishing gradient issues and improving sequential modeling performance across tasks such as forecasting, classification, and anomaly detection. Convolutional Neural Networks (CNNs) were later adapted for 1D temporal data, demonstrating strong capabilities in local pattern extraction and computational efficiency. Temporal Convolutional Networks (TCNs) further enhanced performance through dilated convolutions and residual connections, offering larger receptive fields and stable training. More recent studies highlight the impact of attention mechanisms and Transformer-based architectures, which model long-range temporal interactions without recurrence and achieve state-of-the-art results in multivariate and long-horizon forecasting. Additionally, representationlearningapproachesusingautoencoders, variationalmodels,andself-supervisedcontrastivelearninghave improvedfeatureextraction,anomalydetection,anddataefficiency.HybridmodelscombiningCNNs,RNNs,and attention layers have been widely explored to leveragecomplementary strengths. Despite these advancements, challenges remain in interpretability, non-statio research into efficient, interpretable, and generalizable deep learning frameworksfortimeseriesanalysis.

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

The methodologyfor thisstudy followsa structured processinvolvingdata preparation,model development,training, and performance evaluation. Initially, the time series dataset undergoes preprocessing steps such as normalization, missingvaluehandling,noisereduction,andsegmentationusingaslidingwindowapproachtocreatesupervisedlearning sequences. The processed data is then divided into training, validation, and testing sets using time- aware splitting to preservetemporalorder.DeeplearningmodelssuchasLSTM,GRU,CNN,andTransformerarchitecturesaredesignedto capturebothshort-termandlong-termdependenciesinthedata.

TheexistingsystemfortimeseriesforecastinglargelyreliesontraditionalstatisticalmethodssuchasARIMA,SARIMA,and exponential smoothing, which perform well for linear and stationary data but struggle with complex, nonlinear, and multivariate timeseries patterns. Thesemodels typically require manual feature engineering, extensive parameter tuning, and domain expertise to achieve reasonable accuracy. Additionally, traditional methods have limited ability to capture long-range dependencies and often fail when faced with irregularities, noise, missing values, or sudden changes in temporal behavior. As datasets grow larger and more complex, existing systems demonstrate reduced scalability and inconsistent performance, especially in real-world applications such as finance, healthcare, energy demand, and IoT sensor analysis.

The proposed system introduces deep learning–based architecturestoovercomethelimitations oftraditional time series forecasting methods. It leverages models such as LSTM, GRU, CNN, and Transformer networks to automatically learn hierarchicaltemporalfeaturesandlong-termdependencieswithouttheneedformanualfeatureengineering.Thesystem supports multivariate inputs, captures nonlinear patterns, and adapts effectively to large and complex datasets. By incorporating attention mechanisms, regularization techniques, and optimized hyperparameters, the proposed model provides improved accuracy, robustness, and scalability. It enables reliable forecasting even in the presence of noisy, irregular, or missing data. Further, the system integrates efficient training, validation, and deployment workflows to ensure thatthedeeplearningmodelcanbeappliedinreal-worldenvironmentsforhigh-performancetimeseriesanalysis

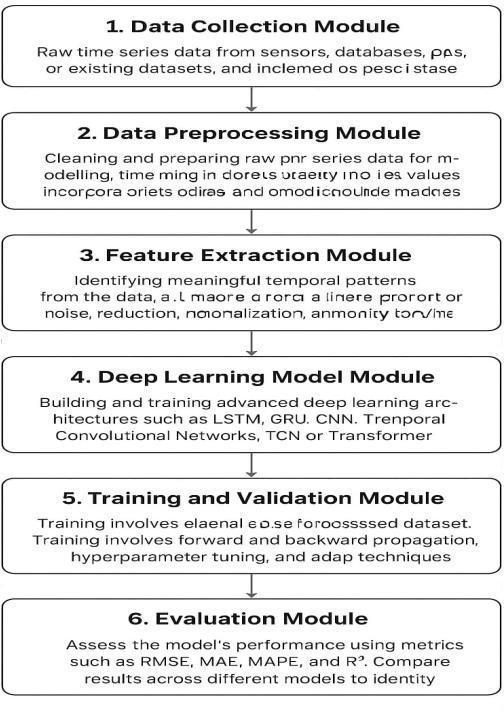

4.1. Data Collection Module

This module is responsible for gathering raw time series data from various sources such as sensors, databases, APIs, or existingdatasets.Itensuresthatthecollecteddatamaintainschronologicalorderandincludesrelevantexternalfactorssuch asweatherconditions,events,ormetadatawhenrequiredformultivariateforecasting.

4.2. Data Preprocessing Module

Inthismodule,therawtimeseriesdataiscleanedandpreparedformodeling.Keystepsincludehandlingmissingvalues, removing noise, normalizing values, and applying a sliding window technique to convert continuous sequences into supervised learning input–output pairs. This module also performs data splitting into training, validation, and testing sets usingtime-awaretechniquestopreventtemporalleakage

4.3. Feature Extraction Module

Thismodulefocusesonidentifyingmeaningfultemporalpatternsfromthedata.Indeeplearning–basedsystems,feature extractionislargelyautomated,butthemodulemayincorporateadditionalengineeredfeaturessuchaslagvariables,timebasedfeatures(hour,day,season),orexternalcontextualvariablestoenhancemodelperformance.

4.4. Deep Learning Model Module

This module builds and trains advanced deep learning architectures such as LSTM, GRU, CNN, Temporal Convolutional Networks,orTransformermodels.Itdefinesmodellayers,activationfunctions,optimizationstrategies, andregularization methods.Themodellearnsshort-termandlong-termdependenciesandextractshierarchicaltemporalfeaturesessentialfor accurateforecasting.

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

In this module, the selected model is trained using the processed dataset. Training involves forward and backward propagation, hyperparameter tuning, and the application of techniques such as dropout, early stopping, and learning rate scheduling.Performanceismonitoredusingvalidationdatasetstoavoidoverfittingandensuregeneralization.

This module assesses the model's performance using metrics such as RMSE, MAE, MAPE, and R². It compares the results across different models to identify the best-performing architecture. Visualization tools such as loss curves, predicted vs. actualgraphs,anderrordistributionsarealsopartofthismodule.

The implementation of the proposed deep learning-based time series analysis system involves a structured workflow designed to handle raw sequential data and convert it into accurate forecasts. The process begins with collecting timedependentdatafromrelevantsourcessuchassensors, financialrecords,orenvironmentaldatabases.Thecollecteddatais thenpreprocessedthroughcleaning,normalization,handlingmissingvalues,andsplittingintotrainingandtestingsetsto ensure consistency and quality. After preprocessing, essential temporal features are extracted either manually or using automated deep learning feature extractors. The cleaned and structured data is then fed into the selected deep learning architecture such as LSTM, GRU, CNN, or Transformer models depending on the complexity of patterns and sequence length. The model is trained using backpropagation and optimized through techniques such as Adam optimizer, dropout, andearlystoppingtoenhanceaccuracywhilepreventingoverfitting.Oncetrainingiscomplete,themodelisvalidatedusing performance metrics like MAE, RMSE, and accuracy to evaluate its forecasting capability. Finally, the trained model is deployedtogenerate real-time or batch forecasts, enabling practical use in applicationssuchas trend prediction,anomaly detection,anddecision-makingsystems..Themodelistrainedusingtrainingdatasetswhilevalidationdataisusedtofinetuneparameters,preventoverfitting,andoptimizeperformance.Oncetrainingiscompleted,thesystemgeneratespredictions onunseendata,producingaccurateforecastsandidentifyinganomaliesorpatternsdependingontheobjective.Thefinalstage involvesevaluatingthemodelusingmetricssuchasMAE,RMSE,accuracy,orMAPE,followedbydeploymentforreal-timeor batchforecasting.Thisimplementationensuresa robust,scalable,andefficient workflowcapable ofhandling complex and nonlinear time series data in real-world environments The implementation of the proposed deep learning–based time series analysis system begins with the systematic collection of raw time-dependent data from relevant sources such as sensors,databases,financialplatforms,orhealthcaremonitoringsystems.Thecollecteddatasetundergoespreprocessing stepsincludingnoiseremoval,handlingmissingvalues,normalization,andtransformationintosupervisedlearningformat toensurecleanandconsistentinputforthemodel.

International Research Journal of Engineering and Technology (IRJET) e-ISSN:

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net

Deep learning has emerged as a powerful paradigm for time series analysis, offering significant improvements over traditional statistical and machine-learning techniques. By automatically learning complex temporal dependencies, nonlinearpatterns,andhiddenstructures,deeplearning modelssuchasRNNs,LSTMs,GRUs,CNNs,andTransformershave demonstrated superior performance across forecasting, classification, anomaly detection, and sequence-to-sequence tasks. These models eliminate the need for extensive manual feature engineering and can effectively handle large, highdimensional, and noisy datasets.Despite its advantages, deep learning also presents challenges, including high computational requirements, large data dependency, difficulty in model interpretability, and the need for extensive hyperparameter tuning. However, ongoing research in hybrid models, attention mechanisms, explainable AI, and efficient architectures is making deep learning more accessible, interpretable, and adaptable. Overall, deep learning continues to reshapethelandscapeoftimeseriesanalysis,enablingmoreaccuratepredictionsanddeeperinsightsacrossdomainssuch asfinance,healthcare,climatescience,energy,andindustrialmonitoring.Asadvancementscontinue,deeplearning-driven timeseriesanalysisisexpectedtobecomeevenmorerobust,scalable,andwidelyadoptedinreal-worldapplications.

The future scope of deep learning for time series analysis is highly promising, driven by advancements in model architectures, computational power, and availability of large- scale temporal datasets. Emerging techniques such as selfsupervised learning, foundation models, and hybrid deep learning frameworks are expected to significantly improve forecastingaccuracyandgeneralizationacrossdiversedomains.Inaddition,integratingexplainableAI(XAI)will enhance theinterpretabilityofcomplexmodels,makingthemmoresuitableforsensitiveapplicationssuchashealthcareandfinance. Real-time forecasting systems powered by edge AI and lightweight neural networks will enable faster, on- device predictions for IoT and smart city environments. Furthermore, multimodal time series learning combining numerical data with text, audio, and sensor streams will open new research directions for more comprehensive situational understanding.Finally, futurework will focus on developingmorerobust,noise-tolerant,energy-efficient,anddata-efficient modelscapableofadaptingtodynamic,non-stationaryenvironmentsencounteredinreal-worldscenarios.

1. Fawaz,H.I.,etal.(2019).Deeplearningfortimeseriesclassification:Areview.DataMiningandKnowledgeDiscovery, 33(4),917–963.

2.Lim, B., & Zohren, S. (2021). Time-series forecasting with deep learning: A survey. Philosophical Transactions of the RoyalSocietyA,379(2194).

3. Shi,X.,etal.(2015).ConvolutionalLSTMnetwork:Amachinelearningapproachforprecipitationnowcasting.Advances inNeuralInformationProcessingSystems(NeurIPS)

4.Hochreiter,S.,&Schmidhuber,J.(1997).Longshort-termmemory.NeuralComputation,9(8),1735–1780.

5. Vaswani,A.,etal.(2017).Attentionisallyouneed.AdvancesinNeuralInformationProcessingSystems

6.Zhang, G., Eddy Patuwo, B., & Hu, M. Y. (1998). Forecasting with artificial neural networks: The state of the art. InternationalJournalofForecasting,14(1),35–62

7.Salinas,D.,etal.(2020).DeepAR:Probabilisticforecastingwithautoregressiverecurrentnetworks.InternationalJournal ofForecasting.

8. Wu,Y.,etal.(2021).Autoformer:DecompositionTransformerswithauto-correlationforlong-termseriesforecasting. AAAIConference.