International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN: 2395-0072


Tejashwini D S¹, Dr. Rashmi C R², Dr. Shantala C P³

¹PG Student, Dept. of Computer Science & Engineering, Channabasaveshwara Institute of Technology, Gubbi, Karnataka, India

²Assistant Professor, Dept. of Computer Science & Engineering, Channabasaveshwara Institute of Technology, Gubbi, Karnataka, India

³Professor & Head, Dept. of Computer Science & Engineering, Channabasaveshwara Institute of Technology, Gubbi, Karnataka, India

Abstract - In today's busy lifestyle, people find it very difficult to track accurately what they eat, especially the number of calories they consume. Manually searching nutritional tables or smartphone apps, the standard approach, is time-consuming and error-prone, and it fails to account for variability in portion sizes. This paper introduces a Food Recognition and Calorie Estimation System based on deep learning that automates the task of recognizing food items in an image and mapping them to nutritional data. It uses the MobileNetV2 convolutional neural network architecture, selected for its lightweight design and processing efficiency, which allow it to be deployed on mobile and embedded platforms.

The methodology includes preprocessing the food images (resizing, normalization, and augmentation) and classifying them with a fine-tuned MobileNetV2 model. The experimental results indicate that the system classifies food items with a high degree of accuracy while remaining efficient enough for real-time use. The paper demonstrates that deep learning and computer vision can bridge the gap between the requirements of dietary monitoring and its practicality, delivering an intelligent, scalable, and usable solution for calorie estimation.

Key Words: Food recognition, calorie estimation, deep learning, convolutional neural networks (CNN), MobileNetV2, computer vision, transfer learning, nutritional database, image classification, mobile health applications, dietary monitoring

1. INTRODUCTION

In today's world, where lifestyles are becoming increasingly hectic and technology-driven, people are more conscious of their health and diet. Healthy nutrition is crucial for preserving overall well-being, preventing disease, and maintaining an active lifestyle. Among the elements of nutrition management, the calorie content of food is one of the most significant. Calories measure the energy provided by food, and monitoring daily calorie intake is a practice required of anyone trying to lose weight, control obesity, design a fitness program, or simply live a healthy life.

Nevertheless, estimating calories manually can be challenging and ineffective. Most people rely on food labels, nutritional charts, or mobile apps in which they manually enter the food items they have eaten. This method is not only time-consuming but also prone to human error, particularly when portions are not of a standard size. For example, the number of calories in a banana can vary not only with the variety of fruit but also with its size and ripeness. Moreover, not everyone knows how to find the correct calorie values or measure portions. This creates a gap between what accurate calorie tracking requires and how convenient it is to do in daily life.

To eliminate these constraints, the proposed project aims to create a smart food-recognition and calorie-counting system that relies on artificial intelligence to automate the task. The system receives a food image as input, recognizes the food item using deep learning methods, and then produces a calorie estimate by mapping the identified food to an existing nutritional database. This saves the user considerable effort and improves the precision of diet tracking.

Computer vision and deep learning are the foundation of this project. In particular, a Convolutional Neural Network (CNN) is applied to identify and label food images. CNNs are a subtype of neural networks widely used for image recognition because they can automatically learn to detect patterns inherent in the data, such as edges, shapes, textures, and colors.
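The edge-detecting behavior mentioned above can be illustrated with a minimal NumPy sketch. It applies one hand-set 3 × 3 Sobel kernel (chosen purely for illustration; in a trained CNN the kernel weights are learned, not fixed) to a tiny synthetic image whose left half is dark and right half is bright. The function name `conv2d_valid` is hypothetical and, like CNN convolution layers, it computes a cross-correlation without flipping the kernel.

```python
import numpy as np

def conv2d_valid(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """'Valid' 2-D cross-correlation, the operation CNN convolution layers compute."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=float)
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the kernel-sized patch at position (i, j)
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Synthetic 5 x 6 grayscale image: dark left half (0), bright right half (1)
image = np.zeros((5, 6))
image[:, 3:] = 1.0

# Sobel kernel that responds to left-to-right increases in intensity
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

response = conv2d_valid(image, sobel_x)
print(response)
```

The response is non-zero only in the columns where the 3 × 3 window straddles the dark-to-bright boundary, which is exactly the vertical edge this filter is tuned to.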

The CNN in this project is built on the MobileNetV2 architecture, a lightweight yet accurate deep learning model. MobileNetV2 is especially suited to real-world use because of its computational efficiency: it can run on mobile devices and embedded systems without heavy hardware requirements. This makes the project accurate, practical, and scalable.


The system is designed to follow the workflow below, organized as a pipeline:

1. Image Input - The user begins by uploading or taking a picture of a food item. This makes the system interactive and easy to use, because no manual entry of food names is needed.

2. Preprocessing - To make the input images consistent, they are resized to a fixed size (224 × 224 pixels) and normalized. This step gives the deep learning model inputs of uniform size and value range, helping it cope with differences in image size, lighting, and quality.

3. Classification (CNN Model) - The trained MobileNetV2 model is run on the preprocessed image and classifies the type of food. For example, given an image of an apple, the model should output "apple" with a high confidence score.

4. Calorie Mapping - Once the food item is identified, the system retrieves the associated calorie value from a nutritional database. The database provides standardized calorie values (per 100 grams or per piece) for each food category. For example, an apple maps to about 52 kcal/100 g and a banana to about 89 kcal/100 g.

5. Result Output - Finally, the system displays the results to the user in a simple, clear format that includes the name of the food and the estimated calories. This information can help users make sound eating choices.
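A minimal sketch of how the five steps could be wired together is shown below in Python with NumPy. It is not the authors' implementation: `stub_classifier` is a hypothetical stand-in for the fine-tuned MobileNetV2 (a trivial color rule, so the example runs without trained weights), the two-class label list is an assumption, and only the apple and banana calorie values come from the text. Resizing to 224 × 224 is assumed to have happened already.

```python
import numpy as np

CLASS_NAMES = ["apple", "banana"]                  # hypothetical label order
KCAL_PER_100G = {"apple": 52.0, "banana": 89.0}    # values quoted in the text

def preprocess(image_uint8: np.ndarray) -> np.ndarray:
    """Step 2: scale 8-bit pixels to [0, 1] (image assumed already 224 x 224)."""
    return image_uint8.astype(np.float32) / 255.0

def stub_classifier(image: np.ndarray) -> np.ndarray:
    """Step 3 placeholder for the fine-tuned MobileNetV2 (hypothetical rule):
    reddish images score as apple, everything else as banana."""
    red, green = image[..., 0].mean(), image[..., 1].mean()
    return np.array([0.9, 0.1]) if red > green else np.array([0.2, 0.8])

def recognize_and_estimate(image_uint8: np.ndarray, classifier=stub_classifier) -> str:
    probs = classifier(preprocess(image_uint8))    # Steps 2-3: preprocess, classify
    idx = int(np.argmax(probs))                    # top-1 predicted class
    food = CLASS_NAMES[idx]
    kcal = KCAL_PER_100G[food]                     # Step 4: database lookup
    # Step 5: human-readable result
    return f"{food} ({probs[idx]:.0%} confidence): ~{kcal:.0f} kcal per 100 g"

red_image = np.zeros((224, 224, 3), dtype=np.uint8)
red_image[..., 0] = 200                            # Step 1 stand-in: mostly red pixels
print(recognize_and_estimate(red_image))
```

Swapping `stub_classifier` for a real model's prediction function leaves the rest of the pipeline unchanged, which is the modularity the pipeline design aims for.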

This project shows how artificial intelligence can make dietary monitoring much easier by automating food recognition and calorie estimation. Compared with conventional processes, the proposed system reduces user effort and lowers the errors introduced by manual typing. Moreover, the model has been trained on a large set of diverse fruit and vegetable images, which allows it to generalize across varying conditions, including changes in camera angle, lighting, and photo background.

Beyond its core purpose, this project has considerable potential for practical applications.

1.1 Problem Statement

In today's busy life, people cannot keep track of what they eat because of time constraints, limited knowledge of nutrition, and the difficulty of calculating calories precisely by hand. Current approaches, such as manually searching for food items in nutritional charts or mobile apps, are time-consuming and prone to inaccuracies when portion sizes differ. This creates a disconnect between what accurate calorie monitoring requires and the convenience of doing it in daily life. Consequently, an intelligent system is needed that can automatically identify food items from an image and report their calorie content, helping users make informed dietary choices.

As a subset of computer-vision-based dietary monitoring systems, food image recognition and calorie estimation have been studied extensively. Early studies focused on building annotated datasets, e.g., Food-101, which provides more than 100,000 images in 101 food classes, and UEC-FOOD100/256, which add bounding-box annotations that can be used for localization tasks. These datasets have served as standard benchmarks for assessing the performance of classification and transfer learning approaches.

Convolutional neural networks (CNNs) have been the leading model architecture for food recognition tasks. Bossard et al. (2014) demonstrated large-scale food classification on Food-101, and subsequent work examined lightweight neural networks that could be deployed on mobile devices. Sandler et al. (2018) introduced MobileNetV2, a practical architecture built from inverted residual blocks and depthwise separable convolutions that balances accuracy against computational cost. This has made MobileNetV2 a popular choice for food recognition on resource-constrained devices.
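The efficiency argument behind depthwise separable convolutions can be checked with simple arithmetic. The sketch below counts the weights (biases ignored) of a standard 3 × 3 convolution and of its depthwise separable replacement for an illustrative layer with 32 input and 64 output channels; these layer sizes are assumptions chosen only for the example. Because both counts are multiplied by the same output height × width to obtain multiply-adds, the ratio also approximates the compute saving.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights of a k x k standard convolution: every filter spans all input channels."""
    return k * k * c_in * c_out

def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """One k x k depthwise filter per input channel, then a 1 x 1 pointwise projection."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise

k, c_in, c_out = 3, 32, 64          # illustrative layer sizes (assumption)
std = standard_conv_params(k, c_in, c_out)
sep = depthwise_separable_params(k, c_in, c_out)
print(std, sep, round(std / sep, 2))  # → 18432 2336 7.89
```

In MobileNetV2 the depthwise convolution additionally sits inside an inverted residual block, between a 1 × 1 expansion layer and a linear 1 × 1 projection, which this simple count does not model.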

Two broad approaches to calorie estimation have emerged: (i) multi-stage pipelines that sequentially detect food items, classify them, estimate portion size, and map them to calorie values using nutritional databases; and (ii) end-to-end multi-task learning systems that predict food types and calorie values simultaneously. Notably, Meyers et al. (2015) introduced Im2Calories, a mobile vision food diary that combines food recognition with nutritional look-up, and Ege and Yanai (2017) demonstrated that multi-task CNNs can estimate food categories and calorie values simultaneously with improved accuracy.
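For contrast with the multi-stage design adopted in this project, a generic multi-task objective of the kind used by end-to-end systems can be written as a weighted sum of a classification loss and a calorie-regression loss. The weight λ and the squared-error regression term below are illustrative assumptions, not necessarily the exact formulation of Ege and Yanai [4]. Here $y_c$ is the one-hot category label over $C$ classes, $\hat{p}_c$ the predicted probability, $k$ the true calorie value, and $\hat{k}$ the predicted one.

```latex
\mathcal{L} \;=\; \underbrace{-\sum_{c=1}^{C} y_c \log \hat{p}_c}_{\text{food category}}
\;+\; \lambda \, \underbrace{\bigl(\hat{k} - k\bigr)^2}_{\text{calories}},
\qquad \lambda \ge 0
```

Setting λ = 0 recovers a pure classifier, which is effectively what a multi-stage pipeline trains before handing the predicted category to a database look-up.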

More recent studies have examined improving calorie estimation through monocular depth estimation, scale estimation from reference objects, and multi-modal learning that combines images with recipe and ingredient text. Datasets such as Recipe1M+ have enabled cross-modal training that relates visual features to recipes and nutritional information. Furthermore, transformer-based architectures and cross-modal embeddings are being explored to achieve better generalization in complex food scenes.

Despite these developments, current systems are still challenged by portion size estimation, the domain shift between curated datasets and real-world images, and occlusion in mixed dishes. The proposed project can alleviate some of these constraints by using MobileNetV2 with transfer learning for efficient classification, a customized nutritional database for calorie mapping, and a scalable design that can be deployed on mobile and embedded devices.

The proposed food recognition and calorie estimation system is structured as a modular pipeline that combines computer vision and deep learning with nutritional databases. The methodology consists of the following steps:

1. Data Collection and Preparation

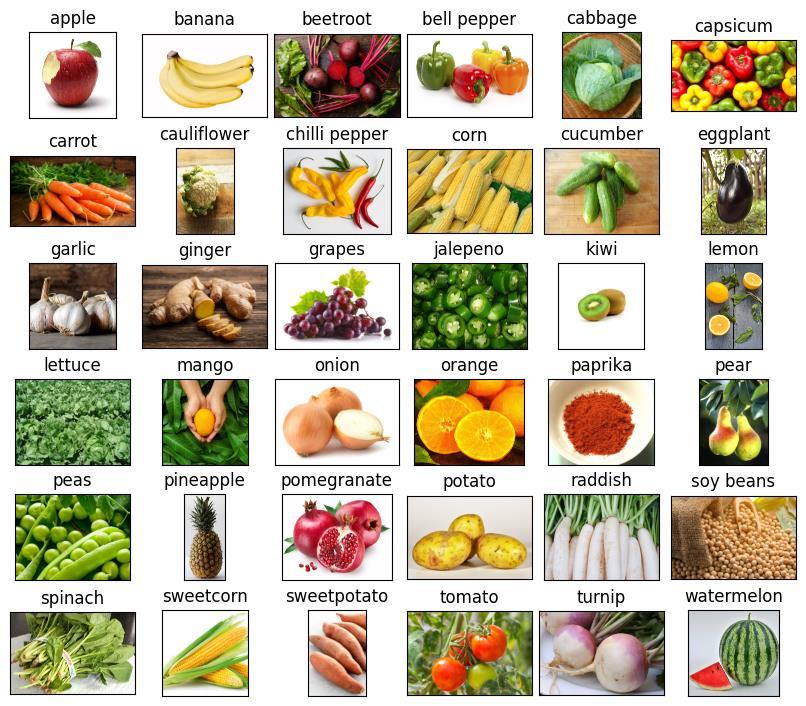

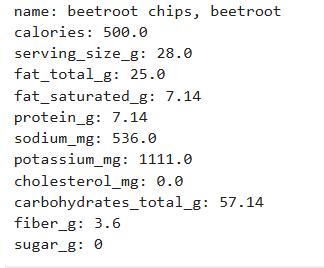

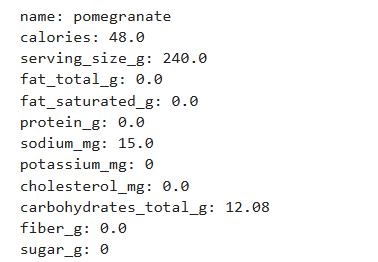

The dataset was built from publicly available food image repositories (Fruits-360 and Kaggle) and manually gathered real-world images. This ensured variation in lighting conditions, backgrounds, and viewpoints. Each image was labeled with its food category, and a nutritional database was organized by mapping every category to its standardized calorie content (per 100 grams) using food databases such as USDA FoodData Central.

2. Image Preprocessing

Resizing to a constant input size of 224 × 224 pixels.

Scaling pixel values to [0, 1] to stabilize training.

Data augmentation (rotation, flipping, zooming, and brightness adjustment) to improve generalization and minimize overfitting.
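The three preprocessing steps can be sketched with NumPy alone, as below. This is an illustrative approximation, not the authors' code: nearest-neighbor interpolation stands in for whatever resizing library was used, rotation is simplified to multiples of 90 degrees, and the zoom (1.0 to 1.2) and brightness (±0.1) ranges are assumptions. All function names are hypothetical.

```python
import numpy as np

TARGET = 224  # input size stated in the text

def resize_nearest(img: np.ndarray, size: int = TARGET) -> np.ndarray:
    """Nearest-neighbor resize of an H x W x C array to size x size."""
    h, w = img.shape[:2]
    rows = np.arange(size) * h // size   # source row for each output row
    cols = np.arange(size) * w // size   # source column for each output column
    return img[rows][:, cols]

def normalize(img_uint8: np.ndarray) -> np.ndarray:
    """Scale 8-bit pixel values to [0, 1]."""
    return img_uint8.astype(np.float32) / 255.0

def augment(img: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Random flip, 90-degree rotation, center zoom and brightness shift.
    Expects a normalized, square image; all ranges are illustrative."""
    if rng.random() < 0.5:
        img = img[:, ::-1]                           # horizontal flip
    img = np.rot90(img, k=int(rng.integers(0, 4)))   # rotate by a multiple of 90 degrees
    zoom = rng.uniform(1.0, 1.2)                     # zoom-in factor
    h, w = img.shape[:2]
    ch, cw = int(h / zoom), int(w / zoom)
    top, left = (h - ch) // 2, (w - cw) // 2
    img = resize_nearest(img[top:top + ch, left:left + cw], size=h)  # crop, resize back
    img = img + rng.uniform(-0.1, 0.1)               # brightness shift
    return np.clip(img, 0.0, 1.0)                    # keep values in [0, 1]

rng = np.random.default_rng(0)
raw = rng.integers(0, 256, size=(480, 640, 3), dtype=np.uint8)  # stand-in photo
x = normalize(resize_nearest(raw))
x_aug = augment(x, rng)
print(x.shape, x_aug.shape)
```

If the Keras MobileNetV2 weights are used, note that `tf.keras.applications.mobilenet_v2.preprocess_input` scales pixels to [-1, 1] rather than [0, 1]; whichever range is chosen, inference must use the same one as training.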

3. Model Development

A Convolutional Neural Network (CNN) with MobileNetV2 as the backbone was selected because its lightweight structure makes it usable on mobile devices. The methodology included:

Transfer Learning: ImageNet-pretrained weights were used to exploit generic visual features.

Fine-tuning: the top layers of MobileNetV2 were retrained on the food dataset to learn task-specific features.

Custom Classification Head: Global Average Pooling (GAP), followed by dense layers and a Softmax classifier, was used to classify the food.
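The forward pass of the custom head can be sketched in NumPy to make the shapes concrete. For a 224 × 224 input, MobileNetV2 without its top layers produces a 7 × 7 × 1280 feature map; here random numbers stand in for it. The hidden layer size (128), the number of food classes (5), and the random weights are assumptions for illustration, and dropout is omitted because it is only active during training. The categorical cross-entropy loss used for training is included for completeness. In a Keras implementation these pieces would typically correspond to `GlobalAveragePooling2D` and `Dense` layers.

```python
import numpy as np

def global_average_pool(features: np.ndarray) -> np.ndarray:
    """Average an (H, W, C) feature map over H and W, giving a (C,) vector."""
    return features.mean(axis=(0, 1))

def dense(x: np.ndarray, W: np.ndarray, b: np.ndarray, relu: bool = False) -> np.ndarray:
    """Fully connected layer, optionally followed by ReLU."""
    z = x @ W + b
    return np.maximum(z, 0.0) if relu else z

def softmax(z: np.ndarray) -> np.ndarray:
    """Convert logits to class probabilities (max subtracted for stability)."""
    e = np.exp(z - z.max())
    return e / e.sum()

def categorical_cross_entropy(probs: np.ndarray, one_hot: np.ndarray, eps: float = 1e-12) -> float:
    """Loss for one sample: -sum(y * log p); eps guards against log(0)."""
    return float(-np.sum(one_hot * np.log(probs + eps)))

rng = np.random.default_rng(42)
num_classes = 5                                  # assumed number of food classes
features = rng.random((7, 7, 1280))              # stand-in for MobileNetV2 output at 224 x 224
W1, b1 = rng.normal(0.0, 0.01, (1280, 128)), np.zeros(128)               # assumed hidden size
W2, b2 = rng.normal(0.0, 0.01, (128, num_classes)), np.zeros(num_classes)

pooled = global_average_pool(features)           # shape (1280,)
hidden = dense(pooled, W1, b1, relu=True)        # shape (128,)
probs = softmax(dense(hidden, W2, b2))           # shape (num_classes,), sums to 1
label = np.eye(num_classes)[2]                   # one-hot target: class index 2 (illustrative)
loss = categorical_cross_entropy(probs, label)
print(probs.round(3), round(loss, 3))
```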

The dataset was divided into training (70 percent), validation (15 percent), and testing (15 percent) sets.

To achieve stable convergence, the Adam optimizer was used with a learning-rate scheduler.

Categorical cross-entropy was used as the loss function for multi-class classification.

Regularization techniques such as dropout and data augmentation were employed to reduce overfitting.

Accuracy, precision, recall, F1-score, and confusion matrices were used to assess overall and per-class performance.
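The data split and the per-class evaluation metrics can be sketched in plain Python as below. The split is a simple shuffled 70/15/15 partition (a stratified split that preserves class proportions would be a reasonable alternative), and the toy labels and predictions are invented purely to exercise the functions; they are not results from this study. Function names are hypothetical.

```python
import random

def split_70_15_15(items, seed=0):
    """Shuffle and split into 70 % train, 15 % validation, 15 % test."""
    items = list(items)
    random.Random(seed).shuffle(items)    # reproducible shuffle
    n = len(items)
    n_train = n * 70 // 100
    n_val = n * 15 // 100
    # Any remainder from integer division goes to the test set
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]

def confusion_matrix(y_true, y_pred, classes):
    """Rows are true classes, columns are predicted classes."""
    index = {c: i for i, c in enumerate(classes)}
    m = [[0] * len(classes) for _ in classes]
    for t, p in zip(y_true, y_pred):
        m[index[t]][index[p]] += 1
    return m

def accuracy(m):
    """Fraction of samples on the diagonal (correct predictions)."""
    total = sum(sum(row) for row in m)
    return sum(m[i][i] for i in range(len(m))) / total

def per_class_metrics(m, classes):
    """Return {class: (precision, recall, F1)} computed from the confusion matrix."""
    results = {}
    for i, c in enumerate(classes):
        tp = m[i][i]
        fp = sum(row[i] for row in m) - tp    # predicted c, actually another class
        fn = sum(m[i]) - tp                   # actually c, predicted another class
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        results[c] = (precision, recall, f1)
    return results

classes = ["apple", "banana"]
y_true = ["apple", "apple", "banana", "banana", "banana"]   # toy labels
y_pred = ["apple", "banana", "banana", "banana", "apple"]   # toy predictions
m = confusion_matrix(y_true, y_pred, classes)
metrics = per_class_metrics(m, classes)
print(m, accuracy(m), metrics)
```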

Once the food type was identified, the system automatically looked up its calorie value in the nutritional database. Calorie values were standardized to 100 g so that they were comparable across foods and could be scaled. Users could further adjust the estimate according to the estimated portion size.
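The portion adjustment described above amounts to linear scaling of the per-100 g value: kcal = kcal_per_100g × grams / 100. A minimal sketch follows; it assumes calorie density is uniform across the portion, uses only the apple and banana values quoted earlier in the text, and the function names are hypothetical.

```python
KCAL_PER_100G = {"apple": 52.0, "banana": 89.0}  # values quoted in the text

def estimate_kcal(food: str, portion_g: float = 100.0) -> float:
    """Scale the per-100 g value linearly to a portion size in grams."""
    if portion_g < 0:
        raise ValueError("portion size must be non-negative")
    return KCAL_PER_100G[food] * portion_g / 100.0

def meal_kcal(items):
    """Total calories for a list of (food, grams) pairs."""
    return sum(estimate_kcal(food, grams) for food, grams in items)

print(estimate_kcal("apple", 150))                   # 150 g apple
print(estimate_kcal("banana", 120))                  # 120 g banana
print(meal_kcal([("apple", 150), ("banana", 120)]))  # both items together
```

Keeping the database in per-100 g units is what makes this scaling a single multiplication, regardless of how the user supplies the portion size.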

6. System Integration and Implementation

Web deployment: the TensorFlow model is served through a Flask/Django backend to make real-time predictions in a browser interface.

Mobile deployment: TensorFlow Lite (TFLite) provides on-device inference, which allows offline use, lower latency, and better privacy.

An interactive, easy-to-use interface was created that allows users to upload or take pictures of food and immediately receive the food name and the estimated calorie value. This makes the system accessible to non-technical users and usable in the real world.


This paper demonstrated how to design and implement an intelligent food recognition and calorie estimation system using deep learning. By using the MobileNetV2 architecture and transfer learning with ImageNet-pretrained weights, the model achieved a balance between accuracy, efficiency, and a lightweight footprint. Customizing the final layers specialized the network for classifying the target food types, while preprocessing strategies and regularization techniques helped minimize overfitting and improve generalization to unseen data.

The system was further enhanced by incorporating a nutrition database, which allowed recognized foods to be mapped automatically to their calorie content. Normalizing the calorie values per 100 grams kept them consistent, so they can be used directly for diet planning, health monitoring, and even nutritional analysis. Real-life examples illustrated the system's practical usefulness for end users.

In terms of deployment, the project also highlighted the modularity of the model by offering it on both web and mobile platforms. Optimized with TensorFlow Lite, the system operated effectively on smartphones, providing offline access and real-time predictions that make it accessible and convenient to users in diverse settings. The pipeline is also scalable because of its modular design: new food types can easily be added, and it can be integrated into larger health and fitness ecosystems in the future.

[1] L. Bossard, M. Guillaumin, and L. Van Gool, "Food-101 – Mining Discriminative Components with Random Forests," in Proc. European Conf. Computer Vision (ECCV), 2014, pp. 446–461. Available: https://data.vision.ee.ethz.ch/cvl/datasets_extra/food101/static/bossard_eccv14_food-101.pdf

[2] M. Sandler, A. Howard, M. Zhu, A. Zhmoginov, and L.-C. Chen, "MobileNetV2: Inverted Residuals and Linear Bottlenecks," arXiv:1801.04381, 2018. Available: https://arxiv.org/abs/1801.04381

[3] A. Meyers, L. Johnston, V. Rathod, A. Korattikara, E. Gagne, M. Tomkins-Blake, et al., "Im2Calories: Towards an Automated Mobile Vision Food Diary," in Proc. IEEE International Conference on Computer Vision (ICCV), 2015, pp. 1233–1241. Available: https://research.google/pubs/archive/44321.pdf

[4] T. Ege and K. Yanai, "Simultaneous Estimation of Food Categories and Calories with Multi-task CNN," in Proc. IAPR International Conference on Machine Vision Applications (MVA), 2017. Available: https://www.mvaorg.jp/Proceedings/2017USB/papers/06-03.pdf

[5] UEC-FOOD256 dataset, Foodcam (UEC), "UEC-FOOD256: 256-kind food dataset (release 1.0)." Available: https://foodcam.mobi/dataset256.html

[6] D. Liu, E. Zuo, D. Wang, L. He, L. Dong, and X. Lu, "Deep Learning in Food Image Recognition: A Comprehensive Review," Applied Sciences, vol. 15, no. 14, Art. no. 7626, 2025. doi: 10.3390/app15147626. Available: https://www.mdpi.com/2076-3417/15/14/7626

[7] X. Chen, Y. He, and K. Yanai, "VIREO Food-172: A Large-scale Dataset for Cross-modal Food Analysis," ACM Multimedia, 2016.

[8] N. Martinel, C. Piciarelli, and C. Micheloni, "A Survey on Food Recognition for Dietary Assessment," Sensors, vol. 19, no. 5, 2019.

[9] M. Hoashi, K. Joutou, and K. Yanai, "Image Recognition of 85 Food Categories by Feature Fusion," ICM, 2010 (PFID dataset).

[10] J. Marin et al., "Recipe1M+: A Dataset for Learning Cross-Modal Embeddings for Cooking Recipes and Food Images," IEEE TPAMI, 2021.

[11] M. Tan and Q. Le, "EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks," ICML, 2019.

[12] K. He, X. Zhang, S. Ren, and J. Sun, "Deep Residual Learning for Image Recognition," CVPR, 2016.

[13] A. Dosovitskiy et al., "An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale," ICLR, 2021.

[14] F. Chen, K. Aizawa, and Y. Nagao, "Cross-modal Transformers for Food Image and Recipe Understanding," ACM Multimedia, 2022.

[15] A. Dehais, S. Anthimopoulos, and S. Mougiakakou, "Food Volume Estimation Using Monocular Vision, Depth and Shape Priors," ICCV Workshops, 2017.

[16] W. Pouladzadeh, S. Shirmohammadi, and R. Al-Maghrabi, "Measuring Calorie and Nutrition from Food Image," IEEE Transactions on Instrumentation and Measurement, vol. 63, no. 8, 2014.

2025, IRJET | Impact Factor value: 8.315 |