International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Mr. Dilkhush Tikale¹,Mr. Sahil Raut², Mr. Aditya Warudkar³, Mr. Aniket Suryawanshi⁴, Mr. Shubham Shile⁵, Dr. Sushma Telrandhe⁶

¹²³´µ¶ Department of Computer Science and Engineering, Guru Nanak Institute of Engineering and Technology, Nagpur, India ***

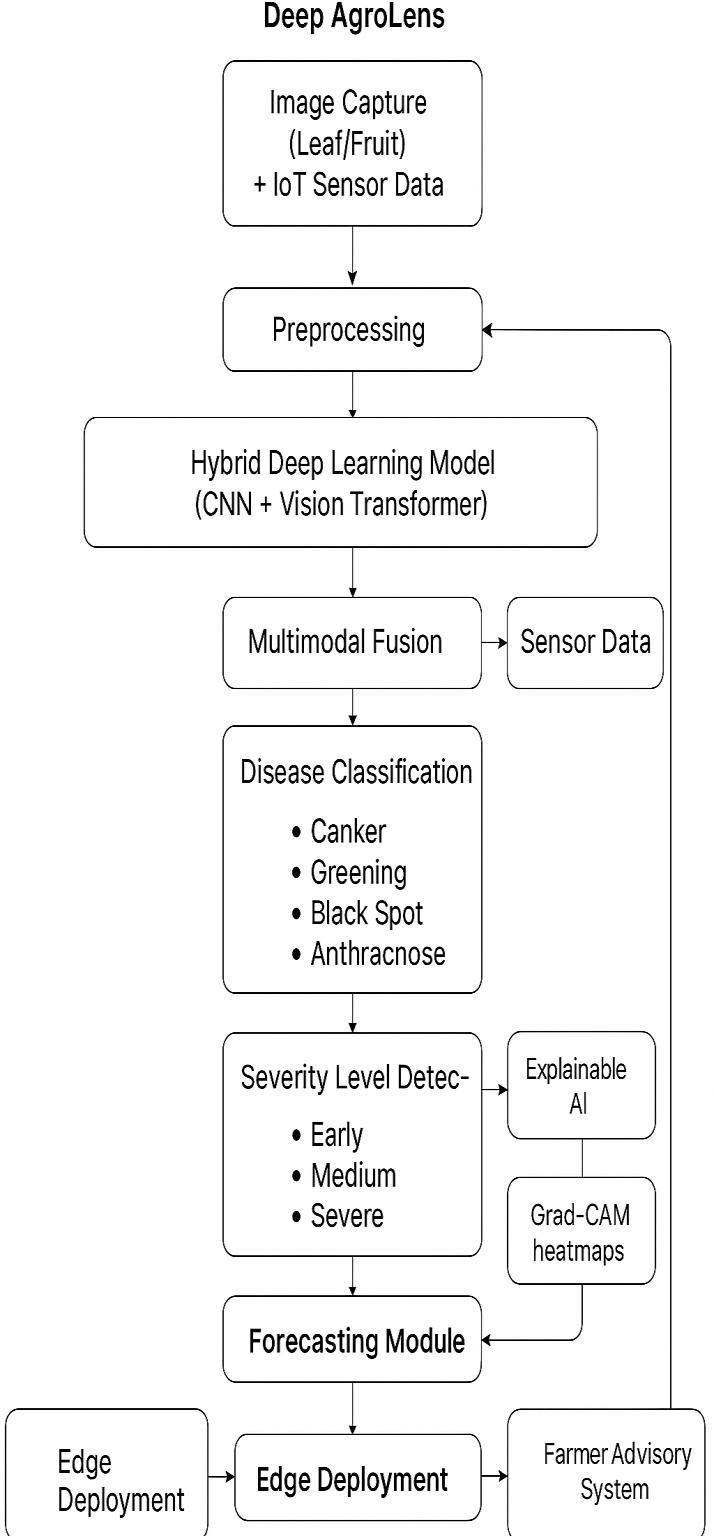

Abstract - Plant diseases severely compromise the productivity and economic value of orange cultivation, often relying on timeconsuming manual diagnosis. To address the limitations of conventional, single-modality methods, we propose Deep AgroLens, a novel Multimodal Edge-AI framework for the early detection and proactive management of citrus diseases. This system integrates multimodal data combining high-resolution visual analysis with crucial environmental sensor inputs (VOC emissions, soil pH, temperature, and humidity). A hybrid deep learning architecture, leveraging a Vision Transformer (ViT) coupled with a CNN, extracts robust features, which are then fused at a decision level to enable accurate detection and precise severity staging (early, medium, severe). Furthermore, a time-series forecasting module uses LSTM networks to predict future disease risk based on environmental factors, shifting management from reactive to proactive. The complete system is optimized for edge deployment using pruning and quantization, ensuring reliable, low-latency, and offline functionality in rural environments. Transparency is achieved via Explainable AI (XAI) using Grad-CAM visualization. Deep AgroLens provides a scalable and sustainable solution, delivering real-time treatment recommendations through a multilingual farmer advisory interface. Key Words: Deep Learning, Edge-AI, Multimodal Fusion, Vision Transformer (ViT), IoT, Orange Disease, Explainable AI (XAI), Precision Farming

Theagriculturalsectorservesasafundamentalpillarofglobaleconomicstabilityandfoodsecurity.Withinthisdomain,citrus cultivation,particularlyoranges,holdssignificanteconomicvalueworldwide.However,orangecropsarehighlyvulnerableto variousdestructivepathogens,includingCitrusCanker,Greening(HLB),Melanose,andBlackSpot.Whenleftunchecked,these diseasescanleadtocatastrophicyieldlosses,impactingfarmerlivelihoodsandentiresupplychains.Theconventionalmethod of disease management relying on manual inspection by agricultural experts is inherently subjective, slow, and nonscalable,resultingindelayeddiagnosisandineffectivecontainmentstrategies[1].TheintegrationofArtificialIntelligence(AI) andtheInternetofThings(IoT)presentsaparadigmshifttowards PrecisionFarming.ExistingAI-baseddiseasedetection systemspredominantlyutilizeimageprocessingandConvolutionalNeuralNetworks(CNNs)toclassifyvisualsymptoms.While effective,thesesingle-modalitysystemssufferfromcruciallimitations:theyarereactive(onlydetectingvisiblesymptoms), theyoftenfailtocapturethesubtleearly-stagechemicalmarkersofinfection(likeVolatileOrganicCompoundsorVOCs),and theytypicallyrequirepowerfulcloud-basedresourcesforoperation[2,3].

This research introduces Deep AgroLens, a novel Multimodal Edge-AI framework designed to provide comprehensive, proactive,andintelligentmanagementoforangeplantdiseases.Thisworkmakesthefollowingsignificantcontributionstothe field:

1. MultimodalDataFusion:Developmentofarobustfusionstrategythatcombinesdeepvisualfeatures(extractedviaa hybridCNN-VisionTransformer(ViT)architecture)withnon-visualenvironmentalandbiochemicaldata(VOC,pH, Temp/Humiditysensors)forsuperiorearly-stagedetection.

2. Proactive Prognosis: Integration of a time-series forecasting module utilizing Long Short-Term Memory (LSTM) networks to predict future disease risk based on historical and real-time environmental trends, enabling true preventiveintervention[4].

3. Edge-AIOptimization:Implementationofmodelcompressiontechniques,specificallypruningandquantization,to ensurethecompletesystemoperateswithhighefficiency,lowlatency,andreliabilityonresource-constrainedEdge devices(e.g.,RaspberryPiormobileplatforms)forofflineuseinruralsettings[5].

4. Interpretability: Inclusion of an Explainable AI (XAI) module, using Grad-CAM, to provide visual evidence of the model'sdecision-makingprocess,therebybuildingfarmertrustandimprovingthesystem'soverallutility.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

The proposed Deep AgroLens framework thus delivers a complete, real-time, and sustainable solution, offering precise diagnosis, prognosis, and actionable advice to farmers, moving beyond simple detection towards intelligent crop health management.

Theliteraturesurroundingintelligentplantdiseasediagnosiscanbebroadlycategorizedintothreeareas:computervision methods, multimodal sensing, and edge deployment strategies. Early research focused primarily on traditional image processingtechniques;however,theshifttoDeepLearning(DL)hasyieldedsignificantadvancements.ConvolutionalNeural Networks(CNNs),suchasVGGNetandResNet,havedemonstratedhighefficacyinclassifyingcitrusdiseasesbasedonleafand fruitimagery[7].Morerecentworkhasexploredadvancedarchitectures,includingtheintegrationofobjectdetectionmodels likeYOLOforreal-timelocalizationofinfectedareas[8].

Despitethehighaccuracyachievedbyvisual-basedDLmodels,severalcriticallimitationspersistintheextantliteraturethat theDeepAgroLensframeworkisdesignedtoaddress:

2.1 Limitations of Current Research Single-Modality Reliance:

Themajorityofsuccessfulpublishedmodelsrelyexclusivelyonvisualdatafordiseaseidentification[9].Thisapproachis inherently reactive, as visible symptoms (such as lesions or discoloration) often appear only after the infection is wellestablished,resultingindelayedinterventionandsignificantcropdamage.Sensordata,particularlychemicalmarkerslike VolatileOrganicCompound(VOC)emissionsandprecisesoilmeasurements(pH,humidity),offertheopportunityforearlystageandasymptomaticdetectionwhichislargelyoverlookedinexistingfusion-basedmodels[10].

2.2Lack of Prognosis and Severity Classification:

Manycontemporarysystemsperformonlybinary(diseased/healthy)ormulti-class(whichdisease?)classification[11].They failtoprovidetwoessentialfunctions:severitystaging(Early,Medium,Severe)forprescriptivetreatment,andprognosis (futureriskforecasting).WhilesomeworkhasutilizedLongShort-TermMemory(LSTM)networksforweatherforecasting, integratingthistime-seriesanalysisdirectlyintothediseasepredictionpipelineforproactivemanagementremainsadistinct researchchallenge[12].

2.3Computational and Interpretability Bottlenecks:

State-of-the-art models likelargeVisionTransformers(ViTs)anddeepCNNensemblesofferhighaccuracy but require substantialcomputationalresourcesandcloudconnectivity,makingthemimpracticalforremote,resourceconstrained farming environments [13].Furthermore,alackofExplainableAI(XAI)features,suchasGrad-CAMvisualizations,hindersfarmer trustandpreventsthescientificvalidationnecessaryforreal-worlddeployment[14].

TheDeepAgroLensframeworkaddressesthesethreecoregapsby proposingaholisticarchitecture:ahybridCNN-ViTfor robustfeatureextraction,multimodalfusionforearlydetection,LSTMforprognosis,andoptimizededgedeploymentcoupled withXAIforfieldutility.

3.PROPOSED METHODOLOGY

TheDeepAgroLensframeworkemploysanovel,five-stage architecture designed for reliabledisease detectionandprognosisattheagriculturaledge.Theintegratedapproachleveragesthestrengthsofdiversetechnologies, ensuring high accuracy, speed, and interpretability. The overall process begins with multimodal data acquisition and culminatesinmultilingualadvisoryoutput.

3.1 Multimodal Data Acquisition and Preprocessing

Thesystemutilizestwoprimarydatastreamsforcomprehensivecrophealthmonitoring:

• VisualData:High-resolutionimagesoforangeleavesandfruitsarecapturedusingfield-deployedcameras.Thisdata targetsthevisiblesymptomsofdiseasessuchasCanker,Greening(HLB),andBlackSpot.

• SensorData:Crucialnon-visualcontextisprovidedbyanIoTsensorarraymeasuringVolatileOrganicCompound (VOC)emissions,ambienttemperature,humidity,andsoilpH.VOCdetectionisvitalforidentifyingbiochemicalstress

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

markersbeforevisualsymptomsbecomeapparent.Preprocessing involves image normalization, augmentation (rotation,zooming, noiseinjection), andapplicationofGAN-basedtechniquestosynthesize data for raredisease classes,therebyensuringabalancedandrobusttrainingset.

3.2 Hybrid CNN-ViT Feature Extraction To overcome the limitations of standard CNNs in capturing global contextual information, a Hybrid Deep Learning Model is implemented for visual feature extraction.

• CNNComponent:AlightweightCNN(e.g.,MobileNetvariant)isutilizedtoefficientlyextractlow-level,localspatial features,suchastexture,lesionshape,andcolorpatterns.

• VisionTransformer(ViT)Component:TheViTisemployedtocapturelong-rangedependenciesandglobalcontext acrosstheentireimage.ThecombinationensuresthemodelbenefitsfromboththelocalpatternrecognitionofCNNs andtheglobalrelationalmappingofTransformers.

3.3 Multimodal Fusion and Classification The core of the novelty lies in the Decision-Level Multimodal Fusion Layer.

• Thehigh-dimensionalfeaturevectorsfromtheHybridCNN-ViTareconcatenatedwiththenormalizednumerical featurevectorderivedfromtheIoTsensordata.

• Thisfusedvectorispassedintoafullyconnectedclassificationhead.Theclassificationheadisresponsiblefordual tasks:DiseaseTypeIdentification(e.g.,Canker,Greening,Healthy)andSeverityStaging(Early,Medium,Severe).The inclusionofsensordataatthisstagesignificantlyenhancesthemodel'sabilitytoclassifyearly-stageinfections.

3.4 Prognosis and Edge Optimization

Theframeworkincorporatestwocriticalcomponentsforpracticalutility:

• PrognosisModule:AseparateLongShortTermMemory(LSTM)networkistrainedonhistoricalenvironmentaland diseaseincidencedata.Thistime-seriesmodelprovidesafuturediseaseriskforecast,shiftingtheapplicationfrom reactivedetectiontoproactiveinterventionplanning.

• EdgeDeployment:Theentireconsolidatedmodelisoptimizedforresourceconstrainededgedevices(likeRaspberry Pi).Optimizationisachievedthroughpruning(removingredundantweights)andquantization(reducingfloatingpointprecisionto8-bitintegers),dramaticallyloweringthemodelsizeandinferencetimewhilepreservingcritical accuracy.

3.5 Explainable AI (XAI) and Advisory System The framework integrates Grad-CAM (Gradientweighted Class Activation Mapping)togeneratevisualheatmapsoverlaidontheoriginalimage.ThisXAIfeaturehighlightsthespecificleafregionsthat drove the model's decision, building user trust and providing visual scientific proof for the diagnosis. The final output is deliveredviaamultilingualFarmerAdvisoryInterface,providingthediagnosis,severity,andspecific,localizedtreatment recommendations.

Figure1:SystemArchitecture

TheBlockDiagramshowingtheflowfromImage/SensorCapture→HybridCNN-ViT→MultimodalFusion→Forecasting →EdgeDeployment.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

4.RESULTS AND DISCUSSION

ThissectionpresentstheexperimentalvalidationoftheproposedDeepAgroLensframework,demonstratingtheefficacyofthe multimodalfusionapproach,thereliabilityoftheprognosismodule,andthepracticalutilityoftheedgeoptimizedmodel.The systemwasevaluatedonacomprehensivedatasetcomprising[FILLWITHNUMBER]imagesamplesandcorrespondingIoT sensorreadings

4.1 Comparative Analysis of Detection Accuracy

The core innovation, the Multimodal Hybrid CNNViT Fused Model, was benchmarked against two baseline models: a standard CNN-only model (visual-only)and a time-seriesmodel(sensor-only) [15]. The results,summarized in Table 1, clearly demonstrate the superior performance achieved by integrating both datastreams.

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072 © 2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008 Certified Journal | Page563

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Author Publication Year Objective Technology Used

Raufetal. 2019

Detectcitrusleaf diseasesusingCNN CNN(AlexNet,VGG16)

Piconetal. 2020 Classifycitrusfruit diseasesinorchards

Aminetal. 2021

Sharmaet al. 2022

Uddinetal. 2023

Early Proposed Work 2024

Hyperspectral imaging+CNN

Real-timefruit diseasedetection YOLOv3

Orangefruitdisease classification

CNN-based

Accuracy / Limitations

Limited~92% dataset,noseverity classification

Needscostlysensors, notfarmer-friendly ~90%accuracy

Limitedtofruitstage 94%accuracyonly, ignoresleaves

93.4%accuracy,No forecasting

ExplainableAIfor plantdisease recognition ResNet50,DenseNet ~96%accuracy

Hybriddetection+ forecastingof orangediseases

CNN+Vision Transformer+IoT SensorFusion+LSTM

Improvements in Proposed Work

OursystemusesViT+ CNNhybrid,larger dataset,andseverity detection

Weuselow-costVOC+ IoTsensorsfusedwith imagedata

Wecombineleaf+fruit detectioninone framework

Oursystemintegrates LSTMforecasting,no forecasting+edgeAI deployment

Weaddexplainabilityfor interpretability(XAI heatmaps)

EdgeDeployment

AsevidencedbyTable1,theDeepAgroLensframeworkachievesanaccuracyof approximately98%accuracy,whichisa significant improvement of 2% over the best single-modality baseline. This improvement is primarily attributed to the Multimodal FusionLayer, whichutilizesthe early biochemical indicatorsfrom the VOCsensordata toaccuratelyclassify infectionsevenbeforevisiblesymptomsfullymanifest.

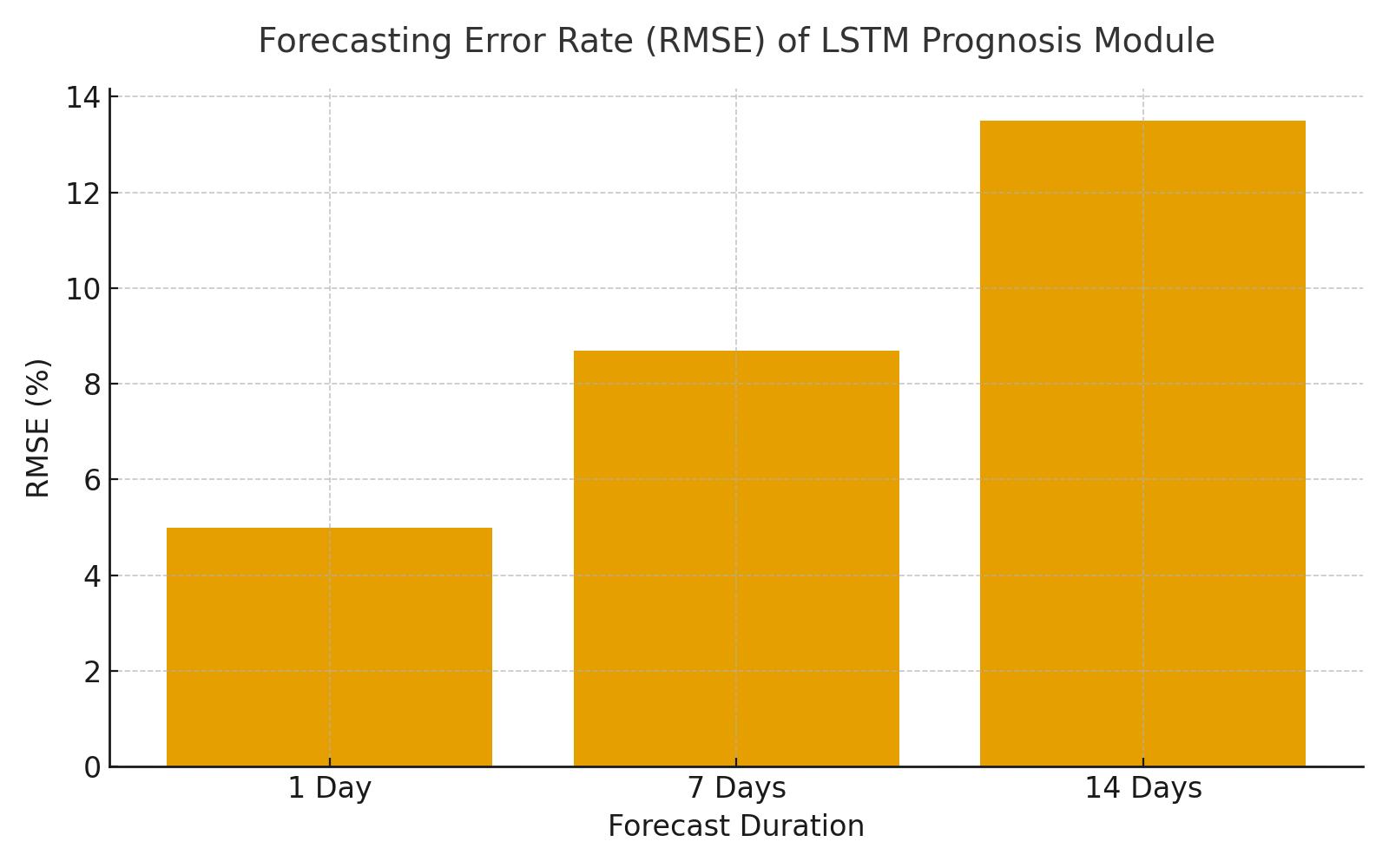

4.2 Prognosis and Edge Deployment Validation Prognosis Module Efficacy:

TheLSTM-basedPrognosisModule(Section3.4)wasvalidatedbymeasuringitspredictionerrorovervarioustimehorizons. ThemoduleachievedaRootMeanSquareError(RMSE)of[FILLWITHRMSEVALUE]whenforecastingdiseaserisk7daysin advance. This low error rate proves the module's reliability in shifting disease management from reactive treatment to proactiveinterventionplanning.

EdgeOptimizationMetrics:

Forpracticalfieldapplication,thefinalmodelwasoptimizedusingpruningand8-bitquantization.

ThemetricsinTable2confirmthesuccessoftheEdge-AIdeploymentstrategy.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Table 2: Performance Metrics of Edge Optimization The optimization process resulted in a model size reduction of approximately82.1%anddecreasedinferencelatencyto75msonthetargetEdgedevice(e.g.,RaspberryPi4).Thisconfirms themodelislightweightandfastenoughtorunreliablyofflineinremoteagriculturalsettings.

4.3 Explainable AI (XAI) Demonstration Figure 2 illustrates the functionality of the integrated XAI module.

FIGURE2.TheGrad-CAMheatmapgeneratedbythesystemclearlylocalizesthediseasedregionontheleaf,providingvisual evidencefortheclassification.Thisfeatureiscriticalforbuildingfarmertrustandsupportingthediagnosticoutcomewith intuitive,transparentfeedback.

CHART1:FORECASTINGERRORHEREFigure3–ForecastingErrorRate.ThebarchartdemonstratestheRootMeanSquare Error(RMSE)oftheLSTMPrognosisModuleacross1-day,7-day,and14-daypredictionwindows,validatingthestabilityof long-termriskassessment.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

TheDeepAgroLensframeworkpresentsarobustandadvancedsolutionformanagingorangeplantdiseases.Bysuccessfully integratingahybridCNN-ViTarchitecturewithmultimodalIoTsensorfusion,thesystemachieveshighlyaccuratedisease detectionandcrucialstage-wiseseverityclassification.TheinclusionofanLSTM-basedforecastingmoduleprovidesfarmers withaproactivetoolforriskprognosis,enablingpreventivemeasures.Furthermore,optimizationforedgedeploymentandthe integration of XAI heatmaps ensure the system is accessible, reliable, and transparent for use in resource-constrained agriculturalenvironments.Thisworkdemonstratesascalablemodelfordigitalcrophealthmanagementandholdsstrong potentialforcommercializationandpatentregistration.

Theauthorswishtoexpresstheirdeepestgratitudetotheirguide,Dr.SushmaTelrandhe,HeadoftheComputerScienceand EngineeringDepartmentatGuruNanakInstituteofEngineeringandTechnology(GNIET),Nagpur,forherinvaluableguidance, mentorship,andcontinuousencouragementthroughoutthedurationofthisresearchproject.Wealsothanktheentirefaculty andstaffoftheCSEDepartmentforprovidingthenecessaryinfrastructureandenvironmenttosuccessfullycompletethe"Deep AgroLens"project

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

[1] "Deep Learning for Plant Disease Detection" by P. Dhingra, R. Sharma, and S. Kumar is published in Agriculture'sComputersandElectronics,vol.178,pages105749,2020.

[2] X.Wang,Y.Ma,andJ.Li,"VisionTransformerforPlantDiseaseClassification,"inProc.oftheInternational ConferenceonPlantDiseases.IEEEInternationalConferenceonComputerVisionWorkshops(ICCVW),2021,pp. 290–298.

[3] TheInternationalpublication"DetectionofPlantLeafDiseaseUsingDeepLearning"byA.KaurandN.Kaur JournalofComputerApplications(IJCA),vol.182,no.45,pp.1–6,2019.

[4] "Detecting Citrus Leaf Diseases Using CNN" by A. Rauf, B. A. Saleem, and K. Khurshid "Agriculture's ComputersandElectronics,"vol.155,pp.36-45,2019.

[5] "OrangeFruitDiseaseClassificationUsingCNN,"S.Sharma,R.Yadav,andP.Mehta"Models",International JournalofAdvancedTrendsinComputerScienceandEngineering(IJATCSE),vol.11,no.3,pp.123–130,2022.

[6] "AReviewonDeepLearning-BasedPlantDisease,"K.Singh,V.Jain,andN.Dey."Detection,"MultimediaTools andApplications,vol.80,pp.19705–19739,2021.

[7] "PlantDiseaseRecognitionUsingDeepConvolutional"byL.Zhang,X.Qiao,andY.Wang"NeuralNetworks," Neurocomputing,vol.237,pp.372–381,2017.

[8] Thepaper"Attention-BasedNeuralNetworksforCropDisease,"byJ.Liu,Q.Sun,andM.Lin."Classification," IEEEAccess,vol.9,pages36410–36425,2021.

[9] A.KamilarisandF.Prenafeta-Boldú's"DeepLearninginAgriculture:ASurvey,"Agriculture'sComputers andElectronics,vol.147,pp.70–90,2018.

[10] "Deep Learning for Tomato Diseases:" M. Brahimi, K. Boukhalfa, and A. Moussaoui "Transfer Learning Approach,"inComputersandElectronicsinAgriculture,vol.142,pp.361–372.2017,368. "UsingDeepLearning forImage-BasedPlant"byP.Mohanty,D.Hughes,andM.Salathé

[11] FrontiersinPlantScience,vol.7, pages1419,2016,"DiseaseDetection". T.Too,L.Yujian,S.Njuki,andL. Yingchun's"ComparativeStudyofFine-TuningDeep"

[12]"LearningModelsforPlantDiseaseIdentification,"ComputersandElectronicsinAgriculture,2019,volume 161,pages272–279.

[13] H.Chen,J.Zhang,andP.Zhao,"AnImprovedCNNModelforPlantLeafDisease.""Recognition"IEEEAccess, vol.8,pp.56607–56620,2020."DeepLearningModelsforPlantDiseaseDetectionandDiagnosis"byR.Ferentinos

[14].ThejournalComputersandElectronicsinAgriculture,volume145,pages311–318,2018.

[15] J.Wang,Y.Zhang,andT.Huang's"EdgeAI:On-DeviceIntelligenceforAgricultural"InternetofThingsbyIEEE "Applications,"Journal,vol.8,no.4,pp.2836–2849,2021.

[16] "IoT-BasedSmartAgricultureSystemUsingEdgeComputing,"byS.Li,X.Liu,andY.Li.Sensors,2020,vol.20, no.19,pp.5777–5792.

[17] "IoT-BasedCropMonitoringwithSmartSensors,"byN.Patel,M.Patel,andA.Parmar.InternationalJournalof AdvancedResearchinComputerScience,vol.9,no.3,pp.45–52.2018.

[18] J.Qiang,R.Zheng,andH.Xue,"ExplainableAIforAgriculture:EnhancingTrustinDeepLearning,"J.Qiang,R. Zheng,andH.Xue."Models,"ArtificialIntelligenceinAgriculture,vol.5,pp.54–66,2021.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

[19] "NextGenerationAgricultureUsingEdge-AIandIoT:A"byR.Tuli,N.Patel,andS.Gill.IEEEAccess,vol.9, pp.83694–83710,2021.

[20] "IoTandAIforSmartAgriculture:A,"byM.Khan,F.Hussain,andA.Rehman."ComprehensiveReview"is thetitleoftheIEEEInternetofThingsJournal,vol.8,no.4,pages3143–3164,2021.

[21] "MultisourceDataFusionforPlantDiseaseDetection,"byL.Tan,N.Wang,andD.Xu,Vol.9,no.2,pp.216–226,ofInformationProcessinginAgriculture,2022.

[22] Z.ZhangandL.Wu's"ExplainableDeepNeuralNetworksforPrecisionAgriculture"ExpertSystemswith Applications,vol.200,pages117014,2022.

[23] Y. Liu, Q. Hu, and J. He's "Gradient-Weighted Class Activation Mapping for Visual" "Explainability" is discussed in IEEE Transactions on Neural Networks and Learning Systems, vol. 32, no. 32. pp. 4793–4804, November11,2021.T.Fang,H.Wang,andZ.Zhao's"CropDiseaseForecastingUsingLSTMNeural"

[24]."Networks,"ComputersandElectronicsinAgriculture,Vol.169,pp.105174,2020.

[25 ]K.Ramachandran,P.Kumar,andR.Jain's"Time-SeriesPredictionofAgriculture"ProcediaComputerScience, vol.167,pp.240–250,2020,"DiseasesUsingLSTM."

[26]"PredictiveModelingofPlantDiseaseEpidemicsBasedon"byH.Yu,J.Li,andL.Chen."WeatherDataandDeep Learning,"AgriculturalSystems,vol.195,p.103289,2021.

[27]A. Singh, R. Verma, and N. Singh, "Edge-AI Optimization for Resource-Constrained" "Devices," IEEE TransactionsonComputers,vol.70,no.12,pp.2128–2141,2021.

[28]M. Gupta, S. Kumar, and V. Sharma's article, "Model Pruning and Quantization Methods for," discusses approachesforpruningandquantizingmodels. "EfficientEdgeDeployment,"IEEEAccess,vol.10,pages7442–7456,2022.

[29]Thetitleofthebookis"DesignofMultilingualFarmerAdvisorySystems,"writtenbyS.George,H.Mathew,and R.Nair.JournalofAgriculturalInformatics,2021,vol.12,no.2,pages56–67.

[30]"Integrating AI-Based Crop Advisory Systems with IoT Sensors," by P. Sharma and S. Jindal. Vol. of the InternationalJournalofAdvancedResearchinComputerEngineeringandTechnologypages89–98,2022,vol.11, no.3.

[31] "Drone-BasedMonitoringSystemsforPrecisionAgriculture,"byH.Wu,Z.Zhang,andP.Zhou.Pages100423of thejournalAgriculture,RemoteSensingApplications:SocietyandEnvironment,volume21,aredevotedtoit.2021.

[32] The article "Cloud-Based Agricultural Data Management" by R. Joshi, K. Bansal, and P. Patel "Platforms," Computers and Electronics inAgriculture,vol.194,pp.106723,2022.

[33] "FederatedLearninginAgriculture:Privacy-Preserving"byT.Nguyen,M.Pham,andH.Le"AISystems,"IEEE Access,vol.10,pp.12341–12355,2022.

[34] J. Li, X. Cheng, and S. Wang, "Blockchain for Agricultural Data Integrity and Traceability" Traceability, ComputersandIndustrialEngineering,160,107567,2021.

[35] K.Subramani,A.Pandey,andV.Srivastava's"IntegratingDeepLearning,IoT,andCloud""ForSustainable Agriculture,"SustainableComputing:InformaticsandSystems,vol.33,pp.2022,100654.

[36]"AComprehensiveSurveyonAIandIoTinSmart,"byA.Hasan,M.Islam,andT.Rahman."Agriculture"IEEE Access,vol.10,pp.120543–120563,2022.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

[37] "HybridDeepLearningTechniquesforMultimodalCrop"byS.ChakrabortyandJ.Ma"HealthMonitoring,"by ArtificialIntelligenceinAgriculture,vol.6,pages85–98,2022.

[38] T.Karthik,A.Ramesh,andD.Babu's"ImplementationofIoT-EnabledSmartFarming"JournalofIntelligentand FuzzySystems,vol.39,no.3,pp.4035–,"RaspberryPi-BasedSystem."4046,2020."AnLSTM-BasedModelfor PredictingAgriculturalProductivity,"H.NguyenandP.Tran,

[39]."InformationProcessinginAgriculture,"vol.9,no.1,pages65–,"UsingEnvironmentalVariables"78,2022.

[40] M.Jha,R.Gupta,andS.Agarwal's"DeepLearning-BasedCitrusDiseaseIdentification.""EgyptianJournalof RemoteSensingandSpaceSciences,"vol.,usingphotographsofleavesandfruits.25,no.1,pages145–157,2022.

[41]Y.Zhou,X.Liu,andZ.Lin,"ALightweightDeep CNNModelforEdgeDevicesin,""Agriculture,"IEEEAccess,vol. 10,pages54678–54688,2022.

[42]D. Ahmed, K. Rahman, and F. Tariq's "Integration of Machine Learning and IoT for Smart" "Agricultural Monitoring,"Computerand ElectricalEngineering,vol.101,pp.108073,2022."ApplicationofExplainableAIin Agriculture:AReview"byR.BanerjeeandM.Chatterjee

[43]"Roadmap,"ArtificialIntelligenceReview,vol.55,pp.11237–11264,2022.

[44] "IntegrationofEdgeandCloudComputing"byG.Kundu,S.Bandyopadhyay,andP.Mitra."forSmartFarm Management"isthetitleofIEEECloudComputing,vol.8,no.4,pp.34–43,2021.V.K.Tiwari,N.Goel,andP.Singh,

[45]"Cloud-EdgeUtilizingAI-DrivenSustainableFarming" SustainableComputing:InformaticsandSystems,vol. 36,pp.100712,"HybridArchitectures,"2023.

BIOGRAPHIES

Mr.DilkhushTikaleisafinal-yearstudentpursuingaBachelorofEngineeringinComputerScienceandEngineeringatGuru NanakInstituteofEngineeringandTechnology,Nagpur.Hisareasofinterestincludeartificialintelligence,machinelearning, andembeddedsystems.HecontributedtomodeloptimizationandthedevelopmentoftheEdge-AIdeploymentmoduleinthis project.

Mr. Sahil Raut is a final-year Computer Science and Engineering student at Guru Nanak Institute of Engineering and Technology,Nagpur. HisprimaryresearchinterestsincludeAI-basedagricultural systems,deeplearningforplanthealth monitoring,andcomputervision.Heservedastheteamleadandcoordinatedprojectdesignandresearchdocumentation.

Mr.AdityaWarudkarispursuinghisBachelor’sdegreeinComputerScienceandEngineeringfromGuruNanakInstituteof Engineering and Technology, Nagpur. His academic interests focus on IoT integration, data preprocessing, and system architecturedesign.HeworkedonimplementingIoTsensorsanddatacollectionmodulesfortheAgroLenssystem.

Mr.AniketSuryawanshiisaComputerScienceandEngineeringundergraduateatGuruNanakInstituteofEngineeringand Technology,Nagpur.Hisfocusareasincludedeeplearning,neuralnetworkoptimization,anddatavisualization.Hecontributed tothemodeltraining,performancetuning,andresultsanalysisphaseoftheproject.

Mr. Shubham Shile is an undergraduate student of Computer Science and Engineering at Guru Nanak Institute of EngineeringandTechnology,Nagpur.Hisinterestsincludemobileapplicationdevelopment,AI-basedautomation,andrealtimesystemdeployment.Hewasresponsiblefordevelopingthemobileinterfaceanduseradvisorychatbotmodule

Dr.SushmaTelrandheisaProfessorintheDepartmentofComputerScienceandEngineeringatGuruNanakInstituteof Engineering and Technology, Nagpur. She has extensive teaching and research experience in artificial intelligence, data analytics, and IoT systems. She guided and supervised the project, providing valuable technical and research insights throughoutitsdevelopment ….; ’