International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 09 | Sep 2025 www.irjet.net p-ISSN:2395-0072

Nisar Ahmad Kangoo1@

1 Higher Education Department, Union Territory of Jammu and Kashmir ***

Abstract - This research employs a large dataset titled “A Curated Dataset for Hate Speech Detection on Social Media Text”, hosted in the Mendeley Data repository, to detect hate speech and analyze its frequency and traits. The dataset contains 451,709 text samples: 371,452 labeled as hateful and 80,250 as non-hateful. Our analysis combines machine learning techniques with natural language processing methods. We find that a substantial portion of the hateful content is directed at demographic groups. Additionally, we identify common themes and linguistic patterns associated with hate speech. This work contributes to ongoing efforts to combat online hate, and holds implications for community moderation and content governance.

Keywords: Hate speech, machine learning, NLP, online content moderation, text analysis

Hate speech is defined as any speech that degrades, discriminates against, or incites violence against a person or group of people based on characteristics such as race, color, creed, gender identity, sexual preference, national origin, or any other salient group identifier. The phenomenon is pervasive and sharply polarizing in a contemporary world society where communication extends beyond the borders of territory and culture. Rooted in hostility and discrimination, hate speech is highly harmful to the social fabric, is a serious attack on the basic principles of free speech, and can cause serious damage to the identities and rights of the groups and individuals at whom it is directed, perpetuating existing practices of discrimination and social exclusion.

A complete understanding of what hate speech is, how it manifests, and its complex consequences, both at a personal level and in the wider social context, is essential to creating an environment that supports diversity, mutual respect, and inclusion in an increasingly digital and interconnected global community.

Hate speech takes many forms, ranging from verbal and nonverbal expressions to symbolic expressions and veiled language, which makes the phenomenon challenging to identify and respond to. For example, social media is often characterized by more covert forms of hate that depend on implication and insinuation rather than clear-cut aggression or direct hostility, which makes them more difficult to confront effectively.

Aim: The aim of this research paper is to rigorously assess multiple supervised machine learning methodologies on an extensive amount of data in order to determine which algorithms are most effective for the detection and analysis of hate speech in digital communication. By identifying and then removing hate speech on social networking sites and other online communities, we can play an important role in maintaining religious, regional, cultural, and social peace on a global scale and thus foster a more inclusive and respectful online discourse.

Numerous scholars and researchers have focused their efforts on the complex and challenging problem of hate speech detection, which continues to be one of the most critical problems in modern digital communication. These researchers have analyzed a wide range of data systematically gathered from major social media platforms, including YouTube, Twitter (now known as X), MySpace, Wikipedia, Usenet, Instagram, and Facebook, in order to provide a holistic view of the phenomenon. A summary of previous research papers, which are a rich source of information on this topic, is presented in Table 1 below. The associated literature also provides important perspectives on the identification of hate speech in languages other than English, the dominant language studied, thereby establishing that this important problem exists worldwide.

Table -1: Summary from previous research papers on hate speech detection

| Author and Year | Platform | Machine Learning Approach | Features Representation | Algorithm |
|---|---|---|---|---|
| Park, Fung (2017) [1] | Twitter | Supervised | Character and Word2vec | Hybrid CNN |
| Wiegand et al. (2018) [2] | Twitter, Wikipedia, UseNet | Supervised | Lexical, linguistics and word embedding | SVM |
| Warner, Hirschberg (2013) [3] | Yahoo newsgroup | Supervised | Template-based, PoS tagging | SVM |
| Burnap, Williams (2014) [4] | Twitter | Supervised | BOW, Dependencies, Hateful Terms | Bayesian Logistic Regression |
| Gitari et al. (2015) [5] | Blog | Semi-Supervised | Lexicon, Semantic, theme-based features | Rule-based 0.73 |
| Waseem, Hovy (2016) [6] | Twitter | Supervised | Character n-gram | Logistic regression |
| Pitsilis et al. (2018) [7] | Twitter | Supervised | Word-based frequency vectorization | RNN and LSTM |
| Vidgen, B. et al. (2020) [8] | (tweets) | | | |
| Balouchzahi, F. et al. (2021) [9] | tweets | | | |

This dataset comes from the Mendeley Data repository (“A Curated Hate Speech Dataset”), freely available for academic research. You can access it here: https://data.mendeley.com/datasets/9sxpkmm8xn/1 (accessed 15 September 2025).

It includes 451,709 English-language sentences, of which 371,452 are labeled as hateful content and 80,250 as non-hateful.

Key features of the dataset:

A custom vocabulary of 145,046 unique words was constructed using an augmented, balanced version of the data.

The dataset also includes 6,403 English contractions. The count of “bad words” (frequently used in hateful content) is 377.

Preprocessing steps include: removing hyperlinks, converting emojis/emoticons, expanding contractions, correcting misspellings, removing punctuation/special characters, and cleaning numeric and accented entries.
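The cleaning steps listed above can be sketched with the Python standard library. This is a minimal illustration, not the dataset authors' pipeline: the `CONTRACTIONS` map is a hypothetical three-entry stand-in for the dataset's contraction list, the function name `preprocess` is an assumption, and emoji conversion and spelling correction are omitted because they need external resources.

```python
import re
import unicodedata

# Hypothetical, tiny contraction map for illustration only; the dataset's
# own list of 6,403 contractions is not reproduced here.
CONTRACTIONS = {"can't": "cannot", "don't": "do not", "i'm": "i am"}

URL_RE = re.compile(r"https?://\S+|www\.\S+")


def preprocess(text: str) -> str:
    """Apply a subset of the listed cleaning steps to one sentence."""
    text = text.lower()
    text = URL_RE.sub(" ", text)                  # remove hyperlinks
    for short, full in CONTRACTIONS.items():      # expand contractions
        text = text.replace(short, full)
    # Strip accents: decompose characters, then drop the combining marks.
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z\s]", " ", text)         # drop punctuation, digits, symbols
    return " ".join(text.split())                 # collapse repeated whitespace


print(preprocess("I can't believe café #1!! http://t.co/x"))
```

The order matters in this sketch: contractions are expanded before punctuation is removed, since the apostrophe is what identifies them.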

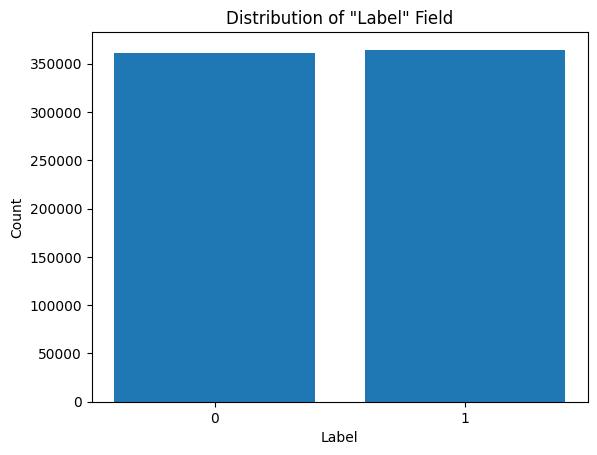

The data is balanced for training: after balancing, there are roughly 364,525 “1”s (hate) and 361,594 “0”s (non-hate).

In the final dataset, sentences are labeled with “1” for hate speech and “0” for non-hate speech, as depicted in Figure 1.

Fig -1: The balanced dataset with 1's and 0's

4. Machine learning Models

Machine Learning (ML) is a branch of Artificial Intelligence where a learning agent is trained on data, learns from that data, and then uses its learned model to make predictions or decisions on new data. The main types of ML are supervised, unsupervised, and reinforcement learning.

Supervised learning involves feeding the model labeled data, that is, data where the correct output is already known. Examples include classifying emails as spam or not spam, or marking text as hate speech vs. non-hate speech. It is suited for solving classification and regression tasks.

Unsupervised learning works with data that is not labeled. The model seeks to discover inherent structure, groupings, or patterns within the data, such as clustering fruits by color, shape, or size when you don’t have labels.

Reinforcement learning is based on an agent’s interaction with an environment, receiving rewards or penalties depending on its actions. Over time, the agent learns policies that maximize cumulative reward.

Since the dataset in this work is for classification (hate speech vs. non-hate speech), supervised machine learning approaches are the most suitable. We evaluate several supervised learning algorithms to compare their performance on this specific dataset. Below is a brief overview of the selected algorithms.

Naive Bayes: The Naive Bayes classifier is a probabilistic classification algorithm that estimates the probability of a given document, denoted D, belonging to a specified class, denoted C. It does so by applying Bayes' theorem under the crucial assumption that all features involved in the classification are conditionally independent of each other given the class. Because of this independence assumption, the model is called "naive": it ignores relationships between features.

This algorithm is highly regarded in machine learning for its simplicity. Because it considers each feature separately, it can process a huge training set in a very short period of time and produce predictions just as quickly. Nevertheless, the effectiveness of this model tends to be reduced significantly when the features have strong interdependencies or correlations, because such situations violate the independence assumption that forms the theoretical foundation of the model.
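As an illustration of the independence assumption, the following is a minimal multinomial Naive Bayes sketch in plain Python. It is not the implementation used in this study; the function names `train_nb` and `predict_nb`, the toy sentences, and the use of add-one (Laplace) smoothing are all assumptions made for the example.

```python
import math
from collections import Counter, defaultdict


def train_nb(docs, labels):
    """Collect class counts and per-class word counts from tokenized documents."""
    class_counts = Counter(labels)
    word_counts = defaultdict(Counter)
    vocab = set()
    for tokens, label in zip(docs, labels):
        word_counts[label].update(tokens)
        vocab.update(tokens)
    return class_counts, word_counts, vocab


def predict_nb(model, tokens):
    """Return the class C that maximizes log P(C) + sum over words of log P(w | C)."""
    class_counts, word_counts, vocab = model
    total_docs = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label, n_docs in class_counts.items():
        score = math.log(n_docs / total_docs)          # log prior
        total_words = sum(word_counts[label].values())
        for word in tokens:
            # Add-one smoothing keeps unseen words from producing log(0).
            p = (word_counts[label][word] + 1) / (total_words + len(vocab))
            score += math.log(p)                       # features treated independently
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Toy data (hypothetical): label 1 = hate, label 0 = non-hate.
docs = [["you", "are", "kind"], ["have", "a", "nice", "day"],
        ["i", "hate", "you"], ["hate", "them", "all"]]
labels = [0, 0, 1, 1]
model = train_nb(docs, labels)
print(predict_nb(model, ["hate", "you"]))
```

The per-word log probabilities are simply added, which is exactly where the "naive" assumption enters: no term accounts for how words co-occur.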

Decision Tree: A decision tree is a hierarchical structure made of nodes that are linked by directed branches. During the training phase, documents are routed through the tree by a sequence of binary yes/no (true/false) questions that evaluate their features. The internal nodes of this structure represent decision points, while the terminal leaf nodes hold the class labels assigned to the documents. Each path from the root node down to a leaf node is a conjunction of feature conditions that results in the assignment of the corresponding class label. Although complexity grows as more input features are used, decision trees remain efficient to operate; they are also valued for their intuitive interpretability and relatively minimal pre-processing requirements on the underlying data.
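The root-to-leaf traversal described above can be sketched as follows. The tree here is hand-built for illustration, and the feature names `contains_slur` and `contains_threat` are hypothetical; a trained tree would learn its own questions from the data.

```python
# Hypothetical hand-built tree; internal nodes ask a yes/no question,
# leaf nodes carry a class label (1 = hate, 0 = non-hate).
tree = {
    "question": "contains_slur",
    "yes": {"label": 1},
    "no": {
        "question": "contains_threat",
        "yes": {"label": 1},
        "no": {"label": 0},
    },
}


def classify(node, features, path=()):
    """Follow yes/no answers from the root to a leaf; return (label, path taken)."""
    if "label" in node:
        return node["label"], list(path)
    answer = "yes" if features[node["question"]] else "no"
    return classify(node[answer], features, path + ((node["question"], answer),))


label, path = classify(tree, {"contains_slur": False, "contains_threat": True})
print(label, path)
```

The returned path is the conjunction of feature conditions that led to the label, which is what makes the prediction easy to explain.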

Random Forest: Random Forest is an ensemble classifier (a group of classifiers) that builds several decision trees, each on a different random subset of the overall dataset drawn by random sampling, and combines their outputs into a single overall decision, which increases the accuracy of the predictive model. Rather than relying on the output of an individual decision tree, every tree in the forest takes part in a vote, and the class that receives the most votes from the entire set of trees becomes the final predicted output.

Because it aggregates many models, a Random Forest is good at reducing the risk of overfitting, an issue that can develop when a model is overly tailored to its training data, in comparison to a single decision tree. This ensemble approach usually deals with large numbers of features effectively, and the voting mechanism behind the decision-making process contributes to the stability and reliability of the predictions.
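The two ingredients described above, random resampling of the training data and a vote across trees, can be sketched in a few lines. This is an illustrative sketch only: the three "trees" are hypothetical stand-in functions rather than trained decision trees, and the helper names are assumptions.

```python
import random
from collections import Counter


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows with replacement, as the training subset for one tree."""
    return [rng.choice(rows) for _ in rows]


def majority_vote(votes):
    """Return the label predicted by the largest number of trees."""
    return Counter(votes).most_common(1)[0][0]


# Stand-ins for trained trees: each maps a token list to 0 or 1.
trees = [
    lambda tokens: int("hate" in tokens),
    lambda tokens: int("stupid" in tokens),
    lambda tokens: int(len(tokens) > 6),
]


def forest_predict(tokens):
    """Collect one vote per tree and return the winning class."""
    return majority_vote([tree(tokens) for tree in trees])


rng = random.Random(0)
sample = bootstrap_sample(["a", "b", "c", "d"], rng)
print(sample, forest_predict(["hate", "stupid"]))
```

An odd number of trees is used here so that a binary vote cannot tie.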

KNN: The KNN (K-nearest neighbors) method is a supervised learning method that can be used for classification and regression tasks.

The general working principle underlying KNN is that points in the data space that are near each other are likely to have similar labels or values.

Rather than building a predictive model during training, KNN keeps all the labeled instances for later reference. When asked to classify or predict a new instance that has no associated label, KNN finds the k training samples that lie closest to the new instance under a distance measure such as the straight-line (Euclidean) distance. In classification problems, it assigns the new instance to the class that is most common among the identified k neighbors; in regression problems, it may, for example, calculate the average of the values corresponding to these neighbors.
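A minimal sketch of KNN classification with Euclidean distance follows. The two-dimensional points are hypothetical stand-ins for text feature vectors, and the function name `knn_predict` and the default `k=3` are assumptions for the example.

```python
import math
from collections import Counter


def knn_predict(train_points, train_labels, query, k=3):
    """Classify `query` by the majority label among its k nearest training points."""
    distances = [
        (math.dist(point, query), label)      # straight-line (Euclidean) distance
        for point, label in zip(train_points, train_labels)
    ]
    distances.sort(key=lambda pair: pair[0])
    nearest_labels = [label for _, label in distances[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]


# Toy feature vectors (hypothetical): two well-separated clusters.
points = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (1.0, 1.0), (0.9, 1.1), (1.1, 0.9)]
labels = [0, 0, 0, 1, 1, 1]
print(knn_predict(points, labels, (0.15, 0.15)))
```

Note that all the work happens at prediction time: every query is compared against every stored training point, which becomes costly on a dataset of several hundred thousand sentences.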

Ensemble Method: Ensemble methods combine multiple machine learning models with the goal of boosting the overall accuracy and robustness of the predicted results. In this study, we train two Random Forest classifiers on the same data but with different random seeds so that the two models differ. Each classifier produces its own set of predictions, and the final classification is based on majority voting over the results of both classifiers. This voting-based ensemble approach has been found to be particularly effective for binary classification problems, as it reduces the effect of individual model errors and helps reduce instability in the decision.

Gradient Boosting Algorithms: Algorithms such as AdaBoost, Gradient Boosting Machines (GBM), and XGBoost work by iteratively enhancing model performance: they combine many simple (weak) learners to form a stronger predictor. Each new learner focuses on correcting errors from previous ones, so over successive rounds the overall model becomes increasingly accurate.
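With only two voters, a plain majority vote is undefined whenever the two models disagree. One common way to make such a vote well-defined is to average the predicted class probabilities (soft voting). The sketch below illustrates that idea only; it is an assumption, not a description of how voting was configured in this study, and the probability values are hypothetical.

```python
def soft_vote(prob_a, prob_b, threshold=0.5):
    """Average two models' P(label = 1) estimates and threshold the mean."""
    return [int((a + b) / 2 >= threshold) for a, b in zip(prob_a, prob_b)]


# Hypothetical P(label = 1) outputs from two forests trained with different seeds.
forest_seed_1 = [0.9, 0.4, 0.2, 0.55]
forest_seed_2 = [0.7, 0.7, 0.1, 0.35]

print(soft_vote(forest_seed_1, forest_seed_2))
```

In the second and fourth samples the two models disagree on the hard label, and the averaged probability decides the outcome.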
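To make "focusing on errors from previous learners" concrete, the following sketch performs a single round of the classic discrete AdaBoost weight update for labels in {-1, +1}. It is a textbook illustration under stated assumptions (the function name, the four toy samples, and a weighted error strictly between 0 and 1); library implementations and the gradient-boosting variants differ in detail.

```python
import math


def adaboost_round(weights, y_true, y_pred):
    """One discrete AdaBoost round for labels in {-1, +1}.

    Returns the learner weight alpha and the renormalized sample weights.
    Assumes the weighted error is strictly between 0 and 1.
    """
    # Weighted error of the current weak learner.
    err = sum(w for w, t, p in zip(weights, y_true, y_pred) if t != p)
    alpha = 0.5 * math.log((1 - err) / err)
    # Misclassified samples (t * p = -1) are up-weighted, correct ones down-weighted.
    new_w = [w * math.exp(-alpha * t * p) for w, t, p in zip(weights, y_true, y_pred)]
    total = sum(new_w)
    return alpha, [w / total for w in new_w]


y_true = [1, 1, -1, -1]
y_pred = [1, -1, -1, -1]          # the weak learner gets the second sample wrong
weights = [0.25] * 4
alpha, new_weights = adaboost_round(weights, y_true, y_pred)
print(alpha, new_weights)
```

After the update, the single misclassified sample carries half of the total weight, so the next weak learner is pushed to get it right.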

The dataset comprises a large, carefully labeled corpus of sentences classified as hate speech or non-hate speech, and as such is well suited for training various sentiment-based models meant to automatically classify the content of sentences. The dataset is organized into two columns: one holds the text of each sentence and the other holds the corresponding label, where 0 means the sentence is categorized as non-hate speech and 1 means the sentence is categorized as hate speech. To evaluate the models, we split the dataset into a training set and a testing set using an 80:20 ratio, a widely accepted proportion in ML, so that the models are trained well and then tested on different data. The chosen supervised machine learning algorithms were then fitted in turn to the training data, and their performance was measured quantitatively using accuracy, precision, recall, and F1 score, as displayed in Table 2 below; together, these metrics were used for additional analysis and interpretation.
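An 80:20 split of a two-column (text, label) dataset can be sketched as below. The function name `split_dataset`, the seed value 42, and the ten toy sentences are assumptions for illustration; the study's actual splitting code and seed are not given in the text.

```python
import random


def split_dataset(texts, labels, test_ratio=0.2, seed=42):
    """Shuffle indices with a fixed seed, then hold out `test_ratio` for testing."""
    indices = list(range(len(texts)))
    random.Random(seed).shuffle(indices)
    n_test = int(len(indices) * test_ratio)
    test_idx, train_idx = indices[:n_test], indices[n_test:]

    def pick(idx):
        # Keep each sentence aligned with its own label.
        return [texts[i] for i in idx], [labels[i] for i in idx]

    return pick(train_idx), pick(test_idx)


# Toy data (hypothetical): ten sentences with alternating labels.
texts = [f"sentence {i}" for i in range(10)]
labels = [i % 2 for i in range(10)]
(train_x, train_y), (test_x, test_y) = split_dataset(texts, labels)
print(len(train_x), len(test_x))
```

Shuffling before cutting matters when the file is ordered by label, and fixing the seed keeps the split reproducible across the compared algorithms.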

Table -2: Summary of results from various classification algorithms used

5 Ensemble predictions

Gradient Boosting

8 XGBoost Classifier

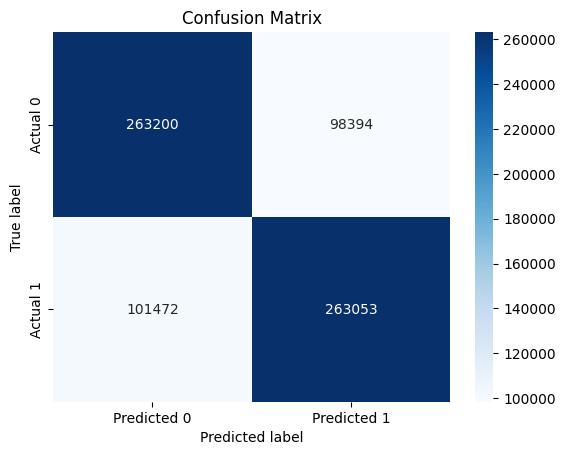

We also used K-fold cross-validation on the dataset with the Random Forest classifier to assess its performance. The result is depicted as a confusion matrix for number of folds = 5, as shown in Figure 2.

Fig -2: Confusion matrix for K-fold cross-validation for the Random Forest algorithm
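The way K-fold cross-validation partitions the data can be sketched as follows. This is an illustrative sketch with an assumed helper name and contiguous, unshuffled folds; in practice the data would usually be shuffled first.

```python
def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, test_indices) for each of k contiguous folds."""
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        # The first `extra` folds take one additional sample each.
        size = base + (1 if fold < extra else 0)
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


folds = list(k_fold_indices(12, k=5))
for train_idx, test_idx in folds:
    print(len(train_idx), test_idx)
```

Every sample lands in the test fold exactly once, so pooling the predictions from all five folds gives one confusion matrix that covers the whole dataset.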

The confusion matrix is a 2x2 matrix that represents the performance of a classification algorithm. Here's how to interpret the different elements of the confusion matrix:

True Positive (TP): 263200 - The number of instances of the positive class that were correctly predicted by the model.

True Negative (TN): 263053 - The number of instances of the negative class that were correctly predicted by the model.

False Positive (FP): 98394 - Also known as a Type I error, this is the number of instances that were incorrectly predicted as positive when they are actually negative.

False Negative (FN): 101472 - Also known as a Type II error, this is the number of instances that were incorrectly predicted as negative when they are actually positive.
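The standard metrics follow directly from these four counts. The sketch below plugs in the values exactly as reported above and uses the usual textbook definitions; the variable names are assumptions.

```python
# Counts as reported for the 5-fold Random Forest confusion matrix.
tp, tn, fp, fn = 263200, 263053, 98394, 101472

total = tp + tn + fp + fn
accuracy = (tp + tn) / total                 # share of all predictions that are correct
precision = tp / (tp + fp)                   # share of predicted positives that are correct
recall = tp / (tp + fn)                      # share of actual positives that are found
f1 = 2 * precision * recall / (precision + recall)

print(f"total={total} accuracy={accuracy:.4f} precision={precision:.4f} "
      f"recall={recall:.4f} f1={f1:.4f}")
```

The four counts sum to 726,119, which equals the size of the balanced dataset (364,525 + 361,594), and the resulting accuracy is about 0.72.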

We observed that the Decision Tree, Random Forest, K-Nearest Neighbors (KNN), and Ensemble Prediction models consistently achieved an accuracy of 72%. In comparison, the AdaBoost, Gradient Boosting, and XGBoost classifiers exhibited lower accuracies, ranging from 57% to 59%. The Naive Bayes classifier demonstrated the lowest performance, with an accuracy of 53%. These results suggest that while some models perform moderately well, none of the evaluated algorithms achieved highly satisfactory performance across all metrics.

The findings of this study indicate that the supervised machine learning algorithms considered here are limited in their ability to accurately detect hate speech. It is therefore recommended to explore alternative approaches and more advanced models to achieve higher classification performance. The development of such models could contribute significantly to automatically identifying and removing hate speech on social networking platforms, thereby promoting a safer and more harmonious online environment.

[1] J. H. Park and P. Fung, “One-step and Two-step Classification for Abusive Language Detection on Twitter,” in AICS Conference, 2017.

[2] M. Wiegand, J. Ruppenhofer, A. Schmidt, and C. Greenberg, “Inducing a Lexicon of Abusive Words – a Feature-Based Approach,” in Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long Papers), 2018, pp. 1046–1056.

[3] W. Warner and J. Hirschberg, “Detecting Hate Speech on the World Wide Web,” no. Lsm, pp. 19–26, 2012.

[4] P. Burnap and M. L. Williams, “Hate Speech, Machine Classification and Statistical Modelling of Information Flows on Twitter: Interpretation and Communication for Policy Decision Making,” in Proceedings of the Conference on the Internet, Policy & Politics, 2014, pp. 1–18.

[5] N. D. Gitari, Z. Zuping, H. Damien, and J. Long, “A lexicon-based approach for hate speech detection,” Int. J. Multimed. Ubiquitous Eng., vol. 10, no. 4, pp. 215–230, 2015.

[6] Z. Waseem and D. Hovy, “Hateful Symbols or Hateful People? Predictive Features for Hate Speech Detection on Twitter,” Proc. NAACL Student Res. Work., pp. 88–93, 2016.

[7] G. K. Pitsilis, H. Ramampiaro, and H. Langseth, “Effective hate-speech detection in Twitter data using recurrent neural networks,” Appl. Intell., vol. 48, no. 12, pp. 4730–4742, Dec. 2018.

[8] B. Vidgen and T. Yasseri, “Detecting weak and strong Islamophobic hate speech on social media,” J. Inf. Technol. Polit., vol. 17, pp. 66–78, 2020.

[9] F. Balouchzahi, H. L. Shashirekha, and G. Sidorov, “HSSD: Hate speech spreader detection using N-Grams and voting classifier,” CEUR Workshop Proc., vol. 2936, pp. 1829–1836, 2021.