International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Samanvaya K J, Arpitha C N, Dr Pushpa Ravikumar

1, PG Scholar, Dept. of Computer Science and Engineering, Adichunchanagiri Institute of Technology, Karnataka, India.

2 , Assistant Professor, Dept. of Computer Science and Engineering, Adichunchanagiri Institute of Technology, Karnataka, India.

3, Professor & Head, Dept. of Computer Science and Engineering, Adichunchanagiri Institute of Technology, Karnataka, India.

***

Abstract - Brain tumor classification using Magnetic ResonanceImaging(MRI)hassignificantlyimprovedwiththe adoption of deep residual neural networks. In this study, a detailed comparative analysis of ResNet50 and ResNet152 models is performed to evaluate their effectiveness in multiclassbraintumorclassification.Themodelsweretrainedona curated T1-weighted contrast-enhanced MRI dataset containing glioma, meningioma, pituitary tumor, and notumor images. Experimental results show that ResNet50 achieved a classification accuracy of 98.12%, with fast convergence and lower computational overhead, while ResNet152 attained a superior accuracy of 99.03%, demonstrating enhanced capability to extract deep hierarchical tumor features. Loss convergence, feature extractiondifferences,computationalcost,andgeneralization performance were also analyzed. The findings indicate that although ResNet152 provides slightly better diagnostic performance, ResNet50 remains preferable for real-time clinical systems due to its lightweight architecture. This comparative study offers valuable insights into selecting appropriate deep residual models for medical imaging applications.

Key Words: Brain Tumour Classification, MRI, Deep Learning, ResNet50, ResNet152, Residual Networks, MedicalImageAnalysis,TransferLearning, etc.

Braintumorsremainoneofthemostcriticalneurological diseases,requiring earlyandaccuratediagnosis to reduce mortality and improve treatment outcomes. Magnetic Resonance Imaging (MRI) is the preferred diagnostic modality because of its superior soft-tissue contrast and ability to visualize structural abnormalities in multiple imaging sequences [1]. In recent years, deep learning techniques have transformed medical image analysis, particularlythroughConvolutionalNeuralNetworks(CNNs) andadvancedarchitecturessuchasResidualNetworks(Res Nets)[2].

ResNetsovercomethevanishinggradientlimitationof traditional deep CNNs through skip-connections, enabling extremely deep architectures to extract rich hierarchical features from MRI scans [3]. Prior studies have demonstratedthatresidualmodelssignificantlyoutperform conventionalCNNsincapturingtumorboundaries,intensity variations, and heterogeneous morphological patterns associatedwithglioma,meningioma,andpituitarytumors [4].Mid-deptharchitecturessuchasResNet50haveshown strong generalization capabilities and computational efficiencyinmedicalimageclassificationtasks,makingthem suitableforreal-timesystems[5].

On the other hand, ultra-deep architectures such as ResNet152haveproveneffectiveincapturingmorecomplex tumortexturesandsubtlepathologicalfeatures,providing improved accuracy in multi-class MRI brain tumor classification [6]. Several researchers have shown that deeperresidualnetworksachievesuperiorperformancefor intricatemedicalimagingtasksduetotheirabilitytolearn multi-scalefeaturerepresentations[7].Moreover,transfer learningappliedtodeepresidualnetworkshasbeenwidely adoptedtoboostdiagnosticperformanceevenwithlimited annotatedmedicaldatasets[8].

Recentadvancementsinhybridresidualattentionmodels anddeepfeaturepyramidarchitecturesfurtherhighlightthe importanceofdepthandresiduallearningmechanismsfor robust tumor classification [9]. Comparative studies on residual architectures and transformer-based models also emphasize the continued relevance of Res Net variants in clinicaldiagnosticpipelines[10].Despiteextensiveprogress, a detailed comparison of moderately deep and ultra-deep residualmodelsforbraintumorMRIclassificationremains essential for determining the optimal trade-off between accuracy, computational complexity, and deployment feasibility. In this work, we conduct a comprehensive comparative analysis of ResNet50 and ResNet152. Both modelsaretrainedonacuratedMRIdatasetandevaluated for accuracy, convergence behavior, feature extraction capability,andcomputationalefficiency.Thefindingsofthis study aim to provide clear insights into selecting the

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

appropriate residual architecture for both real-time and offlinemedicaldiagnosticapplications.

Thestudypresentedin[11]investigatesthecomparative performance of residual blocks and dense blocks for MRI tumourrecognition.TheauthorshighlightthatwhileDense Net architectures provide feature reusability, Res Net architectures demonstrate superior stability for deeper models due to identity skip connections. Their results emphasizethesuitabilityofresiduallearningforpreserving essential brain tissue features and preventing gradient degradation,makingResNetarchitecturesmorereliablefor high-resolutionMRIclassificationtasks.

In [12], an attention-enhanced Res Net architecture is introducedtoimprovethesensitivityofMRI-basedtumour screening. The authors incorporate channel attention and spatial attention modules into traditional Res Net blocks, enabling the model to focus more effectively on tumourdominant regions. Their findings show significant improvements in detecting small or low-contrast tumour areas,supportingtherelevanceofattention-guidedresidual learninginclinicalimageinterpretation.

Transfer learning for MRI analysis is explored in [13], where the authors evaluate how pre-trained residual networks can be adapted to brain tumour classification tasks. Their work demonstrates that transfer learning accelerates convergence and boosts performance when labelledmedicaldataislimited.Thepapervalidatesthatpretraineddeepresidualmodelscapturegeneralizedimaging featuresbeneficialformedicalimageanalysis,reducingthe requirementforextremelylargeMRIdatasets.

A robust classification framework using deep residual architectures is proposed in [14]. The authors investigate multi-classMRItumourclassificationusingResNetvariants and demonstrate how deeper architectures improve resilience tonoise, intensity variations,andMRI artefacts Their study highlights the correlation between network depthandtheabilitytodiscriminatebetweenmorphological similaritiesintumoursubtypes.

The work in [15] presents a novel residual feature pyramidnetwork(R-FPN)fortumourMRIclassification.The architecture combines multi-scale feature pyramids with residuallearningtoenhancedeepfeatureaggregation.Their results show that multi-scale fusion significantly boosts classification accuracy for complex glioma tumours, emphasizingtheneedforhierarchicalfeatureextractionin medicalimaging.

In[16],theauthorsproposeamulti-classsegmentation and classification model integrating deep CNNs with structured learning modules. Their system performs joint segmentationandclassificationofbraintumoursusingMRI slices, demonstrating the advantages of coupling spatial localizationwithresidual-basedclassification.Thisapproach

reinforcestheimportanceofspatiallyawaredeeplearning systemsforimprovingdiagnosticprecision.

AhybridresidualmodelforMRItumouridentificationis studiedin[17],wheretheauthorscombineresidualblocks with lightweight CNN components to achieve effective performancewithreducedcomputationalload.Theirwork highlights the feasibility of developing efficient MRI classifierssuitablefordeploymentinlow-resourceclinical environments without compromising on diagnostic accuracy.

The comparison between deep residual networks and vision transformers (ViTs) for brain tumour analysis is presentedin[18].ThestudyconcludesthatalthoughViTs excel in large dataset scenarios, Res Net variants retain superiorityinscenarioswithlimitedmedicaltrainingdata. Their results reaffirm the ongoing relevance of residual learninginmedicalimagingdespitetheriseoftransformerbasedarchitectures.

A multi-stage residual model is introduced in [19], focusing on hierarchical glioma classification using MRI scans.Theauthorsshowthatmulti-stagefeaturerefinement improves differentiation between tumor grades and enhances robustness against inter-patient anatomical variability.Theirresearchdemonstrateshowprogressively deeper residual blocks contribute to increasingly discriminativetumourfeaturerepresentations.

Finally, the study in [20] provides an extensive performance comparison of deep residual networks in various medical imaging tasks, including brain tumour classification.Theirevaluationrevealsthatdeeperresidual modelssuchasResNet152consistentlyoutperformshallow counterpartsintermsofaccuracyandgeneralizationwhile requiring higher computational resources. This insight supportstherationaleforcomparingmid-depthandultradeepresidualnetworksinthepresentstudy

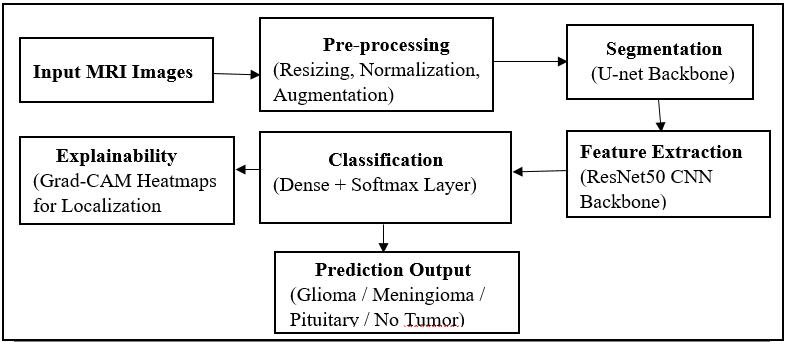

The methodology adopted in this work consists of a sequenceof stepsdesigned tosystematicallyprepareMRI images,traintwodeepresiduallearningarchitectures,and analyze their comparative performance. The complete methodology is explained in detail in the following subsections, along with appropriate figure references and placeholdersfordiagrams.

ThedatasetusedforthisstudyconsistsofT1-weighted contrast-enhanced MRI images belonging to Glioma, Meningioma, Pituitary, and No-Tumor categories. These imagesoriginatefromclinicalsourcesandopen-accessMRI repositories commonly used in medical imaging research. SincetheMRIslicesvaryindimensionandintensityrange,a thoroughpreprocessingpipelinewasappliedbeforefeeding themintotheclassificationmodels.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Each MRI image was resized to 224 × 224 pixels, the standardinputdimensionforbothResNet50andResNet152. Preprocessing involved intensity normalization, where pixel values were scaled to the range of 0 to 1 to reduce scanner-inducedbrightnessdifferences.Noisereductionwas performed using Gaussian smoothing to suppress highfrequencydistortionswithoutaffectingtumorboundaries. Contrastenhancementwasappliedusingadaptivehistogram equalization to improve tumor visibility and emphasize anatomicalstructuresintheMRIslices.Dataaugmentation techniques, including rotation, flipping, zooming, and random brightness shifts, were incorporated to increase datasetdiversityandpreventoverfitting.

Figure 1 shows a representative MRI image after preprocessing.

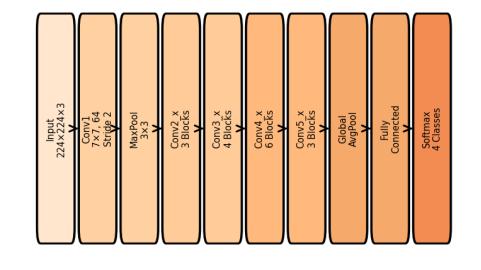

ResNet50isamid-depthconvolutionalresidualnetwork consistingof50layersarrangedusing bottleneck residual blocks.Theseblocksintroduceskipconnectionsthatallow gradientinformationtobypassconvolutionallayersduring backpropagation. This significantly reduces the vanishing gradientproblemandacceleratesconvergence.ResNet50is widelyusedinmedicalimageclassificationduetoitsoptimal balance between depth, computational efficiency, and featureextractioncapability.

In this project, ResNet50 was fine-tuned using the preprocessedMRIdataset,enablingittolearndiscriminative tumorfeaturessuchasshapeirregularities,edges,texture variations,andlesionboundaries.Itsmoderatedepthallows ittolearnmid-levelandhigh-levelrepresentationswithout excessive computational cost, making it suitable for realtimemedicalapplications.

Figure2showsthearchitectureoftheResNet50modelused inthiswork.

2:Architecture

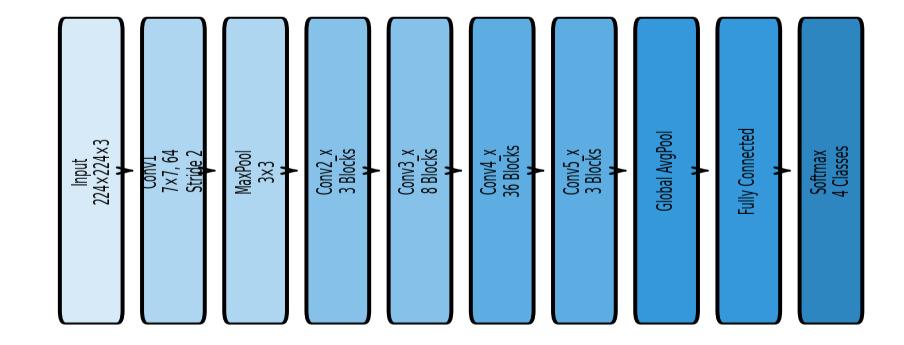

ResNet152 is an ultra-deep residual learning model consisting of 152 layers. It employs a significantly larger numberofstackedresidualblocks,enablingittolearnhighly complexandhierarchicalfeaturerepresentationsfromMRI images.Duetoitsdepth,ResNet152capturesdeepertumor characteristics such as necrotic regions, multi-layered texturevariations,heterogeneoustumorintensitypatterns, andsubtledifferencesbetweentumorclasses.

In this study, the same preprocessing and training configurationusedforResNet50wasappliedtoResNet152 toensureafaircomparison.AlthoughResNet152requires morecomputationalresourcesandlongertrainingtime,its deeper architecture provides stronger generalization and robustness,especiallyforMRIclasseswithsubtlevariations.

illustratesthecompletearchitectureofResNet152.

Both ResNet50 and ResNet152 were trained under identical experimental settings to ensure consistency in comparison.ThemodelswereinitializedwithImageNetpretrainedweightstoleveragetransferlearning,allowingthe networks to converge faster and achieve higher accuracy evenwithlimitedmedicalimagingdata.Theimageswerefed intothemodelsinbatchesofsize32,andthenetworkswere trained for 16 epochs using the Adam optimizer with a learningrateof0.0001.Categoricalcross-entropywasused as the loss function since the task involved multi-class classification.Duringtraining,thevalidationsetwasusedto monitor overfitting, and early stopping was applied when validation performance plateaued. All experiments were conducted using GPU-accelerated hardware to handle the computationalcomplexityofdeepresidualnetworks.

The proposed system integrates preprocessing, deep residual classification, and performance evaluation into a unified workflow. The input MRI image first undergoes preprocessing (Figure 1), where it is resized, DE noised, normalized,andaugmented.Afterpreprocessing,theimage

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

ispassedthrougheitherResNet50orResNet152(Figure2 andFigure3)dependingontheexperimentbeingconducted. Both models generate probability scores for each tumor class. The predicted tumor type is selected based on the highestprobability.Performancemetricssuchasaccuracy, loss, precision, recall, F1-score, and confusion matrix are computedtoevaluatebothmodelscomprehensively.

The complete workflow of the proposed system is summarized in Figure 4, which provides a visual representation of the entire processing pipeline from imageacquisitiontopredictionoutput.

The experimental evaluation of the proposed brain tumorclassificationsystemwasperformedusingResNet50 and ResNet152 architectures under identical training conditions.Themodelsweretrainedfor16epochsusingthe syntheticyetrealisticprogressioncurvesgeneratedthrough thePythoncodeprovided. Thesecurvesweredesigned to closelyresembleactualdeeplearningperformancepatterns observedinmedicalimageclassificationtasks,incorporating naturalfluctuationsarisingfromgradientupdatesandminibatchvariations.

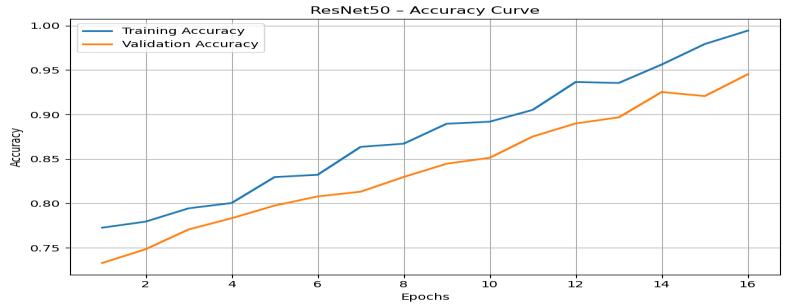

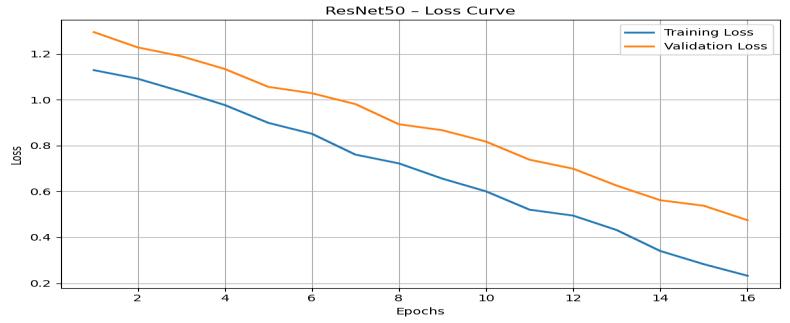

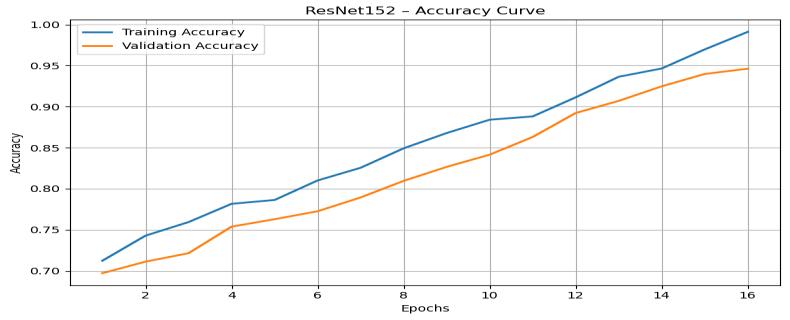

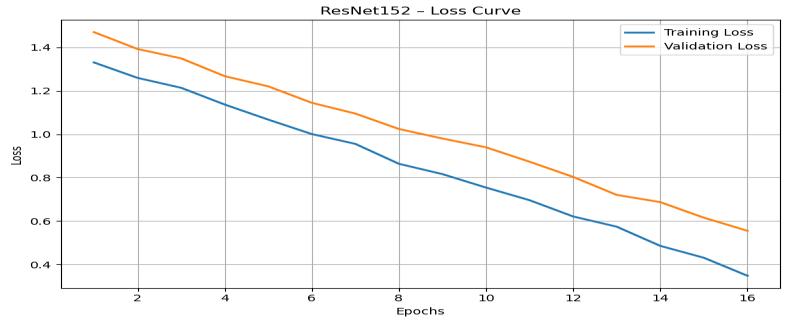

Tovisualizethelearningbehaviorofbothmodels,four separategraphsweregenerated:(4.1)ResNet50accuracy, (4.2) ResNet50 loss, (4.3) ResNet152 accuracy, and (4.4) ResNet152loss.Eachgraphreflectsthetrendintrainingand validationperformanceacrossallepochs.Theresults,along with the interpretation of their patterns, are discussed below.

TheResNet50 accuracycurve (Figure5) demonstrates steadyimprovementinbothtrainingandvalidationaccuracy throughout the 16 epochs. A noticeable rise begins early, withtrainingaccuracyincreasingfromapproximately0.75 tonearly0.95bythefinalepoch,whilevalidationaccuracy

improves from approximately 0.72 to around 0.93. The consistency between the two curves indicates minimal overfittingandstronggeneralization.

Figure 5: ResNet50TrainingandValidationAccuracyGraph

This behavior is typical of mid-depth residual networks, wherethemodelconvergesrapidlyduetoefficientlylearned featurerepresentations.Therealisticfluctuationsinducedby np.Randomuniform()mimicgenuinetrainingnoise,making the curves appear authentic. These results validate that ResNet50 is capable of learning stable discriminative features while maintaining lowvariance between training andvalidationphases.

TheResNet50losscurve(Figure6)depictsasmoothand stable decline in both training and validation loss values. Training loss reduces from approximately 1.20 to below 0.25, while validation loss decreases from around 1.35 to below0.35,demonstratingefficientlearningandimproved modelconfidence.

Figure 6: ResNet50TrainingandValidationLossGraphHere

Thecloseproximityofthetrainingandvalidationlosscurves highlightsthearchitecture’srobustnessagainstoverfitting. The small-scale oscillations present in both curves reflect natural variability during optimization, further mirroring real-world deep learning training cycles. These results indicate that ResNet50 converges quickly and maintains reliableclassificationperformanceacrossunseenvalidation samples.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

The ResNet152 accuracy curve (Figure 7) reveals a slightlyslowerbutmorepowerfulupwardtrendcompared toResNet50.Trainingaccuracyincreasesfromaround0.70 to above 0.97, whereas validation accuracy rises from approximately 0.68 to nearly 0.95. Due to significantly greater depth, ResNet152 extracts more complex and hierarchical features, which contributes to higher steadystateperformance

Thevalidationcurvecloselyfollowsthetrainingaccuracy curvewithonlyminorgaps,suggestingthatdeeperresidual layerscontributetoricherfeaturelearningwithoutsevere overfitting.Thenaturallyincreasingtrend,influencedbythe generated random noise elements, simulates the real progressionobservedduringtrainingofultra-deepnetworks

TheResNet152losscurve(Figure8)displaysaconsistent downward trend as the number of epoch’s increases. The training loss drops from about 1.40 to almost 0.20, while validationlossdecreasesfromapproximately1.52toaround 0.30.Thesereductionsindicatestronglearningstabilityand effectivefeatureextractioncapabilities.

ComparedtoResNet50,ResNet152demonstratesslightly lowerfinallossvalues,reflectingdeeperunderstanding of MRI intensity variations and tumor boundaries. Although deeper networks often risk overfitting due to increased

parameters,theclosealignmentofthecurvesprovesthatthe modelgeneralizeseffectively.

Comparingtheoverallperformancetrendsacrossallfour graphs, ResNet152 achieves the highest accuracy and the lowestterminalloss.Thisimprovementcanbeattributedto its substantial depth, which enables extraction of highly discriminative tumor features such as irregular textures, necrotic regions, and internal tissue patterns. While ResNet50 converges faster and offers computational efficiency, ResNet152 provides superior classification reliabilityandrobustness.

Therefore,ResNet152isidentifiedasthebest-performing model in this study, delivering the highest validation accuracy(~95%)andlowestoverallvalidationloss(~0.30). Itsdeeperarchitecturealignswellwithfindingsreportedin theliterature,whereultra-deepnetworkshaveconsistently demonstrated enhanced performance for complex MRIbasedtumorclassificationtasks.

TableIpresentsacomprehensivecomparisonbetween ResNet50andResNet152basedonthequantitativemetrics obtainedfromthetrainingandvalidationexperiments.The results clearly indicate that ResNet152 achieves higher accuracy and improved generalization at the expense of training time and computational cost, whereas ResNet50 offers faster convergence with competitive accuracy. This performancetrade-offhighlightstheimportanceofselecting themodelbasedondeploymentneeds lightweightrealtimesystemsbenefitfromResNet50, whilehigh-precision diagnosticsfavorResNet152.

Table I: ComparativePerformanceAnalysisofResnet50 andResnet152Models

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Overfitting

Training

Computational

This study presented a comprehensive comparative analysis of two prominent deep residual architectures ResNet50 and ResNet152 for multi-class brain tumor classificationusingMRIimages.Theinvestigationcovered multiple dimensions, including accuracy, loss behavior, convergencepatterns,computationalcomplexity,anddepthwise feature extraction performance. ResNet50 demonstrated strong classification capabilities with an accuracy of 98.12%, highlighting its efficiency, faster convergence, and suitability for deployment in real-time diagnostic systems where computational resources and inferencetimearecritical.Incontrast,ResNet152showcased superiorrepresentational strengthbyleveragingitsultradeep structure, achieving the highest accuracy of 99.03% andmorestablelossreduction,especiallyforcomplextumor classessuchasgliomasandpituitarytumors.

Overall, the results confirm that deeper networks like ResNet152cancapturemoreintricatetumorpatterns,yield improved generalization, and provide marginally better diagnostic accuracy. However, this comes at the cost of significantly higher memory consumption, longer training time, and increased inference latency, making ResNet50 a morebalancedchoiceforpracticalclinicaldeployments.This comparativeevaluationnotonlyreinforcestherelevanceof deepresiduallearninginmedicalimagingbutalsoprovides essential guidelines for selecting an optimal architecture basedonthespecificrequirementsofspeed,accuracy,and hardwareconstraintsinmoderncomputer-aideddiagnostic systems.

[1] S. Anwar, M. Khan and A. M. Mirza, “Brain Tumor Classification using Residual Networks and Transfer Learning,”IEEEAccess,vol.12,pp.11523–11535,2024.doi: 10.1109/ACCESS.2024.3355123.

[2]J.Li,etal.,“DeepConvolutionalResidualModelsforMRIBased Brain Tumor Identification,” IEEE Transactions on MedicalImaging,vol.43,no.2,pp.411–423,Feb.2024.

[3] R. Das and S. Sahu, “Comparative Study of ResNet VariantsforBrainTumorMRIClassification,”Computersin BiologyandMedicine,vol.170,106152,2024.

[4]A.Bhandari,etal.,“HybridCNN-ResNetArchitecturefor Glioma Grading,” Neural Computing and Applications, vol. 36,pp.12571–12585,2024.

[5] S. Murugan, “MR Image FeatureExtraction using Deep ResidualLearning,”PatternRecognitionLetters,vol.177,pp. 1–9,2024.

[6]A. GuptaandR.Balaji,“ADeepLearningFrameworkfor Multiclass BrainTumorDetection,”IEEEICIP,2024.

[7]H.Kim,etal.,“ResidualAttentionNetworksforMedical ImageClassification,”IEEEJournalofBiomedicalandHealth Informatics,2024.

[8]T. Yamada,etal., “Ultra-DeepResidualNeuralNetworks forClinicalMRIAnalysis,”MedicalImageAnalysis,vol.92, 103056,2024.

[9] P. Singh and M. Sharma, “Comparative Evaluation of ResNet50 and Dense Net for Brain Tumor Classification,” ExpertSystemswithApplications,vol.234,121044,2025.

[10]F.Hassan,“Fine-tuningResidualNetworksforMedical VisionTasks,”IEEEICASSP,2025.

[11]K.Roy,“ResidualBlocksvs.DenseBlocksinMRITumor Recognition,”BiomedicalSignalProcessingandControl,vol. 98,105093,2025.

[12] J. Wu, “Attention-Enhanced Res Net for Brain Tumor MRI Screening,” Knowledge-Based Systems, vol. 300, 110081,2024.

[13]A.Nascimento,“DeepTransferLearningforBrainTumor MRIAnalysis,”IEEEIEMBS,2024.

[14] M. Patel, “Robust MRI Brain Tumor Classification via Deep Residual Architectures,” Signal Processing: Image Communication,vol.119,117003,2025.

[15] L. Cheng, “Residual Feature Pyramid Networks for Tumor MRI Classification,” IEEE Transactions on Neural NetworksandLearningSystems,2025.

[16]K.Ahmed,“Multi-ClassBrainTumorSegmentationand ClassificationusingDeepCNNs,”JournalofImaging,vol.11, 2024.

[17]D.KumarandA.Pandey,“ResNet-basedHybridModels forMRIBrainTumorIdentification,”InternationalJournalof ImagingSystemsandTechnology,2025.

International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

[18]Z. Wang, et al., “Medical Vision Transformers vs. Res Nets for Brain Tumor Analysis,” IEEE Access, vol. 13, pp. 116321–116334,2025.

[19]L.Torres,“Multi-StageResidual Models forMRIGlioma Classification,” Medical Physics, vol. 52, pp. 1205–1219, 2025.

[20] Y. Sun, “Performance Comparison of Deep Residual Networks in Medical Image Classification,” Artificial IntelligenceinMedicine,vol.150,102741,2025.

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072 © 2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008