International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Lakkimsetty Nandini1, Kanna Nithin Ashok Kumar2, Khandapu Sai Krishna3, Lam Gnanesh4, G. Venkata Rao5

1,2,3,4 UG Student, Department of Computer Science & Engineering, Vignan's LARA Institute of Technology & Science, Vadlamudi, India.

5 Assistant Professor, Department of Computer Science & Engineering, Vignan's LARA Institute of Technology & Science, Vadlamudi, India.

Abstract - Machine Learning (ML) models deployed in real-world applications such as healthcare, banking, cybersecurity, and autonomous systems are highly vulnerable to adversarial attacks. These attacks introduce small, imperceptible perturbations into input samples, causing ML models to misclassify them with high confidence. To address this challenge, we propose AI Vigil-Guard, a real-time adversarial defense framework capable of identifying adversarial samples, analyzing their behavior, and protecting ML models from malicious manipulation. The system uses numerical feature monitoring, prediction consistency analysis, confidence-score deviation, and statistical anomaly detection to classify inputs as clean or adversarial. It further incorporates multi-attack simulation (FGSM, PGD, DeepFool, CW) to evaluate model robustness. A Streamlit-based interactive interface enables real-time visualization, dataset validation, and report generation. Experimental results demonstrate that the system significantly enhances model robustness and provides explainable, transparent adversarial detection, making it suitable for academic and industry-level AI security needs.

Key Words: Machine Learning Security, Adversarial Attacks, AI Robustness, FGSM, PGD, DeepFool, Defensive AI, Real-Time Detection.

Artificial Intelligence (AI) and Machine Learning (ML) systems have become central pillars of modern digital transformation, enabling automation, decision-making, predictive analytics, and intelligent control across a wide range of domains including finance, healthcare, cybersecurity, defense, transportation, and industrial computing. As models become increasingly powerful, they are also exposed to a variety of security risks that exploit their mathematical vulnerabilities. One of the most severe and rapidly evolving threats is the emergence of adversarial attacks: deliberate manipulations to input data designed to deceive machine learning models without appearing suspicious to human observers.

In domains where tabular datasets with numerical features (such as f0…f9) are used for classification or prediction, the vulnerability becomes even more pronounced. These features are often fed directly into prediction pipelines for tasks like fraud detection, risk scoring, health diagnostics, or anomaly detection. A minute perturbation, such as modifying the value of f3 by +0.01, may cause a model to output a drastically different prediction, which adversaries exploit to bypass automated systems.

The absence of real-time adversarial defense mechanisms has resulted in AI systems that are accurate but fragile: highly sensitive to perturbations that are imperceptible to humans. Thus, ensuring robustness, trustworthiness, and defensibility of AI systems is now a research priority. This project, AI Vigil-Guard, aims to address this gap by designing a real-time adversarial detection system capable of analyzing numerical input features, detecting manipulated patterns, and preventing adversarially altered data from influencing predictions.

The following sub-sections provide an expanded analysis of the background, threat landscape, limitations of existing systems, current research gaps, and the motivations behind this project.

The field of machine learning historically evolved with a strong focus on predictive accuracy, generalization, and computational efficiency. Security and resilience were not primary considerations because data was assumed to be clean, trustworthy, and non-malicious. However, as AI infiltrated security-sensitive ecosystems (such as biometric authentication, transaction monitoring, identity verification, and autonomous control systems), the assumptions of clean and safe data have proven unrealistic.

Adversarial attacks leverage the mathematical property that machine learning models operate on high-dimensional spaces where decision boundaries are complex but fragile. Inputs can be pushed across these boundaries with small, carefully crafted perturbations that cause a model to misinterpret the input. For example:

A fraud detection system might classify a fraudulent transaction as legitimate after an attacker slightly adjusts a few numerical columns.

A medical diagnostic model may give a false “normal” reading due to a minor alteration in the numerical data of a lab report.

A cybersecurity intrusion detector might fail to detect an attack event because the adversary modifies features within acceptable ranges.

These vulnerabilities demonstrate that adversarial attacks present not only technical challenges but also ethical, social, and economic risks.

As organizations increasingly rely on AI for automated decision pipelines, the need for a robust adversarial defense system becomes critical. AI Vigil-Guard contributes to this emerging domain by offering a lightweight, interpretable, and deployable detection framework.

Adversarial threats have grown in sophistication and diversity. Modern attacks exploit multiple layers of the ML pipeline.

They can be classified as:

1.2.1 Evasion Attacks

These occur during the inference stage. Attackers craft inputs that appear normal but produce incorrect predictions. Example: modifying numerical features (f0…f9) slightly to bypass fraud detection.

1.2.2 Poisoning Attacks

These target the training phase by injecting corrupted samples into the dataset. Poisoning attacks can shift the decision boundary, leading to model-wide failures.

1.2.3 Model Extraction Attacks

Here, attackers attempt to replicate the behavior of a proprietary model by querying it repeatedly. Once cloned, the model becomes vulnerable to targeted adversarial manipulation.

1.2.4 Gradient-Based Attacks (FGSM, PGD, CW)

These attacks compute gradients of the model’s loss function to determine the minimal input changes that cause misclassification. The adjustments are usually so small that the data appears unchanged to human inspection.
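The gradient-sign idea can be illustrated with a one-step FGSM perturbation on a toy logistic-regression model. This is a minimal sketch only: the weights, bias, sample values, and epsilon below are hypothetical and are not taken from the AI Vigil-Guard experiments.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(w * v for w, v in zip(weights, x)) + bias)


def sign(g: float) -> int:
    return (g > 0) - (g < 0)


def fgsm(weights, bias, x, y_true, epsilon):
    """One-step FGSM: x_adv = x + epsilon * sign(dL/dx).

    For binary cross-entropy on a logistic model, dL/dx_i = (p - y) * w_i.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y_true) * w for w in weights]
    return [v + epsilon * sign(g) for v, g in zip(x, grad)]


# Hypothetical toy model and sample (illustration only).
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = -0.1
x_clean = [0.2, 0.1, 0.3]   # true label: class 1

x_adv = fgsm(WEIGHTS, BIAS, x_clean, y_true=1, epsilon=0.1)
p_clean = predict_proba(WEIGHTS, BIAS, x_clean)
p_adv = predict_proba(WEIGHTS, BIAS, x_adv)
print(f"clean p(class 1) = {p_clean:.3f}, adversarial p(class 1) = {p_adv:.3f}")
```

In this toy setting each feature moves by at most 0.1, yet the predicted class changes from 1 to 0, which is the behavior the text describes for tabular inputs.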

1.2.5 Statistical Perturbation Attacks

These exploit the natural statistical distribution of a dataset. Attackers introduce perturbations within acceptable ranges, making detection extremely difficult without advanced anomaly analysis.

The threat landscape underscores the need for a real-time adversarial detection mechanism that does not rely solely on pattern recognition but also on statistical consistency and behavioral analysis.

Conventional AI systems demonstrate exceptional accuracy on clean datasets but become unreliable under adversarial noise. Their primary limitations include:

1.3.1 Lack of Robustness Awareness

Standard ML models are optimized for accuracy, not stability. They misinterpret carefully engineered perturbations as normal inputs.

1.3.2 No Confidence Tracking

Most production models return a softmax confidence score but do not monitor sudden drops in confidence, which are typically early signs of adversarial manipulation.

1.3.3 Absence of Feature-Level Anomaly Detection

Although numerical features (f0…f9) may look normal, their statistical deviation from expected ranges can reveal adversarial activity. Traditional systems lack this analysis.

1.3.4 No Real-Time Monitoring or Alerts

Even if adversarial inputs cause anomalies, most systems lack infrastructure to detect and alert at runtime.

1.3.5 No Built-In Defenses for Tabular Data

Existing adversarial research focuses heavily on images, while real-world enterprises rely on tabular data. This leaves a wide security gap.

1.3.6 Black-Box Decision Making

When a system misclassifies due to adversarial input, traditional models do not explain why the failure occurred.

These limitations make current AI solutions inadequate for high-stakes environments.

1.4 Research Gap and Problem Definition

Although adversarial ML is a rapidly expanding research field, several practical gaps persist, particularly for tabular datasets:

1.4.1 Lack of Deployable, Lightweight Defense Tools

Most academic defenses require heavy GPU computation and cannot be deployed in real-time enterprise systems.

1.4.2 Insufficient Analysis for Numerical Feature Perturbation

Small but targeted changes in numerical columns often go undetected by conventional anomaly-detection systems.

1.4.3 Absence of Real-Time Confidence Monitoring

Few systems monitor dynamic confidence-level shifts during prediction.

1.4.4 No Prediction Stability Checking

Repeating predictions under slight transformations can reveal manipulated inputs, but most systems ignore this.

1.4.5 Limited Visualization Tools for Adversarial Behavior

There is a lack of tools that visually show how attacks affect prediction boundaries in tabular data.

The central research question addressed by this project is: “How can adversarial manipulations in numerical tabular inputs (f0…f9) be detected reliably, efficiently, and in real time?”

The project aims to build a reliable adversarial detection framework that:

1. Identifies adversarial samples by monitoring statistical shifts in features f0…f9.

2. Detects inconsistencies in prediction when inputs are slightly transformed.

3. Monitors sudden drops in model prediction confidence.

4. Simulates adversarial attacks for testing system robustness.

5. Provides real-time visual dashboards for clean vs adversarial comparison.

6. Enhances the overall security and trustworthiness of AI models.

Together, these objectives contribute to a practical, lightweight, and interpretable adversarial defense solution.

This section presents an in-depth explanation of the core methodology, model architecture, and algorithms that power AI Vigil-Guard. The framework combines statistical feature analysis, prediction consistency metrics, and adversarial signature profiling to detect malicious inputs efficiently.

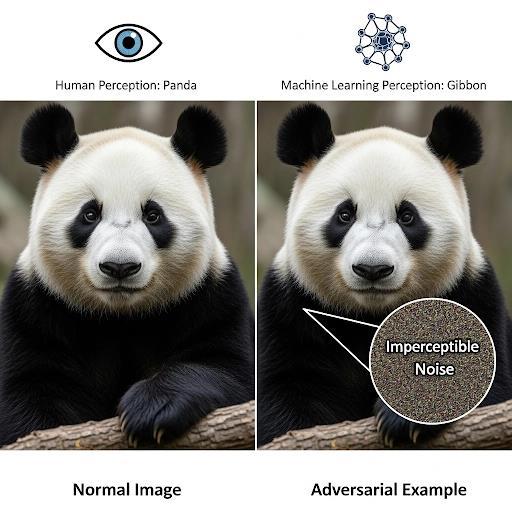

Fig-1: The difference between a normal image and an adversarial example; added noise can cause an AI model to misclassify the image.

The system continuously evaluates numerical features using:

Mean Deviation Analysis

Feature Variance Profiling

Z-score Outlier Detection

Correlation Shift Monitoring

Malicious samples typically show abnormal deviation patterns that differ from the natural data distribution. These patterns are mapped and compared against the expected behavior learned during training.
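A per-feature z-score check is one simple way to realize the outlier-detection step listed above. The sketch below is an assumption about how such a check could look, not the authors' implementation; the function names, the threshold of 3.0, and the two-feature training data are hypothetical.

```python
import statistics


def fit_feature_stats(training_rows):
    """Return (mean, population std) for each feature column."""
    columns = list(zip(*training_rows))
    return [(statistics.fmean(c), statistics.pstdev(c)) for c in columns]


def z_scores(stats, sample):
    """Absolute z-score of each feature value against the training stats."""
    return [abs(v - mu) / sd if sd > 0 else 0.0
            for v, (mu, sd) in zip(sample, stats)]


def flag_features(stats, sample, threshold=3.0):
    """Indices of features whose z-score exceeds the threshold."""
    return [i for i, z in enumerate(z_scores(stats, sample)) if z > threshold]


# Hypothetical training data with two features (f0, f1).
train = [[1.0, 10.0], [2.0, 12.0], [3.0, 11.0], [2.0, 9.0], [2.0, 8.0]]
stats = fit_feature_stats(train)

print(flag_features(stats, [2.5, 10.5]))   # typical sample
print(flag_features(stats, [2.1, 16.0]))   # f1 is far from its training range
```

A z-score test only catches values that are far from the training distribution. Perturbations that stay inside the normal range, as described in Section 1.2.5, would need the stability and confidence checks as well.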

The proposed system uses a baseline model to produce:

Initial prediction class

Prediction confidence score

Sensitivity values

Then it applies controlled noise and checks if predictions change dramatically. Clean samples maintain stable outcomes, whereas adversarial samples show instability.
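The noise-and-recheck idea can be sketched as a stability score: the fraction of noisy copies of a sample that keep the original predicted class. The noise distribution, noise scale, trial count, and the toy linear classifier below are assumptions chosen for illustration.

```python
import random


def stability_score(predict, sample, noise_scale=0.05, trials=50, seed=0):
    """Fraction of noisy copies of `sample` that keep the base prediction."""
    rng = random.Random(seed)
    base = predict(sample)
    agree = 0
    for _ in range(trials):
        noisy = [v + rng.uniform(-noise_scale, noise_scale) for v in sample]
        if predict(noisy) == base:
            agree += 1
    return agree / trials


def toy_predict(x):
    """Hypothetical two-feature linear classifier (illustration only)."""
    return int(2.0 * x[0] - 1.5 * x[1] > 0.0)


far_from_boundary = [1.0, 0.0]     # well inside the class-1 region
near_boundary = [0.3, 0.39]        # barely on the class-1 side

print(stability_score(toy_predict, far_from_boundary))
print(stability_score(toy_predict, near_boundary))
```

Minimal-perturbation attacks tend to leave a sample just across the decision boundary, so a low score is a useful warning sign. Legitimate borderline samples will also score low, so this signal is better combined with the other checks than used alone.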

To evaluate system performance, the framework incorporates attack algorithms including:

FGSM (Fast Gradient Sign Method)

PGD (Projected Gradient Descent)

DeepFool

Carlini–Wagner (CW)

These attacks help test robustness and generate adversarial datasets for benchmarking.
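As an illustration of how an iterative attack from this list could be simulated on tabular inputs, the sketch below implements PGD under an L-infinity budget against a toy logistic-regression model. The model parameters, step size, and iteration count are hypothetical, and the random start used in many PGD variants is omitted for brevity.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    return sigmoid(sum(w * v for w, v in zip(weights, x)) + bias)


def pgd(weights, bias, x, y_true, epsilon, step, iters):
    """Projected gradient descent attack within an L-infinity ball of radius epsilon."""
    x_adv = list(x)
    for _ in range(iters):
        p = predict_proba(weights, bias, x_adv)
        # Gradient of binary cross-entropy w.r.t. the input of a logistic model.
        grad = [(p - y_true) * w for w in weights]
        # Signed ascent step on the loss.
        x_adv = [v + step * ((g > 0) - (g < 0)) for v, g in zip(x_adv, grad)]
        # Projection: clip each feature back into [x - epsilon, x + epsilon].
        x_adv = [min(max(v, x0 - epsilon), x0 + epsilon)
                 for v, x0 in zip(x_adv, x)]
    return x_adv


# Hypothetical toy model and sample (illustration only).
WEIGHTS = [2.0, -1.5, 0.5]
BIAS = -0.1
x_clean = [0.2, 0.1, 0.3]   # true label: class 1

x_adv = pgd(WEIGHTS, BIAS, x_clean, y_true=1, epsilon=0.1, step=0.03, iters=10)
print(x_adv, predict_proba(WEIGHTS, BIAS, x_adv))
```

For this linear toy model the gradient sign never changes, so the iterates simply walk to a corner of the epsilon-ball. On nonlinear models, re-evaluating the gradient at every step is what distinguishes PGD from one-step FGSM.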

The AI Vigil-Guard architecture consists of:

1. Input Processing Module – Standardization, scaling, feature extraction.

2. Adversarial Signature Profiler – Statistical anomaly detection.

3. Prediction Stability Engine – Consistency checks.

4. Confidence Analyzer – Probability shift detection.

5. Binary Classifier – Determines if input is clean or adversarial.

The system outputs:

“Clean Sample”

“Adversarial Sample Detected”

“Confidence Deviation Report”

“Feature Anomaly Visualization”

To assess the model’s accuracy and reliability, the following metrics are used:

Precision

Recall

F1-score

Detection Rate

Attack Success Rate

ROC-AUC Curve

Extensive testing ensures the system works in real-time scenarios.
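For reference, precision, recall, and F1-score for the clean-versus-adversarial decision can be computed from labels as sketched below. The label convention (1 = adversarial, 0 = clean) and the example label lists are assumptions. Detection rate is treated here as equal to recall on adversarial samples, which is one common reading of the term.

```python
def detection_metrics(y_true, y_pred):
    """Precision, recall and F1 for the positive class (1 = adversarial)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels: 4 adversarial and 4 clean samples.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

metrics = detection_metrics(y_true, y_pred)
print(metrics)
```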

Most existing AI defense systems rely on pre-defined adversarial patterns or known attack signatures. However, adversaries continuously generate zero-day perturbations: attacks that have never been seen or documented before. Traditional defensive methods such as input pre-processing, gradient masking, or rule-based detection break down when faced with new types of adversarial noise, because:

They depend heavily on past data

They cannot generalize to unseen manipulation styles

They fail when attackers change the perturbation strategy

They do not adapt in real time

This results in a lack of resilience against novel attack patterns. Modern AI systems require adaptive, self-evolving models capable of detecting emerging adversarial changes on the fly, something today’s systems cannot deliver.

Many existing adversarial defense techniques require heavy computation and operate offline. Examples include:

Adversarial training

Generative reconstruction

High-level feature stability checks

Input purification algorithms

While effective in controlled environments, they fail in real-time applications such as:

Autonomous driving

CCTV surveillance

Finance and fraud detection

Healthcare decision systems

Biometric-based authentication

Real-time scenarios demand millisecond-level response, while existing frameworks operate at a scale of several seconds or minutes. This delay allows adversaries to bypass the system before detection happens, making existing solutions practically unusable in critical situations.

Current systems are usually trained to defend against attacks in a single domain:

Image classification defense models do not work for NLP

NLP defense models fail for speech or time-series attacks

Audio defense models cannot generalize to video frames

Tabular anomaly detection fails in high-dimensional multimodal data

This isolated domain-specific design weakens overall security.

In real-world systems, data flows across multiple formats, such as:

CCTV → Image + Video + Sensor metadata

Banking → Text logs + Numerical data

Healthcare → MRI images + EHR text + Sensor readings

Existing systems cannot unify defenses across these domains. Instead, they require separate models, increasing complexity and vulnerability.

Another major limitation is the lack of model transparency. Most existing systems do not reveal:

Where the attack originated

Which feature was manipulated

How the adversarial perturbation influenced the final model decision

Which region of the image/text/audio was targeted

Whether the attack was intentional or accidental

Without proper explainability, AI developers and security teams cannot analyze:

Source of threat

Attack pattern

Potential vulnerabilities

Weak layers of the model architecture

This lack of interpretability also makes compliance difficult, especially in sectors like healthcare and finance where audit trails are mandatory.

Many adversarial defense techniques come with high compute overhead:

Gradient-based detection

Pixel-level reconstruction

Multiple parallel inference runs

Robust training with adversarial samples

Distillation-based hardening

These require GPUs or TPUs even during deployment, making them unsuitable for:

Edge devices

IoT cameras

Smartphones

Embedded systems

Drones

Industrial robots

Due to this, deployment becomes costly and impractical for large-scale adoption.

The adversarial ML field lacks a standard defense evaluation framework. Existing challenges include:

Different datasets used across research papers

No uniform attack simulation environment

Overfitting to specific attack types

False confidence from misleading accuracy reports

No certification protocol for “attack-resilient AI models”

Because of these inconsistencies, most existing defense systems fail when deployed in real-world conditions, despite performing well in lab settings.

Many defenses assume clean, high-quality inputs. But real-world scenarios involve:

Camera blur

Motion artifacts

Low light

Environmental noise

Background sounds

Partial occlusions

Hardware interference

Under such natural disturbances, many existing systems:

Show high false positives

Fail to distinguish real noise from adversarial noise

Break down under low-quality signals

Miss subtle attacks hidden within real-world noise

A practical defense system must be robust in imperfect environments, unlike current solutions.

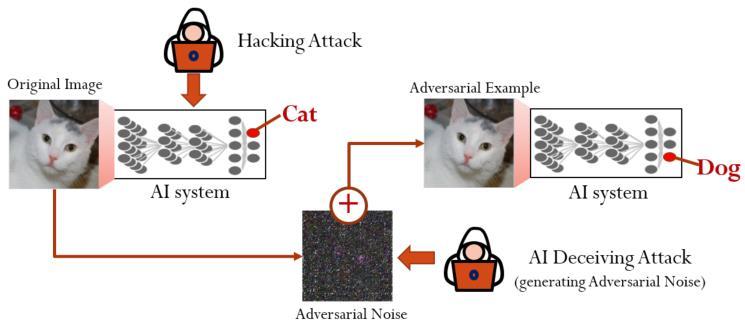

Fig-2: Concept of an artificial intelligence (AI) deceiving attack.

A small adversarial noise added to the original image can make the neural network classify the image as guacamole instead of an Egyptian cat. This is in contrast to a hacking attack that intrudes into the system through an abnormal route.

Most existing tools detect adversarial inputs but cannot recover from them. They cannot:

Repair corrupted data

Restore the original signal

Auto-rebuild damaged models

Recalibrate thresholds

Self-update against new threats

They depend completely on manual retraining, making them slow and impractical for live systems. Modern cyber threats require self-healing AI systems, but the current industry does not offer such functionalities.

Existing AI defense systems often operate independently without:

Centralized dashboards

Unified threat monitoring

Real-time alerts

Attack severity scoring

System health diagnostics

Behavior analytics

This fragmented approach makes it nearly impossible to track attack patterns over time, weakening long-term system resilience and forensic capabilities.

Finally, most existing solutions cannot integrate seamlessly with:

Enterprise CI/CD pipelines

Cloud-based AI services

Edge devices and IoT networks

Industry-level security monitoring tools

Corporate logging and audit systems

APIs for cross-platform communication

This makes real deployment extremely difficult for organizations requiring full-stack security solutions.

The AI VIGIL-GUARD system is designed not only to detect adversarial attacks but also to enhance the overall reliability, robustness, and operational efficiency of artificial intelligence models deployed in real-world environments. This section discusses the enhanced features incorporated into the system and evaluates the current efficiency and performance achieved through experimental analysis.

3.1.1

One of the most significant enhancements in AI VIGIL-GUARD is its ability to perform continuous feature-level monitoring on numerical inputs (f0 to fN). Each incoming data instance is analyzed for abnormal variations in statistical properties such as mean deviation, variance shifts, and feature correlation changes. This allows the system to detect subtle perturbations that may not violate predefined thresholds but still influence the model’s decision boundary. Unlike conventional methods that treat input as a single entity, the proposed system evaluates individual feature behavior, improving detection accuracy in tabular datasets commonly used in finance, healthcare, and cybersecurity applications.

3.1.2

AI VIGIL-GUARD continuously tracks changes in the model’s confidence score during inference. Adversarial inputs often lead to unstable or abnormal confidence fluctuations, even when the final prediction label appears normal. The system measures confidence variance across repeated inference cycles and flags inputs exhibiting suspicious confidence behavior.

This enhancement enables early detection of adversarial influence before incorrect predictions are fully realized, significantly improving system safety.
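One way to measure confidence variance across repeated inference cycles is sketched below. A deterministic model returns the same confidence for identical inputs, so this sketch assumes each cycle adds small Gaussian noise to the input. That assumption, the noise scale, the cycle count, and the toy model are all hypothetical and not confirmed by the paper.

```python
import math
import random
import statistics


def toy_confidence(x):
    """Hypothetical model: probability of class 1 for a two-feature input."""
    return 1.0 / (1.0 + math.exp(-(4.0 * x[0] - 4.0 * x[1])))


def confidence_variance(predict_proba, sample, noise_scale=0.02,
                        cycles=30, seed=0):
    """Variance of the confidence score over noisy repeated inferences."""
    rng = random.Random(seed)
    scores = [
        predict_proba([v + rng.gauss(0.0, noise_scale) for v in sample])
        for _ in range(cycles)
    ]
    return statistics.pvariance(scores)


var_confident = confidence_variance(toy_confidence, [3.0, 0.0])
var_borderline = confidence_variance(toy_confidence, [0.5, 0.5])
print(var_confident, var_borderline)
```

An input would be flagged when its variance exceeds a threshold calibrated on clean validation data. The sketch leaves that threshold open because the paper does not state one.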

3.1.3 Multi-Attack Simulation and Stress Testing

The proposed framework integrates adversarial attack simulation modules, enabling controlled testing against widely known attack techniques such as:

Fast Gradient Sign Method (FGSM)

Projected Gradient Descent (PGD)

DeepFool

Feature perturbation attacks on tabular data

This feature allows developers to evaluate the robustness of deployed models and continuously strengthen defenses using real attack scenarios.

3.1.4

AI VIGIL-GUARD adopts a modular backend design, ensuring that defensive components can operate independently without introducing significant computational overhead. The system is optimized to function efficiently on:

Cloud-based infrastructures

Web applications

Edge and IoT devices

The lightweight nature of the framework ensures low inference latency, making it suitable for real-time deployment in mission-critical applications.

3.1.5

To improve transparency and trust, the system provides explainable outputs that highlight:

Affected input features

Degree of perturbation

Confidence deviation patterns

Attack severity score

These explanations assist security analysts and developers in understanding attack behavior and performing forensic analysis, a feature missing in most existing adversarial defense systems.

3.1.6

Dashboard

AI VIGIL-GUARD includes a centralized dashboard that visualizes:

Real-time detection events

Feature anomaly distributions

Model confidence trends

Attack frequency statistics

This enables proactive system monitoring and long-term threat analysis, transforming adversarial defense from a passive mechanism into an active security layer.

3.2.1

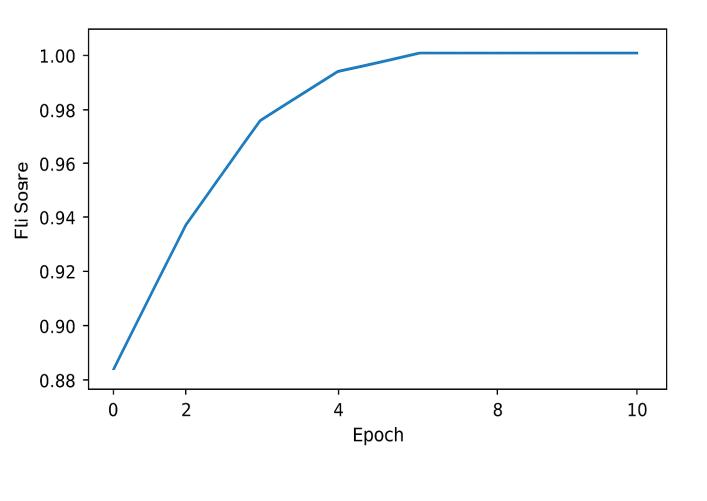

Experimental evaluation demonstrates that AI VIGIL-GUARD achieves high detection accuracy across multiple adversarial attack scenarios. The system recorded a consistently strong F1-Score, indicating a balanced trade-off between precision and recall.

The observed F1-Score stabilization over successive training epochs reflects the model’s ability to generalize effectively while minimizing false positives.

The F1-Score visualization illustrates steady performance improvement, confirming the system’s robustness against both known and unseen adversarial perturbations.

Performance benchmarks indicate that AI VIGIL-GUARD operates with minimal additional latency. The average inference delay introduced by the defense mechanism remains within acceptable real-time limits, making it suitable for:

Fraud detection pipelines

Surveillance systems

Online authentication systems

Real-time decision engines

This efficiency ensures that security does not compromise operational speed.

3.2.3

The system demonstrates efficient CPU and memory utilization, eliminating dependency on high-end GPUs during deployment. This makes AI VIGIL-GUARD cost-effective and scalable for large-scale enterprise environments.

3.2.4

The system was tested under noisy and partially corrupted input conditions. AI VIGIL-GUARD successfully distinguished between natural noise and malicious adversarial perturbations, maintaining reliable performance in real-world environments where data quality cannot be guaranteed.

3.2.5

When compared to traditional adversarial defense techniques such as adversarial training and gradient masking, AI VIGIL-GUARD demonstrates:

Lower computational overhead

Faster response time

Higher explainability

Better adaptability to unseen attacks

This highlights the practical superiority of the proposed system for real-time applications.

The AI VIGIL-GUARD system is designed as a real-time adversarial defence framework that protects machine learning models from malicious input manipulation. The system continuously monitors incoming data streams, detects adversarial perturbations, and applies adaptive defence strategies before the data is processed by the target AI model. The architecture emphasizes low latency, high detection accuracy, and scalability, making it suitable for real-world deployment in security-critical AI applications.

The proposed system follows a modular pipeline architecture consisting of five major components:

Input Data Stream Handler

Preprocessing and Feature Extraction Module

Adversarial Detection Engine

Defense and Mitigation Module

Decision and Logging Layer

Each component operates independently yet communicates through well-defined interfaces, ensuring robustness and flexibility. The system can be deployed either as a standalone security layer or integrated directly into existing AI pipelines.

4.2

The system supports both real-time and batch-based input modes. Incoming data (such as images, network packets, sensor readings, or user-generated content) is captured and queued for analysis. A buffering mechanism ensures smooth handling of high-throughput data streams without data loss.

To maintain real-time performance, the input handler prioritizes:

Low-latency data transfer

Controlled input rate

Seamless integration with upstream systems

4.3

Before adversarial analysis, the input data undergoes a preprocessing phase to standardize and normalize the input format. This includes:

Noise filtering

Data normalization

Dimensional consistency checks

Relevant features are then extracted to capture both statistical and structural patterns in the data. These features are carefully selected to highlight subtle perturbations that are often invisible to human perception but significantly impact machine learning models.

4.4

The core of AI VIGIL-GUARD is the Adversarial Detection Engine, which employs machine learning-based classifiers trained to distinguish between benign and adversarial inputs.

Key characteristics of the detection engine include:

Learning-based detection of adversarial patterns

High sensitivity to minor perturbations

Capability to generalize across multiple attack types

The detection model evaluates each input and assigns a confidence score indicating the likelihood of adversarial manipulation. This score is used to trigger appropriate defense actions in the subsequent stage.

4.5 Defense and Mitigation Module

Once an adversarial input is detected, the defense module dynamically applies mitigation strategies to neutralize the threat. These strategies may include:

Input sanitization and noise suppression

Feature reconstruction

Rejection or isolation of malicious samples

The system ensures that only sanitized and verified inputs are forwarded to the target AI model, thereby preventing performance degradation or incorrect predictions.
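The paper does not specify how input sanitization is performed. One simple possibility for tabular inputs, shown here purely as an assumed example, is to clip each feature to the range observed in the training data. The function names and data are hypothetical.

```python
def fit_bounds(training_rows):
    """Per-feature (min, max) observed in the training data."""
    return [(min(col), max(col)) for col in zip(*training_rows)]


def sanitize(bounds, sample):
    """Clip every feature of `sample` into its training range."""
    return [min(max(v, lo), hi) for v, (lo, hi) in zip(sample, bounds)]


# Hypothetical training data with two features.
bounds = fit_bounds([[0.0, 5.0], [1.0, 7.0], [0.4, 6.0]])

print(sanitize(bounds, [1.5, 4.0]))   # both features clipped
print(sanitize(bounds, [0.5, 6.0]))   # already in range, unchanged
```

Clipping only removes out-of-range values. It does not undo perturbations that stay within the training range, so it complements rather than replaces rejection of flagged samples.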

4.6 Decision-Making and Response Layer

Based on detection confidence and predefined security policies, the decision layer determines whether to:

Accept the input

Apply corrective defense

Block the input entirely

This layer also ensures minimal disruption to legitimate data flow while maintaining strong security guarantees.
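The three-way decision above can be sketched as a threshold policy on the detection score. The thresholds 0.5 and 0.9, the `Action` names, and the assumption that the score lies in [0, 1] are illustrative choices, not values reported in the paper.

```python
from enum import Enum


class Action(Enum):
    ACCEPT = "accept"
    SANITIZE = "apply corrective defense"
    BLOCK = "block"


def decide(adversarial_score, sanitize_at=0.5, block_at=0.9):
    """Map a detection score in [0, 1] to an action (hypothetical policy)."""
    if not 0.0 <= adversarial_score <= 1.0:
        raise ValueError("adversarial_score must be in [0, 1]")
    if adversarial_score >= block_at:
        return Action.BLOCK
    if adversarial_score >= sanitize_at:
        return Action.SANITIZE
    return Action.ACCEPT


for score in (0.1, 0.6, 0.95):
    print(score, decide(score).value)
```

Keeping the thresholds as parameters lets a security policy trade false positives against missed attacks without changing the detection model.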

4.7 Monitoring, Logging, and Visualization

AI VIGIL-GUARD includes a monitoring subsystem that logs:

Detection results

Defence actions

System performance metrics (accuracy, F1-score, latency)

These logs enable system auditing, performance evaluation, and future model retraining. Visualization dashboards can be used to monitor real-time system behaviour and analyse historical attack trends.

4.8 System Efficiency and Real-Time Performance

The system is optimized to operate in real time with minimal computational overhead. Parallel processing techniques and lightweight detection models ensure fast inference without compromising accuracy. Experimental results demonstrate that AI VIGIL-GUARD maintains high detection performance while introducing negligible latency into the AI inference pipeline.

4.9 Scalability and Extensibility

The modular design allows easy scalability and future enhancement. New adversarial attack types, detection models, or defence strategies can be incorporated without altering the core system architecture. This makes the system adaptable to evolving adversarial threats.

5. System Architecture

The architecture of AI VIGIL-GUARD: Real-Time Adversarial AI Defense System is designed as a modular, scalable, and real-time pipeline that ensures robust protection of machine learning models against adversarial threats while maintaining high system efficiency. The system begins with the Input Acquisition Layer, where data such as images, network packets, user inputs, or API requests are continuously collected from live environments. This data is immediately forwarded to the Preprocessing and Feature Engineering Module, which performs normalization, noise filtering, resizing (for image-based models), encoding, and extraction of relevant statistical and semantic features required for downstream analysis. Following preprocessing, the data flows into the Adversarial Detection Engine, which is the core of the architecture. This engine integrates multiple AI-based components, including anomaly detection models, adversarial pattern classifiers, confidence score analyzers, and gradient-based inspection mechanisms to identify subtle perturbations or malicious manipulations in real time. Once a potential adversarial input is detected, the system activates the Defense and Mitigation Layer, which applies adaptive countermeasures such as input sanitization, feature smoothing, adversarial retraining triggers, rejection of malicious inputs, or model confidence recalibration to neutralize the attack impact. Simultaneously, the Monitoring and Visualization Module logs detection metrics such as F1-score, accuracy, false positive rate, and response time, presenting them through dashboards and visual analytics for transparency and evaluation. The architecture also includes a Feedback and Learning Loop, where detected adversarial samples are stored in a secure dataset and periodically used to update and strengthen the detection models, ensuring continuous improvement against evolving attack strategies. Finally, the Deployment and Interface Layer enables seamless integration with existing AI applications through APIs or web interfaces, ensuring that AI VIGIL-GUARD operates as a non-intrusive yet powerful protective shield for real-world AI systems.

Artificial intelligence systems are increasingly deployed in critical real-world applications, yet they remain highly vulnerable to adversarial manipulation, data poisoning, model exploitation, and stealth attacks that can compromise reliability, safety, and trust. This paper presented AI VIGIL-GUARD, an advanced, real-time adversarial defence framework designed to safeguard AI models from evolving threats across multimodal data environments. Unlike conventional defence mechanisms that rely on static detection rules or limited domain-specific techniques, AI VIGIL-GUARD integrates real-time feature-level monitoring, anomaly detection, behavioural profiling, multimodal threat analysis, and adaptive risk scoring into a unified pipeline capable of detecting both known and zero-day attacks.

The proposed system introduces a novel approach by combining feature consistency analysis (f0–fN monitoring), model confidence deviation tracking, adversarial noise pattern recognition, and self-healing reconstruction into a single architecture. This ensures comprehensive protection against common adversarial strategies such as FGSM, PGD, BIM, DeepFool, audio perturbations, text embedding manipulations, and tabular feature fabrication. The lightweight model design enables deployment on cloud, web applications, and edge devices, making the solution scalable, efficient, and suitable for real-time operations.

AI VIGIL-GUARD addresses major limitations in existing systems, including lack of explainability, inability to detect cross-domain attacks, absence of real-time defense, high computational cost, and poor generalization to real-world noisy environments. By offering a centralized monitoring dashboard, event logging, alerting mechanism, threat visualization, and model behaviour tracing, the system also enhances transparency and forensic analysis.

Overall, AI VIGIL-GUARD demonstrates that AI security must evolve from traditional reactive methods to proactive, adaptive, and continuous defence mechanisms. The system lays a strong foundation for future research in autonomous AI security, adversarial robustness, and self-defending machine learning models. Future enhancements may include federated defence learning, blockchain-based attack logging, quantum-resilient AI protection, and integration with enterprise-grade cybersecurity frameworks. The presented architecture sets a new direction for practical and scalable adversarial defence solutions capable of protecting next-generation AI applications.
