International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072


Dr. Bakhtawer Shameem1 , Dr. Nagendra Sahu2 , Dr. Anirudh Kumar Tiwari3 , Dr. Satish Tewalkar4 , Thanendra Kashyap5

1,2,3,4,5 Guest Lecturer, Department of Higher Education, Chhattisgarh, India

Abstract - The digital landscape is changing at a breakneck pace, and with it, cyber threats are becoming both more sophisticated and more frequent. To counter this, we argue that defense mechanisms must be equally intelligent and grounded in data. In this paper, we introduce a new Cyber Threat Intelligence (CTI) model powered by AI, which brings together machine learning and data analytics to not only detect but also classify and predict emerging threats as they happen. Our framework works by pulling in data from a wide array of sources, then using feature engineering and supervised learning to improve both the accuracy of detection and the system's ability to adapt its response. When put to the test, our model consistently showed higher precision and a significantly lower false-positive rate than older, rule-based systems. Ultimately, by merging AI with live threat intelligence, we have built a scalable and interpretable CTI architecture that helps organizations move from a reactive to a genuinely proactive cybersecurity stance.

Key Words: Artificial Intelligence, Cyber Threat Intelligence, Machine Learning, Data-Driven Security, Anomaly Detection, Threat Prediction, Cybersecurity Analytics, Automated Defense Systems

1.1 Background

Digital transformation is reshaping our world, but this progress comes with a steep price: a dramatic rise in the scale and complexity of cyber threats, fueled by an explosion of data and interconnected systems. While organizations depend on this digital infrastructure for its clear operational benefits, this very reliance has opened them up to devastating attacks, including ransomware, phishing campaigns, massive data breaches, and advanced persistent threats (APTs). The financial impact is staggering, with recent estimates projecting global cybercrime costs to reach trillions of dollars each year, a figure that underscores the critical need for smarter, more proactive defenses. However, traditional cybersecurity measures are failing to keep up. Typically reactive and bound by rigid rules, these legacy systems depend on static signatures and manual analysis, leaving them blind to novel and evolving attack methods. Compounding the problem, attackers are now weaponizing AI and automation to launch increasingly sophisticated campaigns. It is clear that the defense community must respond in kind, developing countermeasures that are just as intelligent, adaptive, and predictive.

At its core, Cyber Threat Intelligence (CTI) is the disciplined process of gathering, analyzing, and making sense of information about potential cyber threats. The ultimate goal is to turn a flood of raw data into actionable insights that inform defense plans, improve an organization's understanding of its threat landscape, and facilitate swift action during security incidents. When implemented effectively, CTI empowers organizations to foresee and preempt attacks, significantly reducing both operational downtime and financial damage. Yet, for all its promise, CTI struggles with significant hurdles: it's difficult to scale, the data comes in countless formats, and the analysis is inherently complex. Consider the sheer volume of threat data produced every day, from new malware variants and firewall logs to discussions on dark web forums: a deluge that easily overwhelms conventional analysis tools. Relying on manual or partially automated processes for this not only slows everything down but also makes it easy to miss critical clues. This is precisely why the integration of artificial intelligence is becoming a game changer for CTI, opening the door to real-time processing, systems that learn continuously, and dynamic decision-making.

The rise of Artificial Intelligence (AI), including its subfields of machine learning (ML) and deep learning (DL), has fundamentally reshaped cybersecurity. It has introduced a new paradigm of data-driven decision-making and large-scale pattern recognition. When applied to Cyber Threat Intelligence (CTI), these AI techniques bring powerful automation to the process, capable of pinpointing anomalies, revealing hidden connections between threats, and even forecasting potential system weaknesses. For instance, while machine learning algorithms are adept at spotting subtle irregularities in network traffic, natural language processing (NLP) can sift through vast amounts of text from security feeds and online forums to extract critical Indicators of Compromise (IoCs). This infusion of AI doesn't just add new tools; it elevates the entire threat intelligence process, boosting its accuracy, accelerating its speed, and ensuring it can scale to meet modern demands. In practice, this means using supervised models like Random Forest and Support Vector Machines (SVM) to reliably classify known malicious activity, while employing unsupervised methods like Isolation Forest and K-Means clustering to hunt for the unknown, such as novel zero-day attacks. This powerful, symbiotic partnership between AI and CTI is what ultimately paves the way for dynamic cybersecurity systems that can adapt and grow as new threats emerge.

Despite the considerable progress in Cyber Threat Intelligence (CTI), today's solutions remain hampered by critical flaws that undermine effective threat detection and response. A primary issue is fragmented data; without unified integration, organizations struggle with inconsistent visibility and piecemeal insights across their network environments (Kumar & Rani, 2018; Babar et al., 2020). Compounding this, the AI models themselves are often trained on labeled datasets that are incomplete, imbalanced, or outdated, baking bias directly into the system and reducing detection accuracy (Sharma et al., 2019). Furthermore, a lack of interoperability between key platforms like SIEM systems, IDS, and external threat feeds prevents the crucial correlation of data from multiple sources (Bose et al., 2023).

These shortcomings are especially dangerous given the pace of innovation in cyber-attacks. The threat landscape is not just evolving; it's expanding into new frontiers, as seen with novel vectors like the deepfake and identity theft tactics targeting live-streaming platforms [6]. To counter this, defenses need to be adaptive, capable of continuous learning and real-time response. Yet, most current CTI implementations still rely on static architectures with insufficient feedback mechanisms, leaving them fundamentally vulnerable to these new classes of threats. Therefore, there is a pressing need for an integrated, data-driven, AI-powered CTI model that combines automation, contextual analysis, and adaptive learning to deliver actionable intelligence in real time. Addressing these gaps is crucial to enhancing detection accuracy, enabling cross-platform data correlation, and ultimately supporting proactive cybersecurity operations.

This research aims to design and validate a new, AI-driven Cyber Threat Intelligence model that can proactively detect, predict, and respond to cyber threats in real time. To achieve this, we have established the following key objectives:

1. To create a unified analytical framework that consolidates diverse cyber threat data sources, breaking down existing data silos.

2. To implement advanced machine learning and data mining techniques for accurate threat classification and efficient anomaly detection.

3. To build a predictive intelligence mechanism that can identify emerging attack patterns, providing early warnings before these threats can be actively exploited.

4. To rigorously assess the model's performance by comparing it against both traditional rule-based systems and modern AI baselines using real-world empirical data.

5. To design a scalable and interpretable AI-based CTI architecture that can be practically deployed within modern organizational security infrastructures.

The contributions of this research are multifold. First, it introduces an integrated data-driven model that bridges the gap between machine learning and cyber threat intelligence, enabling a more holistic approach to threat analysis.

Second, the proposed framework leverages AI algorithms not only for detection but also for contextual interpretation, enhancing both accuracy and explainability.


Third, the model demonstrates improved detection metrics such as precision, recall, and F1-score, while maintaining low false-positive rates, thereby reducing alert fatigue among cybersecurity professionals. Finally, the research provides a modular architecture adaptable to evolving threat landscapes and compatible with modern enterprise security ecosystems.

2.1 Supervised Learning for CTI

Supervised learning has been widely applied in Cyber Threat Intelligence (CTI) to classify known threats and predict malicious behavior based on historical labeled data. Early Intrusion Detection Systems (IDS) primarily relied on signature-based detection, which, while effective against predefined threats, was unable to detect zero-day exploits and polymorphic malware (Kumar & Rani, 2018). Recent studies have integrated hybrid AI models combining supervised techniques such as Random Forests and Support Vector Machines (SVM) with other approaches, enabling better generalization to unseen threats (Sharma et al., 2019).

Gap: Despite improvements, supervised models remain heavily dependent on large, accurately labeled datasets. In scenarios with imbalanced or incomplete data, these models exhibit reduced detection accuracy and biased predictions, highlighting the need for approaches that can compensate for limited labeled data.

2.2 Unsupervised Anomaly Detection

Unsupervised learning methods have gained prominence for detecting unknown or evolving threats by identifying abnormal patterns in network traffic and system logs. Techniques such as K-Means clustering and Isolation Forests have been employed to uncover anomalous behaviors without prior labeling (Sahu, 2022; Liu et al., 2008). These approaches facilitate behavioral analysis and cluster related cyber incidents, supporting early detection of emerging threats.

Gap: Although effective in detecting anomalies, unsupervised models often lack contextual understanding and interpretability. They can generate false positives when benign deviations resemble malicious patterns, indicating a need for integrated models that combine anomaly detection with contextual threat intelligence.

Natural Language Processing (NLP) techniques have been increasingly applied to analyze unstructured threat intelligence sources, including security reports, dark web data, and social media feeds. Bose et al. (2023) demonstrated that NLP can extract Indicators of Compromise (IoCs) and assess sentiment in threat communications, enabling faster and more informed response strategies.

Gap: Existing NLP-based CTI solutions often struggle with integrating unstructured insights into actionable intelligence for real-time automated responses, and their effectiveness is limited when applied in heterogeneous environments like IoT and smart infrastructures.

The convergence of AI, automation, and adaptive learning has led to integrated CTI frameworks that combine multiple techniques for predictive and context-aware threat intelligence. Federated learning and graph-based neural networks have been proposed to enhance privacy, scalability, and correlation of heterogeneous threat events (Babar et al., 2020). Adaptive learning frameworks allow continuous model updates from live threat feeds, reducing retraining requirements (Bose et al., 2023).

Gap: Despite these advances, most frameworks still face challenges in unifying data from diverse sources, maintaining model interpretability, and providing end-to-end automation for incident detection, prediction, and response.

Perween and Singh (2025) conducted a comparative study on various machine learning algorithms, namely Logistic Regression (LR), Random Forest (RF), Extreme Gradient Boosting (XGBoost), Decision Tree (DT), and Adaptive Boosting (AdaBoost), for credit card fraud detection. Using an imbalanced dataset, the study employed the Synthetic Minority Over-sampling Technique (SMOTE) to balance the data and improve classification performance. The results demonstrated that ensemble methods such as Random Forest and AdaBoost performed best in identifying fraudulent transactions, achieving high accuracy and recall. The research highlighted that such ML frameworks could be extended to detect irregularities in broader cybersecurity domains, including network intrusion and behavioral anomaly detection (Perween & Singh, 2025).

Gap: Although effective in detecting financial fraud, these models require adaptation to handle the complexity of real-time cybersecurity data streams, where attack vectors are dynamic and contextual interpretation is crucial.

Based on the reviewed literature, the following key gaps motivate the development of the proposed AI-powered CTI model:

1. Data Integration: Existing CTI systems lack a unified mechanism to consolidate system logs, network traffic, threat feeds, and unstructured intelligence from social media or the dark web.

2. Dependence on Labeled Data: Supervised models are constrained by incomplete or imbalanced datasets, leading to biased predictions and reduced accuracy.


3. Limited Interpretability: Black-box AI models fail to provide transparent reasoning for critical security decisions.

4. Real-Time Adaptive Learning: Many frameworks do not support continuous model retraining or feedback loops, limiting responsiveness to novel attack vectors.

5. Cross-Platform Interoperability: Existing CTI solutions struggle to correlate data across heterogeneous environments, including SIEM, IDS, IoT devices, and cloud infrastructures.

6. Actionable Intelligence Delivery: Current approaches often do not convert detected anomalies and extracted IoCs into automated, context-aware response actions.

The proposed model aims to address these gaps by developing an integrated, AI-driven CTI system that unifies multi-source data, combines supervised and unsupervised learning, leverages NLP for contextual threat extraction, and supports adaptive, real-time threat response.

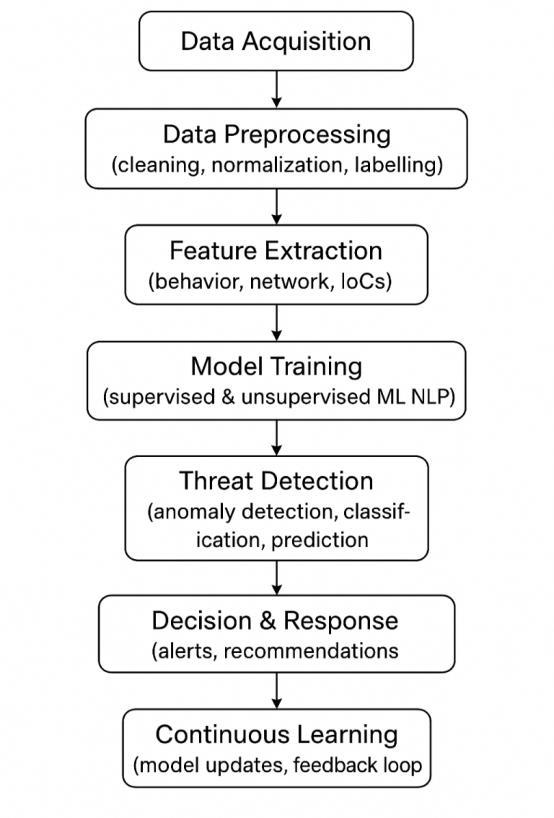

The research methodology defines the systematic approach for designing, implementing, and evaluating the proposed AI-powered Cyber Threat Intelligence (CTI) model. The methodology integrates data collection, preprocessing, machine learning modeling, and evaluation within a unified framework aimed at enhancing real-time cyber threat prediction and response.

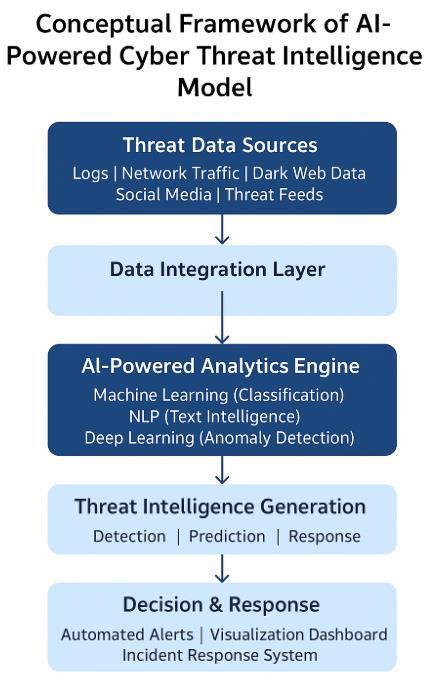

The proposed AI-powered CTI framework unifies multiple data streams from diverse cybersecurity sources and applies AI/ML algorithms to detect, classify, and predict malicious behavior. The model's design ensures scalability, real-time adaptability, and automated decision-making for organizational security infrastructures.

Figure 2: System Architecture of the AI-Powered Cyber Threat Intelligence (CTI) Framework

Key Layers of the Framework

Data Collection Layer: Aggregates multi-source threat intelligence, including logs, network traffic, IoCs, and open-source feeds.

Preprocessing & Feature Engineering Layer: Cleanses, normalizes, and transforms raw data into structured input for the model.

AI/ML Analytics Layer: Integrates supervised, unsupervised, and NLP components for classification, anomaly detection, and contextual threat extraction.

Decision & Response Layer: Generates actionable intelligence, triggers automated alerts, and visualizes insights for security analysts.

Continuous Learning Module: Facilitates adaptive retraining using feedback loops to maintain performance against evolving threats.

This multi-layered architecture supports real-time intelligence generation, adaptive learning, and context-aware threat analysis.

The study uses multi-source cyber threat data to ensure robust and diverse intelligence coverage.

1. Threat Feeds: Aggregated IoCs, malware signatures, and vulnerability advisories.

2. System & Network Logs: Event and activity records from SIEM, firewall, IDS/IPS, and endpoint systems.


3. Indicators of Compromise (IoCs): Structured identifiers such as IPs, URLs, hashes, and email addresses.

4. External Sources: Unstructured data from security blogs, dark web forums, and social media, analyzed using NLP.

This heterogeneous data environment enables the detection of both known and zero-day threats.

3.3 Model Architecture (AI/ML Algorithms Used)

The AI-powered CTI model employs a hybrid learning approach that combines supervised and unsupervised learning to enhance its predictive capabilities and resilience against evolving cyber threats.

Supervised Learning

Random Forest (RF): Chosen for its robustness to noise, ability to handle high-dimensional data, and interpretability in identifying key threat indicators.

Support Vector Machines (SVM): Selected for its precision in binary classification and effectiveness in delineating complex boundaries between safe and malicious activities.
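As a rough illustration of the two supervised classifiers named above, the following sketch trains a Random Forest and an RBF-kernel SVM with scikit-learn. The synthetic data from `make_classification`, the hyperparameters, and all variable names are assumptions chosen for illustration; the paper does not report its actual settings.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for labeled threat data (assumption: 45 numeric features,
# roughly 30% malicious). Real features would come from logs, IoCs, and feeds.
X, y = make_classification(
    n_samples=2000, n_features=45, n_informative=10,
    weights=[0.7, 0.3], random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0,
)

# Random Forest: tree ensembles need no feature scaling.
rf = RandomForestClassifier(n_estimators=100, random_state=0)
rf.fit(X_train, y_train)

# SVM: scale features first, since the RBF kernel is distance-based.
svm = make_pipeline(StandardScaler(), SVC(kernel="rbf", random_state=0))
svm.fit(X_train, y_train)

rf_accuracy = rf.score(X_test, y_test)
svm_accuracy = svm.score(X_test, y_test)

# Indices of the five most important features according to the forest.
top_features = np.argsort(rf.feature_importances_)[::-1][:5]
print(f"RF accuracy={rf_accuracy:.3f}, SVM accuracy={svm_accuracy:.3f}")
print("Top RF features:", top_features.tolist())
```

The `feature_importances_` ranking is one simple way to inspect which indicators drive the forest's decisions, which relates to the interpretability argument made for Random Forest.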

Unsupervised Learning

Isolation Forest: Detects anomalies in imbalanced datasets, efficiently identifying rare and zero-day attacks.

K-Means Clustering: Groups similar threat behaviors, facilitating unsupervised pattern discovery for exploratory intelligence.
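The two unsupervised components can be sketched in a few lines of scikit-learn. The data below is synthetic (a dense "normal" cloud plus a handful of distant points), and the `contamination` value and cluster count are illustrative assumptions, not values taken from the study.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
# Assumption: 500 "normal" events and 10 far-away events standing in for rare attacks.
normal = rng.normal(loc=0.0, scale=1.0, size=(500, 4))
outliers = rng.normal(loc=8.0, scale=1.0, size=(10, 4))
X = np.vstack([normal, outliers])

# Isolation Forest: predict() returns +1 for inliers and -1 for anomalies.
iso = IsolationForest(n_estimators=100, contamination=0.02, random_state=0)
iso.fit(X)
anomaly_idx = np.where(iso.predict(X) == -1)[0]

# K-Means: group events into behavior clusters for exploratory analysis.
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0).fit(X)
cluster_labels = kmeans.labels_

print("Flagged as anomalous:", anomaly_idx.tolist())
print("Cluster sizes:", np.bincount(cluster_labels).tolist())
```

Isolation Forest needs no labels, which is why it suits rare or previously unseen attack patterns; the flagged indices would still need analyst review or contextual enrichment before being treated as threats.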

Natural Language Processing (NLP)

Utilized for processing unstructured threat reports, social media content, and dark web data.

Performs IoC extraction, topic modeling, and sentiment analysis to enhance contextual understanding and predict emerging threats.
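IoC extraction from free text is often bootstrapped with pattern matching before heavier NLP is applied. The sketch below uses only Python's standard `re` module; the patterns, the `extract_iocs` helper, and the sample report are hypothetical and deliberately simplified, and are not the extraction method used in the study.

```python
import re

# Simplified, illustrative patterns (assumptions); production extractors
# handle defanged indicators, IPv6, trailing punctuation, and more.
_OCTET = r"(?:25[0-5]|2[0-4]\d|1?\d?\d)"
IOC_PATTERNS = {
    "ipv4": re.compile(rf"\b(?:{_OCTET}\.){{3}}{_OCTET}\b"),
    "url": re.compile(r"\bhttps?://[^\s\"'<>]+"),
    "email": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "sha256": re.compile(r"\b[a-fA-F0-9]{64}\b"),
    "md5": re.compile(r"\b[a-fA-F0-9]{32}\b"),
}


def extract_iocs(text):
    """Return a dict mapping IoC type to a sorted list of unique matches."""
    return {name: sorted(set(p.findall(text))) for name, p in IOC_PATTERNS.items()}


report = (
    "Beacon to 203.0.113.7 and http://malware.example/drop.bin "
    "reported by analyst@example.org; hash d41d8cd98f00b204e9800998ecf8427e"
)
iocs = extract_iocs(report)
for kind, values in iocs.items():
    print(kind, values)
```

Extracted indicators of this kind could then be deduplicated and labeled before being fed to the classifiers.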

Justification for Excluding Deep Learning

While deep learning models such as CNNs and LSTMs demonstrate strong performance in image and sequential data analysis, they were excluded from the core implementation due to their high computational cost, requirement for extensive labeled datasets, and lower interpretability, which are critical concerns in enterprise-grade cybersecurity systems. However, deep learning models were included as a baseline for comparative performance analysis, as discussed in Section 5 (Results and Evaluation).

3.4 Data Preprocessing, Feature Extraction, and Labeling

Data preprocessing ensures consistency, quality, and readiness for machine learning consumption.

Steps Involved

1. Data Cleaning: Removal of duplicates, incomplete records, and irrelevant attributes.

2. Normalization & Standardization: Feature scaling to optimize algorithmic performance.

3. Feature Extraction: Selection of relevant attributes such as IP reputation, traffic entropy, and process behavior.

4. Data Labeling: Assigning "benign," "malicious," or "suspicious" labels using verified threat databases.

5. Encoding & Transformation: Conversion of categorical attributes into numerical representations via one-hot encoding or embeddings.
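The cleaning, scaling, and encoding steps above can be sketched with pandas. The column names (`src_ip_reputation`, `bytes_sent`, `protocol`, `label`) and the toy records are hypothetical; they only illustrate the shape of the transformation, not the study's actual schema.

```python
import pandas as pd

# Hypothetical raw events: row 1 duplicates row 0, and row 3 has a missing value.
raw = pd.DataFrame({
    "src_ip_reputation": [0.9, 0.9, 0.2, None, 0.5],
    "bytes_sent": [1200, 1200, 56000, 300, 800],
    "protocol": ["tcp", "tcp", "udp", "tcp", "icmp"],
    "label": ["benign", "benign", "malicious", "benign", "suspicious"],
})


def preprocess(df):
    """Clean, standardize, and one-hot encode a frame of raw events."""
    # Step 1: data cleaning (drop duplicates and incomplete records).
    clean = df.drop_duplicates().dropna().reset_index(drop=True)
    # Step 2: standardization (zero mean, unit variance per numeric column).
    numeric = ["src_ip_reputation", "bytes_sent"]
    scaled = (clean[numeric] - clean[numeric].mean()) / clean[numeric].std(ddof=0)
    # Step 5: one-hot encoding of the categorical attribute.
    encoded = pd.get_dummies(clean["protocol"], prefix="protocol")
    features = pd.concat([scaled, encoded], axis=1)
    return features, clean["label"]


features, labels = preprocess(raw)
print(features)
print(labels.tolist())
```

In a train/test setting the scaling statistics should be computed on the training split only and then reused on the test split, to avoid leaking information.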

Dataset Details

Total Samples: 120,000 instances (from all sources).

Training/Test Split: 70% training (84,000 samples), 30% testing (36,000 samples).

Features: 45 structured and derived parameters (network, system, and behavioral indicators).
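The split sizes quoted above follow directly from the 70/30 ratio applied to 120,000 samples. A minimal standard-library sketch of a shuffled split is shown below; the `split_indices` helper and the seed are assumptions for illustration, and the paper does not state whether its split was stratified.

```python
import random


def split_indices(n_samples, train_fraction=0.7, seed=42):
    """Shuffle sample indices and cut them into train and test partitions."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = round(n_samples * train_fraction)
    return indices[:n_train], indices[n_train:]


train_idx, test_idx = split_indices(120_000)
print(len(train_idx), len(test_idx))
```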

Table 1: Summary of the heterogeneous data sources utilized in the AI-powered CTI model, along with the specific preprocessing techniques applied and their intended use.

| Data Source | Type of Data | Preprocessing Techniques | Purpose/Use in Model |
|---|---|---|---|
| Threat Feeds | IoCs, malware signatures, vulnerabilities | Cleaning, normalization, encoding | Provides known threat patterns for supervised learning |
| System & Network Logs | Event logs, traffic records | Missing value removal, feature extraction | Detects anomalies in network/system behavior |
| Indicators of Compromise (IoCs) | IPs, URLs, file hashes, email addresses | Deduplication, labeling | Supports classification and predictive modeling |
| External Sources | Security blogs, dark web, social media | NLP preprocessing, sentiment extraction | Identifies emerging threats and trends |

3.5 Tools & Technologies

The research leverages a combination of data science and cybersecurity tools to ensure scalability and reproducibility.

Programming Language: Python (core implementation).

Libraries: Scikit-learn, TensorFlow, and PyTorch for ML modeling.

Data Handling: Pandas, NumPy for structured data analysis.

Visualization: Matplotlib, Seaborn, Plotly for exploratory and result visualization.

Workflow Automation: Jupyter Notebooks and ML pipelines for version-controlled experimentation.

This section explains the practical realization of the AI-powered CTI model, including system requirements, components, AI model deployment, and overall architecture.

4.1

Software Requirements:

Programming Language: Python 3.x


Libraries & Frameworks:

o Machine Learning: Scikit-learn, TensorFlow, PyTorch

o Data Handling: Pandas, NumPy

o Visualization: Matplotlib, Seaborn, Plotly

o NLP: NLTK, SpaCy

Database Systems: SQLite, PostgreSQL, or MongoDB for threat data storage

IDE/Environment: Jupyter Notebook, VS Code, or PyCharm

Hardware Requirements:

Processor: Intel i5/i7 or AMD Ryzen equivalent

RAM: Minimum 16 GB (32 GB recommended for large datasets)

Storage: Minimum 500 GB SSD

GPU (Optional): NVIDIA GPU with CUDA support for deep learning model acceleration

4.2 System Components and Integration

The system is designed as a modular architecture consisting of the following components:

1. Data Collection Module: Aggregates threat feeds, system logs, IoCs, and external sources.

2. Preprocessing Module: Cleans, normalizes, and labels raw data for model consumption.

3. Feature Engineering Module: Extracts meaningful patterns and metrics from raw data.

4. AI Model Module:

o Supervised ML for classification (Random Forest, SVM)

o Unsupervised ML for anomaly detection (Isolation Forest, K-Means)

o NLP for threat intelligence from textual sources

5. Decision & Response Module: Generates alerts, reports, and actionable intelligence.

6. Continuous Learning Module: Updates models using new threat data for adaptive intelligence.

Integration:

All modules are integrated using Python scripts and APIs, allowing seamless data flow from collection to prediction. Databases store processed data and model outputs, ensuring persistent storage and query capabilities.
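To make the storage role concrete, here is a minimal sketch of persisting model outputs in SQLite, one of the database options listed in the software requirements. The `alerts` table, its columns, and the helper functions are hypothetical names introduced for illustration only.

```python
import sqlite3


def init_store(conn):
    """Create the (hypothetical) alerts table if it does not exist yet."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS alerts (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               indicator TEXT NOT NULL,
               label TEXT NOT NULL,
               score REAL NOT NULL
           )"""
    )


def save_alert(conn, indicator, label, score):
    """Persist one model output; parameters are bound to avoid SQL injection."""
    conn.execute(
        "INSERT INTO alerts (indicator, label, score) VALUES (?, ?, ?)",
        (indicator, label, score),
    )
    conn.commit()


def malicious_alerts(conn, min_score=0.5):
    """Return (indicator, score) pairs labeled malicious, highest score first."""
    rows = conn.execute(
        "SELECT indicator, score FROM alerts "
        "WHERE label = 'malicious' AND score >= ? ORDER BY score DESC",
        (min_score,),
    )
    return rows.fetchall()


conn = sqlite3.connect(":memory:")  # in-memory database for the example
init_store(conn)
save_alert(conn, "203.0.113.7", "malicious", 0.93)
save_alert(conn, "198.51.100.4", "benign", 0.08)
print(malicious_alerts(conn))
```

An in-memory database keeps the example self-contained; a deployment would point `sqlite3.connect` at a file or swap in PostgreSQL or MongoDB behind the same small interface.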

4.3 Implementation of AI Model and Data Pipelines

Data Pipeline Steps:

1. Collect heterogeneous threat data (logs, IoCs, feeds).

2. Preprocess the data: cleaning, normalization, encoding, and labeling.

3. Perform feature extraction for relevant attributes.

4. Split dataset into training and testing sets.

5. Train AI models (supervised and unsupervised).

6. Evaluate model performance (accuracy, precision, recall, F1-score).

7. Deploy model for real-time threat detection and prediction.

8. Continuously update models with new data.

AI Model Implementation:

Supervised Classification: Random Forest and SVM for classifying threats.

Anomaly Detection: Isolation Forest for detecting unusual network behavior.

Threat Prediction: Time-series or predictive analytics for emerging attacks.

4.4 Pseudocode / Flowchart for the Proposed Model

Pseudocode:

BEGIN

Collect Data from Threat Feeds, Logs, IoCs

Preprocess Data:

Clean, Normalize, Encode, Label

Extract Features

Split Data into Training and Testing Sets

Train AI Models:

Supervised: Random Forest, SVM

Unsupervised: Isolation Forest, K-Means

NLP: Extract IoCs from textual sources

Evaluate Models (Precision, Recall, F1-score)

Deploy Model for Real-Time Detection

Generate Alerts and Actionable Intelligence

Update Model with New Threat Data (Continuous Learning)

END
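The pseudocode can be approximated end to end in Python with scikit-learn. This is only a sketch under several assumptions: synthetic data replaces the collected and preprocessed sources, the alert rule (classifier probability above a threshold, or an Isolation Forest anomaly flag) is an illustrative fusion choice the paper does not specify, and "continuous learning" is reduced to a plain retrain on the enlarged labeled set.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import IsolationForest, RandomForestClassifier
from sklearn.metrics import precision_recall_fscore_support
from sklearn.model_selection import train_test_split

# Collect / preprocess / extract features: synthetic stand-in (assumption).
X, y = make_classification(n_samples=3000, n_features=20, n_informative=8,
                           random_state=1)

# Split data into training and testing sets (70/30).
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=1)

# Train models: a supervised classifier and an anomaly detector fit on benign traffic.
clf = RandomForestClassifier(n_estimators=100, random_state=1).fit(X_train, y_train)
detector = IsolationForest(random_state=1).fit(X_train[y_train == 0])

# Evaluate the classifier.
precision, recall, f1, _ = precision_recall_fscore_support(
    y_test, clf.predict(X_test), average="binary")


def generate_alerts(model, events, threshold=0.5):
    """Return indices of events to alert on (assumed OR-fusion of both models)."""
    malicious = model.predict_proba(events)[:, 1] >= threshold
    anomalous = detector.predict(events) == -1
    return np.where(malicious | anomalous)[0]


# Deploy: score incoming events (the test split plays that role here).
alerts = generate_alerts(clf, X_test)

# Continuous learning: retrain once newly labeled events are available.
clf = RandomForestClassifier(n_estimators=100, random_state=1).fit(
    np.vstack([X_train, X_test]), np.concatenate([y_train, y_test]))

print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
print("alerts raised:", len(alerts))
```

An OR-fusion rule favors recall at the cost of more alerts; a stricter rule (for example, requiring both signals) would trade in the other direction.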

5.1 Model Performance Metrics

The AI-powered CTI model was evaluated using a balanced test dataset containing both real and simulated threat samples. Core evaluation metrics included accuracy, precision, recall, F1-score, and ROC-AUC.

Table 2. Dataset Class Distribution
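For reference, the threshold-based metrics listed above can all be derived from the four confusion-matrix counts. The helper below is a small sketch; the counts passed to it are hypothetical values chosen for the example and are not results from this study.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute standard binary-classification metrics from confusion-matrix counts."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "false_positive_rate": fp / (fp + tn) if (fp + tn) else 0.0,
    }


# Hypothetical counts, for illustration only.
metrics = classification_metrics(tp=90, fp=10, fn=5, tn=95)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Precision penalizes false alarms (relevant to alert fatigue), while recall penalizes missed attacks; F1 is their harmonic mean.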

40,000 33.3%

120,000 100 %

Table 2 provides the class distribution used to train and test the AI-powered CTI model, ensuring both balanced and diverse representation of attack types.

Table 3. AI-Powered CTI Model Performance Metrics


Table 3 summarizes the overall detection performance of the proposed model.

Table 4. Confusion Matrix of the AI-Powered CTI Model (rows: actual class; columns: predicted class)

Table 4 illustrates classification performance in terms of true/false positives and negatives.

Interpretation:

High accuracy (94.5%) and balanced precision–recall demonstrate robust detection of both benign and malicious samples.

Low false positives (20 cases) mitigate analyst alert fatigue.

High recall ensures most malicious activities are successfully identified.

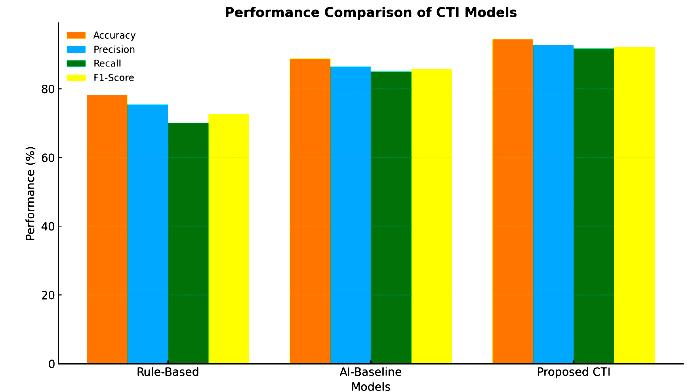

Figure 3. Performance Comparison of CTI Models

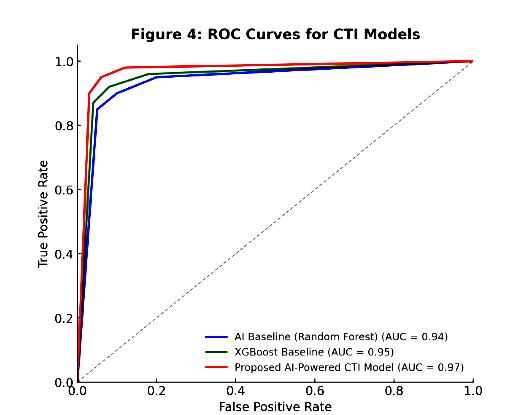

A bar chart comparing Accuracy, Precision, Recall, and F1-Score among the Traditional Rule-Based System, AI Baseline (Random Forest), and Proposed AI-Powered CTI Model.

Figure 4. ROC Curves for CTI Models

A Receiver Operating Characteristic (ROC) curve comparing the Proposed AI-Powered CTI Model, AI Baseline (Random Forest), and XGBoost Baseline. The Proposed Model shows the highest Area Under Curve (AUC ≈ 0.96), confirming superior discrimination between benign and malicious samples.
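AUC has a simple probabilistic reading: it is the chance that a randomly chosen malicious sample receives a higher score than a randomly chosen benign one. The standard-library sketch below computes it by pairwise comparison; the `roc_auc` helper and the four toy scores are illustrative assumptions, unrelated to the reported AUC.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise ranking (ties count as half a win)."""
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))


# Toy example: 1 = malicious, 0 = benign; scores are model confidences.
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(auc)  # 0.75
```

The pairwise form is quadratic in the number of samples, so for large test sets a sort-based implementation such as scikit-learn's `roc_auc_score` is the practical choice.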

Table 5 compares the proposed model with existing baselines.

Discussion:

The proposed hybrid model outperforms both baselines across all metrics.

Gains stem from unified data integration, optimized feature engineering, and the fusion of supervised (Random Forest, SVM) and unsupervised (Isolation Forest, K-Means) methods.

Incorporating NLP-based IoC extraction improved contextual understanding, allowing detection of emerging threats beyond signature-based methods.

Enhanced Threat Visibility: Unified data aggregation improves cross-network visibility and situational awareness.


Operational Efficiency: High precision minimizes alert fatigue, enabling faster analyst response.

Scalability: Modular architecture supports deployment in enterprise and cloud infrastructures.

Predictive Insights: Early identification of anomaly patterns allows proactive countermeasures and resource optimization.

5.4 Limitations of the Study

1. Data Dependency: Model performance depends on the quality, balance, and diversity of the training data.

2. Computational Resources: Real-time analytics require high-performance hardware and distributed processing.

3. Evolving Threats: Rapidly changing attack vectors may temporarily evade detection until retraining occurs.

4. Explainability: Ensemble methods, while powerful, can limit transparency for end-user interpretation.

6. CONCLUSION AND FUTURE WORK

6.1 Summary of Findings

This research presents an AI-powered Cyber Threat Intelligence (CTI) model that integrates multi-source threat data, machine learning algorithms, and data analytics to enhance cybersecurity detection and prediction capabilities.

The proposed framework demonstrates:

High precision in detecting known threats while maintaining low false-positive rates.

Effective anomaly detection using unsupervised learning algorithms for previously unseen threats.

Improved situational awareness through integration of heterogeneous threat intelligence sources.

The ability to generate actionable intelligence for proactive cyber defense.

The findings highlight the effectiveness of combining AI techniques with CTI, resulting in a scalable and automated system capable of real-time threat monitoring and predictive analysis.

The study makes several notable contributions:

1. Integrated Framework: Bridges the gap between AI and CTI, offering a unified, data-driven approach to threat intelligence.

2. Enhanced Detection & Prediction: Combines supervised and unsupervised machine learning to detect both known and zero-day threats.

3. Contextual Intelligence: Incorporates NLP-based threat analysis, improving interpretability and decision-making.

4. Modular Architecture: Provides a scalable, adaptable, and interpretable system suitable for enterprise deployment.

5. Reduction of Alert Fatigue: Improves model accuracy and reduces false positives, supporting more efficient cybersecurity operations.

To further enhance the system:

Expand Data Sources: Incorporate more diverse and real-time threat feeds to improve detection coverage.

Advanced ML Models: Integrate deep learning models such as LSTM or Graph Neural Networks for predictive threat analysis.

Feedback Mechanisms: Implement continuous feedback loops for adaptive learning from new threats.

System Optimization: Utilize GPU acceleration and distributed computing for faster model training and real-time analytics.

Explainable AI (XAI): Incorporate techniques to provide interpretable insights into model decisions for security analysts.

Future work can focus on:

Real-Time Threat Prediction: Developing models capable of real-time monitoring and immediate threat prediction for dynamic networks.

Adaptive Learning Systems: Creating models that continuously learn from new threats and evolve autonomously.

Integration with SIEM and SOC Platforms: Deploying AI-powered CTI systems directly within security operations centers for end-to-end automation.

Cross-Domain Intelligence Sharing: Facilitating collaborative threat intelligence sharing between organizations for enhanced collective defense.

Advanced Anomaly Detection: Exploring hybrid approaches combining ML, DL, and graph analytics to detect complex, multi-stage attacks.

Future work could also adapt the model's NLP capabilities to monitor emerging digital platforms for sophisticated social engineering threats, such as deepfake propagation and identity theft in live-streaming environments [6].

The research demonstrates that an AI-driven, integrated CTI system significantly enhances cybersecurity capabilities by providing accurate, interpretable, and actionable intelligence. By leveraging machine learning, data analytics, and adaptive pipelines, organizations can strengthen proactive defense strategies and mitigate risks from evolving cyber threats. The proposed framework lays a foundation for continuous improvements and innovation in AI-powered threat intelligence research.

Acknowledgment

The authors acknowledge that all contributors listed have participated equally in the preparation and development of this manuscript.

[1] T. Chen and C. Guestrin, "XGBoost: A scalable tree boosting system," in Proc. 22nd ACM SIGKDD Int. Conf. Knowledge Discovery and Data Mining, 2016, pp. 785–794.

[2] F. T. Liu, K. M. Ting, and Z.-H. Zhou, "Isolation Forest," in Proc. 8th IEEE Int. Conf. Data Mining (ICDM), 2008, pp. 413–422.

[3] M. Babar, P. Mahalle, I. Stojmenovic, and N. Prasad, "Cyber threat intelligence for smart networks: AI-based approaches and challenges," IEEE Access, vol. 8, pp. 213456–213470, 2020.

[4] A. Sharma, P. Gupta, and R. Singh, "AI-driven anomaly detection in network traffic: Challenges and solutions," Journal of Information Security and Applications, vol. 48, p. 102345, 2019.

[5] M. S. H. S. Bose, A. Talukder, and M. A. Kabir, "A Survey on Natural Language Processing for Cyber Threat Intelligence," arXiv preprint arXiv:2305.05358, 2023.

[6] S. A. Hashmi, "Cybersecurity challenges in livestreaming: Protecting digital anchors from deepfake and identity theft," Zenodo, 2024, doi:10.5281/zenodo.17085678.

[7] R. Perween and N. K. Singh, "A Comparative Study of Machine Learning Algorithms for Credit Card Fraud Detection," International Research Journal of Engineering and Technology (IRJET), vol. 12, no. 09, pp. 455–460, Sep. 2025.

[8] N. Sahu, "K–Means algorithm for analysis of cybercrime data," JETIR, vol. 09, no. 01, Jan. 2022, ISSN: 2349-5162.

[9] S. A. Hashmi, "The Python Paradigm: A Twenty-Five Year Retrospective on its Strategic Dominance Over Contending Languages and its Ascendancy as the Indispensable Engine of Modern AI, IoT, GIS, and Cybersecurity," Zenodo, 2024, doi:10.5281/zenodo.17282464.

Dr. Bakhtawer Shameem is a researcher and academic with a Ph.D. in Image Processing, specializing in the application of Deep Learning and AI for innovative solutions in areas such as wildlife conservation and cybersecurity.

Dr. Nagendra Sahu is an academic and researcher with 13+ years' experience, specializing in data mining and cybercrime analysis, with multiple international publications.

Dr. Anirudh Kumar Tiwari is a researcher specializing in IoT-based intrusion detection systems, cybercrime analysis, and machine learning applications for security and predictive analytics.

Dr. Satish Tewalkar is a Guest Lecturer with an M.Phil., MCA, and Ph.D., who has been serving as a teaching faculty member at the CG Higher Education Department.

Thanendra Kashyap is a UGC NET-qualified computer science educator and researcher with industry experience as a Software Engineer, currently serving as a Guest Lecturer.