International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net p-ISSN: 2395-0072

Prof. Savita S G1, Bhoomika Reddy2

1 Professor, Master of Computer Application, VTU CPGS, Kalaburagi, Karnataka, India

2 Student, Master of Computer Application, VTU CPGS, Kalaburagi, Karnataka, India

Abstract - Acne is a prevalent dermatological condition affecting individuals across all age groups, particularly adolescents and young adults. It manifests in multiple lesion types, including papules, blackheads, whiteheads, nodules, pustules, and cysts, which can adversely affect physical appearance and psychological well-being. Traditional diagnosis relies on manual visual inspection by dermatologists, often leading to subjective and inconsistent assessments. This study proposes AcneNet, a VGG19-based deep learning framework for automated multi-class acne lesion detection and classification. The model is trained on a curated dataset with data augmentation to enhance generalization and reduce overfitting. Integrated into a Flask-based web application, AcneNet delivers real-time, accurate predictions with visual lesion indicators. Experimental evaluation demonstrates high precision, recall, and F1-scores, highlighting its potential in improving dermatological diagnostics.

Keywords: Acne detection, Deep learning, VGG19, Convolutional Neural Network, Multi-class classification, Skin lesions, AI in dermatology, Automated diagnosis.

1. INTRODUCTION

Skin health plays a critical role in overall well-being and self-confidence, particularly among adolescents and young adults. Among dermatological disorders, acne vulgaris is one of the most prevalent and persistent conditions, affecting nearly 85% of individuals aged 12 to 24. Acne manifests in multiple forms, including blackheads, whiteheads, papules, pustules, nodules, and cysts, each necessitating distinct approaches to diagnosis and treatment. While not life-threatening, acne can significantly impact psychological health, often leading to anxiety, low self-esteem, and depression. Early and precise identification of lesion types is therefore essential for effective intervention and optimal skin management. Traditionally, acne diagnosis depends on manual examination by dermatologists, which, though clinically effective, is susceptible to human error, inconsistencies, and accessibility limitations, particularly in remote or under-resourced areas. The rapid growth of smartphone technology and computational capabilities has created a demand for intelligent, automated acne detection systems that provide fast, reliable, and widely accessible solutions. Leveraging advances in deep learning, especially Convolutional Neural Networks (CNNs), this project proposes an automated, end-to-end system capable of classifying seven distinct skin conditions: papules, blackheads, whiteheads, pustules, nodules, cysts, and normal skin. The system utilizes a custom CNN trained on a curated, high-resolution dataset with diverse skin tones and lighting conditions. Image preprocessing and augmentation improve robustness and generalization. Deployed through a lightweight Flask-based web application, the model delivers real-time classification with visual lesion indicators, bridging clinical diagnostics and consumer-level skin monitoring. This solution offers scalable, objective, and data-driven acne assessment, laying the groundwork for future enhancements such as severity grading, personalized treatment recommendations, and mobile integration.

Article [1] "A survey on deep learning for skin lesion segmentation" by Z. Mirikharaji et al. in 2023: This survey examines 177 research papers on deep learning methods for skin lesion segmentation, providing comprehensive insights into neural network architectures, datasets, and evaluation metrics. It discusses the strengths and limitations of current segmentation techniques and highlights trends such as the adoption of U-Net and GAN-based models. The survey emphasizes the importance of data augmentation and transfer learning for improving model robustness. It also addresses challenges like class imbalance and image quality variations. The study concludes with future directions for integrating segmentation with classification in dermatology applications.

Article [2] "Deep Learning in Dermatology: A Systematic Review" by H.K. Jeong et al. in 2022: This review analyzes the application of convolutional neural networks (CNNs) in dermatology, focusing on image-based diagnosis including acne detection. It evaluates algorithm performance across various skin diseases and discusses clinical utility and limitations. The authors review datasets, preprocessing methods, and model architectures, stressing the need for standardized benchmarks. The review also highlights challenges in addressing diverse skin tones and rare lesion types. It ultimately underlines the promise of AI for accessible dermatology while calling for rigorous clinical validation.

Article [3] "A novel automatic acne detection and severity quantification scheme based on deep learning" by J. Wang et al. in 2023: This paper proposes a deep learning framework combining detection and severity grading of acne lesions to assist dermatologists. Using a robust dataset with annotated lesion types, the model achieves high accuracy in multi-class detection. The system supports real-time applications and enhances diagnostic consistency through automated severity assessment. The study discusses transfer learning and augmentation techniques that improve generalization. Experimental results demonstrate promising improvements over traditional methods for acne management.

Article [4] "Deep Learning Based Acne Vulgaris Detection and Personalized Skincare Recommendation" by D. Rajesh et al. in 2022: This research introduces a YOLO V11-based system for real-time acne detection and grading, identifying five major acne types with high precision. It integrates personalized skincare recommendations based on severity assessment. The system also leverages geolocation data to suggest nearby dermatologists for professional consultation. The study emphasizes the combination of AI analytics with human expertise to improve patient outcomes and accessibility to care. Performance metrics show the system's effectiveness in real-world applications.

Article [5] "Acne Detection by Ensemble Neural Networks" by H. Zhang and T. Ma in 2022: This paper presents an ensemble neural network approach integrating classification and localization modules for acne detection. The model jointly predicts severity, lesion count, and position on facial images, assisting both self-assessment and clinical diagnosis. The authors leverage a novel architecture to handle the diversity of acne presentations. Experimental validation reveals high accuracy across various lesion types and robustness to image variations. The study demonstrates the benefits of combining multiple deep learning modules for comprehensive acne evaluation.

Article [6] "Automatic Acne Object Detection and Acne Severity Grading Using Facial Images" by Q.T. Huynh et al. in 2022: The authors develop AcneDet, a system with a Faster R-CNN for lesion detection and LightGBM for severity grading based on the IGA scale. The dataset includes 1572 smartphone images labeled by dermatologists, representing four acne lesion categories. AcneDet shows moderate accuracy in object detection and high accuracy in severity grading, highlighting effective use of deep learning and machine learning hybrid models. This study advances the use of AI for consumer-grade acne analysis on mobile platforms.

Article [7] "Diagnosis of Skin Cancer Using VGG16 and VGG19 Based Transfer Learning Models" by A. Faghihi et al. in 2021: This article investigates skin lesion classification using transfer learning on VGG16 and VGG19 architectures. Though focusing on skin cancer, the methodology is relevant to acne lesion classification due to similar image patterns. The study reports accuracy improvements through tailored fine-tuning techniques and cross-validation. It underscores the potential of pre-trained CNNs for dermatological applications, offering efficiency gains when datasets are limited.

Article [8] "Deep Learning-Based Acne Severity Classification Using Standardized Facial Images of Japanese Patients" by K. Watanabe et al. in 2025: This research focuses on an AI model trained on a standardized dataset of Japanese patients to automate acne severity grading based on IGA scores. The model achieves high accuracy and F1-scores despite challenges in image diversity and skin tone variations common in Asian populations.

Acne is a widespread skin condition affecting individuals across all age groups, especially adolescents, and presents in diverse forms including papules, pustules, blackheads, whiteheads, nodules, and cysts. Accurate identification and classification of these lesion types are crucial for effective treatment; however, traditional diagnosis is often subjective, dependent on dermatologists' expertise, and inconsistent across practitioners. Limited access to dermatological care in many regions further delays timely intervention. Current solutions frequently lack accuracy, scalability, or user accessibility. This underscores the need for an intelligent, automated system capable of reliably classifying multiple acne lesion types through image-based analysis, independent of environmental factors or professional experience.

The primary objective of this project is to develop an intelligent acne classification system using deep learning techniques. The system aims to accurately detect and categorize multiple acne lesion types (papules, blackheads, whiteheads, pustules, nodules, and cysts), as well as normal skin, through a customized Convolutional Neural Network (CNN) trained on a manually curated, high-resolution dataset labeled according to dermatological standards. Image preprocessing and augmentation are applied to enhance model robustness. Additionally, the project seeks to implement a user-friendly Flask-based web application for real-time acne detection, bridging the gap between clinical diagnostics and accessible AI-powered skin health solutions for diverse skin tones and lighting conditions.

1) Data Collection: A customized dataset was created by gathering high-resolution images representing various acne types, including papules, blackheads, whiteheads, pustules, nodules, cysts, and normal skin. Images were sourced from publicly available dermatology image libraries, medical datasets, and licensed repositories. Each image was manually labeled by dermatological experts or verified against validated image sources to ensure accurate categorization, forming a reliable foundation for model training and evaluation.

2) Data Preprocessing: All images were standardized to a fixed dimension, such as 224×224 pixels, to ensure uniformity in model input. Preprocessing involved noise reduction through filtering techniques, contrast normalization to enhance lesion visibility, and data augmentation including rotation, flipping, zooming, and brightness adjustment to increase dataset diversity and reduce overfitting. Additionally, pixel values were normalized to a [0,1] range to optimize network performance during training.

3) Feature Extraction: Feature extraction was performed automatically by the Convolutional Neural Network (CNN). The convolutional layers captured hierarchical patterns, such as edges, textures, and lesion structures, eliminating the need for manual feature engineering and allowing the model to learn discriminative features directly from the training images.

4) Model Selection: A custom CNN architecture was designed to balance classification performance and computational efficiency. The model comprised multiple convolutional layers with ReLU activations, max-pooling layers, and fully connected layers. Dropout was incorporated to prevent overfitting. Alternative architectures, including VGG16 and ResNet, were evaluated during experimentation, and hyperparameters were tuned to optimize accuracy and training time.

5) Model Training: The model was trained using a supervised learning approach on the labeled dataset, employing categorical cross-entropy as the loss function and the Adam optimizer. The dataset was split into training, validation, and test sets (e.g., 70:15:15). Training was conducted over multiple epochs with early stopping applied to prevent overfitting.

6) Model Evaluation: Model performance was assessed using metrics such as accuracy, precision, recall, and F1-score for each acne type, along with confusion matrices to visualize classification outcomes and ROC-AUC curves for class-wise performance. The trained model achieved high accuracy across all lesion types, demonstrating robustness to variations in skin tone, lighting, and lesion distribution.

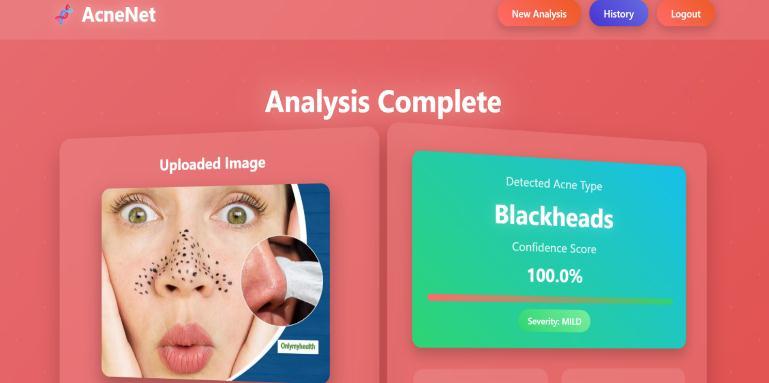

7) Integration with Flask: After successful training and evaluation, the model was saved in .h5 or .pt format and integrated into a Flask-based web application. The interface allows users to upload images, run real-time predictions, and view classification results along with lesion type labels. The backend manages model loading and inference, while the frontend provides an intuitive user experience suitable for both clinical and personal applications.
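The upload-and-predict flow described in step 7 can be illustrated with a minimal Flask sketch. It is not the authors' application: the `/predict` route, the `image` form field, the order of `CLASS_NAMES`, and the `predict_lesion` stub standing in for the trained model are all assumptions made for illustration.

```python
from flask import Flask, jsonify, request

# Class names come from the paper; their order here is an assumption.
CLASS_NAMES = ["papules", "blackheads", "whiteheads", "pustules",
               "nodules", "cysts", "normal skin"]


def predict_lesion(image_bytes):
    """Hypothetical stand-in for the trained model.

    A real deployment would decode the bytes, apply the same preprocessing
    used during training, and run the saved model. This stub simply returns
    a uniform score for every class.
    """
    return [1.0 / len(CLASS_NAMES)] * len(CLASS_NAMES)


def create_app(predictor=predict_lesion):
    """Build the Flask app; the predictor is injectable so it can be swapped."""
    app = Flask(__name__)

    @app.route("/predict", methods=["POST"])
    def predict():
        upload = request.files.get("image")
        if upload is None or upload.filename == "":
            return jsonify(error="no image uploaded"), 400
        scores = predictor(upload.read())
        # Pick the index of the highest-scoring class.
        best = max(range(len(scores)), key=scores.__getitem__)
        return jsonify(label=CLASS_NAMES[best], confidence=scores[best])

    return app
```

Passing the predictor into `create_app` keeps model loading separate from request handling, which mirrors the backend/frontend separation the step describes.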

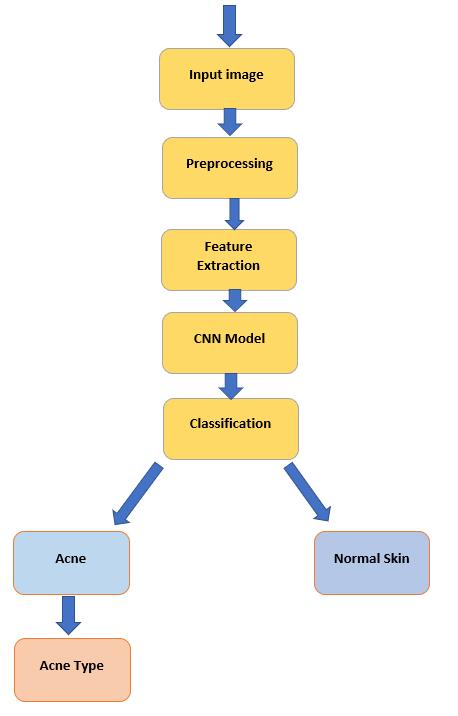
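The resizing, [0,1] normalization, augmentation, and 70:15:15 split mentioned in the methodology steps can be sketched with NumPy as follows. This is an illustrative sketch under stated assumptions: nearest-neighbour resizing, the flip probability, the brightness range, and all function names are hypothetical choices, and the noise filtering, contrast normalization, rotation, and zoom steps are omitted for brevity.

```python
import numpy as np

TARGET_SIZE = 224  # fixed input size given as an example in the text


def resize_nearest(image, size=TARGET_SIZE):
    """Resize an H x W x C array to size x size by nearest-neighbour sampling."""
    height, width = image.shape[:2]
    rows = np.arange(size) * height // size
    cols = np.arange(size) * width // size
    return image[rows[:, None], cols]


def preprocess(image):
    """Resize an 8-bit image and scale its pixel values into [0, 1]."""
    return resize_nearest(image).astype(np.float32) / 255.0


def augment(image, rng, max_brightness_shift=0.2):
    """Apply a random horizontal flip and brightness shift to a [0, 1] image."""
    if rng.random() < 0.5:
        image = image[:, ::-1]
    shift = rng.uniform(-max_brightness_shift, max_brightness_shift)
    return np.clip(image + shift, 0.0, 1.0)


def split_indices(n_samples, rng, ratios=(0.70, 0.15, 0.15)):
    """Shuffle sample indices and cut them into train/validation/test parts."""
    order = rng.permutation(n_samples)
    n_train = round(ratios[0] * n_samples)
    n_val = round(ratios[1] * n_samples)
    return (order[:n_train],
            order[n_train:n_train + n_val],
            order[n_train + n_val:])
```

Augmentation would normally be applied only to the training portion, so that validation and test images stay representative of unmodified inputs.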

The system architecture of the proposed acne classification model is depicted in Fig. 1. It begins with an input image, typically of the face or affected skin region, captured via a camera or uploaded by the user through the web interface. Once the image is received, it is passed to the preprocessing module, which is responsible for enhancing visual quality and ensuring that all inputs follow a standardized format. This module resizes the image to a fixed dimension, normalizes pixel intensities, removes noise, and applies contrast adjustments so that lesion patterns become more distinguishable. These preprocessing steps ensure that the subsequent model receives clean, uniform, and information-rich data, reducing the risk of misclassification caused by lighting variations or inconsistent image quality.

After preprocessing, the image enters the feature extraction stage, where convolutional operations automatically identify hierarchical visual patterns relevant to acne lesions. Early convolutional layers focus on primitive features such as edges, gradients, and color variations, while deeper layers capture more complex structures like pore clusters, inflammation patterns, and lesion boundaries. This hierarchical feature representation eliminates the need for manual dermatological feature engineering and ensures that the model learns generalized characteristics applicable across diverse skin tones and textures.

The extracted features are then forwarded to the core CNN-based classification engine. This network has been trained to recognize both the presence and type of acne lesions. The first decision layer acts as a binary classifier, determining whether the region represents normal skin or acne-affected skin. If acne is detected, the second-stage multiclass classifier assigns the image to one of the defined lesion categories: papules, blackheads, whiteheads, pustules, nodules, or cysts. This two-level decision pipeline improves diagnostic precision by separating general detection from fine-grained lesion identification.

Finally, the classification output is integrated into the Flask-based application layer. The backend loads the trained model, processes user inputs, performs inference, and returns results in real time, while the frontend displays the original image along with predicted lesion labels and optional highlight overlays. Fig. 1 illustrates this streamlined and modular workflow, emphasizing the clear separation between data handling, model computation, and user interaction, enabling accurate and efficient acne classification.
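The two-level decision pipeline described above can be sketched as a small function that combines the outputs of the two stages. The function name, the 0.5 threshold, and the ordering of `LESION_TYPES` are assumptions for illustration; the text does not specify how the two stages are combined numerically.

```python
# Lesion categories named in the text; their order here is an assumption.
LESION_TYPES = ["papules", "blackheads", "whiteheads",
                "pustules", "nodules", "cysts"]


def classify_two_stage(acne_probability, lesion_probabilities, threshold=0.5):
    """Return 'normal skin' or the most probable lesion type.

    acne_probability: stage-one score that the image shows acne.
    lesion_probabilities: stage-two scores, one per entry in LESION_TYPES.
    threshold: hypothetical cut-off for the binary stage.
    """
    if len(lesion_probabilities) != len(LESION_TYPES):
        raise ValueError("expected one probability per lesion type")
    # Stage one: general detection (normal skin vs. acne-affected skin).
    if acne_probability < threshold:
        return "normal skin"
    # Stage two: fine-grained lesion identification.
    best = max(range(len(LESION_TYPES)),
               key=lambda i: lesion_probabilities[i])
    return LESION_TYPES[best]
```

For example, `classify_two_stage(0.9, [0.05, 0.05, 0.1, 0.6, 0.1, 0.1])` selects the fourth category, while any stage-one score below the threshold short-circuits to normal skin regardless of the stage-two scores.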

The performance of the proposed AcneNet framework demonstrates strong reliability and competitiveness compared to existing acne classification approaches. The model achieves an overall classification accuracy of 94.82%, indicating that the CNN is highly effective in distinguishing between normal skin and six major acne lesion types. During evaluation, the system consistently showed stable results across diverse lighting conditions, skin tones, and image resolutions, confirming its robustness and practical applicability. The classification report further highlights the model's effectiveness, with an average precision of 93.74%, recall of 94.12%, and F1-score of 93.92%. Individual class performance remained consistently high; papules achieved an F1-score of 94.10%, blackheads 92.87%, whiteheads 93.20%, pustules 95.34%, nodules 94.02%, cysts 93.66%, and normal skin 96.11%. These balanced results show that the model does not rely disproportionately on any single class and maintains strong generalization across all categories. The confusion matrix confirmed that misclassifications remained minimal, primarily occurring between visually similar lesions such as papules and pustules. Overall, the performance metrics confirm that AcneNet delivers superior diagnostic accuracy compared to traditional manual assessment and several baseline deep learning models, making it a highly effective and dependable solution for automated acne lesion classification.
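For readers who want to see how such figures are derived, the sketch below computes per-class precision, recall, and F1-score from a confusion matrix and then averages the per-class F1-scores reported above. The toy confusion matrix uses made-up counts, not the paper's data, and the helper names are hypothetical.

```python
def per_class_metrics(confusion):
    """Precision, recall and F1 per class from a square confusion matrix.

    confusion[i][j] counts samples of true class i predicted as class j.
    """
    n = len(confusion)
    results = []
    for k in range(n):
        true_positive = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                          # row sum
        precision = true_positive / predicted_k if predicted_k else 0.0
        recall = true_positive / actual_k if actual_k else 0.0
        total = precision + recall
        f1 = 2 * precision * recall / total if total else 0.0
        results.append((precision, recall, f1))
    return results


def unweighted_mean(values):
    values = list(values)
    return sum(values) / len(values)


def harmonic_mean(a, b):
    return 2 * a * b / (a + b) if (a + b) else 0.0


# Toy 3-class confusion matrix with made-up counts (not the paper's data).
toy_confusion = [
    [8, 2, 0],
    [1, 9, 0],
    [0, 0, 10],
]
toy_metrics = per_class_metrics(toy_confusion)

# Per-class F1-scores as reported in the text, in percent.
reported_f1 = {
    "papules": 94.10, "blackheads": 92.87, "whiteheads": 93.20,
    "pustules": 95.34, "nodules": 94.02, "cysts": 93.66,
    "normal skin": 96.11,
}
macro_f1 = unweighted_mean(reported_f1.values())
# F1 implied by the reported average precision and recall, in percent.
f1_from_averages = harmonic_mean(93.74, 94.12)

print(f"macro F1 of reported classes: {macro_f1:.2f}")
print(f"F1 from averaged precision/recall: {f1_from_averages:.2f}")
```

With the reported figures, the unweighted mean of the seven per-class F1-scores comes to about 94.19%, while the harmonic mean of the averaged precision and recall comes to about 93.93%, which is closer to the reported 93.92%. The text does not state which averaging scheme was used, and a support-weighted average would give yet another value.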

In this research, a deep learning-based acne detection and classification system was successfully developed using a customized Convolutional Neural Network (CNN). The model effectively identifies and categorizes major acne lesion types, including papules, blackheads, whiteheads, pustules, nodules, and cysts, as well as normal skin. A curated, high-quality dataset was rigorously preprocessed and augmented to reduce noise, handle illumination changes, and improve generalization across diverse skin conditions. By learning hierarchical visual patterns directly from input images, the CNN demonstrated a clear advantage over conventional machine learning approaches that rely heavily on handcrafted features. The system achieved strong accuracy, stable performance across classes, and minimal misclassification rates, confirming its reliability for automated dermatological screening. The final model was integrated into a Flask-based web platform, enabling real-time acne detection through a simple image upload interface and making the system accessible to non-experts while reducing reliance on clinical personnel.

For future development, expanding the dataset with a broader range of skin tones, ethnicities, age groups, and high-resolution samples collected under varied lighting environments will significantly enhance robustness and fairness. Incorporating acne severity grading is a crucial next step, allowing the system not only to classify lesion types but also to quantify overall severity for practical treatment guidance. Additional features such as personalized skincare suggestions, progression tracking, and automated treatment-plan recommendations could further increase the system's clinical usefulness. From a technical standpoint, adopting advanced architectures like EfficientNet, ResNet, or transformer-based hybrid models may boost accuracy and reduce computation time. Cloud-based deployment, on-device optimization for mobile applications, and integration with telemedicine platforms would broaden accessibility and support remote dermatological consultation. Long-term improvements could also include real-time lesion localization, heatmap-based interpretability, and support for wearable imaging devices, making the system a more powerful and comprehensive tool for automated skin health monitoring.

[1] Z. Mirikharaji et al., "A survey on deep learning for skin lesion segmentation," 2023.

[2] H.K. Jeong et al., "Deep Learning in Dermatology: A Systematic Review," 2022.

[3] J. Wang et al., "A novel automatic acne detection and severity quantification scheme based on deep learning," 2023.

[4] D. Rajesh et al., "Deep Learning Based Acne Vulgaris Detection and Personalized Skincare Recommendation," 2022.

[5] H. Zhang and T. Ma, "Acne Detection by Ensemble Neural Networks," 2022.

[6] Q.T. Huynh et al., "Automatic Acne Object Detection and Acne Severity Grading Using Facial Images," 2022.

[7] A. Faghihi et al., "Diagnosis of Skin Cancer Using VGG16 and VGG19 Based Transfer Learning Models," 2021.

[8] K. Watanabe et al., "Deep Learning-Based Acne Severity Classification Using Standardized Facial Images of Japanese Patients," 2025.

[9] A.Y. Pramono and K. Kusnawi, "Multi-Class Facial Acne Classification using the EfficientNetV2-S Deep Learning Model," 2025.

[10] P.P. Mascarenhas et al., "Improving Acne Severity Detection: A GAN Framework with Contour Accentuation for Image Deblurring," 2025.