International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Miss. Aparna Patil1, Dr. Kishor Pandyaji2, Prof. Manoj Chavan3

1Student, 2Professor, 3Professor, Department of Electronics Engineering

Dr. V.P.S.S.Ms Padmabhooshan Vasantraodada Patil Institute of Technology, Budhgaon, Sangli, Maharashtra 416304, India.

Abstract - Bridging the communication divide for individuals with hearing and speech impairments requires innovative technological solutions. This research introduces a cost-effective, vision-based framework designed to interpret static gestures from Indian Sign Language (ISL) and convert them into textual and auditory outputs. The proposed system circumvents the need for high-end hardware by utilizing a conventional webcam for visual input. The core processing involves a multi-stage image analysis strategy: hand region isolation through skin color segmentation in the YCrCb color space, image binarization via Otsu's method, and feature characterization using centroid-radial distance metrics and the Convex Hull technique. A Learning Vector Quantization (LVQ) neural network is employed for classification, selected for its proficiency in managing complex pattern recognition tasks with overlapping class distributions. The model was trained on a custom dataset encompassing all 26 ISL alphabets and achieved reliable real-time performance. The system delivers a dual-mode output, displaying recognized text and generating synthetic speech, thereby facilitating bidirectional communication. This work underscores the viability of a software-driven approach to develop accessible assistive technologies that enhance social participation for the deaf and mute community.

Key Words: Indian Sign Language (ISL), Static Gesture Recognition, Convex Hull, Learning Vector Quantization (LVQ), Image Analysis, Human-Machine Interaction (HMI), Assistive Technology.

Non-verbal communication through hand gestures offers a rich and intuitive channel for human interaction, serving as a critical medium for individuals with hearing and speech disabilities. The automation of gesture interpretation holds immense potential for developing assistive technologies that can significantly improve quality of life and social integration. In India, where millions are affected by hearing loss, such systems can act as a vital conduit to the broader society, reducing dependency on human interpreters and fostering independence.

Research in automated sign language recognition has historically followed two paths: device-based and vision-based approaches. Device-based methods, such as instrumented gloves, offer high precision but are often costly, cumbersome, and socially stigmatizing. Conversely, vision-based techniques provide a non-contact, user-friendly, and economically feasible alternative by using cameras to capture and analyze gestures. This paper aligns with the vision-based paradigm, presenting a dedicated system for recognizing static ISL gestures.

The proposed framework is built on a multi-stage image processing workflow. It begins with image capture via a standard webcam, followed by robust hand segmentation in the YCrCb color space to mitigate lighting variations. Key shape features are then extracted using the Convex Hull algorithm and radial distance calculations, forming a descriptive feature vector. This vector is classified by an LVQ neural network, chosen for its effectiveness in scenarios with complex decision boundaries. The final output is presented as both on-screen text and computer-generated speech.

The principal contribution of this work is the development and implementation of a practical, software-centric tool that accurately deciphers ISL alphabets. By providing a seamless translation from gesture to speech and text, this system aims to empower users, thereby promoting inclusivity and autonomy. Subsequent sections discuss prior research, the detailed methodology, experimental outcomes, and concluding remarks.

The domain of sign language recognition has evolved significantly, with a clear shift from hardware-dependent to vision-based solutions. Early systems leveraged data gloves [4, 5], which, despite their accuracy, were impractical for daily use due to cost and obtrusiveness. The advent of vision-based methods marked a pivotal turn, focusing on using cameras for a more natural user experience.

A critical step in vision-based recognition is the reliable segmentation of the hand from the background. Researchers have experimented with various color models like RGB, HSV, and YCbCr to create robust skin color models for this purpose [6, 7]. For converting the segmented region into a binary image, Otsu's global thresholding technique [8] has been widely adopted for its efficiency, and it is integrated into our pipeline.

Feature extraction is paramount for distinguishing between different gestures. Techniques such as Principal Component Analysis (PCA) for data compression [9] and Zernike moments for rotation-invariant shape description [15] have been explored. In this work, we utilize the Convex Hull [11] to obtain a simplified geometric representation of the hand contour. The vertices and defects of this hull provide a robust feature set that is largely invariant to orientation and scale.

The classification stage has been addressed using diverse machine learning models. Hidden Markov Models (HMMs) have been successful for dynamic gestures involving temporal sequences [12]. For static postures, Artificial Neural Networks (ANNs) are commonly used [13]. We selected the Learning Vector Quantization (LVQ) network, a supervised neural model, for its strong performance in pattern classification, especially when classes are not linearly separable [14]. A comparative summary of different methodologies is presented in Table 1, highlighting the distinct advantages of our chosen approach.

Table -1: Comparison of Different Recognition Approaches

| Primary Method of Recognition |
|---|
| Artificial Neural Network (ANN) [13] |
| Hidden Markov Models (HMM) [12] |
| K-Nearest Neighbors (K-NN) [15] |

3. PROPOSED METHODOLOGY

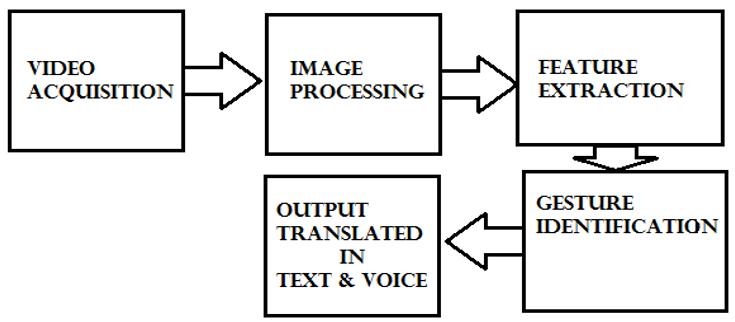

The architecture for the ISL recognition system is designed as a sequential pipeline, encompassing image acquisition, preprocessing, feature extraction, classification, and output generation.

Fig 1:- General Block Diagram of Proposed System

The system is architected with modularity in mind, ensuring each component has a distinct role. The hardware setup involves a standard webcam, chosen for its ubiquity and low cost, to capture gesture images. The software core is implemented in MATLAB, leveraging its integrated toolboxes for image processing and neural network development. This separation allows for flexible system updates and maintenance.

Data flows unidirectionally through the pipeline. Each module processes its input and passes the result to the next, minimizing latency and making the system suitable for real-time interaction. The process culminates in a dual-output system that displays text and produces audio, creating a comprehensive communication aid.

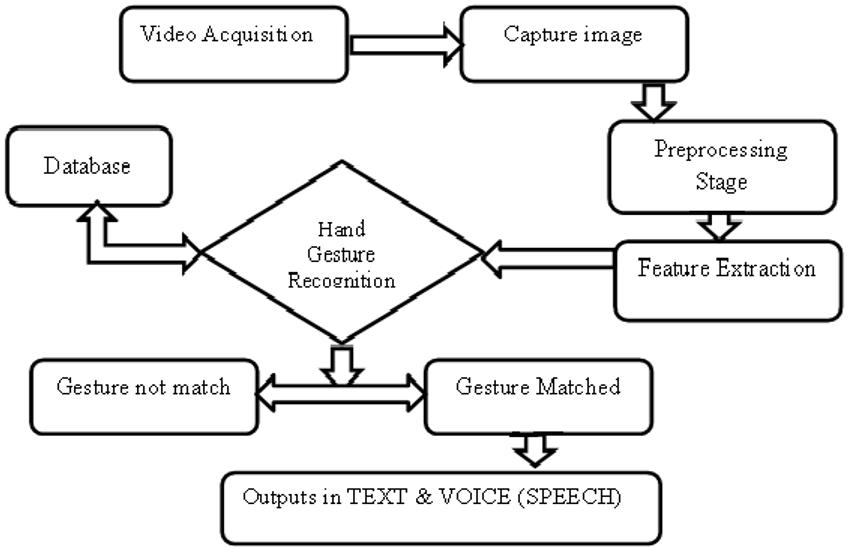

Fig 2:- System Flow Chart

The operation commences with the webcam capturing live video frames. Each frame is converted from the RGB color space to the YCrCb color space. This conversion is crucial as it separates the luminance (Y) from the chrominance (Cr, Cb) components, making skin color detection less sensitive to lighting changes.
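The color conversion can be illustrated with a minimal Python sketch. The paper's implementation is in MATLAB and its exact conversion routine is not given, so the function name `rgb_to_ycrcb` and the use of the common full-range BT.601 coefficients are assumptions made here for illustration only.

```python
def rgb_to_ycrcb(r, g, b):
    """Convert one 8-bit RGB pixel to (Y, Cr, Cb).

    Assumption: full-range BT.601 weights, a common convention;
    the paper does not state which variant it uses.
    """
    # Luminance is a weighted sum of the three channels.
    y = 0.299 * r + 0.587 * g + 0.114 * b
    # Chrominance is the red/blue difference from luminance, centered at 128.
    cr = (r - y) * 0.713 + 128.0
    cb = (b - y) * 0.564 + 128.0

    def clamp(v):
        return max(0, min(255, int(round(v))))

    return clamp(y), clamp(cr), clamp(cb)


def convert_frame(rgb_frame):
    """Apply the conversion to a frame stored as rows of (R, G, B) tuples."""
    return [[rgb_to_ycrcb(*px) for px in row] for row in rgb_frame]
```

Any neutral gray pixel maps to Cr = Cb = 128 regardless of its brightness, which shows how intensity is carried mainly by Y while color information sits in the two chrominance channels.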

The subsequent step involves applying a skin color filter in the YCrCb space to isolate the hand region. This segmented region is then binarized using Otsu's thresholding method, which automatically determines the optimal threshold value to separate the foreground (hand) from the background. The binary image undergoes morphological operations (erosion followed by dilation) to remove noise and smooth the hand contour, resulting in a clean binary mask ready for feature extraction.
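The three preprocessing steps can be sketched in plain Python as follows. This is an illustrative sketch rather than the authors' MATLAB code: the function names are hypothetical, the Cr/Cb skin range is a commonly quoted default that the paper does not specify, and the 3x3 structuring element is likewise an assumption.

```python
def skin_mask(ycrcb_image, cr_range=(133, 173), cb_range=(77, 127)):
    """Mark pixels whose chrominance falls in an assumed skin range (not from the paper)."""
    return [[1 if cr_range[0] <= cr <= cr_range[1] and cb_range[0] <= cb <= cb_range[1] else 0
             for (_, cr, cb) in row] for row in ycrcb_image]


def otsu_threshold(gray_pixels):
    """Return the level t that maximizes between-class variance; pixels > t are foreground."""
    hist = [0] * 256
    for p in gray_pixels:
        hist[p] += 1
    total = len(gray_pixels)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w_bg, sum_bg = 0, 0
    for t in range(256):
        w_bg += hist[t]
        if w_bg == 0:
            continue                      # no background pixels yet
        w_fg = total - w_bg
        if w_fg == 0:
            break                         # no foreground pixels left
        sum_bg += t * hist[t]
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        var_between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def _morph(mask, rule):
    """Apply `rule` (all or any) over each pixel's 3x3 neighborhood, clipped at the borders."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [mask[j][i]
                      for j in range(max(0, y - 1), min(h, y + 2))
                      for i in range(max(0, x - 1), min(w, x + 2))]
            out[y][x] = 1 if rule(window) else 0
    return out


def erode(mask):
    return _morph(mask, all)


def dilate(mask):
    return _morph(mask, any)


def opening(mask):
    """Erosion followed by dilation: removes specks smaller than the 3x3 window."""
    return dilate(erode(mask))
```

Applied to a mask containing a solid 3x3 blob and one isolated pixel, `opening` keeps the blob and discards the isolated pixel, which is the noise-removal behavior the paragraph above describes.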

This phase converts the processed binary image into a discriminative feature vector. The process involves:

1. Centroid Calculation: The geometric center of the hand mass is computed using image moments.

2. Contour Detection: The outermost boundary of the hand is traced.

3. Convex Hull Generation: The smallest convex polygon enveloping the hand contour is constructed. The "defects" (indentations between the hull and the contour) are identified, which often correspond to gaps between fingers.

4. Radial Distance Feature: A set of distances from the centroid to the contour points at fixed angular intervals is calculated, creating a rotation-invariant signature.
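The centroid and convex hull steps listed above can be sketched in Python as shown below. The paper does not say which hull algorithm its MATLAB implementation uses, so Andrew's monotone chain is used here as one standard choice; the function names are hypothetical, and defect detection is not covered by this sketch.

```python
def centroid(mask):
    """Centroid of a binary mask from raw image moments: (m10/m00, m01/m00)."""
    m00 = m10 = m01 = 0
    for y, row in enumerate(mask):
        for x, v in enumerate(row):
            if v:
                m00 += 1      # area (zeroth moment)
                m10 += x      # first moment in x
                m01 += y      # first moment in y
    if m00 == 0:
        raise ValueError("mask contains no foreground pixels")
    return m10 / m00, m01 / m00


def convex_hull(points):
    """Convex hull of 2-D points via Andrew's monotone chain.

    Returns the hull vertices in order, starting from the lexicographically
    smallest point; collinear boundary points are dropped.
    """
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        # z-component of (a - o) x (b - o); positive for a left turn.
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)

    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)

    # The last point of each chain is the first point of the other.
    return lower[:-1] + upper[:-1]
```

For a square contour with extra interior and edge points, `convex_hull` returns only the four corners, which is the simplified geometric outline that the later feature vector builds on.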

The final feature vector is a combination of the normalized coordinates of the convex hull vertices, the depths and angles of the hull defects, and the radial distance signature.
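As an illustration of the radial distance component, the following Python sketch bins contour points by angle around the centroid and records the farthest point in each bin. The number of bins, the choice of the maximum distance per bin, and the normalization by the largest distance are all assumptions; the paper does not give these details, and the function name is hypothetical.

```python
import math


def radial_signature(contour, center, n_bins=8):
    """Distance from `center` to the contour in `n_bins` equal angular sectors.

    Each entry is the largest centroid-to-contour distance in that sector,
    divided by the overall largest distance so the result is scale-normalized.
    """
    cx, cy = center
    sig = [0.0] * n_bins
    for x, y in contour:
        dx, dy = x - cx, y - cy
        angle = math.atan2(dy, dx) % (2 * math.pi)          # map to [0, 2*pi)
        k = int(angle / (2 * math.pi) * n_bins) % n_bins    # sector index
        sig[k] = max(sig[k], math.hypot(dx, dy))
    peak = max(sig)
    return [d / peak for d in sig] if peak > 0 else sig
```

Because distances are measured from the centroid and divided by the peak value, this sketch gives the same signature when the hand is shifted or uniformly scaled. A rotation of the hand appears as a circular shift of the bins, so comparing signatures directly would additionally require a fixed reference direction; how the original system handles this is not stated.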

3.4 Classification and Output Generation

The extracted feature vector is fed into the LVQ neural network for classification. The LVQ network operates by comparing the input vector to a set of prototype vectors it learned during training. The class of the closest prototype is assigned to the input gesture.
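The nearest-prototype rule and a basic training loop can be sketched in Python as below. This assumes the simplest LVQ variant (LVQ1) with a linearly decaying learning rate; the paper does not state which variant, learning rate, or number of prototypes its MATLAB network uses, so every name and parameter here is illustrative.

```python
def nearest_prototype(x, prototypes):
    """Index of the prototype closest to x (squared Euclidean distance)."""
    def dist2(p):
        return sum((a - b) ** 2 for a, b in zip(x, p))
    return min(range(len(prototypes)), key=lambda i: dist2(prototypes[i][0]))


def lvq1_train(samples, prototypes, lr=0.3, epochs=20):
    """LVQ1 training sketch.

    samples:    list of (feature_vector, label)
    prototypes: list of (initial_vector, label)
    The winning prototype is pulled toward a sample with the same label
    and pushed away from a sample with a different label.
    """
    protos = [(list(vec), label) for vec, label in prototypes]
    for epoch in range(epochs):
        rate = lr * (1 - epoch / epochs)      # assumed linear decay
        for x, label in samples:
            i = nearest_prototype(x, protos)
            vec, proto_label = protos[i]
            sign = 1.0 if proto_label == label else -1.0
            moved = [v + sign * rate * (a - v) for v, a in zip(vec, x)]
            protos[i] = (moved, proto_label)
    return protos


def classify(x, protos):
    """Assign the label of the closest prototype."""
    return protos[nearest_prototype(x, protos)][1]
```

In use, `lvq1_train` would be called once on the labeled feature vectors, and `classify` would then be applied to the feature vector extracted from each new frame.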

Upon a successful classification with high confidence, the system displays the corresponding alphabet or word on the graphical user interface (GUI). Simultaneously, the text string is passed to a text-to-speech (TTS) engine, which synthesizes and vocalizes the output. This integrated approach ensures the system is useful for communication with both hearing and non-hearing individuals.

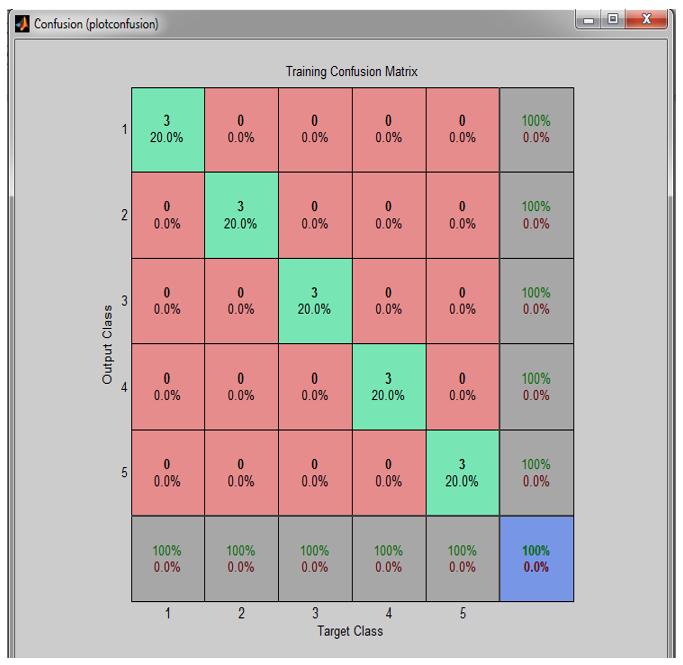

The proposed framework was implemented using MATLAB. A dataset of 78 images was created, containing 3 samples for each of the 26 ISL alphabets. The LVQ network was trained on this dataset.

The model's performance was evaluated using a confusion matrix, which showed a high concentration of correct predictions along the main diagonal, indicating strong classification accuracy with few confusions between classes.
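For readers unfamiliar with the evaluation, the following Python sketch shows how a confusion matrix and the overall accuracy are computed from true and predicted labels. The function names are hypothetical, and any labels passed to them in an example are illustrative inputs, not the paper's experimental data.

```python
def confusion_matrix(true_labels, predicted_labels, classes):
    """Rows index the true class, columns the predicted class."""
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for t, p in zip(true_labels, predicted_labels):
        matrix[index[t]][index[p]] += 1
    return matrix


def accuracy(matrix):
    """Fraction of samples on the main diagonal (correct predictions)."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return correct / total if total else 0.0
```

Off-diagonal entries identify which pairs of gestures are mistaken for each other, which is often more informative than the single accuracy figure when deciding which features to refine.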

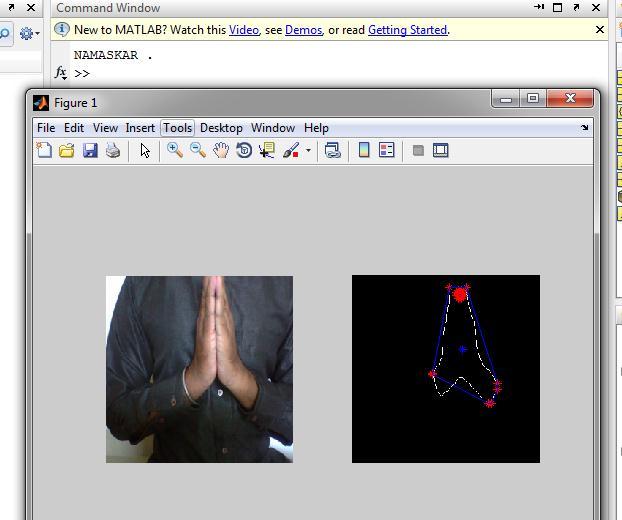

In this instance, the user performed the "NAMASKAR" gesture (a traditional Indian greeting with palms joined together). The left panel of the GUI displays the live video feed with the system's graphical overlay, showing the successfully detected hand contour (blue line), the calculated convex hull (green line), and the centroid (red dot). The right panel of the GUI clearly displays the recognized word "NAMASKAR" as the output. This successful recognition highlights the system's effectiveness in handling culturally specific and complex signs beyond simple alphabets.

This research presented a functional and affordable vision-based framework for recognizing static Indian Sign Language gestures. By employing a combination of YCrCb color space segmentation, Otsu's thresholding, Convex Hull and radial-based feature extraction, and LVQ neural network classification, the system successfully translates hand gestures into text and speech. This non-intrusive, software-focused solution serves as a practical communication tool for the hearing and speech impaired, encouraging greater social inclusion and self-reliance. The work demonstrates that sophisticated assistive technology can be built without expensive hardware, establishing a foundation for future research into recognizing complex, dynamic signs.

[1] Patil and G. V. Lohar, "Hand Motion Translator for Speech and Hearing Impaired," in International Conference on Convergence of Technology, 2014.

[2] T. S. Huang and V. I. Pavlovic, "Hand Gesture Modeling, Analysis, and Synthesis," in Proc. of International Workshop on Automatic Face and Gesture Recognition, Zurich, 1995.

[3] F. Ullah, "American Sign Language recognition system for hearing impaired people using Cartesian Genetic Programming," in 5th International Conference on Automation, Robotics and Applications (ICARA), 2011.

[4] Y. F. Admasu and K. Raimond, "Ethiopian sign language recognition using Artificial Neural Network," in 10th International Conference on Intelligent Systems Design and Applications (ISDA), 2010.

[5] G. Fang, W. Gao, and J. Ma, "Signer-independent sign language recognition based on SOFM/HMM," in IEEE ICCV Workshop on Recognition, Analysis, and Tracking of Faces and Gestures in Real-Time Systems, 2001.

[6] Sachin S. K. et al., "Novel Segmentation Algorithm for Hand Gesture Recognition," in IEEE Conference on Information and Communication Technologies (ICT), 2013.

[7] M. M. Hasan and P. K. Mishra, "HSV brightness factor matching for gesture recognition system," International Journal of Image Processing (IJIP), vol. 4, no. 5, 2010.

[8] N. Otsu, "A Threshold Selection Method from Gray-Level Histograms," IEEE Transactions on Systems, Man, and Cybernetics, vol. 9, no. 1, pp. 62-66, Jan. 1979.

[9] Divya Deora and Nikesh Bajaj, "Indian sign language recognition," in 1st International Conference on Emerging Technology Trends in Electronics, Communication and Networking, 2012.

[10] Zhongliang, Q. and Wenjun, W., "Automatic Ship Classification by Superstructure Moment Invariants and Two-stage Classifier," in ICCS/ISITA '92. Communications on the Move, 1992.

[11] R. Minhas and J. Wu, "Invariant Feature Set in Convex Hull for Fast Image Registration," in IEEE International Conference, 2007.

[12] P. Vamplew, "Recognition of sign language gestures using neural networks," in European Conference on Disabilities, Virtual Reality and Associated Technologies, Maidenhead, U.K., 1996.

[13] Adithya V., Vinod P. R., and Usha Gopalakrishnan, "Artificial Neural Network Based Method for Indian Sign Language Recognition," in IEEE Conference on Information and Communication Technologies (ICT), 2013.

[14] E. S. Amin, "Application of artificial neural networks to evaluate weld defects of nuclear components," Journal of Nuclear and Radiation Physics, vol. 3, no. 2, pp. 83-92, 2008.

[15] A. Wong and R. Shirmohammadi, "Static Hand Posture Recognition using HOG and K-NN," in IEEE International Symposium on Haptic, Audio and Visual Environments and Games (HAVE), 2013.