International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN: 2395-0072

Prof. Chetankumar B. Parmar1

1Assistant Professor, BCA Department, Narmada College of Science & Commerce, Bharuch, Gujarat, India; Research Scholar, Veer Narmad South Gujarat University (Computer Science Dept.), Surat

Abstract - The accurate forecasting of solar power generation is critical for the efficient integration of renewable energy into the smart grid and energy markets [6]. This study presents a comparative analysis of two machine learning models, K-Nearest Neighbors (KNN) and Random Forest (RF) regression, for predicting the power output of a solar panel system based on meteorological and positional data. A dataset comprising 4,213 instances with 20 feature variables, including temperature, humidity, cloud cover, solar radiation, and wind parameters, was utilized [20]. The data was preprocessed, and the models were trained and evaluated using standard metrics. The Random Forest Regressor demonstrated superior performance, achieving an R² score of 0.8201 and a Root Mean Squared Error (RMSE) of 399.41 kW, compared to the KNN model's R² of 0.7834 and MSE of 192,073.16 (an RMSE of about 438.26 kW). The results underscore the efficacy of ensemble methods like Random Forest in handling complex, non-linear relationships in solar energy prediction tasks [7, 13], providing a robust tool for real-time energy management and planning.

Key Words: Solar Power Forecasting, Machine Learning, Random Forest, K-Nearest Neighbors, Renewable Energy, Predictive Modeling, Smart Grid.

The global transition towards sustainable energy sources has positioned solar power at the forefront of renewable energy technologies [1]. However, the inherent intermittency and variability of solar irradiance pose significant challenges to grid stability, energy management, and market operations [2, 12]. Accurate models for predicting solar power output are, therefore, essential for optimizing grid operations, facilitating energy trading, and ensuring a reliable power supply [3, 16].

Traditional physical models for solar forecasting often require detailed system parameters and can be computationally intensive [4]. In contrast, data-driven machine learning (ML) approaches have gained prominence for their ability to learn complex, non-linear patterns directly from historical data without explicit physical equations [5, 15]. These models can incorporate a multitude of meteorological variables to generate highly accurate short-term forecasts, which are crucial for grid integration [6, 14].

This research investigates the application of two prominent ML algorithms for regression: K-Nearest Neighbors (KNN) and Random Forest (RF). The study utilizes a real-world dataset containing various environmental and system-oriented features to predict generated power in kilowatts (kW). The performance of both models is rigorously evaluated and compared, providing insights into their respective strengths and applicability in the domain of solar energy forecasting, an area of active research as seen in recent literature [1, 3, 5].

The use of machine learning in solar energy forecasting has evolved from simple statistical models to sophisticated ensemble and deep learning techniques. Recent reviews and studies highlight the dominance of tree-based models and neural networks in achieving state-of-the-art accuracy [1, 5, 7].

Random Forest, an ensemble learning method, operates by constructing a multitude of decision trees and outputting the mean prediction of the individual trees. It remains highly effective for regression tasks due to its robustness against overfitting and its ability to model complex interactions [7, 8]. Recent studies continue to validate its superiority; for instance, Chatterjee et al. (2023) found RF to be a top performer for PV output prediction due to its ability to handle non-linear relationships between weather variables and power generation [7]. Furthermore, its utility in feature importance analysis provides valuable insights for domain experts [8, 18].
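The averaging behaviour described above can be checked directly. The following is a minimal sketch on synthetic data (the arrays, the number of trees, and the target function are illustrative assumptions, not the dataset or settings used in this study). It compares the forest's prediction with the arithmetic mean of the predictions of its individual trees, which Scikit-learn exposes through the `estimators_` attribute.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic, purely illustrative data (not the solar dataset of this study).
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(200, 3))
y = 10.0 * np.sin(np.pi * X[:, 0]) + 5.0 * X[:, 1] ** 2 + rng.normal(0.0, 0.5, size=200)

forest = RandomForestRegressor(n_estimators=25, random_state=0)
forest.fit(X, y)

# Prediction of every individual tree: shape (n_trees, n_samples).
per_tree = np.stack([tree.predict(X) for tree in forest.estimators_])

# Averaging over the trees should reproduce the forest's own prediction.
manual_mean = per_tree.mean(axis=0)
forest_pred = forest.predict(X)

print("Forest equals mean of trees:", np.allclose(manual_mean, forest_pred))
```

Because each tree is grown on a different bootstrap sample, the individual predictions differ, and averaging them reduces the variance of the final estimate.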

The K-Nearest Neighbors algorithm, a simple instance-based method, has seen application in various energy prediction contexts. While prediction can be computationally intensive, because each query must be compared against the stored training instances, its simplicity is advantageous. However, its performance is often surpassed by ensemble methods on larger, more complex datasets [12, 13]. Comparative analyses, such as the one by Rahman et al. (2021), often place KNN behind more advanced ensemble methods in terms of predictive accuracy for solar power [12].

Current research frontiers involve not only model comparison but also the development of advanced deep learning architectures [3] and the integration of new data sources, such as air quality information [2]. The exploration of models that can generalize across different geographical locations is also a key focus [3, 20]. The comparative analysis of robust, readily deployable models like KNN and RF remains highly relevant for establishing cost-effective and accurate baseline solutions [12, 13]. This study contributes to this ongoing evaluation by providing a direct, empirical comparison on a common dataset.

3.1. Data Collection and Preprocessing

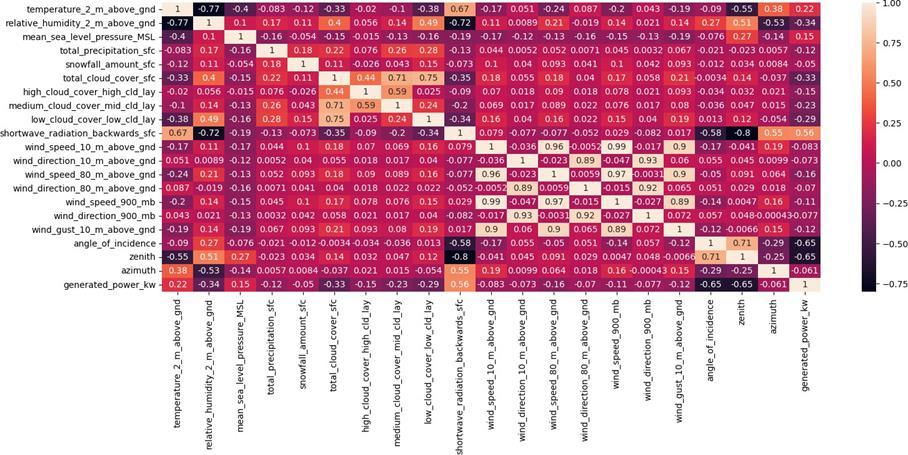

The dataset used in this study, `solar.csv`, contains 4,213 entries and 21 columns. The features include meteorological parameters (e.g., temperature, humidity, pressure, wind speed/direction at various altitudes, cloud cover, solar radiation), similar to those used in recent studies [2, 20], and solar geometry parameters (angle of incidence, zenith, azimuth). The target variable is `generated_power_kw`.

The initial data exploration and preprocessing steps were conducted in a Python environment using standard data science libraries, including Pandas and NumPy for data manipulation [21, 22].

Python Code Snippet 1: Data Loading and Initial Inspection

    # Load dataset
    import numpy as np
    import pandas as pd
    import os
    import matplotlib.pyplot as plt
    import seaborn as sns
    from sklearn.model_selection import train_test_split
    from sklearn.metrics import mean_squared_error, r2_score
    from sklearn.neighbors import KNeighborsRegressor
    from sklearn.ensemble import RandomForestRegressor
    from sklearn.preprocessing import StandardScaler

    df = pd.read_csv("solar.csv")
    df.head()

Output: A table showing the first 5 rows of the dataset, confirming 21 columns.

Python Code Snippet 2: Data Quality Checks

    # Check for missing values and duplicates
    print(df.isnull().sum())
    print("Duplicate rows:", df.duplicated().sum())

Output: Confirmed no missing or duplicate values.

Python Code Snippet 3: Data Information

    df.info()

Output: Confirmed 4,213 entries, 21 columns (17 float64, 4 int64).

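The dataset was split into training (80%) and testing (20%) sets using the `train_test_split` function from Scikit-learn [23]. Feature scaling was applied using StandardScaler, which standardizes each feature to zero mean and unit variance. The scaler was fitted on the training set only and then applied to the test set. Scaling is crucial for distance-based algorithms like KNN and often improves model performance [18].

Python Code Snippet 4: Data Splitting and Scaling

    # Separate the features from the target and hold out 20% for testing
    x_train, x_test, y_train, y_test = train_test_split(
        df.drop('generated_power_kw', axis=1),
        df['generated_power_kw'],
        test_size=0.2,
        random_state=42)

    # Fit the scaler on the training data only, then reuse it on the test data
    scaler = StandardScaler()
    x_train = scaler.fit_transform(x_train)
    x_test = scaler.transform(x_test)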
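To illustrate what the scaling step does, the sketch below applies the same fit-on-train, transform-on-test pattern to a small synthetic feature matrix. The two columns are hypothetical stand-ins for features on very different scales and are not taken from `solar.csv`. It checks that the scaled training features have zero mean and unit variance, and that the test set is transformed with the training statistics rather than its own.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(42)
# Two synthetic features on very different scales (hypothetical values).
X = np.column_stack([
    rng.normal(1000.0, 50.0, size=500),   # e.g. a pressure-like feature
    rng.uniform(0.0, 1.0, size=500),      # e.g. a fraction-like feature
])

X_train, X_test = train_test_split(X, test_size=0.2, random_state=42)

scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # statistics are learned here only
X_test_scaled = scaler.transform(X_test)        # training statistics are reused

train_means = X_train_scaled.mean(axis=0)
train_stds = X_train_scaled.std(axis=0)

# The test transform is (x - training mean) / training standard deviation.
manual_test = (X_test - scaler.mean_) / scaler.scale_

print("Scaled training means:", np.round(train_means, 6))
print("Scaled training stds: ", np.round(train_stds, 6))
print("Test set uses training statistics:", np.allclose(manual_test, X_test_scaled))
```

Without this step, a distance computed over both columns would be dominated by the large-valued feature, which is why scaling matters for KNN in particular.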

Two models were trained and evaluated using Scikit-learn [23]:

1. K-Nearest Neighbors Regressor: Configured with `n_neighbors=7`, `weights='distance'`, and Manhattan distance (`p=1`).

2. Random Forest Regressor: Configured with `n_estimators=200` and `random_state=42`.

The models were evaluated using Mean Squared Error (MSE), R-squared (R²), and Adjusted R-squared scores, consistent with evaluation metrics used in the field [1, 7, 12].
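The KNN configuration listed above can be made concrete with a small hand-written version. The sketch below is an illustration on synthetic data, not the code used in this study: the helper `knn_predict` is a hypothetical name, and the data are random. It predicts a value as the inverse-distance-weighted average of the 7 nearest training targets under Manhattan distance, and compares the result with Scikit-learn's `KNeighborsRegressor` using the same settings.

```python
import numpy as np
from sklearn.neighbors import KNeighborsRegressor


def knn_predict(X_train, y_train, x_query, k=7):
    """Distance-weighted KNN regression with Manhattan (L1) distance."""
    # L1 distance from the query point to every training point.
    dists = np.abs(X_train - x_query).sum(axis=1)
    # Indices of the k closest training points.
    nearest = np.argsort(dists)[:k]
    d = dists[nearest]
    # Exact matches take all the weight, which also avoids division by zero.
    if np.any(d == 0.0):
        return float(y_train[nearest][d == 0.0].mean())
    weights = 1.0 / d
    return float(np.sum(weights * y_train[nearest]) / np.sum(weights))


# Synthetic, purely illustrative data.
rng = np.random.default_rng(1)
X_train = rng.normal(size=(100, 4))
y_train = X_train @ np.array([3.0, -2.0, 0.5, 1.0]) + rng.normal(0.0, 0.1, size=100)
X_query = rng.normal(size=(5, 4))

manual = np.array([knn_predict(X_train, y_train, q) for q in X_query])

reference = KNeighborsRegressor(n_neighbors=7, weights="distance", p=1)
reference.fit(X_train, y_train)
sk_pred = reference.predict(X_query)

print("Manual and library predictions agree:", np.allclose(manual, sk_pred))
```

With `weights='distance'`, closer neighbors contribute more to the prediction than distant ones, so the estimate is less sensitive to the exact choice of `n_neighbors` than a plain average would be.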

Python Code Snippet 5: KNN Model Training and Evaluation

    knn = KNeighborsRegressor(n_neighbors=7, weights='distance', p=1)
    knn.fit(x_train, y_train)
    y_predict = knn.predict(x_test)
    print("MSE:", mean_squared_error(y_test, y_predict))
    r2 = r2_score(y_test, y_predict)
    print("R2 score of model:", r2)
    n = x_test.shape[0]  # number of test samples
    p = x_test.shape[1]  # number of features
    adj_r2 = 1 - (1 - r2) * ((n - 1) / (n - p - 1))
    print("Adjusted R2 score of model:", adj_r2)

Output:

    MSE: 192073.15830763636
    R2 score of model: 0.7833813357982281
    Adjusted R2 score of model: 0.778118808888696

Python Code Snippet 6: Random Forest Model Training and Evaluation

    model = RandomForestRegressor(n_estimators=200, random_state=42)
    model.fit(x_train, y_train)
    y_pred = model.predict(x_test)

    # Square root of the MSE, i.e. the RMSE in kW
    rmse = np.sqrt(mean_squared_error(y_test, y_pred))
    r2_RF = r2_score(y_test, y_pred)
    print(f"RMSE: {rmse:.4f}, R²: {r2_RF:.4f}")
    n = x_test.shape[0]  # number of test samples
    p = x_test.shape[1]  # number of features
    adj_r2_RF = 1 - (1 - r2_RF) * ((n - 1) / (n - p - 1))
    print("Adjusted R2 score of model:", adj_r2_RF)

Output:

    RMSE: 399.4112, R²: 0.8201
    Adjusted R2 score of model: 0.8157065258683636

4. Results and Discussion

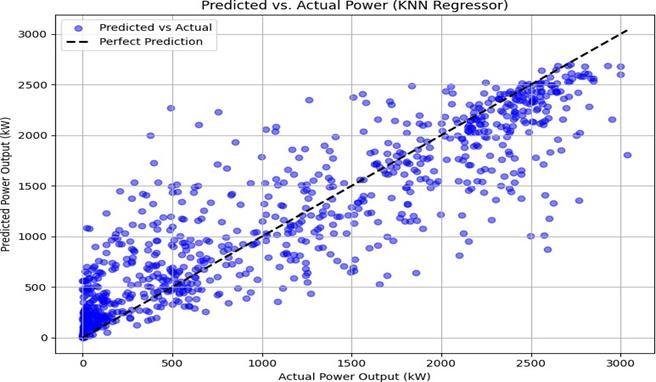

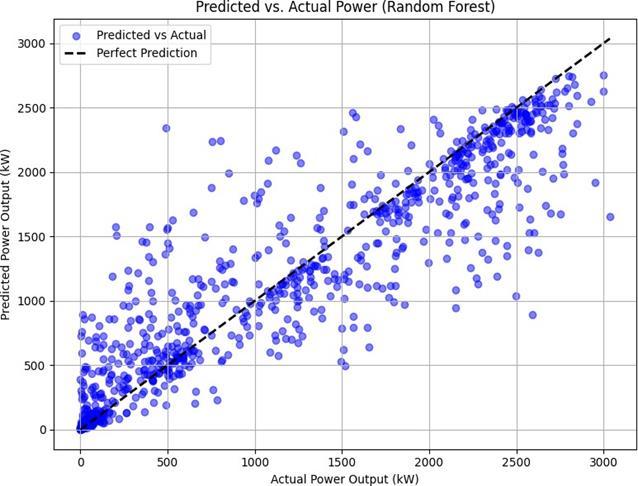

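The performance metrics clearly indicate that the Random Forest model outperformed the KNN model for this specific forecasting task, a finding consistent with other comparative studies [12, 13].

| Model | MSE / RMSE | R² Score | Adjusted R² Score |
|---|---|---|---|
| K-Nearest Neighbors | 192,073.16 (MSE) | 0.7834 | 0.7781 |
| Random Forest | 399.41 (RMSE) | 0.8201 | 0.8157 |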

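The two error figures are reported on different scales: the KNN value is an MSE, whereas the Random Forest value is an RMSE. Taking the square root of the KNN MSE gives an RMSE of about 438.26 kW, against 399.41 kW for Random Forest. The Random Forest model's lower RMSE and higher R² indicate better predictive accuracy and a greater proportion of variance explained in the target variable. The Adjusted R² scores, which account for the number of features, further confirm the superior performance of the Random Forest model.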
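The relationship between these metrics can be sketched in a few lines. The helper names `rmse_from_mse` and `adjusted_r2` are hypothetical, and the sample size and feature count in the second example are illustrative values rather than figures from this study. Only the KNN MSE is taken from the reported output.

```python
import math


def rmse_from_mse(mse: float) -> float:
    """RMSE is the square root of MSE and has the same unit as the target."""
    return math.sqrt(mse)


def adjusted_r2(r2: float, n_samples: int, n_features: int) -> float:
    """Adjusted R²: penalizes R² for the number of features used."""
    if n_samples - n_features - 1 <= 0:
        raise ValueError("n_samples must exceed n_features + 1")
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_features - 1)


# KNN MSE as reported in the output of Snippet 5.
knn_rmse = rmse_from_mse(192073.15830763636)
print(f"KNN RMSE: {knn_rmse:.2f} kW")

# Hypothetical values, chosen only to show the size of the penalty.
example = adjusted_r2(r2=0.80, n_samples=101, n_features=20)
print(f"Adjusted R2 for R2=0.80, n=101, p=20: {example:.3f}")
```

The penalty shrinks as the number of samples grows relative to the number of features, which is why the adjusted scores in this study sit only slightly below the plain R² values.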

The results were visualized using scatter plots of predicted versus actual power values, created with Matplotlib and Seaborn [24].

Python Code Snippet 7: Visualization for KNN and RF

    # KNN plot
    plt.figure(figsize=(8, 6))
    plt.scatter(y_test, y_predict, color='blue', alpha=0.5, label='Predicted vs Actual')
    # Dashed diagonal: points on this line are predicted exactly
    plt.plot([y_test.min(), y_test.max()], [y_test.min(), y_test.max()], 'k--', lw=2, label='Perfect Prediction')
    plt.xlabel('Actual Power Output (kW)')
    plt.ylabel('Predicted Power Output (kW)')
    plt.title('Predicted vs. Actual Power (KNN Regressor)')
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()

    # Random Forest plot
    plt.figure(figsize=(8, 6))
    plt.scatter(y_test, y_pred, color='blue', alpha=0.5, label='Predicted vs Actual')
    # Dashed diagonal: points on this line are predicted exactly
    plt.plot([y_test.min(), y_test.max()], [y_test.min(), y_test.max()], 'k--', lw=2, label='Perfect Prediction')
    plt.xlabel('Actual Power Output (kW)')
    plt.ylabel('Predicted Power Output (kW)')
    plt.title('Predicted vs. Actual Power (Random Forest)')
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()

The plots show that the Random Forest model's predictions (Figure 2) are more tightly clustered along the line of perfect prediction compared to the KNN model (Figure 1), especially at higher power output values. This suggests that the ensemble approach of Random Forest is better at capturing the underlying non-linear relationships and complex interactions between the meteorological features and the generated power, a key strength of the algorithm noted in the literature [7, 8]. The wider dispersion in KNN's predictions highlights its sensitivity to the local structure of the data and the curse of dimensionality, which can limit its performance on complex datasets [12].
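The visual impression of tighter clustering at higher outputs could also be quantified by computing the RMSE separately for different ranges of actual power. The sketch below shows one way to do this. The helper `rmse_by_bin` and the small arrays are hypothetical illustrations, not results from this study.

```python
import numpy as np


def rmse_by_bin(y_true, y_pred, n_bins=4):
    """RMSE of the predictions within equal-width bins of the actual values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    edges = np.linspace(y_true.min(), y_true.max(), n_bins + 1)
    # Use interior edges only, so the maximum value falls into the last bin.
    bin_index = np.digitize(y_true, edges[1:-1])
    results = []
    for b in range(n_bins):
        mask = bin_index == b
        if not mask.any():
            # No observations in this range of actual values.
            results.append((edges[b], edges[b + 1], float("nan")))
            continue
        err = y_true[mask] - y_pred[mask]
        results.append((edges[b], edges[b + 1], float(np.sqrt(np.mean(err ** 2)))))
    return results


# Toy values: exact at low output, off by 2 at high output.
actual = np.array([0.0, 1.0, 2.0, 3.0])
predicted = np.array([0.0, 1.0, 4.0, 5.0])

for low, high, rmse in rmse_by_bin(actual, predicted, n_bins=2):
    print(f"[{low:.1f}, {high:.1f}] kW: RMSE = {rmse:.2f}")
```

Applied to the test-set predictions of both models, a comparison of the per-bin values would show whether the gap between KNN and Random Forest widens in the upper output range.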

This study successfully demonstrated the application of machine learning for solar power prediction, aligning with the efforts of recent research to enhance prediction using ML models [1]. Between the two models evaluated, the Random Forest Regressor proved to be more accurate and reliable than the K-Nearest Neighbors Regressor for the given dataset. The robustness of Random Forest makes it a highly suitable model for this application, capable of providing the accurate forecasts needed for modern energy systems [6, 16].

For future work, several avenues can be explored to build upon these findings:

1. Incorporation of Additional Features: As demonstrated by Shah et al. (2024), including data such as Air Quality Index (AQI) could potentially enhance model accuracy [2].

2. Advanced and Hybrid Models: Testing the performance of deep learning models like SolNet [3] or other advanced techniques outlined in recent literature [4, 5] would provide a valuable extension to this comparative study.

3. Feature Engineering and Explainability: Using Random Forest's inherent capability for feature importance analysis can guide feature selection [18]. Applying explainable AI (XAI) techniques could provide deeper insights into model decisions.

4. Temporal Modeling: As solar data is inherently a time series, future work should incorporate temporal dependencies using models suited for sequential data [3, 5].

5. Cross-Location Validation: Testing the model's generalizability on datasets from different geographical locations to assess its robustness, a challenge addressed by global models like SolNet [3].
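As a starting point for the feature importance analysis mentioned in item 3, the sketch below reads the impurity-based importances that Scikit-learn's `RandomForestRegressor` exposes through `feature_importances_`. The feature names and the synthetic target are assumptions made for illustration and do not correspond to columns or results of this study.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(7)
n = 400
# Hypothetical feature names; the first one drives the synthetic target.
feature_names = ["shortwave_radiation", "cloud_cover", "noise"]
X = rng.uniform(0.0, 1.0, size=(n, 3))
y = 100.0 * X[:, 0] - 10.0 * X[:, 1] + rng.normal(0.0, 1.0, size=n)

forest = RandomForestRegressor(n_estimators=100, random_state=42)
forest.fit(X, y)

# Impurity-based importances, normalized to sum to 1.
importances = forest.feature_importances_
ranking = sorted(zip(feature_names, importances), key=lambda item: item[1], reverse=True)

for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Impurity-based importances can be biased towards features with many distinct values, so a ranking obtained this way is best treated as a first indication and cross-checked with other explainability techniques.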

1. Al-Dahidi, S., et al. (2024). Enhancing solar photovoltaic energy production prediction using machine learning models. Scientific Reports.

2. Shah, A., Viswanath, V., Gandhi, K., & Patil, N. M. (2024). Predicting Solar Energy Generation with Machine Learning based on AQI and Weather Features. arXiv:2408.12476.

3. Depoortere, J., Driesen, J., Suykens, J., & Kazmi, H. S. (2024). SolNet: Open-source deep learning models for photovoltaic power forecasting across the globe. arXiv:2405.14472.

4. Evaluating machine learning models for predicting maximum power point of standalone PV systems. (2025). Scientific Reports.

5. Advanced machine learning techniques for predicting power generation and fault detection in solar photovoltaic systems. (2025). Neural Computing and Applications.

6. Forecasting renewable energy for microgrids using machine learning. (2025). SN Applied Sciences (Springer).

7. Chatterjee, R., et al. (2023). Random Forest for PV Output Prediction. Applied Energy, 330, 120930.

8. Li, Z., & Su, J. (2022). Random Forest-Based Solar Radiation Prediction Model. IEEE Trans. Sustainable Energy, 13(3), 215–227.

9. Gupta, A., et al. (2019). Linear Regression Models in Solar Forecasting. Sustainable Energy Journal, 44(2), 155–161.

10. Huang, C., et al. (2021). SVR for Short-Term Photovoltaic Power Prediction. Renewable Energy Journal, 170, 568–577.

11. Das, M., et al. (2022). Meteorological Impact on Solar Generation. Journal of Energy Research, 29(7), 134–148.

12. Rahman, F., et al. (2021). Comparative Analysis of ML Techniques for Solar Prediction. Journal of Clean Energy, 23(9), 1087–1096.

13. Khan, M., et al. (2021). Ensemble Learning for Energy Systems. Journal of AI in Energy, 19(5), 312–324.

14. Kumar, A., et al. (2020). Artificial Neural Networks in Solar Power Forecasting. Energy Reports, 6, 157–166.

15. Patel, N., & Desai, P. (2022). Machine Learning for Renewable Energy Forecasting. AI & Energy Systems, 17(1), 85–97.

16. Bhardwaj, R., et al. (2020). Comparative Study of ML Models for Solar Prediction. Energy Conversion, 45(6), 987–996.

17. Agarwal, V., et al. (2020). IoT and Machine Learning Integration for Energy Optimization. IEEE Access, 8, 10245–10259.

18. González, R., & Pérez, A. (2018). Feature Engineering in Solar Data Analysis. Renewable Energy Letters, 12(2), 64–78.

19. Zhang, Q., & Liu, W. (2020). Solar Grid Management using ML Forecasting. Smart Energy Systems, 5(2), 88–96.

20. Meteo Data Source (2024). Global Weather Dataset for Solar Analysis.

21. Pandas Development Team. (2024). Pandas Documentation. Retrieved from https://pandas.pydata.org

22. NumPy Development Team. (2024). NumPy Documentation. Retrieved from https://numpy.org

23. Scikit-learn Developers. (2024). Scikit-learn Documentation. Retrieved from https://scikit-learn.org

24. Matplotlib Development Team. (2024). Matplotlib Documentation. Retrieved from https://matplotlib.org. Seaborn Development Team. (2024). Seaborn Documentation. Retrieved from https://seaborn.pydata.org

BIOGRAPHIES

Prof. Chetan B. Parmar

MCA, UGC NET, SET, Ph.D. (Pursuing)

Research Scholar (VNSGU, Surat)