I had an “aha” moment on Christmas Day when I sat down to watch my hometown Washington Commanders take on the rival Dallas Cowboys. Although Netflix was the exclusive “broadcaster” of the game, fans in the D.C. and Dallas areas were also able to watch it via their local CBS affiliate. So I watched it on WUSA via YouTube TV. As I sat there, I mused about the fact that I was watching the world’s largest SVOD service air a live NFL game whose feed was carried (and produced) by America’s second-oldest broadcast network on a virtual MVPD (sorry, I was in a location that couldn’t receive an OTA signal).

To be fair, as the exclusive distributor, Netflix has contracted CBS Sports to produce its Christmas Day NFL games. Nevertheless, the fact that I was watching this game through three different platforms exemplified—in my mind at least—the dominance America’s favorite TV sport has on our media ecosystem. And there are no signs that that dominance will subside anytime soon.

The data coming out of the most recent NFL season is proof positive. Last month, the NFL reported that its 2025 regular season averaged 18.7 million television viewers per game. That’s the second-highest season average on record and up 10% from the 2024 season and 7% from 2023. That put the 2025 season’s viewership at just below the record set in 1989, when average viewing was about 19 million.

Viewing data from Nielsen also highlighted how important the NFL has become to broadcast networks, with NFL game telecasts accounting for 89 of the top 100 shows on TV since the start of the 2025 regular season.

As for the Christmas Day games? Netflix reported that the Detroit Lions-Minnesota Vikings matchup averaged 27.5 million U.S. viewers—making it the most-streamed NFL game in U.S. history, with viewership peaking at over 30 million during the broadcast.

That was eclipsed just several weeks later when the Jan. 10 playoff game between the Green Bay Packers and Chicago Bears set a new NFL streaming record, averaging about 31.61 million viewers on Prime Video. The game provided the highest average audience ever for an NFL game exclusively on a streaming platform.

While many fans may not know it, NFL (and college) football games can still be viewed on free TV, and the NFL still recognizes the value of broadcast. With Super Bowl rights locked in until at least 2033, the big event is expected to remain free to access for the foreseeable future. As for the regular season and playoffs? Expect the streaming-broadcast mix to heat up in the years ahead.

And Netflix, for its part, has tempered its ambitions when it comes to the NFL. During a recent conference, Co-CEO Greg Peters said obtaining rights to more NFL games “doesn’t really fit with our strategy as we understand it right now,” according to Front Office Sports. (Granted, those comments were made before Netflix’s bid for Warner Bros. Discovery in early December.)

As for the Super Bowl, NBC has the honors this year of producing two of the world’s biggest sports spectaculars just days apart, and not just with sports but high-profile entertainment productions that, on that day at least, are the greatest shows on earth.

Tom Butts Content Director

tom.butts@futurenet.com

Vol. 44 No. 2 | February 2026

FOLLOW US www.tvtech.com x.com/tvtechnology

CONTENT

Content Director

Tom Butts, tom.butts@futurenet.com

Content Manager

Michael Demenchuk, michael.demenchuk@futurenet

Senior Content Producer

George Winslow, george.winslow@futurenet.com

Contributors: Bruce Aleksander, Fred Dawson, Phil Kurz, Karl Paulson & Eric Zornes

Production Managers: Heather Tatrow, Nicole Schilling

Art Directors: Cliff Newman, Steven Mumby

ADVERTISING SALES

Managing Vice President of Sales, B2B Tech

Adam Goldstein, adam.goldstein@futurenet.com

Publisher, TV Tech/TVBEurope

Joe Palombo, joseph.palombo@futurenet.com

SUBSCRIBER CUSTOMER SERVICE

To subscribe, change your address, or check on your current account status, go to www.tvtechnology.com and click on About Us, email futureplc@computerfulfillment.com, call 888-266-5828, or write P.O. Box 8692, Lowell, MA 01853.

LICENSING/REPRINTS/PERMISSIONS

TV Technology is available for licensing. Contact the Licensing team to discuss partnership opportunities. Head of Print Licensing Rachel Shaw licensing@futurenet.com

MANAGEMENT

SVP, MD, B2B Amanda Darman-Allen VP, Global Head of Content, B2B Carmel King MD, Content, Broadcast Tech Paul McLane VP, Head of US Sales, B2B Tom Sikes VP, Global Head of Strategy & Ops, B2B Allison Markert VP, Product & Marketing, B2B Andrew Buchholz Head of Production US & UK Mark Constance Head of Design, B2B Nicole Cobban

FUTURE US, INC. 130 West 42nd Street, 7th Floor, New York, NY 10036

The Ambury, Bath BA1 1UA. All information contained in this publication is for information only and is, as far as we are aware, correct at the time of going to press. Future cannot accept any responsibility for errors or inaccuracies in such information. You are advised to contact manufacturers and retailers

A new study highlights the ongoing importance of free local TV and radio broadcasting in the U.S., with data showing local broadcasting fuels $1.19 trillion in gross domestic product (GDP) and supports 2.46 million jobs nationwide.

The study, conducted by Woods & Poole Economics Inc. with support from BIA Advisory Services and commissioned by the National Association of Broadcasters, also found that local broadcasters directly employ nearly 311,000 Americans, generating $54 billion in GDP through journalism, programming, engineering and advertising services.

Local broadcasting’s economic ripple effect extends deep into other sectors, from construction to retail, adding another $134 billion in GDP and supporting nearly 776,000 additional jobs. Advertising on local broadcast television and radio generates more than $997 billion in GDP and sustains more than 1.37 million jobs. Local businesses rely on this trusted platform to reach customers, grow their operations and fuel local economies, the organization said.

“No other industry gives more to Americans for free,” NAB President and CEO Curtis LeGeyt said. “Local stations provide trusted journalism, lifesaving emergency alerts and the sports and entertainment that bring our communities together. This report reinforces that broadcasters are not only essential to our democracy and daily lives, but to the strength of our economy, as well.”

The Corporation for Public Broadcasting’s board of directors voted last month to dissolve the organization that oversaw the federal government’s investment in public broadcasting and media for 58 years.

The move came after a decades-long political fight by conservatives to end federal funding for public media, culminating in 2025 with President Trump asking Congress to rescind previously appropriated money for public media and votes by the Republican-controlled Congress to end federal funding. Most of the staff was laid off last fall.

CPB’s board took the vote after determining that maintaining the corporation as a nonfunctional entity without funding would not serve the public interest or advance the goals of public media.

“A dormant and defunded CPB could have become vulnerable to future political manipulation or misuse, threatening the independence of public media and the trust audiences place in it, and potentially subjecting staff and board members to legal exposure from bad-faith actors,” the CPB said in a press release.

First authorized by Congress under the Public Broadcasting Act of 1967, CPB helped build and sustain a nationwide public-media system of more than 1,500 locally owned and operated public radio and TV stations.

The National Association of Broadcasters has asked the Federal Communications Commission to act more quickly to set a sunset date for ATSC 1.0.

In comments filed last month, the NAB urged the agency “to adopt a date-certain ATSC 1.0 sunset, modernize its receiver standards so consumers can reliably receive authorized broadcast services, ensure continued MVPD carriage of stations’ primary ATSC 3.0 signals and associated program-related features, and reaffirm a stable approach to content protection that supports broadcasters’ ability to secure and deliver the high-value programming viewers expect while preserving longstanding consumer viewing expectations.”

“ATSC 3.0 is the future of free, local broadcasting, and the Commission has a timely opportunity to move the transition from its current, limited implementation phase to a full, nationwide deployment that serves the public interest,” NAB added.

While the agency has signaled that it wants to liberalize some rules in ways that will speed up the transition, it has not taken a position on two of the key issues raised by the NAB filing: a cutoff date for ATSC 1.0 broadcast signals and a requirement that all new TV sets be able to receive 3.0 signals.

The most recent comments by the NAB do not mention a specific cutoff date but in past FCC filings, it has argued for a 1.0 sunset in 2028 in larger markets and 2030 for the rest of the country.

The NAB also argued that setting a clear, date-certain sunset for ATSC 1.0 would enable industry-wide planning that drives down costs, promotes innovation and avoids confusion for viewers. These tuner mandates are, however, opposed by the Consumer Technology Association.

The NAB also pressed the FCC to ensure continued access to free, over-the-air stations by updating receiver standards and maintaining MVPD carriage of ATSC 3.0 signals and advanced features.

In addition, NAB weighed in on content-security issues that have provoked opposition from some smaller device manufacturers and broadcasters: “ATSC 3.0 does not create new privacy concerns for viewers who watch broadcast television over the air without an internet connection,” the group argued. “A one-way broadcast signal, with no return path, cannot collect or transmit viewer information. For these viewers, watching ATSC 3.0 is no different from watching ATSC 1.0 from a privacy standpoint.”

Consumer Technology Association President and CEO Gary Shapiro and Federal Communications Commission Chair Brendan Carr’s wide-ranging conversation Jan. 8 at CES in Las Vegas gave broadcasters a lot to think about when it comes to their future. It also prompted a couple of my own thoughts.

(If you missed it, C-SPAN carried the conversation, which is available at cspan.org.)

The future of local TV, general support for ATSC 3.0 at the commission, possible readjustment of the network-affiliate relationship and spectrum use and policy were among the highlights. Here, let’s focus on another: the public-interest obligation of broadcasters.

Setting the stage for discussing the public-interest obligation, Carr reminded the audience of the privilege of having a broadcast license and what that means to broadcasters when it comes to retransmission consent and ultimately must-carry dollars.

“[Broadcasting is] a very, very unique distribution medium…because the government is picking a winner and loser,” he said. “You get a license; you get this microphone; you get to speak; you don’t necessarily get to conduct yourself the same way you would if you run a podcast or a soapbox or a cable channel.”

For broadcasters who don’t like that obligation, Carr offered a couple of solutions: turn in your license and transition to a cable channel, start a podcast, become a YouTube channel or bid on your spectrum in an auction, “maybe let[ting] everyone have a fair and free shot at purchasing that spectrum without the public-interest obligation,” he said.

However, at the risk of revealing my naiveté, how many local TV broadcasters truly are clamoring to shed their public-interest obligation? On the whole, when have local TV stations not lived up to this obligation? Certainly not during tornadoes, hurricanes, incoming missile attacks (remember the Hawaii false alarm?), earthquakes and other emergencies.

On the contrary, unprompted by regulators, the TV industry has attempted to up its game in these situations with Advanced Emergency Alerting & Information (AEI&A), a built-in feature of the ATSC 3.0 standard. However, AEI&A, just like other 3.0 enhancements, can’t fully come to fruition until the industry can move forward on sunsetting 1.0.

Nor have they failed to serve the interests of the public each morning, noon and night when it comes to local news. Carr himself acknowledged in his comments that, given the decline of daily newspapers, local newscasts offer “the last of the real ‘gumshoe reporting.’”

My second observation is that the 1934 Communications Act didn’t simply mandate a public-interest obligation. There’s also the “convenience and necessity” portion of the phrase.

It seems to me to be counter to the spirit of TV broadcasters’ obligation to serve the “public interest, convenience and necessity” if the TV sets that the public watches are unable to provide the greatest convenience (think personalization and interactivity) and necessity (think AEI&A evacuation maps in flooding) that local TV broadcasters can deliver.

A new poll commissioned by the National Hispanic Media Coalition (NHMC) and Defend the Press Campaign found that large majorities of likely voters in the upcoming midterm elections opposed “large national broadcasters buying up or merging with local TV stations.”

Overall, 72% of respondents opposed the idea, including 75% of Democrats and 70% of Republicans. Only 7% were in favor of the acquisitions and 21% were unsure.

“The bottom line is that Americans across the political spectrum don’t want local TV-station consolidation,” said Brenda Victoria Castillo, president and CEO of the NHMC, which is opposed to the Federal Communications Commission loosening or lifting current ownership caps on station groups.

“They expect it to drive up their prices and give billionaires more power over what they see and hear, not to mention degrading the quality of coverage in their communities.”

The survey also found that 81% of respondents preferred TV stations to be locally owned as opposed to being owned by “large national broadcast corporations.” Only 2% said they preferred local stations to be owned by the national broadcast corporations.

In addition, 80% of respondents opposed loosening legal restrictions that would allow large corporations to buy more local stations, with 89% of Democrats and 70% of Republicans opposing changes to the rules.

By Tom Butts

NBC Sports is celebrating what it calls “Legendary February,” hosting three marquee sporting events expected to attract record viewership. The action begins with the opening ceremony from Milan, Italy, when the XXV Winter Olympic Games commence on Feb. 6. Two days later, the network will air the Super Bowl and, a week later, the NBA All-Star Game.

Planning for such a confluence of events isn’t easy, but NBC Sports has plenty of experience in handling crowded programming schedules, particularly when it comes to the Olympics, which it has televised for nearly 30 consecutive years.

The 2026 Winter Olympics, officially “Milano Cortina 2026,” will take place in the cities of Milan and Cortina d’Ampezzo, Feb. 6-22, and feature about 2,900 athletes across 16 sports. NBC promises more than 3,000 hours of coverage across all of its platforms (including NBC, USA Network, CNBC, Peacock, NBCOlympics.com, and the NBC Sports app), with nearly 200 hours of coverage on NBC alone.

Darryl Jefferson, senior vice president of engineering and technology for NBC Olympics and Sports, will oversee broadcast operations to ensure the most comprehensive coverage of what the International Olympic Committee said is “the most geographically widespread Winter Olympics in history,” spanning an area of more than 22,000 square kilometers (over 8,500 square miles).

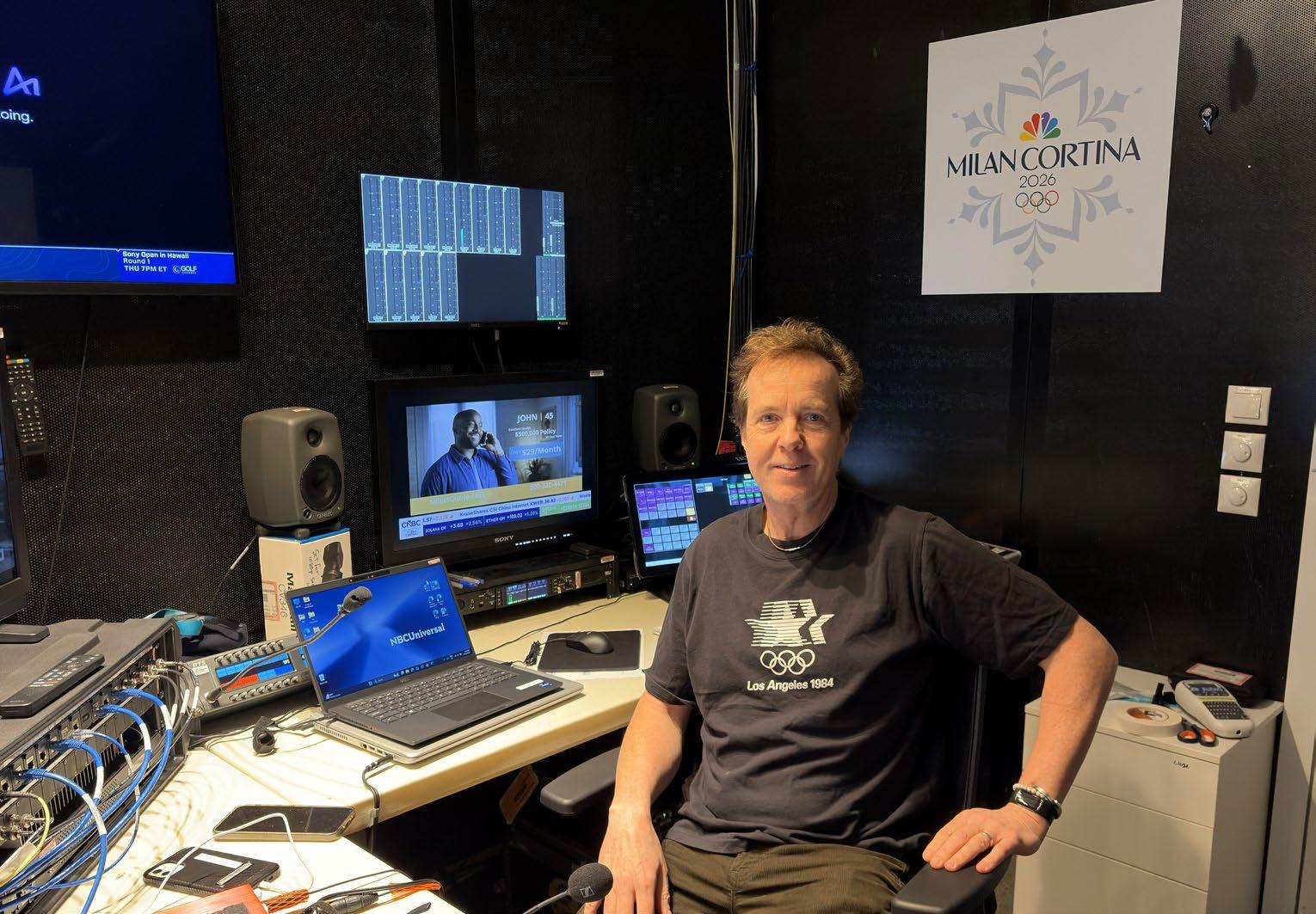

Although this is the first Winter Games with Jefferson at the helm—he succeeds Dave Mazza, who retired in 2023—Jefferson is a longtime veteran of NBC Olympics, joining in 2008 as director of postproduction.

And while the team had nearly two years between the Paris Games and the 2026 event, that was not the case for the last Winter Games in Beijing in 2022, when NBC Olympics had only about a six-month window between the pandemic-delayed 2020 Tokyo Summer Games, held in the summer of 2021, and Beijing.

The biggest challenge for Milan-Cortina 2026 is the aforementioned geographic sprawl, Jefferson said. For example, the distance from the Olympic hub in Milan to the competition venues is measured in multiple hours, with the skating venue approximately five and a half hours away and the men’s and women’s alpine skiing venues three hours apart.

With most of the country’s municipalities opting to use existing facilities instead of constructing new purpose-built operations for the 16-day event, locations are not always optimal.

“That’s one of the reasons why all of our venues are so geographically spread out,” he said. “They’re well-established and beautiful, but they are further apart than we’ve ever seen before, so we had to build a plan that looked at very geographically disparate venues.

“In so many Olympic cities of the past, you’d go into the Olympic hub and you’d have a number of venues that are clustered together,” he added. “Here, a lot of the venues are far apart, so we had to rethink deployment of people and gear. We’ve come up with a very good plan for doing all of those things, but we honestly had to throw out the old book.”

Nevertheless, it’s not like this is abnormal for a world-class event. “It is spread out, but that’s the Olympics for you,” Jefferson added.

With such a dispersed physical layout, particularly in rural areas, moving personnel and gear to venues is paramount, Jefferson said. The NBC team learned a lot while producing live coverage of sporting events during a pandemic, he said, with much of the coverage originating from the NBC facilities in Stamford, Conn.

“We learned a lot of valuable lessons with the ‘COVID Olympics’ in Tokyo and Beijing in that we still had a good deal of production on site in Tokyo—we had the primetime show and the control rooms in the IBC [International Broadcast Center] in Tokyo and Beijing; all of those things ended up coming home,” Jefferson said. “So a lot of those ‘lessons learned’ were about, ‘What can we do to maintain the high level of production value doing it from Stamford?’ And then, when COVID lifted, we tried to strike the balance of the teams that were on-site for Paris and the might of having a big broadcast facility in Stamford and pushing it to its limits.”

NBCUniversal hadn’t finalized its format plans for the two weeks of the Games by presstime. However, with Sunday, Feb. 8, being part of “Legendary February,” the network is presenting Super Bowl LX as well as the Games live all day in 4K HDR on NBC and Peacock, for 17 hours of 4K HDR coverage in all. This marks the first time the Olympics and Super Bowl are being presented in 4K HDR on NBC and Peacock, according to the network.

Jefferson noted NBCUniversal’s commitment to capturing all the action in the highest available quality for any platform.

“Like in Games past, and particularly like in Paris, we will capture and produce everything everywhere in 1080p/HDR, and we’ll have deliverables for a number of platforms, both linear and Peacock [NBCU’s streaming service] in 4K HDR as well,” he said. “We’re fairly committed to HDR and 4K deliverables.”

These data-hungry formats require ever-increasing bandwidth between the Olympic host city and Stamford.

“In Paris, we had four 100-gig circuits and two 10-gig circuits for ancillary data and we will have the same for Milan-Cortina,” Jefferson said. “The difference between the Summer and Winter Games is that the Summer Games have so many more concurrent sports, but from a technology and connectivity perspective, we won’t take a step backwards. The connectivity we’ll use for Milan-Cortina will echo what we used in Paris, which is kind of almost unfathomable, because there’s so many fewer sports.”
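To put those circuit counts in perspective, the short Python sketch below adds up the capacity Jefferson describes and compares it with the bit rate of a single uncompressed 1080p stream. The video parameters (59.94 frames per second, 10-bit 4:2:2 sampling, active picture only) are assumptions chosen for illustration; the article does not say what frame rate, sampling or compression NBC uses on these links.

```python
# Circuit sizes quoted in the article, in gigabits per second.
PRIMARY_CIRCUITS_GBPS = [100, 100, 100, 100]
ANCILLARY_CIRCUITS_GBPS = [10, 10]


def uncompressed_video_gbps(width, height, fps, bits_per_pixel):
    """Active-picture bit rate of one uncompressed video stream, in Gb/s."""
    return width * height * bits_per_pixel * fps / 1e9


primary_gbps = sum(PRIMARY_CIRCUITS_GBPS)
total_gbps = primary_gbps + sum(ANCILLARY_CIRCUITS_GBPS)

# Assumption (not from the article): 1080p at 59.94 frames/s with
# 10-bit 4:2:2 sampling, which averages 20 bits per pixel.
stream_gbps = uncompressed_video_gbps(1920, 1080, 60000 / 1001, 20)

print(f"Primary capacity: {primary_gbps} Gb/s")
print(f"Total capacity:   {total_gbps} Gb/s")
print(f"One uncompressed 1080p stream: about {stream_gbps:.2f} Gb/s")
```

Under these assumptions a single uncompressed feed uses only a small fraction of one 100-gig circuit, which helps explain how so much of the production can be handled from Stamford.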

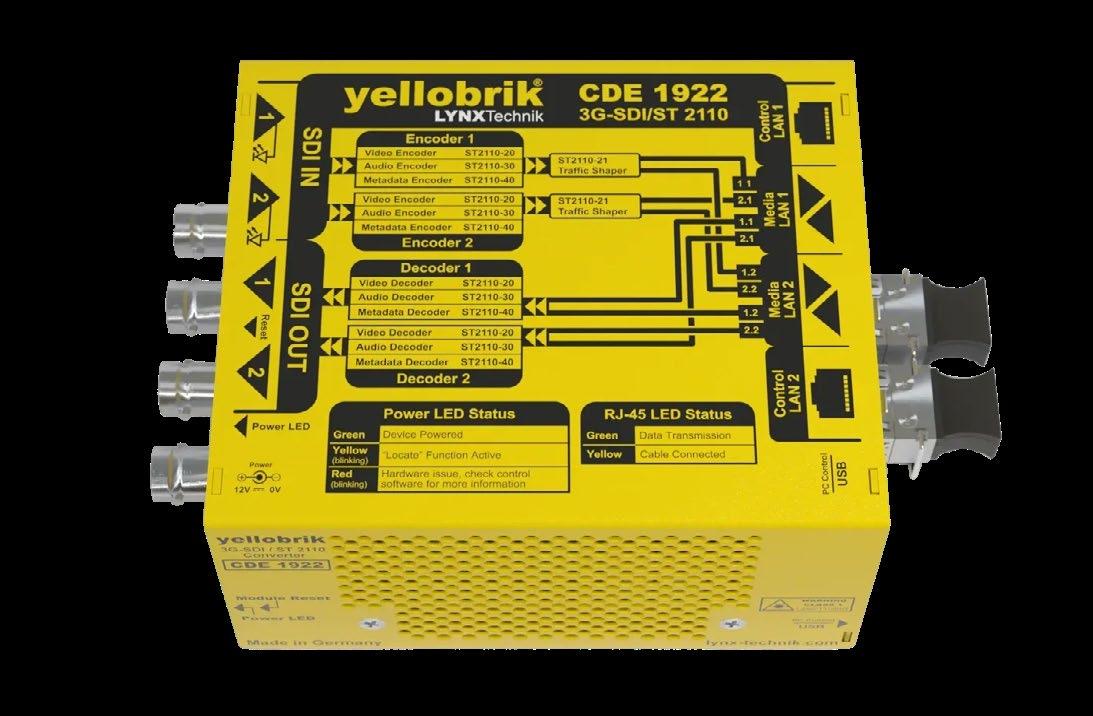

And, as in years past, NBC Olympics will work as a mostly SMPTE-2110 IP-based operation, according to Jefferson. “We still have some bits and pieces that are SDI, but we’re getting our primary feed from Olympic Broadcasting Service at the International Broadcast Center in 2110,” he said. “The core technology in the Stamford facility is 2110—that’s our preferred method of exchange, but you still get outliers out there that are SDI in nature, and we appreciate them as well.”

Stamford is playing an ever-increasing role in coverage, Jefferson added.

“All but one venue is being largely produced in Stamford for this Winter Games, which is a sea change,” he said. “Figure skating production will be wholly produced onsite, with the production team facilities onsite. Every other venue is a split production.”

Since winter sports are not as popular in the United States as in other areas of the world, Jefferson said NBC Olympics will use more data to enlighten viewers on the intricacies of sports they usually see only once every four years.

“Using technology to describe through data use and visualization is really cool, because it gives people the awe-inspiring things that we see athletes do,” he said. “So we’re using data in a lot of ways to present things like rotational velocity or height, the types of things to get people to really understand, like, ‘Holy crap, that’s really remarkable!’

“One of our biggest partners is the OBS partner, Omega [the official Olympics timekeeper], but we have a bunch of other partnerships looking at computer vision analysis, relative speed, jump height, this type of thing, to explain better, and some of those things are internal to help our commentators explain,” he continued. “So we get to tell the story a bit better.”

The content-creator community will also play an important role in Olympics coverage, with increased mobile device use, Jefferson added. “In Paris, OBS used a fleet of mobile phones to cover the athlete experience on the boats coming into and up the Seine [during the opening ceremony],” he said. “I think it will be a similar offering this go, in addition to all the broadcast cameras. There’s a multiplier effect with all those mobile devices in and around the fields of play, behind the scenes and so on.”

Jefferson said he’s excited about NBC’s ability to give viewers a better understanding of the history of the host country, whether it’s in San Siro Stadium, where the opening ceremony will be staged, or at a remote sports venue.

“There are two very different Italys being presented—you have a very metropolitan city with the vision of the Duomo [cathedral] in the city center and all that goes with the fashion and the architecture and all that stuff in Milan,” he said. “And then, on the other hand, you have the unbelievably gorgeous Dolomites backing these small, quaint ski towns.

“And that dichotomy will be presented not just at the opening ceremony, but throughout the whole Games,” he added. “So it will be amazing to present those two Italys to the United States.” ●

Network is looking to 5.1.4 Dolby Atmos to elevate audio and immerse viewers

By Phil Kurz

Talking to Karl Malone, senior director of audio engineering at NBC Sports and Olympics, about the sound he intends to capture and deliver during the Winter Games in Milan-Cortina reveals a passion and excitement for the event he hopes viewers will sense at home.

A critical part of making that happen will be the extensive use of immersive microphones to take advantage not only of the five speakers and subwoofer of a 5.1 surround mix, but also of four height channels dedicated to picking up mics elevated to capture a fuller soundscape and immerse the audience in the moment.

“It’s usually quite cold and potentially not too windy up there [in the starting hut of downhill skiing], but it’s isolated, and I think immersive mics are really going to give us that feeling of isolation,” said Malone, offering an example of how channel-based immersive audio will benefit the coverage of one event.

“How we mix these normally, you’ll never hear any crowd in the mix because it’s all about the athletes and their time and the focus on them, the zoom in on the eyes and listening to the audio,” he said. “You see the breath, and you hear the breath. Then, as they go down the hill and they’re vying for a world record, you start to bring in the crowd and immersive.”

DOLBY ATMOS AND AUDINATE DANTE

Delivering immersive audio leveraging 10 channels of Dolby Atmos audio will be a major priority for the network during the Games.
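The “10 channels” figure follows from the 5.1.4 layout itself: five main speakers, one subwoofer (LFE) channel and four height channels. As a quick illustration, the Python sketch below adds up the parts of a layout string; the function name is hypothetical and is not taken from any Dolby tool.

```python
def channel_count(layout: str) -> int:
    """Total discrete channels in a layout string such as '5.1' or '5.1.4'.

    The fields are main speakers, LFE (subwoofer) channels and,
    optionally, height channels.
    """
    parts = layout.split(".")
    if not 2 <= len(parts) <= 3:
        raise ValueError(f"unexpected layout: {layout!r}")
    return sum(int(part) for part in parts)


for layout in ("5.1", "5.1.4"):
    print(layout, "->", channel_count(layout), "channels")
```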

“We have large 8.0—basically four height and four sort of lower-level—arrays that we will use for the general ambience at pretty much all venues just to get that larger immersive crowd sound whether indoors or outdoors,” Malone said.

“Then we have some smaller immersive mics as well, which are Dante-based,” he continued. “That’s a huge advancement in being able to get immersive audio into tighter spaces and also being able to do it on a single Ethernet cable, which is wonderful.”

Using Dante enables the A1s mixing coverage at the NBC Sports hub in Stamford, Conn., to control mics strategically positioned around venues in Milan-Cortina. Accessing the mics through a remote desktop, the A1s can not only operate them, but also steer pickup in real time, as necessary, from 4,000 miles away.

“One of our hopes is to get these types of mics into the starting houses of the downhill races where the venue is smaller,” he said, specifically identifying how he hopes sound will convey “the pressure and the tension of being very high and very alone on the top of a mountain.”

“You can feel it, and you have to be able to hear that, too,” Malone said.

NBC will use 14 control rooms in Stamford, four of which can be considered primary control rooms, he said. One will be used for primetime, a second for daytime coverage, a third for USA Network and a fourth for NBC’s “Gold Zone,” its whip-around streaming coverage on Peacock, presenting the most exciting action from various venues. Each primary control room will handle 5.1.4 immersive mixes.

The remaining control rooms in Stamford are what the network refers to as “venue control rooms,” used to mix audio for ice hockey, cross-country skiing, speed skating, Alpine, sliding, aerials and moguls, as well as at the Olympics Snow Park, where freestyle skiing and snowboarding will take place.

“Those control rooms act like trucks, feeding into a master audio control room where they’ll take the eight channels of immersive height information and mix that down into four,” Malone said. “So, it’s a finished product in 5.1.4.”
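As a rough illustration of what folding eight height channels into four can mean, the Python sketch below averages adjacent pairs of samples. The pairing and the equal gains are assumptions made purely for this example; the article does not describe how NBC’s A1s actually balance the height microphones, which is a creative mixing decision.

```python
def downmix_heights(frame):
    """Fold eight height-channel samples into four.

    Assumption for illustration only: adjacent channels are paired
    (0+1, 2+3, 4+5, 6+7) and averaged with equal gain.
    """
    if len(frame) != 8:
        raise ValueError("expected exactly eight height-channel samples")
    return [(frame[i] + frame[i + 1]) / 2 for i in range(0, 8, 2)]


# One sample per height channel at a single instant (made-up values).
example_frame = [0.25, 0.75, -0.5, 0.5, 0.0, 0.5, 1.0, -1.0]
print(downmix_heights(example_frame))
```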

For this year’s Games, the network is giving its editors in Stamford the ability to edit 16 channels, not simply 5.1 surround. “Ultimately, you want those 16 channels to go back into a control room so that the A1 can play it back as live and have access to those eight channels of height microphones to be able to mix down into four channels,” Malone said.

“We championed the ability to maintain that whole 16-channel workflow through the building—from the venue all the way through edits and then back to a live control room,” he said. “That’s pretty important.”

There will be two exceptions to this remote integration model (REMI) workflow, however: the opening ceremony and figure skating.

U.S. viewers and the network regard figure skating as a “top-tier sport,” Malone said, and as such, NBC wants to have more production personnel on site (see “Hearing the Ice” on p. 16).

“They [NBC Olympics reporters and talent] have more access to athletes,” he said. “It’s a bigger production with more cameras and more NBC cameras. There are more NBC microphones. It’s just bigger.”

The same is true of the opening ceremony, so both will be produced from an on-site mobile unit.

Audio from Stamford will be handed off as discrete 5.1.4 PCM to be encoded to Dolby Atmos and delivered to Comcast, Peacock, multichannel video programming distributor (MVPD) partners and the network itself. At that point, the network will remove the height channels for distribution to affiliates.

“All of the NBC O&Os will get AC-4 [the codec used by Dolby Atmos and integral to ATSC 3.0],” Malone said.

“What we did for Paris [2024 Summer Olympics] is they [the owned-and-operated stations] took our network 1080i and up-res-ed it to 1080p HDR with Dolby Vision and Atmos for NextGen [TV],” Malone said, adding that some affiliates transmitting NextGen TV will do the same this time.

“The AC-4 pipe has already been laid out to NBC O&Os,” he said. “It’s really testing the pipe at this stage, and we’ve done very successful AC-4 tests with both immersive AD [audio description]—being able to take audio description and present that in a 5.1.4 presentation.”

To ensure its 5.1.4 immersive audio presentation is available to Peacock subscribers, the streaming service has conducted extensive testing of home devices, such as Roku boxes, Amazon Fire TV Sticks and others, to ensure glitch-free decoding and presentation, Malone said.

“That’s why it took a long time in my mind for Peacock to go immersive,” he said. “It’s because there are the creatives, including me, saying: ‘We’re ready to do this creatively. We can give you 5.1.4.’ But Peacock had to test every single device. They aren’t going to launch without making sure everything is right.”

Planning for the 2026 Winter Games started right after the Summer Olympics, with the goal of one-upping NBC’s award-winning coverage of the Paris Games.

“You don’t sit on your laurels,” Malone said. “You think: ‘What can we do better? How can we engage the audience more? How can we tell the story our directors and producers want to tell?’”

For the Milan-Cortina Games, when it comes to audio, the answer is clearly immersive. ●

By George Winslow

The Milan-Cortina Winter Olympics promises to be momentous both on screen and behind the scenes, in terms of new technologies and the consumer viewing experience. As Team USA heads to Milan with high hopes for its strongest performance in decades, NBC, Peacock and Comcast are readying a host of new technology and viewing experiences that they hope will counter, if not reverse, challenges to their business models that have been years in the making.

Addressing those challenges is particularly important for Comcast NBCUniversal, which spent some $7.75 billion for the U.S. rights to the Olympics between 2022 and 2032 and another $3 billion to extend those rights through 2036.

“The Olympics in Paris proved the Olympics are back and remain an unrivaled media property,” NBC Sports President Rick Cordella said at a press event, where he noted that NBCUniversal had already sold out its ad inventory for the Winter Games. “We expect Milan…to carry on that legacy…[by] mimicking and building on” NBC’s successful strategy for the Paris Olympics, he said.

How well Comcast and NBCUniversal deliver on that promise will have a major impact on both its traditional and newer streaming and digital businesses.

For its part, NBC is hoping to kick-start the celebration of its 100th anniversary this year by reversing recent Winter Olympics viewing declines. Average total audience hit a record low in 2022 of 11.4 million for the Beijing Winter Olympics, down from the average audience of 19.8 million that viewed the Games in Pyeongchang, South Korea, in 2018 and only a quarter of the 45.6 million who watched the opening ceremony of the Salt Lake City Games in 2002.

The Games will also be crucial for Versant, owner of NBCU’s recently spun-off cable networks. Both USA Network, which will focus on Team USA with “Enhanced 4K” Dolby Vision and Atmos feeds, and CNBC will carry Olympics programming.

Similarly, Peacock, which will stream every event of the Games (around 3,000 hours of Olympics coverage), will look to solidify its stature as a major source of sports programming while the streamer’s owner, Comcast, will use the Games to lure back pay TV subscribers and fend off increased competition from 5G wireless carriers by highlighting the cross-platform capabilities of its video platform and its fast, low-latency broadband network.

“We know that the customers who still have a pay TV service are, by and large, huge sports fans,” Vito Forlenza, vice president of sports and entertainment at Comcast, said. “So, we are really focusing on sports to showcase the technology we have. When you can blend linear TV and streaming together into a seamless experience, you’re offering something that is really hard to replicate on a streaming box or a fixed wireless connection.”

With NBC offering primetime coverage hosted by high-profile on-air talent, Peacock streaming all the events from 16 sports over 17 days, USA Network focusing on Team USA with 4K visuals and enhanced audio, and massive amounts of additional content available on CNBC, NBCSN and various digital offerings, one of the key issues facing NBC Sports is finding ways to engage and not overwhelm viewers.

A major part of that consumer experience will be new production technologies. “Our mantra has been to make the best seat in the house even better,” Molly Solomon, executive producer and president, NBC Olympics Production, said. “This is going to be the most technologically advanced Olympics we’ve ever presented.”

That will include more extensive use of data analytics, live drones and mics on many athletes. “We will have mics on the U.S. men’s and women’s hockey players for the first time, and on freestyle skiers and snowboarders,” Solomon said. “If you are a fan of snowboarding, you will hear Maddie Mastro give herself a pep talk at the top of the pipe.”

Less obvious will be improvements to the successful comprehensive cross-platform programming and tech strategy that was used during the Paris Olympics to provide viewers with many ways to interact and personalize their viewing experiences on TV, mobile and desktop.

“Customers have told us directly, ‘I love the Olympics, but there is so much of it I get overwhelmed,’” Forlenza explained. “There are customers who want to watch just about anything and some that want to watch specific events. All of them want us to make it as easy for me to get to an event I want to watch as quickly as possible…It doesn’t matter if you are streaming 3,000 hours on Peacock, if they can’t find the minute or two that they really want to watch right now.”

At a high level, that imperative is reflected in the cross-platform programming and tech strategy that worked so well in Paris. As with the Summer Games, all events will be streamed on Peacock and USA’s 4K feeds will focus on Team USA, while the high-profile primetime and late-night programming on NBC will dive into the day’s biggest stories and events.

Tying this wide array of programming together will be several new and returning digital tools that will help viewers find content, interact with stories of interest and personalize their experience.

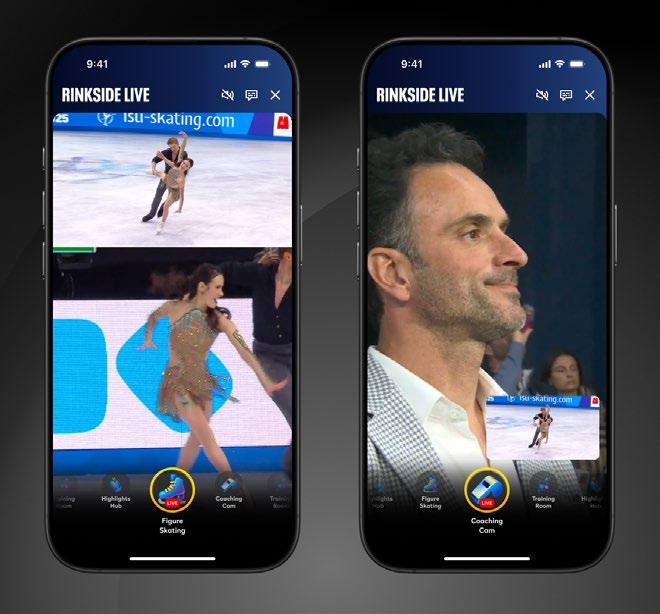

One notable new experience will be “Rinkside Live on Peacock,” where users of the streaming service can access new camera angles for live coverage and behind-the-scenes shots of ice hockey and figure skating via a coach’s cam, a bench cam and other features, said Solomon.

Another notable digital tool is OLI, the AI-powered Olympic Guide that debuted in Paris and has been significantly upgraded for the Milan-Cortina Games. “This AI concierge will make following the games easier and more personalized than ever,” Jenny Storms, chief marketing officer, NBCUniversal Television and Streaming, said. “It’s like having a friendly Olympics expert on call across 19 NBCUniversal websites and apps.”

The clearest expression of this cross-platform strategy of melding programming and tech to greatly improve viewer engagement will be found on the Comcast Xfinity platform.

As part of that effort, Xfinity will bring back and improve popular interactive features from the Paris Games like AI-powered highlights, as well as newer tools like Fan View, which brings together stats, personalized playlists, live scores, athlete profiles, advanced DVR capabilities and betting odds, and Multiview, which lets viewers watch up to four different feeds at the same time.

“We are able to blur the lines between traditional TV and streaming to the point where it doesn’t matter if your favorite event is on broadcast or streaming on Peacock,” Forlenza said. “We’ll have a full and seamless integration of Peacock into our Olympic experience and wrap it all together with tons of interactivity.”

“The core of our business is coupling video, broadband and mobile,” he concluded. “We will be bringing all of that together to provide an unsurpassed experience that others can’t offer and fixed wireless can’t support.” ●

Many of the most notable improvements in the Olympics viewing experience also highlight trends in technology that will be important long after the Milan-Cortina Games have wrapped.

• Cross-Platform Tech and Programming: The Winter Olympics will see notable advances in cross-platform experiences, thanks to the development of advanced networks, improved user interfaces and AI technologies that allow networks, digital platforms and streaming services to blur the lines between streaming, TV and mobile.

• Bandwidth and Speed: With cable operators like Comcast losing broadband subs to 5G fixed wireless offerings, Comcast is heavily promoting the competitive advantages of its broadband network, which can deliver bandwidth-intensive Enhanced 4K HDR video feeds with very low latency.

• Artificial Intelligence: The Winter Olympics illustrate how companies are embracing AI to help viewers find content and to create clips and personalized playlists and experiences.

• NextGen TV and HDR: With live sports providing many of the most popular programs on linear TV, programmers and operators are under growing pressure to deliver visually stunning images. NBCU and some station groups declined to comment on their plans for NextGen TV/ATSC 3.0 broadcasts during the Winter Games, but stations in about 56 markets used ATSC 3.0 to deliver HDR feeds during the Paris Olympics.

— George Winslow

By Eric Zornes

Sports television has always chased immersion. Higher frame rates, longer lenses and increasingly complex graphics all served the same goal: putting the viewer closer to the action.

But for all the visual advances, sound remains a powerful shortcut to realism.

At the U.S. Figure Skating National Championships in St. Louis last month, a quiet piece of audio innovation brought audiences closer to the ice than ever before by placing microphones directly inside it.

Broadcast audio technicians arrived in St. Louis early to help the ice operations team install prototype Audio-Technica contact microphones directly into the skating surface. These contact microphones converted vibrations in the ice into an electrical signal that could be amplified for broadcast.

From there, a narrow trench ran from the mic location back to the dasher boards, allowing the cable to exit cleanly at the rink perimeter. Once the microphones were seated, the cabling was dressed tightly against the wall so it could later be tied into the broadcast infrastructure.

After installation, the ice team packed slush over the microphones and trenches, resurfaced the area, and painted the ice to conceal any visible lines. When finished, there was no visible evidence that 10 microphones were sitting just beneath the surface, positioned evenly around the rink.

Once the physical work was complete, the broadcast audio team tied the microphones into the broadcast truck, where the real test began.

The prototype microphones were contact mics, capturing only vibrations from the ice. Audio-Technica supplied the mics, with Gary Dixon, director of broadcast business development, closely involved in testing and exploring their applications.

“The goal is always the same,” Dixon explained. “How do we make sounds more interesting to people?”

Because the element was embedded directly into the ice, the microphones were inherently isolated from ambient noise. The mics use standard phantom power and were connected to conventional microphone inputs, with an internal amplifier bringing the signal from the piezo element (which generates an electrical charge or voltage under mechanical stress) up to microphone level.

Crowd reaction, PA spill and arena noise simply did not factor into the signal path. What remained was pure mechanical sound: blades carving ice, shifts in pressure, landings, takeoffs and the rhythm of the skating itself.

The microphones used in St. Louis were available through Audio-Technica’s rental program, with plans for a future commercial release. Dixon sees broad potential well beyond figure skating, such as basketball rims, soccer goalposts, swimming environments and even engines. Anywhere vibration tells a story, contact microphones offer a new way to listen.

For Randy Pekich, senior audio mixer with NBC, the first listen was eye-opening.

“When I heard them, my first reaction was, ‘No way that’s a shotgun mic,’” Pekich said. “I was completely fascinated.”

With more than three decades in television audio and a background spanning music recording, studio television and large-scale remote productions, Pekich had embedded microphones into just about every imaginable place. But contact microphones in ice were new territory.

His initial concern was physics. With roughly an inch of ice covering each microphone, Pekich expected the sound to be thin, fragile or highly localized. Instead, he found that each mic captured a surprisingly wide area, roughly a 6- to 8-foot diameter, with enough detail to hear skaters working at center ice.

“They’re outstanding,” he said. “One of the best inventions to happen to the sport.”

Historically, figure skating audio relied on a combination of shotguns, PCC-style boundary microphones and occasionally lavaliers embedded in the dasher boards. As overall venue levels increased, those microphones inevitably behaved more like crowd mics, capturing PA and audience noise instead of isolating on-ice action. The in-ice contact microphones changed that equation entirely.

During the event, Pekich continuously monitored ambient sound levels in the arena, including crowd noise, music playback and general environmental spill, to understand what he was up against. With the contact microphones, much of that fight disappeared.

“I worried whether 10 microphones would be enough,” he admitted. “Then I heard everything.”

Because the microphones only responded to vibration in the ice, fader management became significantly easier. Instead of chasing skaters around the rink or riding levels to avoid crowd surges, Pekich could focus on storytelling. Subtle compression and minimal EQ were all that was required.

At the U.S. Figure Skating National Championships, prototype contact microphones were embedded directly in the skating surface. These contact microphones converted vibrations in the ice into an electrical signal that could be amplified for broadcast.

“Considering the massive frequency restrictor of the surrounding ice, they performed flawlessly,” he said. “They’re very true to sound.”

Importantly, the sound resonated with those who knew it best. Former skaters serving as on-air analysts commented that the audio matched exactly what they remembered hearing while skating. In some cases, they could even identify blade placement, helping them explain technique and judging decisions to viewers.

Embedding equipment into the field of play is always a sensitive issue, particularly in a sport where surface quality directly affects athlete safety. John Monteleone, director of education with the U.S. Ice Rink Association, who oversaw the ice operations, acknowledged the initial hesitation.

“Most ice operations staff shy away from placing any foreign matter in the ice,” Monteleone said. “But after refining the process since 2019, we’re confident in the installation.”

The key, he explained, was precision and consistency. Keeping the cable runs straight, thoroughly removing ice shavings and carefully packing slush over the microphones ensured a uniform surface. Throughout the event, there were no impacts to ice quality, no interference from resurfacing equipment and no contact from skaters’ toe picks.

Working alongside the ice crew, broadcast audio technicians used a router to cut channels into the surface approximately two to three inches wide to accommodate each microphone.

Removal, however, remained the most time-consuming part of the process. Locating and extracting the microphones after the event could take time and required chipping and hot water. Even so, Monteleone said he would be comfortable deploying the system again.

“My preference is always to keep the field of play as clean as possible,” he said. “But with the experience we’ve gained, I have no reservations about continuing to use in-ice microphones for these events.”

Figure skating is a deceptively difficult sport to mix. The action is fluid, the pacing varies and the most important moments are often quiet, such as a subtle shift before a jump or the scrape of an edge setting up a landing. These embedded contact microphones added an entirely new layer of that story.

The on-ice sound translated exceptionally well, earning praise from the production team, leadership and viewers alike.

For audio, innovation doesn’t always mean more microphones or more channels. Sometimes it means listening differently. Because they were installed directly in the ice, these contact microphones offered a rare combination of isolation, authenticity and emotional impact, bringing audiences closer to the sport without ever being seen. ●

An increasing number of VP degree programs are sparking collaboration across disciplines that traditionally worked separately

By Fred Dawson

Surging college commitments to bankrolling students’ hands-on experience with virtual production in film and TV degree programs are rapidly closing the knowledge gap that has been a significant drag on the industry’s VP adoption rate.

From schools specializing in creative arts to big universities on both coasts and in many locations between, the last few years have seen a transformation in how students are taught not only to plan and execute productions, but also to work as writers, actors, set designers and other participants in the new creative environment. For the first time, vast numbers of undergraduates, as well as graduates and working professionals who need to update their know-how, have access to the latest advances in LED volume design, multipanel synchronization, 3D in-camera visual effects (ICVFX), virtual object placement, automated lighting dynamism, camera tracking and other hardware and software technologies that are impacting every facet of professional video production.

“After the pandemic there was a retraction in virtual production because people weren’t able to use the technology,” Habib Zargarpour, co-head of VP at the University of Southern California’s School of Cinematic Arts, said. “We’re now getting a new generation of professionals who are able to do this work.”

USC’s soon-to-be-completed Blavatnik Center for Virtual Production, which will greatly enhance an existing VP curriculum, is moving in the direction taken by a long list of institutions like New York University’s Tisch School of the Arts, with its new Martin Scorsese Center for Virtual Production, and the Savannah College of Art and Design (SCAD), with professional-scale LED volume projects in Savannah and Atlanta.

Some other well-equipped VP learning centers include the University of Michigan’s Center for Academic Innovation, Arizona State University’s Media and Immersive eXperience Center, Texas A&M University’s Virtual Production Institute, the University of Florida’s Digital Worlds Institute and the University of South Florida’s Zimmerman School of Mass Communications.

NYU’s Scorsese Center, now midway through its second year, occupies 45,586 square feet in a building on the university’s 35-acre Innovation Campus along the Brooklyn waterfront. The facility supports two 3,500-square-foot double-height stages, two 1,800-square-foot television studios, state-of-the-art broadcast and control rooms, dressing and makeup rooms, a lounge and bistro, scene workshops, offices, postproduction labs, finishing suites and training spaces.

“We’re really proud of our facilities,” said Sang-Jin Bae, Tisch arts professor and Scorsese Center director. “A major benefit of what we’re teaching is we’re focused on providing an environment where you can learn the hard skills you need.”

This goes to the quality of the instruction as well as the equipment, he added. For example, the use of the all-important Unreal Engine software stack is taught by three Scorsese staff members who are Epic Unreal Authorized Trainers.

Among the many emergent VP innovations working with Unreal Engine’s real-time execution of 3D environments at the center, Bae lists VICON’s motion-capture system used in camera tracking; AV Stumpfl’s PIXERA media server, supporting 2D playback on the LED volumes; MegaPixel VR processors orchestrating displays on ROE Visual’s BlackPearl2 LED panels; and the ARRI Alexa35 Camera and Zeiss lenses that serve to mitigate the moiré effect resulting from mismatches between camera sensors and LED display patterns.

Of course, learning the technical dimensions is just part of the VP education process. In fact, a recurrent theme arising in discussions with educators like Bae is the extent to which the technical skills they teach are subservient to the goal of achieving creative success. “We’re coming from the end user storytelling side,” he said.

USC’s School of Cinematic Arts (SCA) in Los Angeles launched its first two courses in VP in 2022. The school expects its new Blavatnik Center for Virtual Production to be completed in 2027.

The VP education agenda unfolding at SCAD is no less impressive with regard to both the scale and sophistication of technical support and the range of learning experiences. As with everyone else engaged in bringing VP onto college campuses, the undertaking that started at SCAD in 2021 has been a “figure-it-out-as-you-go” experience, said Quinn Orear, associate chair of film and business at SCAD, who leads the film and TV department at the Atlanta facility.

“This technology is so new for everybody; a lot of us had to learn in step with students,” Orear said. But having no “how-to playbook” to work with had its advantages, including freeing staff “to be creative in discovering new ways to use the technology” aided by training provided by the teams that built the sites.

The experience has also brought into play a new realm of collaboration across disciplines that traditionally worked separately. Production design, visual effects, video game development, editing—it all came together with a faculty “that keeps our feet in the industry as we teach,” Orear said.

The first VP LED volume and XR stage installation was completed in 2021 as part of an expansion project on the 11-acre lot at Savannah Film Studios, which SCAD bills as the “largest and most comprehensive university film studio complex in the nation.” The project is now in its third stage, adding two sound stages and new backlot facades to over 40 separate street facades and more than 8,000 square feet of dressed set spaces that model historic Savannah, big-city streetscapes, and small town USA scenes from a variety of eras.

A year later, SCAD opened the second VP stage at the recently expanded SCAD Digital Media Center in Atlanta, which, like the Savannah stage, comprises a 60-by-16-foot curved LED wall coupled with a 38-by-20-foot LED ceiling. Both stages are powered by Disguise xR technology running on Disguise VX 4 and RX II servers with LED volumes supplied by DeNyse Digital.

Orear said SCAD, with over 100 degree programs, has made VP training available wherever it’s needed. And, with the Atlanta facility sitting amid a thriving Hollywood East production environment, the VP assets are also getting plenty of commercial use, which contributes further to the general learning atmosphere. “We’re seeing a lot of cross-disciplinary collaboration,” he said.

A similar culture prevails at USC’s School of Cinematic Arts (SCA) in Los Angeles, where the first two courses in VP were launched in 2022 in support of short filmmaking using the Unity game engine. This was followed by the implementation of the Unreal Engine, with the installation of an LED wall supplied by Sony. A class in LED volume usage followed with students now competing to have their five- to 10-minute projects produced for presentation at the end of the school year.

“We have students from production, animation, interactive, experimental animation, cinematography, graduates as well as undergraduates,” Zargarpour said. “The multidisciplinary mix has worked well with roles shifting and students learning the best way to make use of the LED wall and how to integrate real with virtual sets.”

SCA doesn’t yet offer a degree in VP, but “we’re working on it,” he added. “Once the new [Blavatnik] center is ready, we’re going to be able to make greater use of the VP technology.”

Funded by a $25 million donation from the Blavatnik Family Foundation announced in mid-2025, the project is still in the design phase with the goal of getting it done “ASAP,” Zargarpour said. The plan, which he helped develop and which builds on lessons learned from work with the current LED stage, calls for a multiuse space housing two stages with wraparound LED panel walls, as well as performance capture, camera tracking and lighting systems, along with multiple classrooms and labs equipped with 3D design software and digital asset libraries.

Understandably, given that the schools profiled here and the many others committing to VP are rooted in cinematic arts training, support for use of VP in live sports, news and other TV-centered productions is generally not part of the curriculum. Live “is currently outside our domain,” Zargarpour said. Occasionally, SCAD’s VP facilities are used by “students who want to learn about how LED volumes are utilized in live production,” Orear said, but such experiments are “feeling like early days as that evolves.” ●

Next-generation platforms will require different levels of control, security and cost

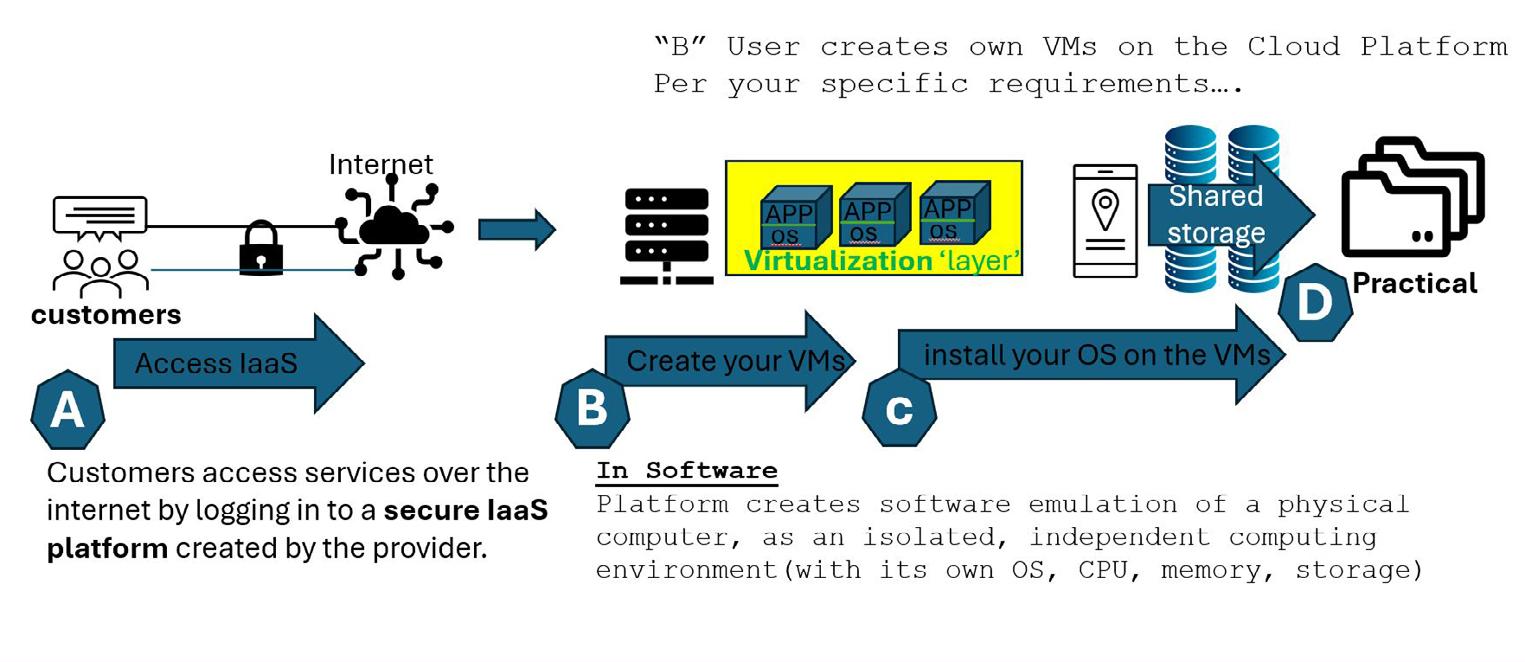

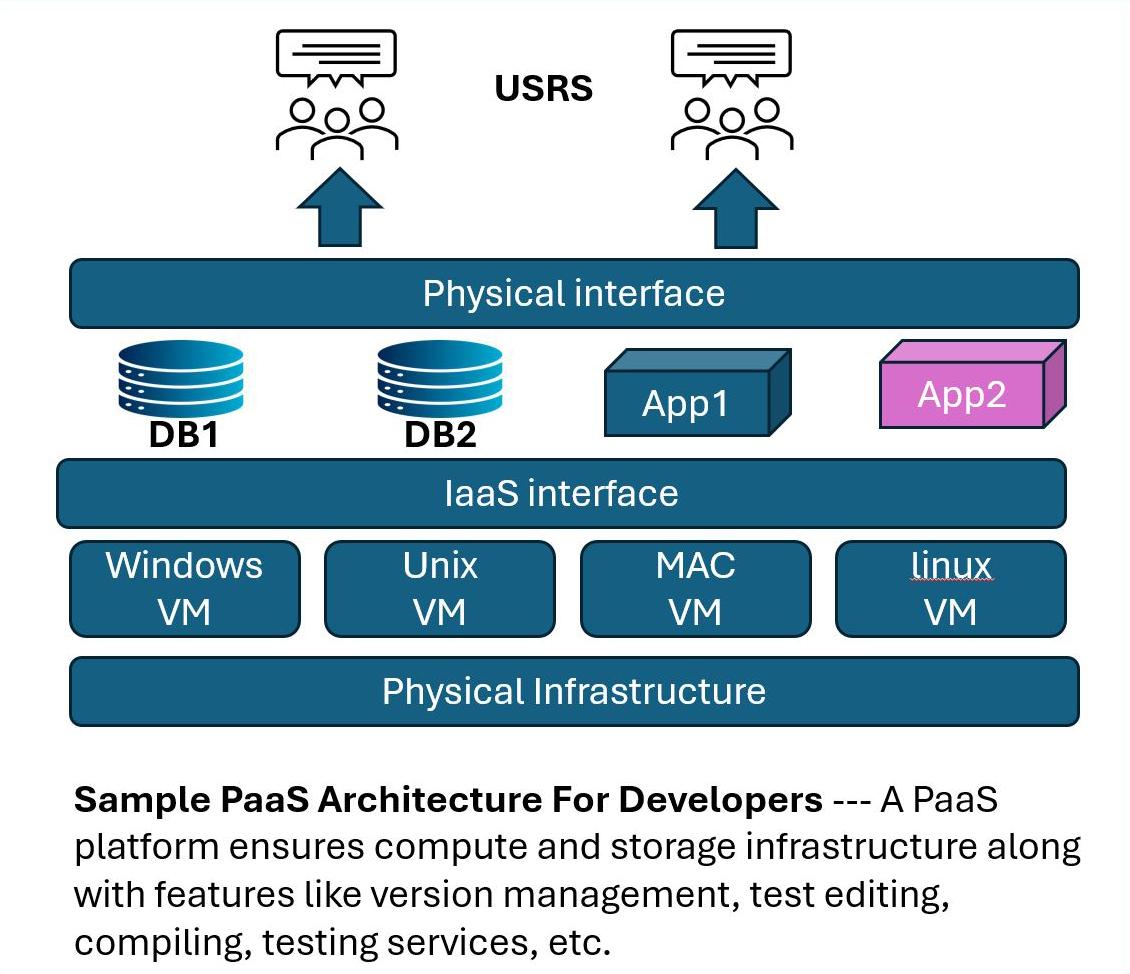

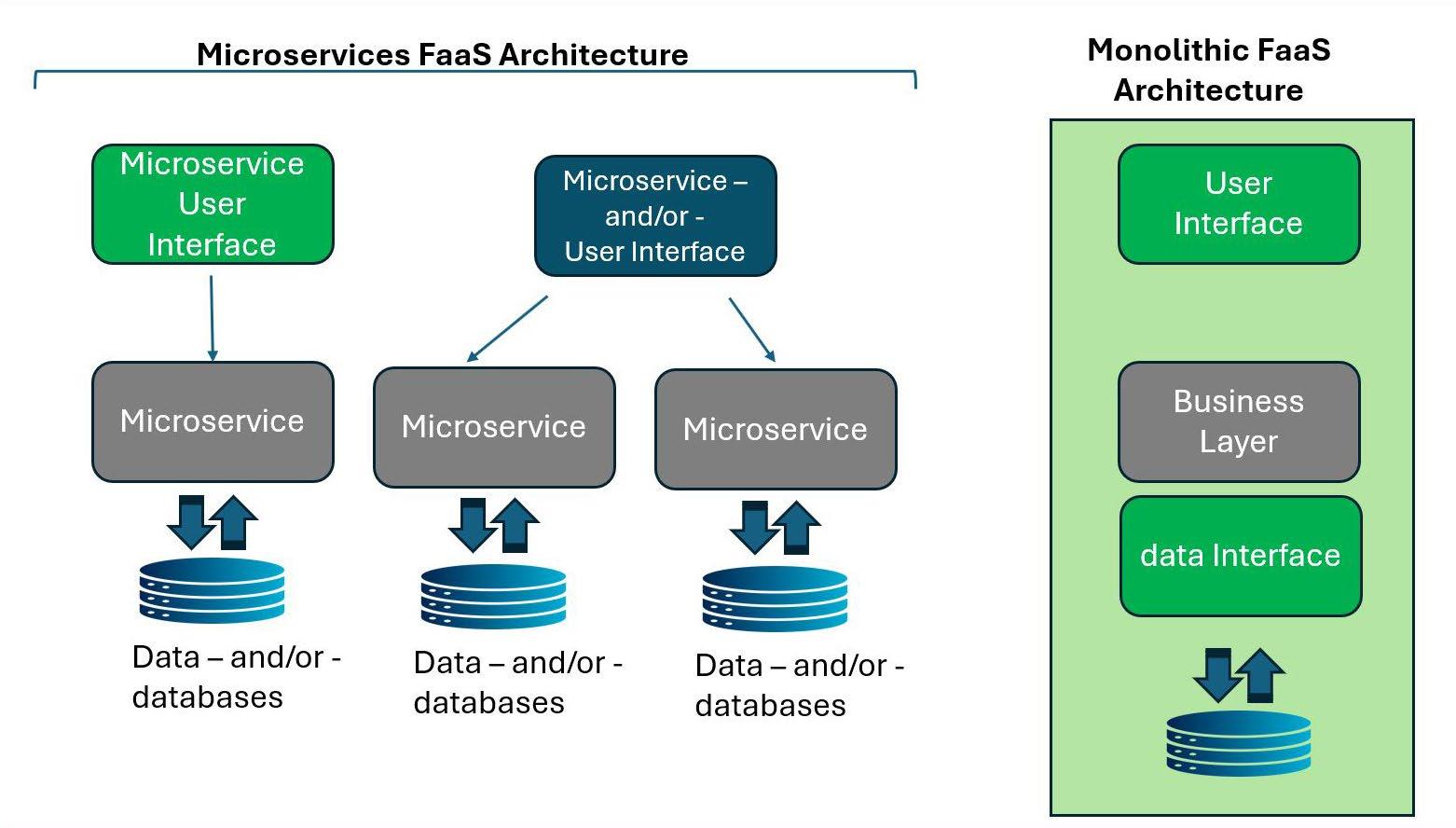

What can we begin to expect in terms of types of cloud services, including the trends for today and tomorrow? To best understand the kinds of services available in the cloud, readers should have a fundamental understanding of how the various services function and how their performance is driven by the user or subscriber to those particular services.

To review from the many previous articles in this column and TV Tech, these are the key “ready-to-use” applications found in many cloud computing platforms:

• “Infrastructure as a Service (IaaS),” see Fig. 1, for fundamental resources like servers.

• “Platform as a Service (PaaS),” see Fig. 2, for cloud-development environments.

• “Software as a Service (SaaS),” for ready-to-use applications.

• Serverless computing or “Function as a Service (FaaS),” see Fig. 3, for event-driven functions, which focuses primarily on pure code execution.

That said, most of the various XaaS applications are pretty much solid, running in fixed data centers or as cloud-compute services. So, where indeed does “cloud computing” head next?

Again, we move back to those topics covered over the past 18 months or so in my TV Tech columns, which detail the four main types of cloud computing deployment models: public, private, hybrid and multi- and/or community clouds. In the not-too-distant future, the next generation of cloud-compute platforms will need to offer different levels of control, security and cost, especially when looking at operations and utilization from shared public resources (i.e., “you” the end user).

Another division of overall “cloud” models, infrastructures or architecture must further address different types of cloud computing, including deployment and service models. Defining cloud computing models further describes which computing resources are appropriate for which applications, such as server vs. serverless, shared or distributed storage, databases, software and specialized applications, which are, generally, delivered over the internet. This implies companies can utilize these (and other emerging resources) without possessing or maintaining a huge or costly physical infrastructure.

Trends to expect in 2026 for cloud computing will likely involve the harmonization of techniques for deep AI/ML (artificial intelligence/machine learning) integration. We also anticipate significant shifts due in part to changes in data-center development and related infrastructures, i.e., the expansion of edge computing and the provision of sufficient bandwidth to deliver solutions “to the edge” needed to address expected demands from a variety of devices, mobile and otherwise.

As competition for services increases and the adaptation of existing cloud-centric data centers yields more choices for users, we can certainly expect widespread adoption of multicloud and hybrid cloud strategies.

New developments that will leverage a focus on cloud-native technologies, including serverless and containers, will change the level of I/O requirements. Internet egress (i.e., the on-ramps and off-ramps) will continue to expand as more players enter the cloud and/or AI marketplace.

Furthermore, we can anticipate a growing interest in quantum computing, which applies principles of quantum mechanics (superposition and entanglement) and processes information with qubits that can be 0, 1, or both simultaneously, unlike classical bits (0 or 1 only).
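For readers who want the "0, 1, or both" idea in symbols, the state of a single qubit is conventionally written as shown below. This is standard textbook notation rather than anything specific to this column; the symbols $\alpha$ and $\beta$ are simply the usual names for the two complex amplitudes.

```latex
\[
  |\psi\rangle = \alpha\,|0\rangle + \beta\,|1\rangle,
  \qquad |\alpha|^{2} + |\beta|^{2} = 1
\]
```

Measuring the qubit yields 0 with probability $|\alpha|^{2}$ and 1 with probability $|\beta|^{2}$. A classical bit corresponds to the two special cases in which one amplitude is 1 and the other is 0.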

This level of structure aimed at supporting quantum computing through the cloud will surely require a strong emphasis on sustainability while managing costs via FinOps, a blend of “finance” and “DevOps” that is defined as a collaborative, cultural practice. This financial-management discipline must help organizations reach high business value from cloud spending by bringing engineering, finance and business teams together to make data-driven decisions for optimizing costs, improving efficiency and aligning cloud usage with business goals.

In addition to more depth on each of the cited trends, data litigation and protection are also expected to be important global trends that may be categorized as Data Sovereignty and Compliance. Each of these trends plays on new or expanded capabilities in data-systems design and engineering including—if not especially—enhanced security (including DevSecOps and/or Zero Trust). The data industry will soon need to embrace Intelligent Security (otherwise known as DevSecOps) beginning at the initial software-development process.

Driven in part by the emphasis on AI, expect that an increasing focus on meeting strict data regulations and ensuring data privacy could dramatically change the landscape, if it is not carefully orchestrated on an international basis that bypasses politics or attempts at “global dominance” by any governing body.

Developers and service providers will be expected to provide still “yet-to-be-fully-defined” levels of embedded security into the development pipeline (i.e., DevSecOps) and to adopt zero-trust models for automated, reliable cloud security.

Artificial intelligence is evolving deeply into embedded systems built on or in cloud platforms, optimizing every facet of cloud operations and security. Of importance is this integration of machine learning and AI at all levels of the computer chain. Among the features we can expect to see are real-time resource allocation, automated scaling (or the resizing of compute resources based upon the load), predictive maintenance and advanced security-threat detection. Promoters believe such changes are crucial to realizing the true needs and value of AI in any cloud computing environment.

Edge computing is a distributed IT approach that processes data nearer to its source (the “edge” of the network) instead of in distant, centralized cloud data centers. This is exemplified by some of the functionality of mobile devices that make choices or provide answers without necessarily being specifically connected (wired or wirelessly) to “the network,” akin to how IDP systems will cache certain sets of predicted replies to the local server rather than rely on every communication sourcing back through the network to a mainstream data center.

AI will be used to make edge devices more intelligent, improving speed, accessibility and endurance for select mobile devices.

By balancing source vs. edge computing capabilities, the provider extends the reach of the cloud to the edge of the network, enabling faster data processing from internet of things (IoT) devices, autonomous vehicles and other edge devices. AI in edge computing through on-device AI inference, on-the-edge AI model training and thin-edge AI was a key trend in 2024. Today and going forward, bringing compute and storage closer to devices, in turn, cuts latency and improves efficiency for time-sensitive tasks. In essence, the edge device needs only to send certain essential data (information) back to the core “source” data center.
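The edge pattern described in this column, in which a device processes data locally and sends only essential information back to the core data center, can be illustrated with a short Python sketch. Everything here is hypothetical and chosen for illustration only: the `EdgeSummary` record, the `summarize_at_edge` function, the sample readings and the alert threshold do not come from any particular vendor or platform.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EdgeSummary:
    """Compact record an edge device might send upstream (hypothetical shape)."""
    count: int
    average: float
    alerts: list[float]


def summarize_at_edge(readings: list[float], alert_threshold: float) -> EdgeSummary:
    """Reduce raw readings on the device so only essential data leaves it."""
    if not readings:
        return EdgeSummary(count=0, average=0.0, alerts=[])
    # Keep only the readings that exceed the (assumed) alert threshold.
    alerts = [r for r in readings if r > alert_threshold]
    return EdgeSummary(count=len(readings), average=mean(readings), alerts=alerts)


# Five raw sensor readings stay on the device; one small summary goes upstream.
raw = [20.0, 21.0, 19.0, 95.0, 20.0]
summary = summarize_at_edge(raw, alert_threshold=80.0)
print(summary)
```

The design choice is the one the column describes: the raw stream never crosses the network, which is what cuts latency and bandwidth for time-sensitive tasks. A real deployment would add batching, retries and security on the upstream link.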

Some 76% of businesses moving to the cloud use a hybrid or multicloud approach, according to a May 2025 blog post from managed services provider All Covered. In a broad sense, the primary trends in cloud computing include a rise in platform engineering that aims to manage multicloud complexity, as well as AI adoption as the main driver. Such improvements are properly coupled with FinOps (i.e., cloud-cost optimization trends and tools) and cloud sustainability or “Green Cloud” computing trends. ●

Karl Paulsen, CPBE & SMPTE Fellow, is a retired chief technology officer and a longtime contributor to TV Tech on media technologies, workflows, IP networking and cloud. He can be reached at karl@ivideoserver.tv or at TV Tech.

Among the unexpected things the pandemic left in its wake is a higher tolerance for bad lighting. For evidence of this, just check out any Zoom meeting. Faces in shadows framed by blown-out backgrounds look more like witness-protection videos than what we normally see on camera.

Every new technology unleashed on the public is bound to have some hiccups. Ever notice how many teleconference meetings start like a séance, trying to contact departed spirits? “George, can you hear us? We can see you, but can’t hear you.”

For a technology that was previously only in the hands of “experts,” people with zero training have managed better than expected. We’re experiencing a democratization of telecommunications, enabled by plug-and-play components and readily accessible apps. Social media posts are today’s equivalent of pontificating from atop a soapbox in the park, but with a revenue stream. With these new tools, almost anyone can put together a video podcast.

The low quality of most video podcasts makes “close enough for television” seem like the golden age of excellence. They’re cringe-worthy, rather than binge-worthy. As good as entry-level gear has become, production values suffer from a lack of decent lighting.

Newer cameras can make pictures in almost no light, which may ironically be why the lighting falls short. It no longer has to be properly lit to be seen, so it often isn’t. These novice media moguls could considerably up their game with a bit more attention to how things look.

The goal of lighting isn’t merely academic. Good lighting can help video podcasters connect with their audience by making the hosts appear more relatable. We tend to connect more with people when we can actually see their eyes, let alone their faces.

Video podcasting is a big deal, even if the podcasts often don’t look the part. The top-rated video podcasters have millions of viewers with audience demographics that companies want most. Product placement, compensated endorsements and lucrative sponsorship deals have made this a multibillion-dollar industry.

In this competitive media landscape, where everyone is vying for viewer attention, getting the lighting right provides an edge. Unfortunately, many video podcasts look like they’re made in a closet, illuminated with all the lighting artistry of a bare bulb on a pull chain. Improving on this could be as simple as using a clip-on reflector work light pointed at the host.


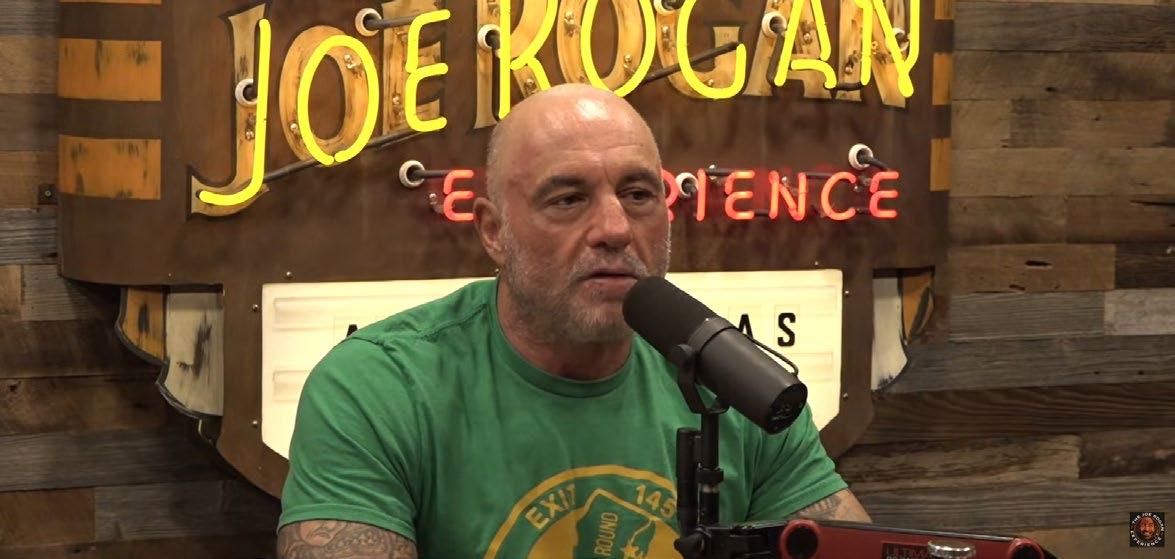

One popular “alpha bro” video podcast, “The Joe Rogan Experience,” looks like it spent more money on its neon sign than on lighting the host. Then again, maybe that dimly lit back-alley aesthetic appeals to his audience. Are the deeply shaded eyes meant to suggest some unvarnished authenticity, or just poor lighting choices? Could Rogan spin conspiracy theories as convincingly if viewers could see the twinkle in his eyes?

Other successful video podcasters have done a brilliant job of creating visual surroundings that support their show’s themes. Alex Cooper’s popular “Call Her Daddy” video podcast creates a cozy environment for her guests. The right lighting and inviting scenic touches help create a beguiling space for the confessions and gossip that its guests seem to snuggle into. Her show’s curated “look” works by design.

When lighting a video podcast, the questions are much the same as for any other show. The camera shots guide the lighting, so we need to know where the cameras are, who they’re shooting, and where they’re standing or sitting.

Beyond that comes the nuts and bolts of making it work. What types of lights need to go where, and how will they be mounted? Light stands and cables may clutter the floor, while ceiling mounts and cable runs require some additional engineering. In short, it’s like any other lighting project—but on a smaller scale.

Whatever the budget, the basic “three-point lighting” approach is a good place to start.

The illustrated example (two people and four lights) can be done in the corner of a basement, a small room, or a large closet. The size and power of the light fixtures should be chosen to meet the required throw distance. Always adhere to “best practices” for mounting the lights and running power. Remember that people will be under those lights, so use safety cables, sandbags and common sense.

The example illustrated in Fig. 1 is typical for video podcasting. A central camera covers two people chatting. Additional lights can be added to highlight items in the background. If the budget is tight, begin with the two soft lights in the front corners, adding the remaining lights as funding permits.

Remember that the larger the fixture aperture, the softer the light, so the corner fill lights should have a relatively wide opening. They should fill softly without creating noticeable shadows. Let the work of making a modeling shadow fall to the Fresnel (or other “hard” light) in the center over the camera. Depending on the camera, an overall reading of 45 to 60 foot-candles should be perfect.
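Many light meters and fixture spec sheets report illuminance in lux rather than foot-candles. As a rough aid, the short Python sketch below converts the 45-to-60 foot-candle target into lux using the standard factor of about 10.764 lux per foot-candle. The function and constant names are illustrative only and are not taken from any particular meter or app.

```python
# 1 foot-candle = 1 lumen per square foot, which is about 10.764 lux
# (lumens per square meter). Names here are illustrative assumptions.
LUX_PER_FOOT_CANDLE = 10.7639


def foot_candles_to_lux(foot_candles: float) -> float:
    """Convert an illuminance reading from foot-candles to lux."""
    return foot_candles * LUX_PER_FOOT_CANDLE


# The target range suggested in the text: 45 to 60 foot-candles.
low = foot_candles_to_lux(45)
high = foot_candles_to_lux(60)
print(f"Target range: {low:.0f} to {high:.0f} lux")
```

In other words, a meter that reads in lux should show roughly 484 to 646 lux at the host position to land in the suggested range.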

As for which lights to buy, you can’t go wrong with quality equipment backed by manufacturers that stand behind their products. Otherwise, “Buy cheap, buy twice.”

Lighting residential spaces for video presents different challenges than working in purpose-built studios; the bane of these impromptu spaces is the low ceiling and lack of hanging points. Getting lights secured where you need them always requires some creative mechanical engineering, or you can use stands. Use low-profile fixtures to reduce head bumps.

As for how to control the lights, most provide Bluetooth or other remote connectivity. For simplicity, it’s best if the lights can be controlled by a single app. Because LED lights have internal dimming electronics designed for full line voltage, don’t use external dimmers—they’ll cause damage.

While lighting a “black box” studio set calls for making a completely artificial space look more like a plausible environment, shooting in an ordinary room calls for adding some lighting “magic” to keep the space from looking too prosaic. And because the goal is connecting with your audience, always make sure the host is well-lit and looking good. ●

Bruce Aleksander invites comments from others interested in lighting at TVLightingguy@hotmail.com.

Telycam’s Mix One is an all-in-one video production switcher designed for PTZ-centric workflows. It combines an encoder, decoder, monitor, video switcher and PTZ controller within a single compact unit, giving creators a powerful, intuitive tool for live streaming, podcasting and professional content production. Built on industry standards and modern AV-over-IP architecture, Mix One offers an elegant, scalable alternative to traditional hardware-heavy production setups. With native support for NDI HX, SRT, RTMP and RTSP, users can work with low-latency IP video directly over the network without external converters or capture cards. IP video routing, remote camera control and automatic device discovery make scaling simple. Expanding or redeploying a system is as easy as connecting new devices to the same network.

https://telycam.com

Chyron’s new Virtual Placement 8.0 features new SMPTE 2110 IP connectivity and NBA-focused line tracking. The latest version’s 2110 support aligns Virtual Placement with the industry trend towards uncompressed and future-proofs the product. Virtual Placement 8.0 brings court-accurate tracking to basketball, keeping virtual graphics visually locked to the floor and aligned with the action, which enables stable, hardware-free on-court tracking and unlocks advanced graphics and analysis within live NBA broadcasts.

Virtual Placement continues to deliver virtual advertising, dynamic sports analysis tools and PRIME-powered broadcast graphics from a single platform.

https://chyron.com

Proton Camera Innovations’ compact Proton 4K Zoom is for productions working in confined spaces with limited accessibility, providing a new way to refine composition while preserving the immersive image quality of Proton’s 4K offering. Proton is also expanding lens compatibility across its range with the addition of C-mount options, complementing existing S-mount support. Having C-mount options allows users to access a broader selection of lenses, providing greater control over depth of field, focal length and visual character. The result is enhanced creative choice.

Proton is also adding connector-based cable interfaces, rather than permanently fixed connections, to its cameras. Now users can tailor cabling to specific installations, which simplifies replacement or reconfiguration while enabling more confident placement in challenging environments. https://proton-camera.com

Operative’s new AOS Services Platform enables media companies to operate and grow their advertising businesses and power the development and deployment of AI-driven capabilities. The AOS Services Platform underpins modern media monetization by delivering a foundation of necessary services that supports all channels, integrates effortlessly into broader technology ecosystems and empowers teams to scale smarter and grow without infrastructure constraints.

Designed for organizations managing multiplatform monetization at scale, the new AOS Services Platform gives media companies the flexibility to standardize their foundational operations in a modular way while building unique capabilities on top and maintaining enterprise control. www.operative.com

Akta has introduced a new search function for its AI-First video platform, designed to deliver premium viewer experiences and provide media companies with more efficient operations.

Built on Google Gemini, the AI-First platform doesn’t simply add AI to existing solutions. Rather, AI is designed into the workflow, from how content is processed and prepared to how it’s delivered, monitored, and monetized. The new “AI-Powered Search” is a Gemini-powered intelligence layer that lets broadcasters and media publishers find, understand, and act on content instantly, across live, VOD, archives, metadata, transcripts and more. AI-Powered Search can search inside video; autogenerate and normalize metadata so results improve over time (even for back catalog); return precise segments, not just full programs—ready for clipping, publishing or syndication—and recommend next steps. www.akta.tech

Atomos has announced a new, free firmware update for its Ninja TX GO and Ninja TX monitor-recorders, enabling ProRes RAW recording from the Canon EOS R6 Mark III hybrid mirrorless camera. Firmware update 12.2.1 unlocks the full potential of the EOS R6 Mark III with pristine ProRes RAW recording. Ninja TX supports a maximum video resolution of 6960 x 3672 up to 30p, and 4320 x 2278 up to 60p. Ninja TX GO has a maximum resolution of 4320 x 2278 up to 60p. Both also offer camera control of the EOS R6 Mark III over USB-C.

Ninja TX GO and Ninja TX are Atomos’ on-camera monitor-recorders designed for professional filmmakers and content creators. Both combine a bright, high-resolution 5-inch HDR touchscreen display with advanced recording capabilities, supporting formats like Apple ProRes RAW, ProRes RAW HQ, ProRes LT, 422 & 422 HQ and Avid DNx. www.atomos.com

Zoom is integrating NDI Advanced technologies across multiple Zoom platforms, offering organizations a way to transform their meeting rooms, shared spaces and event venues with flexible, high-quality video and audio connectivity. With NDI enabled within Custom AV for Zoom Rooms, enterprises can connect any NDI-compatible device or stream directly into Zoom meetings. This integration supports multicamera and multidisplay setups, making it easy to implement advanced AV-over-IP workflows in various spaces.

NDI Advanced technologies is also bringing new capabilities to Zoom’s “Zoom for Broadcast” tools, giving virtual and hybrid event producers enhanced abilities to deliver broadcast-quality experiences that drive interactivity and engagement at scale. https://ndi.video

New features for Cineverse’s proprietary content search and discovery tool, Cinesearch, include connected TV and voice capabilities that make it easier for users to find movies and TV shows. Powered by Matchpoint, Cinesearch is an AI-powered content platform available to consumers (cinesearch.com) and now for licensing to OEMs and streaming platforms. It leverages a proprietary dataset optimized for advanced AI search with an automated workflow that adds new metadata days after new films are released.

In addition to supporting content spanning over 500 streaming services, the underlying dataset, cineCore, now has an expansive taxonomy of contextual metadata that spans the spectrum of human experience: emotions, feelings, moods and vibes. The metadata has been structured into an expansive taxonomy that allows for highly effective search of over 75,000 film and TV series based on human emotion. https://cinesearch.com

Bridge Technologies’ new multiservice AV sync comparison feature in the AV Sync function of its VB440 production probe enables frame-accurate synchronization assessment across multiple delivery paths. Designed for live IP production environments but applicable in a range of contexts, it allows the assessment and comparison of multiple flows carrying the same service, including those across satellite, SRT or other IP routes.

The feature builds upon the core of the VB440’s AV Sync Generator’s capability as a first-line-of-defense approach to synchronization. The AV Sync Generator enables the system to embed machine-readable electronic markers directly into the audio and video signals. When the complete service is reconstructed at the client, these markers are analyzed to assess frame-accurate alignment of audio and video in real time. This ensures synchronization is measured on the content itself. https://bridgetech.tv

The Sony PDT-FP1 portable data transmitter was used in CBS Sports’ coverage of the PGA Championship, May 15-18, 2025 at Quail Hollow Club in Charlotte, N.C.

By Greg Coppa, Senior Vice President, Engineering, CBS Sports, and Craig Stevens, Vice President, Remote Engineering, CBS Sports

NEW YORK—The live sports broadcasting industry is grappling with a significant technological shift. Productions are becoming larger and more complex, and the demand for wireless cameras has outpaced the capabilities of traditional microwave RF systems that operate on increasingly crowded and limited frequency spectrums.

There’s a constant challenge to efficiently broadcast content wirelessly, which has created a strong industry-wide push to find viable next-generation solutions like 5G. All the traditional solutions still perform well, but when Sony demoed for us its 5G-powered live remote-production offering, comprised of a CBK-RPU7 encoder paired with the PDT-FP1 portable data transmitter and the NXL-ME80 media-edge processor to achieve ultra-low latency, our reaction was, “Why wouldn’t we look at it?”