Data and its management are a central concern for many health-science professionals, since AI, a burgeoning area of biotechnology, is only as good as the data it uses. For more on this, read Through The Looking Glass (page 9), an article exploring how DeepMirror, an innovative UK-based company, is making already discovered research pathways available to health-science customers via its many and varied datasets. In a second data-focused article, Decoding Data Meaning (page 10), we look at why the health-science sector is more advanced than others in its use of data.

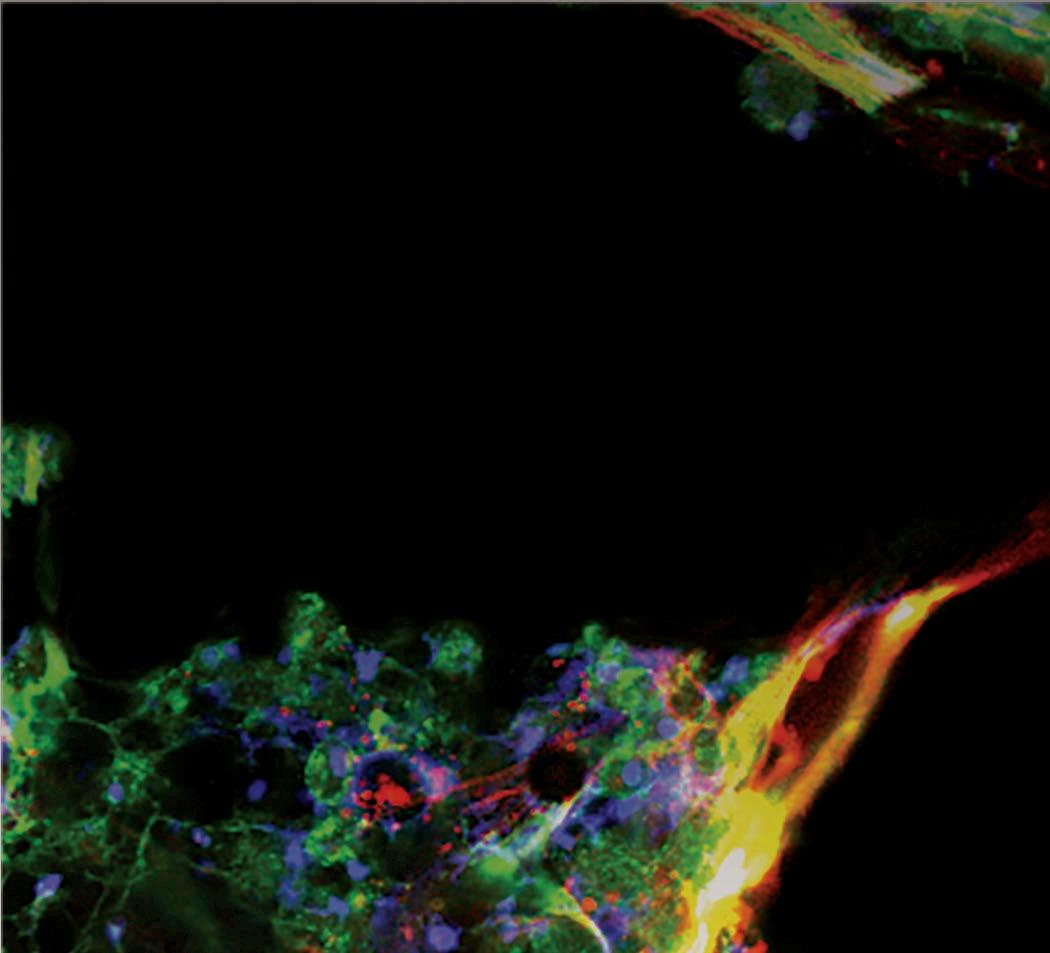

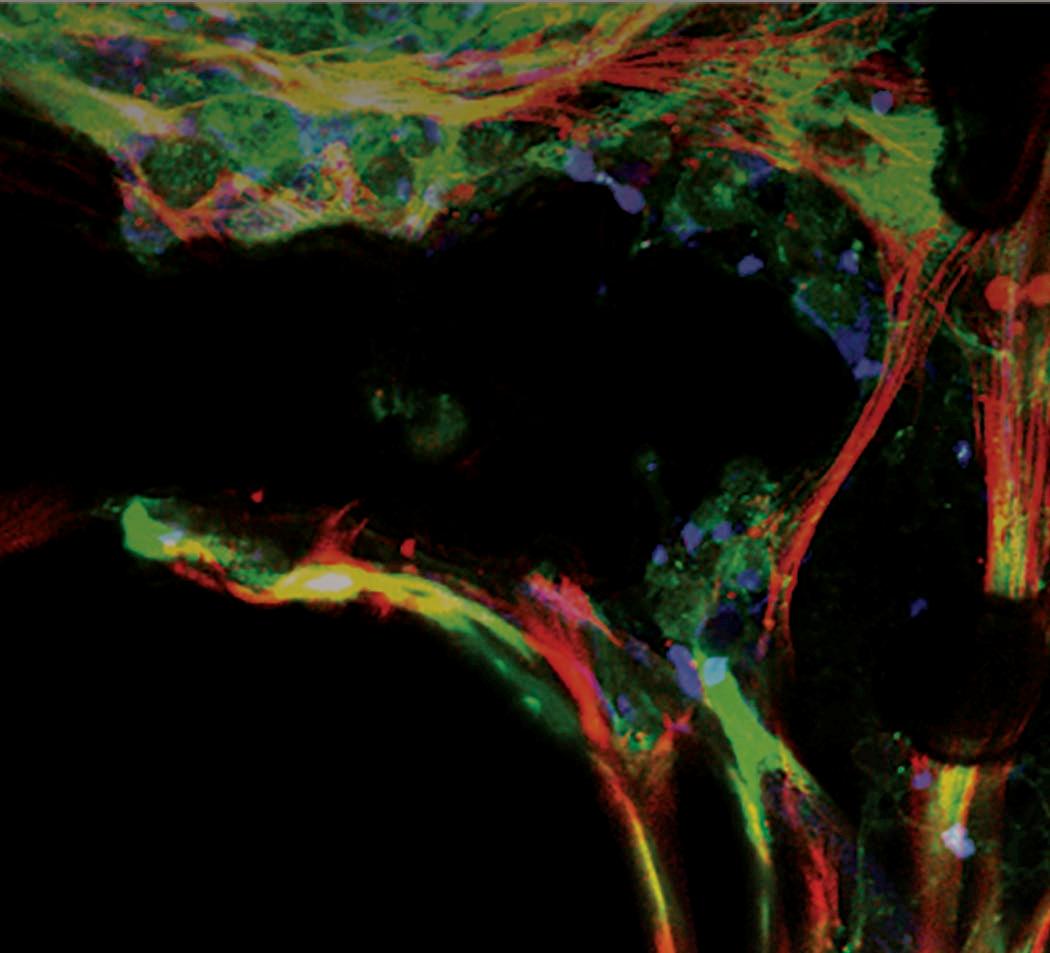

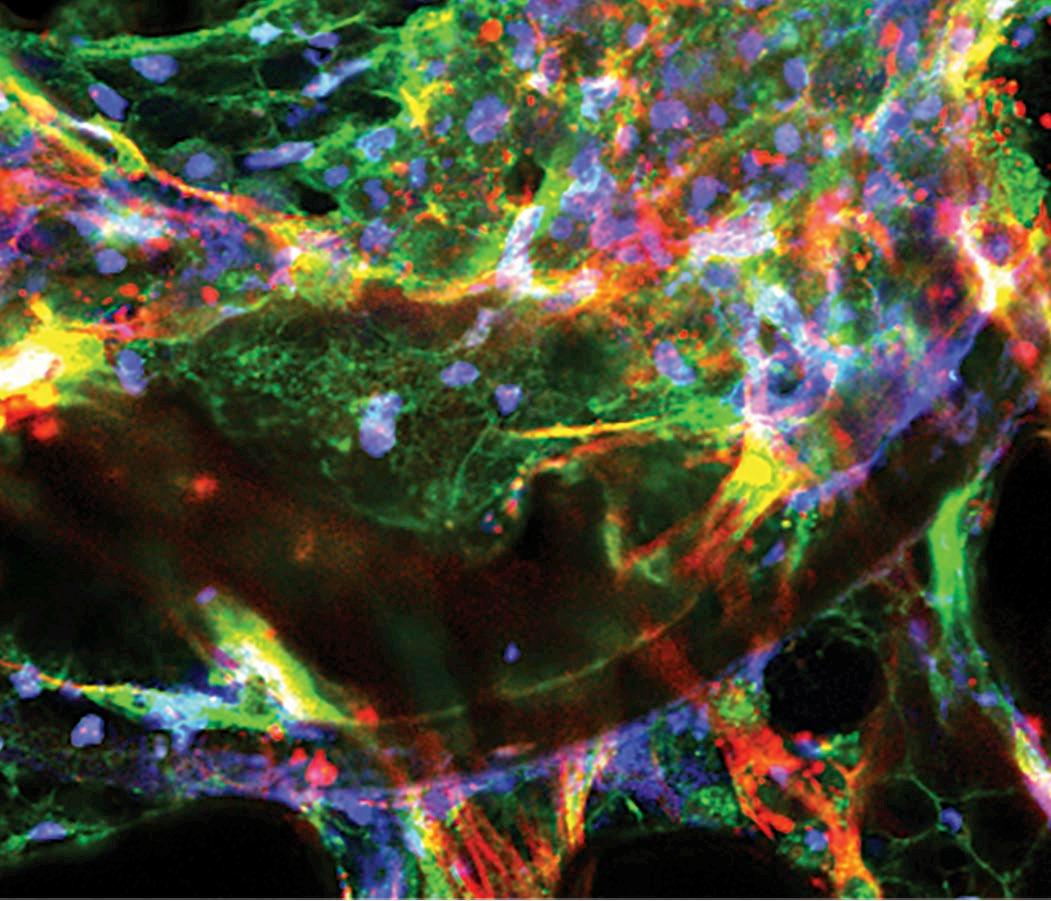

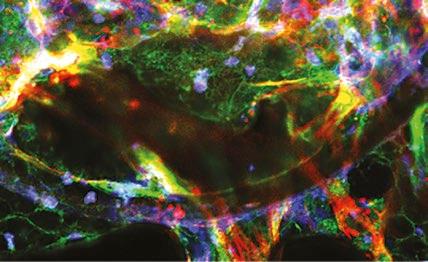

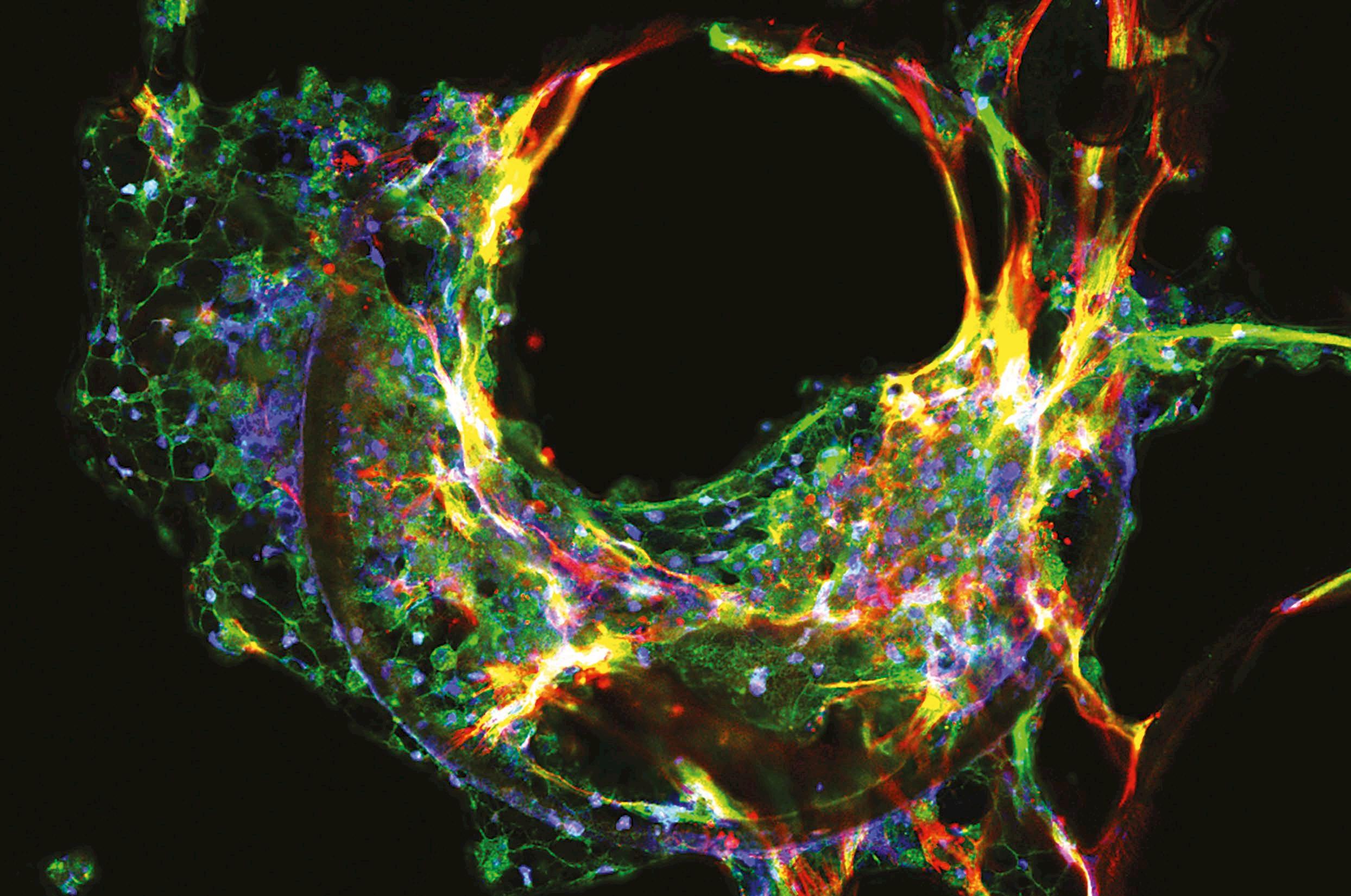

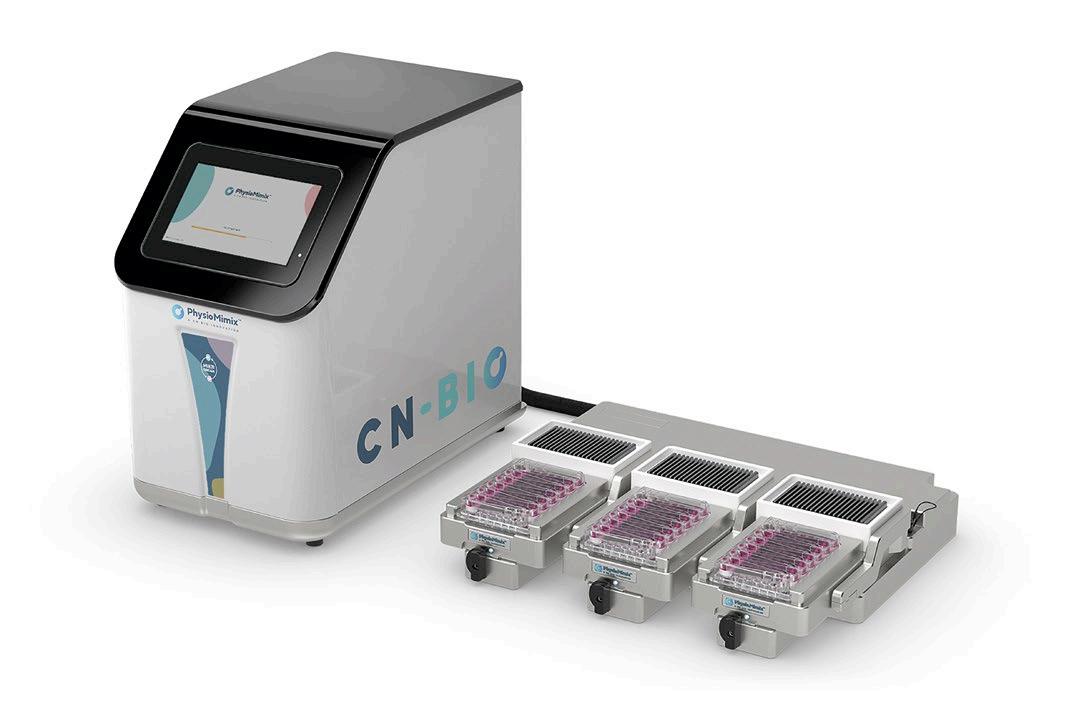

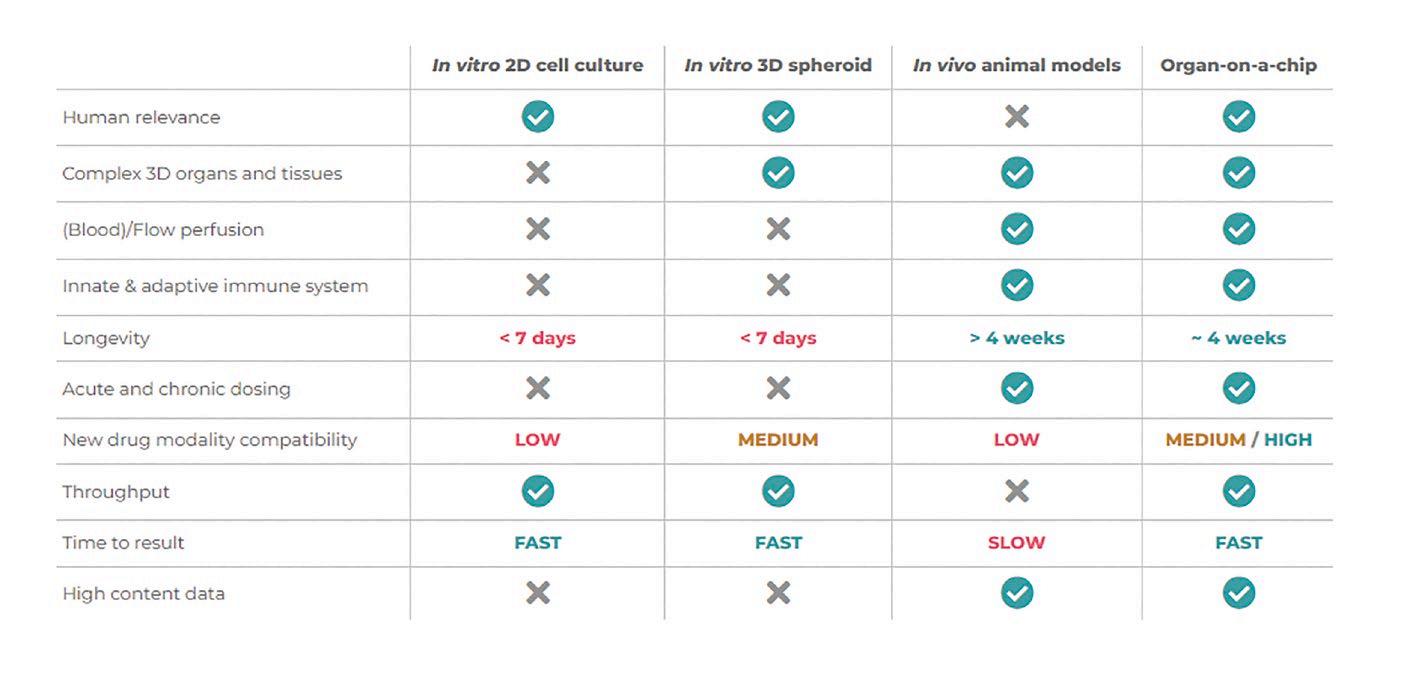

A fascinatingly futuristic development called organ-on-a-chip is explored in Next Generation Drug Discovery on page 38. This clever product acts as a bridge between human and animal models in the lab.

In addition, the way that 3D printed medical implants might avoid infection or rejection is explored in more detail in Topography And Medical Device Effectiveness on page 14.

As a scientific journal that aims to reflect the concerns of the wider industry, it would be remiss not to include a piece or two on waste reduction, and our articles Improving Lab Sustainability and Walking Off Waste on pages 16 and 18 aim to help improve your green credentials.

Feel free to contact me regarding an article you might want to contribute to Eurolab or any subject you would like to see covered. Either way I'd love to hear from you!

Nicola Brittain, Editor

COVER STORY

38 Next Generation Drug Discovery

Organ-on-a-chip technology helps bridge the gap between animal and human models

SPECTROSCOPY

9 Through The Looking Glass

A new company aims to simplify the relationship between AI and developers

& LAB EQUIPMENT

10 Decoding Data Meaning

Health science companies are making better use of data than those in other sectors, but why?

14 Topography And Medical Device Effectiveness

An in-depth look at the way 3D printing is driving innovation in life sciences

16 Improving Lab Sustainability

Tips on reducing plastic use in laboratories

18 Walking Off Waste

How to make the most of your sustainability walks

21 Fluid Dispensing Explained

How dispensing techniques can lead to better process control in your facility

22 BEXS Vs EDS

Exploring the differences between BEX imaging and EDS mapping

How microplate readers can help accelerate drug discovery and development

How to break the vector characterisation bottleneck

CHROMATOGRAPHY

Boosting Narrow Bore Column Performance

Why sample introduction optimisation will boost narrow bore column performance

The Magic Number

How to better understand a compound's physical structure

PUBLISHER

Jerry Ramsdale

EDITOR

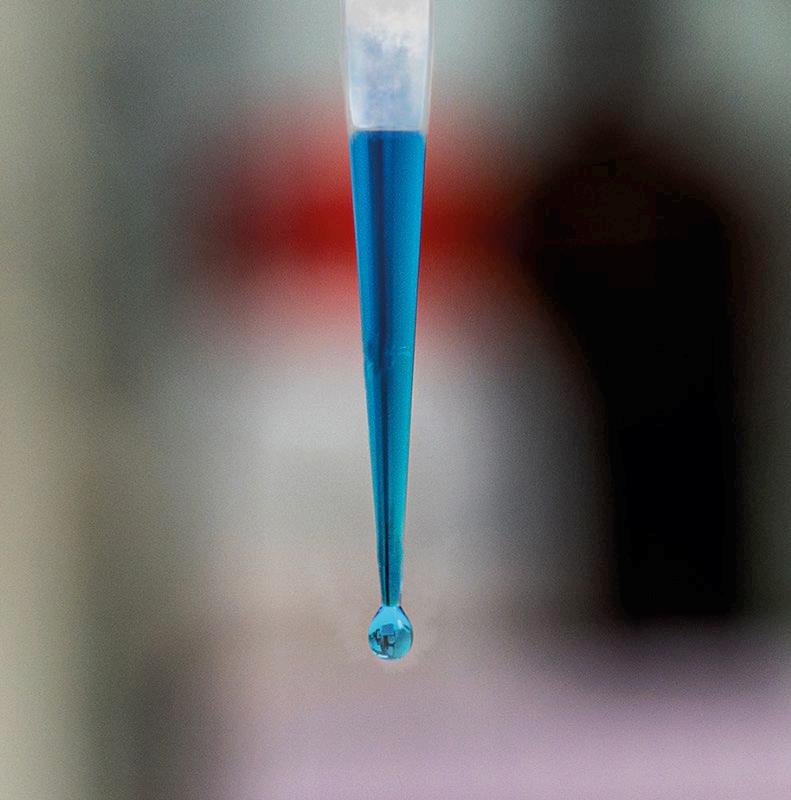

Tips For Pipetting

Nicola Brittain nbrittain@setform.com

DESIGN

Stephanie Taylor, Jill Harris

GROUP HEAD OF MARKETING

Shona Hayes shayes@setform.com

HEAD OF PRODUCTION

Christine Flaxman +44 (0)207 062 2573

BUSINESS MANAGER

John Abey +44 (0)207 062 2559

SALES MANAGER

Darren Ringer +44 (0)207 062 2566

ADVERTISEMENT EXECUTIVES

John Davis, Peter King, Iain Fletcher, Paul Maher

Setform Limited, 6, Brownlow Mews, London, WC1N 2LD, United Kingdom

+44 (0)207 253 2545

Compound Semiconductor analysis

This article visualises doping and topographic variation

Turning to tunable lasers

Why technological advances have led to research labs using tunable lasers

Give GMO A Chance

How new legislation might increase cultivation of GMO in Europe

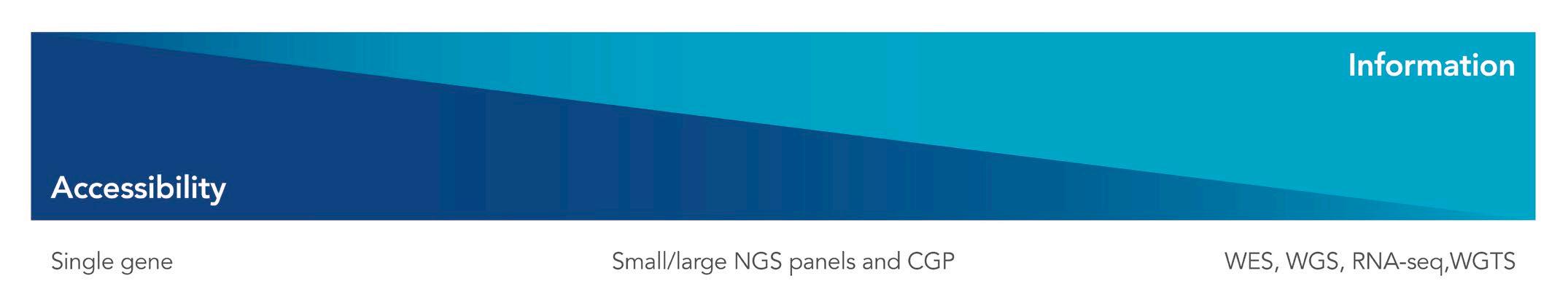

Revolutionising Precision Medicine

An overview of advances in oncology research

Cellular Protein Synthesis

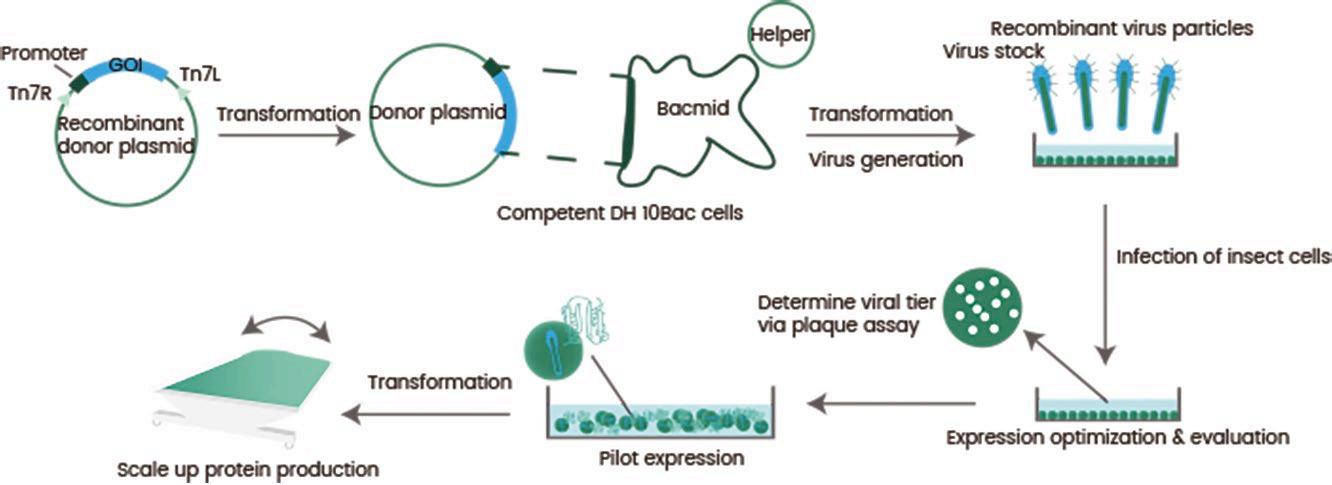

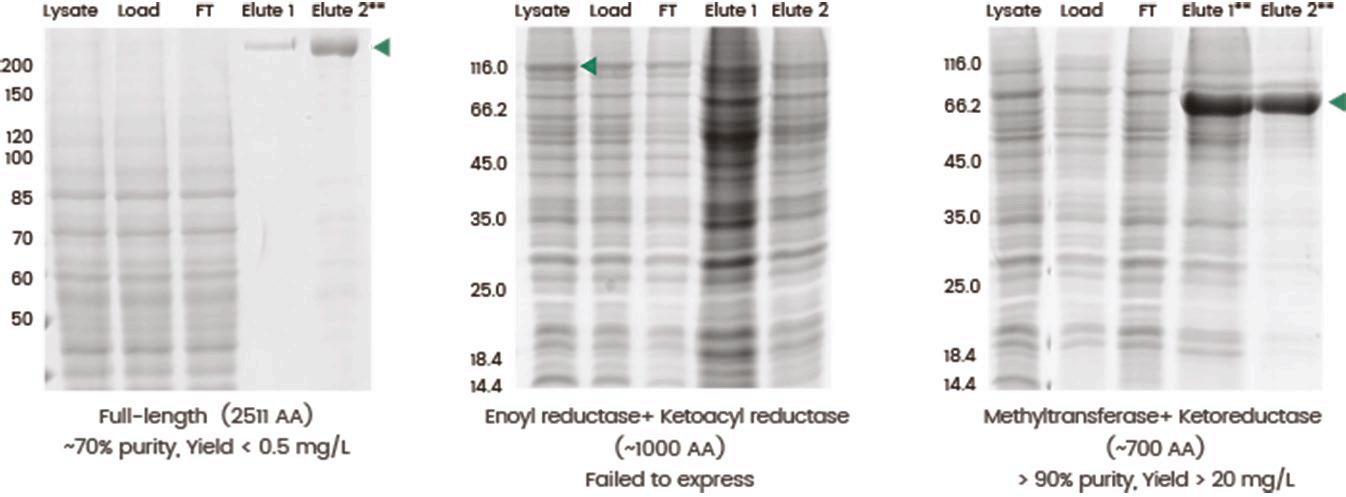

Exploring recombinant protein expression using a baculovirus insect cell system

How to achieve error-free pipetting for accurate results

AND LAB CONSUMABLES

The Role Of Relative Humidity

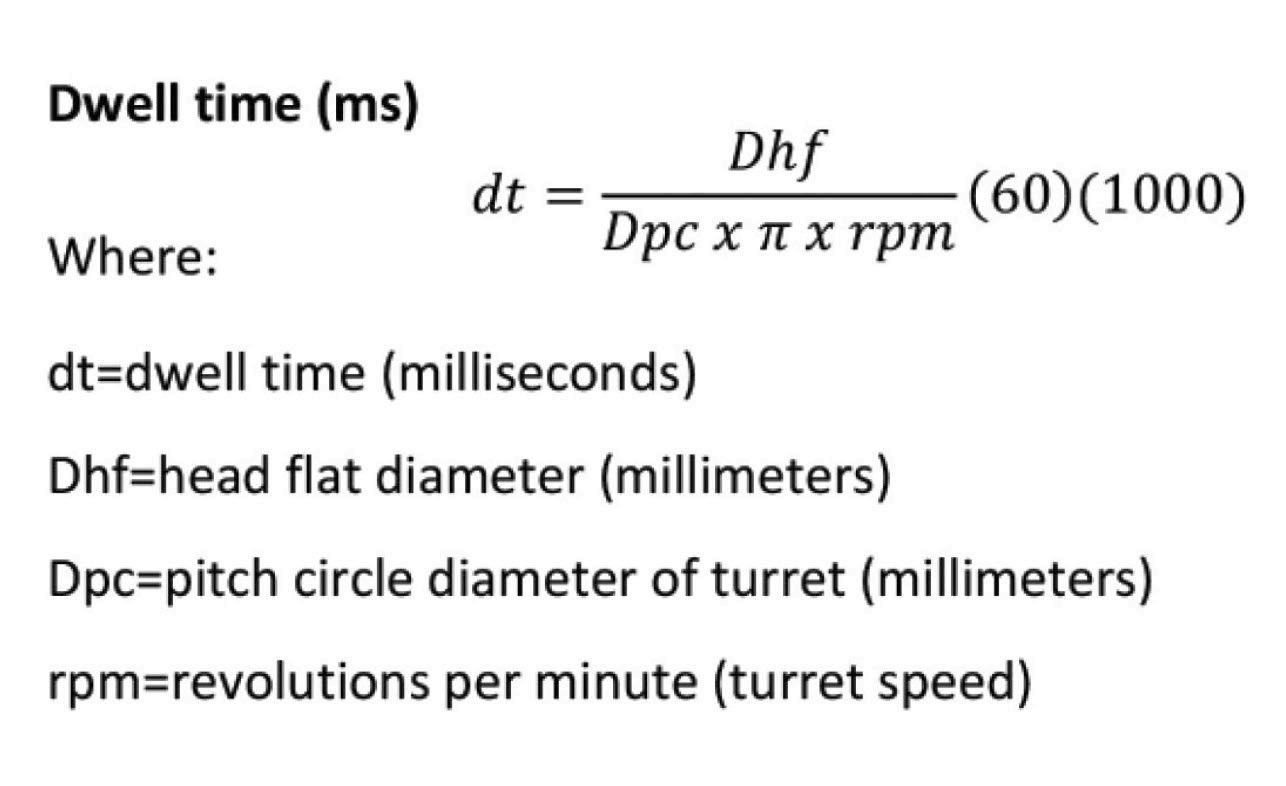

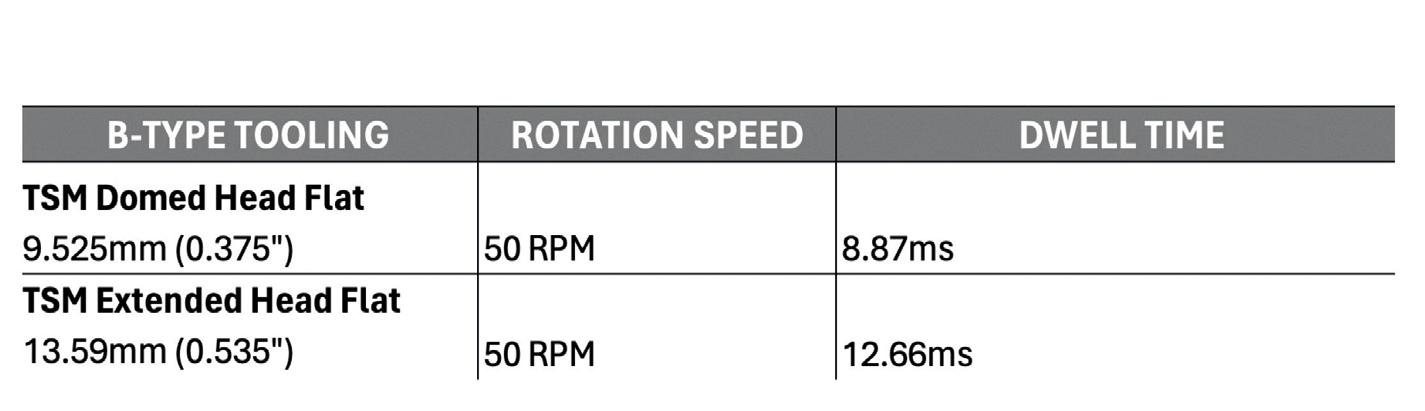

Why controlled humidity is essential for reliable research outcomes

52 Pressing Power

A guide to understanding how head dwell time can impact tablet compression

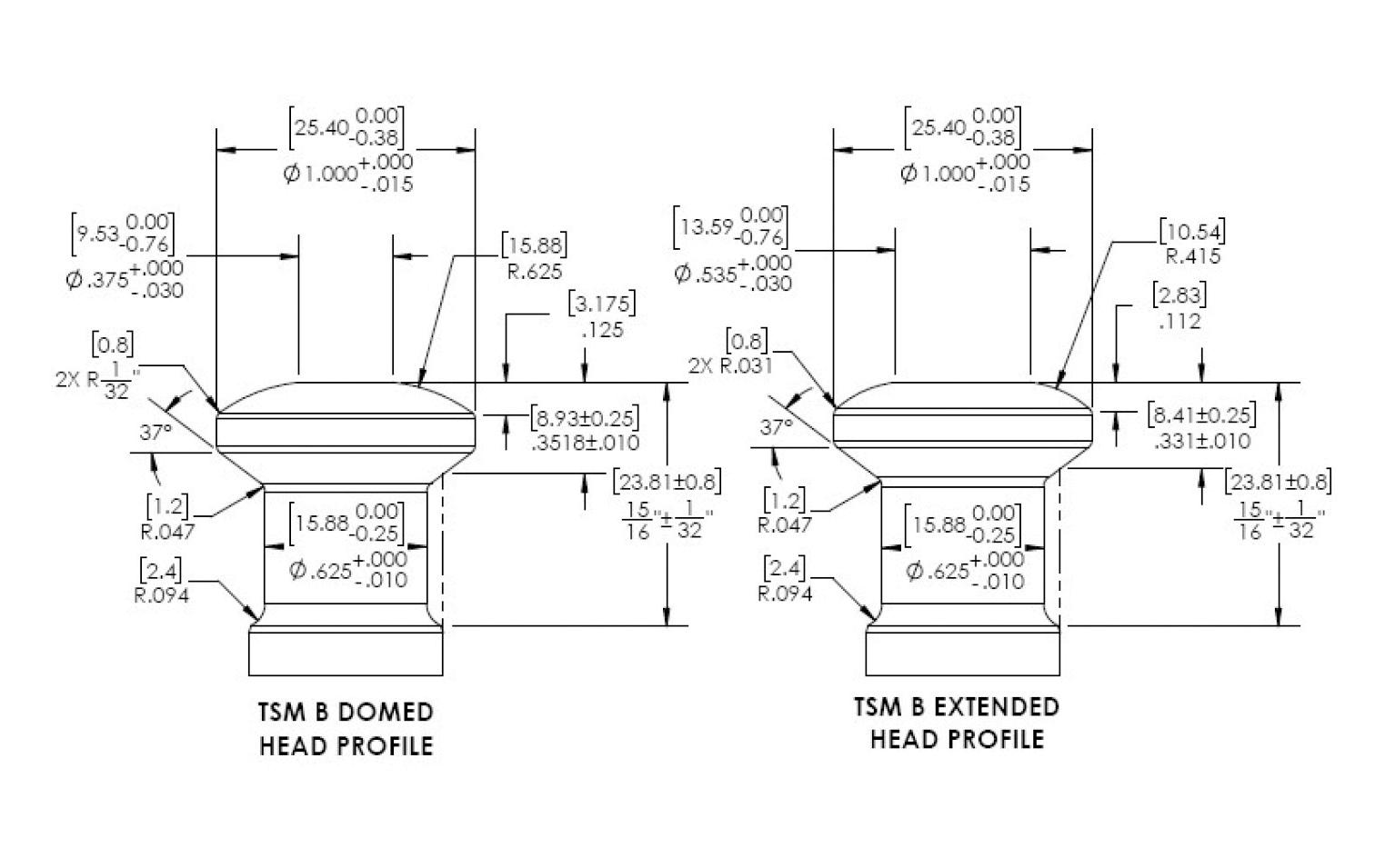

Dry Granulation

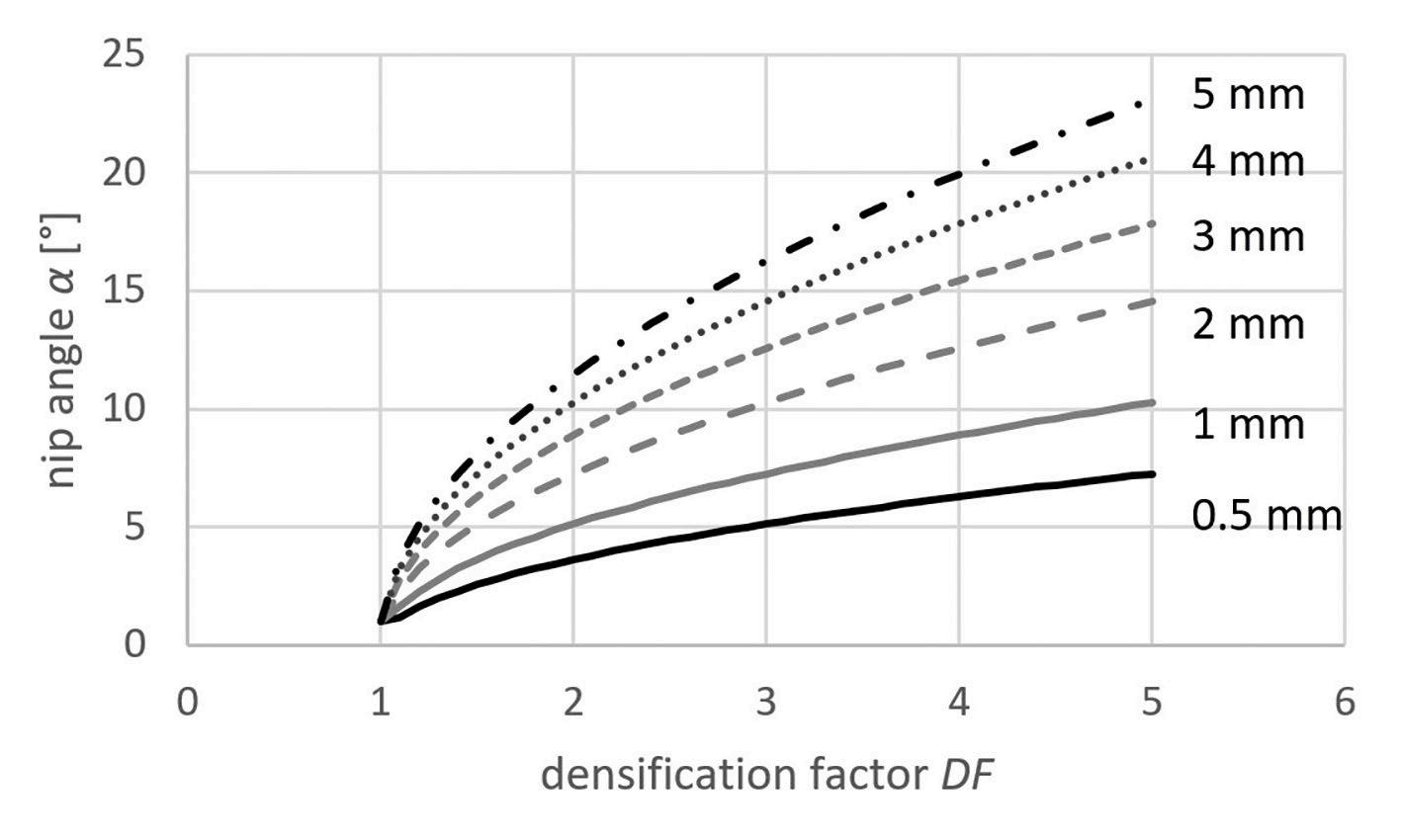

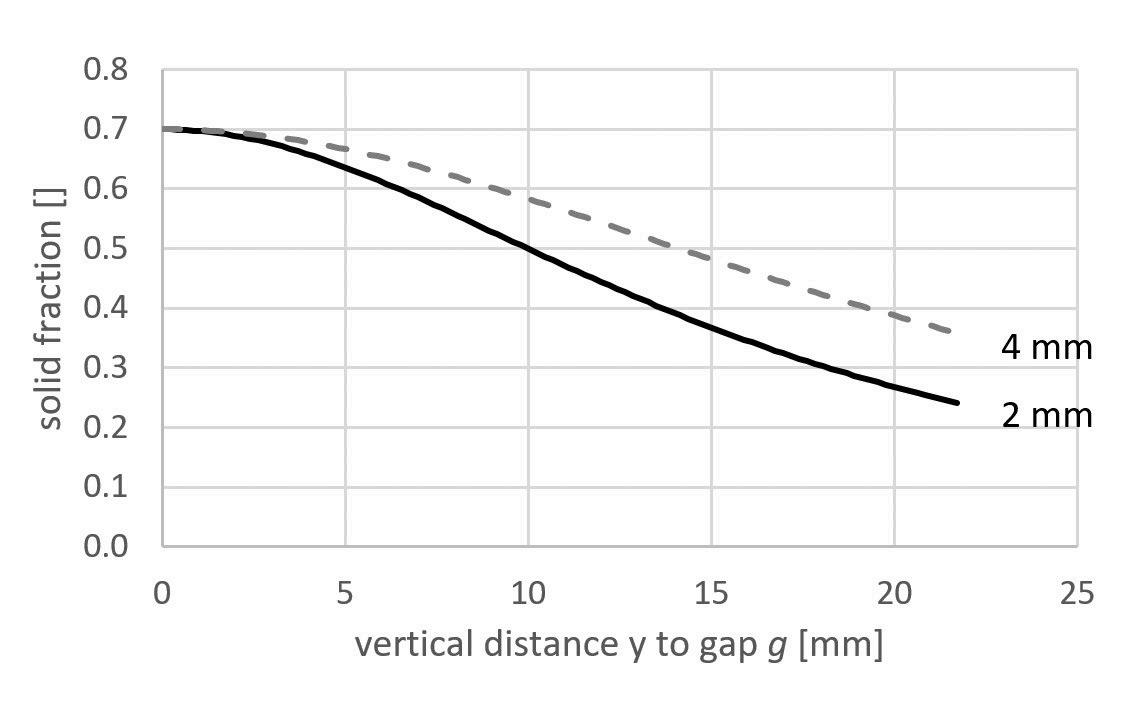

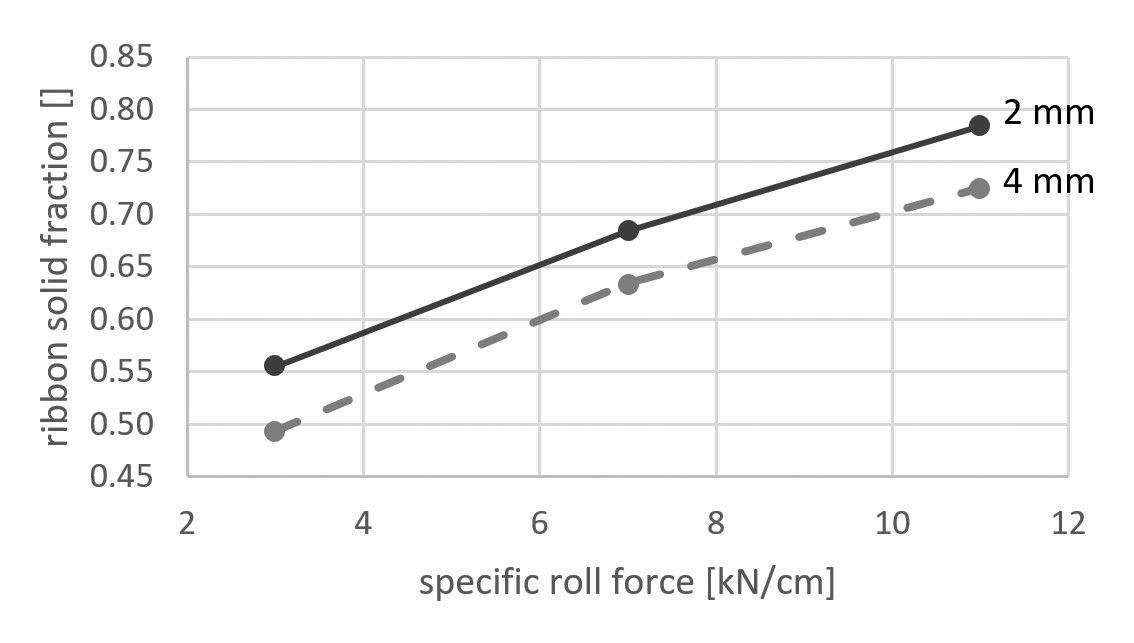

How to better understand the nip angle

Setform’s international magazine for scientists is published twice annually and distributed to senior professionals throughout the world. Other titles in the company portfolio focus on Process, Design, Transport, Oil & Gas, Energy and Mining engineering.

The publishers do not sponsor or otherwise support any substance or service advertised or mentioned in this book; nor is the publisher responsible for the accuracy of any statement in this publication. ©2024. The entire content of this publication is protected by copyright, full details of which are available from the publishers. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, or transmitted in any form or by any means, electronic, mechanical, photocopying, recording or otherwise, without the prior permission of the copyright owner.

Lab Innovations

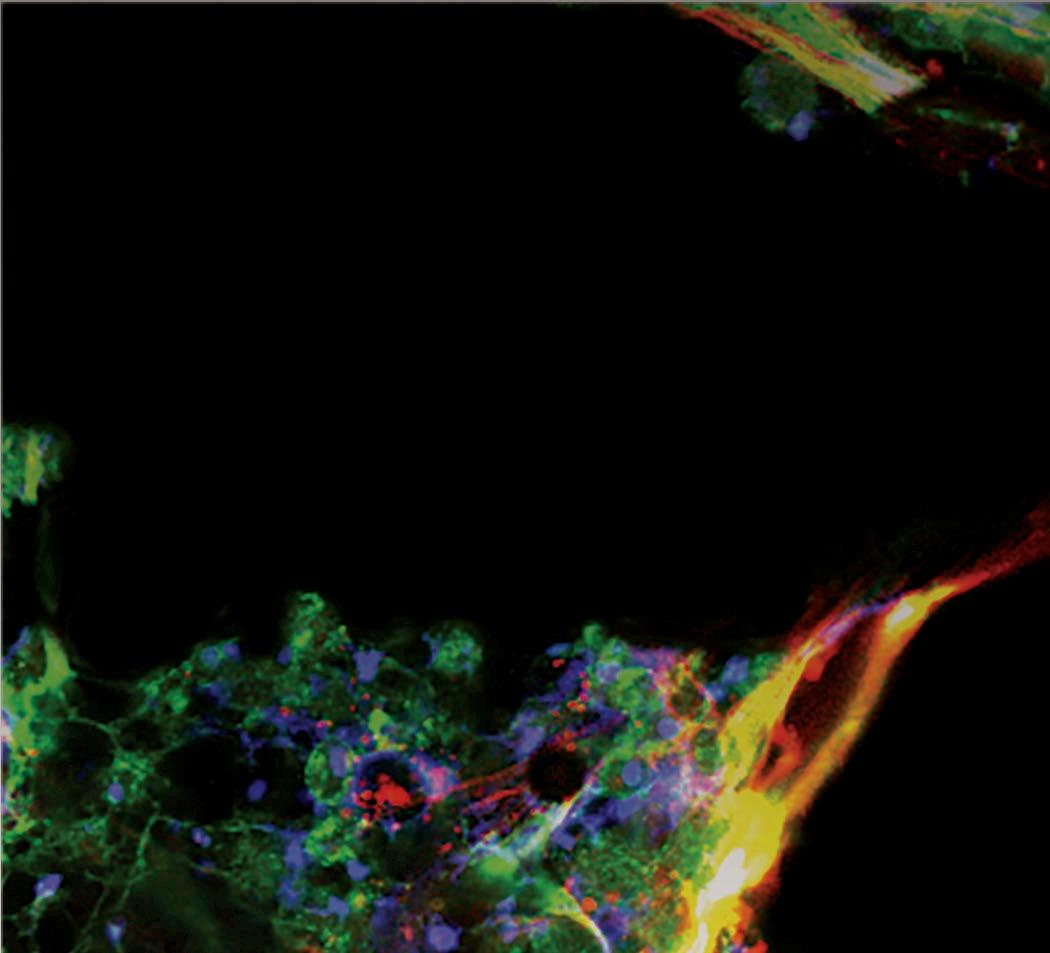

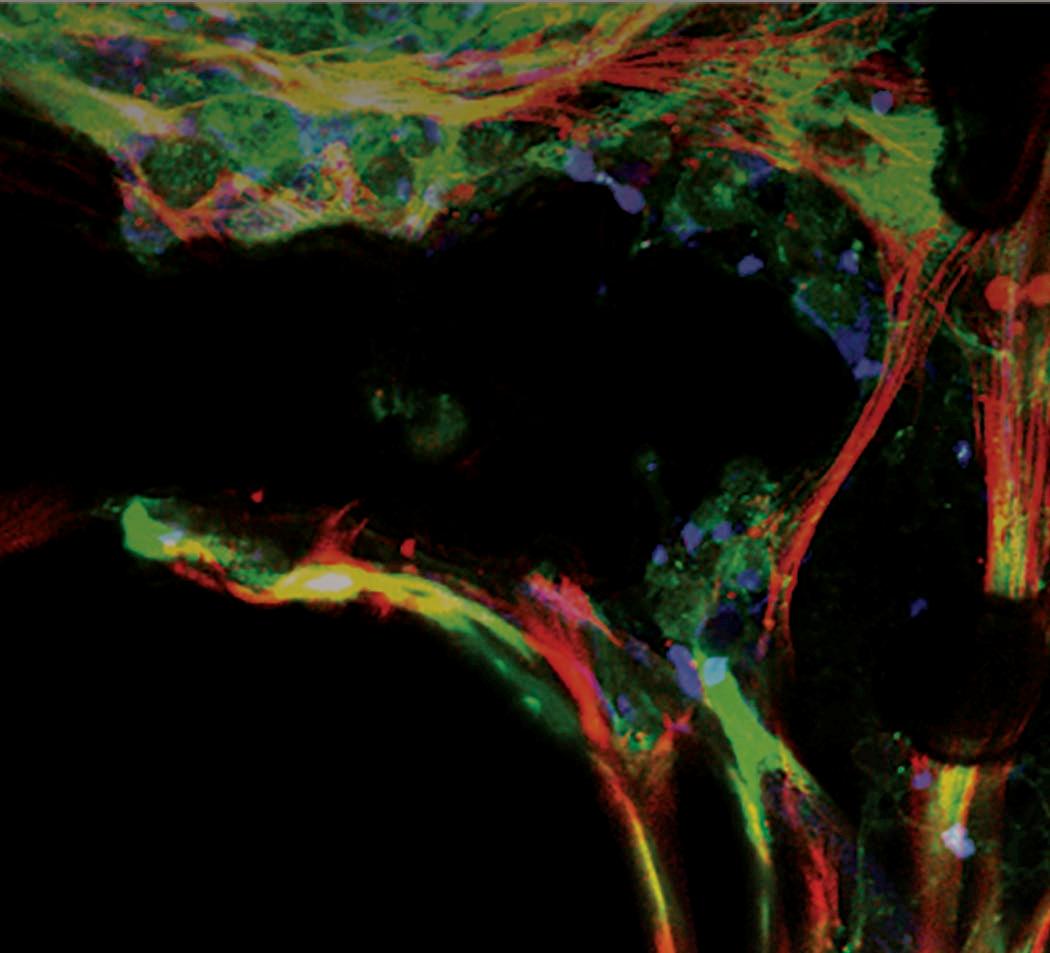

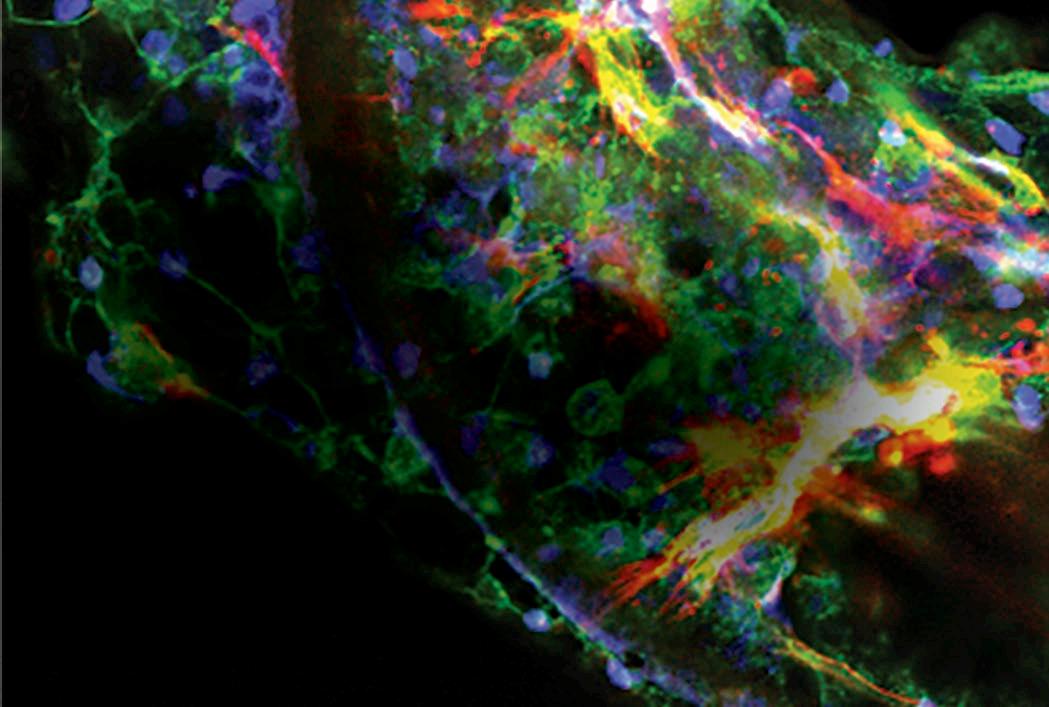

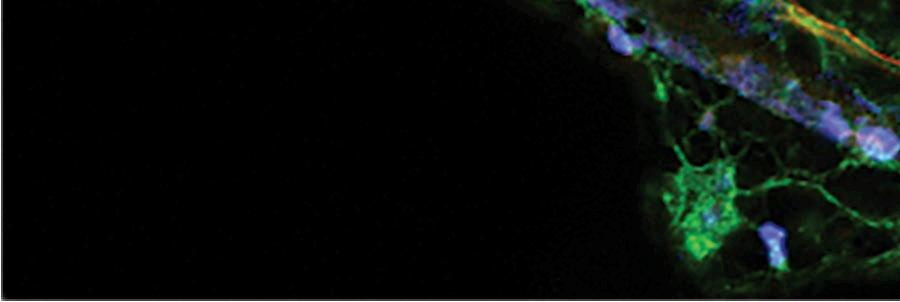

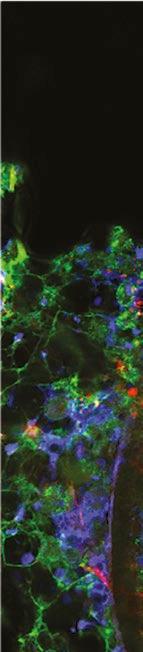

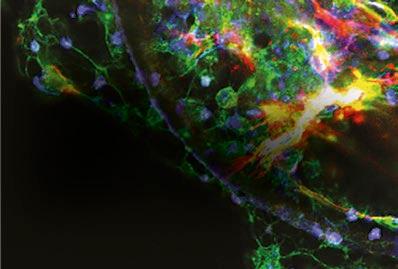

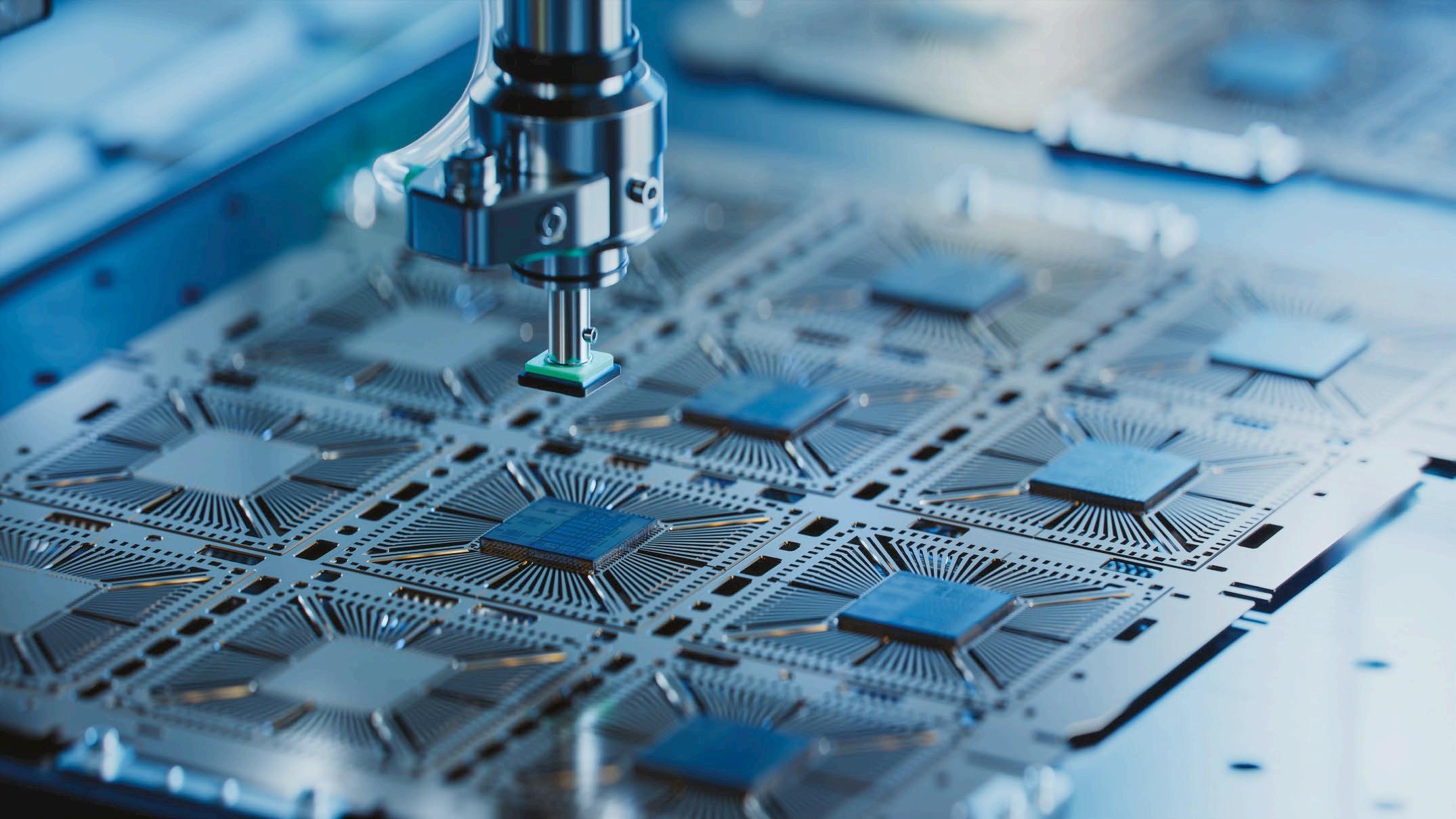

Organ-on-a-chip technology will bridge the

Your roundup of world news in the science industry

Biotechnology company Preci has partnered with Biopredic International, a company specialising in the design and manufacture of human and animal in vitro assay systems, to collaborate on the production of pooled suspension human hepatocytes.

Under a license agreement, Biopredic will leverage Preci’s expertise and production capacity in sourcing primary hepatocytes, and combine this with its own IP and know-how in cell pooling.

The partnership will provide drug metabolism and pharmacokinetics researchers with access to large batches of high-performing suspended pooled hepatocytes with extended longevity from multiple donors.

Pooled suspension human hepatocytes play a crucial role in assessing drug metabolism and hepatic clearance, providing a more representative understanding of human hepatic metabolism for predicting drug outcomes and assessing their impact on safety and efficacy.

Despite this, the limited culture lifespan of pooled hepatocytes hinders long-term studies, potentially reducing metabolic competence with repeated use of the same batch.

Anton Hanopolskyi, CEO, Preci, said: “At Preci, we believe that increased diversity and availability of representative cell models will revolutionize the drug discovery landscape. This partnership strengthens our position in the human-derived assays market, providing our customers with access to high quality, reproducible assays, and we look forward to continuing to work with Biopredic to advance our translational models for use in drug metabolism and pharmacokinetics studies.”

Leading life-sciences company Mission Bio has introduced sample multiplexing features for its Tapestri Platform. These features enable the combination of several samples into a single run, reducing the per-sample costs for single-cell DNA and protein multiomic analysis by up to 60%, according to the company. By allowing researchers to process multiple samples simultaneously, the new features have been designed to offer biopharma companies opportunities for optimising product characterisation and drug development, and to provide academic researchers with the ability to scale critical single-cell insights, particularly in the oncology and genome editing fields. This could lead to transformative advancements in health and disease management.

“At Mission Bio, our goal is to empower more researchers to harness the advantages of single-cell multiomics to accelerate scientific discoveries, from academics using genome editing techniques for disease modeling to biopharma in the cell and gene therapy space,” said Anjali Pradhan, chief product officer, Mission Bio.

Artificial intelligence innovator FUSE-AI has received EU Medical Device Regulation (MDR) 2017/745 IIa certification for its AI software ‘Prostate.Carcinoma.ai’. As of January this year, the company was authorised to distribute its software as a medical product.

The product aids radiologists by automatically segmenting the prostate gland in MRI scans and independently identifying pathological changes, promising a 30% time saving per patient. This efficiency translates into considerable financial benefits for radiological clinics and practices, fuelling strong interest in the deployment of the AI algorithm.

“This AI software is a testament to the interdisciplinary collaboration among scientists, AI developers, and radiologists. Receiving certification is a pivotal step, transitioning preliminary agreements into binding contracts and fully leveraging the software’s capabilities in clinical settings. This milestone substantially lowers investment risks into our company,” stated Matthias Steffen, Founder and CEO of FUSE-AI.

This favourable development for ‘Prostate.Carcinoma.ai’ coincides with a prediction that the global market for medical image analysis software will reach a value of $4.545 billion by the end of FY 2023, with a Compound Annual Growth Rate (CAGR) of 9.9%, according to Reportlinker.com.

London-based genomics company Broken String Biosciences has entered a research collaboration with biomedical discovery institute the Francis Crick Institute to develop novel applications for Broken String’s proprietary DNA break-mapping platform, INDUCE-seq, beyond its established capabilities in gene editing. The research will be focused on leveraging the technology to investigate the impact of genomic instability in the development of amyotrophic lateral sclerosis (ALS). ALS is a progressive and debilitating neurodegenerative disease, causing gradual loss of the ability to control voluntary movements and basic bodily functions.

The collaboration is focused on understanding the contribution of genome stability to ALS, combining the interests of Prof Simon Boulton and Dr Nishita Parnandi at the Crick Institute, who are focused on genome stability and DNA double-strand breaks (DSBs), with those of Prof Rickie Patani and Dr Giulia Tyzack, who are interested in understanding the underlying mechanism of the disease. Recognising the utility of the novel INDUCE-seq platform developed by Broken String’s R&D department, the teams aim to collaborate to demonstrate and further validate the INDUCE-seq technology in this setting.

The majority of ALS cases (90%) are considered sporadic. While there has been progress to better understand the genes and biological markers associated with the disease, very little is understood about the causes, with current treatment strategies focused on symptom management and slowing disease progression. Combining world-leading research from the Crick with Broken String’s expertise in genomics, sequencing, and bioinformatics, the partnership provides a unique opportunity to expand application of the Company’s INDUCE-seq technology in a key area of clinical unmet need, to support improved diagnosis and treatment of ALS.

The partnership has been secured via the Francis Crick Institute’s Business Engagement Fund, a new initiative supported by The Medical Research Council (MRC-UKRI), designed to encourage collaborations with small-to-medium-sized enterprises (SMEs) and strengthen the Crick’s engagement with industry.

Leading provider of premium vector technology and services for the production of biologics ProteoNic Biosciences has launched its LV-2G UNic Early Access Program. This program marks a significant milestone in lentivirus manufacturing optimisation, according to the company, offering access to groundbreaking vector technology designed to revolutionise viral vector production.

The product is aimed at CDMOs, biotechs, and biopharmaceutical companies who would benefit from integrating this cutting-edge vector technology into their existing systems, paving the way for increased viral vector production capacity and substantial improvements in manufacturing cost efficiency.

Frank Pieper, CEO of ProteoNic, said of the launch: “The LV-2G UNic Early Access Program will serve as a launching platform for our viral vector manufacturing innovation. By offering early access to our state-of-the-art vector technology, we empower researchers to unlock unprecedented levels of efficiency and productivity in viral vector manufacturing.”

DeepMirror’s bespoke software allows users to tap into AI-driven insights

A new company aims to simplify the relationship between AI and drug developers in a bid to reduce complexity and cost, Nicola Brittain reports

Drug discovery is a lengthy and costly process, with pre-clinical development alone costing an average of £35-55m per drug and taking eight years to bring to market (Wellcome Trust, 2023). Drugs take this long to develop because chemists must painstakingly tweak molecules to increase their efficacy; AI promises to help automate this process. Although AI in this realm hasn’t yet lived up to its initial promise to map the entire human body and its likely responses to pharmaceuticals, predictions about which tweaks are likely to work better than others can reduce overall costs significantly. There are a number of interesting developments in this field, not least a new company called DeepMirror.

Set up as a spin-off from the University of Cambridge, this team of experts in physics, biology, and AI recently launched its Early Access Programme after a successful closed beta programme during which chemists were invited to test the software over several months. The company was set up to develop intuitive design software for the discovery of novel therapeutic drugs. Its bespoke software allows users to tap into AI-driven insights to improve and accelerate molecular design across the drug discovery pipeline through a secure and user-friendly interface, which makes AI-powered drug discovery as ‘simple as using a spreadsheet’, according to the company. Users are provided with access to shortcuts found by other pharmaceutical companies during the drug discovery process.

AI-enabled drug discovery programmes often start with pharmaceutical companies partnering with AI companies to deliver insights for their drug discovery efforts. However, this approach requires extensive crosstalk between the two parties, resulting in long waiting times and considerable resources spent on both sides.

DeepMirror has developed a programme that aims to solve this issue by enabling research and development teams to carry out AI-driven research with workflow integration and without the need to engage external stakeholders, develop internal teams and software, or relinquish any intellectual property.

In drug development, predicting the properties of drugs (small molecules) before testing them in the laboratory is crucial to reducing the time and resources required to bring safe and effective new drugs to patients. Two main types of property prediction are crucial: first, properties that describe how ‘drug-like’ a molecule is, such as its absorption, its distribution in the body, how it gets removed from the body and how toxic it is; and second, properties that describe how good a drug is at binding to its target and exerting an effect against a disease (affinity, potency). DeepMirror tested 184 AI approaches against 44 public datasets and the research highlighted the need for different AI approaches for different datasets. For example, traditional methods perform better for small datasets and datasets with affinity measurements, whereas modern AI methods (such as deep learning) perform better on larger datasets and some drug-like property datasets.

The team also found that selecting the right featuriser was dataset dependent. Featurisers are methods that turn molecular structures into a numerical format that computers can understand. Expert features (properties derived by cheminformaticians) worked best for affinity property datasets, whereas molecular descriptors (chemical properties of a molecule) and Natural Language Processing features (derived from letter sequences such as a molecule’s SMILES string) worked best for drug-like property datasets.
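To make the idea of a featuriser concrete, here is a minimal sketch in Python. It is not DeepMirror's method: the vocabulary and the `featurise` function are hypothetical, and the approach (counting characters in a SMILES string) is far cruder than the expert features or descriptors described above. It only illustrates the step of turning a molecule's text representation into a fixed-length list of numbers.

```python
from collections import Counter

# Hypothetical vocabulary of SMILES characters, chosen for illustration only.
VOCAB = ["C", "N", "O", "c", "n", "=", "#", "(", ")", "1", "2"]


def featurise(smiles: str) -> list[int]:
    """Turn a SMILES string into a fixed-length vector of character counts."""
    counts = Counter(smiles)
    # Characters outside VOCAB are ignored; missing characters count as zero.
    return [counts[ch] for ch in VOCAB]


ethanol = featurise("CCO")        # two carbons and one oxygen
benzene = featurise("c1ccccc1")   # six aromatic carbons and one ring closure
print(ethanol)
print(benzene)
```

A character count like this cannot tell chlorine ("Cl") from carbon followed by another symbol, which is one reason real projects typically rely on a cheminformatics toolkit rather than raw string handling.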

All in all, the company did not find a single model “to rule them all” in small molecule drug property prediction. By benchmarking a wide range of AI methods across various datasets, DeepMirror has developed a platform that can intelligently adapt to the specific needs of each dataset.

This adaptability is critical in a field as diverse and complex as drug discovery.
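The principle of choosing a model per dataset, rather than one model for everything, can be sketched in a few lines of Python. Everything in this example is an assumption made for illustration: the dataset names, the model names and the scores are invented placeholders, not DeepMirror's benchmark results, and the selection rule (take the highest score) is the simplest one possible.

```python
# Placeholder scores (higher is better), invented purely for illustration.
BENCHMARK = {
    "small_affinity_set": {"random_forest": 0.71, "deep_network": 0.64},
    "large_druglike_set": {"random_forest": 0.68, "deep_network": 0.77},
}


def best_model_per_dataset(benchmark: dict) -> dict:
    """Return the name of the top-scoring model for each dataset."""
    return {
        dataset: max(scores, key=scores.get)
        for dataset, scores in benchmark.items()
    }


selection = best_model_per_dataset(BENCHMARK)
for dataset, model in selection.items():
    print(f"{dataset}: {model}")
```

With these placeholder numbers, a different model is picked for each dataset, which is the behaviour the benchmarking exercise above argues for.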

The company’s technology to fast-track the drug discovery process, for example in the hit-to-lead and lead optimisation phases, can predict relevant properties such as drug binding, (bio-)activity, and toxicity, both from user data and from large proprietary curated databases. Laboratory results can be used to refine predictions and generate novel drug candidates for further experimentation, ultimately accelerating the drug discovery process by up to four times as estimated by the Wellcome Trust and the Boston Consulting Group.

Dr Max Jakobs, co-founder and CEO of DeepMirror, said: “Our mission is to make AI-powered drug design as simple as browsing the web. After 12 months of development and a successful beta-testing programme, we are excited to officially launch DeepMirror to early adopters. We are inviting researchers to get in touch to use our secure and user-friendly AI platform for drug design. DeepMirror has already been used on active drug discovery programmes, resulting in the discovery of novel lead series and inspiring the synthesis of new compounds.”

Dr Andrew McTeague, senior scientist, Medicinal Chemistry, Morphic Therapeutic, said: “DeepMirror is a huge step forward in the democratisation of machine learning models. Its user-friendly interface enables medicinal chemists of all levels to deploy this powerful approach in a fraction of the time. Being able to apply DeepMirror’s platform to any desired endpoint, empowers users to make more informed decisions and to do so faster. We’re always looking for new tools to improve the efficiency of our DMTA cycles and DeepMirror helps ensure that no stone is left unturned.”

Health science companies are making better use of data than those in other sectors. But why, and what benefit does this afford them?

By Nicola Brittain

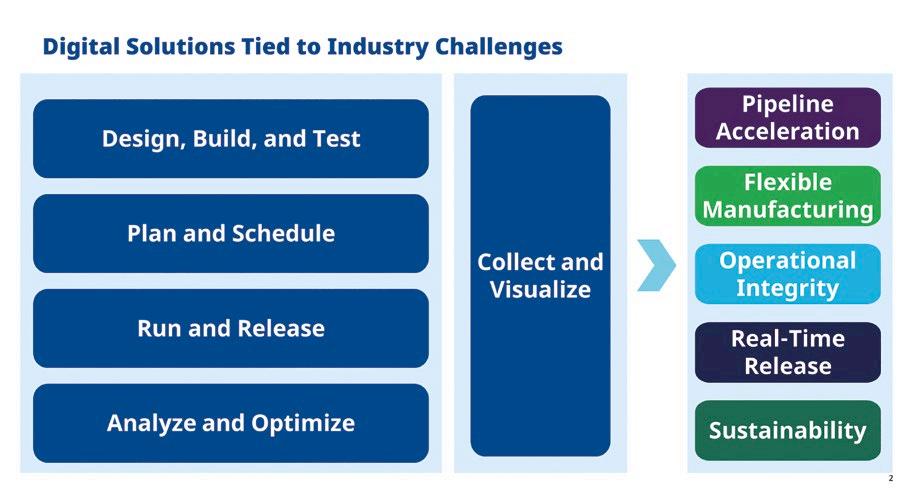

The IT and OT layers of an organisation have traditionally had very different remits and been run quite separately. However, with automation and virtualisation now ubiquitous, they are no longer distinct. When it comes to merging these layers to make use of different data sets across the business, life-science companies are leading the charge, according to Peter Zornio, CTO of technology manufacturer Emerson, who was speaking at the recent Emerson Exchange conference in Dusseldorf. The merging of these technology stacks, and the resulting ‘bubbling up’ and sharing of previously siloed data, is leading to improved product time to market, enhanced sustainability, and reduced downtime.

Before we look at why this is happening, it is important to define the types of technology stack to which we are referring. OT (short for operational technology) systems tend to be those closer to the plant level and shop floor. They require user input, as well as data and information from the specific location. They tend to deal with information created in site-specific databases, or related to plant-specific operations. Reliability and availability are the most important concerns for OT systems, and OT information has tended not to be shared across a business. IT (short for information technology) systems, by contrast, tend to work across sites, do not require user input and are more focused on security and consistency. Their remit might include internet, cloud, SaaS or CRM services as well as monitoring and information reporting.

Michalle Adkins, director of Life Sciences Strategy, Emerson

Historically, the two stacks were distinct and often at loggerheads (with different IT staff in charge), but times have changed and companies need a more holistic view of their data to be able to make the right decisions at the right time. Michalle Adkins, director of life science consulting at Emerson, said of recent developments: “Being able to merge and move data and information from both these stacks has become essential for the smooth running of labs.” She continued: “Pulling data out of bespoke or specific on-site machines can now be given context and be useful to a wider business. This bubbling up of data – or giving it contextual meaning – can be very useful in a number of scenarios.”

Giving data context can lead to the sharing of operational efficiencies found in one site; sharing of capacity if one site is experiencing downtime; spreading research responsibilities if a product needs to be delivered quickly; and the reduction of repeat experiments in different parts of a business. Similarly, research work can be easily learnt from, which is particularly important in a large company with international sites. Finally, and importantly, batch issues can be traced back to their root, providing a better understanding of production problems.

As Michalle explained there are several key drivers for this development. These include getting new products to market quickly; a desire for pipeline acceleration; or the need to manufacture multiple products at a given process development facility to bring a new product to market quickly. Operational integrity and the need to be able to reliably deliver products on time, in full, and meeting

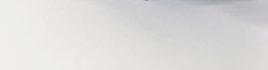

Sustainable Laboratory Solutions – No Compromise on Quality

Fastrak® and FastZAP™ pipette tip refill systems – reduce – reuse – recycle.

› Lighter, smaller, and minimal packaging

› Made from 95% renewable materials

› Printed with vegetable inks

› Components made form bio-based resin

› Manufactured using 61% renewable energy

› Fast, easy refilling of re-usable racks

Proudly displaying the ACT Verification eco label.

Guaranteeing sustainability accountability, consistency, and transparency. Each label features manufacturing, energy use, packaging, and lifetime rating.

› High-grade resin tips for precision and flexibility.

For more information or to place an order please visit: www.alphalabs.co.uk/fastrak

Our specialised Pharmaceutical Manufacturing Industry Collection has been developed to offer a range of products that enable the safe use of powders, chemicals and biohazard materials linked to the research and production of pharmaceuticals. In an evolving market, we help our clients maintain optimal standards whilst providing the best protection for your personnel and applications.

a pre-agreed schedule, can now be almost guaranteed because so many processes are automated. Similarly, equipment issues can be spotted and ironed out early in the process. Releasing products as soon as possible is helped by collating access from all the data across the systems involved. Similarly, sustainability has become increasingly important and being able to move data and information prevents repeat experiments and will improve efficiency. Being able to pinpoint and resolve bottle-necks will also lead to a smoother running facility.

Although Michalle wasn’t able to say for sure why health science companies are more advanced than other manufacturing sectors in terms of managing and making different types of data available to the wider business (merging their IT and OT systems), she was willing to hazard a guess that it is because there are more siloed parts of a bio-sciences organisation than of outfits in other sectors. These will likely include a research facility, a process development facility, a clinical manufacturing arm, and a commercial manufacturing or a contract manufacturing facility (CDMO). Companies also often work with external partners when developing and manufacturing new products. Similarly, it is probably the case that new products are coming on line in health science companies more often than in some other sectors, and these require nimbleness and agility as well as a concerted push to get products to market. This sharing of data is probably more necessary in international companies than in smaller ones, and many health science companies work at scale. Although companies in the oil and gas sector, for example, are also huge, they deliver the same product consistently. Similarly, the manufacture of parts in the process industry may not be subject to the same speed of change as those in the health sciences sector, although this is probably not the case for car components or computer chips, which are likely to be subject to similar time-to-market pressures.

❝ Being able to move and merge data and information from the IT and OT stacks has become essential for sustainability, efficiency and the smooth running of labs.

DeltaV workstations run on a specific set of preselected Dell computer hardware chosen to provide the best cost performance solution

Emerson provides a number of products that can help health companies manage their data. These include the DeltaV Automation System, which ‘helps eliminate complexity and project risk by offering contextualised data,’ according to the company. In addition, Emerson has developed other related technology for the health sciences sector, including the DeltaV Spectral Process Analytic Technology (PAT), a distributed process control system that uses spectral analysers to measure reflected light frequencies from on-line product samples.

When asked which companies Emerson works with in the health sciences space, an apposite answer might be ‘who doesn’t it work with?’ Some 29 of the top 30 health science companies use Emerson technology and these include Thermo Fisher, a company that has spoken about its advances in gene therapy as a result of DeltaV automation; and FujiFilm, a CDMO that increases speed to market using a cloning-across-sites system built on utilising Drug Substance Manufacturing (DSM) modules. These modules rely on Emerson’s DeltaV automation system and two DSM modules that share an Emerson Syncade manufacturing execution system (MES). Other customers include Bayer and Novo Nordisk.

In 2021, Emerson also acquired a 55% stake in Aspentech in a bid to accelerate its industrial software strategy. Aspentech currently provides a range of data-focused applications including twin technology as well as process automation simulation and other tools to help health science companies deliver competitive advantage and cost savings.

For more information visit https://www.emerson.com/

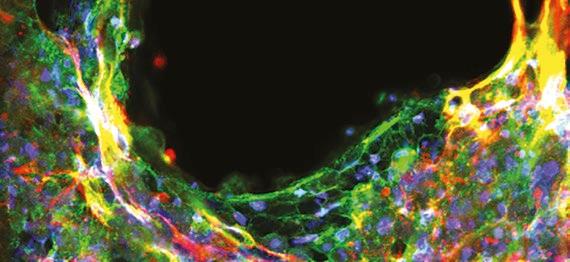

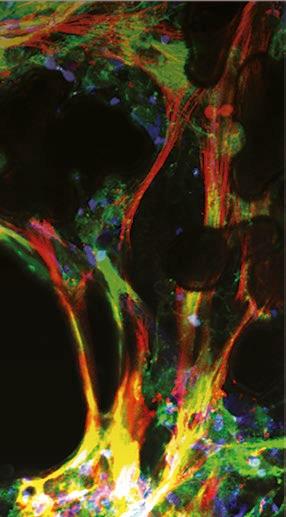

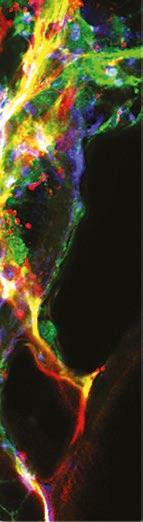

An exploration of the way 3D printing is driving innovation in healthcare and life sciences

Healthcare and life sciences are two industries where 3D printing is driving innovation, since it can print micro-precision parts that many medical devices require. Beyond medical devices, large healthcare and pharmaceutical companies are researching the ways that 3D printing can be used for next-generation drug development, like biomedicines, or personalised surgical techniques, like bone grafts.

Many projects also aim to explore the use of topography in optimising device effectiveness.

Last year, the University of Nottingham’s Centre for Additive Manufacturing selected BMF as an advisor for an EPSRC grant-funded 3D printing “Dial Up” project that focuses on “dialing up performance for on demand manufacturing,” where the multidisciplinary research group began to develop a playbook for standardising 3D printing in medtech and life sciences applications. This project runs alongside follow-up work funded by an MRC project, the “Acellular / Smart Materials – 3D Architecture: UKRMP2 hub.”

Recently, BMF’s CEO, John Kawola, was asked to serve on the advisory board for another project based in the University of Nottingham’s Biodiscovery Institute, which has long been a leader in researching new materials and medical devices, after it received a grant to focus on designing bio-instructive materials for translation ready medical devices. The goal of the EPSRC-funded “designing bio-instructive materials for translation ready medical devices” project is to address major compatibility issues of implanted medical devices.

These projects have differing goals, but have taken thematically similar approaches.

In Dial Up, BMF has taken a screening approach to understand how the identification of materials and processes might be automated, so that healthcare products can move quickly from concept to clinic. This will speed up adoption and streamline the process of making products that will help people with long-term chronic conditions, such as inflammatory bowel disease. The goal is to make an intestinal patch that will allow inflamed intestinal tissue to be regenerated in situ, but this requires BMF’s technology to deliver structures with cell-relevant features manufactured at the sizes needed.

Alongside this, researchers are exploring how BMF’s technology can be used to create microarchitectures that can control and direct cell phenotype, with the aim of scaling up manufacturing of microparticles that can direct stem cells towards bone or other desired phenotypes. Once again, researchers are seeking the sweet spot between being able to manufacture with feature sizes that cells can respond to and at a scale where commercially viable production is achievable.

Medical devices can be long-term implants or temporary aids like catheters. Using 3D parts for implants can help to facilitate healthy cell growth while preventing bacterial infections, a common issue with implants. Researchers working on 3D medical implants tend to focus on developing advanced biomaterials that resist infections. Their work aims to create surfaces that naturally discourage bacterial growth while promoting healthy tissue integration. This work not only addresses the immediate challenges of reducing implant infections but also sets a foundation for safer, more reliable medical treatments in the future.

Device rejection is a significant healthcare problem, but researchers have found that physical surface patterns, or topographies, and the materials associated are significant contributing factors in immune acceptance for implantable medical devices. In the project focusing on devices that counter foreign body response, the research team is utilising BMF’s micro-3D printing technology to scale up findings and produce manufacturing-ready devices where materials and topologies are tested with semi-automated in vitro measurements.

❝ Device rejection is a significant healthcare problem but researchers have found that physical surface patterns or topographies, and the materials associated, are significant contributing factors

In each of these projects, researchers aim to collect suites of relevant data that can be utilised by artificial intelligence, specifically machine learning, to build effective models of performance and provide mechanistic insights.

The capability of BMF’s high-resolution and micro-precision technology, plus high throughput, makes micro-3D printing ideal for this application. The end goal is to develop new devices or to find new ways to manufacture existing devices that will improve patient care and recovery.

BMF’s PµSL technology is ideal due to its high precision, and the manufacturing process allows materials to retain their bioinstructive properties all the way through the production process. These projects will build on BMF’s established work with the University of Nottingham, and it’s an exciting advancement of the partnership to propel innovation across medtech and healthcare, enabling optimised device effectiveness across industries.

The Fastrak and FastZAP pipette tip refills from Alpha Laboratories use the minimum amount of packaging possible

Plastic in laboratories has helped further research for decades. However, as governments worldwide move to create a more sustainable world, the use of plastic must be reduced.

Virgin plastic is essential to ensuring the accuracy of many clinical and scientific processes, and quality and cost have become dependent on it (1). While there have been substantial developments in the production and use of bioplastics, there are still many concerns regarding recyclability, price and consistency (2).

Although we are a long way from removing plastics from laboratories, the race is on to find a suitable alternative that maintains sample integrity in long-term storage or use. The applicability of plastic alternatives is currently limited, but industry developments can address areas of production and supply without compromising the product itself.

Therefore, while the product remains virgin plastic, the manufacturing process and packaging materials should be increasingly sustainable, reducing emissions created by the producer and passed on to the user.

Product packaging often consists of multiple layers of various materials, providing protection during transport and storage. However, materials are often overused, creating more waste than necessary. It is often believed that an increased ratio of paper-based to plastic packaging incites pro-environmental behaviour (3). However, replacing all plastic with paper-based materials can compromise packaging integrity, putting the product at risk, whereas removing unnecessary packaging can lead to a better outcome.

Products such as the Fastrak and FastZAP pipette tip refills from Alpha Laboratories use the minimum amount of packaging possible while delivering a high-quality product. Packaging is stripped to the essentials, and through smart product design the fully recycled cardboard shell ensures the stability and integrity of the product on opening. The stacking style reduces the plastic racking and enables reuse of existing tip racks. The minimal weight and size of the packaging decreases associated transport emissions and allows consumers to save space and reduce wastage of materials. In addition, the products are manufactured using 61% renewable energy, further reducing emissions. The ACT label helps customers quantify energy use by detailing lifecycle emissions of the product, from manufacturing and distribution to end-of-life options. Comparisons can be easily made between products, allowing consumers to make informed procurement decisions. ■

1. Urbina, M., et al. Labs should cut plastic waste too. Nature 528, 479 (2015).

2. Arikan, E.B. and Ozsoy, H.D., A review: investigation of bioplastics. J. Civ. Eng. Arch, 9(1), (2015) pp.188-192.

3. Sokolova, T., et al. Paper Meets Plastic: The Perceived Environmental Friendliness of Product Packaging, Journal of Consumer Research, Volume 50, Issue 3, (2023), pp. 468–491.

Water activity is one of the major parameters when it comes to product quality. It is not only important when it comes to the shelf life of products but also has a great influence on texture, taste and many other properties. Learn more about water activity in different applications: www.novasina.ch/lab/applications


DIAGNOSTIC DISPENSING SOLUTIONS

797PCP Progressive Cavity Pumps

• Bond medical device housing

• Dispense and freeze reagents for later use

• Highly precise fluid volume accuracy and repeatability at ±1%

It’s important to determine the opportunities for optimising management of waste streams

Here Ashley Davis from Kimberly Clark provides scientists and lab technicians with tips for a waste walk

One of the best ways to begin a waste reduction journey is to conduct a waste walk or a series of waste walks, depending on the size of your organisation. A well-planned walk can help determine the opportunities for optimising management of waste streams and help you figure out what can be diverted.

A waste walk, also known as a Gemba Walk in the long running waste management practice of Lean and Six Sigma, means taking the time to watch how a process is done and talking with those who do the job.

Here are five tips for a successful Waste Walk:

1 Visit your organisation’s receiving area

You need to understand what is coming into your facility and where it’s being used.

2 Map it out in advance

Don’t view your Waste Walk as a casual stroll through your facility. A Waste Walk should be properly planned and supported by all stakeholders at the site.

3 Document key details

Someone should be on hand to capture key information including photos of your waste, collection points and shipping containers and to do this at different times throughout a day.

4 Pay attention to behaviour and practices

Make sure to observe and take note of the behaviour of personnel around waste management, waste and material flow throughout the site, as well as the location of all collection bins and disposal fees for waste tonnages.

5 Get leadership buy-in and stakeholder alignment

Leadership on the scope of work and key waste contributors should be involved from the start. Align on outcomes and set timelines for mapping out your waste reduction plan. End users should also be included since they will ultimately be involved in implementing your waste reduction plan.

What comes next? Once you have as much detail as possible from your Waste Walk, you can begin prioritizing the work ahead. Make sure to assess the:

● Largest volumes of waste

● Largest valued materials

● Easiest solutions

● Most challenging solutions

After this, you need to determine solutions for your waste. If you have in-house experts in waste and recycling, lean on them to help you assess the composition of your materials and your waste streams as well as specific recycling solutions.

If not, try reaching out to a local waste management organisation for ‘simple’ waste such as paper, cardboard and general trash. For other waste, such as rubber, PPE, electronics or polymers, you may need to find a waste consultant who specializes in diverting these more complex waste streams. When choosing a waste and recycling management partner, ensure that they:

● Provide contracts with clearly outlined expectations.

● Supply financial and compliance information.

● Provide access to diversion data.

Last, remember that a waste and recycling journey takes time. You can’t get there all at once, nor can you do it alone. Choose partners who will assist you in your journey. This could include manufacturer-led initiatives for recycling certain consumables, such as PPE, and ‘middlemen’ who will help provide your waste with a second life. Whatever you do, take your time, be thorough and choose reputable partners with a proven and verifiable track record of success. ■

Look out for wasteful practices in all areas of the business

To learn more about Kimberly Clark’s products and how to recycle them through The RightCycle Programme, please visit www.kimtech.eu

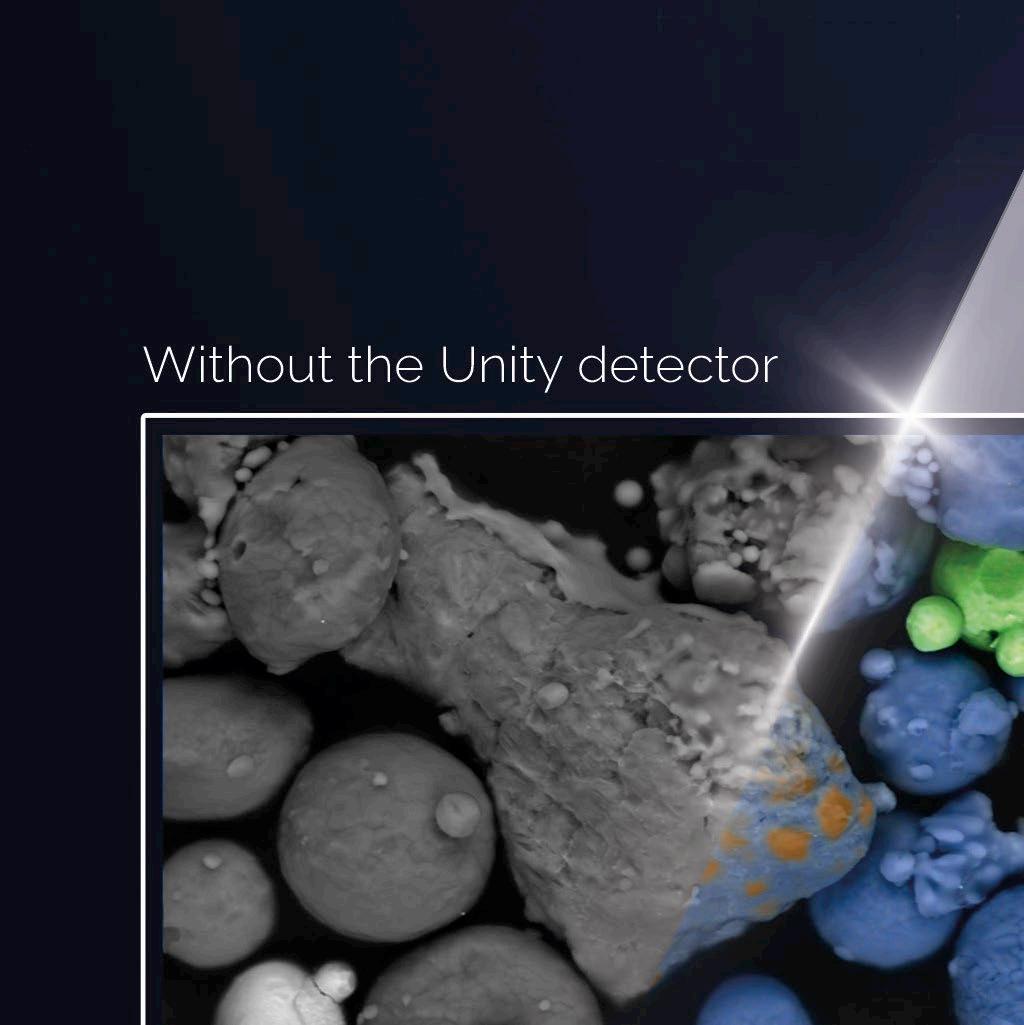

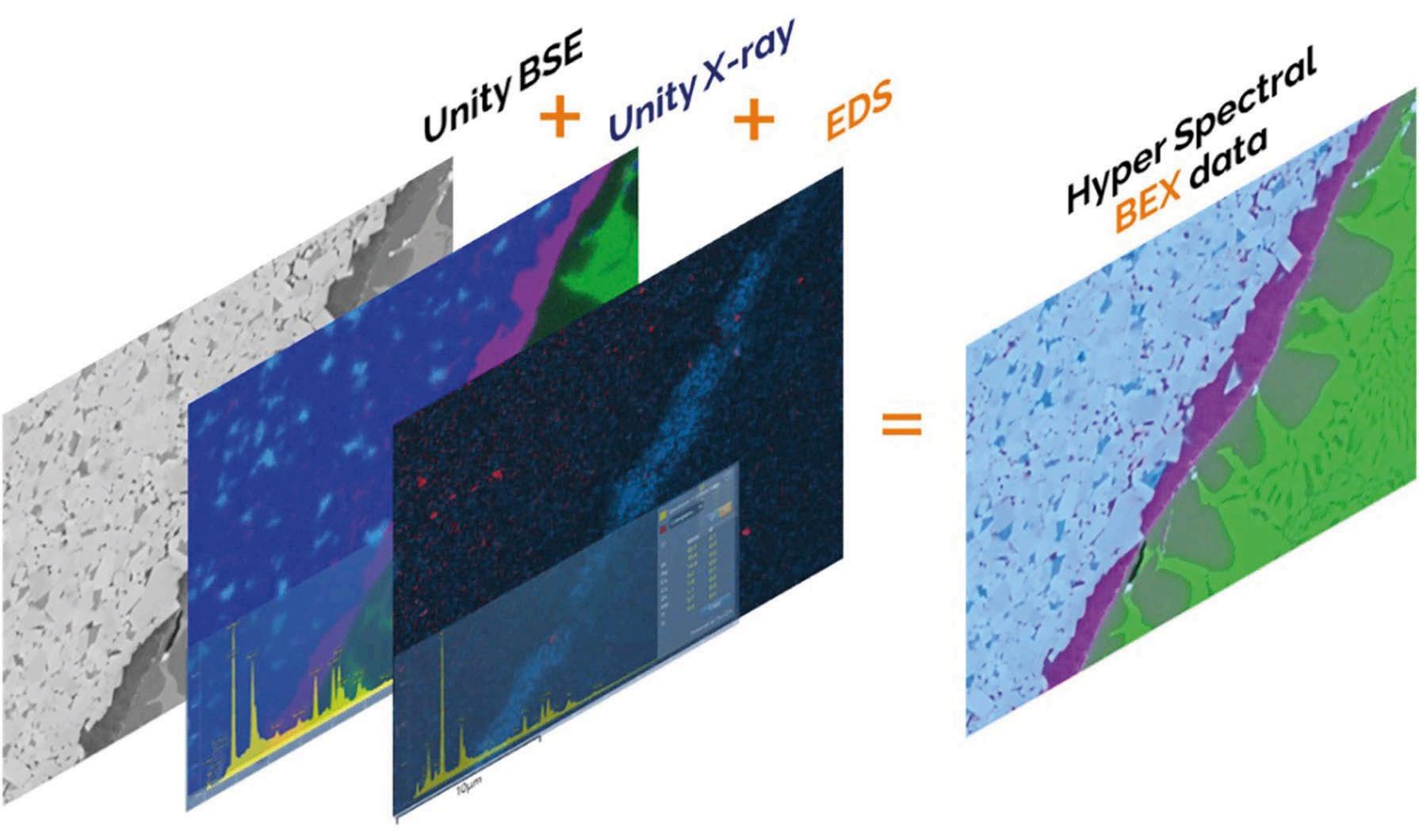

Introducing Unity, the world’s first combined Backscattered Electron and X-ray (BEX) imaging detector.

Accelerate your journey to scientific discovery with instant microstructural and chemical images, acquired simultaneously with the Unity detector.

Find out how we’re making sophisticated sample analyses simpler and faster than ever before.

Diagnostic application with Nordson EFD Jetting system PICO Pulse XP

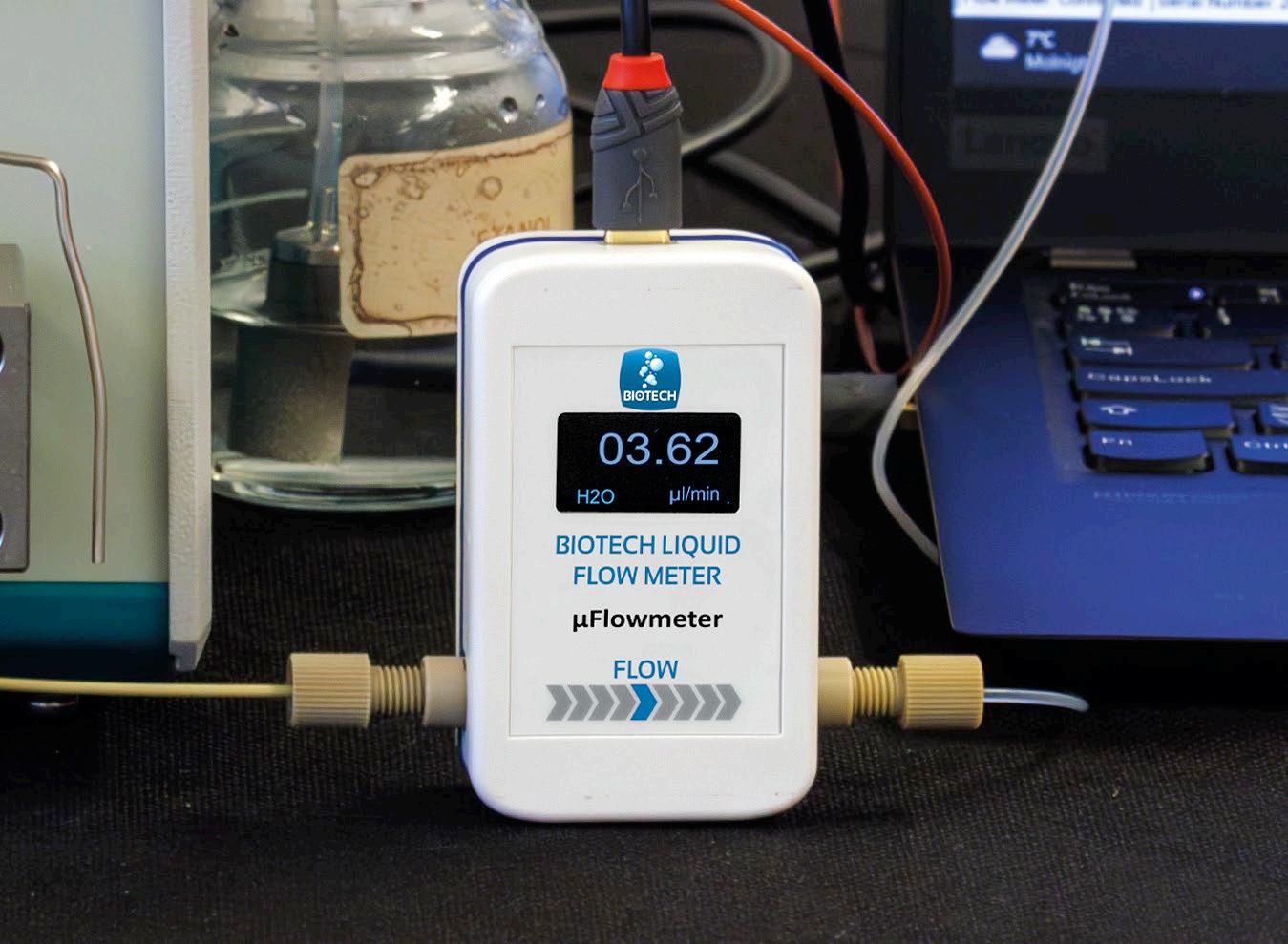

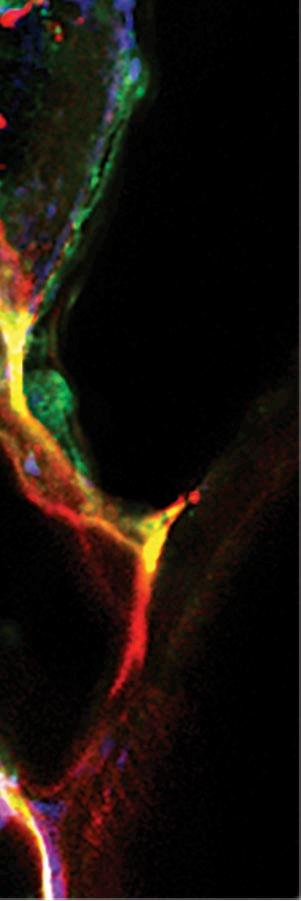

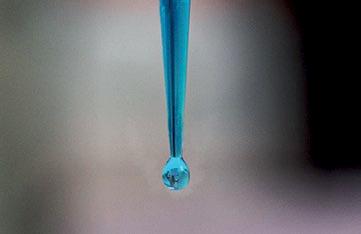

This article explores a range of dispensing techniques that can lead to better process control in the biosciences

During the assembly of medical devices, point-of-care diagnostics, near-patient testing products and other life sciences applications, it is essential that UV-cure adhesives, cyanoacrylates, silicones, and other fluids are dispensed accurately and consistently.

Shot-to-shot repeatability and accuracy are critical factors in fluid dispensing, and are particularly important in the manufacture of life sciences products and devices.

Automated robotic dispensing has evolved to support the needs for higher throughput in the assembly of medical devices, point-of-care diagnostics, and near-patient testing products by developing specialised dispensing technologies for automated in-line and near-line assembly.

These technologies provide operators with the ability to set the time, pressure, and other dispensing parameters for an application that improves process control and ensures the right amount of fluid is placed on each part.

An important feature of precision robotic dispensing is Automated Optical Inspection (AOI). When coupled with CCD cameras and confocal lasers, vision-guided automation platforms provide optical assurance of fluid deposit volume and placement accuracy, ensuring a conforming deposit.

There are two basic types of fluid in-line micro-dispensing techniques: classic needle-based contact dispensing and non-contact jet dispensing. While the best method is ultimately determined by the material and application at hand, for many automated fluid dispensing processes, high-speed jetting technology is a popular alternative to traditional, needle-based contact dispensing.

Point-of-care diagnostics and near-patient testing products both help doctors make critical decisions, and help patients monitor their health. These devices include HIV tests, blood tests, and pregnancy tests. One system that performs exceptionally well for this application is the proprietary PICO Pulse XP jetting system, due to its fast dispensing speed and high precision.

Vision has been used in fluid dispensing applications for more than two decades, and is becoming more prominent as robots, and their control software, get smarter. It permits robotic fluid dispensing systems to deliver faster production cycles, and removes the guesswork from the dispensing process. The latest evolution of fluid dispensing robot systems, exemplified in Nordson EFD’s GV, RV, EV, PRO and PROPlus Series models for 3-axis and 4-axis applications, brings repeatability and precision to automated dispensing systems through advanced software and vision-guided capability.

Medical devices sometimes warrant a more personalised and manual fluid dispensing approach in their assembly, compared to employing a more automated, robotic system. Often, the geometry of the parts is too complex to make robotic automation a viable option, or the production volume is too low to warrant the investment.

Benchtop fluid dispensing has proven to be a highly workable and reliable method for assembly of medical devices, and the UltimusPlus line of pneumatic benchtop dispensers from Nordson EFD was designed to meet the stringent fluid dispensing needs of today’s highly precise medical device manufacturing. ■

Operator-based fluid dispensing onto a Kyphoplasty catheter

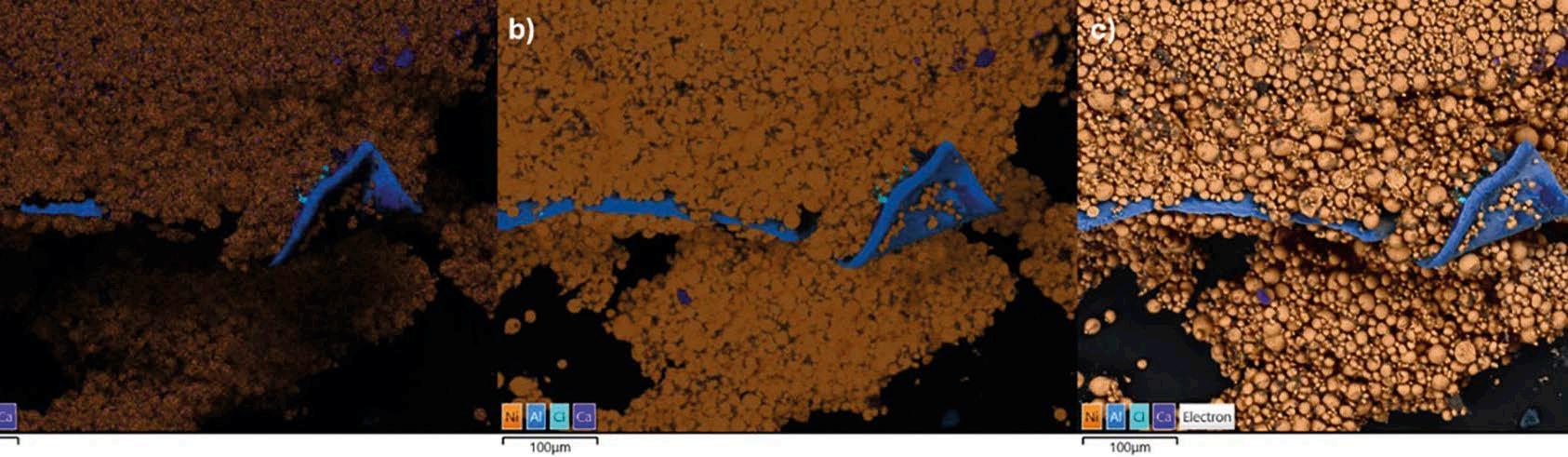

Conventional X-ray mapping, the basis of energy dispersive spectroscopy (EDS), has been around for many years. A backscattered electron image is first generated by the scanning electron microscope (SEM), then an X-ray map is acquired. It requires a prescribed working distance and elevated beam; the map being built up gradually under conditions optimised to ensure accuracy and throughput from a conventional EDS detector.

By contrast, backscattered electron and X-ray (BEX) imaging employs the seamless and simultaneous integration of these two signals into a single image to understand a sample much more quickly and in more detail, and in essence, generates real-time imaging at a wide range of working distances and imaging conditions. The most important signals, in this case, are electrons (for topography and materials contrast) and X-rays (for constituent elements).

If you spend a lot of your SEM time doing all the exploratory work before collecting any of the data you actually want to analyse or report, then you will find the BEX technique liberating. A SEM without BEX is like driving a car without GPS or watching some films in black and white; you can, but it is either not as quick or easy as it should be, or it is missing some critical details needed for a satisfactory outcome or experience.

To achieve this, Unity, the world’s first BEX detector, is a radically different type of detector. It collects significantly more X-rays and is far less affected by sample height than conventional side-mounted EDS detectors, because it is located directly below the microscope pole piece.

The unique design of Unity makes BEX imaging a practical technique by combining electron and X-ray sensors in one unit above the sample and below the pole piece – occupying the traditional backscatter detector position in the microscope. Therefore, its X-ray sensors also benefit from this favourable geometry. The result is a much higher signal by ensuring good line of sight at any sample height. An additional advantage of this overhead view is considerably less sensitivity to topography (Figure 1), and this largely removes the problem of shadowing for rough/highly topographic samples.

It is the special shape of the Unity sensor head that allows line of sight at most working distances. Unity’s signal processing is optimised, so it processes more X-rays faster and more accurately; EDS allows their contribution to BEX imaging to be optimised. Count rates of the EDS are much lower under BEX imaging conditions. This better suits more analytical tasks, such as automatic peak identification and low energy X-ray measurement.

Figure 1: Battery cathode sample, comparison of outputs: A) EDS map B) BEX element images from the X-ray detectors in the Unity detector; note the significantly higher intensity and data from the shadowed parts of the sample in A, C) Complete BEX image where X-ray and backscatter images from Unity are combined

Figure 2: Multi-signal dataset combined together to produce a detailed picture of the sample

Figure 3: BEX image of an uncoated shell collected in VP mode; note the image quality and level of elemental information revealed from this highly topographic area

By combining Unity with a conventional EDS detector, we achieve the best of all worlds, including an accurate element ID to identify the elements of interest in a sample and optimised light element detection (from EDS); a very fast, low artefact X-ray imaging for the majority of elements (from Unity); and quantitative information from EDS acquired at the same time.

From all the data collected from all of the sensors, a single, hyperspectral image (Figure 2) is created by the AZtec BEX imaging software, seamlessly, in the background. The best data is selected by the software’s expert algorithms and presented automatically to the user. In this way, EDS is an important signal source that adds more information to the BEX image.

BEX enables analysis under conditions not feasible with EDS, such as low vacuum (LV) mode. EDS in LV mode suffers from artefacts caused by scattering of the beam and low count rates. By contrast, the electron sensors in Unity work very well in LV mode, and at 50-100 Pa, it collects clear, high-quality BEX images, including electron and X-ray information (Figure 3).

X-ray mapping will remain an important function of SEM. Unity is fully compatible with the X-ray mapping software in AZtec and can be used in combination with conventional EDS detectors during X-ray map acquisition, with the capability to compare the X-ray maps collected with each detector.

X-ray mapping is also much more efficient when BEX imaging has been used to identify those regions of the sample that require more detailed and accurate EDS analysis. ■

Dr Haithem Mansour and Dr Simon Burgess are with Oxford Instruments. nano.oxinst.com

Dr Barry Whyte discusses how microplate readers could help accelerate drug discovery and development

Protein-protein interactions, which underpin many crucial molecular events taking place in a cell, are an important area of research and discovery. A typical cell contains thousands of proteins and few of them function alone. It is therefore vital to study how proteins interact with one another and the tools to investigate these interactions have continued to advance over time. Applications amenable to scale can significantly accelerate research and discovery of inhibitors and drugs, for instance. Here, we look at a few examples of how detection technologies in microplate readers provide options for the study of protein-protein interactions in the rapidly emerging area of targeted protein degradation.

Microplate readers support many

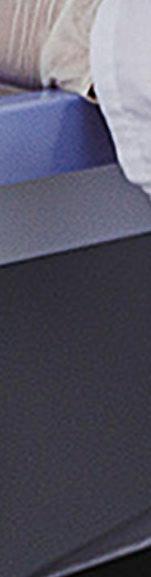

useful applications in the life sciences due to their ability to provide ready access to a range of detection technologies. Protein-protein interactions require efficient, highlysensitive assays often with many measurements in a short period of time. For the screening of molecules, it is crucial to have detection technologies that are compatible with automation, and which deliver the required sensitivity for miniaturised assays. Fluorescence- and luminescence-based measurements are often used for this purpose. In addition to detection methods based on Förster’s Resonance Energy Transfer (FRET), fluorescence polarisation is an advanced detection mode that offers exciting opportunities for the

study of protein-protein interactions. ATTECs (Autophagy-Tethering Compounds) are a novel class of bifunctional molecules proposed to hijack the autophagosomal pathway for the degradation of cellular components including proteins. The induction of Atg8 family protein interactions with target proteins of interest offers the possibility for novel targeted protein degradation approaches. LC3A is a member of the human Atg8 protein family and is only found in the autophagosome. The discovery of potent LC3A inhibitors would therefore serve as a handle for the development of ATTECs.1

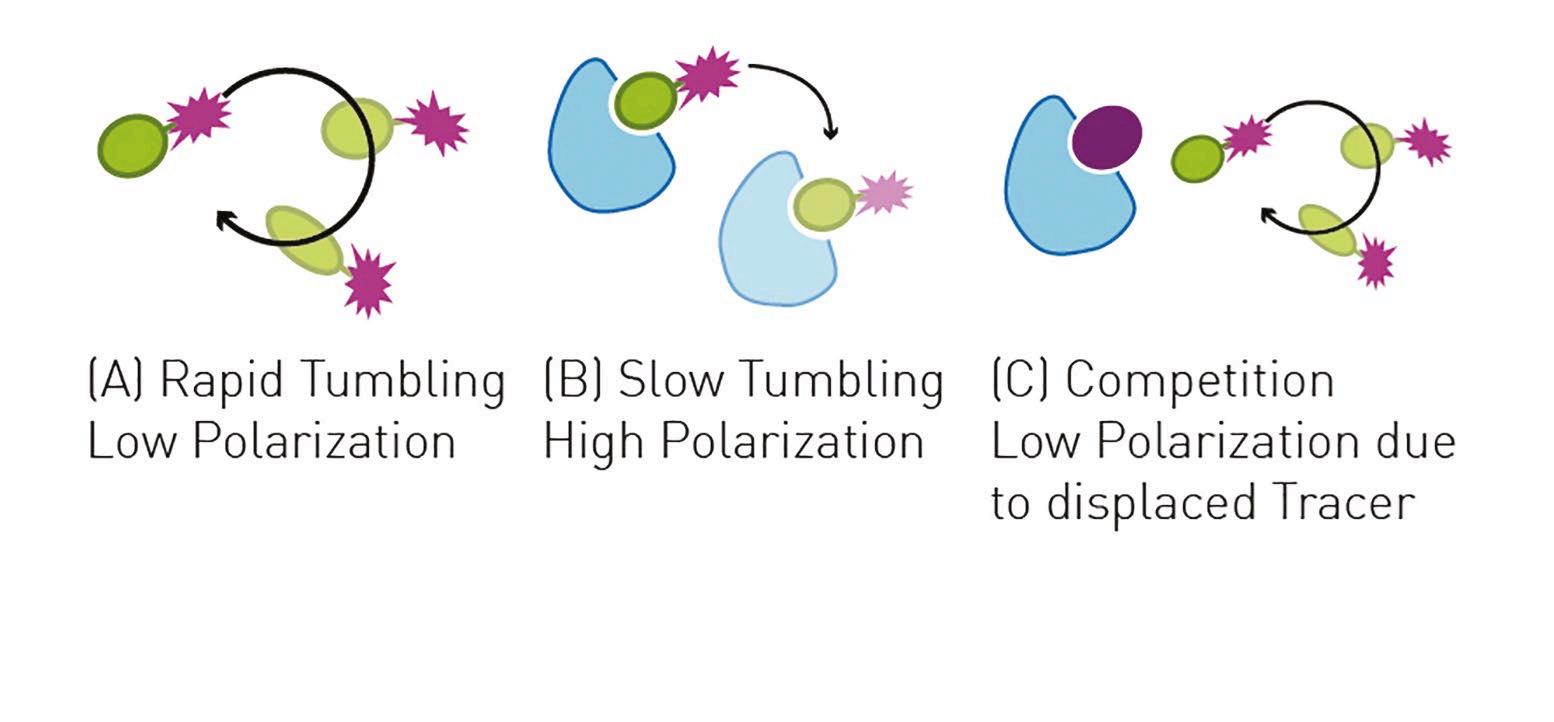

Figure 1: Adding competing ligands of LC3A to the LC3A-tracer complex results in a displacement of tracer

In the following example, a fluorescence polarisation assay was developed based on the use of a fluorescently labelled peptide ligand that binds to LC3A. p62 LIR peptide, a peptide ligand of LC3A, was labelled with a Cy5 fluorophore to act as a tracer molecule. The addition of competing ligands of LC3A to the LC3A-tracer complex results in a displacement of tracer and a reduction of the fluorescence polarisation signal (Figure 1). Miniaturisation of this assay allowed for library screening in 1536-well plates (Figure 2) in a format compatible with automation, so that large compound libraries could be screened in days.
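As a rough illustration of how a displacement readout of this kind can be reduced to numbers, the sketch below applies the standard fluorescence polarisation formula, P = (I_parallel - G x I_perpendicular) / (I_parallel + G x I_perpendicular), reported in millipolarisation units (mP), and then expresses a well’s signal as the percentage of tracer displaced between a bound and a free control. The function names, the default G-factor and every intensity value are assumptions chosen for illustration; none of them comes from the study described above.

```python
def polarisation_mp(i_parallel: float, i_perpendicular: float, g_factor: float = 1.0) -> float:
    """Fluorescence polarisation in mP from parallel and perpendicular intensities.

    g_factor is the instrument correction applied to the perpendicular channel;
    the default of 1.0 is an assumption, not a recommended setting.
    """
    corrected_perp = g_factor * i_perpendicular
    total = i_parallel + corrected_perp
    if total <= 0:
        raise ValueError("summed intensity must be positive")
    return 1000.0 * (i_parallel - corrected_perp) / total


def percent_displacement(mp_sample: float, mp_bound: float, mp_free: float) -> float:
    """Tracer displacement (%) relative to bound (0 %) and free (100 %) controls."""
    window = mp_bound - mp_free
    if window <= 0:
        raise ValueError("bound control must read higher than the free control")
    return 100.0 * (mp_bound - mp_sample) / window


# Hypothetical raw intensities (arbitrary units) for two controls and one test well.
bound_mp = polarisation_mp(3000.0, 2000.0)   # tracer fully bound to protein
free_mp = polarisation_mp(2100.0, 1900.0)    # tracer alone
well_mp = polarisation_mp(2500.0, 2000.0)    # tracer + protein + test compound
print(f"bound {bound_mp:.0f} mP, free {free_mp:.0f} mP, well {well_mp:.0f} mP")
print(f"displacement: {percent_displacement(well_mp, bound_mp, free_mp):.1f} %")
```

Normalising to plate controls in this way is a common convention for competition assays, but the choice of controls and any hit threshold would depend on the assay in question.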

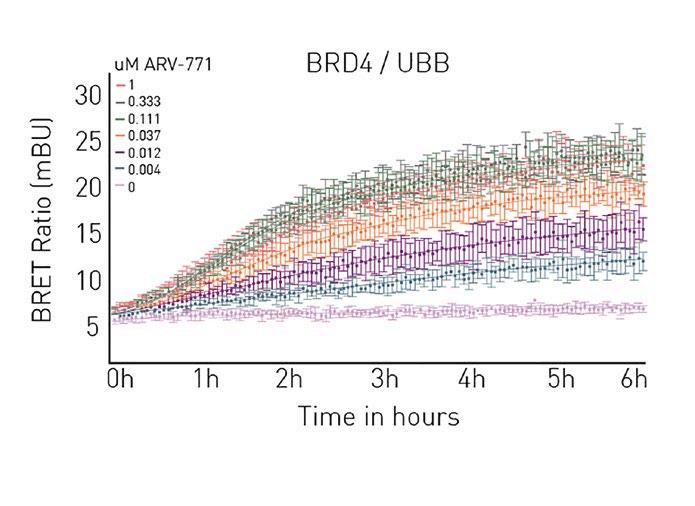

In another example related to targeted protein degradation, a NanoBRET-based approach was used to look at the dose-dependent ubiquitination of BRD4. Bromodomain-containing protein 4 (BRD4) is a transcriptional regulator implicated in cancer biology and inflammation. Ubiquitination is a crucial step in the action of PROTACs, small molecules that help target unwanted proteins to the ubiquitin-proteasome system. In this case, PROTAC ARV-771 brought HiBiT-labelled BRD4 (HiBiT is a subunit of the NanoLuc luciferase) into proximity with the E3 ubiquitin ligase. Ubiquitin tags on the target protein earmarked it for degradation by the proteasome. Here it was possible to measure the live cell kinetics of ternary complex formation with the E3 ligase as well as the efficiency with which the target protein is ubiquitinated, crucial information to confirm mode of action and to probe ways to improve drug efficacy (Figure 3).

Figure 2: Miniaturisation of the LC3A fluorescence polarisation assay allowed for library screen in 1536 well plates

Figure 3: NanoBRET assay monitoring ternary complex formation in live cell kinetics

Many applications in the laboratory need to be performed at scale, and sensitivity and speed are crucial. High-throughput screening assays need to be compatible with automation to accelerate measurements but must also deliver the required sensitivity. Binding studies for protein-protein interactions provide valuable information on reaction kinetics, dissociation constants and the stoichiometry of interactions. FRET, for example, can be used together with dye-labelled proteins to determine the stoichiometry of protein interactions. Awareness of stoichiometry can be crucial, for example, in calculations to quantify labelled biomolecules that assemble or are active in different ratios (dimers, trimers, etc.). Studies on binding kinetics on BMG Labtech microplate readers are facilitated by the MARS (Measurement, Analysis & Reporting) data analysis software.

Multimode microplate readers like the PHERAstar FSX from BMG Labtech offer many features that make them ideal platforms for research applications at scale (Figure 4). Users have flexibility of choice since all commonly-used detection modes are available at the performance level required for screening of molecules. In addition, features such as on-board reagent injectors, simultaneous dual emission for the detection of two emission signals at the same time, and ultrafast sampling rates with detection times of up to 0.01s allow kinetic analysis of interactions in real time at high throughput. Collectively, these features provide the robust high performance needed for automated applications at scale in the modern research laboratory. ■

Modified viruses are used as viral vectors (or ‘carriers’) in gene therapy

Breaking the vector characterisation bottleneck with macro mass photometry

Viral vectors play a pivotal role in the advancement of vaccines and cell and gene therapies (CGTs), serving as versatile therapeutic delivery vehicles. Vector characterisation is an important analytical step of the therapeutic production pipeline, but can be a long-winded process, requiring extensive biological (e.g., PCR), cell-based (e.g., infectivity assays), and physicochemical (e.g., analytical ultracentrifugation (AUC)) testing.

Conventional characterisation techniques bring limitations: cell-based vector analysis takes days to perform, while more rapid approaches, like nanoparticle tracking analysis (NTA), provide limited characterisation data. Along with a paucity of in-process analysis tools, these obstacles create a significant bottleneck at the vector characterisation stage for vaccine and CGT programs.

In light of the demand for new innovations to improve the accuracy and speed of vector analysis, macro mass photometry has emerged as a promising solution. Using light scattering to analyse particles, this novel method unlocks a host of valuable vector characterization capabilities, including rapid and accurate determination of full/empty capsid ratios, sample purity and more.

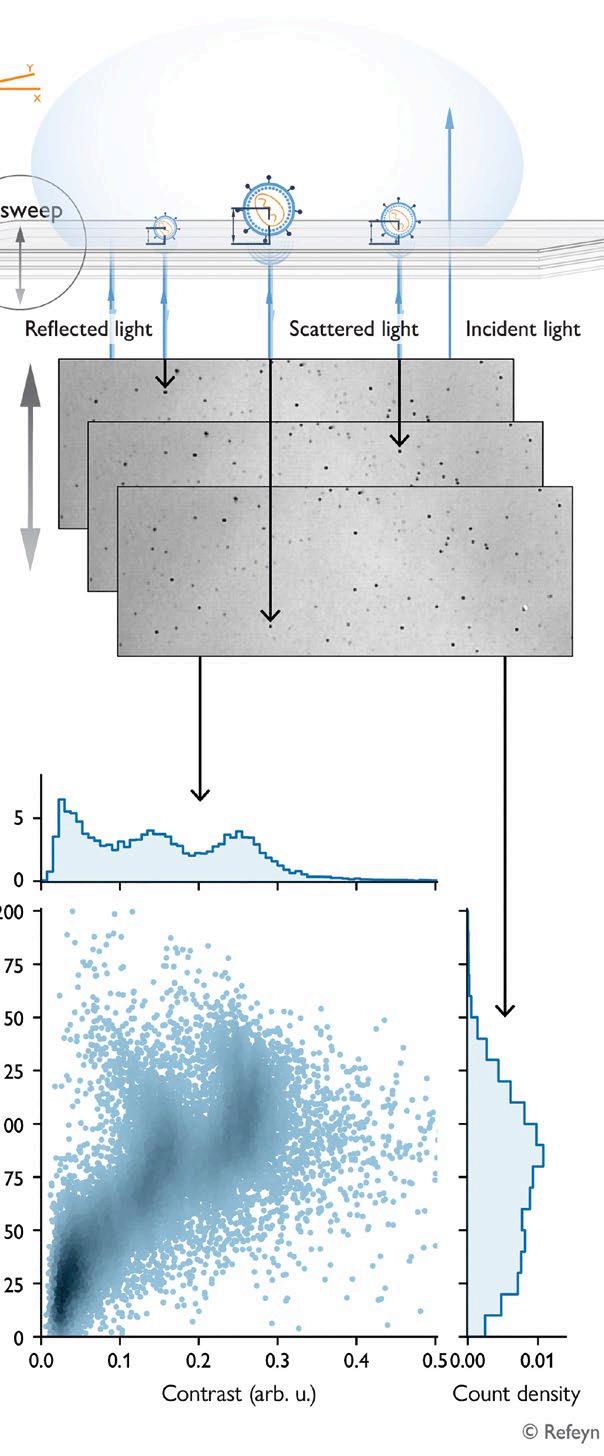

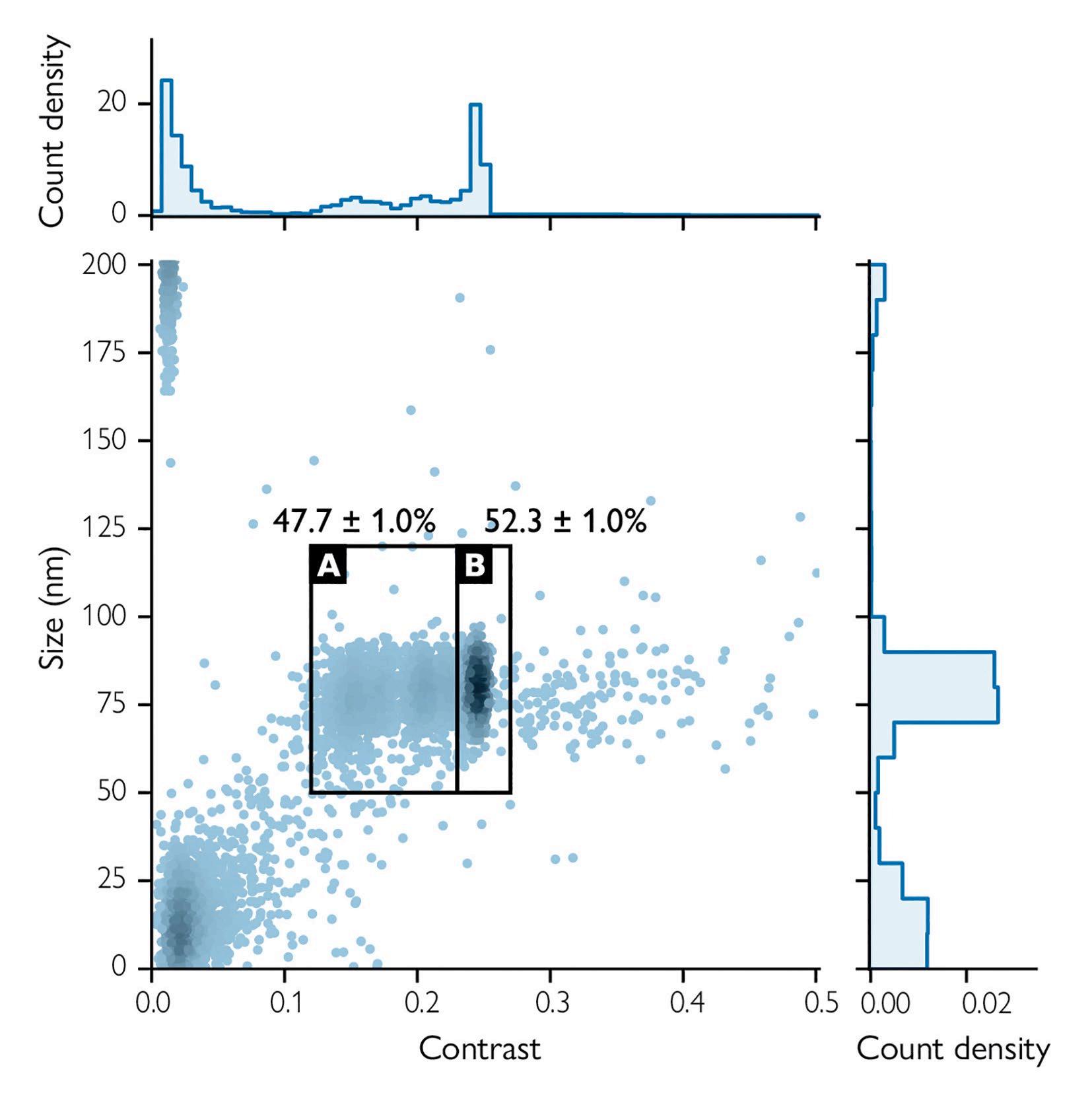

Macro mass photometry interrogates two parameters in parallel: particle scattering contrast (a proxy for mass) and size (diameter). During a typical run, a droplet of sample on a carrier slide is illuminated from below and imaged while being moved vertically (Figure 1).

Each analysis returns size and contrast measurements for every particle, making it possible to determine the size-contrast distribution. This enables users to characterise multiple populations within a sample and assess sample purity and stability.
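To make the idea of reading populations off a size-contrast distribution more concrete, here is a minimal sketch that assigns each particle to a rectangular gate and reports relative population counts as percentages. This is not the instrument’s own analysis software: the `Gate` type, the gate boundaries, the population names and the particle values are all hypothetical and chosen purely for illustration.

```python
from collections import Counter
from typing import Dict, Iterable, List, NamedTuple, Tuple


class Gate(NamedTuple):
    """A rectangular region in size-contrast space (hypothetical boundaries)."""
    name: str
    size_nm: Tuple[float, float]    # lower (inclusive) and upper (exclusive) diameter
    contrast: Tuple[float, float]   # lower (inclusive) and upper (exclusive) contrast


def percent_counts(particles: Iterable[Tuple[float, float]], gates: List[Gate]) -> Dict[str, float]:
    """Return the percentage of particles falling in each gate.

    Each particle is a (size_nm, contrast) pair. Particles matching no gate
    are reported under "ungated"; the first matching gate wins.
    """
    counts: Counter = Counter()
    for size, contrast in particles:
        label = "ungated"
        for gate in gates:
            in_size = gate.size_nm[0] <= size < gate.size_nm[1]
            in_contrast = gate.contrast[0] <= contrast < gate.contrast[1]
            if in_size and in_contrast:
                label = gate.name
                break
        counts[label] += 1
    total = sum(counts.values())
    return {name: 100.0 * n / total for name, n in counts.items()} if total else {}


# Hypothetical gates and measurements, for illustration only.
example_gates = [
    Gate("high-contrast", (80.0, 110.0), (0.6, 1.0)),
    Gate("low-contrast", (80.0, 110.0), (0.2, 0.6)),
]
example_particles = [(90.0, 0.8), (95.0, 0.7), (92.0, 0.3), (40.0, 0.1)]
print(percent_counts(example_particles, example_gates))
```

Rectangular gates are the simplest possible choice; in practice populations may overlap, and a clustering or mixture-model approach could be more appropriate.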

Developed by Refeyn Ltd., macro mass photometry is a rapid, reliable and convenient approach to inform process development and optimisation for vaccine and CGT development. Macro mass photometry is performed in an all-in-one benchtop instrument that delivers results in several minutes. In the analysis of vectors, the technology can be leveraged to inform:

● Relative population counts (correlating with physical and infectious titer)

● Particle morphology (size distribution)

● Sample purity

● Stability and degradation

Viral vectors are designed to deliver genetic material into cells and are one of the most effective methods of gene therapy. Viruses have evolved to develop mechanisms that insert their genomes inside the cells they infect. Modified viruses are used as viral vectors (or ‘carriers’) in gene therapy, protecting the new gene from degradation while delivering it to the ‘gene cassette’ in target cells.

Adenovirus (AdV) characterisation can be challenging due to the coexistence of various particle types in the process. Before downstream purification, a typical AdV sample will contain full AdVs, empty capsids, helper viruses, and fragments. Full AdVs are functional and therefore desired, while empty capsids and fragments can decrease therapeutic efficacy. Furthermore, helper viruses can be immunogenic and pose safety concerns.

Since molecular analysis techniques struggle to discriminate between these populations, AUC has become the benchmark for determining empty and full AdVs by their density, but the approach lacks scalability. Macro mass photometry can efficiently distinguish between particles in a sample, providing a convenient means of quantifying impurities and monitoring the process during manufacturing, saving time and resources (Figure 2).

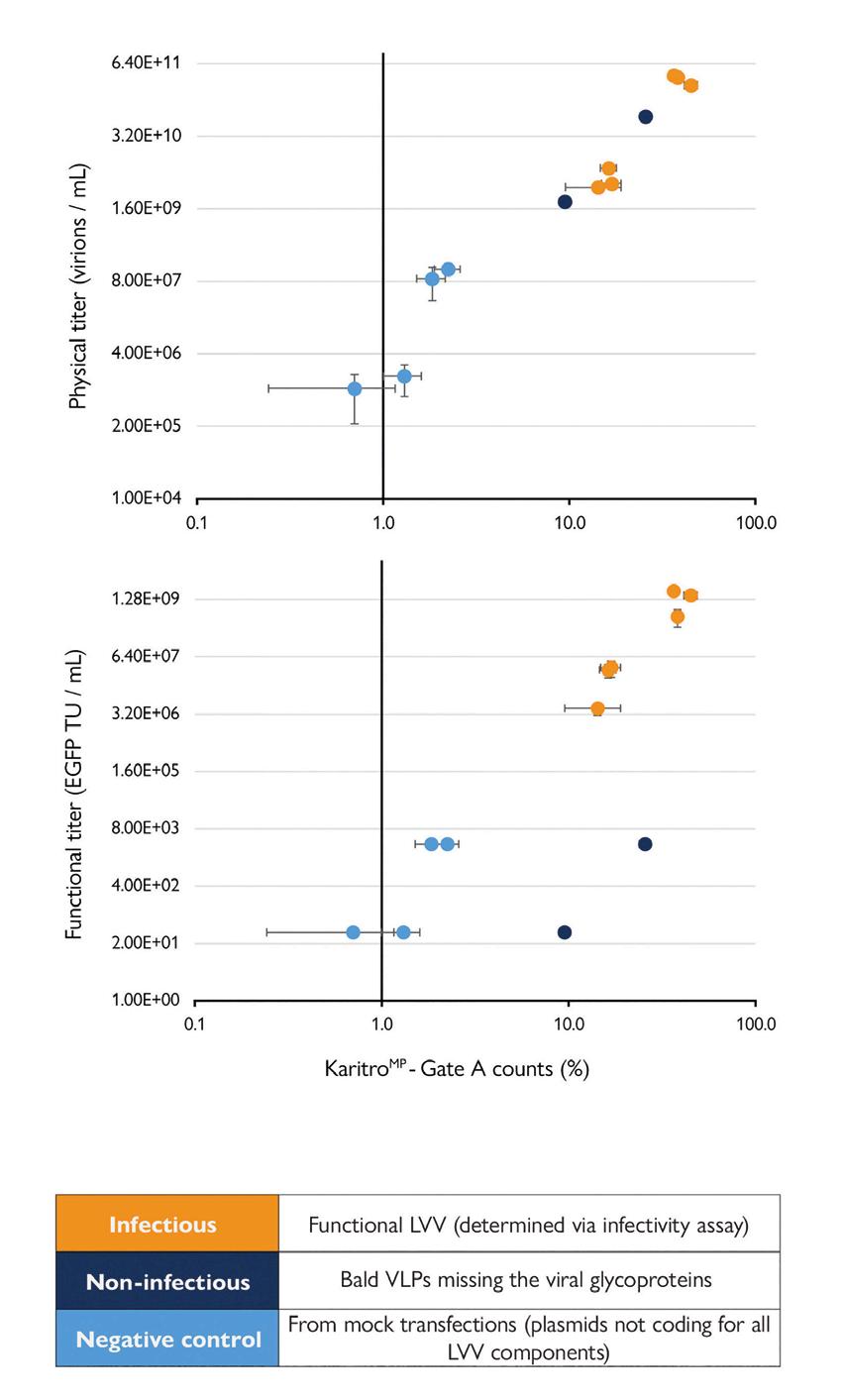

Following a lentiviral vector (LVV) production process, the product containing infectious (functional) LVVs commonly retains a significant proportion of non-infectious vectors missing key genetic or protein components. Macro mass photometry can be leveraged to rapidly assess the amount of LVV present in a sample and distinguish LVV populations based on their functionality.

The study data shown (Figure 3) demonstrate a positive correlation between the percentage counts (determined by macro mass photometry) and physical titer measurements (determined by PERT assay). The percentage counts are also found to correlate positively with results from an infectivity assay, with the exception of measurements of the non-infectious samples, as macro mass photometry is insensitive to infectivity. Overall, the results demonstrate the utility of macro mass photometry in characterising LVV samples. ■

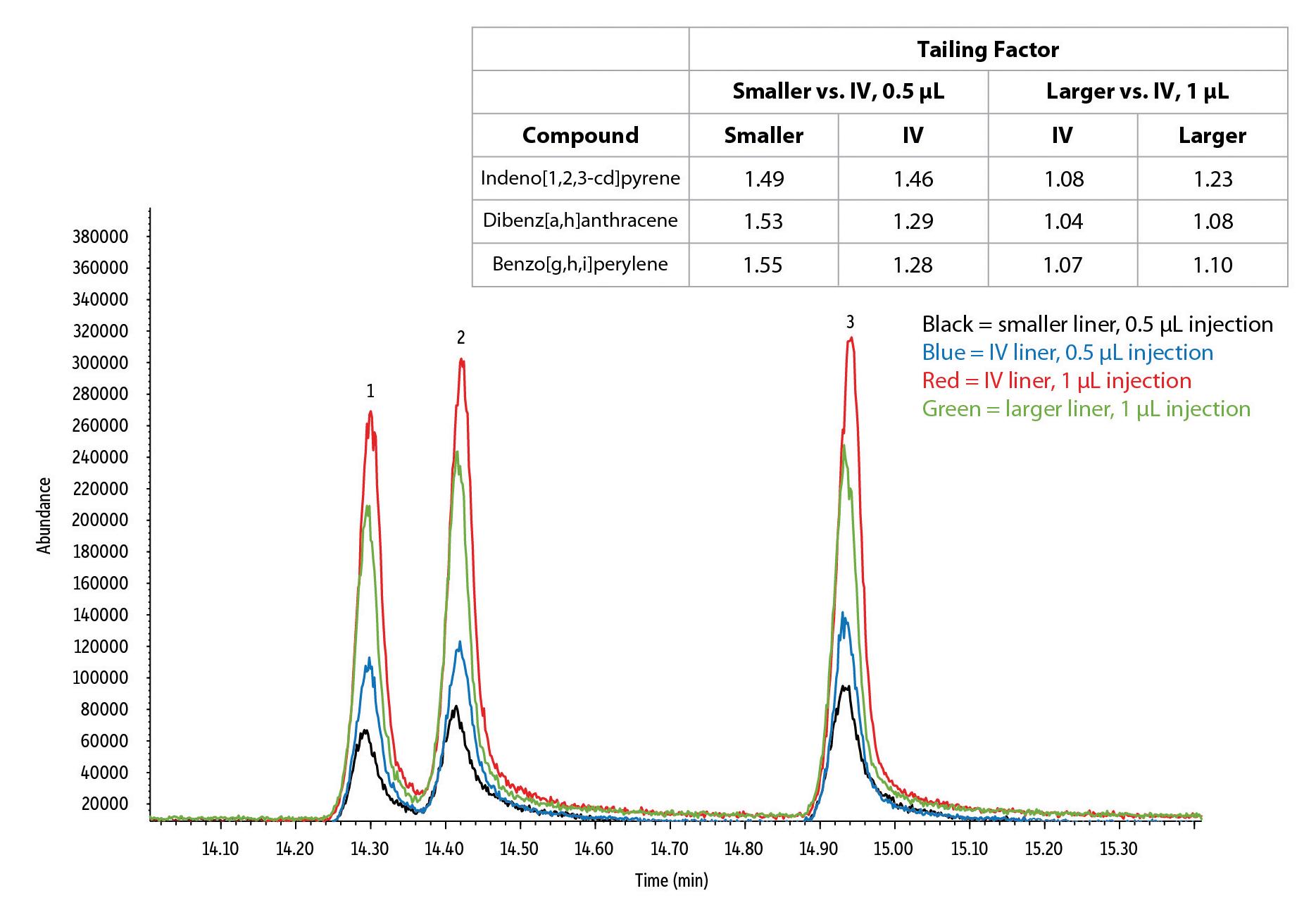

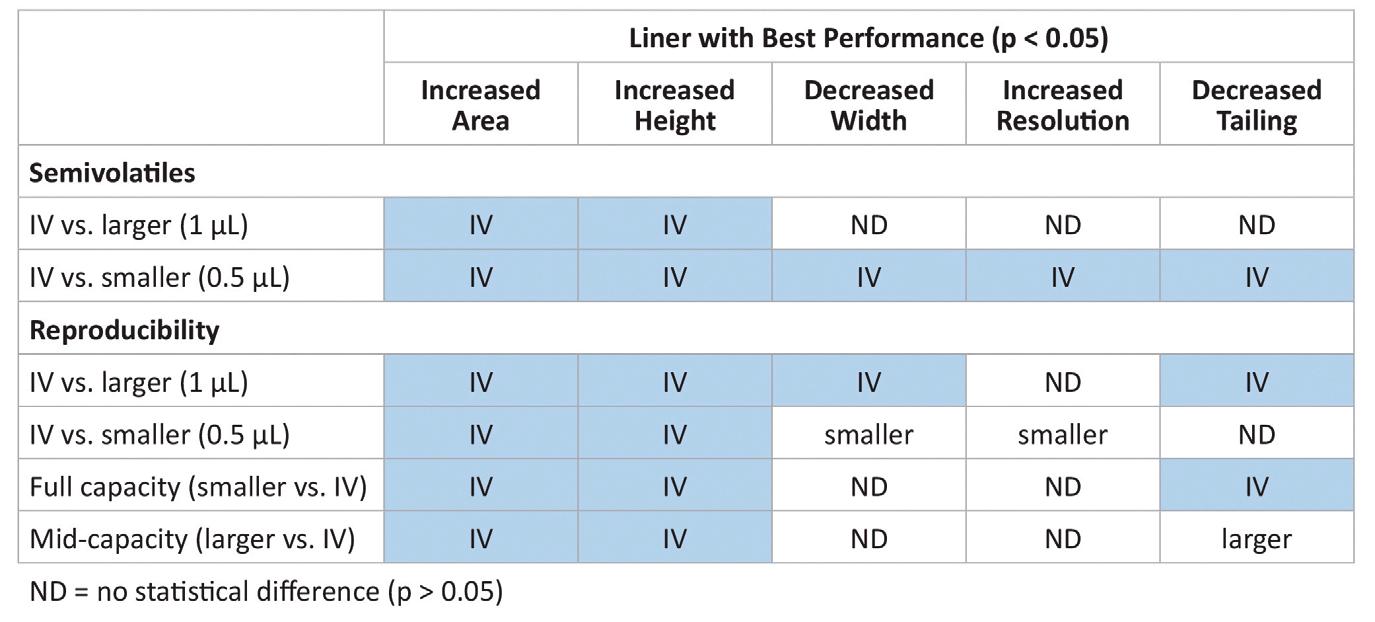

The benefits of high-efficiency, narrow-bore columns can only be fully realised when sample introduction is also optimised. Dr Mark Badger explores a novel way to improve chromatography and reproducibility

Faster analysis times can improve lab productivity, but only if chromatographic performance still allows accurate peak identification and quantification. Narrow-bore GC columns (<0.25 mm ID) speed up analysis because they have greater chromatographic efficiency, which produces tall, narrow, symmetrical peaks that are easy to identify. While using narrow-bore columns can be a good approach to creating fast, highly efficient chromatography with a typical GC-MS setup, their effectiveness is limited by sample introduction.

A variety of sample introduction options are available but split/splitless inlets are the most common. For these inlets, liners with internal diameters of ~2 mm or ~4 mm are typically used. The smaller volume liners (~2 mm ID) transfer sample onto the column faster and in a narrow band, which can improve resolution, decrease the splitless hold time, and minimise adverse interactions, such as adsorption and reactivity. However, lower liner volume means less sample can be injected, which can reduce sensitivity and reproducibility. In contrast, larger volume liners (~4 mm ID) provide greater sample capacity, which can improve sensitivity and reproducibility, but their lower flow rates can cause band broadening, wider peaks, poor resolution, and analyte degradation due to the longer residence time in the liner. Ultimately, when choosing between larger and smaller volume liners, tradeoffs must be made in terms of capacity and chromatographic performance.

To give narrow-bore column users more flexibility and allow additional sample to be injected, without risking backflash or compromising on peak characteristics, Restek has developed an intermediate-volume (IV) liner that has a 3 mm ID. Compared to smaller volume liners, IV liners allow injection volumes to be doubled because they have significantly more room to contain the solvent vapor cloud. And, since IV liners have faster sample loading capabilities than larger volume liners, good chromatographic performance is maintained because the analytes spend less time in the inlet.
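The capacity argument can be sanity-checked with simple geometry and the ideal gas law. The sketch below compares the geometric volume of straight cylindrical liners of equal length (volume scales with ID squared, so a 3 mm liner holds 2.25 times as much as a 2 mm liner) and estimates the vapour cloud produced by a liquid injection. The liner length, solvent (dichloromethane), inlet temperature and inlet pressure are all assumptions for illustration and are not taken from the experiments described here; real liners also have a smaller effective volume than the geometric figure.

```python
import math

GAS_CONSTANT = 8.314462618  # J/(mol*K)


def liner_volume_ul(inner_diameter_mm: float, length_mm: float) -> float:
    """Geometric volume of a straight cylindrical liner in µL (1 mm^3 = 1 µL)."""
    radius_mm = inner_diameter_mm / 2.0
    return math.pi * radius_mm ** 2 * length_mm


def vapour_volume_ul(injection_ul: float, density_g_per_ml: float,
                     molar_mass_g_per_mol: float, inlet_temp_c: float,
                     inlet_pressure_kpa_abs: float) -> float:
    """Ideal-gas estimate of the vapour volume (µL) from a liquid injection."""
    moles = injection_ul * 1e-3 * density_g_per_ml / molar_mass_g_per_mol
    volume_m3 = moles * GAS_CONSTANT * (inlet_temp_c + 273.15) / (inlet_pressure_kpa_abs * 1e3)
    return volume_m3 * 1e9  # 1 m^3 = 1e9 µL


# Assumed conditions (hypothetical): 78.5 mm liner, dichloromethane
# (1.33 g/mL, 84.93 g/mol), 250 °C inlet, 200 kPa absolute pressure.
LENGTH_MM = 78.5
for inner_diameter in (2.0, 3.0, 4.0):
    print(f"{inner_diameter:.0f} mm ID liner: {liner_volume_ul(inner_diameter, LENGTH_MM):.0f} µL")
for injection in (0.5, 1.0):
    cloud = vapour_volume_ul(injection, 1.33, 84.93, 250.0, 200.0)
    print(f"{injection} µL injection: about {cloud:.0f} µL of vapour")
```

If the estimated vapour volume approaches or exceeds the liner volume under a given set of conditions, backflash becomes a risk, which is the trade-off the paragraph above describes.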

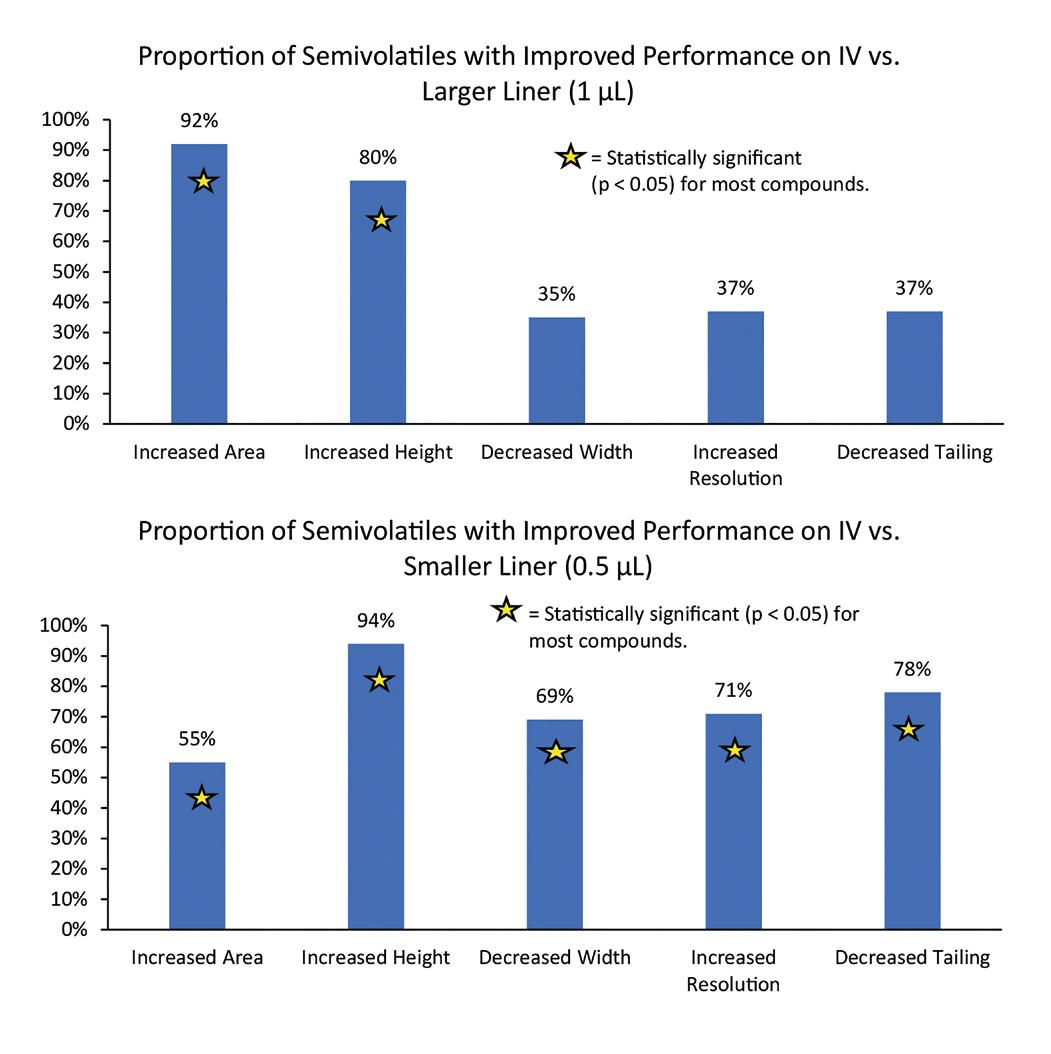

To assess the performance of IV liners versus larger and smaller liners when used with narrow-bore columns, we compared peak characteristics and resolution of 51 semivolatile compounds analyzed on an Rxi-SVOCms column (20 m x 0.15 mm ID x 0.15 µm). To avoid exceeding maximum liner capacities, six 0.5 µL injections were made on the IV and smaller liners, and six 1 µL injections were made on the IV and larger liners. When comparing the 1 µL injections, peak area and height were significantly improved (p < 0.05) for most compounds when using the IV liner, making peak identification and quantitation easier and more accurate. As shown in Figure 1, 92% of compounds showed greater average peak areas and 80% showed a greater average peak height when using the IV liner. Peak width, resolution, and symmetry were not statistically different from results for the larger volume liner for most compounds, which was attributed to solvent effects causing poor peak shape for some early eluting compounds using both liners. Performance did improve for early eluting compounds when less sample was injected as well as overall for later eluting compounds, which is likely the result of the IV liner’s faster sample transfer onto the column (Figure 2).

Figure 2: Using IV liners improved peak height and symmetry compared with smaller and larger liners

For instrument conditions, visit www.restek.com and enter “GC_EV1510” in the chromatogram search

For the 0.5 µL injections, the difference was more dramatic: every peak parameter that was investigated showed statistically significant improvements when using the IV liner versus the smaller volume liner (Figure 1). These benefits can be attributed to the IV liner providing a narrow sample transfer band and maintaining resolution from the solvent peak. Improved performance was particularly beneficial for separating closely eluting isobaric semivolatiles, such as benzo[b]fluoranthene and benzo[k]fluoranthene (resolution of 1.83 vs. 1.93 on the smaller and IV liner, respectively).
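For context on resolution values like those quoted above, the sketch below uses the standard baseline-width definition, Rs = 2(t2 - t1) / (w1 + w2), where t is retention time and w is peak width at the base. The retention times and widths in the example are hypothetical and are not the measurements behind the figures in this article; the article does not state which resolution formula was used.

```python
def resolution(t1_min: float, t2_min: float, w1_min: float, w2_min: float) -> float:
    """Chromatographic resolution from retention times and baseline peak widths.

    All four arguments must share the same time unit (minutes here).
    """
    if t2_min < t1_min:
        raise ValueError("peak 2 must elute after peak 1")
    if w1_min <= 0 or w2_min <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * (t2_min - t1_min) / (w1_min + w2_min)


# Hypothetical pair of closely eluting peaks.
rs = resolution(t1_min=10.00, t2_min=10.30, w1_min=0.15, w2_min=0.15)
print(f"Rs = {rs:.2f}")
```

A value of about 1.5 or more is conventionally taken to indicate baseline separation of two peaks of similar size.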

Table 1: Of the experimental comparisons evaluated, 86% (43/50) showed better performance or no statistical difference when using an IV liner with narrow-bore columns

To evaluate how consistent chromatographic performance was, the relative standard deviations (%RSD) of averaged parameters across all compounds in all experiments were compared across the different liner sizes and injection volumes. As shown in Table 1, the intermediate-volume IV liner demonstrated statistically better reproducibility for peak area and height on narrow-bore columns for both injection volumes and at both partial and full capacity. Results for peak symmetry were also more reproducible using an IV liner, except for the 0.5 µL injections where there was no statistical difference, and the mid-capacity comparison where symmetry was only 0.1% better using the larger volume liner.
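The %RSD metric itself is simply the sample standard deviation expressed as a percentage of the mean. A minimal sketch follows; the six replicate peak areas are hypothetical values used only to show the calculation, not data from this study.

```python
from statistics import mean, stdev
from typing import Sequence


def percent_rsd(values: Sequence[float]) -> float:
    """Relative standard deviation (%) using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("at least two replicates are required")
    average = mean(values)
    if average == 0:
        raise ValueError("mean is zero, %RSD is undefined")
    return 100.0 * stdev(values) / abs(average)


# Hypothetical peak areas from six replicate injections (arbitrary units).
replicate_areas = [10250.0, 10110.0, 10390.0, 10180.0, 10320.0, 10270.0]
print(f"%RSD = {percent_rsd(replicate_areas):.2f}")
```

A lower %RSD across replicate injections indicates more reproducible results, which is how the liners are being compared here.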

Table 1 summarises the statistical results of all the experiments and provides a high-level evaluation of the effects of liner choice on chromatographic performance when using narrow-bore GC columns with splitless injection. Of the 50 comparisons, 43 (86%) showed the intermediate-volume IV liner provided improved (22/50) or equivalent (21/50) performance compared to smaller and larger volume liners. Results were most pronounced for area and height, which are critical for accurate peak identification and quantification. Effects were more varied for width, resolution, and symmetry, but these parameters can also be strongly affected by the other method conditions (isothermal temperatures, column phase, solubility, etc.) and elution time.

Based on this data, IV liners provide more balanced and improved chromatographic performance compared to smaller and larger volume liners. When using IV liners with narrow-bore columns, more sample can be injected compared to smaller liners, reducing the negative effects caused by solvent and liner capacity. In addition, compared to larger volume liners, the sample is introduced onto the column more quickly and in a narrower band, taking better advantage of the column’s intrinsic high efficiency. In conclusion, the intermediate volume of IV liners contributes to fast, sensitive, and highly reproducible analyses, which allows labs to maximize the benefits of narrow-bore columns. ■

Dr Mark Badger is Product Manager at Restek.

How one product delivers a deeper understanding of the physical structure of a compound than traditional GPC/SEC characterisation

Triple Detection GPC/SEC is a tried and tested technique for the complete and accurate characterisation of macromolecules including proteins, polysaccharides, and synthetic polymers. Most often the technique combines both light scattering and viscometer detectors with a refractive index (RI) detector to deliver invaluable molecular weight and structure information to researchers.

A powerful new triple detector for Gel Permeation Chromatography / Size Exclusion Chromatography (GPC/SEC) called Trinity has been released by Testa Analytical.

The product is aimed at researchers whose focus is not limited to molecular weights but who need a deeper understanding of the physical structure of a compound, which the combination of Differential Refractive Index (DRI), Multi-Angle Light Scattering (MALS) and Viscometer detectors is designed to provide.

The Trinity Triple Detector delivers absolute values for molecular weights plus absolute intrinsic viscosity and precise Mark-Houwink coefficient data. This enables users to unlock information about both chain flexibility and molecular density.

As a result, the determination of macromolecular branching can be based on solid data rather than assumed or projected data, opening the way to a deeper understanding of the macromolecule under study.

Each detector in the Trinity has independent temperature control to ensure that high levels of precision and reproducibility can easily be achieved from one day to the next. This triple detector GPC/SEC platform is supported by a software suite that combines operation that aims to be intuitive with all the necessary tools to generate and present results from the collected raw data.

Drawing upon over 30 years of experience, Testa Analytical has established itself as a creator and supplier of innovative, high performance chromatography instrument kits and detectors, with many end user and OEM clients around the world. ■

Highly complex samples make it tough to see trace-level semivolatiles. But new Rxi-SVOCms columns are designed specifically to reveal accurate results for the most challenging compounds. Get clear, consistent performance you can count on.

• Outstanding inertness keeps calibrations passing and samples running.

• Consistent column-to-column performance.

• Excellent resolution of critical pairs for improved accuracy.

• Long column lifetime.

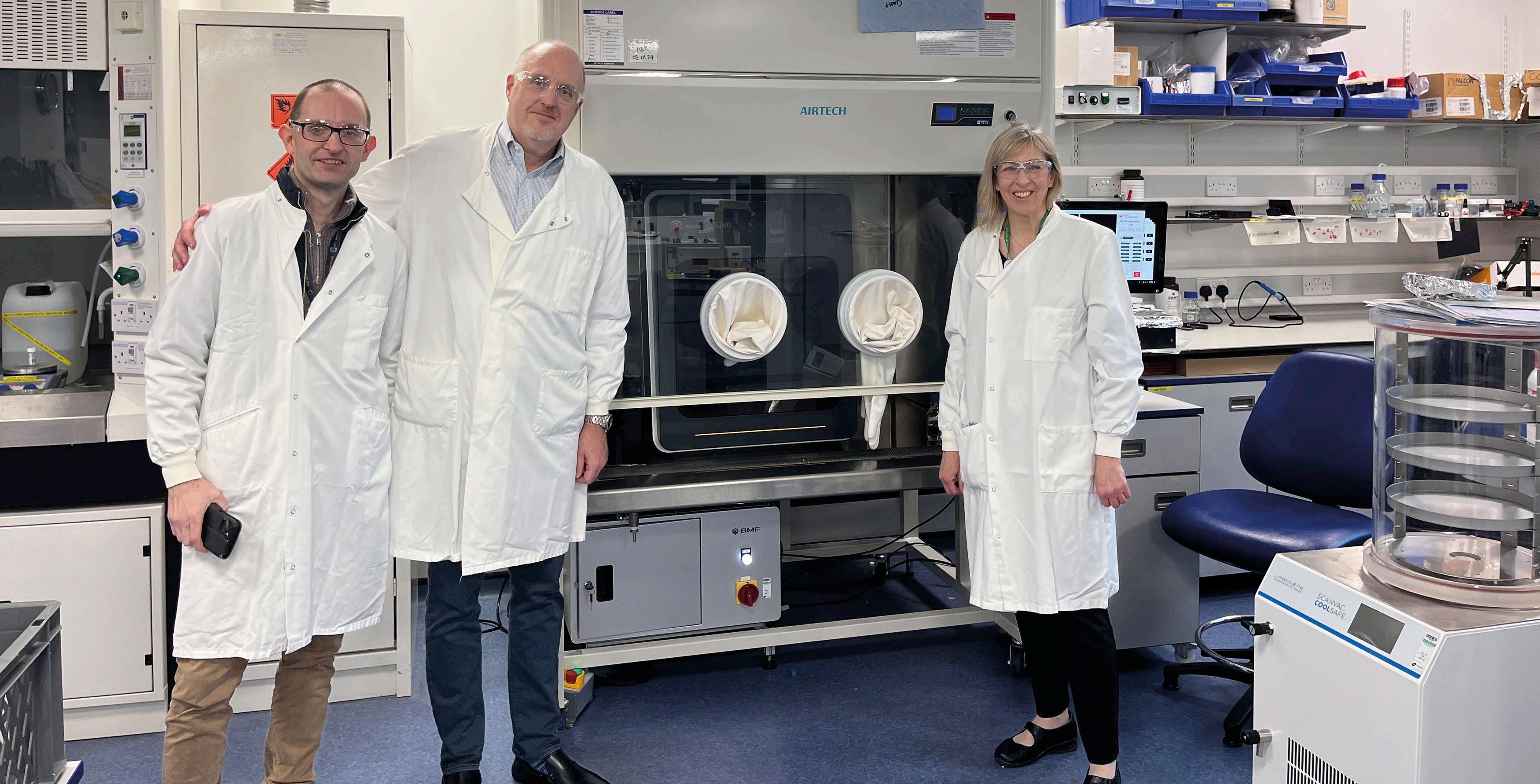

The advancement of semiconductors is among the most vital endeavours in technology

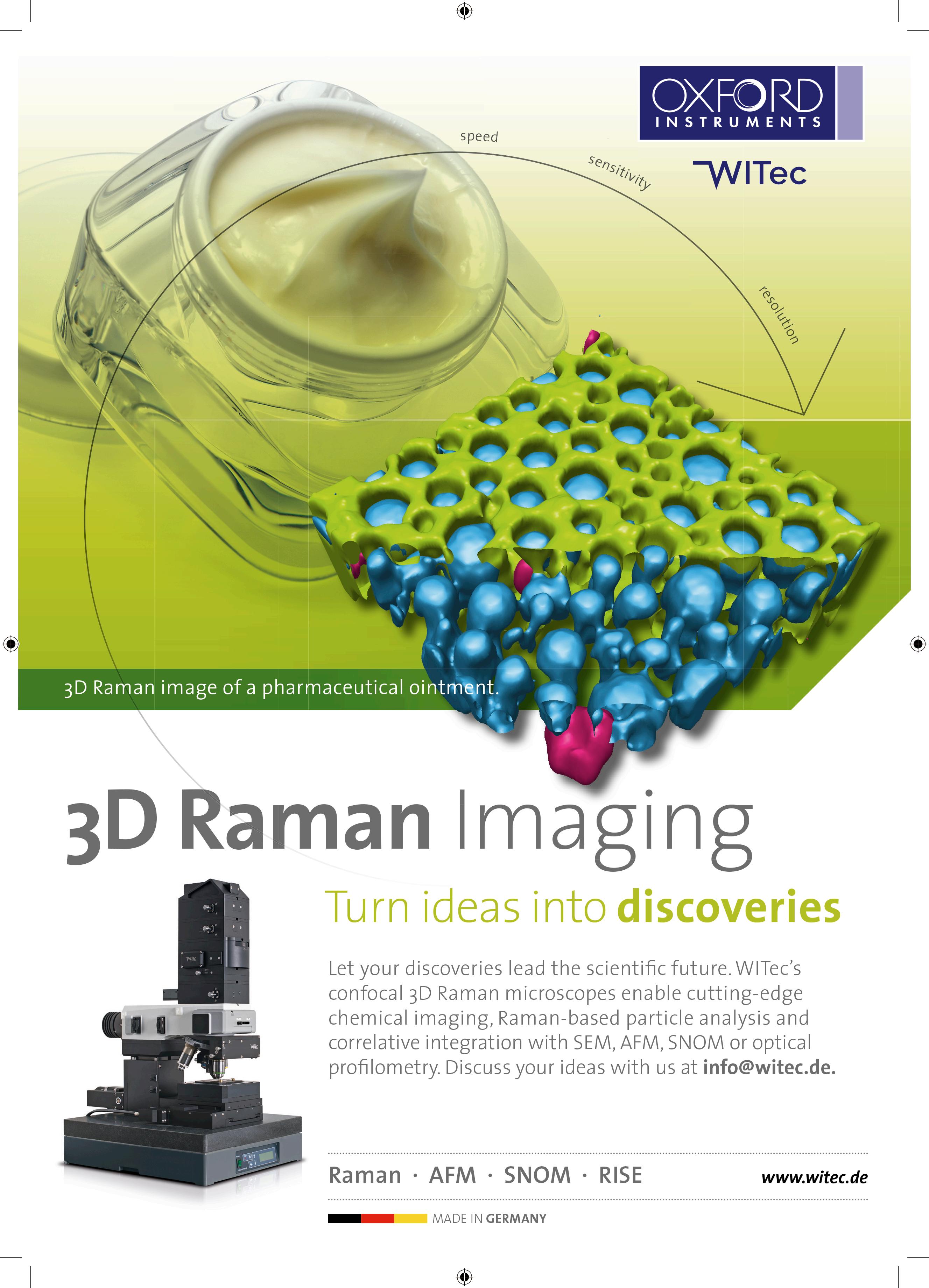

Doping and Topographic Variation Visualised

by Thomas Meyer, Judith Beer and Damon Strom, Oxford Instruments WITec

Semiconductors are the materials from which the engines of the information age are built, and their advancement is among the most vital endeavors in technology. The first step in their production generally involves crystal growth and sectioning into thin wafers. The wafers are then altered using methods such as doping to give them specific electronic properties. Access to the subtlest details of these chemical and structural modifications on the sub-micrometer scale is crucial in new device development and final product quality control.

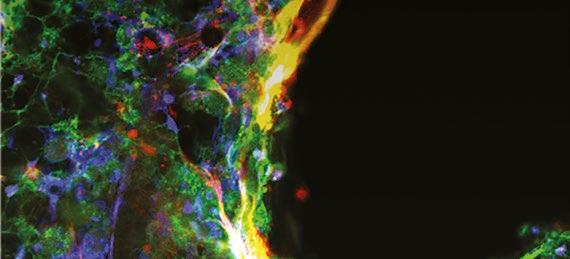

Based on inelastic light scattering by molecules that produces unique energy shifts, Raman spectroscopy can quickly identify material components. In Raman imaging, a spectrum is acquired at each pixel by scanning the sample, which provides local chemical information. Confocal Raman imaging features a beam path that strongly rejects light from outside the focal plane for generating depth scans and 3D measurements.

Raman microscopy is a powerful tool for semiconductor research that can nondestructively acquire high-resolution, spatially resolved information to determine the chemical composition of a sample, visualize component distribution, and characterize properties such as crystallinity, strain, stress or doping. This is particularly valuable for compound semiconductors, which often consist of multiple elements and complex structures.

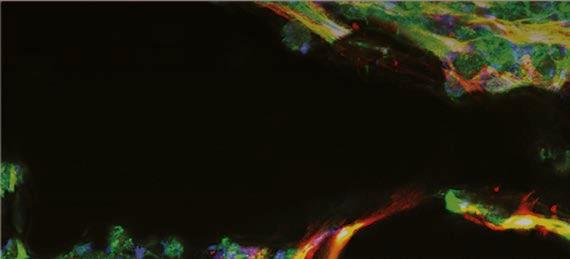

The measurements below demonstrate the insight that correlative Raman imaging can provide to researchers investigating stress, doping and topographic variation in a large-area wafer measurement, and evaluating a Frank-Read source in a 3D correlative Raman and photoluminescence imaging experiment.

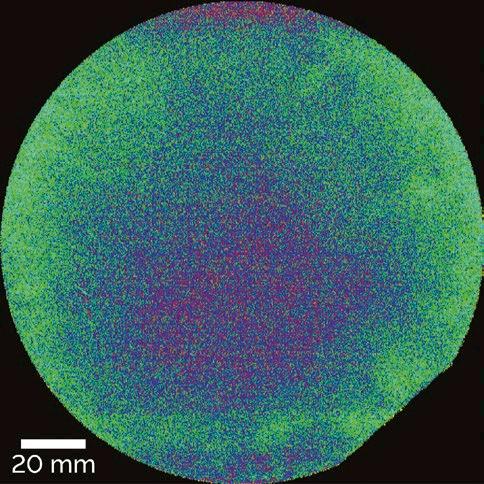

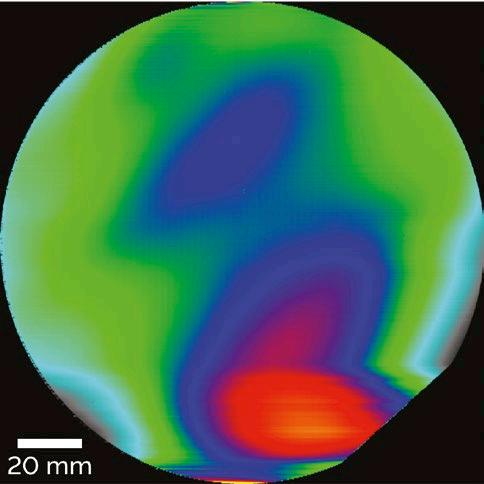

To meet the challenge of maintaining nanoscale precision across the surface of a 150 mm (6 inch) diameter Silicon Carbide (SiC) wafer, a WITec alpha300 Raman system was used. This example was outfitted with a large-area scanning stage and a TrueSurface profilometry module to compensate for topographic variations.

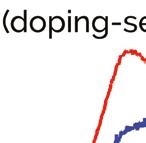

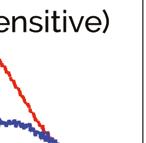

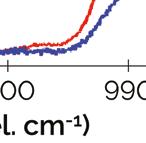

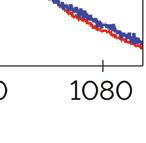

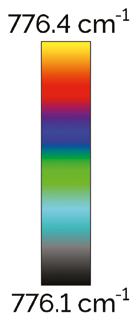

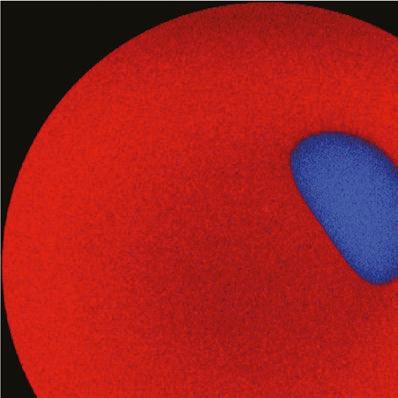

Raman imaging revealed alterations in the doping-sensitive A1(LO) mode at 960 rel. cm⁻¹ of the Raman spectrum (Fig. 1A) for a region within the wafer (Fig. 1B). Compared to the bulk wafer area (red), this region contained a higher doping concentration (blue). The sensitivity of the system enabled the detection of minimal shifts of the E2(high) mode at 776 rel. cm⁻¹, which is sensitive to material stress and strain. In comparison to the overall wafer, more central regions were exposed to compressive stress while distal regions were subjected to relatively higher tensile stress (Fig. 1C). TrueSurface compensated for height variations within the sample and allowed the recording of the wafer’s topography and warpage (Fig. 1D) simultaneously along with the Raman spectral information.

Figure 1: Raman imaging of a 150 mm SiC wafer. A: Raman spectra of two components that differ in the doping-sensitive A1(LO) mode (ca. 990 rel. cm⁻¹). B: Different doping concentration (blue) compared to the bulk wafer area (red), color coded according to (A). C: Distribution of the stress-sensitive E2(high) peak (776 rel. cm⁻¹), revealing compressive peak shifts in the wafer’s center and tensile shifts toward its edge. D: Warpage of the SiC wafer with height variations of up to 40 µm.

A possible origin of stress in crystals, including crystalline semiconductors, is a Frank-Read source (FR-source). The term describes the dislocations and repeating wrapping patterns that result from deformations and alterations in a crystal lattice. These can be detected and located with Raman imaging.

In photoluminescence (PL), photons excite electrons, then fall back to a

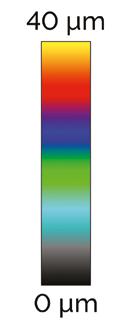

ground state and re-emit a photon at a longer emission wavelength which is characteristic for each material. For semiconductors, the PL-emitted light can serve as an indicator of its bandwidth, as the energy of excited electrons is reduced to the bandgap minimum in the relaxation process. Here, a WITec alpha300 Raman microscope equipped with the TrueComponent Analysis software

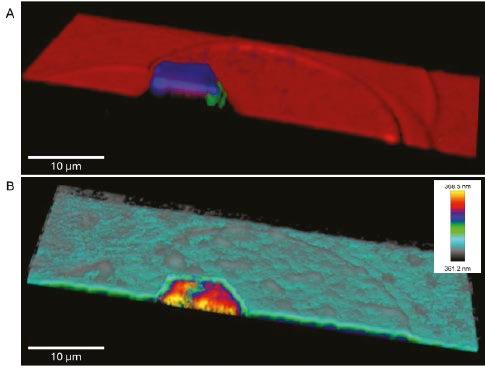

feature was used to generate a highresolution 3D map of an FR-source in GaN (Fig. 2A). The obtained Raman spectra were automatically analyzed to detect spectral differences and identify components. Three different components were found for GaN: The relatively relaxed GaN (red) and two stressed forms (blue, green) within the FR-source. Next, PL signals were analyzed, and the visualized emission peak position (Fig. 2B) shows a different PL fingerprint for the FR-source compared to the overall sample, confirming the alterations in its semiconducting properties.
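The link between a PL peak position and the bandgap follows from the photon energy relation E = hc/λ. As a minimal sketch, the Python snippet below converts between emission wavelength and photon energy. The function names are illustrative, and the 3.4 eV figure is an approximate textbook value for GaN used only as an example, not a measurement from this experiment.

```python
# Planck constant times speed of light, expressed in eV*nm.
HC_EV_NM = 1239.841984


def photon_energy_ev(wavelength_nm):
    """Photon energy in eV for a given wavelength in nm (E = hc / lambda)."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    return HC_EV_NM / wavelength_nm


def emission_wavelength_nm(energy_ev):
    """Wavelength in nm of a photon with the given energy in eV."""
    if energy_ev <= 0:
        raise ValueError("energy must be positive")
    return HC_EV_NM / energy_ev


# Assumption: an approximate textbook bandgap for GaN, for illustration only.
approx_gan_bandgap_ev = 3.4
print(f"{emission_wavelength_nm(approx_gan_bandgap_ev):.1f} nm")
```

Because wavelength and energy are inversely related, a local shift of the PL peak to longer wavelength corresponds to a lower emission energy at that position.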

The examples shown demonstrate the utility of Raman imaging for characterizing compound semiconductors. The alpha300 Raman system set up for large-area scanning measured doping, stress and topography in a 150 mm SiC wafer, and another alpha300 Raman microscope carried out a correlative Raman-PL measurement of GaN that visualized its composition and stress states in three dimensions. Researchers in semiconductor development rely on detailed, conclusive investigations such as these to achieve a comprehensive understanding of their materials and manufacturing processes. The WITec alpha300 line of Raman microscopes offers precise, versatile tools that can help accelerate their rate of advance. ■

Figure 2: High-resolution 3D mapping of GaN with FR-source. A: Raman image generated using TrueComponent Analysis to identify GaN (red) and GaN stressed states (blue, green). B: The color-coded visualization of the photoluminescence (PL) peak position in GaN shows altered PL emission wavelength in the FR-source region.

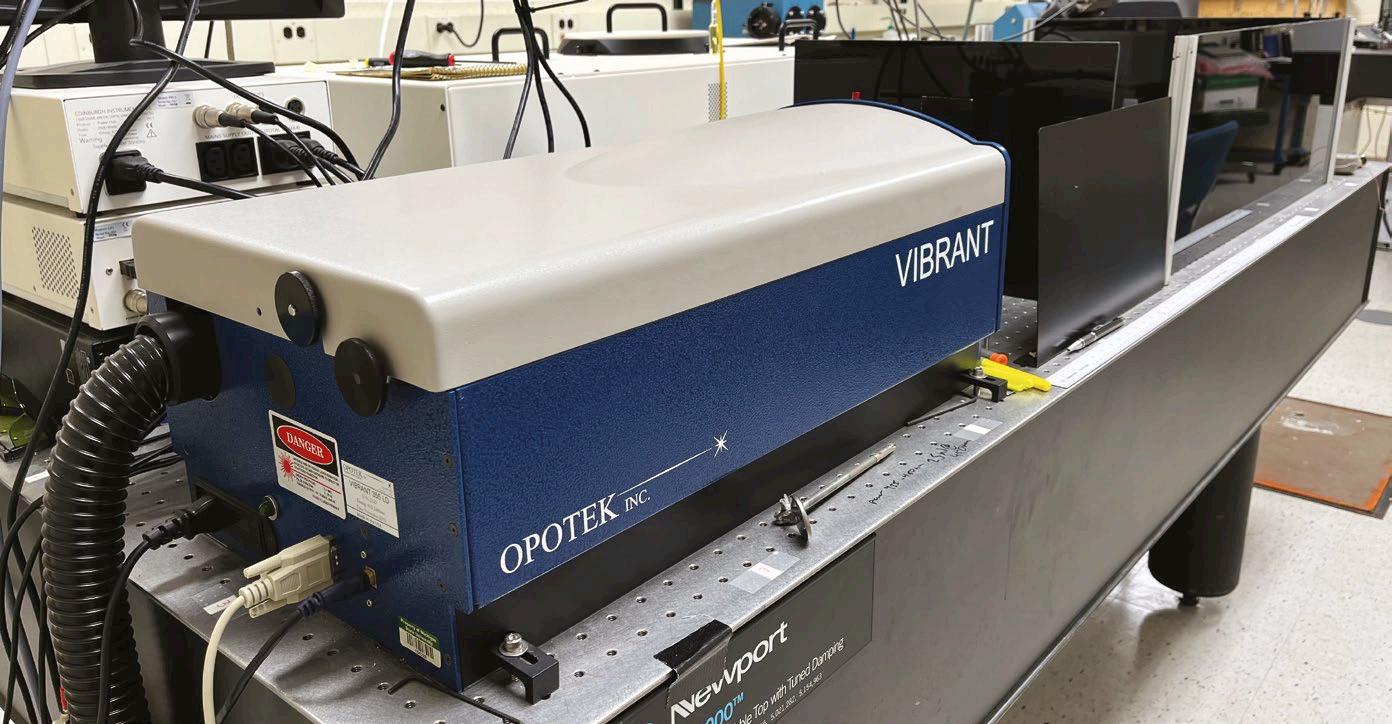

Technological advances have meant that many modern research labs are turning to tunable lasers

In biotechnology, light analysis can be used for various applications, such as identifying biomarkers, monitoring bioreactors, characterising biopharmaceuticals, and detecting contaminants. Techniques such as transient absorption are used to study the mechanistic and kinetic details of chemical processes that occur within just a few picoseconds to a femtosecond – the equivalent of one millionth of one billionth of a second.

To conduct this research, labs require fast lasers that can create an excited electronic state of a molecule on this time scale. Since not all molecules absorb the same wavelengths of light, these lasers must be flexible enough to produce wavelengths across a broad spectrum. Technological advances have meant that many research labs are turning to tunable lasers, or Optical Parametric Oscillators (OPOs).

OPOs have long been used in sophisticated test and measurement applications such as mass spectrometry and photoacoustic imaging. Now, these ‘tunable’ pulsed lasers are being utilised owing to their high resolution and the variety of wavelengths, from visible light to deep UV, at which they can produce nanosecond pulses.

“You don’t want to have to design your chemistry such that it is tailored just to the particular wavelengths you have available in your instrumentation. You want the lasers to be flexible enough to adapt to the chemistry you want to explore,” said Dr. James McCusker, an MSU Foundation Professor in the Department of Chemistry at Michigan State University who leads a research group of PhD students in the study of cutting edge techniques. His group conducts fundamental research on designing and synthesising molecules to absorb the visible part of the spectrum.

“We only want to be limited by our imaginations, not by what wavelengths we can get out of a particular laser,” added Dr. McCusker, who has worked in the field for nearly 30 years.

Dr. McCusker and his team of PhD students study a class of compounds known as transition metal complexes. These compounds are based on elements from the so-called transition block of the periodic table. The focus of the group’s research is to understand how a molecule’s structure, composition and absorptive properties relate to its ability to carry out light-induced chemical reactions.

According to Dr. McCusker, one of the primary areas of research relates to solar energy conversion. Transition metal complexes are an important class of molecules in this field and can be studied using time-resolved spectroscopy to examine the thermodynamics and conversion efficiencies of solar cells, and to assess alternative, less expensive earth-abundant materials for light-to-chemical energy conversion. The same approach supports the design of molecules whose excited-state properties enable new kinds of organic transformations of potential interest to the pharmaceutical industry.

“We are pursuing a systematic examination of chemical perturbations to excited-state electronic and geometric structure,” said Dr. McCusker. “As a result, we will be able to develop a comprehensive picture of how transition metal chromophores absorb and dissipate energy.”

To facilitate this type of research, fast, pulsed lasers are required to selectively excite a molecule to a specific state to study the compound’s excited-state properties. In the early days of laser development, lasers were constructed to operate at very specific wavelengths. Single-wavelength Nd:YAG lasers, for example, are inexpensive and simple to use. However, additional hardware is required to convert the 1064 nm output to a different harmonic wavelength, such as 532, 355, 266 or 213 nm. This adds to the cost of the laser.
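The harmonic wavelengths quoted for the Nd:YAG laser follow from simple arithmetic: the nth harmonic has n times the frequency of the fundamental and therefore 1/n of its wavelength. A minimal Python sketch, in which the function name is purely illustrative:

```python
FUNDAMENTAL_NM = 1064.0  # Nd:YAG fundamental wavelength cited in the article


def harmonic_wavelength_nm(order, fundamental_nm=FUNDAMENTAL_NM):
    """Wavelength of the nth harmonic: the fundamental divided by n."""
    if order < 1:
        raise ValueError("harmonic order must be 1 or greater")
    return fundamental_nm / order


# Orders 1 to 5 cover the fundamental and the four harmonics in the article.
harmonics = {n: harmonic_wavelength_nm(n) for n in range(1, 6)}
for n, wavelength in harmonics.items():
    print(f"harmonic {n}: {wavelength:.1f} nm")
```

The third and fifth harmonics come out at about 354.7 nm and 212.8 nm, which are conventionally quoted as 355 nm and 213 nm. The fixed spacing of these values is what leaves gaps between the available wavelengths.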

“There are gaps between the wavelengths, and the jump between 1064 nm to 532 nm is significant,” said Dr. Mark Little, technical and scientific consultant for Opotek, adding that testing each of those harmonics increases the cost. The Carlsbad, California-based Opotek offers solutions for specialised applications including photoacoustic, diagnostics, hyperspectral imaging, and medical research.

According to Little, OPO lasers can convert the fundamental wavelength of pulsed-mode Nd:YAG lasers to a selected frequency. This tunability enables OPOs to generate light in a broad range of wavelengths that are amplified within the OPO for a usable output beam.

Dr. McCusker and his research group use the Vibrant 355 II Nd:YAG-pumped OPO laser from Opotek, which can quickly provide a tunable wavelength output from 300 to 2400 nm and is used for both time-resolved absorption and time-resolved emission requirements.
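In an OPO, each pump photon is split into two lower-energy photons, usually called the signal and the idler, and energy conservation ties their wavelengths together: 1/λ_pump = 1/λ_signal + 1/λ_idler. The Python sketch below applies that general relation. The 355 nm pump is an assumption based on the product name, the 500 nm signal is an arbitrary example, and the article does not describe how the instrument itself covers its full tuning range.

```python
def idler_wavelength_nm(pump_nm, signal_nm):
    """Idler wavelength from energy conservation in parametric conversion.

    Uses 1/pump = 1/signal + 1/idler, with all wavelengths in nm.
    """
    if pump_nm <= 0:
        raise ValueError("pump wavelength must be positive")
    if signal_nm <= pump_nm:
        raise ValueError("signal wavelength must be longer than the pump")
    return 1.0 / (1.0 / pump_nm - 1.0 / signal_nm)


# Assumptions for illustration only: 355 nm pump, 500 nm signal.
assumed_pump_nm = 355.0
example_signal_nm = 500.0
print(f"{idler_wavelength_nm(assumed_pump_nm, example_signal_nm):.1f} nm")
```

Tuning the signal wavelength moves the idler in the opposite direction, which is how a single fixed pump can reach a continuous range of output wavelengths. The relation only yields wavelengths longer than the pump, so any output shorter than the pump wavelength would need a further conversion step that is outside what the article covers.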

Dr. McCusker has relied exclusively on Opotek’s OPO lasers for the portion of his research program focused on nanosecond time-resolved spectroscopy since 1995, when he was an assistant professor at UC Berkeley.

Much of the research conducted by Dr. McCusker and his team, such as solar energy conversion studies, involves designing and synthesising molecules that absorb light in the visible part of the spectrum.

However, he notes that for organic carbon-based or aromatic compounds, researchers may want to study
