COLUMBIA SCIENCE REVIEW

Volume 19 Issue II

WINTER 2022-2023

Cover illustrated by Ananya Raghavan

Fair Use Notice

Columbia Science Review is a student publication. The opinions represented are those of the writers. Columbia University is not responsible for the accuracy and contents of Columbia Science Review and is not liable for any claims based on the contents or views expressed herein. All editorial decisions regarding grammar, content, and layout are made by the Editorial Board. All queries and complaints should be directed to the Editor-in-Chief.

This publication contains or may contain copyrighted material, the use of which has not always been specifically authorized by the copyright owner. We are making such material available in our efforts to advance understanding of issues of scientific significance. We believe this constitutes a “fair use” of any such copyrighted material, as provided for in section 107 of the US Copyright Law. In accordance with Title 17 U.S.C. Section 107, this publication is distributed without profit for research and educational purposes. If you wish to use copyrighted material from this publication for purposes of your own that go beyond “fair use,” you must obtain permission from the copyright owner.

EDITORIAL BOARD

CO-EDITOR-IN-CHIEF EMILY SUN MANAGING EDITOR AMANDA LEONE CHIEF DESIGN OFFICER ALEXANDRA COCHON CREATIVE CHAIR SARAH SANZEBIN

WRITERS RACHEL LEE, ANGEL LATT, ANUVA BANWASI, ISABELA TELLEZ, JENNA EVERARD, JIANNA MARTINEZ, JULIA GORALSKY, LAUREN GORALSKY, LAYA GOLLAMUDI, JULIETTE SHANG, AIDAN EICHMAN, TATA TIRAPONGPRASERT, DAVID CHANG, RAMYA SUBRAMANIAN, SYLVIE OLDEMAN, THILINA BALASOORIYA, NICHOLAS DJEDJOS, NICHOLAS LOFASO, MARIA VALERIO ROA, DANIEL SHNEIDER, NOAH WOHLSTADTER, CHARLIE BONKOWSKY, ALLIE LIN

CO-EDITOR-IN-CHIEF AIDA RAZAVILAR DIRECTOR OF LOGISTICS NICHOLAS LOFASO CHIEF ILLUSTRATOR YASSINE ABAAKIL ILLUSTRATORS ALICE WANG, ELIZABETH TORNA, KENDALL DOWNEND, SREOSHI SARKAR, TIFFANY QIAN, YI QU, AUDREY ACKEN, CINDY JIN, JULIAN MICHAUD, LUANA LIAO, NINA KORNFELD, ANDRE ANDONNINO, ARUNA DAS, SUMMER RENCK, MACARENA HEPP

LAYOUT DESIGNERS MONICA RIVERA, YASSINE ABAAKIL, ALEXANDRA COCHON, ISHAAN BARRETT EDITORS AMANDA PENG, EDWARD KIM, JIMMY ZHANG, NICHOLAS TAN, PRIYA RAY, SARAH BOYD, SHIVANI TRIPATHI, VANESSA YANG, ASHLEY GARCIA, KEVIN ZHANG, TREY PURVES, CADEN LIN, KYRA

EXECUTIVE BOARD

PRESIDENT JOSH YU

SECRETARY RANGER KUANG

PR CHAIR SONALI DASARI

SENIOR OCM ELLA SAFFRAN

OCMs EMILIYA AKHUNDOVA, KATHERINE WU, MIRIAM AZIZ, EMILY SARKAR, ASHLEY HOUSE, PORTIA CUMMINGS, PRISCILLA CASTRO, SOLE TESFAYE, GREG TAN, OSHMITA GOLAM, LYNN NGUYEN

VICE PRESIDENT FARIHAH CHOWDHURY

TREASURER BRIAN CHEN

MEDIA CHAIR LUKE LLAURADO OCMS JENESIS WILLIAMS, KARSHISH KUMAR, MIRACLE ST. KITTS, SASHA MAMAYSKY, ZARYAAB KHAN, ZEKE JOHNSON, ANDREW CHUN, PRANAY TALLA

SENIOR ADVISORS HANNAH LIN, AROOBA AHMED

The Executive Board represents the Columbia Science Review as an ABC-recognized Category B student organization at Columbia University.

LETTERS FROM THE EDITORS / Emily Sun, Aida Razavilar

ALIEN LINGUISTICS / Charlie Bonkowsky

BOSTON’S BIOTECH BOOM / Nicholas Lofaso

BESIDES DESIGNER BABIES / Sylvie Oldeman

BIOPROSPECTING / Julia Goralsky

CANNABIS AMNESIA / Allie Lin

THE SOUND OF SCIENCE / Angel Latt

ARE HOSPITALS SECRETLY KILLING US? / Thilina Balasooriya

WHAT THE CELL? / Lauren Goralsky

Dear Reader,

Hello and welcome to the Winter 2022-2023 edition of the Columbia Science Review (CSR)!

As someone who has engaged in scientific communication, I know that the scientific community can sometimes appear exclusive and impersonal. However, I have also learned through my involvement in CSR that science encompasses a wide range of disciplines and has the wonderful ability to bring people together. It is my greatest hope that this edition will show the fun and exciting side of science, inspiring you to explore science through your unique perspective.

Our team has worked hard to bring you a diverse set of fascinating topics, ranging from the intriguing connection between weed and false memories to the possibility of communicating with aliens. In this edition, you will also delve into the mysteries of the human brain, explore the development and treatment of various medical conditions, learn about pharmaceuticals rooted in nature, and investigate novel biotechnological innovations and their applications.

While the content may be easily comprehensible, breaking down these complex topics into digestible bites is not a simple task. It is truly a testament to the dedication of the CSR team that we are able to present these ideas in such a readily accessible manner. I would like to express my deepest gratitude to every writer, editor, layout designer, and illustrator who has contributed to this edition. You are the heart and soul behind CSR— thank you for everything.

Finally, it is with bittersweetness that I announce that this will be my final semester in CSR. Over the past four years, CSR has been an essential part of my Thursday evenings, and I am endlessly grateful for the opportunity to be part of such a wonderful community. Though I am sad to be leaving, I am confident that CSR has a bright future and will continue to thrive with the incredible team and leadership. And congratulations to my fellow graduating seniors :)

Without further ado, I invite you to dive into the pages of this edition. Enjoy!

With love,

Emily Sun Co-Editor-in-Chief


Hello dear readers!

For those of you on the other side of this page, we are pleased to present the Winter 2022-2023 edition of the Columbia Science Review (CSR)!

It is increasingly evident that, alongside the acceleration of scientific innovation and advancement, the scientific community has an important responsibility to support the evolution of scientific communication. The foundations of science have always been rooted in understanding the world around us and, by that token, in developing a better sense of our own humanity. For that reason, we hope that this edition, like the ones before it, is infused with imagination and narrative in an effort to return some of that necessary humanity to science. It is through this that we hope to make science accessible and engaging, and to foster multidisciplinary discourse in a field that is, necessarily, shaped by society. I hope that something in this issue sparks some interest, and maybe even sparks conversation.

Our team has curated a captivating collection of articles on a wide range of topics, including the intriguing connection between drugs and false memories, language conceptualized beyond a human understanding of it, and music and the mind, to name a few. Though these are nebulous and difficult concepts to write about, I am, as always, impressed by the work of our extended team of writers and editors in thoughtfully and iteratively developing these ideas into their final form. Coupled with the work of our illustrators and layout designers, they are able to capture the vision and intrigue of science. The multidimensionality of science is reflected in each of their efforts, and with that I want to express my greatest gratitude for the way they have brought CSR and these pages to life.

After four wonderful years, I am announcing my departure from CSR, and I leave with confidence in its future. CSR has been an integral part of my time at Columbia, and a community that helped me grow in so many dimensions within and outside of science. The evolution of the club over these past years, and particularly the passion and revitalized energy of this past one, makes me incredibly excited for what is in store. I have so much love and admiration for our team. This edition is a further testament to each of their particular talents, so without further delay, please immerse yourself in this issue. Please enjoy the journey as well as the ends or inspiration it may lead you to.

Best wishes!

Aida Razavilar Co-Editor-in-Chief



Alien Linguistics

Written by Charlie Bonkowsky

Illustrated by Aruna Das


There’s an unresolved paradox at the heart of astronomy. No, it has nothing to do with quantum physics, dark matter, black holes, or anything else that threatens to shake the foundations of modern physics. Instead, it’s a paradox of what we don’t see, don’t hear, and don’t find. Our galaxy contains billions of stars, so probability, as well as modern evidence from our telescopes, dictates that many should have planets that can support life. And even if only a tiny fraction of those planets develops life, the galaxy should be filled with their lights and communications.

“If life is so easy, someone from somewhere must have come calling by now,” we’re forced to conclude [1]. Or, in the words of Enrico Fermi [2], for whom this Fermi paradox is named, “where is everybody?”

Scientists have proposed numerous theories about why we don’t see the hallmarks of alien life everywhere we look: a Great Filter, for example, some barrier that prevents life from ever developing interstellar travel or communication. Maybe the formation of life from the primordial sludge is a one-in-a-trillion chance, a freak accident; maybe civilizations wipe themselves out in nuclear fire before they can reach the stars.

But there’s another possibility. Perhaps the galaxy is filled with signals flying back and forth—but we, bound by conventions of human language and understanding, simply don’t know how to read them.

How would aliens communicate with us?

In the summer of 1899, the inventor Nikola Tesla reported [3] that he was receiving strange, repetitive radio signals with no Earthly source. These signals disappeared when Mars disappeared from the Colorado horizon where his equipment was set up, and he ultimately concluded that “they must emanate from Mars…the greetings of one planet to another.” These signals carried a mathematical pattern: 1-2-3-4, which convinced him of their intelligent origin: “I believe the Martians used numbers for communication,” he said, “because numbers are universal.”

We know now that there are no Martian civilizations to send radio signals our way*—but many scientists, engineers, and astronomers scan the skies every day with radio telescopes in search of this kind of intelligent transmission. But what happens if we receive one? Learning that we’re not alone in the universe is the easy part; learning to communicate with an alien species may be infinitely more difficult.

When studying unknown languages, linguists begin [4] with repeated units of sound, called phonemes, to identify the patterns which form meaning. Or, if there are no speakers, as in the case of an archaic or dead language, written language can be analyzed for the smallest units of meaning, called morphemes. But doing so requires immense amounts of data, an entire corpus of language to pore through, and significant amounts of context that wouldn’t be available in an alien message. Take the case [5] of the Etruscan language, a millennia-old language which survives only through written tablets. Decoding it relies not only on strict formal analysis, but also on the location of words: the words “god” or “gods” are likely to be found in tablets stored in a temple, but not on the inscriptions of banquet drinking vessels.

Any alien language would lack that archaeological context—nor would it even be human. Noam Chomsky’s theory of Universal Grammar posits that certain structures of language are universal, innate: shared across the body of all possible languages. But Chomsky’s theory is far from definitive. Other linguists propose [6] that “third factors — body shape, what your planet is like — would have more to do with language…little has to be innate.” If that’s true, it would be a heavy lift indeed to find linguistic commonalities with an alien species hailing from a vastly different world.

How would we communicate with aliens?

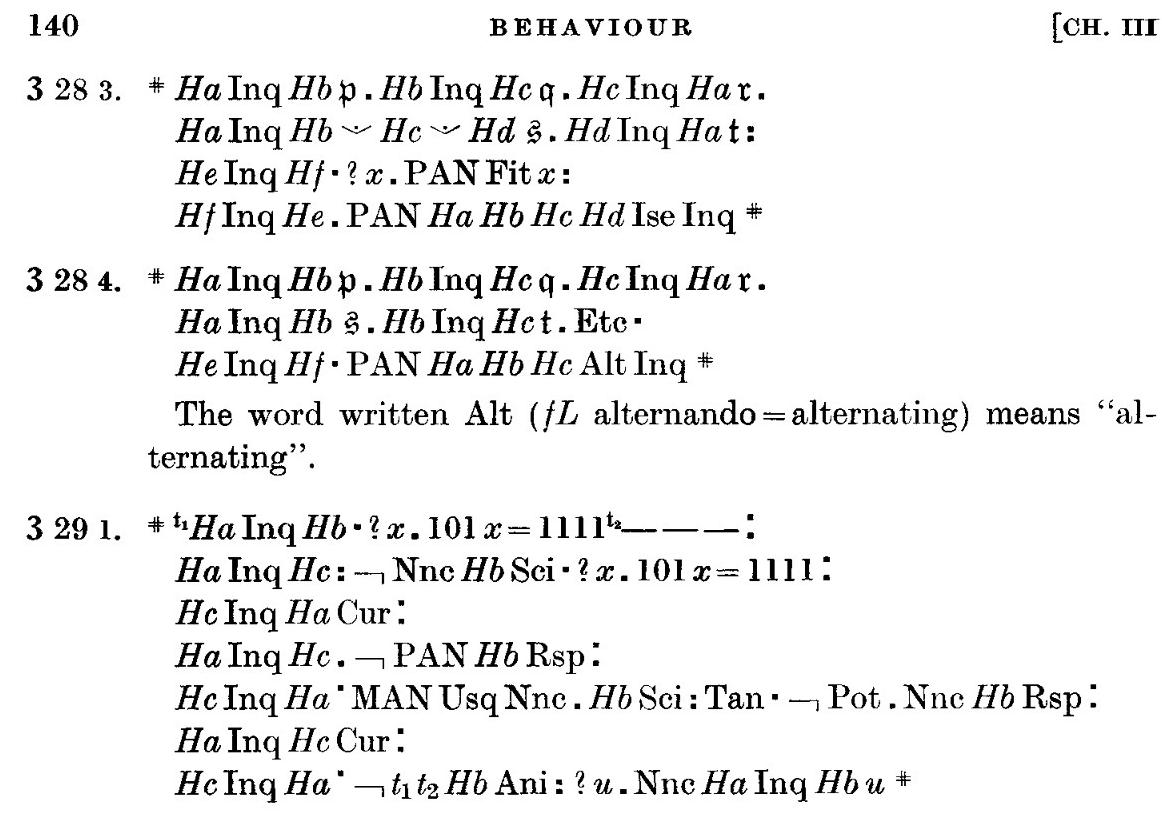

Mathematics is often floated as a universal language, an idea dating back to Tesla and his not-quite-Martian messages. The first artificial language built for extraterrestrial communication was Lincos (from lingua cosmica), published by Dr. Hans Freudenthal in 1960. Freudenthal was a mathematician and professor of geometry who wrote Lincos while at the University of Utrecht in the Netherlands. In the book’s introduction, he wrote that “my purpose is to design a language that can be understood by a person not acquainted with any of our natural languages or even their syntactic structures. The messages communicated by means of this language will contain not only mathematics, but in principle the whole bulk of our knowledge.”

Lincos was designed as a spoken language, not a written one, although it was meant to be “spoken” over unmodulated radio waves. The first messages sent to establish communications with Lincos would contain [7] the mathematical dictionary: a series of radio pulses, 1-2-3-4, followed by mathematical operators like +, -, =, <, and >, to demonstrate our mathematical notation and knowledge. Successive messages would define ever more complex subjects: human measures of physical concepts like time, mass, and space, or descriptions of human behavior and interactions.
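To make the idea of a "mathematical dictionary" concrete, here is a minimal Python sketch of how such an opening lesson might be laid out. It is only an illustration: the operator names (`PLUS`, `EQUALS`, and so on) and the dot notation for pulses are assumptions made for this example, not Freudenthal's actual Lincos vocabulary.

```python
import operator

# Hypothetical signal names for each operator. Real Lincos defines its own
# radio "words"; these labels are placeholders for illustration only.
OPERATOR_SIGNALS = {"+": "PLUS", "-": "MINUS", "=": "EQUALS", "<": "LESS", ">": "GREATER"}


def pulses(n: int) -> str:
    """Encode a positive integer as that many pulses, e.g. 3 -> '...'."""
    if n < 1:
        raise ValueError("this sketch only encodes positive integers")
    return "." * n


def counting_message(limit: int) -> list[str]:
    """First lesson: simply count upward, one burst of pulses per number."""
    return [pulses(n) for n in range(1, limit + 1)]


def arithmetic_lesson(a: int, op: str, b: int) -> str:
    """Later lesson: show an operator in use, e.g. '. PLUS .. EQUALS ...'."""
    functions = {"+": operator.add, "-": operator.sub}
    result = functions[op](a, b)
    parts = [pulses(a), OPERATOR_SIGNALS[op], pulses(b), OPERATOR_SIGNALS["="], pulses(result)]
    return " ".join(parts)


def comparison_lesson(a: int, b: int) -> str:
    """Show an ordering between two different numbers, e.g. '. LESS ...'."""
    if a == b:
        raise ValueError("comparison lessons need two different numbers")
    symbol = "<" if a < b else ">"
    return " ".join([pulses(a), OPERATOR_SIGNALS[symbol], pulses(b)])


if __name__ == "__main__":
    print(counting_message(4))
    print(arithmetic_lesson(1, "+", 2))
    print(comparison_lesson(1, 3))
```

The point of the sketch is that a listener who has already seen the counting lesson can, in principle, infer what an unfamiliar signal means by seeing it used repeatedly between numbers they already recognize.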

Professor J.D. Kraus at Ohio State, who helped design and build the Big Ear radio telescope used to listen for alien communications, had [3] the same idea for initiating communication: “Is there anything that we and the dwellers on another planet even have in common? Is there any common semantic frame of reference? ... The idea of numbers or counting would be common,” he said.

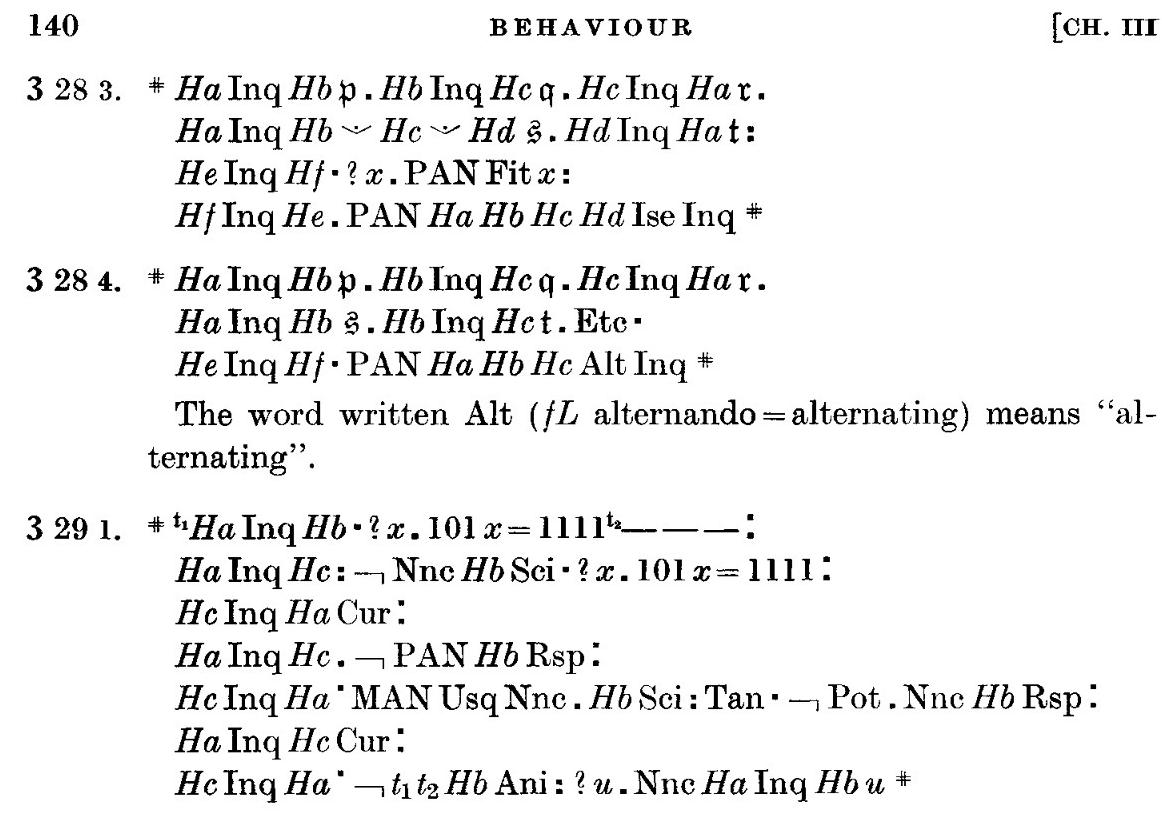

Potential conversations about human behavior in Lincos, from Dr. Freudenthal’s book creating the language. [8]


“We might suppose that the other-men could initiate their beacon transmission with a series of dots: ., .., …, …., etc.”

Yet, for almost four decades, Lincos went unused, an academic exercise and nothing else. Even Yvan Dutil, who, in 1999, was one [9] of a team of researchers who beamed a message in Lincos to four nearby stars, said of it: “Freudenthal’s book is the most boring I have ever

What if we don’t like what we hear?

The other question is: should we be trying to communicate with aliens? Recent science-fiction novels, like Cixin Liu’s award-winning Three-Body Problem [10], have explored the dark forest paradox: that it’s the nature of intelligent life to destroy other intelligent life. As the book explains it:

The universe is a dark forest. Every civilization is an armed hunter stalking through the trees like a ghost...the hunter has to be careful, because everywhere in the forest are stealthy hunters like him.

If he finds other life — another hunter, an angel or a demon, a delicate infant or a tottering old man, a fairy or a demigod — there’s only one thing he can do: open fire and eliminate them. In this forest, hell is other people. An eternal threat that any life that exposes its own existence will be swiftly wiped out. This is the picture of cosmic civilization. It’s the explanation for the Fermi Paradox.

(See also [Columbia Science Review Volume 19, Issue I])

Even if they can’t understand the language we use to transmit, we might be communicating the most important detail: where we are, and how to find us.

Works Cited

[1] Overbye, Dennis. “The Flip Side of Optimism About Life On Other Planets.” The New York Times, 3 Aug. 2015, www.nytimes.com/2015/08/04/science/space/the-flip-side-of-optimism-about-life-on-other-planets.html.

[2] Jones, Eric. Los Alamos National Laboratory, 1985, “Where Is Everybody?” An Account of Fermi’s Question.

[3] Corum, Kenneth, and James Corum. NASA, 1996, Nikola Tesla and the Planetary Radio Signals.

[4] Yollin, Patricia. “UC Linguistics Students Get Lesson of Lifetime.” SFGATE, San Francisco Chronicle, 11 Feb. 2012, www.sfgate.com/bayarea/article/UC-linguistics-students-get-lesson-of-lifetime-3266422.php.

[5] Rogers, Adelle. “Theories on the Origin of the Etruscan Language.” Purdue University, ProQuest Dissertations & Theses, 2018.

[6] Castelvecchi, Davide. “The Researchers Who Study Alien Linguistics.” Nature News, Nature Publishing Group, 1 June 2018, www.nature.com/articles/d41586-018-05310-x.

[7] Oberhaus, Daniel. “How to Build a Language to Communicate with Extraterrestrials.” The Atlantic, Atlantic Media Company, 6 Apr. 2016, www.theatlantic.com/science/archive/2016/04/math-language-extraterrestrials/477051/.

[8] Freudenthal, Hans. Lincos. North-Holland Publ. Co., 1960.

[9] Dutil, Yvan, and Stéphane Dumas. “Annotated Cosmic Call Primer.” Smithsonian.com, Smithsonian Institution, 26 Sept. 2016, www.smithsonianmag.com/science-nature/annotated-cosmic-call-primer-180960566/.

[10] Liu, Cixin. The Three-Body Problem. Translated by Ken Liu, Tor Books, 2014.


Boston’s Biotech Boom

If you know anything about the biotechnology industry, you probably know that the Greater Boston area is one of the world’s main life science hubs. How did it get to be this way, and what does the future have in store for it?

Boston wasn’t always a major center of this high-tech industry; in fact, the industry itself wasn’t always the powerhouse that it is today. While the general concept of using biology to mass-produce something isn’t novel (just look at alcohol breweries as an example), the modern biotech industry takes things to another level entirely.

The first major step in biotech’s explosive growth was Watson and Crick’s famous 1953 discovery of the double-helix structure of DNA, the genetic material. This created an enormous interest in the fundamental nature of DNA; scientists wanted to learn more about what it truly was and how it could potentially be manipulated to solve other problems across biology and related fields, such as seemingly untreatable genetic disorders and computer information storage.

One of the discoveries to come out of this era was recombinant DNA, which was the true kickstarter of the biotech revolution. By using restriction enzymes to “snip” DNA, scientists realized that they could introduce new strands of DNA that the cells would then “pick up” and integrate into their own genomes [1]. This was the very beginning of genetic engineering as a field, and the basis for the world’s first true biotech firm, Genentech, founded in 1976.

Boston’s own history with this field is a little more complicated. As with many new technologies, the idea of editing DNA to drastically change living organisms made many people uncomfortable [2]. So in 1976, the same year Genentech was founded, the city council of Cambridge passed a series of regulations regarding the industry. This created much more certainty around what was and wasn’t allowed, which made investors much more comfortable with the idea of funding biotech companies in the city [2].

Written by Nicholas Lofaso Illustrated by Yassine Abaakil

In 1978, Biogen was founded; generally agreed to be the first modern biotech company in the Boston area, it represented the start of a new, major sector in Massachusetts’s economy [3]. Focusing on neurological diseases and disorders, Biogen has become one of the biggest players in the entire industry. Their product lineup includes drugs for Alzheimer’s, MS, muscular atrophy and others, while their current pipeline includes promising new treatments for these diseases and more, such as depression and ALS [4, 5].

These accomplishments and innovations are impressive, but the revolution that Biogen essentially started is even more impressive. There are now over 1,000 biotech companies in Greater Boston, making up one of the largest hubs in the world and forming a key backbone of the Massachusetts economy [6]. These companies are located across the entire city, with the largest concentrations surrounding Cambridge’s Kendall Square and Boston’s relatively new Seaport district. They make up everything from more traditional pharmaceutical companies to some of the most barrier-breaking startups around, working at the forefront of our knowledge of the life sciences.

This industry is so successful, in fact, that Boston can’t seem to build enough new lab space to meet demand. Many of the new development projects happening in or around the city right now incorporate some form of space for the life science industry, including the redevelopment of Suffolk Downs that will add more than 5.2 million square feet of lab space to the region [7].

While Cambridge’s economic policies definitely played a large role in kicking off this boom, they don’t fully explain why Boston has seen such success in recent years. When you consider the rest of the region as a whole, though, things start to make much more sense.

Boston is, at its core, essentially a massive college town. There are over 138,000 undergraduate and graduate students in the city during the school year, with 29 colleges and universities in the city proper; this doesn’t even include schools that are outside the city limits such as Harvard, MIT, and Boston College [8]. This creates a constant flow of talent for companies in the city and means that there is an almost symbiotic relationship between industry and academia. A great example of this is MIT professor and Broad Institute core member Aviv Regev. A founding member of the Human Cell Atlas (an organization whose goal is to create an online dataset of every type of cell in the human body), she spent years running a computational biology lab in academia before being recruited by Genentech to run their early research and development team [9]. Relationships like these are incredibly important for development and happen far more easily when academia and industry are so close to each other, both physically and intellectually.

What about New York City, though? With so many institutions of higher learning and so much talent gathered here, we could be a prime candidate for the next biotech revolution. The New York metropolitan area is no stranger to the pharmaceutical industry, either–New Jersey has been home to many manufacturing and office centers for these companies for years [10]–but biotech overall has not been as important an industry here as others.

However, this might be changing. NYU Langone recently opened the city’s largest biotech incubator, encouraging growth of the industry by providing many of the resources and stability that startups desire [11]. With any luck, we’ll have a similar level of success as Greater Boston, allowing for a new era of the city’s economy and becoming home to a new generation of scientific, medical, and technological progress.

References

1. Smith, J. Humble Beginnings: The Origin Story of Modern Biotechnology. (2020, Dec. 23). LaBioTech.eu. Retrieved from https://www.labiotech.eu/in-depth/history-biotechnology-genentech/.

2. Khalid, A. How Boston Became ‘The Best Place in the World’ To Launch A Biotech Company. (2017, June 19). WBUR Boston. Retrieved from https://www.wbur.org/news/2017/06/19/boston-biotech-success.

3. About Biogen. Biogen.com. Retrieved from https://www.biogen.com/company.html.

4. Medicines. Biogen.com. Retrieved from https://www.biogen.com/medicines.html.

5. Science & Innovation: Our Pipeline. Biogen.com. Retrieved from https://www.biogen.com/science-and-innovation/pipeline.html.

6. Green, R. Boston Named World’s Top Biotech Hub as the City’s Leading Biotech Companies Continue to Make Major Breakthroughs. (2021, Oct. 27). Yahoo.com. Retrieved from https://www.yahoo.com/video/boston-named-worlds-top-biotech-122424885.html.

9. Aviv Regev: Head, Executive Vice President, Genentech Research and Early Development. Genentech. Retrieved from https://www.gene.com/scientists/our-scientists/aviv-regev.

10. New Jersey’s Life Sciences: By the Numbers. HealthCare Institute of New Jersey. Retrieved from https://hinj.org/life-sciences-new-jersey/new-jerseys-life-sciences-by-the-numbers/.

11. Landi, H. NYU Langone Opens NYC’s Largest Biotech Incubator with Backing from Biopharma Giants. (2019, Dec. 17). Fierce Healthcare. Retrieved from https://www.fiercehealthcare.com/tech/nyu-langone-biolabs-open-up-new-york-city-s-largest-biotech-startup-incubator.

Besides Designer Babies

Written by Sylvie Oldeman

Illustrated by Arooba Ahmed

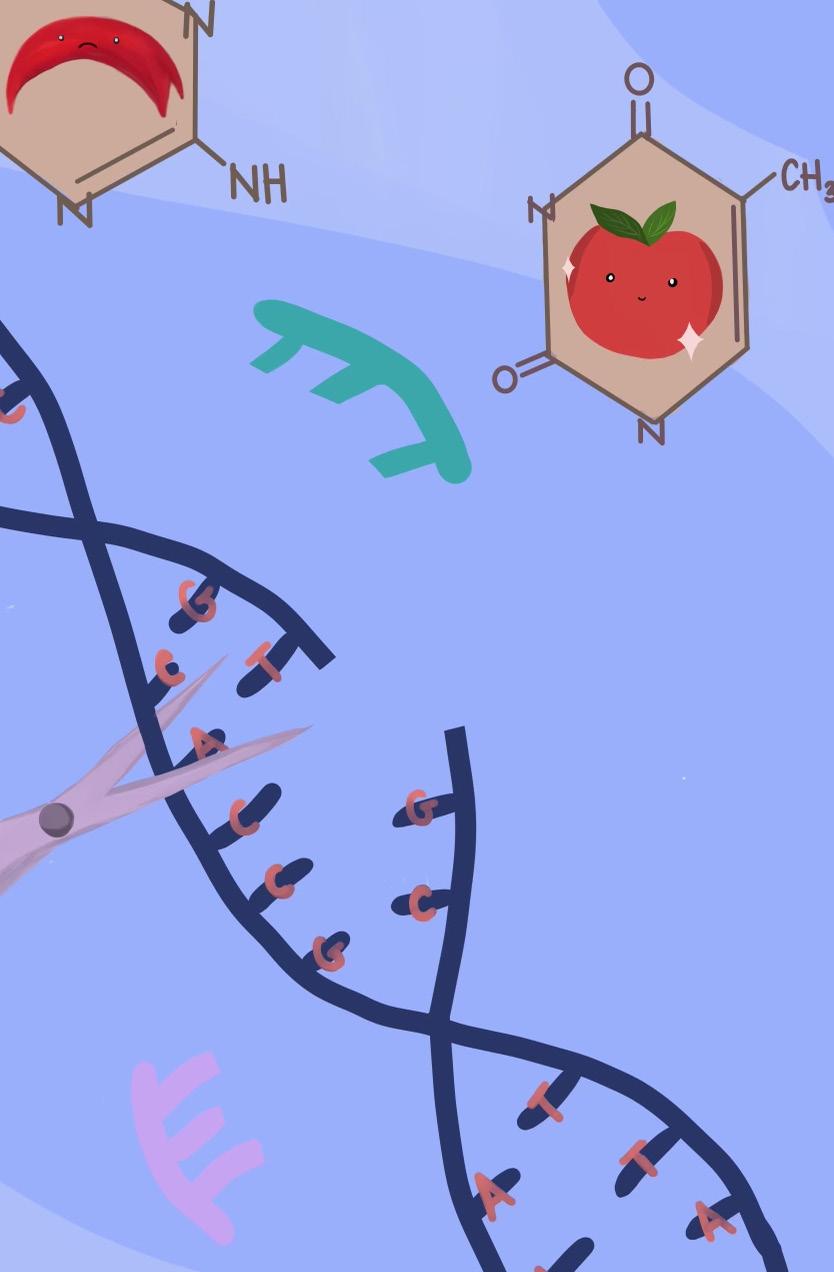

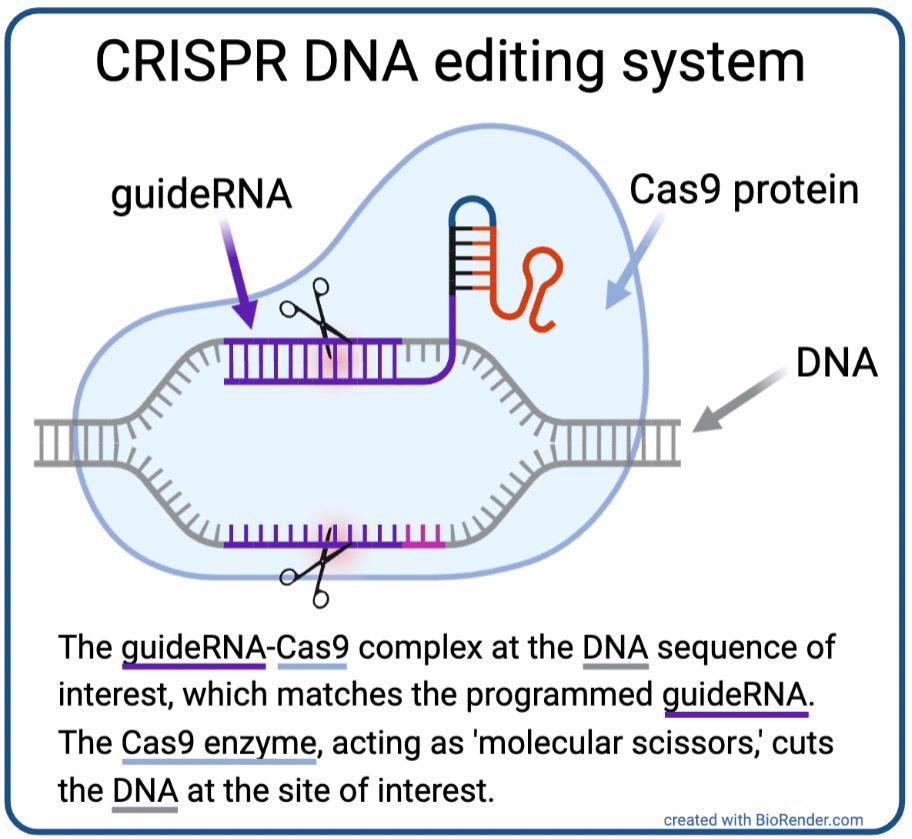

Since Emmanuelle Charpentier and Jennifer Doudna’s publication on the successful application of CRISPR technology to genome editing sparked a mainstream debate regarding the bioethics of editing the embryonic genome, the term “CRISPR” has been widely thrown around the internet. But what is CRISPR? Where did it come from? What is it currently capable of? What might the future hold for it?

CRISPR was initially discovered (1993), characterized, and termed (2005) by Spanish microbiologist Francisco Mojica as an aspect of the prokaryotic (i.e. bacterial and archaeal) immune system used to store pieces of viral DNA after experiencing a viral infection [1]. The system enables the prokaryote to mount a stronger immune response when confronted with a second viral attack by making enzymes (CRISPR-associated proteins, or Cas) that will target specific viral genetic sequences for destruction; rather than having a generic immune response, the organism retains a memory of past viral attacks to prepare it for future ones. The term CRISPR, an acronym for Clustered Regularly Interspaced Short Palindromic Repeats, describes the system’s structure, which stores viral DNA sequences in between the palindromic repeat sequences.

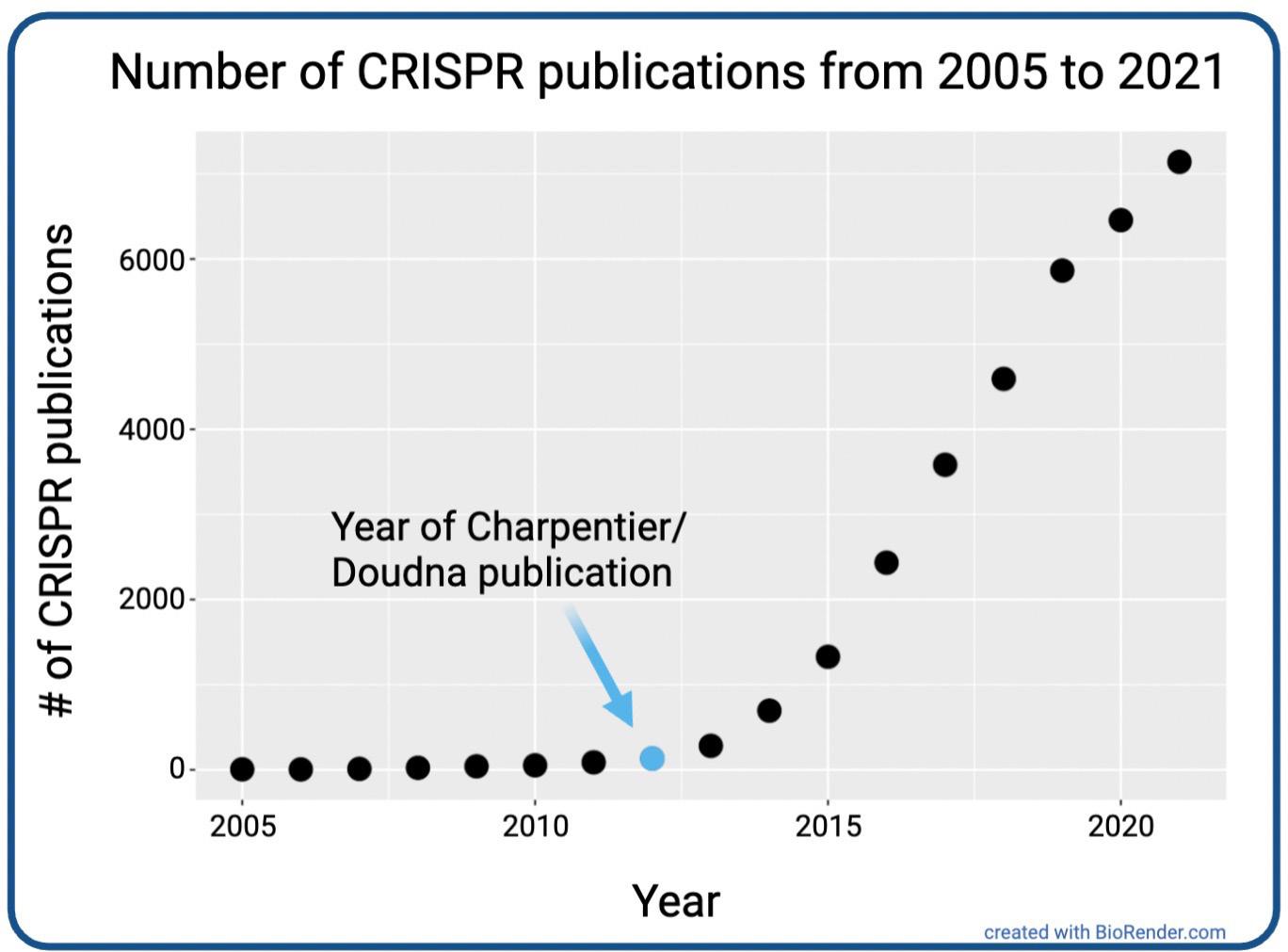

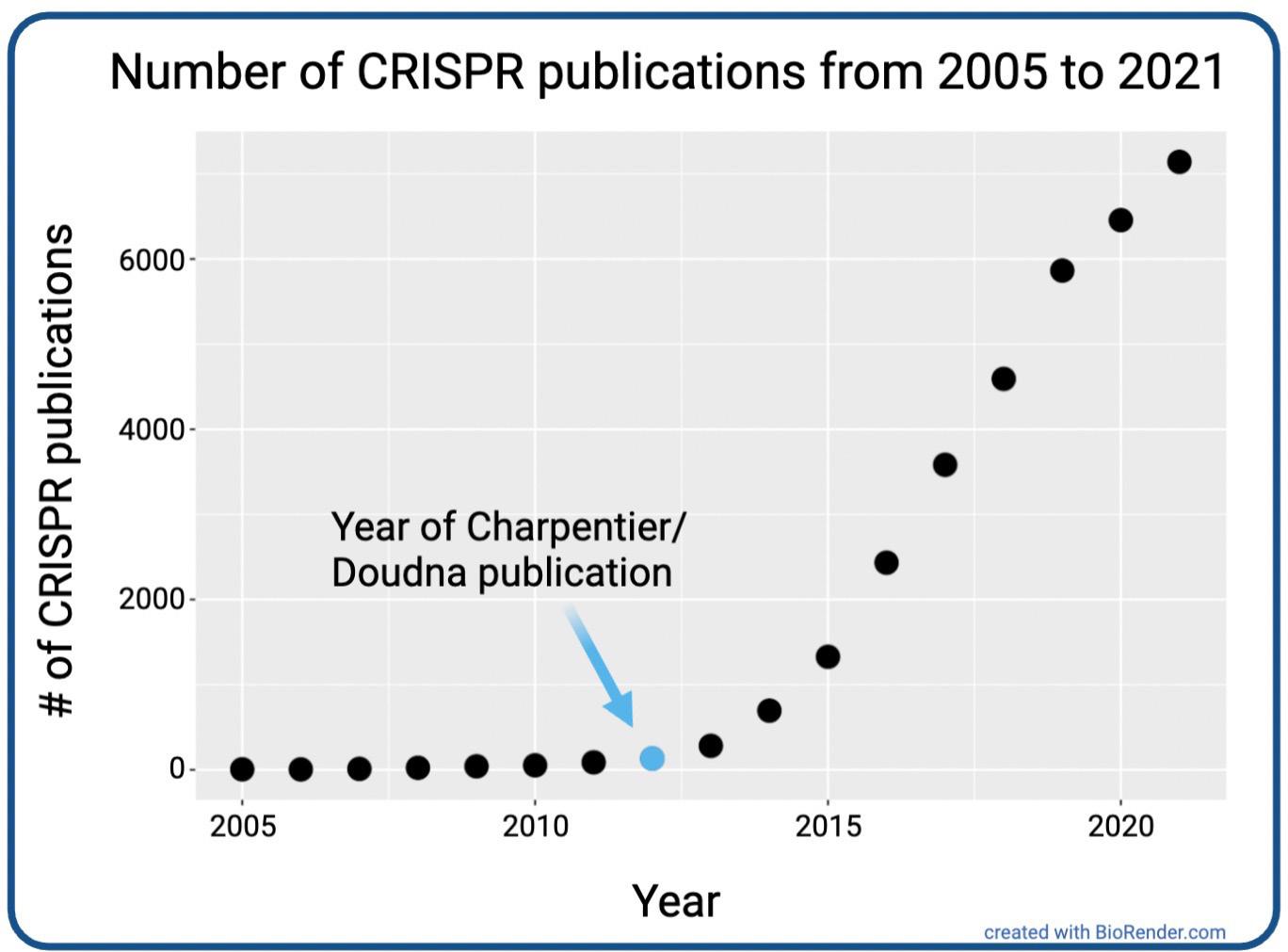

scientists and the public about the potential applications of CRISPR to biomedicine and agriculture (Fig. 2).

Since this discovery, many other elements of CRISPR have been revealed by scientists. For example, several Cas proteins, the enzymes that cut nucleic acids, other than the original Cas9 protein, have been discovered; in fact, there are Cas proteins now known to cut RNA instead of DNA.

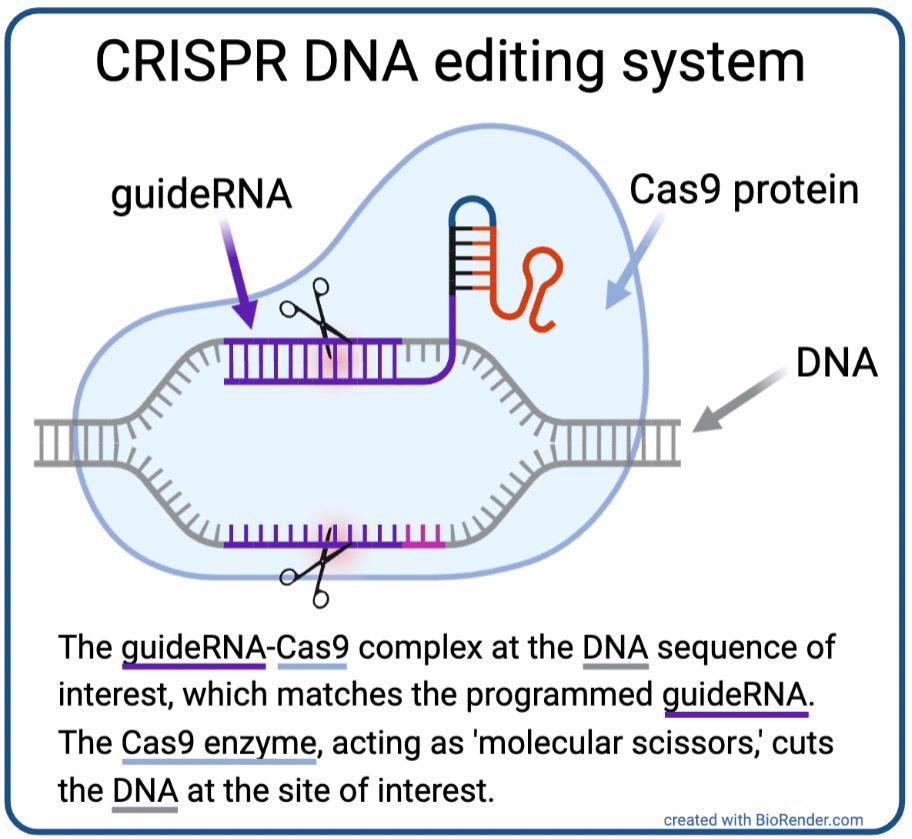

By 2011, Charpentier had identified one of the two essential components of the CRISPR system: guideRNA. GuideRNA describes an RNA molecule that guides the second essential component, a DNA-cutting Cas enzyme, to a specific spot in the genome (i.e. all of the genetic material present in a cell). After this discovery, Charpentier began collaborating with Doudna, and the two successfully used isolated guideRNAs and Cas enzymes to edit DNA in test tubes, proving that these two components were capable of precisely cutting DNA at a pre-programmed site of interest [1] (Fig. 1). Publication of this work in June 2012 sparked a revolution in the field of genome engineering, exciting

Additionally, CRISPR technology has been utilized to advance a variety of fields. In medicine, CRISPR holds promise for treating genetic diseases caused by mutations in a single gene, such as sickle cell disease and muscular dystrophy. In sickle cell disease, a mutation in the β-globin gene prevents red blood cells from efficiently carrying oxygen throughout the bloodstream. Researchers are using CRISPR to introduce a mutation in a gene responsible for turning off the fetal version of the β-globin gene, called γ-globin, during development. This would enable restoration of efficient oxygen delivery by red blood cells through using the functional γ-globin instead of the mutated β-globin. In fact, an experimental clinical trial has obtained promising results, including subjects who seem to be symptomatically cured of the disease [3, 4]. Success in the realm of sickle cell disease is promising for the future treatment of many other human genetic diseases.

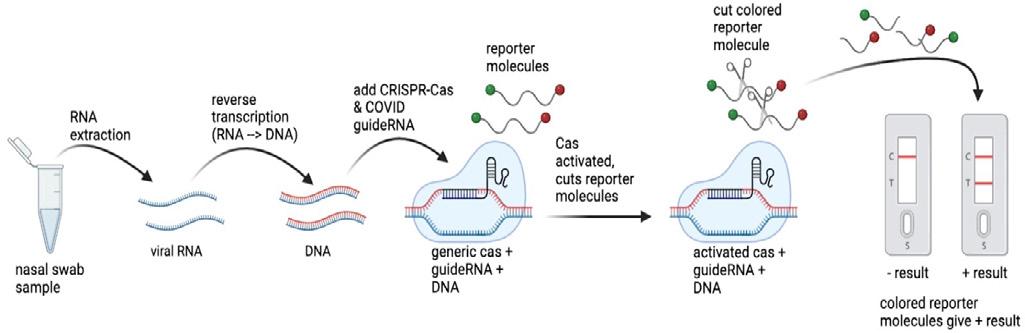

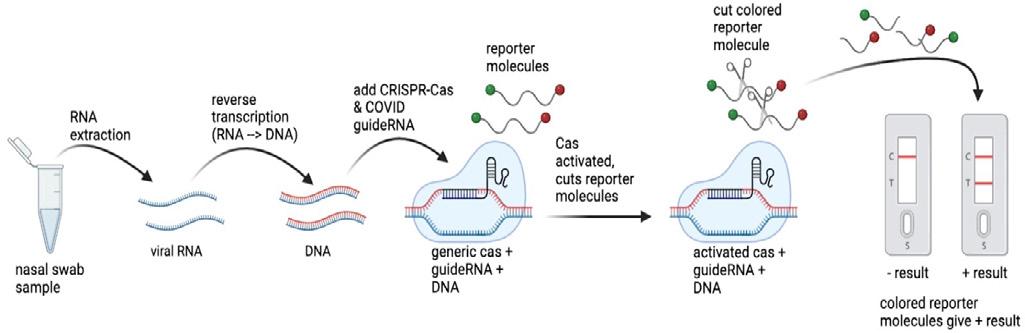

CRISPR has also been applied to the internationally relevant cause of preventing the spread of COVID-19 through testing. In the initial advancement of COVID-19 diagnostic tests beyond PCR tests, CRISPR technology was considered a promising avenue for development of faster and less expensive testing options [6]. Although several different CRISPR-based options were developed, the general basis of the technology is similar among these tests (Fig. 3). Essentially, to obtain a positive result, the test converts the RNA from the coronavirus present in an infected patient’s nasal swab sample to DNA via PCR, a method that amplifies the number of copies of the sequence of interest – in this case, a sequence found only in the coronavirus genome – which is used to determine whether the sample is positive or negative for COVID-19. Because the tests use different types of Cas proteins, some tests convert this amplified DNA back into RNA. Next, the amplified nucleic acid sequence of interest is bound by a complementary guideRNA, which brings the Cas enzyme with it. Many of these tests also contain a reporter molecule, which is a short DNA strand with a colored molecule on either end. Binding of the sequence of interest by its complementary guideRNA activates the Cas enzyme, causing it to cut the reporter molecule in half, releasing the colored molecules to form the pigmented line that demonstrates a positive test result. Although these tests did not remain very prevalent after the development of the antibody-based rapid tests that are widely used today, the high specificity of the CRISPR guideRNAs in identifying the COVID-19 sequence of interest as well as the short time span (~40-60 minutes) of the test are notable attributes [4].

Figure 1. CRISPR DNA editing system diagram [2].

Figure 2. Trends in CRISPR publications from 2005 to 2021 [2].
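The logic of such a test can be sketched in a few lines of Python. This is a deliberately simplified toy model, not a description of any real assay: the sequences are made up (they are not coronavirus sequences), binding is treated as an exact match, and the function names are invented for this example.

```python
# Toy model of the guide-and-reporter logic described above.
# All sequences are invented placeholders, and exact matching stands in
# for the far messier chemistry of real guideRNA binding.

DNA_TO_RNA_PAIR = {"A": "U", "C": "G", "G": "C", "T": "A"}  # DNA base -> pairing RNA base
RNA_TO_DNA_PAIR = {"A": "T", "U": "A", "G": "C", "C": "G"}  # RNA base -> pairing DNA base


def guide_for(target_dna: str) -> str:
    """Build a guideRNA that pairs with the target DNA (its reverse complement)."""
    return "".join(DNA_TO_RNA_PAIR[base] for base in reversed(target_dna))


def guide_binds(guide_rna: str, amplified_dna: str) -> bool:
    """Return True if the DNA site this guide pairs with occurs in the sample."""
    site = "".join(RNA_TO_DNA_PAIR[base] for base in reversed(guide_rna))
    return site in amplified_dna


def run_test(amplified_dna: str, guide_rna: str) -> str:
    """Report 'positive' if the guide finds its target and Cas cuts the reporter."""
    reporter_intact = True
    if guide_binds(guide_rna, amplified_dna):
        # Binding activates the Cas enzyme, which cuts the reporter molecule
        # and releases the colored molecules that form the visible line.
        reporter_intact = False
    return "negative" if reporter_intact else "positive"


if __name__ == "__main__":
    target = "GATTACAGGC"  # hypothetical "sequence of interest"
    guide = guide_for(target)
    print(run_test("TTT" + target + "CCC", guide))
    print(run_test("TTTTCCCCAAAA", guide))
```

The sketch highlights why specificity comes from the guideRNA: the reporter is only cut when the guide finds a sequence that pairs with it, so a sample lacking that sequence leaves the reporter intact.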

Additionally, the CRISPR system has claimed a lead role in cancer research, as many cancers are driven by oncogenes, genes that are responsible for regulating growth in normal cells but are harnessed to drive uncontrollable tumor growth in malignant cancer cells. Researchers employ the method of “CRISPR knockout” to mutate specific genes in cancer cells in the laboratory to determine the functional importance of a given gene in the cell’s growth. To inactivate a gene using CRISPR knockout, a researcher can design a guideRNA that targets the potential gene of interest, which will bring Cas to that site and direct it to make a cut in the DNA. Following this break in the DNA strand, the cell will use a repair process called non-homologous end joining (NHEJ) to rejoin the broken ends. However, NHEJ is an error-prone process which typically introduces insertions or deletions of a couple of nucleotides at the site of repair, resulting in mutation of the initially targeted gene, ideally resulting in that gene being knocked out. The CRISPR knockout method is especially helpful in “disentangl[ing] alterations that are driving the processes of tumor evolution from passenger mutations,” mutations that exist in a cancer cell but do not greatly impact its growth [6]. Identification of genes that drive growth of cancer cells enables the development of therapies that target the functions of such important oncogenes as a treatment strategy to reduce or prevent the growth of a tumor. This method of CRISPR knockout is applicable to many additional fields of biomedical

[7] Rojas-Downing, M. M., Nejadhashemi, A. P., Harrigan, T., Woznicki, S. A. (2017, Feb 12). Climate change and livestock: Impacts, adaptation, and mitigation. Climate Risk Management, 16, 145–163. Retrieved from https://www.sciencedirect.com/science/article/pii/S221209631730027X

[8] Crawford, M. (2017, May 31). 8 Ways CRISPR-Cas9 Can Change the World. The American Society of Mechanical Engineers. Retrieved from https://www.asme.org/topics-resources/content/8-wayscrisprcas9-can-change-world

Figure 3. CRISPR-based COVID diagnostic test generic schematic [2], based on figures in [4].


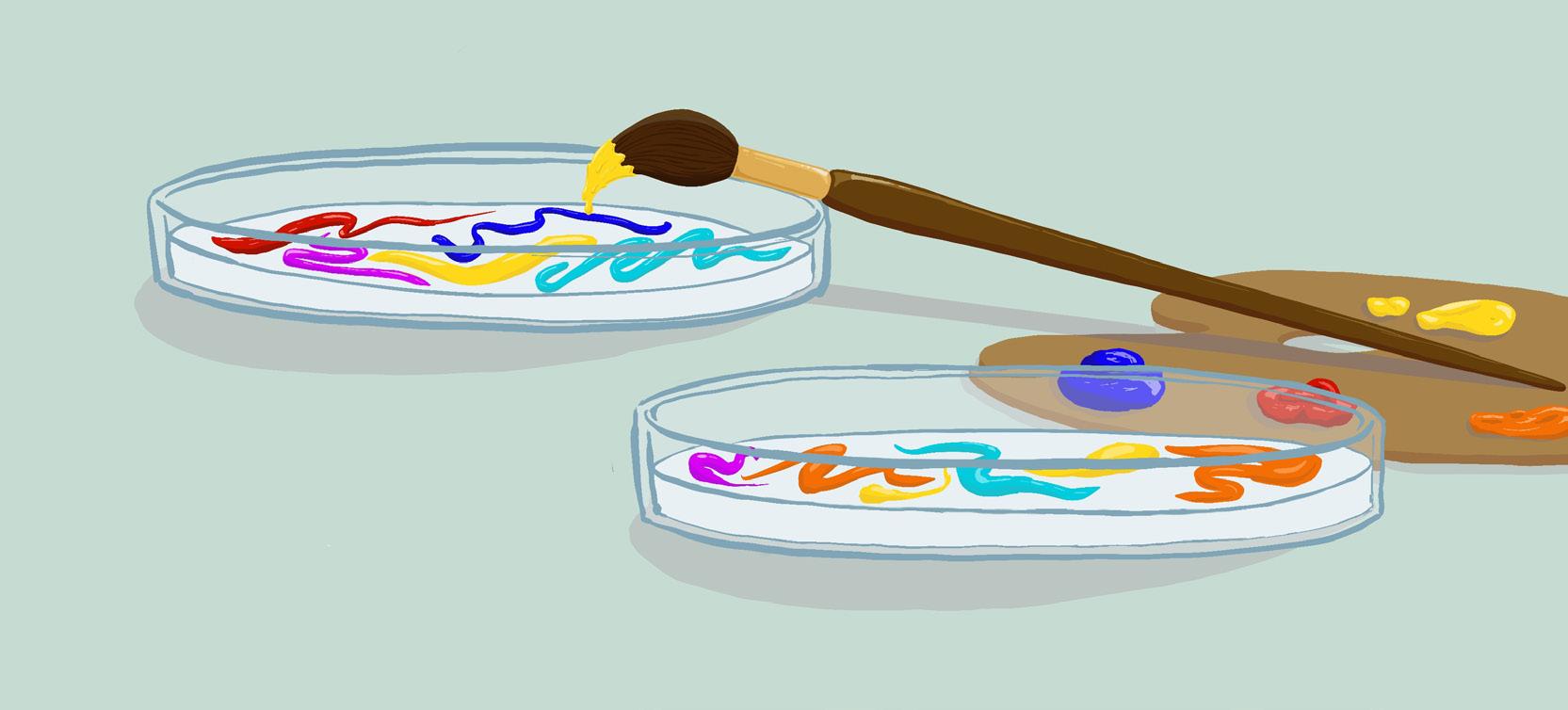

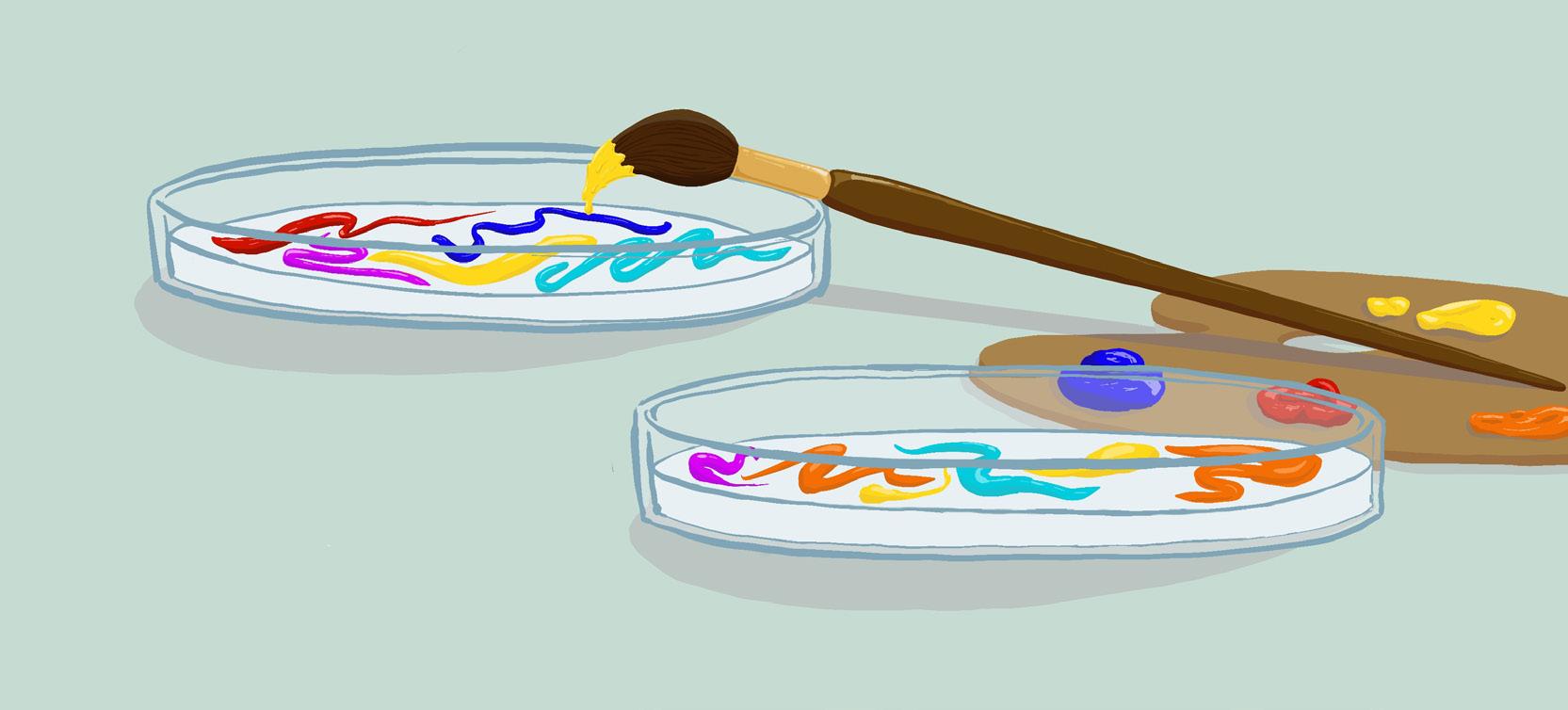

Bioprospecting: A Doctor’s Guide to Plants

Written by Julia Goralsky


What do you do when you get sick? With the miracle of modern medicine, it is a no-brainer for many individuals to open their medicine cabinets and pull from their depths pharmaceuticals such as Benadryl, Advil, or Pepto-Bismol [1]. Yet what did people rely on before a couple of pills were expected to solve every minor ache, fever, or cough? While it seems impossible to imagine our lives without these medications, it also seems absurd to picture Julius Caesar taking ibuprofen after crossing the Rubicon or Genghis Khan taking NyQuil after founding the Mongol Empire. So, where did all of these modern medications come from?

In essence, most common pharmaceuticals can be traced back to nature, particularly to the secrets of its abundant biodiversity. In fact, more than 40% of medicines today either have direct origins in, or are derivatives of, plants [2]. Take aspirin, for example. Before its analgesic properties were packaged into a small pill, similar pain-relief activity was found in willow bark [3]. Scientists mimicked the chemical properties of salicin, a compound found within the plant, in order to make the modern-day therapeutic. Through successive modifications of the molecular structure, such as adding an acetyl group to salicylic acid (the compound the body makes from salicin), chemists were able to reduce side effects, including gastric irritation, and arrive at the drug’s commercial form found today [4, 5].

Further analysis of modern medical advancements reveals other surprising connections, such as that between digoxin and the flower Digitalis lanata and between morphine and poppies. Digitalis is best known for its bunches of long purple flowers, commonly called foxgloves. Towards the end of the 18th century, this lively flower was being incorporated into treatments for dropsy, also known today as congestive heart failure. By breaking down the plant into its molecular components, scientists discovered cardiac glycosides, which are now commonly incorporated into drugs like digoxin that treat rapid atrial fibrillation [6]. Similarly, poppies were discovered to have a use beyond adding a splash of color to home gardens. Using the molecular template from the opium poppy Papaver somniferum, chemists successfully synthesized the pain-relieving drug morphine [7].

The process of deriving medicinal properties from natural compounds has become so common throughout human history that it has been given the name bioprospecting [8]. At its core, bioprospecting involves the race to find biochemical or genetic material within natural sources that can be applied to commercial endeavors. While we have been emphasizing the medicinal value of this field, bioprospecting also contributes greatly to agricultural projects that rely on the example set by nature to improve both the harvesting of crops and the maintenance of livestock.

Illustrated by Alice Wang


Yet, it can still be hard to wrap your head around the transition from a singular plant to an entire commercial venture. How does this process really work? Although bioprospecting can take many different forms, there is a general outline that encompasses each application: collection, scientific research and analysis, and commercialization [8]. The first step involves identifying a certain property and its source within the natural world. Ultimately, this stage of the process involves integrating an environmental and cultural perspective, as many commercial candidates are often derived from the knowledge collected by populations indigenous to specific areas [9]. After all, willow bark was originally employed by ancient Egyptians and indigenous Americans. The next stage involves intensive screening and characterization that, in terms of medicinal developments, can take upwards of 10-15 years to complete in order to first determine the chemical structure of the source’s active components before progressing to clinical trials. The last stage involves the production, advertisement, and sale of the product to fulfill a requirement of a certain niche speciality.

As evidenced throughout its variegated process, bioprospecting is an interdisciplinary endeavor that involves the cooperation of multiple fields of study. For instance, not only must skilled scientific researchers and clinical coordinators take a prominent role in the process, but environmental biologists and conservation ecologists, cultural and historical scholars, government officials, and economists must also assume leading roles. Despite this internal diversity within the bioprospecting process, the field itself is united in its support of environmental conservation and its embrace of human diversity. For example, given its belief that nature provides the best model for products that support the human race, bioprospecting adopts a conservationist attitude, encouraging the protection of natural resources that can potentially have positive effects both directly and indirectly on human beings. In this sense, bioprospecting not only helps advance human health by providing answers to our various ailments, but also offers a career path that supports both collaboration among people across the boundaries of culture and history and the interaction between humanity and the amazing natural world that surrounds us.

references

[1] Indiana University Bloomington. (n.d.). Over-the-counter medication list. Student Health Center. Retrieved from https://healthcenter.indiana.edu/medical/pharmacy/otc-medication-list.html

[2] Mathur, S., & Hoskins, C. (2017). Drug development: Lessons from nature (Review). Biomedical Reports, 6(6), pp. 612-614. https://doi.org/10.3892/br.2017.909

[3] Tylenol & Advil – when to use which. Tylenol & Advil – When to Use Which - Mercy Medical Center. (2018, November 29). Retrieved from https://www.mercycare.org/healthy-living/health-education/tylenol--advil--when-to-use-which/

[4] Willow Bark. Mount Sinai Health System. (n.d.). Retrieved from https://www.mountsinai.org/health-library/herb/willow-bark#:~:text=The%20bark%20of%20white%20willow,to%20aspirin%20(acetylsalicylic%20acid)

[5] Desborough, M. J. R., Keeling, D. M. (2017). The aspirin story – from willow to wonder drug. British Journal of Haematology, 177(5). https://doi.org/10.1111/bjh.14520

[6] Gerwick, W. H. (2013). Plant sources of drugs and chemicals. Encyclopedia of Biodiversity (Second Edition), pp. 129-139. https://doi.org/10.1016/B978-0-12-384719-5.00111-8

[7] Davies, M. K., & Hollman, A. (2002). The opium poppy, morphine, and verapamil. Heart, 88(1), 3. https://www.ncbi.nlm.nih.gov/

[8] Bioprospecting. PUB programme. (2014, January 1). Retrieved from https://www.pub.ac.za/wp-content/uploads/2015/06/Factsheet-Pub-BioprospectingPRINT 2.pdf

[9] Conservation Finance Training Guide. (2001, November). Retrieved from https://www.cbd.int/doc/nbsap/finance/Guide_BioProsp_Nov2001.pdf


Cannabis Amnesia

With the recent legislation passed by the state of New York, adults 21 and older are free to smoke recreational marijuana in public areas where smoking tobacco is also permitted. Perhaps a reflection of the modern era, the push for marijuana legalization has become more popular over the last two decades. And yet, many advocates mistakenly believe they are talking about the marijuana of 20 years ago, when THC content was much lower, at about 2% per joint [1]. THC, or tetrahydrocannabinol, is the psychoactive component of marijuana that causes addiction. By 2021, the marijuana industry had taken a hard look at the tobacco and alcohol industries and copied their behavior; they increased the addictive component, because more THC means a higher chance of addiction, which means a higher chance of purchase and, ultimately, more money flowing into the industry. Because recreational use in its current form is relatively modern, little research has been done on cannabis, at least the way it is used today. Its effect on the brain is even more elusive, as neurological ramifications may take years to manifest. To add to the complexity, the flowers and leaves of the cannabis plant that are smoked or vaped today are called marijuana, but there are many other forms of cannabis that can contain more of the psychoactive component [1]. Products such as edibles, shatter, oil, and dab can have THC contents as high as 95%. There is no evidence suggesting this level of THC is beneficial for any use, whether medical or recreational, but because of legalization efforts all over the world, society has normalized marijuana as ‘healthy’ and ‘organic’ [1]. Altogether, there has not been enough research on marijuana use, especially in terms

Addiction for all drugs of abuse starts with the nucleus accumbens. After dopamine—the neurotransmitter in the brain involved in pleasure—is repeatedly released, certain neuronal connections get stronger through repeated activation, reinforcing the behavior of taking the drug and causing addiction. All drugs of abuse, such as nicotine, alcohol, cocaine, heroin, or THC, impair the growth of neurons in the hippocampus, the brain structure crucially significant in memory encoding and retrieval. In fact, users of THC often show reduced hippocampal volumes [1]. Cannabis use also disrupts the efficiency of glutamate, a neurotransmitter which plays a large role in pruning inefficient neuron connections. Without sufficient pruning, normal brain development is delayed and even impaired. Cannabis users also exhibit less neurological activation in brain regions important in semantic and episodic memory retrieval [2].

High densities of CB1, the receptor that THC binds to, have been found in the hippocampus, more so than in other brain regions. Under normal circumstances, CB1 receptors bind anandamide, their natural ligand, to regulate processes such as food intake, pain, mood, and reinforcement and reward understanding. When THC is introduced into the system, it “takes over” these CB1 receptors, causing overactivation. The overactivity of these CB1 receptors has also been suggested to cause incidental associations, which are associations between low-salience stimuli that can affect behavior through a process called mediated learning [3]. These incidental associations are precursors to false memories.

Written by Allie Lin Illustrated by Emily Sun

To unpack this, it is necessary to consider the two current theories explaining the creation of false memories. On one hand, there is the association activation theory, or AAT, which says that when one memory is activated, neighboring interconnected memories become activated as well. The nodes are related to each other either through semantic relationships or source relationships (based on the fact that one is the source of the other).

According to the AAT model, false memories are created when the spread of activation automatically triggers nearby irrelevant information. The other theory prevalent in the field is the fuzzy trace theory, or FTT, which separates all memory events into either verbatim or gist memory traces, the former meaning specific details of any memory and the latter being the general “idea” of a memory. An example of a verbatim trace could be how the shape of the leaves on a tree you loved climbing as a child meant it was an oak tree, whereas a gist trace might be the concept that there was a tree [3]. The FTT states false memories are formed when verbatim traces are forgotten, or when individuals overly rely on gist traces, resulting in memory inaccuracies.

The four core studies that I will use to introduce the hypothesis all use the Deese-Roediger-McDermott (DRM) paradigm as a measure of false memories. First introduced by James Deese in 1959 and modified by Henry Roediger and Kathleen McDermott in 1995, the DRM is a common and effective method of measuring false memory creation. It operates on the presentation of word lists to the subjects at hand. These word lists contain words that are all somewhat semantically related to each other, as well as to a critical “lure,” or theme, word, which is not presented in the initial list. For example, a word list commonly used in the DRM paradigm thematically deals with the lure word, “anger,” and consists of words like “enrage,” “hate,” “emotion,” and “red.” Participants are made to study these word lists during the encoding phase; during the retrieval stage, they are presented with a variety of words and asked to categorize them as presented or non-presented. A false memory is recorded when a participant marks an unrelated word, or the critical lure, as a word they had been presented with during the encoding phase.
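The scoring step described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the procedure used in any of the cited studies: the `DrmTrial` structure, the `score_recognition` function, and the filler words “table” and “river” are hypothetical, while the studied words and the lure come from the “anger” example.

```python
from dataclasses import dataclass


@dataclass
class DrmTrial:
    studied: set[str]      # words shown during the encoding phase
    critical_lure: str     # theme word that was never shown
    unrelated: set[str]    # filler words that were never shown (hypothetical)


def score_recognition(trial: DrmTrial, said_presented: set[str]) -> dict[str, int]:
    """Count true and false recognitions for one participant on one word list."""
    return {
        # studied words correctly recognized as presented
        "hits": len(said_presented & trial.studied),
        # 1 if the never-shown lure was "remembered", else 0
        "lure_false_memory": int(trial.critical_lure in said_presented),
        # never-shown unrelated words marked as presented
        "unrelated_false_memories": len(said_presented & trial.unrelated),
    }


trial = DrmTrial(
    studied={"enrage", "hate", "emotion", "red"},
    critical_lure="anger",
    unrelated={"table", "river"},
)
# Words this hypothetical participant marked as "presented" at retrieval.
result = score_recognition(trial, said_presented={"enrage", "hate", "anger", "river"})
print(result)
```

Here the participant correctly recognizes two studied words but also endorses the lure and one unrelated word, so two false memories would be recorded.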

An interesting nuance about these studies is that two of them [2, 3] do not administer THC to their study members; rather, their cannabis users are recruited from the general population. The other two [4, 5] use a placebo-controlled study design, administering THC to their participants. Because of the participant recruitment process in Riba et al. and Kloft et al. (2019), these two studies were able to address false memories in chronic cannabis users, not just light cannabis users. Riba et al.’s participants consisted of habitual cannabis users, which is defined as heavy daily consumption for 2 or more years. This chronic regular use is distinguished from the other two studies, whose experimenters chose to limit the THC administration to 15 mg and 15-21 mg, depending on body weight [4, 5]. For comparison, Riba et al.’s “chronic” user had 1.75-2.75 grams of cannabis a day.

In Riba et al.’s paper, results indicated cannabis users were more likely to make memory illusions like those made by neurological and psychiatric patients. These memory illusions are akin to visual or auditory hallucinations, but without the severity and the associated stigma. Repeated fMRI scans reveal that cannabis users exhibit hypoactivation in areas of the brain including not only the hippocampus and parahippocampal gyrus, but also the left parietal and left dorsolateral prefrontal cortices. These first two regions are known to be strongly implicated in memory, but the parietal and prefrontal cortices are also incredibly important because of their role in attentional processes, planning, and judgment. Memory deficits of this kind had been found in older individuals, leading the team of researchers in Riba et al. to speculate that chronic cannabis use can expedite the memory deficits associated with the normal aging process. This increase in false memories is explained by FTT: when verbatim memory traces are impaired by cannabis, users rely on gist memory traces to decide if a memory is real or false. Of course, that loses a lot of information that is specific to the memory, which results in false memories. To put it simply, FTT says a false memory is created when someone loses verbatim memory traces and is left only with the gist memory trace. But because they still need to “remember” a “whole” memory, they simply “fill in” the gaps to make that memory “whole” again. For example, if I had a really nice memory from childhood where I was picking wildflowers with my mother for an afternoon, but 10 years later, I couldn’t recall the specifics of the situation and only remembered a park and a presence

Why is it so hard to remember being high?

means the familiarity basis is gone and the lack of certainty for study members becomes greater. This suggests that cannabis users are more likely to respond liberally. This liberal responding is an effect of cannabis use, as incidental associations (from AAT) increase with CB1 receptor presence [3]. Therefore, like the impulsivity of adolescence, cannabis has been associated with increased recklessness and lack of planning.

Kloft et al.’s 2020 study changes the method of testing slightly. Instead of the conventional DRM paradigm that all the studies discussed so far have used, the participants were divided into two groups and assigned either an “eyewitness” or a “perpetrator” role. In these misinformation paradigms, the experimenters used virtual reality headsets to mimic a real-life situation [4]. An “eyewitness” saw a crime initiated by the “perpetrator,” and participants in both roles would be asked after the fabricated scene if they remembered X event happening, even though event X didn’t happen in the scene. Through the power of suggestion (i.e., a leading question), false memories were implanted in participants’ memories by the experimenters. The eyewitness scenario culminated with cannabis users exhibiting the highest false-memory rates in response to leading questions. They also showed high rates of being unable to ascertain whether a non-presented stimulus was presented at the scene or not. In the perpetrator scenario, intoxicated participants displayed a tendency to respond “yes” to all questions. This study also highlights the persistence of the effects. In addition to having more false memories immediately after receiving cannabis (so when they were “high”), cannabis users were found to have less true memory recognition as well as more false memory recognition 1 week later, when they were

The general takeaway is yes, cannabis increases false memory rates. As the governmental barriers are slowly being lowered and cannabis proliferates throughout the world, the need to understand its effects on the brain becomes paramount. Moreover, the implications that arise from these findings encourage the global population to consider the true consequences of smoking a joint. These papers, reviewed in such a way, motivate the lay public to re-evaluate their views on the safety of marijuana.

References

1. Stuyt, E. (2018). The problem with the current high potency THC marijuana from the perspective of an addiction psychiatrist. Missouri medicine, 115(6), 482.

2. Riba, J., Valle, M., Sampedro, F., Rodríguez-Pujadas, A., Martínez-Horta, S., Kulisevsky, J., & Rodríguez-Fornells, A. (2015). Telling true from false: cannabis users show increased susceptibility to false memories. Molecular Psychiatry, 20(6), 772-777.

3. Kloft, L., Otgaar, H., Blokland, A., Garbaciak, A., Monds, L. A., & Ramaekers, J. G. (2019). False memory formation in cannabis users: A field study. Psychopharmacology, 236(12), 3439-3450.

4. Kloft, L., Otgaar, H., Blokland, A., Monds, L. A., Toennes, S. W., Loftus, E. F., & Ramaekers, J. G. (2020). Cannabis increases susceptibility to false memory. Proceedings of the National Academy of Sciences, 117(9), 4585-4589.

5. Doss, M. K., Weafer, J., Gallo, D. A., & de Wit, H. (2018). Δ9-tetrahydrocannabinol at retrieval drives false recollection of neutral and emotional memories. Biological psychiatry, 84(10), 743-750.


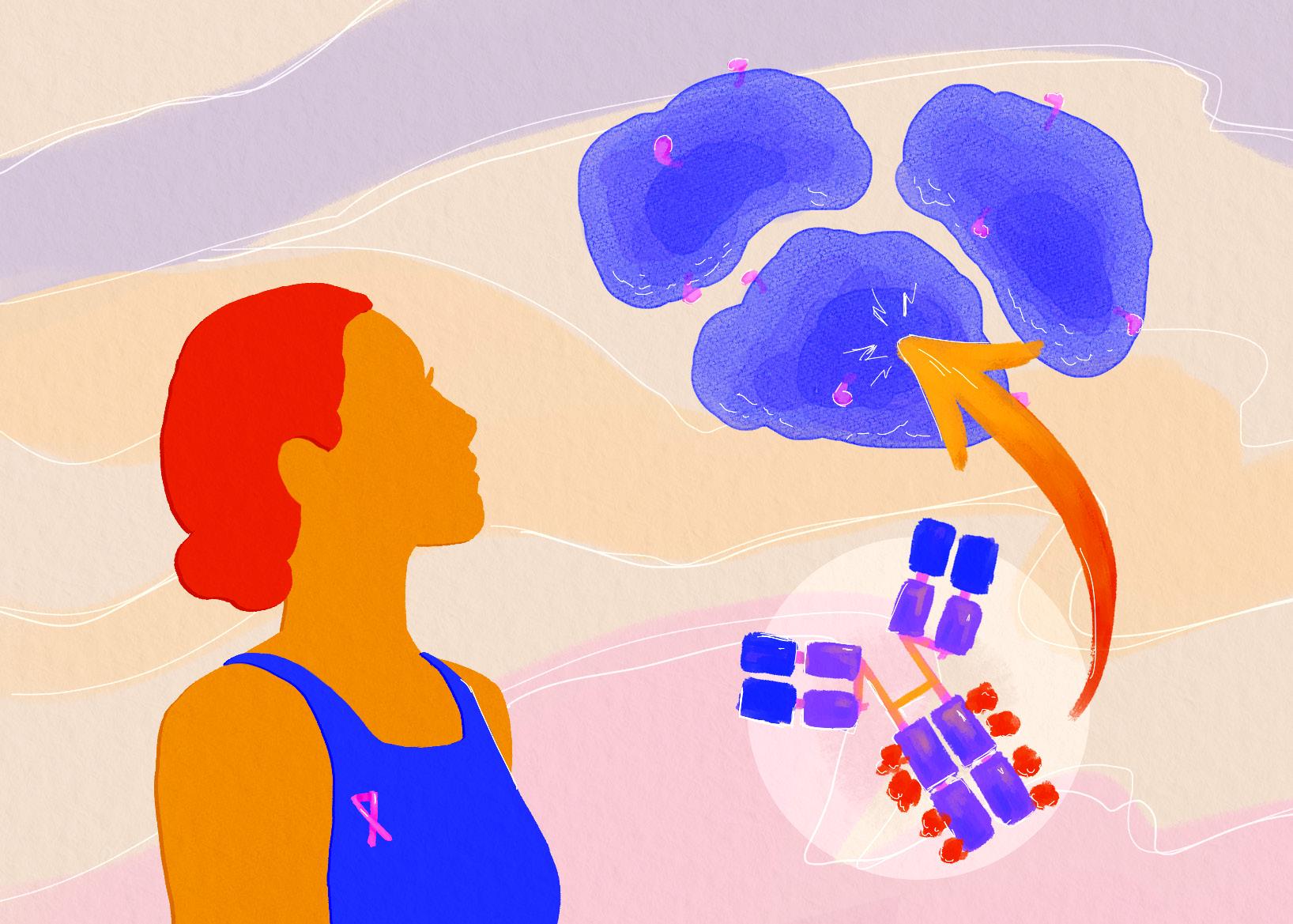

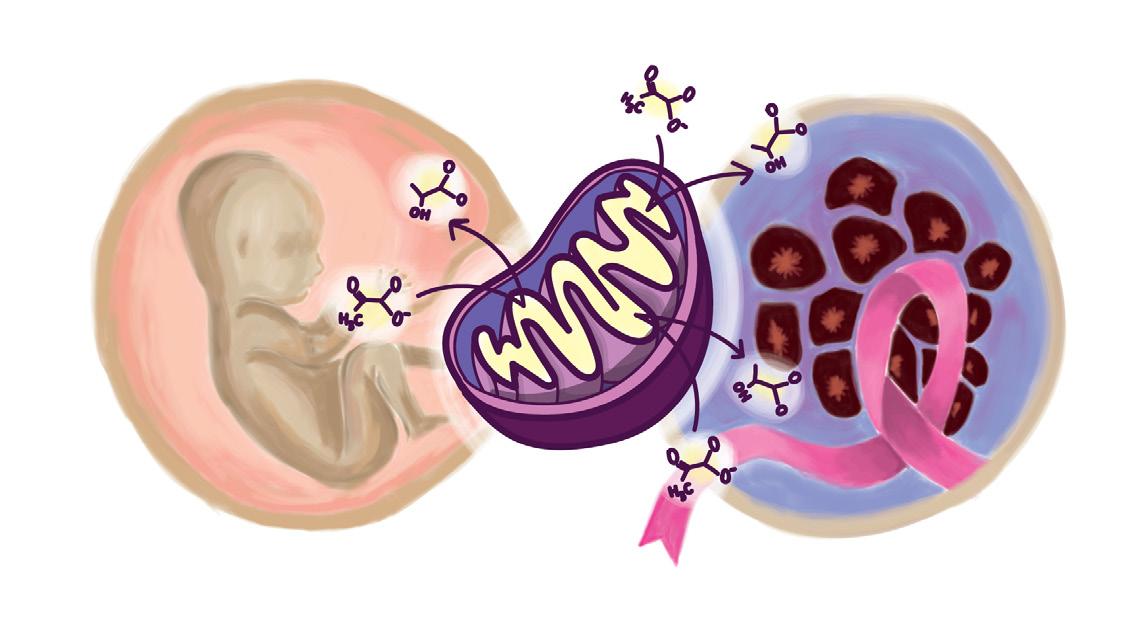

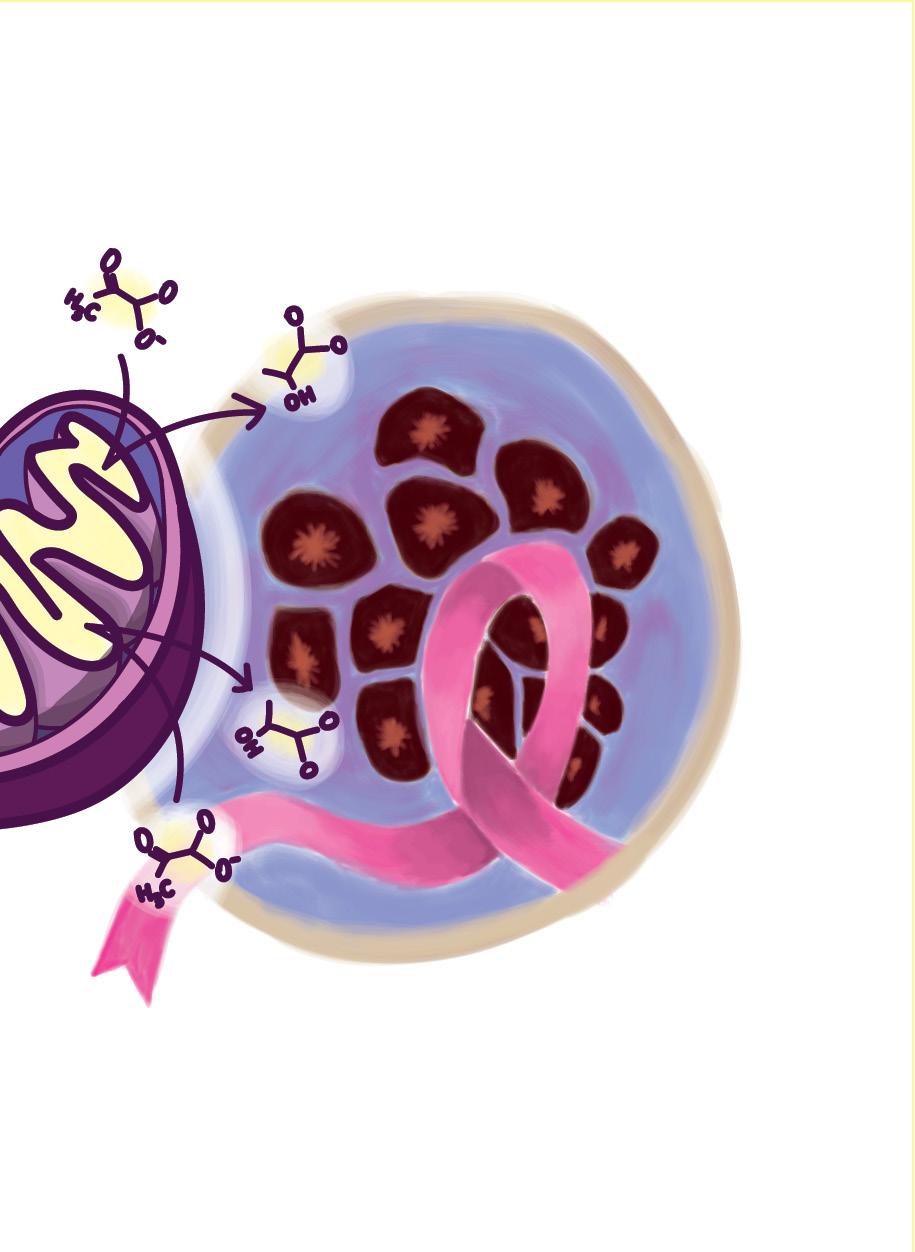

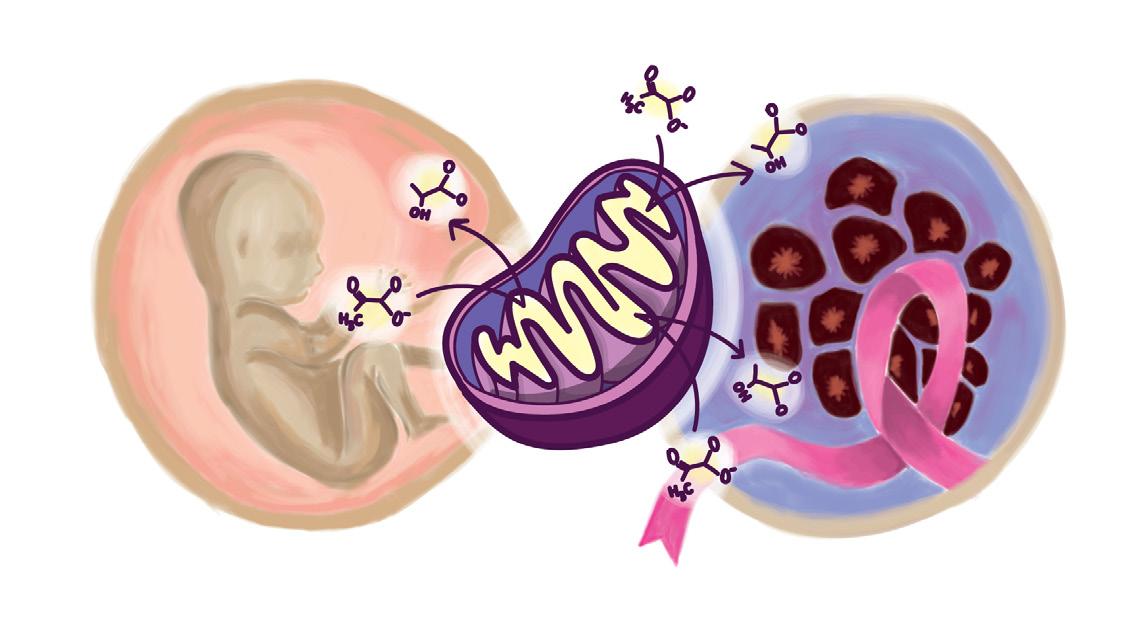

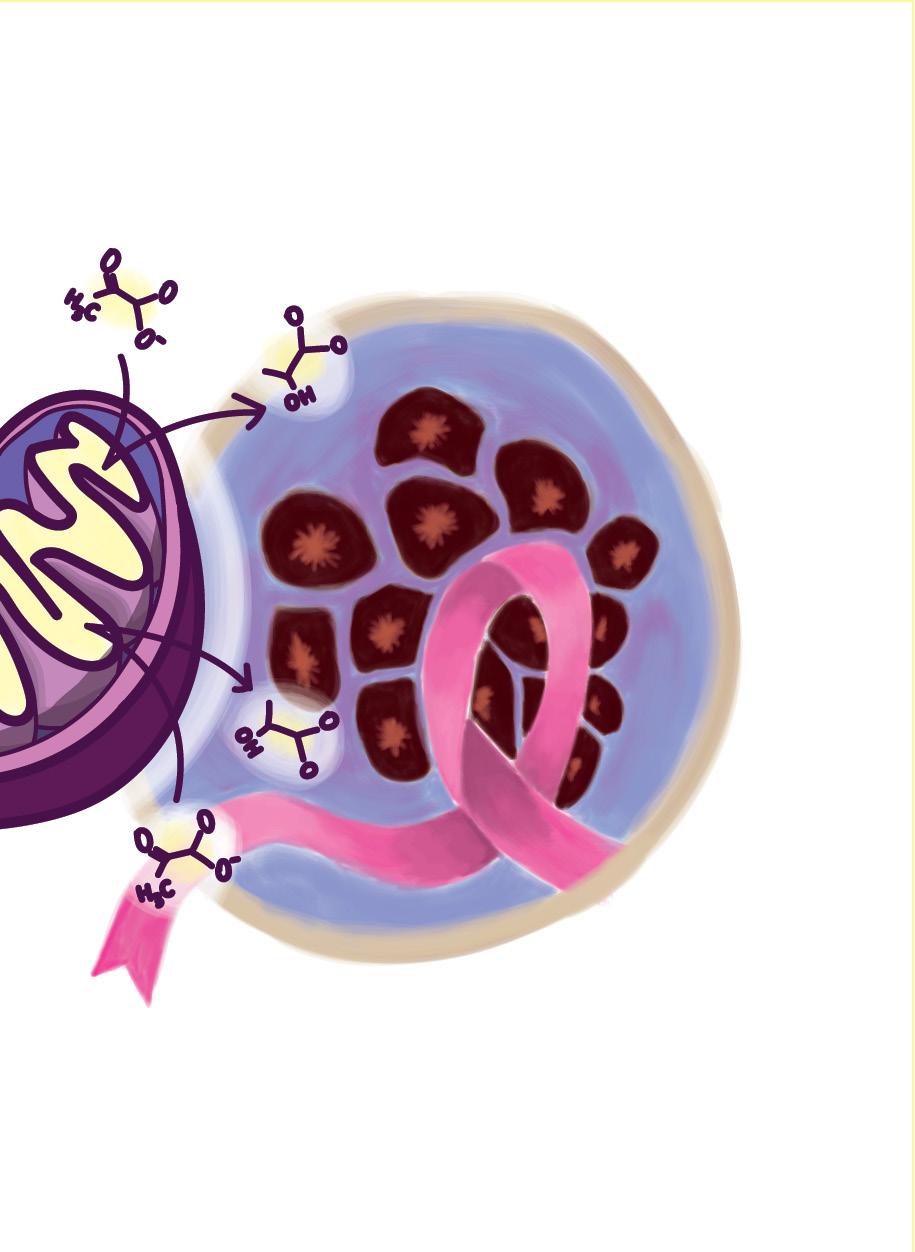

WHAT THE CELL? Investigating Cancer Cells

Every living human was once an embryo: a cell mass with primitive organs, incapable of surviving on its own. The process of development allows for an individual, once such a collection of cells, to exercise the powers of rationality, even permitting an individual such as myself to write this article. Hence, this miracle of growth that forms our society today is of especial interest to many developmental biologists. While the method of cellular formation that produces living, functioning human beings may seem trite or inapplicable outside of the biological realm, it is the very understanding of these developmental processes that has the potential to lead to medical innovation. Therefore, in order to understand the relevance of such developmental processes to modern-day diseases, specifically cancer, it is essential to begin at the beginning: cellular differentiation.

During the embryonic stage of development, most internal and external organs are formed. Within the mass of cells are embryonic stem cells, which differentiate into multiple different kinds of embryonic tissue layers. In other words, generalized cells are assigned specific roles within the body’s system, assisting in the development of specific organs and broader systems within the body, including the nervous system [1]. In analyzing development, the neural crest cells are of particular importance. This type of cell is migratory and responsible for forming essential features of the body; neural crest cells contribute to the bone and cartilage of the human face as well as to muscle, brain, and nerve cells. Scientists, including contemporary researchers such as Marcos Simoes-Costa, have long investigated neural crest cells. Simoes-Costa himself has been particularly interested in the unique abilities of neural crest cells located in different areas of the embryo. Specifically, such cells in the head region of the embryo are known to be able to form bone and cartilage, while others in the bottom region of the embryo are unable to undertake this task.

Written by Lauren Goralsky Illustrated by Audrey Acken


However, the most relevant investigation of neural crest cells in relation to modern medicine stems not from what these cells differentiate into, but from the forms of metabolism they undergo during these periods of differentiation. Specifically, neural crest cells metabolize glucose anaerobically, even in the presence of oxygen. This is unusual given that anaerobic metabolism forgoes oxygen, which is normally reduced at the end of the electron transport chain. In other words, oxygen is essential in driving the final synthesis of ATP, a chemical which is used to store energy for cells. Normally, cells process glucose using a metabolic process known as aerobic respiration, in which oxygen is used to convert the glucose into large amounts of ATP [2]. Without oxygen, a cell may undergo fermentation at the end of glycolysis, limiting the amount of ATP produced. Thus, researchers were initially confused as to why cells such as neural crest cells and cancerous cells would choose a version of respiration which requires more glucose to produce less energy.
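To make the “more glucose for less energy” trade-off concrete, here is a rough arithmetic sketch. The yields are common textbook approximations rather than figures from this article (roughly 30 ATP per glucose for aerobic respiration, and a net of 2 ATP per glucose when only glycolysis and fermentation run), and the ATP demand is an arbitrary, hypothetical number.

```python
import math

# Approximate textbook yields per glucose molecule (assumptions, not from the article).
ATP_PER_GLUCOSE_AEROBIC = 30       # commonly quoted as roughly 30-32
ATP_PER_GLUCOSE_FERMENTATION = 2   # net ATP from glycolysis alone


def glucose_needed(atp_demand: int, atp_per_glucose: int) -> int:
    """Whole glucose molecules required to meet a given ATP demand."""
    return math.ceil(atp_demand / atp_per_glucose)


demand = 3000  # hypothetical ATP demand, chosen only for illustration
aerobic = glucose_needed(demand, ATP_PER_GLUCOSE_AEROBIC)
fermenting = glucose_needed(demand, ATP_PER_GLUCOSE_FERMENTATION)
print(aerobic, fermenting, fermenting / aerobic)
```

Under these assumed yields, a fermenting cell would need about fifteen times as much glucose as an aerobically respiring cell to produce the same amount of ATP.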


This particular behavior is of interest to present researchers given the similar behavior observed in cancerous cells, known as the Warburg effect. The Warburg effect is a deviation from normal glucose consumption and ATP (energy) production. Through the study of cancerous cells, the Warburg effect has been hypothesized to provide select benefits, supporting extensive and unregulated proliferation within a cellular environment [3]. For example, the increased uptake of glucose in anaerobic respiration can lead to the increased generation of nucleotides and lipids, materials the cell needs to grow, divide, and colonize specific cellular environments. Additionally, other researchers have proposed that the increased uptake of glucose increases the inputs into the pentose phosphate pathway, a reaction occurring alongside glycolysis, which increases the number of reducing agents called NADPH within a cell that can be used to fuel the cell.

However, in particular relation to neural crest cells, the research of Simoes-Costa found that the Warburg effect is what allows cells to move during initial development [5]. While non-embryonic cells should lack the ability to move, as they are specifically differentiated to a specific area of the body, the ability to migrate to different biological systems and areas of the body is especially attractive to cancerous cells. The more such cells are able to migrate, the more they can colonize particular areas of the body and subsequently metastasize, increasing the occurrence of cancerous growth within the body as a whole. To put the significance of this effect in perspective, around 90% of cancer deaths are linked to metastasis [4].

Identifying the similar occurrence of the Warburg effect in neural crest cells does not mean that cancer is “solved.” Yet what it does offer is further understanding of the mechanisms which allow the proliferation of cancer, ultimately supporting the ability of medical scientists today to develop drugs and therapeutics to combat this disease. Therefore, although an embryo may appear small, it is the very understanding of the molecular processes occurring within the miracle of development that may hold additional answers to saving the people into which such embryos develop.

Works Cited

[1] Ding, D. C., Shyu, W. C. & Lin, S. Z. (2011). Mesenchymal Stem Cells. Cell transplantation, 20(1):514. Retrieved October 29, 2022, from https://pubmed.ncbi.nlm.nih.gov/21396235/

[2] Reece, J. B., Urry, L. A., Cain, M. L., Wasserman, S., Minorsky, P. V., Jackson, R. B. & Campbell, N. A. (2014). Cellular Respiration and Fermentation. In Campbell Biology (10th ed.). Pearson, Boston.

[3] Liberti, M. V., & Locasale, J. W. (2016). The Warburg effect: How does it benefit cancer cells? Trends in Biochemical Sciences, 41(3), 211–218. https://doi.org/10.1016/j.tibs.2015.12.001

[4] Seyfried, T. N., & Huysentruyt, L. C. (2013). On the origin of cancer metastasis. Critical reviews in oncogenesis. Retrieved October 29, 2022, from https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3597235/

[5] Abell, A. (2022, September 29). This biologist believes the developing embryo is “our best teacher”. Science News. Retrieved October 29, 2022, from https://www.sciencenews.org/article/marcos-simoes-costa-embryo-sn-10-scientist-to-watch


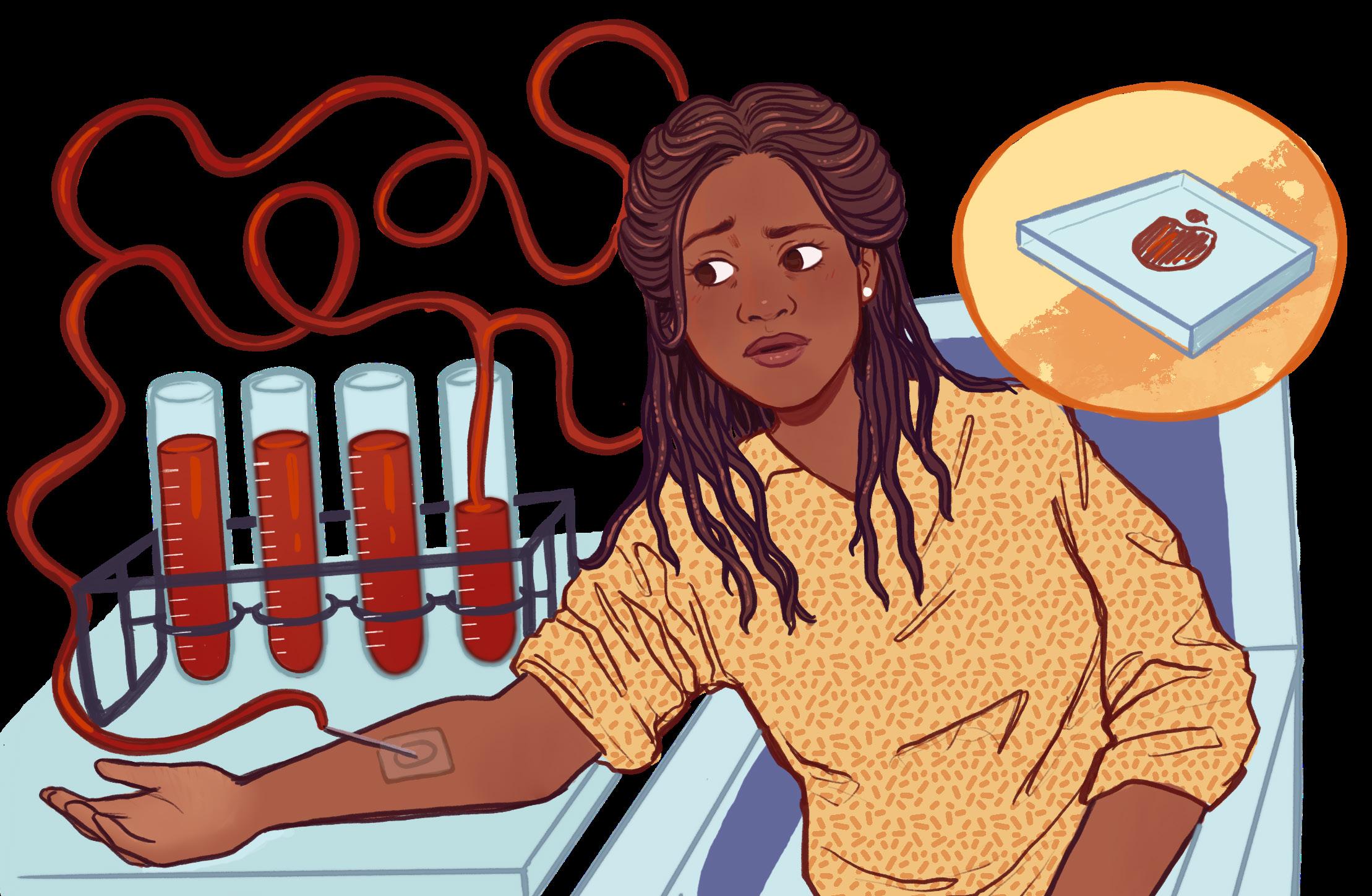

Are Hospitals Secretly Killing Us?

Unsurprisingly, no. However, in many hospitals, doctors may be unintentionally counteracting the crucial care that they provide through excessive amounts of blood withdrawal.

The needle is one of the most versatile yet feared instruments used in modern medicine. Despite the extensive use of needles in the medical field, from vaccines to IVs to blood diagnostics, many patients maintain a deep fear of them. In particular, children are disproportionately affected by the fear of needles (trypanophobia), with about 67% of children being afflicted; even so, approximately 16% of adults avoid vaccinations solely due to this fear [1]. Although trypanophobia often stems from an emotional response such as hypersensitivity to pain or needle-related trauma, new research shows that there may be a new reason to fear the needle: Hospital-Acquired Anemia (HAA). HAA is a condition in which patients are admitted into hospital care with normal hemoglobin levels, but develop anemia during their hospitalization due to excessive blood diagnostic testing to monitor patient condition [2].

Although the idea of a patient’s condition worsening as a result of hospital treatments may seem comical at first, the evidence is compelling. A study conducted by Koch et al. in the Journal of Hospital Medicine demonstrated that in a sample of 188,447 patients who were tracked during their hospitalization in an academic medical center and 9 different community hospitals, 74% of patients developed HAA. Furthermore, a whopping 30% developed severe HAA, which is defined as having a hemoglobin level (Hgb) of less than 9.0 g/dL (the healthy Hgb ranges for males and females are 13.2-16.6 and 11.6-15.0 g/dL, respectively) [3, 4]. Problematically, research also demonstrates that the effects of HAA can persist even after treatment. Severe HAA has been associated with increases in 30-day mortality (death within 30 days of hospital admission) and increased readmission rates into hospitals after discharge [2].

Written by Thilina Balasooriya Illustrated by Macarena Hepp

The logical question then becomes: what can the medical industry do to halt the prevalence of HAA? Firstly, doctors generally collect far more blood than is required. Studies show that most hospitals collect almost 12 times more blood than necessary and often most of it goes to waste [5]. Each vial typically collects about 5-10 mL of blood, which can accumulate quickly to dangerous levels with extensive testing, especially in infants or chronically ill patients. It has been shown that many labs should be able to decrease the volume of blood that they collect while still obtaining the same results on patient status [5].

In addition to this somewhat trivial solution, a research lab at Arizona State University is completely reinventing the current blood diagnostic method to reduce the amount of blood necessary for diagnostic analysis with their technology, InnovaStrip [6]. Unlike Theranos, a failed blood diagnostics company that claimed to be able to use only nanoliters of liquid blood (not nearly enough to make accurate measurements), InnovaStrip uses 5-10 microliters (µL) of blood, about 1000 times more than Theranos but approximately one thousand times less than the 5-10 mL per test that is the current standard. The blood is solidified into a Homogeneous Thin Solid Film (HTSF) using a porous, hyper-hydrophilic coating called HemaDrop [6]. Its hyper-hydrophilic nature means that the coating rapidly attracts water downwards to solidify the blood drop with minimal phase separation, meaning that the blood does not divide into different parts such as serum and plasma, allowing for uniform and reproducible measurements of the HTSF surface. The HTSF is measured using solid-state analysis techniques such as X-Ray Fluorescence, which shoots X-rays at the HTSF and can measure important elements in the blood such as electrolytes (K, Mg) and iron based on the characteristic X-rays that the sample emits in response [7]. As innovation in this field continues to occur and develop, the hope is that the combination of increased scrutiny on hospital procedures, as well as novel research such as InnovaStrip, can help decrease the prevalence of HAA in hospitals around the world.
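The volumes quoted above can be compared with a little arithmetic. The sketch below is only illustrative: the per-test volumes (5-10 mL for a standard vial, 5-10 µL for the microliter approach) come from the article, while the number of tests during a stay is a hypothetical figure and the function name is made up for this example.

```python
UL_PER_ML = 1000  # microliters per milliliter


def cumulative_draw_ul(tests: int, volume_per_test_ul: int) -> int:
    """Total blood drawn, in microliters, over a number of tests."""
    return tests * volume_per_test_ul


tests = 20  # hypothetical number of blood tests during one hospital stay

# Standard vials: 5-10 mL per test, expressed in microliters.
standard_low = cumulative_draw_ul(tests, 5 * UL_PER_ML)
standard_high = cumulative_draw_ul(tests, 10 * UL_PER_ML)

# Microliter-scale samples: 5-10 µL per test.
micro_low = cumulative_draw_ul(tests, 5)
micro_high = cumulative_draw_ul(tests, 10)

reduction = standard_low // micro_low  # how many times less blood is drawn

print(f"standard: {standard_low / UL_PER_ML}-{standard_high / UL_PER_ML} mL")
print(f"microliter: {micro_low / UL_PER_ML}-{micro_high / UL_PER_ML} mL")
print(f"reduction factor: {reduction}")
```

With these assumptions, twenty standard draws add up to 100-200 mL of blood, whereas twenty microliter-scale samples total only a fraction of a milliliter.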

References:

1. Trypanophobia (fear of Needles): Symptoms & treatment. Cleveland Clinic. (n.d.). Retrieved September 17, 2022, from https://my.clevelandclinic.org/health/diseases/22731-trypanophobia-fear-of-needles#:~:text=Trypanophobia%20is%20the%20extreme%20fear,or%20avoid%20necessary%20medical%20care

2. Makam, A. N., Nguyen, O. K., Clark, C., & Halm, E. A. (2017). Incidence, Predictors, and Outcomes of Hospital-Acquired Anemia. Journal of hospital medicine, 12(5), 317–322. https://doi.org/10.12788/jhm.2712

3. Koch, C.G., Li, L., Sun, Z., Hixson, E.D., Tang, A., Phillips, S.C., Blackstone, E.H. and Henderson, J.M. (2013), Hospital-acquired anemia: Prevalence, outcomes, and healthcare implications. J. Hosp. Med., 8: 506-512. https://doi.org/10.1002/jhm.2061

4. Mayo Foundation for Medical Education and Research. (2022, February 11). Hemoglobin test. Mayo Clinic. Retrieved September 17, 2022, from https://www.mayoclinic.org/tests-procedures/hemoglobin-test/about/pac-20385075#:~:text=The%20healthy%20range%20for%20hemoglobin,to%2015%20grams%20per%20deciliter

5. Tackling Hospital-Acquired Anemia. AACC. (2016, April). Retrieved September 17, 2022, from https://www.aacc.org/cln/articles/2016/april/tackling-hospital-acquired-anemia-lab-based-interventions-to-reduce-diagnostic-blood-loss

6. Herbots, N., Suresh, N. C., Khanna, S., Narayan, S. R., Chow, A. A., Sahal, M., Ram, S., Day, J. M., Pershad, Y. W., Thinakaran, H. L., Culbertson, R. J., Culbertson, E. J., & Kavanagh, K. L. (2019). Optimizing Homogeneous Thin Solid Films (HTSFs) from µl-Blood Droplets via Hyper-Hydrophilic Coatings (HemaDrop™) for Accurate Compositional Analysis via IBA, XRF, and XPS. MRS Advances, 4(46-47), 2489-2513. https://doi.org/10.1557/adv.2019.398

7. Balasooriya, T., et al. (2020, October 24). New algorithm for small volume, fast, accurate blood analysis (FABA) for blood diagnostics via hand-held XRF on drops rapidly solidified into uniform thin films. Bulletin of the American Physical Society. Retrieved September 17, 2022, from https://meetings.aps.org/Meet-

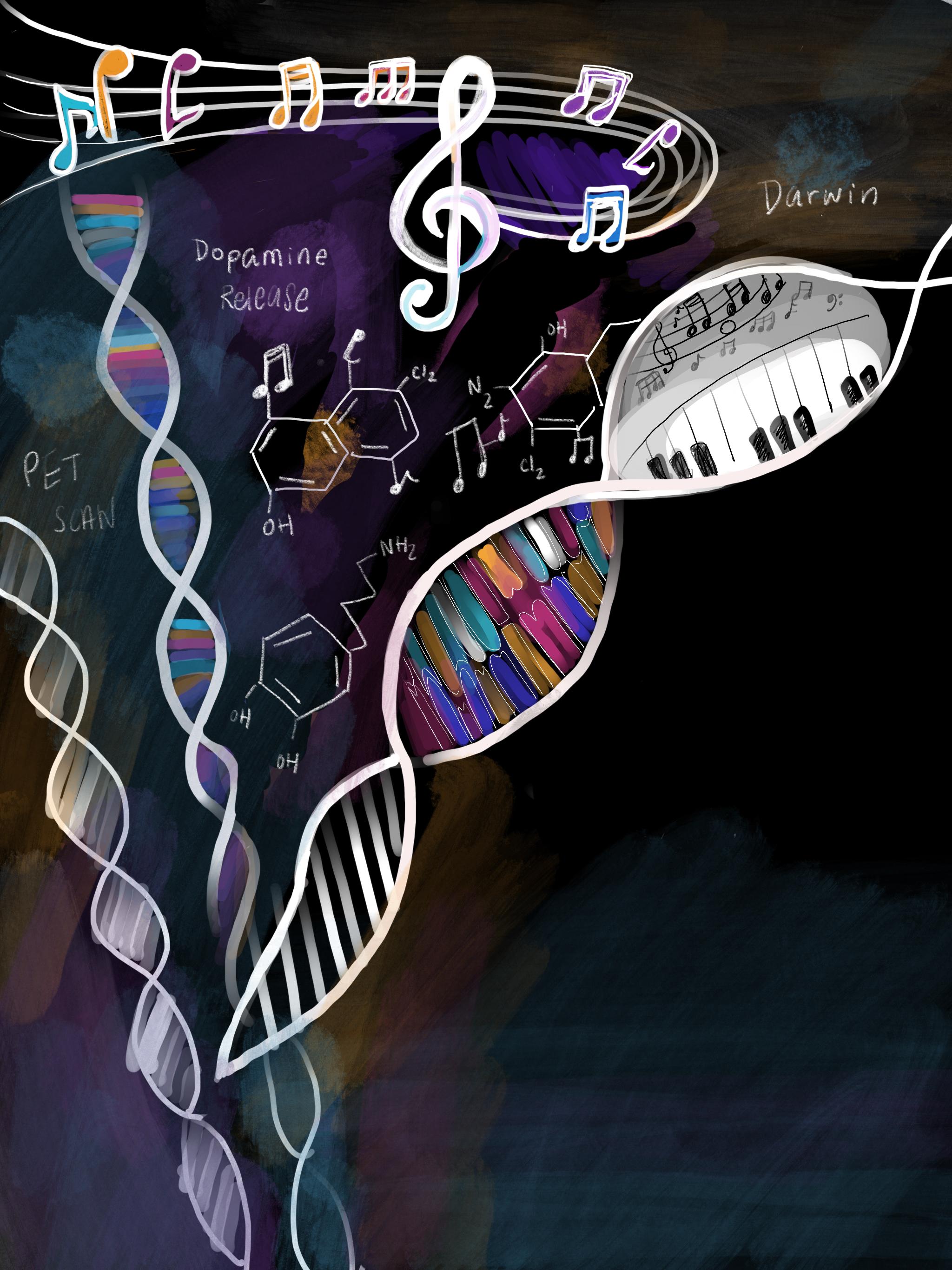

THE SOUND of SCIENCE

Written by Angel Latt

Illustrated by Ananya Raghavan

If you’re like me, you probably have earbuds in as you walk from class to class, pump-up jams blasting as you work out, or background music playing aloud as you cook. Music is timeless. People have been creating and listening to music for ages. We often fill the silence with songs and tunes that ease our discomfort and stress or simply lift our moods. Yet, how have we become creatures who crave these beautiful melodies? What is in these notes and chords that keeps us coming back to them?

One explanation for why we enjoy music comes from evolutionary theory. Darwin proposed that sexual selection is the reason for our musical capacities: “Musical notes and rhythm were first acquired by the male or female progenitors of mankind for the sake of charming the opposite sex” [1]. Some have argued that music making is a reasonable index of biological fitness, since creating music demonstrates physiological fitness. Another theory suggests that music arose from humming or singing in order to maintain infant-mother attachment. The “putting-down-the-baby hypothesis” holds that humming or singing was a comforting signal that the mother was nearby in the absence of physical touch. Some even claim that music helps humans cope with life’s transitoriness and existential anxieties. Music can also simply be a way of passing time [2].

When we listen to our favorite tunes, our brain is actively engaging with each chord. Harvard Medical School neurologist and psychiatrist David Silbersweig, MD noted how different regions of the brain are stimulated as we listen to music [3]. The temporal lobe processes tone and pitch. The cerebellum processes and regulates rhythm, timing, and physical movement. The amygdala and hippocampus make connections with emotions and memories. All of these parts work together to create the symphony of notes and chords that we put on repeat.

Sometimes when we listen to music, we feel the hairs on our arms rise with goosebumps and chills run down our spine. This phenomenon is indicative of dopaminergic musical pleasure. In one study that used positron emission tomography (PET) scanning to relate the brain’s reward system to music listening, researchers found endogenous dopamine release in the striatum, which is involved in motor and reward pathways, while participants listened to music [4]. Notably, they also found that dopamine release begins in anticipation of a peak arousal moment in the song, such as a beat drop, chorus, or bridge, in addition to occurring during the peak pleasure itself. Music can also relieve stress. One study found that listening to music before being exposed to a stressor can mitigate the endocrinological and psychological stress response that accompanies our fight-or-flight reaction [5].

Music provides us with these amazing qualities. It comforts us, relieves our stress, and makes us happy, which explains why so many people listen to music everywhere. However, how is our brain able to handle music, especially lyrical music, while we are trying to cook, work out, or even study? Current evidence on background music is not uniform, and there is no one-size-fits-all answer. There are many conflicting results on the effects of background music on cognition and performance. One study found that background music increased task-focus states compared to silence. Another study found that music can worsen cognitive function for those who have a high need for external stimulation and get bored easily, whereas it tends to help the performance of those who have a low need for external stimulation. Additionally, the choice to listen to music should depend on the difficulty of the task at hand. According to the Yerkes-Dodson law, a moderate level of arousal optimizes performance. So if adding music provides you with a balanced level of arousal while you are working away, you will most likely benefit from listening to music in the background. However, if the task is intensive and requires increased concentration, listening to music, especially complex lyrical music, may decrease performance by pushing arousal too high [6].
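The Yerkes-Dodson reasoning can be made concrete with a toy model. The sketch below is not taken from any of the cited studies: the quadratic inverted-U shape, the 0-1 arousal scale, and every number in it are assumptions chosen only to show how the same music could help on an easy task and hurt on a demanding one.

```python
def performance(arousal: float, optimum: float) -> float:
    """Toy inverted-U: 1.0 at the optimum, falling off quadratically, floored at 0."""
    return max(0.0, 1.0 - (arousal - optimum) ** 2)


# Hypothetical values on a 0-1 arousal scale, chosen only for illustration.
BASELINE_AROUSAL = {"easy": 0.2, "hard": 0.6}  # arousal produced by the task alone
OPTIMUM = 0.5                                   # assumed moderate, optimal arousal
MUSIC_BOOST = 0.3                               # assumed extra arousal from music

results = {}
for task, arousal in BASELINE_AROUSAL.items():
    silent = performance(arousal, OPTIMUM)
    with_music = performance(arousal + MUSIC_BOOST, OPTIMUM)
    results[task] = (silent, with_music)
    change = "helps" if with_music > silent else "hurts"
    print(f"{task} task: music {change}")
```

In this toy setup, music moves the under-stimulating easy task toward the optimum, while it pushes the already demanding task past it.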

Some genres of music have become popular for increasing productivity. Classical music has long been hailed as great study music, and there have even been studies on the “Mozart effect” for increasing spatial reasoning skills [7]. Another study found that participants who listened to classical music had an increase in the secretion of dopamine, a neurotransmitter involved in reward, as well as increases in synaptic function that create more neural connections, thus making for an overall pleasant studying experience [8]. Additionally, the famous “LoFi Girl” on YouTube has been acclaimed by students worldwide for having not only a relaxing, rhythmic tempo but also repeated and predictable tracks that keep listeners actively engaged in their tasks [9].

So while you might be listening to Beethoven’s “Symphony No. 5 in C Minor” as you work on your pset, your friend might be listening to Taylor Swift’s Midnights album. What is happening in your brain when you’re listening to your favorite tunes? One study had participants listen to various genres of music (classical, country, rap, rock, and Chinese opera) as well as each participant’s pre-reported favorite song. The results showed that when music was disliked, the precuneus, a region of the parietal lobe responsible for preference, was relatively isolated from the rest of the brain in fMRI imaging. Similarly, global efficiency, a measure of how widely activity spreads across the brain, was high in the auditory cortex, a region associated with processing sound, only for the Liked condition. When participants listened to their favorite song, the hippocampus, a region associated with memory, became functionally distinct from the auditory cortex, whereas in the Liked and Disliked conditions the activation of the hippocampus was relatively low. This shows how preferences for different types of music and even specific songs can drastically affect the neural connections in your brain [10].

Whether or not you’re an avid music enthusiast, we all come across music every day in our lives and react to it even if we don’t notice it. With more artists and different


Aida Razavilar Co-Editor-in-Chief

Written by Charlie Bonkowsky

Illustrated by Aruna Das

Potential conversations about human behavior in Lincos, from Dr. Freudenthal’s book creating the language. [8]

Figure 1. CRISPR DNA editing system diagram [2].

Figure 2. Trends in CRISPR publications from 2005 to 2021 [2].

Written by Julia Goralsky

Written by Lauren Goralsky Illustrated by Audrey Acken
