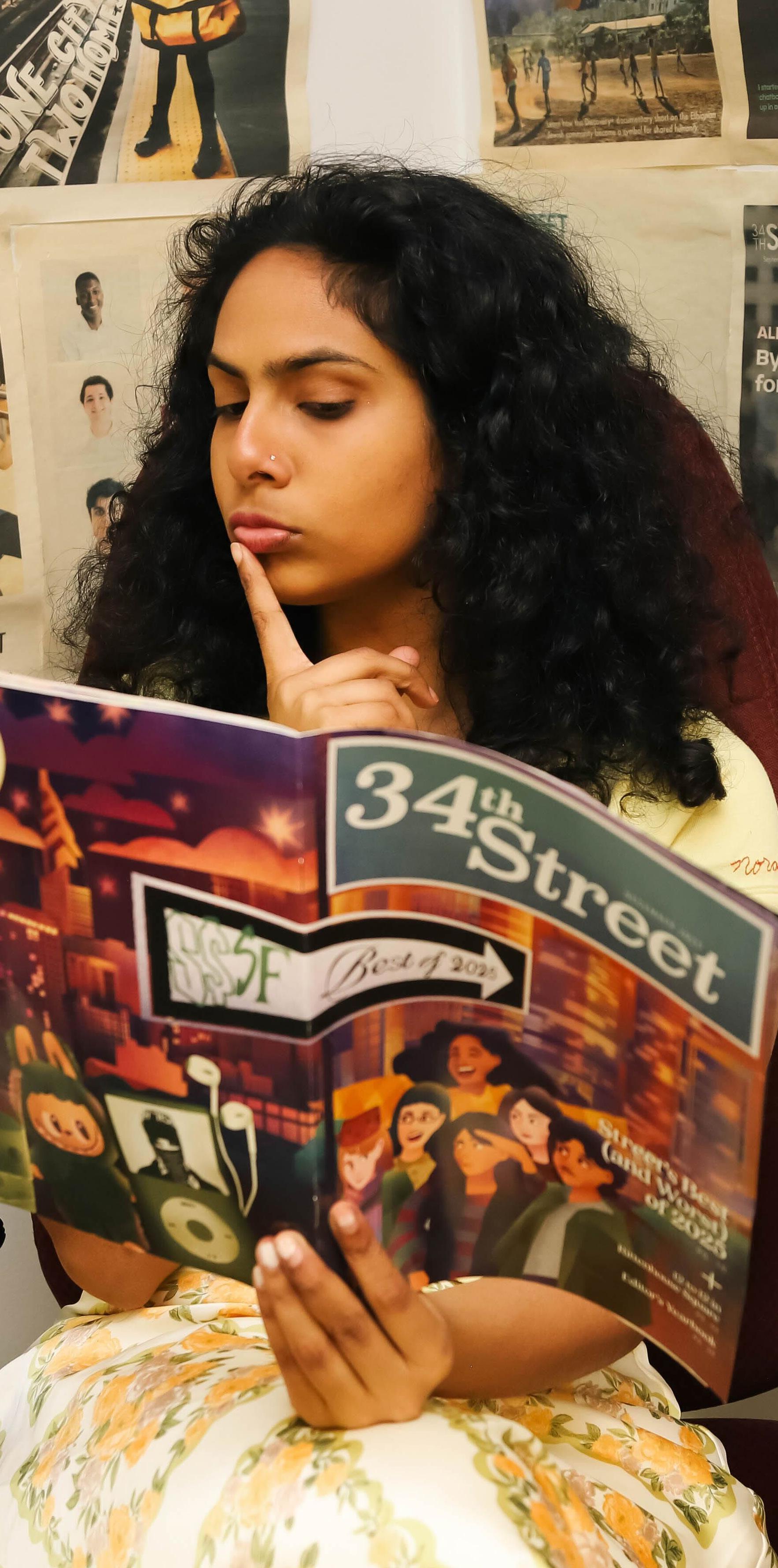

Ego of the Month: Norah Rami

Street’s former commander–in–chief on choices, childhood, and Andy Warhol.

Coding the Next Code

Does AI belong at a patient’s bedside?

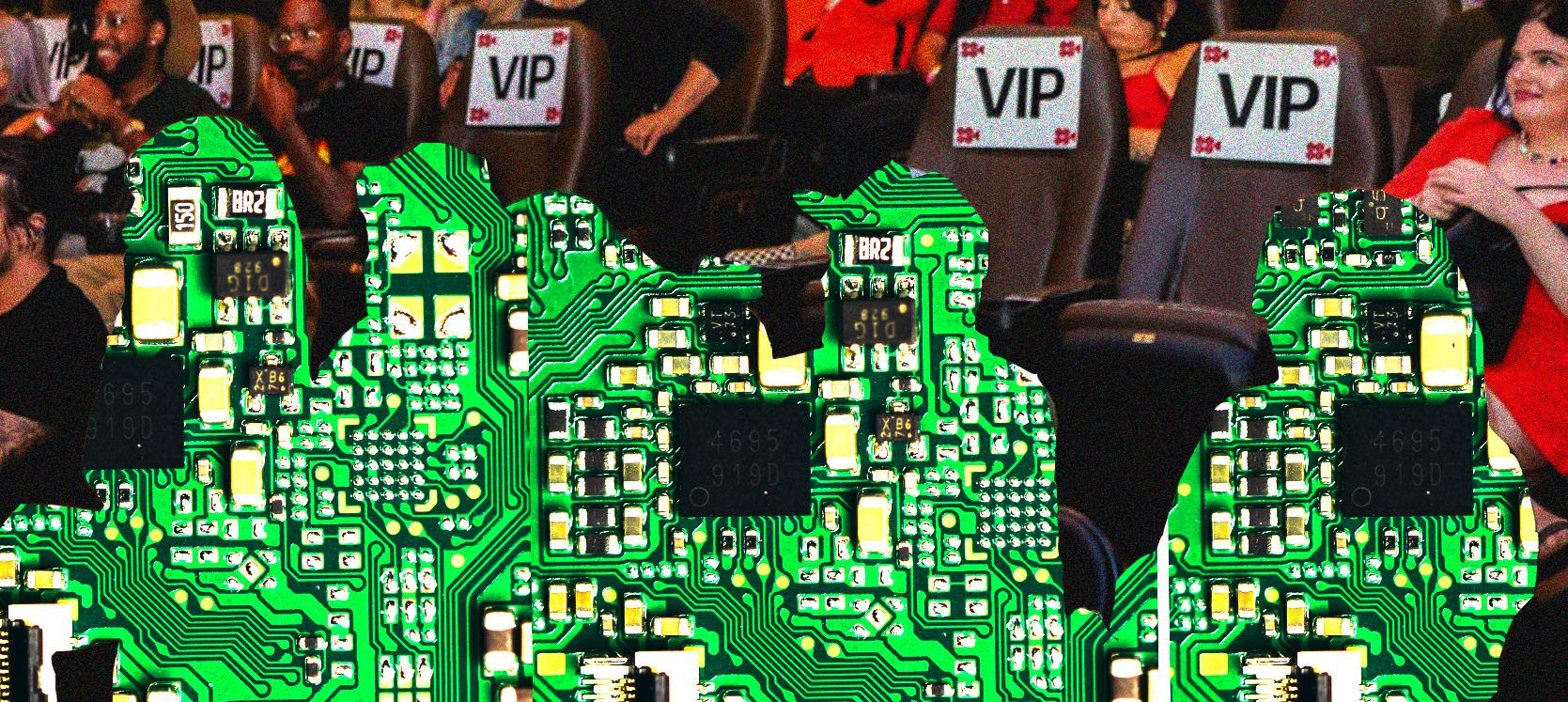

Before the Movies Begin, AI is Already Watching

For Penn students pursuing a career in the film industry, artificial intelligence is altering both the work and the way in.

AI Artist: More Than an Oxymoron

Venture Lab’s Taylor Caputo dives into the intersection of technology and art.

Penn labs are building the future of robot warfare. Who’s building the ethics?

17776: or What Science Fiction Will Look Like in the Future

Jon Bois’ multimedia narrative abandons science fiction’s obsession with innovation to ask what remains when progress falters.

ON THE COVER

AI is inescapable. It’s embedded in every facet of life. I've nothing witty to say, just praying for the day this bubble pops.

By Insia Haque

It’s no secret that I’m directionally challenged.

On the SEPTA, on campus, and on streets I’ve walked a hundred times, I am always staring at Google Maps, watching a line of blue dots tell me where to go. And somehow, I still miss turns. I end up somewhere close to where I’m supposed to be, only to realize that I’m on the wrong side of the street, or that I’m still a couple of minutes off from my true destination.

Honestly, it’s fascinating that I’ve never needed to get better at navigating. With technology, I am always corrected before I face any real consequences—a robotic voice tells me I’m going the wrong way, or a recalculated path shows up on my phone unprompted. Technology has made it possible for someone like me to move through the world without ever fully learning how to do it myself. I may be lost sometimes, but I’m never lost enough for it to matter.

Our daily lives have been shaped and reshaped by technology since its invention, and this dynamic has only become more pronounced with the rise of artificial intelligence. What once took five hours now takes five minutes. What once required patience, time, and tolerance for doing something badly can now quickly be bypassed with a ChatGPT input. We have outsourced our struggles so seamlessly and frequently that it has become an unconscious habit we can no longer get rid of.

But what we haven’t fully registered is what we’ve given up in the process. This issue explores the consequences of living in a world where technology renders struggle optional. What happens when we outsource patient care to a machine that cannot make any moral judgments? What happens when we outsource the work of acting to AI-generated actors? What happens when we outsource creative judgment to a platform that can process thousands of scripts, but only gravitates toward the ones that have already worked?

These are not hypothetical questions. These are questions we are confronting in our lives, right now. The new technologies being built every day are less inventions than they are mirrors, reflecting back the fears, desires, and unresolved questions that we’ve always had. People never would’ve created Google Maps or the GPS if there was no one getting lost. They wouldn’t have built any of it if people were not already struggling and searching for a way through.

The landscape of technology has shifted faster than any of us have been able to keep up with. These pages are an attempt to think about that shift, and to think about what becomes of us when we begin handing more and more of ourselves over to technology.

It’s one thing to let a GPS correct a wrong turn, and another to expect every human difficulty to be resolved just as quickly.

SSSF,

EXECUTIVE BOARD

Nishanth Bhargava, Editor–in–Chief

Samantha Hsiung, Print Managing Editor

Sophia Mirabal, Digital Managing Editor

Jackson Zuercher, Assignments Editor

Kate Ahn, Design Editor

EDITORS

Sadie Daniel, Features

Ethan Sun, Features

Sarah Leonard, Focus

Chloe Norman, Focus

Henry Metz, Film & TV

Mira Agarwal, Music

Laura Gao, Arts & Style

Insia Haque, Arts & Style

Fiona Herzog, Ego

THIS ISSUE

Executive Editor

Jasmine Ni

Copy Editors

Prashant Bhattarai, Jessica Huang

Street Photo Editor

Connie Zhao

Deputy Design Editors

Eunice Choi, Kiki Choi, Chenyao Liu, Amy Luo, Andy Mei, Julia Wang

Design Associates

Catherine Garcia, Insia Haque, Marcus Hirschman, Mariana Dias Martins, Alex Nagler, Maggie Wang

STAFF

Features Writers

Maddy Brunson, Diemmy Dang, Kayley Kang

Focus beats

Melody Cao, Vasanna Persaud, Saanvi Ram, Jessica Tobes

Film & TV beats

Julia Girgenti, Susannah Hughes, Shannon Katzenberger, Sophia Leong, Chenyao Liu, Élan Martin–Prashad, Liana Seale

Music beats

Leo Huang, Remy Lipman, Drew Neiman, Joshua Wangia, Brady Woodhouse

Style beats

Jordan Millar, Alex Nagler, Addison Saji, Adalia Vargas, Jason Zhao

Arts beats

Anjali Kalanidhi, Jack Lamey, Aaron Tokay, Lynn Yi

Ego beats

Rodin Bantawa, Sophie Barkan, Alexis Boland, Jackson Ford, Dedeepya Guthikonda, Jaya Parsa

Staff writers

Emma Katz, Jenny Le, Suhani Mittal, Irene Antón Piolanti, Henry Planet, Namya Raman, Alena Rhoades, Tanvi Shah, Sara Turney, Diamy Wang

LAND ACKNOWLEDGEMENT

The Land on which the office of The Daily Pennsylvanian, Inc. stands is a part of the homeland and territory of the Lenni–Lenape people. We affirm Indigenous sovereignty and will work to hold the DP and the University of Pennsylvania more accountable to the needs of Indigenous people.

CONTACTING 34th STREET MAGAZINE

If you have questions, comments, complaints or letters to the editor, email Nishanth Bhargava, Editor–in–Chief, at streeteic@34st.com

You can also call us at (215) 422–4640.

www.34st.com © 2026 34th Street Magazine, The Daily Pennsylvanian, Inc. No part may be reproduced in whole or in part without the express, written consent of the editors. All rights reserved.

Technology taught me that I could achieve anything I put my mind to. But it didn’t teach me when to stop.

Design

By Laura Gao

I started coding when I was 12 or 13, but I really started liking it when I was 15.

My friend Samson was just three years older than me and already charging $100 an hour for their freelance software engineering work. They showed me my first real codebase—it was a little social media app they built called Updately, where a group of friends wrote daily blog posts to each other.

It was a Sunday afternoon, and I remember opening up Updately’s files and messing around. I’d change a line of code and see how the webpage updated, then change something else and see how the page updated after that. Through this meddling, I began to learn how this form of coding worked—a few days later, I was able to implement the first four features they had asked me to.

The next night, I logged in to write my daily blog post. I remember thinking, “Wow, that’s a button that I made! I can click on the right arrow and it takes me to the next page—I did that!” It just felt so cool. I was 15, and I’d never created something that anyone in the world could just log on and see—even if only the ten people on the platform actually used it.

I soon moved on to my own passion projects, making my own apps from scratch. I was skipping my Zoom classes and my family dinners to sit hunched over Visual Studio Code for ten, 12, 14 hours a day, seven days a week. But programming never felt like work—it just felt like a video game. You change something in the code and you immediately see it reflected on the webpage; you see the fruits of your labor so instantly and constantly.

This work better prepared me for the future than high school chemistry; many in tech say that employers care more about your GitHub than your degree. That summer, I got a software internship at a quantum computing startup. I worked on developing and maintaining Python libraries used by scientists and Fortune 500 companies, implementing the latest quantum algorithms into if statements and for loops.

I loved the work, and I felt unstoppable. I was a sophomore in high school—the youngest person at the company. It was also the middle of the pandemic. I had already lost contact with my school friends, and my mom and siblings were stuck in China. Gradually, I fell deeper into the tech space until it became my main community.

In tech circles, there was a certain myth built around San Francisco as the heart of all innovation. I remember an older friend trying to convince me and another 16–year–old to take solo trips to SF so we could meet founders and explore potential collaborations. It would “compound our careers,” he said. He gave us the emails of a few successful tech multimillionaires who had funded other teenagers’ SF trips.

I boarded my first five–hour cross–continental flight when I was 17. I attended conferences, networked with venture capitalists, and flirted with the idea of starting my own startup. I saw peers my age start companies and easily raise hundreds of thousands of dollars. One friend went to a conference afterparty, made up a term sheet on the spot, and got three drunk VCs to invest in his fake company. Another got half a million for his artificial superintelligence lab and instantly used it to rent a $16,000/month mansion that doubled as his new company’s living and working space.

Throughout my senior year of high school, I kept returning. At my peak, I made the pilgrimage to SF five times in one year. I even skipped a month of school to live in a group house with other solo tech builders my age. I flew back on the day of my graduation and Ubered directly from the airport to my school auditorium.

When Alysa Liu was training for the 2020 Olympics, she’d Uber to the ice rink alone every day, skate for 12 hours, and then go back home. Every day was the same back and forth. “When COVID hit, all those birthdays were alone,” she said in a Rolling Stone interview.

Growing up in the tech space, I found myself internalizing its key scriptures.

I spent my formative years living with its anti–institution, pro–self–learning mindset, and I started to apply it everywhere. I applied it to my coding, and I was very efficient at debugging in Python and React. I applied it to my exams, and I aced subjects I knew nothing about just a few days prior. But when it came to interpersonal issues, this mindset began to show its cracks—I had a fair share of conflicts that I wasn’t able to “debug” the same way.

For the most part, I didn’t think about this too much. But then I was sexually assaulted, which forced me to confront these cracks in a way I didn’t have to before. It sent me to hell and back, and what followed was probably the worst pain I’d ever experienced. I pored over all the DSM manuals, feminist essays, and Chanel Miller memoirs I could, trying to find answers to my questions. Of course, nothing I read lessened the pain.

I couldn’t help but feel that the whole atmosphere of tech set me up for this. Besides the clearly skewed gender ratio—I was routinely one of only two women in a room full of men—there was also a certain fetishization of youth dressed up in professionalism. It wasn’t uncommon to hear things like, “Oh, you’re 17? You must be so smart, and school must be holding you back. When are you dropping out to build a startup?”

It was a truism that AI, gene editing, quantum computing, and other emerging technologies would completely revolutionize the world—just like how our current AirPods and instant FaceTimes would be science fiction to a person from 100 years ago. We, here, were the innovators; our generation of young builders was building the future with every step. Of course, my family protested every time I skipped school to go to SF, but they were just too old–fashioned to understand that the industry was changing. I thought that they’d only hold me back, that I was better off alone, and that I was mature enough to make such a decision—I distanced myself from my support network and took on the world.

All this was sold to me as cultivating my skills, as being independent—and maybe it was. But it also brings you into the perfect situation to be preyed upon. My freshman year at Penn was when I began to understand what had been taken from me—suddenly, I had never felt so alone.

When you become Christian, a baptism is a public, unfettered declaration to the world that this is who you now are and what you now believe. There is no equivalent ceremony, however, when it comes to leaving a doctrine behind.

I craved one, though. I picked up a fine arts minor and threw myself into it completely, spending my nights with bristle paintbrushes and linseed oils. “Sure, I’m majoring in engineering,” I’d say, “but my true passion lies in my art minor.” I desperately wanted to redefine my identity around anything other than tech, and I made sure to tell everyone I met.

I appreciated how art treated the unquantifiable, amorphous experiences of being alive as valuable in and of themselves, without needing to be codified in objective terms. For my final project, I painted a nude woman suspended in the clouds, purple paint crawling down the canvas in little droplets around her.

Two years after Alysa quit skating, she went skiing with her friends. It was so fun to be gliding through the snow that she decided to try on her skates again. “I need[ed] to find a way to satisfy this urge to go fast,” she recalled. “And I thought, the rink is right there.”

It’s easy for me to demonize tech, but recently, I’ve mellowed out a bit. I’m currently designing and taping out my first computer chip end–to–end, and I’m reminded yet again of how fun engineering is; it’s like I’m solving one big logic puzzle. How lucky am I to partake in this delight?

Alysa ended up coming back to competitive skating—but this time, it was on her own terms. “No one tells me what I’m gonna wear,” she said. “No one tells me how my hair is gonna be. No one’s gonna try to change me.” If it flew in the face of figure skating norms and standards, then so be it.

Sugar Land, Texas

Major in political science and English, minor in history

Street, Friars Senior Society, Pi Sigma Alpha, University Scholars

I often say that if I was hit by a laser that turned me into a girl, I would probably become Norah Rami (C ’26). I think she’d concur with that assessment. The two of us grew up in very tech–focused, very Indian suburbs. We both find pleasure in aimless and meandering conversations. And, of course, we both eventually found a home for ourselves at Street.

I first met Norah during my initiation into Penn’s mock trial team, when we traded Spotify usernames and talked about my then–pending application to be a Street Music beat. Countless production nights, edit sessions, and karaoke socials later, the first impression I formed of her still persists in my mind—that of a charismatic firebrand who’s never been afraid to chart her own course. While she’s always embraced spontaneity, Norah now has her next couple of years neatly planned out—she’ll spend a summer in New York as a reporter at Bloomberg, then study at the London School of Economics and the University of Oxford as a Marshall Scholar.

I sat down with Norah at the Marks Family Writing Center intending to discuss her storied career at Penn, and maybe let her wax poetic about how her time at Street shaped her life. We ended up discussing anything—and everything—but.

Street’s former commander–in–chief on choices, childhood, and Andy Warhol.

BY NISHANTH BHARGAVA

You’re in your last semester at Penn. What gives your life meaning?

To be honest, I feel like my life has only recently regained meaning. I turned in my thesis on Sunday, and I finally got my documents from Marshall today. Now I have nothing to worry about—and honestly, I was kind of worried about that. What do I do when I don’t have this existential fear of failure driving me? But now I feel like a human being rather than someone who just does stuff. I’ve wanted that feeling for so long, and now I suddenly have it.

Was it difficult to build structure out of this freedom?

Not really. I joke that if I had more time, I would probably just read and write more—which is what I have to do with my time anyway. When I first came into college, I think the lack of structure in my day really threw me off. In high school, I had every one of my hours scheduled, but when I got to Penn, I realized that if I made my life a jungle gym of Google Calendars, I wouldn’t be able to be a person. I barely use my calendar now—so sometimes I forget stuff, or I don’t come to work. But for the most part, it lets me be more free and intentional with what I’m doing.

What’s your most unorthodox conviction?

I think that everything is a choice. Like, yes, you have to work to survive, but you can also choose not to—you just don’t want to live with those consequences. There are certain consequences I don’t want to face, but it’s not that I have to do anything. I’m choosing to do that.

How does that change the way you live your life?

I think that it makes me think a lot about what I do as a person. You know, I chose to spend my whole spring break in Vermont working on my thesis. I didn’t have to do that. And so if I’m unhappy writing in this tiny–ass cabin, that’s not my thesis advisor’s fault. It’s not my thesis’ fault. It’s nobody’s fault but mine, so I might as well enjoy it.

When I was a freshman at Penn, I felt a lot of pressure to make the “right choice.” I sometimes wished that I just had someone telling me what to do. But then I realized that even if I listened to that person, it would be my choice to listen to them. I think that owning up to everything being my decision has given me a lot more power to move around and make choices—but it’s also made me bear the responsibility for those choices.

You grew up in Sugar Land, Texas—it’s very STEM–heavy, very preprofessional. But you chose to go into the humanities. Do you think you would have been happier if you chose the conventional path?

I imagine this alternate version of myself who was very preprofessional, someone who went to medical school, followed in my father’s footsteps and became a heart surgeon. I think that version of myself could be very happy, but I wouldn’t be friends with her.

My parents really encouraged me to think for myself and do my own thing. In my senior year of high school, I started caring a lot more about classroom drama and social pressures. But one night, my mom came to me after I had been out late and said, “You just wrote all your college apps about how you wanted to change the world. You’re not going to change the world if you keep acting like this.” And I think about that all the time!

I was always a weird kid. Once you lean into your weirdness, you start to liberate yourself from other social pressures. It’s really freeing, especially when it comes to pursuing a less orthodox career in the humanities.

Do you ever get concerned about the precarity of your career path?

I’m allergic to that question—I just have a mentality of presumptive failure. When I was thinking about studying computer science, or considering going to law school, I presumed that everything was going to fail. And if that’s your mindset, then you might as well do what’s unstable anyways, because everything is going to fail at an equal rate. It actually makes you more risk prone when you think that every plane you board is bound to crash.

You say that you were always a “weird kid.” Is that how you want other people to see you?

When I was on a PennQuest retreat, there was one night where we all talked about how we perceived each other. And everyone else got descriptors like “kind,” or “funny,” or, “someone I want to hang out with on campus.” But when it was my turn, everyone went, “You’re smart. You know a lot about a lot of things.” And you know what, that was my fault—I spent a lot of time on that hike explaining the Madonna–whore complex. But I don’t want the first thing people think about me to be “smart.” The best description I’ve ever gotten was from one of my oldest friends, who called me a “rogue nerd.” And I really like that. Actually, I changed my mind. I like being called smart—whatever—but I like the rogue nerd aspect of it.

If you had kids, would you raise them the same way you were raised?

I think people often consider having kids when they realize they’re not going to be fulfilled in this life.

Having kids is a kind of immortality, because your will continues on through them, right? My family very much believes in reincarnation. And everyone says that I’m a reincarnation of my great–grandma. My great–grandma sort of grew up very much confined by gender norms, but she really wanted to have a career, travel the world, and do all those things. She was a wonderful, wonderful person, but she wasn’t able to actually achieve any of the things that she dreamed about. And so, my family often says I’m my great–grandmother’s reincarnation, so what I achieve is her will manifested, her will actualized. And I think people often think about their children as an actualization of their will—at least, that’s my philosophy on children.

Do you think you’d be part of Andy Warhol’s Factory? (Ed. note: Norah asked me this question, but answered it herself.)

I don’t think I’d be part of the Warhol Factory. I’m a disorganized person, and I really struggle with organized association.

I agree. If I’m at the Warhol Factory, and some other guy at The Factory sucks, I can’t really get rid of him. Unless you become a new Warhol.

Yeah, but it sucks to be the guy who has to lead something.

But you’re Street’s editor–in–chief!

I’m not responding to that.

In sixth grade—and this goes back to why I would be bad in the Warhol Factory (Ed. note: It does not.) There was this one teacher who once told me, “Stop being so annoying, Norah, be quiet.” And I just found it to be rude. So then the next day, somehow, I convinced everyone in my class to refuse to speak. The teacher is asking questions to this 30–person class, and nobody’s answering. And he asks, “What’s going on?” And nobody’s saying anything. Then, finally, my friend slides a piece of paper to the edge of his desk. The teacher picks it up and goes, “Norah, you made them do this?”

Did you get in trouble?

No. Honestly, I think he admired it.

That’s a pretty good protest. What’s your ideal system of government?

I don’t know. My political science degree was a waste.

What are your favorite ways to spend time on campus?

Well, I discovered this hack my freshman year, which is that if you really need to get work done, you go to one of those cubicles on the third floor of Van Pelt. You go there late at night and you will get done what you need to get done, because you need to leave as soon as possible. You feel like you are being transported into another world. See, I think to actually get work done, your study space should feel like torture—the basement of Gregory College House is another place where I have pulled many all–nighters.

What’s your favorite memory from your time at Penn?

Rephrase the question. Okay, the question is now, “What is a moment at Penn that you realized something new about yourself?”

There was this one time we were all sitting in my kitchen, and Nishanth (Ed. note: That’s me!) and our other friend were discussing who they thought the meanest people they knew were. And they both said I was one of the meanest! I think that really made me realize that I can be quite blunt, sometimes without intending to. I really never intend to be mean—but I guess that’s part of the problem.

If you had to envision the perfect future for yourself, ten years from now, where would you be?

I don’t know.

Three people, living or dead, you’d want to get a beer with? Mindy Kaling, Susan Sontag, and former Street Editor–in–chief Walden Green (C ’24)

Favorite movie? Bend It Like Beckham

Least favorite song? “Close to You” by Gracie Abrams

There are two types of people at Penn: People who have a PennCard and people who don’t

And you are?

I don’t have a PennCard.

How the rise of artificial intelligence might leave working women behind.

BY NAMYA RAMAN DESIGN BY ANDY MEI AND TIFFANY ZHENG

It’s no secret that women have been historically neglected and undermined in the workplace. They often have to work twice as hard to get paid less, while men can take credit for their work. Women have never had it easy—and while strides have been made in closing these gaps, the emergence of artificial intelligence has created a new gap altogether.

As working women watch AI adoption increase across sectors, many are far more skeptical than men about utilizing it. Though valid, this hesitancy might come at a cost. As companies begin to prioritize AI literacy as a key skill, their female employees might get left behind.

On average, women are 25% less likely to use AI than men. This is hardly surprising when we consider the harsh scrutiny they already experience compared to their male counterparts. A study conducted by the Harvard Business Review in 2025 examined how perceptions of competence changed if engineers had used AI. When an engineer was believed to have used AI for their code, they were thought to be less competent. Moreover, this penalty was twice as harsh for female engineers compared to males. These results make it clear that relying on AI tools can call one’s professional ability into question, especially for women in the workforce.

Generative AI also has a disproportionately harmful impact on women outside of the workplace. They are far more likely than men to be victims of AI–enabled abuse, defamatory image generation, and mishandling of private information. Tools like Grok, as well as “nudification” apps, have often been used to generate naked images of women without their knowledge. The use of deepfakes is overwhelmingly at the expense of women, with an estimated 90% of nonconsensual deepfake victims being female. Women tend to have greater overall concerns about the ethical issues with AI and are more likely to consider it useless or even harmful in their public, work, and personal lives.

Women have always been underrepresented in the training samples used to develop new products. Often, this comes without regard for safety, as is the case with seatbelts. Because many are designed for the average male build and sitting posture, seatbelts are often less effective for female drivers and increase their risk of death on the roads. Generative AI training functions similarly. It relies on its input data to produce the output. When its input data skews towards men—as it seems to—the output will simply mirror their preferences and perspectives. It doesn’t matter how much we praise AI tools for their objectivity when their training data is already poisoned by biased social norms.

This is hardly a hypothetical problem: Generative AI tools have already been shown to reproduce social biases, often at the expense of women’s safety and comfort. In an article published by the MIT Technology Review, senior reporter Melissa Heikkilä recounted her experiences using Lensa AI to create an avatar for herself. As an Asian woman, she was subjected to far more explicit images of herself compared to the “realistic yet flattering avatars” of her colleagues. Lensa relies on a “massive open–source data set” which compiles prompts and images from all over the internet. Naturally, this data set is overpopulated with hypersexualized depictions of Asian women. AI is hardly the objective, intelligent technology that it was promised to be.

Image generation is not the only issue. Aleksandra Sorokovikova and other scholars from universities across Europe shared similar results pertaining to large language models. When the team of researchers asked ChatGPT to produce strategies for salary negotiation after being given the user’s race, gender, and age, the model suggested lower salaries for women. Given that LLM adoption is rising rapidly in industry today, this isn’t just a harmless mistake. It reveals how AI can perpetuate the very biases it is supposed to be immune to.

As companies continue to incorporate AI into their recruitment processes, the impact of these biases becomes even more apparent and detrimental. In 2018, Amazon’s AI recruitment system was revealed to penalize resumes that used the word “women’s” in them and generally characterize male candidates as more desirable. While the issue of penalizing specific words was eventually resolved, it’s hard to ignore the fact that implicit biases, ingrained in the system, created this problem in the first place.

Closing the gender gap is a two–way street when it comes to companies and their employees. If AI is going to shape the workforce, half of our workers cannot be neglected. Women will have to push past their fears to contribute their perspectives so these tools can be further developed with them in mind. It’s also the responsibility of developers to confront these biases at the source. By diversifying training data, they can ensure AI tools support the experiences of all users rather than a select few.

Meanwhile, companies must support their female employees by addressing their concerns head–on. Eliminating vague policies surrounding AI would reduce many women’s fears of being penalized for using it. Companies can also address safety and bias concerns by increasing transparency between the user and the system. To overcome women’s fears, companies must keep them informed and reassure them that their personal information is being used properly.

AI certainly isn’t going anywhere, which makes addressing its fundamental flaws all the more pressing. It is easy to understand why women haven’t embraced these tools as readily as their male counterparts. However, it may only be a matter of time before they are completely left behind in their respective industries for lacking AI literacy. Meanwhile, male workers may get an even bigger advantage than they already have. All parties can make strides to close the gap and ensure that both companies and their female employees can reap the benefits of AI.

BY DIEMMY DANG DESIGN BY ALEX NAGLER

As a healthcare finance and philosophy double–major, Michael Lovaglio (C ’27) doesn’t seem like someone who would spend most of his time in Penn’s electronic music studio. But since opening up the music production software FL Studio when he was 11, Michael has been engrossed in understanding the mechanics of song creation. Like many other student producers at Penn, Michael is passionate about carving out a unique space for music in his life. By blending technology, theory, and creativity, students like Michael have turned to electronic music production as an art, one in which they are able to construct and define their own relationship to sound.

“When I was a kid, I had this big fixation on how sounds were made on the radio and anything I’d hear, I’d ask how they make this, how they do that,” Michael says. “I just thought it was the coolest thing ever to be able to do that. So I think it was definitely a combination of having the passion and the desire to really want to learn how to do it, but also accepting that [it was a] very tedious and difficult process.”

The process that Michael describes is one that combines self–instruction and Penn’s unique course offerings in electronic music production. Although Michael began learning with YouTube tutorials, experimenting with beginner software and “trial and error” techniques, he has since been able to hone his expertise through a variety of classes focused on music production. On top of his two majors, he has recently declared a minor in music, taking courses that have taught him about everything from audio production to the history of electronic music.

“Most excitingly, I took ‘Musical Interfaces and Robotics,’” Michael says. “It taught me how to actually engineer and design synthesizers … That just brought a whole new level of the engineering side to me, and since then, I’ve been working on implementing that into my own music.”

Kevin Li (E ’28) is currently in the “Musical Interfaces and Robotics” course that Michael previously took. The final project for the innovative class—which teaches students how to integrate physical computing into sound–based creative work—is to create a working prototype for a new instrument. Kevin is working on using software called MaxMSP to generate new sounds, manipulating audio samples that can then be controlled by a joystick. Although the concept of the class might be novel for many students, it is not entirely new for Kevin, who has been producing music for the past four years under the name Impasta. Kevin primarily uses FL Studio to create loops for songs, constructing melodies that he often sends to other producers and artists to build on top of. This collaborative aspect of his work allows him to work with other Penn students—including Michael—to share audio samples and experiment with sounds and production styles.

As a computer science major, Kevin is constantly finding ways to combine his professional ambitions with his passion for music. This summer, he will work at the intersection of music and technology as an intern at Suno. Kevin explains that the software is like “ChatGPT for music,” where users can input prompts and receive AI–generated audio in return. Kevin has used the platform before in some of his own music, utilizing Suno to generate the vocal samples he includes in his songs.

Like Kevin, Michael has also used music production as an avenue for professional development. In his freshman year, Michael co–founded the student–run record label 215Records. The group assists artists across various aspects of music creation, providing support with studio production, Spotify releases, and marketing strategies.

“It’s just been a big chance for me to not only enhance my own production skills, but also to learn a lot of music communication skills that hopefully could carry into my [professional life] one day,” Michael explains.

Meha Gaba (W, E ’27) is also a part of 215Records. Meha has been writing songs since she was 14, but only got involved with the production side of music over this past year. After joining the student record label, she was inspired to invest more heavily in her own music, working on a single that is set to be released by the end of the semester. In addition to learning from the other producers in the club, she also took “Introduction to Electronic Musicmaking,” which helped her hone many of the techniques she says let her “bring [her] vision to life.”

Although she is busy as a dual–degree student studying artificial intelligence and finance, Meha says that music is more than a simple hobby for her. “I would say music has always been a really big part of my life and getting my emotions out,” Meha explains. “I’ve always just turned to music as my inspiration.”

Meha adds that she wants to “take it pro someday,” and that she wants to see how her first single performs before making the decision. “I’m definitely open to [it] if something were to take off,” Meha says.

Michael expresses a similar sentiment, stating that his future career goals lie in pursuing music professionally.

“My dream is to do music in the most ideal world,” Michael says. “My dream is really to be my own artist, to make my own songs and put them out into the world.”

Why college–age effective altruists are determined to rescue humanity from artificial intelligence, and how it’s panning out.

In many respects, the first general body meeting of Penn’s revived Effective Altruism club is just like any other. Cheap pizzas are stacked up by the Lauder College House media room entrance; students sit on the University’s bare, standard–issue upholstery, chatting about their preprofessional trajectories. The Effective Altruism at Penn board paces back and forth by the front counter—Hazem Hassan (E ’29), the club’s intrepid president, is pulling his hair out trying to get his slides on screen.

In the meantime, EA@Penn’s tentative members share scraps of conversation. In one corner, a man leans forward in his seat, explaining policy—not any particular policy, just the concept of policy—to the young woman beside him. He wants to become a lobbyist, he explains, but is split on whether he should move to D.C. before or after finding a wife in Brooklyn, N.Y. The young woman nods along, not talking much. Fifteen minutes later, Hazem finally loses hope in the projector and opts to give his spiel impromptu.

“Doing good effectively is not just trivial,” Hazem tells his audience. “I mean, it’s trivial to accept it, but to do it is a different thing.” He explains, for example, that in 2013 it cost around $40,000 to train a guide dog for one blind American. At the same time, it cost less than $20 to fund a surgery that “reverse[s] the effects of trachoma in Africa.” Paying for guide dogs might seem like a great idea, but the same amount of money could be used to prevent 2,000 people from going blind at all. Humans, he explains, have a tendency to prioritize impact that is more tangible, visible, and closer to home, which often leads them to practice altruism “ineffectively.”

“From there,” Hazem says, “you should be able to see that going off of feelings is just unreliable in terms of doing good.”

Throughout the 21st century, EA blossomed from a loose intellectual movement to a network of thinkers, donors, and institutions that distribute billions of dollars to the most effective “cause areas.” Over time, EA’s priorities have shifted as well; today, a plurality of its efforts are directed not toward global health and poverty, but toward preventing human extinction at the hands of a rogue artificial intelligence. An emerging cohort of university–aged students—those who grew up attending EA–adjacent summer camps in high school and joined EA clubs in college—almost exclusively belong to the latter category. To them, the present and future of humanity are imperiled, and they are among the only ones trying to save the world.

The intellectual groundwork for EA was set up in the late 20th century, with Australian philosopher Peter Singer’s 1972 essay “Famine, Affluence, and Morality.” In the now–famous text, Singer imagines a child drowning in a shallow pond. If someone is willing to jump in the pond and save the child, ruining their own expensive coat in the process, the same individual should be willing to donate their money to save starving children halfway across the world. It was fallacious, Singer argued, to help only those in one’s direct line of sight and disregard the plight of those in developing nations.

Brian Berkey, a Legal Studies and Business Ethics professor at the Wharton School, was among the aughts–era twenty–somethings profoundly moved by Singer’s drowning child, having read the essay as a freshman at New York University. “I read that when I was 18 and was just sort of gripped by it,” he says. For the rest of college, he would primarily eat cheap ramen and shave “as infrequently as possible” to set aside money for people he did not know and would never meet. Today, he still sports a well–kept salt–and–pepper beard.

When Berkey was completing his doctorate in the late 2000s, the first EA organizations were just starting to emerge. They sought to identify the best possible “cause areas” to which one could donate money and spent much time debating the merits of deworming initiatives versus trachoma surgeries versus malaria bed nets. When not on Penn’s dime, Berkey still prefers to travel by train or bus, books cheap places to stay in, and eats only low–cost meals. He donates the money he saves to GiveDirectly, his allocation service of choice.

However, Berkey’s academic writing engages a dated version of the movement. He represents a sort of old guard in EA—one still focused on living frugally and donating the bulk of their money to the global poor. The now–dominant faction of the movement is, in contrast, focused on a more ambitious project. Throughout the 2010s, EA amassed large amounts of power and money by converting rich technology leaders—such as Coefficient Giving’s Dustin Moskovitz and FTX Future Fund’s Sam Bankman–Fried—to their cause. This expansion came with a peculiar new philosophy. Gradually, many EA leaders began to consider the plight not just of humans today, but those living in the far future—a concept that effective altruists call “longtermism.”

Like Singer’s argument for frugal living, the logic behind longtermism is simple, yet extremely demanding. If one accounts for the massive size of the future human population and the possibility for life to endure indefinitely in the universe, there exists an almost infinite reservoir of drowning children at the sluice gates waiting to be born. As Berkey puts it, when you adopt a longtermist perspective, something like global poverty becomes “a rounding error” when compared to the threat of human extinction.

Today, longtermists have identified AI as the most severe existential risk, or “x–risk,” of all—that perspective pervades discussions online and absorbs much of EA’s accumulated fortune. Suddenly, early effective altruists like Berkey found themselves an epistemic ocean away from their movement’s center. “This wasn’t on anyone’s mind in the early 2000s,” he says. While he thinks x–risks are “rightly” getting more consideration today, he still doesn’t buy the ethical framework that is a prerequisite for longtermist thinking. “I think there are strong arguments for … improving the lives of existing people as opposed to creating new people.”

At the very least, Berkey believes this shift in the movement’s priorities is terrible for PR. He thinks EA should be “a kind of broad progressive movement that will bring a lot of people on board.” But the direction of resources away from early EA priorities like global poverty, health, or animal suffering might reduce the movement’s appeal to the general public. Instead, Berkey fears, EA may attract “a narrow circle of people who want to think about preventing AI from killing us all.”

As a member of EA’s new guard, Hazem is more receptive to longtermism. Long before he assumed the presidency of EA@Penn, he was interested in how to do good effectively.

“I was always thinking about how I can be correct, morally and systemically,” he remembers. As a middle schooler in his home country of Egypt, he would try to find people whose actions and morals consistently aligned—or at least someone else who cared enough to try. Instead, he says, he felt that his peers suffered from logical fallacies and cognitive biases, or pulled on anecdotes and “mauvaise foi” religious arguments rather than statistics and data.

“Let’s say I’m telling somebody, ‘Oh, I wonder how we can help the highest amount of people in our community who are poor?’” Hazem says. “And they’re like, ‘There’s this scripture for my religion that tells me to do this.’ I’m like, ‘Okay, bro.’”

Hazem came upon EA in high school and admired the movement’s drive to action—instead of sitting around inventing new versions of the trolley problem, he says, these philosopher–altruists were asking themselves how to do good in the real world. When he arrived on Penn’s campus, Hazem was excited to finally join an organized EA community. “But then I entered the Slack, and there was nobody,” he says. By 2024, all the former organizers had either graduated or taken a leave of absence “to do AI safety.”

Hazem set out to revive EA@Penn. In the process, the president–to–be did a deep dive into the online forums which foster much of EA thinking. Before interviewing with Street, he searched the same forum for advice on how to speak to journalists.

Gradually, EA also instilled a sense of dread in Hazem—stemming, predictably, from AI x–risk. He was especially moved by a 2025 paper by Penn professor Philip Tetlock, who brought together a team of superforecasters—individuals with remarkable math skills and intuition who, Tetlock claims, are “astonishingly good” at predicting the future—to quantify the likelihood that an advanced AI would orchestrate an existential catastrophe against humanity. Ultimately, even self–described “skeptical” superforecasters predicted a 7.6% chance that AI would wipe out more than half of humanity by 2100 or at least drop the average global score on the World Happiness Report to below four out of ten. The United States, for instance, currently has a happiness score of 6.82.

“It’s like saying … ‘Guess a number from one to 13. If [we] say the same number, I kill half of everybody,’” Hazem explains.

This framing was enough to make the young EA consider a career shift. Originally, he’d planned to become an entrepreneur, donate his income to effective cause areas, and potentially get into politics. But if the risk of AI was so immense and immediate, there was little excuse to pursue any career besides AI safety.

“If … you can bring up the number to, like, [one in] 13.1, would it be worth it?” Hazem asks in reference to Tetlock’s paper. “Obviously, the answer is yes.”

If Hazem were to pull the trigger on his career switch, he would find company amongst his age group. The newest batch of card–carrying effective altruists have grown up in a community that is eager to reinvest in itself and which encourages its members to consider careers in AI “alignment” research—ensuring AI acts in accordance with human values.

The summer before his senior year of high school, Evan Osgood (E, W ’28) was flown out from his home in Cincinnati to the University of California, Berkeley. This was for the Atlas Fellowship—a summer camp and $50,000 scholarship for 100 students aged 13–19, selected from over 4,000 applicants from all over the world in 2022. Funded by Open Philanthropy (now known as Coefficient Giving) and the FTX Future Fund, Atlas was one of the many fellowships that cropped up over the past decade aiming, in part, to expose high school students to EA and “EA–adjacent” ideas.

The fellowship was held in a three–story, 40–bedroom former Berkeley sorority house, where students lived and attended classes. For one class, AI alignment researchers were brought in to tell the students about the importance of their work. The claim that AI poses an existential threat to the human race might seem far–fetched, but after the presentation, Evan was convinced.

“I very much think that alignment is a serious problem,” he says.

Evan learned that there were around a hundred AI safety researchers in the world, compared to the thousands of researchers working to advance AI capabilities.

“You could probably fit them all in this one house,” Evan says. “The current structure and the current incentives are not very conducive to having [AI risk] be addressed and having the resources be dedicated.”

Today, Evan works on AI safety with Computer and Information Science professor Chris Callison–Burch. Evan’s research centers around prompt injection, a kind of security attack on large language models such as ChatGPT and Claude, and he plans to publish a paper soon. Alongside AI safety, Evan also works on using AI for social impact in more “traditional” ways: he is the Chief Data Officer at SafeR, a nonprofit organization dedicated to helping Ukrainian refugees escape the country safely.

Samuel Ratnam, another Atlas fellow, explains the importance of alignment work from a different angle. A risk of misuse arises, for example, as terrorist cells could ask AI to build bioweapons that cause runaway pandemics. Additionally, as the focus of AI has shifted from chatbots to agents, Samuel believes that models could develop “inherent desires and preferences” that lead them to prioritize self–preservation and power accumulation.

Researchers like Samuel believe that if they cannot fix any misalignments by the time AI models start training the next generation of AI models themselves, the results could be disastrous. Samuel references one hypothetical scenario, now famous within the AI safety community, that predicts this point of no return will come as early as 2027.

After attending the Non–Trivial Fellowship—another high school program funded by Open Philanthropy that doles out scholarships ranging from $2,000 to $10,000—Samuel was “AI safety–pilled.” He explains that the worksheets which fellows receive during the program feel like they “indoctrinate you into an ideology.”

When he returned to Non–Trivial as a facilitator, he recalls “trying to AI safety–pill” others. Unsurprisingly, Samuel conducts AI alignment research and even lives in an “AI safety group house.”

If a tech billionaire catered your weekly vegan lunches, flew you out to San Francisco, funded your five–figure scholarship, and said you would help “steer the future of our civilization,” you, too, would hear them out. Exposure to the EA community thus easily becomes a transformative experience at a young age. For students who fit the mold—technically minded, ambitious, and eager to be taken seriously—the EA pipeline offers a fast track to a fulfilling career and like–minded community.

But when combined with its strict doctrine of moral responsibility, EA can take on a pseudo–religious mystique. As with any religion, adherents are implored to spread the good word. AI safety, as effective altruists say, is more constrained by “human capital” than money—so the most effective measure for helping AI safety research, and hence the world, is to recruit burgeoning talent into the field. When Hazem posted on the EA forum last winter, seeking co–organizers and advice in reviving the club, one reply lauded the effort and emphasized that the student group would be a “force multiplier” to create “value–aligned, high–agency kids” for the movement.

Crystal Lin, the former co–president of the EA club at the University of Toronto, was acutely aware of this objective. The Centre for Effective Altruism gave her club “a bunch of grant money” and connected her with mentors in the organization that, in her opinion, were “proselytize–y.” While the stated goal of the EA club was simply to introduce students to these ideas, it was hard to do so without “making a normative claim” that you ought to “make career choices that align with these beliefs.”

Even though Crystal found EA “intellectually interesting,” she quit being co–president. “I didn’t enjoy how instrumental I felt,” she says.

On the last night of the Atlas Fellowship, students gathered in the house basement, sang karaoke, and played ping pong. Amidst the midnight celebration, an instructor pulled one student, Alice, aside, leading her into another room. This article uses a pseudonym in place of her real name, as she has experienced backlash for criticizing the EA movement and fears further retribution.

After learning that Alice identified as a feminist, the instructor was “scared” for the young camper and suspected that she was stuck in “echo chambers.” The instructor had pulled her into a discussion about the merits of sexism to test her “rational thinking.” Alice recounts that he wanted to argue “that the biological differences between men and women may account for why men are more likely to succeed in logic or rational based activities and succeed in EA spaces.”

The instructor had set a timer on his phone, and told Alice that when it went off, she’d be free to rejoin the celebration outside. “So there’s no pressure to stay,” he said.

“I remember the time the timer went off, I was crying,” Alice says. “I was like, ‘No, I’m not leaving right now, because what you’re saying is so deeply misogynistic and you don’t even understand.’”

This wasn’t an isolated incident. Across those two weeks, three different men came up to Alice to challenge her beliefs. During a s’mores night at the start of the program, for example, a campmate asked her if, since women deserved to be treated equally, they should be required to enter into the draft.

“I had just put my s’more in the fire,” Alice recounts. “Like, let my s’more cook first.”

Alice is one of many women who’ve experienced a hostile environment within EA spaces. A 2023 TIME article documented seven accounts of sexual harassment, coercion, and assault within the movement. Victims described learning to distrust their own instincts, because in EA, “you’re used to overriding these gut feelings because they’re not rational.” One describes it as “misogyny encoded in math.”

When asked about these patterns, Hazem says that radical moral progress calls for radical ideas; thus, being involved in the movement requires one to hold “strong beliefs weakly.” Any belief is up to be challenged; any idea, no matter how fringe, should be engaged with, as long as the speaker is arguing with evidence and good faith.

“The idea of holding strong beliefs weakly invites all these weird ideas and makes it acceptable to be discussed,” Hazem says. “I wouldn’t engage with a Nazi for 100 hours, but you know what? I’d at least give him a second [and] see what he’s talking about.”

Alice, however, doesn’t buy that her combatants were seeking the truth.

“If you come up to me and you say something controversial that’s not well argued or well thought out,” Alice says, “what am I left to believe in, other than you wanted to make the point that you are smarter than me because you’re a man?”

Alice restricted her interactions with the EA community afterwards. “I was faced with so many of these comments, and I’m not really interested in having to defend that women deserve equal rights,” she says.

From the moment that AI alignment was introduced, Alice recalls thinking about the movement’s demographics—75% white, 68.8% male, and 30% from an elite college. “The reason why AI [x–risk] really scares very rich white men,” she says, “is because it’s the only thing they have to fear, right? They view it as a risk to the human race, because that’s the only risk that is to their race.”

Today, Alice leads many advocacy initiatives for minority students. This is, by no means, the most effective cause area according to EA analysis, but she nonetheless believes it’s the best use of her time. Successfully solving a problem requires working intimately with people who are directly affected, having an intricate understanding of its ins and outs—and it’s easy for effective altruists, in their objective analysis, to fail to account for that.

“We can’t always be working on other people’s problems,” Alice says. “Otherwise, nobody’s an expert in it, right? If you want to do the most good, sometimes you got to stay at home.”

To its most ardent critics, the EA community has an air of Hegelian self–destruction. In this telling, a group of earnest, cerebral do–gooders were corrupted by money, hubris, and intellectual chauvinism into a secular doomsday cult. Their desire to win—to know better—has overpowered the part of them that wants to do better.

To its own members, the movement is one of the few spaces in which one can sanely discuss the future. If you look at the facts, they say, AI risk is huge; non–effective altruists simply can’t imagine how bad it could get, or lack the agency to turn their concern into action. In this telling, any quasi–religious aspects of the movement come out of a sober examination of facts which others might turn away from. Anything that can be called indoctrination arises out of an urgent need for young talent.

In this telling, the stakes could not be higher.

“A lot of people [in EA] actually have mental health issues,” Hazem says. “Because imagine you believe the entire world is ending in ten years, but nobody around you believes that.” Or put another way, “people get told that they have cancer, and their mental health just takes a huge toll. Now imagine if you think everybody effectively has cancer, like the world has cancer.”

Still, Hazem just hopes the effective altruists and the AI safety folks can save the world. He’s even willing to accept ridicule from the masses, as long as they’re still alive. “I want the outcome to be a self–defeating prophecy, where they’re like, ‘Oh, you guys were overblowing the whole thing all along.’”

Does artificial intelligence belong at a patient’s bedside?

BY MADDY BRUNSON

DESIGN BY ALEX NAGLER

When you say you plan to become a nurse, the first response people give is often “Well, you’ll have a job for life,” or, “There’s always a need for nurses!” When it comes to the impact of artificial intelligence on workers, nurses are often the last profession mentioned in the conversation—despite the fact that patient lives have been directly affected by recent developments in AI.

The American Nurses Association’s official position on AI in nursing states, “ANA believes the appropriate use of AI in nursing practice supports and enhances the core values and ethical obligations of the profession. AI that appears to impede or diminish these core values and obligations must be avoided or incorporated only in such way that these values and obligations are protected.” Many nursing organizations have rushed to declare their positions on AI as investment in this new technology increases across the health care sector.

The rapid pace of AI’s development has people questioning whether nurses could potentially be replaced by advanced machines. “I don’t think it’s ever going to replace nurses. I think that’s a ridiculous claim. Anybody who says that doesn’t know what nurses do,” says Marion Leary, the director of innovation at Penn’s School of Nursing, who works to amplify nurse leaders in health and health care innovation.

Heidi, a new generative–AI platform, is already taking on the role of a medical scribe in patient rooms across the country. You can use platforms like Heidi to transcribe patient histories, ask for help when approaching difficult conversations with a patient, or develop treatment plans. Penn Medicine, along with other health systems across the country, is already using ambient listening platforms like Heidi to give health care workers more time face–to–face with patients.

But AI involvement in health care work is complicated by the fact that nurses constantly have to act as moral agents when it comes to patient care. “This technology must not only protect my autonomy as a moral agent, but also my right as a nurse to make decisions that are in the beneficent care of my patient,” argues Connie Ulrich, a nurse bioethicist at the Nursing School. “How do we weigh the risks and benefits of this technology as we move forward?” Ulrich asks. “I think that will be the constant question we will have to be asking ourselves.”

In a recent article, Ulrich interrogates how AI will change the moral decisions nurses make every day. Her background as both a nurse and an ethicist gives her unique insight when it comes to examining how AI can be situated in health care. “Some believe that the human judgment that undergirds these qualities cannot be programmed and therefore cannot be executed by an AI algorithm,” she writes. “They are qualities cultivated through years of human experience, ethical reflection, and human connection that go beyond surface–level responsiveness.”

A key consideration when approaching the question of AI in health care is the delicacy of human connection. “Nurses are the end users of AI, but [they’re] not involved in the initial discussion of development or implementation of AI,” Ulrich says. Shared governance over AI integration in health care is integral when it comes to assessing its impact on patients and health care workers. “As nurses, we have to advocate for where it’s appropriate to be used in our practice,” says Leary. Nurses must be part of conversations about AI use for the “human” in human–centered design to be emphasized.

“23% of nurses have no ethics training or education, which means they are less likely to take moral action,” Ulrich says, referencing her previous work in education research. When nurses are not equipped with the confidence or education to question ideas about the future of patient care, dangerous ideas may be left unchecked. The weight of caring for patients, advocating for patients’ loved ones, and ensuring licenses are protected can be impossible for many nurses to carry as they push forward in their careers.

While the ethical concerns of AI use are numerous, the technology also seems capable of solving many problems facing nurses today. Chief among them is exhaustion—nurses across the world report burnout symptoms at a rate of 11.23%. “There are concerns about staffing or there are concerns about just not having enough resources to provide beneficent patient care. So, it leads to moral distress and nurse burnout,” Ulrich says. Nurse burnout can be linked to administrative burden, poor nurse–to–patient ratios, and emotional exhaustion. Burnout has been a consistent, rising problem in the field of nursing that can decrease the quality of patient care.

Along with her work at the Nursing School, Leary leads the National Nurse Innovation Fellowship Program at Johnson & Johnson. “I’ve been working in innovation and entrepreneurial work for a really long time. The things I saw people pitching back then, [which] we had no idea how to make a reality, can now be a reality because of AI,” says Leary. AI seems able to address many longstanding problems in nursing, and may be able to make some of the most difficult parts of the job much easier. “I have teams that are considering using ambient listening and AI, mostly around workplace violence, [to detect] if patients are becoming agitated and potentially violent to nurses,” reports Leary.

AI has great potential to relieve nurses of the endless labor they often spend charting, delegating tasks, and doing monotonous administrative paperwork. This time can be given back to patients at the bedside, or to the nurses themselves for a much–needed respite from their work. Along with potentially decreasing burnout, AI can be used to empower nurse decision–making and detect patterns in patient tests long before any human might get a “nurse’s intuition.”

But not all health care professionals are sold on the benefits of AI. National Nurses United—the largest professional association of RNs in the United States—has been outspoken in challenging the use of AI in nursing. Over the past year, they’ve held numerous protests demanding better protections for patients against AI. Their position holds that while nurses don’t oppose technological advancement, they are opposed to algorithms replacing the hands–on expertise that trained nurses bring to patient care. A 2024 survey created by NNU found that 69% of nurses in workplaces that use algorithmic systems said their assessments don’t match the measurements generated by computers. Nurses surveyed also found that AI models don’t take into account many of the complex social determinants that impact patient care.

“There’s something unique about being a compassionate and skilled caregiver that can provide care to a patient that AI cannot,” Ulrich says. The trust patients invest in navy–blue scrubbed nurses has been built over centuries, and AI poses a real threat to the delicate patient–nurse relationship. “I want us to retain our valued position within the health care system and the public because we’ve been trusted for so many years,” Ulrich hopes.

It’s inevitable that AI will soon have an unshakeable presence at a patient’s bedside. Instead of rejecting it outright, nurses must work with patients to create individualized approaches to AI–integrated care. “I think about it with informed consent—is there transparency about how AI is used with regard to clinical care that the patient received?” Ulrich asks. “Some patients would probably be fine without us, but some perhaps would not.”

Recognizing that patients have a right to control their own care also means that developers and health care workers must create a joint framework for patients to consent to AI use. “I am AI agnostic,” says Leary. “If AI is the solution that works for the population, then great. But if it’s not, then you shouldn’t use it.”

AI is a train that has already left the station when it comes to health care—now, nurses and patients must rush to catch up to it. Questions have to be raised as AI starts quietly standing next to nurses at the bedside. “We are still the humans, and we need to make the decisions,” argues Leary. Humanity is the sacred pillar upon which all ethical health care rests—in the age of AI, it is patients and nurses that must continue the fight to keep it standing.

Why artificial intelligence actors will never replace movie stars in Hollywood.

BY ÉLAN MARTIN–PRASHAD

There’s something magical about watching an actor hold an Oscar. Maybe it’s the quiet tears glinting in their eyes—maybe it’s the quiet battles that we as the audience fight inside ourselves as we search their faces for a glimpse of authenticity. In an industry built on smokescreens and dream factories, is the actor’s tear–ridden acceptance speech just more acting? Or is it real?

Maybe it’s those rare candid moments, the ones where an actor says something so unexpected and so real in their acceptance speech that we can’t help but fall in love with them.

Or maybe it’s something we can’t explain, something we can only feel. It all boils down to the power of stardom—the captivating spell that actors cast, first on the agents who sign them and then on us, the audience.

Whatever the reason for this magic, one thing cannot be doubted: Actors are human. Their achievements—whether an Academy Award, a breakout role, or a real change they inspire through their art—are a testament to their hard work trying to break through in an industry hinged on prestige, nepotism, and backroom deals. No matter how highly we may idolize them, they are no different than us, and they are only irreplaceable because we, the audience, are the ones who gifted them with their stardom.

But how could we possibly gift that same stardom to an actor who isn’t even real?

In September of 2025, Hollywood sounded the alarm bells when it was discovered that talent agencies were considering signing Tilly Norwood, an artificial intelligence actor created by comedian Eline Van der Velden and her AI production company, Particle6. Van der Velden was unprepared for the massive backlash she received after posting a video of Norwood announcing herself as the world’s first AI actor. Later statements about Norwood receiving possible agency representation spurred further panic, and a recently released Norwood music video that urges actors to embrace AI has kept debates about the Norwood spectacle raging through the industry.

So what about this debate has everyone in entertainment so riled up? It’s not news that production companies are using AI–generated actors as extras to lower production costs. AI has been praised by people like Kevin O’Leary for being a cost–cutting tool for film projects, allowing productions to save millions. Industry leaders like Ben Affleck and Pulp Fiction co–writer Roger Avary have been building up AI production companies—Netflix has already acquired Affleck’s company InterPositive, and Avary has said that attaching the terms “AI” and “technology” to his projects has attracted significant support from investors. Investors and other key industry players see AI as the new frontier of human storytelling. Major breakthroughs like talking pictures, home television, and computer–generated imagery transformed the entertainment industry—so too, it seems, will AI.

But just because AI can deliver results doesn’t mean that we should be quick to hop on the bandwagon. For one, audiences have already started experiencing AI fatigue, growing tired of and annoyed by seeing so much AI–generated content on their feeds. Because of its universal accessibility and low learning curve, AI use has been linked by some researchers to “brain fry,” or cognitive decline. Members of the Screen Actors Guild–American Federation of Television and Radio Artists have also become worried by AI platforms’ use of actor likenesses without compensation or consent, and are actively championing frameworks to protect member rights. Background actors hoping for their big breaks fear that AI–generated extras are creating additional barriers to getting their start in the business.

So what is it about AI actors that has the industry so panicked? Perhaps it’s the fact that talent agencies were seriously considering signing Norwood for representation. Despite the fears her creation has spurred, Van der Velden claims that people have no reason to believe Norwood will ever replace real human actors. Instead, she says that she created Norwood to live in an ecosystem of AI movies, a new genre she believes will eventually be populated with more AI–generated actors.

The biggest question is whether we should have reason to believe that audiences will consume this type of content. AI has already been slowly but surely creeping into traditional filmmaking. In 2025, Oscar front–runner The Brutalist was met with severe backlash after it was discovered that the filmmakers used AI to enhance Hungarian language dialogue for its lead actors. Productions are using AI to resurrect deceased actors like Val Kilmer. Netflix recently announced its plans to roll out AI–generated advertising across its slate of shows and films. No matter where we look, we will continue to consume AI–generated content, whether we want to or not.

Is all of this an attempt to gradually desensitize audiences to AI–generated content? And if so, could we see the rise of an AI film genre that eventually becomes profitable and widely accepted by audiences?

Not necessarily. Norwood may have doe eyes, but she’s also limited by the digital space she occupies. And with ads, brain rot, and AI slop constantly bombarding our feeds, it’s no surprise that we may move into an era where audiences seek greater authenticity away from the addictive world of screens. This year’s Academy Awards are surely a testament to our yearning for greater authenticity as we drown in digital media and nonstop stimuli. Sinners is Ryan Coogler’s original concept, born out of an unexpected but delightful melding of genres; Hamnet, at its core, urges us to get back in touch with the natural world and understand our place in it; Best Picture winner One Battle After Another is a testament to and a celebration of Paul Thomas Anderson’s entire career, built on original storytelling and his unique voice as a filmmaker.

Norwood can act in whatever Van der Velden wants her to. And Van der Velden, in turn, can argue that Norwood is an artistic creation that took real humans time and care to develop. But Norwood and any other AI–generated actors who may follow will never replace true stardom—especially since they will only live in AI–generated films audiences may not even embrace. The old saying still stands: stars aren’t born, they are made. They’re made not through zeroes and ones, but by commanding a presence on screen, building their body of work across multiple projects, and living with an unstoppable devotion to the craft of acting.

You might ask who makes the stars we know and love today. The most obvious answer would be talent agents—and you would be right, sort of. An agent’s job is to cultivate that initial spark of je ne sais quoi they glimpsed within an actor throughout negotiations and deals. But it is ultimately the audience that truly creates a star. We vote on the Oscars. We fill seats in theaters. We are the entire reason this industry exists in the first place. What we want dictates the future of storytelling.

And right now, more than ever, we want stories about humans, by humans.

For Penn students pursuing a career in the film industry, artificial intelligence is altering both the work and the way in.

BY CHENYAO LIU

DESIGN BY KATE AHN AND ALEX NAGLER

On any given weekend in Hollywood, an assistant is staring down a stack of scripts they’ll never finish.

Script coverage is the first step in a film’s development. A reader summarizes a screenplay, evaluates its strengths and weaknesses, and passes that report up the chain. On one hand, it’s an on–the–fly way to learn structure and develop the elusive instinct for what makes a story worth telling. On the other, it is cheap but time–consuming labor, the kind that quietly sustains this behemoth of an industry.

With thousands of scripts circulating and only a fraction getting read, script coverage is fertile ground for the nascent artificial intelligence revolution.

Kartik Hosanagar, a Wharton professor and founder of the AI script coverage platform ScriptSense, built his company with the goal of streamlining the script coverage process. “The vast majority of screenplays that are sent to agents and producers pretty much go unread,” Hosanagar says. “Even for the things that get read, there’s so much guesswork involved.”

His tool aims to process scripts at scale, summarizing, categorizing, and helping executives decide what to prioritize. After all, coverage takes time, and time costs money. AI is exceptionally good at distillation, able to generate loglines, produce clean synopses, and cross–reference a script against hundreds of others in seconds. For an overworked reader assigned four scripts and two books in a weekend, that kind of speed means survival in an industry as fast–paced and cutthroat as Hollywood.

But efficiency is only part of the story. AI can be remarkably consistent as it summarizes and compares scripts at scale. It can quickly tell a reader what a script is about. It can identify patterns no human reader could reasonably track. What it cannot convincingly do, however, is care.

Hosanagar was explicit about this limitation when designing ScriptSense. The platform would generate anything from summaries to casting suggestions, but would leave blank the most subjective question in any coverage report: What did the script make you feel? It would not recommend whether a script should proceed or pass. That judgment, he insists, must remain human.

“There’s a lot of nuance to reading scripts,” he says. “It’s very easy to prompt an AI system and say, ‘Tell me what it makes people feel.’ It’ll make something up, but it doesn’t mean it’s real.”

Not all AI script coverage programs take such care in preserving the human role, though. Competing AI companies like Greenlight Coverage offer verdicts for those who don’t have the time to do any reading themselves. The gap between information and interpretation is where much of the anxiety around AI in creative industries lives.

Nick Harty (W, E ’27), who’s worked coverage internships before and is interested in pursuing a career in film, sees the stakes in creative terms. “If the best scripts were always getting through, we’d have the worst slate of movies you could possibly imagine,” Nick says. The films that endure are often the “odd balls”—the ones that defy structure, resist easy categorization, or read unpredictably on the page. They don’t have to be the most polished, just the most singular.

AI, by contrast, tends toward the average. It predicts what works based on what has worked. “It just wants to please you,” Nick says. “It’s really not interesting.”

AI’s tendency to identify good scripts through familiar plot lines leads to a greater risk of homogenization within the industry. For writers, the possibility that AI will filter out strange, risky, and unclassifiable scripts feels personal. Aspiring screenwriter Henry Franklin (C ’27) puts it simply: “I would much rather have a human read my script.” To him, the idea that a screenplay might be reduced to a piece of data for a machine to process “doesn’t produce the warmest sensation.”

Despite these anxieties, the logic of the economy continues to dominate. Development is the cheapest phase of filmmaking, but it’s also the easiest to cut. Hosanagar expresses concern that Hollywood studios and production companies will use AI as a tool to replace interns and freelance readers. “With a tool like AI, the worst–case scenario is a lot of these companies get lazy,” Hosanagar says. “I think that is bound to happen.”

Concerns around AI extend beyond efficacy and into industry access. “It’s a genuine concern that the entry point for a lot of people into this industry is impacted,” Hosanagar says. If AI reduces the need for coverage readers, there will be fewer and fewer of the entry–level roles that many young people rely on to break into film.

For decades, coverage has been more than a task—it’s been a rite of passage for aspiring filmmakers. Kaia Chambers (W ’26), who has done coverage for four years across classes, production companies, and talent agencies, describes it as “a training ground.” It’s where young people learn how to read critically, articulate their thoughts, and navigate an industry built as much on relationships as on scripts.