DECEMBER 2024

Cutting In

en em ag w an no t t m as se c as ad y? ia bro tr ed s us m e; i nd e: ag IT i su r is sto an is d th an

to the

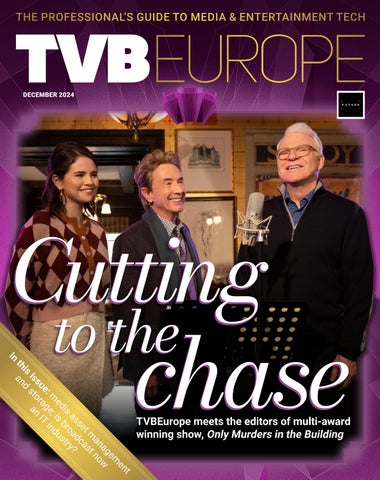

chase TVBEurope meets the editors of multi-award winning show, Only Murders in the Building

t
