Welcome to Issue #127 of TM Broadcast International, which promises to be a rich collage of insights and innovations from the world of broadcast and audiovisual production. This issue features exclusive interviews with industry frontrunners such as TVMonaco, a new player in the broadcasting field, and an insightful conversation with Will Cohen, the VFX maestro behind Doctor Who’s mesmerizing visuals at Bad Wolf, in which he shares his experiences and sheds light on the intersection of storytelling and technology.

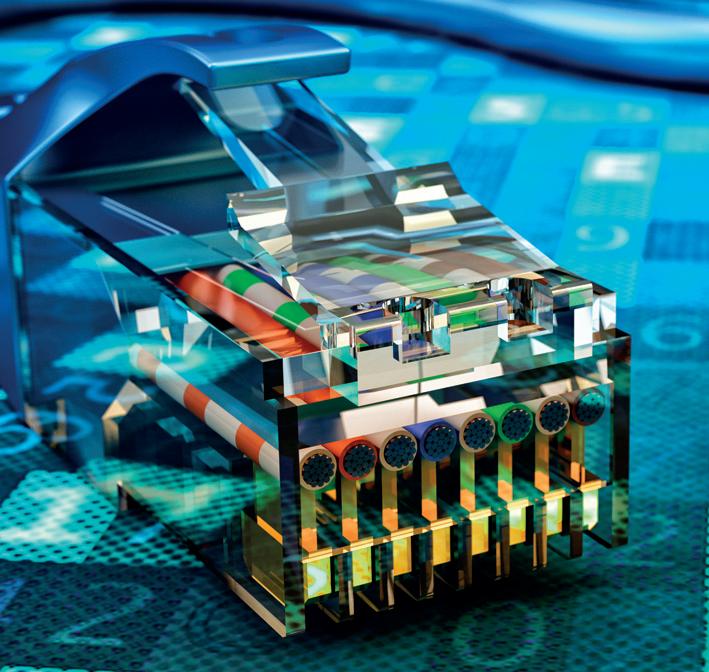

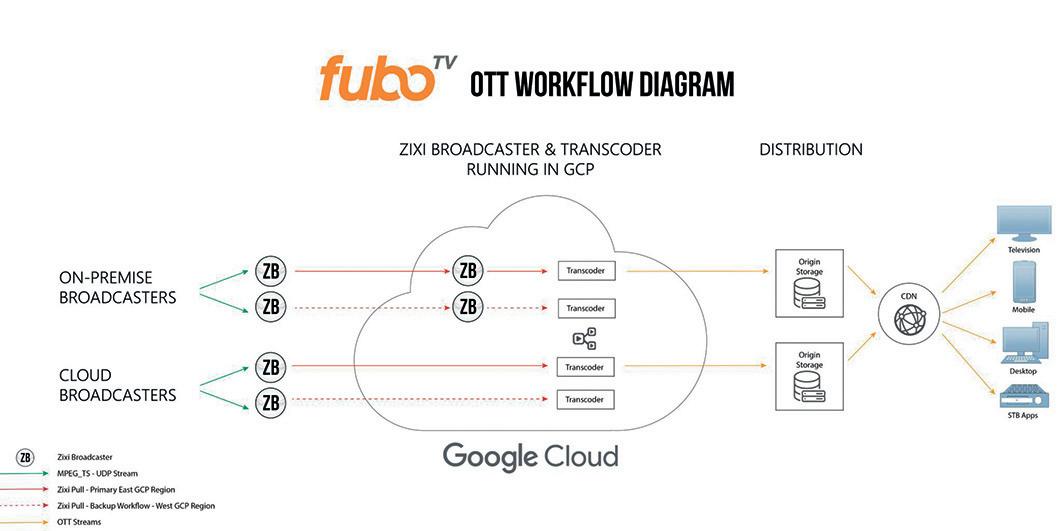

In this issue, readers will also find an analysis of the broadcasting industry’s gradual transition to Internet Protocol (IP) technology, showcasing its role in enhancing the flexibility, efficiency, and quality of content delivery. The article features case studies from leading organizations like the Canadian Broadcasting Corporation (CBC), ITN, Foxtel, and Fubo, highlighting their journeys in adopting IP technology to improve content production, distribution, and collaboration across different locations.

Editor in chief

Javier de Martín editor@tmbroadcast.com

Key account manager

Patricia Pérez ppt@tmbroadcast.com

Editorial staff press@tmbroadcast.com

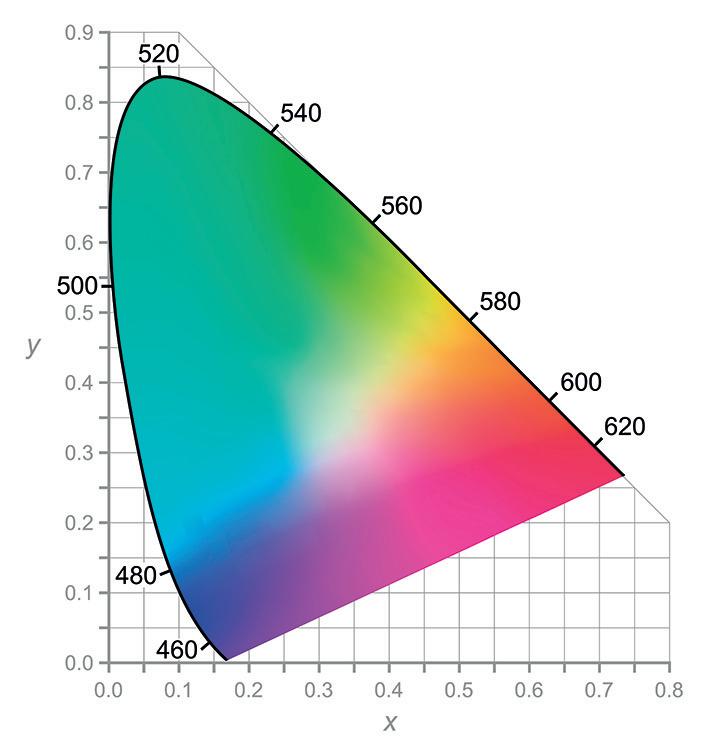

This issue of TM Broadcast International also features specialized articles on “Color in Broadcast”, delving into the technical and aesthetic aspects of color in TV production, and “AI in Content Creation”, highlighting the cutting-edge use of artificial intelligence in crafting compelling content.

Join us as we embark on this enlightening journey through the latest trends, challenges, and breakthroughs that define the dynamic world of broadcast and audiovisual production.

Creative Direction

Mercedes González

mercedes.gonzalez@tmbroadcast.com

Administration

Laura de Diego administration@tmbroadcast.com

Published in Spain

ISSN: 2659-5966

#127 March 2024

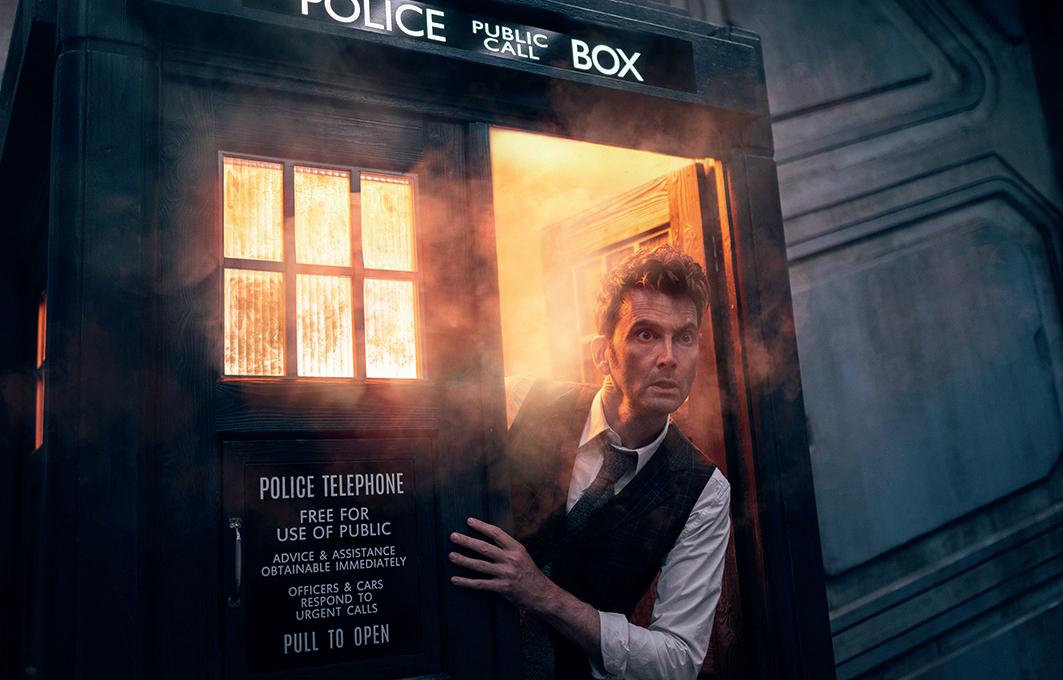

In an exclusive interview with TM Broadcast International Magazine, we delve into the creative mind behind the visual spectacles of one of Britain’s most beloved shows, Doctor Who. Will Cohen, the mastermind VFX producer responsible for bringing the Time Lord’s adventures to life, shares his experiences and insights from working on the series, especially during its landmark 60th anniversary specials. Cohen’s journey with Doctor Who is a fascinating blend of nostalgia, innovation, and creative camaraderie, highlighting the challenges and triumphs of modern visual effects storytelling.

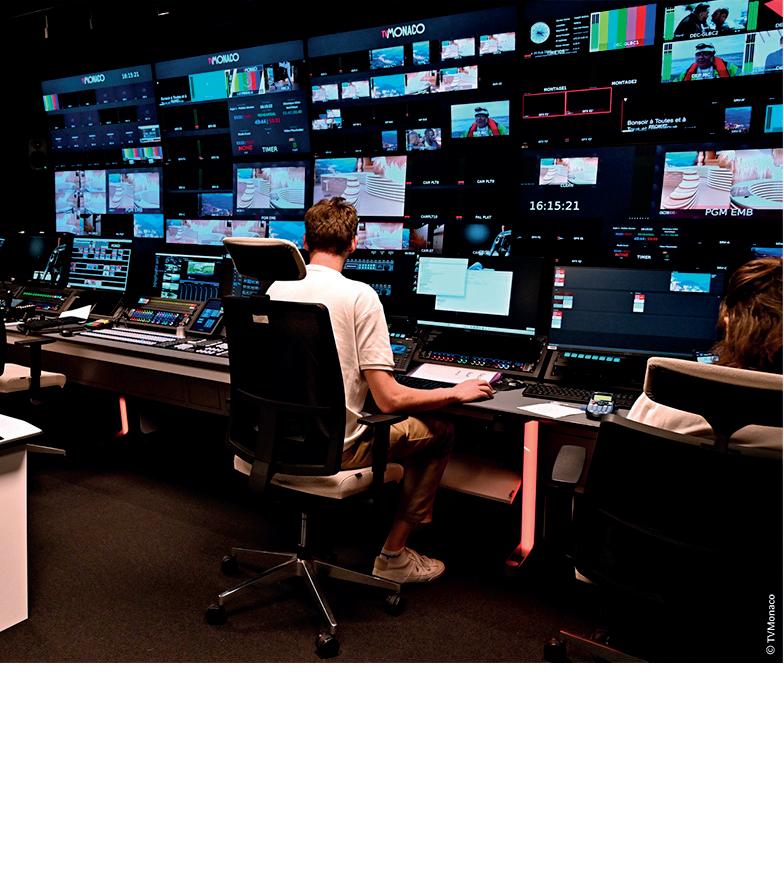

In the fast-growing landscape of global broadcasting, the tiny yet prestigious principality of Monaco has embarked on an ambitious journey to establish its voice on the international stage through the launch of its public broadcaster within the TV5Monde network.


Agile Content has rolled out its Agile CDN Director solution at Telenor Sweden, a major telecommunications operator in the country. This deployment marks a continuation of the longstanding partnership between the two companies, aimed at enhancing efficiencies and optimizing the utilization of Agile Content subsidiary Edgeware’s content delivery network.

As piracy continues to surge in the over-the-top (OTT) sector, the significance of technological solutions like Agile CDN Director is becoming increasingly apparent. Television piracy alone constituted nearly half (48%) of all access to infringing sites in the EU in 2022, according to a report from the European Union Intellectual Property Office (EUIPO).

Agile CDN Director builds upon Edgeware’s existing CDN architecture, introducing advanced features such as real-time cache, network switching, and integrated server quality of experience monitoring. This evolution enables Telenor Sweden to enhance the efficiency of its next-generation television services by seamlessly integrating its CDN with that of its sister company, Telenor Norway, facilitating resource utilization across networks and ensuring uninterrupted service even in the event of site loss.

Marielle Lind, Telenor Sweden’s technical architect for TV backend systems, said of the Agile CDN Director: “As responsible for Telenor Sweden’s CDN and ensuring our customers get the quality of experience they expect on their devices, I have a long list of requirements I want from my network, some of which we couldn’t meet. But with Agile CDN Director now up and running, suddenly a lot more is possible. So far, I haven’t found anything that’s impossible.”

Another key advantage of the solution, and one that Telenor Sweden has found surprisingly effective, is its ability to keep hackers out and transmit only to authorised users, the company stated. According to Telenor, Agile CDN Director also provides greater visibility into everything happening in the CDN at any given time, including the use of common access tokens that prove that customer requests are coming from Telenor’s own back-end and not from external agents, including hackers trying to access the network.

This additional layer of security protects a CDN like Telenor’s Edgeware network and ensures that only valid customers have access, which in turn frees up capacity and ultimately provides better network speeds for the TV viewing public, according to the information released. “Our records show that we have blocked between 50,000 and 60,000 invalid requests each day that were trying to access the CDN and reach a streaming server,” said Lind. “With Agile CDN Director, it’s easy to identify those invalid requests and deny them access. If we didn’t have it, the network would be much busier with traffic that shouldn’t be there.”
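The article does not describe how Agile CDN Director's common access tokens are built or checked, so the following is only a minimal sketch of the general idea: a back-end signs each request and the CDN rejects anything it cannot verify. The shared secret, the token layout, and the function names `issue_token` and `is_valid_request` are all assumptions made for illustration, not the product's actual API.

```python
import base64
import hashlib
import hmac

# Hypothetical shared secret, known only to the operator's back-end and the CDN.
SECRET = b"example-shared-secret"


def issue_token(path: str, expires_at: int, secret: bytes = SECRET) -> str:
    """Back-end side: sign the requested path together with an expiry time."""
    message = f"{path}|{expires_at}".encode()
    signature = hmac.new(secret, message, hashlib.sha256).digest()
    encoded = base64.urlsafe_b64encode(signature).decode()
    return f"{expires_at}.{encoded}"


def is_valid_request(path: str, token: str, now: int, secret: bytes = SECRET) -> bool:
    """CDN side: accept the request only if the token is unexpired and authentic."""
    try:
        expiry_text, _ = token.split(".", 1)
        expires_at = int(expiry_text)
    except ValueError:
        return False  # malformed token
    if now > expires_at:
        return False  # token has expired
    expected = issue_token(path, expires_at, secret)
    # Constant-time comparison avoids leaking how much of the signature matched.
    return hmac.compare_digest(expected.encode(), token.encode())
```

In this sketch, a request carrying a token that was issued for a different path, has expired, or was not signed with the shared secret is simply refused, which is the kind of invalid traffic the quoted figures refer to.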

Nevion has announced that the signal processing capabilities of its software-defined media node Virtuoso have been extended with a new audio interface and additional up/down/cross conversion (UDC) functionality. These capabilities increase the versatility of Virtuoso for live production applications, such as outside broadcast or centralized processing (“glue”) infrastructure for studio, production control room or master control room operations.

A key component of Sony’s Networked Live offering, Nevion Virtuoso has been widely deployed across the globe to transport, process, and monitor signals in real-time. The video and audio processing capabilities of Virtuoso in particular are being used daily by many broadcasters in their facilities to support their national and regional radio and TV productions.

Virtuoso already offers comprehensive audio capabilities, including bidirectional AES3, MADI and SMPTE ST 2110 / AES67 IP audio interfacing. Processing features include monitoring, routing, embedding, mixing, shuffling and per-channel control of polarity, gain, and delay. With the new RPRO interface aimed at remote production applications, Virtuoso can now also interface and transport mixed digital and analogue audio signals, along with GPIO and sync distribution.

For video processing, Virtuoso’s UDC capability offers a variety of high-quality format conversions for HD and UHD with native SMPTE ST 2110-20 uncompressed video input/output. The existing functionality includes de-interlacing, scaling, HDR/SDR conversion, legalization, frame synchronization and delay. The new additions to Virtuoso’s UDC capabilities include frame rate conversion and configurable 3D LUTs for color space conversion (with a subset of the BBC’s 3D LUTs pre-loaded).
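For readers unfamiliar with 3D LUTs, the sketch below shows the general technique: a cube of pre-computed output colors is sampled with trilinear interpolation for each input pixel. It is a generic illustration in plain Python and says nothing about how Virtuoso implements the feature; the helper names `build_lut` and `apply_lut`, the normalized 0 to 1 value range, and the example transforms are assumptions.

```python
def build_lut(size, transform):
    """Sample `transform` on a size x size x size grid of normalized RGB values."""
    step = 1.0 / (size - 1)
    return [[[transform(r * step, g * step, b * step)
              for b in range(size)]
             for g in range(size)]
            for r in range(size)]


def apply_lut(lut, rgb):
    """Map one normalized RGB triple through the LUT using trilinear interpolation."""
    size = len(lut)
    # Clamp to [0, 1] and convert to grid coordinates.
    coords = [min(max(c, 0.0), 1.0) * (size - 1) for c in rgb]
    # Lower corner of the enclosing grid cell; capped so that lower + 1 stays in range.
    lower = [min(int(c), size - 2) for c in coords]
    frac = [c - l for c, l in zip(coords, lower)]
    out = [0.0, 0.0, 0.0]
    # Blend the eight corners of the cell, weighted by distance to the input.
    for dr in (0, 1):
        for dg in (0, 1):
            for db in (0, 1):
                weight = ((frac[0] if dr else 1.0 - frac[0])
                          * (frac[1] if dg else 1.0 - frac[1])
                          * (frac[2] if db else 1.0 - frac[2]))
                node = lut[lower[0] + dr][lower[1] + dg][lower[2] + db]
                for i in range(3):
                    out[i] += weight * node[i]
    return tuple(out)


# Example: an identity LUT leaves colors unchanged (up to rounding).
identity = build_lut(17, lambda r, g, b: (r, g, b))
print(apply_lut(identity, (0.2, 0.5, 0.9)))
```

A real color space conversion would replace the identity transform with measured or designed output values at each grid node; the interpolation step stays the same.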

The latest Virtuoso media function release also includes a further enhancement to the JPEG-XS in TS (TR-07) capability announced at NAB 2023, adding the ability to handle IP-in/IP-out workflows.

Steve Hard, VP of Product Management, Virtuoso at Nevion, says: “Nevion Virtuoso is obviously well-known for its media transport functionality, but it also offers exceptional media processing capabilities. This makes Virtuoso an extremely versatile media node – something that our customers recognize and appreciate. These latest extensions to the media processing capabilities of Virtuoso cement its position as one of the highest performers for in-facilities and outside broadcast applications.”

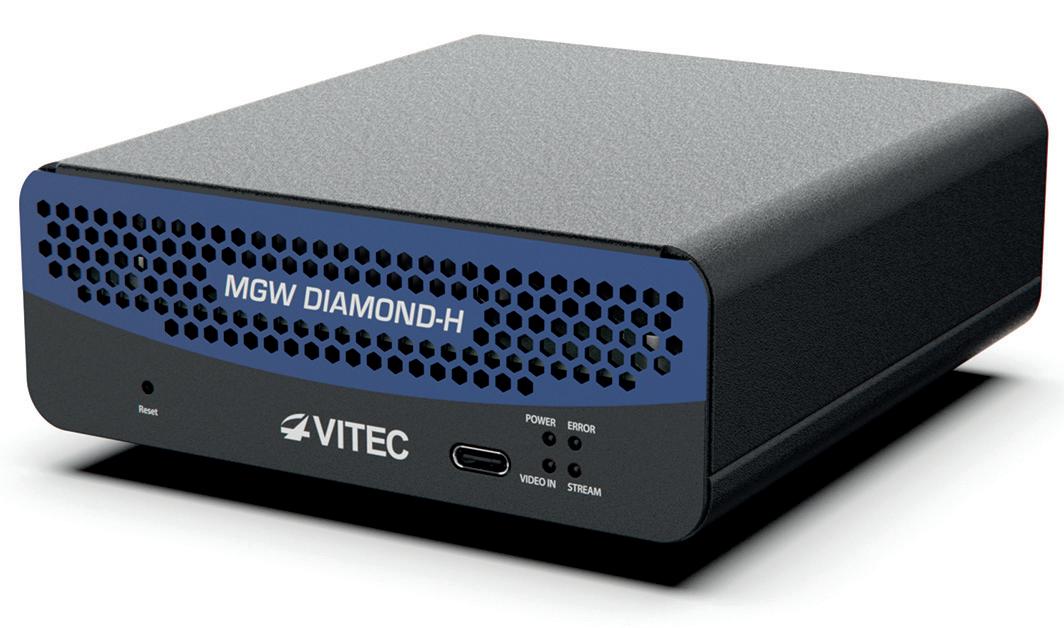

Vitec is launching the MGW Diamond-H 4K HDMI encoder to further enhance its broad portfolio of HEVC encode and decode products.

“Vitec is proud to introduce the MGW Diamond-H, a powerful compact 4K HDMI Encoder demonstrating our commitment to innovation and meeting the evolving needs of our customers,” says Richard Bernard, Head of Product Management, at Vitec. “With its impressive encoding capabilities, seamless integration features, and Power over Ethernet support, the MGW Diamond-H opens up new possibilities for IPTV distribution and video contribution across various industries.”

The MGW Diamond-H, a portable 4K HDMI encoder, is a significant addition to Vitec’s portable HEVC encoder product line, setting a new standard for quality, efficiency, and integration. With the ability to encode up to 4 channels from two HDMI inputs, the MGW Diamond-H empowers users to capture and stream content with unparalleled quality and minimal latency.

The unit is designed to facilitate integration into existing setups. Featuring HDMI loop through, the MGW Diamond-H ensures a smooth workflow and enhanced connectivity with existing video equipment. For optimal reliability and power efficiency, the MGW Diamond-H can be powered via Power over Ethernet, streamlining installations and reducing the need for additional power sources.

Timeline, a broadcast facilities company headquartered in the UK, has teamed up with Videosys Broadcast to enhance coverage of the British Basketball League championships, currently broadcast on Sky Sports, the League’s UK partner. By implementing Videosys camera control at each basketball venue, Timeline’s production staff can manage multiple cameras remotely from a central location in London.

The British Basketball League championships feature 10 clubs playing three to four games every weekend across various UK venues. To optimize resources, Timeline has devised a remote production solution, minimizing expenses on personnel and equipment.

The solution entails Timeline providing studio space at its Ealing Broadcast Centre in West London for the League’s production crew and presenters. Additionally, technical personnel and identical flyaway equipment kits are deployed at each venue. On match days, feeds from four Sony cameras at the venue are transmitted back to Ealing via ethernet connections, allowing the production crew to handle aspects like color balance, camera angles, and vision mixing from the gallery.

John Hayhurst, Senior RF Project Manager at Timeline TV, highlights the transition to data transmission via ethernet cables and IP addresses: “These days, we don’t move anything around in baseband video – it’s all just data sent via ethernet cables and IP addresses.” The production is centralized at Ealing, where camera control and tally information are managed, with the latter sent over an IP system to control radio cameras at the venue.

Timeline uses the Videosys camera control system, comprising an Indoor Unit (IDU) at Ealing, an Outdoor Unit (ODU) at the venue, and a compact Camera Receiver (RXSM-E) mounted on each camera. The system allows seamless control of camera settings regardless of location.

While Timeline currently unicasts camera control data between Ealing and each venue, its system has multicasting capabilities, enabled by identical equipment at all venues. Videosys’ CCU system is fully IP-based and supports TCP and UDP delivery, ensuring data transmission over wide area networks with guaranteed latency, according to the company.

The overarching aim of remote production is to streamline operations across multiple events on the same day. “This is what lies at the heart of the technical setup we have put into place for the League’s basketball coverage,” says John Hayhurst. “Years ago, covering multiple games would involve sending an entire crew to each venue, which was obviously expensive and labor-intensive.”

Snapdragon Stadium, located within SDSU Mission Valley, serves as a year-round sports and entertainment destination and a hub of community engagement. Home to SDSU Aztec Football, San Diego Wave FC of the National Women’s Soccer League, San Diego Legion of Major League Rugby and the newly announced MLS team, San Diego FC, the venue hosts a myriad of events, including concerts, festivals, motocross shows, international sporting events, championships, community events, private and corporate events, and more. Notably, Snapdragon Stadium has emerged as a favored venue for the US Women’s National Soccer Team. Since its opening, the facility has hosted multiple ‘friendlies’ and is currently a key venue for the CONCACAF Women’s Gold Cup. Clear-Com’s communication solutions contribute to the success of these events, enhancing the overall experience for athletes, performers, and fans alike.

Snapdragon Stadium’s cutting-edge communication infrastructure, featuring Clear-Com’s advanced equipment, plays a pivotal role in addressing diverse needs associated with its status as a multi-use facility for both sports and entertainment. The heart of Snapdragon Stadium’s communication infrastructure lies in the deployment of Clear-Com’s FreeSpeak II® digital wireless system combined with Encore® analog partyline elements, providing a robust and reliable communication platform that ensures seamless coordination during various events hosted at the 35,000-seat multipurpose venue.

Ceci Bacerra, Manager, AV & Broadcast at Snapdragon Stadium, expressed their satisfaction with Clear-Com’s solutions, stating, “Many sporting teams, shows, and concerts travel with Clear-Com equipment that can easily integrate into our house system. Their online portal makes it user-friendly and easy to configure for each event and the specific needs that may arise.”

In the control room, the deployment includes the Encore Main Station, a mix of 4-channel and 2-channel Remote Stations, and Encore Speaker Stations in U-Box. For remote beltpacks and interfacing needs, the venue relies on five Encore RS-701 Single Channel beltpacks and the Encore MT701 for interfacing with external systems. The FreeSpeak II digital wireless system, comprising the FreeSpeak II-BASE-II Base Station, Transceivers, Splitters, and beltpacks, offers unparalleled flexibility. The 1.9GHz product provides the necessary range and flexibility for beltpacks to seamlessly roam anywhere in the facility, covering sidelines, the bowl, control room, locker rooms, and dock areas. Clear-Com’s advanced technology ensures a robust and reliable communication network, underscoring the company’s commitment to technical excellence in sports and entertainment venues.

The system’s ability to seamlessly integrate into Snapdragon Stadium’s infrastructure and adapt to the diverse requirements of sporting events, concerts, and other entertainment productions showcases Clear-Com’s leadership in communication systems for sports and entertainment. The company’s reputation for delivering reliable and innovative solutions played a crucial role in the selection process, ensuring that the venue is equipped with the latest technology to meet the communication demands of its diverse events.

Bainet Group, a Spain-based production powerhouse with a rich history spanning over three decades in TV, cinema, advertising, and online content creation, has embraced LiveU to revolutionize its live content acquisition process. Deploying 20 portable units from LiveU’s IP-video EcoSystem, Bainet Group now delivers breaking news and related stories to broadcasters and audiences with unprecedented speed and dynamism.

Founded in 1992, Bainet Group has earned a sterling reputation for pushing technological boundaries to enhance service quality for its diverse clientele.

From regional broadcasters in Spain like RTVCE, EITB, and RTCM to national networks such as A3 and La Sexta, Bainet Group caters to a wide array of clients, including international production companies and corporate entities.

Sebastian Diaz, General Manager of Bainet Group in Madrid, remarked, “LiveU has been a game-changer for us. Previously, live content production posed significant challenges, especially in terms of agility and timeliness. With LiveU’s field units, we’ve significantly ramped up our live broadcasting, particularly in news coverage. We’ve transitioned from managing one live event per day to handling up to 20, equating to over 7,000 live events annually.”

Diaz underscores the reliability and robustness of LiveU’s technology, crucial for their news production services, whether for live broadcasts or content acquisition and transmission to broadcasters. “The biggest boon for us has been the mobility offered by LiveU’s backpack technology. We now have unfettered access to locations and live stories that were previously out of reach. Gone are the days of grappling with DSNG trucks, satellite connectivity woes, and logistical hassles. LiveU has eliminated these barriers, allowing us to position ourselves anywhere and transmit live signals from any location instantly.”

He also emphasizes the technology’s ability to capture stories even in extreme conditions, such as conflict zones and natural disasters, enabling Bainet Group to deliver compelling content to global audiences. “The signal stability, coupled with robust MCR monitoring, is exceptional. Combined with the cost-effectiveness compared to DSNGs and exceptional service from LiveU staff, I am thoroughly satisfied both on a technical level, ensuring seamless content delivery to broadcasters, and with the personalized technical assistance and support from the local team,” Diaz added.

Laura Llames, Country Manager South Europe, LiveU, commented, “Spain represents a burgeoning market for LiveU, with a growing number of production companies, broadcasters, and rights holders embracing the transformative potential of our technology. Bainet Group’s expansion of live content acquisition in news is testament to the impactful capabilities of the LiveU EcoSystem in content creation.”

Prime Vision Studio, based in Dubai and specializing in high-quality broadcast studio and mobile television content production services, has made a strategic investment in the latest generation Ikegami UHK-X700 4K studio/EFP cameras to enhance its production capabilities. With over two decades of experience in systems design and operation, Prime Vision Studio is well-equipped to cater to both studio-based and location events.

According to Mr. Mansoor Meghani, Managing Director of Prime Vision Studio, the decision to invest in Ikegami UHK-X700 cameras was driven by the increasing demand for 4K-UHD HDR capability, ensuring that productions remain relevant and commercially viable in the long term. The cameras were chosen for their versatility, high signal quality, and robust build, making them suitable for various applications, including studio-based projects, outside broadcasts, and mobile content creation, in Meghani’s words.

The support received from Ikegami Middle East further solidified the decision to opt for the UHK-X700 cameras. Prime Vision Studio has added eight UHK-X700 camera systems, including a high-frame-rate version, to its rental fleet. These cameras will be available for dry hire, crewed hire, or as part of a comprehensive production management service.

Abdul Ghani, General Manager of Ikegami Middle East, expressed confidence that Prime Vision Studio’s production team will find the UHK-X700 cameras to be robust and feature-rich solutions for a wide range of applications.

The Ikegami UHK-X700 is a recent addition to the Ikegami Unicam XE 4K series, designed for both studio and outdoor use. It features three 2/3-inch CMOS 4K sensors with a global shutter, providing freedom from rolling-shutter distortion and flash-banding artifacts, according to the company. The camera supports full HDR/SDR and offers a choice between BT.709 and BT.2020 color spaces. High frame-rate shooting options make it suitable for capturing fast motion in sports and stage events.

When combined with the Ikegami BSX-100 base station, the UHK-X700 allows for cable lengths of up to 3 km, supporting simultaneous output in HD SDR and UHD HDR video formats. Peripheral options for the UHK-X700 include the OCP-300 control panel, MCP-300 master control panel, and SE-U430 system expander. Ikegami also offers a choice of two types of viewfinders for this model: the VFL201D (2-inch LCD) and VFLP700AD (7-inch full HD LCD).

StreamGuys’ SGrecast technology is amplifying the global presence of NHL team the San Jose Sharks through its 24/7 audio streaming platform, Sharks Audio Network, while also facilitating advertisement insertion and monitoring services.

Founded in 1991, the San Jose Sharks, a prominent NHL team based at San Jose’s SAP Center in the San Francisco Bay Area, transitioned from a 20-year tenure on The South Bay’s 98.5 KFOX station to establish their own 24/7 audio network in 2001. StreamGuys’ SGrecast has been instrumental in managing podcast automation, rebroadcasting, and live streaming across various platforms.

The Sharks Audio Network serves as a strategic move to engage fans and deliver continuous audio content to San Jose Sharks enthusiasts worldwide.

Offering a blend of live regular season and Stanley Cup playoff matches alongside a diverse array of on-demand content, the network features interviews, player profiles, replays, pre-game coverage, highlight reels, lifestyle programming, and live news updates.

Dan Rusanowsky, a seasoned play-by-play announcer with a background in radio, spearheads the team’s audio production efforts. With over three decades of experience with the Sharks, Rusanowsky oversees all aspects of audio content, ensuring fans are kept abreast of the action from the renowned “Shark Tank”.

“I am primarily recognized as the play-by-play commentator for the San Jose Sharks, but I am also responsible for managing the Sharks’ audio network and coordinating all audio production tasks alongside our team,” remarks Rusanowsky. “Having been with the Sharks since its inception in 1991, setting up radio network coverage was part of my initial responsibilities. However, transitioning to a 24-hour format expanded our operations significantly.”

Partnering with StreamGuys since 2019, Rusanowsky leveraged the SGrecast SaaS platform to convert and redistribute live content, broaden the network’s reach, and capitalize on the Sharks’ online presence to engage their global fan base. While most listeners access the stream through the Sharks Plus SAP Center app, the San Jose Sharks website employs the SGrecast player to facilitate seamless connectivity.

“Although we maintain a terrestrial radio network in Northern California, we’ve shifted focus towards our 24-hour programming on the app,” explains Rusanowsky. “This approach enables us to reach audiences in previously untapped regions, and SGrecast simplifies content delivery to both NHL platforms and our terrestrial affiliates through Skyview Satellite.”

Rusanowsky emphasizes SGrecast’s role as a central repository for managing programming and automatically archiving content. The platform’s recording capability enables prompt access to game airchecks, facilitating swift editing, repackaging, and re-upload processes. With dedicated studio space at the SAP Center, the Sharks maintain full control over content, driving increased fan engagement and enhanced monetization opportunities, the company stated.

“Traditional radio excels in live programming, but we’re witnessing a shift, particularly among younger demographics,” observes Rusanowsky. “Operating across multiple channels allows us to leverage programming for both client acquisition and retention, with the Sharks Audio Network serving as a pivotal tool in maintaining fan engagement.”

Neil Carducci, Quality Assurance Tester at StreamGuys, highlights Rusanowsky’s innovative approach to content repurposing, maximizing SGrecast’s potential through dynamic workflows. By blending recordings, live broadcasts, scheduled programming, and podcasting, the Sharks have optimized content utilization.

Rusanowsky further underscores the utilization of StreamGuys’ ad insertion technology to integrate commercials into non-live programming. Analytics provided by SGreports offer valuable insights into audience demographics and listening patterns, empowering targeted marketing strategies.

“Expanding transmitter coverage digitally has immense value, particularly for organizations with niche audiences,” concludes Rusanowsky. “StreamGuys’ solutions offer cost-effective means to reach and engage audiences globally, making it an invaluable asset for any broadcasting endeavor.”

Evision, a player in the media and entertainment realm, is gearing up to introduce the Red Bull TV channel to Starz On, promising an exhilarating experience for thrill-seeking viewers.

In a groundbreaking move, Starz On becomes the inaugural streaming platform in the MENA region to feature the Red Bull TV channel.

Olivier Bramly, CEO of evision by e& life, expressed excitement about the collaboration, stating: “We’re delighted to partner with Red Bull, a brand renowned for its boundary-pushing ethos and ability to surpass expectations. Together, we’re poised to elevate entertainment to new heights for Starz On viewers across the region. This alliance signifies a pivotal moment for our MENA audience, as we bring them live events, compelling narratives, and pulse-pounding experiences.”

Red Bull TV isn’t just about adrenaline-fueled action sports; it offers a rich array of live events, shows, and documentaries spanning extreme sports, music, lifestyle, and culture.

Starting February 2024, Starz On users will have free access to the Red Bull TV FAST channel. As the first streaming platform in the region to host the channel, the service is making this content available through its ad-supported streaming platform.

Reliance Industries Limited (RIL), Viacom 18 Media Private Limited (Viacom18), and The Walt Disney Company (NYSE: DIS) have announced a significant partnership aimed at consolidating their digital streaming and television assets in India. The joint venture (JV) will integrate the operations of Viacom18 and Star India, with Reliance committing ₹11,500 crore to support its growth strategy.

Under the agreements, Viacom18’s media business will merge into Star India Private Limited (SIPL) through a court-approved arrangement. Reliance’s substantial investment at closing, approximately ₹11,500 crore or US$1.4 billion, underscores its commitment to the JV’s success. The total value of the JV stands at ₹70,352 crore or US$8.5 billion post-transaction, excluding synergies. Following completion, Reliance will control the JV, holding a 16.34% stake, while Viacom18 and Disney will own 46.82% and 36.84%, respectively.
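As a quick sanity check on the figures quoted above, the short calculation below confirms that the three stakes add up to 100% and compares Reliance's investment with the stated post-transaction value. Reading the 16.34% stake as corresponding to that investment is an assumption on our part, not something the announcement states.

```python
# Figures quoted in the announcement (rupee amounts in crore, stakes in percent).
reliance_investment = 11_500
jv_value = 70_352
stakes = {"Reliance": 16.34, "Viacom18": 46.82, "Disney": 36.84}

# The three direct stakes should account for the whole joint venture.
total_stake = round(sum(stakes.values()), 2)

# Reliance's investment expressed as a share of the post-transaction value.
investment_share = reliance_investment / jv_value * 100

print(f"Stakes sum to {total_stake}%")
print(f"Investment is about {investment_share:.2f}% of the JV value")
```

The investment works out to roughly 16.3% of the stated JV value, which is in line with Reliance's quoted direct stake.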

Disney may contribute additional media assets to the JV, pending regulatory and third-party approvals. Mrs. Nita M. Ambani will serve as Chairperson, with Mr. Uday Shankar appointed as Vice Chairperson, providing strategic direction.

The JV aims to become a prominent player in TV and digital streaming for entertainment and sports content in India, leveraging iconic brands such as Colors, StarPlus, StarGOLD, Star Sports, and Sports18, alongside JioCinema and Hotstar. With a combined audience of over 750 million in India and beyond, the JV intends to lead the digital transformation of India’s media and entertainment industry, with the aim of offering diverse and high-quality content accessible anytime, anywhere.

The partnership seeks to enhance the digital entertainment experience by combining Viacom18 and Star India’s expertise, technology, and content libraries. This includes Disney’s acclaimed films and shows, promising a rich and accessible entertainment offering for Indian audiences worldwide.

Furthermore, the JV will have exclusive rights to distribute Disney content in India, boasting a license to over 30,000 Disney assets. Mukesh D Ambani, Chairman & Managing Director of Reliance Industries, expressed enthusiasm about the agreement, emphasizing the joint venture’s potential to deliver unparalleled content at affordable prices.

Bob Iger, CEO of The Walt Disney Company, highlighted the immense opportunities in India, foreseeing long-term value creation through the joint venture. Uday Shankar, Cofounder of Bodhi Tree Systems, emphasized the commitment to delivering exceptional value to audiences, advertisers, and partners, while shaping the future of entertainment in India.

The transaction is subject to regulatory and shareholder approvals and is expected to be completed by the last quarter of Calendar Year 2024 or the first quarter of Calendar Year 2025.

SMPTE and the IMF User Group have announced a collaboration aimed at advancing the Interoperable Master Format (IMF) family of SMPTE Standards. The IMF User Group, established in 2016 under the Hollywood Professional Association (HPA), serves as a global forum for end users and implementers of IMF standards.

IMF, outlined in SMPTE ST 2067, streamlines the storage of audio-visual content necessary for creating various distribution versions across multiple territories and platforms. This format facilitates business-to-business content exchange among content owners, post facilities, and distribution platforms, playing a crucial role in modern content fulfillment. It has significantly contributed to transitioning from tape-based to file-based workflows in television and streaming, emerging as the preferred UHD media delivery format for numerous content providers.

The IMF UG’s mission is to promote IMF adoption by bringing together content owners, service providers, retailers, and equipment/software vendors. Through member meetings, workshops, plugfests, and best practice publications, the group fosters collaboration and knowledge sharing. Members actively contribute to shaping the long-term roadmap of IMF standards.

“We are honored to become the home for the IMF User Group and thankful to our colleagues at HPA for all they’ve done to administer the user group to this point,” said SMPTE Executive Director David Grindle. “Community organizations, like IMF UG, are vital to keeping our standards updated with the feedback from those using the systems.”

“IMF is a true example of how a standard was developed in one organization, deployed into the industry and then gathered a community of users via the HPA and now SMPTE,” said SMPTE President Renard T. Jenkins. “As a longtime member of the UG and SMPTE, I am excited that the user group and the standards community are coming together to continue its growth and further its development.”

According to statements from both organizations, the collaboration between SMPTE and the IMF User Group signifies a strategic partnership aimed at furthering the adoption and development of IMF standards, ultimately benefiting media professionals, technologists, engineers, and stakeholders across the industry.

In a landmark move, BBC Studios has announced its acquisition of full ownership of BritBox International by purchasing ITV’s 50% stake for £255 million. This strategic acquisition positions the BBC’s commercial arm to further expand and scale BritBox International’s reach.

BritBox International, established by BBC Studios and ITV in 2017, has rapidly become the premier streaming service for top-tier British television, particularly known for its compelling mystery dramas. The platform has seen growth, with subscriber numbers increasing by over 300% in the last four years, now boasting over 3.75 million subscribers and valued at approximately £500 million.

This acquisition not only signifies a pivotal moment for BBC Studios but also ensures the continued delivery of a broad spectrum of British content to BritBox International, thanks to extended licensing agreements with ITV.

Following BritBox International’s move into BBC Studios’ Global Media & Streaming division, its global CEO Reemah Sakaan is stepping down. Sakaan has been an important part of the company since the start, and for the past three years in the role of CEO she has overseen the venture’s accelerated growth and creative success. Tom Fussell, BBC Studios CEO, said: “I’d like to thank Reemah for her outstanding contribution to BritBox International, which under her stewardship has seen remarkable year-on-year growth. Her passion and dedication has helped create a great culture and build a business that is loved by audiences and that has real momentum.”

Carolyn McCall, ITV CEO, said: “I would like to thank the BritBox International team for making the company such a success and particularly CEO Reemah Sakaan for her leadership, drive and vision.”

BritBox International will be integrated into BBC Studios’ Global Media & Streaming division, enhancing its digital and direct-to-consumer service offerings. Rebecca Glashow, CEO of BBC Studios Global Media & Streaming, expressed enthusiasm about the acquisition, seeing it as a profitable venture with significant growth potential, backed by the full support of the BBC.

beIN Media Group has recently secured exclusive rights to broadcast the FIA Formula One World Championship™ in 25 countries, spanning the Middle East and North Africa (MENA) as well as Türkiye, for the next decade. The agreement, extending until the end of the 2033 season, marks a significant milestone in the realm of sports broadcasting for the region.

Under this 10-year deal, viewers across MENA and Türkiye will have access to live coverage of Practice, Qualifying, F1 Sprint, and Grand Prix races through beIN’s flagship platforms – beIN Sports and beIN’s OTT platform TOD. The coverage will include commentary in Arabic, Turkish, and English, along with exclusive analysis from prominent presenters and pundits. Additionally, viewers will have the opportunity to watch each race in 4K/UHD quality on beIN Sports and TOD. F2 and F3 races will also be available live and exclusively in Arabic, Turkish, and English.

Moreover, the agreement entails an exclusive content partnership tailored for the MENA region, aiming to cater to the diverse and passionate audience in the area. This collaboration will see the creation of region-specific content, with Doha serving as a dedicated production hub, leveraging beIN’s renowned production capabilities.

The 2024 Formula One season, set to feature a record 24-race calendar, will kick off and conclude in MENA. This season includes four major regional Grands Prix, commencing in Bahrain in February and culminating in Abu Dhabi in December.

Yousef Al-Obaidly, CEO of beIN Media Group, expressed enthusiasm about the return of Formula 1 to the beIN platform, highlighting the significance of the sport within their portfolio and their commitment to delivering captivating experiences to fans across the region.

Stefano Domenicali, President and CEO of Formula 1, emphasized the partnership’s role in enhancing the broadcast experience for fans at home, acknowledging the growing fanbase in the Middle East and Türkiye.

Ian Holmes, Director of Media Rights and Content Creation at Formula 1, echoed the sentiment, acknowledging the high demand for Formula 1 in the region and expressing confidence in beIN’s ability to elevate the broadcast programming.

This long-term partnership not only expands beIN’s global footprint as a sports broadcaster but also signifies a strategic move to enhance the multi-sport offering for viewers in MENA and Türkiye, according to the company’s statements.

In a strategic move aimed at bolstering its position in the professional digital cinema camera market, Nikon Corporation (Nikon) has announced its agreement to acquire RED.com, LLC (RED), a leading US cinema camera manufacturer. Under this agreement, Nikon will acquire 100% ownership of RED, making it a wholly-owned subsidiary, pending the fulfillment of certain closing conditions outlined in the Membership Interest Purchase Agreement with RED’s founder, Mr. James Jannard, and its current President, Mr. Jarred Land.

RED, established in 2005, has been a trailblazer in the realm of digital cinema cameras, introducing products such as the original RED ONE 4K and the cutting-edge V-RAPTOR [X] equipped with its proprietary RAW compression technology.

Recognized with an Academy Award, RED cameras have become the preferred choice for numerous Hollywood productions, acclaimed by directors and cinematographers worldwide for their innovative features and unparalleled image quality tailored for top-tier filmmaking and video production.

According to both companies, the acquisition stems from a shared vision of meeting customer demands and delivering great user experiences by combining the strengths of both entities. Nikon’s expertise in product development, reliability, image processing, optical technology, and user interface, combined with RED’s expertise in cinema cameras, including its distinctive image compression technology and color science, is expected to pave the way for unique products in the professional digital cinema camera market.

In the Japanese technology group’s own words, this acquisition marks Nikon’s strategic move to capitalize on the burgeoning professional digital cinema camera market, leveraging the solid business foundations and networks of both companies.

Dalet and Veritone have announced a collaboration to integrate the Dalet Flex media workflow ecosystem with Veritone’s AI-powered Digital Media Hub (DMH), featuring commerce and monetization capabilities. This partnership aims to streamline workflows from content creation to distribution, offering media, sports, and entertainment customers the means to monetize their digital media archives effectively.

“With content consumption being at an all-time high and media-rich organizations seeking new ways to bring in additional revenue streams, monetization of media archives and assets is key,” states Carl Farrell, CEO and Board Member of Dalet. “The combined power of Dalet and Veritone enables customers to overhaul their monetization initiatives, exposing and licensing their assets quickly, securely and with the highest level of control.”

Through the Dalet and Veritone referral partnership, media and entertainment companies can maximize the return on investment of their content assets and generate new revenue streams. The solution ensures secure and scalable content delivery to partners while allowing organizations to retain control over their content catalog.

“Veritone’s AI-enabled technology has long been the tool of choice for some of the world’s most recognized brands because of its ability to more efficiently and effectively organize, manage and monetize content,” said Sean King, SVP, GM at Veritone.

ROXi, a music streaming company, is set to debut a series of linear music video channels on FAST (free ad-supported streaming TV) services. Frequency, known for powering several renowned streaming television channels and connected TV platforms worldwide, has been chosen by ROXi to deliver these channels to FAST providers. LG has become the first to introduce ROXi’s music video channels to UK audiences, with 10 channels already accessible on LG Channels.

The array of ROXi music video channels, expertly delivered by Frequency to LG Channels’ FAST users on LG Smart TVs across the UK, includes “Hot Right Now,” showcasing the latest music videos from global icons like Ed Sheeran, Taylor Swift, and Calvin Harris, along with “Music Video Karaoke,” presenting official music videos of beloved karaoke tracks with scrolling lyrics. Additionally, viewers can enjoy “Greatest Music Videos of All Time,” featuring a curated selection of timeless classics from artists such as Beyoncé, Prince, and Eminem.

ROXi CEO Rob Lewis expressed his commitment to providing consumers with an unparalleled music experience on television, stating, “We believe consumers deserve the best made-for-TV music experience, which is why we’re making ROXi’s curated music video channels available to millions of FAST users for free.” Lewis emphasized the data-driven approach of ROXi, leveraging insights from years of ROXi TV app usage to craft highly optimized linear music video channels for FAST platforms.

Utilizing Frequency Studio 5.0, ROXi is swiftly deploying its music video channels across various platforms, including LG Channels. Frequency CEO Blair Harrison highlighted the synergy between ROXi’s curated content and the FAST landscape, emphasizing the need for efficient and cost-effective channel launches.

Harrison noted that Frequency Studio 5.0 offers ROXi an efficient suite of tools to ensure seamless deployment.

ROXi’s expansion into FAST channels complements its existing TV app business, which provides on-demand interactive music video streaming and curated channels. With the meteoric rise of FAST services globally, ROXi sees a significant opportunity to engage new audiences through curated music content.

In addition to its FAST channels, ROXi’s free TV app is already available on a wide range of Smart and Pay TV platforms, including Sky Q, Stream and Glass, LG, Samsung, Fire TV, Android TV, and Google TV. Besides, ROXi’s entry into FAST follows its recent collaboration with Sinclair Broadcast Group, announced at CES, which saw the launch of interactive music channels on the new digital standard for US TV, ATSC 3.0.

In an exclusive interview with TM Broadcast International Magazine, we delve into the creative mind behind the visual spectacles of one of Britain’s most beloved shows, Doctor Who. Will Cohen, the mastermind VFX producer responsible for bringing the Time Lord’s adventures to life, shares his experiences and insights from working on the series, especially during its landmark 60th anniversary specials. Cohen’s journey with Doctor Who is a fascinating blend of nostalgia, innovation, and creative camaraderie, highlighting the challenges and triumphs of modern visual effects storytelling.

This interview offers a rare glimpse into the intricate world of VFX production in television, through the lens of a seasoned professional who has helped shape the visual landscape of a series that has captivated audiences for decades. Cohen’s journey with Doctor Who is not just a testament to his personal achievements but also a signal fire for the future of visual storytelling in the ever-evolving world of television and cinema.

Can you share insights into your role as VFX producer for Doctor Who, particularly in the 60th anniversary special episodes?

Reflecting on my journey as the VFX producer for Doctor Who, particularly during the momentous 60th anniversary special episodes, evokes a tapestry of emotions and memories. My adventure with this beloved series commenced in the early 2000s, a period that now seems both a distant memory and as vivid as yesterday. The serendipity of life’s timing played a pivotal role in my return to this universe. As I was transitioning away from leading a visual effects company, a chance encounter with Phil, a producer and an old acquaintance, at the theatre post-pandemic, rekindled connections to my past work on Doctor Who. Our subsequent conversation in January 2022 was a watershed moment. I shared insights into how the visual effects industry had evolved dramatically, especially in the wake of the pandemic’s disruptions and the subsequent surge in content production. This era of transformation presented both challenges and opportunities, marking a distinct departure from the landscape Phil was accustomed to.

Motivated by a desire to contribute to the show’s enduring legacy, I transitioned into a consulting role to navigate the evolving VFX landscape and strategize for the show’s ambitious visual effects. This consultancy soon blossomed into a full-fledged role as the VFX producer, thanks to Phil’s proposition and the collaborative spirit of Joel Collins and the executive team.

Embarking on this project felt like a harmonious blend of nostalgia and innovation. The unveiling of the plan for the specials, coupled with the compelling scripts, filled me with anticipation and excitement. Reuniting with Russell (T Davies), David (Tennant), Catherine (Tate), Julie (Gardner), and Jane (Tranter) was not just a professional engagement but a heartfelt reunion of creative minds.

Fundamentally, my journey has come full circle, returning me to the origins of my adventure with Doctor Who. It says a lot about the enduring impact of the series and the ever-evolving art of visual effects storytelling. The opportunity to contribute to such a landmark occasion in the series’ history has been both a privilege and a thrilling challenge, embodying the spirit of innovation and creative camaraderie that defines the series.

How did Bad Wolf Productions approach the monumental task of celebrating Doctor Who’s 60th anniversary, considering its rich history?

Navigating the grand celebration of Doctor Who’s 60th anniversary was akin to orchestrating a symphony with numerous moving parts, each requiring meticulous attention and coordination. The landscape of 2022 presented a unique set of challenges, underscored by the bustling nature of the industry.

Reaching out to esteemed colleagues across the globe, I was met with the stark reality of the times: lengthy waiting periods and substantial financial commitments were the new norm, a testament to the industry’s unprecedented demand.

Amidst this bustling backdrop, our objective was crystal clear: to craft a series of specials that not only honoured the rich tapestry of Doctor Who’s history but also reignited the passion of its global fanbase.

Collaborating closely with Dan May, Joel (Collins), Phil (Sims) and the creative pillars of Russell, Julie, David, and Jane, we devised a strategic plan. Our approach championed the engagement of boutique-style visual effects companies, each possessing unique talents and an eagerness to contribute to the Doctor Who legacy.

The ethos behind our strategy was to elevate the series to a cinematic echelon, aspiring to meet the lofty standards of global entertainment giants. This ambition was not just about enhancing the visual spectacle; it was about rekindling the essence of Doctor Who in a contemporary context, ensuring it resonated with both longstanding fans and new audiences alike.

Our tactical approach involved diversifying our visual effects partnerships rather than consolidating our resources with a singular entity. This decision was driven by a desire to infuse the project with a sense of zeal and innovation reminiscent of our initial forays into the Doctor Who universe. We sought partners who would not only relish the global visibility but also potentially embark on new creative trajectories because of their involvement.

The logistical timeline was tight, with a mere three months to transition from conceptualization to execution, a period during which we tirelessly sought and engaged with companies and individuals whose visions aligned with ours. This process was deeply personal to me, prioritizing collaborations with professionals who shared a direct line of communication, ensuring that decisions were made swiftly and effectively without delays.

My role transcended the mere assembly of a plan; it was about stewarding this plan through the inevitable ebbs and flows of production, extinguishing unforeseen fires, and continuously adapting our strategy to the evolving narrative and technical demands of the series. This journey was not just about revisiting the past; it was about defining what Doctor Who means in the contemporary era and how it can continue to captivate and inspire. The excitement of projecting the series into the future, while rooted in its illustrious past, made this venture not only a professional commitment but a personal passion.

What was the vision behind the new opening sequence for Doctor Who on BBC iPlayer, showcasing every era of the series?

Delving into the creative process behind the new opening sequence for Doctor Who on BBC iPlayer, an endeavour that artfully encapsulates the series’ rich tapestry of eras, is intriguing. Although this aspect fell more squarely within the purview of Joel (Collins), one of the executive minds, and the branding and marketing team, their vision was nothing short of brilliant, drawing evident inspiration from the grand storytelling traditions of industry giants like Marvel and Disney.

I must admit, my involvement in this specific facet of the project was minimal, and I came across the final output somewhat later in its development. The selection process for the content, given the voluminous archives spanning decades of Doctor Who history, must have been a Herculean task. The final product, vibrant and resonant with the series’ legacy, struck me profoundly when I first laid eyes on it. It was a masterful blend of nostalgia and contemporary flair, designed to appeal to a broad spectrum of audiences, from die-hard fans familiar with every Doctor’s quirk and adversary to newcomers embarking on their first journey through time and space.

The sequence, with its rapid-fire montage of iconic moments, characters, and settings, was meticulously crafted to capture the essence of Doctor Who’s enduring appeal. Each frame, each transition was a nod to the series’ heritage while also serving as a beacon for its future direction. From my perspective, even as an observer in this instance, the opening sequence stood as a bold declaration of the series’ ongoing evolution, inviting viewers of all ages to partake in the timeless adventure that is Doctor Who. Witnessing this fresh yet respectful homage to the series’ history was both humbling and exhilarating, underscoring the boundless creativity and reverence for the Doctor Who legacy that continues to fuel its journey through time and space.

Russell T Davies aims to make all Doctor Who content available in one place. How did this impact your role in coordinating VFX for the expanded Whoniverse?

My focus as the VFX producer remained steadfastly on the monumental task at hand. The intricacies of coordinating visual effects for such an expansive narrative landscape are, by nature, immersive and demanding, often transcending broader strategic initiatives.

Our mission was clear-cut yet complex: to bring to life the myriad stories within the Whoniverse with the resources at our disposal. This endeavour often involved meticulous deliberation on every visual element we intended to create, balancing creative aspirations with budgetary constraints. The art of visual storytelling, particularly in a universe as rich and varied as Doctor Who’s, necessitates a thoughtful approach to each scene, each effect, considering its narrative impact and feasibility.

The evolution of our processes, especially with advancements in previsualization techniques, has undoubtedly enhanced our ability to craft epic, cinematic experiences that resonate with the series’ legacy. Yet, the essence of our work remains unchanged. The quest to achieve narrative depth and visual grandeur within the parameters set before us continues to be our guiding principle.

Navigating through thousands of visual effects shots across the specials and the series, coordinating with multiple vendors, and managing an array of meetings and communications consumed our daily operations. The initial phase of the specials was particularly pivotal, setting the tone and expectations for our collaborative efforts. Our reliance on the expertise and direct involvement of our early vendor partners was instrumental in laying the groundwork for the visual effects department.

While the broader discussions about unifying the Doctor Who content and expanding its universe buzzed around us, they served more as a backdrop to our immediate priorities. However, the prospect of contributing to a larger, more interconnected Whoniverse did imbue our work with an added layer of excitement and anticipation. The idea of Doctor Who evolving into an even more expansive franchise, with spinoffs and new narratives, while not directly impacting our day-to-day responsibilities, certainly fuelled our enthusiasm for the project. It underscored the significance of our work in shaping the future of this beloved series, reminding us of the vast, imaginative canvas we were helping to bring to life.

The introduction of the spin-off series “Tales of the TARDIS” is an exciting addition. How did VFX contribute to this new series, and what can viewers expect?

Embarking on the “Tales of the TARDIS” series was a remarkable journey that underscored the unique stature of Doctor Who, not just as a television series but as a cultural phenomenon akin to the likes of James Bond. This project, particularly in the wake of the 60th anniversary celebrations, brought to light the immense responsibility and honour of contributing to such an esteemed legacy. Doctor Who has always transcended the typical confines of a sci-fi show, capturing the imagination of audiences and the media alike, real proof of its significant place in British heritage.

The introduction of “Tales of the TARDIS” amidst this landmark celebration added a new layer of complexity and excitement to our work. The series, akin to a vibrant tapestry of the Whoniverse, brought forth a multitude of opportunities and challenges, from engaging with the colour restoration of classic episodes to navigating the intricacies of new narratives. These endeavours were not just tasks but a tribute to the storied history of Doctor Who, an opportunity to delve deeper into its rich mythology and bring forth new stories that resonate with both longtime fans and newcomers.

In terms of visual effects, our role in “Tales of the TARDIS” was nuanced and collaborative. Given the series’ scope and the budgetary considerations, our involvement centered around conceptual discussions on how to craft compelling opening and closing sequences that were both visually engaging and financially viable. This collaborative effort speaks volumes about the creative synergy that defines the production of Doctor Who, where every decision is a delicate balance between ambition and feasibility.

Moreover, the presence of iconic actors from the Doctor Who legacy wandering the corridors during production served as a constant reminder of the series’ storied past and the legacy we were contributing to. It was a surreal experience, one that bridged generations of storytelling and brought the history of the TARDIS to life in a new light.

While the primary visual effects responsibilities for “Tales of the TARDIS” were adeptly handled by the talented team at Painting Practice, our involvement in the discussions and planning stages was crucial. It underscored the collaborative spirit that is the hallmark of Doctor Who’s production, where every department brings its expertise to the fore to collectively elevate the narrative.

How do you divide the VFX post production work among the different departments or professionals?

The orchestration of VFX post-production work within the Doctor Who series is a symphony of collaboration, dialogue, and meticulous planning that begins right from the initial stages of script development and pre-production. My experiences working alongside talents like Phil Sims, our production designer, and Joel (Collins) have been instrumental in shaping the visual narrative of the series.

From the outset, we immerse ourselves in extensive discussions and visual explorations to map out the conceptual framework for key sequences, debating the nature of creatures and elements we aim to bring to life, be they prosthetic, digital, or a hybrid. These early deliberations are crucial, as the decision to construct elements practically demands significant lead time well before filming commences, encompassing real-time preparations and other logistical considerations.

As the directorial vision takes shape, our team engages closely with the experts at Painting Practice to delve into pre-visualization processes. This phase is not just about laying out scenes; it’s an expansive creative exercise where directors, alongside our VFX team, scout locations, refine the narrative, and envision the story’s visual representation. This collaborative journey ensures that every department, from scriptwriters to set designers, shares a unified vision, fostering a cohesive and harmonious production environment.

One of the unique aspects of our workflow is the seamless integration of Painting Practice within the art department, bridging the gap that often exists between production design and visual effects. This integration allows for a more holistic approach to visual storytelling, where ideas and feedback flow freely, ensuring that every visual element, from the grandest set piece to the smallest detail, contributes to the narrative’s overall impact.

This collaborative ethos extends to our interactions with the entire production team, where early discussions, shared visualizations, and collective brainstorming sessions set the foundation for a synchronized effort. By involving all heads of departments from the earliest stages, we not only align our creative visions but also streamline the logistical aspects of production, from budgeting to scheduling.

The division of VFX post-production work is less about segmenting tasks among departments and more about fostering a culture of open communication, shared responsibility, and creative partnership. This approach not only enhances the efficiency and coherence of our work but also ensures that the magical world of Doctor Who is brought to life with the utmost fidelity to our collective vision.

Can you elaborate on the collaboration with Painting Practice in designing VFX for the 60th-anniversary special episodes, including the unique challenges faced?

Collaborating with Painting Practice on the visual effects for the 60th-anniversary specials of Doctor Who was an extraordinary journey, enriched by the collective genius of incredibly talented individuals. Phil Sims, our esteemed production designer, has a longstanding rapport with Painting Practice, which set a strong foundation for our collaboration. The depth of experience in visual effects and design within their team was pivotal in bringing to life sequences that demanded grandeur and finesse.

One memorable instance was the meticulously pre-visualized sequence involving helicopters approaching UNIT tower in the third special. This sequence, reminiscent of high-octane cinematic experiences, truly elevated the visual storytelling, embodying the essence of Doctor Who in its most majestic form. The realization of such scenes required a harmonious blend of design, narrative integration, and visual effects artistry. Phil’s vision for UNIT tower and its surroundings, coupled with the adept supervision of Dan May and the creative courage of Painting Practice’s team, translated into visuals that resonated with cinematic quality, seamlessly blending with the narrative fabric of Doctor Who.

The key to achieving such impactful visuals lies in the early stages of planning. It’s not about retrofitting grandeur into existing footage; it’s about embedding scale and scope from the conceptual phase. This foresight in planning allows for a more cohesive visual narrative, where each element is purposefully designed to contribute to the overarching story.

Our approach to visual effects is deeply rooted in a comprehensive understanding of the filmmaking process. We constantly evaluate the feasibility of achieving certain effects in-camera versus in post-production, considering factors such as time, budget, practicality, and overall visual impact. This evaluation is a collaborative effort, involving discussions with directors, cinematographers, and producers to determine the most effective approach to bring our shared vision to life.

The collaboration with Painting Practice, Dan, Phil, and the various visual effects studios was emblematic of the creative synergy that drives this industry. It’s a testament to the magic that unfolds when experts from diverse fields unite, each contributing their unique perspective and expertise. This partnership was not just about working alongside one another; it was a deeply integrated process where ideas, challenges, and solutions were shared openly, fostering an environment of creativity and innovation.

In essence, the journey of designing VFX for the 60th-anniversary specials was a celebration of collaborative artistry, where the fusion of design, narrative, and technical expertise culminated in visuals that not only honoured the legacy of Doctor Who but also pushed the boundaries of what’s possible in visual storytelling. It was an endeavour that, through the challenges and triumphs, reminded us of the exhilarating possibilities when we come together to create something truly extraordinary.

In the special episodes, there are futuristic and sci-fi scenes. How did you use virtual production and CGI to bring these elements to life, especially in the episode “Wild Blue Yonder”?

The making of “Wild Blue Yonder” for Doctor Who’s special episodes presented an exhilarating blend of challenges and opportunities, particularly in the realms of virtual production and CGI. From the early conceptual discussions in March-April 2022 to the delivery in August-September 2023, the journey was a testament to the ingenuity and collaborative spirit that defines our work.

Russell’s vision for this episode, with its expansive 40-kilometer spaceship corridor, demanded a creative approach that balanced ambitious storytelling with practical execution. The options before us ranged from filming on massive locations to constructing extensive sets or leveraging green screens for chroma keying. Each potential solution came with its own set of complexities, particularly given the time constraints and budget considerations.

The pivotal decision to harness virtual effects to realize the corridor was born out of necessity and innovation. Working with Tom Kingsley, a director whose fresh perspective and openness to digital technologies were invaluable, we embarked on a meticulous pre-visualization process. This phase was crucial, as it involved storyboarding every moment to ensure clarity in narrative progression and visual coherence.

Our solution was a hybrid form of virtual production, where only the floor was physically built for the actors, with the rest of the environment rendered digitally. This approach required Tom to storyboard extensively, ensuring every scene was meticulously planned in relation to the virtual space.

Collaboration with Real Time, a company with extensive experience in gaming and virtual technologies, was key. They helped us transition the pre-visualization assets into shoot-ready digital environments using Unreal Engine, allowing us to maintain visual consistency and adapt in real-time to the dynamic needs of filming.

The use of Mo-Sys technology was another cornerstone of our strategy, enabling us to track camera movements and render backgrounds in near real-time, a process that significantly enhanced the actors’ ability to interact with their surroundings, despite the prevalence of green screens.

This venture was not just about employing new technologies; it was about reimagining the workflow of visual effects to suit the narrative and technical demands of “Wild Blue Yonder.” The editing process, led by the talented Tim (Hodges), was streamlined thanks to the pre-rendered backgrounds, allowing for a more intuitive and efficient post-production phase.

The economic efficiency of rendering 4K backgrounds for significant portions of the episode underscored the value of this hybrid approach. Yet, traditional VFX techniques were still vital, especially for sequences involving spaceships and the dramatic implosion of the corridor, showcasing the diverse skill set required to bring such a complex episode to life.

It’s clear that innovation, while inherently risky, is crucial for pushing the boundaries of what’s possible in television production. “Wild Blue Yonder” not only honoured the legacy of Doctor Who by embracing new techniques but also set a precedent for future explorations in virtual production, highlighting the ever-evolving nature of storytelling in the digital age. The experience was a profound reminder of the joys and challenges ingrained in Doctor Who, where no two projects are ever the same, and the potential for creativity is boundless.

We were going to ask about the corridor and spaceship environment and how you created it using virtual production and new technologies, but you have already given us an idea.

Absolutely, the evolution of these technologies, particularly the unique hybrid workflow we’ve adopted for Doctor Who, is something I eagerly anticipate. This approach notably alleviates some of the conventional pressures of production, specifically the need to retroactively address issues that arise with virtual production environments.

Traditionally, production teams are accustomed to a certain flow - scouting and selecting locations, finalizing cast, constructing sets, and making real-time adjustments to props and set designs. This tangible, hands-on methodology is deeply ingrained in the production psyche, offering a level of flexibility and immediacy that’s challenging to replicate in a purely digital environment.

The shift towards virtual production, particularly with LED screens and comprehensive previsualization, demands a significant paradigm shift. Every element, from the backdrop to the minutiae of a scene, must be meticulously planned and integrated into the virtual environment well before the actual shoot. This level of pre-production detail can be daunting, as it seemingly locks in creative decisions far earlier than traditional methods, potentially constraining the spontaneity and fluidity that come with on-the-spot direction and production design adjustments.

However, the trade-offs come with their own set of advantages, such as the ability to create and manipulate vast and complex environments that would be impractical, if not impossible, to construct physically. The challenge lies in adapting to this new rhythm, embracing the opportunities it presents while navigating the constraints.

As the industry begins to acclimate to these virtual production tools, it’s fascinating to witness the growing understanding and appreciation of their potential. The learning curve is steep, but the creative possibilities are expansive, promising a future where the lines between physical and digital production blur, offering unprecedented storytelling capabilities. This transition period is a crucible of innovation, and I’m eager to see how these methodologies evolve, enhancing our ability to bring the fantastical worlds of Doctor Who to life with even greater authenticity and immersion.

Which technology has boosted VFX the most in recent years? If you had to choose one among all the technological advances so far, which would it be?

Navigating through the myriad of technological advancements that have revolutionized the VFX landscape is no small feat. The continuous improvements across the board are staggering, but if I were to highlight one transformative development, it would be the advent of Universal Scene Description (USD) pipelines. This innovation has fundamentally altered the collaborative dynamics within visual effects studios, allowing for a more integrated and cohesive approach to scene creation. This departure from the sequential, compartmentalized workflows of the past has been nothing short of revolutionary, enabling teams to work concurrently on complex scenes, thereby enhancing both efficiency and output quality.

However, the emergence of Unreal Engine as a tool in the VFX arsenal marks a significant milestone in our industry. Beyond the initial buzz surrounding LED walls, Unreal Engine offers a level of immediacy and flexibility that was previously unattainable. This tool embodies the convergence of real-time rendering capabilities and high-quality visual effects, a combination that has long been the holy grail for VFX artists, particularly those of us drawing inspiration from the gaming industry.

The pandemic underscored the potential of virtual production, with promises of remote shooting locations and reduced logistical hurdles. While these benefits are tangible, the reality is nuanced, encompassing both advantages and limitations, from cost implications to aesthetic considerations. Yet, the true value of Unreal Engine lies in its capacity to dramatically accelerate the visualization process. The ability to rapidly prototype and iterate on environments, down to the details like foliage density or water placement, without leaving the digital domain, is a game-changer. This efficiency not only saves time but also opens up new realms of creative exploration, enabling us to refine our visions with unprecedented speed and flexibility.

Looking ahead, the integration of artificial intelligence into the VFX workflow looms on the horizon, promising further innovations in how we conceive and execute visual effects. The potential for automating certain tasks within the VFX pipeline could empower smaller teams to accomplish more, amplifying both creativity and productivity.

Tools like Unreal Engine are not just about doing more with less; they’re about expanding the boundaries of what’s possible in visual storytelling. They offer us the ability to bring our wildest imaginations to life with a speed and fidelity that was previously unimaginable, marking an exciting era for creators and audiences alike.

The regeneration of the Doctor is always a crucial moment. How did you approach the VFX for the iconic regeneration sequence into the Fifteenth Doctor?

Approaching the regeneration sequence into the Fifteenth Doctor was a venture that required a blend of meticulous planning, creative innovation, and technical prowess. Russell T. Davies, with his distinctively visual storytelling style, crafts narratives that are rich in detail and vivid in imagination. His scripts serve not just as textual narratives but as blueprints brimming with visual cues, guiding the visual effects team towards his envisioned spectacle.

For the regeneration sequence, while Russell’s script laid the groundwork, it left room for visual interpretation and innovation, particularly concerning the design of the VFX. This iconic moment in Doctor Who’s lore necessitated a blend of creativity and technical ingenuity to bring to life. The preparation involved a thorough previsualization of the action, but the specific design of the regeneration effect itself was something we decided to evolve post-filming, providing us with the flexibility to refine and adapt the visuals.

The execution of this sequence was a testament to the collaborative spirit of our team. Utilizing a techno crane for a motion control pass, we were able to craft a seamless transition between the Doctors, a process that was both ambitious and fraught with logistical challenges. Concerns about the feasibility of this approach, given the weight restrictions and the complexity of the setup on the back lot, nearly led us to reconsider. I must confess, there was a moment when I contemplated a more conventional approach, questioning the necessity of such an elaborate setup for a moment already imbued with inherent significance.

However, the determination and ingenuity of our team, particularly Sean Varney, our shoot VFX Supervisor, and Richard Widgery, a motion control expert, were instrumental in overcoming these hurdles. Sean’s practical problem-solving on set, combined with Richard’s custom software for technical visualization, allowed us to transform Dan’s dynamic previsualized shot into a feasible reality. Their perseverance and technical acumen were pivotal in realizing this sequence, ensuring that the ambitious vision could be actualized without compromise.

The post-production phase was equally critical. Seb (Sebastian Barker), our VFX supervisor from Automatik, played a crucial role in defining the final aesthetic of the regeneration effect. His initial designs and subsequent refinements encapsulated the essence of the moment, blending seamlessly with the narrative and visual fabric of the series. This period of post-production, enriched by the luxury of time, allowed for a thoughtful consideration of the show’s evolving tone and visual language, ensuring that the regeneration sequence was not only a spectacle but a coherent part of the series’ broader aesthetic.

I would stress that while technology serves as an enabler, amplifying our creative capabilities, the heart of our industry lies in the people. The collaborative synergy between writers, directors, VFX artists, and technical experts underscores the human element at the core of storytelling. Just as the essence of editing remains centered around the editor’s vision, irrespective of the tools at their disposal, the magic of visual effects is a product of human creativity and ingenuity. In the end, the realization of such iconic moments in Doctor Who is a celebration of collective creativity, a harmonious blend of vision, talent, and technology.

Sound is very important in the film industry. How do you see the intersection of audio and visual elements in creating a seamless viewer experience, especially in a series like Doctor Who?

In the realm of film and television production, particularly in a series as immersive as Doctor Who, the symbiosis between sound and visual elements is paramount. Recently, I navigated through a complex segment of work, the intricacies of which I’m eager to share in the coming months. This experience reinforced a fundamental truth: sound constitutes more than half of the storytelling impact. It’s the unsung hero that, when misaligned, can unravel even the most visually stunning sequences.

The visual effects domain often bears the brunt of misconceptions, with CGI sometimes unfairly maligned. Yet, it’s crucial to recognize that many challenges perceived as visual can, in fact, find their resolution within the auditory landscape. There was a particular instance where no visual adjustment could rectify an issue; the solution lay entirely in the auditory realm. This underscored a vital lesson: the potency of sound in shaping narrative perception and emotional engagement is unparalleled.

Consider cinema’s most iconic moments; their resonance is as much about the auditory experience as the visual. The synergy of music and sound design plays a crucial role in enveloping the audience, transporting them into the narrative’s heart. A prime example is the film “The Insider,” where Russell Crowe’s character’s escalating paranoia is masterfully amplified through sound design, a testament to audio’s capacity to manipulate emotion and tension.

In the context of virtual production, the principles of sound design and integration remain unchanged. The process of capturing performances on stage may be consistent, but the post-production phase is where sound truly sculpts the viewer’s experience. Collaborating with our executive team has highlighted the exhilaration that accompanies the final mix, a pivotal juncture where the visual and auditory elements coalesce to realize the narrative’s full potential.

The grading process, too, plays a critical role in this alchemy. Our colourist, an integral member of the visual effects team, leverages advanced software capabilities to elevate the visual narrative, marrying it seamlessly with the sound design to enhance the story’s emotional and thematic depth.

This confluence of sound and visuals in the final stages of production is a moment of culmination, where months of iterative edits and versions transform into the cohesive and polished entity that reaches the audience. It’s a period marked by anticipation and excitement, as we witness the disparate elements of our creative endeavour meld into a singular, compelling narrative. In Doctor Who, where the canvas of storytelling spans the cosmos and traverses time, this harmony between sound and visuals is not just desirable — it’s essential to capturing the imagination of viewers and faithfully conveying the grandeur and nuance of the Whoniverse.

Finally, we were wondering about your future projects as VFX producer and Bad Wolf’s future developments. Is there any interesting project that you can share with us?

Currently, my focus is entirely dedicated to Doctor Who, with my tenure on this iconic series drawing to a close in the upcoming months. It’s been an immersive journey, one that has deeply engaged me, leaving little room to venture into other projects at this juncture. However, I’m keenly aware of the vibrant developments within Bad Wolf, buoyed by the insights from our executive team who are involved in a slew of other ventures.

Bad Wolf is at the forefront of embracing cutting-edge technology, with a steadfast commitment to producing content that stands out for its quality and innovation. The pipeline is brimming with exciting projects, both within and beyond the Doctor Who universe, some of which you’ve hinted at in your inquiry. The future looks promising for Bad Wolf, poised to elevate their repertoire with even more compelling narratives and groundbreaking visual storytelling. So, I encourage everyone to keep an eye on their upcoming productions; there’s much to anticipate and celebrate in what lies ahead.