As we write these lines, less than one week remains before one of the most important events in the world of broadcasting, the NAB Show, begins. Once again, TM Broadcast International will attend the Las Vegas Convention Center so as not to miss any of the new products and technological advances that more than 1,000 exhibitors will showcase at this edition.

This year, the fair is celebrating no less than its 100th anniversary, with many events and activities to mark it. Although attendance is still far from pre-pandemic figures, the organizers expect it to increase sharply this year. In 2022, 52,000 people visited the fair, including technology manufacturers, broadcasting companies, filmmakers, producers, journalists and many other industry professionals. This is still a far cry from the 100,000 attendees in 2019, but in 2023 we will undoubtedly see more visitors than last year: although remote working has spread considerably throughout the world, face-to-face contact remains essential when doing business.

Technology fairs are an integral part of development and innovation in any field. They represent a unique opportunity for companies to introduce their latest products and services to a global audience and for attendees to discover new trends and emerging technologies in their field of work. Technology manufacturers can present their latest inventions and receive direct feedback from end users, which in turn can help improve and refine their products.

In addition, these events are a crucial source of information and education for industry professionals. Visitors can attend conference sessions and workshops on relevant industry topics and learn from experts and leaders in their respective fields. So let’s pack! The NAB Show is waiting for us.

There are only a few days left before the NAB Show, one of the most important fairs in the broadcast sector, begins. Although most of its visitors and exhibitors are American, many Europeans also attend, as do visitors from other continents. Once again, the TM Broadcast International team will be there to examine all the new products firsthand. In the next issue, an extensive report will cover everything that we find interesting for the development of our market. In the meantime, this article compiles what, in our opinion, will be most relevant to see at the Las Vegas Convention Center.

Alessandro Lonati, Director of Infront Productions, and the project management team of Thomas Borghi, Alessandro Zampini and Andrea Mariani explain how this competition is produced and the most important challenges they have had to overcome.

Edge computing technology is becoming an essential tool for streaming live and real-time events. In this article, we will delve into the details of edge computing, its application in live audiovisual broadcasts and the benefits it brings to viewers and broadcast service providers.

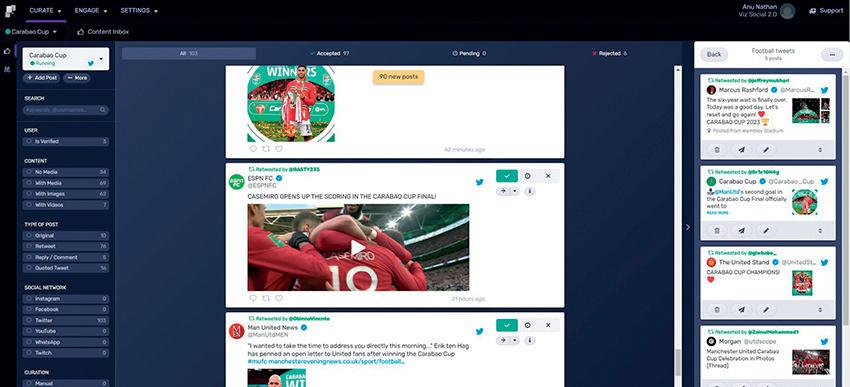

Vizrt has announced the release of the new version of Viz Social, its cloud-based platform for remote and in-studio production of live graphics and interactive content.

Completely cloud-native and browser-based, it features a Flowics-based interface to provide a more intuitive and improved experience for users. This version of Viz Social increases speed and simplicity, and offers users an advanced suite of integrations with social media and messaging platforms, alongside add-ons such as Twitch and Facebook Live extensions. Users of Viz Social can also incorporate custom data from RSS and JSON feeds.

Unique interaction mechanics such as ‘Flock-to-Unlock’ enable producers to generate interest around exclusive content, which is ‘unlocked’ by reaching a target. Audience scoring and a real-time on-air progress bar further drive audience engagement. These interactive activities offer a distinctive viewing experience and create giveaway opportunities for content creators.

Other advanced capabilities of Viz Social include easy integration with Vizrt graphics workflows (Viz Trio, Viz Pilot Edge), support for a wide range of broadcast graphics engines, hashtag-based polls across multiple social media platforms, audience engagement mechanics, social media counters and streaming extensions.

“Many conversations we have had with broadcast customers recently have reinforced the need for clever solutions regarding visualization and viewer participation tools. The acquisition of Flowics by Vizrt last year expanded our potential to offer a faster, more efficient, and optimized platform for the curation, management and visualization of social media posts,” says Tehseen Akhtar, Deputy Global Head of Product Management Vizrt Group.

Disguise has announced the launch of EX 3, a 4K video playback solution designed specifically for location-based experiences and fixed installations.

It has been designed for minimal latency and lets creatives use the same disguise tools that power the immersive Illuminarium experience. The platform includes disguise’s Designer software, newly-launched APIs and the disguise Cloud collaboration toolkit.

It has three 4K video outputs suitable for both projection and LED displays. Depending on the needs of the show, additional EX 3 machines can be added and synced together to scale out the video playout. EX 3 units can even be used in a session with other disguise media servers for more complex experiences. Users can work at scale and maintain the ability to make changes to their content on the fly, from Designer, without changing the system infrastructure.

“Creative studios increasingly have to deliver a wider variety of projects,” says Raed Al Tikriti, Chief Product and Technology Officer at disguise. “With EX 3, we’ve made doing this easier and more accessible than ever. Even if you only need video playback for an hour, you’ll be able to design, edit and deliver scalable installations, all without leaving the disguise platform.”

“Displaying high-resolution video on a building, LED stage or event space can make a massive difference to how immersive your final experience is,” says Tikriti. “With EX 3 you can do this while being flexible for whatever tomorrow might bring. You can build your video sequence and make changes on the fly in Designer, share 3D previews of your stage in the cloud with our Previz app and even build custom show control with our APIs. Then, you’ll be able to trust that EX 3 will map it all out onto any canvas, at pixel-perfect quality.”

Atomos has added NDI support to its CONNECT Series. For anyone with a Ninja V or V+ fitted with an Atomos CONNECT, or a Shogun CONNECT, Atomos is enabling mobile (Wi-Fi) or Ethernet NDI connectivity for a huge range of cameras, with built-in live monitoring and recording.

The new NDI HX2 firmware update supports NDI HX2 transmission up to 1080p60 with Shogun CONNECT and Ninja V+ with ATOMOS CONNECT (1080p30 for Ninja V with ATOMOS CONNECT), while simultaneously recording in Apple ProRes or Avid DNx.

A camera and microphone can be connected by HDMI or SDI to the Atomos CONNECT series monitor-recorder, which is then connected to a local area network using either Wi-Fi or an Ethernet cable. Once NDI transmission is enabled, the video stream is available to any suitable device on the local area network subnet. The NDI stream can then be viewed, recorded, or used as a source for OBS or a NewTek TriCaster, and then retransmitted as part of a larger production.

“Typically, NDI is associated with fixed acquisition points, for example PTZ cameras,” said Trevor Elbourne, CEO of Atomos. “Now we’re making it possible to use pretty much any HDMI or SDI-equipped camera as a mobile NDI source. It’s the perfect solution for creatives who need their content delivered live to a remote device, for review, recording or transmission.”

“When innovative companies like Atomos adopt NDI connectivity into yet another very portable product line, it results in expanded possibilities for video creators,” commented Roberto Musso, Technical Product Marketing Director at NDI. “This is just another example of how flexible our technology and formats are. We are consistently developing updated and more efficient formats, like HX3, but it’s our growing community of adopters that integrates them into amazing products and experiences.”

Brainstorm has announced its participation in the European Project 6G Bricks funded by the European Commission under the Horizon Europe Programme and coordinated by Christos Verikoukis from Industrial Systems Institute / ATHENA – Research and Innovation Centre.

The R&D project focuses on developing an interactive video-conference system inside virtual spaces to test and validate the capabilities of the 6G network. Javier Montesa, Technical Coordinator at Brainstorm, says that the main objective is to verify and determine how the capabilities of the 6G network will support the deployment of this kind of technology, particularly as depth sensor quality improves and buffers with significantly greater resolution become available. To accomplish this goal, the development team will rescale depth buffers up to the largest size permitted by the 6G network and the coding methods.

The outcome will be an interactive video conferencing system that operates within virtual environments and is built to work with current and upcoming sensor technologies.

“Due to the benefits that the 6G network offers, the project focuses on testing several methods for recording volumetric videos and developing an interactive videoconference system inside of virtual spaces. The development team will employ depth sensors to capture users along with their volume. The resulting footage will be live streamed over the Internet,” says Francisco Ibáñez, R&D Project Manager at Brainstorm.

AMP VISUAL TV, the established French live production company, has selected Sony equipment, including the new MLS-X1 modular system, launched at IBC 2022, as the core switcher for its next generation premium fleet vehicles, the Millenium Signature 14 and Millenium Signature 19.

Looking forward to the major sporting tournaments of 2023 and 2024, AMP VISUAL TV has been investing in, upgrading, or commissioning new premium Outside Broadcast trucks to meet the requirements of upcoming high-end live productions. The MLS-X1 is part of a wider Sony investment, including 50 HDC-3500 cameras and 9 PVM-X1800 monitors to equip the new trucks.

The new Modular Live System MLS-X1, one of the key building blocks of the Networked Live ecosystem, is a highly modular and scalable live production switcher platform. Designed to suit flexible deployment models, on-premises or private cloud, onsite or remote, the IP-native MLS-X1 enables integrated operational control of processor nodes at multiple locations from a single user interface. It builds on the established reliability of Sony switchers for mission-critical live environments.

The HDC-3500 system camera, with its 2/3-inch 4K CMOS sensor, captures pristine images with 4K high resolution, exceptionally low noise, impressive sensitivity (F10 at 1080/59.94p or F11 at 1080/50p) and high dynamic range, while achieving the wide colour space of the ITU-R BT.2020 broadcast standard. Thanks to its versatility and image quality, it is an established camera for any live production and fits into the Networked Live ecosystem.

Gravity Media Australia has confirmed a new technology and production partnership to deliver the complete broadcast of this year’s Powercor Stawell Gift.

Stawell Gift Event Management, the Stawell Athletic Club and 360 Sport + Entertainment have signed Gravity Media Australia to a new multi-year agreement that will see Gravity Media create and produce the complete all-screens Easter Monday coverage of the iconic Powercor Stawell Gift, first run in 1878 and today Australia’s oldest and richest short-distance running race.

This will be Gravity Media’s seventh year at the Powercor Stawell Gift, which will be broadcast on the Seven Network on 10 April.

In addition to delivering all broadcast technologies as “host” broadcast company, Gravity Media has also been appointed by Stawell Gift Event Management as the television production company for the event, creating, producing and delivering the complete television production of the Powercor Stawell Gift.

Gravity Media Australia will access internationally acknowledged camera, broadcast and production technologies designed and developed by Gravity Media.

The production undertaking for this year’s Powercor Stawell Gift in the Grampian Mountains district of western Victoria includes 11 cameras and state-of-the-art high-definition outside broadcast and production trucks.


Appear has recently announced the launch of its hardware accelerated video transport technology over the SRT (Secure Reliable Transport) protocol. The technology will officially be presented, and integrated into its X Platform, at booth #W2512.

Actus Digital will unveil its latest solutions for TV, radio and MVPDs (multichannel video programming distributors). The expanded offerings in Actus v.9.0 address the needs of traditional broadcasters and MVPDs, and the new OTT StreamWatch extends these benefits to those creating and distributing OTT FAST/IPTV streaming channels.

Available via SaaS and on-premises solutions, OTT StreamWatch provides 24x7 OTT stream monitoring and recording of native HLS streams. It makes it easy for engineers to monitor and maintain the quality of linear FAST/IPTV channels throughout the workflow, from encoding through delivery.

OTT StreamWatch handles a range of resolutions and speeds returning from various CDNs across unmanaged networks.
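To make "a range of resolutions and speeds" more concrete: in HLS, each rendition of a channel is declared as a variant in a master playlist, with its bandwidth and resolution. The following is a minimal sketch of how such a playlist can be read, not a description of how Actus implements StreamWatch. The sample playlist, its URIs and the function name `list_variants` are hypothetical; only the tag and attribute names come from the HLS specification.

```python
import re

# Hypothetical master playlist; tag names follow the HLS specification (RFC 8216).
SAMPLE_MASTER = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=800000,RESOLUTION=640x360,CODECS="avc1.4d401e,mp4a.40.2"
low/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=2500000,RESOLUTION=1280x720,CODECS="avc1.4d401f,mp4a.40.2"
mid/index.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=6000000,RESOLUTION=1920x1080,CODECS="avc1.640028,mp4a.40.2"
high/index.m3u8
"""

# Attribute values are either quoted strings (which may contain commas) or bare tokens.
ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


def list_variants(master_text):
    """Return one dict per variant stream declared in an HLS master playlist."""
    variants = []
    pending = None  # attributes of the most recent #EXT-X-STREAM-INF tag
    for line in master_text.splitlines():
        line = line.strip()
        if line.startswith("#EXT-X-STREAM-INF:"):
            attr_text = line.split(":", 1)[1]
            pending = {k: v.strip('"') for k, v in ATTR_RE.findall(attr_text)}
        elif line and not line.startswith("#") and pending is not None:
            # The first URI line after the tag is that variant's media playlist.
            variants.append({
                "uri": line,
                "bandwidth_bps": int(pending["BANDWIDTH"]),
                "resolution": pending.get("RESOLUTION"),
            })
            pending = None
    return variants


variants = list_variants(SAMPLE_MASTER)
for v in variants:
    mbit = v["bandwidth_bps"] / 1e6
    print(f'{str(v["resolution"]):>9}  {mbit:.1f} Mbit/s  {v["uri"]}')
```

A real monitoring system would go much further, for example by fetching each media playlist and checking segments as they return from the CDN; this sketch only lists what the playlist declares.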

The Actus platform provides a single interface that is intuitive and searchable for content based on channel/date/time, issues, closed captions, advertiser, program names and more. Taking advantage of the content and metadata captured within the Actus platform, optional AI-based workflows make it easy to create and edit clips together, manually or using automation for effortless creation and monetization of VoD content and publishing to social media to optimize viewer engagement.

Appear’s X Platform is used for live contribution, remote production and distribution. It offers the ability to blend encapsulation formats such as MPEG TS, ST 2022-6, ST 2110, Zixi and now high-capacity SRT, with several codecs and video processing functions. In a single 1RU chassis, the X Platform as an SRT Gateway can handle more than 192 SRT connections and 18 Gbit/s throughput.
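As a rough sense of scale, the two figures quoted for Appear’s X Platform can be combined into an average bitrate per connection. The sketch below assumes, purely for illustration, that both maxima apply at the same time, that throughput is shared evenly and that units are decimal. The function name is ours, and since the text says "more than 192" connections, the result is indicative only.

```python
# Figures quoted in the text for one 1RU X Platform chassis used as an SRT gateway.
SRT_CONNECTIONS = 192
THROUGHPUT_GBIT_S = 18


def average_mbit_per_connection(throughput_gbit_s, connections):
    """Average bitrate per connection if total throughput is shared evenly."""
    return throughput_gbit_s * 1000 / connections  # decimal units: 1 Gbit = 1000 Mbit


avg = average_mbit_per_connection(THROUGHPUT_GBIT_S, SRT_CONNECTIONS)
print(f"{avg:.2f} Mbit/s per connection on average")
```

Under those assumptions the average works out to just under 94 Mbit/s per connection.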

Archiware GmbH presents the latest developments of its P5 data management platform at this year’s NAB Show. Highlights include the S3 Object Archive featured in the upcoming P5 version 7.2 and numerous partner integrations showcased at Archiware’s booth N3376.

Archiware’s P5 Archive module provides a long-term solution to move unused data to offline storage, with options for integration by third-party applications. The S3 Object Archive is an S3 interface for archiving with P5. It caters to data management solution partners and MAM/DAM/PAM vendors by enabling seamless integration of the P5 Archive module. Using the S3 protocol makes archiving files radically simple: P5 Archive acts as an S3 server that archives files to disk, LTO/LTFS and/or the cloud. This upcoming feature is platform-independent, with no additional licensing or integration costs.

Featured partner integrations at NAB 2023 include DataIntell, Seagate and MagStor.

Ateme has announced the launch of its Virtual Lounge solution, which converges traditional television and gaming, enabling users to enjoy ground-breaking experiences.

The solution is a digital space embedding multiple low-latency OTT players. These players can stream the same event viewed from different angles, all in sync, or even multiple events, bringing the experience of a sports bar into the digital space.

Thus, the solution brings OTT streams into a gaming environment, facilitating social interactions between viewers of live events even when they are very distant from each other. By projecting themselves into a common virtual space, audiences can design their own video-consumption experiences.

The solution enables D2C streaming providers and sports leagues to reinforce their position as innovators, as well as to increase their monetization with new ad-placement opportunities.

Ateme’s Virtual Lounge solution will be in booth W1517.

In booth W1322, Black Box will showcase new additions to the company’s award-winning Emerald® KVM-over-IP platform. The company will offer visitors to its booth a demonstration of the Emerald® DESKVUE, a completely new concept in KVM-over-IP.

Black Box will also present more about DESKVUE in the Connect Innovation Theater W3421 on April 17 at 4:00 p.m.

Black Box® high-performance KVM-over-IP solutions address the requirements of modern broadcast, gaming, and esports environments. In addition to providing proven reliability, ensuring low-bandwidth requirements, and enabling exceptional user experiences with anytime, anywhere access, these solutions lower users’ cost of ownership, support greater workflow optimization, and contribute to more nimble IP-based operations.

As NAB celebrates its Centennial Anniversary, Black Box also celebrates its history as an NAB member, having served the broadcast market since the early 1990s with innovative KVM solutions.

The company, in its 30th Anniversary year, congratulates NAB for turning 100!

Brainstorm will exhibit at NAB Show for the 25th time. Brainstorm is proud of this commitment to the industry, which underlines how a company focused on innovation can continue to excel year after year in the dynamic and ever-evolving broadcast market. As part of the NAB, which turns 100 this year, Brainstorm also strongly believes this anniversary proves the NAB’s strength, vision and flexibility to continue leading the way for broadcast and AV companies in our ever-changing industry.

Brainstorm will showcase its most advanced technologies, starting with a presentation in the main Virtual Production Theater demonstrating the possibilities InfinitySet offers for creating virtual content of all kinds. The main demo will display advanced, photorealistic augmented and mixed reality content in a highly visually engaging presentation, using a combination of chroma sets and shaped LED videowalls provided by Unilumin, and featuring real-time, seamless extra-render and set extension with color-matching 3D LUTs, in-context AR motion graphics, fully immersive talent tele-transport, multi-background content and much more. Fully compatible with Unreal Engine 5 (UE5) Vanilla, InfinitySet now includes full integration of objects created in InfinitySet or Aston within the UE environment and vice versa, including shadows, reflections, and AR with Unreal Engine, providing unmatched flexibility for content creation.

Brainstorm will also introduce the Edison Ecosystem in the US. Built around Edison PRO, it is a complete solution that transforms any live or online presentation into an immersive experience by using AR and virtual environments. The Edison ecosystem features custom-built capture environments like EdisonGO and physical surfaces like Edison eDesk, and includes control devices (Stream Deck) and free applications like Edison OnDemand, so any user can take advantage of Edison PRO’s powerful tools. This ecosystem of tools enhances storytelling with easily created real-time 3D graphics and other visual aids, while presenters are immersed in a virtual, photorealistic environment, running the presentation with clickers or other remote devices.

Clear-Com® will be celebrating its 55th anniversary at the centennial NAB Show, where the company will showcase innovative new product features of its flagship Eclipse® HX Digital Matrix Intercom System, including DynamEC™ real-time production software, IP-based V-Series IrisX™ user panels, and industry-leading Role-based Workflows. Additionally, Clear-Com will highlight its award-winning IP-based Arcadia® Central Station.

A popular choice for broadcast applications such as flypacks and OB vans, Arcadia Central Station brings together HelixNet®, FreeSpeak®, Clear-Com Encore®, other 2W/4W endpoints, and third-party Dante devices in a single, integrated system. Arcadia offers license-based scalability that allows it to meet numerous production needs, with support for over 100 beltpacks and up to 128 IP ports, with additional upgrades available in the future.

For applications requiring up to 200 FreeSpeak beltpacks and point-to-point workflows, the Eclipse HX Digital Matrix offers a range of unique tools to deliver power and efficiency, notably the DynamEC software, a powerful and intuitive tool that gives operators situational control over all Clear-Com audio inputs and outputs, audio mapping, IFBs and partylines. New features introduced in EHX 13 for Eclipse HX support many of the needs of broadcast applications for large-scale events, such as the World Cup or other global sporting events.

Clear-Com’s new V-Series IrisX IP User Panel for Eclipse delivers thin-film-transistor (TFT) displays for brightness and enhanced resolution, ideal for outdoor applications such as sporting events, combined with low latency and high port capacity.

CueScript debuts a new cloud-based service, CueTALK Cloud, at NAB 2023 (Booth C4421). This new cloud offering is designed to allow CueScript’s prompting software, CueiT, and CueTALK-enabled devices, which feature the latest in IP connectivity, to communicate over Wi-Fi. This brings an enhanced level of flexibility to users working in remote locations.

CueTALK Cloud allows controllers and prompt devices to be accessible via the cloud using a standard public internet connection. It is also designed to make CueiT more user-friendly and easier to set up between local and remote applications.

Dalet will showcase its new SaaS solutions that power modern, agile, and efficient digital content production, management and distribution workflows. The featured cloud-native solutions provide tailored workflows for:

• Modern Archive Management and Monetization

• Powerful Media Supply Chain and Distribution

• Streamlined Production Asset Management

• Faster News Production and Delivery

The new Dalet SaaS solutions are underpinned by the proven Dalet Flex platform and Dalet Pyramid modules. They offer faster deployment with a roll-out in days rather than months. For customers who want to maximize their existing Dalet investment, Dalet Flex and Dalet Pyramid offer a gradual transition path.

Dalet product specialists will present a technology preview of elastic ingest workflows in the cloud at the Dalet booth. Based on a cutting-edge, cloud-native technology codenamed “Dalet InStream” and built on the market-leading Dalet Brio I/O solution, the preview will showcase end-to-end workflows in the cloud, from ingest to production and distribution.

On stand, Dalet will showcase:

• Dalet Flex - Media Workflows & Collaboration

• Dalet Pyramid - News Production & Distribution

AI-powered workflows will also be featured with the latest version of Dalet Media Cortex that provides improved speech-to-text and translation capabilities, allowing for up to 80% time savings for caption creation.

Evertz will show a range of RF solutions that are designed to support mission critical applications around the world.

Evertz offers a range of RF over IP solutions that give broadcasters the ability to reliably and securely transport analog RF signals, over any distance, within their digital network. These solutions, which also preserve Carrier-to-Noise Ratio (CNR), deliver greater flexibility to operators searching for a satellite/IP hybrid workflow and immensely expand the ability to virtualize the satellite ground segment, resulting in improved operational efficiencies and new possibilities.

On NAB Booth N2225, Evertz will present its RF over IP platform, which features up to four RF over IP conversions per module. Each module offers 1+1 redundant QSFP ports, each port with up to 100G support. A maximum of 28 RF over IP conversions are possible in a mere 3RU chassis, or up to 8 conversions in a 1 RU chassis.
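The density figures quoted above can be cross-checked with simple arithmetic. The sketch below derives how many fully populated modules each chassis size would need to reach its stated maximum. The module counts are an inference from the quoted numbers, not a published Evertz specification, and the function name is ours.

```python
import math

# "up to four RF over IP conversions per module" (figure quoted in the text)
CONVERSIONS_PER_MODULE = 4

# Maximum conversions per chassis size, as quoted in the text.
CHASSIS_MAX_CONVERSIONS = {"3RU": 28, "1RU": 8}


def modules_needed(conversions, per_module=CONVERSIONS_PER_MODULE):
    """Smallest number of fully populated modules that covers `conversions`."""
    return math.ceil(conversions / per_module)


for chassis, max_conversions in CHASSIS_MAX_CONVERSIONS.items():
    modules = modules_needed(max_conversions)
    print(f"{chassis}: {max_conversions} conversions -> {modules} modules")
```

On these figures, the 3RU frame would carry seven modules and the 1RU frame two, which puts the larger frame slightly ahead in conversions per rack unit.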

Evertz will also highlight RF Satcom products that have been developed in close collaboration with its subsidiary Quintech. These span key applications such as RF over Fiber and IP transport, RF distribution and routing matrices, RF receivers and monitoring, and antenna and teleport services.

In addition, Evertz is showing the MIO-DM4SAT Series, which takes its proven Satellite (DVB-S/S2/S2X) Demodulator to the next level by making it available in a MIO module that fits into the SCORPION Flexible Media Processing Platform.

EVS will showcase its LiveCeption, MediaInfra and MediaCeption solutions on booth #N2147, with several innovative features and products making their debut at the show.

Dedicated areas on the booth will serve to demonstrate EVS’ latest advancements in the field of live production, replays and highlights, media infrastructure, and asset management for premium live productions. Additionally, this year’s booth will feature a tech pavilion, providing visitors with a more personalized setting to discuss current tech challenges with EVS experts. The aim is to foster candid conversations about critical industry topics such as the shift to IP, sustainability for future growth, cybersecurity and zero trust policy, as well as cloud, edge, and hybrid workflows.

FingerWorks™ Telestrators introduces its new FingerWorks Computer Vision software at NAB 2023 (North Hall, Booth N1810). This new software solution supports real-time player- and field-tracking, as well as a new masking technology.

FingerWorks Computer Vision’s Player Tracking tool traces the path a player takes and can be applied to a live feed or during a replay. Any player can be selected, and the tool then focuses on that player and their movements. The Field Tracking tool allows any graphic to be placed and positioned under the players, in real time, and to remain in that position during camera pans, tilts and zooms. FingerWorks’ new masking technology, which is also part of the Computer Vision software, eliminates all logos, markers, etc. and makes them a “virtual” part of the imagery under the players.

FingerWorks Computer Vision also automatically detects scene changes such as fast camera moves and camera switches, and clears the tools.

Grass Valley announces new hardware and software tools for any way and anywhere you want to work.

New solutions launching at NAB 2023 include

• New Kayenne switcher panel with touchscreen transition control. A choice of buttons or touchscreen allows TDs to use whichever mode is more comfortable for their style of working. Also new is a software update that allows flexible assignment of M/E keyers. Keyers from one M/E bank may be assigned to another for optimized usage of all available keyers.

• LDX C135 compact camera for easy positioning and remote operation. The LDX C135 offers all the award-winning features of the full-size UHD HDR native LDX 135 camera in a compact housing. The camera integrates seamlessly with camera robotics and Creative Grading for remote IP- or cloud-connected shading to provide new options for rich storytelling from all those hard-to-reach, unmanned places. And with the unique NativeIP option, the camera becomes a fully SMPTE 2110 enabled camera.

• Processing will introduce new functionality in both the Densité XIP-3911 and Kudos Pro series along with new Flex MV and KIP-X240 multiviewers. GV Orbit will also show hybrid infrastructure orchestration with a new Tally system.

• AMPP Local provides a new option for a SaaS platform that is entirely on-premises. When you need to be completely disconnected from the internet, AMPP Local hosts the entire AMPP instance, both control plane and data plane, in an on-prem COTS datacenter. Also showing for the first time is the AMPP Streaming SDK.

• AMPP Grid provides horizontal scalability by allowing multiple compute nodes to be connected into a single pool of compute with uncompressed video flows moving seamlessly between machines with minimal latency.

• Working seamlessly with live production applications, Framelight X can quickly assemble playlists from LiveTouch X replay, use HTML5 graphics in its editor, easily transcode the various flows that AMPP supports, and provide federated rendering that brings together the work of a distributed creative team into a single project.

• Ignite production automation now controls AMPP-connected devices such as K-Frame CS X for easy migration to cloud-enhanced workflows. AMPP clip players populated from Framelight X or an on-prem MAM can be triggered using a MOS rundown.

• GV Playout X will also be demonstrating new HTML5 graphics along with alternative options from third-party graphics solutions for easy integration into existing workflows.

Hitachi Kokusai Electric America, Ltd. (Hitachi Kokusai) will demonstrate its latest 4K UHD camera systems in booth C6117. Product debuts include an upgradeable camera system for professional live events and broadcast production with a cost-efficient path to 4K, and a next-generation box camera for use in a variety of applications.

The company will showcase its SK-UHD7000, introduced at NAB Show 2022, along with the new SK-UHD7000-S2. The SK-UHD7000, now shipping and in use worldwide, incorporates three 2/3-inch CMOS image sensors with native 4K resolution to enable the pristine capture of 3840x2160 UHD video. The sensors’ global shutter technology minimizes unwanted artifacts, including flickering and banding, that could otherwise occur when capturing LED walls and asynchronous lighting in event venues. The SK-UHD7000 is ideal for live events but also makes premium 4K acquisition cost-effective for everyone from television broadcasters future-proofing their production to houses of worship linking live and satellite feeds.

The SK-UHD7000 offers formats ranging from 480p to 2160p and delivers extraordinary sensitivity and quality for Ultra HD production. Its sensitivity of F11 enables high-quality acquisition in low lighting conditions, while a signal-to-noise ratio (SNR) of 62 dB provides ultra-quiet images. The camera’s new prism design delivers superior color reproduction and compliance with the BT.2020 color gamut. Its dual 4K and HDTV workflow enables seamless multi-format productions and supports separate controls for high dynamic range (HDR) and standard dynamic range (SDR).

The new SK-UHD7000-S2, now shipping, provides the same pristine quality and production benefits. The SK-UHD7000-S2 leverages Hitachi Kokusai’s latest HD and 4K camera technology: it ships as an HD camera but can be converted to a 4K camera with an affordable license upgrade option. This offers a lower initial purchase point for customers that want high-quality 1080p immediately along with a cost-effective, field-upgradeable path to 4K in the future.

Imagine Communications continues to add groundbreaking features to its Selenio™ Network Processor (SNP). At the 2023 NAB Show (April 16-19, Las Vegas Convention Center, booth W2775), Imagine will showcase new master control and branding functionality for HD, UHD and HDR in its industry-acclaimed 1RU processing platform.

The SNP Master Control Lite (MCL) personality adds branding, live graphics, and full master control functionality to the latest release of SNP. Offering support for both HD and UHD signals, the SNP MCL can also be configured as an industry-first, ST 2110-compatible dual downstream keyer (DSK). As the SNP is an entirely software-defined application platform built on high-density hardware, the MCL personality can be licensed onto any SNP unit that has ever shipped, enabling current users to easily add this new functionality to their existing system.

The new SNP MCL personality provides high-value channel release functions within the framework of a powerful 1RU processing platform. Features include full-resolution 10-bit and HDR-capable graphics; four full-frame keyers; three external Key/Fill inputs; full frame sync on every input including Key/Fill; 16 channels of audio processing, including voice over and graphics audio; flexible interfaces, including 12G SDI, 2SI-SDI and ST 2110; and integration with automation systems via the well-known Imagine IconMaster™ switcher protocol, over IP network connections.

Visitors to the Lawo booth (#C4111) will be the first to see Lawo’s “Elasticity” concept in action, following its official introduction during a special event on April 4th. Furthermore, at this year’s centennial NAB Show, Lawo will exhibit more than 10 new products and major updates to its existing audio, video, control and monitoring portfolio.

LiveU returns to NAB for the 14th year to share tangible ways that customers can reduce production costs, meet the increased demand for quality content and optimize the monetization of their content assets by leveraging cloud-based workflows.

It will demonstrate its complete suite of live video services – from contribution and production to distribution – as part of an enhanced live video production ecosystem. This is all built on LiveU’s field-proven LRT™ (LiveU Reliable Transport) protocol for low latency, high quality, and rock-solid resiliency.

It will also be coming with a world-first production approach, leveraging LRT™ across the entire workflow!

Putting the customer in control, LiveU’s on-demand subscription solutions encompass seamless cloud, on-prem and hybrid workflows – saving money by lowering costs, reducing time to air, increasing audience engagement, and simultaneously distributing to multiple channels.

LiveU will be showcasing its latest 5G offerings and cloud-based solutions at booth #N3058 in the North Hall. It will also be hosting live demos and special programming highlighting the latest trends shaping live video for news, sports, and a host of vertical market segments.

LTN will be demonstrating its cloud and IP-powered product portfolio at this year’s NAB Show in Las Vegas. Visitors meeting at LTN’s booth (#W2621) will experience the future of media through LTN’s reliable IP and cloud solutions that enable new digital monetization opportunities while driving cost-efficiencies.

LTN will be displaying its extensive product portfolio that equips customers with the tools that they need to creatively grow audiences and revenue. These services include LTN Wave, the IP-based transmission solution that provides media companies with flexible, reliable, and intelligent alternatives to satellite distribution. Wave creates next-generation distribution and contribution possibilities, including the ability to manage complex rights deals and the associated requirements for ad signaling across multiple platforms.

LTN will also showcase LTN Arc, the fully managed, cloud-enabled production solution that handles every aspect of live event versioning for rights holders and sports broadcasters, and LTN Lift, the cloud-based playout solution with automated versioning capabilities to seamlessly spin up new channels and deliver customized programming across digital/OTT/FAST services.

Exhibiting in booth C5031 at the 2023 NAB Show in Las Vegas from April 16 to 19, Magewell will demonstrate new products and updates across all three of its core portfolios -- capture, conversion and streaming -- that seamlessly bridge signals, software, streams and screens.

The USB Fusion multi-input video capture and mixing hardware and its accompanying app let users easily combine camera, screenshare and media file sources into engaging live presentations for streaming, event production, online lectures, webinars, video conferencing and other applications. Magewell will unveil powerful new features that dramatically expand the input source possibilities of this versatile device. More details of these new features will be shared closer to the show. Magewell will also highlight its recently announced Eco Capture AIO M.2 ultra-compact video capture card. Featuring selectable HDMI and SDI interfaces, the new model combines flexible input connectivity with low power consumption in a space-efficient M.2 form factor.

Conversion

Magewell’s converters bridge traditional and IP media infrastructures by easily bringing SDI or HDMI signals into and out of IP production workflows and AV-over-IP networks. Demonstrations will feature Pro Convert NDI® encoders and multi-format decoders in end-to-end workflows, all managed by Magewell’s centralized control software.

Streaming

Making their first NAB Show appearance, Ultra Encode AIO live media encoders build on the flexibility of the original Ultra Encode family with expanded features including HDMI and SDI input connectivity in a single unit; 4K (30fps) encoding and streaming from the HDMI input; simultaneous multi-protocol streaming; and file recording. Ultra Encode AIO can encode one live input source or mix the HDMI and SDI inputs (picture-in-picture or side-by-side) into a combined output, and flexibly supports H.264 and H.265 streaming in multiple protocols including RTMP, SRT, HLS, TVU’s ISSP technology and more. It also supports NDI®|HX2 and NDI®|HX3 for IP workflows.

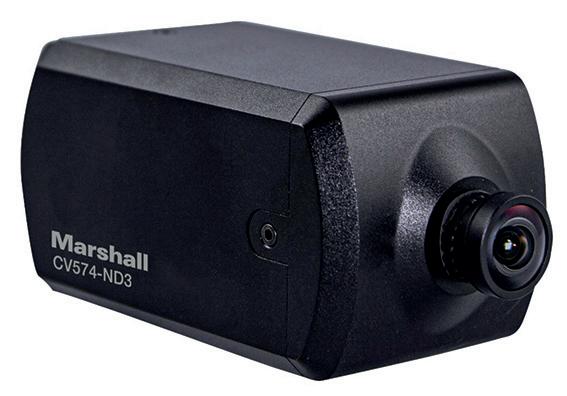

Marshall Electronics continues to evolve its product offerings with the latest NDI codecs and will be showcasing four new camera models at Booth C5520. The CV570/CV574 Miniature Cameras and CV370/CV374 Compact Cameras all feature low-latency NDI|HX3 streaming as well as standard IP (HEVC) encoding with SRT, while also offering a simultaneous HDMI output for traditional workflows.

The CV570-ND3 miniature and CV370-ND3 compact NDI|HX3 cameras both contain a new Sony HD sensor, which is also being implemented into other top-selling Marshall POV cameras this year. These will have resolutions of up to 1920x1080p (progressive), 1920x1080i (interlaced) and 1280x720p. Also, these cameras are designed with the same flexible features, including interchangeable lenses, multiple broadcast framerates, remote adjustability and very discreet and durable bodies made of lightweight aluminum alloy with rear I/O protection wings.

MediaKind (#W2100) will showcase its latest broadcast and video streaming technology and solutions and discuss the latest monetization approaches behind some of the live streaming applications.

Visitors will experience MediaKind’s video streaming approach that combines high-quality AI-based 4K upscaling, massive bandwidth savings brought by Versatile Video Coding (VVC), and large energy savings gained from hardware acceleration without any quality compromise.

MediaKind will demonstrate new monetization scenarios that include DRM-protected ultra-low latency streaming for premium interactive events, advanced playback controls for monetizing short segments, and the automated creation of video summaries.

The company will also illustrate how operators can leverage its technology and public cloud storage to create next-generation Cloud DVR services.

Visitors will also learn how MediaKind’s customers can increase average revenue per user (ARPU) and customer lifetime value (CLTV) by unlocking new pay-per-view models to achieve higher CPMs, exploit the FAST opportunity, and grow audience reach and advertising revenue.

On booth W1578, Mobius Labs, a developer of next-generation AI-powered metadata technology, will demonstrate the latest version of Visual DNA, the company’s unique AI-based metadata solution that can be deployed on premises or in the cloud. NAB attendees will also see a new suite of complementary audio tools with state-of-the-art speech-to-text and English translation capabilities that further improve asset management workflows and increase the value of an organization’s content.

Visual DNA reduces the processing cost of video ingest, tagging, search and archive. Mobius’ unique one-pass process creates an ultra-compact description of video frames (in essence, the Visual DNA) that is around 1,500 times smaller than the corresponding 4K video. Once assigned, Visual DNA can effectively enable users to turn back time by reindexing 1 hour of content in under 10 seconds. Thus, tags can always be kept up to date with new metadata using custom-trained AI without requiring further ingest, and poorly indexed archives become a thing of the past.
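To put the two quoted figures in perspective, the short Python sketch below turns them into everyday numbers. It is a back-of-the-envelope illustration only: the size of one hour of 4K video (`ASSUMED_HOUR_OF_4K_GB`) and all function names are our own assumptions, not values or interfaces published by Mobius Labs, and the 10-second figure is treated as an upper bound.

```python
# Figures quoted in the text above.
COMPRESSION_FACTOR = 1_500        # Visual DNA is ~1,500x smaller than the 4K video
REINDEX_SECONDS_PER_HOUR = 10     # upper bound: 1 hour of content reindexed in < 10 s

# Assumption for illustration only: size of one hour of 4K video, in gigabytes.
ASSUMED_HOUR_OF_4K_GB = 20.0


def descriptor_size_mb(video_gb: float, factor: int = COMPRESSION_FACTOR) -> float:
    """Approximate size of the compact description, in megabytes."""
    return video_gb * 1000 / factor


def reindex_speedup(seconds_per_hour: float = REINDEX_SECONDS_PER_HOUR) -> float:
    """How many times faster than real time the reindexing runs (lower bound)."""
    return 3600 / seconds_per_hour


def archive_reindex_minutes(archive_hours: float,
                            seconds_per_hour: float = REINDEX_SECONDS_PER_HOUR) -> float:
    """Upper-bound time, in minutes, to reindex an archive of the given length."""
    return archive_hours * seconds_per_hour / 60


if __name__ == "__main__":
    print(f"Descriptor for 1 h of 4K: ~{descriptor_size_mb(ASSUMED_HOUR_OF_4K_GB):.1f} MB")
    print(f"Reindexing speed: at least {reindex_speedup():.0f}x real time")
    print(f"1,000-hour archive: under {archive_reindex_minutes(1000):.0f} minutes")
```

Under these assumptions, reindexing runs at least 360 times faster than real time, so a 1,000-hour archive could be re-tagged in less than three hours.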

In parallel it can generate highly accurate audio transcripts in 30+ languages and optionally translate them into English without having to rely on separate natural language processing modules as required by traditional cloud-based vendors.

Mark Roberts Motion Control (MRMC), a Nikon company, is to present its largest-ever product lineup at NAB 2023. The company will use the event as the launch pad for three new products which will be showcased on stand C5325: Polymotion Relay, a sports circuit-based tracking solution; the Light Lift System; and the AJS-2 autoJib for enhanced live content capture production. In addition, an enhanced version of the virtual production experience ‘Unreal Ride’ returns to the company’s Motion Control stand in the central lobby (L1) in partnership with VU to wow visitors following its smash-hit success last year.

Assaff Rawner, CEO of MRMC, said: “We are excited to be part of the 100th NAB show with the biggest ever presence in our company’s history. Our broadcast solutions stand will be split into two key focus areas: Studios and Sports; with dozens of demonstrations and opportunities to network with our team of experts. We will be introducing Slidekamera by MRMC products for the first time to NAB audiences. We’re also delighted to see delegates playing at being a director by taking our virtual production experience Unreal Ride to the next level.”

Net Insight, together with its solution partner Comprimato, will be demonstrating the use of live JPEG XS compressed ST 2110 streams using TR07, transported over WAN (Wide Area Network) to cloud workflows at this year’s NAB Show in Las Vegas. This bridges the gap between cloud and high-end IP workflows in a new way.

At the heart of the solution is Net Insight’s Nimbra 1060, which takes in ST 2110 video streams, encodes them using ultra-low latency JPEG XS and sends them as TR07 streams directly into the cloud, where they are transcoded and transported to the recipients using the cloud-native Nimbra Edge platform residing in Oracle Cloud. This opens up new Tier 1 media cloud productions, leveraging cloud resources for the highest flexibility and efficiency.

Net Insight showcases TR07 interworking by sending the streams from Nimbra 1060 to Comprimato’s cloud-based Live Transcoder connected to Nimbra Edge for further delivery into cloud production and distribution workflows. This powerful combination offers a direct route connection from ST 2110 on-prem equipment to the cloud, where high-end cloud production and adaptation can take place. In addition, the Live Transcoder is further used in a live demo together with Nimbra Edge to demonstrate a seamless NDI (Network Device Interface) workflow in and out of the cloud, enabling NDI productions with global connectivity.

Pebble will be showcasing its playout automation and integrated channel solutions as well as its IP connection management tool, Pebble Control, and web-based monitoring system Pebble Remote.

Pebble Control is built specifically to enable broadcasters to make the leap to an all-IP facility without the need to deploy a bespoke enterprise solution. It is self-contained, scalable and easy to configure, leveraging full support for the Networked Media Open Specifications (NMOS) suite of protocols produced by the Advanced Media Workflow Association to facilitate networked media for professional applications.

Pebble Control Free (the freemium version of Pebble Control) was launched last year, and went on to win the Media & Entertainment Best in Market Award in late 2022. It enables broadcasters to work with entry-level access of 25 concurrent input and output connections at no cost. The industry award recognised Pebble Control Free as being one of the best innovative solutions that made an impact in the media and entertainment industries in 2022.

Pebble Remote, a web-based monitoring and control tool, will also be showcased. This cloud-based solution enables secure 24/7 remote access to mission-critical control for playlists, both on-premises and off-site. Additionally, it facilitates centralised control access across multiple playout sites, making it an ideal solution for broadcasters who manage complex systems with multiple channels. Pebble Remote has proven to be a valuable tool for overcoming the challenges associated with operating outside the typical confines of a playout facility.

PlayBox Neo will demonstrate the latest additions to its range of broadcast TV channel branding and playout solutions. Exhibit highlights on Central Hall Booth C2427 will include integration of EAS (Emergency Alert System) and expansions to the feature set of TS Time Delay.

Also on demonstration will be the latest-generation AirBox Neo-20 playout, ProductionAirBox Neo-20 production playout, Media Gateway live media delivery and distribution, and the Channel-in-a-Box television branding and playout system.

At booth N2867, Quicklink will demonstrate the AI-enabled STS410 & Cre8, the next-generation video production platform available on premises or in the cloud, incorporating innovative NVIDIA AI technology.

The solution is an easy-to-use video production interface for creating professional virtual, in-person and hybrid events. Quicklink’s STS410 combines all the tools required to create the most professional productions possible for connecting with your global audience and delivering an unforgettable experience. Cre8 empowers you to engage your audience and grow your community.

Utilising NVIDIA Maxine, Cre8 includes NVIDIA’s AI and machine learning technology, which raises production quality and improves productivity by applying AI-powered filters to your sources.

Qvest will showcase products and solutions for media workflows in the West Hall (W2042) of the Las Vegas Convention Center. For the first time worldwide, the latest version of the cloud playout automation makalu will be presented in live demos. The upgrade of the software-based playout automation for broadcasters, publishers and content owners includes an extended range of functions and a completely revised user interface. Particularly efficient media workflows can also be realized with Qvest’s Remote Editing. Visitors to NAB can experience the possibilities of this enterprise solution for location-independent video editing with native and full integration of the Adobe Creative Cloud at demo pods. At the NAB premiere of qibb, the media integration platform will be showcased in live demos at the Qvest booth. The focus will also be on automated workflows with generative Artificial Intelligence for media applications, including the integration of ChatGPT4.

Under its NAB theme, “From idea to audience, let’s make it real,” Ross Video will showcase its latest advancements for live broadcast, worship, esports, stadium, studio and stage environments at Booth N2201.

Among the innovations on show will be:

- The multi-platform Inception newsroom computer system, demonstrating the long-requested ‘Story Grouping’ feature, along with the launch of Inception Mobile, which will take users beyond the newsroom and into the field.

- Demos of ChatGPT technology showing how this AI tool can work within newsroom workflows.

- Following the success of the iconic cable camera system, Spidercam, a newer and smaller Spidercam Light+ system, built for indoor venues and smaller outdoor events. Other Camera Motion System developments include unique StableTrac technology to enhance the stability and traction of Ross’ Furio track-based camera systems.

- Kiva Express Duet, a lower cost, operator-driven digital media playout solution for simplified live event production.

Visitors to the Ross Video booth can also see previews of:

- The convergence of Ross’ Streamline and Primestream media asset management systems, with a new, highly integrated newsroom workflow.

NAB visitors are invited to experience the combined power of four brands at booth No. C5217: Sennheiser, Neumann, Dear Reality and Merging Technologies will showcase their full range of audio solutions for video production, sound design, recording, and more to equip professionals of all levels, from social media creators to audio mixers for film and television.

Besides its full range of mics for camera use, Sennheiser will showcase its EW-DX and Digital 6000 wireless microphone systems and also debut its latest wireless audio system, designed specifically for film makers, high-profile content creators and broadcasters. The product portfolio on show is rounded off by the company’s broadcast headsets. Attendees will also have the chance to listen live to Emmy-nominated foley artist Sanaa Kelley and head mixer Arno Stephanian, captured by Sennheiser’s world-class shotgun microphones.

Tedial has announced that its award-winning smartWork cloud-native, NoCode Media Integration Platform will be at NAB 2023 with a suite of Packaged Business Capabilities (PBCs). The enhancement is the M&E industry’s first PBC solution and was designed to bring broadcasters closer to the cloud and enable the composable enterprise, a modular approach that leverages existing digital capabilities to create new products and services.

At this year’s NAB, Tedial will present an enhanced version of smartWork in Booth W3959 that now features smartPackages, a set of PBC modules capable of streamlining the processes of different business units including, but not limited to: news, content delivery, post-production, archive and even IMF.

PBCs are reusable software components that provide the key building blocks of a composable enterprise, used to create best-of-breed solutions in many verticals. In the M&E industry, PBCs represent self-contained units that solve a specific problem: Localization, Content Delivery, Post-Production, etc. PBCs function without external dependencies, or the need for direct external access to data. For interaction with the rest of the enterprise’s systems and services, each PBC offers a data schema, an API, an event notification system and a set of services.
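The four elements named above (a data schema, an API, an event notification system and a set of services) can be pictured with a minimal Python sketch. This is a generic illustration of the PBC idea under our own assumptions, not Tedial's smartWork implementation; the class, the `content_delivery` capability and the `deliver` service are all hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PackagedBusinessCapability:
    """Hypothetical self-contained unit exposing schema, API, events and services."""
    name: str
    schema: Dict[str, type]                                   # data schema
    services: Dict[str, Callable[[dict], dict]] = field(default_factory=dict)
    subscribers: List[Callable[[str, dict], None]] = field(default_factory=list)

    def subscribe(self, callback: Callable[[str, dict], None]) -> None:
        """Event notification system: register a listener for completed calls."""
        self.subscribers.append(callback)

    def call(self, service: str, payload: dict) -> dict:
        """API entry point: validate the payload, run a service, notify listeners."""
        for key, expected in self.schema.items():
            if not isinstance(payload.get(key), expected):
                raise ValueError(f"field '{key}' must be of type {expected.__name__}")
        result = self.services[service](payload)
        for notify in self.subscribers:
            notify(f"{self.name}.{service}.done", result)
        return result


def deliver(payload: dict) -> dict:
    """Illustrative service: pretend to deliver an asset to a target platform."""
    return {"asset_id": payload["asset_id"], "status": f"delivered to {payload['target']}"}


delivery = PackagedBusinessCapability(
    name="content_delivery",
    schema={"asset_id": str, "target": str},
    services={"deliver": deliver},
)

events: List[tuple] = []
delivery.subscribe(lambda event, data: events.append((event, data)))
result = delivery.call("deliver", {"asset_id": "A1", "target": "ott"})
```

Because the rest of the enterprise only touches the schema, the `call` entry point and the emitted events, a unit like this could in principle be swapped or recombined without the callers knowing how its services are built, which is the composability argument the text makes.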

Applying modularity to M&E entities achieves the scale and pace required to enact ambitious business practices. The modularity of PBCs combined with smartWork’s no-code technology, which allows applications to be created without any prior knowledge of traditional programming, enhances composability, resulting in the flexible design of applications and services and enabling organizations to innovate and adapt quickly to changing business needs.

Tedial’s smartWork can be deployed on-premises, on any cloud or in a hybrid architecture for incredible flexibility. Cloud capabilities enable media services to be quickly built, deployed and evolved as business needs change by adding or adjusting business processes. Tedial recently completed the AWS Technical Review for smartWork. All smartWork services are available as apps, which can be provided by AWS.

The launch of smartPackages, coupled with Tedial’s recent AWS partnership, its ISO 9001 and 27001 certificates and its plans to offer additional cloud options, reflects the company’s consistent commitment to providing the highest-quality solutions and supporting the latest, most advanced protocols and standards.

At NAB 2023, on booth W1501, Telestream® has announced Telestream Content Manager™. The next-generation solution provides a single point of access for content across an organization’s entire storage ecosystem, including cloud and on-prem storage.

Built on the DIVA Core technology, Telestream Content Manager is tightly integrated with the Telestream workflow orchestration tools, as well as supporting all major MAM, PAM, and automation systems.

Three innovations combine to make this unification of cloud and on-prem content management practical and cost effective. First, an intuitive web-based user interface provides users with the tools that they need to discover and work with their media content. All content information is indexed and searchable, including system metadata, editorial metadata imported from other systems, and customer-configurable metadata. Once content is found, it can be played back directly within the application and transferred to any connected device such as a storage, production, or playout system.

Second, auto-object discovery and the ability to index files directly from cloud storage eliminate the egress costs associated with copying to another location and enable enterprises to efficiently manage both legacy content and incoming files. Finally, the automation of content management actions and triggering of automated workflows enables greater efficiency through the ability to create sophisticated supply chain workflows that incorporate content movement, lifecycle management, and media processing.

Telestream Content Manager is planned for worldwide availability in summer 2023.

Telos Alliance® will exhibit for the first time in three years with a focus on game-changing broadcast audio solutions that create limitless possibilities for content. Telos Alliance team members will be stationed in booth #W3766, to participate in direct, consultative engagements with customers and demonstrate the suite of products and solutions. Additionally, in an effort to foster more intimate solution conversations with their customers, Telos Alliance has booked hospitality suite #W3673 located directly behind the booth.

At this year’s NAB Show, customers will be able to experience several new offerings that have been introduced across all product families, including Quasar™ XR and SR consoles; the fully AoIP-native and cloud-capable Telos Infinity® communications system with its newly introduced mobile app; Forza, the newest member of the Omnia processing family; Linear Acoustic’s audio for video offerings that bring Next Generation Audio to life; and the unparalleled processing power of the AIXpressor with FlexAI processing infrastructure from partner Jünger Audio.

Tieline, the Codec Company, has announced it will unveil two new MPX codecs for the first time at NAB 2023.

Tieline’s MPX I and MPX II codecs deliver composite FM multiplex (MPX) codec solutions for real-time network distribution of FM-MPX or MicroMPX (μMPX) signals to transmitter sites. The MPX I is ideal for transmitting a composite STL signal from a single station with return monitoring, whereas the Tieline MPX II can transport two discrete composite FM-MPX signals from the studio to transmitters with return monitoring. Both units support analog MPX on BNC, MPX over AES192, and multipoint signal distribution, to deliver a wide range of flexible composite encoder and decoder solutions for different applications.

The MPX I and MPX II support sending the full uncompressed FM signal, or high-quality compressed μMPX at much lower bitrates. An optional satellite tuner card with MPEG-TS and MPE support can receive DVB-S or DVB-S2 signals.

TSL will showcase for the first time on booth C2416 its new MPA1-MIX-NET solution alongside its flagship Precision Audio Monitoring (PAM) range, both of which provide smooth migration paths to IP. In addition to the technology showcase, TSL announces the appointment of media expert Steve Cole as TSL Audio Product Manager.

NEW: MPA1-MIX-NET - Simple Confidence Monitoring

Easing the transition to IP is MPA1-MIX-NET, TSL’s newest 1U audio confidence monitor and mixer with 16 instantly recallable independent mixes. Designed in collaboration with NEP, the MPA1-MIX-NET is ideal for ST 2110 trucks and installations. A 1G AoIP connection provides 64 channels of input, with a further 64 available via an optional MADI SFP. Support for in-band NMOS is built-in for integration with TSL control and leading third-party systems. Additionally, SNMP integration allows remote configuration changes for added flexibility and improved productivity.

Monitoring

- TSL’s flagship PAM range continues to evolve, offering market-leading sound quality, reliability and an unmatched range of inputs and outputs that set the bar for performance. The PAM dashboard provides users with helpful system health information such as software/hardware version information, temperatures and fan speeds as well as diagnostic information such as PTP lock status, IP packet counters and multicast addresses and port numbers of the essences currently being subscribed to.

VIDA Content OS, a cloud-based SaaS platform and Media Asset Management solution, will demonstrate streamlined FAST Channel support and advanced artificial intelligence integration at booth W2312. The VIDA ecosystem continues to evolve, taking the stage at NAB with several enhancements in its content management and distribution features. VIDA now incorporates OpenAI GPT, allowing users to easily create synopses and build summaries for assets to increase media discoverability, automate metadata generation, and make content rapidly searchable and easy to disseminate. The AI-powered integration enhances the searchability of content and adds new levels of automation to the media asset workflow. VIDA’s artificial intelligence-enabled features deliver audio and visual analysis including text file conversions, language translation, and facial/text/shot/technical cue detection and recognition, and efficiently handle asset automation at scale.

Enabling further monetization opportunities across the content management value chain, VIDA also announces enhanced FAST Channel support for independent and large content owners. Through its integration with Amagi’s cloud-based SaaS technology, VIDA simplifies the dynamic delivery of content to hundreds of distribution points. Amagi’s advanced technology combined with VIDA’s AI toolset and open API architecture provides the simplest way to deliver content, serving hundreds of partners and millions of viewers watching ad-supported streaming television channels.

Vislink will show its ultra-compact Cliq OFDM Mobile Transmitter for the first time at a U.S.-based show at NAB 2023.

The Cliq is one of the smallest 4K mobile transmitters in the world. Capable of full 4K or transmission of two HD video services, the Cliq OFDM Mobile Transmitter provides broadcasters with an uncontended wireless video network connection to obtain unique and immersive camera views with complete freedom to roam.

With its HEVC capability, the Cliq OFDM Mobile Transmitter provides operators with the flexibility to deliver more camera views in their allocated bandwidth or wirelessly capture content over twice the distance compared to older MPEG-4 devices. In addition to support for Tier 1 live events including delivering shots from body-worn cameras, the wireless transmitters can also support a wide variety of applications including onboard vehicles, PoV cameras and drones.

Vizrt promises an entirely new Vizrt Experience with ROE Visual for NAB 2023. The organizations are coming together to make magic for the 100th anniversary of the iconic broadcast technology show by combining leading technologies in real-time broadcast and virtual production, GhostFrame and Vizrt’s Viz Engine 5.

Combining cutting-edge LED and camera technology, GhostFrame enables creative and innovative use of video and broadcast technology. Uniting with Viz Engine 5’s advanced rendering capabilities, ROE Visual’s Ruby RB1.9Bv2 high-frequency video wall, driven by HELIOS® LED processing, will be used to achieve new possibilities in virtual live production. GhostFrame works exclusively with ROE Visual LED panels and Megapixel VR HELIOS® LED Processing.

GhostFrame can receive up to four Viz Engine signals at one time, which enables limitless creative possibilities in broadcast virtual production. With Vizrt’s Virtual Window™ technology, introduced in 2012, previewing other camera perspectives simultaneously was previously impossible. Combined with GhostFrame, this is now a reality and will be displayed at NAB.

The setup for the live XR demonstration, the “Vizrt Experience Las Vegas”, uses ROE Visual’s Ruby LED panel, equipped with 1.9-pixel pitch, wide color gamut, and a bit depth of 16 bits, to create brilliant visuals. The visuals, created by design firm dotconnector, will be captured by two RED Digital KOMODO cameras. One is mounted on a stYpe Human Crane with stYpe RedSpy tracking and Follower for interacting with AR graphics; the other is mounted on a rail dolly from Blackcam controlled by electric friends with AI automated shots. The virtual scenes displayed will be rendered natively in Viz Engine 5.1 and in Unreal Engine 5.1. In this setup, both render blades have ultra-low latency, allowing for fast camera movements.

VoiceInteraction is set to unveil Version 7.0 of its Product Suite at the NAB Show. These AI-driven platforms combine proprietary Automatic Speech Recognition technology with carefully designed interfaces, designed to address broadcasters’ current needs. Broadcasters can expect seamless integration into their workflow and an improved user experience in VoiceInteraction’s AI-driven Closed Captioning and Broadcast Compliance platforms:

Audimus.Media

Audimus.Media is VoiceInteraction’s flagship product for automatic closed captioning, with reliable speech recognition and the ability to handle market-specific requirements. It offers high accuracy, great delivery, and caption reusability for VOD, making it a cost-effective solution to improve accessibility. Supporting any SDI or IP workflow, the platform also features automatic English-Spanish translation for broadcasters reaching Hispanic audiences. With an intuitive web dashboard, Audimus.Media allows for a customized setup, control over every configured channel, access to specific features, event scheduling, and clip import into nonlinear editors with fully synchronized captions.

MMS is a platform that helps television broadcasters reduce costs, maximize revenue, and increase viewer loyalty by providing a seamless experience. It combines proprietary Speech Processing Technologies with AI algorithms to go beyond ordinary compliance solutions, providing added features such as news segmentation from topic and keyword detection. MMS not only monitors every relevant QoS element with a multiviewer and configurable alert center, but it also incorporates analytics and metadata, allowing broadcasters to gain valuable insights into their network and competitors. It also offers automated royalty reports, ad delivery and ratings, and much more. With customizable and user-friendly features, MMS empowers broadcasters to focus on creating and delivering great content.

Zero Density has announced its attendance at NAB 2023 where will be present three new virtual production solutions. The new solutions will involve a major update to Zero Density’s TRAXIS brand, which specializes in stateof-the-art tracking for virtual studios.

The company will also be launching a new software bundle that will make photoreal Unreal Engine-based graphics accessible to all broadcasters.

All the new products can be seen at the Zero Density booth, which will feature a green screen stage with a news set, an LED-based XR stage with a virtual basketball set and multiple demo pods so that showgoers can get hands-on with Zero Density’s solutions.

Throughout the show, Zero Density’s technical experts will deliver demos every half hour on both the cyclorama and the LED-based XR stages, demonstrating their groundbreaking virtual studio, augmented reality and XR virtual production capabilities.

The Lega Serie A (Lega Nazionale Professionisti Serie A), the body that governs the most important club football tournaments in Italy, has been relying on Infront Productions for years. That is why Lega Serie A has entrusted this company with the production of eSerie A TIM, the Lega Serie A esports competition, in order to reach the youngest audience.

Alessandro Lonati, Director of Infront Productions, and the project management team of Thomas Borghi, Alessandro Zampini and Andrea Mariani explain how this competition is produced and which major challenges they have had to overcome.

How did your involvement in this project come about?

eSerie A TIM is Lega Serie A’s strand within the esports arena. Infront’s long and trusted partnership with Lega Serie A led to conversations about how to expand the brand and gain exposure with younger fans on platforms where Lega Serie A did not have an existing market presence and a share of the audience. The result was an annual esports tournament featuring players representing Serie A clubs, complemented by additional programming.

It was an organic process for us. All major football leagues now have their own esports tournaments, and proposing an esports event to Lega Serie A, both to establish a presence in the market and to involve the younger generation in Italian football coverage, was a natural next step.

With the advent of streaming, digital content and the second screen, the interest of young people has become much more fragmented, and the habit of watching a full match on television or in the stadium is being lost, in favour of content that is easier to consume, such as gaming and esports.

Lega Serie A proved to be very interested and attentive to this dynamic, and they immediately believed in it, so much so that they pushed the competition with conviction, considering it on a par with the others they organise.

The beginning was not straightforward. The first edition was supposed to be in 2020, but due to the pandemic we had to postpone everything for a year. The second edition still had limitations in terms of possible audience participation, so the 2023 edition is the first with real freedom to engage with the public.

What are the characteristics of the eSerie A TIM? How does the competition take place? Where does it take place? Is it usually held remotely, or does it involve bringing competitors together in one place?

The regular season takes place at Infront’s facility in Milan, the eSerie A TIM Arena, utilising a six-camera coverage plan and a team of around 20 people working on the production, organisation and logistics of each event. A draft and eSuper Cup tournament take place before the regular season begins, then four rounds of competition precede the Play-Offs and Final Eight. The Final Eight event is taken on the road and is a special occasion, played at an open-air venue. This whole schedule takes place over four months from January to April, with qualifying events beginning the previous November.

We are now in the third season, which we have enriched a lot compared to previous years by replacing more and more online events with live and face-to-face moments. We were also able to involve the clubs more, with events held at some clubs’ stadiums. Those events were part of a Road Show prepared with Lega Serie A and EA Sports. We were in Udine for the launch event, in Milan for the Milan Games Week with a dedicated stand, in Rome in the prestigious TIM headquarters and at the Allianz Stadium in Turin to present the Juventus Dsyre team.

The goal is to have the tournament interact with the audience throughout the season, which is why we are planning a final event in grand style that will be open to the public and ticketed. This approach allows us to cover all angles, with online competitions and live events attended by the public, connecting with the players and sponsors.

During these first editions we have witnessed significant growth in audience, with figures doubling between the first and second year. The aforementioned activities have been put in place to stimulate growth – the Road Show around different territories, the organisation of the Finals in an arena that can accommodate the general public.

Despite having started after many of the other major leagues’ esports tournaments, we already boast numbers that are, in some cases, higher than those of other European tournaments.

Going step by step through all the technological infrastructure involved in the process, what workflows have you implemented to deliver this content to fans?

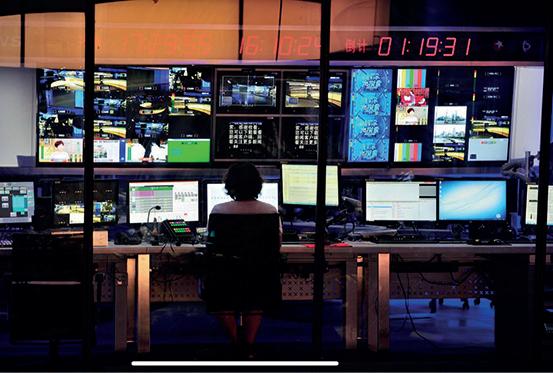

We work in a full TV studio that is fast-paced and constantly evolving, which can provide plenty of opportunities for learning new skills and technologies.

Everything is operated by the control room, which is the core of the broadcast operation, where all the technical aspects of a production are coordinated and managed. Our control room is staffed by a team of professionals, including a director, producer, technical director, audio engineer, graphics operator and other support staff.

Overall, the broadcast control room is a critical component of any television or radio production, providing the technical expertise and support necessary to deliver high-quality programming to audiences around the world.

The programme goes to the master control room, which is responsible for ensuring that the broadcast is properly received by the audience. This includes controlling and monitoring the video and audio signals, the transmission equipment and the signal path from the studio to the transmitter.

Any technical problems or failures that occur during the transmission process must be quickly identified and resolved to avoid interruptions or delays to the broadcast.

What technological resources has Infront Productions deployed for each stage of production? What are the reasons behind such a deployment? Which are the manufacturers you have relied on (brands of technological solutions)?

First of all, we worked together with a set designer to give fans a different visual experience than last year. Once everything was approved, we started to work on the ground with all the production staff: technicians, graphics, storytellers, talent and so on.

Our goal is to make a mix between a TV show and an esports show, taking the best from both and delivering the best show we can. We relied on some established manufacturers in this area, like Grass Valley for cameras, Ross for video mixers, Yamaha for audio mixers, Blackmagic for player POV cameras and EVS for replay and video playback.

What are the challenges you have encountered in producing an event like this? How have you solved them?

The biggest challenge is definitely related to finding the right tone and language for the broadcast. The audience is mainly on Twitch: a very young audience that has watched little television and is mostly accustomed to a different set of production values. Although on Twitch it is possible to become extremely popular with a lot of followers, even with just a webcam and amateur equipment, we need to convey a high production value for the competition. However, we must be careful to find the right balance.

On Twitch, most of the live streams are single-camera, and there are no camera movements, cuts, or tracking shots, all of which are necessary elements in the production of a sports event. The difficulty lies in being able to use all these techniques without alienating an audience that is not used to them, and that finds it perfectly normal, for example, to have seconds, if not minutes, of live broadcasting without anyone speaking.

Another element to consider is the lack of interaction with the chat, which is one of the distinctive elements of platforms like Twitch or YouTube, for which this kind of content is designed. To make up for this lack, we interact with the chat with questions and surveys related to the games, but we never respond directly during the live stream, so broadcasters who have acquired the rights can broadcast it with ease.

You come from offering broadcast services for sporting events. What differences and similarities have you found between sports and esports?

In terms of production, there isn’t really that much difference between producing a big sports event or an esports event. In the world of esports, the advantage is that you’re always in controlled environments, such as a television studio or a sports arena, but the basic elements are essentially the same: there are athletes to follow, an enthusiastic audience, and hosts/casters and analysts to commentate.

What changes is the final output, which appears more static since the athletes are seated in chairs, but it’s still a sports event in the end. Where it differs from a traditional sports event is the duration: an eSerie A TIM day lasts on average between five and six hours and at least 10 matches are played. Since the gameplay is the core of the entire broadcast, there’s little screen time for everything else, and you have to be careful to entertain the audience without losing them by extending the segments between one match and another.

The roles required also differ. The caster of an esports event is a figure similar to the commentator, but with enormous direct knowledge of the subject. Often, they are players or ex-players who talk not only about what they see, but about the game in general, the dynamics that move it, the differences with past years.

Esports are based on video games that do not have a rigid set of rules like traditional sports, but rather change from year to year, season to season, from update to update, and sometimes they do so substantially. The caster must therefore not only tell the audience what is happening in the game, but also educate the public on the whole context of the competition. The analyst, on the other hand, is more similar to the ‘colour’ commentator of traditional commentary, and often comes from the world of streaming, with extensive knowledge, good relationships with the players and the ability to highlight hidden peculiarities of each game.

It is important to point out that our casters and analysts comment on four- or five-hour broadcasts, and they do so without ever missing a beat, never having a moment of silence during the broadcast.

The esports industry seems to have settled in our society. Likewise, the broadcasting of these events is constantly growing. What growth have you observed in this type of event?

The eSerie A TIM championship has been a huge success so far. The 2022 event has over two million views, a 13% increase over the previous year. The championship generated over 100 million social media impressions, a 60% increase over the previous season, and 10 million unique users, a 150% increase. In addition, there was an engagement of 2.2 million and around 1,000 articles published about the event.

This year, the data is even more encouraging, and in much of the data just mentioned, we noticed an increase of 8-10% compared to last season.

The eSerie A TIM championship has provided the platform to reach new audiences and engage them on their own terms. With its hybrid approach to live and online events, the league has created a new world of possibilities, uniting the people who play with those who watch and offering a new way to experience the passion of competitive video games. This year, there are even more streaming minutes thanks to a series of new weekly formats that help tell the story of everything that surrounds the game and the event, and can reach out to even more fans.

Clubs’ interest in building their own esports teams has grown in tandem with the increase in the number of events. We were the first to support all teams by designing a tournament format that includes a first phase where fans can play. A draft allows clubs to choose their players, meaning that each team is made up of one professional and one player from the draft. This not only supports the clubs in building their teams but also stimulates participation from the public, who have the chance to realise their dream of representing their club.

In our reporting we’ve come across the idea that esports promotes a lot of innovation in terms of how to deliver content to fans. What innovative processes have you developed to broadcast eSerie A TIM?

The idea of organising the Road Shows, for which we are sure there will be more entries from year to year, was to bring the competition closer to the kids and the fans who are used to watching the competition only on Twitch. We started this season earlier than in other years, organising the EA eSuperCup, which also saw the All Bars Game collective perform in an esports-themed battle rap before the semi-finals. This is another novelty to try to make the product even more transversal, without, however, losing the identity of the tournament. We have organised all of this in our new home, the eSerie A TIM Arena, where all the teams and guests can cheer live and make their emotions felt.

There will probably always be a hybrid system because it is the best way to give everyone the opportunity to participate. Many players come from the general public and are ‘drafted’ to professional clubs, so the online part is essential to give anyone the opportunity to participate. Even if we did a lot of local events, we would never touch all the people we can reach online. However, the trend remains