
[Figure: Types of reinforcement learning. Positive reinforcement: positive behavior followed by positive consequences. Punishment: negative behavior followed by negative consequences. Negative reinforcement: positive behavior followed by removal of negative consequences (e.g., the manager stops nagging the employee). Extinction: positive behavior followed by removal of positive consequences (e.g., the manager ignores the behavior).]

Positive reinforcement

Positive reinforcement is one of the fundamental concepts in reinforcement learning, which refers to the idea of strengthening a behavior by providing a reward or positive consequence for it. In other words, when a positive event or consequence follows a behavior, the likelihood of that behavior being repeated in the future increases. This can be achieved by giving the agent a reward signal when it takes a desired action in a particular state.

Positive reinforcement has several advantages in reinforcement learning. First, it can maximize the performance of an action, as the agent will strive to take the action that provides the greatest reward. Second, it can sustain change for longer, as the agent will continue to take the reinforced action even after removing the reward. This is because the agent has learned that the action is associated with a positive outcome and will continue to take it in the hope of receiving the same reward.

However, positive reinforcement also has a potential disadvantage. If the reinforcement is excessive, the agent may become overloaded with states, which can diminish the results: it may fixate on a single rewarded state and fail to explore other states that could lead to even greater rewards. It is therefore important to provide enough reinforcement to encourage the desired behavior without overloading the agent.

Negative reinforcement

Negative reinforcement in reinforcement learning refers to strengthening a behavior by removing or avoiding a negative condition or stimulus. This can be seen as a way of escaping or avoiding an undesirable situation, reinforcing the behavior that led to the escape or avoidance.

One advantage of negative reinforcement is that it can help to maximize a desired behavior. For example, an employee who receives negative feedback from their manager for missing a deadline may work harder in the future to avoid that feedback, which can lead to improved performance.

Another advantage of negative reinforcement is that it can provide a baseline level of performance. For example, a student may study harder for a test to avoid the negative consequence of a failing grade, which can ensure a minimum level of understanding and knowledge.

However, one disadvantage of negative reinforcement is that it may only limit the behavior to the minimum necessary to avoid the negative consequence without encouraging further improvement or excellence. For example, an employee may only do the bare minimum required to avoid negative feedback from their manager rather than strive to exceed expectations and achieve greater success.

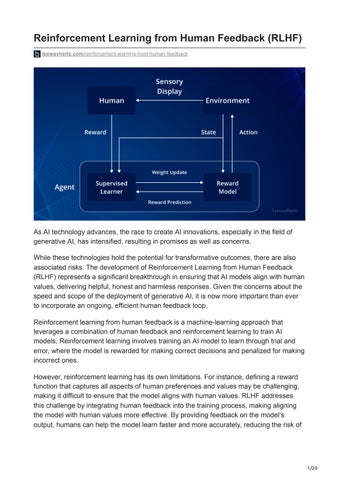

How does reinforcement learning work?

Reinforcement learning (RL) is a machine learning approach that involves training an agent to take the “best” actions in an environment by providing positive, negative, or neutral rewards based on its actions. This process is similar to how we train pets or how babies learn about their surroundings by exploring and interacting with their environment.

The agent in RL represents an “intelligent actor,” such as a player in a game or a self-driving car, that interacts with its environment. Meanwhile, the environment is the “world” where the agent “lives” or operates.

The two critical components of RL are the agent and its environment, which interact through an action space and a state (observation) space. The agent performs actions within its environment, and the available action space can be either discrete or continuous. A state is the information the agent receives about the environment, while an observation is only a partial description of that state.
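The agent-environment loop described above can be sketched with a toy environment. The `LineWorld` class, its five-cell corridor, and the `run_episode` helper are all hypothetical names invented for this illustration; they are not part of any RL library.

```python
class LineWorld:
    """Hypothetical 1-D corridor: states 0..size-1, goal at the right end."""

    ACTIONS = (-1, +1)  # discrete action space: step left or step right

    def __init__(self, size=5, start=2):
        self.size = size
        self.start = start
        self.state = start

    def reset(self):
        """Begin a new episode and return the initial state."""
        self.state = self.start
        return self.state

    def step(self, action):
        """Apply an action and return (next_state, reward, done)."""
        # Clamp the position so the agent cannot leave the corridor.
        self.state = min(max(self.state + action, 0), self.size - 1)
        done = self.state == self.size - 1
        reward = 1.0 if done else 0.0  # reward only when the goal is reached
        return self.state, reward, done


def run_episode(env, policy, max_steps=100):
    """Run the agent-environment loop once and return the total reward."""
    state = env.reset()
    total = 0.0
    for _ in range(max_steps):
        action = policy(state)          # the agent chooses an action
        state, reward, done = env.step(action)  # the environment responds
        total += reward
        if done:
            break
    return total
```

Here the state is fully observed; in a partially observed setting, `step` would return an observation that reveals only part of the state.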

Perhaps the most crucial piece of the puzzle in RL is the reward. We train the agent to take the “best” actions by giving a positive, neutral, or negative reward based on whether the action has taken the agent closer to achieving a specific goal. The reward function is crucial to the model’s success, and it is essential to balance short-term and longer-term rewards using a discount factor (gamma).
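To make the role of the discount factor concrete, here is a minimal sketch of a discounted return, assuming rewards are collected as a plain list for one episode. The function name `discounted_return` is illustrative.

```python
def discounted_return(rewards, gamma):
    """Return sum over t of gamma**t * rewards[t] for one episode."""
    total = 0.0
    # Iterate backwards so each step needs one multiply and one add.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total
```

With `gamma` close to 0 the agent effectively values only the immediate reward; with `gamma` close to 1, rewards far in the future count almost as much as immediate ones.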

The final concept in RL is the trade-off between exploration and exploitation. We typically want to encourage the agent to explore its environment rather than spend 100% of its time exploiting what it already knows. We do this by introducing an additional parameter (epsilon) that specifies the fraction of situations in which the agent takes a random action (i.e., explores).
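One common way to use epsilon is the epsilon-greedy rule, sketched below. It assumes the agent keeps a list of estimated values, one per action; the function name and the optional `rng` argument are illustrative choices.

```python
import random


def epsilon_greedy(q_values, epsilon, rng=random):
    """Pick an action index from a list of estimated action values."""
    if rng.random() < epsilon:
        # Explore: choose uniformly at random among all actions.
        return rng.randrange(len(q_values))
    # Exploit: choose the action with the highest estimated value.
    return max(range(len(q_values)), key=lambda a: q_values[a])
```

In practice, epsilon is often started high and decayed over training, so the agent explores early and exploits later; that schedule is a design choice and is not shown here.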

Several algorithms are used in RL, such as Q-learning, SARSA, and Deep Q-Networks (DQN). Q-learning and SARSA are value-based algorithms that rely on updating the value function of the state-action pairs, while DQN is a deep learning-based algorithm that uses a neural network to approximate the Q-value function.
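The difference between Q-learning and SARSA is easiest to see in their update rules. The sketch below assumes a tabular setting in which values are stored in a dictionary keyed by `(state, action)`; the function names and the default `alpha` (learning rate) and `gamma` values are illustrative, and handling of terminal states is omitted for brevity.

```python
from collections import defaultdict


def q_learning_update(q, state, action, reward, next_state, actions,
                      alpha=0.1, gamma=0.99):
    """Off-policy update: bootstrap from the best next action."""
    best_next = max(q[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])


def sarsa_update(q, state, action, reward, next_state, next_action,
                 alpha=0.1, gamma=0.99):
    """On-policy update: bootstrap from the action actually taken next."""
    td_target = reward + gamma * q[(next_state, next_action)]
    q[(state, action)] += alpha * (td_target - q[(state, action)])


# Usage: an empty table where unseen state-action pairs default to 0.0.
q_table = defaultdict(float)
q_learning_update(q_table, "s0", "right", 1.0, "s1", ["left", "right"])
```

DQN keeps the same Q-learning target but replaces the table with a neural network that maps a state to one estimated value per action.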

RL has several applications, such as game playing, robotics, and self-driving cars. It can potentially solve problems that are challenging to solve with traditional programming methods, such as those that involve decision-making in complex and dynamic environments.

What is Reinforcement Learning from Human Feedback (RLHF)?

In traditional RL, the reward signal is defined by a mathematical function based on the goal of the task at hand. However, in some cases, it may be difficult to specify a reward function that captures all the aspects of a task that are important to humans. For example, in the case of a robot that cooks pizza, the automated reward system may be able to measure objective factors such as crust thickness and amount of sauce and cheese, but it may not be able to capture the subjective factors that make a pizza delicious.

This is where Reinforcement learning from human feedback (RLHF) comes in. RLHF is a method of training RL agents that incorporates feedback from human supervisors to supplement the automated reward signal. By doing so, the RL agent can learn to account for the aspects of the task that the automated reward function cannot capture.

However, relying solely on human feedback is not always practical because it can be time-consuming and expensive. Therefore, most RLHF systems use a combination of automated and human-provided reward signals. The automated reward system provides the primary feedback to the RL agent, and the human supervisor provides additional feedback to supplement the automated reward signal. This may involve providing occasional rewards or punishments to the agent or providing data to train a reward model that can help improve the automated reward signal.

One advantage of RLHF is that it can help improve the safety and reliability of RL agents by allowing humans to intervene and provide feedback when the agent is performing poorly or making mistakes. Additionally, RLHF can help ensure that the agent is learning to perform the task in a way consistent with human preferences and values.

Some applications of RLHF

Game playing: Human feedback can play a vital role in improving the performance of AI agents in game-playing scenarios. With feedback from human experts, agents can learn effective strategies and tactics that work in different game scenarios. For example, human feedback can help an AI agent improve its gameplay and decision-making skills in the game of Go.

Personalized recommendation systems: Personalized recommendation systems rely on human feedback to learn the preferences of individual users. By analyzing user feedback on recommended products, the agent can learn which features are most important to them. This allows the agent to provide more personalized recommendations in the future, improving the overall user experience.

Robotics: Human feedback is crucial in teaching AI agents how to interact with the physical environment safely and efficiently. In robotics, an AI agent could learn to navigate a new environment more quickly with feedback from a human operator on the best path to take or which objects to avoid. This can help improve the safety and efficiency of robots in various applications, such as manufacturing and logistics.

Education: AI-based tutors can use human feedback to personalize the learning experience for students. With feedback from teachers on which teaching strategies work best with different students, an AI-based tutor can help students learn more effectively. This can lead to improved learning outcomes and a better student learning experience.

Launch your project with LeewayHertz

Our RLHF-optimized AI models can adapt and evolve based on real-world conditions to stay relevant and perform optimally in dynamic environments

In reinforcement learning, the key idea is to train an agent to interact with an environment, learn from its experiences, and take actions that maximize some notion of cumulative reward. The environment in NLP tasks can be a dataset or a set of annotated texts, and the agent can be a machine learning model that learns to perform a task, such as classifying the sentiment of a sentence or translating a sentence from one language to another.

While language models can be used as part of the agent, other machine learning models such as decision trees, support vector machines, or neural networks can also be used. The choice of the agent architecture depends on the nature of the task, the size and complexity of the dataset and the available computational resources.

Reinforcement learning from human feedback is a complex and multi-stage process. While RLHF can be applied to natural language processing (NLP) tasks, such as machine translation or text summarization, it can also be applied to other domains, such as robotics, gaming or advertising. In these domains, the agent can be a robot, a game player, or an advertising system, and human feedback can come from users, experts or crowdsourcing platforms.

Let’s explore it step by step, discussing the nitty-gritty of training a language model (LM), gathering data, training a reward model and fine-tuning the LM with reinforcement learning.

Pretraining language models

Pretraining language models is a crucial step in the working mechanism of RLHF. The initial model, which has already been trained on a massive corpus of text data using classical pretraining objectives, serves as the starting point for the RLHF training process. This model can be fine-tuned on additional text or conditions, but this is not always necessary. For example, OpenAI’s InstructGPT was fine-tuned on human-generated text, while Anthropic distilled an original LM on context clues for their specific criteria. The size and complexity of the model used can vary widely, from a smaller version of GPT-3 to a 280-billion-parameter model like Gopher, used by DeepMind.

In this case, pretraining for RLHF typically involves training a large-scale language model (LM) on massive amounts of text data using unsupervised learning techniques. The goal of pretraining is to enable the LM to understand the underlying structure of language and generate coherent and semantically meaningful text. This pre-trained LM is then used as the starting point for fine-tuning in the RLHF training process. Pretraining is usually done with objectives such as next-token prediction or masked-token prediction, which force the LM to learn the contextual relationships between words in the input text. The most popular architecture for pretrained language models is the transformer, which uses self-attention mechanisms to model the dependencies between input tokens.

Different variants of the transformer architecture, such as GPT, BERT and RoBERTa, have been successfully used for pretraining large-scale LMs for RLHF. However, there is ongoing research on developing new pretraining objectives and architectures that can further improve the performance of LMs for RLHF.

Generating data to train a reward model is the next step, which allows human preferences to be integrated into the RLHF system.

Reward model training

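[Figure: Reward model training. Many prompts are sampled from a prompts dataset and passed to the initial language model to generate text; humans score the generated text; the outputs are ranked (relative, Elo, etc.); and the reward (preference) model is trained on {sample, reward} pairs.]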

The main goal of RLHF in this scenario is to create a system that can take in a sequence of text and return a scalar reward that represents human preferences. The reward model (RM) is the component that accomplishes this task, and it can take various forms, including an end-to-end LM or a modular system that outputs a reward based on ranking.

When it comes to training the RM, there are two main approaches: using a fine-tuned LM or training an LM from scratch on the preference data. Anthropic uses a specialized method of fine-tuning called preference model pretraining (PMP) to initialize these models after pretraining. On the other hand, OpenAI used prompts submitted by users to the GPT API for generating their training dataset.

The training dataset for the RM is generated by sampling prompts from a predefined dataset and passing them through the initial language model to generate new text. Human annotators then rank the generated text outputs. Ranking, rather than asking annotators for scalar scores directly, creates a better-regularized dataset, since raw scores from different annotators tend to be uncalibrated and noisy. One successful method of ranking text is to have users compare the generated text from two language models conditioned on the same prompt. The Elo system can then be used to rank the models and outputs relative to each other. These different methods of ranking are normalized into a scalar reward signal for training.
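As an illustration of how head-to-head comparisons become a relative ranking, here is a minimal sketch of the standard Elo update. The scale constant of 400 and the step size `k=32` are the conventional chess values and are assumptions here; the source does not say which constants any RLHF system uses.

```python
def elo_expected(rating_a, rating_b):
    """Probability that A is preferred over B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def elo_update(rating_a, rating_b, a_wins, k=32.0):
    """Return updated (rating_a, rating_b) after one human comparison."""
    expected_a = elo_expected(rating_a, rating_b)
    score_a = 1.0 if a_wins else 0.0
    # The winner gains exactly what the loser gives up.
    delta = k * (score_a - expected_a)
    return rating_a + delta, rating_b - delta
```

After many comparisons, the ratings give a relative ordering of models or outputs, which can then be normalized into a scalar reward signal.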

It’s worth noting that the capacity of the reward model relative to the text generation model varies between RLHF systems. One intuition is that the preference model needs a capacity similar to that of the model generating the text in order to understand that text. Choosing an appropriate reward model capacity is therefore crucial to the success of the RLHF system.

Finally, with an initial language model that can generate text and a preference model that assigns a score of how well humans perceive it, RL is used to optimize the original language model with respect to the reward model. This process is known as fine-tuning and is a crucial step in the RLHF training process.

Fine-tuning with RL

Fine-tuning a language model with reinforcement learning was once thought impossible, but organizations have found success by using the Proximal Policy Optimization (PPO) algorithm to fine-tune some or all of the parameters of a copy of the initial language model. Some parameters are often frozen because fine-tuning an entire 10B or 100B+ parameter model is prohibitively expensive. In RL terms, the policy is the LM that takes in a prompt and returns a sequence of text; the action space is all the tokens in the vocabulary of the LM; the observation space is the distribution of possible input token sequences; and the reward function is a combination of the preference model and a constraint on policy shift.

The reward therefore has two parts. The first is the score from the preference model: the model receives a higher reward when it generates text that the preference model rates as more preferred by humans. The second is a penalty that keeps the fine-tuned model from drifting too far from the pre-trained model. For the same prompt, the outputs of the two models are compared with a mathematical measure of how different they are, typically the Kullback-Leibler (KL) divergence, and a penalty based on that measure is subtracted from the reward. This helps the model stay close to the original and keep generating coherent text snippets.
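A minimal sketch of such a combined reward follows, assuming we already have the log-probabilities that the fine-tuned policy and the frozen original model assign to each generated token. The function name, the sample-based divergence estimate, and the penalty weight `beta=0.1` are assumptions for illustration, not values taken from any particular system.

```python
def kl_penalized_reward(rm_score, policy_logprobs, reference_logprobs,
                        beta=0.1):
    """Reward-model score minus a penalty for drifting from the reference LM.

    policy_logprobs and reference_logprobs hold the log-probability each
    model assigns to the same sampled tokens, one entry per token.
    """
    if len(policy_logprobs) != len(reference_logprobs):
        raise ValueError("log-prob sequences must have equal length")
    # Sample-based estimate of the divergence for this one sequence:
    # the sum of per-token log-probability differences.
    divergence = sum(p - r for p, r in zip(policy_logprobs,
                                           reference_logprobs))
    return rm_score - beta * divergence
```

A larger `beta` keeps the policy closer to the original model at the cost of slower reward improvement; a smaller one allows more drift.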

The update rule is how we change the model’s parameters to improve its text generation. We use PPO because it limits how far each update can move the policy, so that a single update does not undo what the model has already learned. Some RLHF systems add further terms to the reward function to help the model learn better.
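The way PPO limits each update can be sketched with its clipped surrogate objective. The version below works on plain lists of per-action log-probabilities and advantage estimates; the function name and the clipping range `clip_eps=0.2` are illustrative assumptions, and a real implementation would compute this on tensors and take gradients.

```python
import math


def ppo_clipped_objective(new_logprobs, old_logprobs, advantages,
                          clip_eps=0.2):
    """Average clipped surrogate objective over a batch of sampled actions."""
    total = 0.0
    for new_lp, old_lp, adv in zip(new_logprobs, old_logprobs, advantages):
        ratio = math.exp(new_lp - old_lp)  # pi_new(a|s) / pi_old(a|s)
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Taking the minimum removes any incentive to push the ratio
        # outside [1 - clip_eps, 1 + clip_eps].
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

When the new policy makes a well-rewarded action much more likely than the old policy did, the objective stops growing once the probability ratio passes `1 + clip_eps`, which is what keeps each step small.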

RLHF can continue from this point by iteratively updating the reward model and the policy together. As the RL policy updates, users can continue ranking its outputs against those of the model’s earlier versions. This is known as Iterated Online RLHF. Because the policy and the reward model evolve together, it introduces complex dynamics and remains an open research question.

Red teaming

Red teaming in RLHF involves a systematic approach to evaluating generative AI models through the use of human evaluators, who are experts in different fields and can provide valuable feedback on the accuracy and relevance of the models. Red teaming is an important part of the RLHF process in the case of large language models, as it helps to identify potential biases, ethical issues, and other concerns that may arise when the models are used in real-world settings. Red teaming allows generative AI models to be tested in different scenarios, such as natural language processing and computer vision, to ensure they can accurately interpret and respond to the input data they receive. In this process, the human evaluators assess the models’ performance, identify gaps, and recommend improvements.

Red teaming also involves testing edge cases, which are scenarios that are unlikely to occur but can cause significant harm if they do, and unforeseen circumstances, which are situations the models were not specifically designed to handle.

Testing the models in these scenarios makes it possible to identify limitations and potential vulnerabilities that need to be addressed.


How is RLHF used in large language models like ChatGPT?

LLMs have become a fundamental tool in Natural Language Processing (NLP) and have shown remarkable performance in various language tasks such as language modeling, machine translation, and question-answering. However, even with their impressive capabilities, LLMs still suffer from limitations, such as being prone to generating low-quality, irrelevant, or even offensive text.

One of the main challenges in training LLMs is obtaining high-quality training data, as LLMs require vast amounts of data to achieve high performance. Additionally, human annotators are needed to label the data for supervised learning, which is a time-consuming and expensive process.

To overcome these challenges, RLHF was introduced as a framework that can provide high-quality labels for training data. In this framework, the LLM is first pre-trained through unsupervised learning and then fine-tuned using RLHF to generate high-quality, relevant, coherent text.

RLHF allows LLMs to learn from human preferences and generate outputs more aligned with user goals and intents, which can have significant implications for various NLP applications. By combining reinforcement learning and human feedback, RLHF can efficiently train LLMs with less labeled data and improve their performance on specific tasks. Therefore, RLHF is a powerful framework for enhancing the capabilities of LLMs and improving their ability to understand and generate natural language.

In RLHF, the LLM is first pre-trained through unsupervised learning on a large corpus of text data. This allows the model to learn the underlying patterns and structures of language, essential for generating coherent and meaningful outputs. Pre-training the LLM is computationally expensive, but it provides a solid foundation that can be fine-tuned using RLHF.

The second phase involves creating a reward model, a machine learning model that evaluates the quality of the text generated by the LLM. The reward model takes the output of the LLM as input and produces a scalar value that represents the quality of the output. The reward model can be another LLM modified to output a single scalar value instead of a sequence of text tokens.

To train the reward model, a dataset of LLM-generated text is labeled for quality by human evaluators. The LLM is given a prompt, generating several outputs that human evaluators rank from best to worst. The reward model is then trained to predict the quality score of the LLM-generated text. The reward model creates a mathematical representation of human preferences by learning from the LLM’s output and the ranking scores assigned by human evaluators.
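One common way to turn rankings into a training signal is a pairwise loss: every ranking is expanded into (preferred, rejected) pairs, and the reward model is penalized when it scores the rejected output above the preferred one. The sketch below shows that idea on plain numbers. The source does not specify the loss function, so this form and both function names are assumptions.

```python
import math
from itertools import combinations


def ranking_to_pairs(ranked_outputs):
    """Turn a best-to-worst ranking into (preferred, rejected) pairs."""
    # combinations() preserves input order, so the first element of each
    # pair is always ranked above the second.
    return list(combinations(ranked_outputs, 2))


def pairwise_preference_loss(score_preferred, score_rejected):
    """-log(sigmoid(score_preferred - score_rejected)), computed stably."""
    margin = score_preferred - score_rejected
    # The loss equals log(1 + exp(-margin)); branch to avoid overflow.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

The loss shrinks toward zero as the reward model scores the preferred output further above the rejected one, and grows roughly linearly when the order is reversed.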

In the final phase, the LLM becomes the RL agent, creating a reinforcement learning loop. The LLM takes several prompts from a training dataset in each training episode and generates text. Its output is then passed to the reward model, which provides a score that evaluates its alignment with human preferences. The LLM is then updated to generate outputs that score higher on the reward model.

One of the challenges of RLHF is maintaining a balance between reward optimization and language consistency. The reward model is an imperfect approximation of human preferences, and the RL agent might find a shortcut to maximize rewards while violating grammatical or logical consistencies. To prevent this, the ML engineering team keeps a copy of the original LLM in the RL loop. The difference between the outputs of the original and RL-trained LLMs, measured by the Kullback-Leibler (KL) divergence, is integrated into the reward signal as a negative value to prevent the model from drifting too far from the original output.

How

Step 1: Collect demonstration data and train a supervised policy